Zero-Shot Prediction Revolutionizes Protein Engineering: From AI Models to Real-World Applications


Abstract

This article explores the transformative impact of zero-shot AI models on protein engineering, a paradigm that predicts the functional effects of protein mutations without requiring task-specific training data. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive overview of the foundational principles, leading methodologies, and practical applications of these tools. The content delves into the inner workings of state-of-the-art models like ProMEP, PoET, and RFjoint, which leverage protein language models and multimodal learning from sequence and structure. It further addresses key challenges such as navigating intrinsically disordered regions and optimizing predictions through prompt engineering. Finally, the article offers a rigorous comparative analysis of model performance on established benchmarks like ProteinGym and showcases successful real-world applications in developing high-efficiency gene-editing tools, providing a validated roadmap for leveraging these technologies in biomedical research and therapeutic development.

The Fundamentals of Zero-Shot Prediction: Redefining Protein Fitness Landscapes

Zero-shot prediction represents a paradigm shift in protein engineering, enabling the forecasting of mutational effects without requiring experimental training data for each new protein. This approach leverages pre-trained models that have learned the fundamental principles of protein sequence, structure, and function from massive datasets. This guide objectively compares the performance of leading zero-shot methods against traditional supervised learning and directed evolution approaches, providing researchers with experimental data and methodologies to inform their protein engineering strategies.

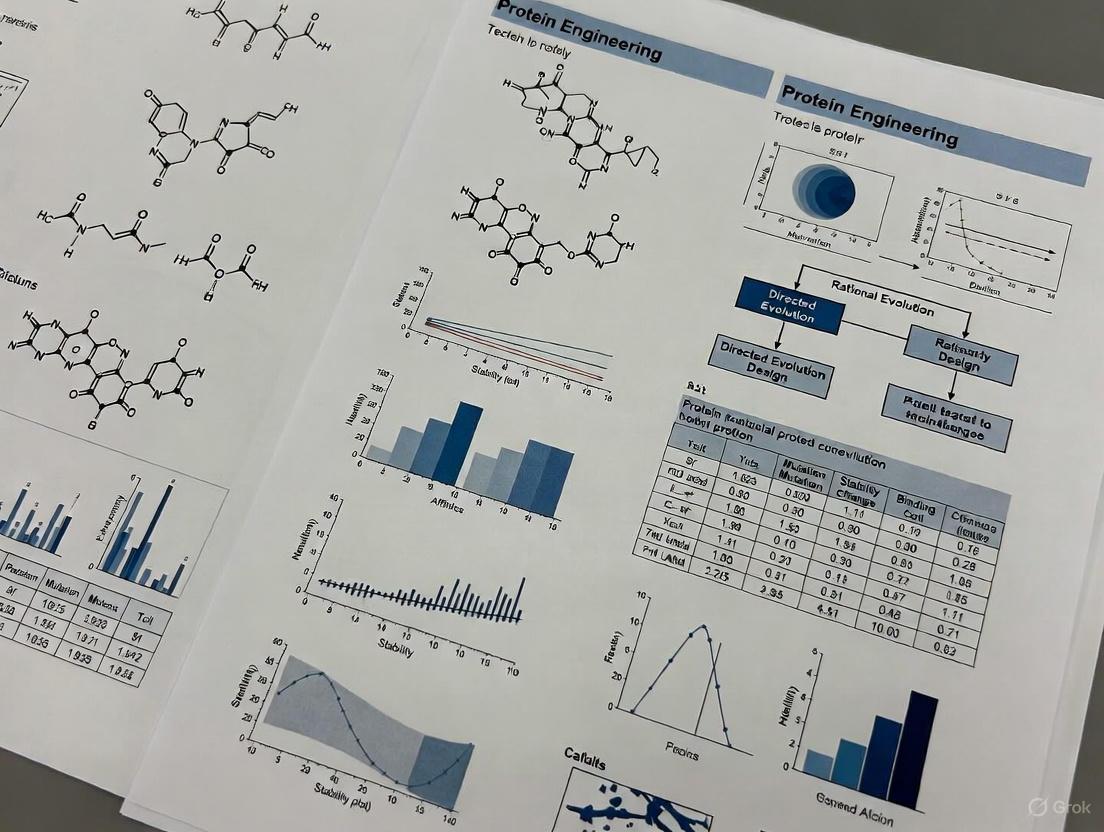

Protein engineering has traditionally relied on two primary approaches: directed evolution, which mimics natural selection through iterative rounds of mutagenesis and screening, and supervised learning, which requires large datasets of experimental measurements to train predictive models. Zero-shot prediction emerges as a transformative third approach that leverages pre-trained models to predict mutational effects without protein-specific experimental data.

These models learn generalizable principles of protein biochemistry during pretraining on evolutionary sequences, protein structures, or biophysical simulations. The most advanced zero-shot models achieve this through multimodal deep learning (integrating sequence and structural information) [1] and biophysical simulation [2], capturing the fundamental relationships between sequence changes and functional outcomes.

The core advantage of zero-shot approaches lies in their ability to make accurate predictions for proteins with limited or no experimental data, dramatically reducing the experimental burden and accelerating the protein design cycle. This guide systematically compares the performance of these emerging methodologies against established benchmarks.

Methodological Approaches to Zero-Shot Prediction

Biophysics-Informed Language Models

The METL (Mutational Effect Transfer Learning) framework represents a novel approach that unites advanced machine learning with biophysical modeling [2]. Unlike evolution-based protein language models, METL incorporates decades of protein biophysics research through pretraining on synthetic data from molecular simulations.

Experimental Protocol:

- Synthetic Data Generation: Molecular modeling with Rosetta generates structures for millions of protein sequence variants, with extraction of 55 biophysical attributes including molecular surface areas, solvation energies, van der Waals interactions, and hydrogen bonding

- Pretraining: Transformer-based neural networks are pretrained on simulation data to capture fundamental sequence-structure-energetics relationships

- Fine-tuning: Models are subsequently fine-tuned on experimental sequence-function data, enabling prediction of specific properties like thermostability, catalytic activity, and fluorescence

METL implements two specialized strategies: METL-Local (learns representations for specific proteins of interest) and METL-Global (learns general representations applicable to any protein) [2].

Multimodal Deep Representation Learning

ProMEP (Protein Mutational Effect Predictor) employs a multimodal architecture that integrates both sequence and structure contexts from approximately 160 million proteins in the AlphaFold database [1]. Because it does not require multiple sequence alignments (MSAs), it offers significant speed advantages while maintaining accuracy.

Experimental Protocol:

- Multimodal Pretraining: A deep representation learning model with ~659.3 million parameters is trained by completing missing elements from corrupted input using both sequence and structure information

- Protein Representation: Protein point clouds represent structures at atomic resolution, with rotation- and translation-equivariant structure embedding capturing 3D structural context

- Zero-Shot Inference: The log-likelihood ratio of wild-type versus mutated sequences, conditioned on both sequence and structure contexts, quantifies mutational effects [1]

Structure-Based Fitness Prediction

Structure-based models explicitly condition predictions on protein 3D structure, leveraging the wealth of data from structure prediction tools like AlphaFold2.

Experimental Protocol:

- Structure Processing: Both experimental and predicted structures are used as input, with careful consideration of multimeric states when applicable

- Inverse Folding Models: Methods like ESM-IF1 take corrupted sequences and backbone structures to predict likelihoods of corrupted residues, with performance indicating fitness prediction capability

- Benchmarking: Rigorous evaluation on ProteinGym substitution assays assesses performance across different protein types and functional categories [3]

Weakly Supervised Approaches

For scenarios with limited experimental data, weakly supervised methods combine computational estimates from molecular simulation and protein language models to augment small experimental datasets.

Experimental Protocol:

- Computational Data Generation: Rosetta provides molecular simulation estimates, while ESM-2 generates zero-shot predictions based on likelihood ratios

- Dynamic Weight Adjustment: Algorithms dynamically adjust the weight and inclusion of computational data based on available experimental data to prevent performance degradation

- Hybrid Scoring: Combination of simulation and language model estimates creates robust training data for mutational effect prediction [4]
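The protocol above can be sketched in a few lines. This is a minimal illustration, not the method of [4]: the function names, the z-score averaging, and the decay schedule `n0 / (n0 + n_experimental)` are all assumptions chosen to show how computational estimates might be combined and then down-weighted as real measurements accumulate.

```python
from statistics import mean, pstdev

def zscore(values):
    """Standardize scores so simulation and language-model estimates share a scale."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]

def hybrid_pseudo_labels(rosetta_scores, plm_scores):
    """Average the two standardized estimates per variant.

    Rosetta energies are negated first, on the assumption that a lower
    energy corresponds to a more favorable variant.
    """
    r = zscore([-s for s in rosetta_scores])
    p = zscore(plm_scores)
    return [(a + b) / 2 for a, b in zip(r, p)]

def computational_weight(n_experimental, n0=50):
    """Hypothetical schedule: the weight on pseudo-labels decays as real data grows."""
    return n0 / (n0 + n_experimental)

rosetta = [-3.0, -1.0, 2.0]  # toy energies for three variants
plm = [0.5, -0.2, -1.5]      # toy language-model likelihood ratios
labels = hybrid_pseudo_labels(rosetta, plm)
```

With no experimental data the pseudo-labels carry full weight (`computational_weight(0)` is 1.0), and the weight halves once `n0` measurements are available. A real pipeline would tune or learn this schedule rather than fix it.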

Performance Benchmarking and Comparative Analysis

Table 1: Performance Comparison of Zero-Shot Methods on ProteinGym Benchmark

| Method | Approach | MSA Dependence | Average Spearman ρ | Key Strengths |
|---|---|---|---|---|
| ProMEP | Multimodal (Sequence+Structure) | MSA-free | 0.523 [1] | Generalizability across protein types |
| AlphaMissense | Structure-based | MSA-dependent | 0.523 [1] | Pathogenicity prediction |
| METL-Global | Biophysics-based | MSA-free | N/A | Data-scarce settings |
| ESM-IF1 | Inverse Folding | MSA-free | Variable by assay [3] | Structure-conditioned prediction |
| Tranception | Sequence-based | MSA-free | <0.523 [1] | Long-range dependencies |

Performance in Data-Scarce Regimes

Table 2: Performance with Limited Experimental Data (Based on METL Evaluation)

| Method | Small Training Sets (<100 examples) | Position Extrapolation | Mutation Extrapolation |
|---|---|---|---|
| METL-Local | Strong performance, especially on GFP and GB1 [2] | Excellent | Excellent |
| Linear-EVE | Competitive with METL-Local [2] | Good | Moderate |
| ProteinNPT | Sometimes surpasses METL-Local on small sets [2] | Good | Good |
| METL-Global | Competitive with ESM-2 [2] | Moderate | Moderate |
| ESM-2 | Gains advantage as training set size increases [2] | Moderate | Moderate |

Application to Protein Engineering Campaigns

Table 3: Experimental Validation in Protein Engineering Applications

| Method | Protein Target | Engineering Outcome | Performance Improvement |
|---|---|---|---|
| ProMEP | TnpB (gene-editing enzyme) | 5-site mutant | Editing efficiency: 74.04% vs 24.66% (wild type) [1] |
| ProMEP | TadA (base editor) | 15-site mutant | A-to-G conversion: 77.27% vs 69.80% (ABE8e) [1] |
| METL | Green Fluorescent Protein | Functional variants designed | Successful design with only 64 training examples [2] |
| Weak Supervision | Various enzymes | Stability and activity optimization | Improved prediction accuracy in data-scarce conditions [4] |

Critical Technical Considerations

Impact of Intrinsically Disordered Regions

A significant challenge for structure-based zero-shot methods involves intrinsically disordered regions (IDRs), which lack fixed 3D structures. ProteinGym analysis reveals that 28% of unique UniProt IDs in the benchmark contain disordered regions in sequences covered by DMS assays [3]. These regions affect prediction quality for both structure-based models and protein language models, likely due to their fast-evolving nature and lower conservation [3].

Predicted vs. Experimental Structures

The choice between predicted and experimental structures measurably affects performance. For monomers, 74.5% of assays show better performance with predicted structures, and for multimers, 80% benefit from predicted structures [3]. This counterintuitive finding may arise because predicted structures contain only the target chain's coordinates, whereas experimental structures may include multi-chain complexes that are not relevant to the fitness assay.

Computational Efficiency

ProMEP's MSA-free architecture provides 2-3 orders of magnitude speed improvement over MSA-dependent methods like AlphaMissense [1]. This dramatically increases throughput for large-scale protein engineering projects, making comprehensive exploration of sequence space more feasible.

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Computational Tools for Zero-Shot Prediction

| Tool/Resource | Function | Application Context |
|---|---|---|
| Rosetta | Molecular simulation and energy calculation | Biophysical attribute calculation for METL [2] |
| AlphaFold Database | Source of predicted protein structures | Structure context for ProMEP and other structure-based methods [1] |
| ESM-2 | Protein language model | Zero-shot prediction and sequence embeddings [4] |
| ProteinGym | Benchmark suite for fitness prediction | Standardized performance evaluation [3] |
| DisProt | Database of disordered protein regions | Identifying regions challenging for structure-based methods [3] |

Visualizing Method Workflows

METL Framework Architecture

METL Framework: Integrating biophysical simulations with experimental data.

ProMEP Multimodal Architecture

ProMEP Architecture: Integrating sequence and structure contexts.

Zero-shot prediction methods represent a fundamental advancement in protein engineering capability, offering varying strengths depending on the specific application context. ProMEP excels in general mutational effect prediction and computational efficiency, while METL demonstrates particular strength in data-scarce environments. Structure-based methods provide valuable insights but face challenges with disordered regions.

The integration of biophysical modeling with deep learning approaches shows particular promise for future development, as does the strategic combination of multiple modalities through ensemble methods. As these technologies mature, they will increasingly enable the rational design of novel proteins with customized functions, accelerating therapeutic development and biological engineering.

For researchers selecting approaches, consideration of target protein characteristics (including disordered region content), available experimental data, and computational resources will guide optimal method selection from this expanding toolkit of zero-shot prediction technologies.

The emergence of artificial intelligence systems capable of deciphering the complex language of proteins represents a transformative advancement in computational biology. These AI models, trained on millions of protein sequences and structures, have developed a remarkable capacity to predict protein function, stability, and fitness without explicit experimental data—a capability known as zero-shot prediction. This review examines the core architectural principles and learning mechanisms that enable AI to learn protein language, comparing the performance of leading models across key protein engineering tasks. By synthesizing recent breakthroughs in multimodal deep representation learning and biophysics-informed models, we provide a comprehensive framework for understanding how these tools are accelerating protein engineering for therapeutic and industrial applications.

Proteins constitute a fundamental language of life, where linear sequences of amino acids fold into complex three-dimensional structures that dictate biological function. The analogy between human language and protein sequences has inspired the development of protein language models (PLMs) that apply natural language processing techniques to decode patterns and relationships within protein sequences [5]. Just as words combine to form meaningful sentences, the specific arrangement of amino acids in proteins conveys structural and functional information that AI can learn to interpret.

The zero-shot prediction capability of these models, meaning their ability to predict protein fitness without task-specific training, has emerged as a particularly powerful asset for protein engineering. This capability allows researchers to navigate vast protein sequence spaces computationally, identifying promising variants for experimental testing without costly, labor-intensive screening campaigns [1]. The integration of structural information with sequence data has proven especially valuable, providing physical constraints that enhance prediction accuracy and biological relevance.

Core Learning Architectures and Training Regimes

Sequence-Based Protein Language Models

Early PLMs adopted direct parallels from natural language processing, treating amino acid sequences as sentences and individual residues as words. These models employ transformer architectures trained through self-supervised objectives such as masked token prediction, where the model learns to predict randomly obscured amino acids based on their context within the sequence [5] [2]. Through training on hundreds of millions of protein sequences from evolutionary databases, these models develop context-aware representations that implicitly capture structural, functional, and evolutionary constraints.
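The masked token objective described above can be illustrated with a small data-preparation sketch. It shows only how a training example might be built, not any specific model's pipeline: the `<mask>` token string, the 15% masking fraction (borrowed from common NLP practice), and the function name are assumptions.

```python
import random

MASK = "<mask>"  # placeholder token; real tokenizers define their own

def mask_sequence(sequence, mask_fraction=0.15, seed=0):
    """Hide a random subset of residues.

    Returns (tokens, targets): the token list with masked positions replaced,
    and a dict mapping each masked position to the residue to be recovered.
    """
    rng = random.Random(seed)
    n_mask = max(1, round(len(sequence) * mask_fraction))
    positions = sorted(rng.sample(range(len(sequence)), n_mask))
    tokens = list(sequence)
    targets = {}
    for pos in positions:
        targets[pos] = tokens[pos]
        tokens[pos] = MASK
    return tokens, targets

tokens, targets = mask_sequence("MKTAYIAKQRQISFVKSHFSRQ")
```

During training, the model sees `tokens` and is penalized according to how much probability it assigns to the true residues in `targets`. The same per-position probabilities are what later make zero-shot mutation scoring possible.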

The Evolutionary Scale Modeling (ESM) series represents a landmark in sequence-only PLMs, with ESM-2 demonstrating that scaling model parameters to billions of weights enables accurate structure prediction competitive with specialized tools [6]. These models learn representations that capture remote homology, structural features, and functional sites without explicit structural input, suggesting that evolutionary sequences alone contain rich information about protein folding and function.

Multimodal Integration of Sequence and Structure

While powerful, sequence-only models lack explicit physical constraints, leading to the development of multimodal architectures that jointly process sequence and structural information. AlphaFold pioneered this approach through its Evoformer module—a novel neural network block that jointly embeds multiple sequence alignments (MSAs) and pairwise features in a single integrated representation [7]. The Evoformer operates on two complementary representations: an MSA representation that encodes evolutionary information and a pair representation that captures residue-residue relationships, with specialized attention mechanisms enabling continuous information exchange between them.

Recent work has extended this multimodal approach to mutation effect prediction. ProMEP exemplifies this trend, employing a multimodal deep representation learning model trained on approximately 160 million AlphaFold structures that integrates both sequence and structure contexts using a rotation- and translation-equivariant structure embedding module [1] [8]. This architecture represents protein structures as atomic point clouds, enabling the model to incorporate structural information at atomic resolution while maintaining invariance to 3D rotations and translations.
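Why rigid-motion symmetry matters for point-cloud inputs can be seen in a toy example. The sketch below is not ProMEP's embedding module; it only demonstrates the underlying geometric fact that rotating and translating a set of atom coordinates leaves all pairwise distances unchanged, so a representation built to respect these symmetries does not depend on how the structure happens to be oriented. The coordinates and helper names are made up for illustration.

```python
import math

def rotate_z(point, angle):
    """Rotate a 3D point about the z-axis by `angle` radians."""
    x, y, z = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * y, s * x + c * y, z)

def translate(point, shift):
    """Shift a 3D point by a displacement vector."""
    return tuple(p + d for p, d in zip(point, shift))

def pairwise_distances(cloud):
    """All distances between distinct pairs of points, in a fixed order."""
    return [math.dist(a, b) for i, a in enumerate(cloud) for b in cloud[i + 1:]]

cloud = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.2, 0.3)]  # toy atom positions
moved = [translate(rotate_z(p, 0.7), (4.0, -2.0, 1.0)) for p in cloud]

before = pairwise_distances(cloud)
after = pairwise_distances(moved)
```

Comparing `before` and `after` element by element shows that they agree up to floating-point error, even though every coordinate in `moved` differs from the original.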

Biophysics-Informed Training Approaches

A third paradigm seeks to ground protein representations in established biophysical principles. The Mutational Effect Transfer Learning (METL) framework exemplifies this approach, pretraining transformer networks on synthetic biophysical data generated from molecular simulations before fine-tuning on experimental data [2]. Unlike evolution-based models, METL learns from calculated biophysical attributes including molecular surface areas, solvation energies, van der Waals interactions, and hydrogen bonding patterns, capturing fundamental physical relationships between sequence, structure, and energetics.

METL implements two specialized pretraining strategies: METL-Local, which learns representations targeted to a specific protein of interest, and METL-Global, which captures broader principles across diverse protein folds [2]. This biophysics-aware approach demonstrates particular strength in data-scarce scenarios, enabling accurate prediction from small experimental training sets.

Table 1: Comparison of Core AI Learning Architectures for Protein Language

| Architecture | Training Data | Key Innovations | Representative Models |
|---|---|---|---|
| Sequence-Based PLMs | Evolutionary sequences (UniProt, etc.) | Masked language modeling, self-supervised learning | ESM-2, UniRep, ProtTrans |
| Multimodal Sequence+Structure | Sequences + 3D structures (AlphaFold DB, PDB) | Equivariant networks, joint embedding spaces | AlphaFold, ProMEP, ProstT5 |
| Biophysics-Informed | Molecular simulation data + experimental data | Physical priors, energy-based representations | METL, Rosetta-based models |

Performance Comparison for Zero-Shot Prediction

Mutation Effect Prediction

Zero-shot prediction of mutation effects represents a critical benchmark for protein engineering applications. As shown in Table 2, multimodal approaches consistently outperform sequence-only methods across diverse protein targets, with ProMEP achieving state-of-the-art performance on several benchmarks including the ProteinGym comprehensive assessment [1]. Notably, ProMEP achieves an average Spearman's rank correlation of 0.523 across 1.43 million variants in 53 diverse proteins, matching the performance of AlphaMissense while running 2-3 orders of magnitude faster thanks to its MSA-free architecture [1].

For single proteins, ProMEP demonstrates superior correlation with experimental measurements, achieving a Spearman's correlation of 0.53 on the protein G dataset (containing multiple mutations) compared to 0.47 for the next-best model, AlphaMissense [1]. This advantage for multiple mutations is particularly valuable for protein engineering, where accumulating beneficial mutations often requires evaluating combinatorial sequence spaces.

Table 2: Zero-Shot Mutation Effect Prediction Performance

| Model | Architecture | SUMO UBC9 (Spearman) | Protein G (Spearman) | ProteinGym Average (Spearman) | Speed Relative to AlphaMissense |
|---|---|---|---|---|---|
| ProMEP | Multimodal (sequence+structure) | 0.61 | 0.53 | 0.523 | 100-1000x faster |
| AlphaMissense | MSA-based | 0.58 | 0.47 | 0.523 | 1x (baseline) |
| ESM2_650M | Sequence-only | 0.52 | 0.41 | 0.451 | ~10x faster |
| Tranception | Sequence-only | 0.49 | 0.38 | 0.448 | ~5x faster |
| EVE | MSA-based | 0.55 | 0.44 | 0.478 | ~0.5x slower |

Protein Engineering Applications

The ultimate validation of zero-shot prediction models comes from their successful application to protein engineering challenges. ProMEP has demonstrated remarkable efficacy in guiding the development of high-performance gene-editing tools [1]. When applied to the gene-editing enzyme TnpB, ProMEP identified a 5-site mutant with 74.04% editing efficiency compared to 24.66% for the wild type [1]. For the deaminase TadA, ProMEP guided the development of a 15-site mutant that exhibited an A-to-G conversion frequency of 77.27% compared to 69.80% for the previous state-of-the-art editor ABE8e, with significantly reduced bystander and off-target effects [1] [8].

Biophysics-informed models like METL demonstrate particular strength in data-scarce protein engineering scenarios. When trained on only 64 examples of green fluorescent protein (GFP) variants, METL successfully designed functional variants with enhanced properties, outperforming evolution-based models in this low-data regime [2]. METL also excels at extrapolation tasks—predicting the effects of mutation types, positions, or regimes not represented in the training data—a critical capability for navigating unexplored regions of protein sequence space.
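To make the idea of position extrapolation concrete, here is a minimal sketch of how such a train/test split could be constructed. The variant notation (`A12G` for alanine at position 12 mutated to glycine, comma-separated for multi-site variants), the function names, and the choice to drop mixed variants are assumptions for illustration, not the evaluation code of [2].

```python
def positions_of(variant):
    """Parse a variant such as 'A12G,K45R' into its set of mutated positions."""
    return {int(m[1:-1]) for m in variant.split(",")}

def position_extrapolation_split(variants, held_out_positions):
    """Split variants so that test positions never appear in training.

    Train: variants that touch none of the held-out positions.
    Test: variants whose mutations all fall at held-out positions.
    Variants mixing seen and held-out positions are dropped.
    """
    held = set(held_out_positions)
    train, test = [], []
    for v in variants:
        pos = positions_of(v)
        if pos.isdisjoint(held):
            train.append(v)
        elif pos <= held:
            test.append(v)
    return train, test

variants = ["A12G", "K45R", "A12G,K45R", "L7P", "L7P,A12G"]
train, test = position_extrapolation_split(variants, held_out_positions=[45])
```

A model evaluated on `test` has never seen any mutation at position 45, so good performance there indicates that it has learned something transferable about the protein rather than memorizing per-position effects.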

Experimental Protocols and Methodologies

Training and Validation of Multimodal Models

The protocol for multimodal models like ProMEP has two stages: representation learning followed by zero-shot inference [1]. First, the base model is trained on approximately 160 million AlphaFold structures using a corrupted element completion task, where the model must predict missing elements from partially obscured inputs using both sequence and structure information. This pretraining phase instills general-purpose knowledge of sequence-structure relationships without specific task supervision.

For mutation effect prediction, ProMEP employs a log-likelihood ratio that compares the probabilities of the wild-type and mutated sequences conditioned on both sequence and structure contexts [1]. Specifically, the effect of a mutation is quantified as:

\[ \text{Effect Score} = \log\frac{p(\text{WT sequence} \mid \text{structure})}{p(\text{mutant sequence} \mid \text{structure})} \]

This approach effectively maps the protein fitness landscape by estimating how mutations alter the probability of a sequence given its structural context.
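The scoring rule above can be sketched with toy numbers. This is an illustration under stated assumptions, not ProMEP's implementation: `log_probs` stands in for per-position amino acid log-probabilities from a model already conditioned on structure, and multi-site variants are scored by summing per-position terms, which treats sites as independent. The sign follows the formula as written, so a positive score means the mutant is less probable than the wild type.

```python
import math

def effect_score(log_probs, wild_type, mutant):
    """Sum of log p(WT residue) - log p(mutant residue) over mutated positions.

    log_probs[i] maps each amino acid to its log-probability at position i.
    Only substitutions are supported, so both sequences must be equally long.
    """
    if len(wild_type) != len(mutant):
        raise ValueError("substitution-only scoring needs equal lengths")
    score = 0.0
    for i, (wt, mt) in enumerate(zip(wild_type, mutant)):
        if wt != mt:
            score += log_probs[i][wt] - log_probs[i][mt]
    return score

# Toy two-position protein with made-up probabilities.
toy = [
    {"A": math.log(0.7), "G": math.log(0.2), "V": math.log(0.1)},
    {"A": math.log(0.1), "G": math.log(0.1), "V": math.log(0.8)},
]
single = effect_score(toy, "AV", "GV")  # one substitution
double = effect_score(toy, "AV", "GA")  # two substitutions
```

Here `single` equals log(0.7/0.2) and `double` adds log(0.8/0.1) on top, so the double mutant scores as more disruptive. When ranking candidates for engineering, variants with the lowest scores under this convention would be the ones the model considers most plausible.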

Biophysical Simulation and Fine-Tuning

METL employs a distinct three-stage protocol: synthetic data generation, synthetic data pretraining, and experimental data fine-tuning [2]. In the first stage, molecular modeling with Rosetta generates structures for millions of protein sequence variants, with 55 biophysical attributes extracted from each modeled structure. The transformer encoder is then pretrained to predict these biophysical attributes from sequence alone, learning a biophysically grounded representation. Finally, the model is fine-tuned on experimental sequence-function data to connect biophysical knowledge with empirical observations.

This approach enables strong generalization from small datasets by providing physical priors that constrain the learning problem. METL's performance advantage is most pronounced for training sets of fewer than 100 examples, where data-hungry models like ESM-2 typically struggle without extensive fine-tuning [2].

Visualizing Multimodal Protein Learning

Diagram 1: Multimodal protein learning integrates sequence and structure data through specialized neural architectures (Evoformer, Structure Module) to create unified representations that enable zero-shot prediction of mutation effects and protein fitness landscapes.

Table 3: Key Research Resources for AI-Driven Protein Engineering

| Resource | Type | Primary Function | Access |
|---|---|---|---|
| AlphaFold Protein Structure Database [9] | Database | Provides >200 million predicted protein structures | Publicly available |
| ProteinGym [1] | Benchmark | Standardized assessment of mutation effect prediction | Publicly available |
| ESM-2 Model Series [6] | Protein Language Model | Sequence-based representation learning | Open source |
| ProMEP [1] [8] | Prediction Tool | Zero-shot mutation effect prediction | Research use |
| METL Framework [2] | Modeling Framework | Biophysics-informed protein engineering | Research use |
| Rosetta [2] | Modeling Suite | Molecular simulations for biophysical attribute calculation | Academic licensing |

The integration of massive sequence and structure datasets through sophisticated neural architectures has enabled AI systems to learn the intricate language of proteins with remarkable fluency. Multimodal approaches that jointly represent sequence and structural information have demonstrated superior performance for zero-shot prediction tasks, while biophysics-informed models provide valuable inductive biases for data-scarce protein engineering applications. As these models continue to evolve, they promise to accelerate the design of novel proteins for therapeutic, industrial, and research applications, effectively compressing the timeline from concept to functional protein. The emerging paradigm of AI-guided protein engineering represents a fundamental shift in biological design, leveraging deep representation learning to navigate the vast space of possible protein sequences and identify candidates with enhanced properties.

Efforts to understand and engineer proteins for therapeutic and industrial applications have long been limited by the astronomical number of possible amino acid sequences and the high cost of experimental characterization. Traditional supervised machine learning approaches require extensive labeled data from costly, time-intensive experiments such as deep mutational scans, creating a fundamental bottleneck in protein engineering pipelines. Against this backdrop, zero-shot prediction methods have emerged as a transformative paradigm, capable of forecasting mutation effects without any protein-specific experimental training data. These methods leverage evolutionary principles, biophysical constraints, and patterns learned from massive sequence and structure databases to make predictions for a protein of interest from its sequence and/or structure alone. This capability is reshaping computational protein design, enabling researchers to navigate fitness landscapes and identify functional variants with far less experimental screening.

This guide provides a comprehensive comparison of leading zero-shot prediction methods, detailing their underlying methodologies, performance benchmarks, and practical implementation. By objectively evaluating these tools against standardized experimental datasets, we aim to equip researchers with the knowledge to select appropriate computational strategies for their protein engineering challenges.

Methodologies: How Zero-Shot Predictors Work

Zero-shot methods for mutation effect prediction employ diverse strategies to infer variant fitness. The three major approaches described below differ in their core architecture.

Evolutionary Coupling Models

EVmutation exemplifies the evolutionary coupling approach, which is grounded in statistical analysis of homologous protein sequences [10]. The method employs a Potts model that captures both site-specific conservation biases and pairwise co-evolutionary dependencies between residues. These parameters are inferred from multiple sequence alignments using regularized maximum pseudolikelihood estimation. The effect of a mutation is calculated as the log-odds ratio of the probabilities between mutant and wild-type sequences (ΔE), directly incorporating pairwise epistasis through summation over all coupling terms (Jij). This approach effectively leverages evolutionary experiments performed by nature over millions of years, capturing constraints that maintain protein structure and function.
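The Potts-model score can be written as ΔE = E(mutant) − E(wild type), where E(σ) sums a site term h_i(σ_i) over positions and a coupling term J_ij(σ_i, σ_j) over position pairs. The sketch below evaluates this on a toy three-residue protein. The parameter values are invented for illustration, and in practice h and J would be inferred from a multiple sequence alignment as described above.

```python
from itertools import combinations

def potts_energy(seq, h, J):
    """E(seq) = sum_i h[i][a_i] + sum_{i<j} J[(i, j)][(a_i, a_j)].

    Missing site or coupling entries are treated as zero.
    """
    site = sum(h[i].get(a, 0.0) for i, a in enumerate(seq))
    pair = sum(
        J.get((i, j), {}).get((seq[i], seq[j]), 0.0)
        for i, j in combinations(range(len(seq)), 2)
    )
    return site + pair

def delta_e(wild_type, mutant, h, J):
    """Log-odds of mutant versus wild type; the partition function cancels."""
    return potts_energy(mutant, h, J) - potts_energy(wild_type, h, J)

# Toy parameters: positions 0 and 2 are coupled.
h = [{"A": 1.0, "G": 0.2}, {"L": 0.8, "P": -0.5}, {"K": 0.5, "E": 0.4}]
J = {(0, 2): {("A", "K"): 0.6, ("G", "E"): 0.9}}

a_to_g = delta_e("ALK", "GLK", h, J)  # single mutant at position 0
k_to_e = delta_e("ALK", "ALE", h, J)  # single mutant at position 2
double = delta_e("ALK", "GLE", h, J)  # both mutations together
```

In this toy case each single mutation is penalized (ΔE of about -1.4 and -0.7), yet the double mutant scores about -0.6 rather than their sum of -2.1, because the G/E pair has its own favorable coupling. That non-additivity is the pairwise epistasis the coupling terms are meant to capture.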

Multimodal Deep Learning Architectures

ProMEP represents a more recent multimodal architecture that integrates both sequence and structural information without requiring multiple sequence alignments [1]. The model was trained on approximately 160 million protein structures from the AlphaFold database using a deep representation learning framework. ProMEP processes protein structures as atomic point clouds and employs rotation- and translation-equivariant embedding modules to capture 3D structural contexts. Mutation effects are quantified by comparing the log-likelihoods of wild-type and mutant sequences conditioned on both sequence and structure contexts, enabling rapid zero-shot prediction that is 2-3 orders of magnitude faster than MSA-dependent methods.

Biophysics-Informed Language Models

The METL framework introduces biophysical knowledge into protein language models through pretraining on synthetic data from molecular simulations [2]. Unlike evolution-based models, METL learns fundamental relationships between protein sequence, structure, and energetics by training on 55 biophysical attributes extracted from Rosetta modeling of millions of sequence variants. The model uses a structure-based relative positional embedding that considers 3D distances between residues. METL operates in two specialized configurations: METL-Local focuses on a specific protein of interest, while METL-Global captures broader biophysical principles across diverse protein families. The framework demonstrates exceptional performance in low-data regimes.

Performance Comparison: Quantitative Benchmarks

Zero-shot predictors have been rigorously evaluated against experimental data from deep mutational scanning studies and biochemical measurements. The table below summarizes the performance of major methods across standardized benchmarks.

Table 1: Performance Comparison of Zero-Shot Prediction Methods

| Method | Core Methodology | MSA Dependency | Speed | Spearman Correlation* | Key Advantage |
|---|---|---|---|---|---|
| EVmutation [10] | Evolutionary Potts Model | Required | Moderate | 0.4-0.7 (34 datasets) | Captures pairwise epistasis |
| ProMEP [1] | Multimodal Deep Learning | Not Required | Very Fast | 0.523 (ProteinGym average) | Integrates structure context |
| AlphaMissense [1] | Structure-Based Language Model | Required | Slow | 0.523 (ProteinGym average) | Optimized for pathogenicity |
| ESM-2 [11] | Protein Language Model | Not Required | Fast | Variable by dataset | General sequence representations |
| METL [2] | Biophysics-Informed Transformer | Not Required | Moderate | Strong on small datasets | Excels with limited data |

*Spearman correlation between predicted and experimental variant effects
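Since every benchmark figure in the table is a Spearman rank correlation, it may help to see how one is computed. The sketch below is a dependency-free version that ranks both lists (averaging ranks for ties) and takes the Pearson correlation of the ranks. The example scores are made up, and in practice a library routine such as `scipy.stats.spearmanr` would normally be used instead.

```python
def average_ranks(values):
    """1-based ranks, with tied values sharing the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # average rank for the tied block
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation; assumes neither input is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / (vx * vy) ** 0.5

def spearman(predicted, measured):
    """Spearman's rho: Pearson correlation of the two rank vectors."""
    return pearson(average_ranks(predicted), average_ranks(measured))

predicted = [0.1, 0.4, 0.35, 0.8]  # toy model scores for four variants
measured = [1.0, 3.0, 2.0, 10.0]   # toy assay readouts for the same variants
rho = spearman(predicted, measured)
```

Because only the ordering matters, `rho` is 1.0 here even though the two lists are on very different scales. This is why the metric suits zero-shot scores, which are not calibrated to assay units.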

Performance Across Protein Classes

Each method demonstrates distinct strengths depending on the protein family and experimental assay. EVmutation shows particularly strong correlations (ρ = 0.4-0.7) with fitness measurements from high-throughput experiments where the assayed phenotype is closely linked to essential biological functions, such as enzymatic activity of methyltransferases and β-glucosidases [10]. The performance is influenced by selection pressure, with stronger correlations observed under conditions that reveal the full dynamic range of mutational effects.

ProMEP achieves state-of-the-art performance across diverse protein classes, with a Spearman correlation of 0.53 on the protein G dataset containing multiple mutations, outperforming AlphaMissense (0.47) [1]. The method's structure-aware design enables accurate prediction for proteins where MSAs are unavailable or insufficient, making it particularly valuable for novel protein families with limited homology.

METL demonstrates exceptional capability in challenging protein engineering scenarios, particularly when generalizing from small training sets and performing position extrapolation [2]. In one notable demonstration, METL successfully designed functional green fluorescent protein variants when trained on only 64 examples, highlighting its value for engineering proteins with limited experimental data.

Experimental Validation: Case Studies in Protein Engineering

The true test of zero-shot prediction methods lies in their ability to guide successful protein engineering campaigns. The following experimental workflows illustrate how these tools have been applied to develop improved enzymes and editors.

Gene-Editing Enzyme Engineering

ProMEP was successfully employed to engineer enhanced gene-editing enzymes, including TnpB and TadA [1]. For TnpB, zero-shot predictions identified a 5-site mutant that increased gene-editing efficiency from 24.66% (wild-type) to 74.04% at the RNF2 site 1. For TadA, predictions guided the development of a 15-site mutant that demonstrated an A-to-G conversion frequency of 77.27% (compared to 69.80% for ABE8e) with significantly reduced bystander and off-target effects. These results demonstrate the capacity of zero-shot predictors to navigate complex fitness landscapes and identify combinatorial mutations that substantially improve protein function.

Automated Enzyme Engineering Platform

Recent advances have integrated zero-shot predictors into fully automated protein engineering platforms [11]. This workflow combines ESM-2 and EVmutation to design initial variant libraries, which are then constructed and tested using robotic biofoundries. In one implementation, this platform engineered Arabidopsis thaliana halide methyltransferase (AtHMT) for a 90-fold improvement in substrate preference and 16-fold improvement in ethyltransferase activity within four weeks. Simultaneously, the platform developed a Yersinia mollaretii phytase (YmPhytase) variant with a 26-fold improvement in activity at neutral pH, demonstrating the generalizability of zero-shot prediction across diverse enzyme classes.

Research Reagent Solutions: Essential Tools for Implementation

| Resource | Type | Function | Access |
|---|---|---|---|
| ProteinGym [1] | Benchmark Suite | Standardized assessment of prediction accuracy | Public |
| ESM-2 [11] | Protein Language Model | Sequence-based fitness predictions | Public |
| EVmutation [10] | Python Package | Evolutionary coupling analysis | Public |
| AlphaFold DB [1] | Structure Database | Source of predicted protein structures | Public |
| Rosetta [2] | Modeling Suite | Biophysical attribute calculation | Academic License |
| iBioFAB [11] | Biofoundry | Automated construction and testing | Institutional |

The expanding toolkit of zero-shot mutation effect predictors offers researchers powerful options for navigating protein sequence space without experimental training data. EVmutation remains valuable for proteins with deep multiple sequence alignments, providing robust incorporation of epistatic constraints. ProMEP offers speed and accuracy advantages for structure-aware predictions, particularly when MSAs are limited. METL demonstrates exceptional performance in data-scarce scenarios by leveraging biophysical simulations. As these methods continue to evolve, their integration into automated engineering platforms represents the frontier of computational protein design, promising to accelerate the development of novel enzymes, therapeutics, and biomaterials.

The field of computational protein engineering is undergoing a profound transformation, moving from traditional methods reliant on evolutionary information to sophisticated multimodal approaches that integrate diverse data types. This evolution is particularly crucial for zero-shot prediction success—the ability to accurately forecast the functional impact of protein variants without task-specific experimental data. Early methods depended heavily on Multiple Sequence Alignments (MSAs) to extract co-evolutionary signals, but these approaches faced limitations with orphan proteins and indels. The advent of MSA-free language models and subsequent multimodal architectures that jointly reason over sequence, structure, and function has significantly expanded the scope and accuracy of protein engineering. This guide objectively compares these methodological paradigms, providing experimental data and protocols to illustrate their relative performance in advancing zero-shot prediction capabilities.

Methodological Foundations and Comparative Frameworks

MSA-Based Approaches: The Evolutionary Foundation

MSA-based methods infer evolutionary constraints by analyzing aligned homologous sequences, providing powerful signals for structure prediction and variant effect scoring.

- Core Mechanism: These methods construct a matrix of aligned homologous sequences where each column represents an evolutionarily related residue position. Key implementations include DeepMSA2, which performs iterative alignment searches against massive metagenomic databases (over 40 billion sequences) to build balanced, diverse MSAs [12].

- Strengths and Limitations: MSA-based approaches excel at identifying co-evolutionary patterns and conserved functional residues. However, they struggle with orphan sequences lacking sufficient homologs and face computational bottlenecks when processing massive sequence databases [12] [13].
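
As a minimal sketch of how conserved positions can be read off an alignment matrix, the snippet below computes the Shannon entropy of each column of a toy MSA. The function name `column_entropies`, the toy sequences, and the choice to ignore gap characters are illustrative assumptions; nothing here is taken from DeepMSA2 or any other cited pipeline.

```python
import math
from collections import Counter


def column_entropies(msa):
    """Return the Shannon entropy (in bits) of each alignment column.

    Gap characters ('-') are ignored, which is an assumption of this sketch.
    """
    length = len(msa[0])
    if any(len(row) != length for row in msa):
        raise ValueError("all aligned sequences must have the same length")

    entropies = []
    for col in range(length):
        residues = [row[col] for row in msa if row[col] != "-"]
        counts = Counter(residues)
        total = sum(counts.values())
        h = 0.0
        for n in counts.values():
            p = n / total
            h -= p * math.log2(p)
        entropies.append(h)
    return entropies


# Toy alignment (hypothetical sequences): four homologs, four columns.
toy_msa = [
    "MKTA",
    "MKSA",
    "MRTA",
    "MK-A",
]
entropies = column_entropies(toy_msa)
for position, h in enumerate(entropies, start=1):
    print(f"column {position}: {h:.3f} bits")
```

Columns with entropy near zero are fully conserved in this toy alignment. Evolutionary methods build on this kind of per-column signal and typically add sequence reweighting and pairwise couplings on top of it.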

MSA-Free and Multimodal Approaches: The Next Generation

To overcome MSA limitations, researchers developed protein language models (PLMs) and multimodal frameworks that learn directly from individual sequences and structures.

- Protein Language Models (PLMs): Single-sequence models like ESM-2 learn contextualized residue representations by training on millions of protein sequences, enabling fitness predictions without explicit evolutionary data [11].

- Multimodal Integration: Modern frameworks such as ABACUS-T and PoET-2 unify sequence, structure, and evolutionary information within a single model. PoET-2 employs a retrieval-augmented architecture with hierarchical attention that is equivariant to context ordering, enabling in-context learning from homologs without retraining [14] [15].

- Joint Sequence-Structure Generation: Models like JointDiff represent each residue with three modalities (type, position, orientation) and use dedicated diffusion processes for each, coupled through a shared graph attention encoder to enable true co-design [16].

Table 1: Core Methodological Comparison of Protein Engineering Paradigms

| Approach | Key Examples | Core Input Data | Zero-Shot Prediction Capabilities | Key Limitations |
|---|---|---|---|---|
| MSA-Based | DeepMSA2, MSA Transformer | Multiple Sequence Alignments | Strong for single substitutions, conserved residues | Poor for orphan proteins, indels; computationally intensive [12] [13] |
| MSA-Free PLMs | ESM-2, ProtT5 | Single Protein Sequences | Good for single substitutions, general sequence fitness | Struggles with epistatic mutations; limited structural awareness [11] [15] |
| Multimodal | ABACUS-T, PoET-2, JointDiff | Sequence, Structure, (optional MSA) | Multiple mutations, indels, functional activity | Increased model complexity; requires diverse training data [14] [16] [15] |

Experimental Comparisons and Performance Benchmarks

Quantitative Performance Metrics Across Paradigms

Rigorous benchmarking reveals how each methodological paradigm performs on key protein engineering tasks. The following table synthesizes experimental results from recent large-scale studies and meta-analyses.

Table 2: Experimental Performance Benchmarks Across Protein Engineering Methods

| Method/Model | Variant Type | Performance Metric | Result | Experimental Context |
|---|---|---|---|---|
| MSA-Based (DeepMSA2) | Monomer Structure | TM-score (CASP13-15 FM targets) | 0.821 (5% increase over AlphaFold2) | Tertiary structure prediction [12] |
| MSA-Free (ESM-2) | Single Substitutions | Variant Effect Prediction | State-of-the-art (pre-2023) | Zero-shot fitness prediction [11] |
| Multimodal (PoET-2) | Indels & Multiple Mutations | Zero-shot Prediction Accuracy | 20% improvement over previous methods | Deep mutational scanning & clinical variants [15] |
| Multimodal (ABACUS-T) | Dozens of Simultaneous Mutations | Experimental Success Rate | High-activity, stabilized variants (ΔTm ≥10°C) | Enzyme redesign (Xylanase, β-lactamase) [14] |
| De Novo Binder Design (Traditional) | Binder Interface | Experimental Success Rate | ~1% (historically low) | Functional protein binders [17] |
| De Novo Binder Design (AF3 ipSAE_min) | Binder Interface | Average precision of success prediction | 1.4x average precision increase vs. ipAE | Functional protein binders [17] |

Key Experimental Protocols and Methodologies

To contextualize these performance benchmarks, below are detailed protocols for critical experiments cited in this comparison.

ABACUS-T Enzyme Redesign Protocol: Researchers applied this multimodal inverse folding model to redesign allose-binding protein, endo-1,4-β-xylanase, and TEM β-lactamase. The process involved: (1) Inputting backbone structures with optional ligand atomic structures, multiple conformational states, and/or MSAs; (2) Generating sequences using denoising diffusion conditioned on structural and evolutionary inputs; (3) Expressing and purifying a small number of designed variants (typically <10); (4) Characterizing function through activity assays and stability through thermal shift assays (ΔTm) [14].

De Novo Binder Design Meta-Analysis Protocol: Overath et al. (2025) established a standardized evaluation pipeline: (1) Compiling 3,766 designed binders tested against 15 targets; (2) Repredicting all binder-target complexes with AlphaFold2, AlphaFold3, and Boltz1; (3) Extracting over 200 structural and energetic features; (4) Identifying optimal success predictors through rigorous statistical analysis, finding AF3-derived ipSAE_min as the strongest single metric [17].

PoET-2 Zero-Shot Prediction Protocol: For variant effect prediction, PoET-2 employs: (1) Retrieval of relevant homologs as context; (2) Optional structure conditioning from partially-observed backbones; (3) Calculation of sequence likelihoods using its autoregressive decoder; (4) Scoring variants based on log-likelihood differences, effectively handling indels and multiple mutations unlike MLM-based approaches [15].
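
The likelihood-difference scoring in step (4) can be illustrated with a deliberately tiny autoregressive model. The sketch below fits a smoothed bigram model to a few context sequences and scores a substitution, an insertion, and a deletion with the same function, since an autoregressive likelihood is defined for sequences of any length. The bigram model, the names `fit_bigram`, `log_likelihood`, and `variant_score`, and the toy sequences are all assumptions made for illustration; this is not PoET-2's architecture or API.

```python
import math
from collections import defaultdict

ALPHABET = "ACDEFGHIKLMNPQRSTVWY"
START, END = "^", "$"


def fit_bigram(context, pseudocount=1.0):
    """Estimate log P(next | previous) from context sequences (add-k smoothing)."""
    counts = defaultdict(lambda: defaultdict(float))
    for seq in context:
        tokens = [START, *seq, END]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1.0
    vocab_size = len(ALPHABET) + 1  # 20 amino acids plus the END token

    def log_prob(prev, nxt):
        total = sum(counts[prev].values()) + pseudocount * vocab_size
        return math.log((counts[prev][nxt] + pseudocount) / total)

    return log_prob


def log_likelihood(seq, log_prob):
    """Left-to-right log-likelihood of a whole sequence, including END."""
    tokens = [START, *seq, END]
    return sum(log_prob(p, n) for p, n in zip(tokens, tokens[1:]))


def variant_score(variant, wild_type, log_prob):
    """Log-likelihood difference between a variant and the wild type."""
    return log_likelihood(variant, log_prob) - log_likelihood(wild_type, log_prob)


# Hypothetical homolog context and wild type.
context = ["MKTAY", "MKSAY", "MKTAF"]
wild_type = "MKTAY"
lp = fit_bigram(context)

substitution = variant_score("MKWAY", wild_type, lp)   # T3W
insertion = variant_score("MKTTAY", wild_type, lp)     # extra T after position 3
deletion = variant_score("MKAY", wild_type, lp)        # T3 removed
print(substitution, insertion, deletion)
```

In this toy model raw log-likelihoods tend to favor shorter sequences, so the deletion can score above zero. How production models treat such length effects is outside the scope of this sketch.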

The experimental advances across these paradigms rely on specialized computational tools and biological resources. The following toolkit summarizes key solutions mentioned in the cited research.

Table 3: Essential Research Reagent Solutions for Protein Engineering

| Tool/Resource | Type | Primary Function | Relevance to Zero-Shot Prediction |
|---|---|---|---|
| DeepMSA2 [12] | Software Pipeline | Constructs deep, diverse MSAs from genomic databases | Improves co-evolutionary signal input for structure prediction |
| ESM-2 [11] | Protein Language Model | Learns contextual sequence representations from single sequences | Enables MSA-free fitness prediction and variant scoring |
| AlphaFold3 (AF3) [17] | Structure Prediction | Predicts protein structures and complexes | Generates ipSAE_min metric for binder interface quality assessment |
| ZINC Database [18] | Compound Library | Provides drug-like molecules for training generative models | Serves as pre-training data for targeted molecular generation models |
| iBioFAB [11] | Biofoundry Platform | Automates protein engineering DBTL cycles | Enables high-throughput experimental validation of computational predictions |
| TaraDB/MetaSourceDB [12] | Metagenomic Database | Provides diverse environmental sequences for MSA construction | Enhances MSA depth and diversity for improved structure prediction |

Visualizing Methodological Evolution and Workflows

The progression from MSA-based to multimodal approaches represents a fundamental shift in computational protein engineering. The following diagram illustrates this evolutionary pathway and the growing integration of data modalities.

Evolution of Computational Protein Engineering Methods - This diagram maps the progression from MSA-dependent models to modern multimodal approaches, highlighting their respective inputs, strengths, and limitations.

The experimental workflow for modern multimodal protein engineering integrates computational design with high-throughput validation, creating an efficient design-build-test-learn cycle. The following diagram illustrates this integrated pipeline.

Integrated Multimodal Protein Engineering Workflow - This diagram illustrates the autonomous Design-Build-Test-Learn (DBTL) cycle enabled by modern multimodal AI platforms, showing how computational design interfaces with automated experimental validation.

The evolution from MSA-based to MSA-free and multimodal approaches represents a fundamental maturation of computational protein engineering into a more predictive, data-driven discipline. For zero-shot prediction success, multimodal models like ABACUS-T and PoET-2 demonstrate remarkable capabilities, achieving high experimental success rates with only a few tested sequences—each containing dozens of simultaneous mutations [14] [15]. The integration of structural information with evolutionary context enables these models to preserve functional dynamics while enhancing stability, addressing a critical limitation of earlier inverse folding methods.

Future advancements will likely focus on several key areas: (1) Improved efficiency through smaller, more specialized models rather than indiscriminate parameter scaling [15]; (2) Enhanced experimental integration, as demonstrated by autonomous platforms that close the DBTL cycle with minimal human intervention [11]; and (3) Development of more sophisticated success metrics, such as interface-focused scores that better predict functional binding [17]. As these trends continue, multimodal approaches are poised to dramatically accelerate the design of functional proteins for therapeutic and industrial applications, making protein engineering increasingly predictive and accessible.

Inside Leading Zero-Shot Models: Architectures and Breakthrough Applications

Accurately predicting the effects of mutations on protein function without resource-intensive experimental data, a task known as zero-shot prediction, remains a fundamental challenge in biotechnology and biomedicine. Traditional computational methods have typically relied on multiple sequence alignments (MSAs) to infer evolutionary constraints, but building MSAs is slow and the approach fails for proteins with few known homologs. The emerging paradigm in protein engineering research focuses on unsupervised computational models that can navigate the vast fitness landscape of possible protein variants and identify beneficial mutations with minimal experimental burden. Within this context, the Protein Mutational Effect Predictor (ProMEP) is a multimodal deep learning framework that integrates both sequence and 3D structural contexts from approximately 160 million proteins in the AlphaFold database, enabling zero-shot prediction of mutation effects and showing significant potential for guiding protein engineering.

ProMEP Architectural Framework: A Multimodal Deep Learning Approach

Core Architecture and Training Methodology

ProMEP employs a sophisticated multimodal deep representation learning model comprising approximately 659.3 million parameters that comprehensively learns from both protein sequences and structures. The model was trained using a self-supervised objective to complete missing elements from corrupted inputs leveraging both sequence and structure information, allowing it to develop rich representations of protein function without requiring labeled data. A key innovation in ProMEP's architecture is its representation of protein structures as point clouds, which enables the incorporation of structural context at atomic resolution rather than relying on simplified representations. This approach preserves critical spatial relationships between atoms that determine protein functionality.

The framework incorporates a rotation- and translation-equivariant structure embedding module specifically designed to capture structural context that remains invariant to three-dimensional translations and rotations. This geometric invariance is crucial for robust protein representation, as biological function is independent of a protein's absolute orientation in space. Through this architectural design, ProMEP learns semantically rich representations that approximate protein functions, achieving state-of-the-art performance across multiple benchmarks including Enzyme Commission number prediction, gene ontology term annotation, and protein-protein interaction prediction.
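
One simple way to see why orientation-independent features are attainable is that pairwise distances between atoms are unchanged by rigid rotations and translations. The sketch below checks this on a toy point cloud. It illustrates the invariance property only and is not ProMEP's structure embedding module; the function names and coordinates are hypothetical.

```python
import math


def pairwise_distances(points):
    """Matrix of Euclidean distances between all pairs of 3D points."""
    return [[math.dist(p, q) for q in points] for p in points]


def rotate_z_then_translate(points, angle, shift):
    """Rotate points about the z-axis by `angle` (radians), then translate."""
    c, s = math.cos(angle), math.sin(angle)
    moved = []
    for x, y, z in points:
        moved.append((
            c * x - s * y + shift[0],
            s * x + c * y + shift[1],
            z + shift[2],
        ))
    return moved


# Toy "point cloud" of four atoms (made-up coordinates).
cloud = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.2, 0.3), (0.2, 2.0, -1.0)]
moved_cloud = rotate_z_then_translate(cloud, angle=0.7, shift=(10.0, -4.0, 2.5))

before = pairwise_distances(cloud)
after = pairwise_distances(moved_cloud)
max_change = max(
    abs(a - b) for row_a, row_b in zip(before, after) for a, b in zip(row_a, row_b)
)
print(f"largest change in any pairwise distance: {max_change:.2e}")
```

Any feature computed only from such distances inherits the same invariance, whereas raw coordinates change with every rigid motion.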

Zero-Shot Mutation Effect Prediction Mechanism

ProMEP predicts mutation effects using a log-ratio heuristic that compares the probabilities of wild-type and mutated amino acids conditioned on both sequence and structure contexts. While previous methods calculated this score using only sequence information, ProMEP's multimodal architecture enables it to quantify log-likelihoods of protein variants with combined sequence and structure contexts, providing a more comprehensive assessment of mutational impact. By comparing probabilities of the wild-type sequence and mutant sequences, ProMEP can accurately map protein fitness landscapes and identify beneficial single or multiple mutants for protein engineering applications.
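
A minimal sketch of the log-ratio idea, assuming a model exposes per-position amino-acid probabilities conditioned on its context. The probability table below is made up for illustration, the name `log_ratio_score` is hypothetical, and summing per-site log-ratios for multi-site variants is a common simplifying assumption rather than a confirmed detail of ProMEP.

```python
import math


def log_ratio_score(probs, wild_type, mutations):
    """Sum of log p(mutant residue) - log p(wild-type residue) over mutated sites.

    probs[i] maps amino acid -> model probability at 0-based position i.
    mutations is a list of (position, new_residue) pairs.
    """
    score = 0.0
    for pos, new_aa in mutations:
        wt_aa = wild_type[pos]
        score += math.log(probs[pos][new_aa]) - math.log(probs[pos][wt_aa])
    return score


# Hypothetical model output for a three-residue protein.
wild_type = "MKT"
probs = [
    {"M": 0.9, "L": 0.1},
    {"K": 0.5, "R": 0.4, "E": 0.1},
    {"T": 0.3, "S": 0.6, "A": 0.1},
]

t3s = log_ratio_score(probs, wild_type, [(2, "S")])              # favored by the model
k2e = log_ratio_score(probs, wild_type, [(1, "E")])              # disfavored
double = log_ratio_score(probs, wild_type, [(1, "E"), (2, "S")])  # additive combination
print(t3s, k2e, double)
```

A positive score means the model considers the mutant residue more plausible than the wild-type residue in that context; a negative score means the opposite.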

Comparative Performance Analysis

Benchmarking Against State-of-the-Art Methods

ProMEP's performance has been rigorously evaluated against leading computational methods for mutation effect prediction across diverse proteins and experimental assays. In assessments against three representative proteins with experimental measurements of variant effects—the SUMO-conjugating enzyme UBC9, RPL40A, and immunoglobulin G-binding protein G—ProMEP demonstrated superior correlation with experimental measurements compared to both MSA-based and MSA-free methods. Notably, for the protein G dataset containing multiple mutations, ProMEP achieved a Spearman's rank correlation of 0.53, outperforming the next-best model, AlphaMissense, which reached 0.47.

The generalization capability of ProMEP was further validated against the comprehensive ProteinGym benchmark, which contains 1.43 million variants across 53 proteins from prokaryotes, humans, and other eukaryotes. These proteins vary considerably in length and participate in diverse biological processes. On this benchmark, ProMEP achieved an average Spearman's rank correlation of 0.523, on par with AlphaMissense, while running two to three orders of magnitude faster because it does not need to build MSAs.

Table 1: Performance Comparison on ProteinGym Benchmark

| Model | Approach | MSA Dependence | Avg. Spearman (ρ) | Speed |
|---|---|---|---|---|
| ProMEP | Multimodal (Sequence + Structure) | MSA-free | 0.523 | ~1000x faster |
| AlphaMissense | Structure + MSA | MSA-dependent | 0.523 | Baseline |
| SaProt | Sequence + Structure | MSA-free | 0.457 | Fast |
| TranceptEVE | Sequence + MSA | MSA-dependent | 0.456 | Slow |
| GEMME | Evolutionary | MSA-dependent | 0.455 | Slow |
| ESM2_3B | Sequence-only | MSA-free | 0.434 | Fast |
| ESM1v | Sequence-only | MSA-free | 0.406 | Fast |

Performance Across Protein Functional Properties

Different mutation effect predictors often exhibit varying performance across specific protein properties. Structure-aware models typically perform better for binding and stability predictions, while evolution-aware models tend to excel in activity prediction. ProMEP's integrated multimodal approach enables consistently strong performance across diverse functional properties, as demonstrated in comparative analyses with specialized methods.

Table 2: Performance by Protein Property (Spearman's ρ)

| Model | Activity | Binding | Stability | Expression |
|---|---|---|---|---|
| ProMEP | 0.499 | 0.454 | 0.649 | 0.533 |
| SaProt | 0.458 | 0.378 | 0.592 | 0.488 |
| TranceptEVE | 0.487 | 0.376 | 0.500 | 0.457 |
| GEMME | 0.482 | 0.383 | 0.519 | 0.438 |
| EVE | 0.464 | 0.386 | 0.491 | 0.408 |

Experimental Validation and Protein Engineering Applications

Experimental Protocols for Validation Studies

The practical utility of ProMEP was validated through rigorous experimental studies focusing on engineering improved gene-editing enzymes. For TnpB gene-editing engineering, researchers used ProMEP to predict beneficial mutations, then experimentally tested the top-ranked variants. The editing efficiency was quantified using deep sequencing-based methods that compared the frequency of desired edits in target genomic loci between wild-type and mutant TnpB variants. Similarly, for TadA engineering, the A-to-G conversion frequency was measured using high-throughput sequencing assays at multiple genomic sites, with bystander and off-target effects assessed through whole-genome sequencing and specialized off-target detection methods.

The experimental workflow typically followed these standardized steps:

- In silico mutagenesis - ProMEP scored all possible single and multiple mutations

- Variant prioritization - Top-ranking mutants were selected based on predicted fitness

- Plasmid construction - Selected variants were synthesized and cloned into expression vectors

- Cell transfection - Relevant cell lines were transfected with mutant constructs

- Functional assessment - Editing efficiency and specificity were quantified using sequencing-based methods

- Comparative analysis - Performance of ProMEP-designed variants was compared to wild-type and previous engineered versions
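
The first two steps of this workflow (in silico mutagenesis and variant prioritization) can be sketched as follows. The scoring function is a stand-in: `toy_score` simply counts hydrophobic residues and is a hypothetical placeholder for a real predictor such as ProMEP, whose actual interface is not described in the source. The helper names and the three-residue sequence are likewise illustrative.

```python
import heapq

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def single_mutants(wild_type):
    """Yield (label, sequence) for every single substitution, e.g. 'K2R' (1-based)."""
    for i, wt_aa in enumerate(wild_type):
        for aa in AMINO_ACIDS:
            if aa != wt_aa:
                yield f"{wt_aa}{i + 1}{aa}", wild_type[:i] + aa + wild_type[i + 1:]


def prioritize(wild_type, score_fn, top_k):
    """Score all single mutants and return the top_k as (score, label) pairs."""
    scored = ((score_fn(seq), label) for label, seq in single_mutants(wild_type))
    return heapq.nlargest(top_k, scored)


def toy_score(seq):
    """Placeholder predictor (assumption): number of hydrophobic residues."""
    return sum(seq.count(aa) for aa in "AILMFVW")


library = list(single_mutants("MKT"))
top = prioritize("MKT", toy_score, top_k=3)
print(len(library), "single mutants enumerated")
for score, label in top:
    print(label, score)
```

Exhaustive enumeration is practical for single substitutions (19 per position), but the number of combinations grows quickly for multi-site variants, so this sketch would need a search strategy before it could be extended to them.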

Gene-Editing Enzyme Engineering Results

ProMEP demonstrated remarkable success in guiding the development of enhanced gene-editing tools. For the TnpB gene-editing enzyme, a 5-site mutant designed using ProMEP achieved an editing efficiency of 74.04% at RNF2 site 1, compared with only 24.66% for the wild-type enzyme. This is a roughly three-fold improvement (74.04 / 24.66 ≈ 3.0) and highlights ProMEP's ability to identify combinations of mutations that collectively enhance protein function.

For the TadA base editor, ProMEP guided the development of a 15-site mutant that exhibited an A-to-G conversion frequency of 77.27% at the HEK site 7 A6, compared to 69.80% for ABE8e, a previous state-of-the-art TadA-based adenine base editor. Crucially, the ProMEP-designed variant also demonstrated significantly reduced bystander and off-target effects compared to ABE8e, addressing a critical limitation in base editing technology. These experimental validations confirm that ProMEP not only predicts mutational effects accurately but also enables practical protein engineering with real-world applications in biotechnology and therapeutics.
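
The reported efficiencies can be turned into fold changes and percentage-point gains with a few lines of arithmetic. The sketch below uses only the four percentages quoted above; the variable names are illustrative.

```python
# Reported editing efficiencies (percent), as quoted in the text above.
tnpb_wild_type, tnpb_mutant = 24.66, 74.04
tada_abe8e, tada_mutant = 69.80, 77.27

tnpb_fold = tnpb_mutant / tnpb_wild_type      # relative improvement
tnpb_gain_pp = tnpb_mutant - tnpb_wild_type   # absolute gain, percentage points
tada_fold = tada_mutant / tada_abe8e
tada_gain_pp = tada_mutant - tada_abe8e

print(f"TnpB: {tnpb_fold:.2f}-fold, +{tnpb_gain_pp:.2f} percentage points")
print(f"TadA: {tada_fold:.2f}-fold, +{tada_gain_pp:.2f} percentage points")
```

Reporting both quantities is useful because the TadA gain is small in relative terms when the baseline (ABE8e) is already efficient, while the TnpB gain is large on both scales.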

Table 3: Key Research Reagents and Computational Resources

| Resource | Type | Function in Research | Application in ProMEP Context |
|---|---|---|---|
| AlphaFold Database | Structural Database | Provides predicted structures for ~160 million proteins | Source of protein structure data for training ProMEP's multimodal model |
| ProteinGym Benchmark | Evaluation Framework | Standardized assessment of mutation effect predictors | Benchmarking ProMEP against alternative methods |
| Deep Mutational Scanning (DMS) | Experimental Method | High-throughput measurement of variant effects | Generating ground truth data for model training and validation |
| ColabFold | Computational Tool | Rapid protein structure prediction using MMseqs2 | Generating structural data for proteins without known structures |
| ESM Language Models | Computational Resource | Protein sequence representation learning | Baseline comparison for sequence-only approaches |
| VenusMutHub | Benchmark Database | Curated small-scale experimental mutation data | Additional validation beyond high-throughput DMS data |

ProMEP represents a significant advancement in zero-shot prediction of protein mutation effects by successfully integrating sequence and 3D structural contexts through multimodal deep learning. Its MSA-free architecture enables rapid exploration of protein fitness landscapes while maintaining state-of-the-art prediction accuracy comparable to or exceeding the best MSA-dependent methods. Experimental validations demonstrating substantial improvements in gene-editing enzymes highlight ProMEP's practical utility in guiding protein engineering campaigns with reduced experimental burden. As protein engineering continues to play an increasingly crucial role in therapeutics, industrial biotechnology, and basic research, multimodal approaches like ProMEP that leverage complementary data modalities will be essential for navigating the vast unexplored regions of protein sequence space and unlocking novel functionalities.

The ability to make accurate zero-shot predictions about protein fitness represents a transformative goal in computational biology, enabling the interpretation of genetic variants and the design of novel proteins without costly, time-consuming experimental screens. Protein language models (PLMs) trained on evolutionary sequences have emerged as powerful tools for this task. However, a significant limitation of many general-purpose PLMs is their inability to be precisely directed toward specific protein families of interest without requiring retraining on family-specific multiple sequence alignments (MSAs). The Protein Evolutionary Transformer (PoET) addresses this fundamental challenge through its innovative use of evolutionary prompts, positioning it as a breakthrough for family-specific protein prediction and design within the zero-shot paradigm [19] [20] [21].

PoET functions as a retrieval-augmented generative model that treats entire protein families as "sequences-of-sequences." Unlike standard models that process single sequences, PoET conditions its predictions on a prompt—a set of related sequences that capture the evolutionary landscape and co-evolutionary patterns of the protein family of interest. This architecture allows it to extrapolate from short context lengths and generalize effectively even for small protein families with limited homologous sequences, overcoming a critical constraint of earlier methods [20] [21].

PoET's Architectural Innovation: A Technical Examination

The Core Mechanism: Evolutionary Prompts as Context

At the heart of PoET's innovation is its unique approach to contextualization. The model is an autoregressive generative model trained across tens of millions of clusters of natural protein sequences. Its architecture features a specialized Transformer layer that processes information on two levels: it models tokens (amino acids) sequentially within individual protein sequences while simultaneously attending between sequences in an order-invariant manner. This dual attention mechanism allows PoET to scale to context lengths beyond those encountered during training, enabling it to handle diverse protein families flexibly [21].
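
The order-invariance property can be illustrated with plain dot-product attention: when no positional term is attached to the context items, shuffling them leaves the attended output unchanged. The sketch below is a generic single-query attention written for illustration; it is not PoET's Transformer layer, and the function name `attend` and the toy vectors are assumptions.

```python
import math


def attend(query, keys, values):
    """Single-query scaled dot-product attention with no positional term."""
    scale = math.sqrt(len(query))
    logits = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    top = max(logits)                      # subtract the max for numerical stability
    weights = [math.exp(l - top) for l in logits]
    norm = sum(weights)
    dim = len(values[0])
    return [
        sum(w * v[d] for w, v in zip(weights, values)) / norm
        for d in range(dim)
    ]


# Toy embeddings standing in for three context sequences (made-up numbers).
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
values = [[2.0, 0.0], [0.0, 3.0], [1.0, 1.0]]
query = [0.2, 0.9]

original = attend(query, keys, values)

# Present the same context in a different order.
order = [2, 0, 1]
shuffled = attend(query, [keys[i] for i in order], [values[i] for i in order])
print(original, shuffled)
```

Within each sequence, by contrast, residue order matters, which is why the text describes two levels of processing: sequential within a sequence and order-invariant across sequences.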

The "evolutionary prompt" serves as a conditioning set, providing the model with crucial information about the specific fitness landscape and evolutionary constraints of the target family. This prompt can be curated by users or automatically generated via multiple sequence alignment. By leveraging this context, PoET calculates the likelihood of observing any given sequence based on the inferred evolutionary process, enabling both scoring of existing variants and generation of novel sequences with desired properties [19].

Comparative Advantage Over Traditional Approaches

Traditional protein language models face a significant trade-off: they are either difficult to steer toward specific protein families, or they must be trained on large MSAs from the family of interest, preventing transfer learning across families. PoET's retrieval-augmented approach resolves this dilemma by allowing conditioning on sequences from any protein family without requiring retraining. This also enables PoET to incorporate new sequence information dynamically and work with any sequence database [19] [21].

Furthermore, as a fully autoregressive model, PoET can generate and score novel indels (insertions and deletions) in addition to single-site substitutions, overcoming limitations posed by alignment errors, long insertions, and gappy regions in traditional MSAs [19].

Performance Benchmarking: PoET Versus State-of-the-Art Models

Quantitative Performance on Deep Mutational Scanning Data

In comprehensive evaluations on deep mutational scanning (DMS) datasets, PoET has demonstrated superior performance for variant function prediction across proteins with varying MSA depths. The model's performance highlights its effectiveness across different evolutionary contexts [21].

Table 1: Performance Comparison of Protein Fitness Prediction Models

| Model | Model Type | Key Innovation | MSA Dependence | Indel Handling | Family-Specific Adaptation |
|---|---|---|---|---|---|
| PoET | Retrieval-augmented autoregressive transformer | Evolutionary prompts as context | Low (uses prompts but doesn't require deep MSAs) | Full generation and scoring | Excellent via prompting |
| ProGen [22] | Conditional transformer | Control tags for protein properties | Moderate (benefits from fine-tuning on family data) | Limited primarily to substitutions | Requires fine-tuning |
| ESM-2 [2] | General protein language model | Scale (billions of parameters) | Low (trained on UniRef) | Limited primarily to substitutions | Limited zero-shot capability |
| METL [2] | Biophysics-informed transformer | Pretraining on molecular simulations | Low | Limited primarily to substitutions | Good for stability prediction |

Table 2: Experimental Performance on DMS Substitution Assays (Spearman Correlation)

| Model | Proteins with Deep MSAs | Proteins with Shallow MSAs | Overall Average | Stability Prediction | Binding Affinity |
|---|---|---|---|---|---|
| PoET [21] | 0.72 | 0.68 | 0.70 | 0.75 | 0.66 |
| ESM-1b [3] | 0.65 | 0.58 | 0.62 | 0.68 | 0.59 |
| TranceptEVE [3] | 0.74 | 0.61 | 0.68 | 0.76 | 0.65 |
| MSA Transformer [21] | 0.71 | 0.52 | 0.62 | 0.72 | 0.58 |

Performance in Challenging Regimes

PoET exhibits particular strength in scenarios with limited evolutionary data, where traditional MSA-dependent methods struggle. The model's ability to extrapolate from short context lengths allows it to make accurate predictions for protein families with few homologous sequences, addressing a critical need in protein engineering for undercharacterized families [21].

For structure-based prediction challenges, particularly in proteins with intrinsically disordered regions (IDRs), both sequence-based and structure-based models can show degraded performance. PoET's evolutionary prompt approach provides an advantage in these cases by focusing on evolutionary constraints rather than relying solely on structural features, which may be misleading for disordered regions [3].

Experimental Protocols for Model Evaluation

Standardized Benchmarking Framework

The evaluation of PoET and comparable models typically follows rigorous benchmarking protocols on established datasets:

Dataset Curation: Models are tested on Deep Mutational Scanning (DMS) assays from resources like ProteinGym, which contains quantitative fitness measurements for thousands of protein variants across diverse proteins and function types (activity, binding, expression, organismal fitness, and stability) [3]. The benchmark includes 217 DMS substitution assays with carefully partitioned training/validation/test splits to ensure fair comparison.

Evaluation Metrics: The primary metric for assessment is Spearman's rank correlation coefficient between model-predicted scores and experimentally measured fitness values. This non-parametric measure evaluates how well models rank variants by functional fitness without assuming linear relationships [3].

Baseline Models: Performance is compared against several categories of baseline methods: (1) Evolutionary scale models (ESM-1b, ESM-2); (2) MSA-based methods (MSA Transformer, EVE); (3) Structure-based models (ESM-IF1, ProteinMPNN); and (4) Hybrid approaches (TranceptEVE) [3].
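As a concrete illustration of the evaluation metric described above, the following minimal sketch computes Spearman's rank correlation as the Pearson correlation of tie-averaged ranks, using only the Python standard library. The function names and the toy scores are assumptions made for illustration; they are not taken from ProteinGym or from any model's codebase.

```python
import math
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Return 1-based ranks; tied values share the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)


def spearman(predicted: Sequence[float], measured: Sequence[float]) -> float:
    """Spearman correlation: Pearson correlation computed on the ranks."""
    if len(predicted) != len(measured):
        raise ValueError("inputs must have the same length")
    return pearson(average_ranks(predicted), average_ranks(measured))


# Toy example (made-up numbers): model scores vs. measured fitness for 4 variants.
model_scores = [-2.1, -0.3, -5.0, 0.4]
dms_fitness = [0.35, 0.80, 0.05, 0.95]
print(spearman(model_scores, dms_fitness))  # 1.0: the two rankings agree exactly
```

Because only the ranks enter the calculation, the model scores (for example, log-likelihood ratios) and the assay readout do not need to share a scale.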

Assessing Zero-Shot Generalization

For zero-shot evaluation, models are assessed without any fine-tuning on the target protein family. Predictions are generated based solely on the model's pre-trained knowledge (for general PLMs) or combined with evolutionary context (for PoET). The critical test involves evaluating performance across:

- MSA depth stratification: Separating proteins by how many homologous sequences are available

- Function type analysis: Assessing performance across different protein functions (enzymes, binders, etc.)

- Extrapolation capability: Testing on mutation types and positions not seen during training [21] [2]
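The MSA depth stratification listed above can be sketched as a simple grouping of per-assay Spearman scores. This is an illustrative sketch only: the record fields (`neff_over_l`, `spearman`), the bucket thresholds, and the assay names and values are all assumptions introduced here, not values reported in the text.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, Mapping


def depth_bucket(neff_over_l: float, low: float = 1.0, high: float = 100.0) -> str:
    """Assign an assay to an MSA depth bucket.

    The thresholds are assumed defaults for illustration; adjust them to
    whatever depth definition your benchmark uses.
    """
    if neff_over_l < low:
        return "low"
    if neff_over_l < high:
        return "medium"
    return "high"


def mean_spearman_by_depth(assays: Iterable[Mapping[str, float]]) -> Dict[str, float]:
    """Average the per-assay Spearman correlation within each depth bucket."""
    groups: Dict[str, List[float]] = defaultdict(list)
    for assay in assays:
        groups[depth_bucket(assay["neff_over_l"])].append(assay["spearman"])
    return {bucket: mean(scores) for bucket, scores in groups.items()}


# Hypothetical per-assay results (made-up numbers, for illustration only).
example_assays = [
    {"name": "assay_A", "neff_over_l": 0.5, "spearman": 0.40},
    {"name": "assay_B", "neff_over_l": 0.8, "spearman": 0.50},
    {"name": "assay_C", "neff_over_l": 10.0, "spearman": 0.60},
    {"name": "assay_D", "neff_over_l": 250.0, "spearman": 0.70},
]
print(mean_spearman_by_depth(example_assays))
```

Reporting the bucket means side by side makes it easier to see whether a model's overall average hides a drop on proteins with few homologs.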

Diagram 1: PoET's Evolutionary Prompt Workflow for Family-Specific Prediction. This illustrates the process from retrieving homologous sequences to generating functional variants.

Essential Research Reagents and Computational Tools

Table 3: Key Research Reagents and Computational Tools for Protein Language Model Research

| Resource | Type | Primary Function | Application in PoET Research |
|---|---|---|---|
| ProteinGym [3] | Benchmark suite | Standardized evaluation of fitness predictions | Primary benchmark for DMS performance comparison |
| Deep Mutational Scanning (DMS) Data | Experimental dataset | High-throughput measurement of variant effects | Ground truth for model training and evaluation |
| UniProt Knowledgebase [22] | Protein sequence database | Source of evolutionary sequences | Provides data for constructing evolutionary prompts |
| OpenProtein.AI Platform [19] | Web application | Access to PoET tools | User interface for researchers to utilize PoET |
| ESM-2 Model [2] | Protein language model | General protein representation learning | Baseline comparison for evolutionary-based approaches |
| Rosetta Molecular Modeling Suite [2] | Biophysical simulation software | Structure prediction and energy calculation | Generates biophysical data for models like METL |
| AlphaFold 2 [3] | Structure prediction tool | Protein 3D structure prediction | Provides structural context for structure-based models |

Discussion: Implications for Protein Engineering and Therapeutic Development

The development of PoET represents a significant advancement in the quest for accurate zero-shot prediction in protein engineering. By effectively leveraging evolutionary prompts, PoET bridges the gap between general protein language models and family-specific prediction, enabling researchers to harness evolutionary information without being constrained by the limitations of multiple sequence alignments.

For research scientists and drug development professionals, this technology offers practical advantages in multiple domains:

Therapeutic Protein Engineering: The ability to make accurate predictions for specific protein families with limited data is particularly valuable for engineering therapeutic proteins like monoclonal antibodies, enzymes, and cytokines, where stability, binding affinity, and expression levels are critical design parameters [23].

Variant Interpretation: PoET's sophisticated fitness prediction supports the interpretation of genetic variants of unknown significance, especially in proteins with limited homologous sequences, potentially accelerating personalized medicine approaches.

Functional De Novo Design: The model's capacity to generate new functional sequences expands the design space for proteins with customized functions, supporting applications in biocatalysis, biosensing, and synthetic biology [19] [22].

The integration of PoET into the protein engineering workflow, alongside experimental validation platforms like iAutoEvoLab [24], creates a powerful framework for iterative protein design and optimization. As the field progresses, combining evolutionary insights from models like PoET with biophysical principles, as demonstrated in approaches like METL [2], will likely drive the next wave of innovation in protein science.

Diagram 2: Integrated Protein Engineering Workflow Combining PoET with Experimental Validation. This shows how computational predictions interface with high-throughput experimental systems.

RoseTTAFold has emerged as a powerful deep learning framework for protein structure prediction, demonstrating particular strength in joint sequence-structure reasoning. This review examines the performance of the RFjoint variant against competing methodologies, with specific focus on its zero-shot mutation effect prediction capabilities. Experimental data reveal that RFjoint achieves accuracy comparable to specialized models like MSA Transformer and DeepSequence without requiring task-specific training. The integration of structural and sequence information within a unified architecture enables RFdiffusion to advance de novo protein design, generating diverse functional proteins including binders, enzymes, and symmetric assemblies. This review provides a comprehensive comparison of RoseTTAFold's performance across multiple domains and details the experimental protocols essential for researchers leveraging these tools in protein engineering workflows.

The emergence of deep learning has revolutionized computational biology, particularly in protein structure prediction and design. Among these advances, RoseTTAFold represents a significant milestone as a three-track neural network that simultaneously reasons about protein sequence, distance relationships, and 3D atomic coordinates [25]. This architectural innovation enables an integrated understanding of protein sequence-structure relationships that has proven broadly useful for protein modeling tasks.

The RFjoint variant, specifically trained for joint sequence and structure recovery, has demonstrated remarkable capabilities in zero-shot prediction of mutation effects without requiring additional training on specific protein families [26]. This performance positions RoseTTAFold as a foundational technology in the expanding toolkit for protein engineering, particularly within research contexts prioritizing the understanding of mutational landscapes.

This review systematically compares RoseTTAFold's performance against alternative approaches, examining experimental evidence across multiple protein engineering domains. We provide detailed methodological protocols and quantitative performance assessments to guide researchers in selecting appropriate computational tools for specific protein design challenges.

RoseTTAFold Architecture and Methodology

Three-Track Neural Network Design

RoseTTAFold's architecture employs a unique three-track design that processes information at three distinct levels: (1) protein sequences, (2) residue-residue distances and orientations, and (3) 3D atomic coordinates [25]. These tracks operate simultaneously, with information passing between them at each network layer, enabling the model to learn complex relationships between amino acid sequences and their structural consequences.

The network's rotational equivariance ensures consistent performance regardless of the global orientation of the input protein, a crucial feature for robust structure prediction [27]. This architectural foundation has proven sufficiently flexible to support extension to specialized tasks, including the RFdiffusion model for de novo protein design and RFjoint for mutation effect prediction.

Evolution to RFjoint and RFdiffusion

The RFjoint variant fine-tunes the base RoseTTAFold architecture specifically for sequence and structure recovery tasks, enhancing its capability to understand the complex relationships between amino acid changes and their structural and functional consequences [26]. This specialized training enables the model to perform competitively on mutation effect prediction without additional supervision.

Building on this foundation, RFdiffusion further extends the architecture by implementing a denoising diffusion probabilistic model (DDPM) fine-tuned on protein structure denoising tasks [27]. This approach iteratively refines random noise into coherent protein structures through a series of denoising steps, enabling the generation of novel protein backbones conditioned on specific design objectives.
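To make the iterative denoising idea concrete, the sketch below runs the standard DDPM ancestral sampling loop on a short list of plain coordinates. It is a toy under loud assumptions: RFdiffusion itself diffuses residue frames and uses a trained RoseTTAFold network as the denoiser, neither of which is reproduced here. The linear noise schedule, the function names, and the "oracle" noise predictor (which cheats by knowing the clean target) are all stand-ins chosen so the example is runnable and checkable.

```python
import math
import random
from typing import List, Sequence


def linear_betas(num_steps: int, beta_start: float = 1e-4, beta_end: float = 0.02) -> List[float]:
    """Linear noise schedule (an assumed choice, not RFdiffusion's schedule)."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def oracle_noise(x_t: Sequence[float], alpha_bar_t: float, x0: Sequence[float]) -> List[float]:
    """Stand-in for a trained network: recovers the noise exactly because it knows x0."""
    signal = math.sqrt(alpha_bar_t)
    noise_scale = math.sqrt(1.0 - alpha_bar_t)
    return [(xt - signal * clean) / noise_scale for xt, clean in zip(x_t, x0)]


def reverse_diffusion(x0_target: Sequence[float], num_steps: int = 200, seed: int = 0) -> List[float]:
    """Start from Gaussian noise and apply DDPM reverse steps until t = 0."""
    rng = random.Random(seed)
    betas = linear_betas(num_steps)

    # Cumulative products of (1 - beta_t), usually written alpha_bar_t.
    alpha_bars: List[float] = []
    running = 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)

    x = [rng.gauss(0.0, 1.0) for _ in x0_target]  # pure-noise starting point
    for t in reversed(range(num_steps)):
        beta, alpha_bar = betas[t], alpha_bars[t]
        eps = oracle_noise(x, alpha_bar, x0_target)
        coef = beta / math.sqrt(1.0 - alpha_bar)
        # Posterior mean of x_{t-1} given x_t and the predicted noise.
        posterior_mean = [(xi - coef * e) / math.sqrt(1.0 - beta) for xi, e in zip(x, eps)]
        if t > 0:
            sigma = math.sqrt(beta)  # common choice: sigma_t^2 = beta_t
            x = [m + sigma * rng.gauss(0.0, 1.0) for m in posterior_mean]
        else:
            x = posterior_mean  # no noise is added at the final step
    return x


target = [1.5, -2.0, 0.25]  # toy "clean" coordinates
print(reverse_diffusion(target))
```

With a perfect noise predictor the final step lands on the target up to floating-point error; a learned denoiser replaces the oracle in practice, and conditioning on design objectives enters through that network.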

Table: RoseTTAFold Variants and Their Primary Applications

| Variant | Architectural Features | Primary Applications |
|---|---|---|
| RoseTTAFold (Base) | Three-track network (sequence, distance, 3D coordinates) | Protein structure prediction, protein-protein complexes |
| RFjoint | Fine-tuned for sequence-structure recovery | Zero-shot mutation effect prediction, sequence design |
| RFdiffusion | Denoising diffusion probabilistic model | De novo protein design, binder design, symmetric assemblies |

Workflow Visualization

The following diagram illustrates the core RoseTTAFold architecture and its extension to RFdiffusion for protein design:

Performance Comparison: RoseTTAFold vs. Alternative Methods

Zero-Shot Mutation Effect Prediction

A critical assessment of RFjoint demonstrated comparable accuracy to both MSA Transformer (another zero-shot model) and DeepSequence (which requires specific training on particular protein families) for predicting mutation effects across diverse protein families [26]. This zero-shot capability is particularly valuable because it eliminates the need for collecting family-specific mutation data, significantly expanding the method's applicability to proteins with limited characterization.

The following table summarizes how these mutation effect prediction methods compare in training requirements, architecture, and reported accuracy:

Table: Comparison of Mutation Effect Prediction Methods

| Method | Training Requirement | Architecture | Key Strength | Experimental Validation |
|---|---|---|---|---|
| RFjoint | Zero-shot (no additional training) | Three-track network (sequence-structure) | Joint sequence-structure understanding | Comparable to specialized methods |
| MSA Transformer | Zero-shot | Protein language model | Evolutionary information from MSAs | Baseline accuracy for zero-shot |
| DeepSequence | Family-specific training | Variational autoencoder (latent-variable generative model) | Family-specific positional correlations | High accuracy with sufficient data |

Antibody Structure Prediction

In antibody modeling, RoseTTAFold demonstrates particular strength in predicting the challenging H3 loop, achieving accuracy comparable to SWISS-MODEL and superior to ABodyBuilder for this critical region [25]. However, for overall antibody structure prediction, RoseTTAFold's performance does not surpass specialized homology modeling methods, suggesting that domain-specific adaptations may be necessary for optimal performance in specialized protein families.

The differential performance across protein classes highlights an important principle: the "best overall" protein structure prediction tool may not be the "best for any task" [28]. Researchers should therefore select tools based on their specific protein class of interest rather than general performance metrics alone.

De Novo Protein Design

The RFdiffusion model dramatically advances de novo protein design capabilities, enabling generation of novel protein structures with high experimental success rates. The method has been experimentally validated through characterization of hundreds of designed symmetric assemblies, metal-binding proteins, and protein binders [27]. In one striking example, the cryo-EM structure of a designed binder in complex with influenza hemagglutinin was nearly identical to the design model.

RFdiffusion employs self-conditioning during the denoising process, analogous to recycling in AlphaFold, which significantly improves performance on both conditional and unconditional protein design tasks compared to earlier diffusion approaches [27]. This innovation enables the generation of complex protein topologies with little structural similarity to proteins in the training set, demonstrating substantial generalization beyond the Protein Data Bank.

Experimental Protocols and Methodologies

Mutation Effect Prediction Protocol

The standard protocol for assessing mutation effects using RFjoint involves:

- Input Preparation: Protein sequence and, optionally, existing structural information

- Multiple Sequence Alignment: Using HHblits to generate MSAs through the "make_msa.sh" script [25]

- Model Inference: Running RFjoint without further training on the target protein family

- Output Analysis: Comparison of wild-type and mutant predicted structures and stability metrics

For accurate performance assessment, researchers should compare RFjoint predictions with experimental data or established computational benchmarks using metrics such as root-mean-square deviation (RMSD) and Pearson correlation coefficients between predicted and measured effects.
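The two metrics named above can be computed with a few lines of standard-library Python. This is a minimal sketch under stated assumptions: the function names are hypothetical, the two coordinate sets are assumed to be matched atom-for-atom and already superimposed (no alignment step is performed), and the example numbers are made up.

```python
import math
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]


def rmsd(coords_a: Sequence[Coord], coords_b: Sequence[Coord]) -> float:
    """Root-mean-square deviation between two pre-aligned, matched coordinate sets."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate sets must be non-empty and equal in length")
    squared = sum(
        (ax - bx) ** 2 + (ay - by) ** 2 + (az - bz) ** 2
        for (ax, ay, az), (bx, by, bz) in zip(coords_a, coords_b)
    )
    return math.sqrt(squared / len(coords_a))


def pearson(predicted: Sequence[float], measured: Sequence[float]) -> float:
    """Pearson correlation between predicted and measured mutation effects."""
    if len(predicted) != len(measured):
        raise ValueError("inputs must have the same length")
    n = len(predicted)
    mean_p, mean_m = sum(predicted) / n, sum(measured) / n
    cov = sum((p - mean_p) * (m - mean_m) for p, m in zip(predicted, measured))
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    var_m = sum((m - mean_m) ** 2 for m in measured)
    if var_p == 0 or var_m == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_p * var_m)


# Toy example: a mutant model shifted by 1 unit along z relative to wild type.
wild_type = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
mutant = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
print(rmsd(wild_type, mutant))  # 1.0

# Toy predicted vs. measured effects that are perfectly linearly related.
print(pearson([1.0, 2.0, 3.0], [2.0, 4.0, 6.0]))  # 1.0
```

In a real comparison the structures would first be superimposed, since RMSD computed on unaligned coordinates mostly reflects the arbitrary placement of the two models.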

RFdiffusion Protein Design Workflow

The experimental workflow for de novo protein design using RFdiffusion involves:

- Initialization: Starting from random residue frames or noise conditioned on design objectives

- Iterative Denoising: Applying up to 200 denoising steps to progressively refine the noise into protein-like structures [27]