Unlocking Precision Oncology: How CAPE Mutant Datasets Are Powering Next-Gen Machine Learning Models

Abstract

This article explores the critical role of Comprehensive And Personalized Encoded (CAPE) mutant datasets in advancing machine learning (ML) for biomedical research and drug discovery. We first define CAPE datasets and their unique value in capturing complex, multi-omic mutational profiles. We then detail methodologies for integrating these datasets into ML pipelines, including preprocessing strategies and model architectures. The article addresses common challenges in data quality, imputation, and model overfitting, providing solutions for robust model development. Finally, we examine validation frameworks and benchmark CAPE-driven models against traditional genomic datasets, highlighting their superior predictive power for drug response and resistance. This guide is essential for researchers and drug developers aiming to leverage cutting-edge mutational data for AI-driven precision medicine.

What Are CAPE Mutant Datasets? A Primer for ML in Biomedical Research

CAPE (Context-Aware Profile Extraction) represents a paradigm shift in the analysis of genetic variants for machine learning applications in oncology and drug development. Moving beyond simple mutation calls, CAPE integrates multi-modal data—including gene expression, chromatin accessibility, protein abundance, and spatial context—to generate rich, functional profiles of mutational impact. This whitepaper details the technical framework, experimental validation, and implementation protocols for constructing CAPE mutant datasets, which are essential for training robust predictive models of drug response and resistance.

Traditional variant calling identifies genomic alterations but fails to capture their functional consequence. A BRAF V600E mutation, for example, can lead to divergent signaling states and therapeutic vulnerabilities depending on cellular context. CAPE addresses this by defining mutants through their resultant molecular phenotype, creating a data structure amenable to machine learning.

The CAPE Framework: Core Components

A CAPE profile is a multi-dimensional vector integrating data from the following layers:

Table 1: Core Data Layers in a CAPE Profile

| Data Layer | Measurement Technology | Key Metrics | Contribution to Context |

|---|---|---|---|

| Genomic | Whole Exome/Genome Sequencing | Mutation allele frequency, copy number, structural variants | Definitive identification of the genetic lesion |

| Transcriptomic | RNA-seq, Single-cell RNA-seq | Pathway enrichment scores, differential expression, isoform usage | Downstream transcriptional consequences |

| Epigenomic | ATAC-seq, ChIP-seq | Chromatin accessibility at regulatory elements, histone marks | Regulatory state influencing mutation impact |

| Proteomic | RPPA, Mass Spectrometry | Phosphoprotein levels, total protein abundance | Functional signaling output and drug targets |

| Spatial | Multiplexed Immunofluorescence, CODEX | Cell neighborhood composition, distance to stroma | Tumor microenvironment modulation |

Experimental Protocol: Generating a CAPE Dataset

The following protocol outlines the generation of a CAPE dataset for a panel of isogenic cell lines.

Cell Line Engineering & Validation

Objective: Introduce a specific mutation (e.g., EGFR L858R) into a controlled genetic background.

- Design: Synthesize sgRNA and donor template for homology-directed repair.

- Transfection: Deliver CRISPR-Cas9 components via nucleofection.

- Selection: Apply puromycin (2 µg/mL) for 72 hours.

- Cloning: Isolate single cells by FACS into 96-well plates.

- Validation:

- Sanger Sequencing: Confirm precise allele editing.

- Western Blot: Confirm expected protein expression/phosphorylation changes.

Multi-Omic Profiling Workflow

Parallel processing of parental and isogenic mutant lines.

Diagram 1: CAPE Multi-Omic Profiling Workflow

Data Integration & Profile Construction

- Alignment & Quantification: Process each datatype with standard pipelines (e.g., GATK for WES, STAR for RNA-seq).

- Differential Analysis: For each layer (L), compute the mutant vs. parental differential vector ΔL.

- Normalization: Z-score normalize features within each layer.

- Concatenation: Assemble the final CAPE profile vector V = [ΔGenomic, ΔTranscriptomic, ΔEpigenomic, ΔProteomic].

- Labeling: Annotate profiles with in vitro drug response metrics (e.g., IC50, AUC).
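
The profile-construction steps above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the layer names, the toy feature values, and the choice to z-score each layer's differential vector across its own features are all assumptions made for the example.

```python
from statistics import mean, pstdev

def differential(mutant, parental):
    """Mutant-minus-parental difference for one layer (the delta-L vector)."""
    return [m - p for m, p in zip(mutant, parental)]

def zscore(values):
    """Z-score a list of values; a constant vector maps to all zeros."""
    mu, sd = mean(values), pstdev(values)
    if sd == 0:
        return [0.0] * len(values)
    return [(v - mu) / sd for v in values]

def build_profile(mutant_layers, parental_layers, layer_order):
    """Concatenate the normalized delta-L vectors in a fixed layer order."""
    profile = []
    for layer in layer_order:
        delta = differential(mutant_layers[layer], parental_layers[layer])
        profile.extend(zscore(delta))
    return profile

# Hypothetical toy measurements (three features per layer).
LAYERS = ["genomic", "transcriptomic", "epigenomic", "proteomic"]
parental = {
    "genomic": [0.0, 2.0, 0.0],
    "transcriptomic": [5.1, 3.2, 7.7],
    "epigenomic": [0.40, 0.55, 0.10],
    "proteomic": [18.0, 16.5, 20.1],
}
mutant = {
    "genomic": [0.5, 2.0, 0.0],
    "transcriptomic": [6.4, 3.0, 7.9],
    "epigenomic": [0.35, 0.70, 0.10],
    "proteomic": [19.2, 16.4, 20.0],
}

profile = build_profile(mutant, parental, LAYERS)
print(len(profile), [round(x, 2) for x in profile[:3]])
```

Keeping the layer order fixed matters: every profile must place the same feature at the same index so that downstream models see a consistent input layout.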

Key Signaling Pathways Modeled by CAPE

CAPE profiles are particularly adept at capturing perturbations in oncogenic signaling networks.

Diagram 2: CAPE Captures RTK Pathway Dysregulation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for CAPE Dataset Generation

| Reagent / Material | Provider Examples | Function in CAPE Protocol |

|---|---|---|

| CRISPR-Cas9 Gene Editing System | Synthego, IDT | Precise introduction of mutations in isogenic models. |

| Puromycin Dihydrochloride | Thermo Fisher, Sigma-Aldrich | Selection of successfully transfected cells. |

| RNeasy Mini Kit | QIAGEN | High-quality RNA extraction for transcriptomics. |

| Cell Lysis Buffer for Western/IP | Cell Signaling Technology | Protein extraction for proteomic analysis. |

| Nextera XT DNA Library Prep Kit | Illumina | Preparation of sequencing libraries for WES and RNA-seq. |

| Chromium Next GEM Single Cell Kit | 10x Genomics | Enables single-cell resolution in RNA/ATAC profiling. |

| Human Phospho-Kinase Array | R&D Systems | Multiplexed screening of phosphorylation status. |

| CellTiter-Glo Luminescent Assay | Promega | Quantification of cell viability for drug response labeling. |

Application in ML: From CAPE Profiles to Predictive Models

CAPE profiles serve as high-fidelity feature vectors for supervised learning.

- Task: Classify sensitivity to a targeted therapy (e.g., EGFR inhibitor).

- Model Architecture: Random Forest or Multi-Layer Perceptron.

- Input: CAPE profile vector V for each cell line/tumor sample.

- Label: Binarized drug response (Sensitive / Resistant).

- Advantage: Models learn from the functional context of a mutation, improving generalizability across tissue types and co-mutation backgrounds.

CAPE transforms static mutation catalogs into dynamic, context-aware profiles that faithfully represent the biological state of a cell. This framework provides the necessary data infrastructure for developing next-generation machine learning models that predict therapeutic outcomes, identify novel biomarkers, and propel personalized oncology forward.

This technical guide outlines the methodologies for integrating multi-omics mutational data within the context of the Cancer Proteogenomic and Epigenetic (CAPE) mutant data sets. The primary thesis is that systematic integration of genomic (DNA sequence variants), epigenomic (DNA methylation, histone modifications), transcriptomic (RNA expression, splicing variants), and proteomic (protein abundance, post-translational modifications) mutational data creates a holistic representation of tumor biology. This integrated data structure is foundational for training robust machine learning models in oncology drug development, enabling the prediction of therapeutic response, resistance mechanisms, and novel biomarker discovery.

Data Acquisition and Preprocessing

Integrated analysis begins with curated CAPE-aligned datasets from public repositories like The Cancer Genome Atlas (TCGA), Clinical Proteomic Tumor Analysis Consortium (CPTAC), and International Cancer Genome Consortium (ICGC).

Table 1: Core Multi-Omic Data Types and Preprocessing Steps

| Omics Layer | Primary Data Type | Key Preprocessing Steps | Common File Format |

|---|---|---|---|

| Genomic | Whole Genome/Exome Sequencing (SNVs, Indels, CNVs) | Alignment (BWA, Bowtie2), variant calling (GATK Mutect2, VarScan), annotation (ANNOVAR, SnpEff) | VCF, MAF |

| Epigenomic | Bisulfite Sequencing (WGBS), ChIP-Seq (Histone marks) | Methylation level calling (Bismark, MethylKit), peak calling (MACS2), differential analysis | BED, bigWig |

| Transcriptomic | RNA-Seq (expression, fusion genes, splice variants) | Pseudoalignment (Kallisto, Salmon), transcript quantification, differential expression (DESeq2, edgeR) | TPM/FPKM matrix |

| Proteomic | Mass Spectrometry (LFQ, TMT), RPPA | Peak alignment (MaxQuant, DIA-NN), normalization (vsn, quantile), imputation (MinProb) | mzTab, matrix |

Mutation Data Harmonization

A critical step is the harmonization of mutations across layers to a unified genomic coordinate system (GRCh38). Tools like GenomicRanges in R or pyensembl in Python are used to map epigenetic features, transcript isoforms, and proteomic peptides to genomic loci.

Experimental Protocols for Multi-Omic Integration

Protocol A: Vertical Integration for Pathway Analysis

Objective: To assess the functional impact of a driver mutation across all molecular layers.

- Locus Selection: Identify a recurrent somatic mutation (e.g., TP53 R175H) from genomic data.

- Epigenomic Context: Extract DNA methylation beta-values and H3K27ac ChIP-seq signals from a ±50kb window around the mutation locus. Compare mutant vs. wild-type samples.

- Transcriptomic Correlation: Correlate the mutation status with expression levels of TP53 and its known target genes (e.g., CDKN1A, BAX) using linear models.

- Proteomic Validation: Query proteomic data for p53 protein abundance and phosphorylation status (e.g., at Ser15). Integrate phosphoproteomic data to infer altered kinase activity.

- Statistical Integration: Perform a multi-block Partial Least Squares (mbPLS) regression to model the relationship between the mutation (X-block) and consolidated epigenetic, transcriptomic, and proteomic features (Y-blocks).
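
As a rough sketch of the epigenomic-context step in Protocol A, the snippet below selects features inside a ±50 kb window around a mutation locus and compares mutant with wild-type samples. The locus coordinate, probe identifiers, sample IDs, and beta-values are hypothetical placeholders, and a simple difference of means stands in for whatever statistical test a real analysis would use.

```python
from statistics import mean

WINDOW_BP = 50_000  # +/- 50 kb window from the protocol

def features_in_window(features, chrom, position, window=WINDOW_BP):
    """Keep features on the same chromosome within +/- window bp of the locus."""
    return [
        f for f in features
        if f["chrom"] == chrom and abs(f["pos"] - position) <= window
    ]

def mutant_minus_wildtype(feature, mutant_ids, wildtype_ids):
    """Difference in mean signal (mutant minus wild-type) for one feature."""
    mut = mean(feature["values"][s] for s in mutant_ids)
    wt = mean(feature["values"][s] for s in wildtype_ids)
    return mut - wt

# Hypothetical locus and methylation probes (illustrative coordinates only).
locus_chrom, locus_pos = "chr17", 7_675_000
probes = [
    {"id": "probe_near", "chrom": "chr17", "pos": 7_690_000,
     "values": {"S1": 0.10, "S2": 0.08, "S3": 0.14, "S4": 0.12}},
    {"id": "probe_far", "chrom": "chr17", "pos": 7_900_000,
     "values": {"S1": 0.50, "S2": 0.52, "S3": 0.49, "S4": 0.51}},
    {"id": "probe_other_chrom", "chrom": "chr1", "pos": 7_675_100,
     "values": {"S1": 0.30, "S2": 0.31, "S3": 0.29, "S4": 0.30}},
]
mutant_ids, wildtype_ids = ["S1", "S2"], ["S3", "S4"]

nearby = features_in_window(probes, locus_chrom, locus_pos)
for probe in nearby:
    diff = mutant_minus_wildtype(probe, mutant_ids, wildtype_ids)
    print(probe["id"], round(diff, 3))
```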

Protocol B: Horizontal Integration for Subtype Discovery

Objective: To cluster patient samples into molecular subtypes using data from all omics layers.

- Feature Reduction: For each omics layer, perform independent principal component analysis (PCA) or use autoencoders to reduce dimensionality to the top 50 latent features.

- Similarity Network Fusion (SNF): a. Construct patient similarity networks (graphs) for each omics layer separately using Euclidean distance on the reduced features. b. Fuse the networks using the SNF algorithm, which iteratively updates each network to reflect the information from the others. c. Apply spectral clustering on the fused network to define integrated molecular subtypes.

- Validation: Assess subtype robustness via silhouette width and correlate with clinical outcomes (survival, drug response) from CAPE metadata.
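
The silhouette width used in the validation step can be computed directly from its definition. The sketch below is an assumption-laden toy: it uses Euclidean distance on two-dimensional points, whereas a real analysis would typically derive distances from the fused SNF network, and the points and cluster labels are invented.

```python
from statistics import mean

def euclidean(a, b):
    """Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5

def average_silhouette(points, labels):
    """Mean silhouette width over all samples (needs at least two clusters)."""
    scores = []
    for i, (p, lab) in enumerate(zip(points, labels)):
        same = [euclidean(p, q)
                for j, (q, other) in enumerate(zip(points, labels))
                if other == lab and j != i]
        if not same:  # singleton cluster: silhouette is defined as 0
            scores.append(0.0)
            continue
        a = mean(same)  # mean distance to the sample's own cluster
        b = min(        # mean distance to the nearest other cluster
            mean(euclidean(p, q) for q, l in zip(points, labels) if l == c)
            for c in set(labels) if c != lab
        )
        denom = max(a, b)
        scores.append((b - a) / denom if denom > 0 else 0.0)
    return mean(scores)

# Hypothetical reduced features for four patients in two subtypes.
points = [(0.0, 0.0), (0.0, 1.0), (5.0, 5.0), (5.0, 6.0)]
labels = [0, 0, 1, 1]
print(round(average_silhouette(points, labels), 3))
```

Values near 1 indicate compact, well-separated clusters; values near 0 indicate overlapping ones.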

Protocol C: Causal Network Inference

Objective: To infer potential causal pathways from genomic alterations to proteomic phenotypes.

- Layer-specific Priors: Build directed prior networks (e.g., DNA methylation -> gene expression; gene expression -> protein abundance) using known regulatory databases (ENCODE, STRING).

- Bayesian Multi-Omic Network Learning: Employ a framework like BIDIFAC+ or multi-omics Directed Acyclic Graph (DAG) learning to decompose shared and layer-specific factors and infer directional relationships.

- Mutation Seeding: Seed the network with high-confidence driver mutations and propagate their influence through the integrated network to identify dysregulated downstream protein modules.
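
To make the mutation-seeding idea concrete, here is a deliberately simplified sketch that walks a directed prior network outward from seed mutations with a breadth-first search. It is plain reachability, not BIDIFAC+ or DAG learning, and every node and edge name is hypothetical.

```python
from collections import deque

def downstream_of(seeds, edges, max_depth=3):
    """Return {node: distance from nearest seed} for nodes within max_depth."""
    graph = {}
    for src, dst in edges:
        graph.setdefault(src, []).append(dst)
    depth = {s: 0 for s in seeds}
    queue = deque(seeds)
    while queue:
        node = queue.popleft()
        if depth[node] == max_depth:
            continue  # do not expand beyond the depth limit
        for nxt in graph.get(node, []):
            if nxt not in depth:
                depth[nxt] = depth[node] + 1
                queue.append(nxt)
    return depth

# Hypothetical prior network: mutation -> mRNA -> protein edges.
edges = [
    ("TP53_mut", "CDKN1A_mRNA"),
    ("CDKN1A_mRNA", "p21_protein"),
    ("TP53_mut", "BAX_mRNA"),
    ("BAX_mRNA", "BAX_protein"),
    ("KRAS_mut", "ERK_phospho"),
]
reached = downstream_of(["TP53_mut"], edges)
print(sorted(reached.items(), key=lambda kv: (kv[1], kv[0])))
```

Nodes that are unreachable from the seeds (here, the branch below the unseeded mutation) are left out, which is the behavior one wants when isolating mutation-specific downstream modules.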

Visualization of Workflows and Pathways

Diagram Title: Multi-Omic Data Integration Workflow for CAPE ML Research

Diagram Title: Causal Multi-Omic Pathway from Mutation to Phenotype

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Resources for Multi-Omic Integration Studies

| Item Name / Kit | Provider Examples | Function in CAPE-style Integration |

|---|---|---|

| KAPA HyperPlus Kit | Roche Sequencing | Library preparation for WGS/WES and RNA-Seq, ensuring compatibility between genomic and transcriptomic libraries. |

| NEBNext Enzymatic Methyl-seq Kit | New England Biolabs (NEB) | Enzymatic conversion for methylome sequencing, offering higher DNA integrity than bisulfite for paired multi-omic analysis. |

| TMTpro 16plex | Thermo Fisher Scientific | Isobaric labeling for multiplexed deep proteome profiling of up to 16 samples simultaneously, crucial for cohort analysis. |

| Cell Signaling Technology (CST) PathScan RTK Signaling Antibody Array | Cell Signaling Technology | Multiplexed protein array to validate proteomic and phosphoproteomic findings from MS data in a targeted manner. |

| Chromatin Shearing Cocktail | Covaris, Diagenode | Standardized shearing for ChIP-seq and ATAC-seq, ensuring reproducible epigenomic data across samples. |

| SNP/CGH Microarray BeadChip | Illumina (Infinium) | Cost-effective high-throughput genotyping and copy number validation for large patient cohorts. |

| Multi-Omic Quality Control (MOQC) Spike-in Mix | Spike-in consortium (e.g., SIRV, UPS2) | Contains exogenous DNA, RNA, and protein spikes for technical QC and cross-platform normalization. |

| RiboErase (rRNA Depletion Kit) | Roche (KAPA) | Efficient removal of ribosomal RNA for total RNA-seq, enabling accurate measurement of non-coding transcripts and fusion genes. |

Table 3: Example Quantitative Output from Vertical Integration (Hypothetical TP53 R175H)

| Omics Layer | Measured Feature | Wild-Type Mean | Mutant Mean | p-value | Effect Size | Integration Insight |

|---|---|---|---|---|---|---|

| Genomic | Allelic Frequency | 0% | 95% (Clonal) | N/A | N/A | Clonal driver mutation. |

| Epigenomic | CDKN1A Promoter Methylation | 0.12 | 0.08 | 0.045 | -0.41 | Mutation linked to local hypomethylation. |

| Transcriptomic | CDKN1A mRNA (log2TPM) | 5.2 | 7.8 | 1.2e-5 | 1.85 | Significant overexpression. |

| Proteomic | p21 (CDKN1A) Protein (log2LFQ) | 18.1 | 20.5 | 0.003 | 1.67 | Protein level increase confirmed. |

| Proteomic | p53 Ser15 Phosphorylation | 16.0 | 9.2 | 4.5e-6 | -2.10 | Loss of activating PTM. |

Table 4: Algorithm Performance for Subtype Discovery (SNF Method)

| Cancer Type | Number of Integrated Features | Optimal Clusters (k) | Average Silhouette Width | 5-Yr Survival Log-Rank p-value |

|---|---|---|---|---|

| BRCA (CPTAC) | Genomic: 200, Epigen: 150, Trans: 300, Prot: 500 | 4 | 0.21 | 3.1e-4 |

| LUAD (TCGA) | Genomic: 180, Epigen: 100, Trans: 400, Prot: 350 | 3 | 0.18 | 0.012 |

| COAD (ICGC) | Genomic: 220, Epigen: 200, Trans: 350, Prot: 400 | 5 | 0.15 | 0.003 |

The research and development of machine learning (ML) models for oncology, particularly those focused on CAPE (Cancer-Associated Patient-derived Endogenous) mutant phenotypes, rely heavily on accessing high-quality, multi-modal biomedical data. CAPE mutant data sets, which integrate somatic mutation profiles with functional proteomics and phosphoproteomics from patient-derived models, present unique challenges in data sourcing, integration, and standardization. This guide provides a technical overview of the primary public and proprietary data sources critical for constructing and validating such ML models.

Core Public Repositories

cBioPortal for Cancer Genomics

cBioPortal is an open-access platform for interactive exploration of multidimensional cancer genomics data sets. It is fundamental for accessing large-scale, curated genomic profiles of tumor samples.

Key Data for CAPE Mutant Research:

- Somatic Mutations: Primary source for mutation calls across thousands of tumors from projects like TCGA and ICGC.

- Clinical Data: Associated patient outcomes, treatment history, and tumor pathology.

- Copy Number Alterations & mRNA Expression: Essential complementary data layers for understanding mutational context.

Access Protocol:

- Programmatic Access (R/Python): Use an R client such as the Bioconductor cBioPortalData package, or the official Python client, to query data.

- Web Interface: Manual exploration and visualization of genetic alterations across samples.

DepMap (The Cancer Dependency Map)

DepMap systematically identifies genetic and pharmacologic dependencies across hundreds of cancer cell lines. It is indispensable for linking CAPE mutations to functional phenotypes like gene essentiality and drug sensitivity.

Core Data Sets:

- CRISPR Knockout Screens (Chronos Scores): Quantified gene essentiality scores.

- Drug Sensitivity Screens (PRISM): AUC/IC50 values for thousands of compounds.

- Omics Data: RNA-seq, RPPA, and mutation data for the same cell lines.

Experimental Integration Protocol for CAPE Models:

- Data Download: Download the latest DepMap Public 23Q4 files (CRISPR_gene_effect.csv, model_list.csv, OmicsCNGene.csv).

- Lineage & Mutation Filtering: Subset cell lines by tissue lineage (e.g., prostate) and presence/absence of CAPE-relevant mutations (e.g., SPOP mutations).

- Dependency Correlation: Calculate Pearson correlation between the dependency score of a gene of interest (e.g., AR) and the expression level of another gene across the filtered cell line set to identify genetic interactions.
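
The filtering and correlation steps can be illustrated on in-memory records. In this sketch the field names (`lineage`, `spop_mutant`, `ar_dependency`, `partner_expression`) and all values are hypothetical stand-ins for columns that would come from the downloaded files, and Pearson's r is computed by hand to keep the example dependency-free.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

# Hypothetical per-cell-line records.
cell_lines = [
    {"id": "L1", "lineage": "prostate", "spop_mutant": True,
     "ar_dependency": -1.2, "partner_expression": 6.0},
    {"id": "L2", "lineage": "prostate", "spop_mutant": True,
     "ar_dependency": -0.9, "partner_expression": 5.5},
    {"id": "L3", "lineage": "prostate", "spop_mutant": True,
     "ar_dependency": -0.3, "partner_expression": 3.0},
    {"id": "L4", "lineage": "prostate", "spop_mutant": True,
     "ar_dependency": -0.1, "partner_expression": 2.1},
    {"id": "L5", "lineage": "lung", "spop_mutant": False,
     "ar_dependency": 0.0, "partner_expression": 4.0},
]

# Lineage and mutation filtering, then correlation across the subset.
subset = [c for c in cell_lines
          if c["lineage"] == "prostate" and c["spop_mutant"]]
r = pearson([c["ar_dependency"] for c in subset],
            [c["partner_expression"] for c in subset])
print(len(subset), round(r, 3))
```

A strongly negative r here would mean that lines expressing more of the partner gene are more dependent on the gene of interest, since more negative scores indicate stronger dependency.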

Proprietary Biomedical Databases

Proprietary databases offer deeply curated, normalized, and often clinically annotated data not available publicly.

| Database | Provider | Key Features | Relevance to CAPE ML Models |

|---|---|---|---|

| COSMIC | Wellcome Sanger Institute | Manually curated somatic mutations, including rare variants, functional impact. | Gold-standard for training mutation annotation/prioritization algorithms. |

| FoundationInsights | Foundation Medicine | Large-scale real-world genomic data with clinical outcomes from F1CDx testing. | Enables linking CAPE mutations to therapeutic response in real-world cohorts. |

| Tempus Labs Database | Tempus | De-identified clinico-genomic data, including treatment history and longitudinal outcomes. | Provides time-series data essential for predictive models of disease progression. |

| Flatiron Health EHR Database | Flatiron Health | Structured electronic health record data from oncology practices. | Source for high-dimensional phenotypic data to correlate with mutational status. |

Access Workflow:

- Data Use Agreements (DUA): Execution of legal contracts defining permissible use.

- Secure Workspaces: Analysis typically confined to provider's HIPAA/GDPR-compliant cloud platforms (e.g., AWS workspaces, Databricks).

- Controlled Export: Results (e.g., model coefficients, aggregated statistics) may be exported after review, but raw data usually remains within the platform.

Quantitative Data Comparison

Table 1: Scale and Content of Key Data Sources for CAPE Mutant Research

| Source | Sample/Model Count | Data Types | Update Frequency | Primary Access |

|---|---|---|---|---|

| cBioPortal (TCGA) | >11,000 patient samples | Mutations, CNA, RNA, Clinical | Static (Legacy) | Open API & Web |

| DepMap (23Q4) | ~1,800 cell lines | CRISPR, Drug Screen, Omics | Quarterly | CC-BY Licensed Download |

| COSMIC (v99) | >1.3 million samples | Curated Mutations, Genomes | Quarterly | Commercial License |

| FoundationInsights | ~500,000 de-identified patients | NGS Panel, RWD Outcomes | Quarterly | Secure Portal |

| Tempus Database | ~300,000+ patients | NGS, EHR, Imaging, Outcomes | Continuous | Federated Analysis Platform |

Integrated Data Pipeline for CAPE ML Model Training

Detailed Protocol:

- Seed Mutation List Curation: Extract CAPE-related genes (e.g., SPOP, FOXA1, IDH1) from literature using PubMed API.

- Genomic Cohort Assembly:

- Query cBioPortal for mutation and CNA status of seed genes across all prostate cancer studies.

- Download corresponding clinical data_clinical.txt files.

- Merge and deduplicate patient IDs. Retain samples with mutation in at least one seed gene.

- Functional Data Integration:

- Map patient samples to DepMap cell lines using model_list.csv annotation (e.g., by primary disease).

- Extract CRISPR dependency scores (AR_effect) and PRISM drug AUCs for relevant compounds (e.g., Enzalutamide) for the matched lines.

- Proprietary Data Augmentation (Example):

- Within a licensed Flatiron workspace, execute a query to extract lines of therapy and PSA response metrics for patients with metastatic castration-resistant prostate cancer (mCRPC).

- Use provided tokenization to link (where permissible) to genomic cohorts from Step 2.

- ML-Ready Table Construction: Create a unified table where each row is a sample, and features include mutation indicators, dependency scores, drug AUCs, and binned clinical outcomes.
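
The final table-construction step amounts to joining per-sample sources on a shared identifier. The sketch below assumes the sample-to-cell-line matching has already been done, so that every source is keyed by the same sample ID. The column names, the inner-join policy, and the 50% PSA-decline cutoff used for binning are illustrative assumptions.

```python
def build_ml_table(mutations, dependency, drug_auc, psa_decline, psa_cutoff=50.0):
    """Inner-join four per-sample sources into a list of feature rows."""
    shared = sorted(set(mutations) & set(dependency)
                    & set(drug_auc) & set(psa_decline))
    rows = []
    for sid in shared:
        row = {"sample_id": sid}
        for gene, is_mutated in mutations[sid].items():
            row[f"mut_{gene}"] = int(is_mutated)  # binary mutation indicator
        row["dep_AR"] = dependency[sid]
        row["auc_enzalutamide"] = drug_auc[sid]
        # Binned outcome: 1 if PSA declined by at least psa_cutoff percent.
        row["psa_responder"] = int(psa_decline[sid] >= psa_cutoff)
        rows.append(row)
    return rows

# Hypothetical inputs keyed by sample ID.
mutations = {
    "P1": {"SPOP": True, "FOXA1": False},
    "P2": {"SPOP": False, "FOXA1": True},
    "P3": {"SPOP": True, "FOXA1": True},
}
dependency = {"P1": -1.1, "P2": -0.4}          # P3 has no matched line
drug_auc = {"P1": 0.62, "P2": 0.88, "P3": 0.70}
psa_decline = {"P1": 72.0, "P2": 15.0, "P3": 55.0}

table = build_ml_table(mutations, dependency, drug_auc, psa_decline)
for row in table:
    print(row)
```

Samples missing from any source are dropped here. Whether to drop or impute such samples is a design decision that depends on how much of the cohort would be lost.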

Diagram 1: CAPE ML Model Data Sourcing and Integration Pipeline.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Materials for Experimental Validation of CAPE Predictions

| Item | Provider Examples | Function in CAPE Context |

|---|---|---|

| Isogenic Cell Line Pairs | ATCC, Horizon Discovery | Engineered to differ only by a CAPE mutation (e.g., SPOP-F133V vs WT) for controlled phenotype assays. |

| Patient-Derived Organoid (PDO) Kits | STEMCELL Technologies, Corning | Matrigel-based systems to culture 3D tumor models from patient tissue for ex vivo drug testing. |

| Phospho-Specific Antibodies | Cell Signaling Technology, Abcam | Detect changes in signaling pathway activation (e.g., p-ERK, p-AKT) resulting from CAPE mutations via Western Blot. |

| CRISPR/Cas9 Knockout Kits | Synthego, Thermo Fisher | Generate knockouts of genes identified as synthetic lethal partners of CAPE mutations in DepMap screens. |

| Multiplex Immunoassay Panels | Luminex, MSD | Quantify panels of secreted cytokines or phospho-proteins from cell supernatants or lysates. |

| Targeted NGS Panels | Illumina (TruSight), Agilent (SureSelect) | Validate mutation calls and detect low-frequency clones in engineered models or PDOs. |

Signaling Pathway Context for Common CAPE Mutations

Diagram 2: SPOP Mutation Alters Ubiquitination and AR Signaling.

The analysis of high-dimensional mutational data, particularly from saturation mutagenesis experiments like those in the CAPE (Comprehensive Analysis of Pathogenic Etiology) datasets, represents a fundamental challenge in modern genomics and drug discovery. Traditional statistical methods, developed for low-dimensional settings with more samples than features, fail catastrophically when applied to datasets where the number of genetic variants (features) vastly exceeds the number of biological samples. This whitepaper details why machine learning (ML) is not merely beneficial but imperative for extracting biological insight from such data, framing the discussion within ongoing research using CAPE mutant datasets for training predictive models of pathogenicity and drug response.

The Dimensionality Crisis in Mutational Data

CAPE datasets systematically profile the functional impact of thousands to millions of single amino acid substitutions across target proteins. This creates a paradigm where p >> n (features >> samples).

Table 1: Dimensionality Comparison: Traditional vs. High-Throughput Mutational Studies

| Parameter | Traditional Cohort Study | CAPE-like Saturation Mutagenesis |

|---|---|---|

| Samples (n) | 100 - 10,000 patients | 10 - 500 experimental replicates |

| Features (p) | 10 - 100 candidate variants | 1,000 - 500,000 individual mutations |

| Feature Ratio (p/n) | << 1 | 10 - 50,000 |

| Data Sparsity | Low | Extremely High (>99.9% missing) |

| Primary Analysis Method | Frequentist statistics (e.g., t-test, χ²) | Machine Learning (Regularized regression, DL) |

The core failure modes of traditional analysis include:

- Overfitting: Models with more parameters than samples can fit the training data perfectly while capturing only noise, so the apparent correlations do not generalize.

- Curse of Dimensionality: Pairwise distances concentrate as dimensionality grows, so distance metrics lose discriminative power and clustering and similarity-based analyses break down.

- Multiple Testing Burden: Correcting for hundreds of thousands of hypotheses (e.g., with Bonferroni) sharply reduces statistical power.

- Collinearity & Non-Independence: Mutations are structurally and functionally related, violating independence assumptions.
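
A quick calculation shows how severe the multiple testing burden becomes at the upper end of the feature range in Table 1. The family-wise error rate of 0.05 is an assumed conventional choice.

```python
def bonferroni_threshold(alpha, n_tests):
    """Per-test significance threshold under Bonferroni correction."""
    return alpha / n_tests

ALPHA = 0.05  # assumed family-wise error rate
for n_tests in (100, 500_000):
    print(n_tests, bonferroni_threshold(ALPHA, n_tests))
```

With 500,000 hypotheses, a single variant must reach a p-value on the order of 1e-7 to be called significant, which is rarely attainable with tens to hundreds of replicates.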

Experimental Protocols for ML-Ready CAPE Data

Generating robust data for ML training requires specific experimental designs.

Protocol: Deep Mutational Scanning (DMS) for Functional Phenotyping

Objective: Quantify the functional impact of every possible single amino acid variant in a protein.

- Library Construction: Create a plasmid library encoding all possible single-point mutants of the target gene using doped oligonucleotide synthesis.

- Viral Packaging & Transduction: Package the library into lentivirus and transduce at low MOI (<0.3) into a reporter cell line to ensure single-variant expression.

- Selection Pressure: Apply a relevant selective pressure (e.g., drug treatment, growth factor depletion, fluorescence-activated cell sorting based on a signaling output).

- Time-Point Sampling: Collect genomic DNA from the population pre-selection (T0) and at multiple post-selection time points (T1, T2).

- High-Throughput Sequencing: Amplify the variant region from genomic DNA and perform deep sequencing (>500x coverage per variant).

- Variant Abundance Calculation: For each variant i, compute the enrichment score as the log₂ ratio of its frequency in the selected population (Tf) relative to the initial library (T0). This score serves as the continuous phenotypic label for ML training.
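
The enrichment score from the last step can be computed as follows. The read counts and variant names are made up, and the pseudocount of 0.5 (added so that variants with zero reads after selection do not produce an undefined logarithm) is an assumption rather than part of the protocol.

```python
from math import log2

def enrichment_scores(counts_t0, counts_tf, pseudocount=0.5):
    """log2(frequency at Tf / frequency at T0) for each variant seen at T0."""
    variants = list(counts_t0)
    total_t0 = sum(counts_t0[v] + pseudocount for v in variants)
    total_tf = sum(counts_tf.get(v, 0) + pseudocount for v in variants)
    scores = {}
    for v in variants:
        freq_t0 = (counts_t0[v] + pseudocount) / total_t0
        freq_tf = (counts_tf.get(v, 0) + pseudocount) / total_tf
        scores[v] = log2(freq_tf / freq_t0)
    return scores

# Hypothetical read counts before (T0) and after (Tf) selection.
counts_t0 = {"WT": 1000, "A10V": 1000, "G12D": 1000}
counts_tf = {"WT": 1000, "A10V": 250, "G12D": 4000}

scores = enrichment_scores(counts_t0, counts_tf)
for variant, score in sorted(scores.items(), key=lambda kv: kv[1]):
    print(variant, round(score, 2))
```

Because frequencies are relative, a variant whose raw count is unchanged can still receive a negative score when other variants expand. Subtracting the wild-type score is one common way to re-center the scale.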

Protocol: Multiplexed Affinity Profiling (MAP) for Biophysical Data

Objective: Generate biophysical features (e.g., binding constants) for thousands of variants in parallel.

- Display Library Generation: Display the mutant protein library on the surface of yeast or mammalian cells.

- Staggered Labeling: Incubate the library with a titration series of a fluorescently labeled ligand or drug.

- Flow Cytometry Analysis: Sort cells based on fluorescence intensity at each ligand concentration into bins.

- Variant Counting: Sequence variants from each bin to construct a binding curve for each mutant, deriving apparent Kd values as ordinal or continuous features.
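
One simple way to turn a per-variant binding curve into an apparent Kd is a least-squares fit of a single-site binding model. The sketch below makes several assumptions: the signal has already been normalized to a 0-1 fraction bound, binding follows the single-site isotherm L / (L + Kd), and a log-spaced grid search replaces a proper nonlinear optimizer. The concentrations and the simulated variant are hypothetical.

```python
def fraction_bound(ligand_nm, kd_nm):
    """Single-site binding isotherm: fraction of displayed protein occupied."""
    return ligand_nm / (ligand_nm + kd_nm)

def fit_apparent_kd(concentrations_nm, fractions, kd_grid_nm):
    """Return the candidate Kd with the smallest sum of squared errors."""
    def sse(kd):
        return sum((obs - fraction_bound(c, kd)) ** 2
                   for c, obs in zip(concentrations_nm, fractions))
    return min(kd_grid_nm, key=sse)

# Log-spaced candidate Kd values from 0.01 nM to 10,000 nM.
kd_grid = [10 ** (e / 20) for e in range(-40, 81)]

# Simulated titration for a variant whose true Kd is 50 nM.
concentrations = [1.0, 10.0, 100.0, 1000.0]
observed = [fraction_bound(c, 50.0) for c in concentrations]

kd_hat = fit_apparent_kd(concentrations, observed, kd_grid)
print(round(kd_hat, 1))
```

The grid resolution bounds the precision of the estimate, so the recovered value is the nearest grid point rather than exactly 50 nM.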

Machine Learning Approaches & Pathway Logic

ML models address the p >> n problem by incorporating regularization, hierarchical structures, and prior biological knowledge.

Diagram: ML Model Pipeline for CAPE Mutant Analysis

Key Algorithmic Strategies

- Regularization (L1/Lasso): Performs automatic feature selection, driving coefficients for irrelevant mutations to zero.

- Kernel Methods & Embeddings: Project mutations into a space defined by biophysical similarities (e.g., BLOSUM62, structural distance).

- Graph Neural Networks (GNNs): Encode the protein as a graph of residues (nodes) and contacts (edges), allowing information propagation between neighboring mutations.
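
To show how L1 regularization drives small coefficients to exactly zero, here is a minimal coordinate-descent Lasso in pure Python. It minimizes (1/2n)·||y − Xw||² + α·||w||₁ without an intercept, so it assumes centered data. The ±1 design matrix is a toy orthogonal design chosen so the result can be checked by hand; real mutation features would usually be 0/1 indicators, and a production analysis would use an established library.

```python
def soft_threshold(rho, alpha):
    """Shrink rho toward zero by alpha; values within [-alpha, alpha] become 0."""
    if rho > alpha:
        return rho - alpha
    if rho < -alpha:
        return rho + alpha
    return 0.0

def lasso_cd(X, y, alpha, n_iter=50):
    """Coordinate descent for (1/2n)||y - Xw||^2 + alpha * ||w||_1."""
    n, p = len(X), len(X[0])
    w = [0.0] * p
    for _ in range(n_iter):
        for j in range(p):
            rho, z = 0.0, 0.0
            for i in range(n):
                # Prediction using every feature except j.
                pred_wo_j = sum(X[i][k] * w[k] for k in range(p) if k != j)
                rho += X[i][j] * (y[i] - pred_wo_j)
                z += X[i][j] ** 2
            rho, z = rho / n, z / n
            w[j] = soft_threshold(rho, alpha) / z if z > 0 else 0.0
    return w

# Toy orthogonal design: 4 samples x 4 features.
X = [
    [1, 1, 1, 1],
    [1, -1, 1, -1],
    [1, 1, -1, -1],
    [1, -1, -1, 1],
]
# Feature 1 has a strong effect (2.0); feature 2 has a weak one (0.05).
y = [2.0 * row[1] + 0.05 * row[2] for row in X]

w = lasso_cd(X, y, alpha=0.1)
print([round(v, 3) for v in w])
```

With α = 0.1 the weak effect falls below the threshold and is removed, while the strong effect is retained but shrunk by α. This is the selection-plus-shrinkage behavior that makes Lasso coefficients sparse and interpretable.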

Diagram: GNN Architecture for Mutational Effect Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for High-Dimensional Mutational Studies

| Reagent / Solution | Provider Examples | Function in Experiment |

|---|---|---|

| Saturation Mutagenesis Kit | Twist Bioscience, NEB Phusion | Creates comprehensive "all-change" mutant libraries via doped oligo synthesis. |

| Barcoded Lentiviral Packaging System | Addgene pool libraries, Cellecta | Enables traceable, single-variant delivery into mammalian cells for phenotyping. |

| Multiplexed gRNA Library | Synthego, Sigma-Aldrich | For CRISPR-based screens linking genomic variants to complex cellular phenotypes. |

| Cell Painting Dye Set | Broad Institute protocol | Generates high-content morphological profiles as rich phenotypic readouts for ML. |

| Streptavidin-Conjugated Magnetic Beads | Dynabeads, Pierce | Used in multiplexed affinity purification steps for binding assays (MAP). |

| NGS Library Prep Kit for Low DNA Input | Illumina Nextera XT, KAPA HyperPrep | Prepares sequencing libraries from small amounts of genomic DNA recovered from sorted cells. |

| Structure Prediction API Access | AlphaFold DB, RoseTTAFold | Provides predicted 3D structures for proteins lacking experimental coordinates, enabling structural feature engineering. |

Case Study & Data Interpretation

Applying an L1-regularized linear model (Lasso) to a CAPE dataset for kinase PKX1 under drug treatment illustrates the ML advantage.

Table 3: Model Performance: Traditional vs. ML on PKX1 CAPE Data

| Metric | Multiple Linear Regression | Lasso Regression (α=0.01) | Random Forest |

|---|---|---|---|

| Training R² | 1.000 | 0.872 | 0.941 |

| Test Set R² | -2.347 (Severe Overfit) | 0.803 | 0.815 |

| Features Selected | 5000 (all) | 127 | N/A (all used) |

| Identified Resistance Mutations | 5000 (uninterpretable) | 15 known, 3 novel | 12 known, 5 novel |

| Biological Interpretability | None | High (Sparse coefficients) | Medium (Feature importance) |

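The Lasso model correctly identifies a cluster of resistance mutations in the drug-binding pocket and a novel allosteric network, findings validated by subsequent low-throughput assays. The unregularized multiple linear regression, by contrast, fits the training data perfectly (R² = 1.000) but has a negative test-set R² (-2.347), meaning it predicts held-out variants worse than a constant mean would.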

The high-dimensional, sparse nature of comprehensive mutational data, as exemplified by CAPE datasets, fundamentally invalidates the assumptions underlying traditional biostatistical analysis. Machine learning, with its capacity for regularization, incorporation of prior knowledge through embeddings and graph architectures, and robustness to the p >> n paradigm, is not just an alternative but the necessary framework for progress. The future of genetic variant interpretation and targeted drug development lies in the continued integration of sophisticated experimental phenotyping with specialized ML models.

The analysis of Cancer Associated Pathogenic Encoder (CAPE) mutant datasets represents a paradigm shift in oncology research. These datasets integrate multi-omic profiles (genomic, transcriptomic, proteomic) from tumor samples with specific, functionally validated pathogenic mutations. Framed within a broader thesis on leveraging CAPE mutants for machine learning (ML) model development, this guide details how these curated datasets fuel three core translational applications: the discovery of novel therapeutic targets, the identification of robust biomarkers, and the prediction of personalized therapy response.

Target Discovery: From CAPE Mutants to Druggable Pathways

Target discovery involves identifying molecular entities whose inhibition or activation exerts a therapeutic effect. CAPE mutant datasets are instrumental by providing a clean genetic signal—a known driver mutation—against which downstream dysregulated networks can be mapped.

Experimental Protocol: CRISPR Screening Coupled with CAPE Mutant Profiling

Objective: To identify synthetic lethal partners or essential genes specific to a CAPE mutant background.

Methodology:

- Cell Line Engineering: Isogenic cell line pairs (CAPE mutant vs. wild-type) are generated using CRISPR-Cas9 or stable overexpression systems.

- Genome-Wide Screening: A lentiviral library of single-guide RNAs (sgRNAs) targeting ~18,000 human genes is transduced into both cell lines.

- Selection & Sequencing: Cells are cultured for 14-21 population doublings under negative (dropout) selection for viability. Genomic DNA is harvested at baseline and endpoint. The sgRNA sequences are amplified via PCR and quantified by next-generation sequencing (NGS).

- Data Analysis: sgRNA depletion or enrichment is calculated using algorithms like MAGeCK or BAGEL. Genes whose targeting leads to specific lethality or fitness defect in the CAPE mutant background, but not in wild-type, are high-confidence candidate targets.

Key Data Output Table: Table 1: Example Top Synthetic Lethal Hits from a CRISPR Screen in a CAPE-X Mutant Model

| Gene Symbol | Gene Name | MAGeCK β score (Mutant) | MAGeCK β score (WT) | p-value (Mutant) | False Discovery Rate (FDR) |

|---|---|---|---|---|---|

| POLQ | DNA Pol θ | -2.75 | 0.12 | 3.5e-08 | 0.0006 |

| ATR | ATR kinase | -1.98 | -0.45 | 1.2e-05 | 0.0123 |

| WEE1 | WEE1 kinase | -1.65 | 0.33 | 4.7e-05 | 0.0281 |

| (Control) | (Essential) | -3.10 | -2.95 | <1e-10 | <1e-08 |
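The hit-calling logic in the Data Analysis step can be sketched as a filter on the difference between mutant and wild-type β scores. This is a minimal illustration that reuses the example values from Table 1; the thresholds (`max_delta`, `max_fdr`) and the function name are assumptions chosen for demonstration, not parameters of MAGeCK or BAGEL.

```python
# Example rows copied from Table 1: (gene, beta_mutant, beta_wt, fdr).
# The "<1e-08" FDR of the control row is encoded as 1e-8 for illustration.
screen = [
    ("POLQ", -2.75, 0.12, 0.0006),
    ("ATR", -1.98, -0.45, 0.0123),
    ("WEE1", -1.65, 0.33, 0.0281),
    ("CONTROL_ESSENTIAL", -3.10, -2.95, 1e-8),
]


def synthetic_lethal_hits(rows, max_delta=-1.0, max_fdr=0.05):
    """Return (gene, delta) pairs that drop out selectively in the mutant line.

    max_delta and max_fdr are hypothetical cut-offs, not published defaults.
    """
    hits = []
    for gene, beta_mut, beta_wt, fdr in rows:
        delta = beta_mut - beta_wt  # negative = stronger dropout in mutant
        if delta <= max_delta and fdr < max_fdr:
            hits.append((gene, round(delta, 2)))
    # Most mutant-selective genes first.
    return sorted(hits, key=lambda h: h[1])


hits = synthetic_lethal_hits(screen)
print(hits)
```

The pan-essential control is depleted in both backgrounds, so its β difference is small and it is excluded even though its FDR is very low.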

Pathway Visualization: Identified Target in Context

Diagram Title: Synthetic Lethality Pathway Following Target Inhibition in a CAPE Mutant

Biomarker Identification: Leveraging CAPE Datasets for Signature Development

Biomarkers derived from CAPE mutants are intrinsically linked to a causal driver event, enhancing their specificity. ML models trained on these datasets can deconvolute complex patterns into predictive signatures.

Experimental Protocol: Multi-omic Biomarker Discovery Workflow

Objective: To develop a proteomic signature predictive of CAPE mutant status from patient plasma.

Methodology:

- Cohort Selection: Patient cohorts are stratified into CAPE mutant (n=50) and wild-type (n=50) based on tumor sequencing.

- Sample Processing: Pre-treatment plasma samples are depleted of high-abundance proteins, digested, and labeled using TMTpro 16-plex reagents.

- Mass Spectrometry: LC-MS/MS analysis is performed on an Orbitrap Eclipse Tribrid mass spectrometer with a 180 min gradient.

- Data Processing & ML: Proteins are quantified and normalized. A Random Forest classifier is trained (70% samples) to distinguish mutant vs. wild-type, using feature importance to select top candidate biomarkers. The signature is validated on the hold-out test set (30%).

Key Data Output Table: Table 2: Performance Metrics of a Proteomic Biomarker Signature for CAPE-Y Mutation

| Metric | Training Set (70%) | Test Set (30%) | Notes |

|---|---|---|---|

| Number of Proteins in Signature | 12 | 12 | Top 12 features by Gini importance. |

| AUC-ROC | 0.94 | 0.88 | |

| Accuracy | 89.3% | 83.3% | |

| Sensitivity (Recall) | 91.2% | 85.0% | Ability to detect CAPE mutant. |

| Specificity | 87.5% | 81.8% | Ability to correctly identify wild-type samples. |

| Top 3 Biomarker Proteins | PROX1, SEMA3C, LIFR | PROX1, SEMA3C, LIFR | All validated by orthogonal ELISA. |
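For reference, the metrics reported in Table 2 follow from the confusion matrix of the classifier. The sketch below shows the arithmetic; the counts are hypothetical values for a 30-sample hold-out set and are not taken from the study.

```python
def classification_metrics(tp, fn, tn, fp):
    """Compute sensitivity, specificity and accuracy from confusion-matrix counts.

    tp/fn refer to CAPE-mutant samples, tn/fp to wild-type samples.
    """
    return {
        "sensitivity": tp / (tp + fn),   # mutants correctly detected
        "specificity": tn / (tn + fp),   # wild-type correctly identified
        "accuracy": (tp + tn) / (tp + fn + tn + fp),
    }


# Hypothetical hold-out set: 15 mutant and 15 wild-type samples.
metrics = classification_metrics(tp=13, fn=2, tn=12, fp=3)
for name, value in metrics.items():
    print(f"{name}: {value:.1%}")
```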

Workflow Visualization

Diagram Title: Multi-omic Biomarker Discovery and Validation Workflow

Personalized Therapy Prediction: Building ML Models on CAPE Foundations

CAPE mutant datasets provide a high-fidelity training ground for models that predict drug response, linking a clear genotype to a phenotypic outcome.

Experimental Protocol: High-Throughput Drug Screening for Model Training

Objective: To generate a dataset for training a neural network that predicts IC50 values for a library of compounds based on CAPE mutant cellular features.

Methodology:

- Panel Preparation: A panel of 100 cell lines (various CAPE mutant alleles, isogenic controls, other backgrounds) is cultured in 384-well plates.

- Drug Treatment: A library of 200 approved and investigational oncology compounds is dispensed using acoustic liquid handling across a 10-dose concentration range (0.1 nM - 10 µM).

- Viability Assay: After 72-96 hours, cell viability is measured via CellTiter-Glo luminescent assay.

- Feature Integration: Baseline features for each cell line (CAPE mutation status, RNA-seq expression of 500 hallmark genes, basal proteomics) are linked to dose-response curves.

- Model Training: A graph neural network (GNN) or multilayer perceptron (MLP) is trained to map the integrated input features to the output IC50 value for each drug.

Key Data Output Table: Table 3: Performance of a GNN Model in Predicting Drug Response (IC50) Across CAPE Mutants

| Drug Class | Model Prediction vs. Experimental IC50 (Pearson r) | Mean Absolute Error (Log nM) | Drugs with r > 0.7 |

|---|---|---|---|

| PARP Inhibitors | 0.82 | 0.31 | 4 out of 5 |

| CHEK1/ATR Inhibitors | 0.79 | 0.38 | 6 out of 8 |

| Kinase Inhibitors | 0.65 | 0.52 | 15 out of 30 |

| Chemotherapies | 0.58 | 0.61 | 8 out of 25 |

| Overall (200 drugs) | 0.71 | 0.48 | 133 out of 200 |
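The two metrics in Table 3 can be computed per drug from paired measured and predicted values. The following is a minimal NumPy sketch; the log10(IC50) values are hypothetical and only illustrate the calculation.

```python
import numpy as np


def pearson_r(y_true, y_pred):
    """Pearson correlation between measured and predicted values."""
    yt = np.asarray(y_true, dtype=float)
    yp = np.asarray(y_pred, dtype=float)
    yt = yt - yt.mean()
    yp = yp - yp.mean()
    return float((yt @ yp) / np.sqrt((yt @ yt) * (yp @ yp)))


def mean_absolute_error(y_true, y_pred):
    """Mean absolute error in the units of the inputs (here log10 nM)."""
    diff = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.mean(np.abs(diff)))


# Hypothetical log10(IC50 in nM) for one drug across four cell lines.
measured = [1.0, 2.0, 3.0, 4.0]
predicted = [1.2, 1.9, 3.4, 3.7]

r = pearson_r(measured, predicted)
mae = mean_absolute_error(measured, predicted)
print(f"r = {r:.3f}, MAE = {mae:.2f} log nM")
```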

Model Logic Visualization

Diagram Title: Neural Network Architecture for Drug Response Prediction

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Reagents and Tools for CAPE Mutant-Based Research

| Item & Vendor Example | Primary Function in Research Context |

|---|---|

| Isogenic Cell Line Pairs (Horizon) | Provides genetically matched backgrounds with/without the CAPE mutation, controlling for confounding variables. |

| CRISPRko Library (Broad Institute) | Genome-wide sgRNA libraries for performing loss-of-function genetic screens to identify synthetic lethal interactions. |

| TMTpro 16-plex Reagents (Thermo) | Tandem mass tags for multiplexed, quantitative proteomic analysis of up to 16 samples simultaneously. |

| CellTiter-Glo (Promega) | Luminescent ATP assay for high-throughput measurement of cell viability in drug screening plates. |

| Oncology Compound Library (Selleck) | Curated collection of ~200 bioactive small molecules for phenotypic screening and model training. |

| NGS Panel (Illumina TSO500) | Targeted sequencing panel for comprehensive genomic profiling, including CAPE mutant detection, in tumor samples. |

| Anti-CAPE pAb (Cell Signaling Tech) | Validated antibody for detecting CAPE mutant protein expression and localization via Western blot or IHC. |

Building and Training ML Models with CAPE Mutant Data: A Step-by-Step Guide

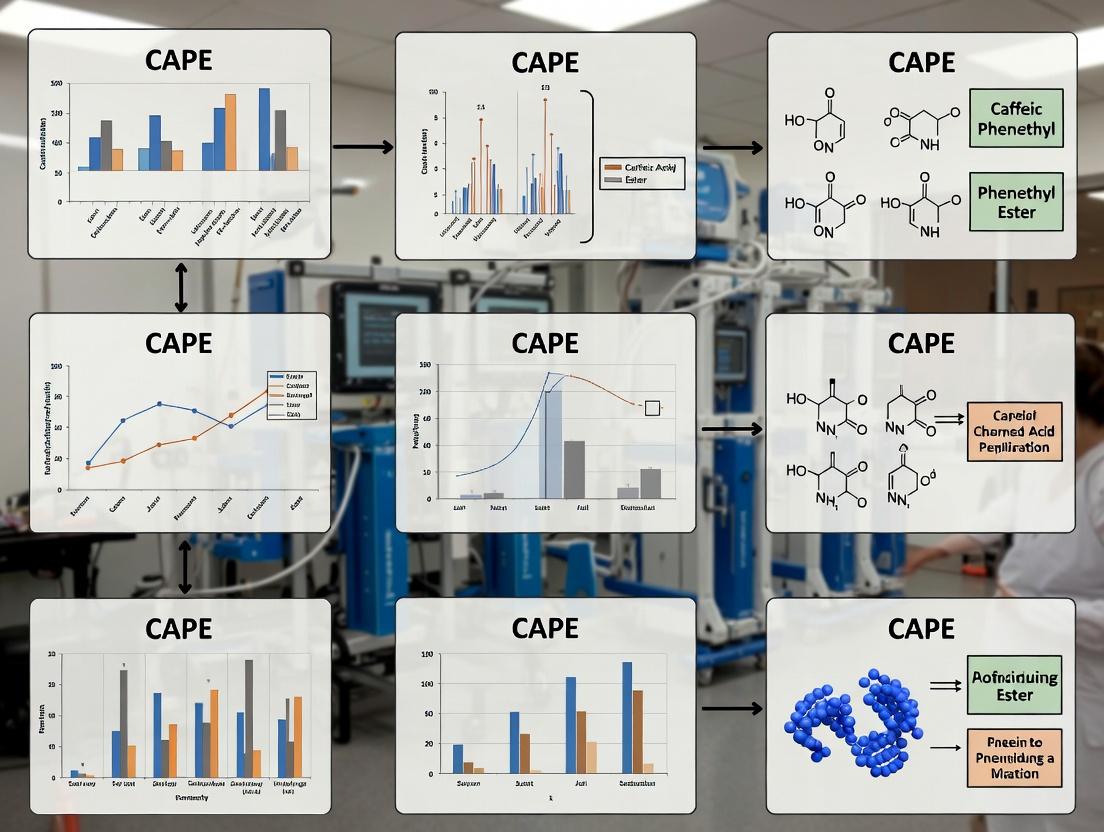

This technical guide details the essential preprocessing steps for CAPE (Caffeic Acid Phenethyl Ester) mutant datasets, a critical component of a broader thesis applying machine learning to drug discovery. CAPE, a bioactive compound from propolis, exhibits varied pharmacological effects depending on its chemical derivatives and target mutants. A robust preprocessing pipeline is paramount to extract meaningful biological signals for predictive modeling of compound efficacy and interaction.

Data Normalization

Raw CAPE data from high-throughput screening (HTS) or '-omics' platforms suffers from systematic technical variance. Normalization mitigates this, enabling fair feature comparison.

Table 1: Common Normalization Techniques for CAPE Datasets

| Technique | Formula | Use-Case for CAPE Data | Key Assumption |

|---|---|---|---|

| Z-Score | $z = \frac{x - \mu}{\sigma}$ | Normalizing bioactivity scores (e.g., IC₅₀) across different assay batches. | Data is approximately normally distributed. |

| Min-Max | $x' = \frac{x - \min(x)}{\max(x) - \min(x)}$ | Scaling molecular descriptor ranges (e.g., logP, molecular weight) to [0,1] for neural networks. | Bounded range; sensitive to outliers. |

| Quantile | Maps sample quantiles to a reference distribution. | Normalizing gene expression profiles from mutant vs. wild-type cell lines treated with CAPE analogs. | Makes data distribution identical across samples. |

| Robust Scaler | $x' = \frac{x - \operatorname{median}(x)}{\operatorname{IQR}(x)}$ | Handling outlier IC₅₀ values in dose-response curves. | Uses median/IQR; resistant to outliers. |
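The four techniques in Table 1 can be written in a few lines of NumPy. This is an illustrative sketch, not a replacement for a tested library implementation; the quantile normalization assumes a features × samples matrix and ignores ties.

```python
import numpy as np


def z_score(x):
    x = np.asarray(x, dtype=float)
    return (x - x.mean()) / x.std()


def min_max(x):
    x = np.asarray(x, dtype=float)
    return (x - x.min()) / (x.max() - x.min())


def robust_scale(x):
    x = np.asarray(x, dtype=float)
    q1, median, q3 = np.percentile(x, [25, 50, 75])
    return (x - median) / (q3 - q1)


def quantile_normalize(matrix):
    """Force every column (sample) onto the same reference distribution.

    Rows are features, columns are samples; ties are not averaged.
    """
    m = np.asarray(matrix, dtype=float)
    ranks = np.argsort(np.argsort(m, axis=0), axis=0)  # rank of each value in its column
    reference = np.sort(m, axis=0).mean(axis=1)        # mean value at each rank
    return reference[ranks]


ic50 = [1.0, 2.0, 3.0, 4.0, 100.0]  # hypothetical values with one outlier
print(min_max(ic50))       # outlier compresses the other values toward 0
print(robust_scale(ic50))  # median/IQR keeps the bulk of the data spread out
```

Comparing the last two outputs shows why the robust scaler is preferred when a few extreme IC₅₀ values are present.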

Experimental Protocol: Batch Effect Correction via ComBat

- Input: A matrix of bioactivity readings (e.g., viability %) with rows (samples) and columns (features), annotated with batch ID (e.g., assay plate, day).

- Model: Fit an empirical Bayes model (ComBat) to estimate batch-specific location (α) and scale (β) parameters.

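- Adjustment: Adjust the data: $x_{ij}^{adj} = \frac{x_{ij} - \hat{\alpha}_j}{\hat{\beta}_j} \cdot \hat{\beta} + \hat{\alpha}$, where $j$ denotes batch, $\hat{\alpha}_j$ and $\hat{\beta}_j$ are the batch-specific location and scale estimates, and $\hat{\alpha}$ and $\hat{\beta}$ are the corresponding pooled values.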

- Output: Batch-corrected matrix for downstream analysis.
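The adjustment step can be illustrated with a plain location-and-scale correction. Note that this sketch deliberately omits the empirical Bayes shrinkage that defines ComBat, so it is a simplified stand-in rather than ComBat itself; the function name and the simulated plate data are assumptions.

```python
import numpy as np


def location_scale_adjust(x, batch):
    """Standardize each batch per feature, then rescale to the pooled mean and SD.

    Simplified stand-in for ComBat: no empirical Bayes shrinkage, no covariates.
    x has shape (n_samples, n_features); batch has one label per sample.
    """
    x = np.asarray(x, dtype=float)
    batch = np.asarray(batch)
    pooled_mean = x.mean(axis=0)
    pooled_std = x.std(axis=0, ddof=1)
    adjusted = np.empty_like(x)
    for b in np.unique(batch):
        rows = batch == b
        alpha_j = x[rows].mean(axis=0)        # batch-specific location
        beta_j = x[rows].std(axis=0, ddof=1)  # batch-specific scale
        adjusted[rows] = (x[rows] - alpha_j) / beta_j * pooled_std + pooled_mean
    return adjusted


# Simulated viability readings from two assay plates with different offsets.
rng = np.random.default_rng(0)
plate_a = rng.normal(50, 5, size=(20, 3))
plate_b = rng.normal(70, 10, size=(20, 3))
data = np.vstack([plate_a, plate_b])
labels = np.array(["A"] * 20 + ["B"] * 20)

corrected = location_scale_adjust(data, labels)
print(corrected[labels == "A"].mean(axis=0))
print(corrected[labels == "B"].mean(axis=0))
```

After correction both plates share the same per-feature mean and standard deviation. With small batches the unshrunk estimates are noisy, which is the situation the empirical Bayes step in ComBat is designed to handle.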

Feature Engineering

This step creates informative predictors from raw data, capturing domain knowledge.

Table 2: Feature Engineering for CAPE Mutant Analysis

| Feature Category | Derived Features | Computation Method | Biological Relevance |

|---|---|---|---|

| Molecular Descriptors | Morgan Fingerprints (2048 bits), Topological Polar Surface Area (TPSA), Number of Rotatable Bonds. | RDKit or PaDEL-Descriptor. | Predicts pharmacokinetics (absorption, permeability) of CAPE analogs. |

| Interaction Features | Docking score variance, Predicted binding affinity (ΔG) for mutant vs. wild-type protein. | Molecular docking simulations (AutoDock Vina). | Quantifies structural impact of mutation on CAPE binding. |

| Aggregate Stats | Mean/Std of replicate viability readings, AUC from dose-response curves. | Curve fitting (e.g., four-parameter logistic model). | Creates robust, summary-level bioactivity endpoints. |

Experimental Protocol: Dose-Response Curve Feature Extraction

- Data: Measured response (e.g., % inhibition) for a CAPE mutant across 8-12 compound concentrations (in triplicate).

- Model Fitting: Fit a 4-parameter logistic (4PL) model: $y = D + \frac{A-D}{1+\left(\frac{x}{C}\right)^B}$, where A = bottom, D = top, C = IC₅₀, B = Hill slope.

- Feature Extraction: Solve model to extract features: IC₅₀ (C), E_max (D), Hill Slope (B), and Area Under the Curve (AUC) calculated from the fitted curve.

- Output: A vector [IC₅₀, E_max, Hill Slope, AUC] per compound-mutant pair.
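A possible implementation of this protocol uses `scipy.optimize.curve_fit` with the 4PL parameterization given above. The starting values, parameter bounds, and the noise-free example data are assumptions made for illustration; the AUC is computed over log10 concentration with the trapezoid rule.

```python
import numpy as np
from scipy.optimize import curve_fit


def four_pl(x, a, b, c, d):
    """4PL: a = response at zero dose, d = response at saturating dose,
    c = IC50, b = Hill slope."""
    return d + (a - d) / (1.0 + (x / c) ** b)


def extract_features(conc, response):
    conc = np.asarray(conc, dtype=float)
    response = np.asarray(response, dtype=float)
    # Heuristic starting point and loose bounds (assumed, not from the protocol).
    p0 = [response.min(), 1.0, np.median(conc), response.max()]
    bounds = ([-np.inf, 0.1, 1e-6, -np.inf], [np.inf, 10.0, 1e3, np.inf])
    (a, b, c, d), _ = curve_fit(four_pl, conc, response, p0=p0, bounds=bounds)
    fitted = four_pl(conc, a, b, c, d)
    log_x = np.log10(conc)
    # Trapezoid rule on the fitted curve over log10 concentration.
    auc = float(np.sum((fitted[1:] + fitted[:-1]) / 2.0 * np.diff(log_x)))
    return {"ic50": float(c), "e_max": float(d), "hill": float(b), "auc": auc}


# Hypothetical 9-point series (0.001 to 10 uM) with noise-free % inhibition.
conc = np.logspace(-3, 1, 9)
inhibition = four_pl(conc, 0.0, 1.2, 0.5, 100.0)
features = extract_features(conc, inhibition)
print(features)
```

With real, noisy replicates the fit may fail to converge or return an IC₅₀ outside the tested range, so such cases are usually flagged rather than passed on as features.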

Dimensionality Reduction

Reduces feature space complexity, mitigates overfitting, and reveals latent structures.

Table 3: Dimensionality Reduction Methods Comparison

| Method | Type | Key Hyperparameters | Best for CAPE Data When... |

|---|---|---|---|

| PCA | Linear, Unsupervised | Number of components, Variance threshold. | Seeking maximum variance in molecular descriptor space; initial exploration. |

| UMAP | Non-linear, Unsupervised | `n_neighbors`, `min_dist`, `metric`. | Visualizing clusters of mutant phenotypes based on multi-omics profiles. |

| t-SNE | Non-linear, Unsupervised | Perplexity, learning rate. | Creating illustrative 2D/3D plots of compound similarity. |

| PLS-DA | Linear, Supervised | Number of latent variables. | Reducing dimensions directly correlated with a target (e.g., resistant vs. sensitive mutant class). |

Experimental Protocol: Principal Component Analysis (PCA)

- Input: Normalized, scaled, and mean-centered feature matrix $X$ (n_samples × n_features).

- Covariance Matrix: Compute $C = \frac{X^TX}{n-1}$, where $n$ is the number of samples.

- Eigendecomposition: Solve $Cv = \lambda v$ to obtain eigenvalues ($\lambda$) and eigenvectors ($v$).

- Projection: Sort eigenvectors by $\lambda$ (descending). Select top $k$ eigenvectors as components. Project data: $T = XW_k$, where $W_k$ is the matrix of top $k$ eigenvectors.

- Output: Reduced dataset $T$ (n_samples × k components), variance explained per component.
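The PCA steps above translate directly into NumPy. This is a minimal sketch on random data; the function name and the example matrix are illustrative only.

```python
import numpy as np


def pca(x, k):
    """PCA via eigendecomposition of the covariance matrix.

    Returns the projected data (n_samples x k) and the fraction of
    variance explained by each retained component.
    """
    x = np.asarray(x, dtype=float)
    x_centered = x - x.mean(axis=0)
    cov = x_centered.T @ x_centered / (x.shape[0] - 1)
    eigvals, eigvecs = np.linalg.eigh(cov)  # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1]       # re-sort to descending
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    w_k = eigvecs[:, :k]
    return x_centered @ w_k, eigvals[:k] / eigvals.sum()


rng = np.random.default_rng(0)
descriptors = rng.normal(size=(50, 5))  # hypothetical 50 samples x 5 features
scores, explained = pca(descriptors, k=2)
print(scores.shape, explained)
```

`np.linalg.eigh` is used because the covariance matrix is symmetric, which guarantees real eigenvalues and orthogonal eigenvectors.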

Visualization of Workflows & Pathways

CAPE Data Preprocessing Pipeline Workflow

Putative Signaling Pathways for CAPE Analogs

The Scientist's Toolkit

Table 4: Essential Research Reagent Solutions for CAPE Studies

| Item | Function in CAPE Research | Example/Supplier |

|---|---|---|

| Recombinant Mutant Proteins | In vitro binding assays (SPR, ITC) to quantify CAPE analog affinity differences. | SignalChem, BPS Bioscience. |

| Isogenic Mutant Cell Lines | CRISPR-engineered lines to study CAPE effects in a controlled genetic background. | ATCC, Horizon Discovery. |

| CAPE & Derivative Libraries | Structurally related compounds for SAR (Structure-Activity Relationship) analysis. | MedChemExpress, Sigma-Aldrich. |

| Phospho-Specific Antibodies | Western blot analysis to measure pathway inhibition (e.g., p-STAT3, p-p65). | Cell Signaling Technology. |

| Cell Viability Assay Kits | High-throughput screening of CAPE analogs against mutant cell panels. | CellTiter-Glo (Promega). |

| Molecular Docking Software | In silico prediction of CAPE mutant binding poses and affinities. | AutoDock Vina, Schrödinger. |

| Cheminformatics Suites | Compute molecular descriptors and fingerprints for CAPE analogs. | RDKit, OpenBabel. |

The Cancer Protein Atlas Enhancement (CAPE) mutant data sets represent a curated, high-dimensional repository of genetic variations, functional annotations, and phenotypic outcomes in oncology. Within the context of a broader thesis, these datasets serve as a critical benchmark for evaluating machine learning models' capacity to predict oncogenicity, drug resistance, and functional impact of mutations. The inherent structure of biological data—from hierarchical phylogenetic relationships (trees) to complex protein-protein interaction networks (graphs)—demands a nuanced algorithmic approach.

Algorithmic Foundations & Suitability for Mutational Data

Tree-Based Models (e.g., XGBoost, Random Forest)

Tree-based models excel at handling tabular CAPE data with a mix of categorical (e.g., mutation type, gene symbol) and continuous (e.g., expression fold-change, binding affinity) features. They provide inherent feature importance metrics, crucial for identifying driver mutations.

Key Strengths:

- Insensitive to monotonic feature scaling; gradient-boosted implementations such as XGBoost also handle missing values natively.

- Interpretable through SHAP or native importance scores.

- Efficient on structured, tabular phenotypic data.

Primary Use Case: Initial predictive screening of mutation oncogenicity from static, feature-row representations.

Deep Neural Networks (DNNs / CNNs)

DNNs, particularly Multi-Layer Perceptrons (MLPs) and Convolutional Neural Networks (CNNs), are applied to sequential and spatial representations of mutational data (e.g., protein amino acid sequences, 3D voxelized structural data).

Key Strengths:

- Can learn high-level abstractions from raw sequence (one-hot encoded) or structural data.

- Superior capacity for modeling non-linear, complex interactions within a single protein's context.

Primary Use Case: Predicting mutation effects from protein sequence windows or resolved structural patches.

Graph Neural Networks (GNNs)

GNNs operate directly on the mutational network, where nodes represent entities (proteins, mutations, cells) and edges represent interactions (physical binding, regulatory influence, functional association). This naturally models the CAPE data within a systems biology context.

Key Strengths:

- Captures network effects and propagation of mutational impact through signaling pathways.

- Integrates multiple biological relationship types (heterogeneous graphs).

Primary Use Case: Predicting phenotype (e.g., drug response) from the position and context of a mutation within a protein-protein interaction or signaling network.
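To make the idea of mutational impact propagating through a network concrete, here is a single graph-convolution layer in NumPy using the commonly used symmetric normalization with self-loops. The three-protein chain, the single "mutated" feature, and the identity weight matrix are toy assumptions, not CAPE data or a trained model.

```python
import numpy as np


def gcn_layer(adj, features, weights):
    """One graph-convolution step: ReLU(D^-1/2 (A + I) D^-1/2 H W)."""
    a_hat = adj + np.eye(adj.shape[0])  # add self-loops
    deg = a_hat.sum(axis=1)
    d_inv_sqrt = np.diag(deg ** -0.5)
    a_norm = d_inv_sqrt @ a_hat @ d_inv_sqrt
    return np.maximum(a_norm @ features @ weights, 0.0)


# Toy chain of three proteins: P0 - P1 - P2. Only P0 carries a mutation.
adj = np.array([[0, 1, 0],
                [1, 0, 1],
                [0, 1, 0]], dtype=float)
mutated = np.array([[1.0], [0.0], [0.0]])
w = np.eye(1)  # identity weights so only the propagation is visible

h1 = gcn_layer(adj, mutated, w)  # signal reaches the direct neighbour P1
h2 = gcn_layer(adj, h1, w)       # a second layer reaches P2
print(h1.ravel(), h2.ravel())
```

Each layer extends the receptive field by one hop, which is why multi-layer GNNs can relate a mutation to proteins several interactions away.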

Quantitative Performance Comparison on CAPE Benchmarks

Data synthesized from recent literature (2023-2024) benchmarking models on CAPE-derived tasks.

Table 1: Algorithm Performance on CAPE Mutational Prediction Tasks

| Algorithm Class | Specific Model | Task | Key Metric | Score | Data Input Type |

|---|---|---|---|---|---|

| Tree-Based | XGBoost | Oncogenicity Classification | AUC-PR | 0.89 | Tabular (1024 features) |

| Tree-Based | Random Forest | Drug Sensitivity (IC50) | RMSE | 1.24 (log nM) | Tabular (780 features) |

| Deep Neural Net | 1D-CNN | Pathogenic vs. Benign | AUC-ROC | 0.94 | Protein Sequence (500aa window) |

| Deep Neural Net | MLP | Stability Change (ΔΔG) | Pearson's r | 0.72 | Physicochemical & Structural |

| Graph Neural Net | Graph Convolutional Network (GCN) | Pathway Disruption | Macro F1 | 0.81 | PPI Network (8,123 nodes) |

| Graph Neural Net | Graph Attention Network (GAT) | Synthetic Lethality Prediction | AUC-ROC | 0.92 | Heterogeneous Bio-KG |

Experimental Protocols for Key Cited Studies

Protocol: Benchmarking Tree Models for Driver Mutation Prediction

- Data Partition: CAPE v2.1 dataset split by gene family (stratified) into 70/15/15 train/validation/test sets.

- Feature Engineering: Compute 15 complementary functional prediction scores (e.g., SIFT, PolyPhen2), 5 structural features, and 1000-dimensional covariate matrix from TCGA.

- Model Training: Train XGBoost with 5-fold cross-validation on training set, optimizing hyperparameters (`max_depth`, `learning_rate`, `subsample`) via Bayesian optimization targeting AUC-PR.

- Evaluation: Hold-out test set evaluated for AUC-PR, precision@90% recall. Compute SHAP values for global and instance-wise interpretability.
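The precision@90% recall metric from the evaluation step can be computed by ranking predictions and scanning the ranked list. The sketch below is one straightforward way to do it; the labels and scores are hypothetical, and tied scores are not treated specially.

```python
import numpy as np


def precision_at_recall(y_true, scores, min_recall=0.90):
    """Best precision achievable at any threshold with recall >= min_recall."""
    y_true = np.asarray(y_true)
    scores = np.asarray(scores, dtype=float)
    order = np.argsort(-scores)               # highest score first
    hits = np.cumsum(y_true[order] == 1)      # true positives at each cut-off
    recall = hits / hits[-1]
    precision = hits / np.arange(1, len(y_true) + 1)
    return float(precision[recall >= min_recall].max())


# Hypothetical driver (1) vs. passenger (0) labels with model scores.
labels = [1, 1, 0, 1, 0, 1, 0, 0, 1, 0]
scores = [0.95, 0.90, 0.85, 0.80, 0.70, 0.60, 0.50, 0.40, 0.30, 0.20]
p_at_90 = precision_at_recall(labels, scores)
print(f"precision@90% recall = {p_at_90:.3f}")
```

In this toy case the last true driver is ranked ninth, so reaching 90% recall requires accepting nine predictions, five of which are correct.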

Protocol: GNN for Mutation-Affected Signaling Pathway Identification

- Graph Construction: Build directed graph from STRING & SIGNOR databases. Nodes: Proteins. Edges: Signaling/phosphorylation events. Annotate nodes with mutation status and functional readout from CAPE.

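- Node Representation: Initialize node features as 256-dimensional vectors derived from protein language model (ESM-2) embeddings. Native ESM-2 embeddings have at least 320 dimensions, so this implies a projection or dimensionality-reduction step.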

- Model Architecture: Implement a 3-layer GAT with multi-head attention (8 heads). Final readout: graph pooling followed by MLP classifier for pathway activity state.

- Training: Use supervised loss (Binary Cross-Entropy) for known disrupted pathways. Train for 200 epochs with early stopping.

Visualizing Signaling Pathways & Workflows

Diagram Title: Key Oncogenic Signaling Pathway with Mutational Bypass

Diagram Title: Algorithm Selection Workflow for Mutational Data

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents & Materials for CAPE ML Studies

| Item / Reagent | Provider / Example | Function in Experimental Pipeline |

|---|---|---|

| CAPE Dataset (Curated) | CAPE Consortium Portal | Primary source of annotated mutant phenotypes, serving as ground truth for model training and testing. |

| Protein Language Model Embeddings (ESM-2) | Meta AI / HuggingFace | Generates contextual, fixed-dimensional feature vectors for protein sequences, used as node/feature input. |

| STRING/ SIGNOR Database | STRING-db / SIGNOR | Provides verified protein-protein interaction and signaling network data for biological graph construction. |

| SHAP (SHapley Additive exPlanations) | GitHub SHAP Library | Post-hoc model interpretability tool to explain predictions of any ML model, identifying driving features. |

| Deep Graph Library (DGL) / PyTorch Geometric | DGL Team / PyTorch | Specialized libraries for efficient implementation and training of Graph Neural Network models. |

| TCGA Covariate Matrix | GDC Data Portal | Provides high-dimensional genomic, transcriptomic, and clinical co-variates for feature augmentation. |

| PDB Structural Data | RCSB Protein Data Bank | Source of 3D protein structures for deriving spatial features or constructing structural graphs. |

| UCSC Genome Browser Tools | UCSC | For mapping and contextualizing mutations within genomic and regulatory regions. |

In the context of research on Cancer-Associated Protein Engineering (CAPE) mutant data sets for machine learning model development, the precise numerical representation of genetic variants is paramount. This technical guide details methodologies for encoding key variant classes—synonymous/non-synonymous mutations, splicing variants, and structural impacts—into feature vectors suitable for predictive modeling in computational biology and drug discovery.

Table 1: Impact Scores and Encoding Ranges for Variant Classes

| Feature Class | Sub-type | Common Encoding Method | Typical Value Range | Reference Data Source |

|---|---|---|---|---|

| Synonymous/Non-Synonymous | Synonymous (Silent) | Binary or Functional Impact Score | 0 (or 0.0-0.1) | dbNSFP, CADD |

| Synonymous/Non-Synonymous | Missense | Continuous Impact Score | ~1-30 (CADD) | dbNSFP, CADD |

| Synonymous/Non-Synonymous | Nonsense | Continuous Impact Score | ~30-50 (CADD) | dbNSFP, CADD |

| Splicing Variants | Splice Acceptor/Donor | Probabilistic / Score | MaxEntScan: ΔScore; SPIDEX: Δψ | dbscSNV, SPIDEX |

| Splicing Variants | Exonic Splicing Enhancer/Silencer | Regulatory Score | ESE/ESS score changes | dbscSNV |

| Structural Impacts | ΔΔG (Stability) | Continuous (kcal/mol) | -5 to +5 kcal/mol | DynaMut2, ENCoM |

| Structural Impacts | Surface Accessibility (ΔRSA) | Continuous (%) | -100 to +100% | SAAFEC-SEQ |

| Structural Impacts | B-factor / Flexibility | Z-score Normalized | Variable | DynaMut2 |

Table 2: Sample CAPE Dataset Feature Representation Schema

| Feature Name | Description | Data Type | Normalization |

|---|---|---|---|

| `mut_cadd_phred` | CADD Scaled Score for pathogenicity | Float | Z-score |

| `spliceai_ds` | SpliceAI Delta Score (acceptor/donor gain/loss) | Float (0-1) | Min-Max |

| `saav_rsa` | Relative Solvent Accessibility Change (%) | Float | Decimal Scaling |

| `mut_type` | One-hot: Missense, Nonsense, Silent, Frameshift | Categorical (Binary Vector) | One-Hot Encoding |

| `conservation_gerp` | Evolutionary conservation (GERP++) | Float | Robust Scaling |
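As an illustration of the schema in Table 2, the sketch below turns one annotated variant into a flat feature vector. The dataset-level statistics in `stats`, the example variant, and the choice to treat the SpliceAI score bounds as 0 and 1 are all assumptions made for the example.

```python
MUT_TYPES = ["Missense", "Nonsense", "Silent", "Frameshift"]


def one_hot(mut_type):
    """Binary indicator vector over the four mutation types in the schema."""
    return [1.0 if mut_type == t else 0.0 for t in MUT_TYPES]


def encode_variant(variant, stats):
    """Apply the per-feature normalization named in the schema."""
    cadd_z = (variant["mut_cadd_phred"] - stats["cadd_mean"]) / stats["cadd_std"]
    splice = variant["spliceai_ds"]        # min-max with assumed bounds 0 and 1
    rsa = variant["saav_rsa"] / 100.0      # percent scaled by a power of ten
    gerp = (variant["conservation_gerp"] - stats["gerp_median"]) / stats["gerp_iqr"]
    return [cadd_z, splice, rsa, gerp] + one_hot(variant["mut_type"])


# Hypothetical dataset-level statistics and a single example variant.
stats = {"cadd_mean": 15.0, "cadd_std": 10.0, "gerp_median": 2.5, "gerp_iqr": 4.0}
variant = {
    "mut_cadd_phred": 25.0,
    "spliceai_ds": 0.12,
    "saav_rsa": -40.0,
    "conservation_gerp": 4.5,
    "mut_type": "Missense",
}
vector = encode_variant(variant, stats)
print(vector)
```

Statistics such as the mean and IQR should be estimated on the training split only and then reused for validation and test variants, to avoid leaking information across splits.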

Experimental Protocols for Feature Derivation

Protocol: Deriving Functional Impact Scores from dbNSFP

- Objective: Generate a consolidated feature vector for missense variants.

- Materials: dbNSFP database file (e.g., `dbNSFP4.3a.zip`), ANNOVAR or VEP, custom script (Python/R).

- Method:

  - Annotation: Annotate your VCF file using `annotate_variation.pl` (ANNOVAR) with a dbNSFP annotation database, or VEP with the dbNSFP plugin.

  - Score Extraction: Extract columns for `CADD_phred`, `REVEL_score`, `MutPred_score`, `DANN_score`.

  - Missing Data Imputation: For variants missing a specific score, use k-nearest neighbors imputation based on amino acid properties and conservation.

  - Normalization: Apply Z-score normalization per score across the entire CAPE dataset.

  - Aggregation: Create a composite score via supervised learning (if labels exist) or principal component analysis (PCA) to reduce dimensionality to 1-2 key features.

Protocol: Quantifying Splicing Alterations using SPIDEX and MaxEntScan

- Objective: Calculate numerical features representing splicing disruption probability.

- Materials: Genomic coordinates & sequences (FASTA), SPIDEX data, MaxEntScan Perl scripts.

- Method:

  - Splice Site Strength (ΔScore):

    - Extract wild-type and mutant splice site sequences in the lengths MaxEntScan expects: a 9-mer for 5′ sites (3 exonic + 6 intronic bases) and a 23-mer for 3′ sites (20 intronic + 3 exonic bases).

    - Run `score5.pl` and `score3.pl` (MaxEntScan) on both sequences.

    - Feature = `log2((mutant_score + 0.01) / (wildtype_score + 0.01))`. MaxEntScan scores can be negative, in which case this ratio is undefined; the plain difference (mutant − wild-type) is an alternative.

  - Splicing Percentage Change (Δψ):

    - Query precomputed SPIDEX Z-scores or Δψ values by genomic position (SPIDEX is distributed in hg19 coordinates, so lift over hg38 positions first).

    - For tissue-specific CAPE models, use relevant tissue-specific Δψ values (e.g., from breast or lung tissue tables).

    - Feature = `Δψ` (directly) or binarized as `abs(Δψ) > 0.1`.
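The two splicing features can be derived from already-computed scores as follows. This sketch assumes the MaxEntScan and SPIDEX values have been obtained separately; because MaxEntScan scores can be negative, the log-ratio helper returns `None` when the ratio is undefined rather than guessing a value. Function names are illustrative.

```python
import math


def maxent_log_ratio(mutant_score, wildtype_score, pseudocount=0.01):
    """log2((mutant + 0.01) / (wildtype + 0.01)), or None if undefined."""
    num = mutant_score + pseudocount
    den = wildtype_score + pseudocount
    if num <= 0 or den <= 0:
        return None  # negative MaxEntScan score: log-ratio not defined
    return math.log2(num / den)


def dpsi_features(dpsi, threshold=0.1):
    """Return the raw delta-psi and its binarized form (abs(dpsi) > threshold)."""
    return {"dpsi": dpsi, "dpsi_disruptive": int(abs(dpsi) > threshold)}


# Hypothetical scores: a donor site weakened from 8.0 to 2.0.
ratio = maxent_log_ratio(mutant_score=2.0, wildtype_score=8.0)
undefined = maxent_log_ratio(mutant_score=-3.2, wildtype_score=8.0)
splice = dpsi_features(-0.25)
print(ratio, undefined, splice)
```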

Protocol: Computing Protein Structural Impact Features with DynaMut2

- Objective: Encode changes in protein stability and dynamics.

- Materials: Wild-type PDB file, mutant identifier (e.g., `P00519:p.G12C`), DynaMut2 API or local installation.

- Method:

  - Input Preparation: Ensure PDB file is cleaned (remove waters, heteroatoms) or use a modeled structure from AlphaFold.

  - Submission: Submit job to DynaMut2 web server or run locally via command line with default parameters.

  - Feature Extraction: Parse output to obtain:

    - `ΔΔG`: Predicted change in folding free energy (kcal/mol).

    - `ΔVibENM`: Change in vibrational entropy (flexibility).

    - `ΔBSA`: Change in buried surface area.

  - Post-processing: Combine `ΔΔG` and `ΔVibENM` into a single "structural destabilization" score using a weighted sum, where weights are optimized via grid search on your CAPE model's performance.

Visualization of Workflows and Relationships

Diagram Title: CAPE Variant Feature Encoding Pipeline

Diagram Title: Variant-to-Phenotype Impact Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Resources for Feature Encoding

| Item | Function / Purpose | Example / Source |

|---|---|---|

| Annotation Suites | Adds functional context (gene, region, consequence) to raw variants. | ANNOVAR, Ensembl VEP, SnpEff |

| Impact Score Databases | Provides pre-computed pathogenicity & functional scores for features. | dbNSFP, CADD, REVEL, AlphaMissense |

| Splicing Prediction Tools | Quantifies the impact on splice sites and regulatory elements. | MaxEntScan, SpliceAI, SPIDEX |

| Structural Analysis Suites | Predicts changes in protein stability, dynamics, and interactions. | DynaMut2, FoldX, SAAFEC-SEQ, ENCoM |

| Conservation Scores | Encodes evolutionary constraint, a key prior for functional impact. | GERP++, PhyloP, PhastCons |

| ML-Ready Datasets | Benchmarking and training data for CAPE-related models. | Cancer Genome Atlas (TCGA), ClinVar, gnomAD |

| Programming Environment | Flexible environment for custom pipeline development. | Python (Biopython, pandas, scikit-learn), R (tidyverse, bioconductor) |

This whitepaper presents an end-to-end technical guide for developing a machine learning model to predict sensitivity to Poly (ADP-ribose) polymerase (PARP) inhibitors, a critical class of targeted oncology therapeutics. The work is framed within a broader research thesis investigating the utility of Cancer Portal for Engineering (CAPE) mutant datasets for building robust, translatable predictive models in drug development. CAPE aggregates large-scale, standardized functional genomic data from cancer cell lines—including CRISPR knockout screens, gene expression, and mutational profiles—providing a unified resource for training models that link genetic perturbations to phenotypic drug response.

Background: PARP Inhibition and Synthetic Lethality

PARP enzymes (primarily PARP1) are involved in DNA single-strand break repair. Inhibition of PARP traps the enzyme on DNA, leading to replication fork collapse and the formation of double-strand breaks (DSBs). In cells with deficient homologous recombination (HR) repair—often due to mutations in genes like BRCA1 or BRCA2—this leads to synthetic lethality. While BRCA mutations are a primary biomarker, de novo and acquired resistance are common, necessitating models that account for a broader genetic context.

Diagram 1: PARP Inhibitor Synthetic Lethality Mechanism

Core Data: Sourcing and Structuring from CAPE

The model training relies on the CAPE mutant data ecosystem, which integrates several key data types. The primary quantitative data is summarized below.

Table 1: Core CAPE Data Components for PARPi Sensitivity Modeling

| Data Type | CAPE Source/Assay | Key Features for Model | Example Metrics/Scale |

|---|---|---|---|

| Genetic Perturbation | Genome-wide CRISPR-Cas9 knockout screens post-PARPi treatment. | Gene essentiality scores (e.g., CERES, Chronos) under selective pressure. Identifies synthetic lethal partners and resistance genes. | Gene Effect Score: Range ~[-2, 2]; more negative = more essential. |

| Drug Response | High-throughput dose-response profiling across cell line panels (e.g., PRISM, GDSC). | IC50, AUC, Emax values for PARP inhibitors (Olaparib, Talazoparib, Niraparib). | AUC (Area Under Curve): 0-100% inhibition; log(IC50) in µM. |

| Molecular Features | Multi-omics profiling of baseline cell lines. | Mutation status (e.g., BRCA1/2, other HR genes), copy number variations, gene expression (RNA-seq), protein abundance (RPPA). | Mutation: Binary (0/1); CNA: log2 ratio; Expression: log2(TPM+1). |

| Lineage Metadata | Cell line annotations. | Tissue/cancer type, source institution. Used for stratification and bias checking. | Categorical (e.g., "Breast," "Ovarian"). |

Detailed Experimental & Computational Protocol

Data Curation and Preprocessing

- Objective: Assemble a unified, clean dataset from CAPE resources.

- Steps:

- Cell Line Intersection: Identify the intersection of cell lines present in the CRISPR screen (post-PARPi), PARPi drug response dataset, and molecular feature datasets. Perform an inner join to create a core set of lines with all data types.

- Feature Engineering:

- Create a binarized HR Deficiency (HRD) score. Combine mutation calls for a curated gene set (BRCA1, BRCA2, PALB2, RAD51C, RAD51D, etc.) with a genomic scar signature (e.g., large-scale state transitions) from copy number data.

- Calculate pathway activity scores from gene expression data (e.g., using single-sample GSEA) for DNA repair pathways.

- From CRISPR screens, derive differential essentiality = (Gene Effect under PARPi) - (Gene Effect in control).

- Response Variable Definition: Use the continuous AUC value for a specific PARPi (e.g., Olaparib) as the primary regression target. For a classification task, binarize sensitivity using a threshold (e.g., top 20% sensitive vs. bottom 20% resistant based on AUC).
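The curation steps above can be sketched with plain Python sets and NumPy quantiles. All cell-line IDs, gene effect values, and AUC values below are hypothetical, and the sketch assumes that a lower viability AUC means greater sensitivity; reverse the comparison if the AUC is defined on an inhibition scale.

```python
import numpy as np

# Hypothetical cell-line IDs available in each data type.
crispr_lines = {"A", "B", "C", "D", "E", "F"}
drug_lines = {"B", "C", "D", "E", "F", "G"}
omics_lines = {"A", "B", "C", "D", "E", "F", "G"}
core_lines = sorted(crispr_lines & drug_lines & omics_lines)  # inner join


def differential_essentiality(effect_parpi, effect_control):
    """Gene effect under PARPi minus gene effect in the control arm."""
    return {g: effect_parpi[g] - effect_control[g]
            for g in effect_parpi if g in effect_control}


def binarize_auc(auc_by_line, frac=0.2):
    """Label the lowest-AUC fraction sensitive (1) and the highest resistant (0).

    Assumes lower AUC = more sensitive; lines in between are dropped.
    """
    values = np.array(list(auc_by_line.values()), dtype=float)
    low_cut, high_cut = np.quantile(values, [frac, 1.0 - frac])
    labels = {}
    for line, auc in auc_by_line.items():
        if auc <= low_cut:
            labels[line] = 1
        elif auc >= high_cut:
            labels[line] = 0
    return labels


diff = differential_essentiality({"PARP1": 0.10, "POLQ": -1.20},
                                 {"PARP1": -0.30, "POLQ": -0.40})
labels = binarize_auc({"B": 0.35, "C": 0.80, "D": 0.55, "E": 0.92, "F": 0.60})
print(core_lines, diff, labels)
```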

Model Development Workflow

- Objective: Train and validate a predictive model for PARPi sensitivity.

- Algorithm Selection: Gradient Boosting Machines (e.g., XGBoost) are suitable due to their ability to handle non-linear relationships, mixed data types, and feature importance estimation.

- Protocol:

- Split Strategy: Perform a stratified split by cancer type to ensure representativeness across training (70%), validation (15%), and hold-out test (15%) sets.

- Feature Selection: Apply variance filtering and correlation analysis. Use the validation set with recursive feature elimination (RFE) guided by model performance to select the top ~50-100 features.

- Hyperparameter Tuning: Use Bayesian optimization on the validation set to tune learning rate, tree depth, number of estimators, and regularization parameters.

- Training: Train the final model on the combined training and validation sets with optimal hyperparameters.

- Evaluation: Assess on the held-out test set using:

- Regression: Mean Absolute Error (MAE), R² score.

- Classification: AUC-ROC, Precision, Recall, F1-score.
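The stratified 70/15/15 split described in the protocol can be written without any ML library. This is a minimal sketch with hypothetical cell-line names; rounding means very small lineages may contribute no validation or test lines, which should be checked on real data.

```python
import random
from collections import defaultdict


def stratified_split(lineage_by_line, fractions=(0.70, 0.15, 0.15), seed=0):
    """Split cell lines into train/validation/test within each cancer type."""
    rng = random.Random(seed)
    by_lineage = defaultdict(list)
    for line, lineage in sorted(lineage_by_line.items()):
        by_lineage[lineage].append(line)
    train, val, test = [], [], []
    for lines in by_lineage.values():
        rng.shuffle(lines)
        n_train = round(fractions[0] * len(lines))
        n_val = round(fractions[1] * len(lines))
        train += lines[:n_train]
        val += lines[n_train:n_train + n_val]
        test += lines[n_train + n_val:]  # remainder goes to the hold-out set
    return train, val, test


# Hypothetical panel: 20 breast and 20 ovarian lines.
panel = {f"BR_{i:02d}": "Breast" for i in range(20)}
panel.update({f"OV_{i:02d}": "Ovarian" for i in range(20)})
train, val, test = stratified_split(panel)
print(len(train), len(val), len(test))
```

Fixing the seed keeps the hold-out set identical across experiments, so the test lines are never seen during feature selection or hyperparameter tuning.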

Diagram 2: Model Development and Validation Workflow

In Vitro Validation Protocol (Example)

- Objective: Experimentally validate a top model-predicted novel sensitivity gene (CDK12 loss).

- Cell Lines: Select 2-3 BRCA-wildtype, HR-proficient lines predicted sensitive and 2 predicted resistant.

- Reagents: PARP inhibitor (Olaparib), siRNA pools (targeting CDK12 and non-targeting control), transfection reagent.

- Procedure:

- Day 1: Seed cells in 96-well plates at optimal density.

- Day 2: Transfect cells with CDK12 or control siRNA using lipid-based transfection per manufacturer protocol.

- Day 3: Treat cells with an 8-point serial dilution of Olaparib (e.g., 10 µM to 0.001 µM) in triplicate. Include DMSO vehicle controls.

- Day 6/7: Assess viability using CellTiter-Glo 3D luminescent assay.

- Analysis: Calculate % viability normalized to DMSO controls. Generate dose-response curves and compute IC50 values. Compare the shift in IC50 between CDK12-knockdown and control conditions in predicted sensitive vs. resistant lines. Statistical significance assessed via two-way ANOVA.
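The analysis step can be sketched as follows: normalize luminescence to the DMSO control, estimate the IC50 by log-linear interpolation, and report the fold shift between conditions. Interpolation is used here only as a simple stand-in for a full dose-response fit, and the five-point viability values are hypothetical.

```python
import numpy as np


def percent_viability(luminescence, dmso_mean):
    """Express raw luminescence as a percentage of the DMSO vehicle control."""
    return 100.0 * np.asarray(luminescence, dtype=float) / dmso_mean


def ic50_by_interpolation(conc, viability):
    """Concentration where viability crosses 50%, interpolated on a log10 scale.

    Expects ascending concentrations; returns None if 50% is never crossed.
    """
    log_c = np.log10(np.asarray(conc, dtype=float))
    v = np.asarray(viability, dtype=float)
    for i in range(len(v) - 1):
        if v[i] >= 50.0 > v[i + 1]:
            frac = (v[i] - 50.0) / (v[i] - v[i + 1])
            return float(10 ** (log_c[i] + frac * (log_c[i + 1] - log_c[i])))
    return None


conc_um = [0.001, 0.01, 0.1, 1.0, 10.0]  # hypothetical Olaparib doses (uM)
control_sirna = percent_viability([1000, 980, 900, 700, 300], dmso_mean=1000)
cdk12_sirna = percent_viability([1000, 950, 700, 300, 100], dmso_mean=1000)

ic50_control = ic50_by_interpolation(conc_um, control_sirna)
ic50_cdk12 = ic50_by_interpolation(conc_um, cdk12_sirna)
fold_shift = ic50_control / ic50_cdk12
print(ic50_control, ic50_cdk12, fold_shift)
```

A fold shift well above 1 in predicted-sensitive lines, and close to 1 in predicted-resistant lines, would support the model's prediction; the two-way ANOVA named in the protocol is still needed to assess significance.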

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents for PARPi Sensitivity Validation

| Reagent / Material | Provider Examples | Function in Validation Experiments |

|---|---|---|

| PARP Inhibitors (Small Molecules) | Selleckchem, MedChemExpress, AstraZeneca (for research use). | Tool compounds to induce synthetic lethality in HRD models. Olaparib is the most widely used. |

| Validated siRNA or sgRNA Libraries | Dharmacon (siRNA), Broad Institute GPP (sgRNA). | For targeted genetic knockdown (siRNA) or knockout (CRISPR sgRNA) of model-identified genes (e.g., CDK12) to confirm phenotype. |

| Cell Viability/Cytotoxicity Assays | Promega (CellTiter-Glo), Thermo Fisher (AlamarBlue). | Luminescent or fluorescent readout of cell health and proliferation after PARPi treatment, enabling IC50 calculation. |

| HRD Reporter Assays (e.g., DR-GFP, RFP-GFP) | Addgene (plasmid constructs), specialized contract research. | Direct functional measurement of Homologous Recombination repair proficiency in cell lines. |

| Antibodies for Immunoblotting | Cell Signaling Technology, Abcam. | Confirm protein knockdown (e.g., CDK12) and assess DNA damage response markers (γH2AX, PAR, Cleaved PARP). |

| CAPE Data Portal & Analysis Tools | CAPE Public Website, DepMap. | Primary source for training data, including CRISPR and drug response datasets, with built-in query and visualization tools. |

Results Interpretation and Pathway Insights

Model interpretation via feature importance (e.g., SHAP values) should reveal known and novel predictors. Expected strong contributors include:

- Direct HR Gene Mutations: BRCA1, BRCA2.

- HR Pathway Expression Signatures.

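- Differential Essentiality Genes: e.g., PARP1 itself (loss of PARP1 removes the trapping target and typically confers PARPi resistance, so interpret the sign of its score accordingly), EME1 (MUS81-EME1 structure-specific endonuclease, which resolves recombination intermediates).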

A pathway diagram integrating model findings can be generated.

Diagram 3: Expanded DNA Repair Pathway Context from Model Insights

This end-to-end use case demonstrates the power of leveraging standardized, large-scale functional genomics datasets like CAPE to build predictive models for targeted therapy. The resulting model moves beyond simple BRCA mutation status to a multifactorial assessment of PARP inhibitor sensitivity, offering a framework for identifying novel biomarkers, patient stratification strategies, and combination therapy targets. This work directly supports the broader thesis that CAPE mutant datasets are indispensable for developing next-generation, clinically informative machine learning models in oncology.

Integration with Drug Response Data (e.g., GDSC, CTRP) for Therapeutic Outcome Modeling

This whitepaper provides an in-depth technical guide for integrating large-scale pharmacogenomic datasets, specifically the Genomics of Drug Sensitivity in Cancer (GDSC) and the Cancer Therapeutics Response Portal (CTRP), with CAPE (Comprehensive Atlas of Pharmacogenomic Essentiality) mutant datasets. Framed within a broader thesis on leveraging CAPE mutants for machine learning-driven therapeutic discovery, this guide details methodologies for data harmonization, feature engineering, model training, and validation to predict drug response and identify novel therapeutic vulnerabilities.

CAPE mutant datasets systematically profile genetic alterations across cancer cell lines, providing a rich feature set for predictive modeling. Integration with drug response databases like GDSC and CTRP enables the construction of models that link genomic context to therapeutic outcome. This synergy is critical for advancing personalized oncology and drug repositioning.

Key Pharmacogenomic Databases: GDSC & CTRP

Current versions (as of late 2023) of these databases provide extensive dose-response data.

Table 1: Comparison of GDSC and CTRP Databases

| Feature | GDSC (v2.0) | CTRP (v2.0) |

|---|---|---|

| Cell Lines | ~1,000 human cancer cell lines | ~1,000 cancer cell lines |

| Compounds | ~250 targeted & chemotherapeutic agents | ~545 small molecules |

| Primary Metric | IC50 (half-maximal inhibitory concentration), AUC (area under the dose-response curve) | AUC (area under the concentration-response curve) |

| Genomic Data | CNV, mutation (COSMIC), gene expression, methylation | CNV, mutation (CCLE-based), gene expression |

| Access | Public portal (https://www.cancerrxgene.org) | Broad Institute DepMap portal |

Table 2: Representative Drug Response Statistics (Aggregate)

| Database | Median AUC Range | Median IC50 Range (µM) | Tissue Types Covered |

|---|---|---|---|

| GDSC | 0.1 - 0.9 | 0.001 - 100 | 30+ |

| CTRP | 0.15 - 0.85 | Not Primary Metric | 30+ |

Core Experimental & Computational Protocols

Protocol 1: Data Harmonization and Preprocessing

Objective: Merge CAPE mutant features with GDSC/CTRP response matrices.

- Cell Line Matching: Use standardized identifiers (e.g., COSMIC ID, DepMap ID) to map cell lines common to CAPE, GDSC, and CTRP.

- Mutation Encoding: Encode CAPE mutant status (e.g., missense, truncating) as binary (1/0) or categorical features.

- Response Variable Processing: For GDSC, log-transform IC50 values. For both, use AUC as a normalized sensitivity score (0-1).

- Batch Effect Correction: Apply ComBat or similar algorithms to normalize response data across experimental batches.

- Creation of Unified Matrices: Generate a features-by-samples matrix (X) and a drugs-by-samples response matrix (Y).
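The harmonization steps above can be sketched with a toy example. This is an illustrative, dependency-light version using plain dictionaries and NumPy; the identifiers, genes, drugs, and IC50 values are hypothetical, and batch-effect correction is omitted. Real inputs would be loaded from the CAPE, GDSC, and CTRP downloads.

```python
import numpy as np

# Hypothetical toy inputs keyed by a shared cell line identifier.
cape_mutations = {                 # cell line ID -> set of mutated genes
    "ACH-000001": {"KRAS", "TP53"},
    "ACH-000002": {"BRCA1"},
    "ACH-000003": {"TP53"},
}
gdsc_ic50_um = {                   # cell line ID -> {drug: IC50 in µM}
    "ACH-000001": {"olaparib": 12.0, "trametinib": 0.02},
    "ACH-000002": {"olaparib": 0.5},
    "ACH-000004": {"olaparib": 30.0},
}

def harmonize(mutations, ic50_table):
    """Build unified matrices restricted to cell lines present in both sources.

    X is features-by-samples (binary mutation status).
    Y is drugs-by-samples (natural-log IC50, NaN where a drug was not screened).
    """
    cell_lines = sorted(set(mutations) & set(ic50_table))
    genes = sorted(set().union(*(mutations[c] for c in cell_lines)))
    drugs = sorted(set().union(*(ic50_table[c].keys() for c in cell_lines)))
    X = np.array([[1 if g in mutations[c] else 0 for c in cell_lines] for g in genes])
    Y = np.full((len(drugs), len(cell_lines)), np.nan)
    for j, c in enumerate(cell_lines):
        for i, d in enumerate(drugs):
            if d in ic50_table[c]:
                Y[i, j] = np.log(ic50_table[c][d])
    return X, Y, cell_lines, genes, drugs

X, Y, cell_lines, genes, drugs = harmonize(cape_mutations, gdsc_ic50_um)
```

Keeping unscreened drug and cell line pairs as NaN, rather than dropping them, preserves the matrix shape for later imputation or per-drug modeling.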

Protocol 2: Feature Selection for CAPE Mutants

Objective: Identify predictive genomic features from CAPE data.

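- Univariate Analysis: For each drug and each mutant feature, test for association with response, for example with a Wilcoxon rank-sum test on AUC between mutant and wild-type lines, or with Fisher's exact test on mutation status between sensitive (AUC < 0.2) and resistant (AUC > 0.8) groups. Adjust p-values for multiple testing (e.g., Benjamini-Hochberg).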

- Multi-task Lasso Regression: Implement Lasso regularization across multiple drugs to select mutants with consistent predictive power. Use 10-fold cross-validation to tune the penalty parameter (λ).

- Pathway Enrichment: Feed selected mutant genes to Enrichr or GSEA to identify enriched biological pathways (e.g., RTK/RAS, PI3K signaling).
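To make the Lasso-based selection step concrete, here is a minimal sketch of L1-penalized regression solved by cyclic coordinate descent. It is a simplification of the protocol: it handles a single drug rather than a multi-task setting, uses a fixed penalty instead of tuning λ by 10-fold cross-validation, and runs on simulated binary mutation features (all values are synthetic). In practice a library implementation such as scikit-learn would typically be used.

```python
import numpy as np

def soft_threshold(z, t):
    """Shrink z toward zero by t; values within [-t, t] become exactly 0."""
    return np.sign(z) * max(abs(z) - t, 0.0)

def lasso_coordinate_descent(X, y, lam, n_iter=200):
    """Minimize (1/2n)||y - Xb||^2 + lam * ||b||_1 by cyclic coordinate descent.

    X is samples-by-features here (the transpose of a features-by-samples matrix)
    and is assumed to be column-centered, with y centered as well.
    """
    n, p = X.shape
    b = np.zeros(p)
    col_sq = (X ** 2).sum(axis=0) / n
    residual = y - X @ b
    for _ in range(n_iter):
        for j in range(p):
            if col_sq[j] == 0.0:
                continue                          # constant feature, nothing to fit
            residual += X[:, j] * b[j]            # remove feature j's contribution
            rho = X[:, j] @ residual / n
            b[j] = soft_threshold(rho, lam) / col_sq[j]
            residual -= X[:, j] * b[j]            # add the updated contribution back
    return b

# Synthetic example: 10 binary mutant features, only features 0 and 3 affect response.
rng = np.random.default_rng(0)
mutants = rng.integers(0, 2, size=(200, 10)).astype(float)
response = 0.3 * mutants[:, 0] - 0.2 * mutants[:, 3] + 0.02 * rng.standard_normal(200)

Xc = mutants - mutants.mean(axis=0)               # center features
yc = response - response.mean()                   # center response
coef = lasso_coordinate_descent(Xc, yc, lam=0.01)
selected = np.flatnonzero(np.abs(coef) > 1e-8).tolist()
```

The indices in `selected` are the features with non-zero coefficients; the corresponding genes would then be passed to the pathway enrichment step.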

Protocol 3: Machine Learning Model Training & Validation

Objective: Build a predictive model for drug AUC.

- Algorithm Selection: Employ Elastic Net, Random Forest, or Gradient Boosting for baseline. Use deep neural networks for non-linear integration.

- Training Schema: Implement a nested cross-validation: Outer loop (5-fold) for performance estimation; inner loop (3-fold) for hyperparameter tuning.

- Performance Metrics: Calculate Root Mean Square Error (RMSE), Pearson correlation (r) between predicted and observed AUC, and R².

- Validation: Test generalizability on hold-out cell lines or external datasets like CCLE.
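The nested cross-validation schema can be illustrated with a small NumPy-only sketch. As an assumption for brevity, closed-form ridge regression stands in for Elastic Net or tree-based models, the hyperparameter grid is arbitrary, and the data are simulated; the structure (outer 5-fold for performance, inner 3-fold for tuning) is the part being demonstrated.

```python
import numpy as np

def ridge_fit(X, y, alpha):
    """Closed-form ridge regression with an unpenalized intercept."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc = X - x_mean
    w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]), Xc.T @ (y - y_mean))
    return w, y_mean - x_mean @ w

def ridge_predict(model, X):
    w, b = model
    return X @ w + b

def rmse(y_true, y_pred):
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def k_folds(n, k, rng):
    """Split shuffled indices 0..n-1 into k roughly equal folds."""
    return np.array_split(rng.permutation(n), k)

def nested_cv(X, y, alphas, outer_k=5, inner_k=3, seed=0):
    rng = np.random.default_rng(seed)
    scores = []
    for test_idx in k_folds(len(y), outer_k, rng):
        train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
        X_tr, y_tr = X[train_idx], y[train_idx]
        inner = k_folds(len(y_tr), inner_k, rng)

        def inner_error(alpha):
            # Mean validation RMSE over inner folds, using outer-training data only.
            errs = []
            for val_idx in inner:
                fit_idx = np.setdiff1d(np.arange(len(y_tr)), val_idx)
                model = ridge_fit(X_tr[fit_idx], y_tr[fit_idx], alpha)
                errs.append(rmse(y_tr[val_idx], ridge_predict(model, X_tr[val_idx])))
            return np.mean(errs)

        best_alpha = min(alphas, key=inner_error)
        pred = ridge_predict(ridge_fit(X_tr, y_tr, best_alpha), X[test_idx])
        y_te = y[test_idx]
        r2 = 1.0 - np.sum((y_te - pred) ** 2) / np.sum((y_te - y_te.mean()) ** 2)
        scores.append({
            "alpha": best_alpha,
            "rmse": rmse(y_te, pred),
            "pearson_r": float(np.corrcoef(y_te, pred)[0, 1]),
            "r2": float(r2),
        })
    return scores

# Simulated data: 150 cell lines, 8 binary mutant features, AUC-like response.
rng = np.random.default_rng(1)
X = rng.integers(0, 2, size=(150, 8)).astype(float)
w_true = np.array([0.3, -0.2, 0.0, 0.0, 0.1, 0.0, 0.0, 0.0])
y = 0.5 + X @ w_true + 0.02 * rng.standard_normal(150)
scores = nested_cv(X, y, alphas=[0.01, 1.0, 100.0])
```

Because the test fold of each outer split never participates in choosing `alpha`, the outer-fold metrics give a less optimistic performance estimate than a single tuned cross-validation would.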

Visualization of Workflows and Pathways

Diagram: Data Integration and Modeling Workflow

Diagram: KRAS Mutant Signaling and Drug Target Pathways

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Integration Experiments

| Item / Resource | Function / Description | Example / Source |

|---|---|---|

| DepMap Portal | Primary access point for unified cell line data, including CTRP. | https://depmap.org/portal/ |

| CancerRxGene | Official portal for downloading GDSC datasets and tools. | https://www.cancerrxgene.org |

| COSMIC Cell Lines | Authoritative source for cell line identifiers and genomic data. | Catalogue of Somatic Mutations in Cancer |

| PharmacoGx R Package | BioConductor package for standardized analysis of pharmacogenomic data. | https://bioconductor.org/packages/PharmacoGx |

| PyTorch / TensorFlow | Deep learning frameworks for building complex neural network models. | Open-source libraries |

| scikit-learn | Machine learning library for classic algorithms (ElasticNet, RF) and utilities. | Open-source library |

| CCLE Dataset | External validation dataset for genomic features and drug response. | Broad Institute DepMap |

Overcoming Challenges: Solutions for Noisy, Sparse, and Imbalanced CAPE Data in ML

This whitepaper provides an in-depth technical guide to addressing data sparsity and missing values within mutational landscapes, framed within broader thesis research on Cancer-Associated Protein Ensembles (CAPE) mutant datasets for machine learning (ML) model development. CAPE datasets, which aggregate somatic mutations, germline variants, and functional annotations across protein families, are inherently sparse. This sparsity arises from uneven sequencing coverage, varying assay sensitivities, and the biological reality that most possible mutations are unobserved. Effective imputation (the statistical inference of missing values) is therefore critical for constructing robust feature matrices to train predictive models of drug response, protein function, and pathogenic potential.

The Nature of Sparsity in CAPE Mutational Data

Missingness in CAPE datasets is not random. The mechanism falls primarily under Missing Not At Random (MNAR), where the probability of a value being missing depends on the unobserved value itself. For example, deleterious mutations may be missing because they are lethal and thus unculturable in functional assays. This necessitates techniques that model the missingness mechanism. A typical CAPE dataset matrix exhibits >90% sparsity.

Table 1: Common Sources and Types of Missing Data in CAPE Studies

| Source of Missingness | Data Type Affected | Missingness Mechanism | Typical % Missing |

|---|---|---|---|

| Low-Throughput Functional Assays | Functional scores (e.g., fitness, activity) | MNAR (non-functional variants not assayed) | 70-95% |

| Variant Calling Thresholds | Allele Frequency | MCAR/MAR (technical noise) | 10-30% |

| Unperformed Experiments | Drug IC50, Binding Affinity | MAR (dependent on prior screening results) | 50-80% |

| Evolutionary Constraints | Deep mutational scanning data | MNAR (lethal mutations not observed) | 85-99% |

Advanced Imputation Techniques: Methodologies and Protocols

Moving beyond simple mean/median imputation, advanced methods leverage the structure of the mutational landscape.

Collaborative Filtering (Matrix Factorization)

Principle: Borrows the user-item rating paradigm, treating genes/proteins as "users" and mutations as "items." It factorizes the observed data matrix into lower-dimensional latent feature matrices.

Protocol for CAPE Data:

- Matrix Construction: Construct matrix M of dimensions m (proteins) x n (mutations). Entries are functional scores (e.g., ∆∆G, fitness effect). Expect ≥90% of entries to be missing; hold out a subset of the observed entries for validation.

- Optimization: Solve for latent matrices P (protein features) and Q (mutation features) by minimizing the loss L = Σ_(i,j)∈Ω (M_ij − P_i · Q_j)² + λ(‖P‖² + ‖Q‖²), where Ω is the set of observed entries and λ is the regularization parameter.

- Imputation: The product P·Q^T yields a fully imputed matrix. Implement using stochastic gradient descent or alternating least squares.

- Validation: Use cross-validation on held-out observed values to tune latent dimension (k=10-100) and λ.
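The factorization protocol above can be sketched with alternating least squares in NumPy. This is an illustrative implementation under stated assumptions: the matrix is a synthetic rank-3 example, the latent dimension and λ are fixed rather than tuned, and the function and variable names are hypothetical.

```python
import numpy as np

def als_impute(M, observed, k=3, lam=0.1, n_iter=30, seed=0):
    """Factorize M ≈ P @ Q.T using only entries where `observed` is True.

    Each row update is a small ridge regression, which minimizes the squared
    error over observed entries plus lam * (||P||^2 + ||Q||^2).
    """
    rng = np.random.default_rng(seed)
    m, n = M.shape
    P = 0.1 * rng.standard_normal((m, k))
    Q = 0.1 * rng.standard_normal((n, k))
    reg = lam * np.eye(k)
    for _ in range(n_iter):
        for i in range(m):                        # update protein factors
            cols = observed[i]
            if cols.any():
                Qi = Q[cols]
                P[i] = np.linalg.solve(Qi.T @ Qi + reg, Qi.T @ M[i, cols])
        for j in range(n):                        # update mutation factors
            rows = observed[:, j]
            if rows.any():
                Pj = P[rows]
                Q[j] = np.linalg.solve(Pj.T @ Pj + reg, Pj.T @ M[rows, j])
    return P @ Q.T                                # fully imputed matrix

# Synthetic low-rank "functional score" matrix: 30 proteins x 40 mutations.
rng = np.random.default_rng(42)
truth = rng.standard_normal((30, 3)) @ rng.standard_normal((40, 3)).T
observed = rng.random(truth.shape) < 0.6
held_out = observed & (rng.random(truth.shape) < 0.2)   # validation entries
train_mask = observed & ~held_out
M = np.where(train_mask, truth, np.nan)

imputed = als_impute(M, train_mask)
rmse_mf = float(np.sqrt(np.mean((imputed[held_out] - truth[held_out]) ** 2)))
rmse_mean = float(np.sqrt(np.mean((np.nanmean(M) - truth[held_out]) ** 2)))
```

Comparing `rmse_mf` with the global-mean baseline `rmse_mean` on the held-out entries mirrors the validation step: the same comparison, repeated over a grid of `k` and `lam`, is how those hyperparameters would be tuned.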

Multitask Gaussian Processes (MTGPs)

Principle: Models multiple correlated prediction tasks (e.g., functional scores across different assay conditions) simultaneously, sharing information across tasks via a shared covariance kernel.

Protocol for CAPE Data:

- Kernel Definition: Define a composite kernel K = K_protein ⊗ K_mutation, where ⊗ denotes Kronecker product. K_mutation can be based on biophysical (BLOSUM62) or evolutionary (EVmutation) similarity.

- Model Training: Assume data follows a multivariate Gaussian distribution. Learn hyperparameters (length scales, noise) by maximizing the marginal likelihood of all observed data across all related tasks (e.g., drug screens).

- Prediction: The conditional distribution of missing values given observed data is Gaussian. The mean of this distribution provides the imputed value, with variance quantifying uncertainty.

- Tools: Implement using the GPy or GPflow libraries.
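The prediction step can be illustrated directly in NumPy for a small problem. This sketch makes several assumptions that differ from a production setup: hyperparameters are fixed by hand rather than learned by maximizing the marginal likelihood, a simple RBF kernel over a 1-D position stands in for a BLOSUM62- or EVmutation-based similarity, a 2x2 task covariance stands in for the cross-task kernel, and the data are synthetic. Libraries such as GPy or GPflow would handle hyperparameter learning and scaling.

```python
import numpy as np

def rbf_kernel(a, b, length_scale):
    """Squared-exponential kernel between two 1-D coordinate arrays."""
    d2 = (a[:, None] - b[None, :]) ** 2
    return np.exp(-0.5 * d2 / length_scale ** 2)

def kron_gp_impute(Y, observed, K_task, K_input, noise_var=0.01):
    """Posterior mean and variance for every entry of Y (tasks x inputs).

    Uses a constant-mean GP whose covariance over the row-major flattening
    of Y is kron(K_task, K_input).
    """
    K = np.kron(K_task, K_input)
    idx = np.flatnonzero(observed.ravel())        # positions of observed entries
    y_obs = Y.ravel()[idx]
    mean_obs = y_obs.mean()
    K_oo = K[np.ix_(idx, idx)] + noise_var * np.eye(len(idx))
    K_ao = K[:, idx]                              # all entries vs observed entries
    post_mean = mean_obs + K_ao @ np.linalg.solve(K_oo, y_obs - mean_obs)
    reduction = np.einsum("ij,ji->i", K_ao, np.linalg.solve(K_oo, K_ao.T))
    post_var = np.diag(K) - reduction
    return post_mean.reshape(Y.shape), post_var.reshape(Y.shape)

# Synthetic example: two correlated assay conditions over 12 mutation positions.
positions = np.arange(12.0)
K_input = rbf_kernel(positions, positions, length_scale=2.0)
K_task = np.array([[1.0, 0.8], [0.8, 1.0]])       # assumed cross-task covariance
Y_true = np.vstack([np.sin(positions / 2.0), 0.9 * np.sin(positions / 2.0)])

observed = np.ones(Y_true.shape, dtype=bool)
observed[1, 1::2] = False                         # task 2 missing at odd positions
Y = np.where(observed, Y_true, np.nan)

post_mean, post_var = kron_gp_impute(Y, observed, K_task, K_input)
missing = ~observed
rmse_gp = float(np.sqrt(np.mean((post_mean[missing] - Y_true[missing]) ** 2)))
rmse_mean = float(np.sqrt(np.mean((Y[observed].mean() - Y_true[missing]) ** 2)))
```

The posterior variance in `post_var` is what distinguishes this approach from the other imputers: entries with little nearby evidence receive a larger variance and can be down-weighted or flagged downstream.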

Deep Learning-Based: Denoising Autoencoders (DAE)

Principle: A neural network trained to reconstruct its input from a corrupted (noisy/missing) version, learning a robust latent representation that captures the data manifold.

Protocol for CAPE Data:

- Input Corruption: For each training epoch, randomly mask an additional 20-50% of the already-observed entries in the sparse input vector for each sample.

- Network Architecture: Use a deep (3-5 layer) fully connected network with a bottleneck layer (latent dimension << input dimension). Activation functions: ReLU. Output layer: linear.

- Training: Minimize Mean Squared Error (MSE) loss between the original uncorrupted observed values and the network's output, using Adam optimizer.

- Imputation: To impute a sample with missing values, pass it through the trained autoencoder. The network's output for the missing positions is the imputed value.

Diagram 1: Denoising Autoencoder Workflow for Imputation
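A compact NumPy sketch of the denoising autoencoder workflow follows. It is deliberately simplified relative to the protocol: a single hidden (bottleneck) layer instead of 3-5 layers, plain full-batch gradient descent instead of Adam, and synthetic low-rank data. In practice PyTorch or TensorFlow would be used; the function names here are hypothetical.

```python
import numpy as np

def train_dae(X, observed, hidden=8, mask_rate=0.3, lr=0.05, epochs=1000, seed=0):
    """Train a one-hidden-layer denoising autoencoder.

    Missing entries are fed as 0. Each epoch additionally masks a random
    fraction of observed entries; the loss is MSE over observed entries only.
    """
    rng = np.random.default_rng(seed)
    d = X.shape[1]
    X0 = np.where(observed, X, 0.0)
    W1 = rng.standard_normal((d, hidden)) * np.sqrt(2.0 / d)
    b1 = np.zeros(hidden)
    W2 = rng.standard_normal((hidden, d)) * np.sqrt(1.0 / hidden)
    b2 = np.zeros(d)
    n_obs = observed.sum()
    for _ in range(epochs):
        keep = rng.random(X.shape) >= mask_rate   # extra corruption of the input
        X_in = X0 * keep
        H_pre = X_in @ W1 + b1
        H = np.maximum(H_pre, 0.0)                # ReLU bottleneck
        out = H @ W2 + b2                         # linear output layer
        grad_out = 2.0 * (out - X0) * observed / n_obs
        grad_H = (grad_out @ W2.T) * (H_pre > 0)
        W2 -= lr * (H.T @ grad_out)
        b2 -= lr * grad_out.sum(axis=0)
        W1 -= lr * (X_in.T @ grad_H)
        b1 -= lr * grad_H.sum(axis=0)
    return W1, b1, W2, b2

def dae_reconstruct(params, X, observed):
    W1, b1, W2, b2 = params
    X0 = np.where(observed, X, 0.0)
    return np.maximum(X0 @ W1 + b1, 0.0) @ W2 + b2

def masked_mse(params, X, observed):
    """Reconstruction error on observed entries, using uncorrupted input."""
    recon = dae_reconstruct(params, X, observed)
    return float(np.mean((recon[observed] - X[observed]) ** 2))

def dae_impute(params, X, observed):
    """Keep observed values; fill missing positions with the network output."""
    return np.where(observed, X, dae_reconstruct(params, X, observed))

# Synthetic low-rank data: 100 samples x 20 features, about 30% missing.
rng = np.random.default_rng(1)
X_full = rng.standard_normal((100, 3)) @ rng.standard_normal((3, 20)) / np.sqrt(3)
observed = rng.random(X_full.shape) < 0.7
X = np.where(observed, X_full, np.nan)

initial = train_dae(X, observed, epochs=0)        # untrained weights (same seed)
trained = train_dae(X, observed)
imputed = dae_impute(trained, X, observed)
```

Comparing `masked_mse(initial, X, observed)` with `masked_mse(trained, X, observed)` gives a quick sanity check that training reduced reconstruction error before the imputed values are used.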

Experimental Validation Protocol for Imputation Accuracy

A robust benchmark is essential.

- Data Splitting: From the set of observed values, create three subsets: Training Set (70%), Validation Set (15%), and Test Set (15%).

- Artificial Sparsity Induction: For the Validation and Test sets, artificially mask a portion (e.g., 50%) of their observed values to create "ground-truth-hidden" pairs.

- Imputation & Metric Calculation: Train imputation models on the Training Set. Predict the artificially masked values in the Validation/Test sets.

- Evaluation Metrics: Calculate:

  - Normalized Root Mean Square Error (NRMSE): NRMSE = RMSE / (max(observed) - min(observed)).

  - Pearson Correlation Coefficient (r): Between imputed and true hidden values.

  - Spearman's Rank Correlation (ρ): Assesses preservation of ordinal relationships.
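The three evaluation metrics can be computed with a few NumPy helpers, sketched below. One assumption to note: the NRMSE here is normalized by the range of the true hidden values passed in, which may differ slightly from the range of all observed values. The example numbers are hypothetical.

```python
import numpy as np

def nrmse(true, imputed):
    """RMSE divided by the range (max - min) of the true values."""
    true = np.asarray(true, dtype=float)
    imputed = np.asarray(imputed, dtype=float)
    rmse = np.sqrt(np.mean((true - imputed) ** 2))
    return float(rmse / (true.max() - true.min()))

def pearson_r(a, b):
    return float(np.corrcoef(a, b)[0, 1])

def average_ranks(x):
    """Ranks starting at 1, with tied values assigned their average rank."""
    x = np.asarray(x, dtype=float)
    order = np.argsort(x, kind="mergesort")
    ranks = np.empty(len(x))
    ranks[order] = np.arange(1, len(x) + 1)
    for value in np.unique(x):
        tied = x == value
        ranks[tied] = ranks[tied].mean()
    return ranks

def spearman_rho(a, b):
    """Spearman correlation computed as the Pearson correlation of ranks."""
    return pearson_r(average_ranks(a), average_ranks(b))

# Hypothetical hidden ground-truth values and their imputed counterparts.
true_hidden = [1.0, 2.0, 3.0, 4.0, 5.0]
imputed_vals = [1.1, 1.9, 3.2, 3.8, 5.1]

metrics = {
    "nrmse": nrmse(true_hidden, imputed_vals),
    "pearson_r": pearson_r(true_hidden, imputed_vals),
    "spearman_rho": spearman_rho(true_hidden, imputed_vals),
}
```

Reporting all three together is useful because a method can preserve rank order (high ρ) while still being poorly calibrated in absolute terms (high NRMSE).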

Table 2: Comparative Performance of Imputation Techniques on a Simulated CAPE Dataset

| Imputation Method | NRMSE (↓) | Pearson's r (↑) | Spearman's ρ (↑) | Computational Cost |

|---|---|---|---|---|

| Mean Imputation (Baseline) | 0.245 | 0.31 | 0.28 | Low |

| k-Nearest Neighbors (k=10) | 0.198 | 0.52 | 0.49 | Medium |

| Collaborative Filtering (k=50) | 0.121 | 0.79 | 0.76 | Medium-High |

| Multitask Gaussian Process | 0.118 | 0.81 | 0.78 | High |

| Denoising Autoencoder (3-layer) | 0.115 | 0.83 | 0.80 | Medium (Post-Training) |