The Prediction Paradox: Strategies for Balancing Speed and Accuracy in Protein & Molecular Structure Prediction

Abstract

This article provides a comprehensive analysis of the critical trade-off between speed and accuracy in computational structure prediction for biomedical research. Targeting researchers and drug development professionals, we explore the fundamental principles of this dichotomy, survey cutting-edge methodologies like AI/ML integration and hybrid pipelines, offer practical troubleshooting and optimization strategies for real-world projects, and establish frameworks for rigorous validation and comparative analysis. The article synthesizes actionable insights to empower scientists in designing efficient, reliable, and scalable prediction workflows.

The Fundamental Trade-off: Understanding the Core Dilemma of Speed vs. Accuracy in Computational Biology

Technical Support Center: Troubleshooting Guides and FAQs

FAQ Section: Common Issues in Structure Prediction Workflows

Q1: During high-throughput virtual screening, my molecular docking results show an unusually high number of false-positive hits with excellent docking scores but poor experimental activity. What could be the cause and how can I address this?

A: This is a common issue tied to the inherent speed/accuracy trade-off. Potential causes and solutions include:

- Cause: Overly simplistic scoring functions optimized for speed over physical accuracy.

- Solution: Implement a multi-stage filtering protocol. Use a fast, less accurate scoring function for initial screening (e.g., Vina, PLP) followed by re-scoring top hits with more rigorous, computationally expensive methods (e.g., MM-PBSA/GBSA, FEP+).

- Protocol: 1) Primary screen with docking software (Glide SP, AutoDock Vina). 2) Cluster top 1000 poses. 3) Re-score top 100 poses using MM-GBSA. 4) Visually inspect top 50 complexes. 5) Select top 20 for experimental testing.
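The multi-stage protocol above is a funnel: each stage re-scores only the survivors of the previous one, so the expensive method runs on a small fraction of the library. The minimal Python sketch below shows only that control flow. The scoring functions are toy placeholders standing in for real Vina or MM-GBSA runs (the code does not call either program), the function name `tiered_funnel` is hypothetical, and the clustering and visual-inspection steps are omitted.

```python
from typing import Callable, Dict, List, Sequence, Tuple

# One funnel stage = (scoring function, number of compounds that survive the stage).
# Assumed convention: lower score = better, as for docking scores and MM-GBSA energies.
Stage = Tuple[Callable[[str], float], int]


def tiered_funnel(compound_ids: Sequence[str], stages: List[Stage]) -> List[str]:
    """Score with progressively more expensive functions on progressively fewer compounds."""
    survivors = list(compound_ids)
    for score_fn, keep_n in stages:
        # The (costly) scorer is only evaluated on compounds that survived the previous stage.
        scores: Dict[str, float] = {cid: score_fn(cid) for cid in survivors}
        survivors = sorted(survivors, key=scores.__getitem__)[:keep_n]
    return survivors


if __name__ == "__main__":
    library = [f"L{i}" for i in range(10)]
    fast_dock = {cid: float(i) for i, cid in enumerate(library)}  # toy "docking" scores
    rescore = {cid: -float(i) for i, cid in enumerate(library)}   # toy "MM-GBSA" energies
    print(tiered_funnel(library, [(fast_dock.__getitem__, 5), (rescore.__getitem__, 2)]))
    # ['L4', 'L3']
```

In a real campaign the stage sizes would follow the protocol (for example, 1000 clustered poses, then 100 for MM-GBSA).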

Q2: When refining a predicted protein structure with molecular dynamics (MD) simulation, the RMSD relative to the starting model plateaus but remains high (>4 Å). Does this indicate a failed refinement or a conformational change?

A: A high, stable RMSD requires investigation.

- Troubleshooting Steps:

- Check RMSF: Analyze the Root Mean Square Fluctuation (RMSF). If fluctuations are high only in loop regions, the core fold may be stable.

- Cluster Analysis: Perform clustering on the MD trajectory. A single dominant cluster suggests convergence to a stable, albeit different, conformation. Multiple clusters suggest the simulation hasn't converged.

- Experimental Validation Cross-Check: Compare the MD-refined model's pocket geometry or surface features with any available mutagenesis or biochemical data. Disagreement may suggest an incorrect initial model.

- Protocol for MD-Based Refinement: 1) Solvate the predicted model in a TIP3P water box. 2) Neutralize the system with ions. 3) Minimize energy (5000 steps). 4) Heat the system to 300 K over 100 ps. 5) Equilibrate at 1 atm (NPT) for 1 ns. 6) Production run (100 ns - 1 μs). 7) Analyze the trajectory using RMSD, RMSF, and cluster analysis (e.g., with GROMACS and MDAnalysis).
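Step 7 can be prototyped without any MD package. The sketch below assumes the trajectory is a list of frames of Cα coordinates that have already been superposed onto a reference, as trajectory tools typically do before RMSD/RMSF analysis. It computes RMSD, per-atom RMSF, and a simple greedy "leader" clustering by RMSD cutoff. The leader algorithm is a swapped-in simplification, not the GROMOS method offered by `gmx cluster`; for production analysis, use GROMACS or MDAnalysis directly.

```python
import math
from typing import List, Sequence, Tuple

Coord = Tuple[float, float, float]
Frame = Sequence[Coord]


def rmsd(frame: Frame, ref: Frame) -> float:
    """RMSD between two equally sized, ALREADY superposed frames (no fitting here)."""
    sq = sum(math.dist(a, b) ** 2 for a, b in zip(frame, ref))
    return math.sqrt(sq / len(ref))


def rmsf(traj: Sequence[Frame]) -> List[float]:
    """Per-atom fluctuation around each atom's time-averaged position."""
    n_frames, n_atoms = len(traj), len(traj[0])
    out = []
    for i in range(n_atoms):
        mean = [sum(f[i][k] for f in traj) / n_frames for k in range(3)]
        msd = sum(sum((f[i][k] - mean[k]) ** 2 for k in range(3)) for f in traj) / n_frames
        out.append(math.sqrt(msd))
    return out


def leader_cluster(traj: Sequence[Frame], cutoff: float) -> List[List[int]]:
    """Greedy clustering: each frame joins the first cluster whose leader is within cutoff."""
    clusters: List[List[int]] = []
    for idx, frame in enumerate(traj):
        for members in clusters:
            if rmsd(frame, traj[members[0]]) <= cutoff:
                members.append(idx)
                break
        else:  # no cluster accepted the frame: it becomes a new leader
            clusters.append([idx])
    return clusters
```

The fraction of frames in the largest cluster, `len(max(clusters, key=len)) / len(traj)`, gives a quick check of the single-dominant-cluster criterion.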

Q3: My AlphaFold2 or RoseTTAFold prediction for a multi-domain protein has high per-residue confidence (pLDDT) scores but the inter-domain orientation clashes with known cross-linking data. Which model should I trust?

A: Trust the experimental data. AI predictions are statistical models of likely folds, not physical simulations.

- Actionable Guide: Use the experimental data as a restraint in subsequent refinement.

- Filter: Use the predicted aligned error (PAE) matrix from AlphaFold2 to see if low confidence is associated with the inter-domain region.

- Integrate: Convert cross-linking distance constraints (e.g., Cα-Cα < 30 Å for a specific lysine-lysine cross-linker) into harmonic restraints.

- Refine: Run a short, restrained MD simulation or use molecular modeling software (e.g., MODELLER, Rosetta) to satisfy the experimental constraints while maintaining high-confidence domain folds.
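Before (and after) restrained refinement, it helps to score a model against the cross-links. The sketch below computes Cα-Cα distances for each cross-linked residue pair and a flat-bottom harmonic penalty that is zero up to the 30 Å bound and grows quadratically beyond it. The function name, the force constant `k`, and the dictionary input format are illustrative assumptions; the actual restraints would be set up in MODELLER, Rosetta, or the MD engine using their own syntax.

```python
import math
from typing import Dict, List, Tuple

Coord = Tuple[float, float, float]


def crosslink_report(ca: Dict[int, Coord], links: List[Tuple[int, int]],
                     max_dist: float = 30.0, k: float = 1.0):
    """Check Cα-Cα cross-link distances against an upper bound.

    Returns (n_satisfied, total_penalty, per_link). The penalty is a flat-bottom
    harmonic term: 0 for d <= max_dist, k * (d - max_dist)**2 above it.
    """
    n_satisfied, total_penalty, per_link = 0, 0.0, []
    for i, j in links:
        d = math.dist(ca[i], ca[j])
        excess = max(0.0, d - max_dist)
        penalty = k * excess ** 2
        per_link.append((i, j, d, penalty))
        total_penalty += penalty
        if excess == 0.0:
            n_satisfied += 1
    return n_satisfied, total_penalty, per_link
```

Comparing the satisfied fraction before and after refinement shows whether the inter-domain orientation has actually moved toward the experimental data.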

Quantitative Data Comparison: Speed vs. Accuracy in Common Methods

| Method | Typical Time per Structure | Typical Resolution/Accuracy | Best Use Case | Key Limitation |
|---|---|---|---|---|
| Virtual Screening (Docking) | 1-60 seconds | Ligand RMSD: 2-5 Å; Poor binding affinity prediction | Identifying hit compounds from million-scale libraries | Scoring function inaccuracy; Rigid receptor approximation |
| Homology Modeling | Minutes to Hours | ~1-5 Å Cα RMSD (vs. template) | When a >30% identity template exists | Template bias; Loop and side-chain errors |
| AlphaFold2 | Minutes to Hours | Median ~1 Å for single chains (CASP14) | De novo prediction of monomeric folds | Dynamics & multimeric states; Ligand binding sites |
| Molecular Dynamics (Refinement) | Days to Months | Can improve models by 0.5-2 Å RMSD | Refining models, studying dynamics & stability | Extreme computational cost; Force field accuracy |
| Cryo-EM Single Particle | Weeks to Months | 3-5 Å (routine), <2.5 Å (high-end) | Large complexes, membrane proteins | Sample preparation; Requires expensive instrumentation |
| X-ray Crystallography | Weeks to Years | <1.5 Å (Atomic) | Atomic detail, small molecules, well-diffracting crystals | Requires crystallization; Static snapshot |

Experimental Protocol: Integrated Workflow for Hit-to-Lead Optimization

Title: Integrated Protocol for Balancing Speed and Accuracy in Structure-Based Lead Optimization

Objective: To rapidly optimize a hit compound's potency using iterative computational prediction and experimental validation.

Materials: Docked hit-receptor complex, High-performance computing cluster, Molecular dynamics software (e.g., AMBER, GROMACS), Free energy perturbation software, Protein expression & purification system, Microscale thermophoresis/SPR/isothermal titration calorimetry.

Procedure:

- Initial Model Preparation: Prepare the protein-ligand complex from the HTS docking hit. Add hydrogens, assign partial charges (e.g., using antechamber), and parameterize the ligand.

- Fast Alanine Scanning: Perform a computational alanine scan on binding site residues using a method like FoldX (takes ~1 hour) to identify "hotspot" residues critical for binding.

- Focused Library Design: Based on the ligand's interaction with hotspots, generate a focused library of ~100-200 analog structures.

- Multi-Stage Docking & Scoring:

- Stage 1 (Speed): Dock all analogs using a fast scoring function (Vina). Select top 30 poses.

- Stage 2 (Accuracy): Re-score the top 30 poses using MM-GBSA. Select top 10 compounds.

- Binding Affinity Prediction: For the top 3-5 compounds, run free energy perturbation calculations (e.g., relative binding free energies across the analog series with FEP+) if resources allow (weeks of computation).

- Experimental Validation: Synthesize or purchase the top 2-3 predicted compounds. Measure binding affinity (Kd) using a biophysical method (e.g., MST). Iterate from the focused library design step with the new data.
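The hotspot selection in the alanine-scanning step reduces to thresholding per-residue ΔΔG values. A minimal sketch, assuming the values have already been parsed from the FoldX output (parsing not shown), that positive ΔΔG means the alanine mutation weakens binding, and that the commonly used 2.0 kcal/mol hotspot cutoff applies; the function name and example residues are hypothetical.

```python
from typing import Dict, List


def select_hotspots(ddg: Dict[str, float], threshold: float = 2.0) -> List[str]:
    """Residues whose Ala-mutation ΔΔG_binding (kcal/mol) meets the threshold.

    Sign convention assumed: positive ΔΔG = binding weakened by the mutation.
    Results are ordered from most to least destabilizing.
    """
    hits = [res for res, value in ddg.items() if value >= threshold]
    return sorted(hits, key=ddg.__getitem__, reverse=True)


if __name__ == "__main__":
    scan = {"Y45": 2.6, "D50": 0.4, "W80": 3.1, "K12": 1.2}  # illustrative values only
    print(select_hotspots(scan))  # ['W80', 'Y45']
```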

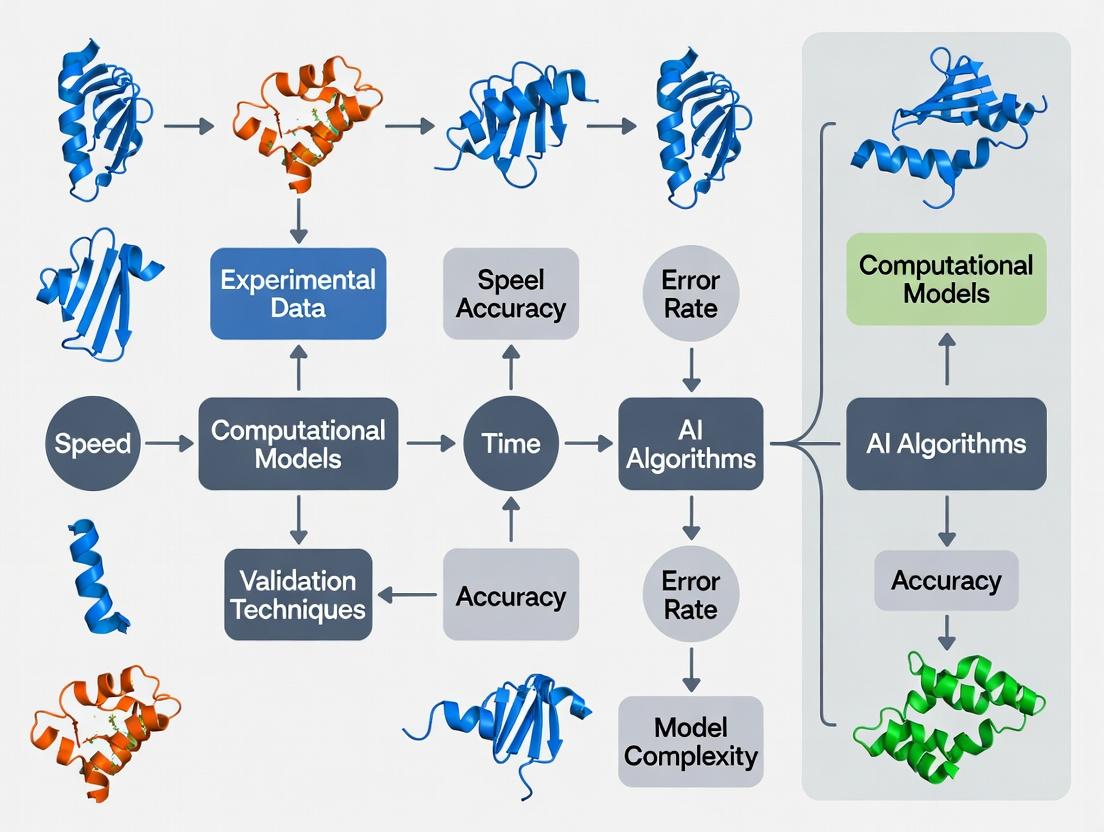

Visualizations

Title: Workflow: Balancing Speed & Accuracy in Drug Discovery

Title: Method Spectrum: Speed vs. Accuracy Trade-off

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function in Structure Prediction Pipeline | Example Product/Category |
|---|---|---|
| Purified Target Protein | Essential for experimental validation (biophysics, crystallography) and binding assays. | Recombinant protein, >95% purity, validated activity. |
| Chemical Fragment Library | For initial experimental screening to inform computational modeling of the binding site. | 500-2000 compounds, high solubility, known 3D coordinates. |
| Crystallization Screen Kits | To obtain atomic-resolution experimental structures for validation and template-based modeling. | Sparse-matrix screens (e.g., Hampton Research, Molecular Dimensions). |
| Cross-linking Reagents | To obtain distance restraints for validating predicted multi-domain or complex structures. | DSSO, BS3 (for mass spectrometry analysis). |
| Thermal Shift Dye | For fast, low-cost experimental validation of ligand binding (thermal shift assay). | SYPRO Orange; NanoDSF instruments provide a dye-free alternative. |
| High-Fidelity Polymerase | For gene amplification and cloning to produce mutant proteins for validating predicted interactions. | Phusion, Q5. |
| GPU Computing Cluster Access | To run deep learning (AlphaFold2) and molecular dynamics simulations in a feasible timeframe. | NVIDIA A100/V100 nodes, cloud computing credits. |
| Specialized Software Licenses | For docking, molecular dynamics, and free energy calculations. | Schrödinger Suite, AMBER, GROMACS, Rosetta. |

Troubleshooting & FAQs

Q1: My AlphaFold2/ColabFold job is running out of memory (OOM) on my local GPU. What are the most effective parameters to reduce resource use while maintaining acceptable accuracy for initial screening? A: OOM errors typically occur during the Evoformer and structure module execution. To mitigate:

- Reduce `max_msa` and `max_extra_msa`: These control the number of sequence clusters and extra sequences used. For a 500-residue protein, try `max_msa:128` and `max_extra_msa:1024` (defaults are 512 and 1024, respectively). This directly reduces the MSA attention computation.

- Use `unpaired_pdb` instead of `paired_pdb` for templates: The `paired_pdb` mode is more accurate but requires significantly more memory. For initial runs, the `unpaired_pdb` template mode is less memory-intensive.

- Enable `low_memory` mode in ColabFold: While slower, this trades compute time for reduced peak memory usage via gradient checkpointing.

- Quantitative Impact: The table below summarizes the trade-offs.

| Parameter Adjustment | Approx. Memory Reduction | Expected ΔpLDDT (Accuracy) | Use Case |
|---|---|---|---|
| `max_msa:128` | ~30-40% | -1 to -3 points | Large-protein screening |
| `unpaired_pdb` templates | ~20% | -0.5 to -2 points | When templates are low-confidence |
| 3 vs. 5 recycling steps | ~15% per step | -0.5 to -1.5 points per step | Convergent predictions |

Experimental Protocol for Parameter Sweeping:

- Target Selection: Choose a well-characterized protein of similar size to your target (e.g., PDB: 1A3A).

- Baseline Run: Execute the prediction with default parameters (`max_msa:512`, `paired_pdb` templates, 3 recycles). Record the final pLDDT, the pTM score, and peak GPU memory (via `nvidia-smi`).

- Variable Runs: Run identical jobs, systematically varying one parameter (e.g., `max_msa` at 256, 128, 64).

- Analysis: Plot pLDDT vs. memory usage and inference time. Determine the "knee in the curve" where accuracy loss accelerates.
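Locating the knee can be automated once the sweep results are tabulated. The sketch below uses a simplified Kneedle-style rule, named plainly as such: normalize memory and pLDDT to [0, 1], then pick the run that lies farthest above the straight line joining the cheapest and most expensive runs. It assumes pLDDT rises with memory across the sweep; the function name and the sweep values in the usage block are illustrative, not measured results.

```python
import math
from typing import List, Tuple


def knee_point(points: List[Tuple[str, float, float]]) -> str:
    """Label of the knee in a (label, peak_memory, pLDDT) sweep.

    Both axes are min-max normalized; the knee is the point with the largest
    vertical gap above the chord from the lowest- to the highest-memory run.
    """
    pts = sorted(points, key=lambda p: p[1])
    m_lo, m_hi = pts[0][1], pts[-1][1]
    a_lo, a_hi = pts[0][2], pts[-1][2]
    best_label, best_gap = pts[0][0], -math.inf
    for label, mem, acc in pts:
        x = (mem - m_lo) / (m_hi - m_lo)
        y = (acc - a_lo) / (a_hi - a_lo)
        gap = y - x  # distance above the normalized chord y = x (up to a constant factor)
        if gap > best_gap:
            best_label, best_gap = label, gap
    return best_label


if __name__ == "__main__":
    # Illustrative sweep: (max_msa setting, peak GPU memory in GB, mean pLDDT).
    sweep = [("64", 6.0, 80.0), ("128", 7.0, 86.0), ("256", 9.0, 87.5), ("512", 13.0, 88.0)]
    print(knee_point(sweep))  # 128
```

Below the knee, each further memory saving costs disproportionately more accuracy, so it is a reasonable default for screening runs.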

Q2: When using molecular dynamics (MD) for relaxation/refinement after a neural network prediction, how do I decide between a fast (implicit solvent, 1ns) and a rigorous (explicit solvent, 50+ ns) simulation protocol? A: The choice hinges on the prediction's initial confidence and the biological question.

| Protocol | Computational Cost (CPU-hours) | Recommended For | Not Recommended For |
|---|---|---|---|
| Fast Implicit Solvent | 50-200 | High-confidence regions (pLDDT > 85), rapid side-chain packing, large-scale mutational screening. | Low-confidence loops, binding free energy calculations, folding simulations. |
| Explicit Solvent Long MD | 5,000-50,000 | Refining low-confidence flexible regions (pLDDT < 70), preparing structures for docking, assessing conformational stability. | High-throughput tasks or when the initial model is very poor (requires fold-level sampling). |

Detailed Protocol for Fast Implicit Solvent Relaxation (using AMBER):

- System Preparation: Use `pdb4amber` to clean the PDB file. Add hydrogens with `reduce`.

- Parameter Assignment: Apply the `ff19SB` force field.

- Implicit Solvent & Minimization: Represent the solvent with a Generalized Born (GB) implicit solvent model (e.g., OBC1, `igb=2` in AMBER). Perform 500 steps of steepest descent minimization.

- Thermalization & Production: Heat the system to 300 K over 20 ps. Run 1 ns of production MD with a 2 fs timestep, which requires constraining bonds to hydrogen (SHAKE).

- Analysis: Extract the lowest potential energy frame as the refined model. Calculate the RMSD from the initial prediction.
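The three simulation stages above map onto three sander-style input files. The sketch below generates them as strings so the stage lengths are derived from the stated times (20 ps and 1 ns at 2 fs). The specific keyword choices (Langevin thermostat with `gamma_ln=1.0`, output every 1000 steps, a linear `&wt` temperature ramp, `irest=1, ntx=5` to continue from the heating restart) are assumptions to check against the Amber Reference Manual; the function names are hypothetical, and file names and run commands are not shown.

```python
from typing import Optional


def gb_minimization_input(steps: int = 500, igb: int = 2) -> str:
    """Steepest descent only (ncyc == maxcyc), GB solvent (igb=2 is OBC1), no periodic box."""
    return (
        "GB minimization\n"
        " &cntrl\n"
        f"  imin=1, maxcyc={steps}, ncyc={steps},\n"
        f"  ntb=0, igb={igb}, cut=999.0,\n"
        " /\n"
    )


def gb_md_input(title: str, time_ps: float, dt_ps: float = 0.002, temp_k: float = 300.0,
                igb: int = 2, heat_from_k: Optional[float] = None) -> str:
    """GB MD stage; pass heat_from_k to ramp temp0 linearly over the whole stage."""
    nstlim = round(time_ps / dt_ps)
    lines = [title, " &cntrl"]
    if heat_from_k is None:
        lines.append("  imin=0, irest=1, ntx=5,")  # restart: read coordinates + velocities
    else:
        lines.append(f"  imin=0, irest=0, ntx=1, nmropt=1, tempi={heat_from_k},")
    lines += [
        f"  ntb=0, igb={igb}, cut=999.0,",
        "  ntc=2, ntf=2,",  # SHAKE on bonds to hydrogen, needed for a 2 fs step
        f"  dt={dt_ps}, nstlim={nstlim},",
        f"  ntt=3, gamma_ln=1.0, temp0={temp_k},",  # Langevin thermostat
        "  ntpr=1000, ntwx=1000,",
        " /",
    ]
    if heat_from_k is not None:
        lines += [
            f" &wt type='TEMP0', istep1=0, istep2={nstlim}, value1={heat_from_k}, value2={temp_k}, /",
            " &wt type='END', /",
        ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(gb_minimization_input())
    print(gb_md_input("Heating 0 -> 300 K", 20.0, heat_from_k=0.0))
    print(gb_md_input("Production 1 ns", 1000.0))
```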

Q3: For docking small molecules, when should I use ultra-high-throughput virtual screening (Vina, 1 minute/pose) versus more expensive, accuracy-focused methods (FEP, 1 day/compound)? A: This is a classic speed/accuracy trade-off. Use a tiered funnel approach.

- Tier 1 - Ultra-High-Throughput: Screen 1M+ compounds using AutoDock Vina or QuickVina 2. Use a large grid box and low exhaustiveness (e.g., 8). Goal: enrich the retained set for actives by discarding the vast majority of the library, not precise ranking.

- Tier 2 - Accuracy-Focused: Take the top 1,000 hits from Tier 1. Re-dock using GNINA (CNN scoring) or Glide SP/XP with stricter parameters and explicit water handling.

- Tier 3 - Free Energy Calculations: For the top 50-100 compounds, run alchemical Free Energy Perturbation (FEP) using Schrödinger FEP+ or OpenMM. This provides quantitative binding affinity predictions (goal: ~1 kcal/mol error).

Experimental Protocol for Tiered Screening Validation:

- Positive/Negative Controls: Use known binders (positive controls) and matched decoys (negative controls) from the Directory of Useful Decoys, Enhanced (DUD-E).

- Metric: Calculate the Enrichment Factor (EF) at 1% of your library size for each tier. EF = (% actives in your top 1%) / (% actives in full database).

- Cost-Benefit Table:

| Screening Tier | Avg. Time per Compound | Approx. Cost per 10k Cpds* | Expected Correlation (R²) to Experiment |
|---|---|---|---|
| Vina (Tier 1) | 0.5 - 2 min | $20 (Cloud) | 0.2 - 0.4 |
| GNINA/Glide (Tier 2) | 5 - 15 min | $150 (Cloud) | 0.4 - 0.6 |
| FEP (Tier 3) | 24 - 72 hrs | $5,000 (HPC Cluster) | 0.6 - 0.8 |

*Cost estimates are for cloud/on-prem compute resources, excluding software licensing.
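The enrichment factor defined above is simple to compute once each compound in the control set has a score and an active/decoy label. A minimal sketch, assuming lower scores are better (as for Vina) and that the top fraction is rounded to the nearest whole compound; the function name is hypothetical.

```python
from typing import List, Tuple


def enrichment_factor(scored: List[Tuple[float, bool]], fraction: float = 0.01,
                      lower_is_better: bool = True) -> float:
    """EF = (active rate in the top `fraction`) / (active rate in the whole library)."""
    ranked = sorted(scored, key=lambda item: item[0], reverse=not lower_is_better)
    n_total = len(ranked)
    n_top = max(1, round(fraction * n_total))
    actives_total = sum(1 for _, is_active in ranked if is_active)
    if actives_total == 0:
        raise ValueError("the library contains no actives")
    actives_top = sum(1 for _, is_active in ranked[:n_top] if is_active)
    return (actives_top / n_top) / (actives_total / n_total)
```

For example, a 1,000-compound set with 10 actives, 3 of which land in the top 10, gives EF1% = (3/10) / (10/1000) = 30. Computing this per tier shows whether the slower tiers actually improve early enrichment.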

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Rationale |
|---|---|
| AlphaFold2 (Local) / ColabFold | Core prediction engine. ColabFold offers faster, less resource-intensive MSA generation via MMseqs2. |
| OpenMM | Open-source MD engine for running explicit solvent simulations and FEP calculations with GPUs. |
| ChimeraX | Visualization and analysis. Critical for comparing predicted models, measuring RMSD, and preparing figures. |
| PyMOL | Alternative for high-quality rendering and presentation of molecular structures. |
| Rosetta Relax Protocol | Alternative to MD for fast, in-silico refinement of protein structures using a scoring function. |
| PDBfixer | (From OpenMM suite) Corrects common issues in PDB files (missing atoms, residues) before simulation. |
| GNINA | Docking software that uses convolutional neural networks for improved pose prediction and scoring. |
| AMBER/GAFF Force Fields | Parameter sets for modeling proteins and small molecules in MD simulations. |

Visualizations

Title: AlphaFold2 Prediction & Refinement Workflow

Title: Tiered Virtual Screening Funnel

Troubleshooting Guide & FAQs for Structure Prediction Experiments

FAQ 1: Why does my AI-predicted protein structure show high per-residue confidence (pLDDT) but poor overall stereochemical quality when validated?

- Answer: This is a classic speed-accuracy trade-off symptom. High-throughput AlphaFold2 or RoseTTAFold runs often use reduced template or recycle settings for speed, which can yield globally plausible but locally strained structures. To resolve this, increase the number of recycles (e.g., `--num-recycle` in ColabFold, typically from 3 to 12 or 20), enable template mode, and run the AMBER relaxation step (`--amber` in ColabFold) on the final model. This increases computation time significantly but can improve side-chain packing and backbone geometry.

FAQ 2: My molecular dynamics (MD) simulation of a predicted protein-ligand complex becomes unstable within nanoseconds. What steps should I take?

- Answer: Fast, automated docking and short MD equilibration protocols, while time-efficient, often produce unstable complexes. Follow this protocol:

- Re-dock: Use a more accurate, slower docking protocol (e.g., Glide SP or XP mode instead of Glide HTVS mode).

- Extended Equilibration: Before production MD, run a multi-step equilibration:

- 100 ps NVT with heavy restraints on protein and ligand.

- 100 ps NPT with restraints on protein backbone and ligand.

- 100 ps NPT with restraints on protein C-alpha only.

- Explicit Solvation & Ions: Ensure the system uses explicit water (e.g., TIP3P) and a physiological ion concentration (0.15 M NaCl).
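As a sanity check on the ion count your setup tool places, the number of ion pairs for a target concentration follows from N = c · N_A · V. The sketch below is a simplified estimate that uses the full box volume (1 nm³ = 1e-24 L) and ignores the volume occupied by the protein; it then adds counter-ions to neutralize the solute's net charge. The function name is hypothetical.

```python
AVOGADRO = 6.02214076e23  # 1/mol (exact SI value)


def ion_counts(box_volume_nm3: float, conc_molar: float = 0.15, net_charge: int = 0):
    """Estimate (n_Na+, n_Cl-) for a NaCl concentration plus neutralizing ions.

    Simplification: uses the whole box volume, so it slightly overestimates
    the ions needed in the solvent-accessible volume.
    """
    n_pairs = round(conc_molar * AVOGADRO * box_volume_nm3 * 1e-24)
    n_na = n_pairs + max(0, -net_charge)  # extra Na+ offsets a negative solute charge
    n_cl = n_pairs + max(0, net_charge)   # extra Cl- offsets a positive solute charge
    return n_na, n_cl


if __name__ == "__main__":
    # A 10 nm cubic box (1000 nm^3) around a protein with net charge +3.
    print(ion_counts(1000.0, 0.15, net_charge=3))  # (90, 93)
```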

FAQ 3: How do I decide between a faster ab initio method and a slower, template-based method for a novel fold?

- Answer: The decision depends on remote homology detection. Run a sensitive sequence-profile search (HHblits, JackHMMER) against the PDB. If it finds templates with E-value < 0.001, template-based modeling is well supported. If only weak templates exist (E-value 0.001-0.1), use a hybrid approach: run both fast ab initio (template-free) and slower template-based folding, then compare the models for consensus using a metric like TM-score. If no usable templates are found at all, template-free prediction (e.g., AlphaFold2 without templates) is your only option. Investing time in the template-based run often provides a more accurate starting model for drug docking.
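The E-value thresholds above can be encoded as a small routing function, which is convenient when triaging many targets at once. A minimal sketch: the function name is hypothetical, it takes the best E-value from the profile search (or `None` if nothing was found), and it assumes hits weaker than E = 0.1 are treated as no usable template.

```python
from typing import Optional


def choose_strategy(best_template_evalue: Optional[float]) -> str:
    """Map the best HHblits/JackHMMER hit against the PDB to a modeling route."""
    if best_template_evalue is None or best_template_evalue > 0.1:
        return "template-free"   # no usable template
    if best_template_evalue >= 0.001:
        return "hybrid"          # weak template: run both routes, compare by TM-score
    return "template-based"      # confident template (E < 0.001)
```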

Experimental Protocol: Validating a Predicted Protein-Ligand Binding Pose

Objective: To assess the plausibility of a computationally docked pose using a biophysical assay.

Methodology:

- Protein Expression & Purification: Clone gene into pET vector, express in E. coli BL21(DE3), purify via Ni-NTA affinity and size-exclusion chromatography.

- Ligand Preparation: Obtain compound (>95% purity). Prepare 10 mM stock in DMSO.

- Surface Plasmon Resonance (SPR):

- Immobilize purified protein on a CM5 chip via amine coupling to ~5000 RU.

- Run a 2-fold dilution series of ligand (from 50 µM to 0.39 µM) in running buffer (PBS + 0.05% Tween 20, 2% DMSO).

- Contact time: 60 s, dissociation time: 120 s, flow rate: 30 µL/min.

- Fit sensorgrams to a 1:1 binding model to calculate KD.

- Comparison: Convert the computationally predicted binding free energy (ΔG) to a predicted KD. If the experimental KD is within 10-fold of the predicted value, the pose is considered plausible for further optimization. Major discrepancies require re-docking with the experimental data as a constraint.
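The comparison step needs a conversion between ΔG and KD via ΔG = RT ln KD (1 M standard state). At 298 K a 10-fold window in KD corresponds to RT ln 10 ≈ 1.36 kcal/mol, which is a useful intuition for how tight this criterion is. A minimal sketch with hypothetical function names:

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def kd_from_dg(dg_kcal: float, temp_k: float = 298.15) -> float:
    """Dissociation constant (M) from a binding free energy, using ΔG = RT ln(KD)."""
    return math.exp(dg_kcal / (R_KCAL * temp_k))


def dg_from_kd(kd_molar: float, temp_k: float = 298.15) -> float:
    """Binding free energy (kcal/mol) from a dissociation constant (M)."""
    return R_KCAL * temp_k * math.log(kd_molar)


def within_fold(kd_exp: float, kd_pred: float, fold: float = 10.0) -> bool:
    """True if the two KD values differ by at most `fold` in either direction."""
    return abs(math.log10(kd_exp / kd_pred)) <= math.log10(fold)


if __name__ == "__main__":
    kd_pred = kd_from_dg(-8.0)          # about 1.4 µM
    print(f"{kd_pred:.2e}")
    print(within_fold(5e-6, kd_pred))   # True  (~3.7-fold apart)
    print(within_fold(50e-6, kd_pred))  # False (~37-fold apart)
```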

Quantitative Data Summary: Impact of Prediction Parameters on Output

Table 1: AlphaFold2 Performance vs. Computational Time on a Standard GPU (NVIDIA V100)

| Parameter Set | Avg. pLDDT (Model 1) | Avg. TM-score | Wall-clock Time | Recommended Use Case |
|---|---|---|---|---|
| Fast (no templates, 3 recycles) | 85.2 | 0.89 | ~30 min | High-throughput target screening |
| Standard (with templates, 3 recycles) | 88.7 | 0.92 | ~1.5 hours | Standard single-target prediction |
| High Accuracy (with templates, 12 recycles) | 91.5 | 0.94 | ~6 hours | Critical drug target for lead optimization |
| Full DB Search (max templates, 20 recycles) | 92.1 | 0.95 | ~48 hours* | Final validation for clinical candidate |

*Time scales with MSA depth and sequence length.

Table 2: Error Rates in Virtual Screening Campaigns (2020-2023 Meta-Analysis)

| Screening Method | Avg. False Positive Rate | Avg. Hit Rate (Experimental) | Avg. Project Timeline (to hit validation) |
|---|---|---|---|
| Ultra-Fast (2D similarity, single docking) | 40-60% | 1-2% | 2-3 months |
| Balanced (ensemble docking, MD filter) | 20-35% | 5-10% | 4-6 months |
| Stringent (free energy perturbation, extensive MD) | 10-15% | 15-25% | 8-12 months |

Visualizations

Title: Speed vs Accuracy Decision Path in Early Discovery

Title: Structure Prediction Workflow with Parameter Inputs

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Structure Prediction & Validation

| Item | Function & Rationale |
|---|---|
| pET-28a(+) Vector | Standard bacterial expression vector with N-terminal His-tag for high-yield protein purification required for experimental validation. |
| Ni-NTA Superflow Resin | For immobilised metal affinity chromatography (IMAC) to rapidly purify His-tagged recombinant protein. |
| SEC Column (HiLoad 16/600 Superdex 200 pg) | For size-exclusion chromatography to purify protein to homogeneity and assess monomeric state—critical for accurate biophysics. |
| Biacore T200/Cytiva Series S CM5 Chip | Gold-standard SPR sensor chip for label-free, kinetic analysis of protein-ligand interactions to validate computational poses. |
| Molecular Dynamics Software (e.g., GROMACS, AMBER) | Open-source/licensed packages for running MD simulations to assess predicted complex stability and refine models. |
| Cryo-EM Grids (Quantifoil R1.2/1.3, 300 mesh Au) | For high-resolution structure determination of difficult targets unsuitable for crystallography, providing "ground truth." |

Troubleshooting Guide & FAQs

Q1: My homology model built with MODELLER has poor stereochemical quality (e.g., many Ramachandran outliers). What are the primary troubleshooting steps? A: This is a classic accuracy vs. speed trade-off. A high outlier count often stems from a poor template or an incorrect alignment.

- Check Alignment: Manually inspect and refine the target-template sequence alignment. Even a single misaligned residue in a loop or secondary structure element can cause major distortions. Use multiple alignment tools (ClustalΩ, MAFFT) for consensus.

- Template Selection: If possible, use a template with higher sequence identity (>30-35%) and a solved structure in a similar conformation (active vs. inactive state). Consider using multiple templates for different domains.

- Sampling: Increase the number of models generated (e.g., from 5 to 50). MODELLER's `automodel` routine samples conformational space; more models increase the chance of a near-native structure.

- Refinement: Subject your final model to a short, restrained molecular dynamics simulation in explicit solvent to relax clashes and improve side-chain rotamers.
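Ramachandran analysis and peptide-planarity checks both start from backbone dihedral angles. The sketch below computes a signed dihedral from four atom positions with the standard projection-and-atan2 formula (IUPAC sign convention). It only produces the angles; classifying them as outliers requires reference distributions such as those MolProbity uses, which are not reproduced here. The function and helper names are hypothetical.

```python
import math
from typing import List, Sequence

Vec = Sequence[float]


def _sub(a: Vec, b: Vec) -> List[float]:
    return [a[i] - b[i] for i in range(3)]


def _dot(a: Vec, b: Vec) -> float:
    return sum(a[i] * b[i] for i in range(3))


def _cross(a: Vec, b: Vec) -> List[float]:
    return [a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0]]


def dihedral(p0: Vec, p1: Vec, p2: Vec, p3: Vec) -> float:
    """Signed dihedral angle in degrees, in [-180, 180].

    phi: C(i-1), N(i), CA(i), C(i)    psi: N(i), CA(i), C(i), N(i+1)
    omega: CA(i), C(i), N(i+1), CA(i+1)  (near 180 for trans, near 0 for cis)
    """
    b0 = _sub(p0, p1)
    b1 = _sub(p2, p1)
    b2 = _sub(p3, p2)
    norm = math.sqrt(_dot(b1, b1))
    b1 = [c / norm for c in b1]
    # Project the outer bonds onto the plane perpendicular to the central bond.
    v = [b0[i] - _dot(b0, b1) * b1[i] for i in range(3)]
    w = [b2[i] - _dot(b2, b1) * b1[i] for i in range(3)]
    x = _dot(v, w)
    y = _dot(_cross(b1, v), w)
    return math.degrees(math.atan2(y, x))
```

An omega value far from both 180° and 0° flags a twisted peptide bond worth inspecting before refinement.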

Q2: When using AlphaFold2 (ColabFold) locally or on a cluster, I encounter "CUDA Out of Memory" errors. How can I proceed without a larger GPU? A: This balances computational speed (batch size, model size) against hardware limits.

- Reduce Model Size: Use the `--amber` and/or `--templates` flags selectively. Running the relaxation (AMBER) stage separately after prediction can save memory.

- Adjust Sampling: Reduce `--num-recycle`. The default is 3; try 1 or 2 for an initial test. Also, decrease `--num-models` from 5 to 1 or 3.

- Sequence Chunking: For very long sequences (>1500 aa), use the `--chunk-size` parameter (e.g., `--chunk-size 256`) to process the sequence in overlapping segments.

- Use CPU Mode: As a last resort, run with `--cpu` only. This is significantly slower but bypasses GPU memory constraints entirely.

Q3: The predicted aligned error (PAE) plot from my AlphaFold2 run shows low confidence (high error) for a specific domain or loop. How should I interpret and address this? A: The PAE plot is a critical accuracy metric, quantifying the model's self-estimated confidence.

- Interpretation: High inter-domain PAE (>15-20 Å) suggests flexible linkage between domains. High intra-domain PAE indicates intrinsically disordered regions or regions with no evolutionary constraints (e.g., long surface loops).

- Action - Template Search: Use this low-confidence region to search for a direct homologous template (via HHsearch) and build a targeted hybrid model, grafting the template loop onto the AF2 model.

- Action - Sampling: For loops, run dedicated loop modeling protocols (like RosettaNGK or MODELLER loop refinement) which sample more conformations than AF2's recycling steps, trading speed for local accuracy.

- Biological Insight: This may be a genuine feature—functionally important flexible regions. Consider complementary experiments (SAXS, NMR) to probe dynamics.
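To put a number on "high inter-domain PAE", average the PAE matrix over the block linking two residue ranges. Because PAE[i][j] and PAE[j][i] generally differ, the sketch below averages both off-diagonal blocks. The JSON key name differs between tools and versions (`pae` and `predicted_aligned_error` are both in circulation), so `load_pae` tries both; treat that as an assumption and check your own file. Function names are hypothetical, and residue ranges are 0-based.

```python
import json
from typing import List, Sequence


def mean_block_pae(pae: Sequence[Sequence[float]], dom_a: range, dom_b: range) -> float:
    """Mean PAE (Å) between two residue ranges, averaging both off-diagonal blocks."""
    vals = [pae[i][j] for i in dom_a for j in dom_b]
    vals += [pae[j][i] for i in dom_a for j in dom_b]
    return sum(vals) / len(vals)


def load_pae(path: str) -> List[List[float]]:
    """Load a PAE matrix from a JSON file; the key name is tool-dependent."""
    with open(path) as fh:
        data = json.load(fh)
    if isinstance(data, list):  # some files wrap the record in a one-element list
        data = data[0]
    for key in ("pae", "predicted_aligned_error"):
        if key in data:
            return data[key]
    raise KeyError(f"no PAE matrix found in {path}")
```

A mean inter-domain value above the 15-20 Å range quoted above, alongside low intra-domain values, matches the flexible-linkage interpretation.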

Q4: For molecular replacement in crystallography, when should I use a pure AlphaFold2 model vs. a refined hybrid model? A: This is a direct application of the speed-accuracy balance.

- Use Pure AlphaFold2 Model: For initial, rapid phasing attempts, especially if the predicted pLDDT is high (>85) across the entire chain and the PAE shows a rigid fold. This is the fastest path.

- Build a Hybrid Model: If phasing with the pure model fails, and your PAE/alignment suggests a reliable template exists for a low-confidence region, create a hybrid. This increases accuracy at the cost of manual modeling time. Always remove predicted disordered N/C-termini before running MR.
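AlphaFold2 PDB files store per-residue pLDDT in the B-factor column, so low-confidence regions can be trimmed with a plain-text filter before molecular replacement. A minimal sketch: the 70 cutoff and the function name are illustrative assumptions, and dedicated tools for preparing predicted models for MR may also convert pLDDT into pseudo-B-factors, which this sketch does not do.

```python
from typing import Iterable, List


def trim_by_plddt(pdb_lines: Iterable[str], min_plddt: float = 70.0) -> List[str]:
    """Drop ATOM/HETATM records whose B-factor (pLDDT) is below min_plddt.

    Non-coordinate records (TER, END, ...) are passed through unchanged.
    """
    kept = []
    for line in pdb_lines:
        if line.startswith(("ATOM", "HETATM")):
            if float(line[60:66]) < min_plddt:  # B-factor: PDB columns 61-66
                continue
        kept.append(line)
    return kept
```

Trimming by confidence removes disordered termini and uncertain loops in one pass, which is usually what the "remove predicted disordered N/C-termini" step needs.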

Experimental Protocols & Methodologies

Protocol 1: Building a Hybrid Model Using a High-Confidence AlphaFold2 Core and a Templated Loop

Objective: Integrate the global accuracy of AF2 with local precision from a homologous template for a problematic loop region (residues 50-65).

Materials: See "Research Reagent Solutions" table.

Procedure:

- Run AlphaFold2 (via ColabFold) on your target sequence. Download the highest-ranked model (`*_rank_1_*.pdb`) and the PAE JSON file.

- Visualize the PAE plot. Identify the low-confidence loop (high intra-chain error for residues 50-65).

- Run HHsearch (part of HH-suite; e.g., via the MPI Bioinformatics Toolkit) with the target sequence against the PDB. Identify a homologous structure containing a resolved loop for your target region.

- Structurally align the template loop (residues 50-65) to the AF2 model core (residues 1-49 and 66-end) using PyMOL's `align` or `super` command, minimizing RMSD in the stem regions.

- In PyMOL, combine the AF2 core (with the original loop deleted) and the newly aligned template loop into one object (e.g., with `create`), then `save` the hybrid model.

- Perform energy minimization on the loop and its immediate surroundings (10 Å) using UCSF Chimera's Minimize Structure tool (AMBER ff14SB force field, 100 steps) to relieve steric clashes.
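If PyMOL scripting is not convenient, the splicing step can also be done on the PDB text directly. The sketch below assumes the template has ALREADY been superposed onto the model on the stem residues and uses the same chain and residue numbering; it handles a single chain, keeps only ATOM records, and leaves atom serial numbers unrenumbered. The function names are hypothetical.

```python
from typing import Iterable, List


def residue_number(line: str) -> int:
    """Residue sequence number from PDB columns 23-26."""
    return int(line[22:26])


def _atom_records(lines: Iterable[str]) -> List[str]:
    return [rec for rec in lines if rec.startswith("ATOM")]


def splice_loop(model_lines: Iterable[str], template_lines: Iterable[str],
                start: int, end: int) -> List[str]:
    """Replace residues start..end (inclusive) of the model with the template's atoms."""
    model = _atom_records(model_lines)
    loop = [rec for rec in _atom_records(template_lines)
            if start <= residue_number(rec) <= end]
    before = [rec for rec in model if residue_number(rec) < start]
    after = [rec for rec in model if residue_number(rec) > end]
    return before + loop + after + ["END"]
```

The spliced file still needs the minimization step above, since the junctions at residues 49/50 and 65/66 are rarely ideal straight after grafting.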

Protocol 2: Comparative Assessment of Model Accuracy Using CASP Metrics

Objective: Quantitatively evaluate a newly generated model against a recently released experimental structure.

Materials: Your model (.pdb), the experimental structure (.pdb), and the MolProbity or SWISS-MODEL Assessment server.

Procedure:

- Prepare Structures: Remove all heteroatoms and water molecules from both files. Ensure both structures contain the same residue numbering for the region of interest.

- Global Accuracy - TM-score: Upload both files to the TM-score web server. A TM-score >0.5 suggests a correct fold; >0.8 indicates high accuracy.

- Local Accuracy - RMSD: Perform a structural alignment in PyMOL (e.g., `align your_model and name CA, experimental_structure and name CA`). Note the Cα-RMSD value; by default `align` rejects outlier atoms over several refinement cycles, so add `cycles=0` to report the RMSD over all aligned Cα atoms. Lower is better (<2 Å for core regions).

- Stereochemical Quality: Upload your model to the MolProbity server. Analyze key outputs:

- Ramachandran outliers (%): Target <0.5%.

- Clashscore: Target <5.

- CaBLAM outliers: Target <2%.

- Documentation: Record all metrics in a comparative table (see below).
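For intuition about the TM-score thresholds, the score can be evaluated for a given superposition from its definition, TM = (1/L) Σ 1 / (1 + (dᵢ/d₀)²) with d₀(L) = 1.24 (L − 15)^(1/3) − 1.8, floored at 0.5 Å. The sketch below assumes equivalent Cα atoms in matching order and an existing superposition; the TM-score program additionally searches for the superposition that maximizes the score, so this value is a lower bound unless the input is already optimally superposed. Use the server for reported numbers; function names here are hypothetical.

```python
import math
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]


def tm_d0(length: int) -> float:
    """TM-score distance scale d0(L), floored at 0.5 Å (also used for L <= 15)."""
    if length <= 15:
        return 0.5
    return max(0.5, 1.24 * (length - 15) ** (1.0 / 3.0) - 1.8)


def tm_score_fixed(model_ca: Sequence[Coord], native_ca: Sequence[Coord]) -> float:
    """TM-score for ONE given superposition, normalized by the native length."""
    length = len(native_ca)
    d0 = tm_d0(length)
    total = sum(1.0 / (1.0 + (math.dist(m, n) / d0) ** 2) for m, n in zip(model_ca, native_ca))
    return total / length


def ca_rmsd(model_ca: Sequence[Coord], native_ca: Sequence[Coord]) -> float:
    """Cα RMSD for the same fixed superposition."""
    sq = sum(math.dist(m, n) ** 2 for m, n in zip(model_ca, native_ca))
    return math.sqrt(sq / len(native_ca))
```

Unlike RMSD, each residue's contribution saturates, so a few badly placed loops lower the TM-score only modestly; that is why it is the better global-fold metric here.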

Data Presentation

Table 1: Comparative Analysis of Protein Structure Prediction Methods

| Method (Example Tool) | Typical Speed (per target) | Typical Accuracy (Cα-RMSD vs. Experimental) | Key Strength | Primary Limitation |
|---|---|---|---|---|
| Homology Modeling (MODELLER, SWISS-MODEL) | Minutes to Hours | 1-5 Å (highly template-dependent) | Fast, explains known structural relationships. | Requires a close template (>25% seq. identity). |
| Threading/Fold Recognition (I-TASSER, Phyre2) | Hours | 3-8 Å (for distant homology) | Can detect remote homology when sequence alignment fails. | Less reliable for novel folds; accuracy varies. |
| Ab Initio/Physics-Based (Rosetta) | Days to Weeks | 3-10 Å (for small proteins) | Theoretically can model any fold; no template needed. | Computationally prohibitive for large proteins; low success rate. |
| Deep Learning (AlphaFold2) | Minutes to Hours | 0.5-2 Å (for most single-domain proteins) | Exceptional accuracy, even without clear templates. | Can struggle with multimers, large conformational changes, and novel orphan folds. |
| Ensemble/Hybrid Methods (AlphaFold2 + Template) | Hours to a Day | Can improve local accuracy by 0.5-1.5 Å over single method | Leverages strengths of multiple approaches; customizable. | Requires manual intervention and expertise. |

Table 2: Key Metrics for Model Quality Assessment

| Metric | Tool/Source | Ideal Value | Interpretation for Model Reliability |
|---|---|---|---|
| pLDDT | AlphaFold2 Output | >90 (Very High) | High confidence in atomic-level accuracy. |
| | | 70-90 (Confident) | Good backbone, variable side-chain accuracy. |
| | | 50-70 (Low) | Low confidence; interpret with caution. |
| | | <50 (Very Low) | Region likely disordered or unpredictable. |
| Predicted Aligned Error (PAE) | AlphaFold2 Output | Low Error (Dark Blue) | Confident in relative position/distance between residues. |
| | | High Error (Yellow/Red) | Uncertain spatial relationship (flexibility or disorder). |
| TM-score | TM-score Algorithm | 0-1 (1=perfect) | >0.5: Correct topological fold. >0.8: High accuracy. |
| Ramachandran Outliers | MolProbity, PROCHECK | <0.5% | Indicates good stereochemical backbone quality. |
| Clashscore | MolProbity | <5 | Low number of severe atomic steric overlaps. |

Visualizations

Title: Decision Workflow for Modern Structure Prediction

Title: AlphaFold2 Architecture & Output Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Structure Prediction | Example/Note |
|---|---|---|
| Multiple Sequence Alignment (MSA) Database (UniRef, BFD, MGnify) | Provides evolutionary constraints essential for deep learning methods like AlphaFold2. Depth and diversity of MSA are critical for accuracy. | ColabFold default: UniRef30 (2022-03). |
| Template Structure Database (PDB) | Provides high-accuracy structural fragments for homology modeling and to guide deep learning models. | Always check release date; use latest. |
| Modeling Software Suite (PyMOL, ChimeraX, MODELLER) | For visualization, manual model building/editing, structural alignment, and hybrid model creation. | PyMOL is industry standard for visualization. |
| Validation Server (MolProbity, SWISS-MODEL Assessment) | Provides objective metrics on stereochemical quality, clash scores, and overall model plausibility. | Essential before publication or experimental use. |
| High-Performance Computing (HPC) Resources | Local GPU clusters or cloud computing credits (AWS, GCP) are necessary for running advanced models like AlphaFold2 on large proteins/complexes. | ColabFold provides free but limited access. |
| Specialized Modeling Tools (Rosetta, Amber, GROMACS) | For advanced refinement (molecular dynamics) and scoring of models, especially for docking or conformational sampling. | Used for post-prediction refinement. |

Advanced Methodologies: Cutting-Edge Tools and Hybrid Pipelines for Efficient & Accurate Predictions

Technical Support Center: Troubleshooting & FAQs for AI-Driven Protein Structure Prediction

This support center is designed within the context of the thesis Balancing Speed and Accuracy in Structure Prediction Research. It addresses common issues encountered when using state-of-the-art AI models, helping researchers tune their workflow for either rapid screening or high-accuracy analysis.

Frequently Asked Questions (FAQs)

Q1: My AlphaFold2/3 or ColabFold prediction for a monomeric protein has low pLDDT scores (<70) in specific regions. Does this always mean the structure is wrong? A: Not necessarily. Low confidence regions often correspond to intrinsically disordered regions (IDRs) or areas with high conformational flexibility. The model is accurately reporting its uncertainty. Cross-reference with disorder prediction tools like IUPred2A or check for coiled-coil predictions. For drug target sites, consider if the low-confidence region is in the binding pocket; if so, experimental validation is strongly recommended.
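To cross-reference low-confidence regions with disorder predictions or a binding-site residue list, it helps to turn per-residue pLDDT into contiguous segments. A minimal sketch, assuming you already have a per-residue pLDDT list (from the scores JSON or the PDB B-factor column); the function name and the optional `min_len` filter are hypothetical.

```python
from typing import List, Optional, Sequence, Tuple


def low_confidence_segments(plddt: Sequence[float], cutoff: float = 70.0,
                            min_len: int = 1) -> List[Tuple[int, int]]:
    """1-based inclusive (start, end) residue ranges with pLDDT below cutoff."""
    segments: List[Tuple[int, int]] = []
    start: Optional[int] = None
    for idx, score in enumerate(plddt, start=1):
        if score < cutoff and start is None:
            start = idx                          # a low-confidence run begins
        elif score >= cutoff and start is not None:
            segments.append((start, idx - 1))    # the run ended on the previous residue
            start = None
    if start is not None:
        segments.append((start, len(plddt)))     # run extends to the C-terminus
    return [(s, e) for s, e in segments if e - s + 1 >= min_len]
```

Intersecting these ranges with the pocket residues answers the key question in the answer above: whether the uncertainty touches the binding site.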

Q2: When using RoseTTAFold for a protein-protein complex, the predicted interface has high PAE but the monomers look correct. What steps should I take? A: High interface PAE indicates uncertainty in the relative orientation. First, ensure your multiple sequence alignment (MSA) for the complex includes co-evolutionary signals (i.e., sequences where both partners are present). Try providing a weak constraint or distance hint based on known biological data (e.g., a known residue contact from mutagenesis studies). Alternatively, run the prediction with different random seeds to generate an ensemble and see if a consistent interface emerges.
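"A consistent interface" can be quantified by counting how often each inter-chain contact recurs across the seed ensemble. The sketch assumes contacts have already been extracted from each model as (chain A residue, chain B residue) pairs (extraction not shown); the 0.6 frequency cutoff and the function name are illustrative assumptions.

```python
from collections import Counter
from typing import Dict, Iterable, Set, Tuple

Contact = Tuple[int, int]  # (residue in chain A, residue in chain B)


def consensus_contacts(per_seed: Iterable[Set[Contact]],
                       min_fraction: float = 0.6) -> Dict[Contact, float]:
    """Fraction of seed models containing each contact, keeping frequent ones."""
    models = list(per_seed)
    counts = Counter(c for contacts in models for c in set(contacts))
    n = len(models)
    return {c: k / n for c, k in sorted(counts.items()) if k / n >= min_fraction}
```

If no contact passes the cutoff, the seeds disagree on the binding mode, and the experimental restraints mentioned above become essential.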

Q3: ESMFold is incredibly fast but sometimes yields topologies different from AlphaFold. Which result should I trust? A: ESMFold's speed comes from bypassing the MSA, relying solely on the language model. This can be advantageous for orphan proteins or de novo designs but may lack evolutionary constraints. Use ESMFold for high-throughput scanning or when MSAs are poor/non-existent. For final, high-confidence predictions, prioritize AlphaFold2/3 or RoseTTAFold results, which integrate co-evolutionary information. The discrepancy itself is a valuable hypothesis generator about protein evolution and fold uniqueness.

Q4: How do I handle the prediction of large protein complexes (>1500 residues) that exceed the default memory limits? A: All major models now support "chunking" or tiling strategies.

- AlphaFold/ColabFold: Use the `--max-template-date` and `--is-prokaryote` flags correctly to limit unnecessary database searches. For ColabFold, enable the "sequential" mode for the complex.

- RoseTTAFold: The standalone version allows explicit control of MSA depth (`-max_msa`) to reduce memory. Use the `-num 1` flag to generate fewer models initially.

- General Protocol: Consider breaking the complex into stable subdomains or pairs, predicting them individually, and then using a docking program like HADDOCK or ClusPro with the AI predictions as inputs, guided by the interface predictions from the full-complex low-resolution run.

Q5: The predicted structure has a stereochemical outlier (e.g., twisted peptide bond). How can I fix this? A: AI models prioritize global fold accuracy and may tolerate minor local violations. Do not use raw AI outputs for molecular dynamics or detailed mechanistic studies without refinement.

- Perform a short energy minimization using a tool like AMBER or GROMACS with restraints on the backbone (CA atoms) to preserve the overall fold while fixing clashes and angles.

- Use dedicated refinement tools like Rosetta relax or ModRefiner, which are designed to correct these issues while staying near the initial prediction.

- Always validate the final refined model with MolProbity or WHAT IF to check geometry.

Comparative Performance Data (Summarized)

Table 1: Key Quantitative Metrics for Major Structure Prediction AI Models (Approximate Benchmarks)

| Model | Typical Runtime (Single Protein) | Key Accuracy Metric (Avg. on CASP14) | Primary Input | Ideal Use Case |
|---|---|---|---|---|
| AlphaFold2/3 | Minutes to Hours (varies) | GDT_TS ~92 (CASP14) | MSA + Templates | High-accuracy, definitive prediction; complexes. |
| ColabFold | <10-30 mins (GPU) | GDT_TS ~91 (CASP14) | MMseqs2 MSA (fast) | Rapid, near-AlphaFold2 accuracy without full DB setup. |
| RoseTTAFold | ~20-60 mins (GPU) | GDT_TS ~87 (CASP14) | MSA + Templates | Protein complexes, flexible with user constraints. |
| ESMFold | <1 second to seconds (GPU) | GDT_TS ~65-75 (orphan proteins) | Single Sequence Only | Ultra-high-throughput screening, metagenomics, poor MSA targets. |

Table 2: Troubleshooting Decision Guide: Speed vs. Accuracy Trade-off

| Your Research Goal | Recommended Primary Tool | Supporting Action for Accuracy | Expected Speed Gain |
|---|---|---|---|
| Screen 10,000 sequences for fold family | ESMFold | Cluster results; run top candidates via AlphaFold. | 1000x faster than full MSA methods |
| Predict a single, important drug target | AlphaFold2/3 or ColabFold | Generate multiple models; use alphafold-msa for deep MSA. | Baseline for high accuracy |
| Model a complex with known site mutation data | RoseTTAFold | Incorporate distance restraints from experiments. | Faster complex modeling than AF2 |
| Get a reliable structure in under 10 minutes | ColabFold (with Amber off) | Use --num-recycle 3 to balance time/quality. | 3-10x faster than full AlphaFold2 pipeline |

Experimental Protocol: Validating AI Predictions with Cross-Linking Mass Spectrometry (XL-MS)

This protocol is a key methodology for experimentally testing the accuracy of predicted protein complexes, directly addressing the speed-accuracy balance by providing empirical constraints.

Title: XL-MS Validation of Predicted Complex Structures

Objective: To obtain distance constraints for validating or refining AI-predicted quaternary structures.

Materials: Purified protein complex, DSSO or BS3 crosslinker, trypsin/Lys-C, LC-MS/MS system, data analysis software (e.g., XlinkX, pLink 2).

Procedure:

- Cross-linking: Incubate 50 µg of purified protein complex with 1 mM DSSO crosslinker in PBS, pH 7.5, for 30 min at 25°C. Quench with 50 mM Tris-HCl, pH 7.5, for 15 min.

- Digestion: Denature with 2 M urea, reduce with 5 mM DTT, alkylate with 15 mM iodoacetamide. Digest with trypsin/Lys-C mix overnight at 37°C.

- LC-MS/MS Analysis: Desalt peptides. Analyze using a Q-Exactive HF mass spectrometer coupled to an Easy-nLC 1200. Use a data-dependent acquisition method with stepped HCD collision energies to capture cross-link fragments.

- Data Analysis: Identify cross-linked peptides using dedicated software (XlinkX, pLink 2). Filter for high-confidence identifications (FDR < 1%).

- Validation/Refinement: Map identified cross-links (Cα-Cα distance typically < 30 Å) onto the AI-predicted complex structure. Use consistent cross-links as validation. Use inconsistent cross-links with high confidence to guide iterative refinement in HADDOCK or using Rosetta's constraint protocol.
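The mapping step can be sketched in a few lines of Python. This is a minimal illustration, not part of the protocol's software: it assumes Cα coordinates have already been extracted from the predicted complex into a dictionary keyed by (chain, residue number), and the helper name `classify_crosslinks` and the coordinates are hypothetical. In practice the coordinates would come from the PDB/mmCIF file and the residue pairs from the XlinkX or pLink output.

```python
import math

def classify_crosslinks(ca_coords, crosslinks, max_dist=30.0):
    """Split FDR-filtered cross-links into satisfied and violated sets.

    ca_coords:  dict mapping (chain, resnum) -> (x, y, z) Cα coordinates in Å
    crosslinks: list of ((chain, resnum), (chain, resnum)) residue pairs
    max_dist:   Cα-Cα cutoff in Å for DSSO/BS3 (30 Å, as in the protocol)
    Returns (satisfied, violated), each a list of (res1, res2, distance).
    """
    satisfied, violated = [], []
    for res1, res2 in crosslinks:
        d = math.dist(ca_coords[res1], ca_coords[res2])
        target = satisfied if d < max_dist else violated
        target.append((res1, res2, round(d, 1)))
    return satisfied, violated

# Illustrative coordinates for three lysines in a two-chain model
coords = {("A", 10): (0.0, 0.0, 0.0),
          ("B", 42): (12.0, 16.0, 0.0),
          ("B", 77): (40.0, 0.0, 0.0)}
links = [(("A", 10), ("B", 42)), (("A", 10), ("B", 77))]
ok, bad = classify_crosslinks(coords, links)
print(f"{len(ok)}/{len(links)} cross-links satisfied; violated: {bad}")
```

A high fraction of satisfied links supports the predicted interface; the violated, high-confidence links are the ones to carry forward as restraints into HADDOCK or Rosetta.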

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for AI-Driven Structure Prediction Workflow

| Item | Function / Example | Role in Balancing Speed/Accuracy |
|---|---|---|
| MMseqs2 Software Suite | Rapid, sensitive sequence searching and MSA generation. | Drastically reduces MSA generation time from hours to minutes for ColabFold, with minimal accuracy loss. |
| AlphaFold DB | Repository of pre-computed predictions for the proteome. | Ultimate speed (instant access) for known structures; accuracy is fixed at time of DB generation. |
| PyMOL / ChimeraX | Molecular visualization software. | Critical for qualitative accuracy assessment (visual inspection of folds, pockets, interfaces). |
| pLDDT & PAE Plots | Built-in per-residue and pairwise confidence metrics from models. | Quantitative, model-generated accuracy estimates. Guide where to trust the prediction. |
| HADDOCK / ClusPro | Integrative docking platforms. | Refine low-confidence multimer predictions by incorporating AI outputs as starting models and experimental data as constraints. |
| MolProbity Server | All-atom structure validation tool. | Provides independent, geometric accuracy scoring to identify local errors in AI models post-prediction. |

Visualization: Experimental Workflows

Title: AI Structure Prediction Decision Workflow

Title: Core Architectural Logic of AI Prediction Models

This technical support center provides guidance for implementing hybrid structure prediction strategies. Framed within the thesis of balancing speed and accuracy, these resources address practical challenges researchers face when integrating rapid template-based modeling with high-precision refinement methods like ab initio folding or molecular dynamics (MD).

Troubleshooting Guides & FAQs

Q1: My template-based model has a high overall RMSD but excellent local geometry in the core. Should I refine the entire structure or just loop regions? A: Prioritize targeted refinement. Use the core as a fixed anchor and perform ab initio or MD refinement only on the low-confidence loop regions and termini. This preserves accurate domains while improving problematic segments.

Q2: During MD refinement, my protein backbone drifts excessively (>3 Å RMSD) from the initial template model, losing potentially correct features. How can I constrain this? A: Apply restrained MD. Use harmonic positional restraints on the backbone atoms of secondary structure elements identified in the template model with high confidence (e.g., pLDDT > 80). Gradually release these restraints over the simulation course.
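The restraint selection and gradual release can be sketched as follows. The sketch assumes per-residue pLDDT values and a DSSP-style secondary-structure string ('H' helix, 'E' strand) are already available; the five-stage linear schedule starting at 5.0 kcal/mol/Å² is an illustrative choice rather than a prescribed value, and the function names are hypothetical. Each stage would be run as a separate restrained MD segment in the engine of your choice.

```python
# 1 kcal/mol/Å² = 4.184 kJ/mol/Å² = 418.4 kJ/mol/nm²
KCAL_A2_TO_KJ_NM2 = 418.4

def restrained_residues(plddt, ss, cutoff=80.0):
    """1-based indices of confident helix/strand residues whose backbone gets restrained."""
    return [i for i, (p, s) in enumerate(zip(plddt, ss), start=1)
            if p > cutoff and s in ("H", "E")]

def release_schedule(k_start=5.0, n_stages=5):
    """Linearly decreasing force constants (kcal/mol/Å²) ending at 0, i.e. unrestrained."""
    step = k_start / (n_stages - 1)
    return [round(k_start - i * step, 3) for i in range(n_stages)]

plddt = [92.1, 88.4, 61.0, 95.3, 90.2, 45.7]
ss = "HHCEEC"
print("restrain residues:", restrained_residues(plddt, ss))
for k in release_schedule():
    # GROMACS position restraints are specified in kJ/mol/nm²
    print(f"{k:5.2f} kcal/mol/Å² = {k * KCAL_A2_TO_KJ_NM2:7.1f} kJ/mol/nm²")
```

The unit conversion matters because AMBER-style inputs use kcal/mol/Å² while GROMACS expects kJ/mol/nm²; mixing them silently changes restraint strength by a factor of about 400.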

Q3: After ab initio refinement of a template-derived fragment, the refined region clashes with the stable core. What protocol is recommended? A: Implement a multi-stage protocol: 1) Isolate the fragment and refine it in vacuo with ab initio sampling. 2) Use rigid-body docking to reposition it against the core. 3) Run a short, all-atom MD simulation with explicit solvent to relax the interface and resolve clashes.

Q4: How do I decide whether to use ab initio or MD for refinement post-template modeling? A: Base the decision on time resources and target size. See the quantitative comparison below.

Table 1: Refinement Method Decision Matrix

| Criterion | Ab Initio Refinement | MD Refinement |
|---|---|---|
| Best For | Large insertions (>25 aa), no homologous folds, high de novo content | Improving side-chain packing, resolving local clashes, refining dynamics |
| Typical Time Scale | Hours to Days (GPU-accelerated) | Days to Weeks (depending on system size & sampling) |
| System Size Limit | Up to ~250 residues (efficiently) | Up to ~500 residues (explicit solvent, conventional MD) |
| Key Output Metric | Lowest-energy structure's RMSD & MolProbity score | RMSD plateau, stable energy, & fewer Ramachandran outliers |
| Computational Cost | Moderate-High (sampling-intensive) | Very High (explicit solvent, long simulation times) |

Q5: My hybrid pipeline results are inconsistent; sometimes refinement improves the model, sometimes it worsens it. How can I stabilize the process? A: Implement a consensus scoring approach. Generate multiple refined decoys (e.g., 5-10 from ab initio, 3-5 MD trajectories). Select the final model not by a single score but by consensus across multiple metrics (e.g., Rosetta energy, DOPE score, MolProbity, ProSA-web Z-score).
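A simple way to combine several metrics is rank averaging: each decoy is ranked on every metric, and the decoy with the best mean rank is selected. The sketch below assumes the scores have already been computed with the respective tools; the decoy names and values are made up for illustration, and the direction of each metric must be set explicitly (lower is better for all three used here).

```python
def consensus_rank(decoys, metrics):
    """Order decoys by mean rank across several quality metrics.

    decoys:  dict decoy_name -> dict metric_name -> value
    metrics: dict metric_name -> True if higher values are better, else False
    Returns decoy names from best to worst mean rank.
    """
    names = list(decoys)
    mean_rank = dict.fromkeys(names, 0.0)
    for metric, higher_is_better in metrics.items():
        ordered = sorted(names, key=lambda n: decoys[n][metric], reverse=higher_is_better)
        for rank, name in enumerate(ordered, start=1):
            mean_rank[name] += rank / len(metrics)
    return sorted(names, key=mean_rank.get)

decoys = {
    "abinitio_1": {"rosetta_total": -310.0, "dope": -21000.0, "molprobity": 1.9},
    "abinitio_2": {"rosetta_total": -295.0, "dope": -21500.0, "molprobity": 1.4},
    "md_1":       {"rosetta_total": -280.0, "dope": -20500.0, "molprobity": 2.6},
}
higher_is_better = {"rosetta_total": False, "dope": False, "molprobity": False}
print(consensus_rank(decoys, higher_is_better))
```

Here abinitio_1 has the best Rosetta energy but abinitio_2 wins the consensus because it ranks first on the other two metrics, which is exactly the single-score bias the consensus approach is meant to avoid.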

Experimental Protocols

Protocol 1: Targeted Hybrid Refinement for a Single Domain Protein (150-300 residues)

- Input: Template-based model (e.g., from AlphaFold2, MODELLER, or SWISS-MODEL).

- Region Identification: Use per-residue confidence scores (e.g., pLDDT) or visual inspection to identify low-confidence regions (typically pLDDT < 70).

- Segmentation: Separate the protein into a stable core (high-confidence regions) and target regions (low-confidence loops/termini).

- Decoy Generation for Target Regions:

- For each target region, run a focused ab initio fragment assembly (using Rosetta or similar) with harmonic distance restraints to the anchor residues at the junction with the stable core.

- Generate 1000-5000 decoys.

- Selection and Integration:

- Cluster decoys by RMSD and select the center of the largest cluster.

- Graft the selected refined fragment onto the stable core using SCWRL4 or RosettaFixBB for side-chain repacking.

- Global Relaxation: Perform a final all-atom energy minimization or a short (5-10 ns) restrained MD simulation in explicit solvent to relax the entire composite structure.
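The Region Identification and Segmentation steps can be sketched as follows, assuming a per-residue pLDDT list has already been read from the model (AlphaFold2 stores it in the B-factor column). The cutoff of 70 follows the protocol; the minimum segment length of 3 residues is an illustrative assumption that avoids rebuilding isolated single residues, and `split_by_confidence` is a hypothetical helper.

```python
def split_by_confidence(plddt, cutoff=70.0, min_len=3):
    """Partition residues into a confident core and low-confidence target segments.

    plddt:   per-residue pLDDT values (index 0 = residue 1)
    cutoff:  residues below this value are candidates for refinement
    min_len: low-confidence runs shorter than this stay in the core
    Returns (core_residues, target_segments) with 1-based residue numbers;
    each segment is an inclusive (start, end) tuple.
    """
    segments, start = [], None
    for i, p in enumerate(plddt, start=1):
        if p < cutoff and start is None:
            start = i                          # a low-confidence run begins
        elif p >= cutoff and start is not None:
            segments.append((start, i - 1))    # the run ended at the previous residue
            start = None
    if start is not None:                      # run extends to the C-terminus
        segments.append((start, len(plddt)))
    segments = [(s, e) for s, e in segments if e - s + 1 >= min_len]
    targets = {r for s, e in segments for r in range(s, e + 1)}
    core = [r for r in range(1, len(plddt) + 1) if r not in targets]
    return core, segments

plddt = [90, 92, 65, 60, 55, 88, 91, 50, 93, 40, 45, 42]
core, targets = split_by_confidence(plddt)
print("core:", core)
print("refine:", targets)
```

The residues immediately flanking each segment (start - 1 and end + 1) are natural anchor residues for the junction restraints used during decoy generation.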

Protocol 2: MD-Based Refinement of a Template-Based Complex

- Input: Template-based protein-ligand or protein-protein complex model.

- System Preparation: Use CHARMM-GUI or `tleap` to solvate the complex in a cubic water box, add ions to neutralize, and set the ionic concentration to 0.15 M.

- Equilibration: Run a multi-stage NVT/NPT equilibration with positional restraints on protein heavy atoms (force constant starting at 5.0 kcal/mol/Å², reduced to 0 over 1 ns).

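- Production MD: Run unrestrained production MD for a timeframe dependent on system size (e.g., 100-500 ns). Use a thermostat (e.g., Langevin at 300 K) and a barostat that samples the correct NPT ensemble (e.g., Monte Carlo or Parrinello-Rahman); reserve Berendsen pressure coupling for equilibration.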

- Analysis & Model Extraction:

- Monitor RMSD, radius of gyration, and interaction energies.

- After RMSD plateaus, cluster the trajectory frames (backbone RMSD cutoff 2.0 Å).

- Select the centroid of the most populated cluster as the refined model.

- Validate using H-bond networks, binding interface complementarity, and computational mutagenesis.
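The trajectory-clustering step can be sketched with the GROMOS algorithm (the method behind `gmx cluster -method gromos`): the frame with the most neighbours within the cutoff becomes a cluster centre, it and its neighbours are removed, and the process repeats. The sketch assumes a precomputed, symmetric matrix of pairwise backbone RMSD values after superposition (for example from MDTraj or MDAnalysis); the function name and the toy matrix are illustrative.

```python
def gromos_cluster(rmsd, cutoff=2.0):
    """GROMOS clustering of frames from a square pairwise RMSD matrix (Å).

    Returns a list of (centre_frame, member_frames), most populated cluster first.
    """
    remaining = set(range(len(rmsd)))
    clusters = []
    while remaining:
        # Neighbours within the cutoff among unassigned frames (each frame counts itself)
        neighbours = {i: {j for j in remaining if rmsd[i][j] <= cutoff} for i in remaining}
        # The frame with the most neighbours becomes the next cluster centre
        centre = max(sorted(remaining), key=lambda i: len(neighbours[i]))
        members = neighbours[centre]
        clusters.append((centre, sorted(members)))
        remaining -= members
    return clusters

# Toy 5-frame matrix: frames 0-2 form one conformation, frames 3-4 another
rmsd = [
    [0.0, 1.0, 1.5, 5.0, 5.0],
    [1.0, 0.0, 1.2, 5.0, 5.0],
    [1.5, 1.2, 0.0, 5.0, 5.0],
    [5.0, 5.0, 5.0, 0.0, 1.0],
    [5.0, 5.0, 5.0, 1.0, 0.0],
]
clusters = gromos_cluster(rmsd)
print(clusters)
```

Because clusters are extracted in order of decreasing neighbour count, the first returned cluster is the most populated one, and its centre frame is the model to extract.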

Visualizations

Diagram Title: Hybrid Structure Prediction Workflow

Diagram Title: Logical Relationship: Thesis to Hybrid Solution

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials & Software for Hybrid Strategy Experiments

| Item Name / Software | Category | Primary Function in Hybrid Strategy |
|---|---|---|
| AlphaFold2 (ColabFold) | Modeling Software | Provides fast, high-accuracy template-based (or template-free) starting models with per-residue pLDDT confidence metrics. |
| Rosetta Suite | Modeling Software | Workhorse for ab initio fragment assembly and refinement; used for targeted loop rebuilding and side-chain optimization. |
| GROMACS / AMBER | MD Software | Performs all-atom, explicit-solvent molecular dynamics simulations for high-precision refinement and stability assessment. |
| MODELLER | Modeling Software | Traditional tool for homology modeling; useful for generating alternative template alignments. |
| ChimeraX / PyMOL | Visualization | Critical for visual inspection of models, identifying clashes, and analyzing refinement results. |
| MolProbity / PHENIX | Validation Tool | Provides comprehensive structure validation (steric clashes, rotamer outliers, Ramachandran plots) pre- and post-refinement. |
| CHARMM36 / AMBER ff19SB | Force Field | Provides the physical parameters for MD simulations, critical for accurate energy calculations and dynamics. |
| TIP3P / OPC Water Model | Solvent Model | Explicit water models used in MD simulations to solvate the protein and provide a realistic environment. |
| GPUs (NVIDIA A100/V100) | Hardware | Accelerates both deep learning-based template prediction (AlphaFold) and MD simulations dramatically. |
| High-Throughput Cluster | Hardware | Enables parallel generation of multiple refinement decoys and long-timescale MD replicates for consensus. |

Technical Support Center: Troubleshooting & FAQs

Frequently Asked Questions

Q1: During the initial fast filtering (Tier 1), my high-recall model is flagging over 95% of candidates, negating its speed benefit. What are the primary tuning knobs? A: This indicates a recall/specificity imbalance. Adjust the following in order:

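- Classification Threshold: Lowering the probability threshold for inclusion increases recall but decreases precision. Because the filter is already flagging too many candidates, start by raising it incrementally while checking that recall on a validation set stays acceptable.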

- Feature Set: Review features for relevance. Correlated or noisy features can degrade model discrimination. Use feature importance scores to prune.

- Model Choice: If using a simplistic model (e.g., Linear SVM), consider one with non-linear decision boundaries (e.g., Gradient Boosted Trees) while monitoring inference speed.

Q2: My high-accuracy Tier 2 (e.g., AlphaFold2) predictions are accurate but the pipeline throughput is too slow. How can I optimize? A: Optimize at the system and model level:

- Batch Processing: Ensure jobs are batched to maximize GPU utilization, not run serially.

- Template Restriction: For homologous targets, restrict the MSA/template search depth. This is the primary speed bottleneck.

- Hardware Check: Verify you are using a GPU with sufficient VRAM (>=16GB recommended). Monitor GPU utilization during runs; low utilization may indicate I/O or CPU bottlenecks.

- Early Stopping: Some tools report intermediate confidence estimates during a run. Where they do, implement logic to abort predictions that remain low-confidence, freeing resources for the next job.

Q3: I encounter inconsistent results between pipeline runs with identical input data. What could cause this? A: Non-determinism is a common issue. Isolate the source:

- Tier 1 (ML Models): Set random seeds for all stochastic algorithms (e.g., `random_state` in scikit-learn, `seed` in TensorFlow/PyTorch).

- Tier 2 (Structure Prediction): Check if your version of the prediction software uses stochastic sampling (e.g., in relaxation steps). Some tools have a deterministic mode flag.

- Concurrency: Race conditions in file I/O or database access in distributed workflows can cause inconsistencies. Implement proper job isolation and locking mechanisms.
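For the Tier 1 Python stack, seeding can be centralised in one helper called at the start of every run. This sketch assumes the stack uses Python's `random`, NumPy, and optionally PyTorch, and `set_global_seed` is a hypothetical name. scikit-learn estimators should still receive an explicit `random_state`, and GPU kernels may need additional determinism settings (for example PyTorch's `torch.use_deterministic_algorithms`).

```python
import os
import random

def set_global_seed(seed: int) -> None:
    """Seed the random number generators used by a typical Tier 1 Python stack."""
    # Affects only Python subprocesses started after this point; hash
    # randomisation of the running interpreter is fixed at startup.
    os.environ["PYTHONHASHSEED"] = str(seed)
    random.seed(seed)
    try:
        import numpy as np
        np.random.seed(seed)      # legacy global NumPy RNG used by many libraries
    except ImportError:
        pass
    try:
        import torch
        torch.manual_seed(seed)   # seeds the RNGs on all devices
    except ImportError:
        pass

set_global_seed(42)
draw_1 = random.random()
set_global_seed(42)
draw_2 = random.random()
print("reproducible:", draw_1 == draw_2)
```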

Q4: How do I validate that the tiered system is providing a net benefit over a single-model approach? A: Conduct a cost-accuracy analysis. Measure:

- Compute Time: Record total wall-clock time for the full pipeline vs. running Tier 2 on all candidates.

- Accuracy Retention: Compare the accuracy (e.g., RMSD, GDT_TS) of final selected candidates from the tiered system vs. a Top-N selection from a hypothetical full Tier 2 run.

Validation Results Table

| Metric | Tiered System | Single-Tier (Tier 2 Only) | Benefit |
|---|---|---|---|
| Avg. Time per 1000 Candidates | 42 hours | 310 hours | 86% reduction |
| Mean RMSD of Top 50 Targets | 1.8 Å | 1.7 Å | 0.1 Å degradation |
| Cost per Candidate | $0.85 | $6.20 | 86% savings |
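The Benefit column follows directly from the other two columns, so recomputing it is a quick sanity check whenever the table is updated with new measurements. A minimal sketch using the table's own figures (the helper name is hypothetical):

```python
def percent_reduction(tiered: float, baseline: float) -> float:
    """Reduction of the tiered pipeline relative to the Tier 2-only baseline, in %."""
    return 100.0 * (baseline - tiered) / baseline

time_saving = percent_reduction(42, 310)     # hours per 1000 candidates
cost_saving = percent_reduction(0.85, 6.20)  # USD per candidate
rmsd_penalty = 1.8 - 1.7                     # Å, mean RMSD of the top 50 targets
print(f"time -{time_saving:.0f}%, cost -{cost_saving:.0f}%, RMSD +{rmsd_penalty:.1f} Å")
```

Reporting all three numbers together makes the trade-off explicit: the 86% reduction in time and cost is bought with about 0.1 Å of mean RMSD on the final selections.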

Q5: The handoff between my Tier 1 and Tier 2 systems is failing due to data format mismatches. What's the best practice? A: Implement a canonical data schema and validation layer. Use a structured format (e.g., JSON, Protocol Buffers) with a strict schema. The handoff service should validate all required fields (e.g., target sequence ID, pre-computed features, prior probability score) before Tier 2 execution. A lightweight Docker container can encapsulate this logic.
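A minimal validation layer can be written with the standard library alone, as sketched below. The field names (`target_id`, `sequence`, `features`, `prior_score`) are hypothetical stand-ins for the fields listed above; a production system might instead enforce a JSON Schema or Protocol Buffers definition as suggested.

```python
import json
from dataclasses import dataclass

REQUIRED = {"target_id", "sequence", "features", "prior_score"}
AMINO_ACIDS = set("ACDEFGHIKLMNPQRSTVWYX")  # 20 standard residues plus X (unknown)

@dataclass
class HandoffRecord:
    """Validated Tier 1 -> Tier 2 message (hypothetical field names)."""
    target_id: str
    sequence: str
    features: dict
    prior_score: float

def parse_handoff(raw: str) -> HandoffRecord:
    """Parse and validate one JSON handoff message; raise ValueError if malformed."""
    data = json.loads(raw)  # JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("handoff message must be a JSON object")
    missing = REQUIRED - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    sequence = str(data["sequence"]).upper()
    if not sequence or not set(sequence) <= AMINO_ACIDS:
        raise ValueError("sequence is empty or contains non-amino-acid characters")
    if not isinstance(data["features"], dict):
        raise ValueError("features must be a JSON object")
    try:
        score = float(data["prior_score"])
    except (TypeError, ValueError):
        raise ValueError("prior_score must be numeric") from None
    if not 0.0 <= score <= 1.0:
        raise ValueError("prior_score must be a probability in [0, 1]")
    return HandoffRecord(str(data["target_id"]), sequence, data["features"], score)

msg = '{"target_id": "T0001", "sequence": "mkvlaag", "features": {"length": 7}, "prior_score": 0.91}'
record = parse_handoff(msg)
print(record)
```

Rejecting a message at the handoff, with a specific error, is far cheaper than discovering the problem after a Tier 2 GPU job has started.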

Experimental Protocols

Protocol 1: Establishing the Tier 1 High-Recall Filter

Objective: Rapidly filter a large candidate pool (e.g., 100k proteins) to a manageable subset (~5-10%) with minimal false negatives.

Methodology:

- Feature Engineering: Compute sequence-based features (e.g., length, amino acid composition, predicted disorder from `pyHCA`, simple homology scores from `HMMER`).

- Model Training: Train a Gradient Boosting Classifier (e.g., `XGBoost`) on historical data labeled with "high-value" vs. "low-value" targets.

- Calibration: Use Platt Scaling or Isotonic Regression to calibrate the model's output probabilities, ensuring they are meaningful for thresholding.

- Threshold Selection: On a validation set, identify the probability threshold that yields >98% recall while maximizing precision.
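The threshold-selection step can be sketched as a scan over candidate thresholds on the validation set: keep only the thresholds that reach the recall target, then pick the one with the highest precision. The function name and toy data are hypothetical; with scikit-learn, `sklearn.metrics.precision_recall_curve` returns the same precision/recall pairs more efficiently.

```python
def choose_threshold(probs, labels, target_recall=0.98):
    """Best-precision Tier 1 threshold among those meeting target_recall.

    probs:  calibrated probabilities for validation candidates
    labels: 1 for high-value targets, 0 otherwise (at least one positive)
    Candidates with prob >= threshold are passed to Tier 2.
    Returns (threshold, precision, recall, fraction_flagged).
    """
    n_pos = sum(labels)
    best = None
    for t in sorted(set(probs)):
        flagged = [y for p, y in zip(probs, labels) if p >= t]
        tp = sum(flagged)
        recall = tp / n_pos
        if recall < target_recall:
            continue
        precision = tp / len(flagged)
        # Prefer higher precision; on ties prefer the higher threshold (fewer candidates)
        if best is None or (precision, t) > (best[1], best[0]):
            best = (t, precision, recall, len(flagged) / len(probs))
    return best

probs = [0.95, 0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1, 0.05]
labels = [1, 1, 0, 1, 0, 1, 0, 0, 0, 0]
threshold, precision, recall, flagged = choose_threshold(probs, labels)
print(f"threshold={threshold}, precision={precision:.2f}, recall={recall:.2f}, flagged={flagged:.0%}")
```

The fraction flagged on the validation set is a direct estimate of how much of the full pool will reach Tier 2, so it can be checked against the ~5-10% target before deployment.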

Protocol 2: Executing Tier 2 High-Accuracy Prediction

Objective: Generate precise 3D structures for the Tier 1 output subset.

Methodology:

- Input Preparation: Format the canonical JSON input containing target sequence and Tier 1 metadata.

- MSA Generation: Run `MMseqs2` against the UniRef and environmental databases (configured for speed: `--max-seqs 100 --num-iterations 2`).

- Structure Prediction: Execute AlphaFold2 or RoseTTAFold in no-template (`--notemp`) mode for de novo targets, or with templates if homology is high.

- Confidence Scoring: Extract the predicted local distance difference test (pLDDT) score. Structures with mean pLDDT < 70 are flagged for review or rejection.
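For the confidence-scoring step, AlphaFold2 and ColabFold write per-residue pLDDT into the B-factor column of the output PDB, so the mean can be read without extra dependencies. The sketch below averages the B-factor of CA atoms using the fixed-width PDB columns; the function names and the file name in the usage comment are illustrative, and the 70 cutoff follows the protocol.

```python
def mean_plddt_from_pdb(pdb_text: str) -> float:
    """Mean pLDDT over residues, read from the B-factor of each CA atom."""
    values = []
    for line in pdb_text.splitlines():
        # Fixed-width PDB columns (1-based): atom name 13-16, B-factor 61-66
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            values.append(float(line[60:66]))
    if not values:
        raise ValueError("no CA atoms found")
    return sum(values) / len(values)

def triage(pdb_text: str, cutoff: float = 70.0) -> str:
    """'accept' if the mean pLDDT reaches the cutoff, otherwise 'review'."""
    return "accept" if mean_plddt_from_pdb(pdb_text) >= cutoff else "review"

# Usage (file name is illustrative):
# with open("ranked_0.pdb") as fh:
#     print(triage(fh.read()))
```

Averaging over CA atoms only gives one value per residue; averaging over all atoms would weight large side chains more heavily.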

Protocol 3: Cost-Benefit Analysis of Tiered System

Objective: Quantify the trade-off between speed and accuracy.

Methodology:

- Baseline: Run Tier 2 prediction on a representative, random sample of 500 candidates from the original pool. Record time and accuracy.

- Tiered Run: Execute the full tiered pipeline on the same 500 candidates.

- Comparison: Compare the top 50 candidates (by pLDDT) from both runs using a structural alignment tool (`TM-score`). Compute aggregate time and cloud compute cost.
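For reference, the quantity behind the TM-score comparison can be sketched for two residue-matched structures that are already superposed. The sketch implements the standard formula TM = (1/L) * sum_i 1 / (1 + (d_i / d0)^2) with d0 = 1.24 * (L - 15)^(1/3) - 1.8. It performs no superposition search, so it gives a lower bound on what TM-score or USalign report for the same residue pairing; the function name and toy coordinates are hypothetical, and the dedicated tools should be used for the actual comparison.

```python
import math

def tm_score_fixed(model, reference):
    """TM-score of residue-matched, already superposed Cα coordinates (no superposition search)."""
    L = len(reference)
    if L == 0 or len(model) != L:
        raise ValueError("model and reference must be non-empty and residue-matched")
    # d0 = 1.24 * (L - 15)^(1/3) - 1.8, clamped to 0.5 Å for short chains
    d0 = max(0.5, 1.24 * (L - 15) ** (1.0 / 3.0) - 1.8) if L > 15 else 0.5
    total = sum(1.0 / (1.0 + (math.dist(m, r) / d0) ** 2)
                for m, r in zip(model, reference))
    return total / L

# Illustrative 50-residue Cα trace and a copy shifted by 1 Å
reference = [(3.8 * i, 0.0, 0.0) for i in range(50)]
shifted = [(x, y + 1.0, z) for x, y, z in reference]
print(round(tm_score_fixed(reference, reference), 3), round(tm_score_fixed(shifted, reference), 3))
```

Unlike RMSD, each residue's contribution saturates with distance, which is why TM-score is less dominated by a few badly placed loops when ranking the top 50 candidates.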

Visualizations

Title: Tiered Prediction Workflow

Title: Speed vs Accuracy Trade-off Matrix

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Tiered Systems | Key Consideration |
|---|---|---|
| XGBoost / LightGBM | Tier 1 ML model. Provides fast inference, good accuracy on structured features, and built-in feature importance. | Tune max_depth and n_estimators to balance speed and recall. |
| MMseqs2 | Ultra-fast protein sequence searching for MSA generation in Tier 2. Critical for speed. | Use pre-clustered target databases (e.g., UniClust30) to further accelerate searches. |
| AlphaFold2 (ColabFold) | High-accuracy Tier 2 prediction. ColabFold offers faster, optimized pipelines. | Manage GPU memory; use the --amber flag only for final models to save time. |
| Nextflow / Snakemake | Workflow orchestrators. Manage dependencies, execution, and scaling of multi-tier pipelines across compute clusters. | Implement robust error-handling and checkpointing for long runs. |
| pLDDT Score | Per-residue and global confidence metric from AlphaFold2. Primary criterion for final prioritization. | Mean pLDDT is a useful but imperfect proxy for model accuracy; it does not reflect relative domain or chain placement, so also check PAE for multidomain targets and complexes. Use for ranking. |
| Redis / RabbitMQ | Message broker / queue. Manages the handoff between Tiers 1 and 2, enabling asynchronous, decoupled processing. | Essential for maintaining pipeline reliability and scalability under load. |
| Docker / Singularity | Containerization. Ensures consistency of software environments (e.g., specific AlphaFold2 version) across all pipeline stages. | Guarantees reproducibility and simplifies deployment on HPC/cloud. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My molecular dynamics simulation on my local HPC cluster is failing with an "Out of Memory (OOM)" error during the minimization step. What are my immediate options? A: This is common when system size exceeds node memory. Options:

- Check Partitioning: Request a high-memory node if your cluster has them (e.g., `#SBATCH --mem=512G`).

- Optimize Input: Reduce `cutoff` distances or use an implicit solvent model if accuracy permits.

- Scale to Cloud: Package the job and run it on a cloud instance with large, contiguous memory (e.g., AWS x2gd.16xlarge with 1024 GiB RAM). This offers immediate scale without queue wait.

Q2: When running AlphaFold2 on cloud VMs, my job is slow and I see high "Steal Time" in htop. What does this mean and how do I fix it?

A: High "Steal Time" indicates your VM is competing for physical CPU resources on the host server, a common issue on shared public cloud tenancy. This directly impacts prediction speed.

- Solution: Migrate to a dedicated host or bare-metal instance (e.g., AWS m5d.metal or a Google Cloud sole-tenant node). This removes contention from other tenants' workloads, reducing steal time and the run-to-run variability that undermines reproducible benchmarking.

Q3: My ensemble docking campaign on a cloud batch service is costing more than projected. How can I control costs without sacrificing scale? A: This points to inefficient resource configuration or job management.

- Apply Spot/Preemptible Instances: Use low-cost, interruptible instances for fault-tolerant batch jobs. Can reduce costs by 60-80%.

- Right-size Instances: Match instance type to task. Use compute-optimized (C-series) for docking, not general-purpose.

- Implement Auto-scaling: Configure clusters to scale down to zero when idle, and write results to object storage (S3, GCS) instead of keeping VMs running to hold them.

Q4: File I/O is a major bottleneck in my HPC workflow for analyzing thousands of prediction trajectories. How can I improve this? A: HPC parallel filesystems (like Lustre, GPFS) can become congested.

- Use Local Scratch: Stage data on a compute node's local NVMe SSD (`/tmp`, `$TMPDIR`), process it there, then write final results back.

- Optimize Pattern: Use MPI-IO or HDF5 for parallel reads/writes instead of thousands of small files.

- Cloud Alternative: Consider a cloud-based HPC cache (e.g., AWS FSx for Lustre) that can elastically scale I/O bandwidth with your bursty workload.

Q5: I need to compare the accuracy of my refined protein structures predicted on different infrastructures. What's a standardized protocol? A: Use the following methodology to ensure consistent, comparable accuracy metrics:

Protocol: Comparative Accuracy Assessment for Predicted Structures

- Baseline Generation: Run identical prediction jobs (same input sequence, model parameters) on both HPC (local cluster) and Cloud (dedicated VM) infrastructures.

- Output Collection: Collect the top 5 ranked PDB files from each run.

- Validation Metrics Calculation:

- pLDDT: Use AlphaFold's built-in per-residue confidence score. Calculate average for each model.

- MolProbity Score: Use `phenix.molprobity` to assess steric clashes, rotamer outliers, and Ramachandran outliers.

- RMSD: If a known reference structure exists, compute global and core RMSD using `USalign`.

- Statistical Analysis: Perform a paired t-test on the per-model metric sets (e.g., pLDDT from HPC-run models vs. Cloud-run models) to determine if observed differences are statistically significant (p < 0.05).
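The paired test can be computed with the standard library as sketched below; `scipy.stats.ttest_rel(hpc, cloud)` returns the same t statistic together with the two-sided p-value. Pairing models by rank (ranked model 1 on HPC with ranked model 1 on cloud, and so on) is an assumption of this sketch, and the pLDDT values are illustrative. With only five pairs the test has little statistical power, so small real differences may not reach significance.

```python
import math
import statistics

def paired_t(a, b):
    """Paired t statistic and degrees of freedom for two matched samples."""
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("need two equal-length samples with at least two pairs")
    diffs = [x - y for x, y in zip(a, b)]
    sd = statistics.stdev(diffs)  # sample standard deviation (n - 1 denominator)
    if sd == 0:
        raise ValueError("differences have zero variance; t is undefined")
    t = statistics.mean(diffs) / (sd / math.sqrt(len(diffs)))
    return t, len(diffs) - 1

# Mean pLDDT of ranked models 1-5, paired by rank (illustrative values)
hpc = [90.0, 88.0, 86.0, 85.0, 83.0]
cloud = [89.0, 88.0, 85.0, 84.0, 83.0]
t, df = paired_t(hpc, cloud)
print(f"t = {t:.2f}, df = {df}")
```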

Table 1: Representative Performance & Cost Comparison for a 400-Residue Protein Fold Prediction

Data sourced from recent benchmark studies and public cloud pricing calculators (2024).

| Infrastructure Type | Instance / Node Type | Approx. Wall-clock Time (AlphaFold2) | Est. Cost per Run | Key Infrastructure Limitation |
|---|---|---|---|---|
| On-Premises HPC | 4x NVIDIA V100, 16 CPU cores | 45 minutes | (Capital/Operational Overhead) | Fixed queue times; limited GPU availability. |
| Public Cloud (On-Demand) | AWS g4dn.12xlarge (4x T4) | 68 minutes | ~$8.50 | Lower-performance GPUs; shared tenancy variability. |
| Public Cloud (High-Perf) | Azure ND A100 v4 (4x A100) | 22 minutes | ~$25.00 | Highest raw speed, but premium cost. |
| Public Cloud (Spot/Preempt) | Google Cloud a2-highgpu-4g (4x A100) | 22 minutes | ~$7.50 | Can be interrupted; not suitable for time-critical jobs. |

Table 2: Decision Matrix: Cloud vs. HPC for Common Scenarios

| Research Scenario | Recommended Infrastructure | Rationale |
|---|---|---|
| High-throughput virtual screening (>1M compounds) | Cloud Batch (with Spot Instances) | Elastic scale avoids queue; cost-effective with interruptible instances. |
| Long-timescale MD (µs-ms simulation) | On-Premises HPC (dedicated cluster) | Sustained, expensive compute favors owned infrastructure; data gravity. |
| Rapid prototyping of new prediction tools | Cloud (Dev/Test Workstation) | Fast provisioning, no IT ticket wait; tear down after use. |
| Reproducing a competitor's published result | Cloud (Identical instance type) | Provides hardware/software parity, removing a variable. |

Workflow Visualizations

Title: Infrastructure Decision Workflow for Researchers

Title: Structure Prediction Compute Pathways: HPC vs Cloud

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Digital Research Reagents for Structure Prediction

| Item / Solution | Primary Function | Example / Source |
|---|---|---|
| Prediction Software Suite | Core engine for generating 3D models from sequence. | AlphaFold2, RoseTTAFold, OpenFold, ESMFold. |
| Molecular Dynamics Engine | Refines and validates predictions via physics simulation. | GROMACS, AMBER, NAMD, OpenMM. |
| Container Image | Reproducible, portable software environment. | Docker/Singularity containers from NGC, BioContainers. |
| Parameter/Topology Files | Defines force field and residue properties for simulation. | CHARMM36, AMBER ff19SB, Rosetta's talaris2014. |
| Reference Databases | Provide evolutionary and structural context for prediction. | UniRef90, BFD, PDB, AlphaFold DB. |
| Validation Metrics Scripts | Quantifies prediction accuracy and quality. | MolProbity, PROCHECK, pLDDT calculators, USalign. |
| Job Definition Template | Standardizes compute job submission across infrastructures. | SLURM batch script, AWS Batch job spec, CWL/WDL workflow. |

FAQ Section: Core Concepts & Problem Diagnosis

Q1: Our rapid virtual screening (VS) campaign against a kinase target yielded no hits in validation assays. What went wrong? A: This is a common issue in balancing speed and accuracy. The most likely cause is an inaccurate or low-resolution protein structure used for screening. Rapid VS protocols often use homology models or unrefined AlphaFold2 predictions. If the binding site conformation, especially in flexible loops (like the DFG-loop in kinases), is incorrect, the screening will fail.

- Troubleshooting Steps:

- Perform a binding site analysis: Use a high-fidelity tool like Schrödinger's SiteMap, FTMap, or P2Rank to analyze the predicted binding pocket's druggability and compare it to a known crystal structure.

- Check model confidence: For AlphaFold2 models, examine the per-residue pLDDT score. Regions with scores below 70, especially in the binding site, are unreliable.

- Apply molecular dynamics (MD): Run a short, unbiased MD simulation (100 ns) of the apo protein to assess binding site stability and sample conformations.

Q2: During high-fidelity binding site analysis with MD, the ligand drifts out of the pocket. How do I stabilize the simulation? A: Ligand drift indicates insufficient system preparation or inadequate sampling.

- Troubleshooting Protocol:

- System Preparation: Ensure proper protonation states of binding site residues (use H++ or PROPKA). Parameterize the ligand with a dedicated tool (e.g., CGenFF, ACPYPE).

- Apply Restraints: Implement weak positional restraints (force constant of 1-5 kcal/mol·Å²) on the ligand's heavy atoms for the first 10-20 ns of equilibration, then release them for production runs.

- Use Enhanced Sampling: If drift persists, employ Gaussian Accelerated MD (GaMD) or metadynamics to more efficiently sample binding/unbinding events without losing the ligand.

Q3: How do we reconcile conflicting results between a high-throughput VS (millions of compounds) and a focused, high-fidelity analysis (hundreds of compounds)? A: This conflict is central to the speed-accuracy trade-off. The table below summarizes key differences.

Table 1: Conflict Resolution Matrix: Rapid VS vs. High-Fidelity Analysis

| Aspect | Rapid Virtual Screening | High-Fidelity Binding Site Analysis | Resolution Strategy |
|---|---|---|---|
| Primary Goal | Enrichment of hit candidates from vast libraries. | Accurate characterization of binding affinity & mode. | Use VS as a filter; apply high-fidelity methods only to top 500-1000 VS hits. |
| Typical Throughput | 1,000,000+ compounds/day. | 100-500 compounds/week. | Implement a tiered workflow (see Diagram 1). |
| Structure Source | Static crystal structure or AlphaFold2 model. | MD-refined ensemble of structures. | Generate a consensus pharmacophore from the MD ensemble to re-score VS hits. |
| Scoring Function | Fast, empirical (e.g., Vina, Glide SP). | Slow, physics-based (MM/GBSA, FEP+). | Use MM/GBSA as a secondary screen on VS hits before experimental testing. |
| False Positive Cause | Imprecise scoring, rigid receptor assumption. | Limited sampling, force field inaccuracies. | Consensus scoring from at least two different methods before proceeding. |

Experimental Protocols

Protocol 1: Hybrid Tiered Workflow for Balanced Screening

- Initial Filter: Use an AlphaFold2 model with high confidence (pLDDT > 80 in the binding site) for ultra-fast rigid docking (using Vina or FRED) against a 10M-compound library. The top 50,000 hits progress.

- Refinement: Re-dock the 50,000 hits using induced-fit docking (IFD) or a flexible docking method against a crystal structure. Top 5,000 hits progress.

- Binding Site Analysis: Subject the top 5,000 hits to MD-based MM/GBSA binding free energy estimation (using AMBER or GROMACS). Use a cluster representative from a 100 ns apo MD simulation.

- High-Fidelity Validation: Perform alchemical free energy perturbation (FEP+) calculations on the top 100-200 ranked compounds for quantitative affinity prediction.

Protocol 2: Generating a MD-Derived Pharmacophore for VS Post-Processing

- Run a 500 ns explicit-solvent MD simulation of the target protein.

- Cluster the trajectory based on binding-site residue RMSD to identify dominant conformations.

- For each dominant cluster frame, use a tool like Pharmit or LigandScout to detect interaction features (H-bond donors/acceptors, hydrophobic areas).

- Generate a consensus pharmacophore model combining features present in >60% of clusters.

- Use this pharmacophore to filter and re-rank the initial high-throughput VS hits, prioritizing compounds that match the dynamic features of the binding site.
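The >60% consensus rule reduces to counting how often each feature appears across the dominant clusters. The sketch assumes features have already been reduced to comparable labels, here hypothetical (feature type, residue) pairs; real pharmacophore tools match features by 3D position within a tolerance radius, which this counting step does not attempt.

```python
from collections import Counter

def consensus_features(cluster_features, min_fraction=0.6):
    """Features found in more than min_fraction of the dominant MD clusters."""
    n_clusters = len(cluster_features)
    # set() so a feature is counted at most once per cluster
    counts = Counter(f for feats in cluster_features for f in set(feats))
    return {f for f, c in counts.items() if c / n_clusters > min_fraction}

# Hypothetical feature labels for five dominant cluster frames
clusters = [
    {("HBD", "res91"), ("HYD", "res140"), ("HBA", "res33")},
    {("HBD", "res91"), ("HYD", "res140")},
    {("HBD", "res91"), ("HBA", "res33")},
    {("HBD", "res91"), ("HYD", "res140")},
    {("HYD", "res140"), ("HBA", "res33")},
]
print(sorted(consensus_features(clusters)))
```

The acceptor at res33 appears in exactly 3 of 5 clusters (60%), so the strict "more than 60%" rule excludes it; whether the boundary is inclusive is worth fixing explicitly in the protocol.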

Visualizations

Tiered Screening Workflow: Speed to Accuracy

Dynamic Pharmacophore Generation from MD

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Reagent Solutions for Featured Experiments

| Item/Software | Category | Primary Function in Context |
|---|---|---|
| AlphaFold Protein Structure Database | Prediction Resource | Provides rapid access to precomputed, often high-accuracy AlphaFold2 models for targets without crystal structures. A critical initial input for VS. |
| Schrödinger Maestro/Glide | Docking Suite | Enables high-throughput VS (Glide HTVS/SP) and higher-accuracy induced-fit docking (IFD) refinement. |
| GROMACS/AMBER | MD Engine | Performs molecular dynamics simulations for binding site analysis and stability checks, and generates the trajectories used for MM/GBSA calculations. |
| CHARMM36/GAFF2 | Force Field | Provide parameters for proteins (CHARMM36) and small molecules (GAFF2), essential for accurate MD and free energy calculations. |
| MM/GBSA Scripts (gmx_MMPBSA) | Analysis Tool | Calculates binding free energies from MD trajectories, offering a balance between speed and physics-based accuracy. |
| FEP+ (Schrödinger) | Free Energy Tool | Performs alchemical free energy perturbation calculations for high-fidelity binding affinity prediction on final candidates. |
| FTMap Server | Binding Site Analysis | Maps hot spots on protein surfaces to assess druggability and validate predicted binding sites. |
| PyMOL/Maestro Visualizer | Visualization | Critical for inspecting docking poses, MD trajectories, and binding site interactions at all stages. |

Practical Optimization: Troubleshooting Common Pitfalls and Fine-Tuning Your Prediction Workflow

Troubleshooting Guides & FAQs

Q1: My AlphaFold2 or RoseTTAFold run is taking days to complete. How do I know if the bottleneck is compute speed or model accuracy settings?

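A: Runtime is usually limited by hardware (GPU, CPU, memory, or disk I/O), whereas accuracy is governed mainly by model parameters such as MSA depth, template use, and the number of models. Follow this diagnostic protocol to tell them apart: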

- Profile Hardware Utilization: Use nvidia-smi (for GPU) or system monitoring tools (for CPU/RAM) during a short test run. Consistently high GPU utilization (>90%) indicates that the GPU is saturated and speed is limited by hardware. Low GPU utilization suggests an I/O, memory, or software bottleneck upstream of the GPU.

- Conduct a Scaling Test: Run the same prediction on subsets of your data (e.g., 25% and 50%), time each run, and plot runtime against input size.

- Modify Accuracy Parameters: Adjust key accuracy parameters in a controlled test (see Table 1).
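For the scaling test, fitting runtime = a * size^b on log-log axes gives a single number to compare against expectations. This is a minimal sketch with hypothetical timings for 25%, 50% and 100% of a dataset.

```python
import math
from typing import Sequence, Tuple


def fit_power_law(sizes: Sequence[float], runtimes: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of runtime = a * size**b on log-log axes; returns (a, b)."""
    xs = [math.log(s) for s in sizes]
    ys = [math.log(t) for t in runtimes]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx                      # scaling exponent
    a = math.exp(mean_y - b * mean_x)  # prefactor = predicted runtime at size 1
    return a, b


# Hypothetical timings (hours) for 25%, 50% and 100% of a dataset.
fractions = [0.25, 0.5, 1.0]
hours = [0.9, 1.8, 3.6]
a, b = fit_power_law(fractions, hours)
print(f"runtime ~ {a:.2f} * size^{b:.2f}")
```

An exponent near 1 means runtime grows in proportion to input size. A much larger exponent, or a largest run that sits well above the fitted line, hints that something other than raw compute (for example memory pressure) starts to dominate.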

Diagnostic Data Summary:

Table 1: Hardware vs. Accuracy Parameter Impact

| Component | Metric to Monitor | Typical Bottleneck Indicator | Potential Quick Fix |
|---|---|---|---|
| GPU | Utilization (%) | <70% during major model steps | Batch size adjustment, CUDA version check |
| CPU/RAM | CPU % / RAM usage | CPU at 100% or RAM maxed out | Increase RAM, optimize data pipeline |
| I/O (Disk) | Read/write wait times | High wait times during MSA or template search | Use faster SSD, local storage |
| Model (Accuracy) | pLDDT/ipTM score | Low confidence scores on known structures | Increase MSA depth, enable template mode |
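The indicators in Table 1 can be turned into a rough triage function. The sketch below parses GPU utilization samples in the CSV format produced by nvidia-smi --query-gpu=utilization.gpu,memory.used,memory.total --format=csv,noheader,nounits (add -l 1 to sample once per second) and applies the indicators in order. Only the 70% and 90% GPU thresholds come from the text; the CPU, RAM and I/O-wait cut-offs are assumptions to adjust for your system.

```python
from statistics import mean
from typing import List


def parse_gpu_util(csv_text: str) -> List[float]:
    """Return GPU utilization (%) from nvidia-smi CSV lines such as '87, 30512, 40960'."""
    return [float(line.split(",")[0]) for line in csv_text.splitlines() if line.strip()]


def classify_bottleneck(
    gpu_util: List[float],
    cpu_percent: float,
    ram_fraction: float,
    io_wait_percent: float,
) -> str:
    """Map monitored metrics to the likely bottleneck.

    The 95% CPU, 95% RAM and 20% I/O-wait cut-offs are hypothetical choices.
    """
    gpu = mean(gpu_util)
    if gpu >= 90:
        return "gpu_saturated"      # hardware-limited: a faster GPU is the lever
    if cpu_percent >= 95 or ram_fraction >= 0.95:
        return "cpu_ram"            # add RAM / cores, optimize the data pipeline
    if io_wait_percent >= 20:
        return "io"                 # move databases to fast local SSD
    if gpu < 70:
        return "gpu_underutilized"  # check batch size, CUDA/driver versions
    return "mixed"


samples = parse_gpu_util("35, 30512, 40960\n41, 30600, 40960\n")
print(classify_bottleneck(samples, cpu_percent=100.0, ram_fraction=0.6, io_wait_percent=3.0))
```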

Q2: How can I quantitatively decide to trade pLDDT score for faster turnaround time?

A: This requires a calibration experiment specific to your target class. Experimental Protocol:

- Select a benchmark set of 5-10 proteins with known experimental structures from your area of interest (e.g., GPCRs, kinases).

- Run predictions with systematically varied speed/accuracy settings:

  - Setting A: Maximum accuracy (full MSA, full model ensemble, template mode).

  - Setting B: Reduced MSA depth (e.g., max_msa_clusters: 128).

  - Setting C: Single model, no templates, fast relaxation.

- Measure both (a) Runtime and (b) Accuracy (TM-score or RMSD to known structure).

- Plot results to find the "knee in the curve" where gains in accuracy diminish relative to increased compute time.
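Locating the knee can be automated with a Kneedle-style heuristic: rescale runtime and accuracy so that the cheapest setting sits at (0, 0) and the most expensive at (1, 1), then pick the setting that lies farthest above the diagonal. The runtimes and TM-scores below are hypothetical placeholders for Settings A-C.

```python
from typing import List, Tuple


def find_knee(runs: List[Tuple[str, float, float]]) -> str:
    """Return the setting at the 'knee' of an accuracy-vs-runtime curve.

    runs: (setting name, runtime, accuracy), with higher accuracy = better
    (pass -RMSD if you measure RMSD).
    """
    ordered = sorted(runs, key=lambda r: r[1])
    (_, t_lo, a_lo), (_, t_hi, a_hi) = ordered[0], ordered[-1]
    if t_hi == t_lo or a_hi <= a_lo:
        return ordered[0][0]  # extra compute buys nothing: take the cheapest

    def lift(run: Tuple[str, float, float]) -> float:
        # Height above the diagonal in normalized (runtime, accuracy) space.
        _, t, acc = run
        return (acc - a_lo) / (a_hi - a_lo) - (t - t_lo) / (t_hi - t_lo)

    return max(ordered, key=lift)[0]


# Hypothetical benchmark means: (setting, hours, TM-score).
runs = [("A", 10.0, 0.88), ("B", 2.0, 0.86), ("C", 0.5, 0.78)]
print(find_knee(runs))
```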

Q3: Are there specific stages in the prediction pipeline where bottlenecks most commonly occur?

A: Yes, the pipeline has distinct stages with different bottleneck profiles.

- Stage 1: Multiple Sequence Alignment (MSA) Generation: Often an I/O and memory bottleneck due to large database searches (BFD, MGnify). Slowness here points to network storage or insufficient CPU cores for HHblits/JackHMMER.

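- Stage 2: Neural Network Inference: Largely a GPU compute bottleneck. Speed scales with the GPU's compute throughput (FLOPs), while GPU memory sets the largest sequence or complex that can be predicted without running out of memory.

- Stage 3: Relaxation/Minimization: Can be a CPU bottleneck, as it is often run on CPUs after the GPU steps.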
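To see which stage dominates in your own runs, wrap each stage in a timer. This is a minimal sketch using only the Python standard library; the time.sleep calls are placeholders for the real MSA, inference and relaxation calls of your pipeline, which are not shown.

```python
import time
from contextlib import contextmanager
from typing import Dict, Iterator


@contextmanager
def timed_stage(name: str, timings: Dict[str, float]) -> Iterator[None]:
    """Accumulate wall-clock seconds spent inside the `with` block under `name`."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[name] = timings.get(name, 0.0) + time.perf_counter() - start


def stage_fractions(timings: Dict[str, float]) -> Dict[str, float]:
    """Fraction of total wall time per stage, largest first."""
    total = sum(timings.values())
    ordered = sorted(timings.items(), key=lambda kv: kv[1], reverse=True)
    return {name: secs / total for name, secs in ordered}


timings: Dict[str, float] = {}
# time.sleep calls are placeholders for the real stage calls (hypothetical).
with timed_stage("msa", timings):
    time.sleep(0.20)
with timed_stage("inference", timings):
    time.sleep(0.05)
with timed_stage("relaxation", timings):
    time.sleep(0.01)
print(stage_fractions(timings))
```

If inference runs through an asynchronous GPU framework (JAX or PyTorch, for example), make sure the stage waits for its results before the timer stops; otherwise the GPU time is silently attributed to whichever stage runs next.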

Pipeline Stages and Common Bottleneck Locations

Q4: What are the key reagent and software solutions for optimizing high-throughput structure prediction?

A: The Scientist's Toolkit

Table 2: Key Research Reagent & Software Solutions

| Item / Tool | Category | Primary Function | Impact on Speed/Accuracy |
|---|---|---|---|
| NVIDIA A100/A800 GPU | Hardware | Provides high VRAM and tensor cores for large-model inference. | Speed: Major increase. Enables larger batch sizes and more complex models. |
| AlphaFold2 (Local ColabFold) | Software | Integrated pipeline optimizing MSA generation and inference. | Speed: Faster than standard installs. Accuracy: Comparable, despite reduced databases. |
| MMseqs2 Server | Software | Rapid, cloud-based MSA generation. | Speed: Dramatically reduces MSA time vs. local HHblits. Accuracy: Slightly lower for some targets. |
| UniRef90 & BFD Databases | Data | Protein sequence databases used for MSA construction. | Accuracy: Critical for model confidence. Larger databases increase accuracy but slow MSA generation. |
| PDB70 Database | Data | Database of known structures for template search. | Accuracy: Can significantly boost accuracy if good templates exist. Speed: Adds to search time. |
| Amber Force Field | Force Field | Used for the final relaxation step. | Accuracy: Improves stereochemical quality and physical plausibility. Speed: Adds CPU compute time. |

Diagnostic Decision Tree for Pipeline Bottlenecks

Troubleshooting Guides & FAQs

FAQ 1: My structure prediction experiment is taking an extremely long time to complete. How can I speed it up without a drastic loss in accuracy?

Answer: This is a core challenge in balancing speed and accuracy. Focus on tuning three key parameters: the conformational search space, the sampling algorithm, and the convergence criteria. First, narrow the search space by applying biologically informed constraints (e.g., from homologous templates or NMR data) to reduce the number of degrees of freedom. Second, adjust the sampling parameters: for Monte Carlo-based methods, a larger step size can cover conformational space in fewer moves, at the cost of a lower acceptance rate and coarser sampling; for molecular dynamics, consider enhanced sampling techniques such as metadynamics, which can cross energy barriers more efficiently than plain MD. Third, loosen convergence criteria cautiously, for example by raising the energy minimization convergence threshold from 0.001 kcal/mol to 0.01 kcal/mol. The table below summarizes the typical impact of these adjustments.

Table 1: Parameter Adjustments for Efficiency vs. Accuracy Trade-off

| Parameter | Adjustment for Speed | Potential Impact on Accuracy | Recommended Use Case |
|---|---|---|---|
| Search Space Radius | Reduce from 10 Å to 6 Å | May miss distant conformational minima | When strong template constraints are available |
| Monte Carlo Step Size | Increase from 0.5 Å to 2.0 Å | Lower-resolution sampling | Preliminary screening phases |
| Energy Convergence Threshold | Loosen from 0.001 to 0.01 kcal/mol | Slightly less refined final structure | Large-scale virtual screening |
| Molecular Dynamics Time Step | Increase from 1 fs to 2 fs (with constraints) | Risk of integration instability | When bonds to hydrogen are constrained (e.g., SHAKE/LINCS); hydrogen mass repartitioning typically permits ~4 fs |
| Number of Genetic Algorithm Generations | Reduce from 50,000 to 10,000 | May not reach global minimum | Cluster-based pre-filtering |
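The Monte Carlo step-size trade-off can be explored on a toy problem. The sketch runs Metropolis sampling on a one-dimensional double-well energy (in units of kT) with the table's two step sizes, treated here as dimensionless; it illustrates the trade-off and is not a model of any particular package's mover. Larger steps are rejected more often, and whether they still explore faster depends on how rugged the landscape is, which is why the acceptance rate and the number of barrier hops are reported side by side.

```python
import math
import random
from typing import Tuple


def double_well(x: float, barrier: float = 3.0) -> float:
    """Toy 1D energy in units of kT, with minima at x = -1 and x = +1."""
    return barrier * (x * x - 1.0) ** 2


def metropolis(step: float, n_steps: int = 20000, seed: int = 0) -> Tuple[float, int]:
    """Metropolis Monte Carlo on the toy potential.

    Returns (acceptance rate, number of accepted moves that change the
    sign of x, i.e. hops over the barrier at x = 0).
    """
    rng = random.Random(seed)
    x = -1.0
    energy = double_well(x)
    accepted = hops = 0
    for _ in range(n_steps):
        x_new = x + rng.uniform(-step, step)
        e_new = double_well(x_new)
        # Metropolis criterion with kT = 1: always accept downhill moves,
        # accept uphill moves with probability exp(-dE).
        if e_new <= energy or rng.random() < math.exp(energy - e_new):
            if (x_new > 0) != (x > 0):
                hops += 1
            x, energy = x_new, e_new
            accepted += 1
    return accepted / n_steps, hops


for step in (0.5, 2.0):
    acc, n_hops = metropolis(step)
    print(f"step={step}: acceptance={acc:.2f}, barrier hops={n_hops}")
```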

Experimental Protocol for Tuning Sampling Rate:

- Baseline Run: Execute your prediction algorithm (e.g., Rosetta, AlphaFold2, GROMACS) with default "high-accuracy" settings. Record the final RMSD and computational time.

- Iterative Adjustment: Systematically adjust one parameter at a time (e.g., reduce the number of decoys from 50,000 to 5,000).

- Benchmarking: Run the modified protocol 3 times against a known benchmark set (e.g., CASP targets).

- Analysis: Calculate the average change in computational time and the change in accuracy (TM-score, RMSD). Plot these to identify the "knee in the curve" where speed gains outweigh accuracy losses.
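The analysis step can be sketched as a comparison of replicate means against the baseline. The dictionary layout, the TM-score tolerance (max_tm_loss) and the numbers in the example are hypothetical.

```python
from statistics import mean
from typing import Dict, List, NamedTuple


class Comparison(NamedTuple):
    speedup: float    # baseline mean time / trial mean time
    delta_tm: float   # trial mean TM-score minus baseline mean TM-score
    acceptable: bool  # accuracy loss within the chosen tolerance


def compare_to_baseline(
    baseline: Dict[str, List[float]],
    trial: Dict[str, List[float]],
    max_tm_loss: float = 0.03,
) -> Comparison:
    """Summarize replicate runs of a modified protocol against the baseline.

    Both dicts hold per-replicate values under "hours" and "tm" (TM-score).
    """
    speedup = mean(baseline["hours"]) / mean(trial["hours"])
    delta_tm = mean(trial["tm"]) - mean(baseline["tm"])
    return Comparison(speedup, delta_tm, delta_tm >= -max_tm_loss)


# Hypothetical results: 3 replicates each, 50,000 vs 5,000 decoys.
baseline = {"hours": [10.0, 11.0, 9.0], "tm": [0.85, 0.86, 0.84]}
fewer_decoys = {"hours": [2.0, 2.5, 1.5], "tm": [0.83, 0.84, 0.82]}
result = compare_to_baseline(baseline, fewer_decoys)
print(f"{result.speedup:.1f}x faster, dTM = {result.delta_tm:+.3f}, ok = {result.acceptable}")
```

Collecting one Comparison per parameter setting gives exactly the (speedup, accuracy change) pairs needed to plot the trade-off curve.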

FAQ 2: How do I know if my simulation has converged sufficiently, or if I'm stopping it too early?

Answer: Premature termination is a common source of irreproducible results. Implement quantitative, multi-metric convergence checks instead of relying solely on simulation time.

- Monitor Root Mean Square Deviation (RMSD) Plateau: Plot backbone RMSD over time. Convergence is suggested when the moving average fluctuates around a stable value.

- Observe Energy Stabilization: The total potential energy should reach a stable equilibrium.

- Use Cluster Analysis: Periodically cluster saved structures. Convergence is indicated when the population of the largest cluster exceeds 70-80% and stops growing.

- Check Observables: Monitor key distances or dihedral angles relevant to your biological question; they should become stationary.
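The cluster-population check can be implemented with the GROMOS (Daura et al.) algorithm on a precomputed pairwise RMSD matrix, recomputing the largest-cluster fraction as the trajectory grows. In this sketch a toy 1D coordinate stands in for real structures and the 0.3 cutoff is arbitrary; for production trajectories, gmx cluster -method gromos implements the same algorithm, and the resulting fraction is compared with the 70-80% guideline above.

```python
from typing import List, Sequence


def gromos_clusters(rmsd: Sequence[Sequence[float]], cutoff: float) -> List[List[int]]:
    """GROMOS (Daura et al.) clustering on a precomputed pairwise RMSD matrix.

    Repeatedly pick the frame with the most neighbours within `cutoff`,
    form a cluster from it and those neighbours, remove them, and repeat.
    """
    remaining = set(range(len(rmsd)))
    clusters: List[List[int]] = []
    while remaining:
        neighbours = {i: [j for j in remaining if rmsd[i][j] <= cutoff] for i in remaining}
        # Ties go to the lowest frame index for reproducibility.
        centre = max(sorted(remaining), key=lambda i: len(neighbours[i]))
        members = sorted(neighbours[centre])  # includes centre (RMSD 0 to itself)
        clusters.append(members)
        remaining -= set(members)
    return clusters


def largest_cluster_fraction(rmsd: Sequence[Sequence[float]], cutoff: float) -> float:
    """Population of the largest cluster as a fraction of all frames."""
    clusters = gromos_clusters(rmsd, cutoff)
    return max(len(c) for c in clusters) / len(rmsd)


# Toy 1D "conformations"; |x_i - x_j| stands in for pairwise RMSD (in Å).
frames = [0.0, 0.1, 0.2, 0.15, 3.0, 0.05, 0.12, 3.1]
rmsd = [[abs(p - q) for q in frames] for p in frames]
print(gromos_clusters(rmsd, cutoff=0.3))
# Track the largest-cluster fraction as the trajectory grows.
for k in range(4, len(frames) + 1, 2):
    sub = [row[:k] for row in rmsd[:k]]
    print(k, round(largest_cluster_fraction(sub, cutoff=0.3), 2))
```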

Experimental Protocol for Defining Convergence:

- Define Metrics: Choose at least two convergence metrics (e.g., RMSD, cluster population, specific distance).

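- Set Sliding Window: Analyze the last n nanoseconds (e.g., the last 20% of the simulation) for stability.

- Statistical Test: Test the chosen metric within the sliding window for drift, e.g., with a Mann-Whitney U test comparing the first and second halves of the window, or with a KPSS test, whose null hypothesis is stationarity. A p-value > 0.05 means no significant drift was detected, which is consistent with, but does not prove, convergence. Because consecutive MD frames are autocorrelated, subsample the series before testing.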

- Implement Logic: In your workflow, script these checks to trigger automatic termination, ensuring consistency.
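The sliding-window check can be sketched with a Mann-Whitney U test comparing the first and second halves of the final window, implemented here with the normal approximation so that only the standard library is needed (scipy.stats.mannwhitneyu is the usual production choice). The window fraction, stride and alpha are illustrative defaults, not recommendations, and the two example series are synthetic.

```python
import math
from typing import Sequence


def mann_whitney_p(a: Sequence[float], b: Sequence[float]) -> float:
    """Two-sided Mann-Whitney U p-value from the normal approximation.

    Tied values get their average rank; the variance is not tie-corrected,
    which is acceptable for a rough drift check on continuous data.
    """
    values = sorted(list(a) + list(b))
    avg_rank = {}
    i = 0
    while i < len(values):
        j = i
        while j + 1 < len(values) and values[j + 1] == values[i]:
            j += 1
        avg_rank[values[i]] = (i + j) / 2 + 1  # ranks are 1-based
        i = j + 1
    n1, n2 = len(a), len(b)
    u1 = sum(avg_rank[v] for v in a) - n1 * (n1 + 1) / 2
    mu = n1 * n2 / 2
    sigma = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (u1 - mu) / sigma
    return math.erfc(abs(z) / math.sqrt(2))


def window_converged(series: Sequence[float], window_fraction: float = 0.2,
                     stride: int = 1, alpha: float = 0.05) -> bool:
    """Compare the two halves of the final window; True if no drift is detected.

    `stride` subsamples the window to reduce autocorrelation between frames,
    which otherwise makes the test report drift too readily.
    """
    n_window = round(len(series) * window_fraction)
    window = list(series[len(series) - n_window::stride])
    if len(window) < 8:
        raise ValueError("window too short for a meaningful test")
    half = len(window) // 2
    return mann_whitney_p(window[:half], window[half:]) > alpha


flat = [1.0, 1.2, 0.9, 1.1] * 50          # fluctuates around a stable mean
drifting = [0.01 * i for i in range(200)]  # steady upward drift
print(window_converged(flat), window_converged(drifting))
```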

Convergence Checking Workflow

FAQ 3: What are practical ways to define or constrain the initial search space for a novel protein target with no homologs?

Answer: For de novo targets, use a hierarchical approach that combines ab initio principles with sparse experimental data.

- Secondary Structure Prediction: Use tools like PSIPRED to define likely helical/strand regions and apply dihedral angle restraints.

- Contact Prediction: Utilize deep learning-based contact map predictors (e.g., from trRosetta, AlphaFold2) to generate distance restraints, dramatically narrowing the search space.

- SAXS Data: If available, use Small-Angle X-ray Scattering profiles to define overall shape and radius of gyration restraints.

- Sparse NMR: Use chemical shifts to define secondary structure and paramagnetic relaxation enhancement (PRE) data for long-range distance constraints.
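Turning a predicted contact map into restraints is mostly a filtering problem: drop short-range pairs, keep the most confident long-range ones, and give each an upper distance bound. The restraint tuple format, the default thresholds and the toy map below are hypothetical; real programs (Rosetta, CNS, or others) each have their own restraint syntax.

```python
from typing import List, Sequence, Tuple

# (residue_i, residue_j, lower_bound_A, upper_bound_A): a hypothetical
# flat-bottom restraint format, not any specific program's input syntax.
Restraint = Tuple[int, int, float, float]


def contacts_to_restraints(
    prob: Sequence[Sequence[float]],
    min_separation: int = 6,
    top_fraction: float = 1.0,
    min_prob: float = 0.5,
    upper_A: float = 8.0,
) -> List[Restraint]:
    """Turn a predicted contact-probability map into distance restraints.

    Keeps pairs at least `min_separation` apart in sequence (local contacts
    add little information), with probability >= min_prob, and retains at
    most top_fraction * L of the most confident ones. The 8 Å upper bound
    mirrors the common C-beta contact definition; 0.0 means no lower bound.
    """
    length = len(prob)
    candidates = [
        (prob[i][j], i, j)
        for i in range(length)
        for j in range(i + min_separation, length)
        if prob[i][j] >= min_prob
    ]
    candidates.sort(key=lambda c: c[0], reverse=True)
    keep = candidates[: int(top_fraction * length)]
    return [(i, j, 0.0, upper_A) for _, i, j in keep]


# Toy 10-residue map: background 0.1 plus a few confident contacts.
L = 10
prob = [[0.1] * L for _ in range(L)]
for (i, j), p in {(0, 9): 0.9, (1, 8): 0.7, (2, 4): 0.95, (3, 9): 0.6}.items():
    prob[i][j] = prob[j][i] = p
print(contacts_to_restraints(prob, top_fraction=0.5))
```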

Defining Search Space for a Novel Target

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Efficient Structure Prediction Tuning

| Item | Function in Tuning for Efficiency |
|---|---|
| Molecular Dynamics Software (GROMACS, AMBER, NAMD) | Provides the sampling engines. Critical for adjusting timesteps and thermostat/barostat algorithms, and for implementing enhanced sampling. |
| Enhanced Sampling Plugins (PLUMED) | Enables advanced techniques (metadynamics, umbrella sampling) that overcome energy barriers faster, improving sampling efficiency. |
| Structure Prediction Suites (Rosetta, MODELLER) | Allow direct control over search space size (e.g., fragment libraries in Rosetta), sampling cycles, and convergence score thresholds. |
| Clustering Algorithms (GROMOS/Daura method) | Used to analyze convergence and to assess the diversity and representativeness of sampled structures before stopping a run. |
| Bioinformatics Databases (PDB, UniProt) | Source of template structures and homologous sequences to inform and rationally limit the initial search space. |
| High-Performance Computing (HPC) Cluster with GPU Nodes | Essential infrastructure. GPU acceleration (e.g., for AlphaFold, MD) is often the largest single factor in reducing wall-clock time. |
| Job Scheduling & Monitoring Scripts (Slurm, custom Python) | Automate parameter sweeps, collect performance metrics (time, energy), and manage large-scale tuning experiments. |

Technical Support Center

Troubleshooting Guide: Common Data Integrity Issues

Q1: My structure prediction model is producing highly variable results despite using the same algorithm. What could be the issue?

A: This is a classic symptom of inconsistent input data preprocessing. Variability often stems from: