Protein Language Models vs. BLASTp: A Comparative Guide for Modern Enzyme Annotation

This article provides a comprehensive analysis for researchers and drug development professionals on the evolving landscape of protein function annotation, focusing on the comparative strengths of traditional BLASTp and emerging...

Protein Language Models vs. BLASTp: A Comparative Guide for Modern Enzyme Annotation

Abstract

This article provides a comprehensive analysis for researchers and drug development professionals on the evolving landscape of protein function annotation, focusing on the comparative strengths of traditional BLASTp and emerging protein Language Models (pLMs). We explore the foundational principles of both methods, detail their practical applications in workflows like Enzyme Commission (EC) number prediction, and offer optimization strategies. Drawing on the latest 2025 research, we present a head-to-head validation of their performance, particularly for difficult-to-annotate enzymes. The conclusion synthesizes key takeaways and future directions, advocating for a synergistic approach that leverages the precision of BLASTp with the powerful, homology-independent pattern recognition of pLMs to accelerate biomedical discovery.

From Sequence Alignment to Semantic Understanding: The Foundations of BLASTp and Protein LLMs

In the field of bioinformatics, accurately annotating protein function is a fundamental task that enables researchers to decipher biological processes, understand disease mechanisms, and identify drug targets. For decades, the gold standard tool for this task has been BLASTp (Basic Local Alignment Search Tool for protein sequences), which operates on the fundamental principle that enzymes sharing high sequence similarity likely have similar functions [1]. This homology-based approach has served as the backbone of genome annotation pipelines, allowing for the functional transfer of annotations from well-characterized proteins to novel sequences based on evolutionary relationships. However, the rapid emergence of protein language models (pLMs) like ESM2, ESM1b, and ProtBERT, based on deep learning transformer architectures, presents a powerful new paradigm for function prediction that does not rely exclusively on sequence homology [2] [3]. These models, pre-trained on millions of protein sequences, can learn complex patterns and structural features that extend beyond direct evolutionary relationships. This guide provides an objective comparison of these competing approaches, examining their relative performance through recent experimental data to help researchers and drug development professionals navigate the evolving landscape of protein annotation tools.

Methodological Foundations: How BLASTp and Protein LLMs Work

BLASTp: Sequence Alignment and Homology Transfer

The operational principle of BLASTp is straightforward: it takes a query protein sequence and searches it against a reference database of proteins with known functions. Using a heuristic algorithm, it identifies regions of local similarity between the query and database sequences. The key assumption is that sequence similarity implies evolutionary descent from a common ancestor (homology), which in turn implies functional similarity [1]. The tool then transfers the functional annotation—such as an Enzyme Commission (EC) number—from the best-matching sequence(s) in the database to the query sequence. This method is computationally efficient and biologically intuitive, explaining its enduring popularity. Notably, the National Center for Biotechnology Information (NCBI) has continuously enhanced BLASTp, announcing that by August 2025, the default database will transition to ClusteredNR, which groups redundant sequences to provide faster searches, decreased redundancy, and broader taxonomic coverage in results [4].

Protein Language Models: Learning Sequence Semantics

Protein language models represent a fundamentally different approach. Inspired by successes in natural language processing, pLMs treat protein sequences as "sentences" where amino acids are the "words." Models like ESM2 and ProtBERT are pre-trained on massive datasets of protein sequences (e.g., from UniProtKB) using self-supervised objectives, such as predicting masked amino acids in sequences [3] [5]. Through this process, they learn deep contextual representations of protein sequences, capturing information about biochemical properties, evolutionary constraints, and even structural features without explicit supervision. For downstream tasks like EC number prediction, these learned representations (embeddings) are extracted and used as input features for classifiers, typically fully connected neural networks, which learn to map the embeddings to functional classes [2] [1]. This approach allows pLMs to identify functional signatures even in the absence of close homologs.

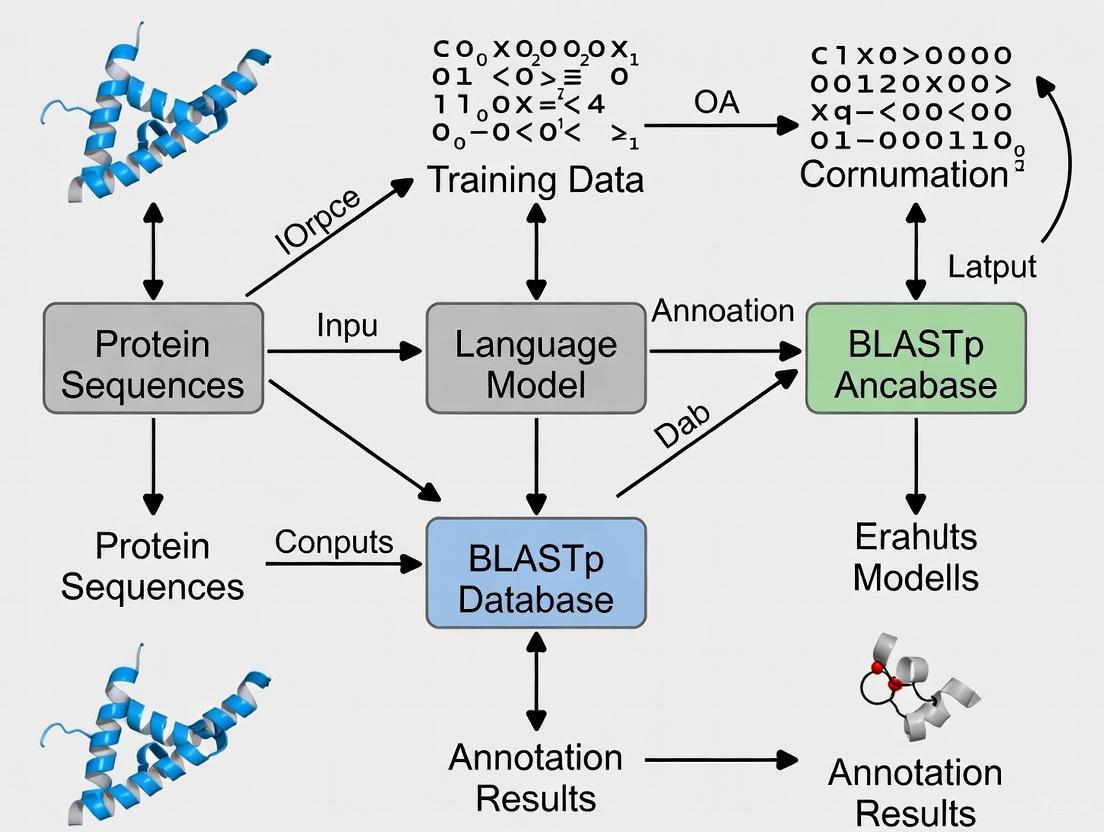

Visualizing the Core Annotation Paradigms

The following diagram illustrates the fundamental differences in how BLASTp and protein Language Models approach the problem of protein function annotation.

Experimental Comparison: Performance Metrics and Limitations

Direct Performance Comparison on EC Number Prediction

A comprehensive 2025 study directly compared the performance of BLASTp against several protein language models (ESM2, ESM1b, and ProtBERT) for predicting Enzyme Commission (EC) numbers, providing robust quantitative data on their relative strengths and weaknesses [2] [1]. The experimental protocol involved training deep learning models using embeddings from each pLM as features, with BLASTp serving as the baseline. The models were evaluated on their ability to correctly assign EC numbers in a multi-label classification setting, incorporating both promiscuous and multi-functional enzymes. The test datasets were constructed from UniProtKB, using only UniRef90 cluster representatives to ensure sequence diversity and avoid overfitting [1].

Table 1: Comparative Performance of BLASTp vs. Protein Language Models for EC Number Prediction

| Method | Overall Accuracy | Strength on High-Identity Sequences (>25%) | Performance on Low-Identity Sequences (<25%) | Ability to Annotate Orphans (No Homologs) |

|---|---|---|---|---|

| BLASTp | Marginally Better | Excellent | Limited | None |

| ESM2 (Best pLM) | Slightly Lower | Good | Good | Yes |

| ESM1b | Lower | Moderate | Moderate | Limited |

| ProtBERT | Lower | Moderate | Moderate | Limited |

The results demonstrated that while BLASTp provided marginally better results overall, the deep learning models provided complementary results, with each method excelling on different subsets of EC numbers [2]. Specifically, the ESM2 model stood out as the best performer among the pLMs, providing more accurate predictions on difficult annotation tasks and for enzymes without homologs in reference databases [1]. Crucially, the study concluded that pLMs still require further improvement to replace BLASTp as the gold standard in mainstream enzyme annotation routines, but they already offer valuable capabilities for specific challenging cases where traditional homology-based methods fail [2].

Performance on Challenging Cases and Low-Similarity Sequences

One of the most significant findings from recent comparative studies is the complementary nature of these approaches, particularly when dealing with sequences that have low similarity to proteins in reference databases. While BLASTp struggles when sequence identity falls below 25%, protein language models maintain reasonable predictive accuracy even for these difficult cases [1]. This capability is particularly valuable for annotating orphan enzymes—those with no recognizable homologs in current databases—which represent a significant challenge in genome annotation projects. The ESM2 model specifically demonstrated robust performance on these difficult annotation tasks, suggesting that pLMs capture functional signals beyond what is accessible through direct sequence comparison alone [2]. This complementary performance profile has led researchers to suggest that hybrid approaches combining both methods may offer the most robust solution for comprehensive genome annotation.

Next-Generation Annotation Systems: Integrating Multiple Approaches

The evolution of bacterial genome annotation systems illustrates the growing trend toward integrating multiple annotation methodologies. BASys2, a next-generation bacterial genome annotation system released in 2025, leverages over 30 bioinformatics tools and 10 different databases to achieve unprecedented annotation depth—generating up to 62 annotation fields per gene/protein while reducing annotation time from 24 hours to as little as 10 seconds [6]. While still relying on BLAST for certain annotation transfers, systems like BASys2 represent a move toward more comprehensive pipelines that can incorporate diverse prediction methods, including emerging deep learning approaches, to provide richer functional insights beyond what any single method can deliver.

Table 2: Key Research Tools for Protein Function Annotation

| Tool/Resource | Type | Primary Function | Application Context |

|---|---|---|---|

| BLASTp | Sequence Alignment Tool | Identifies similar sequences in databases | Primary homology-based annotation |

| ClusteredNR | Protein Database | Non-redundant clustered reference database | Default BLASTp database (from Aug 2025) |

| ESM2 | Protein Language Model | Generates embeddings for function prediction | EC prediction without close homologs |

| ProtBERT | Protein Language Model | Transformer-based sequence representations | Alternative pLM for feature extraction |

| UniProtKB | Protein Knowledgebase | Manually/automatically annotated sequences | Training data source for pLMs |

| FANTASIA | Annotation Tool | Functional annotation based on embedding similarity | Large-scale proteome annotation with pLMs |

The comparative assessment of BLASTp and protein language models reveals a nuanced landscape where neither approach completely dominates the other. BLASTp maintains its position as the gold standard for routine annotation due to its marginally superior overall accuracy, computational efficiency, and deep integration into established bioinformatics workflows [2] [1]. Its upcoming transition to the ClusteredNR database will further enhance its performance by reducing redundancy and providing broader taxonomic coverage [4]. However, protein language models, particularly ESM2, have demonstrated compelling capabilities for addressing challenging annotation scenarios where traditional homology-based methods falter—especially for sequences with low similarity to known proteins and orphan enzymes without database homologs [2] [1]. Rather than viewing these approaches as mutually exclusive, the evidence suggests they offer complementary strengths. Forward-looking researchers and drug development professionals would be well-served by developing workflows that strategically employ both methodologies, leveraging BLASTp for high-confidence homology-based annotations while reserving protein language models for the growing subset of proteins that defy conventional classification through sequence similarity alone. As both technologies continue to evolve—with BLASTp benefiting from database optimizations and pLMs advancing through architectural improvements and training on larger datasets—their synergistic integration promises to push the boundaries of what's possible in protein function prediction.

The emerging paradigm of protein language models (pLMs) represents a fundamental shift in how computational biology approaches the central challenge of linking protein sequence to function. Inspired by breakthroughs in natural language processing, this new framework treats amino acid sequences as sentences in a foreign language, allowing models to learn the complex "grammar" that governs protein structure and function directly from unlabeled sequence data [7]. This approach marks a significant departure from traditional, homology-based methods like BLASTp, which have served as the gold standard for decades by transferring functional annotations from evolutionarily related proteins [8]. Where BLASTp operates on the principle of explicit sequence comparison, protein LLMs such as ESM2, ESM1b, and ProtBERT learn implicit patterns and biochemical constraints from millions of sequences, enabling them to make functional predictions even for proteins without clear homologs in existing databases [2] [9].

This comparative guide examines the performance of these two competing paradigms—the established homology-based approach and the emerging AI-driven framework—within the specific context of enzyme function prediction. By objectively evaluating experimental data on their relative strengths and limitations, we provide researchers, scientists, and drug development professionals with the evidence needed to select appropriate tools for their functional annotation workflows and to understand where the field is heading in the coming years.

Performance Comparison: pLMs vs. Traditional Methods

Quantitative Performance Benchmarks

Direct comparative studies reveal a nuanced performance landscape where traditional and AI-based methods each display distinct advantages depending on the specific prediction context.

Table 1: Performance Comparison of Protein LLMs vs. BLASTp for EC Number Prediction

| Method | Overall Accuracy | Performance on Low-Homology Sequences (<25% identity) | Key Strengths | Limitations |

|---|---|---|---|---|

| BLASTp | Marginally better overall [2] | Significant performance decrease [2] | Excellent for sequences with clear homologs [2] | Cannot annotate orphan sequences without homologs [10] |

| ESM2 (Best-performing pLM) | Slightly lower than BLASTp but complementary [2] | Superior performance on difficult-to-annotate enzymes [2] [10] | Predicts functions for sequences without homologs [2] | Not yet gold standard for mainstream annotation [2] |

| ESM1b | Lower than ESM2 [2] | Good performance on low-homology targets [2] | Useful feature extraction for function prediction [9] | Not state-of-the-art among pLMs [2] |

| ProtBERT | Lower than ESM2 [2] | Moderate performance on low-homology targets [2] | Can be fine-tuned for specific prediction tasks [10] | Underperforms compared to ESM models in benchmarks [2] |

Performance Across Different Prediction Contexts

Beyond enzyme commission number prediction, the relative performance of these methods varies across different functional annotation tasks:

Table 2: Method Performance Across Protein Analysis Tasks

| Task | Best Performing Methods | Key Findings |

|---|---|---|

| Gene Ontology (GO) Term Prediction | BLASTp, MMseqs2, DIAMOND (with optimal parameters) [8] | BLASTp and MMseqs2 consistently exceed other tools under default parameters [8] |

| Protein-Protein Interaction Prediction | SWING (Specialized Interaction Language Model) [11] | Specialized interaction language models outperform generic pLM embeddings for PPI prediction [11] |

| Structure Prediction | AlphaFold-Multimer, DeepSCFold [12] | Integration of sequence-based deep learning with co-evolutionary signals yields highest accuracy [12] |

Experimental Protocols and Methodologies

Standardized Evaluation Framework for EC Number Prediction

To enable fair comparison between traditional and AI-based methods, recent studies have established rigorous experimental protocols. The key methodology for benchmarking EC number prediction involves:

Data Preparation and Processing:

- Source Data: Protein sequences and EC number annotations are extracted from UniProtKB, encompassing both SwissProt (manually annotated) and TrEMBL (automatically annotated) databases [10].

- Sequence Clustering: To avoid homology bias, sequences are clustered using UniRef90, which groups sequences that share at least 90% identity. Only cluster representatives are used in training and testing sets [10].

- Problem Formulation: EC number prediction is treated as a multi-label classification problem, accounting for promiscuous and multi-functional enzymes with multiple EC numbers. The hierarchical nature of EC numbers is preserved, with classifiers challenged to predict the entire hierarchy of labels and their relationships [10].

Model Training and Architecture:

- pLM-Based Models: Protein LLMs (ESM2, ESM1b, ProtBERT) are used as feature extractors, generating embeddings from protein sequences. These embeddings are then fed into fully connected neural networks for EC number classification [2] [10].

- Baseline DL Models: Deep learning models like DeepEC and D-SPACE that rely on one-hot encodings of amino acid sequences are implemented for comparison [10].

- Traditional Methods: BLASTp searches are performed against reference databases with function transfer based on highest-scoring hits [2].

Evaluation Metrics:

- Standard classification metrics including precision, recall, F1-score, and area under the receiver operating characteristic curve [2].

- Stratified evaluation based on sequence similarity thresholds (e.g., <25% identity) to assess performance on low-homology targets [2].

- per-class performance analysis to identify which EC classes are better predicted by each method [2].

The "Protein-as-Second-Language" Framework

An emerging experimental approach reformulates protein function prediction as a zero-shot learning problem:

- Framework Design: Amino acid sequences are treated as sentences in a novel symbolic language. The framework adaptively constructs sequence-question-answer triples that reveal functional cues without task-specific training [13].

- Data Curation: A bilingual corpus of 79,926 protein-QA instances spanning attribute prediction, descriptive understanding, and extended reasoning is created to support this approach [13].

- Evaluation: The method is tested in a zero-shot setting across diverse LLMs, achieving improvements of up to 17.2% ROUGE-L (average +7%) and even surpassing fine-tuned protein-specific language models in some cases [13].

Workflow Visualization: From Sequence to Function

Traditional vs. pLM-Based Annotation Pathways

The fundamental differences between traditional homology-based approaches and the new pLM paradigm can be visualized through their distinct workflows:

pLM Architecture and Training Methodology

Protein language models learn the "grammar" of proteins through self-supervised training on massive sequence datasets:

Essential Research Reagents and Computational Tools

The Scientist's Toolkit for Protein Function Annotation

Table 3: Key Research Reagents and Computational Tools for Protein Function Prediction

| Tool/Resource | Type | Primary Function | Application Context |

|---|---|---|---|

| ESM2 [2] [10] | Protein Language Model | Feature extraction from protein sequences | State-of-the-art for EC number prediction; best-performing among pLMs |

| ProtBERT [2] [10] | Protein Language Model | Feature extraction and fine-tuning for specific tasks | EC number prediction; can be fine-tuned for specific prediction tasks |

| BLASTp [2] [8] | Sequence Alignment Tool | Homology-based function transfer | Gold standard for sequences with clear homologs; widely used in annotation pipelines |

| DIAMOND [8] | Sequence Alignment Tool | Fast homology search | BLASTp alternative optimized for speed with slightly lower sensitivity |

| MMseqs2 [8] | Sequence Alignment Tool | Fast, sensitive sequence search | Performance comparable to BLASTp with correct parameter settings |

| SWING [11] | Specialized Interaction Model | Protein-protein interaction prediction | Outperforms generic pLMs for interaction-specific tasks |

| UniProtKB [10] | Protein Database | Source of annotated sequences | Primary data source for training and benchmarking |

| UniRef90 [10] | Clustered Protein Database | Sequence similarity-based clustering | Reduces homology bias in training datasets |

The experimental evidence clearly demonstrates that protein language models and traditional homology-based methods represent complementary rather than strictly competitive approaches to protein function annotation. While BLASTp maintains a marginal overall advantage for routine annotation of proteins with clear evolutionary relatives [2], protein LLMs excel in the critical task of predicting functions for difficult-to-annotate enzymes, particularly when sequence identity falls below 25% [2] [10]. This performance profile suggests an integrated future for protein function prediction, where LLMs handle the challenging cases that evade traditional homology-based methods while BLASTp continues to provide reliable annotations for sequences with clear homologs.

The most effective annotation pipelines will likely leverage both approaches, combining the evolutionary signals captured by traditional methods with the learned biochemical constraints embedded in protein LLMs. As these models continue to evolve—with emerging frameworks treating "protein as a second language" for LLMs [13] and specialized interaction language models like SWING [11] addressing specific prediction tasks—the gap between sequence and function will continue to narrow, accelerating discovery in basic research and drug development alike.

The field of protein function prediction has undergone a fundamental transformation, moving from traditional similarity-based methods toward deep learning approaches that capture complex biological patterns. For decades, BLASTp has served as the gold standard for protein annotation, operating on the principle that proteins with similar sequences share similar functions [3]. While effective for detecting clear homologs, this approach struggles with remote homology and fails to leverage the full contextual information embedded in protein sequences. The advent of protein Language Models (pLMs), built on Transformer architectures and self-attention mechanisms, represents a paradigm shift. These models, pre-trained on millions of protein sequences, learn the underlying "language of life," capturing intricate biochemical and structural properties that enable more accurate and generalizable function prediction, even for proteins with low sequence similarity to known proteins [14] [3].

This guide provides an objective comparison of these competing methodologies, focusing on their core architectures, performance benchmarks, and practical applications in bioinformatics and drug development. We present experimental data from recent, comprehensive studies to help researchers select the appropriate tool for their protein annotation needs.

Core Architectural Differences: BLASTp vs. Transformer-based pLMs

The fundamental difference between these approaches lies in how they process and interpret protein sequence information.

BLASTp: A Sequence Alignment Workhorse

BLASTp (Basic Local Alignment Search Tool for proteins) employs a local alignment strategy to identify regions of similarity between a query sequence and a database of known proteins. Its methodology is based on heuristics to rapidly find sequence matches, after which it estimates the statistical significance of these matches (E-values) [1]. The underlying assumption is that function can be transferred from a well-annotated protein to a query protein based on significant sequence similarity. While recent database improvements like ClusteredNR reduce redundancy and improve search speed, the core algorithm remains based on pairwise sequence comparison [4].

Transformer-based pLMs: Learning the Language of Proteins

In contrast, pLMs like ESM-2 and ProtT5 are based on the Transformer architecture. At their core is the self-attention mechanism, which allows the model to weigh the importance of all amino acids in a sequence when representing any single residue. This enables the model to capture long-range dependencies and complex interactions that are invisible to local alignment [14] [15].

These models are first pre-trained on massive datasets (e.g., UniRef) using self-supervised objectives like Masked Language Modeling (MLM), where the model learns to predict randomly masked amino acids based on their context. The resulting model contains rich, contextual representations of protein sequences, which can then be fine-tuned for specific downstream tasks such as protein-protein interaction prediction, enzyme classification, or crystallization propensity prediction [16] [17].

Table: Core Architectural Comparison between BLASTp and Transformer-based pLMs

| Feature | BLASTp | Transformer-based pLMs |

|---|---|---|

| Underlying Principle | Local sequence alignment & homology transfer | Contextual sequence representation via self-supervised learning |

| Core Mechanism | Heuristic search for similar sequence segments | Self-attention mechanism capturing residue-residue dependencies |

| Training Data | Reference protein databases (e.g., nr, ClusteredNR) | Large, unlabeled sequence corpora (e.g., UniRef) |

| Primary Output | Sequence matches with statistical significance (E-values) | Contextual embeddings for each residue and/or the entire protein |

| Key Strength | Excellent for finding clear homologs; intuitive interpretation | Superior for detecting remote homology & capturing functional signatures |

Performance Benchmarking: Experimental Data and Comparisons

Enzyme Commission (EC) Number Prediction

A comprehensive 2025 study directly compared the performance of pLMs and BLASTp for predicting enzyme functions, defined by their EC numbers [1]. The research evaluated several pLMs, including ESM2, ESM1b, and ProtBERT, against BLASTp on a large test set. The results revealed that while BLASTp provided marginally better overall results, the pLM-based models offered complementary performance, with each method excelling on different subsets of EC numbers [1].

Crucially, the study found that pLMs significantly outperformed BLASTp for enzymes where the identity between the query sequence and the reference database fell below 25%. This highlights the particular value of pLMs for annotating proteins with few or distant homologs. Among the pLMs, ESM2 stood out as the most effective, providing more accurate predictions for difficult annotation tasks [1].

Table: Performance Comparison in EC Number Prediction [1]

| Method | Overall Performance | Performance on Low-Identity Sequences (<25% Identity) | Key Strengths |

|---|---|---|---|

| BLASTp | Marginal overall advantage | Lower performance | Best for proteins with high-identity homologs |

| ESM2 (pLM) | Competitive, slightly lower overall | Significantly better performance | Superior for remote homology, difficult annotations |

| ESM1b (pLM) | Lower than ESM2 and BLASTp | Moderate | - |

| ProtBERT (pLM) | Lower than ESM2 and BLASTp | Moderate | - |

Protein-Protein Interaction (PPI) Prediction

The application of pLMs has expanded beyond single-protein annotation to predicting interactions between proteins. A 2025 study introduced PLM-interact, a model based on a fine-tuned ESM-2 architecture, specifically designed for PPI prediction [16]. The model was trained on human PPI data and tested on data from five other species (mouse, fly, worm, yeast, and E. coli) in a rigorous cross-species benchmark.

PLM-interact achieved state-of-the-art performance, outperforming six other PPI prediction methods (TUnA, TT3D, Topsy-Turvy, D-SCRIPT, PIPR, and DeepPPI) in most tested scenarios [16]. The performance improvement was particularly notable for the more challenging yeast and E. coli datasets, where PLM-interact achieved a 10% and 7% improvement in AUPR (Area Under the Precision-Recall Curve), respectively, over the next best method (TUnA) [16]. This demonstrates the power of transformer models to generalize learned interaction patterns across evolutionary distances.

Protein Crystallization Propensity Prediction

A 2025 benchmarking study evaluated various pLMs for predicting a protein's propensity to form crystals, a critical step in structural biology [17]. The research compared classifiers built on embedding representations from models including ESM2, Ankh, ProtT5-XL, ProstT5, xTrimoPGLM, and SaProt.

The study found that LightGBM classifiers utilizing embeddings from ESM2 models (with 30 and 36 transformer layers) outperformed all other sequence-based methods, including DeepCrystal, ATTCrys, and CLPred [17]. These ESM2-based predictors achieved performance gains of 3-5% in key metrics like AUPR, AUC, and F1-score on independent test sets, demonstrating the practical utility of pLM embeddings for challenging biophysical property prediction [17].

Experimental Protocols and Methodologies

Standard Protocol for pLM-based Function Prediction

A typical experimental pipeline for using pLMs in protein function prediction involves several key stages, as detailed in comparative studies [1] [17]:

- Sequence Input and Preprocessing: Protein amino acid sequences are obtained in FASTA format. Sequences are often filtered by length and quality.

- Embedding Generation: Sequence(s) are passed through a pre-trained pLM (e.g., ESM2) to generate numerical representations (embeddings). These can be per-residue embeddings or a single, pooled representation for the entire protein.

- Downstream Model Training: The generated embeddings are used as input features for a machine learning classifier (e.g., a fully connected neural network, LightGBM, or XGBoost) trained to predict specific functional labels (e.g., EC numbers, GO terms).

- Performance Evaluation: The model is evaluated on held-out test sets using standard metrics such as AUROC, AUPR, and F1-score. Cross-validation is often employed to ensure robustness.

Protocol for Cross-Species PPI Prediction with PLM-interact

The PLM-interact model demonstrated a specialized architecture and training regimen for interaction prediction [16]:

- Architecture Modification: Starts with a pre-trained ESM-2 model and extends it to jointly encode protein pairs. This is analogous to the "next-sentence prediction" task in natural language processing.

- Balanced Training: The model is fine-tuned using a combined loss function that balances the Masked Language Modeling (MLM) objective with a Next Sentence Prediction (classification) objective. A specific 1:10 ratio between classification and mask loss was found to be optimal.

- Training Data: The model is trained on large datasets of known interacting and non-interacting protein pairs, such as 421,792 human protein pairs.

- Cross-Species Validation: The model's generalizability is tested by evaluating its performance on protein interaction data from species not seen during training (e.g., training on human, testing on mouse, fly, worm, yeast, E. coli).

The following tools and databases are critical for researchers working in the field of protein annotation and function prediction.

Table: Essential Research Tools for Protein Annotation

| Tool Name | Type | Primary Function | Relevance |

|---|---|---|---|

| ESM-2 [16] [15] | Protein Language Model | Generates contextual embeddings from protein sequences | State-of-the-art pLM for various downstream tasks |

| TRILL [17] | Computational Platform | Democratizes access to multiple pLMs for property prediction | Allows easy benchmarking of different pLMs without deep coding expertise |

| ClusteredNR [4] | Protein Database | Non-redundant clustered protein database for BLAST | Reduces redundancy and speeds up BLAST searches |

| BASys2 [6] | Annotation Pipeline | Rapid, comprehensive bacterial genome annotation | Integrates >30 tools for functional and structural annotation |

| PLM-interact [16] | Specialized pLM | Predicts protein-protein interactions from sequence | Demonstrates extension of pLMs to complex relational tasks |

| CARD [18] | Specialized Database | Curated database of antimicrobial resistance genes | Essential for AMR annotation; used in minimal model benchmarking |

The experimental evidence clearly demonstrates that transformer-based pLMs and traditional BLASTp offer complementary strengths. BLASTp remains a robust, efficient tool for annotating proteins with clear, high-identity homologs. Its interpretability and speed make it a valuable first pass in many annotation pipelines.

However, pLMs have established a dominant advantage in scenarios involving remote homology, complex functional patterns, and predictions where pure sequence similarity is low. Their ability to learn the intricate "grammar" of protein sequences from unlabeled data allows them to uncover functional insights that evade alignment-based methods. As the field progresses, the most powerful annotation pipelines will likely strategically combine both approaches, leveraging the respective strengths of each method to achieve maximum accuracy and coverage [1]. Future developments will focus on increasingly specialized pLMs, improved interpretability of attention mechanisms [15], and integration of structural information for even more powerful protein function prediction.

The accurate prediction of enzyme function, classified by Enzyme Commission (EC) numbers, is a critical task in bioinformatics with profound implications for understanding cellular metabolism, drug discovery, and genome annotation. For decades, similarity-based search tools like BLASTp have served as the gold standard for this function. However, the recent emergence of protein Large Language Models (pLMs)—including ESM2, ESM1b, and ProtBERT—offers a powerful new paradigm for extracting functional insights directly from sequence data. This guide provides a objective, data-driven comparison of these three prominent pLMs, benchmarking their performance against each other and traditional BLASTp-based annotation to inform researchers and drug development professionals about their respective capabilities and optimal use cases.

The following tables summarize the key performance characteristics and experimental results for ESM2, ESM1b, and ProtBERT in the context of EC number prediction.

Table 1: Model Architectures and Key Performance Insights

| Model | Key Architecture/Pre-training | Overall Performance vs. BLASTp | Key Strength | Notable Limitation |

|---|---|---|---|---|

| ESM2 | Transformer; pre-trained on UniProtKB [1] | Best among pLMs; slightly behind BLASTp overall [1] | Most accurate for difficult annotations & enzymes without homologs [1] | - |

| ESM1b | Transformer; pre-trained on UniProtKB [1] [3] | Surpassed by ESM2 [1] | Widely applied for improving prediction accuracy [3] | Outperformed by newer ESM2 variants [1] |

| ProtBERT | Transformer; pre-trained on UniProtKB & BFD [1] | Surpassed by ESM2 [1] | Often fine-tuned for EC prediction [1] | - |

| BLASTp | Local sequence alignment & homology search [1] | Marginally better overall results than pLMs [1] | Excels for many EC numbers with clear homologs [1] | Cannot annotate proteins without homologous sequences [1] |

Table 2: Comparative Experimental Data on EC Number Prediction

| Metric / Characteristic | ESM2 | ESM1b | ProtBERT | BLASTp |

|---|---|---|---|---|

| Performance with Low Identity (<25%) | Good predictions [1] | Information missing | Information missing | Performance decreases [1] |

| Complementarity with BLASTp | Yes - predicts EC numbers that BLASTp misses [1] | Implied by category | Implied by category | Yes - predicts EC numbers that pLMs miss [1] |

| Input Representation | Embeddings (Feature Extraction) [1] | Embeddings (Feature Extraction) [1] | Embeddings (often Fine-tuning) [1] | Amino Acid Sequence (Direct Search) |

| Optimal Combined Use | More effective when used together with BLASTp [1] | More effective when used together with BLASTp [1] | More effective when used together with BLASTp [1] | More effective when used together with pLMs [1] |

Experimental Protocols and Methodology

A robust experimental framework is essential for a fair comparison of protein function prediction tools. The following workflow and detailed methodology outline the standard approach for benchmarking EC number prediction performance.

Experimental Workflow for EC Number Prediction

Detailed Methodological Components

Data Sourcing and Processing

The standard protocol utilizes protein data from UniProtKB (SwissProt and TrEMBL) downloaded in XML format. To ensure data quality and reduce redundancy, only UniRef90 cluster representatives are retained. These representatives are selected based on entry quality, annotation score, organism relevance, and sequence length, creating a non-redundant dataset ideal for model training and evaluation [1].

Problem Formulation

EC number prediction is formally defined as a global hierarchical multi-label classification problem. Each protein sequence is associated with a binary vector indicating all relevant EC numbers across all hierarchical levels (from the first digit to the fourth). This approach accounts for promiscuous and multi-functional enzymes, requiring a single classifier to predict the entire hierarchy of labels and their complex relationships [1].

Feature Extraction and Model Training

- pLM Embeddings: For ESM2, ESM1b, and ProtBERT, the standard approach is feature extraction. The embeddings (numeric representations) from a specific layer of the pre-trained model are used as input features for a downstream classifier. A commonly used and effective compression strategy is mean pooling, which averages embeddings across all amino acid positions in a sequence [5]. These embeddings are then processed by a fully connected neural network to predict the EC number vector [1].

- Baseline Models: For comparison, deep learning models like DeepEC and D-SPACE, which use one-hot encodings of amino acid sequences as input, are implemented. This setup allows for a direct comparison between traditional input representations and those learned by pLMs [1].

- BLASTp Protocol: The BLASTp baseline involves performing a standard similarity search against a curated database of enzymes with known EC numbers. The function is transferred from the best hit(s) based on sequence similarity thresholds [1].

The Scientist's Toolkit: Key Research Reagents and Solutions

Table 3: Essential Resources for Protein Function Prediction Research

| Resource / Tool | Type | Primary Function in Research |

|---|---|---|

| UniProtKB Database | Data Repository | Provides the foundational, curated protein sequences and their functional annotations (including EC numbers) for model training and testing [1]. |

| ESM2 / ESM1b / ProtBERT | Protein Language Model | Serves as the core feature extractor, converting raw amino acid sequences into semantically rich, numerical embeddings (vector representations) for downstream prediction tasks [1]. |

| BLASTp | Bioinformatics Tool | Functions as the standard baseline for performance comparison based on sequence homology and homology-based function transfer [1]. |

| Fully Connected Neural Network | Deep Learning Model | Acts as the final classifier that takes pLM embeddings as input and performs the multi-label classification to assign EC numbers [1]. |

| ClusteredNR Database | Protein Sequence Database | An NCBI database of clustered protein sequences that reduces redundancy. It is becoming the new default for BLASTp, enabling faster searches with broader taxonomic coverage [4]. |

Critical Analysis and Research Implications

The experimental data leads to several critical conclusions for researchers. First, while BLASTp maintains a marginal overall advantage, the performance of pLMs and BLASTp is complementary [1]. Each method excels at predicting different subsets of EC numbers, suggesting that a combined approach is more powerful than either method in isolation.

Second, among the pLMs, ESM2 consistently emerges as the top performer, providing the most accurate predictions for challenging annotation tasks, especially for enzymes with no close homologs or when sequence identity to known proteins falls below 25% [1]. This makes ESM2 particularly valuable for exploring the "microbial dark matter" in metagenomic studies.

Finally, a crucial consideration for practical application is the balance between model size and performance. While larger pLMs exist, recent evidence suggests that medium-sized models (e.g., ESM2 650M) often achieve performance comparable to their much larger counterparts (e.g., ESM2 15B) in many transfer learning scenarios, offering a superior balance of computational efficiency and predictive power [5].

The comparative analysis of ESM2, ESM1b, and ProtBERT reveals a nuanced landscape in protein function prediction. ESM2 currently holds a slight edge among pLMs for EC number prediction, particularly for difficult cases with low sequence homology. However, the longstanding BLASTp tool remains a robust and marginally superior performer overall for routine annotations. The most effective strategy for researchers is not to choose one over the other, but to leverage their complementary strengths. Integrating pLM-based predictions with traditional homology-based methods like BLASTp provides the most comprehensive and accurate path forward for annotating the functional universe of enzymes.

In the era of high-throughput sequencing, functional genomics faces a critical bottleneck: the immense gap between the rapid accumulation of protein sequences and their functional characterization. As of February 2024, the UniProt database contains over 240 million protein sequences, yet less than 0.3% have experimentally validated functional annotations [9]. This staggering discrepancy represents what researchers term the "dark proteome" – a vast landscape of uncharacterized proteins that may hold keys to understanding biological processes, disease mechanisms, and therapeutic targets [19]. For researchers and drug development professionals, this annotation gap presents both a challenge and an imperative: without accurate functional annotations, genomic data remains largely uninterpretable, potentially obscuring valuable insights for drug discovery and basic biological research.

Traditional annotation methods, primarily relying on sequence similarity through tools like BLASTp, have fundamental limitations when dealing with proteins that lack clear homologs in databases [2] [19]. Approximately 30% of proteins in model organisms like Caenorhabditis elegans remain unannotated, with this figure rising to 50% for non-model organisms [19]. The rapid expansion of genomic data from large-scale initiatives such as the Earth BioGenome Project further exacerbates this problem, generating unprecedented volumes of genomic information that demand reliable annotation frameworks extending beyond conventional approaches [19].

This article examines the emerging landscape of annotation tools, with particular focus on the performance comparison between traditional homology-based methods and novel approaches leveraging protein language models (pLMs). We provide experimental data, methodological details, and practical resources to guide researchers in selecting appropriate tools for their functional genomics workflows.

Performance Comparison: pLMs Versus Traditional Methods

Quantitative Performance Metrics

Table 1: Comparative performance of annotation methods for Enzyme Commission (EC) number prediction

| Method | Overall Accuracy | Performance on Sequences <25% Identity | Key Strengths | Limitations |

|---|---|---|---|---|

| BLASTp | Marginally higher overall [2] | Significant performance decrease [2] | Excellent for sequences with clear homologs [2] | Limited for divergent sequences, orphans [2] [19] |

| ESM2 | High (best among pLMs) [2] | Maintains better accuracy on difficult annotations [2] | Predicts function without homologs; handles remote homology [2] | Still requires improvement to surpass BLASTp in routine annotation [2] |

| ProtT5 | High [20] [19] | Good performance on uncharacterized sequences [20] | Balanced performance; used in FANTASIA pipeline [19] | Computational resource requirements [19] |

| One-hot encoding + DL | Lower than pLMs [2] | Poor performance on sequences without homologs [2] | Simple implementation | Limited generalizability; inferior to modern pLMs [2] |

Table 2: Large-scale proteome annotation performance across animal taxa

| Method | Annotation Coverage | Informativeness of Terms | Novel Function Discovery | Computational Efficiency |

|---|---|---|---|---|

| Homology-based (Traditional) | ~50-60% of genes in non-model organisms [19] | Broad but shallow annotations | Limited to known homologs | Fast but incomplete [19] |

| FANTASIA (ProtT5) | Up to ~50% additional proteins annotated [19] | More precise and informative GO terms [19] | Reveals phylum-specific functions [19] | Moderate; scalable to full proteomes [19] |

| BASys2 | Comprehensive (62 annotation fields) [6] | Integrates multiple data types | Focused on metabolite annotation | Extremely fast (0.5 min average) [6] |

Key Insights from Comparative Studies

The comparative assessment reveals that BLASTp still provides marginally better results overall for routine annotation tasks, particularly when clear homologs exist in databases [2]. However, protein language models demonstrate complementary strengths, excelling in predicting certain EC numbers that challenge homology-based methods and maintaining performance on sequences with identity below 25% [2]. This suggests a synergistic relationship rather than outright replacement.

For large-scale proteome annotation, pLM-based approaches demonstrate remarkable advantages. The FANTASIA pipeline, leveraging ProtT5 embeddings, annotates up to 50% of proteins that remain uncharacterized by traditional homology-based methods [19]. This expanded coverage proves particularly valuable for non-model organisms, where homology-based tools fail to annotate nearly half of the genes, especially in less-studied phyla [19].

The ESM2 model stands out as the best performer among pLMs for EC number prediction, providing more accurate predictions on difficult annotation tasks and for enzymes without homologs [2]. Its architecture, trained on millions of diverse protein sequences, captures evolutionary patterns and structural constraints that enable functional inference beyond sequence similarity.

Experimental Protocols and Methodologies

EC Number Prediction Benchmarking

Experimental Objective: To compare the performance of protein language models (ESM2, ESM1b, ProtBERT) with BLASTp and one-hot encoding-based deep learning models for predicting Enzyme Commission numbers [2].

Data Preparation:

- Curated datasets of enzyme sequences with known EC numbers from public databases

- Partitioned sequences into training, validation, and test sets with careful attention to avoiding data leakage

- Generated sequence identity clusters to assess performance across different evolutionary distances

Model Configurations:

- ESM2: Used the 650M parameter model pre-trained on UniRef50, followed by a fully connected neural network classifier

- ESM1b: Implemented the 650M parameter model with similar architecture to ESM2

- ProtBERT: Utilized the BERT-base architecture pre-trained on BFD and UniRef50 datasets

- BLASTp: Conducted search against reference database with E-value threshold of 0.001

- One-hot encoding: Converted sequences to one-hot vectors followed by CNN architecture

Evaluation Metrics:

- Accuracy per EC number level and overall

- Precision-recall curves for different sequence identity thresholds

- Statistical significance testing between method performances [2]

Large-scale Proteome Annotation with FANTASIA

Experimental Objective: To assess the capability of protein language models for annotating complete proteomes across diverse animal taxa [19].

Pipeline Implementation:

- Input: Proteome files in FASTA format from 970 animal species (~23 million genes)

- Preprocessing: Filtered by length and sequence similarity to remove identical sequences

- Embedding Generation: Computed protein embeddings using ProtT5 model

- Similarity Search: Employed embedding similarity against GOA database

- Function Transfer: Predicted GO terms using closest hits or distance-based filtering

- Output: Functional predictions across three GO categories (biological process, molecular function, cellular component)

Validation Approach:

- Compared annotations with homology-based methods for model organisms

- Assessed coverage (proportion of annotated proteins) and informativeness (detail of terms)

- Conducted phylogenetic enrichment analysis to identify lineage-specific functions [19]

FANTASIA Pipeline: From proteome input to functional annotation

Visualization of Key Concepts and Relationships

The Annotation Gap and Tool Evolution

The Genomic Annotation Challenge: From data generation to interpretation

Complementary Strengths of BLASTp and pLMs

Annotation Strategies: Complementary approaches for comprehensive coverage

Table 3: Key resources for functional genomics annotation

| Resource | Type | Primary Function | Application Context |

|---|---|---|---|

| FANTASIA | Annotation pipeline | Large-scale functional annotation using pLM embeddings [19] | Proteome-wide annotation, non-model organisms [19] |

| BASys2 | Bacterial annotation system | Rapid, comprehensive genome annotation with metabolic focus [6] | Bacterial genomics, metabolite annotation [6] |

| ESM2 | Protein language model | Protein sequence representation for downstream tasks [2] | EC number prediction, remote homology detection [2] |

| ProtT5 | Protein language model | Protein sequence embedding generation [20] [19] | Function prediction, embedding similarity searches [19] |

| SegmentNT | DNA foundation model | Nucleotide-resolution genome annotation [21] | Gene element prediction, regulatory element detection [21] |

| PLSDB | Plasmid database | Curated plasmid sequence resource [22] | Plasmid annotation, horizontal gene transfer studies [22] |

The field of functional genomics stands at a transitional point where traditional homology-based methods and emerging AI-driven approaches are converging toward a hybrid future. Current evidence suggests that protein language models still require refinement to become the gold standard over BLASTp in mainstream annotation routines [2]. However, their superior performance on difficult-to-annotate proteins and capacity to illuminate the "dark proteome" make them indispensable for comprehensive genomic interpretation [19].

For research and drug development professionals, practical implementation should consider a combined approach: using BLASTp for sequences with clear homologs while deploying pLM-based tools for orphan genes, rapidly evolving sequences, and non-model organisms. As these tools evolve, they promise to gradually close the annotation gap, transforming our ability to extract biological meaning from genomic sequence and accelerating discoveries in basic biology and therapeutic development.

The integration of pLMs into annotation pipelines like FANTASIA and BASys2 demonstrates the practical viability of these approaches at scale. With the continued expansion of genomic sequencing initiatives, such advanced annotation tools will become increasingly critical for translating genetic information into biological understanding and clinical applications.

Putting Tools to Work: Methodologies and Real-World Applications in Drug Discovery and Annotation

For decades, BLASTp (Basic Local Alignment Search Tool for protein sequences) has served as the cornerstone of bioinformatics workflows, enabling researchers to compare protein sequences against databases to infer functional and evolutionary relationships [23]. Its fundamental principle rests on identifying regions of local similarity between sequences, operating on the paradigm that sequence similarity often implies functional homology [1]. However, the emerging landscape of artificial intelligence has introduced a powerful new paradigm: protein language models (LLMs) like ESM2, ESM1b, and ProtBERT, which learn complex patterns from millions of protein sequences to predict function [1] [3]. This guide presents a comprehensive overview of the BLASTp workflow while contextualizing its performance and applications relative to these modern computational approaches.

Recent comparative studies reveal a nuanced relationship between traditional alignment tools and AI-based methods. Although BLASTp maintains marginal overall superiority in routine enzyme commission (EC) number annotation, protein LLMs demonstrate complementary strengths, particularly for difficult-to-annotate enzymes and sequences with low homology to known proteins [1] [2]. This evolving dynamic underscores the importance of understanding BLASTp's methodology, optimal implementation, and position within a modern bioinformatics toolkit that increasingly integrates multiple computational strategies.

The BLASTp Workflow: A Step-by-Step Protocol

The standard BLASTp workflow transforms a query protein sequence into functional hypotheses through a series of structured computational steps. The following diagram maps this logical progression from input to biological interpretation:

Input and Database Selection

The process initiates with the query protein sequence in FASTA format. Critical to success is selecting an appropriate protein database for comparison:

- nr (non-redundant): A comprehensive protein database incorporating multiple sources, though soon to be replaced as the BLASTp default [4].

- ClusteredNR: The forthcoming default database (effective August 2025), offering decreased redundancy and broader taxonomic coverage by clustering similar sequences and selecting representative members [4].

- SwissProt: A manually annotated, high-quality database with reduced false positives due to expert curation [1].

Execution and Algorithmic Core

BLASTp employs a heuristic search algorithm that balances sensitivity with computational speed. The process identifies High-scoring Segment Pairs (HSPs) through three core stages:

- Seed Matching: The algorithm identifies short, exact matches ("words") between the query and database sequences.

- Extension: Promising seeds are extended in both directions to form longer alignments, calculating alignment scores.

- Evaluation: Extended alignments are evaluated for statistical significance [23].

Recent BLAST+ 2.17.0 releases have enhanced performance, including faster blastp searches with the -task blastp-fast option and support for compressed FASTA files [24].

Results Interpretation and Statistical Analysis

Proper interpretation requires understanding key metrics that evaluate alignment quality and biological relevance:

- E-value (Expectation Value): The number of alignments expected by chance. Lower E-values (closer to zero) indicate greater statistical significance.

- Bit Score: A normalized score representing alignment quality, independent of database size. Higher scores indicate better matches.

- Percent Identity: The percentage of identical residues in the alignment, providing a straightforward measure of sequence conservation.

- Query Coverage: The percentage of the query sequence included in the alignment, indicating the extent of similarity.

BLASTp Versus Protein Language Models: Experimental Comparisons

Methodology for Comparative Performance Assessment

Recent studies have established rigorous experimental frameworks to evaluate BLASTp against protein LLMs for function prediction, specifically for annotating Enzyme Commission (EC) numbers [1]. The standard protocol involves:

Dataset Preparation:

- Source protein sequences and corresponding EC numbers from UniProtKB (SwissProt and TrEMBL) [1].

- Apply clustering (e.g., UniRef90) to reduce sequence redundancy, ensuring non-homologous training and test sets [1].

- Formulate EC number prediction as a multi-label classification problem accommodating promiscuous and multi-functional enzymes [1].

Model Implementation and Comparison:

- BLASTp: Perform sequence similarity searches against reference databases, transferring EC numbers from top hits based on highest similarity [1].

- Protein LLMs: Extract sequence representations (embeddings) from pre-trained models (ESM2, ESM1b, ProtBERT) and train fully connected neural networks for EC number prediction [1].

- Evaluation Metric: Use precision-recall analysis and accuracy measurements across different EC number hierarchy levels and sequence identity thresholds [1].

Comparative Performance Data

The table below summarizes quantitative findings from a 2025 comparative assessment:

Table 1: Performance Comparison of BLASTp versus Protein Language Models for EC Number Prediction

| Method | Overall Accuracy | Strength Scenarios | Weakness Scenarios | Computational Demand |

|---|---|---|---|---|

| BLASTp | Marginally Higher | High-identity sequences (>25-30% identity) [1] | Sequences with no homologs in databases [1] | Lower (heuristic algorithm) |

| Protein LLMs (ESM2) | Slightly Lower but Complementary | Low-identity sequences (<25% identity), difficult-to-annotate enzymes [1] [2] | Routine annotation of high-similarity sequences [1] | Higher (neural network inference) |

| Hybrid Approach | Highest Reported | Combines strengths of both methods [1] [2] | Implementation complexity | Highest (multiple systems) |

The experimental data reveals that ESM2 emerged as the top-performing protein LLM, providing more accurate predictions for challenging annotation tasks, particularly when sequence identity to known proteins falls below 25% [1] [2]. This suggests that protein LLMs learn functional patterns that extend beyond simple sequence homology.

Essential Research Reagents and Computational Tools

Successful implementation of sequence comparison and analysis requires specific computational tools and resources. The following table catalogs key components for BLASTp and protein LLM workflows:

Table 2: Research Reagent Solutions for Protein Sequence Analysis

| Tool/Resource | Type | Primary Function | Access |

|---|---|---|---|

| BLAST+ Suite | Software Package | Command-line execution of BLASTp searches and database formatting [24] | Free from NCBI |

| ClusteredNR Database | Protein Database | Non-redundant clustered database for reduced redundancy in results [4] | Free from NCBI |

| UniProtKB | Protein Database | Source of expertly curated (SwissProt) and automated (TrEMBL) sequences for training and validation [1] | Free from EMBL-EBI |

| ESM2 Model | Protein Language Model | State-of-the-art protein LLM for generating sequence embeddings and function prediction [1] | Free from Meta AI |

| PyMOL | Visualization Software | Molecular visualization system for structural analysis of query proteins and hits [25] | Commercial (academic pricing) |

Integrated Workflow for Modern Protein Annotation

The complementary strengths of BLASTp and protein language models suggest an integrated approach for comprehensive protein function prediction. The following workflow leverages both methodologies for robust annotation:

This integrated pathway begins with conventional BLASTp analysis, which remains highly effective for sequences with clear homology in reference databases. For sequences lacking significant database matches, or when BLASTp results have marginal statistical support, the workflow transitions to protein LLM analysis, leveraging their strength in identifying distant patterns and functional relationships. Cases where the two methods yield conflicting predictions represent particularly interesting targets for experimental validation, as they may indicate novel functions or protein families [1] [2].

BLASTp maintains its foundational role in bioinformatics workflows due to its proven accuracy, computational efficiency, and interpretable results for sequences with database homologs. However, the rising capabilities of protein language models demonstrate that AI-driven approaches now offer complementary functionality, particularly for annotating distant homologs and proteins with novel folds [1] [3].

Future directions in protein sequence analysis point toward hybrid frameworks that strategically deploy alignment-based and AI-based methods according to their strengths. The forthcoming transition of BLASTp's default database to ClusteredNR [4] represents an evolution of the traditional paradigm, reducing redundancy while expanding taxonomic coverage. Meanwhile, protein language models continue to advance in their capacity to capture the complex biophysical and evolutionary patterns underlying protein function [3].

For researchers in genomics, drug discovery, and synthetic biology, this evolving landscape offers an expanded toolkit for protein function prediction. Mastering the BLASTp workflow—while understanding its relationship to emerging computational methods—remains essential for rigorous bioinformatics analysis in the coming years.

The accurate annotation of protein function is a cornerstone of modern biology, directly impacting drug discovery, metabolic engineering, and our understanding of disease mechanisms. For decades, homology-based search tools like BLASTp have served as the gold standard for transferring functional knowledge from characterized proteins to novel sequences [1]. This paradigm, however, rests on the availability of evolutionarily related proteins with significant sequence similarity, creating a substantial annotation gap for remote homologs and orphan sequences.

The convergence of protein language models (pLMs) and deep learning (DL) classifiers represents a transformative shift in this landscape. pLMs, pre-trained on millions of protein sequences, learn fundamental principles of protein grammar and generate rich, numerical embeddings that encapsulate structural and functional information [3] [26]. When these embeddings are used as features for specialized DL classifiers, they enable a powerful, alignment-free approach to function prediction that can uncover relationships invisible to traditional sequence comparison methods [27] [28].

Framed within the broader thesis of pLM versus BLASTp annotation research, this guide provides a performance comparison of these integrated pLM-DL pipelines against established benchmarks. We synthesize recent experimental data, detail core methodologies, and provide resources to help researchers and drug development professionals select the optimal tool for their annotation challenges.

Performance Comparison: pLM-DL Models vs. Traditional Methods

Direct comparisons reveal the distinct performance profiles of traditional sequence search, pLM-based, and structure-based methods. The following tables summarize key quantitative findings from recent large-scale benchmarks.

Table 1: Overall Performance Comparison on Enzyme Commission (EC) Number Prediction

| Method | Type | Key Metric | Performance | Reference |

|---|---|---|---|---|

| BLASTp | Sequence Alignment | Overall Accuracy | Marginally Best | [1] |

| ESM2 (with DNN) | pLM + DL | Overall Accuracy | Very High, Complementary to BLASTp | [1] |

| ProtBERT (with DNN) | pLM + DL | Overall Accuracy | Very High | [1] |

| ESM1b (with DNN) | pLM + DL | Overall Accuracy | Very High | [1] |

| One-Hot Encoding (with DL) | Traditional DL | Overall Accuracy | Lower than pLM-based Models | [1] |

Table 2: Remote Homology Search Sensitivity (AUROC) on SCOPe40-test Dataset

| Method | Family-Level (AUROC) | Superfamily-Level (AUROC) | Fold-Level (AUROC) | Reference |

|---|---|---|---|---|

| PLMSearch | 0.928 | 0.826 | 0.438 | [27] |

| MMseqs2 | 0.318 | 0.050 | 0.002 | [27] |

| BLASTp | N/A | N/A | N/A | [27] |

| Foldseek (Structure) | Comparable to PLMSearch | Comparable to PLMSearch | Comparable to PLMSearch | [27] |

| Performance Gain (PLMSearch vs. MMseqs2) | 3x | 16x | 219x | [27] |

Table 3: Performance of Specialized pLM-DL Models on Specific Prediction Tasks

| Model | Task | Performance | Reference |

|---|---|---|---|

| NCSP-PLM | Non-Classical Secreted Protein Prediction | Accuracy: 94.12%, Sensitivity: 91.18%, Specificity: 97.06% | [28] |

| Fine-tuned pLMs (ESM2, ProtT5) | Viral Protein Function Prediction | Significant improvement in embedding quality and downstream task performance vs. pre-trained pLMs | [26] |

Experimental Protocols and Methodologies

To ensure reproducibility and provide clarity on how the data in the previous section was generated, this section outlines the standard experimental protocols used in benchmarking studies.

Standard Benchmarking Workflow for EC Number Prediction

A typical experimental protocol for comparing EC number prediction methods, as used in studies like the assessment of protein LLMs, involves several key stages [1]:

Data Curation and Preprocessing:

- Source: Protein sequences and their corresponding EC numbers are extracted from the UniProt Knowledgebase (UniProtKB). Manually reviewed Swiss-Prot entries are often preferred for creating high-confidence benchmark sets.

- De-redundancy: To avoid bias, sequences are clustered at a specific identity threshold (e.g., using UniRef90 clusters), retaining only a single representative sequence per cluster.

- Data Splitting: The dataset is split into training, validation, and independent test sets, ensuring that no proteins in the test set have high sequence similarity to those in the training set. This rigorously tests the model's ability to generalize.

Feature Extraction for pLM-DL Models:

- pLM Embedding Generation: For each protein sequence in the dataset, a pre-trained pLM (e.g., ESM2, ESM1b, ProtBERT) is used to generate a sequence embedding. This is typically done by taking the hidden state representations from the final or penultimate layer of the model, often by averaging across all amino acid positions to create a fixed-length vector per protein.

- Traditional Feature Generation (Baseline): For baseline models, features are generated using one-hot encoding of amino acid sequences or other classical feature extraction methods.

Model Training and Evaluation:

- Classifier Training: The extracted features (pLM embeddings or one-hot encodings) are used to train a deep learning classifier, such as a fully connected Deep Neural Network (DNN). The task is typically framed as a multi-label classification problem.

- Baseline Comparison: The pLM-DL models are compared against the baseline models and against the direct predictions from BLASTp. For BLASTp, a common approach is to transfer the EC number of the top-hit in the database with a defined E-value or identity cutoff.

- Performance Metrics: Models are evaluated on the held-out test set using metrics like AUROC (Area Under the Receiver Operating Characteristic curve), accuracy, precision, recall, and F1-score.

Workflow for Remote Homology Search (PLMSearch)

The PLMSearch method provides a protocol optimized for detecting remote evolutionary relationships [27]:

- Input and Pre-filtering: Query and target protein sequences are provided as input. An optional pre-filtering step (PfamClan) can quickly remove protein pairs that do not share any known protein domain clan.

- Embedding and Similarity Prediction: Protein sequence embeddings are generated using a large pLM (e.g., ESM2). These embeddings are then fed into a specialized Structural Similarity (SS) Predictor, which is a neural network trained to predict the TM-score (a measure of structural similarity) between two proteins based solely on their embeddings.

- Ranking and Output: The query-target protein pairs are ranked based on their predicted similarity score from the SS-predictor. This ranked list forms the primary output of PLMSearch.

- Alignment (Optional): For top-ranked pairs, a final alignment step (PLMAlign) can be invoked to generate a precise sequence alignment and alignment score using the pLM embeddings.

The following diagram illustrates the logical workflow and decision points in a typical pLM-DL benchmarking experiment, integrating the protocols above:

Successful implementation of pLM-DL pipelines relies on a suite of computational tools and datasets. The table below details key resources referenced in the studies covered in this guide.

Table 4: Key Research Reagents and Computational Tools

| Resource Name | Type | Primary Function in Research | Reference |

|---|---|---|---|

| UniProt Knowledgebase (UniProtKB) | Database | Provides the canonical source of protein sequences with high-quality functional annotations (e.g., EC numbers) for model training and testing. | [1] [3] |

| ESM2 (Evolutionary Scale Modeling) | Protein Language Model | A transformer-based pLM used to generate deep contextual embeddings from protein sequences for downstream prediction tasks. Available in multiple sizes (e.g., ESM2-3B, ESM2-15B). | [1] [27] [26] |

| ProtBERT | Protein Language Model | Another powerful transformer-based pLM, pre-trained on UniRef and BFD, used for generating protein sequence embeddings. | [1] [26] |

| ProtT5 | Protein Language Model | A pLM based on the T5 (Text-to-Text Transfer Transformer) architecture, known for producing high-quality sequence representations. | [27] [26] |

| BLASTp | Software Tool | The standard benchmark for sequence alignment-based function prediction; used for comparison and often in ensemble methods. | [1] [27] |

| MMseqs2 | Software Tool | A highly sensitive and fast sequence search tool used as a baseline for comparing remote homology detection performance. | [27] |

| PLMSearch | Software Suite | An integrated method for remote homology search that uses pLM embeddings and a structural similarity predictor, offering a web server. | [27] |

| SCOPe Database | Database | A curated database of protein structural classifications used as a gold-standard benchmark for evaluating fold-level homology detection. | [27] |

The integration of protein language model embeddings with deep learning classifiers has firmly established itself as a powerful and often superior alternative to traditional BLASTp annotation for specific, high-value scenarios. The experimental data demonstrates that while BLASTp retains a marginal overall advantage for routine annotation, pLM-DL models offer unparalleled sensitivity in detecting remote homologs and can achieve state-of-the-art accuracy on specialized prediction tasks like identifying non-classically secreted proteins.

The choice between these paradigms is not merely a binary one. As research progresses, the most effective strategies are likely to be hybrid, leveraging the speed and reliability of BLASTp for clear homologs while deploying the deep semantic power of pLM-DL models for the "dark matter" of the protein universe—sequences with no close relatives, extreme diversity, or from underrepresented biological families. For researchers and drug developers, this expanding toolkit promises to accelerate the functional elucidation of novel targets, ultimately driving innovation in biomedicine and biotechnology.

Enzyme function prediction is a critical task in bioinformatics, with direct implications for understanding cellular metabolism, drug discovery, and synthetic biology. The Enzyme Commission (EC) number system provides a standardized hierarchical framework (with four digits like 1.2.3.4) for classifying enzyme function [29]. This guide compares the performance of traditional sequence alignment tools, protein language models, and emerging hybrid approaches for EC number prediction, providing researchers with data-driven insights for method selection.

The table below summarizes the core performance characteristics of major EC number prediction approaches, highlighting their respective strengths and limitations.

| Method Type | Representative Tools | Key Strengths | Major Limitations |

|---|---|---|---|

| Sequence Alignment | BLASTp, MMseqs2 [2] [27] | High accuracy for enzymes with close homologs [2] [10] | Fails for novel enzymes without homologs; performance drops sharply at low sequence identity [29] [2] |

| Protein Language Models (PLMs) | ESM2, ESM1b, ProtBERT [2] [10] | Effective for remote homology and enzymes without close homologs; excels when sequence identity <25% [2] [10] | Marginally lower overall accuracy than BLASTp; requires substantial computational resources [2] [10] |

| Structure-Based Models | TopEC [30] | High accuracy (F-score: 0.72) by leveraging 3D structural information; robust to fold bias [30] | Dependent on availability of high-quality 3D structures, which can be scarce [29] [30] |

| Multi-Modal/Hybrid Models | MAPred [29] | State-of-the-art performance by integrating sequence and structural (3Di) data; respects EC number hierarchy [29] | Increased model complexity and computational cost [29] |

Accurately determining enzyme function is fundamental for applications ranging from genome annotation to drug design [3] [29]. However, experimental methods for function determination are time-consuming and resource-intensive, creating a massive gap between the number of known protein sequences and those with experimentally validated functions [3]. As of early 2024, out of over 240 million protein sequences in the UniProt database, less than 0.3% have been experimentally annotated [3]. This gap has driven the development of computational methods for automated function prediction, with the core challenge being to infer the correct EC number from an enzyme's amino acid sequence or structure.

Methodologies in Focus

Traditional Workhorse: Sequence Alignment with BLASTp

BLASTp (Basic Local Alignment Search Tool for proteins) operates on the principle of homology. It identifies similar sequences in annotated databases and transfers functional annotations from the best hits [10]. Its methodology is straightforward: a query protein sequence is compared against a reference database of known sequences using pairwise alignment, and EC numbers are assigned based on the highest sequence similarity matches [2] [10].

The Modern Contender: Protein Language Models (PLMs)

Inspired by large language models in NLP, PLMs like ESM2 and ProtBERT are pre-trained on millions of protein sequences in a self-supervised manner [3] [27]. They learn evolutionary patterns and structural constraints embedded in the sequence data.

Typical Experimental Protocol for PLM-based EC Prediction:

- Feature Extraction: A pre-trained PLM (e.g., ESM2) processes the input amino acid sequence, generating a numerical representation (embedding) for the entire protein or its constituent residues [2] [10].

- Classifier Training: These embeddings are used as input features to train a classifier, typically a fully connected neural network, on a large dataset of enzymes with known EC numbers (e.g., from UniProtKB) [2] [10].

- Hierarchical Prediction: The classifier is often trained as a multi-label model to predict all relevant EC digits simultaneously, accounting for promiscuous and multi-functional enzymes [10].

The Integrated Frontier: Multi-Modal and Hybrid Approaches

The latest methods integrate multiple data types to overcome the limitations of single-modality models.

MAPred (Multi-scale multi-modality Autoregressive Predictor) combines protein sequence with 3D structural information represented as 3Di tokens [29]. Its workflow is detailed below.

PLMSearch offers a different hybrid approach, using a PLM to generate deep sequence representations that are used to predict structural similarity (TM-score), enabling highly sensitive remote homology detection that is much faster than structure search tools [27].

Comparative Performance Analysis

Quantitative Benchmarking

The table below presents key performance metrics from recent comparative studies, offering a direct comparison of different methodologies.

| Method | Approach | Dataset | Key Metric | Performance | Reference |

|---|---|---|---|---|---|

| BLASTp | Sequence Alignment | UniProtKB-based | Overall Accuracy | Marginally better than individual PLMs [2] [10] | [2] |

| ESM2 + FCNN | Protein Language Model | UniProtKB-based | Overall Accuracy | Slightly lower than BLASTp, but complementary [2] [10] | [2] |

| TopEC | 3D Graph Neural Network | PDB300 (Fold Split) | F-score | 0.72 [30] | [30] |

| MAPred | Multi-modal (Seq + 3Di) | New-392, Price, New-815 | Accuracy | Outperforms existing models [29] | [29] |

| PLMSearch | PLM-based Similarity | SCOPe40-test (Fold-level) | AUROC | 0.438 (vs. MMseqs2: 0.002) [27] | [27] |

Strengths, Weaknesses, and Complementary Performance

BLASTp vs. PLMs: While BLASTp holds a slight overall edge, the performances are complementary [2] [10]. PLMs like ESM2 demonstrate a significant advantage in predicting functions for remote homologs and enzymes with no close relatives, particularly when sequence identity to known proteins falls below 25% [2] [10]. BLASTp excels when strong homologs exist in the database.

The Impact of Data Modality: Models incorporating structural information (e.g., MAPred, TopEC) consistently achieve superior performance [29] [30]. This is because an enzyme's function is directly determined by its 3D structure and the chemical environment of its active site, which cannot be fully captured by sequence alone.

Successful development and application of EC prediction pipelines rely on several key resources.

| Resource Name | Type | Primary Function in EC Prediction |

|---|---|---|

| UniProt Knowledgebase (UniProtKB) | Database | Provides curated protein sequences and functional annotations (including EC numbers) for model training and validation [3] [10]. |