Navigating the Exploration-Reliability Trade-Off: Modern Strategies for Protein Sequence Design in Drug Discovery


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on navigating the critical balance between exploring novel protein sequences and ensuring their functional reliability. We cover the fundamental concepts of this trade-off, detail cutting-edge computational and experimental methodologies, address common challenges in optimization, and provide frameworks for rigorous validation. By synthesizing insights from recent advances in deep learning, directed evolution, and physics-based modeling, this resource aims to equip scientists with practical strategies to design proteins that are both innovative and robust for therapeutic and industrial applications.

The Core Dilemma: Understanding the Exploration vs. Exploitation Trade-off in Protein Design

In protein sequence design, a fundamental tension exists between Exploration (discovering novel sequences with potentially revolutionary functions) and Reliability (ensuring stable, well-folded, and functional proteins). This technical support center provides troubleshooting guidance for common experimental failures encountered while navigating this spectrum, framed within the thesis that successful research requires strategic balancing of these two imperatives.

Troubleshooting Guides & FAQs

Section 1: Stability & Folding Issues

Q1: My designed novel protein expresses solubly but is prone to aggregation during purification. How can I improve its stability without completely abandoning the novel fold? A: This is a classic Exploration-Reliability conflict. The novel fold may have marginal stability.

- Troubleshooting Steps:

- Diagnose: Perform a thermal shift assay (see Protocol 1) to determine the melting temperature (Tm). Compare to a stable native protein control.

- Stabilize: Consider minimal computational stabilization. Use a tool like Rosetta ddg_monomer to predict point mutations that improve folding energy. Introduce 1-3 top-predicted stabilizing mutations, prioritizing mutations that do not contact the putative active site to preserve novel function.

- Purification: Add 10% (v/v) glycerol or 150-300 mM arginine to the purification buffers to suppress transient aggregation. Use a more gradual imidazole gradient during IMAC purification.

Q2: My high-throughput screening of a novel sequence library shows zero functional hits. Did I explore useless sequence space? A: Not necessarily. The issue may lie in the reliability of your screening assay for exploratory sequences.

- Troubleshooting Steps:

- Control Check: Ensure your screening assay (e.g., yeast display, phage display) includes a known positive control protein that is correctly expressed and detected. A failed control indicates an assay reliability issue.

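- Expression Check: Use a fluorescent tag (e.g., GFP fusion) or an epitope-tag antibody (like anti-6xHis Tag Antibody, Cat. #MA1-21315) in parallel to determine whether your novel sequences are expressed on the display platform at all. A tag antibody reports display level only; to confirm correct folding, use a conformation-sensitive antibody or ligand where one is available.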

- Library Design: Your exploration may have been too broad. Consider a focused exploration strategy: start from a stable scaffold and introduce novelty in localized regions only.

Section 2: Functional Validation Issues

Q3: The computationally designed enzyme has excellent stability metrics but shows <5% of the catalytic activity of the natural counterpart. Why? A: You have over-optimized for reliability (stability) at the cost of functional dynamics, which are crucial for exploration of catalysis.

- Troubleshooting Steps:

- Analyze Dynamics: Run short molecular dynamics (MD) simulations (e.g., using GROMACS) to see if the active site residues are rigidly locked or have appropriate flexibility. Compare the per-residue mobility (RMSF from the simulation, or crystallographic B-factors) to that of the natural enzyme.

- Introduce Controlled Flexibility: Identify distal "control lever" residues that rigidify the active site. Mutate these to smaller/glycine residues to allow necessary backbone motion (see Protocol 2).

- Check Electrostatics: Use a Poisson-Boltzmann solver (e.g., APBS) to calculate the electrostatic potential of the active site. Misaligned fields can drastically reduce activity. Consider mutating surface residues to tune the potential.

Q4: My de novo designed protein binds the target in ITC/SPR but with very weak affinity (Kd > 100 µM). How can I improve binding without starting over? A: Your exploratory design has achieved a proof-of-concept interaction. Now, reliability in binding needs to be engineered.

- Troubleshooting Steps:

- Identify Weak Links: Determine if the issue is on-rate (poor shape complementarity) or off-rate (lack of stabilizing interactions). Analyze the binding interface from a co-crystal structure or AlphaFold3 prediction.

- Affinity Maturation: Create a focused mutagenesis library targeting only the interface residues (e.g., using NNK codons). Screen for improved binders using a method with higher throughput than ITC (e.g., biolayer interferometry (BLI) or flow cytometry).

- Anchor & Optimize: If the interface is large, identify a sub-region with good shape complementarity and "anchor" it. Then, optimize the surrounding residues through iterative design-test cycles.

Experimental Protocols

Protocol 1: Thermal Shift Assay for Rapid Stability Profiling

Purpose: To determine the melting temperature (Tm) of a protein, comparing novel designs to stable controls. Materials: Purified protein, SYPRO Orange dye, real-time PCR machine, 96-well optical plate. Method:

- Dilute SYPRO Orange dye to 5X in assay buffer (e.g., PBS).

- Mix 20 µL of protein sample (0.2-0.5 mg/mL) with 5 µL of 5X dye in a PCR plate well.

- Run a temperature ramp from 25°C to 95°C at a rate of 1°C/min, with fluorescence measurement (ROX channel) at each step.

- Plot fluorescence vs. temperature. Fit a sigmoidal curve to determine the inflection point (Tm).
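The analysis step can be sketched in a few lines of Python. This is a minimal illustration, not the protocol's prescribed fit: instead of fitting a sigmoid, it uses the first-derivative method, taking Tm as the temperature of the steepest fluorescence increase. The function name estimate_tm and the synthetic melt curve are hypothetical, and real data usually needs smoothing and truncation of the post-peak signal decay first.

```python
import math


def estimate_tm(temps, fluorescence):
    """Return the temperature at which dF/dT is largest (first-derivative Tm)."""
    if len(temps) != len(fluorescence) or len(temps) < 3:
        raise ValueError("need at least three matched (T, F) points")
    best_temp, best_slope = None, float("-inf")
    # Central differences approximate dF/dT at each interior point.
    for i in range(1, len(temps) - 1):
        slope = (fluorescence[i + 1] - fluorescence[i - 1]) / (temps[i + 1] - temps[i - 1])
        if slope > best_slope:
            best_temp, best_slope = temps[i], slope
    return best_temp


# Synthetic two-state melt curve (Boltzmann sigmoid) centred on 55 degrees C.
temps = [25 + i for i in range(71)]  # 25..95 degrees C in 1-degree steps
signal = [100 + 900 / (1 + math.exp((55 - t) / 2.0)) for t in temps]
print(estimate_tm(temps, signal))
```

Running the same function on the stable control and on each design gives the ΔTm values used for comparison.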

Protocol 2: Site-Saturation Mutagenesis for Balancing Flexibility & Stability

Purpose: To introduce controlled flexibility at a specific position to recover function. Materials: Plasmid DNA, primers with NNK degenerate codon, high-fidelity DNA polymerase (e.g., Q5), DpnI. Method:

- Design forward and reverse primers containing the NNK codon (encodes all 20 aa + TAG stop) at the target residue.

- Perform a whole-plasmid PCR amplification.

- Digest the PCR product with DpnI (37°C, 1 hr) to degrade the methylated parent template.

- Transform the digested product into competent E. coli, plate on selective media, and sequence individual colonies to characterize the library.
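To make the NNK claim concrete, the following Python sketch enumerates the 32 NNK codons (N = A/C/G/T, K = G/T) against the standard genetic code and confirms that they cover all 20 amino acids plus the single TAG stop. The colony estimate at the end is an assumption added for illustration: it uses the common approximation that a given codon is present with probability 1 - exp(-N/V) after N picks from V equally likely variants.

```python
import math
from itertools import product

# Standard genetic code, codons ordered T, C, A, G at each position.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    "".join(codon): aa for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)
}

# NNK: any base at positions 1 and 2, G or T at position 3.
nnk_codons = ["".join(c) for c in product("ACGT", "ACGT", "GT")]

encoded = {}
for codon in nnk_codons:
    encoded.setdefault(CODON_TABLE[codon], []).append(codon)

n_amino_acids = len([aa for aa in encoded if aa != "*"])
print(len(nnk_codons), "codons,", n_amino_acids, "amino acids, stops:", encoded["*"])

# Picks needed so that any given codon is present with 95% probability
# (assumes all 32 codons are equally represented in the library).
colonies_for_95 = math.ceil(-len(nnk_codons) * math.log(1 - 0.95))
print("colonies to sequence:", colonies_for_95)
```

Because several amino acids are encoded by two or three NNK codons, the amino-acid distribution in the library is not uniform, which is worth keeping in mind when interpreting hit frequencies.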

Table 1: Comparison of Stabilization Strategies for Novel Folds

| Strategy | Method | Typical ΔTm Gain | Risk to Novel Function | Best Use Case |

|---|---|---|---|---|

| Computational Redesign | Rosetta ddg_monomer / FlexDDG | +2°C to +10°C | Medium (if active site perturbed) | Pre-experiment in silico stabilization |

| Ancestral Sequence Reconstruction | Phylogenetic inference & resurrection | +5°C to +15°C | Low (preserves historical function) | Adding reliability to an exploratory functional motif |

| Consensus Design | Multiple sequence alignment averaging | +3°C to +8°C | High (can average out unique features) | Stabilizing a novel scaffold with low natural identity |

| Laboratory Evolution | Random mutagenesis & selection for stability | +5°C to >20°C | Variable | Post-hoc stabilization of a functional but unstable hit |

Table 2: Troubleshooting Functional Failures in Novel Designs

| Symptom | Likely Cause (Exploration Bias) | Diagnostic Experiment | Mitigation (Adding Reliability) |

|---|---|---|---|

| No expression in E. coli | Codon bias, toxic sequences, no fold | mRNA quantification, aggregation test | Optimize codons, use lower temp induction, fuse to solubility tag |

| Soluble but monodisperse only at low concentration | Marginal stability, exposed hydrophobics | Analytical SEC at 1-5 mg/mL, Tm assay | Add stabilizing mutations from homologs |

| Binds target but no catalysis | Rigid or misaligned active site | MD simulation, ligand docking | Introduce flexible loops, redesign electrostatic networks |

| High activity but poor thermo-stability | Over-optimized for dynamics | Tm assay, activity after 1hr @ 40°C | Add distal disulfide or salt bridge |

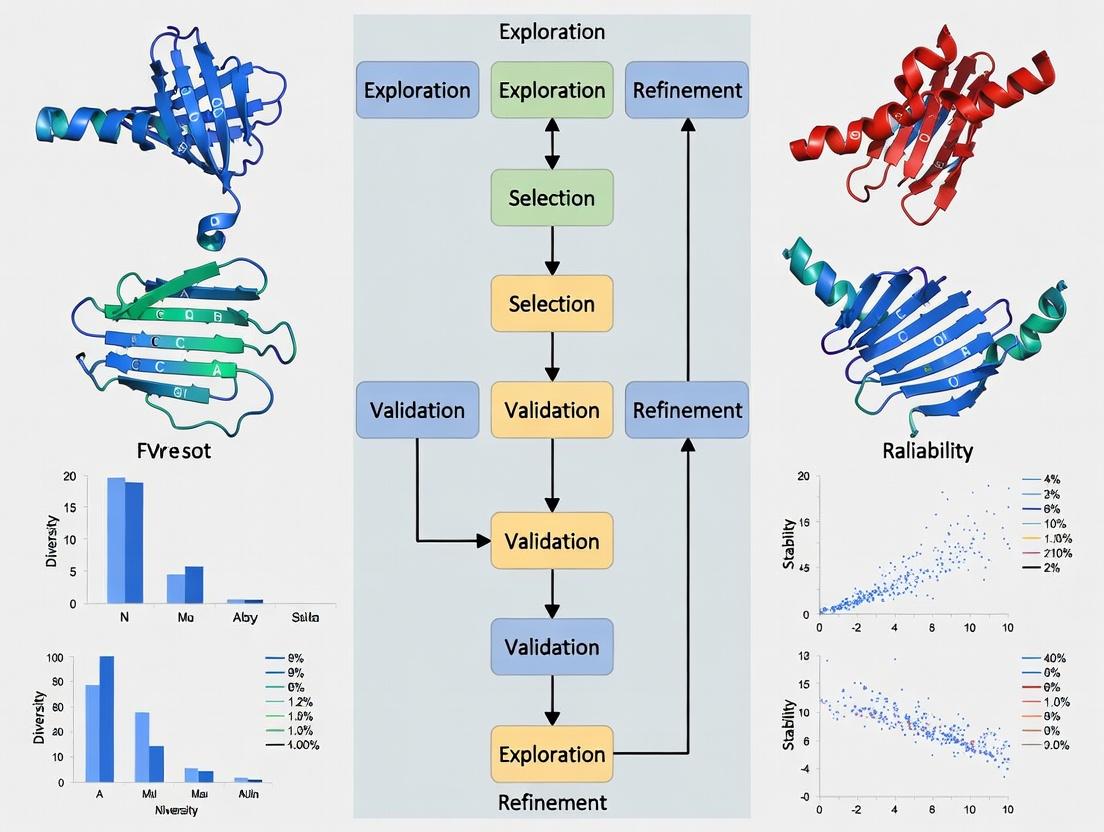

Visualizations

Diagram 1: Protein Design Spectrum & Decision Flow

Title: Design Spectrum and Troubleshooting Flow

Diagram 2: Stability-Function Optimization Cycle

Title: Stability-Function Balance Cycle

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Function in Balancing Exploration & Reliability | Example Product / Specification |

|---|---|---|

| SYPRO Orange Dye | Binds to exposed hydrophobic patches upon protein unfolding; enables high-throughput thermal stability (Tm) screening of novel designs. | Thermo Fisher Scientific, Cat. #S6650 |

| NNK Degenerate Codon Oligos | Encodes all 20 amino acids + one stop codon; essential for creating smart, focused mutagenesis libraries to refine exploratory hits. | Integrated DNA Technologies (IDT), Ultramer DNA Oligos |

| HisTrap HP Column | Standardized immobilized metal affinity chromatography (IMAC) for reliable, high-yield purification of His-tagged novel proteins across expression batches. | Cytiva, Cat. #17524801 |

| Octet RED96e System | Biolayer interferometry (BLI) platform for medium-throughput kinetic screening (kon, koff, Kd) of binding function in crude supernatants, accelerating design-test cycles. | Sartorius |

| Q5 High-Fidelity DNA Polymerase | Provides highly reliable PCR amplification for gene synthesis and library construction, minimizing cloning errors that could confound analysis of exploratory designs. | New England Biolabs, Cat. #M0491S |

| Rosetta Software Suite | Premier computational protein modeling suite for both de novo exploration (fold design) and reliability optimization (energy minimization, ddg_monomer). | https://www.rosettacommons.org/ |

In the field of protein sequence design, a fundamental tension exists between exploring novel, high-variance sequences and exploiting known, reliable motifs. Over-emphasis on exploration can lead to experimental failures due to structural instability or misfolding, while excessive conservation limits functional innovation and the discovery of superior designs. This Technical Support Center provides resources for navigating this balance, offering troubleshooting and experimental guidance grounded in current research.

Troubleshooting Guides & FAQs

FAQ: How do I diagnose a failed expression experiment for a novel protein variant?

Answer: Failed expression is a common issue when exploring highly novel sequences. Follow this diagnostic tree:

- Check Plasmid Integrity & Sequence: Confirm the insert sequence via Sanger sequencing. Verify promoter/ribosome binding site compatibility with your expression system (e.g., T7 for E. coli, CMV for mammalian).

- Assess Cell Health & Biomass: Low final biomass suggests toxicity. Plate serial dilutions on selective media to check for colony formation. Consider using a tightly regulated expression system (e.g., pET with lactose/IPTG, or an arabinose-inducible system) to mitigate toxicity during growth.

- Run an SDS-PAGE Gel: Load total cell lysate. If no band is visible at the expected molecular weight, the protein may be degraded.

- Action: Co-express with chaperone proteins (e.g., GroEL/ES, DnaK/DnaJ), lower the induction temperature (e.g., to 18-25°C), or add a protease inhibitor cocktail.

- If an insoluble pellet is suspected: Lyse cells and centrifuge. Solubilize the pellet in urea or guanidine HCl and run on a gel. A band in the pellet fraction indicates inclusion bodies.

- If a band is present but purification fails: The protein may be aggregated or improperly folded. Analyze via size-exclusion chromatography (SEC) or dynamic light scattering (DLS).

FAQ: My conserved design is stable but lacks the desired catalytic activity. What are my next steps?

Answer: This is a hallmark of over-conservation. You must strategically introduce variation.

- Identify Functional Hotspots: Use a tool like SCHEMA or Rosetta to identify sectors (co-evolving residues) or active site-adjacent positions that are predicted to modulate function without disrupting the fold.

- Employ Saturation Mutagenesis: At 2-3 key positions, perform site-saturation mutagenesis via NNK codons. Use a high-throughput activity screen (e.g., fluorescence, growth selection) to identify beneficial point mutations.

- Explore Chimeric Designs: Create chimeras by recombining segments from homologous proteins with diverse functional profiles. Use tools like SCHEMA to minimize disruptive contacts at fragment boundaries.

FAQ: How can I quantitatively assess the "exploratory risk" of a designed protein library before wet-lab experiments?

Answer: Utilize computational stability and fitness predictors to pre-screen libraries.

| Metric/Tool | Purpose | Typical Threshold for "High-Risk" | Interpretation |

|---|---|---|---|

| ΔΔG (Rosetta/ddG) | Predicts change in folding free energy. | > +2.0 kcal/mol | High probability of destabilization. |

| Predicted pLDDT (AlphaFold2) | Per-residue confidence score (0-100). | Average pLDDT < 70 | Low confidence in overall backbone structure. |

| AGADIR (for helices) | Predicts helix propensity. | < 5% propensity | Low chance of maintaining helical structure. |

| Conservation Score (HSSP) | Measures evolutionary conservation. | Score of 0 at a core position | Mutation at this highly conserved site is risky. |

- Protocol for Computational Pre-screening:

- Generate your sequence library (e.g., 10,000 variants).

- Run all sequences through a structure predictor (e.g., AlphaFold2 via ColabFold) or a folding energy calculator (e.g., Rosetta fold_and_dock for complexes).

- Filter out sequences with average pLDDT < 70 or ΔΔG > +3.0 kcal/mol.

- For the remaining (~10-20%), perform more detailed molecular dynamics (MD) simulations (e.g., 50 ns) to check for early unfolding events. This creates a computationally validated, lower-risk library for experimental testing.
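The filtering step in this protocol can be sketched as follows. This is a minimal Python illustration that assumes the predictor outputs have already been collected into one record per variant; the Variant class, its field names, and the example values are hypothetical, while the thresholds are the ones stated in the protocol.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    name: str
    mean_plddt: float  # average per-residue pLDDT (0-100)
    ddg: float         # predicted ddG in kcal/mol; positive = destabilizing


def prescreen(variants, min_plddt=70.0, max_ddg=3.0):
    """Keep variants that pass both the confidence and the stability filter."""
    return [v for v in variants if v.mean_plddt >= min_plddt and v.ddg <= max_ddg]


# Made-up example records, for illustration only.
library = [
    Variant("v001", mean_plddt=88.2, ddg=0.4),
    Variant("v002", mean_plddt=64.9, ddg=0.1),  # fails the pLDDT filter
    Variant("v003", mean_plddt=81.0, ddg=4.2),  # fails the ddG filter
    Variant("v004", mean_plddt=75.5, ddg=2.9),
]
kept = prescreen(library)
print([v.name for v in kept])
```

Keeping the thresholds as function arguments makes it easy to check how sensitive the surviving fraction is to each cutoff before committing to MD simulations.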

Key Experimental Protocols

Protocol 1: Deep Mutational Scanning (DMS) to Balance Exploration & Conservation

- Objective: Empirically measure the fitness effects of thousands of single-point mutants in a single experiment.

- Method:

- Library Construction: Use PCR-based site-saturation mutagenesis to create a plasmid library covering all amino acid substitutions at targeted positions.

- Selection: Clone library into a phage or yeast display vector, or transform into a bacterial strain where function links to growth.

- Challenge: Subject the population to a functional challenge (e.g., binding to a target, thermal stress, enzymatic reaction with a substrate).

- Sequencing & Analysis: Use next-generation sequencing (NGS) to count variant frequency before and after selection. Calculate enrichment scores (log₂(Final/Initial)).

- Data Integration: Map scores onto a protein structure. Identify positions where mutations are highly deleterious (require conservation) and those that are permissive (allow exploration).
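The enrichment calculation in the sequencing and analysis step can be sketched as below. This is a minimal Python example, assuming read counts per variant are already available as dictionaries; the function name, the pseudocount default, and the example counts are hypothetical. Counts are converted to frequencies before taking the ratio so that differences in sequencing depth between the two samples do not bias the score.

```python
import math


def enrichment_scores(pre_counts, post_counts, pseudocount=0.5):
    """Return log2(post frequency / pre frequency) for every observed variant."""
    variants = set(pre_counts) | set(post_counts)
    # The pseudocount avoids log(0) and division by zero for dropped-out variants.
    pre_total = sum(pre_counts.values()) + pseudocount * len(variants)
    post_total = sum(post_counts.values()) + pseudocount * len(variants)
    scores = {}
    for variant in variants:
        pre_freq = (pre_counts.get(variant, 0) + pseudocount) / pre_total
        post_freq = (post_counts.get(variant, 0) + pseudocount) / post_total
        scores[variant] = math.log2(post_freq / pre_freq)
    return scores


# Made-up counts: A5G is depleted fourfold relative to wild type.
pre = {"WT": 1000, "A5G": 1000, "L9P": 1000}
post = {"WT": 2000, "A5G": 500, "L9P": 0}
scores = enrichment_scores(pre, post)
for variant in sorted(scores, key=scores.get, reverse=True):
    print(variant, round(scores[variant], 2))
```

Subtracting the wild-type score from each variant's score gives a fitness value relative to wild type, which is usually easier to map onto the structure.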

Protocol 2: Multi-State Design for Functional Exploration

- Objective: Design sequences that are stable in multiple conformational states (e.g., apo and holo forms) to enable functional innovation.

- Method (using Rosetta):

- Prepare Input Files: Obtain PDB files for the different target states (e.g., open/closed, bound/unbound).

- Define Residue Positions: Specify which positions are allowed to design (typically substrate-binding pocket, hinge regions) and which are fixed (structural core).

- Run Multi-State Design Script: Use the RosettaScripts interface with the MultiStateDesign mover. This optimizer finds a single sequence that minimizes the energy across all provided states.

- Filter & Select: Rank output sequences by calculated average energy across states and by the smallest energy gap between the lowest and highest energy state. Select top candidates for experimental testing.
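The ranking described in the final step can be sketched in Python. This is only one way to combine the two criteria, assuming per-state energies have been parsed from the design output into a dictionary; the function name, the gap_weight parameter, and the example energies are hypothetical. Lower scores are better, and the weight controls how strongly an uneven energy profile across states is penalized.

```python
def rank_multistate(energies_by_seq, gap_weight=1.0):
    """Sort sequence IDs by mean energy plus a penalty on the max-min energy gap."""
    def score(energies):
        mean_energy = sum(energies) / len(energies)
        gap = max(energies) - min(energies)
        return mean_energy + gap_weight * gap

    return sorted(energies_by_seq, key=lambda seq_id: score(energies_by_seq[seq_id]))


# Made-up energies (arbitrary units) for two states per sequence.
candidates = {
    "seq_a": [-100.0, -98.0],  # good in both states
    "seq_b": [-110.0, -70.0],  # excellent in one state, poor in the other
    "seq_c": [-95.0, -95.0],   # balanced but slightly higher energy
}
print(rank_multistate(candidates))
```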

Visualizations

Title: Risk and Strategy Flow in Protein Design

Title: Deep Mutational Scanning Experimental Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Material | Function / Role in Balancing Exploration & Conservation |

|---|---|

| NNK Degenerate Codon Oligos | Enables site-saturation mutagenesis to explore all 20 amino acids at a target position with a single primer mixture. |

| Phage or Yeast Display Vectors (e.g., pIII, pYD1) | Provides a physical link between protein variant (phenotype) and its encoding DNA (genotype), enabling high-throughput selection and screening. |

| Thermostable Polymerase (Q5 or Phusion) | High-fidelity PCR for accurate library construction, minimizing spurious mutations during amplification. |

| Rosetta Software Suite | Computational protein design platform for predicting stability (ΔΔG), performing multi-state design, and generating sequence libraries. |

| ColabFold (AlphaFold2+MMseqs2) | Provides fast, accurate protein structure prediction for novel sequences, allowing in-silico stability checks (via pLDDT score). |

| Next-Generation Sequencing (NGS) Service/Kit | Essential for Deep Mutational Scanning (DMS) to quantitatively measure variant fitness from complex pooled libraries. |

| Chaperone Plasmid Sets (e.g., Takara pG-KJE8) | Co-expression of chaperones like GroEL/ES can improve solubility of unstable, exploratory designs, rescuing some "high-risk" variants. |

| Size-Exclusion Chromatography (SEC) Column (e.g., Superdex 75) | Critical analytical tool to assess monodispersity and oligomeric state, diagnosing aggregation in failed purifications. |

Technical Support Center: Troubleshooting Protein Sequence Design Experiments

FAQs & Troubleshooting Guides

Q1: My designed protein library shows no functional variants in high-throughput screening, despite high predicted stability. What could be wrong? A: This often indicates an over-reliance on reliability (stability) metrics at the cost of exploration (functional diversity). Natural evolution balances these via mechanisms like somatic hypermutation, which introduces targeted diversity.

- Troubleshooting Steps:

- Analyze Library Diversity: Calculate the pairwise sequence similarity within your designed library. If the average similarity is >80%, your exploration space is too narrow.

- Check Fitness Landscape Model: Your predictive model may be suffering from "model collapse," where it only reinforces already stable sequences. Incorporate stochasticity or use an ensemble of models.

- Protocol – Introduce Controlled Diversity: Implement an in silico directed evolution protocol. Start with your stable backbone and use a Position-Specific Scoring Matrix (PSSM) derived from homologous natural sequences to guide residue substitutions at non-conserved positions, mimicking affinity maturation.

- Method: Use tools like HMMER to build a PSSM. For each target position, allow substitutions with a probability weighted by the PSSM frequency, with a scaling factor (e.g., 0.7 for conservation, 0.3 for exploration).

- Key Data Metrics to Review:

- Library sequence entropy (should be >2.0 bits per variable position for meaningful exploration).

- In-silico docking score distribution (should show a spread, not a single peak).
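The diversity checks above, and the PSSM weighting from the protocol, can be sketched in Python. This is a minimal illustration under stated assumptions: the 0.7/0.3 scaling is read here as a mixture of the PSSM frequencies with a uniform distribution over the 20 amino acids, which is one possible interpretation; all function names and the toy sequences are hypothetical; and sequences are assumed to be pre-aligned and of equal length.

```python
import math
from collections import Counter
from itertools import combinations

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def mix_with_uniform(pssm_freqs, w_conserve=0.7):
    """Blend PSSM frequencies (summing to 1) with a uniform exploration term."""
    w_explore = 1.0 - w_conserve
    return {
        aa: w_conserve * pssm_freqs.get(aa, 0.0) + w_explore / len(AMINO_ACIDS)
        for aa in AMINO_ACIDS
    }


def position_entropy(seqs, pos):
    """Shannon entropy (bits) of the residues observed at one aligned position."""
    counts = Counter(seq[pos] for seq in seqs)
    total = len(seqs)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def mean_pairwise_identity(seqs):
    """Average fraction of identical positions over all sequence pairs."""
    identities = [
        sum(a == b for a, b in zip(x, y)) / len(x) for x, y in combinations(seqs, 2)
    ]
    return sum(identities) / len(identities)


library = ["ACDE", "ACDF", "ACGE", "ACGF"]  # toy library, positions 2 and 3 vary
print([round(position_entropy(library, i), 2) for i in range(4)])
print(round(mean_pairwise_identity(library), 3))
```

For reference, the entropy of a position over the 20 amino acids cannot exceed log2(20), roughly 4.32 bits, so the 2.0-bit guideline corresponds to the equivalent of at least four equally frequent residues at a variable position.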

Q2: How can I mitigate "off-target" binding or aggregation in my designed binding proteins? A: The immune system uses central and peripheral tolerance mechanisms to eliminate self-reactive clones. Translate this to your design pipeline.

- Troubleshooting Steps:

- Perform Negative Design In Silico: Explicitly design against non-target structures. For a protein binder, include negative design steps against homologous human proteins or common aggregation motifs (e.g., beta-sheet patches).

- Protocol – Negative Design Simulation:

- Method: During sequence optimization (e.g., using Rosetta, ProteinMPNN, or RFdiffusion), add a negative-design energy term. For each candidate sequence, perform a brief (1-5 ns) molecular dynamics (MD) simulation or a fast folding prediction (e.g., AlphaFold2 on distilled models) in the presence of "off-target" protein structures. Penalize sequences that show stable docking (< -50 kcal/mol) or folding into off-target conformations.

- Experimental Validation: Use BLI or SPR in a competition assay with the off-target protein to screen for cross-reactivity early.

Q3: My exploration algorithms generate highly novel folds, but they are insoluble when expressed in E. coli. How can I improve experimental reliability? A: Natural evolution operates within biophysical constraints. Your exploration must be bounded by these "rules" for reliable translation.

- Troubleshooting Steps:

- Incorporate Solubility & Expression Filters: Integrate predictors like DeepSol, SoluProt, or PROSO II into your generative model's loss function.

- Protocol – Bounded Exploration with Hallucination:

- Method: Use a protein "hallucination" framework (e.g., Rosetta or ProteinMPNN hallucination). Start with a loss function that favors novelty (e.g., low similarity to PDB). Then, add iterative constraints: a) predicted solubility score > 0.7, b) predicted aggregation propensity (via TANGO or AGGRESCAN) below a threshold, c) codon adaptation index (CAI) for your expression host > 0.8. Optimize for 3-5 cycles.

- Use a Chaperone Co-expression System: As a standard practice, express problematic designs in BL21(DE3) cells with a co-expressed chaperone plasmid (e.g., pG-KJE8 for GroEL/GroES and DnaK/DnaJ/GrpE).

Key Experiment Data Summary

Table 1: Comparison of Library Design Strategies Balancing Exploration and Reliability

| Strategy | Exploration Metric (Avg. Seq. Entropy) | Reliability Metric (% Soluble Expression) | Key Lesson from Biological Precedent |

|---|---|---|---|

| Purely Stability-Based | 1.2 bits | 85% | Over-optimization leads to narrow diversity, akin to low-affinity IgM precursors. |

| Random Mutagenesis | 4.5 bits | 12% | Unguided exploration is highly inefficient, similar to untemplated V(D)J recombination. |

| Somatic Hypermutation-Inspired (PSSM-Guided) | 3.1 bits | 65% | Targeted diversity around a stable scaffold balances novelty and function. |

| Negative Design-Augmented | 2.8 bits | 78% | Explicit negative selection mimics immune tolerance, improving specificity. |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Reliable Exploration in Protein Design

| Item | Function in Context |

|---|---|

| Rosetta Suite or ProteinMPNN | Computational core for sequence design and energy-based scoring, enabling both exploration (hallucination) and reliability (fixbb). |

| AlphaFold2 or ESMFold | Rapid structure prediction for novel sequences, providing a reliability check for fold integrity. |

| pET Series Vectors & BL21(DE3) Cells | Standard high-yield protein expression system for initial soluble expression screening. |

| Chaperone Plasmid Sets (e.g., Takara pG-Tf2) | Co-expression vectors to improve soluble yield of challenging, exploration-driven designs. |

| HisTrap HP Column & ÄKTA System | Standardized purification workflow for reliable, high-throughput protein recovery. |

| Bio-Layer Interferometry (BLI) Octet System | Label-free, high-throughput binding kinetics analysis to functionally screen diverse libraries. |

| Cytiva HiPrep Desalting Column | Essential for rapid buffer exchange post-purification, ensuring consistent sample conditions for assays. |

Experimental Protocol Visualizations

Title: Somatic Hypermutation-Inspired Design Workflow

Title: Conceptual Framework Linking Biology to Design

Welcome to the Technical Support Center for Fitness Landscape Navigation in Protein Design. This resource provides troubleshooting guides and FAQs framed within the critical thesis of Balancing exploration and reliability in protein sequence design research.

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: Our directed evolution campaign has stalled, with successive rounds showing no improvement in function. We suspect we are stuck in a local optimum on a rugged fitness landscape. What strategies can we use to escape?

A1: This is a classic symptom of navigating a rugged landscape. Implement the following protocol to enhance exploration:

- Introduce Controlled Diversity: Shift from strict "top-N" selection to a probabilistic selection scheme (e.g., using fitness-proportional or tournament selection) to preserve some beneficial but sub-optimal variants.

- Increase Mutation Rate/Scope: Temporarily increase the mutation rate or use "soft randomization" focused on structurally permissive regions (guided by SCHEMA or 3D modeling) to create a larger jump in sequence space.

- Recombination: Introduce DNA shuffling or fragment recombination between diverse, high-fitness parents from different branches of your phylogenetic tree to create chimeric escapes.

- Alternate Fitness Pressure: If possible, apply a secondary or orthogonal selection pressure (e.g., stability under harsh conditions) to reveal peaks in a different dimension of the landscape.
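The first strategy, replacing strict top-N selection with a probabilistic scheme, can be sketched with tournament selection in Python. This is a minimal illustration; the function name, the tournament size k, and the toy fitness values are hypothetical. Smaller k weakens selection pressure and lets more sub-optimal variants survive to the next round, while k equal to the population size reduces to always picking the best variant.

```python
import random


def tournament_select(population, fitness, n_select, k=3, rng=None):
    """Pick n_select parents; each is the fittest of k randomly drawn variants."""
    rng = rng or random.Random()
    selected = []
    for _ in range(n_select):
        contenders = rng.sample(population, k)  # k distinct variants per tournament
        selected.append(max(contenders, key=fitness.__getitem__))
    return selected


# Toy population with made-up fitness values.
population = ["v1", "v2", "v3", "v4", "v5", "v6"]
fitness = {"v1": 0.9, "v2": 0.7, "v3": 0.65, "v4": 0.4, "v5": 0.3, "v6": 0.1}
parents = tournament_select(population, fitness, n_select=4, k=2, rng=random.Random(0))
print(parents)
```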

Q2: How do we effectively map a sparse fitness landscape where functional variants are rare? High-throughput screening is expensive and low-throughput assays are not informative enough.

A2: Employ a tiered, model-guided exploration strategy.

- Experimental Protocol: Sparse Landscape Mapping

- Initial Probing: Use a deep mutational scanning (DMS) approach on a critical sub-domain. Even a low-coverage DMS can identify "hotspots" and "coldspots" for mutation.

- Predictive Filtering: Input DMS data into a machine learning model (e.g., variational autoencoder, Potts model) to predict the fitness of unsampled sequences.

- Focused Library Design: Generate a focused library of 10^4-10^5 variants enriched with sequences the model predicts as functional, plus a stochastic exploration component (~20% of library).

- Iterate: Use the new screening data to retrain the model and design the next library cycle.
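The library composition in the focused library design step can be sketched as follows. This minimal Python example assumes model predictions are available as a dictionary; the function name, the candidate names, and the stand-in predictions are hypothetical. It fills most of the library with the top-predicted sequences and reserves the stated ~20% for randomly drawn candidates from the remainder.

```python
import random


def design_library(candidates, predicted_fitness, size, explore_frac=0.2, rng=None):
    """Combine top-predicted sequences with a random exploration component."""
    rng = rng or random.Random()
    n_explore = round(size * explore_frac)
    n_exploit = size - n_explore
    ranked = sorted(candidates, key=predicted_fitness.__getitem__, reverse=True)
    exploit = ranked[:n_exploit]
    remainder = ranked[n_exploit:]
    # Exploration picks are drawn uniformly from everything the model ranked lower.
    explore = rng.sample(remainder, min(n_explore, len(remainder)))
    return exploit + explore


candidates = [f"v{i}" for i in range(100)]
predicted = {name: float(i) for i, name in enumerate(candidates)}  # stand-in scores
library = design_library(candidates, predicted, size=10, rng=random.Random(0))
print(library)
```

The exploration picks are what give the retrained model information about regions it currently scores as poor, which helps counter the model error noted elsewhere in this guide.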

Q3: Our ML model for fitness prediction performs well on validation data but fails to generalize and guide us to novel, high-fitness sequences. What might be wrong?

A3: This often indicates overfitting to a narrow region of the landscape or a training-test data leak. Troubleshoot as follows:

- Audit Training Data: Ensure your training and validation sets are phylogenetically separated. Use tools like FastTree to build a phylogenetic tree and split clusters.

- Incorporate Uncertainty Estimation: Implement or switch to models that provide uncertainty estimates (e.g., Gaussian processes, ensembles, Bayesian neural networks). Prioritize exploring regions of high predicted fitness AND high uncertainty.

- Add Diverse Negative Data: Include confirmed low-fitness or non-functional sequences in training to better define the "valleys" and improve landscape topography prediction.

- Feature Evaluation: Re-evaluate your input features (e.g., evolutionary covariance, physicochemical properties, structural metrics). Ensure they are informative for novel sequences, not just interpolating between known ones.
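Prioritizing sequences with both high predicted fitness and high uncertainty can be sketched with an upper-confidence-bound (UCB) score over ensemble predictions. This Python example is a minimal illustration, assuming each candidate has one prediction per ensemble member; the function name, the kappa parameter, and the toy predictions are hypothetical. The ensemble spread is used as a simple stand-in for model uncertainty.

```python
from statistics import mean, pstdev


def ucb_rank(ensemble_predictions, kappa=1.0):
    """Rank sequences by mean prediction plus kappa times ensemble spread."""
    scores = {
        seq: mean(preds) + kappa * pstdev(preds)
        for seq, preds in ensemble_predictions.items()
    }
    return sorted(scores, key=scores.get, reverse=True)


# Made-up predictions from a three-member ensemble.
predictions = {
    "known_good": [1.0, 1.0, 1.0],  # confident, high fitness
    "uncertain": [0.0, 1.0, 2.0],   # same mean, members disagree
    "known_bad": [0.2, 0.2, 0.2],   # confident, low fitness
}
print(ucb_rank(predictions, kappa=1.0))
```

Setting kappa to 0 recovers pure exploitation of the model's mean prediction; larger values shift the experimental budget toward sequences the model knows least about.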

Q4: When designing a new protein scaffold, how do we balance exploring radically new folds (high risk) versus optimizing known, stable folds (high reliability)?

A4: Adopt a phased "Explore-Exploit" pipeline with clear decision gates.

- Experimental Protocol: Phased Scaffold Design

- Phase 1 - Exploration (De Novo Focus): Use generative models (e.g., RFdiffusion, ProteinMPNN) to produce a diverse set of in silico scaffolds filtered for predicted folding confidence (pLDDT > 70). Synthesize and test a small batch (10-20) for basic expression and stability.

- Decision Gate: If ≥10% of exploratory scaffolds show promise, proceed. If not, expand exploration with different generative constraints or revert to a known, reliable backbone.

- Phase 2 - Exploitation (Optimization Focus): Take the most promising 1-2 scaffolds from Phase 1. Perform focused combinatorial mutagenesis on surface loops or active sites, using a high-throughput functional screen (e.g., yeast display + FACS).

- Phase 3 - Reliability Engineering: On your top functional variant, run a consensus-stability or computational stability scan (Rosetta ddG) to introduce stabilizing mutations without affecting function.

Table 1: Comparison of Landscape Navigation Strategies

| Strategy | Primary Goal | Typical Library Size | Key Risk | Best For |

|---|---|---|---|---|

| Saturation Mutagenesis | Exhaustively map a local site | 10^2 - 10^3 | Misses epistatic effects | Identifying key residues, fine-tuning |

| Directed Evolution (AVEx) | Climb local peak | 10^6 - 10^9 | Local optimum trapping | Optimizing an existing function |

| Family Shuffling | Recombine functional blocks | 10^5 - 10^7 | Generate non-functional chimeras | Exploring within a known fold family |

| Generative Model Design | Explore novel sequence space | 10^2 - 10^4 (physical) | Poor in vivo folding | De novo scaffold discovery |

| Model-Guided Iteration | Navigate sparse rewards | 10^4 - 10^5 per cycle | Model overfitting/error | When functional variants are <1% |

Table 2: Key Metrics for Fitness Landscape Analysis

| Metric | Calculation / Tool | Interpretation | Threshold for Action |

|---|---|---|---|

| Epistasis Density | Fraction of variant pairs showing non-additive effects | High density = Rugged landscape | >0.3 indicates strong need for exploration tactics |

| Sparsity Index | 1 - (Functional Variants / Total Variants Tested) | High index = Sparse landscape | >0.99 necessitates model-guided or ultra-deep screening |

| Predictive R² | Correlation (Predicted vs. Actual Fitness) on held-out clusters | Generalization ability of model | R² < 0.4 on cluster hold-out suggests model cannot guide exploration |
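The first two metrics in Table 2 can be computed with a short Python sketch. It assumes fitness values are on a log scale, so that additivity is the null expectation for a double mutant; the function names, the significance threshold, and the example values are hypothetical, and in practice the threshold would be set from replicate measurement noise.

```python
def sparsity_index(n_functional, n_tested):
    """1 - (functional variants / total variants tested)."""
    return 1 - n_functional / n_tested


def epistasis(f_wt, f_a, f_b, f_ab):
    """Deviation of the double mutant from the sum of single-mutant effects."""
    return (f_ab - f_wt) - ((f_a - f_wt) + (f_b - f_wt))


def epistasis_density(measurements, threshold=0.5):
    """Fraction of (wt, A, B, AB) fitness tuples with |epistasis| above threshold."""
    hits = sum(abs(epistasis(*m)) > threshold for m in measurements)
    return hits / len(measurements)


# Made-up log-fitness values: the first pair is additive, the second is not.
pairs = [
    (0.0, -1.0, -1.0, -2.0),
    (0.0, -1.0, -1.0, 0.5),
]
print(sparsity_index(5, 1000))
print(epistasis_density(pairs))
```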

Visualizations

Diagram Title: Phased Explore-Exploit Protein Design Workflow

Diagram Title: Model-Guided Iterative Exploration Cycle

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Reagent | Function in Landscape Navigation | Example / Note |

|---|---|---|

| NGS-based Deep Mutational Scanning (DMS) | Enables ultra-high-throughput fitness measurement for thousands of variants in parallel, mapping local landscape topography. | Use EMPIRIC or DIMPLE protocols for yeast surface display coupling. |

| Phage/Yeast Display Libraries | Provides a physical linkage between genotype (DNA) and phenotype (protein function) for screening vast combinatorial libraries (>10^9). | Crucial for exploring rugged landscapes via directed evolution. |

| Rosetta Suite Software | Computational protein modeling for predicting stability (ddG) and structure, used to in silico pre-filter libraries and assess reliability. | RosettaDDGPrediction protocol for scanning stability. |

| RFdiffusion & ProteinMPNN | Generative AI models for de novo protein backbone design and sequence scaffolding, enabling radical exploration of fold space. | Key for exploring sparse regions beyond natural homologs. |

| Trimmomatic & FastTree | Bioinformatics tools for processing NGS data and constructing phylogenetic trees to ensure robust train/test splits for ML models. | Prevents data leakage, improving model generalizability. |

| Fluorescence-Activated Cell Sorting (FACS) | High-precision isolation of functional protein variants based on activity or binding, enabling selection from complex libraries. | Essential for the "exploitation" phase to climb fitness peaks. |

| Thermofluor (DSF) Assay | High-throughput measurement of protein thermal stability (Tm), a key reliability metric during optimization. | Use to ensure exploration does not catastrophically compromise stability. |

Bridging the Gap: Methodologies for Balanced Sequence Generation and Application

Technical Support Center

Troubleshooting Guide

Issue 1: Model Collapse in Conditional VAE Training Q: My conditional VAE for protein sequence generation is producing low-diversity, repetitive outputs. How can I diagnose and fix this? A: Model collapse is often due to an imbalanced Kullback-Leibler (KL) divergence term or a poorly structured latent space. Follow this protocol:

- Monitor KL Loss: Track the KL loss component during training. A rapid drop to near-zero indicates collapse.

- Adjust Beta (β): Implement a β-VAE framework. Start with β = 0.001 and gradually anneal it according to a schedule (e.g., increase to 0.1 over 50 epochs). Use the following table as a guideline:

| Epoch Range | Beta (β) Value | Purpose |

|---|---|---|

| 1-20 | 0.001 to 0.01 | Allow encoder to learn useful representations. |

| 21-100 | 0.01 to 0.05 | Gradually enforce latent space structure. |

| 100+ | 0.05 to 0.1 (max) | Balance diversity and reconstruction. |

- Latent Space Inspection: Use PCA or UMAP to visualize the latent space for different conditions. Clustered, non-overlapping distributions are good; a single tight cluster indicates collapse.

- Decoder Strength: Reduce the initial learning rate of the decoder by a factor of 10 relative to the encoder to prevent it from overpowering the latent regularization too quickly.
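The β guideline and the KL monitoring step in Issue 1 can be sketched as below. This is a minimal illustration, not a prescribed implementation: the linear interpolation inside each epoch range, the 100-epoch ramp after epoch 100, and the collapse thresholds (`floor`, `patience`) are all assumptions.

```python
def beta_at_epoch(epoch):
    """Piecewise-linear KL weight following the guideline ranges (epochs are 1-indexed)."""
    def lerp(start, end, fraction):
        return start + (end - start) * fraction

    if epoch <= 20:
        return lerp(0.001, 0.01, (epoch - 1) / 19)
    if epoch <= 100:
        return lerp(0.01, 0.05, (epoch - 21) / 79)
    # Assumption: ramp from 0.05 to the 0.1 ceiling over a further 100 epochs.
    return min(0.1, lerp(0.05, 0.1, (epoch - 100) / 100))


def kl_collapsed(kl_history, floor=1e-3, patience=3):
    """Flag collapse when the last `patience` KL values all sit below `floor`."""
    recent = kl_history[-patience:]
    return len(recent) == patience and all(value < floor for value in recent)


schedule = [beta_at_epoch(e) for e in (1, 20, 100, 200, 300)]
print([round(b, 4) for b in schedule])
print(kl_collapsed([2.0, 0.5, 0.0004, 0.0002, 0.0001]))
```

Calling `kl_collapsed` once per epoch on the logged KL term gives an early warning before sample diversity visibly degrades.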

Issue 2: Blurry or Unrealistic Samples from Diffusion Models Q: My diffusion model for protein backbone generation produces "averaged" or physically improbable structures. What steps should I take? A: This is typically a problem with the noise schedule and sampling process.

- Noise Schedule Analysis: Verify your variance schedule (β_t). A linear schedule often leads to suboptimal results. Switch to a cosine schedule, which adds noise more slowly at the start and end.

- Sampling Steps: Increase the number of reverse diffusion steps during sampling. While training might use 1000 steps, sampling can often use 250-500 steps with a DDIM sampler for clarity.

- Classifier-Free Guidance Scale: If using conditional generation, tune the guidance scale (ω). High values can distort samples; low values reduce condition fidelity. Perform a grid search:

| Guidance Scale (ω) | Result on Generated Protein | Recommended Use |

|---|---|---|

| 1.0 | High diversity, low condition fidelity. | Initial exploration. |

| 3.0 - 5.0 | Good balance of fidelity and novelty. | Standard design. |

| 7.0 - 10.0 | High fidelity, reduced diversity. | High-reliability scaffold grafting. |

- Loss Function: Incorporate a secondary loss term, such as a lightweight physics-based potential (e.g., Lennard-Jones clashes), into the training objective to penalize unrealistic conformations.
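To make the noise-schedule and guidance-scale advice in Issue 2 concrete, here is a small pure-Python sketch. It assumes the commonly used cosine schedule form with offset `s = 0.008`, a linear baseline from 1e-4 to 0.02, and the guidance rule `eps_uncond + ω (eps_cond - eps_uncond)`, under which ω = 1.0 reduces to plain conditional sampling. Your codebase may parameterize any of these differently.

```python
import math


def cosine_betas(num_steps, s=0.008, max_beta=0.999):
    """Per-step variances derived from a cosine cumulative-signal curve."""
    def alpha_bar(t):
        return math.cos((t / num_steps + s) / (1 + s) * math.pi / 2) ** 2

    return [min(1 - alpha_bar(t + 1) / alpha_bar(t), max_beta) for t in range(num_steps)]


def linear_betas(num_steps, beta_start=1e-4, beta_end=0.02):
    """Baseline linear variance schedule for comparison."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def signal_remaining(betas, t):
    """Cumulative product of (1 - beta) over the first t steps."""
    return math.prod(1 - b for b in betas[:t])


def guided_noise(eps_uncond, eps_cond, omega):
    """Classifier-free guidance: extrapolate from unconditional toward conditional."""
    return [u + omega * (c - u) for u, c in zip(eps_uncond, eps_cond)]


cos_b, lin_b = cosine_betas(1000), linear_betas(1000)
print(signal_remaining(cos_b, 500), signal_remaining(lin_b, 500))
print(guided_noise([0.0, 1.0], [1.0, 1.0], omega=3.0))
```

Comparing `signal_remaining` at the midpoint shows how much earlier the linear schedule destroys structure, which is one quick diagnostic before retraining.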

Issue 3: Poor Conditioning in Hierarchical Models Q: In my two-stage model (VAE for sequence, diffusion for structure), the final structure does not reflect the intended conditional property (e.g., stability). A: This is a conditioning leakage problem. Ensure gradient flow and information consistency.

- Gradient Check: Use automatic differentiation tools to verify that gradients from the final property predictor (e.g., stability predictor) propagate back through both the diffusion and VAE models. Frozen layers are a common culprit.

- Conditional Bottleneck: Introduce a dedicated, small conditional embedding network that processes your target property (e.g., ΔΔG) and injects it into both the VAE's latent space and the diffusion model's cross-attention layers. This creates multiple conditioning points.

- Alignment Metric: Implement a validation metric that explicitly measures the correlation between the generated samples' predicted properties and the target condition. Optimize for this metric directly.

Frequently Asked Questions (FAQs)

Q: How do I choose between a Conditional VAE (CVAE) and a Conditional Diffusion Model (CDM) for protein sequence design? A: The choice depends on your priority in the exploration-reliability trade-off.

- Use CVAE when: You need fast, diverse sampling and direct latent space interpolation for exploratory analysis. It's less computationally intensive for generation.

- Use CDM when: Your priority is sample quality and reliability, and you can afford longer sampling times. Diffusion models typically generate higher-fidelity, more physically plausible sequences/structures.

- Hybrid Approach: A common strategy is to use a CVAE for rapid exploration of the sequence space and a CDM for high-fidelity refinement of top candidates.

Q: What is a practical method to quantitatively evaluate "controlled diversity"? A: Use a combination of metrics, reported in a unified table for each model run:

| Metric | Formula/Description | Target for Controlled Diversity |

|---|---|---|

| Conditional Accuracy | Percentage of generated samples that meet the target property threshold (e.g., binding affinity > X). | High (>80%). Ensures reliability. |

| Intra-condition Diversity | Average pairwise Levenshtein distance (sequence) or RMSD (structure) within a condition group. | Moderate to High. Avoids collapse. |

| Inter-condition Separation | Silhouette score of latent embeddings grouped by condition. | High (>0.5). Clear condition control. |

| Novelty | Percentage of generated sequences not found in the training dataset (BLAST evalue > 1e-5). | User-defined. Balances exploration. |
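Three of the metrics in the table above can be computed without any external tools, as in the sketch below. It makes two simplifying assumptions that you should replace in practice: novelty is approximated by exact-match lookup rather than a BLAST e-value, and the property values come from whatever predictor you already use. All function names and example sequences are hypothetical.

```python
from itertools import combinations


def levenshtein(a, b):
    """Edit distance between two sequences (dynamic programming, two rows)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1]


def conditional_accuracy(predicted_values, threshold):
    """Fraction of generated samples whose predicted property exceeds the threshold."""
    return sum(v > threshold for v in predicted_values) / len(predicted_values)


def intra_condition_diversity(sequences):
    """Mean pairwise Levenshtein distance within one condition group."""
    pairs = list(combinations(sequences, 2))
    return sum(levenshtein(a, b) for a, b in pairs) / len(pairs)


def novelty(generated, training_set):
    """Fraction of generated sequences absent from training (exact-match stand-in)."""
    return sum(seq not in training_set for seq in generated) / len(generated)


generated = ["MKTA", "MKTV", "MRTV"]
print(conditional_accuracy([0.9, 0.4, 0.8], threshold=0.5))
print(intra_condition_diversity(generated))
print(novelty(generated, training_set={"MKTA"}))
```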

Q: How can I incorporate a known protein motif as a hard constraint during generation? A: Use masked generation or inpainting.

- For Sequences (CVAE/Diffusion): Fix the tokens of the known motif during sampling. In diffusion, this is done by only adding noise to the "unknown" regions and denoising the full sequence conditioned on the fixed motif.

- For Structures (Diffusion): Use a 3D inpainting technique. Define a mask over the coordinates of the motif (set noise to zero) and only apply the diffusion process to the flexible regions. The model will generate a coherent structure that accommodates the fixed motif.

Experimental Protocols

Protocol 1: Training a Conditional VAE for Stable Protein Variants

Objective: Generate diverse protein sequences predicted to have high thermal stability (ΔΔG > 0) relative to a wild-type.

- Data Preparation: Curate a dataset of protein variants with experimentally measured ΔΔG. Represent sequences as one-hot tensors (L x 20). Normalize ΔΔG values.

- Model Architecture:

  - Encoder: 1D CNN + bidirectional LSTM. Outputs μ and log(σ) for a 128-dim latent vector z.

  - Conditioning: Concatenate the target ΔΔG value to the encoder's flattened output and to the decoder's input.

  - Decoder: Two-layer LSTM followed by a linear layer with softmax.

- Training: Use a loss L = L_recon + β * L_KL. Use β-annealing from 1e-4 to 0.05 over 200 epochs. Adam optimizer (lr=3e-4).

- Validation: Monitor (a) reconstruction accuracy on hold-out wild-types, and (b) the correlation between the target ΔΔG and the predicted ΔΔG (via a separate predictor) of generated samples.
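The training objective L = L_recon + β * L_KL from Protocol 1 can be written out explicitly. The sketch below assumes a diagonal Gaussian posterior parameterized by μ and log(σ), a standard normal prior, and a categorical cross-entropy reconstruction term; it uses plain Python lists in place of tensors so the arithmetic is easy to follow.

```python
import math


def gaussian_kl(mu, log_sigma):
    """KL( N(mu, sigma^2) || N(0, 1) ), summed over latent dimensions."""
    return sum(
        0.5 * (math.exp(2 * ls) + m * m - 1.0) - ls
        for m, ls in zip(mu, log_sigma)
    )


def reconstruction_nll(probs, target_indices):
    """Cross-entropy of per-position softmax outputs against the true residues."""
    return -sum(math.log(p[t]) for p, t in zip(probs, target_indices))


def cvae_loss(probs, target_indices, mu, log_sigma, beta):
    """Total loss: reconstruction term plus beta-weighted KL term."""
    return reconstruction_nll(probs, target_indices) + beta * gaussian_kl(mu, log_sigma)


# Toy example: one position with two possible tokens, one latent dimension.
loss = cvae_loss(probs=[[0.5, 0.5]], target_indices=[0], mu=[1.0], log_sigma=[0.0], beta=0.05)
print(round(loss, 4))
```

Logging the two terms separately, rather than only their sum, is what makes the KL-collapse check in the troubleshooting guide possible.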

Protocol 2: Fine-tuning a Latent Diffusion Model for Backbone Inpainting

Objective: Given a fixed protein scaffold and a defined active site region, generate diverse, plausible backbone conformations for the active site.

- Data & Featurization: Use the PDB to create training samples. Represent structures as backbone atom point clouds (N, Cα, C). For each sample, create a binary mask (1 for active site, 0 for scaffold).

- Model Setup: Start from a pre-trained unconditional protein diffusion model (e.g., RFdiffusion, which builds on the RoseTTAFold architecture). Modify the input to concatenate coordinate features with the mask channel.

- Conditional Training: Train only on the denoising task for the masked region. The loss is calculated only on the coordinates of the masked (active site) residues. The scaffold coordinates are passed as context but are not updated.

- Sampling: Use the DDIM sampler with 200 steps. The scaffold coordinates are fixed (no noise added). Noise is initialized only for the masked region, and the reverse process generates the new active site.
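Two pieces of Protocol 2 are easy to get subtly wrong: restricting the loss to masked residues, and keeping scaffold coordinates fixed during sampling. The sketch below illustrates both with plain coordinate tuples. The function names are hypothetical, and a real implementation would operate on tensors inside the training and sampling loops.

```python
def masked_mse(pred, target, mask):
    """Mean squared coordinate error over masked (active-site) residues only."""
    total, count = 0.0, 0
    for p, t, m in zip(pred, target, mask):
        if m:
            total += sum((pi - ti) ** 2 for pi, ti in zip(p, t))
            count += 1
    if count == 0:
        raise ValueError("mask selects no residues")
    return total / count


def clamp_scaffold(sample, scaffold, mask):
    """After each reverse step, overwrite unmasked residues with the fixed scaffold."""
    return [s if m else f for s, f, m in zip(sample, scaffold, mask)]


# Residue 0 is scaffold (mask 0); residue 1 is active site (mask 1).
pred = [(0.0, 0.0, 0.0), (1.0, 1.0, 1.0)]
target = [(9.0, 9.0, 9.0), (1.0, 1.0, 2.0)]
mask = [0, 1]

print(masked_mse(pred, target, mask))      # the scaffold error is ignored
print(clamp_scaffold(pred, target, mask))  # the scaffold is restored, the active site kept
```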

Visualizations

Diagram Title: CVAE-Diffusion Hybrid Workflow for Protein Design

Diagram Title: Conditional VAE Loss Components

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Generative Protein Design |

|---|---|

| ESMFold / AlphaFold2 | Protein structure prediction networks. Used as a rapid in-silico validation tool to assess the foldability of AI-generated sequences. Critical for reliability. |

| PyRosetta | Software suite for computational structural biology. Used to calculate physics-based energy scores (Rosetta Energy Units) and refine AI-generated models, adding a reliability check. |

| ProteinMPNN | A state-of-the-art inverse folding model. Often used after a generative model to "fix" or redesign sidechains for a given AI-generated backbone, enhancing plausibility. |

| PDB (Protein Data Bank) | The primary source of experimental protein structures. Used for training data, defining scaffolds, and benchmarking generated samples. |

| Beta (β) Scheduler | A software module to dynamically adjust the KL loss weight in a VAE during training. Essential for preventing posterior collapse and achieving controlled diversity. |

| Classifier-Free Guidance | An inference-time scaling technique for diffusion models. The key "knob" to tune the exploration (diversity) vs. reliability (condition fidelity) trade-off. |

| DDIM Sampler | An accelerated sampler for diffusion models. Allows for high-quality generation in fewer reverse steps (e.g., 250 vs. 1000), speeding up the design cycle. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My smart library, designed using a generative model, shows extremely low expression in E. coli. What could be the cause and how can I resolve it?

A: Low expression from a computationally designed library often stems from overlooked host-specific translational or folding rules. First, check the codon adaptation index (CAI) of your designed sequences using a tool like the EMBOSS cai program. Aim for a CAI >0.8 for E. coli. If CAI is low, perform in silico codon optimization, but avoid creating strong mRNA secondary structures near the ribosome binding site. Second, verify that your mutations have not unintentionally created aggregation-prone regions; use tools like TANGO or Aggrescan. Troubleshooting Protocol: 1) Clone and express 3-5 individual variants to confirm the issue is systemic. 2) Subclone your library into a vector with a stronger, tunable promoter (e.g., T7 or araBAD) to rule out promoter weakness. 3) Co-express with chaperone plasmids (e.g., pG-KJE8) to test if misfolding is the bottleneck.

Q2: During FACS-based screening, I observe a high rate of false positives. How can I improve sorting fidelity? A: High false positives in FACS often link to signal leakage or non-specific binding. Implement the following: 1) Increase Stringency: Use a more stringent gating strategy. Include a negative control (cells with no enzyme or inactive mutant) to set the lower boundary and a "low-activity" control to define your minimum desired signal. Apply doublet discrimination gates (FSC-H vs FSC-A) to exclude cell aggregates. 2) Signal Validation: Employ a dual-labeling strategy. For example, if screening for enzymatic activity, use a substrate that generates a fluorescent product at a different wavelength than your cell-labeling dye (e.g., GFP expression). Gate only on cells that are positive for both. 3) Pre-sort Enrichment: If possible, use a magnetic bead-based pre-enrichment step to remove the bulk of inactive clones before FACS, reducing background pressure.

Q3: The sequence-activity relationship data from my high-throughput screen is noisy and no clear fitness landscape emerges. What steps should I take? A: Noisy data can obscure evolutionary trajectories. 1) Replicate Screening: Perform at least three biological replicates of your screen. Calculate the coefficient of variation (CV) for each variant's measured activity. Filter out variants where the CV > 20% as unreliable. 2) Control Normalization: Use internal controls spiked into every screening plate. Include a known high-activity and a null variant. Normalize all raw reads or fluorescence values to the plate median of the high-activity control. 3) Apply Statistical Filters: Use a Z-score or median absolute deviation (MAD) threshold to identify hits significantly above the population median. A workflow for data refinement is provided below.

Title: Workflow for Refining Noisy HTS Data
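The three refinement steps from Q3 (control normalization, replicate CV filter, robust hit calling) might be chained as in the sketch below. The data layout (a dict of replicate signals per variant), the 0.6745 scaling of the MAD-based z-score, and the hit threshold of 3 are assumptions for illustration; signals are assumed to be positive.

```python
from statistics import mean, median, stdev


def refine_hts(raw, high_control_median, cv_max=0.20, z_min=3.0):
    """Return (reliable variant means, hit list) from replicate screening signals."""
    # 1) Normalize every reading to the high-activity control.
    normalized = {v: [x / high_control_median for x in reps] for v, reps in raw.items()}
    # 2) Keep only variants whose replicate CV is within tolerance.
    reliable = {
        v: mean(reps)
        for v, reps in normalized.items()
        if stdev(reps) / mean(reps) <= cv_max
    }
    # 3) Call hits with a MAD-based robust z-score against the population median.
    values = list(reliable.values())
    center = median(values)
    mad = median([abs(x - center) for x in values])
    if mad == 0:
        return reliable, []
    hits = [v for v, x in reliable.items() if 0.6745 * (x - center) / mad >= z_min]
    return reliable, hits


raw = {
    "wt": [100, 102, 98],
    "v1": [105, 95, 100],
    "v2": [90, 92, 91],
    "v3": [400, 410, 390],
    "v4": [50, 150, 100],   # noisy replicates, expected to be filtered out
    "v5": [110, 108, 112],
}
reliable, hits = refine_hts(raw, high_control_median=200.0)
print(sorted(reliable), hits)
```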

Q4: When using machine learning to guide library design, how do I balance exploration of novel sequence space with exploitation of known productive regions? A: This is the core challenge of reliable sequence design. Implement an acquisition function within your active learning loop. 1) Algorithm Choice: Use Upper Confidence Bound (UCB) or Thompson sampling, which explicitly balance mean predicted fitness (exploitation) and prediction uncertainty (exploration). 2) Library Composition: Design each successive library as a blend: 70% of variants from the top of the exploitation ranking (high predicted value), 20% from the exploration ranking (high uncertainty), and 10% as random wild-card sequences to sample completely unexplored regions. This ratio can be adjusted based on iteration performance. The decision logic is visualized below.

Title: Balancing Exploration & Exploitation in Library Design
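The library composition rule from Q4 can be sketched as follows, assuming each candidate already has a predicted mean and uncertainty from your model. The UCB weight `kappa`, the candidate dictionary layout, and the function names are hypothetical. The 70/20/10 split comes from the answer above and is exposed as a parameter so it can be adjusted per iteration.

```python
import random


def ucb(mean, std, kappa=2.0):
    """Upper Confidence Bound: predicted fitness plus an uncertainty bonus."""
    return mean + kappa * std


def compose_library(candidates, size, fractions=(0.7, 0.2, 0.1), seed=0):
    """candidates: dict of sequence -> (predicted_mean, predicted_std)."""
    n_exploit = round(size * fractions[0])
    n_explore = round(size * fractions[1])
    n_random = size - n_exploit - n_explore

    # Exploitation slice: highest predicted fitness.
    by_mean = sorted(candidates, key=lambda s: candidates[s][0], reverse=True)
    chosen = by_mean[:n_exploit]
    # Exploration slice: highest uncertainty among the remainder.
    remaining = [s for s in candidates if s not in chosen]
    by_std = sorted(remaining, key=lambda s: candidates[s][1], reverse=True)
    chosen += by_std[:n_explore]
    # Wild-card slice: uniform random draw from whatever is left.
    remaining = [s for s in candidates if s not in chosen]
    rng = random.Random(seed)
    chosen += rng.sample(remaining, min(n_random, len(remaining)))
    return chosen


# Toy candidates where predicted mean rises and uncertainty falls with the index.
candidates = {f"seq{i}": (i / 20, (20 - i) / 20) for i in range(20)}
library = compose_library(candidates, size=10)
print(library)
```

Ranking a single list by `ucb` is the alternative when one blended score is preferred over fixed slices.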

Q5: My high-throughput screening assay works in microtiter plates but fails when adapted to a microfluidic droplet format. What are common pitfalls? A: Droplet-based assays introduce new variables. Key issues and fixes: 1) Surface Binding: Your enzyme or substrate may adsorb to the droplet interface. Fix: Add non-ionic surfactants (e.g., 0.5-1% Pluronic F-68) and carrier proteins (0.1% BSA) to the aqueous phase. 2) Diffusion Limitations: The reaction may be quenched too slowly. Fix: Optimize the concentration of your quenching agent in the oil stream or collection buffer. Perform a time-course experiment in droplets to find the optimal incubation time before sorting. 3) Substrate Permeability: The substrate may not efficiently enter cells encapsulated in droplets. Fix: Use a substrate that is membrane-permeable or employ cell-free expression systems within droplets.

Table 1: Comparison of High-Throughput Screening Platforms

| Platform | Throughput (variants/day) | Cost per Variant | Typical False Positive Rate | Best for Library Type |

|---|---|---|---|---|

| Microtiter Plate (Robotic) | 10^4 | $0.50 - $2.00 | 5-15% | Small, focused libraries (<10^4) |

| Flow Cytometry (FACS) | 10^7 | $0.001 - $0.01 | 1-5%* | Large smart libraries (10^6 - 10^8) |

| Microfluidic Droplets | 10^8 | <$0.001 | 0.5-3%* | Ultra-large libraries (10^7 - 10^9) |

| Phage/yeast display | 10^9 | <$0.001 | Varies widely | Binding affinity, peptide libraries |

*With optimized gating and controls.

Table 2: Common Smart Library Design Strategies & Performance

| Design Strategy | Computational Model | Typical Library Diversity | Exploration vs. Reliability Bias | Key Experimental Validation |

|---|---|---|---|---|

| Site-Saturation Mutagenesis (SSM) | None (random) | 10^2 - 10^3 per site | High exploration, low reliability | Deep mutational scanning |

| Consensus Design | Sequence alignment | 10^1 - 10^2 | Low exploration, high reliability | Thermostability assays |

| TrRosetta/AlphaFold2 | Protein structure prediction | 10^3 - 10^4 | Moderate balance | Expression yield, solubility check |

| ProteinMPNN/RFdiffusion | Inverse folding, generative | 10^4 - 10^6 | Tunable (depends on training data) | Full functional screen required |

Experimental Protocol: Coupling FACS with NGS for Fitness Landscape Mapping

Objective: To quantitatively map the fitness of all variants in a smart library post-selection. Materials:

- Sorted cell populations (Top 1% and bottom 50% of activity).

- QIAamp DNA Micro Kit.

- PCR primers with overhangs for Illumina sequencing.

- KAPA HiFi HotStart ReadyMix.

- Illumina MiSeq or NextSeq system.

Methodology:

- Sorting: Perform FACS, collecting the top 1% (high-fitness) and bottom 50% (low-fitness/neutral) populations. Collect at least 10^6 cells per population.

- Genomic DNA Extraction: Isolate gDNA from both cell pools using the QIAamp kit. Elute in 30 µL.

- Amplification of Variant Region: Perform two-step PCR. Step 1: Amplify the variant gene region using gene-specific primers. Use ≤ 25 cycles. Step 2: Add Illumina flow cell adapters and unique dual indices (UDIs) using a limited-cycle (8-10 cycles) PCR.

- Sequencing: Pool purified libraries equimolarly and sequence on an Illumina platform to a depth of at least 500 reads per variant for the input library.

- Data Analysis: Count reads for each variant (barcode) in the Input, High, and Low populations. Calculate the enrichment score (E) as: E = log2( (Count_high / Total_high) / (Count_input / Total_input) ). Exclude variants with < 10 reads in the input pool.
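The enrichment calculation in the final step can be sketched as below. The count dictionaries are hypothetical example data, and skipping variants with zero reads in the sorted pool is an assumption (adding a pseudocount is a common alternative).

```python
import math


def enrichment_scores(high_counts, input_counts, min_input_reads=10):
    """log2 ratio of a variant's frequency in the sorted pool vs. the input pool."""
    total_high = sum(high_counts.values())
    total_input = sum(input_counts.values())
    scores = {}
    for variant, n_input in input_counts.items():
        if n_input < min_input_reads:
            continue  # too few input reads for a stable frequency estimate
        n_high = high_counts.get(variant, 0)
        if n_high == 0:
            continue  # log2(0) is undefined; see the note on pseudocounts above
        scores[variant] = math.log2((n_high / total_high) / (n_input / total_input))
    return scores


input_counts = {"A": 100, "B": 100, "C": 5, "D": 195}
high_counts = {"A": 200, "B": 50, "C": 50, "D": 100}
scores = enrichment_scores(high_counts, input_counts)
print(scores)
```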

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Directed Evolution 2.0 Workflows

| Item | Function | Example Product/Catalog # |

|---|---|---|

| Ultra-high fidelity DNA Polymerase | Error-free amplification of smart library constructs for cloning. | NEB Q5 High-Fidelity DNA Polymerase (M0491) |

| Golden Gate Assembly Mix | Efficient, seamless assembly of variant libraries into expression vectors. | NEB Golden Gate Assembly Kit (BsaI-HF v2) (E1601) |

| Membrane-permeable fluorogenic substrate | Enables intracellular enzyme activity screening in FACS or droplets. | Thermo Fisher Scientific LiveBLAzer FRET B/G Substrate |

| Next-generation sequencing kit | For deep mutational scanning and fitness landscape analysis. | Illumina DNA Prep Kit (20018705) |

| Chaperone plasmid set | Co-expression to improve folding of designed variants in E. coli. | Takara pG-KJE8 Chaperone Plasmid Set (3340) |

| Droplet generation oil & surfactant | For creating stable, biocompatible water-in-oil emulsions. | Bio-Rad Droplet Generation Oil for EvaGreen (1864005) |

Troubleshooting Guides & FAQs

Q1: My Rosetta design runs are producing structures with unexpectedly high total energy scores (positive REU). What are the primary causes and fixes?

A: Positive REF2015 or REF2021 energy values indicate instability. Common causes and solutions:

- Cause 1: Inadequate Relaxation. The designed sequence is strained in the starting backbone.

  - Fix: Implement a more aggressive relaxation protocol after design. Use FastRelax with increased cycle counts (e.g., -default_max_cycles 200) and consider dual-space relaxation (-relax:dualspace true).

- Cause 2: Overly Restrictive Design Constraints. Over-constraining residue types (e.g., packing) can force incompatible sidechains.

  - Fix: Use -auto_detect_good_breakup true during packing or apply constraints via the -cst_fa_weight flag more judiciously, starting with lower weights (e.g., 1.0).

- Cause 3: Clashing from In-Parallel Design. Simultaneous optimization of many sidechains can create clashes.

  - Fix: Use the -packing:linmem_ig 10 flag to improve packing accuracy and consider sequential design strategies.

Q2: How do I balance the -fa_dun weight to improve backbone reliability without over-constraining sequence exploration?

A: The Dunbrack rotamer term (-fa_dun) is critical for reliability but can hinder exploration.

- High Weight (e.g., 0.7-1.0): Favors well-known, low-energy rotamers. Increases reliability of designs by selecting statistically common sidechain conformations. Use for conservative design near functional sites.

- Low/Zero Weight (e.g., 0.0-0.3): Allows exploration of novel rotamers. Use for de novo design or when exploring radically new sequences. Always follow with extensive full-energy scoring and relaxation to ensure reliability.

- Recommended Protocol: Start design with a lower weight (0.3) for exploration, then filter and refine top sequences with a standard weight (0.7) for reliability.

Q3: My designed proteins express but aggregate. Which energy terms should I re-evaluate to improve solubility and folding reliability?

A: Aggregation suggests exposed hydrophobic surface area or frustrated electrostatic interactions.

- Re-evaluate: The -fa_sol (Lazaridis-Karplus solvation) and -fa_elec (FaElec2015) terms.

- Experimental Protocol: Implement a post-design filter using the InterfaceAnalyzer mover or score_jd2 application to calculate the following metrics per design:

  - Hydrophobic SASA: Total SASA of apolar residues. Compare to natural monomeric proteins.

  - ddG of Interface (for monomers): Should be positive and high, indicating no stable multimers.

  - Electrostatic Complementarity: Use the EC metric. Poor scores (<0.5) suggest unfavorable polar interactions.

- Fix: Add a -resfile command to repack surface residues with more polar amino acids (D, E, K, R, Q, N, S, T) based on the initial design's problematic patches.

Table 1: Impact of Energy Term Weighting on Design Outcomes

| Energy Term | Standard Weight (Reliability) | Low Weight (Exploration) | Key Metric Affected | Recommended Use Case |

|---|---|---|---|---|

| -fa_dun (Rotamer) | 0.7 - 1.0 | 0.0 - 0.3 | Rotamer Probability | Lower for de novo cores; Standard for surface/interface |

| -cst_fa_weight (Constraints) | 1.0 - 5.0 | 0.1 - 0.5 | Constraint Energy | Lower for initial exploration; Increase during refinement |

| -relax:ramp_constraints | true | false | Backbone Flexibility | Enable for reconciling conflicting constraints |

| -fa_elec (Electrostatics) | 1.0 | Scale 0.5-2.0 | ddG Folding/Binding | Adjust to modulate polar interaction strength |

Table 2: Troubleshooting Energy Scores

| Problematic Output | Typical REF2015 Score Range | Target Score Range | Primary Diagnostic Movers |

|---|---|---|---|

| High-Energy Designs | > 50 REU | < 0 REU | FastRelax, PackRotamersMover |

| Unstable Backbone (post-relax) | > 100 REU (rama, p_aa_pp) | rama < 2, p_aa_pp < 1 | CartesianDDAMover, LoopModeler |

| Poor Interface Packing | Interface_delta_X > 10 REU | Interface_delta_X < -10 REU | InterfaceAnalyzer, FindInterfaceMotif |

Experimental Protocols

Protocol: Energy-Constrained Iterative Design for Reliability

- Input Preparation: Generate starting backbone(s) via remodeling or from a PDB. Define designable (ALLAA/POLAR) and repackable (NATAA) residues using a .resfile.

- Exploration Phase:

  - Run rosetta_scripts with a reduced -fa_dun_weight (0.3) and moderate -cst_weight (1.0).

  - Use the PackRotamersMover with -ex1 -ex2 options to expand rotamer sampling.

  - Generate a large sequence diversity (5,000-10,000 designs).

- Energy-Based Filtering:

  - Filter all outputs by total score (ref2015_cart or ref2021) and per-residue energy.

  - Select the top 10% by score for further analysis.

- Reliability Refinement Phase:

  - On filtered designs, run FastRelax with standard energy weights (-fa_dun_weight 0.7) and -ramp_constraints true.

  - Apply -dualspace true if backbone moves are permitted.

- Validation Scoring:

  - Score relaxed designs with InterfaceAnalyzer (for complexes) or ScoreMover.

  - Apply metrics from Table 2 as pass/fail filters.

  - Output final designs with negative total energy and favorable sub-scores.
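The energy-based filtering and final pass/fail steps of this protocol reduce to simple bookkeeping once scores are parsed from the Rosetta output. The sketch below assumes the scores are already loaded into a dict of design name to total score in REU. The design names and values are made up, and parsing of the score file itself is not shown.

```python
def select_top_fraction(total_scores, top_fraction=0.10):
    """Keep the lowest-energy fraction of designs (lower REU is better)."""
    ranked = sorted(total_scores, key=total_scores.get)
    keep = max(1, int(len(ranked) * top_fraction))
    return ranked[:keep]


def passes_validation(total_score, max_total=0.0):
    """Final filter from the protocol: total energy must be negative."""
    return total_score < max_total


# Hypothetical scores for 20 designs, from -150 REU up to +40 REU.
total_scores = {f"design_{i:02d}": -150.0 + 10.0 * i for i in range(20)}
shortlist = select_top_fraction(total_scores)
final = [name for name in shortlist if passes_validation(total_scores[name])]
print(shortlist, final)
```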

Visualizations

Title: Rosetta Energy-Constrained Design Workflow

Title: Energy Constraint Logic in Rosetta

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Energy-Constrained Design Experiments

| Item | Function in Experiment | Key Consideration for Reliability |

|---|---|---|

| Rosetta Software Suite (v2024+) | Core platform for physics-based design and energy scoring. | Use the latest release for updated energy functions (e.g., REF2021). |

| High-Performance Computing Cluster | Enables large-scale sequence sampling and parallel relaxation runs. | Critical for generating statistically significant design libraries. |

| Structure Visualization Software (PyMOL, ChimeraX) | Visual inspection of designed models for packing, voids, and strain. | Essential for qualitative validation beyond energy scores. |

| Crystallography or Cryo-EM | Experimental high-resolution structure determination of top designs. | Ultimate validation of computational reliability and accuracy. |

| Differential Scanning Fluorimetry | Measures thermal stability (Tm) of expressed designs. | Provides an experimental check on computed total energy (REU); expect only approximate agreement. |

| SEC-MALS / DLS | Assesses monodispersity and aggregation state in solution. | Validates predictions from -fa_sol and interface energy terms. |

| Residue-Specific Constraints File | Defines desired H-bonds, distances, or motifs via Rosetta .cst format. | Balances exploration (loose constraints) with reliability (tight constraints). |

Troubleshooting Guides & FAQs

Q1: I am encountering "CUDA out of memory" errors when running inference on ESM-2 or ESM-3 models. What are my options?

A: This is common when processing large proteins or batches. Solutions are tiered:

- Reduce Batch Size: Set batch_size=1 in your data loader.

- Use Gradient Checkpointing: If training, enable activation checkpointing for memory-for-compute trade-off.

- Precision Reduction: Use mixed precision (torch.cuda.amp) or load the model in FP16/BF16.

- Model Truncation: Consider using a smaller variant (e.g., ESM-2 35M instead of 650M).

- Sequence Truncation: For very long sequences, analyze functional domains separately.

- Hardware: If possible, use a GPU with more VRAM (e.g., A100, RTX 4090/3090).

Q2: The per-residue log probabilities from my ESM model are extremely low (highly negative). Is this normal?

A: Yes. Log probabilities are never positive, and more negative values indicate lower probability. The scale varies by model and sequence length. Focus on relative differences, not absolute values. For masked inference, the probability of the wild-type residue can be low at variable positions, where the model spreads probability across several plausible residues.

Q3: How do I interpret the attention maps from a model like ESMFold or ESM-2? What do strong attention weights signify?

A: Attention weights indicate which residue pairs the model "attends to" when constructing a representation for a given residue. Strong weights often correlate with:

- Spatial proximity in the folded structure.

- Evolutionary co-variation (contacts).

- Functional relationships.

Note: Attention is not a direct physical interaction map; it's a computational construct. Validate insights with structural data.

Q4: When using ESM embeddings for downstream tasks (e.g., fitness prediction), which layer's embeddings should I use?

A: There is no universal best layer. Performance depends on the task:

- Final Layer: Best for global, semantic features (e.g., protein family, solubility).

- Middle Layers (e.g., ~2/3 depth): Often capture structural and functional information optimal for variant effect prediction.

- Strategy: Conduct a simple hyperparameter sweep over layers on a validation set. A weighted combination of multiple layers can sometimes improve performance.

Table: ESM-2 Model Variants & Resource Requirements

| Model (ESM-2) | Parameters | Embedding Dim | Typical VRAM (Inference) | Max Sequence Length | Best For |

|---|---|---|---|---|---|

| esm2_t6_8M_UR50D | 8 Million | 320 | ~1 GB | 1024 | Quick prototyping, embedding large families |

| esm2_t12_35M_UR50D | 35 Million | 480 | ~2 GB | 1024 | Balance of speed and accuracy |

| esm2_t30_150M_UR50D | 150 Million | 640 | ~4 GB | 1024 | High-quality embeddings for design |

| esm2_t33_650M_UR50D | 650 Million | 1280 | ~10 GB | 1024 | State-of-the-art representations |

| esm2_t36_3B_UR50D | 3 Billion | 2560 | ~24 GB+ | 1024 | Cutting-edge research (requires high-end GPU) |

Q5: I want to use ESM to score designed sequences. Should I use masked marginal likelihood or pseudo-perplexity?

A: To score complete sequences, use pseudo-log-likelihood (PLL). It masks each position in turn and sums the log probabilities the model assigns to the true residue given the rest of the sequence. Lower pseudo-perplexity (PPL, the exponential of the negative per-residue mean PLL) indicates the sequence is more "natural" according to the model. This is a key metric for balancing exploration (new designs) with reliability (native-like sequences).

Detailed Experimental Protocol: Using ESM Pseudo-Likelihood to Evaluate Designed Protein Variants

Objective: Quantify the "naturalness" of a novel designed protein sequence using the ESM-2 model to compute its pseudo-perplexity (PPL), providing a prior for guiding exploration in design space.

Materials & Software:

- Python (3.8+)

- PyTorch (1.12+)

- fair-esm or Transformers (Hugging Face) library with ESM

- ESM-2 model weights (e.g., esm2_t33_650M_UR50D)

- List of protein sequences in FASTA format.

Procedure:

- Environment Setup: Install required packages: pip install fair-esm transformers torch.

- Model Loading: Load the ESM-2 model and its associated tokenizer.

- Data Preparation: Tokenize the input sequence(s). Prepend a start (<cls>) token and append an end (<eos>) token as per model training.

- Pseudo-Likelihood Calculation: For each sequence position i, mask token i (replace with <mask>), pass the sequence through the model, and retrieve the log probability assigned to the original residue at position i.

- Aggregate Score: Sum the per-position log probabilities to get the total pseudo-log-likelihood (PLL) for the sequence. Convert to pseudo-perplexity as PPL = exp(-PLL / L), where L is the sequence length.

- Interpretation: Lower PPL values indicate the sequence is more probable under the model's learned evolutionary distribution. Compare designed variants against the wild-type PPL.
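The scoring loop in this protocol can be sketched independently of any particular model. In the example below, `masked_log_probs` is a hypothetical callable standing in for one forward pass of a protein language model with position i masked. A uniform toy model is used so the sketch runs without downloading weights. With ESM-2 you would replace it with a wrapper that masks position i, runs the model, and applies a log-softmax to the logits at that position.

```python
import math

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def pseudo_log_likelihood(sequence, masked_log_probs):
    """Sum of log P(true residue at i | all other residues) over positions i."""
    return sum(
        masked_log_probs(sequence, i)[sequence[i]]
        for i in range(len(sequence))
    )


def pseudo_perplexity(sequence, masked_log_probs):
    """PPL = exp(-PLL / L); lower means more probable under the model."""
    pll = pseudo_log_likelihood(sequence, masked_log_probs)
    return math.exp(-pll / len(sequence))


def uniform_model(sequence, position):
    """Toy stand-in: every amino acid is equally likely, regardless of context."""
    return {aa: math.log(1 / len(AMINO_ACIDS)) for aa in AMINO_ACIDS}


ppl = pseudo_perplexity("MKTAYIAKQR", uniform_model)
print(ppl)
```

A uniform model yields a pseudo-perplexity equal to the alphabet size, which serves as a sanity baseline: a trained model should score natural sequences well below it.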

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Sequence-Based Priors Research |

|---|---|

| ESM-2/ESM-3 Model Weights | Pre-trained protein language models that provide the foundational evolutionary prior for sequence scoring and embedding generation. |

| PyTorch / fair-esm | Core deep learning framework and specific library for loading and running ESM models efficiently. |

| CUDA-Compatible GPU (e.g., NVIDIA A100, RTX 4090) | Accelerates model inference and training, essential for working with large models (650M+ parameters). |

| Hugging Face Transformers Library | Alternative API for loading and using ESM models, often integrated into modern ML pipelines. |

| AlphaFold2 or ESMFold | Structure prediction tools used to validate or provide structural context for sequences flagged by ESM as high-potential but novel. |

| Pandas & NumPy | For managing, processing, and analyzing large datasets of sequences and their associated model scores (PPL, embeddings). |

| Scikit-learn / PyTorch Lightning | For building downstream regression/classification models on top of ESM embeddings (e.g., predicting stability, function). |

| Biopython | For handling FASTA files, performing sequence alignments, and basic bioinformatics operations. |

Workflow Diagram: ESM-Guided Protein Design Prioritization

Diagram Title: Prioritizing Protein Designs with ESM Pseudo-Perplexity

Troubleshooting Guides and FAQs

FAQ 1: Why is my designed enzyme showing no catalytic activity after expression and purification?

- Possible Causes: Disruption of the active site architecture during design, incorrect folding, or aggregation.

- Troubleshooting Steps:

- Verify the structural integrity of your design. Perform a rapid in silico stability check using Rosetta

ddg_monomeror FoldX. - Check for soluble expression. Run an SDS-PAGE of both soluble and insoluble fractions.

- If insoluble, optimize expression conditions (lower temperature, autoinduction media) or co-express with chaperones.

- If soluble but inactive, verify active site residue geometry (distances, angles) against the native enzyme crystal structure using PyMOL or ChimeraX.

- Consider performing limited proteolysis or circular dichroism (CD) spectroscopy to confirm proper folding.

FAQ 2: My computational binder design has high predicted affinity but fails to bind in SPR/BLI experiments.

- Possible Causes: Epitope inaccessibility in the native target conformation, energetic frustration in the designed interface, or aggregation of the binder.

- Troubleshooting Steps:

- Ensure the target epitope is solvent-accessible in its physiological oligomerization state. Re-dock your design against a multi-chain structure or a cryo-EM map if available.

- Analyze the designed interface for buried unsatisfied polar atoms (e.g., with the Rosetta BuriedUnsatHbonds filter), and consider Rosetta HBNet to redesign hydrogen-bond networks that satisfy them.

- Run a short molecular dynamics (MD) simulation (e.g., 100 ns) to check for rapid destabilization of the binding pose.

- Perform size-exclusion chromatography (SEC) coupled with multi-angle light scattering (SEC-MALS) on the purified binder to check for monodispersity and rule out aggregation.

FAQ 3: How do I balance exploration of novel sequences with the reliability of known scaffolds?

- Thesis Context: This core tension is addressed by hybrid workflows that combine de novo design with functional motif grafting onto stable, proven frameworks.

- Solution: Implement a tiered design protocol. Start with a highly reliable, conserved scaffold for your enzyme or binder (e.g., a TIM barrel, an FN3 scaffold). Use deep learning methods (e.g., ProteinMPNN, RFdiffusion) to generate exploratory sequence variants, but constrain them to maintain the core fold's critical stabilizing residues. Filter these exploratory designs through rigorous physics-based (Rosetta) and learned potential (AlphaFold2 pLDDT) reliability filters before experimental testing.
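The tiered protocol above can be sketched as a two-stage filter: first reject variants that mutate the scaffold's stabilizing residues, then apply a predicted-confidence cutoff. This is a minimal sketch under stated assumptions: the scaffold sequence, core positions, and pLDDT values are invented for illustration, the pLDDT scores are assumed to come from a separate structure-prediction run, and the physics-based (Rosetta) filter is omitted.

```python
def keeps_core(scaffold, variant, core_positions):
    """True if the variant retains the scaffold residue at every core position (0-based)."""
    return len(variant) == len(scaffold) and all(
        variant[i] == scaffold[i] for i in core_positions
    )

def fraction_mutated(scaffold, variant):
    """Fraction of positions differing from the scaffold: a crude exploration measure."""
    return sum(a != b for a, b in zip(scaffold, variant)) / len(scaffold)

def tiered_filter(scaffold, variants, core_positions, min_plddt=80.0):
    """Keep variants that preserve the core and meet the pLDDT cutoff.

    `variants` maps sequence -> mean pLDDT. Results are sorted most-exploratory first.
    """
    passed = [
        (seq, fraction_mutated(scaffold, seq), plddt)
        for seq, plddt in variants.items()
        if keeps_core(scaffold, seq, core_positions) and plddt >= min_plddt
    ]
    return sorted(passed, key=lambda row: row[1], reverse=True)

scaffold = "MKVLAAGIVG"   # hypothetical scaffold fragment
core = {2, 3, 7}          # hypothetical stabilizing positions (V, L, I)
variants = {
    "MKVLSSGIVG": 88.0,   # core intact, two mutations, confident
    "MKVLAAGIVA": 91.0,   # core intact, one mutation, confident
    "MKALAAGIVG": 93.0,   # core position 2 mutated: rejected
    "MEVLSSGITG": 62.0,   # core intact but low pLDDT: rejected
}
selected = tiered_filter(scaffold, variants, core)
for seq, frac, plddt in selected:
    print(seq, f"mutated={frac:.0%}", f"pLDDT={plddt}")
```

Sorting by fraction mutated surfaces the most exploratory designs that still clear the reliability gates, which is one way to make the trade-off explicit when choosing what to test.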

Experimental Protocols

Protocol 1: In Silico Affinity Maturation of a Designed Binder

Objective: Increase the binding affinity of a computationally designed protein binder through focused sequence exploration.

Method:

- Input Structure: Start with the designed binder-target complex.

- Define Designable Regions: Select interfacial residues (within 6 Å of the target) for sequence optimization.

- Generate Variants: Use a fixed-backbone sequence design tool (e.g., Rosetta FastDesign, ProteinMPNN) to propose mutations at designable positions. Generate 10,000-50,000 sequence variants.

- Filter for Stability & Affinity: Score all designs using:

- Rosetta InterfaceAnalyzer (for dG_separated and Interface Score).

- AlphaFold2 or AlphaFold-Multimer to predict the complex structure and compute pLDDT and ipTM.

- Select Top Candidates: Apply multi-parameter filtering (e.g., dG_separated < -15 REU, ipTM > 0.7, no loss of core packing). Select 20-50 designs for experimental testing.
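Two steps of this protocol lend themselves to a short sketch: picking designable residues by distance to the target, and applying the multi-parameter cutoffs. The code below is illustrative only. The coordinate and score records are hypothetical stand-ins for values parsed from a structure file and from Rosetta/AlphaFold output, and the "no loss of core packing" check is not implemented.

```python
import math

def interface_residues(binder_atoms, target_atoms, cutoff=6.0):
    """Binder residue ids with any atom within `cutoff` angstroms of any target atom.

    Each atom is (residue_id, (x, y, z)). Brute-force search, adequate for a sketch.
    """
    selected = set()
    for res_id, xyz in binder_atoms:
        if any(math.dist(xyz, t_xyz) <= cutoff for _, t_xyz in target_atoms):
            selected.add(res_id)
    return sorted(selected)

def select_candidates(designs, max_dg=-15.0, min_iptm=0.7, top_n=50):
    """Apply the dG_separated and ipTM cutoffs, then rank most-negative dG first."""
    passing = [
        d for d in designs
        if d["dG_separated"] < max_dg and d["ipTM"] > min_iptm
    ]
    return sorted(passing, key=lambda d: d["dG_separated"])[:top_n]

# Hypothetical coordinates: residue 10 is 4 angstroms from the target, the others are farther.
binder = [(10, (0.0, 0.0, 0.0)), (11, (5.0, 0.0, 0.0)), (12, (20.0, 0.0, 0.0))]
target = [(1, (0.0, 0.0, 4.0))]
designable = interface_residues(binder, target)

# Hypothetical score table (REU for dG_separated).
scores = [
    {"name": "v1", "dG_separated": -18.2, "ipTM": 0.81},
    {"name": "v2", "dG_separated": -22.5, "ipTM": 0.65},  # fails ipTM
    {"name": "v3", "dG_separated": -12.0, "ipTM": 0.90},  # fails dG
    {"name": "v4", "dG_separated": -16.0, "ipTM": 0.75},
]
picked = select_candidates(scores)
print(designable, [d["name"] for d in picked])
```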

Protocol 2: Functional Validation of a Designed Therapeutic Enzyme

Objective: Characterize the catalytic activity and specificity of a de novo designed enzyme.

Method:

- Cloning & Expression: Clone the gene into an appropriate expression vector (e.g., pET series for E. coli). Transform into expression cells.

- Purification: Express protein, lyse cells, and purify via affinity chromatography (His-tag) followed by size-exclusion chromatography (SEC).

- Activity Assay: Set up a reaction mix containing your substrate, appropriate buffer, and purified enzyme. Use a stopped assay or continuous spectroscopic readout (absorbance/fluorescence) to monitor product formation.

- Kinetic Analysis: Vary substrate concentration and fit initial velocity data to the Michaelis-Menten model to derive k_cat and K_M.

- Specificity Check: Test against a panel of structurally similar substrates to rule out promiscuous activity.
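The kinetic analysis step can be sketched as follows. To stay dependency-free, this example uses the Hanes-Woolf linearization ([S]/v = [S]/Vmax + K_M/Vmax) with ordinary least squares rather than a direct nonlinear fit; for real, noisy data a nonlinear fit (for example with `scipy.optimize.curve_fit`) is generally the better choice. The data are synthetic and noise-free, and the enzyme concentration is an assumed value.

```python
def fit_michaelis_menten(substrate, velocity):
    """Estimate (Vmax, Km) via Hanes-Woolf: [S]/v = [S]/Vmax + Km/Vmax."""
    x = list(substrate)
    y = [s / v for s, v in zip(substrate, velocity)]
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx                    # equals 1 / Vmax
    intercept = mean_y - slope * mean_x  # equals Km / Vmax
    return 1.0 / slope, intercept / slope

# Synthetic initial-rate data generated from Vmax = 2.0 uM/s and Km = 50 uM.
true_vmax, true_km = 2.0, 50.0
substrate_um = [5, 10, 25, 50, 100, 250, 500]
velocity_um_s = [true_vmax * s / (true_km + s) for s in substrate_um]

vmax, km = fit_michaelis_menten(substrate_um, velocity_um_s)
enzyme_um = 0.1              # assumed total enzyme concentration
k_cat = vmax / enzyme_um     # turnover number in s^-1
print(f"Vmax={vmax:.3f} uM/s  Km={km:.1f} uM  k_cat={k_cat:.1f} s^-1")
```

Substrate concentrations should bracket K_M (roughly 0.2x to 5x or more) so that both the linear and the saturating regimes constrain the fit.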

Table 1: Comparison of Protein Design Software Output Metrics

| Software/Tool | Primary Use | Key Output Metric | Typical Value for a "Good" Design | Computational Cost (GPU/CPU time) |

|---|---|---|---|---|

| Rosetta FastDesign | Sequence design & refinement | Rosetta Energy Units (REU) | Interface dG < -15 REU | High (CPU hours-days) |

| ProteinMPNN | Sequence design | Sequence Recovery / Perplexity | Low perplexity (< 5.0) | Low (GPU minutes) |

| RFdiffusion | De novo backbone generation | pLDDT (predicted) | > 80 | High (GPU hours) |

| AlphaFold2 | Structure prediction | pLDDT & pTM | pLDDT > 80, pTM > 0.7 | Medium (GPU minutes-hours) |

| ESMFold | Structure from sequence | pLDDT | > 70 | Low (GPU minutes) |

Table 2: Experimental Validation Success Rates (Hypothetical Benchmark)

| Design Strategy | Phase | Number of Designs Tested | Success Criterion | Success Rate (%) | Notes |

|---|---|---|---|---|---|

| Pure De Novo (Exploration) | Expression & Solubility | 100 | Soluble, monomeric | ~15% | High failure rate due to folding |

| Grafted Motifs (Balanced) | Expression & Solubility | 100 | Soluble, monomeric | ~65% | Reliable scaffold improves yield |

| Grafted Motifs (Balanced) | Functional Activity | 20 | Measurable binding/activity | ~30% | Functional success requires precise grafting |

| Affinity Maturation (Reliability) | Binding Affinity | 50 | >10x affinity improvement | ~10% | Focused search on known binder |

Workflow and Pathway Diagrams

Title: Balancing Exploration and Reliability in Protein Design Workflow

Title: Computational Affinity Maturation Pipeline

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Therapeutic Protein Design | Example Product/Kit |

|---|---|---|

| High-Fidelity DNA Polymerase | Error-free amplification of designed gene sequences for cloning. | Q5 High-Fidelity DNA Polymerase (NEB) |

| Gibson Assembly Master Mix | Seamless, efficient cloning of multiple DNA fragments (e.g., gene into expression vector). | Gibson Assembly HiFi Master Mix (NEB) |

| Competent E. coli Cells | High-efficiency transformation for library cloning and protein expression. | NEB Stable Competent E. coli, BL21(DE3) |

| Affinity Purification Resin | Rapid, specific purification of tagged recombinant proteins. | Ni-NTA Agarose (QIAGEN), HisTrap HP columns (Cytiva) |

| Size-Exclusion Chromatography Column | Polishing step to separate monomeric protein from aggregates or fragments. | Superdex 75 Increase (Cytiva) |

| Surface Plasmon Resonance (SPR) Chip | Label-free, quantitative measurement of binding kinetics (KD, kon, koff). | Series S Sensor Chip CM5 (Cytiva) |

| Fluorogenic/Chromogenic Substrate | Sensitive detection of enzymatic activity for kinetic characterization. | Varied by enzyme class (e.g., from Sigma-Aldrich, Thermo Fisher) |

| Stability Assay Kit | Assessment of protein thermal stability (Tm), a proxy for foldedness and aggregation resistance. | Protein Thermal Shift Dye Kit (Thermo Fisher) |

Overcoming Pitfalls: Troubleshooting Unstable or Non-Functional Designs

Troubleshooting Guides & FAQs

Q1: My purified protein shows high turbidity and precipitates during storage. What tests can confirm aggregation as the primary failure mode? A: This is a classic sign of aggregation. Perform the following diagnostic cascade:

- Dynamic Light Scattering (DLS): Measure the hydrodynamic radius. A polydisperse sample with large species (>100 nm) indicates aggregation.

- Size-Exclusion Chromatography (SEC): Compare the elution profile to a monomeric standard. A prominent peak at the void volume confirms large aggregates.

- Static Light Scattering (SLS) or SEC-MALS: Quantify the absolute molecular weight to distinguish between oligomers and larger aggregates.

Table 1: Quantitative Metrics for Aggregation Diagnosis

| Assay | Key Metric | Normal Range (Monomer) | Aggregation Indicator |

|---|---|---|---|

| DLS | Polydispersity Index (PDI) | PDI < 0.2 | PDI > 0.3, large size peak |

| SEC | Elution Volume (Ve) | Consistent with standard | Peak at column void volume (V0) |

| SEC-MALS | Absolute Mw (kDa) | ~Expected sequence mass | Mw >> Expected mass |

Protocol: Diagnostic SEC-MALS

- Equilibrate an analytical-grade SEC column (e.g., Superdex 200 Increase) with filtered/degassed buffer (e.g., PBS, or 20 mM Tris with 150 mM NaCl).

- Calibrate the connected MALS (Multi-Angle Light Scattering) and dRI (differential Refractive Index) detectors according to manufacturer specs.

- Inject 50-100 µL of purified protein sample (0.5-2 mg/mL).

- Analyze data using ASTRA or equivalent software. The weight-average molar mass (Mw) across the peak is calculated directly from light scattering, independent of elution volume.
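The thresholds in Table 1 can be combined into a simple screening function, sketched below. The PDI cutoffs follow the table; the table gives no numeric threshold for "Mw >> Expected mass", so the ratio cutoff of 1.5 used here is an assumption for illustration and should be tuned to the protein and its expected oligomeric state.

```python
def diagnose_aggregation(pdi, measured_mw_kda, expected_mw_kda, mw_ratio_cutoff=1.5):
    """Return (Mw ratio, list of warning flags) from DLS and SEC-MALS readouts.

    mw_ratio_cutoff is an assumed, illustrative threshold, not a published standard.
    """
    flags = []
    if pdi > 0.3:
        flags.append("DLS: polydisperse (PDI > 0.3)")
    elif pdi >= 0.2:
        flags.append("DLS: borderline PDI (0.2-0.3)")
    ratio = measured_mw_kda / expected_mw_kda
    if ratio > mw_ratio_cutoff:
        flags.append(f"SEC-MALS: Mw is {ratio:.1f}x the expected monomer mass")
    return ratio, flags

# Hypothetical readouts for a 25 kDa design.
ok_ratio, ok_flags = diagnose_aggregation(0.12, 25.5, 25.0)
bad_ratio, bad_flags = diagnose_aggregation(0.45, 310.0, 25.0)
print(ok_flags)
print(bad_flags)
```

A ratio near an integer (for example about 2.0) with low PDI may point to a defined oligomer rather than non-specific aggregation, so flagged samples still need inspection of the full SEC-MALS trace.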

Q2: How can I differentiate between misfolding and loss of active site integrity? Both lead to loss of function. A: These are distinct failure modes requiring different assays. Misfolding is a global structural defect, while loss of active site integrity can occur in an otherwise folded protein.

Table 2: Differentiating Misfolding vs. Active Site Defects

| Assay | Probes | Result if Misfolded | Result if Active Site Defect Only |

|---|---|---|---|

| Circular Dichroism (CD) | Secondary/tertiary structure | Spectrum deviates substantially from the folded reference | Spectrum may match folded reference |

| Differential Scanning Fluorimetry (DSF) | Thermal stability (Tm) | Markedly lower Tm than the reference (often < 45°C) | Near-native Tm possible |

| Activity Assay | Substrate turnover | No activity | No activity |

| Ligand Binding (SPR/ITC) | Active site binder | No binding | No or weakened binding |

| Protease Sensitivity | Limited proteolysis | Rapid, non-native cleavage pattern | Native-like resistance pattern |

Protocol: Differential Scanning Fluorimetry (Thermal Shift)

- Prepare a master mix of protein (final conc. 1-5 µM) and a fluorescent dye (e.g., SYPRO Orange, 5X final) in assay buffer.

- Aliquot 20 µL into each well of a 96-well PCR plate. Include buffer-only controls.

- Run a thermal ramp from 25°C to 95°C at a rate of 1°C/min in a real-time PCR machine, monitoring fluorescence.

- Analyze the melt curve derivative to determine the protein's melting temperature (Tm). A ≥5°C decrease from a stable reference suggests destabilization/misfolding.
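The final analysis step can be sketched as a first-derivative calculation: Tm is taken as the temperature at which fluorescence rises most steeply. The melt curves below are synthetic sigmoids rather than instrument data, and the helper names are hypothetical. Real traces usually need smoothing and buffer-control subtraction first.

```python
import math

def melting_temperature(temps, fluorescence):
    """Midpoint of the interval with the steepest fluorescence increase (max dF/dT)."""
    best_slope, tm = float("-inf"), None
    for i in range(len(temps) - 1):
        slope = (fluorescence[i + 1] - fluorescence[i]) / (temps[i + 1] - temps[i])
        if slope > best_slope:
            best_slope, tm = slope, (temps[i] + temps[i + 1]) / 2
    return tm

def is_destabilized(reference_tm, variant_tm, threshold=5.0):
    """Flag a variant whose Tm is at least `threshold` degrees C below the reference."""
    return (reference_tm - variant_tm) >= threshold

def synthetic_melt(temps, true_tm, width=2.0):
    """Idealized two-state unfolding curve used only to exercise the functions."""
    return [1.0 / (1.0 + math.exp(-(t - true_tm) / width)) for t in temps]

temps = list(range(25, 96))  # 25 to 95 degrees C in 1 degree steps
reference_tm = melting_temperature(temps, synthetic_melt(temps, 60.5))
variant_tm = melting_temperature(temps, synthetic_melt(temps, 52.5))
print(reference_tm, variant_tm, is_destabilized(reference_tm, variant_tm))
```

With 1°C steps the estimate is only resolved to the nearest half degree; fitting a Boltzmann sigmoid to the transition region gives a finer value.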

Q3: What experimental strategies can "rescue" a misfolded or aggregating variant identified in exploration? A: This is the critical pivot from exploration to reliability engineering. Implement a rescue workflow.

Diagram Title: Rescue Workflow for Protein Design Failure Modes

Q4: What are the most critical reagents for troubleshooting these failure modes? A: The Scientist's Toolkit - Research Reagent Solutions

| Reagent / Material | Primary Function in Diagnosis/Rescue |

|---|---|

| SEC-MALS System | Gold standard for quantifying aggregation state and absolute molecular weight in solution. |

| SYPRO Orange Dye | Environment-sensitive fluorescent dye for DSF, reporting on protein thermal unfolding. |

| Analytical SEC Columns (e.g., Superdex, Enrich) | High-resolution separation of monomers from oligomers and aggregates. |

| Chaotropic Agents (Urea, GdnHCl) | For generating unfolding curves (CD, fluorescence) to assess global stability. |

| Chemical Chaperones (e.g., Betaine, Proline, TMAO) | Additives to test for stabilization and suppression of aggregation in buffers. |

| Protease Cocktails (Trypsin, Thermolysin, Proteinase K) | For limited proteolysis assays to probe folding integrity and flexibility. |

| Site-Specific Activity Assay Kits | Quantify loss of catalytic function (e.g., hydrolysis, phosphorylation). |

| Surface Plasmon Resonance (SPR) Chip | Immobilize ligands to measure binding kinetics of designed variants. |

Diagram Title: Protein Design Cycle: From Exploration to Reliable Design

Technical Support Center: Troubleshooting Guides & FAQs

FAQ: Understanding & Applying Core Parameters

Q1: What is the practical effect of the 'temperature' parameter in my protein sequence generation model? Why does a high temperature sometimes produce non-functional or non-physical sequences? A1: Temperature (T) controls the stochasticity of the probability distribution during sequence generation (e.g., in autoregressive or diffusion models). A lower T (e.g., 0.1-0.5) makes the model more deterministic, favoring high-probability (likely reliable) amino acids. A higher T (e.g., 1.0-1.5) flattens the distribution, increasing exploration of lower-probability residues. Non-functional sequences at high T occur because the model excessively explores low-likelihood regions of sequence space, which may violate physical constraints (e.g., improper hydrophobicity, charge clashes). For initial exploration in a new design space, a moderate T (~0.8) is recommended, followed by refinement at lower T.
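To make the effect of temperature concrete, the sketch below applies temperature scaling to a single position's logits before sampling. The four-letter alphabet and the logit values are invented for illustration; a real model would emit logits over all 20 amino acids at each position.

```python
import math
import random

def softmax_with_temperature(logits, temperature):
    """Divide logits by T, then apply a numerically stable softmax."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [l / temperature for l in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def entropy(probs):
    """Shannon entropy in nats; higher means a flatter, more exploratory distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def sample_residue(logits, alphabet, temperature, rng):
    """Draw one residue from the temperature-scaled distribution."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(alphabet, weights=probs, k=1)[0]

alphabet = ["L", "A", "K", "D"]   # hypothetical reduced alphabet
logits = [2.0, 1.0, 0.5, -1.0]    # hypothetical model output for one position

for t in (0.2, 0.8, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(f"T={t}: p(top)={probs[0]:.3f}  entropy={entropy(probs):.3f}")

rng = random.Random(0)
draws = [sample_residue(logits, alphabet, 1.5, rng) for _ in range(10)]
```

At low T nearly all probability mass sits on the top-ranked residue, while at high T lower-ranked residues are sampled often, which is the mechanism behind the exploration-reliability behavior described above.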