From Silicon to Lab Bench: A Modern Guide to Validating Computational Protein Designs

Abstract

This article provides a comprehensive roadmap for researchers and drug development professionals navigating the critical process of experimentally validating computational protein designs. It explores the foundational principles of computational protein design, examines cutting-edge methodologies powered by artificial intelligence and deep learning, and addresses common troubleshooting and optimization challenges. A dedicated section on validation strategies and comparative analysis offers a framework for assessing design success, synthesizing key takeaways to highlight future implications for biomedical and clinical research.

The Computational Protein Design Lifecycle: From Inception to Validation

The inverse folding problem is a fundamental challenge in computational protein design (CPD): identifying amino acid sequences that fold into a predetermined three-dimensional structure. It is the inverse of structure prediction, which determines a protein's 3D conformation from its sequence. Its significance lies in the potential to engineer novel proteins with customized functions for therapeutic, industrial, and research applications. However, the problem is inherently underdetermined: many sequences can in principle fold into the same backbone structure, yet only a subset will fold stably and retain the desired biological activity. This article examines contemporary computational approaches to inverse folding, comparing their methodologies, performance metrics, and experimental validation outcomes to define the current state of the field and to help researchers select appropriate tools for specific protein engineering challenges.

Computational Architectures for Inverse Folding

Multimodal and Retrieval-Augmented Frameworks

Recent advances have moved beyond single-modality models toward architectures that integrate multiple data types. ABACUS-T exemplifies this trend, unifying detailed atomic sidechains, ligand interactions, a pre-trained protein language model, multiple backbone conformational states, and evolutionary information from multiple sequence alignment (MSA) into a single framework. It employs a sequence-space denoising diffusion probabilistic model (DDPM) that progressively refines amino acid sequences from a fully "noised" starting point, with each denoising step conditioned on the input protein backbone structure [1].
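The idea of progressively refining a sequence from a fully "noised" start can be illustrated with a deliberately simplified sketch. It uses an absorbing-state (masking) form of discrete diffusion, in which every position starts as a mask token and a denoiser fills positions in over several steps; this is a swapped-in simplification, not ABACUS-T's actual noise process or network. `toy_denoiser` is a hypothetical stand-in for a trained model, and the backbone is reduced to one buried/exposed flag per residue.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
MASK = "-"

def toy_denoiser(seq, backbone):
    """Hypothetical stand-in for a trained network.

    Returns per-position amino-acid probabilities conditioned on the backbone only:
    buried positions favour hydrophobics, exposed positions favour polar residues.
    A real model would also condition on the partially denoised sequence `seq`.
    """
    probs = []
    for buried in backbone:
        favoured = "LIVF" if buried else "EKDQ"
        weights = {aa: (5.0 if aa in favoured else 1.0) for aa in AMINO_ACIDS}
        total = sum(weights.values())
        probs.append({aa: w / total for aa, w in weights.items()})
    return probs

def denoise(backbone, n_steps=4, seed=0):
    """Start from an all-mask sequence and reveal positions over n_steps steps."""
    rng = random.Random(seed)
    seq = [MASK] * len(backbone)  # fully "noised" starting point
    for step in range(n_steps):
        masked = [i for i, aa in enumerate(seq) if aa == MASK]
        if not masked:
            break
        probs = toy_denoiser(seq, backbone)
        # Reveal a share of the remaining masked positions; the last step reveals all of them.
        n_reveal = max(1, len(masked) // (n_steps - step))
        for i in rng.sample(masked, n_reveal):
            residues, weights = zip(*probs[i].items())
            seq[i] = rng.choices(residues, weights=weights)[0]
    return "".join(seq)
```

For example, `denoise([True, False, True, False, True], n_steps=3)` returns a five-residue sequence with no masks left; buried positions tend to receive L, I, V, or F.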

The newly introduced PRISM framework incorporates a retrieval-augmented generation (RAG) mechanism, explicitly reusing fine-grained structure-sequence patterns conserved across natural proteins. This approach treats each residue with its local 3D neighborhood as a "potential motif," retrieving similar motifs from a database of known proteins to inform sequence design. Formulated as a latent-variable probabilistic model, PRISM factors the design process into representation, retrieval, attribution, and emission components, creating a theoretically grounded and computationally efficient architecture [2].
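To make the retrieval step concrete, here is a hedged sketch of nearest-neighbour motif retrieval. It assumes each residue's local 3D environment has already been encoded as a fixed-length feature vector (a hypothetical encoding; PRISM's learned representation is not reproduced here) and lets the k most similar database motifs vote for amino acids in proportion to their cosine similarity. In PRISM itself the retrieved evidence feeds learned attribution and emission components rather than a simple vote.

```python
import math
from collections import defaultdict

def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def retrieve_residue_distribution(query_env, motif_db, k=3):
    """Similarity-weighted amino-acid distribution from the k most similar motifs.

    query_env: feature vector for one residue's local neighbourhood (hypothetical encoding).
    motif_db:  list of (feature_vector, amino_acid) pairs taken from known proteins.
    """
    scored = sorted(
        ((cosine_similarity(query_env, feats), aa) for feats, aa in motif_db),
        reverse=True,
    )[:k]
    weights = defaultdict(float)
    for sim, aa in scored:
        weights[aa] += max(sim, 0.0)  # anti-correlated neighbours contribute nothing
    total = sum(weights.values())
    if total == 0:
        return {}
    return {aa: w / total for aa, w in weights.items()}
```

With a toy database in which hydrophobic-like environments map to L and I and polar-like ones to E and K, a hydrophobic-like query returns a distribution dominated by L.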

Knowledge Distillation and Regularization Approaches

AlphaFold distillation represents an innovative approach that leverages structure prediction networks to enhance inverse folding. This method uses knowledge distillation to create a faster, differentiable model (AFDistill) that predicts AlphaFold's confidence metrics (pTM/pLDDT), bypassing the computational expense of full structure prediction. The distilled model serves as a structure consistency regularizer during inverse folding training, integrating AlphaFold's domain expertise directly into the design process. This technique has demonstrated 1-3% improvements in sequence recovery and up to 45% enhancement in protein diversity while maintaining structural integrity [3].
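How the regularizer enters training can be sketched as a two-term loss: the usual per-residue cross-entropy against the native sequence plus a penalty that grows as the distilled model's predicted confidence falls. This assumes the penalty takes the simple form 1 minus the confidence (rescaled to [0, 1]); `weight` is a hypothetical hyperparameter. In real training the distilled model is differentiable and receives the network's soft sequence prediction; here the confidence is just passed in as a number to show how the terms combine.

```python
import math

def sequence_cross_entropy(per_residue_probs, native_indices):
    """Mean negative log-likelihood of the native residue at each position."""
    nll = [-math.log(max(probs[idx], 1e-12)) for probs, idx in zip(per_residue_probs, native_indices)]
    return sum(nll) / len(nll)

def regularized_loss(per_residue_probs, native_indices, predicted_confidence, weight=0.5):
    """Cross-entropy plus a structure-consistency penalty.

    predicted_confidence: distilled estimate of AlphaFold's pTM or mean pLDDT for the
    designed sequence, rescaled to [0, 1]. 'weight' is a hypothetical hyperparameter.
    """
    if not 0.0 <= predicted_confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    structure_penalty = 1.0 - predicted_confidence
    return sequence_cross_entropy(per_residue_probs, native_indices) + weight * structure_penalty
```

With perfectly confident native predictions the cross-entropy is zero, so a predicted confidence of 0.8 and `weight=0.5` gives a loss of 0.1; lowering the confidence raises the loss.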

Structure-Informed Language Models

General protein language models augmented with structural information offer another compelling approach. These models train on millions of non-redundant sequence-structure pairs using the inverse folding objective, learning to predict amino acid identities based on both preceding sequence context and full backbone coordinates. This architecture enables zero-shot mutational effect prediction without task-specific training data, successfully guiding evolution across diverse protein families and complexes. When applied to antibody-antigen complexes, these models demonstrate exceptional performance in identifying beneficial mutations that enhance binding affinity, despite being trained solely on single-chain proteins [4].
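Zero-shot mutational effect prediction boils down to comparing model likelihoods of variant and wild type. A minimal sketch, assuming per-position log-probabilities have already been obtained from a structure-conditioned model given the wild-type sequence and backbone (how they are obtained is outside the sketch). Summing per-site log-ratios treats substitutions as independent, which is an approximation; an autoregressive model can instead score the full mutant sequence.

```python
def score_variant(log_probs, wild_type, mutations):
    """Sum of log p(mut) - log p(wt) over the listed substitutions.

    log_probs: per-position dicts mapping amino acid -> log-probability (assumed inputs).
    mutations: list of (position, wt_aa, mut_aa) tuples with 0-based positions.
    Positive scores mean the model prefers the variant over the wild type.
    """
    total = 0.0
    for pos, wt_aa, mut_aa in mutations:
        if wild_type[pos] != wt_aa:
            raise ValueError(f"position {pos} is {wild_type[pos]}, not {wt_aa}")
        total += log_probs[pos][mut_aa] - log_probs[pos][wt_aa]
    return total
```

Ranking candidate substitutions or combinations by this score yields a short list for experimental testing.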

Performance Benchmarking and Comparative Analysis

Sequence Recovery and Structural Accuracy

Table 1: Performance Metrics Across Inverse Folding Methods

| Method | Architecture | Sequence Recovery (%) | Diversity Score | TM-score | Perplexity |
|---|---|---|---|---|---|
| GVP (Baseline) | Geometric Vector Perceptron GNN | 38.6 | 15.1 | 0.79 | - |
| GVP + SC Regularization | GNN with Structure Consistency | 40.8-42.8 | 22.6 | 0.92-0.95 | - |
| PRISM | Retrieval-Augmented Generation | State-of-the-art | - | Improved | State-of-the-art |
| ABACUS-T | Multimodal Diffusion | - | - | - | - |
| AlphaFold Distill | Knowledge Distillation | +1-3% vs. Baseline | +45% vs. Baseline | Maintained | Lower |

Note: Performance metrics vary across different benchmarking datasets including CATH-4.2, TS50, TS500, and CAMEO 2022. Dashes indicate metrics not explicitly reported in the reviewed literature [3] [2].

Retrieval-augmented approaches like PRISM establish new state-of-the-art performance across multiple benchmarks (CATH-4.2, TS50, TS500, CAMEO 2022), achieving superior perplexity and amino acid recovery while improving foldability metrics (RMSD, TM-score, pLDDT). The explicit reuse of conserved local motifs provides an inductive bias that enhances both sequence and structural accuracy. Regularization methods demonstrate more modest gains in sequence recovery (1-3% improvements) but substantially improve diversity (up to 45%), addressing the critical need for varied sequences that maintain structural consistency [3] [2].

Performance varies significantly between core and surface residues, with core residues exhibiting higher recovery but lower diversity due to structural constraints. Surface residues show the opposite pattern, offering greater design flexibility. This differential performance highlights how architectural choices affect various regions of the target protein [3].
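Recovery and diversity can be computed separately for core and surface positions with a boolean mask. The sketch below assumes the mask is supplied by the caller (for example from solvent accessibility) and measures diversity as mean pairwise normalised Hamming distance among designs; the diversity score reported in [3] may be defined differently.

```python
from itertools import combinations

def _selected(length, mask):
    """Indices to score: all positions, or only those where mask is True."""
    idx = [i for i in range(length) if mask is None or mask[i]]
    if not idx:
        raise ValueError("mask selects no positions")
    return idx

def sequence_recovery(designed, native, mask=None):
    """Fraction of selected positions where the design matches the native residue."""
    idx = _selected(len(native), mask)
    return sum(designed[i] == native[i] for i in idx) / len(idx)

def sequence_diversity(designs, mask=None):
    """Mean pairwise fraction of differing residues (normalised Hamming distance)."""
    if len(designs) < 2:
        raise ValueError("need at least two designs")
    idx = _selected(len(designs[0]), mask)
    dists = [sum(a[i] != b[i] for i in idx) / len(idx) for a, b in combinations(designs, 2)]
    return sum(dists) / len(dists)
```

With native `"LLEK"`, core mask `[True, True, False, False]`, and designs `["LLEK", "LLDR"]`, the second design recovers both core residues but neither surface residue, so core diversity is 0 while surface diversity is 1, mirroring the pattern described above.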

Experimental Validation and Functional Success

Table 2: Experimental Validation of Designed Proteins

| Method | Protein System | Thermostability (ΔTm) | Functional Enhancement | Experimental Success Rate |
|---|---|---|---|---|
| ABACUS-T | Allose binding protein | ≥10°C | 17-fold higher affinity | High (multiple successful designs) |
| ABACUS-T | Endo-1,4-β-xylanase | ≥10°C | Maintained or surpassed wild-type activity | High |
| ABACUS-T | TEM β-lactamase | ≥10°C | Maintained or surpassed wild-type activity | High |
| ABACUS-T | OXA β-lactamase | ≥10°C | Altered substrate selectivity | High |
| Structure-Informed Language Model | Ly-1404 Antibody | - | 26-fold improved neutralization vs BQ.1.1 | Leading success rate among ML methods |
| Structure-Informed Language Model | SA58 Antibody | - | 11-fold improved neutralization | All tested combinations showed improved activity |
| Structure-Informed Language Model | Various (10 proteins) | - | Identified top-percentile substitutions | 9/10 proteins vs 2/10 for sequence-only |

The ultimate validation of inverse folding methods comes from experimental characterization of designed proteins. ABACUS-T demonstrates remarkable experimental success, with designed proteins achieving substantial thermostability improvements (ΔTm ≥ 10°C) while maintaining or enhancing function across multiple test cases. These enhancements were achieved with only a few tested sequences, each containing dozens of simultaneous mutations—a feat difficult to accomplish with traditional directed evolution [1].

Structure-informed language models achieve exceptional experimental success rates when applied to antibody engineering, surpassing previously reported machine learning-guided directed evolution methods. These models identified combinations of synergistic mutations that significantly improved neutralization potency and binding affinity against antibody-escaped viral variants, with all experimentally tested designs showing improved activity [4].

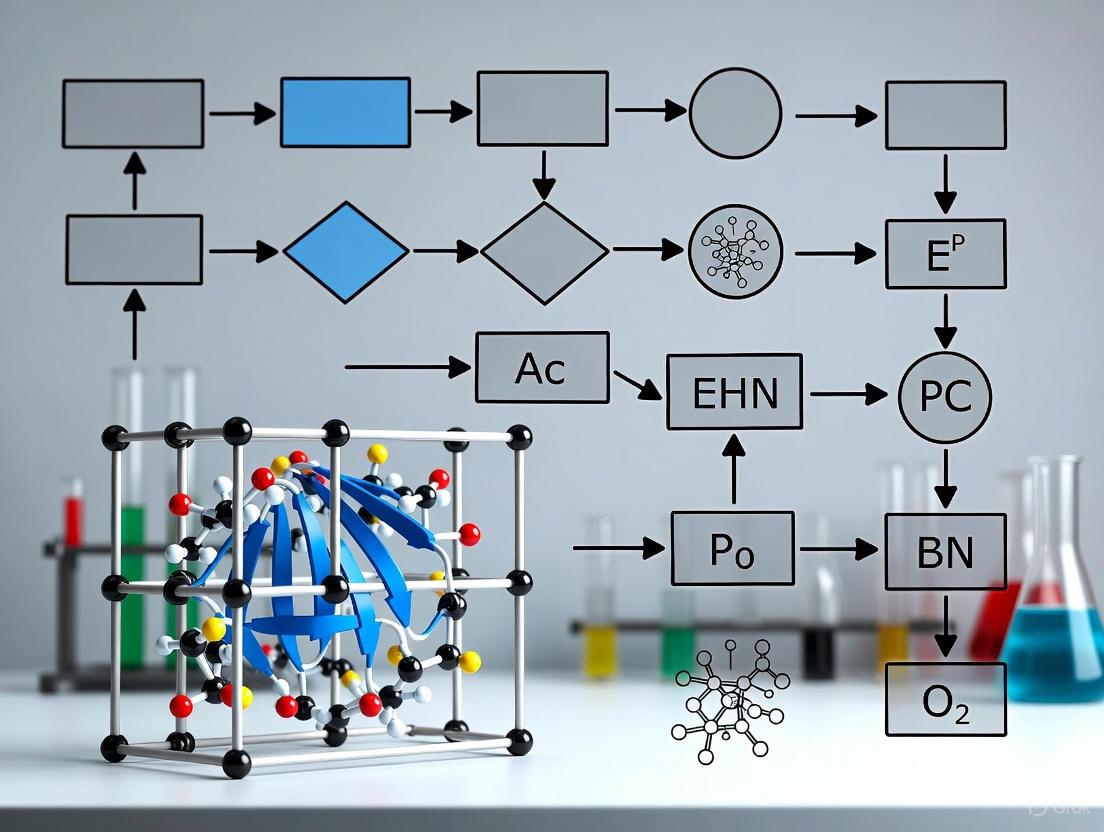

Diagram 1: Inverse Folding and Validation Workflow. The core process of computational protein design begins with a target structure, progresses through sequence design, and requires experimental validation to confirm function.

Methodologies and Experimental Protocols

Benchmarking Frameworks and Community Standards

The Protein Engineering Tournament has emerged as a standardized framework for evaluating computational protein design methods. This fully remote competition consists of predictive and generative rounds, challenging participants to predict biophysical properties from sequences and subsequently design novel sequences that maximize desired properties. The tournament provides donated datasets covering diverse enzyme targets (aminotransferase, α-amylase, imine reductase, alkaline phosphatase, β-glucosidase, xylanase) with measured properties including expression, specific activity, and thermostability [5].

The tournament employs two evaluation tracks: zero-shot prediction without training data, and supervised prediction with pre-split training and test sets. This structure tests both the intrinsic generalizability of algorithms and their performance when trained on specific protein families. Such community benchmarks create transparent evaluation standards and accelerate methodological progress through direct comparison [5].
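Predictions in benchmarks of this kind are commonly scored by rank correlation with the measured property. The sketch below computes Spearman's ρ as the Pearson correlation of average ranks (ties share the mean rank); whether the Tournament uses exactly this metric for every property and track is an assumption here.

```python
import math

def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = shared_rank
        start = end + 1
    return ranks

def pearson(x, y):
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation undefined for constant input")
    return cov / math.sqrt(var_x * var_y)

def spearman(predicted, measured):
    """Spearman's rho: Pearson correlation of the rank-transformed values."""
    return pearson(average_ranks(predicted), average_ranks(measured))
```

Because only ranks matter, a zero-shot model whose raw scores are on an arbitrary scale (for example log-likelihoods) can be compared directly with a supervised regressor.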

Experimental Validation Protocols

Experimental validation of designed proteins follows standardized biophysical and functional assays:

Thermostability Assessment: Melting temperature (Tm) measurements via circular dichroism or differential scanning fluorimetry to quantify ΔTm relative to wild-type.

Functional Characterization:

- Enzyme-specific activity assays with appropriate substrates

- Binding affinity measurements (KD) for binding proteins and antibodies via surface plasmon resonance or similar techniques

- Cellular neutralization assays for therapeutic antibodies

Structural Integrity Verification:

- X-ray crystallography or cryo-EM to confirm correct folding

- Comparison of experimental structures with design targets via RMSD and TM-score calculations
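The RMSD comparison in the last step requires superposing the experimental structure onto the design model first. A minimal sketch using the Kabsch algorithm with NumPy, assuming both inputs are matched (N, 3) coordinate arrays, for example Cα atoms in the same residue order. TM-score additionally needs its own length-dependent normalisation and superposition search, so it is not computed here.

```python
import numpy as np

def kabsch_rmsd(coords_a, coords_b):
    """RMSD (input units, e.g. Å) after optimally superposing coords_a onto coords_b."""
    a = np.asarray(coords_a, dtype=float)
    b = np.asarray(coords_b, dtype=float)
    if a.shape != b.shape or a.ndim != 2 or a.shape[1] != 3:
        raise ValueError("inputs must be matching (N, 3) coordinate arrays")
    a_c = a - a.mean(axis=0)  # remove translation
    b_c = b - b.mean(axis=0)
    h = a_c.T @ b_c  # 3x3 covariance matrix
    u, _, vt = np.linalg.svd(h)
    d = np.sign(np.linalg.det(vt.T @ u.T))  # d = -1 signals a reflection; correct it
    rotation = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    a_rot = a_c @ rotation.T
    return float(np.sqrt(((a_rot - b_c) ** 2).sum(axis=1).mean()))
```

A structure that differs from the design only by a rigid rotation and translation gives an RMSD of essentially zero, whereas any genuine change in shape leaves a positive residual.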

These protocols support consistent evaluation across design methods and protein systems. The high experimental success rates reported for contemporary methods (many of the underlying studies tested fewer than 50 designs) demonstrate a marked advance in computational precision [1] [4].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for Inverse Folding Validation

| Reagent / Resource | Function | Example Application |
|---|---|---|
| CATH Dataset | Curated protein structure classification | Training and benchmarking inverse folding algorithms |
| AlphaFold Protein Structure Database | Repository of predicted structures | Source of backbone structures for design |
| MGnify Protein Database | Catalog of non-redundant protein sequences | Source of evolutionary information for MSA |
| ProtaBank | Repository of protein engineering data | Limited-scope datasets for predictive modeling |
| ProteinGym | Curated deep mutational scanning benchmarks | Assessing mutational effect prediction |
| International Flavors and Fragrances Datasets | Multi-objective enzyme performance data | Tournament benchmarking for industrial enzymes |
| ESM Metagenomic Atlas | Vast collection of predicted structures | Expanding structural diversity for training |

The computational tools and experimental resources available for inverse folding research have expanded significantly. Benchmark datasets like CATH provide standardized testing grounds, while massive sequence and structure databases (MGnify, ESM Metagenomic Atlas, AlphaFold Database) offer training data and evolutionary context. Specialized resources like ProteinGym provide curated mutational scanning data for specific functional assessment [5] [6].

The emergence of donated industrial datasets (e.g., from International Flavors and Fragrances and Codexis) bridges academic research and industrial application, providing performance data on enzymes under realistic conditions. These resources collectively enable comprehensive training, benchmarking, and validation of inverse folding methods [5].

Diagram 2: Multimodal Data Integration. Modern inverse folding approaches combine structural, evolutionary, and complex information to generate sequences with higher functional success rates.

The inverse folding problem remains the core challenge of computational protein design, but contemporary approaches have dramatically advanced its solution. Multimodal frameworks like ABACUS-T, retrieval-augmented methods like PRISM, and distillation techniques represent distinct architectural philosophies with complementary strengths. Experimental validation confirms that these methods can generate functional proteins with enhanced properties, often with surprisingly few design-test cycles compared to traditional directed evolution.

The emerging paradigm integrates multiple data modalities—atomic structures, evolutionary information, conformational dynamics, and ligand interactions—to maintain function while enhancing stability and other desirable properties. Community benchmarking initiatives like the Protein Engineering Tournament establish transparent evaluation standards and accelerate progress. As these methods mature, inverse folding is poised to transform protein engineering across therapeutic development, industrial biocatalysis, and basic biological research.

For researchers selecting inverse folding approaches, considerations should include target protein complexity, available structural and evolutionary information, desired properties (stability, function, specificity), and experimental throughput. The methods profiled here offer diverse solutions to the fundamental challenge of designing sequences for structure, collectively expanding the accessible protein universe and enabling new applications across biotechnology.

In the field of computational protein design, energy functions and physical models serve as the fundamental scoring engine that powers the discrimination between viable and non-viable protein structures. Accurate scoring is the critical bottleneck in computational pipelines; without reliable functions to differentiate between native-like and non-native binding complexes, the accuracy of docking and design tools cannot be guaranteed [7]. These scoring functions leverage our understanding of molecular driving forces and evolutionary constraints to evaluate the structural and functional plausibility of computationally generated protein models, enabling researchers to sift through millions of potential conformations to identify those most likely to exist in nature [7] [8].

The revolution in deep learning has dramatically transformed this field, introducing new architectures that incorporate physical and biological knowledge about protein structure into their design [8]. Modern approaches now combine traditional physics-based models with evolutionary insights derived from multiple sequence alignments, creating hybrid systems that achieve unprecedented accuracy in protein structure prediction and design [8] [9]. As we examine the current landscape of scoring methodologies, it becomes evident that the integration of physical models with data-driven approaches represents the most promising path forward for computational protein design, enabling applications from drug discovery to the development of novel enzymes and sustainable biomaterials [10] [11].

Classical and Deep Learning-Based Scoring Approaches

Scoring functions for protein design and docking can be broadly categorized into classical approaches and modern deep learning-based methods. Classical approaches have traditionally dominated the field and can be further classified into distinct subtypes based on their theoretical foundations and implementation strategies [7].

Table 1: Categories of Classical Scoring Functions

| Type | Theoretical Basis | Representative Methods | Strengths | Limitations |
|---|---|---|---|---|
| Physics-Based | Classical force fields summing van der Waals, electrostatic, and solvation terms [7] | Molecular dynamics simulations [12] | Strong theoretical foundation based on physical principles | High computational cost; challenging for large systems [7] |
| Empirical-Based | Weighted sum of energy terms calibrated against known binding affinities [7] | FireDock, RosettaDock, ZRANK2 [7] | Faster computation than physics-based methods; simpler implementation [7] | Dependent on quality and representativeness of training data |
| Knowledge-Based | Pairwise distances converted to potentials via Boltzmann inversion [7] | AP-PISA, CP-PIE, SIPPER [7] | Good balance between accuracy and speed [7] | Limited by available structural data in databases |
| Hybrid Methods | Combination of energetic and empirical criteria, sometimes with experimental data [7] | PyDock, HADDOCK [7] | Leverages multiple information sources; can incorporate experimental constraints | Parameter weighting can be challenging; complex implementation |
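The Boltzmann inversion behind knowledge-based potentials turns an observed distance distribution into an effective energy, E(r) = -kT ln[P_obs(r) / P_ref(r)]. A minimal sketch over binned counts, assuming a simple pseudocount to avoid log(0) and kT ≈ 0.593 kcal/mol (about 298 K). Choosing the reference state, which published potentials treat with considerable care, is left to the caller.

```python
import math

KT_KCAL_298K = 0.593  # approximate kT (RT per mole) at ~298 K, in kcal/mol

def boltzmann_inversion(observed_counts, reference_counts, kt=KT_KCAL_298K, pseudocount=1.0):
    """One effective energy per distance bin: -kT * ln(P_obs / P_ref).

    Negative values mark bins seen more often than the reference state predicts.
    """
    if len(observed_counts) != len(reference_counts):
        raise ValueError("count arrays must have the same number of bins")
    n_bins = len(observed_counts)
    obs_total = sum(observed_counts) + pseudocount * n_bins
    ref_total = sum(reference_counts) + pseudocount * n_bins
    energies = []
    for obs, ref in zip(observed_counts, reference_counts):
        p_obs = (obs + pseudocount) / obs_total
        p_ref = (ref + pseudocount) / ref_total
        energies.append(-kt * math.log(p_obs / p_ref))
    return energies
```

A residue-pair distance bin that is enriched in native interfaces relative to the reference receives a favourable (negative) energy, and a complex is then scored by summing these energies over its observed pair distances.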

In contrast to these classical approaches, deep learning models offer alternatives to explicit empirical or mathematical functions for scoring protein complexes [7]. Methods such as AlphaFold2 and RoseTTAFold diffusion (RFdiffusion) have demonstrated remarkable capabilities in protein structure prediction and design, respectively, building on neural network architectures that jointly embed multiple sequence alignments and pairwise features [8] [9]. RFdiffusion leverages the understanding of protein structure implicit in a powerful structure prediction network (RoseTTAFold), fine-tuning it for specific design tasks such as unconditional protein monomer generation, protein binder design, and symmetric oligomer design [9].

Table 2: Deep Learning-Based Protein Design Methods

| Method | Architecture | Key Applications | Validation Approach |
|---|---|---|---|
| RFdiffusion | Diffusion model fine-tuned from RoseTTAFold structure prediction network [9] | Unconditional monomer design, binder design, symmetric architectures [9] | Experimental characterization of hundreds of designed symmetric assemblies and binders [9] |
| AlphaFold2 | Evoformer blocks with structure module for explicit 3D coordinate prediction [8] | Protein structure prediction with atomic accuracy [8] | CASP14 assessment; comparison to experimental structures [8] |
| ESMBind | Combined ESM-2 and ESM-IF foundation models [11] | Prediction of metal-binding proteins and protein-ligand interactions [11] | Comparison to X-ray crystallography data from synchrotron facilities [11] |
| ProteinMPNN | Neural network for sequence design given protein structures [9] | Protein sequence design for backbone structures generated by RFdiffusion [9] | In silico validation using AlphaFold2 structure predictions [9] |

Comparative Performance Analysis of Scoring Functions

Recent comprehensive evaluations have systematically compared the performance of classical and deep learning-based scoring functions across multiple datasets, revealing distinct strengths and limitations for each approach. These assessments typically measure the ability of scoring functions to identify near-native protein complex structures from decoy conformations, with success rates quantified as the percentage of targets for which a scoring function can correctly identify native-like structures [7].
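The success-rate metric described here can be sketched as a top-N count over targets. This assumes each decoy already carries a near-native label (for example from interface-RMSD-based criteria, an assumption here) and that lower scores are better, as with energy-like functions; flip the sort for scores where higher is better.

```python
def top_n_success_rate(targets, n=1):
    """Percentage of targets with at least one near-native decoy among the n best-scored.

    targets: mapping target_id -> list of (score, is_near_native) tuples.
    Assumes lower scores are better (energy-like).
    """
    if not targets:
        raise ValueError("no targets")
    hits = 0
    for decoys in targets.values():
        best = sorted(decoys, key=lambda d: d[0])[:n]
        if any(near_native for _, near_native in best):
            hits += 1
    return 100.0 * hits / len(targets)
```

Reporting the rate at several cut-offs (top 1, top 10, and so on) distinguishes functions that rank native-like poses first from those that merely keep them in the shortlist.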

Performance Across Diverse Datasets

A comprehensive survey evaluated eight classical methods and four deep learning-based methods across seven widely used public datasets, enabling direct comparison of their capabilities [7]. The results demonstrated that while classical methods offer computational efficiency and interpretability, deep learning approaches generally achieve higher accuracy, particularly for complex targets with limited homology to known structures. The integration of physical constraints within deep learning architectures, as exemplified by AlphaFold2's incorporation of evolutionary, physical, and geometric constraints of protein structures, appears to be a key factor in this performance advantage [8].

Runtime Considerations for Large-Scale Applications

The computational efficiency of scoring functions directly impacts their utility in large-scale docking and design applications. Classical knowledge-based methods such as AP-PISA, CP-PIE, and SIPPER typically offer the best balance between speed and accuracy among traditional approaches, while physics-based methods incur significantly higher computational costs due to their explicit modeling of molecular interactions [7]. Deep learning methods, though computationally intensive during training, can achieve rapid inference times after training is complete, making them suitable for high-throughput screening applications once deployed [11]. For instance, the ESMBind workflow can run hundreds of thousands of simulations daily, dramatically accelerating the research process compared to experimental approaches [11].

Experimental Protocols for Validating Scoring Functions

Robust experimental validation is essential for establishing the reliability of computational scoring functions. The following protocols represent standardized methodologies for assessing whether computationally designed proteins fold and function as intended.

In Silico Validation Pipeline

Before experimental testing, computational designs typically undergo rigorous in silico validation. For RFdiffusion, this involves using AlphaFold2 to predict structures from single sequences, with success defined by three criteria: (1) high confidence predictions (mean pAE < 5), (2) global backbone accuracy within 2 Å RMSD of the designed structure, and (3) high local accuracy (within 1 Å backbone RMSD) on any scaffolded functional site [9]. This stringent in silico validation has been shown to correlate with experimental success and provides an efficient filter before committing resources to experimental characterization [9].
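The three criteria above can be expressed as a simple filter over precomputed AlphaFold2 metrics. In this sketch the metric values and design names are hypothetical, the metrics are assumed to have been computed elsewhere, and strict inequalities at the thresholds are an assumption.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class DesignEval:
    """AlphaFold2 single-sequence prediction metrics for one design (computed elsewhere)."""
    name: str
    mean_pae: float                     # mean predicted aligned error
    backbone_rmsd: float                # Å, prediction vs designed backbone
    motif_rmsd: Optional[float] = None  # Å, over the scaffolded functional site, if any

def passes_in_silico(ev, max_pae=5.0, max_rmsd=2.0, max_motif_rmsd=1.0):
    """Apply the confidence, global-accuracy, and local-accuracy criteria."""
    if ev.mean_pae >= max_pae:
        return False
    if ev.backbone_rmsd >= max_rmsd:
        return False
    if ev.motif_rmsd is not None and ev.motif_rmsd >= max_motif_rmsd:
        return False
    return True

designs = [
    DesignEval("binder_01", mean_pae=3.2, backbone_rmsd=1.1),
    DesignEval("scaffold_07", mean_pae=4.1, backbone_rmsd=1.6, motif_rmsd=1.4),
    DesignEval("binder_02", mean_pae=6.8, backbone_rmsd=0.9),
]
shortlist = [d.name for d in designs if passes_in_silico(d)]
```

Here only `binder_01` survives: `scaffold_07` misses the 1 Å motif criterion and `binder_02` fails the confidence cut.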

Experimental Characterization of Designed Proteins

Comprehensive experimental validation involves multiple techniques to assess folding, stability, and function:

- Circular Dichroism (CD) Spectroscopy: Used to verify secondary structure content and thermal stability. For example, RFdiffusion-designed proteins showed CD spectra consistent with their designed mixed alpha–beta topologies and exhibited exceptional thermostability [9].

- X-ray Crystallography and Cryo-EM: Provide high-resolution structural validation. The cryo-EM structure of an RFdiffusion-designed binder in complex with influenza hemagglutinin confirmed remarkable accuracy, being nearly identical to the design model [9].

- Functional Assays: Enzyme activity measurements assess functional success. In one study, researchers expressed and purified over 500 natural and generated sequences, defining experimental success as both expression in E. coli and activity above background in in vitro assays [13].

- Mechanical Stability Testing: For proteins designed for mechanical strength, single-molecule force spectroscopy measures unfolding forces. One study demonstrated computationally designed proteins with unfolding forces exceeding 1,000 pN, approximately 400% stronger than natural titin immunoglobulin domains [12].

Figure 1: Experimental validation workflow for computationally designed proteins, integrating both in silico and experimental verification steps.

Successful implementation of protein design workflows requires access to specialized computational tools and experimental resources. The following table outlines key components of the protein design toolkit.

Table 3: Essential Research Resources for Protein Design and Validation

| Resource Category | Specific Tools/Methods | Primary Function | Application in Workflow |
|---|---|---|---|
| Structure Prediction | AlphaFold2, RoseTTAFold, ESMFold [8] [9] | Predict 3D structures from amino acid sequences | Initial structure assessment, validation of designs |
| Generative Design | RFdiffusion, ProteinMPNN, ESM-MSA [9] [13] | Create novel protein sequences and structures | De novo protein design, sequence optimization |
| Specialized Scoring | FireDock, PyDock, ZRANK2, HADDOCK [7] | Evaluate protein-protein interaction quality | Docking refinement, complex structure selection |
| Molecular Visualization | PyMOL, ChimeraX, UCSF Chimera | 3D structure visualization and analysis | Result interpretation, figure generation |
| Experimental Validation | X-ray crystallography, Cryo-EM, CD spectroscopy [9] | Experimental structure determination | Final validation of designed proteins |
| Functional Assays | Enzyme activity assays, binding studies [13] | Measure biochemical function | Verification of designed protein activity |

Integrated Workflows and Future Directions

The most successful protein design approaches integrate multiple scoring strategies into cohesive workflows that leverage the complementary strengths of different methods. For example, the RFdiffusion method combines diffusion-based backbone generation with ProteinMPNN sequence design, followed by AlphaFold2-based validation [9]. This integrated pipeline enables the creation of novel proteins with specified structural and functional properties, as demonstrated by the experimental characterization of hundreds of designed symmetric assemblies, metal-binding proteins, and protein binders [9].

Future developments in scoring functions will likely address several current challenges, including the prediction of protein complexes with higher accuracy, modeling of conformational dynamics, and design of proteins with novel functions beyond those found in nature [14]. The incorporation of additional physical constraints, such as mechanical stability parameters inspired by natural mechanostable proteins like titin and silk fibroin, represents another promising direction [12]. As these methods mature, computational scoring engines will continue to expand their capabilities, pushing the boundaries of what is possible in protein design and opening new avenues for therapeutic development, biomaterial fabrication, and sustainable biotechnology [10] [11].

Figure 2: Integration of physical models with evolutionary and geometric information creates powerful hybrid scoring functions for diverse applications.

The Role of the Unfolded State and the Principles of Negative Design

Protein stability and folding are governed by the energy gap between the native state and the ensemble of unfolded, misfolded, and transition states [15]. Within this framework, two fundamental design strategies emerge: positive design and negative design. Positive design stabilizes the native fold by introducing favorable, attractive interactions between residues that are in contact in the native state. Negative design, in contrast, widens the energy gap by selectively destabilizing non-native conformations, primarily by introducing repulsive interactions or unfavorable contacts that occur in misfolded states but are absent from the native structure [16] [15]. Stability is therefore determined as much by destabilizing incorrect states as by stabilizing the correct one. Furthermore, the unfolded state ensemble is not a random coil but a dynamic entity with transient structural elements that can significantly influence folding pathways and stability. Rationally targeting this unfolded state offers a powerful, though less explored, route to engineering protein stability and function [17]. This guide compares the performance of design strategies that target these different states, presenting experimental data and methodologies central to contemporary computational protein design research.
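The energy-gap argument can be made concrete with a toy contact model in which a conformation is a set of residue-pair contacts and each contact contributes a sequence-dependent pair energy. The conformations and energies below are hypothetical and serve only to show that strengthening a contact shared by native and decoy leaves the gap unchanged, whereas strengthening a native-only contact (positive design) or penalising a decoy-only contact (negative design) widens it.

```python
def conformation_energy(contacts, pair_energy):
    """Sum pair energies over the contacts present in one conformation (absent pairs score 0)."""
    return sum(pair_energy.get(pair, 0.0) for pair in contacts)

def energy_gap(native, decoys, pair_energy):
    """Energy of the most stable decoy minus the native energy; larger means a more specific fold."""
    e_native = conformation_energy(native, pair_energy)
    return min(conformation_energy(d, pair_energy) for d in decoys) - e_native

native = {(0, 3), (1, 4)}
decoys = [{(0, 3), (2, 5)}]  # shares contact (0, 3) with the native state
baseline = {(0, 3): -1.0, (1, 4): -1.0, (2, 5): -1.0}

shared_stronger = {**baseline, (0, 3): -2.0}       # strengthens a contact the decoy also uses
native_only_stronger = {**baseline, (1, 4): -2.0}  # positive design
decoy_repulsive = {**baseline, (2, 5): +1.0}       # negative design

for label, energies in [("baseline", baseline), ("shared contact", shared_stronger),
                        ("positive design", native_only_stronger),
                        ("negative design", decoy_repulsive)]:
    print(f"{label}: gap = {energy_gap(native, decoys, energies):+.1f}")
```

The gap stays at zero for the baseline and the shared-contact change, rises to 1.0 with positive design, and to 2.0 with negative design.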

Core Principles: Positive vs. Negative Design

Strategic Comparison and Applicability

The choice between emphasizing positive or negative design is not arbitrary; it is influenced by the inherent structural properties of the target protein fold. Research on lattice models and real proteins indicates that the balance between these strategies is largely determined by the protein's average "contact-frequency"—the fraction of states in a sequence's conformational ensemble in which a given pair of residues is in contact [16].

- Positive Design is favored in proteins with a low average contact-frequency. In these folds, the interactions that stabilize the native state are rarely found in non-native states. Therefore, simply strengthening these native contacts effectively widens the energy gap without significantly raising the energy of the unfolded ensemble [16].

- Negative Design is crucial in proteins with a high average contact-frequency. Here, the stabilizing interactions native to the fold are common in many non-native conformations. Relying solely on positive design would therefore also stabilize misfolded states. To achieve specificity and stability, negative design must be employed to make these competing non-native conformations energetically unfavorable [16].
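Average contact-frequency can be estimated from a sampled conformational ensemble. A minimal sketch, assuming each conformation is given as a set of residue-pair contacts and that the average is taken over the native contacts, which is the reading the argument above relies on; the averaging scheme used in [16] may differ in detail.

```python
def contact_frequency(pair, ensemble):
    """Fraction of conformations in the ensemble that contain the given contact."""
    return sum(pair in conformation for conformation in ensemble) / len(ensemble)

def average_native_contact_frequency(native_contacts, ensemble):
    """Mean contact frequency over the native contacts (averaging scheme assumed)."""
    freqs = [contact_frequency(pair, ensemble) for pair in native_contacts]
    return sum(freqs) / len(freqs)

native = {(0, 3), (1, 4)}
ensemble = [{(0, 3), (1, 4)}, {(0, 3), (2, 5)}, {(2, 5), (1, 3)}, {(0, 3)}]
cf = average_native_contact_frequency(native, ensemble)
```

Here contact (0, 3) appears in three of four conformations and (1, 4) in one, so the average is 0.5; folds whose native contacts recur often in the ensemble sit at the negative-design end of the trade-off.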

This trade-off is strong: lattice model studies found a near-perfect negative correlation (r = -0.96) between the contributions of positive and negative design [16]. The principles are summarized in the table below.

Table 1: Comparative Analysis of Positive and Negative Design Strategies

| Feature | Positive Design | Negative Design |
|---|---|---|
| Primary Goal | Stabilize the native state | Destabilize non-native/misfolded states |
| Molecular Mechanism | Introduce favorable, attractive interactions between residues in contact in the native structure [15]. | Introduce repulsive interactions between residues that are not in contact in the native state but may interact in misfolded states [15]. |
| Energetic Outcome | Lowers the energy of the native state (ΔE(native) ↓) | Raises the energy of misfolded states (ΔE(misfolded) ↑) |
| Sequence Signature | Enrichment of strongly hydrophobic residues to drive burial in the native core [15]. | Enrichment of charged residues (e.g., D, E, K, R) that repel each other in non-native contexts [15]. |
| Correlated Mutations | Associated with residues in direct contact in the native state. | Can occur between residues distant in the native structure but which may contact in misfolded conformations [16] [15]. |
| Ideal Application | Folds with low average contact-frequency [16]. | Folds with high average contact-frequency, disordered proteins, and proteins dependent on chaperonins [16]. |

The Unfolded State as a Design Target

A strategy distinct from negative design is to target the unfolded state ensemble directly. Whereas negative design specifically destabilizes compact misfolds, unfolded state design aims to reduce the conformational entropy of the denatured chain, making its conversion to the ordered native state more favorable without introducing specific repulsive contacts.

A key method substitutes glycine, which has unusual conformational freedom, with more restricted residues. Because glycine often occupies tight turns or helical C-capping motifs that require positive φ angles, which are disfavored for L-amino acids, replacing it with a D-amino acid such as D-alanine has proven effective. This substitution reduces the configurational entropy of the unfolded state while remaining compatible with the native backbone geometry [17].

Experimental testing across multiple proteins, including the engrailed homeodomain (EH) and the GA albumin-binding domain (GA), has shown that Gly-to-D-Ala substitutions at solvent-exposed C-capping positions can increase stability by ~0.6 to 1.9 kcal/mol [17]. These results indicate that targeting the unfolded state is a viable and broadly applicable strategy for rational protein stabilization.
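To put the 0.6 to 1.9 kcal/mol range in perspective, a stability change can be converted into a fold change in the folding equilibrium constant. The worked example below is a sketch that assumes two-state folding at 25 °C (T = 298.15 K, so RT ≈ 0.593 kcal/mol) and treats a positive ΔΔG as stabilizing, matching the sign convention used in Table 3:

```latex
\begin{align*}
\Delta\Delta G &= \Delta G_{\mathrm{unf}}^{\mathrm{mut}} - \Delta G_{\mathrm{unf}}^{\mathrm{wt}}
  = RT\ln\frac{K_{\mathrm{fold}}^{\mathrm{mut}}}{K_{\mathrm{fold}}^{\mathrm{wt}}} \\
\frac{K_{\mathrm{fold}}^{\mathrm{mut}}}{K_{\mathrm{fold}}^{\mathrm{wt}}} &= \exp\!\left(\frac{\Delta\Delta G}{RT}\right) \\
\Delta\Delta G = 0.6\ \mathrm{kcal\,mol^{-1}} &\;\Rightarrow\; \exp(0.6/0.593) \approx 2.8 \\
\Delta\Delta G = 1.9\ \mathrm{kcal\,mol^{-1}} &\;\Rightarrow\; \exp(1.9/0.593) \approx 25
\end{align*}
```

In other words, even the smallest reported gain roughly triples the ratio of folded to unfolded molecules at equilibrium.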

Experimental Validation and Performance Comparison

Quantifying Stability and Folding Kinetics

The efficacy of any design strategy must be rigorously validated through experimental biophysics. The table below summarizes key experimental protocols and the type of data they yield for evaluating designed proteins.

Table 2: Key Experimental Methods for Validating Designed Proteins

| Method | Experimental Protocol | Key Measured Parameters | Data Interpretation |
|---|---|---|---|
| Thermal Denaturation | Protein sample is heated while monitoring a signal (e.g., fluorescence, CD) sensitive to structure. | Melting temperature (Tm), enthalpy change (ΔH). | Higher Tm indicates greater thermal stability. |
| Chemical Denaturation | Protein is titrated with a denaturant (e.g., urea, GdmCl) while monitoring structure. | Free energy of unfolding (ΔGunf), m-value. | More positive ΔGunf indicates greater thermodynamic stability. |
| Laser Temperature-Jump | A laser pulse rapidly increases sample temperature, and relaxation to equilibrium is monitored. | Folding/unfolding rate constants (kf, ku). | Faster kf indicates accelerated folding, often from a stabilized transition state [18]. |
| Single-Molecule Force Spectroscopy (AFM) | The protein is mechanically unfolded using an atomic force microscope tip. | Unfolding force, contour length of unfolded chain. | Higher unfolding force indicates greater mechanical stability, often because many backbone hydrogen bonds loaded in a shear geometry must break simultaneously [12]. |
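For the chemical denaturation row, ΔGunf and the m-value are commonly obtained with the linear extrapolation model, ΔG([D]) = ΔGH2O − m[D]. The sketch below assumes a two-state transition, takes already-normalized fraction-unfolded data, and fits only points inside the transition region (the 0.1 to 0.9 window is an arbitrary choice). The function names are hypothetical, and published analyses usually fit the raw signal together with its baselines:

```python
import math

R = 1.987204e-3  # gas constant, kcal/(mol*K)


def delta_g_from_fraction(f_unfolded, temp_k=298.15):
    """Two-state assumption: K_unf = fU / (1 - fU) and dG_unf = -RT ln K_unf."""
    k_unf = f_unfolded / (1.0 - f_unfolded)
    return -R * temp_k * math.log(k_unf)


def linear_extrapolation(denaturant_m, f_unfolded, temp_k=298.15, window=(0.1, 0.9)):
    """Least-squares fit of dG = dG_H2O - m*[D] using transition-region points.

    Returns (dG_H2O in kcal/mol, m in kcal/mol/M, midpoint Cm in M).
    """
    pts = [(d, delta_g_from_fraction(f, temp_k))
           for d, f in zip(denaturant_m, f_unfolded)
           if window[0] <= f <= window[1]]
    n = len(pts)
    if n < 2:
        raise ValueError("need at least two points in the transition region")
    mean_x = sum(x for x, _ in pts) / n
    mean_y = sum(y for _, y in pts) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in pts)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pts)
    slope = sxy / sxx
    dg_water = mean_y - slope * mean_x  # intercept at 0 M denaturant
    m_value = -slope
    return dg_water, m_value, dg_water / m_value
```

With noise-free synthetic data generated from ΔGH2O = 5.0 kcal/mol and m = 1.5 kcal/mol/M, the fit returns those values and a midpoint Cm of about 3.33 M.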

Performance Data from Design Studies

The following table compiles experimental data from various studies that implemented positive, negative, or unfolded state design strategies, providing a direct comparison of their outcomes.

Table 3: Experimental Performance of Proteins from Different Design Strategies

| Design Strategy / Protein | Measured Property | Measured Effect | Interpretation & Implication |
|---|---|---|---|
| Unfolded State Design (Gly→D-Ala) | | | |
| NTL9 [17] | ΔΔG° | +1.87 kcal/mol (stabilizing) | Reduced unfolded state entropy without native state clashes. |
| UBA Domain [17] | ΔΔG° | +0.6 kcal/mol (stabilizing) | |
| Negative & Positive Design (Thermal Adaptation) | | | |
| Model Thermophilic Proteins [15] | Amino Acid Composition | Increased IVYWREL content (Hydrophobic + Charged) | "From both ends of hydrophobicity scale" trend: hydrophobics for positive, charged for negative design. |
| Positive Design (Hydrogen Bond Maximization) | | | |
| De Novo Superstable β-sheet [12] | Unfolding Force (AFM) | >1000 pN (400% stronger than titin Ig domain) | Maximized backbone H-bond network confers extreme mechanostability. |
| | Thermal Stability | Withstood 150°C | |
| Positive Design (Transition State Stabilization) | | | |
| GTT mutant of FiP35 WW domain [18] | ΔΔG° | Increased stability vs. wild-type | Computational design stabilized the turn in the transition state, accelerating folding. |
| | Folding Rate | Increased rate vs. wild-type | |

Visualization of Workflows and Concepts

The Energy Landscape of Protein Design

The following diagram illustrates the core concepts of how positive, negative, and unfolded state design strategies manipulate the energy landscape to achieve a stable, well-folded protein.

Integrated Computational-Experimental Workflow

This diagram outlines a generalized workflow for the computational design and experimental validation of proteins, integrating the strategies discussed in this guide.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of the experimental protocols mentioned in this guide requires specific reagents and instrumentation. The following table details key solutions and materials essential for this field of research.

Table 4: Essential Research Reagents and Materials for Protein Design Validation

| Item | Function/Application | Example Use-Case |
|---|---|---|
| Urea / Guanidine HCl | Chemical denaturants used to progressively unfold proteins in solution for equilibrium unfolding experiments. | Determining the free energy of unfolding (ΔGunf) and m-value via chemical denaturation curves [17]. |
| Tryptophan / Fluorescent Tryptophan Analogs | Intrinsic (native Trp) or incorporated fluorophores used to monitor changes in the local protein environment during folding/unfolding. | Tracking real-time fluorescence changes during temperature-jump relaxation kinetics or denaturation titrations [18]. |
| Size-Exclusion Chromatography (SEC) Resins | For purifying folded proteins based on their hydrodynamic radius and assessing sample monodispersity. | Separating correctly folded monomers from aggregates or misfolded species after protein expression and purification. |
| D-Amino Acids (e.g., D-Alanine) | Non-natural amino acids used in solid-phase peptide synthesis or chemical ligation to incorporate specific conformational constraints. | Replacing glycine in C-capping motifs or turns to reduce unfolded state entropy without causing steric clashes [17]. |
| Molecular Dynamics Software (GROMACS, CHARMM) | Software for performing all-atom molecular dynamics simulations and free energy calculations. | Validating the structural and dynamic properties of designed proteins and predicting stability changes from mutations [17] [12]. |
| Double-Mutant Cycle (DMC) Analysis | An experimental method to measure the energetic coupling between two residues, revealing direct or allosteric interactions. | Quantifying the strength of both native (short-range) and non-native (long-range) pairwise interactions in a protein [16]. |
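The double-mutant cycle analysis in the last row reduces to simple arithmetic on four stability measurements. The sketch below uses one common convention, coupling = ΔΔG(AB) − [ΔΔG(A) + ΔΔG(B)], with each ΔΔG taken relative to wild type; sign conventions differ between papers, and the input values in the usage note are hypothetical:

```python
def coupling_energy(dg_wt, dg_a, dg_b, dg_ab):
    """Double-mutant cycle coupling energy (kcal/mol).

    Inputs are folding stabilities (dG_unf) of the wild type, the two single
    mutants, and the double mutant. Returns ddG_AB - (ddG_A + ddG_B); a value
    near zero means the two mutations act additively (no coupling).
    """
    ddg_a = dg_a - dg_wt    # effect of mutation A alone
    ddg_b = dg_b - dg_wt    # effect of mutation B alone
    ddg_ab = dg_ab - dg_wt  # effect of both mutations together
    return ddg_ab - (ddg_a + ddg_b)
```

For example, stabilities of 4.0 (wild type), 3.0 and 3.2 (single mutants), and 3.0 kcal/mol (double mutant) give a coupling energy of about +0.8 kcal/mol: removing both side chains costs less than the sum of removing each, which in this convention suggests the pair interacts favorably in the reference protein.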

The fields of protein engineering and design have long been driven by two complementary paradigms: rational computational design and laboratory-directed evolution. Computational protein design (CPD) employs advanced algorithms, physics-based models, and machine learning to predict protein structures and design sequences that fold into desired conformations with specific functions [19]. In contrast, directed evolution (DE) mimics natural selection in the laboratory through iterative cycles of mutagenesis and screening to optimize protein fitness for a desired application [20]. While directed evolution requires no prior structural knowledge and has proven highly successful for optimizing existing protein functions, it can be inefficient when mutations exhibit non-additive, or epistatic, behavior and struggles to explore vast sequence spaces comprehensively [21]. Computational design provides a rational framework for creating entirely new protein folds and functions but often suffers from inaccuracies in energy functions and limited consideration of functional dynamics.

The integration of these approaches creates a powerful synergistic workflow that leverages the strengths of each method while mitigating their individual limitations. This review compares the performance, experimental validation, and practical implementation of integrated computational design and directed evolution platforms, providing researchers with objective data to guide methodology selection for protein engineering projects.

Performance Comparison of Integrated Platforms

Recent advancements have produced several distinct frameworks for integrating computational design with directed evolution. The table below compares the performance characteristics of three prominent approaches based on published experimental data.

Table 1: Performance Comparison of Integrated Computational Design and Directed Evolution Platforms

| Platform/Approach | Key Methodology | Reported Performance | Experimental Validation | Primary Applications |
|---|---|---|---|---|
| Computer-Aided Protein Directed Evolution (CAPDE) [22] | Computational tools to assist DE by analyzing library diversity, evolutionary conservation, and mutational effects | High frequency of active variants in focused libraries; Reduced screening burden | Improved activity/stability of biocatalysts under unnatural conditions [22] | Enzyme engineering for thermostability, solvent tolerance, enzymatic activity |
| Active Learning-Assisted Directed Evolution (ALDE) [21] | Iterative machine learning with uncertainty quantification to explore epistatic protein landscapes | 12% to 93% product yield in 3 rounds; ~0.01% of design space explored [21] | Optimization of non-native cyclopropanation reaction in protoglobin; Computational simulations on protein fitness landscapes [21] | Optimizing proteins with strong epistatic effects; Navigating rugged fitness landscapes |
| Automated Continuous Evolution (iAutoEvoLab) [23] | Industrial automation coupled with genetic circuits for growth-coupled continuous evolution | Successful evolution of T7 RNA polymerase fusion protein (CapT7) with novel mRNA capping function [23] | Direct application in in vitro mRNA transcription and mammalian systems [23] | High-throughput protein engineering; Systematic exploration of protein adaptive landscapes |

Experimental Protocols and Methodologies

CAPDE Workflow and Implementation

The Computer-Aided Protein Directed Evolution (CAPDE) approach encompasses four major computational areas that assist directed evolution experiments [22]:

Library Characterization: Tools including MAP2.0 3D and PEDEL-AA provide statistical analysis of mutant libraries at the protein level, predicting residue mutability and amino acid substitution patterns resulting from random mutagenesis methods [22].

Evolutionary Conservation Analysis: Servers such as ConSurf use multiple sequence alignment (MSA) to identify evolutionarily conserved and variable regions, guiding focused library design to functionally significant regions [22].

Structure-Based Design: Tools utilizing protein structural data to identify key residues for mutagenesis, particularly those surrounding active sites but located in the second coordination sphere [22].

Mutational Effect Prediction: Machine learning and statistical approaches predict the effects of mutations on protein stability and function by estimating relative free energy changes [22].

Experimental validation of CAPDE has demonstrated successful engineering of cytochrome P450BM-3, D-amino acid oxidase, phytase, and other enzymes with improved catalytic properties and stability [22].

ALDE Experimental Protocol

The Active Learning-Assisted Directed Evolution (ALDE) methodology was recently validated through optimization of five epistatic residues in the active site of a Pyrobaculum arsenaticum protoglobin (ParPgb) for enhanced cyclopropanation activity [21]. The experimental protocol comprised:

Table 2: Key Research Reagent Solutions for ALDE Experiments

| Reagent/Category | Specific Examples | Function/Application |
|---|---|---|
| Protein Scaffold | Pyrobaculum arsenaticum protoglobin (ParPgb) | Engineered hemoprotein with high thermostability (T50 ~ 60°C) and small size (~200 aa) [21] |
| Reaction Components | 4-vinylanisole (1a), ethyl diazoacetate (EDA) | Substrates for cyclopropanation reaction to produce cyclopropanes trans-2a and cis-2a [21] |
| Mutagenesis Method | PCR-based mutagenesis with NNK degenerate codons | Simultaneous mutation at five active-site positions (W56, Y57, L59, Q60, F89) [21] |
| Analytical Method | Gas chromatography | Screening for cyclopropanation products (yield and diastereomer selectivity) [21] |
| ML Model | Batch Bayesian optimization with supervised learning | Mapping sequence to fitness; prioritization of variants for subsequent screening rounds [21] |
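The NNK scheme in the table can be checked with a short calculation. The sketch below uses the standard genetic code and counts what an NNK codon (N = A/C/G/T, K = G/T) encodes at each position and how large a five-position library is. The helper names are illustrative and are not part of any library-analysis tool mentioned above:

```python
from collections import Counter
from itertools import product

BASES = "TCAG"
# Standard genetic code (NCBI table 1); codons enumerated in TCAG order
# for the first, second, and third positions.
AA_TABLE = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TO_AA = {"".join(c): AA_TABLE[i] for i, c in enumerate(product(BASES, repeat=3))}

# IUPAC nucleotide codes needed for common degenerate codons.
IUPAC = {"A": "A", "C": "C", "G": "G", "T": "T", "N": "ACGT", "K": "GT", "S": "CG"}


def degenerate_codons(pattern):
    """Expand a degenerate codon such as 'NNK' into concrete codons."""
    return ["".join(c) for c in product(*(IUPAC[ch] for ch in pattern))]


def library_stats(pattern="NNK", n_positions=5):
    """Per-position coverage and whole-library size for a degenerate codon."""
    codons = degenerate_codons(pattern)
    aa_counts = Counter(CODON_TO_AA[c] for c in codons)
    n_aa = sum(1 for aa in aa_counts if aa != "*")
    stop_frac = aa_counts["*"] / len(codons)
    return {
        "codons_per_position": len(codons),
        "amino_acids_encoded": n_aa,
        "stop_fraction_per_position": stop_frac,
        "protein_variants": n_aa ** n_positions,
        "codon_variants": len(codons) ** n_positions,
        "stop_free_fraction": (1 - stop_frac) ** n_positions,
    }
```

It reports 32 codons and 20 amino acids per position with a single stop codon (TAG), 20^5 = 3.2 million protein variants, and roughly 85% of codon-level variants free of stop codons. If the design space is taken as those 3.2 million protein variants, ~0.01% corresponds to roughly 320 sequences.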

Step-by-Step Workflow:

- Define Combinatorial Space: Select five active-site residues (W56, Y57, L59, Q60, F89) known to impact non-native activity with evidence of epistasis [21].

- Initial Library Construction: Generate variants through sequential rounds of PCR-based mutagenesis using NNK degenerate codons at all five positions.

- Primary Screening: Assess cyclopropanation activity using gas chromatography to measure yield and diastereomer selectivity.

- Active Learning Cycle:

  - Train supervised machine learning model on collected sequence-fitness data

  - Apply acquisition function to rank all sequences in design space

  - Select top N variants for subsequent experimental testing

- Iterative Optimization: Repeat active learning cycle for three rounds, significantly improving the yield of desired cyclopropanation product from 12% to 93% with high diastereoselectivity (14:1) [21].
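The active learning cycle above can be illustrated with a toy loop. This is a minimal sketch, not the published ALDE code: the 3-position, 4-letter design space and the epistatic fitness function are hypothetical, and a bootstrap ensemble of k-nearest-neighbor regressors with an upper-confidence-bound (UCB) acquisition stands in for the batch Bayesian optimization models used in the study [21]:

```python
import itertools
import random
import statistics

# Hypothetical toy design space: 3 mutated positions, 4 allowed residues each.
ALPHABET = "ACDE"
N_POS = 3


def toy_fitness(seq):
    """Hypothetical epistatic landscape: additive terms plus a pairwise bonus."""
    additive = sum(ALPHABET.index(aa) for aa in seq)
    bonus = 6.0 if seq[0] == "A" and seq[2] == "E" else 0.0  # epistatic pair
    return additive + bonus


def hamming(a, b):
    return sum(x != y for x, y in zip(a, b))


def knn_predict(train, seq, k=3):
    """Mean fitness of the k training variants closest in Hamming distance."""
    nearest = sorted(train, key=lambda item: hamming(item[0], seq))[:k]
    return statistics.mean(fit for _, fit in nearest)


def ensemble_predict(train, seq, rng, n_models=10):
    """Bootstrap ensemble of k-NN models; returns (mean, spread) of predictions."""
    preds = []
    for _ in range(n_models):
        resample = [rng.choice(train) for _ in train]
        preds.append(knn_predict(resample, seq))
    return statistics.mean(preds), statistics.pstdev(preds)


def alde_like_loop(n_rounds=3, batch_size=8, beta=1.0, seed=0):
    rng = random.Random(seed)
    space = ["".join(p) for p in itertools.product(ALPHABET, repeat=N_POS)]
    # Round 0: random initial library, "screened" with the toy assay.
    tested = {s: toy_fitness(s) for s in rng.sample(space, batch_size)}
    for _ in range(n_rounds):
        train = list(tested.items())
        scores = {}
        for s in space:
            if s not in tested:
                mu, sigma = ensemble_predict(train, s, rng)
                scores[s] = mu + beta * sigma  # UCB acquisition
        batch = sorted(scores, key=scores.get, reverse=True)[:batch_size]
        for s in batch:
            tested[s] = toy_fitness(s)  # stand-in for the wet-lab screen
    best = max(tested, key=tested.get)
    return best, tested[best], len(tested), len(space)
```

Calling `alde_like_loop()` screens 8 random variants and then 3 rounds of 8 model-selected variants, i.e. 32 of the 64 possible sequences. Raising `beta` weights the ensemble spread more heavily, favoring exploration of uncertain regions over exploitation of the current best predictions.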

The ALDE workflow demonstrated particular effectiveness for navigating rugged fitness landscapes with strong epistatic interactions, where traditional directed evolution approaches stagnated at local optima [21].

Automated Continuous Evolution System

The iAutoEvoLab platform represents an industrial-grade automated approach to protein evolution that integrates continuous evolution with high-throughput screening [23]:

Key System Components:

- OrthoRep Continuous Evolution System: Engineered for growth-coupled evolution of proteins with diverse functionalities [23].

- Genetic Circuits: Implement dual selection systems (e.g., for improving lactate sensitivity of LldR) and NIMPLY circuits (e.g., for increasing operator selectivity of LmrA) [23].

- Automated Laboratory Infrastructure: Enables continuous and scalable protein evolution with minimal human intervention, operational for approximately one month autonomously [23].

Experimental Implementation: The platform successfully evolved proteins from inactive precursors to fully functional entities, notably generating a T7 RNA polymerase fusion protein (CapT7) with novel mRNA capping functionality that was directly applicable to in vitro mRNA transcription and mammalian systems [23]. This integrated system demonstrates how automation can dramatically accelerate the protein engineering cycle while systematically exploring protein adaptive landscapes.

Workflow Visualization

The synergistic relationship between computational design and directed evolution can be visualized through the following workflow, which integrates computational prediction with experimental validation in an iterative feedback loop:

Integrated Computational and Experimental Workflow for Protein Engineering

This workflow illustrates how computational design informs initial library generation, followed by experimental screening and data collection, with machine learning bridging the cycle through iterative refinement based on empirical data. The feedback loop enables continuous improvement of protein variants through successive rounds of computational prediction and experimental validation.

Discussion and Future Perspectives

The integration of computational design with directed evolution represents a paradigm shift in protein engineering, overcoming limitations of both individual approaches. The performance data summarized here indicate that synergistic methods can outperform traditional directed evolution, particularly for challenging engineering tasks involving epistatic residues or novel functional designs.

Key Advantages of Integrated Approaches:

- Efficiency: ALDE achieved optimization of five epistatic residues exploring only ~0.01% of design space [21]

- Functionality: Automated continuous evolution generated novel protein functions (mRNA capping activity) not present in starting scaffolds [23]

- Precision: CAPDE enables focused library generation with higher frequencies of improved variants [22]

Future developments in artificial intelligence-guided protein design [12], expanded continuous evolution systems [23], and more sophisticated active learning algorithms [21] will further enhance the capabilities of integrated platforms. As these technologies mature, they promise to accelerate the development of novel biocatalysts, therapeutic proteins, and functional biomaterials across diverse biotechnology applications.

The experimental protocols and performance metrics outlined in this review provide researchers with practical frameworks for implementing these integrated approaches, enabling more efficient navigation of protein fitness landscapes and expanding the scope of accessible protein functions through rational computational design coupled with empirical laboratory evolution.

In the field of computational protein design, the transition from an in silico model to a validated biological reality hinges on the robustness of experimental validation. This process confirms that a designed protein not only exists as a physical entity but also performs its intended function, whether that is binding a target, catalyzing a reaction, or forming a specific structure. For researchers, scientists, and drug development professionals, establishing a clear "gold standard" for validation is paramount to translating computational predictions into reliable tools and therapeutics. This guide objectively compares the performance of various computational methods used in protein design and ligand affinity prediction, detailing the key experimental protocols that form the cornerstone of successful validation.

Comparative Performance of Computational Methods

A critical step in computational protein design and drug discovery is the accurate prediction of how strongly a small molecule (ligand) binds to its protein target. Several computational methods are employed for this task, each balancing accuracy, computational cost, and ease of use differently. The table below summarizes the performance of popular free energy calculation methods based on multiple benchmark studies.

Table 1: Performance Comparison of Free Energy Calculation Methods

| Method | Theoretical Basis | Reported Accuracy (Correlation with Experiment) | Computational Cost | Primary Use Case |
|---|---|---|---|---|
| Free Energy Perturbation (FEP) | Alchemical pathway, rigorous physics-based [24] | High (R²: 0.57-0.85, MUE: ~0.6-1.2 kcal/mol) [25] [26] [27] | Very High | Lead optimization, relative binding affinity for congeneric series [24] |
| MM/PBSA | End-point, molecular mechanics & implicit solvation [28] [29] | Moderate (Spearman R: ~0.49-0.66) [30] [27] | Medium | Binding pose prediction, affinity ranking where FEP is infeasible [30] |
| MM/GBSA | End-point, molecular mechanics & implicit solvation (GB model) [28] [29] | Moderate to Good (Spearman R: ~0.66, outperforms MM/PBSA in some benchmarks) [30] [29] | Medium | Rescoring docking poses, affinity ranking; often more efficient than MM/PBSA [30] |
| Molecular Docking Scoring Functions | Empirical, knowledge-based, or force-field based approximations [30] | Lower (Less accurate than MM/GBSA and MM/PBSA for ranking) [29] [30] | Low | High-throughput virtual screening, initial pose generation [30] |
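For orientation, the end-point MM/PBSA and MM/GBSA estimates in the table are usually written as the decomposition below. This is the generic textbook form rather than any specific program's implementation; individual tools differ in which terms they include (the entropy term TS is frequently omitted), and γ and b are empirical surface-tension parameters:

```latex
\begin{align*}
\Delta G_{\mathrm{bind}} &= \langle G_{\mathrm{complex}} \rangle - \langle G_{\mathrm{receptor}} \rangle - \langle G_{\mathrm{ligand}} \rangle \\
G_X &= E_{\mathrm{MM}} + G_{\mathrm{solv}} - TS \\
E_{\mathrm{MM}} &= E_{\mathrm{bonded}} + E_{\mathrm{elec}} + E_{\mathrm{vdW}} \\
G_{\mathrm{solv}} &= G_{\mathrm{polar}}^{\mathrm{PB\ or\ GB}} + G_{\mathrm{nonpolar}},
  \qquad G_{\mathrm{nonpolar}} \approx \gamma\,\mathrm{SASA} + b
\end{align*}
```

The only difference between the two methods is how the polar solvation term is computed: by solving the Poisson-Boltzmann equation (PB) or with a Generalized Born approximation (GB).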

Key Insights from Comparative Benchmarks:

- FEP is consistently the most accurate method for predicting relative binding affinities, with modern implementations achieving accuracy near the reproducibility limits of experimental assays (mean unsigned errors around 1 kcal/mol or less) [24] [26].

- MM/GBSA and MM/PBSA offer a balanced compromise between accuracy and computational cost. They are significantly more accurate than standard docking scoring functions for identifying correct binding poses and ranking affinities, making them excellent for post-docking refinement [29] [30].

- Performance is system-dependent. For example, in soluble proteins, MM/GBSA can achieve competitive correlation with experiment, but its performance can vary with parameters like the solute dielectric constant and the chosen Generalized Born model [28] [29].
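Benchmarks like those summarized above typically report a mean unsigned error (MUE) and a squared Pearson correlation (R²) between predicted and experimental binding free energies. A minimal standard-library sketch of both metrics follows; the numbers in the usage note are made-up illustrative values, not data from the cited studies:

```python
def mue(pred, expt):
    """Mean unsigned error, in the units of the inputs (e.g. kcal/mol)."""
    return sum(abs(p - e) for p, e in zip(pred, expt)) / len(pred)


def pearson_r2(pred, expt):
    """Squared Pearson correlation coefficient between two equal-length lists."""
    n = len(pred)
    mean_p, mean_e = sum(pred) / n, sum(expt) / n
    cov = sum((p - mean_p) * (e - mean_e) for p, e in zip(pred, expt))
    var_p = sum((p - mean_p) ** 2 for p in pred)
    var_e = sum((e - mean_e) ** 2 for e in expt)
    return cov ** 2 / (var_p * var_e)
```

For example, `mue([-8.1, -9.0, -7.2, -10.3], [-8.5, -9.4, -7.0, -9.8])` gives about 0.375 kcal/mol. R² rewards correct ranking and spread even when predictions carry a constant offset, whereas MUE penalizes that offset directly, which is why benchmarks usually report both.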

Key Experimental Protocols for Validation

Computational predictions must be validated against experimental data to establish their reliability. The following are detailed methodologies for key experiments used to characterize designed proteins and their interactions.

Binding Affinity Measurements

The primary metric for validating a protein-ligand design is the experimental measurement of binding strength.

- Isothermal Titration Calorimetry (ITC): This technique directly measures the heat change associated with ligand binding. It provides the dissociation constant (Kd), as well as the stoichiometry (n), enthalpy (ΔH), and entropy (ΔS) of the interaction, offering a complete thermodynamic profile [24].

- Surface Plasmon Resonance (SPR): SPR measures biomolecular interactions in real time without labeling. It determines the association (kon) and dissociation (koff) rate constants, from which the equilibrium dissociation constant is calculated as Kd = koff/kon. This provides kinetic context to the binding affinity [24].

- Inhibition Constant (Ki) Assays: For enzymes, the inhibitory potency of a ligand is measured via functional assays, yielding an inhibition constant (Ki) or half-maximal inhibitory concentration (IC50). Under specific conditions, IC50 values can be converted to Ki, allowing for comparison with computed free energies [24] [29].
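The conversions between these quantities are simple enough to sketch. The helper names below are illustrative. The IC50-to-Ki step uses the Cheng-Prusoff relation, which assumes competitive inhibition under Michaelis-Menten kinetics, and the free energy assumes a 1 M standard state at 25 °C:

```python
import math

R = 1.987204e-3  # gas constant, kcal/(mol*K)


def kd_from_kinetics(k_on, k_off):
    """Equilibrium dissociation constant (M) from SPR rate constants.

    k_on in M^-1 s^-1, k_off in s^-1.
    """
    return k_off / k_on


def dg_bind_from_kd(kd_molar, temp_k=298.15):
    """Binding free energy (kcal/mol), dG = RT ln Kd, relative to 1 M."""
    return R * temp_k * math.log(kd_molar)


def cheng_prusoff_ki(ic50, substrate_conc, km):
    """Ki from IC50, assuming a competitive inhibitor (same units as ic50)."""
    return ic50 / (1.0 + substrate_conc / km)
```

For example, kon = 1e5 M⁻¹ s⁻¹ and koff = 1e-3 s⁻¹ give Kd = 10 nM, which corresponds to a binding free energy of about −10.9 kcal/mol; an IC50 of 100 nM measured at [S] = Km corresponds to a Ki of 50 nM.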

Assessing Thermostability

A common goal in protein design is to enhance stability, which is crucial for industrial and therapeutic applications.

- Differential Scanning Calorimetry (DSC): DSC measures the heat capacity of a protein solution as it is heated. The midpoint of the thermal unfolding transition (Tm) provides a direct measure of the protein's thermal stability. An increase in Tm for a designed protein compared to its wild-type counterpart is a key validation success [12].

- Circular Dichroism (CD) Spectroscopy: Far-UV CD monitors changes in the secondary structure of a protein as a function of temperature or denaturant concentration. This also allows for the determination of the Tm and provides information on the folded state's structural integrity [12].
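Extracting Tm from a DSC, CD, or fluorescence melt can be sketched as below. The sketch assumes a single two-state transition with flat baselines, so the first and last points are taken as fully folded and fully unfolded; real data usually need sloping-baseline fits, which this sketch omits. The function name is hypothetical:

```python
def melting_temperature(temps, signal):
    """Estimate Tm as the temperature where the normalized signal crosses 0.5.

    Assumes a single two-state transition with flat pre- and post-transition
    baselines, so the first and last points define 0% and 100% unfolded.
    Works for rising or falling signals.
    """
    lo, hi = signal[0], signal[-1]
    frac = [(s - lo) / (hi - lo) for s in signal]
    for i in range(len(temps) - 1):
        f1, f2 = frac[i], frac[i + 1]
        # Linear interpolation within the interval that brackets 0.5.
        if f1 != f2 and (f1 - 0.5) * (f2 - 0.5) <= 0:
            t1, t2 = temps[i], temps[i + 1]
            return t1 + (0.5 - f1) * (t2 - t1) / (f2 - f1)
    raise ValueError("signal never crosses the transition midpoint")
```

Comparing the Tm returned for a design and for its wild-type parent under identical buffer and heating-rate conditions gives the ΔTm used to report stabilization.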

Structural Characterization

Verifying that a designed protein adopts the intended three-dimensional structure is critical.

- X-ray Crystallography: This provides an atomic-resolution structure of the designed protein or protein-ligand complex. It is the ultimate validation for confirming a designed binding pose, fold, or active site geometry [24] [30].

- Nuclear Magnetic Resonance (NMR) Spectroscopy: NMR can be used to determine protein structures in solution and study protein dynamics. It is particularly valuable for characterizing conformational changes and validating the solution-state behavior of designs [12].

The following diagram illustrates the typical workflow integrating computational design with experimental validation.

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful experimental validation relies on a suite of reliable reagents and instruments. The table below details key solutions and their functions in the context of validating computational designs.

Table 2: Key Research Reagent Solutions for Experimental Validation

| Reagent / Material | Function in Validation |
|---|---|
| Stabilized Protein Constructs | Engineered proteins with enhanced stability (e.g., via maximized hydrogen bonds) are crucial for withstanding the conditions of biophysical assays and for practical application [12]. |
| Characterized Ligand Libraries | Libraries of small molecules with known binding affinities (e.g., for a target like PLK1) are essential as positive controls and for benchmarking computational methods [25]. |
| High-Purity Buffers & Chemicals | Essential for ensuring that observed effects in ITC, SPR, and DSC are due to the protein-ligand interaction and not buffer artifacts or impurities. |
| Crystallization Screening Kits | Commercial kits containing a wide array of precipitant conditions are used to identify initial conditions for growing protein crystals for X-ray studies [30]. |
| Well-Characterized Benchmark Datasets | Publicly available datasets (e.g., from PDBbind) of protein-ligand complexes with known structures and affinities are indispensable for retrospective method validation [24] [30]. |

Visualizing the Hierarchy of Computational Methods

The relationships between different computational approaches, from high-throughput screening to rigorous free energy calculations, can be visualized as a hierarchy of accuracy and computational expense.

Establishing the gold standard for experimental validation in computational protein design requires a multi-faceted approach. There is no single "winner" among computational methods; rather, the choice depends on the project's stage and goals. For rapid virtual screening, docking is indispensable. For more reliable affinity ranking and pose prediction, MM/GBSA and MM/PBSA provide a valuable balance of accuracy and speed. Finally, for the most critical lead optimization decisions, FEP stands as the current gold standard for computational affinity prediction, with accuracy that can rival experimental reproducibility. Ultimately, successful validation is demonstrated through a convergence of evidence: high-accuracy computational predictions confirmed by robust, reproducible data from multiple orthogonal experimental techniques, culminating in a high-resolution structure that reveals the precise molecular interactions designed in silico.

AI, Automation, and Real-World Applications in Protein Design

The field of computational protein design is undergoing a revolutionary transformation, driven by artificial intelligence (AI) methods that can decipher the complex relationships between protein sequence, structure, and function. Among these, protein language models (pLMs) like the Evolutionary Scale Modeling (ESM) family and inverse folding models such as ProteinMPNN have emerged as particularly powerful tools. These models enable researchers to generate novel protein sequences for desired structures and functions with unprecedented accuracy and efficiency. For researchers, scientists, and drug development professionals, understanding the relative strengths, limitations, and optimal application domains of these tools is critical for advancing therapeutic development and basic biological research. This guide provides a comprehensive, data-driven comparison of these technologies, grounded in experimentally validated performance metrics, to inform their effective implementation in protein design pipelines.

Protein Language Models (ESM Family)

Protein language models, including the ESM series, are primarily trained on millions of protein sequences through self-supervised learning, often using masked language modeling objectives where the model learns to predict missing amino acids in a sequence. This process allows them to internalize fundamental principles of protein biochemistry and evolution, capturing both local and global structural and functional properties [31] [32]. These models excel at producing rich, contextual embeddings (numerical representations) for protein sequences, which can be leveraged for various downstream predictive tasks via transfer learning. The ESM model family includes architectures of vastly different scales, from 8 million to 15 billion parameters, with performance and computational requirements varying significantly by size [31].

Inverse Folding Models (ProteinMPNN and Beyond)

Inverse folding models address a different core problem: given a protein backbone structure, generate a sequence that will fold into that structure. ProteinMPNN, a leading model in this category, uses a message-passing neural network architecture operating on a graph representation of the protein, where residues are nodes and edges are defined by spatial proximity [33]. These models are structurally conditioned, meaning their predictions are directly guided by three-dimensional atomic coordinates rather than sequence context alone. The ecosystem of inverse folding tools has expanded to include specialized variants such as LigandMPNN (which incorporates small molecules, nucleotides, and metals) [33] and ABACUS-T (a multimodal model that integrates multiple backbone states and evolutionary information) [1].
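The graph construction described above can be illustrated with a k-nearest-neighbor graph over Cα coordinates. This is a simplified sketch: ProteinMPNN's actual featurization uses additional backbone atoms, distance encodings, and a much larger neighborhood, and the small k here is purely illustrative:

```python
import math


def knn_graph(ca_coords, k=2):
    """Directed edges (i, j) linking each residue i to its k nearest
    neighbors j by C-alpha distance, excluding self-edges."""
    edges = []
    for i, xi in enumerate(ca_coords):
        dists = sorted(
            (math.dist(xi, xj), j) for j, xj in enumerate(ca_coords) if j != i
        )
        edges.extend((i, j) for _, j in dists[:k])
    return edges
```

Because edges come from spatial proximity rather than chain position, residues far apart in sequence but packed together in the fold exchange messages directly, which is what lets the model condition each residue choice on its 3D environment.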

Performance Benchmarking: A Quantitative Comparison

Sequence Recovery and Design Accuracy

Sequence recovery—the percentage of amino acids in a native sequence that a model correctly predicts—is a fundamental metric for evaluating inverse folding models. The table below summarizes the performance of various models across different structural contexts, based on large-scale benchmarking studies.

Table 1: Sequence Recovery Rates (%) of Inverse Folding Models

| Model | General Protein | Small Molecule Context | Nucleotide Context | Metal Context |
|---|---|---|---|---|
| ProteinMPNN | ~50.4% [33] | ~50.4% [33] | ~34.0% [33] | ~40.6% [33] |
| LigandMPNN | - | ~63.3% [33] | ~50.5% [33] | ~77.5% [33] |
| ESM-IF | - | - | - | - |
| AntiFold | Superior in Fab design [34] [35] | - | - | - |
| LM-Design | Adaptable across antibodies [34] [35] | - | - | - |

The data demonstrates that LigandMPNN significantly outperforms ProteinMPNN and Rosetta in designing sequences for residues interacting with non-protein components, highlighting the importance of specialized architectures for specific design contexts [33]. For antibody-specific design, AntiFold and LM-Design show particular promise, with AntiFold excelling in Fab antibody design and LM-Design demonstrating adaptability across diverse antibody types, including VHH antibodies [34] [35].
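Sequence recovery itself is a simple identity fraction over aligned positions. A minimal sketch follows. The context-specific columns in the table restrict the calculation to residues near the ligand, nucleotide, or metal, which `masked_recovery` mimics with an explicit position list; how those positions are chosen (for example, by a distance cutoff) is an assumption left to the user:

```python
def sequence_recovery(native, designed):
    """Fraction of positions where the designed residue matches the native one."""
    if len(native) != len(designed):
        raise ValueError("sequences must be aligned to the same backbone length")
    matches = sum(n == d for n, d in zip(native, designed))
    return matches / len(native)


def masked_recovery(native, designed, positions):
    """Recovery restricted to a subset of positions (e.g. ligand contacts)."""
    return sum(native[i] == designed[i] for i in positions) / len(positions)
```

For example, `sequence_recovery("MKTAYIAK", "MKSAYLAK")` returns 0.75, while the recovery over positions 2 and 5 alone is 0.0, showing how a design can look good globally yet miss the residues that matter for a binding site.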

Transfer Learning and Fitness Prediction

For protein language models like ESM, performance is often measured by their effectiveness in transfer learning—using pre-trained model embeddings as features for predicting functional properties like stability or activity.

Table 2: Transfer Learning Performance of ESM Models

| Model Size Category | Parameter Range | Recommended Context | Key Findings |
|---|---|---|---|
| Small Models | <100 million | Limited data scenarios | - |
| Medium Models (ESM-2 650M, ESM C 600M) | 100M - 1B | Optimal balance for most realistic datasets | Perform nearly as well as larger models despite being many times smaller [31] |
| Large Models (ESM-2 15B, ESM C 6B) | >1 billion | Data-rich environments | Maximum performance but with high computational cost [31] |

A critical finding from systematic evaluations is that larger models do not necessarily outperform smaller ones, especially when training data is limited. Medium-sized models such as ESM-2 650M and ESM C 600M demonstrate consistently good performance, falling only slightly behind their larger counterparts while offering dramatically better computational efficiency [31]. This makes them particularly suitable for practical laboratory settings where computational resources may be constrained.

Embedding Compression for Transfer Learning

When using pLM embeddings for transfer learning, the high dimensionality of these representations often necessitates compression before downstream prediction tasks. Research comparing various compression methods has found that mean pooling (averaging embeddings across all sequence positions) consistently outperforms more complex alternatives like max pooling, inverse Discrete Cosine Transform (iDCT), and PCA [31]. This holds true particularly for diverse protein sequences, where mean pooling led to an increase in variance explained between 20 and 80 percentage points compared to other methods [31].
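Mean pooling reduces an L × d matrix of per-residue embeddings to a single d-dimensional vector, so sequences of different lengths map to features of the same size. The sketch below uses plain lists rather than any particular library, and assumes the matrix carries one start-of-sequence and one end-of-sequence token row, as ESM-style tokenizers typically add; check how your own embeddings were extracted before dropping rows:

```python
def mean_pool(per_residue, drop_special=True):
    """Average per-residue embeddings into one fixed-length vector.

    per_residue: list of L vectors (lists of floats). If drop_special is True,
    the first and last rows are treated as start/end tokens and excluded.
    """
    rows = per_residue[1:-1] if drop_special else per_residue
    if not rows:
        raise ValueError("no residue rows left to pool")
    dim = len(rows[0])
    return [sum(r[d] for r in rows) / len(rows) for d in range(dim)]
```

The pooled vector can then be passed to any standard regressor for a stability or activity prediction task.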

Experimental Validation and Functional Success

Experimental Protocols for Model Validation

Rigorous experimental validation is crucial for establishing the real-world utility of computational designs. Standard protocols include:

Deep Mutational Scanning (DMS) Validation: For mutational effect predictions, models are evaluated by correlating their prediction scores (e.g., log-likelihoods from inverse folding models) with experimentally measured fitness or binding affinity changes (ΔΔG) from DMS experiments [34] [35]. Spearman correlation between predicted and experimental values is a common metric.

Structure-Based Sequence Recovery: This protocol evaluates a model's ability to reproduce native sequences given their backbone structures. The benchmark typically uses held-out test sets from the Protein Data Bank, and designs are evaluated by amino acid recovery rate and by structural consistency metrics such as scTM, the self-consistency TM-score between the target backbone and the structure predicted for the designed sequence [36].
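Amino acid recovery is simple to compute once a designed sequence and the native sequence are aligned position-by-position to the same backbone, as in fixed-backbone benchmarks. The sketch below assumes that alignment; the function name and the sequence pairs are hypothetical, and the summary statistic across the test set (median here) is one common choice rather than a fixed standard.

```python
from statistics import median


def sequence_recovery(designed: str, native: str) -> float:
    """Fraction of positions where the designed residue matches the native one.

    Assumes both sequences index the same backbone positions in the same order.
    """
    if len(designed) != len(native):
        raise ValueError("sequences must be the same length")
    if not native:
        raise ValueError("empty sequence")
    matches = sum(d == n for d, n in zip(designed, native))
    return matches / len(native)


# Hypothetical (designed, native) pairs from a held-out benchmark set.
pairs = [("MKTAYIAK", "MKVAYLAK"), ("GSHMLE", "GSHMLE"), ("ACDE", "VCDF")]
recoveries = [sequence_recovery(d, n) for d, n in pairs]
print(recoveries)          # [0.75, 1.0, 0.5]
print(median(recoveries))  # 0.75
```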

Functional Characterization of Designed Proteins: Designed sequences are produced experimentally (typically by gene synthesis and recombinant expression) and tested for their target functions. For enzymes, this involves activity assays under specific substrate conditions; for binding proteins, surface plasmon resonance (SPR) or similar biophysical methods quantify binding affinity and specificity [1] [33]. Thermostability is commonly assessed by measuring the melting temperature (Tₘ) with differential scanning fluorimetry (DSF).
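One common way to read Tₘ from a differential scanning fluorimetry melt curve is to take the temperature at which the fluorescence rises most steeply (the maximum of dF/dT); fitting a sigmoidal model is another. The sketch below implements only the derivative approach, assumes temperatures sorted in ascending order and a dye signal that increases on unfolding, and applies no smoothing. The function name and the synthetic curves are hypothetical; ΔTₘ is then simply the design's Tₘ minus the wild-type Tₘ.

```python
import math
from typing import Sequence


def tm_from_derivative(temps: Sequence[float], fluorescence: Sequence[float]) -> float:
    """Estimate Tm as the temperature of the steepest fluorescence increase."""
    if len(temps) != len(fluorescence) or len(temps) < 3:
        raise ValueError("need at least 3 paired readings")
    best_t, best_slope = temps[1], float("-inf")
    for i in range(1, len(temps) - 1):
        # Central finite difference approximates dF/dT at temps[i].
        slope = (fluorescence[i + 1] - fluorescence[i - 1]) / (temps[i + 1] - temps[i - 1])
        if slope > best_slope:
            best_t, best_slope = temps[i], slope
    return best_t


temps = list(range(30, 81))  # °C, 1-degree steps
# Synthetic melt curves: a design with midpoint 55 °C and a wild type with midpoint 48 °C.
design = [1.0 / (1.0 + math.exp(-(t - 55) / 2.0)) for t in temps]
wild_type = [1.0 / (1.0 + math.exp(-(t - 48) / 2.0)) for t in temps]
print(tm_from_derivative(temps, design))  # 55
delta_tm = tm_from_derivative(temps, design) - tm_from_derivative(temps, wild_type)
print(delta_tm)  # 7
```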

Case Studies of Experimentally Validated Designs

LigandMPNN for Small-Molecule Binding: LigandMPNN has been used to design over 100 experimentally validated small-molecule and DNA-binding proteins, with high affinity and structural accuracy confirmed by X-ray crystallography. In one instance, redesigning Rosetta small-molecule binder designs increased binding affinity by as much as 100-fold [33].

ABACUS-T for Functional Enzyme Design: ABACUS-T has demonstrated remarkable success in redesigning enzymes while maintaining or enhancing function. A redesigned allose-binding protein achieved 17-fold higher affinity while retaining its conformational change; redesigned endo-1,4-β-xylanase and TEM β-lactamase maintained or surpassed wild-type activity with substantially increased thermostability (ΔTₘ ≥ 10 °C) [1].

AiCE Framework for Base Editor Engineering: The AiCE approach, which uses inverse folding models to identify high-fitness mutations, successfully developed enhanced base editors including enABE8e, enSdd6-CBE (with 1.3-fold improved fidelity), and enDdd1-DdCBE (with up to 14.3-fold enhanced mitochondrial activity) [37].

Experimental Workflow for Computational Protein Design

Table 3: Key Research Reagents and Computational Tools for Protein Design

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| ESM-2/ESM-C Models | Protein Language Model | Generate sequence embeddings; predict variant effects | Transfer learning for function prediction; zero-shot mutation effect prediction [31] [38] |
| ProteinMPNN | Inverse Folding Model | Sequence design for given backbones | General protein design; stable scaffold generation [33] [38] |
| LigandMPNN | Specialized Inverse Folding | Sequence design with molecular context | Enzyme active site design; small-molecule binder design [33] |
| AntiFold | Specialized Inverse Folding | Antibody CDR sequence design | Therapeutic antibody engineering [34] [35] |
| ABACUS-T | Multimodal Inverse Folding | Sequence design with MSA and conformational states | Functional enzyme design with stability enhancements [1] |
| Rosetta | Biophysical Suite | Structure modeling, refinement, and scoring | Physics-based validation and refinement of ML designs [38] |
| AlphaFold2 | Structure Prediction | Protein 3D structure prediction | In silico validation of designed sequences [38] |
| SAbDab | Data Repository | Structural antibody database | Curated datasets for antibody design benchmarking [34] [35] |

Integrated Design Strategies and Best Practices

Hybrid Approaches and Consensus Strategies

The most successful protein design pipelines often combine multiple AI approaches rather than relying on a single model. Research indicates that sampling sequences from an average of predictions across multiple models (ESM-2, MIF-ST, ProteinMPNN) can yield superior results compared to individual models alone [38]. Furthermore, integrating AI-based sampling with biophysics-based scoring and refinement using tools like Rosetta remains a powerful strategy, as ML models excel at purging deleterious mutations while physical scoring can provide critical validation [38].
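The consensus idea can be sketched as averaging per-position amino acid probability tables from several models and then sampling from the averaged distribution. This is a minimal sketch under stated assumptions: the probability tables below are hypothetical toy numbers (extracting them from ESM-2, MIF-ST, or ProteinMPNN works differently for each model and is not shown), the uniform arithmetic mean is an assumed weighting, and the function names are hypothetical.

```python
import random
from typing import Dict, List, Sequence

Profile = List[Dict[str, float]]  # one amino-acid probability table per position


def average_profiles(profiles: Sequence[Profile]) -> Profile:
    """Average per-position amino-acid probabilities across models (uniform weights)."""
    n_models = len(profiles)
    length = len(profiles[0])
    if any(len(p) != length for p in profiles):
        raise ValueError("all models must score the same number of positions")
    averaged: Profile = []
    for pos in range(length):
        aas = set().union(*(p[pos] for p in profiles))  # residues any model proposes here
        averaged.append({aa: sum(p[pos].get(aa, 0.0) for p in profiles) / n_models for aa in sorted(aas)})
    return averaged


def sample_sequence(profile: Profile, rng: random.Random) -> str:
    """Draw one residue per position from the averaged distribution."""
    return "".join(
        rng.choices(list(probs), weights=list(probs.values()), k=1)[0] for probs in profile
    )


# Hypothetical outputs from two models over a 2-residue region.
model_a = [{"A": 0.9, "G": 0.1}, {"L": 0.5, "I": 0.5}]
model_b = [{"A": 0.5, "G": 0.5}, {"L": 1.0}]
averaged = average_profiles([model_a, model_b])
print({aa: round(p, 2) for aa, p in averaged[0].items()})  # {'A': 0.7, 'G': 0.3}
print(sample_sequence(averaged, random.Random(0)))
```

Sampled sequences from such a consensus would then go on to physics-based scoring or refinement, for example with Rosetta.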

Practical Recommendations for Different Scenarios

Model Selection Guide for Different Protein Design Scenarios

Based on the benchmarking data and experimental validations, the following strategic recommendations emerge:

For general protein design tasks without specialized context, ProteinMPNN provides robust performance and high speed. Supplementing with ESM-2 embeddings (from medium-sized models) for scoring can improve functional outcomes [31] [38].

For antibody engineering, specialized models like AntiFold (for Fab antibodies) or LM-Design (for VHH and diverse antibody types) significantly outperform general-purpose models due to their domain-specific training [34] [35].

For enzyme design and small-molecule binding proteins, LigandMPNN is the current state of the art, explicitly modeling interactions with non-protein atoms [33]. For complex enzymatic functions requiring conformational dynamics, ABACUS-T's integration of multiple backbone states and evolutionary information is advantageous [1].

Under data-limited conditions for downstream prediction tasks, medium-sized ESM models (e.g., ESM-2 650M) with mean-pooled embeddings offer the best balance of performance and efficiency [31].

The AI revolution in protein design has matured beyond proof-of-concept demonstrations to deliver robust, experimentally validated tools that are accelerating therapeutic development and basic research. Protein language models like ESM and inverse folding tools like ProteinMPNN represent complementary approaches in the computational toolbox, each with distinct strengths and optimal application domains. The benchmarking data and case studies presented here provide a framework for researchers to select appropriate models based on their specific design objectives, whether engineering therapeutic antibodies, designing functional enzymes, or predicting mutation effects. As the field continues to evolve, the integration of these data-driven approaches with physics-based methods and high-throughput experimental validation will further expand the boundaries of what is possible in protein design.

The field of de novo protein design is undergoing a revolutionary transformation, moving from reliance on natural templates to the computational creation of entirely novel proteins with customized functions. This paradigm shift is largely driven by the emergence of artificial intelligence (AI) and generative models that can explore the vast, uncharted regions of the protein functional universe [6]. Among these tools, RFdiffusion has established itself as a powerful and versatile framework for designing novel protein structures and functions from simple molecular specifications [9] [39]. This guide provides an objective comparison of RFdiffusion's performance against other computational methods, grounded in experimental validation data that demonstrates its capabilities and current limitations. The ability to design proteins with atomic accuracy opens new avenues for therapeutic development, enzyme engineering, and synthetic biology [40] [41].

The fundamental challenge in de novo protein design stems from the astronomical scale of possible protein sequences. For a mere 100-residue protein, there are approximately 20^100 (≈1.27 × 10^130) possible amino acid arrangements, exceeding the estimated number of atoms in the observable universe by more than fifty orders of magnitude [6]. Conventional protein engineering methods, such as directed evolution, remain tethered to natural evolutionary pathways and require experimental screening of immense variant libraries, confining discovery to incremental improvements within well-explored neighborhoods of the sequence-structure space [6]. RFdiffusion and other AI-driven approaches transcend these limitations by enabling systematic exploration of genuinely novel functional regions that lie beyond natural evolutionary boundaries.
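The sequence-space arithmetic can be checked directly with exact integers. The figure of 10^80 atoms in the observable universe is an assumed round estimate (published estimates vary by a couple of orders of magnitude), not a value from the source.

```python
import math

n_sequences = 20 ** 100  # exact integer: 20 possible amino acids at each of 100 positions
exponent = 100 * math.log10(20)
mantissa = 10 ** (exponent - math.floor(exponent))
print(f"20^100 ≈ {mantissa:.2f} × 10^{math.floor(exponent)}")  # 20^100 ≈ 1.27 × 10^130
print(len(str(n_sequences)))  # 131 digits

ATOMS_IN_UNIVERSE_LOG10 = 80  # assumed rough estimate: about 10^80 atoms
print(f"{math.log10(n_sequences) - ATOMS_IN_UNIVERSE_LOG10:.1f}")  # 50.1 orders of magnitude
```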

Table: Key Milestones in AI-Driven De Novo Protein Design

| Year | Development | Significance |
|---|---|---|
| 2023 | RFdiffusion Introduction [9] | Demonstrated de novo design of protein structures and binders using diffusion models |
| 2024 | RFdiffusion for Antibodies [40] | Achieved atomically accurate design of antibody variable heavy chains (VHHs) and scFvs |
| 2025 | RFdiffusion3 [41] | Extended capabilities to all-atom biomolecular design including protein-DNA and protein-ligand interactions |
| 2025 | AlphaDesign Framework [42] | Introduced hallucination-based alternative combining AlphaFold with autoregressive diffusion models |

| 2025 | AlphaDesign Framework [42] | Introduced hallucination-based alternative combining AlphaFold with autoregressive diffusion models |

Core Architecture and Training

RFdiffusion operates on a denoising diffusion probabilistic model (DDPM) framework, similar to those used for generating images from text prompts [9] [43]. The method was developed by fine-tuning the RoseTTAFold structure prediction network on protein structure denoising tasks, leveraging its deep understanding of protein sequence-structure relationships [9]. The model uses a rigid-frame representation of protein backbones comprising Cα coordinates and N-Cα-C orientations for each residue [9].
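To make the rigid-frame representation concrete, the sketch below builds one residue's frame from its N, Cα, and C coordinates using the Gram-Schmidt construction described for AlphaFold2-style models (the "rigidFrom3Points" procedure): the first axis points from Cα to C, the second is the N direction with the first-axis component removed, and the third is their cross product, with Cα as the translation. RFdiffusion's exact axis convention may differ; the helper names and coordinates are hypothetical.

```python
import math
from typing import List, Tuple

Vec = Tuple[float, float, float]


def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def scale(a: Vec, s: float) -> Vec:
    return (a[0] * s, a[1] * s, a[2] * s)


def normalize(a: Vec) -> Vec:
    return scale(a, 1.0 / math.sqrt(dot(a, a)))


def cross(a: Vec, b: Vec) -> Vec:
    return (a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0])


def residue_frame(n: Vec, ca: Vec, c: Vec) -> Tuple[List[Vec], Vec]:
    """Return (orthonormal axes e1, e2, e3; translation = Cα position) for one residue."""
    e1 = normalize(sub(c, ca))
    u2 = sub(n, ca)
    e2 = normalize(sub(u2, scale(e1, dot(u2, e1))))  # Gram-Schmidt: remove the e1 component
    e3 = cross(e1, e2)  # right-handed third axis
    return [e1, e2, e3], ca


# Hypothetical, roughly idealized backbone coordinates (Å) for one residue.
axes, origin = residue_frame(n=(-0.5, 1.4, 0.0), ca=(0.0, 0.0, 0.0), c=(1.5, 0.0, 0.0))
```

Each residue is thus reduced to a rotation plus a translation, which is the representation the diffusion process noises and denoises.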

The training process involves a noising schedule that corrupts protein structures from the Protein Data Bank (PDB) over multiple timesteps toward random prior distributions [40]. During training, a PDB structure and random timestep are sampled, noise is applied, and RFdiffusion learns to predict the de-noised structure. At inference time, the process starts from random noise and iteratively refines it through a reverse denoising process to generate novel protein backbones [40] [9]. This approach enables the generation of diverse protein structures not limited to existing folds in nature.
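The forward (noising) half of this process can be sketched for the translational part alone, using the standard DDPM closed form x_t = sqrt(ᾱ_t)·x_0 + sqrt(1 − ᾱ_t)·ε with ε drawn from a unit Gaussian. This is a simplified illustration, not RFdiffusion's code: the linear β schedule (1e-4 to 0.02 over 1000 steps) comes from the original DDPM formulation and is only an assumption here, RFdiffusion noises residue orientations separately on the rotation manifold (omitted), and real pipelines rescale coordinates before noising. All names are hypothetical.

```python
import math
import random
from typing import List, Tuple

Coord = Tuple[float, float, float]


def linear_alpha_bars(num_steps: int = 1000, beta_start: float = 1e-4, beta_end: float = 0.02) -> List[float]:
    """Return alpha_bar_t = prod_{s<=t} (1 - beta_s) for a linear beta schedule."""
    alpha_bars: List[float] = []
    running = 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / max(num_steps - 1, 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def noise_ca(ca: List[Coord], alpha_bar: float, rng: random.Random) -> List[Coord]:
    """Sample x_t ~ N(sqrt(alpha_bar) * x_0, (1 - alpha_bar) * I) independently per coordinate."""
    signal, sigma = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [
        (signal * x + sigma * rng.gauss(0.0, 1.0),
         signal * y + sigma * rng.gauss(0.0, 1.0),
         signal * z + sigma * rng.gauss(0.0, 1.0))
        for x, y, z in ca
    ]


rng = random.Random(0)
schedule = linear_alpha_bars()
# Toy Cα trace in arbitrary (already rescaled) units.
ca_trace = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
x_early = noise_ca(ca_trace, schedule[0], rng)  # almost the input structure
x_late = noise_ca(ca_trace, schedule[-1], rng)  # nearly pure Gaussian noise
```

During training, a random timestep t is drawn, the structure is noised to x_t as above, and the network learns to predict the clean structure; generation runs this in reverse starting from noise.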

Specialized Implementations for Different Design Challenges

The RFdiffusion framework has been adapted for specialized protein design tasks through targeted fine-tuning:

Antibody Design: A specialized version fine-tuned on antibody structures enables de novo generation of antibody variable heavy chains (VHHs), single-chain variable fragments (scFvs), and full antibodies that bind to user-specified epitopes with atomic-level precision [40] [44]. This implementation conditions on the framework structure and sequence while designing the complementarity-determining regions (CDRs) and the overall rigid-body placement of the antibody relative to its target.

All-Atom Design (RFdiffusion3): The latest iteration operates at atomic resolution, capable of generating protein backbones, sidechains, and complex interactions with ligands, DNA, and other non-protein molecules simultaneously [41]. This unified framework employs co-diffusion, generating the protein and its binding partner concurrently for more natural interfaces.

Symmetric Assemblies: RFdiffusion can design higher-order symmetric architectures by applying symmetry operations to the initial noise, enabling creation of complex oligomers with cyclic, dihedral, and tetrahedral symmetries [9] [45].
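The symmetry-operation step can be illustrated for the simplest case, cyclic (Cₙ) symmetry: one subunit's coordinates (for example, its noised Cα positions) are copied and rotated about a shared axis in steps of 360/n degrees. This sketch assumes the symmetry axis is the z axis and covers only cyclic groups; dihedral and tetrahedral assemblies need additional symmetry operators. It is not RFdiffusion's implementation, and the function name is hypothetical.

```python
import math
from typing import List, Tuple

Coord = Tuple[float, float, float]


def cyclic_copies(subunit: List[Coord], n: int) -> List[List[Coord]]:
    """Build a C_n assembly by rotating one subunit about the z axis in 360/n degree steps."""
    if n < 1:
        raise ValueError("n must be positive")
    assembly = []
    for k in range(n):
        theta = 2.0 * math.pi * k / n
        c, s = math.cos(theta), math.sin(theta)
        # Standard rotation about z; the z coordinate is unchanged.
        assembly.append([(c * x - s * y, s * x + c * y, z) for x, y, z in subunit])
    return assembly


# Hypothetical two-point subunit replicated into a C3 trimer.
trimer = cyclic_copies([(5.0, 0.0, 0.0), (6.0, 1.0, 2.0)], 3)
```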

Performance Comparison: RFdiffusion vs. Alternative Methods

Computational Benchmarking

Table: Computational Performance Metrics Across Design Methods

| Method | Monomer Design Success Rate | Binder Design Success | All-Atom Design Capability | Sequence-Structure Consistency |
|---|---|---|---|---|
| RFdiffusion | 72-98% (50-300 AA) [42] | High success for protein-protein interfaces [9] | Yes (RFdiffusion3) [41] | High (pAE <5, RMSD <2 Å) [9] |
| AlphaDesign | 73-98% (50-300 AA) [42] | Limited beyond short peptides [42] | No (relies on AlphaFold) | High (pLDDT >70, scRMSD <2 Å) [42] |
| Physics-Based (Rosetta) | Variable, length-dependent [6] | Requires extensive sampling [6] | Limited (computationally expensive) | Lower (force field approximations) [6] |
| Earlier Deep Learning | Limited success in generating foldable sequences [9] | Limited to helical/strand interactions [40] | No | Variable (often poor for novel folds) |

The computational success rates demonstrate RFdiffusion's strong performance across various design challenges. For monomer design, success rates remain high (72-98%) even for larger proteins up to 300 residues [42]. In binder design, RFdiffusion has demonstrated particular strength, with cryo-electron microscopy structures confirming near-atomic accuracy in designed binders complexed with targets like influenza haemagglutinin [9].
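In silico success rates like these come from applying pass/fail thresholds to structure-prediction metrics for each design and reporting the passing fraction. The sketch below uses the RFdiffusion thresholds listed in the comparison table (pAE below 5 and RMSD below 2 Å) as adjustable defaults; other criteria such as a pLDDT cutoff could be added the same way. The class, function names, and design metrics are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class DesignMetrics:
    name: str
    mean_pae: float  # predicted aligned error reported by the structure predictor
    rmsd: float      # Å, predicted structure vs. designed backbone


def passes(m: DesignMetrics, max_pae: float = 5.0, max_rmsd: float = 2.0) -> bool:
    """A design counts as an in silico success only if it clears every threshold."""
    return m.mean_pae < max_pae and m.rmsd < max_rmsd


def success_rate(designs: Iterable[DesignMetrics], **thresholds: float) -> float:
    designs = list(designs)
    if not designs:
        raise ValueError("no designs to evaluate")
    return sum(passes(d, **thresholds) for d in designs) / len(designs)


# Hypothetical metrics for four designs.
batch = [
    DesignMetrics("d1", mean_pae=3.2, rmsd=1.1),
    DesignMetrics("d2", mean_pae=6.0, rmsd=1.5),
    DesignMetrics("d3", mean_pae=4.1, rmsd=2.4),
    DesignMetrics("d4", mean_pae=2.0, rmsd=0.8),
]
print(success_rate(batch))                # 0.5
print(success_rate(batch, max_rmsd=3.0))  # 0.75
```

Because reported rates depend directly on these cutoffs, success rates from different methods are only comparable when the thresholds match.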

Experimental Validation

The ultimate test of any protein design method lies in experimental characterization of designed proteins. RFdiffusion has been extensively validated through wet-lab experiments:

Structural Validation: Cryo-EM analysis of five designed antibodies targeting influenza haemagglutinin and Clostridium difficile toxin B confirmed that four interacted with their binding partners exactly as intended, demonstrating remarkable accuracy in computational design [40] [44]. High-resolution structures verified atomic accuracy of designed complementarity-determining regions (CDRs).

Functional Characterization: For enzyme design, RFdiffusion3 successfully scaffolded catalytic motifs in 90% of tested cases, significantly outperforming previous methods. Experimental testing of a designed cysteine hydrolase demonstrated a catalytic efficiency (kcat/Km) of 3557 M⁻¹s⁻¹, and 35 of the 190 designs tested showed catalytic activity [41].
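The source does not say how kcat/Km was measured in [41]. As one common illustration only: when substrate concentration is far below Km, the Michaelis-Menten equation reduces to v = (kcat/Km)[E][S], so kcat/Km can be estimated as the least-squares slope, through the origin, of v/[E] against [S]. The sketch assumes that low-substrate regime; the function name and the rate data are hypothetical.

```python
from typing import Sequence


def kcat_over_km_low_substrate(
    substrate_m: Sequence[float], rates_m_per_s: Sequence[float], enzyme_m: float
) -> float:
    """Estimate kcat/Km (M^-1 s^-1) from initial rates measured at [S] << Km.

    Fits v/[E] = (kcat/Km) * [S] by least squares through the origin.
    """
    if len(substrate_m) != len(rates_m_per_s) or not substrate_m:
        raise ValueError("need paired, non-empty measurements")
    normalized = [v / enzyme_m for v in rates_m_per_s]  # turnover per enzyme, s^-1
    num = sum(s * y for s, y in zip(substrate_m, normalized))
    den = sum(s * s for s in substrate_m)
    return num / den


# Hypothetical initial-rate data: [S] in M, v in M/s, 1 µM enzyme.
substrate = [1e-5, 2e-5, 4e-5]
rates = [3.6e-8, 7.1e-8, 1.42e-7]
print(f"{kcat_over_km_low_substrate(substrate, rates, enzyme_m=1e-6):.0f}")  # 3552
```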

Binding Affinity: Initial computational designs typically exhibit modest affinity (tens to hundreds of nanomolar Kd), but affinity maturation using systems like OrthoRep enables production of single-digit nanomolar binders that maintain intended epitope selectivity [40].

Table: Experimental Success Metrics for RFdiffusion Designs

| Application | Experimental Success Rate | Key Performance Metrics | Validation Method |
|---|---|---|---|