Evaluating Mutation Effect Prediction Accuracy: From Traditional Algorithms to AI-Driven Models

Abstract

This article provides a comprehensive evaluation of mutation effect prediction tools for researchers and drug development professionals. It explores the foundational principles of these algorithms, compares the performance and methodology of traditional versus next-generation AI models, addresses key challenges like inter-algorithm disagreement and low negative predictive value, and outlines rigorous validation frameworks. The synthesis of current benchmarks reveals that while no single algorithm is perfectly accurate, strategic combination of tools and emerging multimodal deep learning methods significantly enhance prediction reliability for clinical and research applications.

The Foundation of Mutation Effect Prediction: Why Accuracy Matters in Genomics

Cancer cells accumulate hundreds to thousands of somatic mutations throughout their lifetime, yet only a select few—termed "driver mutations"—directly promote cancer progression by conferring a selective growth advantage [1]. The vast majority are "passenger mutations," biologically neutral events that do not contribute to tumorigenesis but accumulate passively during cell division [1]. In a pan-cancer cohort of 160,969 patients, approximately 80% of somatic mutations detected were variants of unknown significance (VUS), creating a substantial interpretation challenge for clinicians and researchers [2]. The clinical implications of accurately distinguishing these mutation types are profound, as driver mutations influence cell cycle control, insensitivity to growth inhibitory signals, and immune escape mechanisms [1].

The distribution of driver mutations is highly heterogeneous, ranging from about one driver mutation per patient in sarcomas, thyroid, and testicular cancers, to approximately four in bladder, endometrial, and colorectal cancers [1]. This classification is further complicated by the context-dependent nature of some mutations, where "latent drivers" may only promote cancer progression at certain disease stages or in conjunction with other genetic alterations [1]. The ability to accurately identify driver mutations from this genetic noise has become a cornerstone of precision oncology, directly informing diagnosis, prognostic stratification, and therapeutic targeting.

Computational frameworks for driver mutation prediction

Methodological approaches and underlying principles

Computational methods for driver mutation prediction leverage distinct biological principles and data types, leading to varied performance characteristics:

Evolution-based methods primarily rely on evolutionary conservation metrics, operating under the principle that genomic positions critical for function are conserved across species and thus intolerant to mutation [2]. Methods incorporating protein structure leverage 3D protein information, predicting that mutations disrupting binding sites or folding are more likely to be pathogenic [2]. Ensemble and deep learning methods integrate multiple data types—including evolutionary, structural, and functional genomic features—using high-dimensional machine learning architectures [2]. Tumor type-specific methods incorporate cancer-specific signals like mutational recurrence and 3D clustering patterns within particular cancer contexts [2].
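As a minimal illustration of the conservation principle behind evolution-based methods, the sketch below scores each column of a toy alignment by Shannon entropy. The alignment and the function name `column_entropy` are hypothetical, and real tools rely on much richer models (phylogeny, sequence weighting, substitution matrices), so this is only a sketch of the idea.

```python
import math
from collections import Counter

def column_entropy(column):
    """Shannon entropy (in bits) of the residues in one alignment column; gaps are ignored."""
    residues = [aa for aa in column if aa != "-"]
    counts = Counter(residues)
    total = len(residues)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())

# Toy alignment (hypothetical sequences, one string per homolog).
msa = [
    "MKVLA",
    "MKILA",
    "MRVLG",
    "MKVLS",
]

# zip(*msa) transposes the alignment so that each item is one column.
entropies = [column_entropy(col) for col in zip(*msa)]

# Entropy 0 means every homolog carries the same residue at that position.
conserved_positions = [i for i, h in enumerate(entropies) if h == 0.0]
```

Under the conservation assumption, a substitution at one of the zero-entropy positions would be treated as more likely damaging than one at a variable position.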

Performance comparison of leading prediction tools

Table 1: Performance comparison of computational methods for identifying known cancer drivers

| Method Category | Representative Tools | AUROC (Oncogenes) | AUROC (Tumor Suppressors) | Key Strengths |
|---|---|---|---|---|
| Deep Learning (Multimodal) | AlphaMissense | 0.98 | 0.98 | Superior performance identifying known pathogenic mutations |
| Ensemble Methods | VARITY, REVEL | 0.85-0.95 | 0.90-0.97 | Strong performance leveraging human-curated data |
| Evolution-based Methods | EVE | 0.83 | 0.92 | Best-performing evolution-only method |
| Tumor Type-Specific | CHASMplus, BoostDM | Varies by context | Varies by context | Captures cancer-specific mutational patterns |

In benchmarking studies, methods incorporating protein structure or functional genomic data consistently outperformed those trained exclusively on evolutionary conservation [2]. AlphaMissense significantly surpassed other deep learning methods and best-in-class alternatives for predicting oncogenic mutations, achieving an AUROC of 0.98 for both oncogenes and tumor suppressor genes at the population level [2]. Ensemble methods like VARITY and REVEL, trained on human-curated data, outperformed CADD, which utilizes weaker population-derived labels [2]. Notably, sensitivity was generally higher for tumor suppressor genes than oncogenes across all methods, though significant gene-level variation exists [2].
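For readers less familiar with the AUROC values quoted above, the following sketch computes AUROC directly from its rank interpretation: the probability that a randomly chosen positive example scores higher than a randomly chosen negative one. The labels and scores are invented for illustration and are not taken from the benchmark; in practice a library routine such as scikit-learn's `roc_auc_score` would normally be used.

```python
def auroc(labels, scores):
    """AUROC as P(score of a random positive > score of a random negative); ties count 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

# Hypothetical data: 1 = annotated oncogenic, 0 = presumed benign.
labels = [1, 1, 1, 0, 0, 0]
scores = [0.95, 0.80, 0.40, 0.60, 0.30, 0.10]
example_auroc = auroc(labels, scores)  # 8 of the 9 positive/negative pairs are ordered correctly
```

The quadratic pairwise loop is chosen for readability; it is fine for small examples but a rank-based implementation scales better.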

Experimental validation: Bridging computational prediction and clinical relevance

Validation methodologies for predicted driver mutations

Validating computational predictions presents significant challenges, as traditional functional assays are labor-intensive and can only characterize a limited number of variants [2]. Recent work has therefore relied on four key validation strategies that use real-world patient data:

- Association with known binding sites: Testing whether mutations predicted as pathogenic are enriched at protein-protein interaction or ligand binding residues [2]

- Clinical outcome correlation: Assessing whether VUSs predicted as pathogenic associate with worse overall survival in patient cohorts [2]

- Pathway mutual exclusivity: Determining if predicted driver mutations exhibit mutual exclusivity with known oncogenic alterations in the same pathways [2]

- Drug response association: Validating predictions by correlation with treatment responses in clinically annotated datasets [2]

In one comprehensive analysis, mutations affecting binding residues were significantly more likely to be annotated as oncogenic (Fisher's test, q-value = 0, odds ratio = 10.02, 95% CI = [9.45, 10.63]) [2]. Furthermore, mutations occurring at binding residues were universally more likely to be reclassified as pathogenic across computational methods [2].
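To make the odds ratio and its confidence interval concrete, here is a small sketch that computes both from a 2x2 contingency table. The counts are hypothetical and were not taken from the cited analysis, and the interval uses the Wald (log odds ratio) approximation rather than the exact method that accompanies Fisher's test, so it should be read only as an illustration of the quantities involved.

```python
import math

def odds_ratio_ci(a, b, c, d, z=1.96):
    """Odds ratio and Wald 95% CI for the 2x2 table [[a, b], [c, d]].

    a: binding-site mutations annotated oncogenic
    b: binding-site mutations not annotated oncogenic
    c: other mutations annotated oncogenic
    d: other mutations not annotated oncogenic
    """
    odds_ratio = (a * d) / (b * c)
    # Standard error of log(odds ratio) under the Wald approximation.
    se_log_or = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower = math.exp(math.log(odds_ratio) - z * se_log_or)
    upper = math.exp(math.log(odds_ratio) + z * se_log_or)
    return odds_ratio, lower, upper

# Hypothetical counts chosen only to give an odds ratio of similar magnitude.
odds_ratio, ci_low, ci_high = odds_ratio_ci(a=200, b=800, c=100, d=3900)
```

An interval that lies entirely above 1, as in this toy table, indicates enrichment of oncogenic annotations at binding residues.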

Clinical validation in non-small cell lung cancer

Table 2: Clinical validation of AI-predicted driver mutations in NSCLC patient cohorts

| Validation Metric | Gene Example | Finding | Clinical Significance |
|---|---|---|---|
| Overall Survival | KEAP1 | "Pathogenic" VUSs associated with worse survival | Prognostic stratification |
| Overall Survival | SMARCA4 | "Pathogenic" VUSs associated with worse survival | Prognostic stratification |
| Pathway Mutual Exclusivity | Multiple | "Pathogenic" VUSs mutually exclusive with known drivers | Supports biological validity |
| Survival Discrimination | KEAP1/SMARCA4 | "Benign" VUSs showed no survival difference | Validates prediction specificity |

In two non-overlapping non-small cell lung cancer cohorts (N = 7965 and 977 patients), VUSs identified as pathogenic drivers by AI in KEAP1 and SMARCA4 were consistently associated with worse survival, unlike "benign" VUSs [2]. These "pathogenic" VUSs also exhibited mutual exclusivity with known oncogenic alterations at the pathway level, further supporting their biological validity as true driver events [2].

Advanced multi-representation frameworks and emerging approaches

Integrated frameworks for cancer classification and mutation interpretation

Next-generation prediction frameworks are increasingly adopting multi-representation approaches that integrate complementary data modalities. The GraphVar framework exemplifies this trend by generating both spatial variant maps (encoding gene-level variant categories as pixel intensities) and numeric feature matrices (capturing population allele frequencies and mutation spectra) [3]. This dual-stream architecture employs a ResNet-18 backbone to extract image-level features and a Transformer encoder to model numeric profiles, and it is reported to reach 99.82% accuracy across 33 cancer types in a cohort of 10,112 patients [3].

Similarly, DeepTarget represents a significant advancement in predicting cancer drug targets by integrating large-scale drug and genetic knockdown viability screens with omics data [4]. In benchmark testing, DeepTarget outperformed existing tools like RoseTTAFold All-Atom and Chai-1 in seven out of eight drug-target test pairs for predicting both primary and secondary drug targets and their mutation specificity [4].

Metabolic dependency prediction for "undruggable" drivers

For traditionally "undruggable" driver mutations, innovative approaches are identifying associated metabolic vulnerabilities. DeepMeta, a graph deep learning-based metabolic vulnerability prediction model, accurately predicts dependent metabolic genes for cancer samples based on transcriptome and metabolic network information [5]. This approach has successfully identified that CTNNB1 T41A-activating mutations show experimentally confirmed vulnerability to purine/pyrimidine metabolism inhibition [5]. Notably, TCGA patients with predicted pyrimidine metabolism dependency showed dramatically improved clinical responses to chemotherapeutic drugs targeting this pathway [5].

Critical datasets and knowledge bases

Table 3: Essential research resources for driver mutation prediction and validation

| Resource Name | Type | Primary Function | Key Application |
|---|---|---|---|
| OncoKB | Knowledge Base | Annotates pathogenic/actionable mutations | Validation benchmark for predictions |
| AACR Project GENIE | Dataset | Pan-cancer cohort of 160,969 patients | Training data and population-level validation |
| COSMIC Mutational Signatures | Database | Catalog of mutational patterns | Contextualizing mutation background |
| TCGA Data Portal | Data Repository | Somatic variant data across 33 cancer types | Model training and testing |

Computational tools and environments

The experimental protocols for evaluating driver mutation prediction methods typically utilize Python-based environments with specialized libraries including PyTorch for deep learning implementations, scikit-learn for performance metrics and traditional machine learning models, and custom pipelines for data preprocessing [3]. Critical computational steps include 10-fold cross-validation to mitigate overfitting, grid search for hyperparameter optimization, and stratified sampling to maintain class balance across cancer types [6] [3]. For model interpretation, SHAP analysis and Grad-CAM visualizations are employed to identify feature importance and localize decisive genomic patterns [6] [3].
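The stratified cross-validation step mentioned above can be sketched in a few lines of plain Python. The cancer-type labels and sample counts are invented, and the function name `stratified_k_fold` is hypothetical; scikit-learn's `StratifiedKFold` provides a maintained implementation of the same idea and would be the usual choice in a real pipeline.

```python
import random
from collections import defaultdict

def stratified_k_fold(labels, k=10, seed=0):
    """Yield (train_idx, test_idx) pairs with every class spread evenly over k folds."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal the shuffled samples of each class round-robin into the folds.
        for position, idx in enumerate(indices):
            folds[position % k].append(idx)

    for i in range(k):
        test_idx = sorted(folds[i])
        train_idx = sorted(idx for j, fold in enumerate(folds) if j != i for idx in fold)
        yield train_idx, test_idx

# Hypothetical cohort: 100 samples from three cancer types.
labels = ["BRCA"] * 50 + ["LUAD"] * 30 + ["SKCM"] * 20
splits = list(stratified_k_fold(labels, k=10))
```

Each test fold in this toy cohort keeps the 5:3:2 class ratio of the full data set, which is the property stratification is meant to guarantee.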

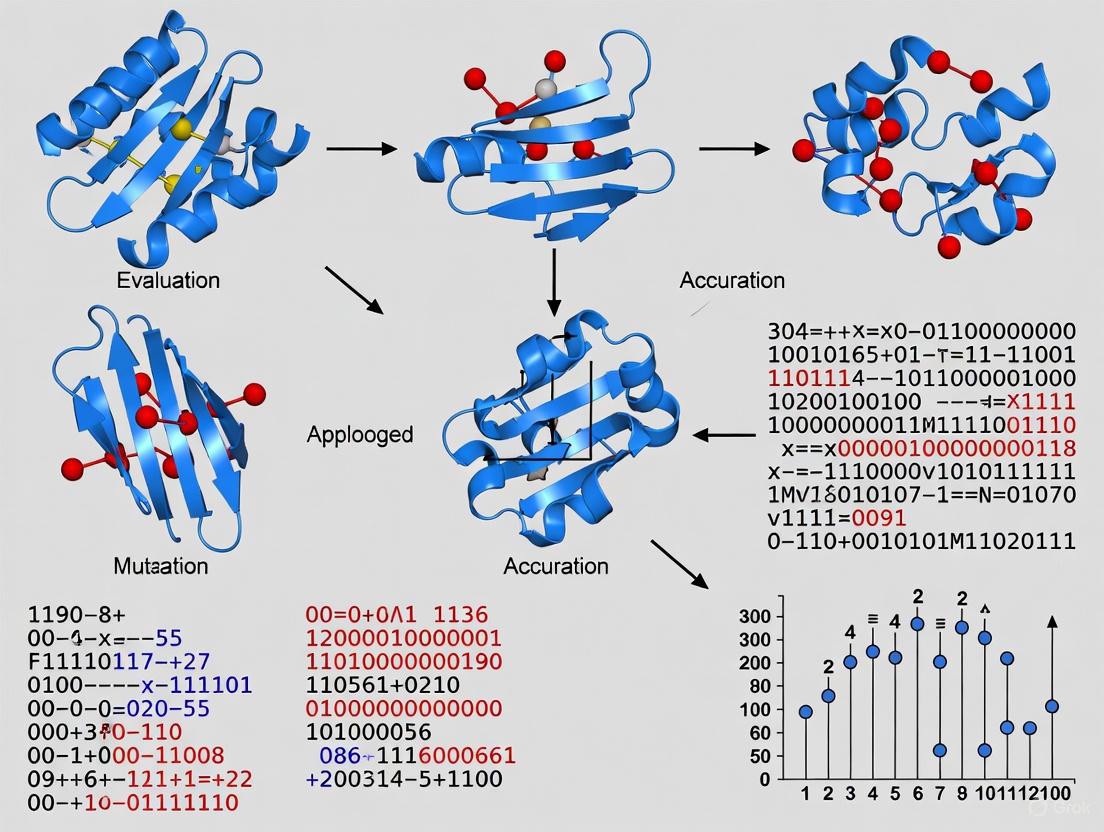

Visualizing experimental workflows and analytical frameworks

[Figure: Driver mutation prediction and validation workflow]

[Figure: Multi-representation framework architecture]

The field of driver mutation prediction has evolved from conservation-based methods to sophisticated multimodal frameworks that integrate structural, functional, and clinical data. Current evidence demonstrates that methods incorporating protein structure or functional genomic data outperform those trained exclusively on evolutionary conservation [2]. The clinical validation of these predictions represents the most critical step toward clinical translation, with studies showing that AI-predicted driver VUSs in genes like KEAP1 and SMARCA4 associate with worse survival in NSCLC patients [2]. Emerging approaches that predict metabolic dependencies for "undruggable" drivers and integrate multi-representation data streams offer promising avenues for expanding the therapeutic targeting of cancer driver mutations [3] [5]. As these computational tools mature, their integration into clinical decision-making pipelines holds tremendous potential for advancing personalized cancer therapy.

Accurately predicting the functional consequences of protein mutations is a fundamental challenge in computational biology with profound implications for understanding genetic diseases and engineering novel enzymes. The core premise underlying most modern prediction algorithms is that evolution and structure hold the key to discernment. These methods operate on the principle that positions in a protein critical for its function, stability, or folding are evolutionarily conserved, and that mutations disrupting favorable structural interactions are likely to be deleterious. This guide provides an objective comparison of the diverse algorithmic strategies—ranging from evolutionary analysis to physics-based simulations and deep learning—that leverage these two core principles, evaluating their performance, underlying protocols, and optimal applications based on current benchmarking studies.

The landscape of mutation effect predictors can be broadly categorized into several methodological paradigms, each with distinct approaches to utilizing evolutionary and structural data. The table below summarizes the core principles, data requirements, and outputs of the main types of algorithms.

Table 1: Comparison of Major Methodological Paradigms in Mutation Effect Prediction

| Method Paradigm | Core Principles | Primary Data Input | Representative Tools | Typical Output |
|---|---|---|---|---|
| Evolutionary Conservation | Quantifies site-specific evolutionary pressure from homologous sequences; conserved sites are assumed critical. | Multiple Sequence Alignments (MSAs), Phylogenetic Trees | SIFT, PROVEAN, phyloP, GERP++, LIST [7] [8] | Conservation score, Deleterious/Benign prediction |
| Taxonomy-Aware Evolution | Extends conservation by weighing sequence homologs based on taxonomic distance to the query species. | MSAs, Species Taxonomy Tree | LIST [7] | Pathogenicity probability score |
| Physics-Based Simulation | Uses molecular dynamics and statistical thermodynamics to calculate free energy changes (ΔΔG) from atomic forces. | Protein 3D Structure, Force Field Parameters | QresFEP-2 [9], FEP+ [9] | Estimated ΔΔG (kcal/mol) |
| AI & Multimodal Deep Learning | Learns complex sequence-structure-function relationships from vast datasets of protein sequences and structures. | Primary Sequence, Predicted/Experimental Structures | ProMEP [10], AlphaMissense [10], PrimateAI [11] | Fitness impact score, Pathogenicity probability |

Detailed Examination of Core Algorithms and Experimental Protocols

Evolutionary Conservation with Taxonomic Weighting: The LIST Algorithm

The LIST algorithm introduces a novel framework that moves beyond traditional conservation scores by explicitly incorporating the taxonomic distance of homologs [7].

Experimental Protocol:

- Input Processing: A multiple sequence alignment (MSA) of the protein of interest and its homologs is required.

- Variant Shared Taxa (VST) Calculation: For a given human variant at position τ, the algorithm identifies all sequences in the MSA with the matching amino acid. Among these, it selects the sequence with the highest local sequence identity to the human query. The VST score is defined as the number of branches in the taxonomy tree shared between humans and the species of the selected sequence [7].

- Shared Taxa Profile (STP) Calculation: This measure assesses position-specific variability across the taxonomy. For each position τ, it creates a vector where each element corresponds to a specific taxonomic distance (Shared Taxa value). The value stored is the highest local sequence identity found among all sequences at that taxonomic distance that do not match the human reference amino acid [7].

- Integration and Prediction: LIST uses a hierarchical combination of modules that leverage VST, STP, and amino acid swap-ability to generate a final pathogenicity prediction [7].
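The Variant Shared Taxa step described above can be sketched on toy data as follows. Everything here is an assumption made for illustration: the window used for "local sequence identity", the lineage lists, the handling of variants absent from the alignment, and the function names are hypothetical and are not taken from the LIST implementation.

```python
def local_identity(query, other, pos, half_window=3):
    """Fraction of identical residues in a window centred on pos (window size is an assumption)."""
    start = max(0, pos - half_window)
    end = min(len(query), pos + half_window + 1)
    matches = sum(1 for q, o in zip(query[start:end], other[start:end]) if q == o)
    return matches / (end - start)

def shared_taxa(lineage_a, lineage_b):
    """Number of leading taxonomy levels that two root-to-species lineages have in common."""
    shared = 0
    for a, b in zip(lineage_a, lineage_b):
        if a != b:
            break
        shared += 1
    return shared

def variant_shared_taxa(query, homologs, lineages, pos, variant_aa, query_species="human"):
    """VST-like score: shared taxa with the most locally similar homolog carrying variant_aa."""
    carriers = [(sp, seq) for sp, seq in homologs.items() if seq[pos] == variant_aa]
    if not carriers:
        return 0  # variant never observed in the alignment (this handling is an assumption)
    best_species, _ = max(carriers, key=lambda item: local_identity(query, item[1], pos))
    return shared_taxa(lineages[query_species], lineages[best_species])

# Hypothetical, simplified lineages (root first) and a toy gap-free alignment.
lineages = {
    "human": ["Eukaryota", "Metazoa", "Chordata", "Mammalia", "Primates"],
    "mouse": ["Eukaryota", "Metazoa", "Chordata", "Mammalia", "Rodentia"],
    "zebrafish": ["Eukaryota", "Metazoa", "Chordata", "Actinopteri"],
    "yeast": ["Eukaryota", "Fungi"],
}
query = "MKVLAGT"
homologs = {"mouse": "MKVLAGT", "zebrafish": "MKVIAGT", "yeast": "MRAISGS"}

vst_leu_to_ile = variant_shared_taxa(query, homologs, lineages, pos=3, variant_aa="I")
vst_leu_to_trp = variant_shared_taxa(query, homologs, lineages, pos=3, variant_aa="W")
```

In this toy case the isoleucine variant is carried by both zebrafish and yeast; zebrafish has the higher local identity to the human query, so the score is the three taxonomy levels shared by human and zebrafish. As we read the method, a variant amino acid seen only in distant species, or not at all, gives a low score.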

Physics-Based Free Energy Calculations: The QresFEP-2 Protocol

Physics-based methods like QresFEP-2 provide a first-principles approach by computationally simulating the biophysical process of mutation [9].

Experimental Protocol:

- System Preparation: The protocol starts with a high-resolution 3D structure of the protein (e.g., from PDB or AlphaFold). The structure is solvated in a water box, and ions are added to simulate physiological conditions.

- Hybrid Topology Construction: QresFEP-2 employs a "dual-like" hybrid topology. The protein backbone is treated with a single topology (unchanged), while the wild-type and mutant side chains are represented with separate, dual topologies. This avoids transforming atom types or bonded parameters, enhancing convergence and automation [9].

- Restraint Application: To ensure sufficient phase-space overlap during the simulation, positional restraints are applied between topologically equivalent atoms in the wild-type and mutant side chains if they are within 0.5 Å in the initial structure [9].

- Free Energy Perturbation (FEP): The simulation uses molecular dynamics to gradually transform the wild-type side chain into the mutant side chain over a series of discrete "windows" or "λ states." The relative free energy change (ΔΔG) is calculated by integrating the energy differences across these windows, providing a quantitative estimate of the mutation's impact on stability or binding affinity [9].
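The arithmetic behind the final step can be sketched with the classic exponential-averaging (Zwanzig) estimator. This is an assumption made for illustration: the text above does not say which estimator QresFEP-2 uses, the energy samples below are invented, and the folded-versus-reference thermodynamic cycle is the generic one used for stability calculations rather than a description of the protocol's internals.

```python
import math

K_B = 0.0019872041  # Boltzmann constant in kcal/(mol*K)

def zwanzig_window(delta_u, temperature=298.15):
    """Free energy change (kcal/mol) for one lambda window.

    delta_u holds sampled energy differences U(next lambda) - U(current lambda),
    evaluated on configurations drawn from the current lambda state.
    """
    kt = K_B * temperature
    boltzmann_avg = sum(math.exp(-du / kt) for du in delta_u) / len(delta_u)
    return -kt * math.log(boltzmann_avg)

def fep_total(windows, temperature=298.15):
    """Sum the per-window free energies along the wild-type -> mutant path."""
    return sum(zwanzig_window(w, temperature) for w in windows)

def ddg(folded_windows, reference_windows, temperature=298.15):
    """Relative free energy: transformation in the folded protein minus the reference state."""
    return fep_total(folded_windows, temperature) - fep_total(reference_windows, temperature)

# Invented samples: two lambda windows per leg, three energy differences each (kcal/mol).
folded = [[0.9, 1.1, 1.0], [0.4, 0.6, 0.5]]
reference = [[0.3, 0.5, 0.4], [0.2, 0.2, 0.2]]
example_ddg = ddg(folded, reference)
```

Production protocols typically use many more windows, far more samples per window, and often more robust estimators, so the numbers here carry no physical meaning.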

Multimodal Deep Learning: The ProMEP Framework

ProMEP represents the state-of-the-art in AI-driven methods, integrating both sequence and structural context without relying on computationally expensive MSAs [10].

Experimental Protocol:

- Model Architecture and Training: A multimodal deep neural network with ~659 million parameters is trained on ~160 million predicted protein structures from the AlphaFold database. The model uses a self-supervised objective, learning to complete missing elements from corrupted inputs using both sequence and structure information [10].

- Structure Representation: Protein structures are represented as atomic point clouds. A rotation- and translation-equivariant embedding module is used to capture 3D structural context, ensuring the model's predictions are invariant to the orientation of the input structure [10].

- Zero-Shot Effect Prediction: For a given mutation, ProMEP computes the log-likelihood of both the wild-type and mutant amino acids conditioned on the combined sequence and structure context of the entire protein. The effect is predicted from the log-ratio of these probabilities, enabling prediction without task-specific training [10].
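The zero-shot scoring step reduces to a log-ratio of two model probabilities. The sketch below shows only that arithmetic: the probability tables stand in for the output of a trained model and are entirely hypothetical, and summing per-site scores for multi-site variants is a simplifying assumption rather than a documented ProMEP behavior.

```python
import math

def mutation_score(site_probs, wt_aa, mut_aa):
    """Log-ratio score: log p(mutant residue) - log p(wild-type residue) at one site."""
    return math.log(site_probs[mut_aa]) - math.log(site_probs[wt_aa])

def multi_mutation_score(per_site_probs, mutations):
    """Sum of per-site log-ratios; additivity across sites is a simplifying assumption."""
    return sum(mutation_score(per_site_probs[pos], wt, mut) for pos, wt, mut in mutations)

# Hypothetical model output: residue probabilities at two positions given the full context.
per_site_probs = {
    41: {"T": 0.60, "A": 0.05, "S": 0.20},
    45: {"S": 0.50, "F": 0.10},
}

single = mutation_score(per_site_probs[41], "T", "A")
double = multi_mutation_score(per_site_probs, [(41, "T", "A"), (45, "S", "F")])
```

A negative score means the model considers the mutant residue less likely than the wild type in that context, which is read as a predicted loss of fitness.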

The logical workflow for ProMEP, from input to prediction, is outlined below.

Performance Benchmarking and Experimental Validation

Benchmarking on ClinVar/ExAC Datasets

The performance of taxonomy-aware evolutionary methods was rigorously tested against established conservation-based tools. Using a clinically relevant test set from ClinVar and ExAC, the LIST method achieved an Area Under the Curve (AUC) of 0.888, substantially outperforming phyloP (AUC: 0.820), SIFT (AUC: 0.818), and PROVEAN (AUC: 0.816) [7]. This demonstrates the predictive advantage gained by incorporating taxonomic distance.

Benchmarking on Protein Stability and Functional Datasets

The VenusMutHub benchmark, a comprehensive collection of 905 small-scale experimental datasets spanning 527 proteins, provides a robust platform for evaluating predictors on diverse properties like stability, activity, and binding affinity [12]. This resource is critical as it involves direct biochemical measurements rather than surrogate readouts.

In protein stability prediction, physics-based FEP protocols show excellent accuracy. The QresFEP-2 protocol was benchmarked on a dataset of nearly 600 mutations across 10 protein systems, demonstrating high accuracy and the highest computational efficiency among available FEP protocols [9].

For functional effect prediction, ProMEP was evaluated on the ProteinGym benchmark, which encompasses 1.43 million variants across 53 diverse proteins. ProMEP achieved state-of-the-art performance, with a particularly strong Spearman's rank correlation of 0.53 on the protein G dataset containing multiple mutations, outperforming the next-best model, AlphaMissense [10].
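Spearman's rank correlation, the metric quoted above, compares the ordering of predicted scores with the ordering of measured effects. A dependency-free sketch is shown below; the scores and measurements are invented, and `scipy.stats.spearmanr` would be the usual choice in practice.

```python
def average_ranks(values):
    """1-based ranks, with tied values receiving the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation of two equally long sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sum((a - mean_x) ** 2 for a in x) ** 0.5
    sd_y = sum((b - mean_y) ** 2 for b in y) ** 0.5
    return cov / (sd_x * sd_y)

def spearman(x, y):
    """Spearman's rho: Pearson correlation computed on the ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical predicted scores and measured fitness values for five variants.
predicted = [-2.1, -0.3, -1.5, 0.2, -3.0]
measured = [0.2, 0.9, 0.5, 0.8, 0.1]
rho = spearman(predicted, measured)
```

Because only ranks matter, the metric is insensitive to the scale of a predictor's scores, which is why it is widely used to compare tools with different output ranges.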

Table 2: Performance Comparison of Select Predictors on Key Benchmarks

| Predictor | Method Paradigm | Benchmark / Dataset | Performance Metric | Result |
|---|---|---|---|---|
| LIST [7] | Taxonomy-Aware Evolution | ClinVar/ExAC Test Set | AUC (Area Under Curve) | 0.888 |
| phyloP [7] | Evolutionary Conservation | ClinVar/ExAC Test Set | AUC (Area Under Curve) | 0.820 |
| ProMEP [10] | Multimodal Deep Learning | Protein G Dataset (DMS) | Spearman's Correlation | 0.53 |
| AlphaMissense [10] | MSA-based Deep Learning | Protein G Dataset (DMS) | Spearman's Correlation | 0.47 |
| QresFEP-2 [9] | Physics-Based Simulation | Protein Stability (10 proteins, ~600 mutations) | Accuracy & Computational Efficiency | Best in class |

Clinical and Protein Engineering Validation

Beyond retrospective benchmarks, these tools have been validated in real-world applications. In a clinical context, the PrimateAI deep neural network, trained on common variants from non-human primates, distinguished between de novo mutations in neurodevelopmental disorder patients versus healthy controls with an accuracy of 88% [11].

In protein engineering, ProMEP guided the design of high-performance gene-editing tools. A TnpB enzyme with a 5-site mutant predicted by ProMEP showed a gene-editing efficiency of 74.04%, a dramatic improvement over the wild-type efficiency of 24.66% [10].

Successful application and development of mutation effect predictors rely on a suite of key data resources and software tools.

Table 3: Key Research Reagents and Resources for Mutation Effect Prediction

| Resource Name | Type | Primary Function | Relevance in Research |
|---|---|---|---|
| Protein Data Bank (PDB) | Database | Repository of experimentally determined 3D protein structures. | Provides atomic-level structural data essential for structure-based methods like FEP and for training structure-aware AI models [9] [13]. |
| AlphaFold Protein Structure Database [10] | Database | Repository of high-accuracy predicted protein structures for numerous proteomes. | Enables structural analysis for proteins without experimental structures and serves as a massive training set for multimodal AI models like ProMEP. |
| ClinVar [7] [11] | Database | Public archive of reports on human genetic variants and their relationship to phenotype. | Serves as a critical source of curated "truth" data for training and benchmarking the prediction of pathogenic mutations. |
| gnomAD / ExAC [7] [11] | Database | Catalog of human genetic variation from large-scale sequencing projects. | Provides a robust set of population-frequency data to identify benign, common variants, which are used as negative training examples. |
| ConSurf [14] [13] | Software Tool | Calculates evolutionary conservation scores and maps them onto protein structures. | Allows for the visual integration of evolutionary and structural data to identify functionally important regions like active sites. |
| ProteinGym [10] | Benchmark | A large-scale benchmark suite of deep mutational scanning (DMS) data. | Provides a standardized and comprehensive platform for the empirical evaluation of mutation effect prediction algorithms. |
| VenusMutHub [12] | Benchmark | A collection of small-scale, high-quality experimental data on mutational effects. | Offers a benchmark for predictors on specific protein engineering tasks, focusing on direct biochemical measurements of stability, activity, and affinity. |

Integrated Workflow for Mutation Effect Analysis

The following diagram illustrates a potential integrated workflow that combines the strengths of different algorithmic paradigms for a comprehensive analysis of protein mutations, suitable for both research and industrial applications.

In the field of precision oncology, the identification of pathogenic mutations amidst thousands of genomic variants represents a fundamental challenge. Massively parallel sequencing studies consistently reveal that tumors harbor numerous mutations, most of which are functionally insignificant "passenger" mutations, while a critical minority are causal "driver" mutations that propel tumorigenesis [8]. To address this challenge, numerous computational mutation effect prediction algorithms have been developed to differentiate biologically consequential mutations from neutral polymorphisms. However, these algorithms employ diverse methodologies, training datasets, and underlying assumptions, resulting in often contradictory predictions that complicate their utility in both research and clinical settings [8] [15].

The landmark benchmarking study "Benchmarking mutation effect prediction algorithms using functionally validated cancer-related missense mutations," published in Genome Biology in 2014, represents a critical effort to establish performance baselines for these prediction tools using rigorously validated mutation sets [8]. This comprehensive analysis of 15 prediction algorithms against 989 functionally characterized mutations established a new standard for methodological evaluation in the field, providing researchers with essential guidance for tool selection and interpretation. For drug development professionals and researchers, understanding the capabilities and limitations of these prediction algorithms is paramount for prioritizing mutations for functional validation, selecting patient populations for clinical trials, and identifying novel therapeutic targets [16].

Experimental Design and Methodology

Mutation Curation and Functional Classification

The benchmarking study established a "gold standard" dataset of single nucleotide variants (SNVs) through exhaustive literature and database mining focused on 15 cancer genes, including bona fide oncogenes (BRAF, KIT, PIK3CA, KRAS, EGFR, ERBB2), recently described cancer genes (ESR1, DICER1, MYOD1, IDH1, IDH2, SF3B1), and established tumor suppressor genes (TP53, BRCA1, BRCA2) [8].

The researchers implemented a rigorous, evidence-based classification system for mutations:

- Non-neutral mutations (n=849): SNVs with experimental validation of functional impact on protein function or proven causation of hereditary cancer syndromes (Li-Fraumeni syndrome, early onset breast and ovarian cancer syndrome)

- Neutral mutations (n=140): SNVs experimentally validated as non-functional or demonstrated not to cause hereditary cancer syndromes

- Uncertain mutations (n=2,602): Variants without definitive functional evidence or classified as germline variants of unknown significance (excluded from performance calculations)

This curation process yielded a final dataset of 3,591 SNVs after excluding dinucleotide and trinucleotide changes to accommodate technical limitations of certain prediction tools [8].

Algorithm Selection and Classification Standardization

The study evaluated 15 mutation effect prediction algorithms, encompassing both independent predictors and meta-predictors that aggregate results from multiple algorithms [8]. The selected algorithms represented the state-of-the-art at the time of publication:

Independent Predictors:

- CHASM (breast, lung, melanoma)

- FATHMM (cancer, missense)

- Mutation Assessor

- MutationTaster

- PolyPhen-2

- PROVEAN

- SIFT

- VEST

Meta-predictors:

- CanDrA (breast, lung, melanoma)

- Condel

To enable cross-algorithm comparison, the researchers standardized the diverse output classifications (e.g., "deleterious," "damaging," "functional") into a binary "neutral" or "non-neutral" categorization system, with careful attention to preserving the intended interpretation of each algorithm's original output [8].
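A standardization step of this kind can be expressed as a lookup table from each tool's native labels to the binary scheme. The sketch below is illustrative only: the label sets are the categories these tools commonly report, but how the study assigned intermediate categories (for example "possibly damaging" or "low") is not stated here, so those assignments are assumptions.

```python
NON_NEUTRAL, NEUTRAL = "non-neutral", "neutral"

# Assumed mapping from native tool labels to the binary scheme (illustrative only).
LABEL_MAP = {
    "SIFT": {"deleterious": NON_NEUTRAL, "tolerated": NEUTRAL},
    "PolyPhen-2": {
        "probably damaging": NON_NEUTRAL,
        "possibly damaging": NON_NEUTRAL,  # assumption: treated as non-neutral
        "benign": NEUTRAL,
    },
    "Mutation Assessor": {
        "high": NON_NEUTRAL,
        "medium": NON_NEUTRAL,
        "low": NEUTRAL,  # assumption: low impact treated as neutral
        "neutral": NEUTRAL,
    },
}

def standardize(tool, raw_label):
    """Map a tool-specific label to 'neutral' or 'non-neutral'; fail loudly on unknown input."""
    try:
        return LABEL_MAP[tool][raw_label.strip().lower()]
    except KeyError:
        raise ValueError(f"no mapping for {tool!r} label {raw_label!r}") from None

calls = [standardize("SIFT", "Deleterious"), standardize("PolyPhen-2", "benign")]
```

Raising an error for unmapped labels, rather than defaulting to one class, keeps silent misclassification out of downstream performance metrics.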

Performance Metrics and Statistical Analysis

The benchmarking employed multiple statistical approaches to evaluate algorithm performance:

- Calculation of standard performance metrics: Accuracy, positive predictive value (PPV), negative predictive value (NPV), sensitivity, and specificity

- Inter-rater agreement assessment: Pairwise unweighted Cohen's Kappa coefficients to quantify agreement between predictors

- Unsupervised clustering: To visualize patterns in prediction results across algorithms and mutation types

- Combination analysis: Evaluation of whether aggregating predictions from multiple algorithms improved performance
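The metrics in this list follow directly from a confusion matrix and from paired calls. In the sketch below, the class totals (849 non-neutral, 140 neutral) come from the curated dataset described earlier, but the split into true and false calls is hypothetical and does not describe any evaluated algorithm. "Positive" means non-neutral throughout.

```python
def confusion_metrics(tp, fp, tn, fn):
    """Standard binary metrics, with 'positive' meaning a non-neutral call."""
    return {
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "ppv": tp / (tp + fp),
        "npv": tn / (tn + fn),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }

def cohens_kappa(calls_a, calls_b):
    """Unweighted Cohen's kappa for two predictors' calls on the same mutations."""
    n = len(calls_a)
    categories = set(calls_a) | set(calls_b)
    observed = sum(a == b for a, b in zip(calls_a, calls_b)) / n
    expected = sum((calls_a.count(c) / n) * (calls_b.count(c) / n) for c in categories)
    if expected == 1.0:
        return 1.0  # both predictors always emit the same single category
    return (observed - expected) / (1 - expected)

# Hypothetical predictor: 849 non-neutral mutations (tp + fn), 140 neutral (tn + fp).
metrics = confusion_metrics(tp=700, fp=50, tn=90, fn=149)

# Hypothetical calls from two predictors on ten mutations (1 = non-neutral, 0 = neutral).
predictor_a = [1, 1, 1, 1, 1, 1, 0, 0, 0, 0]
predictor_b = [1, 1, 1, 1, 0, 0, 0, 0, 0, 1]
kappa = cohens_kappa(predictor_a, predictor_b)
```

With these invented counts the PPV is about 0.93 while the NPV is about 0.38, which shows how a dataset dominated by non-neutral mutations can produce a high PPV alongside a low NPV even for a predictor of moderate sensitivity and specificity.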

The experimental workflow below illustrates the comprehensive benchmarking process implemented in the study:

Key Findings and Performance Comparison

Algorithm Performance Variation

The benchmarking revealed substantial variation in algorithm performance characteristics, with notable patterns emerging across different classes of predictors [8]. While all algorithms demonstrated consistently strong positive predictive values, their negative predictive values varied considerably, reflecting differential capabilities in correctly identifying truly neutral mutations. Cancer-specific predictors generally exhibited higher accuracy for their intended applications but showed substantial variability in agreement levels—ranging from no agreement to almost perfect concordance depending on the specific algorithm pair compared [8].

Non-cancer-specific predictors demonstrated more moderate agreement levels, highlighting the fundamental methodological differences in their approaches to mutation effect prediction. This performance heterogeneity underscores the context-dependent utility of different algorithms and the importance of selecting tools appropriate for specific research questions.

Quantitative Performance Metrics

Table 1: Performance Metrics of Mutation Effect Prediction Algorithms

| Algorithm | Type | Accuracy | PPV | NPV | Sensitivity | Specificity |
|---|---|---|---|---|---|---|
| CHASM (Breast) | Cancer-specific | Moderate | High | Variable | Moderate | Moderate |
| FATHMM (Cancer) | Cancer-specific | Moderate | High | Variable | Moderate | Moderate |
| Mutation Assessor | General | Moderate | High | Variable | Moderate | Moderate |
| PolyPhen-2 | General | Moderate | High | Variable | Moderate | Moderate |
| PROVEAN | General | Moderate | High | Variable | Moderate | Moderate |
| SIFT | General | Moderate | High | Variable | Moderate | Moderate |
| CanDrA (Breast) | Meta-predictor | Moderate | High | Variable | Moderate | Moderate |
| Condel | Meta-predictor | Moderate | High | Variable | Moderate | Moderate |

Note: Specific numerical values were not provided in the source publication, which reported relative performance patterns across algorithms. PPV = Positive Predictive Value; NPV = Negative Predictive Value. Adapted from [8].

Inter-Algorithm Agreement and Combination Approaches

The study employed Cohen's Kappa coefficients to quantify agreement between prediction algorithms, revealing diverse patterns of concordance and discordance [8]. Unsupervised clustering of prediction results demonstrated that algorithms developed with similar methodologies or training datasets tended to cluster together, while those with fundamentally different approaches showed divergent prediction patterns.

Critically, the investigation revealed that combining predictions from multiple algorithms yielded only modest improvements in overall accuracy but substantially higher negative predictive values [8]. Because NPV is the fraction of mutations called neutral that are truly neutral, this finding suggests that aggregating orthogonal information from complementary algorithms makes a "neutral" call more trustworthy, potentially reducing the number of functional mutations that are wrongly dismissed (false negatives) in clinical and research applications. The relationship between different algorithm types and their combined performance can be visualized as follows:

Research Reagent Solutions for Mutation Effect Analysis

Table 2: Essential Research Tools for Mutation Effect Prediction Studies

| Resource Category | Specific Examples | Primary Function | Application in Benchmarking |

|---|---|---|---|

| Mutation Databases | COSMIC, TCGA, ICGC | Catalog somatic mutations across cancer types | Provide source data for mutation curation and validation |

| Functional Validation Resources | Experimental assays, Hereditary disease databases | Establish ground truth for mutation effects | Generate gold standard datasets for algorithm training |

| Prediction Algorithms | SIFT, PolyPhen-2, Mutation Assessor, CHASM, FATHMM | Predict functional impact of missense mutations | Serve as subjects for performance comparison |

| Meta-predictors | Condel, CanDrA | Aggregate predictions from multiple algorithms | Evaluate combined approach performance |

| Statistical Frameworks | Cohen's Kappa, clustering algorithms, performance metrics | Quantify agreement and prediction accuracy | Enable standardized algorithm comparison |

Implications for Research and Clinical Applications

Practical Guidance for Algorithm Selection

The benchmarking study provides crucial insights for researchers and drug development professionals selecting mutation effect prediction tools:

- No single algorithm demonstrated sufficient accuracy to independently guide experimental or clinical decision-making [8]

- Cancer-specific algorithms (CHASM, FATHMM-cancer) showed superior performance for oncological applications but with substantial variability between tools

- Algorithm combinations significantly improved negative predictive value, suggesting that consensus approaches may reduce false negatives (non-neutral mutations dismissed as neutral) in clinical interpretation

- Tissue-specific considerations are crucial, as demonstrated by CanDrA's differential performance across breast versus lung/melanoma predictions [8]

These findings underscore the importance of context-specific algorithm selection and the potential benefits of implementing multi-algorithm consensus approaches for high-stakes applications such as patient stratification or therapeutic target identification.

Relevance to Modern Drug Development

The benchmarking principles established in this study remain highly relevant to contemporary drug development pipelines, particularly as multimodal approaches integrating DNA and RNA sequencing become increasingly prevalent [16]. The validation framework provides a template for evaluating new computational methods in precision oncology, including:

- Biomarker discovery: Prioritizing mutations for functional validation as potential predictive or prognostic biomarkers

- Clinical trial enrichment: Selecting patient populations based on mutational profiles likely to influence therapeutic response

- Target identification: Distinguishing driver mutations from passenger mutations in novel cancer genes

- Diagnostic development: Establishing rigorous validation standards for computational components of clinical assays

As drug discovery platforms increasingly incorporate artificial intelligence and machine learning approaches [17], the rigorous benchmarking methodology established by this study provides an essential foundation for evaluating algorithm performance in biologically and clinically meaningful contexts.

The landmark benchmarking study of mutation effect prediction algorithms established critical performance baselines and methodological standards that continue to inform research and clinical applications in precision oncology. By employing rigorously validated mutation sets and comprehensive evaluation metrics, the study demonstrated that while current algorithms show promising capabilities, particularly when used in combination, significant limitations remain in their ability to independently guide experimental prioritization or clinical decision-making [8].

For researchers and drug development professionals, these findings highlight the importance of implementing multi-algorithm consensus approaches and maintaining rigorous functional validation standards when evaluating putative pathogenic mutations. As the field advances toward increasingly sophisticated computational methods and multimodal data integration [16], the benchmarking framework established by this study provides an essential foundation for the development and validation of next-generation mutation effect prediction tools.

In the era of high-throughput sequencing, researchers and clinicians are confronted with a vast number of genetic variants whose biological and clinical significance must be deciphered. Central to this challenge is the systematic classification of mutations based on their functional impact, typically categorized as neutral, non-neutral (or pathogenic), or uncertain. This classification is not merely academic; it directly influences research directions, diagnostic conclusions, and therapeutic development. This guide provides a comparative analysis of the experimental and computational frameworks used to define these categories, offering drug development professionals and scientists a data-driven overview of the tools and methodologies at their disposal.

Defining the Categories: A Functional Framework

The classification of mutations hinges on direct experimental evidence or strong clinical association data. These categories form the "gold standard" against which computational prediction algorithms are benchmarked [18].

- Non-Neutral Mutations: These are mutations that have a demonstrably detrimental effect on protein function or are proven to be causative of a disease. Evidence includes:

  - Experimental Validation: In vitro or in vivo assays showing a damaging effect on protein activity, stability, binding affinity, or cellular growth [18] [12].

  - Hereditary Disease Association: Mutations identified as the cause of Mendelian disorders (e.g., Li-Fraumeni syndrome for TP53 mutations or early-onset breast and ovarian cancer syndrome for BRCA1 and BRCA2 mutations) as recorded in curated databases like OMIM and ClinVar [18] [19].

- Neutral Mutations: These are changes with no measurable impact on protein function. Supporting evidence includes:

  - Experimental Validation: Functional assays confirming that the mutation does not alter protein activity, stability, or other relevant biochemical properties [18].

  - Absence in Disease Cohorts: Demonstration that the variant is not causative of a hereditary disease and may be present in population databases (e.g., gnomAD) at frequencies too high to be consistent with severe pathogenic effects [19].

- Variants of Uncertain Significance (VUS): This category encompasses the majority of variants discovered through sequencing. A VUS is a genetic alteration for which the clinical and functional impact is unknown [19]. It lacks sufficient evidence from either functional studies or population data to be classified as either neutral or non-neutral. The primary challenge in the field is to reclassify VUS into one of the definitive categories.

Table 1: Evidence for Classifying Mutation Impact

| Category | Experimental Evidence | Clinical/Population Evidence | Example |

|---|---|---|---|

| Non-Neutral | Altered protein function in biochemical assays; reduced cell growth in functional studies [18] [12] | Causative of hereditary disease; de novo in severe dominant conditions; absent from population controls [18] [19] | TP53 R175H (oncogenic) |

| Neutral | No measurable effect on protein activity or stability in assays [18] | Not segregated with disease in families; high frequency in population databases [19] | A synonymous change not affecting splicing |

| Uncertain (VUS) | No functional data available or available data is conflicting/inconclusive | Insufficient clinical data for classification; not previously reported [19] | A novel missense mutation in BRCA1 |

Benchmarking Mutation Effect Prediction Algorithms

Computational predictors offer a high-throughput method to prioritize mutations for experimental validation. However, they are not a substitute for functional evidence and should be used as guides for further investigation.

Performance Comparison of Prediction Tools

A comprehensive benchmark study evaluating 15 mutation effect predictors revealed considerable variation in their performance and agreement. The study utilized a "gold standard" set of 989 experimentally validated missense mutations (849 non-neutral and 140 neutral) across 15 cancer genes [18].

Table 2: Comparative Performance of Selected Mutation Effect Predictors

| Predictor | Methodology | Best For | Performance Notes |

|---|---|---|---|

| SIFT [20] | Sequence homology and physical properties of amino acids [20] | General functional impact | Good positive predictive value [18] |

| PolyPhen-2 [20] | Bayesian models based on substitution scores, phylogenetic conservation, and structural features [20] | General functional impact | Good positive predictive value [18] |

| CHASM [18] [20] | Random Forest classifier trained on cancer mutations from COSMIC [18] | Differentiating cancer drivers from passengers | Cancer-specific |

| FATHMM [20] | Hidden Markov Models with pathogenicity weights; recognizes sensitive protein domains [18] | Cancer-specific and other disease-specific predictions | Cancer-specific |

| MutationAssessor [20] | Evolutionary conservation at subfamily-specific sites [20] | Functional sites in protein families | Shows no-to-moderate agreement with other tools [18] |

| PROVEAN [20] | Sequence homology-based; predicts effects of substitutions, insertions, and deletions [20] | Scanning multiple mutations | Allows for multiple mutations |

| Condel [18] | Meta-predictor that combines scores from other algorithms [18] | Consensus deleteriousness score | Meta-predictor |

| CanDrA [18] | Support vector machine using 95 features and scores from 10 other algorithms [18] | Cancer driver annotation | Meta-predictor |

Key Findings from Benchmarking Studies

- No Single Best Algorithm: The accuracy of prediction algorithms varies considerably. No single algorithm is sufficient to predict all Single Nucleotide Variants (SNVs) with high accuracy for experimental or clinical follow-up [18].

- High Positive Predictive Value, Variable Negative Predictive Value: While most algorithms perform well at identifying deleterious mutations (high positive predictive value), their ability to correctly identify neutral mutations (negative predictive value) is much more variable and often lower [18].

- Combining Predictors Improves Performance: Aggregating predictions from multiple algorithms, especially those that use orthogonal information (e.g., sequence-based, structure-based, and machine-learning-based), can modestly improve overall accuracy and significantly enhance the negative predictive value. This approach helps mitigate the limitations of any single tool [18].

- The Rise of AI and Structure-Based Predictors: Artificial intelligence (AI) is accelerating the interpretation of VUS. Newer AI-based models, including those that incorporate protein structural data, are showing promise in improving the accuracy and efficiency of predictions [21].

- Benchmarking with Direct Biochemical Measurements: Evaluations beyond high-throughput surrogate assays are crucial. Benchmarks like VenusMutHub, which uses 905 small-scale experimental datasets with direct measurements of stability, activity, and binding affinity, provide a more rigorous assessment of a predictor's utility in protein engineering and drug development contexts [12].
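One factor that can contribute to the pattern of high positive and lower negative predictive value is class imbalance in the benchmark set, which follows from the metric definitions rather than from any statement in [18]. The sketch below uses the gold standard's reported composition (849 non-neutral, 140 neutral) together with a hypothetical predictor whose sensitivity and specificity are both 0.80; those two values are assumptions chosen only for illustration.

```python
def ppv_npv(sensitivity, specificity, prevalence):
    """PPV and NPV implied by sensitivity, specificity and positive-class prevalence."""
    true_pos = sensitivity * prevalence
    false_neg = (1 - sensitivity) * prevalence
    true_neg = specificity * (1 - prevalence)
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos), true_neg / (true_neg + false_neg)


# Share of non-neutral mutations in the 989-mutation gold standard.
prevalence = 849 / 989

# Hypothetical predictor: equally good on both classes.
ppv, npv_value = ppv_npv(sensitivity=0.80, specificity=0.80, prevalence=prevalence)
```

Under these assumptions PPV comes out near 0.96 and NPV near 0.40, even though the predictor treats both classes equally well. PPV and NPV measured on a heavily imbalanced set should therefore be read alongside sensitivity and specificity.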

Experimental Protocols for Functional Validation

The following are core methodologies used to generate the functional evidence required for definitive mutation classification.

Protocol: Assessing the Impact of Mutations on Protein Stability

Objective: To determine if a missense mutation alters the thermodynamic stability of a protein, which can impair its function and lead to disease [12].

Workflow:

- Site-Directed Mutagenesis: Introduce the specific point mutation into a plasmid containing the wild-type gene of interest.

- Protein Expression and Purification: Express and purify both the wild-type and mutant proteins from a suitable expression system (e.g., E. coli, mammalian cells).

- Stability Measurement:

  - Equilibrium Denaturation: Incubate the wild-type and mutant proteins with increasing concentrations of a chemical denaturant (e.g., urea or guanidine hydrochloride).

  - Signal Monitoring: Use circular dichroism (CD) or fluorescence spectroscopy to monitor the unfolding of the protein as a function of denaturant concentration.

- Data Analysis: Plot the folding signal against denaturant concentration and fit the data to a model to calculate the free energy of unfolding (ΔG). A significant decrease in the ΔG of the mutant protein compared to the wild-type indicates a destabilizing mutation.
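The data-analysis step can be sketched as follows. The protocol says only that the data are fitted to "a model"; this sketch assumes a two-state unfolding transition and the linear extrapolation relation ΔG = ΔG(water) - m × [denaturant], which is one common choice and not necessarily the one used in the cited work. The function names, the 0.05 to 0.95 transition window, and the simulated curves are illustrative assumptions.

```python
import math

R = 1.987e-3  # gas constant in kcal/(mol*K)


def simulate_fraction_unfolded(concs, dg_water, m_value, temperature_k=298.15):
    """Noise-free two-state unfolding curve, used here only to generate test data."""
    fractions = []
    for conc in concs:
        dg = dg_water - m_value * conc
        k_unfold = math.exp(-dg / (R * temperature_k))
        fractions.append(k_unfold / (1 + k_unfold))
    return fractions


def unfolding_free_energy(concs, fraction_unfolded, temperature_k=298.15):
    """Return (dG in water, m-value) from a least-squares line through dG vs. [denaturant]."""
    xs, ys = [], []
    for conc, f_u in zip(concs, fraction_unfolded):
        if 0.05 < f_u < 0.95:  # keep only points inside the transition region
            xs.append(conc)
            ys.append(-R * temperature_k * math.log(f_u / (1 - f_u)))
    if len(xs) < 2:
        raise ValueError("need at least two transition-region points")
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sxx
    intercept = mean_y - slope * mean_x
    return intercept, -slope


# Hypothetical denaturant concentrations (M) and protein parameters (kcal/mol).
concs = [2.0, 2.5, 3.0, 3.5, 4.0, 4.5, 5.0]
wild_type = simulate_fraction_unfolded(concs, dg_water=6.0, m_value=1.5)
mutant = simulate_fraction_unfolded(concs, dg_water=4.0, m_value=1.5)

dg_wt, m_wt = unfolding_free_energy(concs, wild_type)
dg_mut, m_mut = unfolding_free_energy(concs, mutant)
ddg = dg_mut - dg_wt  # negative value: the mutant is less stable than wild-type
```

With real spectroscopic data, the fraction unfolded would first have to be derived from the raw signal and its folded and unfolded baselines, and replicate measurements would be needed to judge whether a difference in ΔG is significant.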

Protocol: Evaluating the Impact of Mutations on Protein-Protein Binding Affinity

Objective: To quantify how a mutation affects the binding affinity between a protein and its interaction partner, a common mechanism for pathogenic variants [20].

Workflow:

- Sample Preparation: Generate wild-type and mutant proteins and the binding partner (e.g., a protein, DNA, or small molecule). Label one interaction partner with a fluorescent tag or other detectable moiety.

- Titration Experiment: Hold the concentration of the labeled partner constant while titrating in the unlabeled partner (wild-type or mutant).

- Binding Measurement:

  - Surface Plasmon Resonance (SPR): Immobilize one partner on a chip and measure the binding kinetics (association rate, kon; dissociation rate, koff) as the other partner flows over it. The dissociation constant (KD) is calculated from koff/kon.

  - Isothermal Titration Calorimetry (ITC): Titrate one binding partner into the other in a sample cell. Measure the heat released or absorbed during binding. Directly fit the heat data to a binding model to obtain KD, stoichiometry (n), and thermodynamic parameters (ΔH, ΔS).

- Interpretation: A higher KD value for the mutant compared to the wild-type indicates a weakening of the binding interaction (reduced affinity).
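The relation KD = koff/kon and the interpretation step can be sketched in a few lines. The rate constants are hypothetical, and the conversion of a KD ratio into a binding free-energy change (RT ln of the ratio) is a standard thermodynamic relation added here for illustration; it is not part of the protocol text.

```python
import math

R = 1.987e-3  # gas constant in kcal/(mol*K)


def dissociation_constant(k_on, k_off):
    """KD in M from k_on in 1/(M*s) and k_off in 1/s."""
    if k_on <= 0 or k_off < 0:
        raise ValueError("k_on must be positive and k_off non-negative")
    return k_off / k_on


def binding_ddg(kd_mutant, kd_wild_type, temperature_k=298.15):
    """RT * ln(KD_mut / KD_wt) in kcal/mol; positive means weaker binding."""
    return R * temperature_k * math.log(kd_mutant / kd_wild_type)


# Hypothetical SPR rate constants: the mutant dissociates ten times faster.
kd_wt = dissociation_constant(k_on=1.0e5, k_off=1.0e-3)   # 10 nM
kd_mut = dissociation_constant(k_on=1.0e5, k_off=1.0e-2)  # 100 nM

fold_change = kd_mut / kd_wt
ddg_bind = binding_ddg(kd_mut, kd_wt)
```

A tenfold increase in KD corresponds to roughly 1.4 kcal/mol of lost binding free energy at 25 °C, which gives a sense of scale when comparing mutants.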

Experimental Workflow for Binding Affinity

Successful classification of mutation impact relies on a suite of public databases, software tools, and experimental reagents.

Table 3: Key Resources for Mutation Annotation and Analysis

| Resource Name | Type | Function and Utility |

|---|---|---|

| COSMIC [20] | Database | Comprehensive resource for somatic mutations in cancer; critical for identifying mutation hotspots and recurrence [20]. |

| ClinVar [20] [19] | Database | Public archive of reports of the relationships among human variations and phenotypes, with supporting evidence [20]. |

| OMIM [20] [19] | Database | Catalog of human genes and genetic phenotypes, focusing on Mendelian disorders and germline mutations [20]. |

| gnomAD | Database | Population database of genetic variation; used to assess the frequency of a variant in control populations [19]. |

| FoldX [20] | Software | Predicts the change in protein stability (ΔΔG) upon mutation using an empirical force field [20]. |

| CADD | In Silico Tool | Integrates multiple annotations into a single score (C-score) to rank the deleteriousness of variants [19]. |

| REVEL | In Silico Tool | An ensemble method that combines scores from multiple individual predictors to rank missense variants [19]. |

| Site-Directed Mutagenesis Kit | Laboratory Reagent | Essential for introducing specific point mutations into plasmid DNA for subsequent functional testing. |

| Surface Plasmon Resonance (SPR) Instrument | Laboratory Equipment | Used for label-free, real-time analysis of biomolecular interactions to determine binding affinity and kinetics. |

The precise categorization of mutations into neutral, non-neutral, and uncertain categories is a cornerstone of modern genetics and drug discovery. This process is iterative and relies on a multi-faceted approach. While a rich ecosystem of computational predictors exists to prioritize variants, their limitations necessitate caution. The most reliable classifications are grounded in direct experimental evidence measuring specific biochemical properties. As AI and structural biology continue to advance, the future promises more accurate in silico tools. However, close integration between computational prediction and robust experimental validation will remain the definitive path to resolving the clinical and functional significance of genetic variants.

From SIFT to Deep Learning: A Taxonomy of Prediction Methods and Their Applications

In the field of genomics and personalized medicine, accurately predicting the functional impact of genetic variants is a fundamental challenge. Among the vast array of computational tools developed for this purpose, SIFT, PolyPhen-2, and PROVEAN have established themselves as traditional workhorses, widely utilized by researchers and clinicians for initial variant filtration and annotation [22]. These tools represent foundational approaches that leverage distinct methodologies—from evolutionary conservation to structural analysis—to assess whether amino acid substitutions are likely to be deleterious or neutral. Despite the emergence of newer machine learning and AI-based predictors, these established tools remain integral to variant interpretation pipelines. This guide provides a comprehensive comparison of SIFT, PolyPhen-2, and PROVEAN, examining their underlying algorithms, performance metrics, and optimal use cases within the broader context of mutation effect prediction accuracy research.

Methodology and Experimental Protocols

Understanding the methodological foundations of these tools is crucial for interpreting their predictions and recognizing their respective strengths and limitations.

Tool Methodologies

SIFT (Sorting Intolerant From Tolerant) operates on the principle that functionally important amino acid positions in a protein are evolutionarily conserved. The algorithm performs sequence homology analysis to gather related sequences, constructs multiple sequence alignments, and calculates probabilities for each amino acid at every position. Positions that can tolerate variation are assigned higher probabilities, while conserved positions are assigned lower probabilities. A variant is predicted as "deleterious" if the normalized probability score is ≤ 0.05, and "tolerated" otherwise [23].

PolyPhen-2 (Polymorphism Phenotyping v2) utilizes a combination of evolutionary conservation, physicochemical properties, and structural parameters to classify variants. The tool extracts features from multiple sequence alignments and known protein structures (or predicted structural attributes), which are then fed into a naive Bayes classifier. Variants are classified into three categories: "probably damaging," "possibly damaging," or "benign," based on a model trained on human disease mutations and neutral variants [24].

PROVEAN (Protein Variation Effect Analyzer) employs a sequence similarity-based approach that calculates the change in sequence similarity of a protein before and after introducing a variant. The tool clusters BLAST hits and computes a delta alignment score by comparing the reference and variant protein sequences against homologous sequences. The final PROVEAN score represents the average of these delta scores across sequence clusters. A score equal to or below a default threshold of -2.5 predicts a "deleterious" effect, while a score above this threshold predicts a "neutral" effect [23].
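The two score cutoffs stated above translate directly into code. This is a minimal sketch of the thresholding step only: it does not compute the scores, which come from the tools themselves, and the function names are hypothetical. PolyPhen-2 is omitted because no numeric cutoffs are given in the text.

```python
SIFT_CUTOFF = 0.05      # scores at or below this are called deleterious
PROVEAN_CUTOFF = -2.5   # default threshold; scores at or below are deleterious


def classify_sift(score):
    """Map a SIFT normalized probability score to its categorical call."""
    return "deleterious" if score <= SIFT_CUTOFF else "tolerated"


def classify_provean(score):
    """Map a PROVEAN delta alignment score to its categorical call."""
    return "deleterious" if score <= PROVEAN_CUTOFF else "neutral"


# Hypothetical scores for a single variant.
calls = {
    "SIFT": classify_sift(0.02),
    "PROVEAN": classify_provean(-1.8),
}
```

Both tools treat lower scores as more damaging, but on different scales, so raw scores from the two cannot be compared directly. In this example the tools disagree: SIFT calls the variant deleterious while PROVEAN calls it neutral.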

Benchmarking Experimental Design

Standardized evaluation protocols are essential for comparative performance assessment. Typical benchmarking experiments involve:

- Curated Benchmark Datasets: Utilizing databases like ClinVar or UniProt which contain variants with established pathogenic or benign classifications [25] [23]. These datasets are often filtered to include only high-confidence variants reviewed by multiple submitters or expert panels to minimize misclassification.

- Performance Metrics: Calculation of standard metrics including sensitivity (ability to correctly identify pathogenic variants), specificity (ability to correctly identify benign variants), accuracy (overall correctness), and balanced accuracy (accounting for class imbalance) [25] [23]. The area under the receiver operating characteristic curve (AUC/AUROC) is also widely used as a threshold-independent measure of predictive power [24].

- Variant Type Focus: Most evaluations concentrate on missense variants (single amino acid substitutions), as these represent the most common type of coding variation and pose significant interpretation challenges [25] [24].
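The metrics listed above can be written out explicitly. The sketch below uses hypothetical confusion-matrix counts and scores, and treats "pathogenic" as the positive class. The AUROC function uses the pairwise-comparison definition (the probability that a randomly chosen pathogenic variant scores higher than a randomly chosen benign one), which is convenient for small examples but slow for large datasets.

```python
def confusion_metrics(tp, fp, tn, fn):
    """Sensitivity, specificity, accuracy and balanced accuracy from counts."""
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "balanced_accuracy": (sensitivity + specificity) / 2,
    }


def auroc(pathogenic_scores, benign_scores):
    """Fraction of pathogenic/benign pairs ranked correctly; ties count as half."""
    wins = 0.0
    for p in pathogenic_scores:
        for b in benign_scores:
            if p > b:
                wins += 1.0
            elif p == b:
                wins += 0.5
    return wins / (len(pathogenic_scores) * len(benign_scores))


# Hypothetical benchmark: 100 pathogenic and 50 benign variants.
metrics = confusion_metrics(tp=90, fp=20, tn=30, fn=10)

# Hypothetical scores where higher means more likely pathogenic.
auc = auroc([0.9, 0.8, 0.6, 0.4], [0.7, 0.3, 0.2])
```

With these counts, accuracy is 0.80 but balanced accuracy is 0.75, because the larger pathogenic class dominates plain accuracy. This is why balanced accuracy is reported for imbalanced benchmark sets.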

Diagram: Core methodological differences and relationships between SIFT, PolyPhen-2, and PROVEAN.

Performance Comparison and Experimental Data

Comprehensive benchmarking studies across diverse datasets provide critical insights into the relative performance of these traditional predictors.

Independent evaluations on standardized datasets reveal the comparative performance of SIFT, PolyPhen-2, and PROVEAN:

Table 1: Overall Performance Metrics on Human Protein Variants (Single Amino Acid Substitutions)

| Prediction Tool | Sensitivity (%) | Specificity (%) | Accuracy (%) | Balanced Accuracy (%) | No Prediction Rate (%) |

|---|---|---|---|---|---|

| SIFT | 85.0 | 69.0 | 74.8 | 77.0 | 2.0 |

| PolyPhen-2 | 88.7 | 62.5 | 72.0 | 75.6 | 3.9 |

| PROVEAN | 79.8 | 78.6 | 79.0 | 79.2 | 0 |

Data adapted from Choi et al. (2015) using UniProt human protein variant datasets [23].

Table 2: Performance in Specific Disease Contexts

| Tool | ccRCC Prediction Accuracy [22] | AD-related VUS Performance [26] | CHD Variant Sensitivity [27] |

|---|---|---|---|

| SIFT | 0.75 | Moderate | 93.0 |

| PolyPhen-2 | 0.69 | Moderate | Not top performer |

| PROVEAN | 0.70 | Not assessed | Not top performer |

Recent large-scale assessments indicate that while these traditional tools remain valuable, their performance tends to be surpassed by modern meta-predictors and AI-based approaches. A 2025 benchmark study of 28 prediction methods revealed that tools like MetaRNN and ClinPred, which incorporate conservation, other prediction scores, and allele frequencies as features, demonstrated the highest predictive power on rare variants [25]. The study also noted that for most methods, specificity was lower than sensitivity, and performance metrics tended to decline as allele frequency decreased [25].

Impact of Allele Frequency on Performance

The handling of allele frequency (AF) information significantly influences tool performance, particularly for rare variants:

- SIFT does not incorporate AF information in its predictions [25].

- PolyPhen-2 does not utilize AF as a direct feature in its algorithm [25].

- PROVEAN does not integrate AF data in its core methodology [25].

This absence of AF integration may contribute to the observed performance decline in rare variant assessment. The 2025 benchmark study found that across various AF ranges, most performance metrics tended to decline as AF decreased, with specificity showing a particularly large decline [25]. This highlights a significant limitation of these traditional tools in the context of rare variant interpretation, which is crucial for Mendelian disorders and cancer predisposition.
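A stratified evaluation of this kind can be sketched by grouping benign variants into allele-frequency bins and computing specificity within each bin. The bin edges, the record layout, and the nine example variants are hypothetical; they are not the bins or data used in [25].

```python
def specificity_by_af_bin(variants, bin_edges):
    """Specificity per allele-frequency bin.

    variants: iterable of (allele_frequency, is_pathogenic, predicted_pathogenic).
    bin_edges: ascending edges; each bin covers low <= AF < high.
    """
    results = {}
    for low, high in zip(bin_edges, bin_edges[1:]):
        benign = [v for v in variants if low <= v[0] < high and not v[1]]
        if benign:  # skip bins with no benign variants
            true_negatives = sum(not v[2] for v in benign)
            results[(low, high)] = true_negatives / len(benign)
    return results


# Hypothetical variants: (allele frequency, truly pathogenic?, predicted pathogenic?)
variants = [
    (2e-6, False, True),
    (5e-6, False, True),
    (8e-6, False, False),
    (4e-6, True, True),
    (2e-4, False, False),
    (5e-4, False, True),
    (7e-4, False, False),
    (3e-2, False, False),
    (1e-1, False, False),
]
bin_edges = [0.0, 1e-5, 1e-3, 1.0]
specificity = specificity_by_af_bin(variants, bin_edges)
```

In this constructed example specificity falls from 1.0 in the most common bin to one third in the rarest, mirroring the direction of the trend described above. The magnitudes carry no meaning.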

Research Reagent Solutions

The experimental workflow for variant effect prediction relies on several key resources and databases that serve as essential research reagents:

Table 3: Essential Research Resources for Variant Effect Prediction

| Resource Name | Type | Primary Function | Relevance to SIFT/PolyPhen-2/PROVEAN |

|---|---|---|---|

| ClinVar | Database | Public archive of variant interpretations | Primary source of benchmark variants with clinical significance [25] |

| UniProt | Database | Protein sequence and functional information | Provides reference sequences and functional annotations [24] |

| dbNSFP | Database | Compilation of pathogenicity predictions | Source of precomputed scores for multiple tools [25] |

| gnomAD | Database | Population allele frequency data | Filtering of common polymorphisms; assessment of variant rarity [25] |

| OMIA | Database | Genetic variants in animals | Enables cross-species validation and application [24] |

SIFT, PolyPhen-2, and PROVEAN represent foundational approaches in the variant effect prediction landscape, each with distinct methodological strengths. Evaluation data demonstrates that these tools offer complementary rather than redundant predictive value. SIFT provides strong sensitivity in identifying pathogenic variants, particularly in disease-gene families like CHD genes [27]. PolyPhen-2 offers robust integration of structural features but with slightly lower specificity. PROVEAN delivers balanced performance with the advantage of predicting various mutation types beyond single amino acid substitutions [23].

In contemporary research applications, these traditional tools maintain their utility for initial variant filtration and annotation. However, researchers should recognize their limitations, particularly regarding rare variant interpretation where modern tools incorporating allele frequency information and ensemble methods may offer superior performance [25]. The optimal strategy for variant effect prediction often involves combining multiple complementary tools, including both these established workhorses and newer AI-based approaches like AlphaMissense [27] [26], while always grounding computational predictions in biological context and experimental validation.

Cancer is a genetic disease driven by somatic mutations, yet the vast majority of mutations detected in tumor cells are functionally neutral "passenger" mutations that do not confer a growth advantage. Distinguishing the critical "driver" mutations from biologically irrelevant passengers represents a fundamental challenge in cancer genomics and precision oncology. Current estimates suggest that approximately 80% of somatic mutations in clinically sequenced tumors are classified as variants of unknown significance (VUS), creating a critical bottleneck in therapeutic decision-making [28].

Computational prediction algorithms have emerged as essential tools for prioritizing candidate driver mutations. Among these, cancer-specific predictors—trained specifically on cancer mutation data—have demonstrated superior performance over general-purpose variant effect predictors. CHASM (Cancer-specific High-throughput Annotation of Somatic Mutations), FATHMM (Functional Analysis Through Hidden Markov Models), and CanDrA (Cancer Driver Annotation) represent three significant approaches to this problem, each employing distinct methodologies to identify mutations with functional implications for cancer development and progression [29] [30].

This guide provides a comprehensive comparison of these three cancer-specific driver mutation prediction tools, evaluating their performance across multiple experimental benchmarks to inform researchers and clinicians in selecting appropriate methods for variant prioritization.

Methodologies and Technical Approaches

Core Algorithmic Approaches

CHASM employs a supervised machine learning framework based on random forest classifiers trained to distinguish between known driver and passenger mutations. The method incorporates 69 predictive features spanning evolutionary conservation, protein structure, and sequence composition. A key innovation of CHASM is its use of tumor-type specific training, creating customized models that account for the distinct selective pressures across cancer types [29].

FATHMM leverages hidden Markov models (HMMs) trained on conserved protein domains and alignments. The cancer-specific version (FATHMM-cancer) incorporates weighting schemes that emphasize features particularly relevant to oncogenesis. Unlike many general-purpose predictors, FATHMM-cancer is specifically optimized to identify variants with potential driver effects in cancer genes [29].

CanDrA utilizes a support vector machine (SVM) classifier with 95 structural and evolutionary features, but distinguishes itself through its focus on protein structure-based attributes. These include solvent accessibility, secondary structure, and physicochemical properties, enabling the detection of mutations likely to impact protein function through structural disruption [29].

Key Methodological Differences

Table 1: Core Methodological Differences Between Prediction Tools

| Tool | Algorithm Type | Key Features | Training Data | Cancer-Specific |

|---|---|---|---|---|

| CHASM | Random Forest | Evolutionary conservation, sequence features, structural metrics | Known driver vs. passenger mutations from cancer genomics data | Yes |

| FATHMM | Hidden Markov Model | Sequence conservation, domain information, evolutionary constraints | Multiple sequence alignments with cancer-specific weighting | Yes (separate cancer version) |

| CanDrA | Support Vector Machine | Structural features (solvent accessibility, secondary structure), evolutionary metrics | Known driver mutations and putative passenger mutations | Yes |

The workflow for identifying driver mutations typically begins with variant calling from sequencing data, followed by annotation and prioritization using these computational tools, culminating in experimental validation of top candidate mutations.

Diagram 1: Driver Mutation Prediction Workflow. Computational prediction forms a key step between variant annotation and experimental validation.

Performance Benchmarking and Experimental Validation

Comprehensive Benchmarking Across Multiple Datasets

A rigorous assessment of 33 computational algorithms published in Genome Biology evaluated performance across five complementary benchmark datasets representing different aspects of driver mutations: (1) mutation clustering patterns in protein 3D structures, (2) literature annotation from OncoKB, (3) TP53 mutation effects on transactivation, (4) tumor formation in xenograft experiments, and (5) functional annotation from in vitro cell viability assays [29].

In the critical benchmark of 3D spatial clustering—where driver mutations tend to form hotspots in protein structures—all three tools demonstrated strong performance:

Table 2: Performance in 3D Clustering Benchmark (AUC Scores)

| Tool | AUC (3D Clustering) | Rank Among 33 Tools | Sensitivity | Specificity |

|---|---|---|---|---|

| CanDrA | 0.97 | 1 | 0.89 | 0.93 |

| CHASM | 0.94 | 3 | 0.86 | 0.89 |

| FATHMM-cancer | 0.92 | 5 | 0.84 | 0.88 |

CanDrA achieved the highest accuracy (0.91) in binary predictions for the 3D clustering benchmark, followed closely by CHASM and FATHMM-cancer, which both ranked among the top five performers [29].

Performance Across Different Benchmark Types

The comparative analysis revealed that performance varies significantly across different benchmark types:

Table 3: Performance Across Multiple Benchmark Datasets (AUC Scores)

| Tool | OncoKB Annotation | TP53 Transactivation | Xenograft Assays | Cell Viability |

|---|---|---|---|---|

| CHASM | 0.90 | 0.88 | 0.85 | 0.82 |

| FATHMM-cancer | 0.87 | 0.85 | 0.82 | 0.80 |

| CanDrA | 0.92 | 0.84 | 0.81 | 0.79 |

For the OncoKB literature annotation benchmark, which evaluates ability to recapitulate known cancer drivers, CanDrA achieved the highest AUC (0.92), with CHASM (0.90) and FATHMM-cancer (0.87) also performing strongly [29].

A notable finding across benchmarks was that cancer-specific algorithms significantly outperformed general-purpose prediction methods. In the 3D clustering benchmark, for example, mean AUC was 0.922 versus 0.790 (Wilcoxon rank sum test, p = 1.6 × 10⁻⁴) [29].

Recent Developments and Emerging Trends

The field of driver mutation prediction continues to evolve rapidly, with several important trends emerging since the development of these established tools:

Integration of Additional Data Types: Newer predictors increasingly incorporate protein structural and functional genomic data. AlphaMissense, while not cancer-specific, demonstrates how incorporating structural features can enhance performance, significantly outperforming other deep learning methods in identifying known cancer drivers [28].

Improved Passenger Mutation Definitions: Recent approaches like CDMPred address a fundamental limitation in earlier tools—the quality of negative training examples. By incorporating high-quality passenger mutations from curated databases, these newer methods achieve improved performance with AUC values of 0.83 on training sets and 0.80 on independent tests [31] [32].

Validation in Clinical Cohorts: Modern evaluation increasingly uses real-world patient data for validation. Recent studies have demonstrated that VUSs predicted as pathogenic by AI tools in genes like KEAP1 and SMARCA4 show association with worse overall survival in NSCLC patients (N=7965 and 977), unlike "benign" VUSs, providing clinical relevance to computational predictions [28].

Ensemble Approaches: Combining multiple prediction methods through ensemble approaches has shown promise. Random forest models incorporating multiple VEPs as inputs have demonstrated improved performance over individual methods, with AUCs up to 0.998 for predicting oncogenic mutations [28].
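As a minimal sketch of the ensemble idea, the example below combines scores from several predictors by rank-normalizing each tool's output and averaging. This is a deliberately simpler technique than the random forest models described above, and the tool names and scores are hypothetical.

```python
from typing import Dict, List, Sequence


def rank_normalize(scores: Sequence[float]) -> List[float]:
    """Convert raw scores to average ranks divided by n, so tools on
    different scales become comparable. Tied scores share their mean rank."""
    n = len(scores)
    order = sorted(range(n), key=lambda i: scores[i])
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        # Extend j across a run of tied scores.
        while j + 1 < n and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return [r / n for r in ranks]


def ensemble_scores(per_tool: Dict[str, Sequence[float]]) -> List[float]:
    """Average the rank-normalized scores of every tool for each variant."""
    lengths = {len(v) for v in per_tool.values()}
    if len(lengths) != 1:
        raise ValueError("every tool must score the same variants")
    normalized = [rank_normalize(v) for v in per_tool.values()]
    n = lengths.pop()
    return [sum(col[i] for col in normalized) / len(normalized) for i in range(n)]


# Hypothetical scores for three variants from two tools on different scales.
toy = {"tool_a": [0.9, 0.2, 0.5], "tool_b": [10.0, 1.0, 20.0]}
print(ensemble_scores(toy))
```

Rank averaging needs no labeled training data, which makes it a reasonable baseline. A supervised combiner such as a random forest can instead learn how much weight each tool deserves, at the cost of requiring trustworthy driver and passenger labels.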

Research Reagent Solutions and Practical Implementation

Table 4: Key Research Resources for Driver Mutation Prediction

| Resource | Type | Function | Relevance to Prediction |
|---|---|---|---|
| COSMIC | Database | World's largest somatic cancer mutation repository | Provides training data and benchmarking for cancer-specific predictors [30] |
| OncoKB | Database | Precision oncology knowledge base | Source of curated cancer driver mutations for validation [28] |
| TCGA | Data Resource | Comprehensive cancer genomics dataset | Source of mutation frequencies and patterns across cancer types [30] |
| dbCPM | Database | Cancer passenger mutation database | Provides high-quality negative training examples [31] [32] |
| Cancer3D | Database | Protein structural mapping of cancer mutations | Enables structural analysis of mutation distribution [30] |

Implementation Considerations

For researchers implementing these tools, several practical considerations emerge:

Complementary Strengths: The three tools exhibit complementary strengths, with CanDrA excelling in structural benchmarks, CHASM performing consistently well across multiple benchmarks, and FATHMM-cancer providing strong performance with its conservation-based approach. Using multiple tools in concert may provide more robust predictions than relying on any single method.

Tumor-Type Specificity: CHASM's tumor-type-specific models may be advantageous when molecular mechanisms differ across tissues, including pan-cancer analyses that span several tumor types, while FATHMM-cancer and CanDrA offer more generalized cancer predictions.

Interpretability: CanDrA's structural features provide more biologically interpretable predictions compared to the more complex feature sets of CHASM and FATHMM-cancer, which may be advantageous for generating testable hypotheses about mutation mechanisms.

CHASM, FATHMM-cancer, and CanDrA represent significant milestones in the development of cancer-specific driver mutation prediction, demonstrating that domain-specific training substantially improves performance over general-purpose variant effect predictors. While each employs a distinct methodological approach (random forests, hidden Markov models, and support vector machines, respectively), all three have proven effective at identifying mutations with functional significance in cancer.

The ongoing evolution of this field points toward several future directions: increased integration of structural and functional genomic data, improved definition of passenger mutations for training, validation in large clinical cohorts, and the development of ensemble approaches that leverage the complementary strengths of multiple prediction methods. As precision oncology continues to advance, computational prediction of driver mutations will remain an essential tool for interpreting the vast landscape of somatic variation in cancer genomes.

Accurately predicting the functional consequences of amino acid substitutions represents a fundamental challenge across biomedical research, with direct implications for understanding genetic diseases and engineering novel proteins. Traditional computational methods have often relied on multiple sequence alignments (MSAs), which leverage evolutionary information from homologous sequences but are computationally intensive and can fail for proteins with few known relatives. The emerging class of zero-shot artificial intelligence predictors, exemplified by ProMEP (Protein Mutational Effect Predictor) and AlphaMissense, marks a significant shift in this landscape. These models leverage modern deep learning architectures trained on vast datasets of protein sequences and structures, enabling them to predict mutation effects without explicit task-specific training. ProMEP also dispenses with MSAs entirely, whereas AlphaMissense still takes them as input. This guide provides a detailed, objective comparison of these two state-of-the-art tools, evaluating their architectural principles, performance benchmarks, and practical applications to assist researchers in selecting the appropriate tool for their specific research context.

ProMEP and AlphaMissense share the common goal of predicting mutation effects but diverge significantly in their underlying architectures, information sources, and intended applications.

ProMEP is a multimodal deep representation learning model designed specifically for zero-shot prediction of mutation effects on protein function. Its architecture uniquely integrates both sequence and structural context by training on approximately 160 million proteins from the AlphaFold database. A key innovation is its use of protein point cloud representations to handle structural information at atomic resolution, processed through a rotation- and translation-equivariant structure embedding module. This allows ProMEP to capture crucial long-range contact information and spatial constraints critical for protein functionality. The model employs a transformer encoder to generate comprehensive protein representations by combining sequence and structure embeddings, calculating mutation effects by comparing the log-likelihood of wild-type and mutated sequences conditioned on both sequence and structure contexts [10] [33].
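The log-likelihood comparison described above can be sketched as follows. This is a simplified illustration, not ProMEP's actual implementation: it assumes a model has already produced a per-position probability distribution over amino acids for the wild-type context, and it assumes that multi-site effects add independently. The function name `log_ratio_score` and the toy probabilities are hypothetical.

```python
import math
from typing import Dict, List, Tuple

# A mutation is (zero-based position, wild-type residue, mutant residue).
Mutation = Tuple[int, str, str]


def log_ratio_score(
    position_probs: List[Dict[str, float]],
    wild_type: str,
    mutations: List[Mutation],
) -> float:
    """Sum of log p(mutant residue) - log p(wild-type residue) over mutated sites.

    Negative scores mean the model finds the mutant less likely than the
    wild type in this context; positive scores mean more likely.
    Assumption: sites contribute additively (a simplification).
    """
    score = 0.0
    for pos, wt, mut in mutations:
        if wild_type[pos] != wt:
            raise ValueError(f"expected {wild_type[pos]} at position {pos}, got {wt}")
        probs = position_probs[pos]
        score += math.log(probs[mut]) - math.log(probs[wt])
    return score


# Hypothetical model output for a two-residue toy protein "MK".
toy_probs = [
    {"M": 0.8, "L": 0.1, "K": 0.1},
    {"K": 0.5, "R": 0.4, "M": 0.1},
]

print(log_ratio_score(toy_probs, "MK", [(0, "M", "L")]))  # log(0.1 / 0.8), negative
print(log_ratio_score(toy_probs, "MK", [(1, "K", "R")]))  # log(0.4 / 0.5), mildly negative
```

Because the score is a ratio of likelihoods rather than a calibrated probability, it is best used to rank variants of the same protein against each other.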

AlphaMissense, developed by DeepMind, also leverages structural insights but through a different approach. Built upon the AlphaFold2 architecture, it combines deep learning with structural biology principles to predict the pathogenicity of missense variants. The model was trained on human and primate population variant data and leverages the evolutionary conservation patterns learned by AlphaFold2, though it incorporates additional training specifically focused on distinguishing pathogenic from benign variants. Unlike ProMEP, AlphaMissense does utilize MSAs as part of its input, which contributes to its strong performance on pathogenicity prediction but increases computational requirements [34] [35].

Table 1: Core Architectural Comparison of ProMEP and AlphaMissense

| Feature | ProMEP | AlphaMissense |
|---|---|---|
| Primary Objective | General mutation effect on protein function | Pathogenicity classification |
| Architecture Type | Multimodal (sequence + structure) | AlphaFold2-based |
| Structure Integration | Protein point clouds with SE(3)-equivariant embeddings | Structural constraints from AlphaFold2 |
| MSA Dependence | MSA-free | MSA-dependent |
| Training Data | ~160 million AlphaFold structures | Human and primate genetic variants |
| Output Interpretation | Fitness effect (log probability ratio) | Pathogenicity probability (0-1) |
| Computational Speed | Faster (2-3 orders of magnitude vs. AlphaMissense) | Slower due to MSA processing |

Performance Comparison: Benchmarking Predictive Accuracy

General Mutation Effect Prediction

Comprehensive benchmarking reveals distinct performance profiles for each tool across different prediction tasks. On general protein variant effect prediction, ProMEP is reported to achieve higher Spearman's rank correlation with experimental measurements than other leading methods, including AlphaMissense. On the ProteinGym benchmark, comprising 1.43 million variants across 53 proteins from diverse organisms, ProMEP achieves competitive average performance. For the immunoglobulin G-binding protein G dataset containing multiple mutations, ProMEP attained a Spearman's correlation of 0.53, outperforming AlphaMissense (0.47) as well as MSA-free methods such as ESM2 variants [10].
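Spearman's rank correlation, the metric quoted above, measures whether predicted scores order variants the same way as experimental measurements do. A minimal pure-Python sketch follows; the variant scores and fitness values are invented for illustration.

```python
import math
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values receive the mean of the ranks they span."""
    n = len(values)
    order = sorted(range(n), key=lambda i: values[i])
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho is the Pearson correlation of the rank vectors."""
    if len(x) != len(y):
        raise ValueError("inputs must have the same length")
    return pearson(average_ranks(x), average_ranks(y))


# Invented example: predicted scores vs. measured fitness for five variants.
predicted = [-2.1, -0.3, 0.4, -1.0, 0.1]
measured = [0.05, 0.60, 0.95, 0.80, 0.70]
print(round(spearman(predicted, measured), 3))  # 0.7
```

Because only ranks matter, the metric is insensitive to the scale of the predictor's output, which is what allows log-ratio scores and pathogenicity probabilities to be compared on the same assay.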

A significant advantage of ProMEP is its robust performance on proteins with low sequence similarity or where MSAs are unavailable, making it particularly valuable for exploring poorly characterized protein families or de novo designed proteins. Additionally, ProMEP's MSA-free nature provides tremendous speed advantages, operating 2-3 orders of magnitude faster than AlphaMissense according to published reports, enabling high-throughput exploration of mutational space [10] [33].

Pathogenicity Prediction Performance

AlphaMissense excels specifically in pathogenicity prediction, demonstrating outstanding performance across diverse protein groups when validated against ClinVar data. Comprehensive evaluation shows Matthews correlation coefficient (MCC) scores predominantly between 0.6 and 0.74 for various protein categories, including soluble, transmembrane, and mitochondrial proteins. The tool achieves sensitivity and specificity of approximately 92% and 78%, respectively, for pathogenicity classification when benchmarked against manually curated variants classified according to ACMG/AMP guidelines [34] [35].
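The MCC summarizes all four cells of a confusion matrix in a single value between -1 and 1. The sketch below computes it from raw counts. The counts in the example are hypothetical: they assume a balanced set of 100 pathogenic and 100 benign variants classified with the sensitivity (92%) and specificity (78%) quoted above, which is not how the cited studies were necessarily composed.

```python
import math


def mcc(tp: int, fp: int, tn: int, fn: int) -> float:
    """Matthews correlation coefficient from confusion-matrix counts.

    Returns 0.0 when any marginal total is zero, a common convention
    for the otherwise undefined case.
    """
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        return 0.0
    return (tp * tn - fp * fn) / denom


# Hypothetical balanced test set: 100 pathogenic, 100 benign variants.
tp, fn = 92, 8    # 92% sensitivity
tn, fp = 78, 22   # 78% specificity
print(round(mcc(tp, fp, tn, fn), 3))
```

With these assumed counts the result is roughly 0.71, inside the reported 0.6 to 0.74 range. A different ratio of pathogenic to benign variants would change the value even at identical sensitivity and specificity, which is one reason MCC is preferred over accuracy for imbalanced variant sets.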

Performance varies across protein types, with reduced accuracy on intrinsically disordered regions and specific proteins like CFTR when validated against certain ClinVar data. However, when benchmarked against the higher-quality CFTR2 database, AlphaMissense achieves an MCC of 0.725, highlighting how data quality impacts perceived performance. For transmembrane proteins, it performs surprisingly well despite hydrophobicity reducing sequence variance, with 88% correct predictions in TM regions versus 85% for soluble regions, possibly due to spatial constraints enhancing structure-based predictions [34].

Table 2: Experimental Performance Benchmarks Across Key Studies

| Benchmark Context | ProMEP Performance | AlphaMissense Performance | Validation Dataset |
|---|---|---|---|
| General Mutation Effect | Spearman's correlation: 0.53 (Protein G, multiple mutations) | Spearman's correlation: 0.47 (Protein G, multiple mutations) | DMS datasets (UBC9, RPL40A, Protein G) |
| Large-Scale Benchmarking | Competitive average performance across 53 proteins | Not specifically reported | ProteinGym (53 proteins, 1.43M variants) |
| Pathogenicity Prediction | Not specifically designed for pathogenicity | MCC: 0.6-0.74 across protein groups; Sensitivity: 92%, Specificity: 78% | ClinVar, ACMG/AMP classifications |
| Transmembrane Proteins | Not specifically reported | 88% correct predictions in TM regions | Human Transmembrane Proteome |
| Computational Efficiency | 2-3 orders of magnitude faster than AlphaMissense | Slower due to MSA requirements | Implementation comparisons |

Experimental Validation and Applications

Protein Engineering Applications

ProMEP has demonstrated exceptional capabilities in guiding protein engineering campaigns. In a landmark application, researchers used ProMEP to engineer enhanced versions of the gene-editing enzymes TnpB and TadA. For TnpB, ProMEP identified a 5-site mutant that increased gene-editing efficiency from 24.66% (wild-type) to 74.04% at the RNF2 site 1. For TadA, a 15-site mutant (in addition to the A106V/D108N double mutation) was developed into a base editing tool exhibiting an A-to-G conversion frequency of up to 77.27%, outperforming the previous standard ABE8e (69.80%) while significantly reducing bystander and off-target effects [10].

In another successful application, ProMEP guided the engineering of a Cas9 variant for base editors. Researchers constructed a virtual single-point saturation mutagenesis library containing 25,992 Cas9 single mutants, used ProMEP to calculate fitness scores, and selected 18 top-ranked mutations for experimental validation. Several single mutants (e.g., G1218R, G1218K, C80K) showed enhanced editing efficiency across all tested endogenous sites. Ultimately, combinations of beneficial mutations were identified, leading to the development of AncBE4max-AI-8.3, a high-performance variant achieving a 2-3-fold increase in average editing efficiency [36].
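The virtual saturation mutagenesis workflow described above can be sketched in a few lines. Each position is substituted with the 19 other standard amino acids, so a sequence of length L yields 19 × L single mutants; 25,992 equals 19 × 1,368, which is consistent with a 1,368-residue protein. The scoring function here is a stand-in supplied by the caller: in the study it would be the predictor's fitness score, whereas `toy_score` below is a meaningless placeholder used only to make the example runnable.

```python
from typing import Callable, Iterator, List, Tuple

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues


def single_mutants(sequence: str) -> Iterator[Tuple[str, str]]:
    """Yield (label, mutant sequence) for every single substitution.

    Labels use the usual wild-type / 1-based position / mutant form, e.g. G1218R.
    """
    for i, wt in enumerate(sequence):
        for aa in AMINO_ACIDS:
            if aa != wt:
                yield f"{wt}{i + 1}{aa}", sequence[:i] + aa + sequence[i + 1:]


def top_ranked(sequence: str, score_fn: Callable[[str], float], k: int) -> List[str]:
    """Score every single mutant and return the labels of the k best."""
    scored = [(score_fn(mutant), label) for label, mutant in single_mutants(sequence)]
    scored.sort(reverse=True)
    return [label for _, label in scored[:k]]


def toy_score(seq: str) -> float:
    """Placeholder score (counts lysines); NOT a real fitness predictor."""
    return float(seq.count("K"))


# Library size check with a dummy 1,368-residue sequence.
print(sum(1 for _ in single_mutants("A" * 1368)))  # 25992

# Selecting top candidates from a tiny toy sequence.
print(top_ranked("MA", toy_score, 2))
```

Passing the scorer as a function keeps library generation independent of any particular predictor, so the same enumeration can feed different models or an ensemble of them.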

Clinical Variant Interpretation

AlphaMissense shows significant utility in clinical genetics for addressing the challenge of Variants of Uncertain Significance (VUS). In a comprehensive evaluation of 5,845 missense variants in 59 genes associated with neurological, musculoskeletal, and neuromuscular disorders, incorporating AlphaMissense predictions enabled reclassification of 56 VUS as likely pathogenic when used alongside existing ACMG/AMP criteria. When AlphaMissense replaced all existing computational evidence, 63 variants were reclassified as likely pathogenic, demonstrating its potential value in clinical variant interpretation [35].