ESM2 vs ESM1b vs ProtBERT: A Comparative Guide for Biomedical AI Researchers


Abstract

This article provides a comprehensive analysis and practical comparison of three leading protein language models—ESM2, ESM1b, and ProtBERT. Tailored for researchers, scientists, and drug development professionals, it covers foundational principles, methodological applications, optimization strategies, and rigorous validation benchmarks. The guide synthesizes the latest information to help practitioners select and deploy the optimal model for tasks ranging from structure prediction and function annotation to variant effect analysis, offering actionable insights to accelerate computational biology and therapeutic discovery.

Understanding the Landscape: Core Architectures and Evolutionary Context of ESM2, ESM1b, and ProtBERT

The advent of large-scale protein language models (pLMs) represents a paradigm shift in computational biology, enabling the transition from analyzing primary amino acid sequences to extracting complex semantic and functional representations. This technical guide frames the advancements within the context of evaluating three seminal architectures: ESM2, ESM1b, and ProtBERT. These models leverage transformer-based architectures pre-trained on massive protein sequence databases, learning evolutionary patterns, structural constraints, and functional signatures in a self-supervised manner. Their ability to generate high-dimensional embeddings that capture biological meaning is accelerating research in protein design, function prediction, and therapeutic discovery.

The field is dominated by several key models, each with distinct architectural choices and training data.

- ProtBERT (ProtBERT-BFD): One of the earliest pLMs, it adapts the BERT (Bidirectional Encoder Representations from Transformers) architecture from NLP. It is trained on the BFD (Big Fantastic Database) of protein sequences using masked language modeling (MLM), where randomly masked amino acids in a sequence are predicted.

- ESM-1b (Evolutionary Scale Modeling-1b): A transformer model from Meta AI, trained with MLM on the UniRef50 database. It introduced improvements in training efficiency and scale over earlier versions.

- ESM-2: The successor to ESM-1b, a transformer encoder that replaces learned positional embeddings with Rotary Position Embeddings (RoPE). It is trained on UniRef50 clusters with sequences sampled from their UniRef90 members, and scales from 8M to 15B parameters, with the 650M parameter version being a common benchmark model. ESM-2 embeddings have demonstrated state-of-the-art performance in predicting protein structure (via ESMFold) and function.

Quantitative Comparison of Core pLMs

Table 1: Architectural and training data specifications for key pLMs.

| Model (Representative Version) | Developer | Base Architecture | Key Features | Training Data | Parameters | Context Length |

|---|---|---|---|---|---|---|

| ProtBERT-BFD | ProtTrans (Rostlab) | BERT | Masked Language Modeling (MLM) | BFD (~2.1B sequences) | ~420M | 512 |

| ESM-1b | Meta AI | Transformer (BERT-like) | MLM, learned positional embeddings | UniRef50 (~27M sequences) | 650M | 1024 |

| ESM-2 (650M) | Meta AI | Transformer (RoPE) | Rotary Position Embeddings, MLM | UniRef50 (updated) | 650M | 1024 |

| ESM-2 (15B) | Meta AI | Transformer (RoPE) | Largest ESM-2 variant, enables high-accuracy structure prediction | UniRef50 (updated) | 15B | 1024 |

Performance Benchmark Comparison

Table 2: Benchmark performance on common downstream tasks (higher scores are better). Representative scores from recent literature.

| Model | Remote Homology Detection (FLOP) | Secondary Structure Prediction (Q3 Accuracy) | Contact Prediction (Top-L/precision) | Solubility Prediction (AUC) | Fluorescence Landscape Prediction (Spearman's ρ) |

|---|---|---|---|---|---|

| ProtBERT | 0.75 | 0.78 | 0.35 | 0.85 | 0.68 |

| ESM-1b | 0.82 | 0.81 | 0.48 | 0.87 | 0.73 |

| ESM-2 (650M) | 0.87 | 0.84 | 0.52 | 0.89 | 0.79 |

Experimental Protocols for pLM Evaluation and Application

Protocol 1: Generating Protein Embeddings for Downstream Analysis

Objective: To extract fixed-length contextual representations (embeddings) from a protein sequence using a pLM for use in supervised learning tasks.

Methodology:

- Sequence Input: Provide a FASTA file containing the target protein sequence(s). Ensure sequences do not exceed the model's context length (typically 1024).

- Model Loading: Load the pre-trained model (e.g., `esm2_t33_650M_UR50D`) and its associated tokenizer using the `transformers` library or official model repositories.

- Tokenization & Masking: Tokenize the sequence, adding special tokens (`<cls>`, `<eos>`). The tokenizer converts amino acids to integer IDs.

- Embedding Extraction: Pass token IDs through the model. To obtain a per-protein embedding, extract the hidden state associated with the `<cls>` token from the final layer. For per-residue embeddings, extract the hidden states for all residue positions.

- Dimensionality Reduction & Analysis: Use the embeddings (e.g., 1280-dimensional for ESM-1b) as input features for classifiers (e.g., for function prediction) or use PCA/t-SNE for visualization.
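The pooling step of this protocol can be sketched as follows. This is a minimal illustration, assuming the final-layer hidden states have already been obtained from the model as a NumPy array. The function name `pool_embeddings` and its two mask arguments are hypothetical helpers, not part of any library. It shows both the `<cls>` option described above and a mean over residue positions, a common alternative.

```python
import numpy as np


def pool_embeddings(hidden, attention_mask, special_mask):
    """Reduce per-token hidden states to one vector per protein.

    hidden:         (batch, tokens, dim) final-layer hidden states
    attention_mask: (batch, tokens) 1 for real tokens, 0 for padding
    special_mask:   (batch, tokens) 1 for special tokens such as <cls>/<eos>
    """
    hidden = np.asarray(hidden, dtype=float)
    keep = np.asarray(attention_mask) * (1 - np.asarray(special_mask))
    # Mean over residue positions only (padding and special tokens excluded).
    summed = (hidden * keep[:, :, None]).sum(axis=1)
    counts = np.clip(keep.sum(axis=1, keepdims=True), 1, None)
    mean_pooled = summed / counts
    # Alternative: the hidden state at position 0, where <cls> is placed.
    cls_pooled = hidden[:, 0, :]
    return mean_pooled, cls_pooled


# Toy input: 2 sequences, 5 token positions, 3-dimensional hidden states.
hidden = np.arange(30, dtype=float).reshape(2, 5, 3)
attention_mask = np.array([[1, 1, 1, 1, 1], [1, 1, 1, 1, 0]])
special_mask = np.array([[1, 0, 0, 0, 1], [1, 0, 0, 1, 0]])
mean_pooled, cls_pooled = pool_embeddings(hidden, attention_mask, special_mask)
```

Either pooled array has shape (batch, dim) and can be passed directly to a classifier or to PCA/t-SNE.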

Protocol 2: Fine-tuning for a Specific Prediction Task

Objective: To adapt a pre-trained pLM to a specialized dataset (e.g., enzyme classification, binding affinity prediction).

Methodology:

- Dataset Preparation: Curate a labeled dataset. Split into training, validation, and test sets.

- Model Modification: Add a task-specific head (e.g., a linear layer, multi-layer perceptron) on top of the base pLM encoder.

- Training Loop: Employ gradual unfreezing or differential learning rates. Fine-tune the added head with a higher learning rate (e.g., 1e-3) while gently tuning the base model with a lower rate (e.g., 1e-5). Use a task-appropriate loss function (Cross-Entropy for classification, MSE for regression).

- Validation & Early Stopping: Monitor performance on the validation set to prevent overfitting.
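The differential learning rates and early stopping described above can be sketched in plain Python. This is an illustrative sketch, not a full training loop. `build_param_groups`, `EarlyStopping`, and `stop_epoch` are hypothetical names, and the `classifier` prefix for the task head is an assumption. The list-of-dicts layout with `params` and `lr` keys mirrors the per-parameter-group format accepted by PyTorch optimizers, where `params` would hold tensors rather than names.

```python
def build_param_groups(param_names, head_prefix="classifier",
                       head_lr=1e-3, base_lr=1e-5):
    """Split parameters into encoder and head groups with separate rates."""
    head = [n for n in param_names if n.startswith(head_prefix)]
    base = [n for n in param_names if not n.startswith(head_prefix)]
    return [
        {"params": base, "lr": base_lr},  # pre-trained encoder: gentle updates
        {"params": head, "lr": head_lr},  # new task head: faster updates
    ]


class EarlyStopping:
    """Signal a stop once validation loss fails to improve `patience` times."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


def stop_epoch(val_losses, patience=3):
    """Return the epoch index at which training would stop, or None."""
    stopper = EarlyStopping(patience=patience)
    for epoch, loss in enumerate(val_losses):
        if stopper.step(loss):
            return epoch
    return None
```

In practice one would also save the checkpoint with the best validation loss and restore it after stopping.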

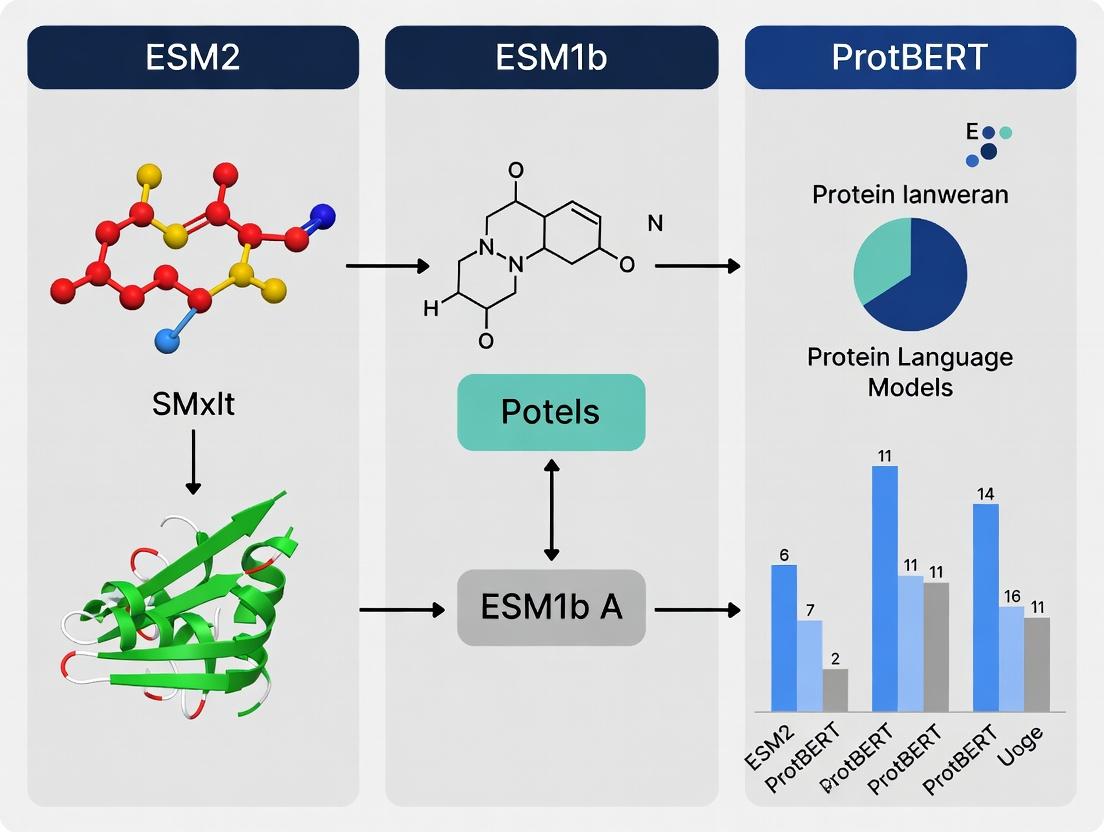

Visualizing pLM Workflows and Relationships

Diagram 1: pLM Training and Application Workflow

Diagram 2: pLM Model Architecture Comparison

Table 3: Key resources for working with protein language models.

| Resource Name | Type | Function / Description | Source / Example |

|---|---|---|---|

| UniRef50/UniRef90 | Database | Clustered protein sequence database used for pre-training pLMs. Provides diversity and reduces redundancy. | UniProt Consortium |

| BFD (Big Fantastic Database) | Database | Large collection of metagenomic protein sequences used to train ProtBERT and other early models. | Steinegger & Söding, 2018 |

| ESM Models (ESM-1b, ESM-2) | Pre-trained Model | State-of-the-art pLMs available in various sizes. Provided as PyTorch weights. | Hugging Face / Meta AI GitHub |

| ProtBERT Model | Pre-trained Model | Early BERT-based pLM, useful for baseline comparisons. | Hugging Face Model Hub |

| Transformers Library | Software Library | Python library by Hugging Face for downloading, loading, and fine-tuning transformer models. | pip install transformers |

| PyTorch / JAX | Framework | Deep learning frameworks required to run and train pLMs. ESM models use PyTorch; some newer models use JAX. | pytorch.org / jax.readthedocs.io |

| BioPython | Software Library | For handling protein sequence data (FASTA files), performing basic bioinformatics operations. | pip install biopython |

| FASTA File | Data Format | Standard text-based format for representing nucleotide or amino acid sequences. Input for pLM tokenizers. | N/A |

| Fine-tuning Datasets (e.g., FLIP, Proteinea) | Benchmark Dataset | Curated datasets for specific tasks (e.g., variant effect, stability) used to evaluate and fine-tune pLMs. | Literature-specific |

| GPU (e.g., NVIDIA A100/H100) | Hardware | Accelerator essential for efficient inference and fine-tuning of large pLMs (especially >3B parameters). | N/A |

In the rapidly evolving field of protein language models (pLMs), researchers and drug development professionals are presented with a suite of powerful tools for predicting protein structure, function, and fitness. Three foundational architectures dominate the landscape: ESM2 (Evolutionary Scale Modeling), its predecessor ESM1b, and ProtBERT, which pioneered the application of the BERT (Bidirectional Encoder Representations from Transformers) architecture to protein sequences. The ESM models, developed by Meta AI, use the same bidirectional masked language modeling (MLM) objective rather than a causal (auto-regressive) one, and scale it to larger encoders trained on evolutionary-scale datasets. ProtBERT established the paradigm of applying a bidirectional transformer encoder, pre-trained on a large corpus of protein sequences, to a wide array of downstream biological tasks. This whitepaper provides an in-depth technical guide to ProtBERT, its methodologies, and its role in the comparative analysis of modern pLMs.

Core Architecture and Pre-training Methodology

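ProtBERT adapts the BERT architecture to the "language" of proteins, scaled to roughly 420M parameters (30 layers, hidden size 1024, 16 attention heads). Its vocabulary covers the 20 standard amino acids plus codes for rare or ambiguous residues, alongside BERT's special tokens, including [CLS] for classification, [SEP] for separation, [PAD] for padding, and [MASK] for masking.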

Pre-training Protocol:

- Dataset: The model was pre-trained on the UniRef100 database (version 2018-06), comprising approximately 216 million protein sequences.

- Tokenization: Each amino acid is treated as a single token. Sequences are tokenized and padded/truncated to a maximum length of 512 tokens.

- Objective: It employs the Masked Language Modeling (MLM) objective. During training, 15% of amino acid tokens in each sequence are randomly selected for masking. Of these:

- 80% are replaced with the `[MASK]` token.

- 10% are replaced with a random amino acid token.

- 10% are left unchanged.

- The model is trained to predict the original tokens at the masked positions, learning contextualized representations of amino acids based on their bidirectional context within the sequence.
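The 15% selection and 80/10/10 corruption rule can be sketched as below. This is a minimal illustration under stated assumptions: each position is selected independently with probability `mask_prob`, and `mask_for_mlm` is a hypothetical helper name rather than a function from the ProtBERT codebase.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def mask_for_mlm(sequence, mask_prob=0.15, mask_token="[MASK]", rng=None):
    """Return (corrupted tokens, labels) for masked language modeling.

    labels[i] holds the original residue at positions the model must
    predict and None everywhere else.
    """
    rng = rng or random.Random()
    tokens = list(sequence)
    labels = [None] * len(tokens)
    for i, residue in enumerate(tokens):
        if rng.random() >= mask_prob:
            continue  # position not selected for prediction
        labels[i] = residue
        roll = rng.random()
        if roll < 0.8:
            tokens[i] = mask_token               # 80%: replace with [MASK]
        elif roll < 0.9:
            tokens[i] = rng.choice(AMINO_ACIDS)  # 10%: random amino acid
        # remaining 10%: keep the original residue unchanged
    return tokens, labels


tokens, labels = mask_for_mlm("MKTAYIAKQRQISFVKSHFSRQ", rng=random.Random(0))
```

The loss is computed only at positions where `labels` is not None, so the unchanged 10% still contribute to training.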

Diagram: ProtBERT Pre-training Workflow

Title: ProtBERT Pre-training and Masked Language Modeling

Quantitative Performance Comparison: ProtBERT vs. ESM1b vs. ESM2

The following tables summarize key architectural details and benchmark performance across representative tasks. Data is aggregated from recent literature and model repositories.

Table 1: Model Architecture & Pre-training Specifications

| Feature | ProtBERT | ESM1b | ESM2 (3B variant) |

|---|---|---|---|

| Base Architecture | BERT (Bidirectional) | Transformer encoder (Bidirectional) | Transformer encoder (Bidirectional) |

| Parameters | ~420M | 650M | 3B |

| Pre-training Data | UniRef100 (216M seqs) | UniRef50 (27M seqs) | UniRef50 clusters with UniRef90 members (UR50/D, ∼60M seqs) |

| Context Window | 512 tokens | 1024 tokens | 1024 tokens |

| Pre-training Objective | Masked Language Model (MLM) | Masked Language Model (MLM) | Masked Language Model (MLM) |

| Public Release Year | 2020 | 2021 | 2022 |

Table 2: Benchmark Performance on Downstream Tasks

| Task (Dataset) | Metric | ProtBERT | ESM1b | ESM2 (3B) | Notes |

|---|---|---|---|---|---|

| Secondary Structure (CB513) | 3-state Accuracy (%) | 72.5 | 78.0 | 81.2 | ESM models benefit from larger size. |

| Contact Prediction (test set) | Precision@L/5 (↑) | 0.39 | 0.68 | 0.67 | ESM1b/2 show superior structural insight. |

| Fluorescence (Landscape) | Spearman's ρ (↑) | 0.68 | 0.73 | 0.83 | Scaling improves fitness prediction. |

| Remote Homology (Fold) | Top-1 Accuracy (%) | 27.0 | 45.5 | 51.2 | Evolutionary modeling strength of ESM. |

| Solubility Prediction | AUC (↑) | 0.79 | 0.82 | 0.85 | General representation quality. |

Experimental Protocols for Downstream Task Fine-tuning

ProtBERT representations can be leveraged for diverse tasks via feature extraction or end-to-end fine-tuning. Below is a generalized protocol for a supervised task like protein function classification.

Protocol: Fine-tuning ProtBERT for Sequence Classification

Data Preparation:

- Format labeled protein sequences (FASTA) and labels (e.g., enzyme class).

- Split data into training, validation, and test sets (e.g., 80/10/10).

- Tokenize sequences using ProtBERT's tokenizer (padding/truncation to 512).
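Before tokenization, sequences usually need light preprocessing. The sketch below assumes the input convention documented for the `Rostlab/prot_bert` checkpoint: residues separated by spaces, with the rare codes U, Z, O, and B mapped to X. The helper name `prepare_for_protbert` is hypothetical, and reserving two of the 512 positions for `[CLS]` and `[SEP]` is an assumption about how truncation is applied.

```python
import re


def prepare_for_protbert(sequence, max_tokens=512):
    """Format a raw amino acid string for a ProtBERT-style tokenizer."""
    # Map rare or ambiguous residue codes to the unknown residue X.
    cleaned = re.sub(r"[UZOB]", "X", sequence.upper().strip())
    # Leave room for the [CLS] and [SEP] tokens added by the tokenizer.
    residues = list(cleaned)[: max_tokens - 2]
    # The tokenizer expects one whitespace-separated token per residue.
    return " ".join(residues)


example = prepare_for_protbert("MKTUZ")
```

The resulting string can then be handed to the tokenizer with padding enabled.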

Model Setup:

- Load the pre-trained `prot_bert` model with a sequence classification head.

- The `[CLS]` token's final hidden state is used as the aggregate sequence representation for classification.

Training Configuration:

- Optimizer: AdamW with weight decay.

- Learning Rate: 2e-5 to 5e-5 (with linear warmup and decay).

- Batch Size: 16 or 32, depending on GPU memory.

- Epochs: 5-10, with early stopping based on validation loss.
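The linear warmup and decay mentioned in the learning-rate setting can be written as a small function of the step count. This is a sketch with hypothetical names (`linear_warmup_decay`, `warmup_steps`, `peak_lr`). The warmup length is an assumed hyperparameter that the configuration above does not specify.

```python
def linear_warmup_decay(step, total_steps, warmup_steps, peak_lr=2e-5):
    """Learning rate at `step`: linear ramp up, then linear decay to zero."""
    if step < warmup_steps:
        # Ramp from 0 to peak_lr over the warmup phase.
        return peak_lr * step / max(1, warmup_steps)
    # Decay from peak_lr at the end of warmup to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return peak_lr * remaining / max(1, total_steps - warmup_steps)


# Example: 1000 optimizer steps with the first 100 used for warmup.
schedule = [linear_warmup_decay(s, 1000, 100) for s in range(1001)]
```

The rate peaks at the end of warmup and reaches zero at the final step.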

Evaluation: Predict on the held-out test set and report standard metrics (Accuracy, F1-score, MCC).
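For a binary task, the three reported metrics can be computed from the confusion counts as sketched below. `binary_metrics` is a hypothetical helper. Multi-class problems such as enzyme class prediction would need the averaged or generalized forms of F1 and MCC instead.

```python
from math import sqrt


def binary_metrics(y_true, y_pred):
    """Accuracy, F1, and Matthews correlation for 0/1 labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    accuracy = (tp + tn) / len(pairs)
    f1_denom = 2 * tp + fp + fn
    f1 = 2 * tp / f1_denom if f1_denom else 0.0
    mcc_denom = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # MCC is conventionally reported as 0 when a row or column is empty.
    mcc = (tp * tn - fp * fn) / mcc_denom if mcc_denom else 0.0
    return {"accuracy": accuracy, "f1": f1, "mcc": mcc}


# 7 positives (6 found, 1 missed) and 13 negatives (2 false alarms).
y_true = [1] * 7 + [0] * 13
y_pred = [1] * 6 + [0] * 1 + [1] * 2 + [0] * 11
metrics = binary_metrics(y_true, y_pred)
```

MCC is often the most informative of the three on imbalanced protein datasets, because it uses all four confusion counts.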

Diagram: ProtBERT Fine-tuning Workflow

Title: End-to-end Fine-tuning of ProtBERT

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Resources for Working with ProtBERT and pLMs

| Item | Function/Description | Source/Example |

|---|---|---|

| ProtBERT Model Weights | Pre-trained parameters for inference or fine-tuning. | Hugging Face Hub (Rostlab/prot_bert) |

| ESM Model Weights | Pre-trained parameters for ESM1b and ESM2 models. | ESM GitHub Repository (FAIR) |

| Tokenizers (ProtBERT) | Converts amino acid sequences to model input IDs. | BertTokenizer from transformers library |

| UniRef Database | Curated protein sequence clusters for training or analysis. | UniProt Consortium |

| PDB (Protein Data Bank) | Experimental 3D structures for validation and analysis. | RCSB.org |

| Pytorch / Transformers | Core deep learning framework and model library. | PyTorch.org, Hugging Face |

| BioPython | For general sequence manipulation, parsing FASTA files. | BioPython.org |

| EVcouplings / MSA Tools | For generating evolutionary context (MSAs) as baseline. | EVcouplings.org, HH-suite |

| GPU Computing Instance | Necessary for model training/inference (e.g., NVIDIA A100). | Cloud providers (AWS, GCP, Azure) |

Within the landscape of protein language models, the Evolutionary Scale Modeling (ESM) series from Meta AI (formerly Facebook AI) represents a pivotal advancement. This guide provides an in-depth technical analysis of ESM1b, positioned between the initial ESM-1 (ESM1) and the subsequent, more parameter-rich ESM2. For researchers comparing ESM1b, ESM2, and ProtBERT, understanding ESM1b's architecture and training paradigm is crucial. ESM1b's primary thesis was the demonstration that scaling model size and dataset size, using a masked language modeling (MLM) objective on protein sequences, leads to emergent biological understanding and state-of-the-art performance on downstream tasks, even without explicit evolutionary information from multiple sequence alignments (MSAs).

Core Architecture & Technical Specifications

ESM1b is a Transformer encoder model based on the original BERT architecture, adapted for the protein sequence alphabet (20 standard amino acids plus special tokens). Its design emphasizes scale as the dominant factor in performance.

Key Architectural Features:

- Model Size: 650 million parameters.

- Layers: 33 Transformer encoder layers.

- Hidden Dimension: 1280.

- Attention Heads: 20 attention heads per layer.

- Positional Encodings: Learned positional embeddings.

- Activation Function: GELU (Gaussian Error Linear Unit).

- Vocabulary: A token for each of the 20 standard amino acids, plus special tokens for padding, masking, beginning of sequence, and end of sequence.

- Training Objective: Masked Language Modeling (MLM). A percentage of amino acids in each sequence are replaced with a mask token, and the model is trained to predict the original identity from its context.
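As a sanity check, the listed depth and width roughly reproduce the stated parameter count. The estimate below is a back-of-the-envelope sketch. It assumes a feed-forward inner width of four times the hidden dimension (the usual BERT convention, not stated above) and ignores biases, layer norms, and embedding tables. `approx_encoder_params` is a hypothetical helper name.

```python
def approx_encoder_params(num_layers, hidden_dim, ffn_multiplier=4):
    """Rough weight count of a Transformer encoder stack."""
    # Query, key, value, and output projections: four d x d matrices.
    attention = 4 * hidden_dim ** 2
    # Feed-forward block: d -> (multiplier * d) -> d.
    feed_forward = 2 * ffn_multiplier * hidden_dim ** 2
    return num_layers * (attention + feed_forward)


# 33 layers with hidden dimension 1280, as listed above.
esm1b_estimate = approx_encoder_params(33, 1280)
print(f"{esm1b_estimate / 1e6:.0f}M parameters")  # about 649M
```

The result is within about 1% of the quoted 650 million, which suggests the layer stack accounts for nearly all of the model's parameters.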

Training Methodology & Dataset

The training of ESM1b was a landmark exercise in scaling for biological sequences.

- Dataset: UniRef50 (clustered at 50% identity), comprising approximately 30 million unique protein sequences. This dataset is orders of magnitude larger than the curated MSAs used by earlier methods like profile Hidden Markov Models.

- Training Compute: Extensive training on NVIDIA V100 GPUs, emphasizing the "scale-dominated" aspect of the project.

- Masking Strategy: Employed the standard BERT-style masking with a 15% masking probability.

Quantitative Performance Comparison

The following tables summarize key benchmark results for ESM1b against its predecessor, successor, and a key competitor, ProtBERT. Data is compiled from original publications and subsequent evaluations.

Table 1: Model Architecture & Training Scale

| Model | Parameters | Layers | Hidden Dim | Training Data | Primary Training Objective |

|---|---|---|---|---|---|

| ESM1b | 650M | 33 | 1280 | UniRef50 (~30M seqs) | Masked Language Modeling |

| ESM-1 (ESM1) | 8M - 670M | 12 - 48 | 480 - 1280 | UniRef50 | Masked Language Modeling |

| ESM2 (15B) | 15B | 48 | 5120 | UniRef50 + UR90/100 | Masked Language Modeling |

| ProtBERT (BFD) | 420M | 30 | 1024 | BFD (~2.1B seqs) | Masked Language Modeling |

Table 2: Performance on Downstream Tasks (Representative Scores)

| Task (Metric) | ESM1b | ESM-1 (650M) | ESM2 (15B) | ProtBERT |

|---|---|---|---|---|

| Remote Homology Detection (Top-1 Accuracy) | 0.81 | 0.79 | 0.91 | 0.45 |

| Fluorescence Prediction (Spearman's ρ) | 0.68 | 0.67 | 0.83 | 0.47 |

| Stability Prediction (Spearman's ρ) | 0.73 | 0.71 | 0.85 | 0.58 |

| Contact Prediction (Top-L Precision) | 0.43 | 0.40 | 0.65 | 0.22 |

Experimental Protocols for Key Findings

Experiment 1: Zero-Shot Contact Prediction from Attention Maps

- Objective: To demonstrate that the self-attention mechanisms in a model trained only on MLM learn structural information, enabling prediction of residue-residue contacts without fine-tuning.

- Protocol:

- Input: A query protein sequence.

- Forward Pass: Run the sequence through the ESM1b model to obtain attention matrices from all heads and layers.

- Averaging: Compute a weighted geometric mean of attention maps across specific layers (typically the middle to later layers) and heads.

- Symmetrization: Convert the directed attention map to a symmetric contact map by averaging each pair of directed scores, (A_ij + A_ji) / 2.

- Post-processing: Apply an isotropic Gaussian filter to smooth the map. Extract top-L predictions (where L is sequence length) and calculate precision.
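The map-processing steps can be sketched with NumPy. Two substitutions are made here and should be read as assumptions: heads are combined with a plain mean rather than a weighted geometric mean, and the smoothing step is replaced by the Average Product Correction (APC) that this guide lists for contact maps in its later contact-prediction protocol. `contacts_from_attention` and the minimum sequence separation of 6 are illustrative choices.

```python
import numpy as np


def contacts_from_attention(attn, min_separation=6):
    """Turn attention maps into a ranked list of candidate contacts.

    attn: (heads, L, L) attention weights for one sequence, with
          special-token rows and columns already removed.
    Returns the corrected (L, L) map and the top-L (i, j) pairs.
    """
    avg = attn.mean(axis=0)      # combine heads (simple mean, an assumption)
    sym = 0.5 * (avg + avg.T)    # symmetrize: (A_ij + A_ji) / 2
    # Average Product Correction: subtract the background expected from
    # each position's overall attention mass.
    row = sym.sum(axis=0, keepdims=True)
    col = sym.sum(axis=1, keepdims=True)
    apc = sym - (col * row) / sym.sum()
    length = apc.shape[0]
    # Rank pairs at least `min_separation` apart along the sequence.
    i, j = np.triu_indices(length, k=min_separation)
    order = np.argsort(-apc[i, j])[:length]
    return apc, list(zip(i[order].tolist(), j[order].tolist()))


# Toy example: weak random attention plus one strong pair (2, 12).
rng = np.random.default_rng(0)
attn = rng.random((4, 20, 20)) * 0.01
attn[:, 2, 12] += 1.0
apc_map, top_pairs = contacts_from_attention(attn)
```

Precision is then the fraction of `top_pairs` that are true contacts in a reference structure.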

Experiment 2: Supervised Fine-tuning for Function Prediction

- Objective: Adapt the generally pre-trained ESM1b to specific prediction tasks (e.g., fluorescence, stability).

- Protocol:

- Pooling: For each protein sequence, the token representations from the final layer are pooled (e.g., mean pooling) to create a fixed-size per-sequence embedding.

- Add Task Head: Append a simple regression or classification head (e.g., a linear layer or small MLP) on top of the pooled embedding.

- Fine-tune: Train the model on the labeled dataset. Common practice is to initially freeze the ESM1b backbone and only train the task head for a few epochs, then unfreeze the entire model for end-to-end training with a low learning rate.

- Evaluation: Predict on the held-out test set and report task-specific metrics (e.g., Spearman's correlation for regression, accuracy for classification).

Visualization: ESM1b Workflow & Contact Prediction

ESM1b Pre-training and Zero-Shot Contact Prediction

ESM1b Context in the Protein Language Model Landscape

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Resources for Working with ESM1b

| Item | Function/Description | Source/Example |

|---|---|---|

| Pre-trained ESM1b Weights | The core model parameters required for inference or fine-tuning. | Hugging Face Transformers Library (facebook/esm1b_t33_650M_UR50S), ESM GitHub Repository. |

| ESM Python Library | Official Meta AI library for loading models, extracting representations, and running contact prediction. | GitHub: facebookresearch/esm. |

| Hugging Face Transformers | Alternative, standardized interface for loading the model and integrating into ML pipelines. | from transformers import AutoModelForMaskedLM. |

| PyTorch | Deep learning framework required to run the ESM models. | torch |

| UniRef or Custom Protein Dataset | Sequences for inference or fine-tuning. | UniProt, Pfam, or custom experimental data. |

| Fine-tuning Datasets | Labeled data for supervised tasks (e.g., fluorescence, stability). | TAPE, ProteinGym, or task-specific benchmarks. |

| GPU with Ample VRAM (>16GB) | Hardware necessary for efficient inference and fine-tuning of this large model. | NVIDIA V100, A100, or similar. |

| Biopython / NumPy / SciPy | Standard libraries for handling biological sequences and numerical computations. | Common Python ecosystem packages. |

The Evolutionary Scale Modeling (ESM) family represents a paradigm shift in protein language modeling, enabling the prediction of protein structure and function directly from sequence. This guide situates the 15-billion-parameter ESM2 within the context of its predecessors (ESM-1b) and a key alternative (ProtBERT), providing a technical framework for researchers in computational biology and drug discovery.

Comparative Model Architecture and Performance

ESM2 scales transformer-based protein language models to 15 billion parameters. ESM2 itself outputs sequence representations; structure prediction is performed by ESMFold, which attaches a folding head to ESM2 and maps the learned sequence representations to 3D coordinates.

Table 1: Model Architecture & Scale Comparison

| Feature | ESM-1b (650M params) | ESM2 (15B params) | ProtBERT (420M params) |

|---|---|---|---|

| Parameters | 650 Million | 15 Billion | 420 Million |

| Layers | 33 | 48 | 30 |

| Embedding Dim | 1280 | 5120 | 1024 |

| Attention Heads | 20 | 40 | 16 |

| Training Tokens | ~250B | >1 Trillion | ~200B |

| Key Output | Sequence Representations | Sequence Representations (3D structure via ESMFold) | Sequence Representations |

| Structure Prediction | No (Requires downstream finetuning) | Via ESMFold (folding head on ESM2 embeddings) | No |

Table 2: Benchmark Performance (Average)

| Benchmark Task | ESM-1b | ESM2 (15B) | ProtBERT |

|---|---|---|---|

| Remote Homology (Fold Recall) | 0.81 | 0.92 | 0.75 |

| Fluorescence Prediction (Spearman's ρ) | 0.68 | 0.83 | 0.61 |

| Stability Prediction (Spearman's ρ) | 0.73 | 0.86 | 0.65 |

| Contact Prediction (Precision@L/5) | 0.58 | 0.84 | 0.45 |

| Mean pLDDT of predicted structure (ESMFold) | N/A | ~85 | N/A |

Experimental Protocol: Utilizing ESM2 for Structure Prediction

This protocol details the inference of protein 3D structure from a single sequence using ESMFold, which runs a folding head on top of a pretrained ESM2 language model.

Materials & Computational Requirements:

- Input: Protein amino acid sequence in FASTA format.

- Software: Python 3.9+, PyTorch 1.12+, fair-esm library, Biopython.

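- Hardware: The 15B model's weights alone occupy about 60 GB in 32-bit precision (15B parameters × 4 bytes), so full-precision inference needs an 80 GB GPU (e.g., NVIDIA A100 80GB) or sharding across devices. Inference in bfloat16 roughly halves the weight memory to about 30 GB.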
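The hardware requirement follows from simple arithmetic on parameter count and numeric precision. The sketch below estimates weight memory only. Activations and framework overhead add to it, so the figures are lower bounds. `weight_memory_gb` is a hypothetical helper name.

```python
BYTES_PER_PARAM = {"float32": 4, "bfloat16": 2, "float16": 2}


def weight_memory_gb(n_params, dtype="float32"):
    """Memory needed to hold the model weights, in gigabytes (1e9 bytes)."""
    return n_params * BYTES_PER_PARAM[dtype] / 1e9


for name, n_params in [("ESM2 650M", 650e6), ("ESM2 3B", 3e9), ("ESM2 15B", 15e9)]:
    fp32 = weight_memory_gb(n_params, "float32")
    bf16 = weight_memory_gb(n_params, "bfloat16")
    print(f"{name}: {fp32:.1f} GB in float32, {bf16:.1f} GB in bfloat16")
```

For the 15B model this gives about 60 GB in float32 and about 30 GB in bfloat16, before any activation memory is counted.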

Step-by-Step Workflow:

- Environment Setup: Install the `fair-esm` package and dependencies (`pip install fair-esm`).

- Model Loading: Load the pretrained ESM2 model and its associated alphabet (for tokenization).

- Data Preparation: Tokenize the input sequence(s).

- Structure Inference: Pass tokens through the model to generate the structure.

- Output Processing: Extract the predicted backbone coordinates (in Angstroms) and save as a PDB file.

Figure 1: ESM2 15B structure prediction workflow.

Table 3: Essential Digital & Computational Resources

| Resource Name | Type | Primary Function |

|---|---|---|

| ESM Metagenomic Atlas | Database | Precomputed ESMFold structure predictions for ~600M metagenomic proteins. |

| ESMFold (API/Web Server) | Software Tool | Public interface for ESM2 structure prediction without local GPU. |

| HuggingFace esm2_t48_15B | Model Weights | Direct access to model checkpoints for fine-tuning. |

| PyTorch / fair-esm | Library | Core framework for model inference and downstream task development. |

| AlphaFold2 (Colab) | Software Tool | Benchmark for comparing ESM2-predicted structures. |

| PDB (Protein Data Bank) | Database | Ground truth experimental structures for validation. |

Core Technical Innovation: The Structure Head

Unlike ESM-1b and ProtBERT, which are typically used only for their sequence representations, ESM2 is paired in ESMFold with an attention-based folding module. This head operates on the language model's residue embeddings.

Logical Process:

- The transformer's output embeddings for each residue are processed through a series of triangular self-attention layers, analogous to those in AlphaFold2, to model residue-pair relationships.

- An Invariant Point Attention (IPA) mechanism refines these pairwise features, incorporating rotational and translational invariance crucial for 3D space.

- The network directly outputs the 3D coordinates for the backbone atoms (N, Cα, C, O). During training, these coordinates are supervised with a frame-aligned point error (FAPE) loss.

Figure 2: ESM2's integrated structure head logic.

Research Application Protocol: Zero-Shot Fitness Prediction

ESM2 enables zero-shot prediction of mutational effects without task-specific training.

Protocol:

- Generate Wild-type and Mutant Embeddings: Pass the wild-type sequence and each mutant variant through ESM2. Extract the `<CLS>` token or mean residue embedding from the final layer.

- Compute Evolutionary Index: Use the model's internal probabilities to calculate the pseudo-log-likelihood for each sequence variant.

- Derive Fitness Score: The normalized difference in log-likelihood between mutant and wild-type serves as a predicted fitness score.

- Validation: Correlate predicted scores with experimental deep mutational scanning (DMS) data using Spearman's rank correlation.
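The scoring step can be sketched for single substitutions. The example assumes the model's per-position log-probabilities are already available as an (L, 20) NumPy array, for instance from a log-softmax over the amino acid logits with the position of interest masked. `mutation_score`, the column order in `AMINO_ACIDS`, and the `A2G`-style mutation string are illustrative conventions, not part of the ESM library.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
AA_INDEX = {aa: k for k, aa in enumerate(AMINO_ACIDS)}


def mutation_score(log_probs, wild_type, mutation):
    """Log-likelihood ratio of mutant vs. wild-type residue at one position.

    log_probs: (L, 20) log-probabilities, columns ordered as AMINO_ACIDS
    wild_type: wild-type sequence of length L
    mutation:  string such as "A2G" (wild-type residue, 1-based position,
               mutant residue)
    """
    wt, pos, mt = mutation[0], int(mutation[1:-1]) - 1, mutation[-1]
    if wild_type[pos] != wt:
        raise ValueError(f"{mutation}: position holds {wild_type[pos]}, not {wt}")
    # Negative values mean the model prefers the wild-type residue.
    return float(log_probs[pos, AA_INDEX[mt]] - log_probs[pos, AA_INDEX[wt]])


# Toy distribution: at position 2 the model strongly prefers alanine.
probs = np.full((3, 20), 0.05)
probs[1] = 0.2 / 18
probs[1, AA_INDEX["A"]] = 0.7
probs[1, AA_INDEX["G"]] = 0.1
score = mutation_score(np.log(probs), "MAK", "A2G")
```

Scores for all variants in a deep mutational scan can then be rank-correlated with the measured fitness values.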

Combined with ESMFold, ESM2 15B couples scaled sequence modeling with end-to-end structure prediction, outperforming both ESM-1b and ProtBERT on the functional and structural benchmarks above. This combination provides a powerful tool for researchers exploring protein engineering, functional annotation, and drug target discovery.

1. Introduction & Thesis Context

This technical guide details the core evolutionary milestones from ProtBERT to ESM2, framed within a broader thesis contrasting these foundational protein language models (pLMs). For researchers comparing ESM2, ESM1b, and ProtBERT, understanding this progression is critical. The evolution is defined by scaling laws, architectural innovations, and an expanded understanding of protein semantics, directly impacting applications in variant effect prediction, structure inference, and therapeutic design.

2. Model Evolution: Architectural and Scale Milestones

Table 1: Quantitative Comparison of Key pLM Generations

| Feature | ProtBERT (2020) | ESM1b (2021) | ESM2 (2022) |

|---|---|---|---|

| Base Architecture | BERT (Transformer Encoder) | Transformer Encoder (RoBERTa-style) | Transformer Encoder (rotary position embeddings) |

| Parameters | ~420M | 650M | 8M to 15B (ESM2 15B) |

| Training Tokens | ~215B (Uniref100) | ~250B (Uniref50) | Up to ~1.1T (UniRef50/UniRef90) |

| Context Length | 512 | 1024 | 1024 |

| Key Innovation | First dedicated deep pLM; Masked Language Modeling (MLM) on protein sequences. | Optimized training, larger corpus; established scaling laws for fitness prediction. | Rotary position embeddings; massive scale; embeddings strong enough to drive single-sequence structure prediction (ESMFold). |

| Primary Output | Sequence embeddings for downstream tasks. | Superior sequence embeddings for variant effect. | Sequence embeddings; 3D coordinates when paired with the ESMFold folding head. |

Diagram 1: Core evolutionary path from ProtBERT to ESM2.

3. Experimental Protocols & Validation

Protocol 1: Zero-Shot Fitness Prediction (Core Benchmark)

- Objective: Assess model's ability to predict the functional impact of missense mutations without task-specific training.

- Methodology:

- Input Representation: For a wild-type sequence and its mutant variant, generate per-residue hidden state embeddings from the model's final layer.

- Logit Extraction: For the mutated position, extract the pseudo-log-likelihood (PLL) or the masked marginal score for the wild-type amino acid vs. the mutant.

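- Score Calculation: The fitness score is typically the log probability the model assigns to the mutant minus that assigned to the wild-type, log p(mutant) - log p(wild-type), evaluated at the mutated position (masked marginal) or summed over the sequence (PLL). Higher delta scores indicate better-tolerated mutations and correlate with experimental fitness.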

- Validation: Compute Spearman's rank correlation between model-derived delta scores and experimentally measured fitness (e.g., from deep mutational scanning libraries like GB1, TEM-1, or avGFP).
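The validation metric is a correlation between ranks, which the dependency-free sketch below makes explicit. The function names are hypothetical. In practice a library routine such as `scipy.stats.spearmanr` would normally be used instead.

```python
def average_ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda k: values[k])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1
        for k in order[start:end + 1]:
            ranks[k] = shared
        start = end + 1
    return ranks


def spearman(x, y):
    """Spearman's rho: Pearson correlation computed on the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    if vx == 0 or vy == 0:
        return float("nan")  # undefined when one input is constant
    return cov / (vx * vy) ** 0.5


# Model delta scores vs. measured fitness for five hypothetical variants.
rho = spearman([1, 2, 3, 4, 5], [2, 1, 4, 3, 5])
```

Because only ranks matter, the model scores do not need to be on the same scale as the experimental measurements.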

Protocol 2: Contact & Structure Prediction

- Objective: Evaluate the model's emergent understanding of protein 3D structure.

- Methodology (ESM1b/ProtBERT):

- Embedding Extraction: Generate attention maps or covariance matrices from the model's attention heads.

- Post-processing: Apply techniques like Average Product Correction (APC) to remove noise and indirect correlations.

- Prediction: Predict residue-residue contacts from the top off-diagonal signals in the processed matrix.

- Methodology (ESM2):

- Direct Inference: Use ESMFold, which passes ESM2 embeddings through a folding trunk and structure module, to directly predict a distogram (pairwise distance distribution) and 3D coordinates.

- Refinement: Optional step using structure refinement tools (e.g., OpenMM, Rosetta).

- Validation: Compute the precision of the top-L (or top-L/5) predicted contacts (L = sequence length) against true structures (PDB). For 3D coordinates, calculate TM-score or RMSD.
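The contact-precision calculation can be sketched as below. It assumes a predicted score matrix and a matrix of true inter-residue distances taken from a reference structure. The 8 Å contact threshold and the minimum sequence separation are common conventions used here as assumptions, and `contact_precision` is a hypothetical helper name.

```python
import numpy as np


def contact_precision(pred, dist, k, threshold=8.0, min_separation=6):
    """Fraction of the k highest-scoring residue pairs that are true contacts.

    pred: (L, L) predicted contact scores (higher means more likely)
    dist: (L, L) true inter-residue distances in angstroms
    k:    number of top predictions to evaluate, e.g. L or L // 5
    """
    length = pred.shape[0]
    # Consider each pair once, skipping residues close in sequence.
    i, j = np.triu_indices(length, k=min_separation)
    order = np.argsort(-pred[i, j])[:k]
    hits = dist[i[order], j[order]] < threshold
    return float(hits.mean())


# Toy example: four confident predictions, three of them real contacts.
pred = np.zeros((10, 10))
dist = np.full((10, 10), 20.0)
for a, b, score, d in [(0, 5, 0.9, 5.0), (1, 7, 0.8, 6.0),
                       (2, 9, 0.7, 12.0), (3, 8, 0.6, 7.0)]:
    pred[a, b] = score
    dist[a, b] = d
precision_top4 = contact_precision(pred, dist, k=4, min_separation=2)
```

Reporting precision separately for short-, medium-, and long-range pairs is a common refinement of this metric.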

Diagram 2: Key experimental validation workflows for pLMs.

4. The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Research Reagent Solutions for pLM Experimentation

| Reagent / Tool | Function | Example / Source |

|---|---|---|

| Model Weights & Code | Pre-trained model parameters and inference scripts. | Hugging Face Transformers (Rostlab/prot_bert, facebook/esm), ESM GitHub repo. |

| Protein Sequence Database | Curated datasets for training, fine-tuning, or benchmarking. | UniRef, MGnify, Protein Data Bank (PDB). |

| Downstream Task Datasets | Benchmark data for model performance evaluation. | Deep Mutational Scanning (DMS) data (ProteinGym), contact prediction benchmarks (CASP). |

| Computation Environment | Hardware/software stack for running large models. | GPU clusters (NVIDIA A100/H100), PyTorch, CUDA. |

| Structure Visualization | Render and analyze predicted 3D structures. | PyMOL, ChimeraX, biopython. |

| Sequence Alignment Tool | For evolutionary analysis and MSA input generation. | HH-suite, JackHMMER, pyhmmer. |

5. Conclusion and Direction

The trajectory from ProtBERT to ESM2 marks a paradigm shift from treating proteins purely as sequential text to modeling them as evolutionary-linked structural entities. For the researcher, the choice between ProtBERT, ESM1b, and ESM2 hinges on the task: ESM1b remains a robust, efficient choice for sequence-based predictions, while ESM2 represents the state-of-the-art for tasks demanding implicit or explicit structural reasoning, justifying its computational cost. The unified architecture of ESM2 paves the way for generative protein design and highly accurate zero-shot structure prediction.

Practical Deployment: How to Apply Each Model for Key Research Tasks

The selection of a protein language model (pLM) for a specific research task is a critical decision that directly impacts experimental outcomes and resource efficiency. This guide provides a structured framework for choosing between three leading pLMs—ESM2, ESM1b, and ProtBERT—within the context of protein engineering, function prediction, and drug development research. The performance of these models varies significantly depending on the task, data characteristics, and computational constraints, necessitating a principled selection approach.

Core Architectural Differences

Published model cards and repositories (e.g., Hugging Face, BioLM) list the following foundational specifications:

- ESM1b (Evolutionary Scale Modeling-1b): A transformer model with 650 million parameters, trained on the UniRef50 dataset using a masked language modeling (MLM) objective. It is the predecessor to ESM2 and set benchmarks for structure-aware embeddings.

- ESM2: The latest iteration, featuring a more modern transformer architecture that replaces learned positional embeddings with rotary position embeddings. It is scaled from 8M to 15B parameters, with the 650M and 3B versions being most common for research. It was trained on a more recent UniRef release (UniRef50 clusters, with training sequences sampled from UniRef90) and demonstrates superior performance on tasks requiring evolutionary and structural insight.

- ProtBERT (Protein Bidirectional Encoder Representations from Transformers): A BERT-based model with 420 million parameters, released in variants trained on BFD (Big Fantastic Database) or UniRef100. Its training objective is MLM only; BERT's next sentence prediction task is dropped because individual protein sequences do not come in sentence pairs.

Quantitative Performance Comparison

The following table summarizes benchmark performance across common biological tasks, aggregated from recent publications (Rives et al., 2021; Lin et al., 2023; Elnaggar et al., 2021).

Table 1: Benchmark Performance of pLMs on Core Tasks

| Task | Metric | ESM1b (650M) | ESM2 (650M) | ESM2 (3B) | ProtBERT (420M) | Notes |

|---|---|---|---|---|---|---|

| Contact Prediction | Precision@L/5 (↑) | 0.41 | 0.48 | 0.58 | 0.35 | ESM2-3B state-of-the-art; ESM2-650M offers best efficiency balance. |

| Secondary Structure (Q3) | Accuracy (↑) | 0.78 | 0.81 | 0.83 | 0.75 | Trained with fine-tuning on DSSP labels. |

| Solubility Prediction | AUROC (↑) | 0.86 | 0.89 | 0.91 | 0.84 | Binary classification task on experimental solubility data. |

| Fluorescence (Log Intensity) | Spearman's ρ (↑) | 0.73 | 0.79 | 0.82 | 0.68 | Prediction of protein fluorescence from sequence. |

| Inference Speed | Seq/sec (CPU, bs=1) (↑) | 12 | 10 | 3 | 8 | Approximate relative speed on a single Intel Xeon core, 1024 seq length. |

| Memory Footprint | Model Size (GB) (↓) | 2.4 | 2.4 | 11.5 | 1.7 | Disk storage for full precision (FP32) weights. |
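The memory-footprint row can be sanity-checked with simple arithmetic, since FP32 stores each parameter in 4 bytes. The sketch below uses the nominal parameter counts from the table; the helper name `weight_size_gib` is illustrative, and real checkpoints will differ slightly because the counts are rounded and files carry extra metadata.

```python
# Approximate size of model weights from the parameter count alone.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2}

def weight_size_gib(num_params: float, dtype: str = "fp32") -> float:
    """Return the weight size in GiB (2**30 bytes) for a given precision."""
    return num_params * BYTES_PER_PARAM[dtype] / 1024**3

# Nominal parameter counts taken from Table 1.
for name, n_params in [("ESM1b / ESM2 650M", 650e6),
                       ("ESM2 3B", 3e9),
                       ("ProtBERT 420M", 420e6)]:
    fp32 = weight_size_gib(n_params, "fp32")
    fp16 = weight_size_gib(n_params, "fp16")
    print(f"{name}: {fp32:.1f} GiB (fp32), {fp16:.1f} GiB (fp16)")
```

Halving the precision halves the weight footprint, which is often the simplest lever when a model does not fit on the available GPU.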

Decision Framework & Selection Protocol

The selection process follows a structured decision tree based on primary research objectives and constraints.

Title: pLM Selection Decision Tree

Framework Logic

- Structural Biology Priority: For tasks where evolutionary covariation and 3D structure are paramount (e.g., contact prediction, fold classification), the ESM2 family is superior. Choose the largest feasible parameter size.

- Resource-Constrained Environments: For labs with limited GPU memory or a need for high-throughput inference, ESM2-650M offers the best performance-to-efficiency ratio. ProtBERT is also a viable, lighter alternative for some non-structural tasks.

- Function & Variant Analysis: For tasks like function annotation, solubility, or stability prediction, all models are competitive. ProtBERT can be a strong, efficient baseline. For variant effect prediction, fine-tuned ESM1v/ESM2 models are often preferred.

- Baseline & Reproducibility: When comparing against established benchmarks, ESM1b remains a critical baseline for reproducibility of earlier studies.
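The framework logic above can be written down as a small function, which makes the priorities explicit. This is only a sketch: the function name `select_plm`, the task labels, and the 8 GB cut-off are hypothetical, while the 16 GB threshold for 3B models follows the toolkit table later in this section.

```python
STRUCTURAL_TASKS = {"contact_prediction", "fold_classification"}

def select_plm(task: str, gpu_mem_gb: float, reproduce_prior_work: bool = False) -> str:
    """Suggest a model following the four Framework Logic bullets."""
    if reproduce_prior_work:
        # Baseline & Reproducibility: match earlier studies.
        return "ESM1b"
    if task in STRUCTURAL_TASKS:
        # Structural Biology Priority: largest feasible ESM2 size.
        return "ESM2-3B" if gpu_mem_gb >= 16 else "ESM2-650M"
    if gpu_mem_gb < 8:  # hypothetical threshold for a tightly constrained GPU
        # Resource-Constrained: lighter alternative for non-structural tasks.
        return "ProtBERT"
    # Function & Variant Analysis: ESM2-650M as the default.
    return "ESM2-650M"

print(select_plm("contact_prediction", gpu_mem_gb=24))
print(select_plm("solubility", gpu_mem_gb=4))
```

Treat the output as a starting point for the controlled evaluations described next, not as a substitute for them.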

Experimental Protocols for Model Evaluation

To apply the framework, researchers should conduct controlled evaluations.

Protocol: Benchmarking pLMs on a Custom Dataset

Objective: Systematically compare embedding utility from ESM1b, ESM2, and ProtBERT for a downstream prediction task (e.g., enzyme classification).

Workflow:

- Data Preparation: Curate a balanced dataset of protein sequences and labels. Split into train/validation/test sets (e.g., 70/15/15).

- Embedding Generation: Extract per-residue or per-sequence embeddings from each model's final layer (or specified optimal layer).

- Downstream Model Training: Train identical, simple classifiers (e.g., logistic regression, 2-layer MLP) on each set of frozen embeddings.

- Evaluation: Compare classifiers on the held-out test set using task-specific metrics (AUROC, Accuracy, F1).
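For the data-preparation step, a fixed, stratified split keeps class balance identical across the three models being compared. The following is a minimal standard-library sketch; `stratified_split` is an illustrative helper, not part of any of the packages named here, and it assumes one label per sequence.

```python
import random
from collections import defaultdict

def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Return (train, val, test) index lists with per-class proportions preserved."""
    rng = random.Random(seed)  # fixed seed so every model sees the same split
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test

labels = ["hydrolase"] * 20 + ["kinase"] * 20
train_idx, val_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(val_idx), len(test_idx))
```

A random split like this does not control for sequence homology between train and test sets; for protein data, clustering by sequence identity before splitting is usually the safer choice.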

Title: pLM Benchmarking Workflow

Protocol: Fine-tuning for a Specific Prediction Task

Objective: Maximize performance on a well-defined task (e.g., binding affinity prediction) by fine-tuning the pLM.

- Model Setup: Initialize with pre-trained weights of ESM2-650M (recommended starting point).

- Task Head Addition: Append a regression or classification head suitable for the task.

- Progressive Unfreezing: Initially train only the task head, then unfreeze and train upper transformer layers progressively to avoid catastrophic forgetting.

- Hyperparameter Tuning: Use the validation set to tune learning rate (typically very low, e.g., 1e-5), batch size, and dropout.
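Progressive unfreezing is easiest to reason about as a schedule that maps an epoch to the set of trainable layers. The sketch below is framework-agnostic; the function name and the default values for `warmup_epochs` and `layers_per_epoch` are assumptions chosen for illustration, and 33 is the layer count of the 650M ESM checkpoints.

```python
def trainable_layers(epoch, num_layers=33, warmup_epochs=2, layers_per_epoch=4):
    """Indices of transformer layers to unfreeze at `epoch`.

    The task head is assumed to be trainable throughout. During the warm-up
    epochs no transformer layer is trained; afterwards, layers are unfrozen
    from the top of the stack downwards.
    """
    if epoch < warmup_epochs:
        return []
    n_unfrozen = min(num_layers, (epoch - warmup_epochs + 1) * layers_per_epoch)
    return list(range(num_layers - n_unfrozen, num_layers))

for epoch in range(5):
    print(epoch, trainable_layers(epoch))
```

In a PyTorch training loop, the returned indices would typically be used to set `requires_grad` on the corresponding layers at the start of each epoch.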

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for pLM-Based Research

| Item/Category | Specific Example(s) | Function & Application |

|---|---|---|

| Model Hub | Hugging Face transformers, BioLM.ai, FairScale (for ESM) | Provides pre-trained model weights, loading scripts, and inference interfaces. Essential for reproducibility. |

| Embedding Extraction | esm Python package, transformers library, bio-embeddings pipeline | Tools to reliably extract residue-level or sequence-level embeddings from models in standardized formats. |

| Downstream Training | PyTorch Lightning, scikit-learn, TensorFlow/Keras | Frameworks for building and training simple or complex downstream prediction models on top of frozen embeddings. |

| Structure Visualization | PyMOL, ChimeraX, matplotlib for contact maps | Critical for interpreting model outputs (e.g., contact maps) and relating predictions to 3D structure. |

| Benchmark Datasets | ProteinNet, DeepFri evaluation sets, FLIP benchmark, Variant effect datasets | Standardized datasets for fair model comparison and establishing baselines. |

| Compute Infrastructure | NVIDIA GPUs (≥16GB VRAM for 3B models), Google Colab Pro, AWS/Azure instances | Necessary hardware/cloud resources for fine-tuning large models or running high-throughput embedding generation. |

No single protein language model is optimal for all tasks. ESM2 generally provides state-of-the-art performance, particularly for structure-aware applications, with its 650M parameter version representing the best default choice for most labs. ProtBERT serves as a computationally efficient alternative for sequence-function tasks. ESM1b remains vital for benchmarking. By applying the structured decision framework and experimental protocols outlined, researchers can make informed, task-specific model selections, thereby maximizing the impact and efficiency of their computational biology research.

Embedding Extraction Protocols for Downstream Analysis (Function Prediction, Clustering)

This technical guide details protocols for extracting protein sequence embeddings from state-of-the-art language models—ESM2, ESM1b, and ProtBERT—and their application in downstream functional analysis. Embeddings, high-dimensional numerical representations of protein sequences, have become fundamental for tasks like protein function prediction, unsupervised clustering, and structure annotation. This guide is framed within a comparative overview of these three prominent models for researcher-led investigations in computational biology and drug development.

A comparative summary of key model architectures and training specifications.

Table 1: Core Model Specifications and Performance Benchmarks

| Feature | ESM1b (2021) | ESM2 (2022) | ProtBERT (2021) |

|---|---|---|---|

| Architecture | Transformer (Attention) | Evolved Transformer (ESM-2) | BERT (Bidirectional Encoder) |

| Parameters | 650M | 8M to 15B variants | 420M |

| Training Data | UniRef50 (29M sequences) | UniRef50 (29M sequences) + high-quality structural clusters | BFD-100 (2.1B sequences) |

| Context Length | 1024 tokens | 1024 tokens (training crop length, all sizes) | 512 tokens |

| Primary Output | Per-residue embeddings (Layer 33) | Per-residue & pooled sequence embeddings | Per-residue embeddings (Layer 30 for base) |

| Key Benchmark (Remote Homology Detection - Fold Classification) | ~0.80 ROC-AUC | ~0.85 ROC-AUC (ESM2-650M) | ~0.75 ROC-AUC |

| Computational Demand (Relative Inference Time) | 1.0x (Baseline) | 0.9x - 2.5x (varies by size) | 1.3x |

Core Embedding Extraction Protocols

Environment Setup & Dependencies

Research Reagent Solutions:

| Item / Tool | Function | Source / Package |

|---|---|---|

| PyTorch / TensorFlow | Deep learning framework for model loading and inference. | torch, tensorflow |

| Hugging Face transformers | API for loading ProtBERT and associated tokenizers. | transformers |

| ESM Model Library (fair-esm) | Official repository and Python package for ESM1b and ESM2 models. | fair-esm |

| Biopython | Handling and parsing FASTA sequence files. | biopython |

| NumPy / SciPy | Numerical operations and storage of embedding matrices. | numpy, scipy |

| Clustal Omega / MAFFT | (Optional) For generating multiple sequence alignments (MSA) if required by specific protocols. | External tools |

| CUDA-capable GPU | Accelerates inference for large models and sequence batches. | Hardware (NVIDIA) |

Detailed Extraction Workflow

The following diagram outlines the universal workflow for embedding extraction across models.

Figure 1: Universal Embedding Extraction Workflow (6 steps)

Protocol 1: Per-Residue Embedding Extraction (ESM1b/ESM2)

- Installation: pip install fair-esm

- Load Model and Alphabet:

- Prepare Sequence Data:

- Inference and Extraction:
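The four steps above can be sketched with the fair-esm package roughly as follows. The function names are illustrative; the heavy imports sit inside the extraction function so that the small indexing helper can be used and tested without PyTorch installed. The sketch assumes the ESM2 650M checkpoint and CPU inference. For ESM1b, swap in `esm.pretrained.esm1b_t33_650M_UR50S()` and keep sequences within its 1024-token limit.

```python
def residue_slice(seq_len):
    """Token positions of the real residues.

    The batch converter puts <cls> at index 0, so residues occupy
    1..seq_len; <eos> and any padding follow.
    """
    return 1, seq_len + 1

def extract_esm_embeddings(sequences, layer=33):
    """sequences: list of (name, sequence) tuples.

    Returns {name: (per_residue, mean_pooled)} as torch tensors.
    """
    import torch  # imported lazily; requires `pip install torch fair-esm`
    import esm

    # Load model and alphabet (downloads the weights on first use).
    model, alphabet = esm.pretrained.esm2_t33_650M_UR50D()
    model.eval()

    # Prepare sequence data: tokenize and pad into one batch.
    batch_converter = alphabet.get_batch_converter()
    _, _, tokens = batch_converter(sequences)

    # Inference: request hidden states from the chosen layer only.
    with torch.no_grad():
        out = model(tokens, repr_layers=[layer], return_contacts=False)
    reps = out["representations"][layer]

    results = {}
    for i, (name, seq) in enumerate(sequences):
        start, stop = residue_slice(len(seq))
        per_residue = reps[i, start:stop]      # shape: (len(seq), hidden_dim)
        results[name] = (per_residue, per_residue.mean(0))
    return results
```

A call such as `extract_esm_embeddings([("protein1", "MKTAYIAKQR")])` returns one per-residue matrix and one mean-pooled vector per input. Slicing by sequence length before pooling matters in batches, because padded positions would otherwise dilute the average.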

Protocol 2: Sequence-Level (Pooled) Embedding Extraction

- Mean Pooling: Average per-residue embeddings across the sequence length.

- [CLS] Token (ProtBERT): Use the embedding of the special classification token.
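Both pooling options can be illustrated for ProtBERT using the Hugging Face transformers library. This is a sketch under assumptions: the helper names are illustrative, the checkpoint is `Rostlab/prot_bert`, and the input preparation (space-separated residues, rare amino acids mapped to X) follows the usage notes published with the model. The imports are deferred so the input-preparation helper works on its own.

```python
import re

def prepare_protbert_input(seq: str) -> str:
    """Map rare amino acids (U, Z, O, B) to X and separate residues with spaces."""
    seq = re.sub(r"[UZOB]", "X", seq.upper())
    return " ".join(seq)

def protbert_embeddings(seq: str, model_name: str = "Rostlab/prot_bert"):
    """Return (cls_embedding, mean_pooled_embedding) for one sequence."""
    import torch  # requires `pip install torch transformers`
    from transformers import BertModel, BertTokenizer

    tokenizer = BertTokenizer.from_pretrained(model_name, do_lower_case=False)
    model = BertModel.from_pretrained(model_name).eval()

    inputs = tokenizer(prepare_protbert_input(seq), return_tensors="pt")
    with torch.no_grad():
        hidden = model(**inputs).last_hidden_state[0]  # (len(seq) + 2, hidden_dim)

    cls_embedding = hidden[0]               # [CLS] token
    mean_embedding = hidden[1:-1].mean(0)   # residues only, excluding [CLS] and [SEP]
    return cls_embedding, mean_embedding

print(prepare_protbert_input("MKUZAL"))
```

Mean pooling and the [CLS] vector can behave quite differently on downstream tasks, so it is worth evaluating both rather than assuming one is better.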

Protocol 3: Embedding Extraction with Masked Inference (for Attention Analysis)

Used to probe model understanding of specific residues.

Downstream Analysis Protocols

Function Prediction (Supervised Learning)

Experimental Protocol:

- Dataset: Split annotated protein dataset (e.g., from UniProt) into training/validation/test sets.

- Feature Generation: Extract sequence-level pooled embeddings for all proteins using a chosen model.

- Classifier Training: Train a shallow multilayer perceptron (MLP) or logistic regression model on training embeddings to predict Gene Ontology (GO) terms or Enzyme Commission (EC) numbers.

- Evaluation: Measure precision, recall, and F1-score on the held-out test set. Compare performance across embeddings from different models.

Table 2: Example Function Prediction Performance (Macro F1-Score)

| Model Embedding Source | GO Molecular Function | GO Biological Process | EC Number |

|---|---|---|---|

| ESM1b (mean pooled) | 0.62 | 0.55 | 0.78 |

| ESM2-650M (mean pooled) | 0.66 | 0.58 | 0.81 |

| ProtBERT ([CLS] token) | 0.59 | 0.52 | 0.75 |

| Traditional Features (e.g., ProtVec) | 0.48 | 0.41 | 0.65 |

Unsupervised Clustering and Diversity Analysis

Experimental Protocol:

- Embedding Dimensionality Reduction: Apply PCA or UMAP to reduce embeddings (e.g., 1280-dim) to 2-50 dimensions for visualization and clustering efficiency.

- Clustering: Apply k-means, DBSCAN, or hierarchical clustering on reduced embeddings.

- Validation: Use known protein families (e.g., Pfam) as ground truth. Compute metrics like Adjusted Rand Index (ARI) or Normalized Mutual Information (NMI).

- Analysis: Visualize clusters in 2D/3D to assess separation of functional or structural families.
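For the validation step, the Adjusted Rand Index can be computed directly from the contingency counts between known families and predicted clusters. Below is a small standard-library sketch of the usual pair-counting formula; in practice `sklearn.metrics.adjusted_rand_score` does the same job. The toy labels are invented for illustration.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(true_labels, pred_labels):
    """Adjusted Rand Index between two labelings of the same items (needs >= 2 items)."""
    n = len(true_labels)
    # Pairs of items that share both the true label and the predicted cluster.
    sum_cells = sum(comb(c, 2) for c in Counter(zip(true_labels, pred_labels)).values())
    sum_true = sum(comb(c, 2) for c in Counter(true_labels).values())
    sum_pred = sum(comb(c, 2) for c in Counter(pred_labels).values())

    expected = sum_true * sum_pred / comb(n, 2)   # agreement expected by chance
    max_index = (sum_true + sum_pred) / 2
    if max_index == expected:                     # degenerate case: trivial labelings
        return 1.0
    return (sum_cells - expected) / (max_index - expected)

pfam = ["PF00069", "PF00069", "PF00076", "PF00076"]   # toy ground truth
clusters = [1, 1, 0, 0]                               # toy cluster assignments
print(adjusted_rand_index(pfam, clusters))
```

ARI is invariant to how clusters are numbered, which is why the swapped cluster IDs in the toy example still count as a perfect match.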

Figure 2: Clustering & Diversity Analysis Pipeline

Advanced Protocol: Embedding Integration for Structure-Function Mapping

This protocol correlates sequence embeddings with structural properties.

Figure 3: Sequence Embedding & Structural Property Correlation

Methodology:

- Extract per-residue embeddings for a protein with a known structure (e.g., from PDB).

- Calculate a pairwise distance matrix from the structure's Cα atoms.

- Calculate a pairwise "attention" or "similarity" matrix from the per-residue embeddings (e.g., using cosine similarity).

- Compute the linear correlation (Pearson's r) between the off-diagonal elements of the distance matrix and the embedding similarity matrix. Higher inverse correlation suggests embeddings capture spatial proximity.
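The methodology above reduces to two matrices and one correlation. The sketch below uses only the standard library and toy inputs: four Cα positions on a line and 2-D "embeddings" whose similarity decays with separation. Real inputs would be PDB coordinates and 1280-dimensional per-residue vectors, and all function names here are illustrative.

```python
import math
from itertools import combinations

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def pearson(xs, ys):
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

def distance_similarity_correlation(ca_coords, embeddings):
    """Pearson's r between C-alpha distances and embedding cosine similarities,
    taken over the off-diagonal (i < j) residue pairs."""
    dists, sims = [], []
    for i, j in combinations(range(len(ca_coords)), 2):
        dists.append(math.dist(ca_coords[i], ca_coords[j]))
        sims.append(cosine(embeddings[i], embeddings[j]))
    return pearson(dists, sims)

# Toy data: residues spaced 1 unit apart; embeddings rotate by 0.3 rad per residue.
coords = [(float(i), 0.0, 0.0) for i in range(4)]
embeds = [(math.cos(0.3 * i), math.sin(0.3 * i)) for i in range(4)]
r = distance_similarity_correlation(coords, embeds)
print(f"Pearson r = {r:.3f}")
```

A strongly negative r on real data would indicate that residues close in space have similar embeddings. Because the relationship is rarely linear, Spearman's rank correlation is a reasonable alternative.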

Implementing Zero-Shot and Few-Shot Learning for Novel Protein Discovery

This whitepaper presents a technical guide for implementing zero-shot and few-shot learning (ZSL/FSL) techniques to accelerate the discovery of novel proteins with desired functions. The approach is framed within a critical evaluation of three leading protein language models (pLMs): ESM2, ESM1b, and ProtBERT. The core thesis posits that while all three models provide powerful foundational representations, their architectural differences lead to varying performance in ZSL/FSL scenarios for functional annotation, stability prediction, and de novo design. ESM2's larger scale and refined attention mechanisms may offer superior generalization with minimal examples, whereas ProtBERT's bidirectional context and ESM1b's efficiency present distinct trade-offs for specific discovery pipelines.

Protein Language Models are transformer-based neural networks trained on millions of diverse protein sequences, learning evolutionary, structural, and functional patterns in a self-supervised manner.

Model Architectures & Training Data

- ESM1b (Evolutionary Scale Modeling-1b): A 650M parameter transformer model trained on UniRef50 (2018_03 release, ~27M sequences). It uses a BERT-style masked language modeling objective, predicting randomly masked amino acids in sequences.

- ProtBERT: A 420M parameter model based on the BERT architecture, trained on BFD (Big Fantastic Database) and UniRef100. It similarly employs a masked language modeling objective but on a different, broad dataset.

- ESM2: The successor to ESM1b, featuring a more modern transformer architecture (e.g., Rotary Position Embeddings). It is scaled from 8M to 15B parameters. The largest versions (ESM2 3B/15B) are trained on a vast, unified dataset of >60M unique sequences from UniRef90.

Quantitative Comparison of Pre-trained Models

Table 1: Core Specifications of Evaluated Protein Language Models

| Model | Parameters | Training Data | Context Window | Key Architectural Feature | Release Year |

|---|---|---|---|---|---|

| ESM1b | 650 million | UniRef50 (~27M seq) | 1024 tokens | Standard Transformer, MLM | 2021 |

| ProtBERT | 420 million | BFD + UniRef100 | 512 tokens | BERT-base, MLM | 2021 |

| ESM2 (3B) | 3 billion | Unified UR90/DMS (60M+ seq) | 1024 tokens | Rotary Position Embeddings | 2022 |

| ESM2 (15B) | 15 billion | Unified UR90/DMS (60M+ seq) | 1024 tokens | Rotary Position Embeddings, deeper layers | 2022 |

Zero-Shot and Few-Shot Learning Frameworks for Proteins

ZSL infers tasks unseen during training, while FSL adapts to new tasks with a small number of labeled examples (e.g., 1-100).

Zero-Shot Learning Protocols

1. Embedding Similarity Search:

- Method: Embed query protein sequences using a pLM. For a target function, create a "prototype" embedding by averaging embeddings of known proteins with that function (from a separate reference set). Rank novel queries by cosine similarity to the prototype.

- Protocol:

a. Extract per-residue embeddings from the final layer of the pLM (e.g., ESM2.forward(...).last_hidden_state).

b. Generate a per-sequence representation via mean pooling over the sequence length.

c. Compute the centroid (C_f) for functional class f: C_f = mean(Embed(seq_i) for all seq_i in reference_set_f).

d. For a novel sequence s_novel, score = cosine_similarity(Embed(s_novel), C_f).

2. Natural Language as a Supervisor:

- Method: Leverage pLMs fine-tuned on protein-text pairs (e.g., ESM-2 with a text head). Pose a functional classification as a textual prompt (e.g., "This protein is a [MASK] enzyme.").

- Protocol:

a. Use a model trained jointly on protein sequences and text, such as a jointly trained sequence-text encoder (a sequence-only generator such as ProtGPT2 does not accept text prompts).

b. Format prompts: "[CLS] The enzyme class of {protein_sequence} is [MASK].[SEP]".

c. The model predicts tokens for [MASK], which are mapped to functional labels (e.g., "kinase", "hydrolase").

Diagram Title: Zero-Shot Learning Workflows for Protein Function Prediction
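The embedding similarity search (method 1 above) needs nothing beyond a mean and a cosine similarity once embeddings are available. The following sketch operates on plain lists of floats and assumes the per-sequence embeddings have already been extracted and mean-pooled. The toy 3-D vectors and the names `reference_set`, `queries`, and `rank_by_prototype` are purely illustrative.

```python
import math

def mean_vector(vectors):
    """Centroid C_f of a list of equal-length embedding vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]

def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def rank_by_prototype(reference_embeddings, query_embeddings):
    """Rank query sequences by cosine similarity to the class prototype."""
    prototype = mean_vector(reference_embeddings)
    scores = {name: cosine_similarity(vec, prototype)
              for name, vec in query_embeddings.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Toy example: two reference proteins of the target class, two novel queries.
reference_set = [[1.0, 0.0, 0.0], [0.8, 0.2, 0.0]]
queries = {"novel_A": [1.0, 0.1, 0.0], "novel_B": [0.0, 1.0, 0.0]}
ranking = rank_by_prototype(reference_set, queries)
print(ranking)
```

With several functional classes, the same scoring is repeated per class prototype and each query is assigned to the class with the highest similarity.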

Few-Shot Learning Protocols

1. Embedding-Based Logistic Regression / SVM:

- Method: Use frozen pLM embeddings as fixed feature vectors for training a shallow classifier on k examples per class.

- Protocol: a. Embed all sequences (support/query sets) using the pLM. b. Train a logistic regression classifier with L2 regularization on the support set embeddings. c. Evaluate accuracy on the query set.

2. Prototypical Networks:

- Method: A metric-based meta-learning approach where classification is performed by distance to class prototypes in the embedding space.

- Protocol:

a. In each training episode, sample N classes with K support examples each (N-way K-shot).

b. Embed all sequences. Compute prototype for class n: p_n = (1/K) * sum(Embed(s_k)).

c. For a query embedding q, compute distances d(q, p_n) (e.g., Euclidean). Produce a softmax over negative distances.

d. Loss is negative log-probability of the true class. The model learns an embedding space where classes form compact clusters.

3. Soft Prompt Tuning:

- Method: Keep the pLM backbone frozen but prepend a set of tunable, continuous "soft prompt" vectors to the input sequence embeddings. Only these prompts are updated during training on the few-shot task.

- Protocol:

a. Define p (e.g., 20) tunable vectors P_1, ..., P_p of the same dimension as the pLM's embedding layer.

b. For an input sequence with embeddings E_seq, construct the model input: [P_1, P_2, ..., P_p, E_seq].

c. Feed forward through the frozen pLM and obtain the representation for a [MASK] token or CLS token.

d. Project to the output layer (e.g., enzyme class). Train only the parameters of P and the output layer.

Diagram Title: Few-Shot Learning Training Cycle for pLMs
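The prototypical-network computation described in method 2 (prototype, distance, softmax, loss) fits in a few lines once embeddings are given. This standard-library sketch covers a single 2-way 2-shot episode with toy 2-D embeddings and uses Euclidean distance as in the protocol above. The function names are illustrative, and no parameters are learned here; in training, the loss would be backpropagated through the embedding model.

```python
import math

def class_prototypes(support):
    """support: {label: [embedding, ...]} -> {label: mean embedding p_n}."""
    return {label: [sum(col) / len(vectors) for col in zip(*vectors)]
            for label, vectors in support.items()}

def log_probabilities(query, protos):
    """Log-softmax over negative Euclidean distances to each prototype."""
    neg_dist = {label: -math.dist(query, p) for label, p in protos.items()}
    top = max(neg_dist.values())  # subtract the max for numerical stability
    log_z = top + math.log(sum(math.exp(v - top) for v in neg_dist.values()))
    return {label: v - log_z for label, v in neg_dist.items()}

def episode_loss(queries, protos):
    """Mean negative log-probability of the true class over (embedding, label) pairs."""
    return sum(-log_probabilities(q, protos)[y] for q, y in queries) / len(queries)

support = {"kinase": [[1.0, 0.0], [0.8, 0.2]],
           "hydrolase": [[0.0, 1.0], [0.2, 0.8]]}
protos = class_prototypes(support)
query_set = [([0.9, 0.1], "kinase"), ([0.1, 0.9], "hydrolase")]
loss = episode_loss(query_set, protos)
print(f"episode loss = {loss:.4f}")
```

A loss below log(2) on this two-class episode means the queries sit closer to their own prototype than to the other one, which is the property training is meant to reinforce.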

Comparative Experimental Results

Recent benchmarks on tasks like enzyme commission (EC) number prediction, subcellular localization, and fluorescence protein engineering highlight model performance.

Table 2: Few-Shot Performance (Accuracy %) on Enzyme Commission (EC) Prediction (5-way, 10-shot)

| Model | Embedding + LR | Prototypical Net | Soft Prompt Tuning | Key Advantage |

|---|---|---|---|---|

| ESM1b (650M) | 68.2 ± 3.1 | 72.5 ± 2.8 | 71.0 ± 3.5 | Fast inference, solid baseline |

| ProtBERT (420M) | 65.8 ± 3.5 | 70.1 ± 3.0 | 69.3 ± 3.7 | Strong on homology-poor splits |

| ESM2 (3B) | 75.4 ± 2.5 | 78.9 ± 2.2 | 77.5 ± 2.6 | Best overall generalization |

| ESM2 (15B) | 76.1 ± 2.4 | 79.5 ± 2.1 | 78.8 ± 2.4 | Top performance, high resource cost |

Table 3: Zero-Shot Embedding Similarity for Remote Homology Detection (Mean ROC-AUC)

| Model | Fold Recognition (SCOP) | Superfamily Recognition | Family Recognition |

|---|---|---|---|

| ESM1b | 0.81 | 0.75 | 0.88 |

| ProtBERT | 0.79 | 0.73 | 0.86 |

| ESM2 (3B) | 0.86 | 0.80 | 0.92 |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools & Resources for Implementing pLM-Based Discovery

| Item / Resource | Function / Purpose | Example / Provider |

|---|---|---|

| Pre-trained Models | Foundational embeddings for sequences. | ESM2/ESM1b (Facebook AI), ProtBERT (HuggingFace) |

| Embedding Extraction Code | Scripts to generate sequence representations from pLMs. | bio-embeddings Python package, ESM repository. |

| Few-Shot Learning Library | Frameworks for prototyping meta-learning models. | learn2learn, torchmeta, scikit-learn for simple classifiers. |

| Protein Dataset Benchmarks | Standardized datasets for evaluating ZSL/FSL. | Tasks from TAPE, ProteinGym (DMS), DeepFri. |

| Hardware with GPU/TPU | Accelerated computing for large model inference/training. | NVIDIA A100/A6000 GPUs, Google Cloud TPU v4. |

| Sequence Search Database | For constructing reference sets and prototypes. | UniProt Knowledgebase, Pfam, MEROPS. |

| Functional Annotation DBs | Ground truth for training and evaluation. | Gene Ontology (GO), Enzyme Commission (EC), CATH. |

This whitepaper provides a technical guide for integrating two dominant protein structure prediction tools—ESMFold and AlphaFold2—into a unified research workflow. This discussion is framed within a broader thesis comparing protein language models (pLMs), specifically ESM2 (the evolutionary scale model), its predecessor ESM1b, and ProtBERT. This thesis posits that while ProtBERT excels at capturing nuanced semantic relationships in protein sequences for functional annotation, the ESM family, particularly ESM2, demonstrates superior performance in learning evolutionary-scale patterns directly relevant to 3D structural folding. The integration of ESMFold (built on ESM2) and AlphaFold2 leverages the complementary strengths of pLM embeddings and homologous sequence co-evolution analysis, offering researchers a powerful, tiered approach to structure prediction.

Core Architecture & Performance Comparison

Recent benchmarking studies (e.g., on CASP15 and PDB-derived datasets) report the following key quantitative distinctions.

Table 1: Foundational Model Comparison for Structure Prediction

| Model | Core Architecture | Training Data | Key Strength for Structure | Typical TM-Score (vs. PDB) | Inference Speed |

|---|---|---|---|---|---|

| ESM2 (15B) | Transformer (Attention) | UniRef50 (67M seqs) | Single-sequence prediction, speed | 0.75 - 0.85 (varies by protein) | Very Fast (seconds) |

| ESM1b (650M) | Transformer | UniRef50 | General sequence representations | 0.65 - 0.75 | Fast |

| ProtBERT | BERT Transformer | UniRef100 | Functional semantics, masking | Not directly applicable | N/A |

| AlphaFold2 | Evoformer + Structure Module | BFD, MGnify, PDB (MSAs) | MSA-based, high accuracy | 0.85 - 0.95 | Slow (hours) |

Performance Metrics on Benchmark Sets

Table 2: Comparative Performance on Common Benchmarks (e.g., PDB100)

| Metric | AlphaFold2 | ESMFold | Notes |

|---|---|---|---|

| Average pLDDT | 92.4 | 85.7 | Higher is better (confidence) |

| TM-Score > 0.7 | 94% | 78% | Fold-level accuracy |

| RMSD (Å) | 1.2 | 2.8 | Lower is better (atomic accuracy) |

| GPU Hours per Prediction | 3-10 | 0.1-0.5 | Varies with sequence length & MSA depth |

Integrated Workflow Protocol

This protocol outlines a decision tree for leveraging both tools efficiently.

Experimental Protocol 1: Tiered Structure Prediction Pipeline

Objective: To obtain high-accuracy protein structures by strategically employing ESMFold for rapid screening and AlphaFold2 for refined, high-confidence predictions.

Materials & Software:

- Input: Protein sequence(s) in FASTA format.

- Hardware: GPU-enabled system (NVIDIA A100/T4 or equivalent).

- Software: Local installations or API access to ColabFold (integrates AF2), ESMFold (via Hugging Face, PyTorch), and necessary dependencies (Docker, Python 3.9+).

- Databases: (For AF2) Local copies or API access to MSA databases (UniRef90, BFD, MGnify).

Procedure:

- Sequence Pre-processing: Validate FASTA. Check PDB for existing experimental structures.

- Rapid Initial Fold Assessment with ESMFold:

- Run ESM2 (ESMFold) on the single sequence.

- Record pLDDT confidence score and predicted TM-score.

- Decision Point: If global pLDDT > 85, the ESMFold model may be sufficient for preliminary analysis. Proceed to step 4a.

- High-Accuracy Refinement with AlphaFold2:

- If high confidence is required or ESMFold pLDDT < 85, initiate AlphaFold2/ColabFold.

- Generate Multiple Sequence Alignments (MSAs) using MMseqs2 against specified databases.

- Execute the full AlphaFold2 prediction (5 models, ranked by pLDDT).

- Use the Amber relaxation procedure on the top-ranked model.

- Model Validation & Selection:

- a) For ESMFold output: Use predicted aligned error (PAE) plots to assess domain confidence.

- b) For AlphaFold2 output: Analyze pLDDT per residue and inter-domain PAE. Select the model with the highest confidence and lowest predicted violation scores.

- Integration Analysis: Superimpose high-confidence domains from both predictions using UCSF ChimeraX to identify consensus regions and divergent loops.
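The decision point in this procedure can be expressed as a small routing function. The sketch below takes per-residue pLDDT values from an ESMFold run and applies the threshold of 85 used above. The function name, the return format, and the choice to send a score of exactly 85 to AlphaFold2 are assumptions, since the protocol leaves the boundary case open.

```python
from statistics import mean

def choose_route(per_residue_plddt, threshold=85.0, require_high_confidence=False):
    """Decide whether the ESMFold model suffices or AlphaFold2 should be run.

    Returns (route, global_plddt), where route is "esmfold" or "alphafold2".
    """
    global_plddt = mean(per_residue_plddt)
    if require_high_confidence or global_plddt <= threshold:
        return "alphafold2", global_plddt
    return "esmfold", global_plddt

# Toy per-residue confidence values for two hypothetical predictions.
print(choose_route([92.0, 90.5, 88.0, 91.2]))
print(choose_route([70.0, 65.5, 88.0, 79.0]))
```

A global average can hide a poorly predicted domain, so the per-residue values and PAE plots from the validation step remain worth inspecting even when the route is "esmfold".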

Experimental Protocol 2: Ab initio Protein Complex (Multimer) Prediction

Objective: Predict the structure of a protein complex from individual subunit sequences.

Procedure:

- Subunit Prediction: Use Protocol 1 for each individual subunit to generate confident monomer structures.

- Docking Preparation: Extract high-confidence domains (pLDDT > 80).

- Complex Assembly:

- Path A (AlphaFold-Multimer): Input paired sequences in desired stoichiometry. Run AlphaFold-Multimer with full MSA generation. This is computationally intensive but state-of-the-art.

- Path B (Hybrid): Use ESMFold to rapidly generate multiple conformational states for each subunit. Use these as flexible inputs for a docking software like HADDOCK or ClusPro, guided by interface predictions from pLM embeddings.

- Assessment: Evaluate complex models using interface pLDDT (from AF2) or HADDOCK score, and check physicochemical plausibility of the interface.

Visualization of Workflows

Tiered Prediction Workflow Decision Tree

Ab initio Protein Complex Prediction Pathways

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Integrated Structure Prediction

| Item / Resource | Provider / Package | Primary Function in Workflow |

|---|---|---|

| ESMFold Python API | Hugging Face transformers, fair-esm | Provides direct access to the ESM2 model for single-sequence folding and embedding extraction. |

| ColabFold | GitHub: sokrypton/ColabFold | Streamlined, cloud-accessible implementation of AlphaFold2 and AlphaFold-Multimer using MMseqs2 for MSAs. |

| AlphaFold2 Local Docker | DeepMind GitHub Repository | Full local installation for maximum control and batch processing of predictions. |

| MMseqs2 Server/CLI | MPI Bioinformatics Toolkit | Rapid, sensitive generation of multiple sequence alignments (MSAs) required for AlphaFold2. |

| PDBx/mmCIF Tools | RCSB PDB, biopython | Processing and validating input/output structural data in standard formats. |

| ChimeraX / PyMOL | UCSF, Schrödinger | Visualization, superposition, and comparative analysis of predicted 3D models. |

| HADDOCK | Bonvin Lab, Utrecht University | Biomolecular docking software for the hybrid complex prediction pathway. |

| GPU Compute Instance | (e.g., NVIDIA A100 on AWS/GCP/OCI) | Essential hardware for running both ESMFold and AlphaFold2 in a timely manner. |

Within the broader thesis comparing ESM2, ESM1b, and ProtBERT for protein language model (pLM) research, a critical application is the prediction of mutation effects. This guide provides a technical comparison of two prominent architectures derived from these families: the ESM1v ensemble and ProtBERT. These models transform protein sequences into statistical landscapes to infer the functional consequences of amino acid substitutions, a task vital for interpreting genomic variants in disease and engineering proteins.

Model Architectures and Training

ESM1v (Evolutionary Scale Modeling 1v)

ESM1v is an ensemble of five models, each a 650M parameter transformer trained on UniRef90 sequences (≈98 million proteins) using a masked language modeling (MLM) objective. The five models share the ESM1b architecture and training objective and differ in their random seeds. The key differentiator from ESM1b is the training set, which is clustered at 90% rather than 50% sequence identity and therefore retains more closely related sequences. ESM1v uses no explicit evolutionary information (multiple sequence alignments - MSAs) during inference.

ProtBERT

ProtBERT is a BERT-based model (either "ProtBERT-BFD" trained on BFD clusters or "ProtBERT-UniRef100") with ~420M parameters. It uses a standard token masking strategy during MLM pre-training. While also an MSA-free pLM, its architectural lineage from BERT implies differences in attention mechanisms and contextual embeddings compared to the ESM lineage.

Quantitative Performance Comparison

The following table summarizes key benchmark results for variant effect prediction, primarily on deep mutational scanning (DMS) assays.

Table 1: Model Performance on Variant Effect Prediction Benchmarks

| Metric / Dataset | ESM1v (Ensemble Avg.) | ProtBERT-BFD | Notes |

|---|---|---|---|

| Spearman's ρ (Overall) | 0.40 | 0.31 | Average across 39 DMS proteins (Rao et al. 2019 evaluation). |

| MAE (Scaled Fitness) | 0.21 | 0.28 | Lower Mean Absolute Error is better. |

| AUC (Pathogenic vs. Neutral) | 0.86 | 0.87 | Performance on clinical variant datasets (e.g., ClinVar). |

| Inference Speed (seq/sec) | ~120 | ~150 | Approximate, on a single V100 GPU, batch size=1. |

| Parameters per Model | 650M | 420M | ESM1v uses 5 models in ensemble. |

| MSA Dependency | None (Zero-shot) | None (Zero-shot) | Both operate on single sequences. |

Data synthesized from Meier et al., 2021 (ESM1v) and Elnaggar et al., 2021 (ProtBERT), with updated benchmarks from recent evaluations.

Core Experimental Protocol for Variant Scoring

Below is a detailed methodology for using either model to score a set of missense variants on a protein of interest.

Protocol: Zero-Shot Variant Effect Prediction

A. Input Preparation

- Wild-type Sequence: Obtain the canonical amino acid sequence (e.g., from UniProt).

- Variant List: Define list of substitutions (e.g., V12L, G204R).

- Model Tokenization:

- ESM1v: Use the ESM tokenizer. The sequence is prefixed with a <cls> token, each residue becomes one token, and an <eos> token is appended.

- ProtBERT: Use the BERT tokenizer specific to ProtBERT. It expects residues separated by spaces, with rare amino acids (U, Z, O, B) mapped to X.

B. Computational Scoring Workflow

- Model Loading: Load the pre-trained model (esm1v_t33_650M_UR90S for ESM1v, with the five ensemble checkpoints carrying the suffixes _1 through _5; Rostlab/prot_bert_bfd for ProtBERT).

- Logit Extraction:

- Pass the tokenized wild-type sequence through the model.

- Extract the logits (pre-softmax scores) from the final layer at the sequence position(s) of interest.

- Likelihood Calculation:

- Apply a softmax function to the logits for the target position to get a probability distribution over all 20 amino acids.

- Let \( P_{wt}(pos) \) be the probability assigned to the wild-type amino acid at that position.

- Let \( P_{mt}(pos) \) be the probability assigned to the mutant amino acid at that position.

- Score Computation:

- The primary score is the log-likelihood ratio (LLR): \( \mathrm{LLR} = \log\left( P_{mt}(pos) / P_{wt}(pos) \right) \).

- A positive LLR suggests the variant is more likely than the wild-type in the model's internal representation; negative suggests it is less likely. More negative scores often correlate with deleterious effects.

- ESM1v Ensemble: Repeat steps 2-4 for each of the five ESM1v models and average the LLR scores.

C. Calibration & Validation

- Baseline Correction: For a given protein, center scores by subtracting the median LLR of all possible single mutants.

- Experimental Correlation: Validate scores against experimental DMS data or known pathogenic/benign variants using Spearman's rank correlation.
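The scoring, ensembling, and calibration steps above are plain arithmetic once the logits at the mutated position are in hand. The sketch below assumes those logits have already been extracted and restricted to the 20 standard amino acids in the order given by `AMINO_ACIDS`. That ordering, the toy logit values, and all function names are illustrative; real model vocabularies contain extra tokens in a different order.

```python
import math
import re
from statistics import mean, median

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # assumed column order of the logits

def parse_variant(variant):
    """'V12L' -> ('V', 12, 'L'); position is 1-based as in the variant list."""
    match = re.fullmatch(r"([A-Z])(\d+)([A-Z])", variant)
    if match is None:
        raise ValueError(f"Unrecognized variant: {variant}")
    return match.group(1), int(match.group(2)), match.group(3)

def log_softmax(logits):
    top = max(logits)  # subtract the max for numerical stability
    log_z = top + math.log(sum(math.exp(x - top) for x in logits))
    return [x - log_z for x in logits]

def llr(position_logits, wt, mt):
    """log(P_mt / P_wt) from the 20 logits at one sequence position."""
    log_p = log_softmax(position_logits)
    return log_p[AMINO_ACIDS.index(mt)] - log_p[AMINO_ACIDS.index(wt)]

def ensemble_llr(per_model_logits, wt, mt):
    """Average the LLR over the models of an ensemble (five for ESM1v)."""
    return mean(llr(logits, wt, mt) for logits in per_model_logits)

def center_scores(scores):
    """Baseline correction: subtract the median LLR across all scored variants."""
    med = median(scores.values())
    return {variant: score - med for variant, score in scores.items()}

# Toy logits: wild-type V favoured over mutant L at this position.
wt, pos, mt = parse_variant("V12L")
logits = [0.0] * 20
logits[AMINO_ACIDS.index("V")] = 3.0
logits[AMINO_ACIDS.index("L")] = 1.0
print(llr(logits, wt, mt))
```

Because the softmax normalizer cancels in the ratio, the LLR equals the difference between the mutant and wild-type logits. With ESM tokenization, the 1-based residue position also coincides with the token index, since index 0 holds <cls>.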

Diagram Title: Zero-Shot Variant Scoring Workflow

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Research Reagents & Computational Tools

| Item | Function in Experiment | Example/Note |

|---|---|---|

| Reference Protein Sequence | Serves as the wild-type input for scoring variants. | From UniProtKB. Must be canonical, full-length. |

| Benchmark Dataset | For validating model predictions against ground truth. | Deep Mutational Scanning (DMS) data from papers or MaveDB. |

| Clinical Variant Database | For assessing pathogenic/benign classification. | ClinVar, HGMD. |

| ESM1v Model Weights | Pre-trained parameters for the five ensemble models. | Available via Facebook Research's GitHub (esm). |

| ProtBERT Model Weights | Pre-trained parameters for the BERT-based model. | Available on Hugging Face Model Hub (Rostlab/). |

| Tokenization Library | Converts amino acid strings to model input IDs. | transformers library for ProtBERT; esm for ESM. |

| GPU Compute Instance | Accelerates model inference. | Minimum 16GB VRAM recommended for large batches. |

| Post-processing Scripts | For calculating LLR, averaging ensembles, and plotting. | Custom Python/Pandas scripts. |

Conceptual Pathway of pLM-Based Prediction

The following diagram illustrates the logical flow from protein sequence to functional prediction, highlighting the divergence and convergence of the two model approaches.

Diagram Title: pLM Variant Prediction Conceptual Pathway

Discussion and Outlook

In the context of the ESM2 vs. ESM1b vs. ProtBERT thesis, ESM1v represents a refined, ensemble-based specialization of the ESM1b architecture for variant prediction, often outperforming ProtBERT in correlating with DMS data. ProtBERT remains a robust, widely accessible baseline. The emerging paradigm favors large, MSA-free pLMs like ESM1v for zero-shot prediction due to their speed and competitive accuracy. Future directions include integrating these scores with structural features (via models like ESMFold) and fine-tuning on specific variant families for therapeutic development.

Overcoming Challenges: Best Practices for Performance, Efficiency, and Data Issues

This section analyzes computational resource management for state-of-the-art protein language models, focusing on the ESM2 (15B parameter) model compared with its predecessor ESM1b (650M parameters) and the contemporaneous ProtBERT model. As part of the broader comparison of these architectures for bioinformatics and drug development, it details the memory footprint, runtime performance, and practical experimental protocols required for their effective deployment. Efficient management of GPU resources is paramount for researchers aiming to leverage these large-scale models for tasks such as structure prediction, function annotation, and variant effect prediction.

Quantitative Resource Comparison

The following tables summarize the key computational metrics for the models under discussion. These figures are based on standard inference and fine-tuning scenarios using mixed precision (FP16/BF16) on an NVIDIA A100 80GB GPU, with a batch size of 1 and a sequence length of 1024 unless otherwise specified.

Table 1: Model Architecture & Baseline Resource Requirements

| Model | Parameters | Hidden Size | Layers | Attention Heads | Estimated Disk Size (FP16) |

|---|---|---|---|---|---|

| ESM1b | 650 Million | 1280 | 33 | 20 | ~1.3 GB |

| ProtBERT | 420 Million | 1024 | 30 | 16 | ~0.84 GB |

| ESM2 (15B) | 15 Billion | 5120 | 48 | 40 | ~30 GB |
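
The FP16 disk sizes in Table 1 follow from a simple rule of thumb: parameter count times bytes per parameter. The sketch below reproduces that arithmetic for two of the rows. The helper name and the dtype table are illustrative, and the estimate covers raw weights only (no optimizer state, activations, or file-format overhead).

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1}


def weight_size_gb(num_params, dtype="fp16"):
    """Approximate size of the raw weights in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


for name, num_params in [("ESM1b", 650e6), ("ESM2 (15B)", 15e9)]:
    print(f"{name}: ~{weight_size_gb(num_params):.1f} GB in FP16")
```

The same function gives about 15 GB for ESM2 (15B) at 8-bit precision, which is why quantized loading makes the model feasible on smaller GPUs.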

Table 2: GPU Memory Consumption & Runtime (Inference)

| Model | Sequence Length | GPU Memory (Peak, Inference) | Approx. Time per Sequence (ms) | Key Memory Bottleneck |

|---|---|---|---|---|

| ESM1b | 1024 | ~4.2 GB | 120 | Activations |

| ProtBERT | 1024 | ~1.1 GB | 45 | Activations |

| ESM2 (15B) | 1024 | ~30 GB* | 850 | Model Weights |

*Note: The ~30 GB figure reflects the FP16 weights alone. ESM2 (15B) fits on an 80GB A100 for inference, but GPUs with less memory require techniques like model sharding, CPU offloading, or quantization.

Table 3: GPU Memory Consumption & Runtime (Fine-tuning)

| Model | Sequence Length | Batch Size | GPU Memory (Peak, Full Fine-tuning) | Estimated Time/Epoch (10k seqs) |

|---|---|---|---|---|

| ESM1b | 512 | 8 | ~22 GB | ~30 minutes |

| ProtBERT | 512 | 32 | ~8 GB | ~10 minutes |

| ESM2 (15B) | 512 | 1* | >80 GB | ~8 hours* |

*Note: Full fine-tuning of ESM2 (15B) is impractical on a single GPU. Parameter-Efficient Fine-Tuning (PEFT) methods like LoRA are essential.

Experimental Protocols for Resource Benchmarking

Protocol: Measuring Peak GPU Memory during Inference

Objective: Quantify the maximum GPU memory allocated for a single forward pass.

- Environment Setup: Use Python with PyTorch (v2.0+), CUDA 11.8, and the transformers library. Initialize the model in torch.float16 and move it to the GPU (cuda:0).

- Memory Profiling: Call torch.cuda.reset_peak_memory_stats() before inference. For a given input sequence (randomly generated token IDs of specified length), perform a model forward pass within torch.no_grad().

- Measurement: Record torch.cuda.max_memory_allocated() immediately after the forward pass. This value is the peak memory consumed for that specific sequence length and batch size (typically 1).

- Variation: Repeat steps 2-3 for standardized sequence lengths (e.g., 128, 256, 512, 1024). Plot memory usage vs. sequence length.
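
A minimal sketch of this protocol is shown below. The measurement function uses the PyTorch calls named in the steps and needs a CUDA-capable GPU to run. It imports PyTorch lazily so that the small sweep helper can be used, or swapped onto another measurement function, without it. Both function names are illustrative, and `model` is assumed to be any module that accepts a batch of token IDs.

```python
def peak_inference_memory_bytes(model, input_ids, device="cuda:0"):
    """Peak bytes allocated on `device` during one forward pass (needs PyTorch + CUDA)."""
    import torch  # lazy import: the sweep helper below works without PyTorch

    model = model.to(device).eval()
    input_ids = input_ids.to(device)
    torch.cuda.reset_peak_memory_stats(device)  # peak counter restarts at current usage
    with torch.no_grad():                       # no autograd buffers during inference
        model(input_ids)
    torch.cuda.synchronize(device)              # wait for queued kernels to finish
    return torch.cuda.max_memory_allocated(device)


def sweep_lengths(measure, lengths=(128, 256, 512, 1024)):
    """Call `measure(length)` for each sequence length and collect the results."""
    return {length: measure(length) for length in lengths}
```

Because the peak counter is reset after the model is already on the GPU, the reported value includes the resident weights plus the activations of the forward pass. To produce the memory-versus-length curve, `measure` would wrap `peak_inference_memory_bytes` around randomly generated token IDs of the requested length.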

Protocol: Comparative Fine-tuning with PEFT (LoRA)

Objective: Fine-tune large models (ESM2 15B) on a single GPU using Low-Rank Adaptation.

- Base Model Load: Load the pre-trained esm2_t48_15B_UR50D model in 16-bit precision (torch.float16). The bitsandbytes library can additionally quantize the weights to 8-bit or 4-bit at load time to reduce memory further.

- LoRA Configuration: Apply LoRA adapters using a library like peft. Target the query and value projection matrices in the attention layers. Typical settings: rank r=8, lora_alpha=16, lora_dropout=0.1.

- Training Setup: Freeze all base model parameters. Only the LoRA adapter parameters are trainable. Use a standard optimizer like AdamW with a low learning rate (1e-4).

- Resource Monitoring: Profile memory usage using torch.cuda.memory_summary(). Compare the memory footprint with and without LoRA applied to the ESM2 15B model.
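
To see why LoRA makes this feasible, it helps to count the trainable parameters it adds. The sketch below does that arithmetic for the configuration above, using the ESM2 (15B) hidden size and layer count from Table 1. It assumes the query and value projections are square (hidden x hidden) matrices and ignores biases; the function name is illustrative.

```python
def lora_trainable_params(hidden_size, num_layers, rank, targets_per_layer=2):
    """Parameters added by LoRA on square (hidden x hidden) projections.

    Each adapted matrix gains two low-rank factors:
    A with shape (rank, hidden) and B with shape (hidden, rank).
    """
    per_matrix = 2 * rank * hidden_size
    return per_matrix * targets_per_layer * num_layers


# ESM2 15B: hidden size 5120, 48 layers; rank 8 on the query and value projections.
added = lora_trainable_params(hidden_size=5120, num_layers=48, rank=8)
fraction = added / 15e9
print(f"{added:,} trainable parameters ({fraction:.4%} of 15B)")
```

Under these assumptions the adapters add roughly 7.9 million trainable parameters, a small fraction of one percent of the base model, so gradients and optimizer state are only needed for that subset.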

Visualizations

Title: Resource Management Decision Flow for Protein Language Models

Title: Model Memory, Speed, and Scaling Factor Comparison

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Purpose | Example/Note |

|---|---|---|

| NVIDIA A100/A40/H100 GPU | Primary accelerator for model inference and training. High VRAM capacity (40-80GB) is critical for large models like ESM2 15B. | Essential for local experimentation with ESM2. |

| bitsandbytes Library | Enables 8-bit and 4-bit quantization of models, dramatically reducing memory footprint for loading and inference. | Allows loading ESM2 15B in 8-bit on a single 40GB GPU. |

| PEFT Library (LoRA, IA3) | Implements Parameter-Efficient Fine-Tuning methods. Allows adaptation of massive models by training only a tiny subset of parameters. | Key for fine-tuning ESM2 15B on single-GPU setups. |

| FlashAttention-2 | Optimized attention algorithm providing faster runtime and reduced memory usage for longer sequences. | Integrated into newer versions of PyTorch and model libraries. |

| NVIDIA DALI or PyTorch Dataloader | Efficient data loading and preprocessing pipelines to prevent CPU bottlenecks during GPU training. | Crucial for maximizing GPU utilization during fine-tuning. |

| Weights & Biases (W&B) / TensorBoard | Experiment tracking and visualization tools to monitor GPU utilization, memory, loss, and metrics in real-time. | For optimizing resource allocation across experimental runs. |

| Hugging Face transformers & accelerate | Core libraries providing unified APIs for model loading, distributed training, and mixed-precision handling. | Simplifies multi-GPU and multi-node training setups. |

| Model Sharding (e.g., FSDP) | Fully Sharded Data Parallel (FSDP) shards model parameters, gradients, and optimizer states across GPUs. | Necessary for fine-tuning ESM2 15B across multiple GPUs. |

Handling Out-of-Distribution and Low-Homology Sequences

This whitepaper serves as a detailed technical guide within a broader comparative thesis examining ESM2 (Evolutionary Scale Modeling 2), ESM1b, and ProtBERT for protein language modeling. A critical challenge for these models in real-world research and therapeutic development is their performance on Out-of-Distribution (OOD) and Low-Homology sequences—proteins that diverge significantly from the evolutionary and functional patterns seen in training data. This document provides in-depth methodologies, data comparisons, and practical toolkits for researchers addressing this frontier.

Model Architectures and Training Data Context

The foundational differences between the three models dictate their inherent OOD robustness.

- ESM1b (2019): A transformer model trained on UniRef50 (approximately 29 million sequences). It uses a masked language modeling (MLM) objective to learn evolutionary statistics.

- ProtBERT (2021): A BERT-style model trained on BFD100 (approximately 2.1 billion sequences) and UniRef100. Its larger, more diverse corpus aims to capture broader biological insights.

- ESM2 (2022): The state-of-the-art scalable transformer, with versions from 8M to 15B parameters. The 650M model was trained on UniRef50, while the 3B and 15B models used the larger UniRef50+Clusters dataset. It introduces architectural improvements like rotary positional embeddings and a more compute-efficient pre-training approach.

Table 1: Core Model Specifications and Training Data

| Feature | ESM1b | ProtBERT | ESM2 (650M) | ESM2 (3B/15B) |

|---|---|---|---|---|

| Parameters | 650M | 420M | 650M | 3B, 15B |

| Training Data | UniRef50 (~29M seq) | BFD100 + UniRef100 (~2.1B seq) | UniRef50 | UniRef50 + Clusters |

| Context Window | 1024 | 512 | 1024 | 1024 |

| Key Innovation | Early protein LM | Large-scale corpus | Scalable transformer, RoPE | Ultralarge scale, SOTA |

Experimental Protocols for Benchmarking OOD Performance

To evaluate model robustness, researchers must design benchmarks that isolate distributional shift.

Protocol: Low-Homology Fold Classification

Objective: Assess the model's ability to infer structural class (fold) without strong evolutionary signals.

- Dataset Curation: Use the Fold Classification benchmark from the Structural Classification of Proteins (SCOPe) database (e.g., SCOPe 2.07). Filter for sequences with <20% pairwise identity to those in the model's training data (requires cross-referencing with UniRef).

- Embedding Generation: Pass each sequence through the model. Extract per-residue embeddings and compute a mean-pooled representation for the whole protein.

- Classifier Training: Train a simple logistic regression or SVM classifier on the embeddings from a subset of folds.

- OOD Testing: Evaluate the classifier's accuracy on embeddings from held-out folds not seen during classifier training. This tests generalization to novel structural motifs.
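
Two of the steps above, mean pooling and the held-out fold split, can be sketched without any model or ML library. This is an illustrative outline only: embeddings are represented as plain lists of floats, each record is assumed to be a `(fold_label, embedding)` pair, and the fold labels in the usage note are made up.

```python
def mean_pool(per_residue_embeddings):
    """Average per-residue vectors (a list of equal-length lists) into one vector."""
    length = len(per_residue_embeddings)
    dim = len(per_residue_embeddings[0])
    return [sum(row[d] for row in per_residue_embeddings) / length for d in range(dim)]


def split_by_fold(records, held_out_folds):
    """Split (fold_label, embedding) records so held-out folds never reach training."""
    train = [r for r in records if r[0] not in held_out_folds]
    test = [r for r in records if r[0] in held_out_folds]
    return train, test
```

For example, `split_by_fold(records, {"b.2"})` would place every protein labeled `b.2` in the test set. How predictions on unseen fold labels are scored (for instance by nearest-neighbor retrieval in embedding space rather than direct classification) is left open here, since the protocol does not specify it.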

Protocol: Synthetic or Engineered Sequence Saturation

Objective: Probe model understanding of sequence-function rules beyond natural variation.

- Design: Select a well-characterized protein (e.g., GFP, GB1). Use deep mutational scanning (DMS) data or generate all single/multiple point mutants within a region.

- Embedding & Regression: Compute embeddings for all variants. Use a shallow neural network to predict functional scores (e.g., fluorescence, stability) from embeddings.

- Metric: Calculate the Spearman correlation between predicted and experimental scores for high-order mutants (e.g., >3 mutations), which are inherently OOD.
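
Selecting the high-order mutants for the metric step amounts to counting substitutions relative to the wild type. The sketch below assumes substitution-only variants of the same length as the wild type, stored as `(sequence, score)` pairs; the function names and the toy sequences in the usage note are illustrative.

```python
def count_mutations(wild_type, variant):
    """Number of substituted positions between two equal-length sequences."""
    if len(wild_type) != len(variant):
        raise ValueError("substitution-only variants must match wild-type length")
    return sum(1 for a, b in zip(wild_type, variant) if a != b)


def high_order_subset(wild_type, variants, min_mutations=4):
    """Keep (sequence, score) pairs with at least `min_mutations` substitutions."""
    return [
        (seq, score)
        for seq, score in variants
        if count_mutations(wild_type, seq) >= min_mutations
    ]
```

With the default `min_mutations=4`, this keeps exactly the variants carrying more than three mutations, such as `"AATGYIGA"` against a toy wild type `"MKTAYIAK"`. The Spearman correlation is then computed on the predicted and experimental scores of that subset alone.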

Protocol: De Novo Protein Inference

Objective: Test on purely in silico generated proteins with no natural homology.

- Sequence Generation: Use protein sequence generation models (e.g., ProteinMPNN, AR-Diffusion) to produce sequences for a target fold that are filtered for low homology (<10% identity) to the natural proteome.

- Property Prediction: Use the language model embeddings as input to predictors for stability, solubility, or intended function.

- Validation: Correlate predictions with in vitro experimental results for the synthesized de novo proteins.

Table 2: Representative OOD Benchmark Results (Summarized)

| Benchmark Task | ESM1b | ProtBERT | ESM2-650M | ESM2-3B | Notes |

|---|---|---|---|---|---|

| SCOPe Fold (Low-Hom.) | 0.72 ± 0.03 | 0.68 ± 0.04 | 0.78 ± 0.02 | 0.81 ± 0.02 | Macro F1 score |

| GFP DMS (High-Order Mutants) | ρ=0.45 | ρ=0.42 | ρ=0.51 | ρ=0.58 | Spearman ρ |

| De Novo Solubility Pred. | MAE=0.31 | MAE=0.33 | MAE=0.28 | MAE=0.25 | Mean Abs Error |

Methodologies for Enhancing OOD Robustness

Embedding Space Calibration & Transformation

- Contrastive Learning Fine-Tuning: Fine-tune the model on positive (similar function) and negative (dissimilar) sequence pairs curated from OOD clusters to improve embedding discriminability.