ESM2 Model Sizes Explained: From 8M to 15B Parameters for Protein Language Modeling in Drug Discovery


Abstract

This article provides a comprehensive overview of the Evolutionary Scale Modeling (ESM) family, specifically the ESM2 protein language models, for researchers and drug development professionals. It explores the architectural foundations and scaling from 8 million to 15 billion parameters, detailing methodologies for practical application in tasks like structure prediction and function annotation. The guide addresses common deployment challenges, optimization strategies for computational constraints, and presents comparative analyses against other state-of-the-art models (e.g., AlphaFold2, ProtT5). Finally, it validates ESM2's performance across biomedical benchmarks and discusses its implications for accelerating therapeutic discovery.

What is ESM2? Understanding the Architecture and Parameter Spectrum (8M to 15B)

This whitepaper provides a technical overview of the Evolutionary Scale Modeling (ESM) protein language model family, contextualized within a broader thesis analyzing ESM2 model scales. Developed by Meta AI, ESM models apply transformer architectures learned from millions of natural protein sequences to predict structure and function, revolutionizing computational biology and therapeutic discovery.

The ESM Family: Architectural Evolution

The ESM family represents a progression in scaling and architectural refinement for protein sequence modeling.

- ESM-1v: A 650M parameter model trained on UniRef90, specializing in variant effect prediction without multiple sequence alignments (MSAs).

- ESM-1b: Introduced a RoBERTa-style training objective, improving downstream task performance over its predecessor.

- ESM-2: The current flagship, featuring a standard transformer architecture optimized for protein sequences. Its key innovation is efficient scaling to unprecedented sizes for a protein language model.

Core Architectural Specifications

All ESM models utilize a transformer encoder architecture. ESM-2 specifically employs:

- Pre-Layer Normalization: Stabilizes training for deep networks.

- GELU Activation Functions.

- Rotary Position Embeddings (RoPE): Encode residue position inside the attention computation, which matters for protein sequences where position dictates folding.

- Attention Maps optimized to capture long-range interactions essential for tertiary structure.

The ESM2 series systematically explores the effect of scale on protein representation learning. The following table summarizes the key configurations.

Table 1: ESM2 Model Family Parameters and Performance

| Model Name | Parameters | Layers | Embedding Dim. | Attention Heads | Training Tokens (Billion) | State-of-the-Art Performance (pLDDT) |

|---|---|---|---|---|---|---|

| ESM2-8M | 8 Million | 6 | 320 | 20 | ~10,000 | ~65.0 |

| ESM2-35M | 35 Million | 12 | 480 | 20 | ~10,000 | ~72.5 |

| ESM2-150M | 150 Million | 30 | 640 | 20 | ~10,000 | ~80.5 |

| ESM2-650M | 650 Million | 33 | 1280 | 20 | ~10,000 | ~84.5 |

| ESM2-3B | 3 Billion | 36 | 2560 | 40 | ~10,000 | ~86.0 |

| ESM2-15B | 15 Billion | 48 | 5120 | 40 | ~10,000 | ~87.8 |

Note: pLDDT (predicted Local Distance Difference Test) is a per-residue confidence score (0-100) for AlphaFold2 and ESMFold predictions, where higher scores indicate higher confidence. Data sourced from Meta AI publications and code repositories.
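The parameter counts in Table 1 can be roughly reproduced from the layer count and embedding dimension alone. The sketch below assumes each layer holds 4·d² attention weights (query, key, value, and output projections) and 8·d² feed-forward weights (inner dimension 4·d), and ignores biases, layer norms, and embeddings. The function name `estimate_params` is illustrative, not part of any ESM library.

```python
# Rough parameter count for a transformer encoder stack.
# Assumption: per layer, 4*d^2 attention weights (Q, K, V, output)
# plus 8*d^2 feed-forward weights (inner dimension 4*d).
# Biases, layer norms, and embedding tables are ignored.

ESM2_CONFIGS = {
    "ESM2-8M": (6, 320),
    "ESM2-35M": (12, 480),
    "ESM2-150M": (30, 640),
    "ESM2-650M": (33, 1280),
    "ESM2-3B": (36, 2560),
    "ESM2-15B": (48, 5120),
}


def estimate_params(layers: int, d_model: int) -> int:
    """Approximate weight count of `layers` transformer blocks of width `d_model`."""
    attention = 4 * d_model * d_model
    feed_forward = 8 * d_model * d_model
    return layers * (attention + feed_forward)


for name, (layers, d_model) in ESM2_CONFIGS.items():
    print(f"{name}: ~{estimate_params(layers, d_model) / 1e6:,.0f}M parameters")
```

Under these assumptions the estimate lands close to each nominal model size, which is a quick sanity check on any layer or dimension figure quoted for the family.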

Key Experimental Protocols and Methodologies

Training Protocol for ESM2

- Objective: Self-supervised learning via masked language modeling (MLM) on protein sequences.

- Dataset: UniRef50 (ESM-1) and UniRef90 (ESM-1v, ESM-2); ~65 million unique sequences.

- Masking Strategy: 15% of tokens masked; of these, 80% replaced with [MASK], 10% replaced randomly, 10% left unchanged.

- Hardware: Trained on NVIDIA A100 or V100 GPUs using the Fairseq framework.

- Optimizer: Adam with decoupled weight decay (AdamW).

- Learning Rate: Peak of 4e-4 with linear warmup and polynomial decay.

ESMFold Structure Prediction Protocol

Objective: Generate 3D atomic coordinates from a single sequence using an ESM2 backbone. Workflow:

- Sequence Embedding: Input protein sequence is passed through the frozen ESM2 transformer (e.g., ESM2-3B).

- Folding Trunk: The output token representations are fed into a folding module (structure module).

- Structure Module: A series of invariant point attention (IPA) layers, as introduced in AlphaFold2, refine a set of residue frames and predict backbone atom positions (N, Cα, C).

- Sidechain Prediction: A final network predicts sidechain rotamers (chi angles) using the backbone geometry.

- Output: Full-atom PDB file and per-residue pLDDT confidence metric.

Diagram: ESMFold Structure Prediction Workflow

Variant Effect Prediction (ESM-1v) Protocol

Objective: Score the functional likelihood of amino acid substitutions. Method:

- Wild-type Log-Likelihood: Pass the native sequence through ESM-1v and record the log probability of the native amino acid at each position to be mutated.

- Mutant Log-Likelihood: Pass the mutated sequence, calculate the log probability for the new amino acid at the mutated position (all other positions are their native AA).

- Scoring: The log-likelihood difference (mutant - wild-type) indicates the predicted effect. Negative scores suggest deleterious variants.
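The scoring step above can be sketched as follows. The sketch assumes the model's output has already been converted to per-position log-probabilities (one dictionary per residue); the helper names `parse_mutation` and `variant_score` and the toy numbers are hypothetical, and no ESM library call is made.

```python
import math


def parse_mutation(mutation: str) -> tuple[str, int, str]:
    """Split a label such as 'K2R' into (wild-type, 1-based position, mutant)."""
    return mutation[0], int(mutation[1:-1]), mutation[-1]


def variant_score(log_probs: list[dict[str, float]], sequence: str, mutation: str) -> float:
    """Log-likelihood difference (mutant - wild-type) at the mutated position.

    `log_probs[i]` maps each amino acid to its log probability at position i
    (0-based), as would be obtained from a protein language model.
    """
    wt, pos, mt = parse_mutation(mutation)
    if sequence[pos - 1] != wt:
        raise ValueError(f"{mutation}: expected {wt} at position {pos}")
    site = log_probs[pos - 1]
    return site[mt] - site[wt]


# Toy per-position distributions (made-up numbers, for illustration only).
toy_log_probs = [
    {"M": math.log(0.9), "A": math.log(0.1)},
    {"K": math.log(0.5), "R": math.log(0.25), "E": math.log(0.25)},
]
print(variant_score(toy_log_probs, "MK", "K2R"))  # negative: R is less likely than K here
```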

Research Reagent Solutions Toolkit

Table 2: Essential Tools for Working with ESM Models

| Item / Solution | Function / Description | Source / Implementation |

|---|---|---|

| ESM Python Library | Official PyTorch-based library for loading pre-trained ESM models, extracting embeddings, and running inference. | Meta AI GitHub (facebookresearch/esm) |

| ESMFold Colab Notebook | Interactive Google Colab notebook for predicting protein structure from sequence using ESMFold. | Meta AI GitHub / Colab |

| Hugging Face transformers | Access ESM models via the Hugging Face ecosystem for easy integration into ML pipelines. | Hugging Face Hub (facebook/esm2_t*) |

| PyMOL / ChimeraX | Molecular visualization software for analyzing and rendering predicted 3D structures from ESMFold. | Schrödinger / UCSF |

| BioPython | Python library for handling protein sequence data (FASTA files, parsing, etc.) to prepare inputs for ESM. | Biopython Project |

| UniProt / UniRef | Primary source databases for protein sequences used for training, fine-tuning, or creating benchmarks. | EMBL-EBI |

| PDB (Protein Data Bank) | Repository of experimentally solved 3D structures for validating ESMFold predictions. | RCSB |

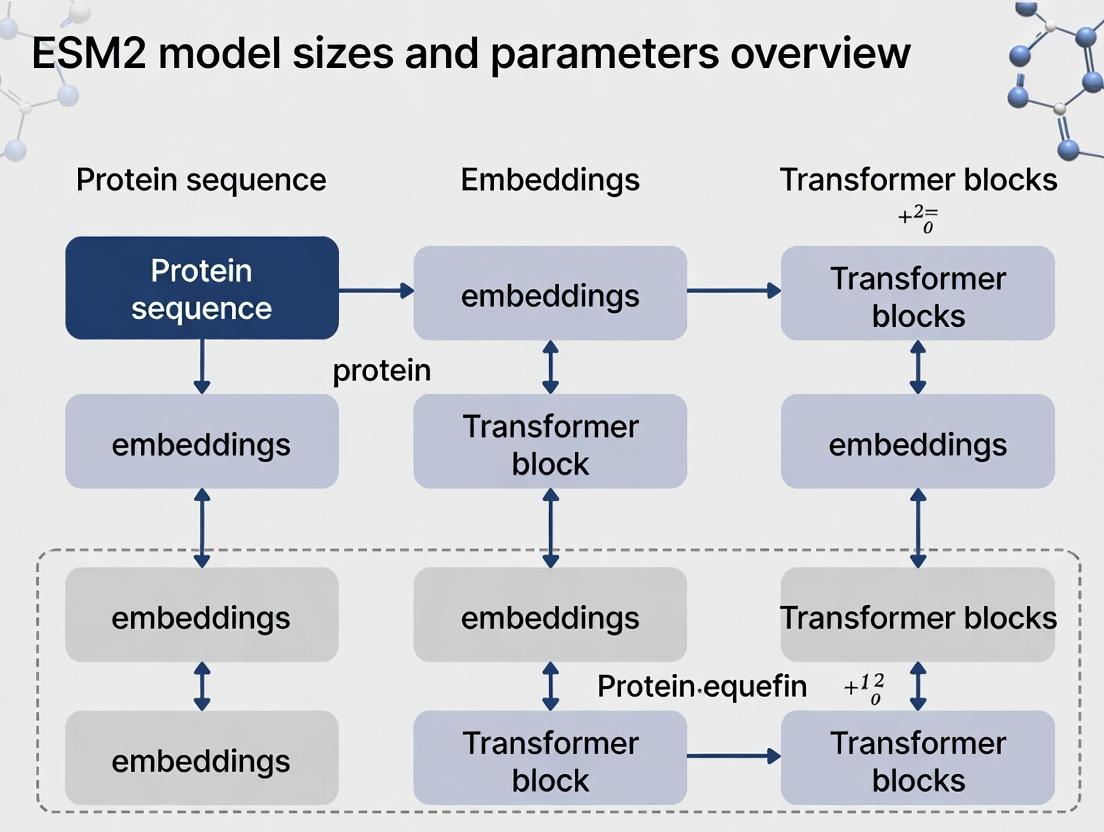

Diagram: Information Flow in ESM Pre-training & Fine-tuning

The evolution from ESM-1 to ESM-2 demonstrates the power of scaling transformer models for protein science. The ESM2 family, particularly the 15B parameter model, shows that increasing scale directly improves the quality of learned representations, as evidenced by state-of-the-art structure prediction without explicit homology information. For researchers and drug developers, these models provide an instant, high-throughput tool for protein engineering, functional annotation, and structure-based therapeutic design, significantly accelerating the early-stage discovery pipeline. Future work, as part of the broader thesis on model scaling, will focus on the quantitative trade-offs between parameter count, computational cost, and gains on specific biological tasks.

This whitepaper provides an in-depth technical analysis of the core transformer architecture underpinning the Evolutionary Scale Modeling 2 (ESM2) protein language model. Framed within a broader thesis on ESM2 model sizes and parameters, this guide details the sequence processing mechanisms that enable state-of-the-art structure and function prediction. ESM2 represents a paradigm shift in computational biology, leveraging a transformer-only architecture trained on millions of diverse protein sequences to learn fundamental principles of protein evolution, structure, and function.

Core Architectural Components

ESM2 is a bidirectional, encoder-only masked language model based on the transformer architecture: every residue attends to the full sequence rather than only to the residues on its left. Unlike its predecessor ESM-1b, which used learned absolute positional embeddings, ESM2 uses rotary position embeddings.

Model Size Variants and Key Parameters

The ESM2 family comprises models of varying scales, from 8 million to 15 billion parameters, allowing a trade-off between computational cost and predictive performance.

Table 1: ESM2 Model Size Variants and Core Specifications

| Model Name | Parameters (M) | Layers | Embedding Dim | Attention Heads | Context (Tokens) | Release Date |

|---|---|---|---|---|---|---|

| ESM2 8M | 8 | 6 | 320 | 20 | 1024 | 2022 |

| ESM2 35M | 35 | 12 | 480 | 20 | 1024 | 2022 |

| ESM2 150M | 150 | 30 | 640 | 20 | 1024 | 2022 |

| ESM2 650M | 650 | 33 | 1280 | 20 | 1024 | 2022 |

| ESM2 3B | 3000 | 36 | 2560 | 40 | 1024 | 2022 |

| ESM2 15B | 15000 | 48 | 5120 | 40 | 1024 | 2022 |

Input Representation and Tokenization

Protein sequences are represented as strings of amino acid characters (the 20 canonical residues plus special tokens). A learned embedding matrix projects each residue token into a high-dimensional vector space (embedding dimension, d_model). Sequence order is supplied by rotary position embeddings (RoPE), which are applied to the query and key vectors inside each attention layer rather than added to the token embeddings.

Table 2: Input Token Vocabulary

| Token | Representation | Description |

|---|---|---|

| A-Z | Standard amino acids | 20 canonical residues |

| <cls> | Classification token | Prepended for downstream tasks |

| <pad> | Padding token | For batch processing |

| <mask> | Mask token | Used for masked language modeling |

| <eos> | End-of-sequence token | Marks sequence termination |

The Transformer Block: Detailed Mechanics

The core of ESM2 is a stack of L identical transformer blocks (layers). Each block consists of two primary sub-layers: a multi-head self-attention mechanism and a position-wise feed-forward network.

Multi-Head Self-Attention (MHSA)

Given an input sequence of length N with representations X ∈ ℝ^(N × d_model), the MHSA computes interactions between all residue pairs.

- Linear Projections: X is projected into Query (Q), Key (K), and Value (V) matrices using learned weights W^Q, W^K, W^V ∈ ℝ^(d_model × d_k).

- Scaled Dot-Product Attention: For each attention head i: Attention(Q_i, K_i, V_i) = softmax( (Q_i K_i^T) / √(d_k) ) V_i, where h is the number of heads and d_k = d_model / h is the per-head dimension.

- Head Concatenation & Output: Outputs from all h heads are concatenated and projected via W^O ∈ ℝ^(d_model × d_model).
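The attention formula above can be traced by hand on small inputs. The following is a minimal single-head sketch in plain Python (no learned projections, no batching); the function names are illustrative, and a real implementation would use a tensor library.

```python
import math


def head_dim(d_model: int, n_heads: int) -> int:
    """Per-head dimension d_k = d_model / h."""
    if d_model % n_heads != 0:
        raise ValueError("d_model must be divisible by the number of heads")
    return d_model // n_heads


def softmax(row: list[float]) -> list[float]:
    peak = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(Q, K, V):
    """Scaled dot-product attention for one head.

    Q, K are N x d_k and V is N x d_v (lists of lists).
    Returns (output, weights), where each row of weights sums to 1.
    """
    d_k = len(Q[0])
    weights = []
    for q in Q:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights.append(softmax(scores))
    output = [
        [sum(w * v[j] for w, v in zip(row, V)) for j in range(len(V[0]))]
        for row in weights
    ]
    return output, weights


print(head_dim(1280, 20))  # per-head dimension for d_model=1280 with 20 heads
out, w = attention([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]], [[1.0, 2.0], [3.0, 4.0]])
print(w)
```

Each residue's output is a weighted average of all value vectors, which is how a single layer can relate positions that are far apart in sequence.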

Position-wise Feed-Forward Network (FFN)

After attention, each position's representation is independently processed by a two-layer FFN with a GELU activation: FFN(x) = GELU(x W_1 + b_1) W_2 + b_2. The inner dimension is typically 4 × d_model.

Pre-Layer Normalization and Residual Connections

ESM2 employs a pre-LayerNorm configuration for stable training: x_{sub} = x + Sublayer(LayerNorm(x)) where Sublayer is either MHSA or FFN.

Rotary Position Embeddings (RoPE)

RoPE encodes absolute position with a rotation matrix that naturally incorporates relative position information into the attention score calculation, improving generalization to longer sequences.
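The relative-position property can be checked numerically. The sketch below rotates adjacent pairs of dimensions by a position-dependent angle; the pairing of adjacent dimensions and the base of 10000 are assumptions of this sketch, and production implementations may pair dimensions differently.

```python
import math


def rope(vec: list[float], position: int, base: float = 10000.0) -> list[float]:
    """Rotate each consecutive pair of dimensions by position * base^(-i/d)."""
    d = len(vec)
    if d % 2 != 0:
        raise ValueError("vector length must be even")
    out = []
    for i in range(0, d, 2):
        theta = position * base ** (-i / d)
        c, s = math.cos(theta), math.sin(theta)
        x, y = vec[i], vec[i + 1]
        out.extend([x * c - y * s, x * s + y * c])
    return out


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


q = [0.3, -1.2, 0.7, 0.5]
k = [1.1, 0.4, -0.6, 0.9]

# Same offset (2) at different absolute positions gives the same score.
print(dot(rope(q, 3), rope(k, 1)))
print(dot(rope(q, 10), rope(k, 8)))
```

The two printed scores agree up to floating-point error: the attention score between a rotated query and key depends only on the distance between their positions.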

Diagram 1: ESM2 High-Level Architecture & Transformer Block Detail

Training Methodology & Objective

ESM2 is trained with a masked language modeling (MLM) objective on the UniRef dataset (∼65 million sequences). A random subset (15%) of input tokens is replaced: 80% with a <mask> token, 10% with a random residue, and 10% left unchanged. The model learns to predict the original token based on its context.
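The 15% / 80-10-10 corruption scheme can be sketched as below. This version selects a fixed number of positions per sequence (an assumption; sampling each position independently at 15% is an equally common choice), and the function name `mask_sequence` is illustrative.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
MASK = "<mask>"


def mask_sequence(sequence: str, rng: random.Random, mask_rate: float = 0.15):
    """Return (corrupted tokens, labels) for masked language modeling.

    labels maps each selected position to its original residue; the loss
    would be computed only at those positions.
    """
    tokens = list(sequence)
    n_select = max(1, round(mask_rate * len(tokens)))
    labels = {}
    for pos in rng.sample(range(len(tokens)), n_select):
        labels[pos] = tokens[pos]
        roll = rng.random()
        if roll < 0.8:
            tokens[pos] = MASK  # 80%: replace with the mask token
        elif roll < 0.9:
            tokens[pos] = rng.choice(AMINO_ACIDS)  # 10%: random residue
        # remaining 10%: leave the token unchanged
    return tokens, labels


rng = random.Random(0)
tokens, labels = mask_sequence("MKTAYIAKQRQISFVKSHFSRQ", rng)
print("".join(t if t != MASK else "_" for t in tokens))
print(sorted(labels))
```

Leaving some selected tokens unchanged or randomly replaced means the model cannot assume that every non-mask token is correct, so it must build a useful representation at every position.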

Experimental Protocol 1: Pre-training (MLM)

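- Dataset: UniRef50 clusters, with training sequences sampled from their UniRef90 members (the UR50D suffix in the released checkpoint names), used for all model sizes.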

- Tokenization: Sequences split into standard 20-amino acid tokens plus special tokens.

- Masking: Uniform random masking at 15% probability.

- Optimization: AdamW optimizer with β1=0.9, β2=0.98, weight decay=0.01.

- Learning Rate: Cosine schedule with 10k-step warmup to peak LR of 1e-3 (8M) to 4e-4 (15B).

- Hardware: Trained on NVIDIA A100 or V100 GPUs using Fully Sharded Data Parallel (FSDP).

From Sequence to Structure: ESMFold

The ESM2 embeddings, particularly from the 15B parameter model, are used in the ESMFold structure prediction pipeline. A folding trunk, attached to the final layer's residue representations, directly predicts 3D coordinates.

Diagram 2: ESMFold Structure Prediction Workflow

Experimental Protocol 2: Structure Prediction with ESMFold

- Input: Single protein sequence (no multiple sequence alignment required).

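- Embedding Generation: Pass the sequence through a frozen ESM2 model (the publicly released ESMFold checkpoint is built on ESM2 3B).

- Folding Trunk: Process representations through 48 folding blocks, followed by a structure module built from Invariant Point Attention (IPA) layers.

- Output: Predict heavy-atom coordinates (backbone N, Cα, C, O and side-chain atoms) and a per-residue pLDDT confidence score.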

- Inference: Runtime is ∼10-60 seconds per sequence on a single A100 GPU.

Performance and Key Results

ESM2 models, especially the 15B parameter variant, achieve breakthrough performance in zero-shot prediction and structure modeling.

Table 3: Key Benchmark Performance of ESM2 Models

| Task / Benchmark | ESM2 8M | ESM2 150M | ESM2 650M | ESM2 3B | ESM2 15B | Metric |

|---|---|---|---|---|---|---|

| Fluorescence (MSE↓) | 0.89 | 0.45 | 0.37 | 0.35 | 0.27 | Mean Squared Error |

| Stability (Spearman↑) | 0.41 | 0.65 | 0.70 | 0.73 | 0.78 | Rank Correlation |

| Remote Homology (Top1↑) | 0.21 | 0.39 | 0.48 | 0.52 | 0.59 | Accuracy |

| ESMFold (TM-score↑) | N/A | N/A | 0.55 | 0.64 | 0.71 | Template Modeling Score |

| ESMFold (Median RMSD↓) | N/A | N/A | 8.2Å | 4.5Å | 2.8Å | Root Mean Square Dev. |

The Scientist's Toolkit

Table 4: Essential Research Reagent Solutions for ESM2-Based Research

| Item / Solution | Function / Description |

|---|---|

| ESM2 Model Weights | Pre-trained parameters for different model sizes (8M to 15B) available via Hugging Face Transformers. |

| ESMFold Code & Weights | Full pipeline for single-sequence structure prediction. |

| Hugging Face transformers | Python library to load ESM2, perform inference, and extract embeddings. |

| PyTorch / Fairseq | Deep learning frameworks required to run models. ESM2 is implemented in Fairseq. |

| Biopython | For protein sequence handling, parsing FASTA files, and analyzing outputs. |

| AlphaFold2 (ColabFold) | For comparative structure prediction to benchmark ESMFold results. |

| PDB (Protein Data Bank) | Repository of experimental protein structures for validation. |

| GPUs (A100/V100) | Essential hardware for efficient inference (especially for 15B model) and fine-tuning. |

| Jupyter / Colab Notebooks | Interactive environments for prototyping and analysis. |

| MMseqs2 / HMMER | Tools for generating traditional MSAs, useful for comparative analysis against ESM2's MSA-free approach. |

Within the context of our broader thesis on ESM2 model evolution, this whitepaper provides an in-depth technical analysis of the parameter landscape. The selection of model scale—from 8 million to 15 billion parameters—represents a fundamental architectural and strategic choice in computational biology, directly influencing the accuracy, generalizability, and practical utility of protein language models for researchers and drug development professionals.

ESM2 Model Size Specifications and Performance Benchmarks

The following table summarizes the key architectural specifications and published performance metrics for the ESM2 model family, as per the most current research.

Table 1: ESM2 Model Architecture & Performance Summary

| Model (Parameters) | Layers | Embedding Dim. | Attention Heads | FLOPs (Inference) | Memory (FP16) | Protein Prediction (pLDDT) | Key Application Domain |

|---|---|---|---|---|---|---|---|

| ESM2-8M | 6 | 320 | 20 | ~0.01 T | ~20 MB | 65-70 | Rapid sequence scoring, Educational tools |

| ESM2-35M | 12 | 480 | 20 | ~0.05 T | ~80 MB | 70-75 | Homology detection, Feature extraction |

| ESM2-150M | 30 | 640 | 20 | ~0.3 T | ~300 MB | 75-80 | Secondary structure prediction, Single-site fitness |

| ESM2-650M | 33 | 1280 | 20 | ~1.5 T | ~1.3 GB | 80-85 | Contact prediction, 3D folding (coarse), Epitope mapping |

| ESM2-3B | 36 | 2560 | 40 | ~7 T | ~6 GB | 85-88 | High-accuracy folding (ESMFold), Functional site prediction |

| ESM2-15B | 48 | 5120 | 40 | ~35 T | ~30 GB | 88-92 | De novo protein design, Antibody optimization, Rare variant effect |

Note: FLOPs and memory are approximate for a 1024-token sequence. Performance scores (pLDDT) are indicative ranges from benchmark tasks like structure prediction.

Experimental Protocols for Benchmarking Model Performance

To generate the comparative data in Table 1, standardized experimental protocols are employed. Below is the detailed methodology for key evaluation tasks.

Protocol 1: pLDDT-based Structure Prediction Accuracy

- Input Preparation: Curate a diverse test set of protein sequences with experimentally resolved 3D structures from the PDB (Protein Data Bank). Ensure no sequence overlaps with the training data of any ESM2 model.

- Model Inference: For each model size (8M to 15B), pass the tokenized sequences through the ESM2 model to generate per-residue embeddings.

- Structure Module: Feed the embeddings into the standardized ESMFold folding head (a fixed, size-agnostic module). This head consists of a 48-block folding trunk followed by an invariant point attention structure module that predicts 3D coordinates.

- pLDDT Calculation: Compute the predicted Local Distance Difference Test (pLDDT) score per residue, which estimates the model's confidence in its prediction. The global score is the average over all residues.

- Validation: Compare the predicted structure against the ground-truth PDB structure using TM-score and RMSD metrics. Correlate pLDDT with observed accuracy.

Protocol 2: Zero-Shot Fitness Prediction (Variant Effect)

- Dataset: Use the ProteinGym benchmark suite, which contains deep mutational scanning (DMS) assays with measured fitness scores for mutants.

- Likelihood Computation: For a given wild-type sequence and its variants, use each ESM2 model to compute the log-likelihood (pseudo-log-likelihood) for every residue position.

- Score Calculation: The fitness score for a variant is derived from the difference in log-likelihoods between the mutant and wild-type sequences at the mutated positions.

- Evaluation: Calculate the Spearman correlation between the model's predicted fitness scores and the experimentally measured fitness values across all variants in the benchmark.
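The evaluation step relies on Spearman's rank correlation, which is the Pearson correlation of the ranks. A dependency-free sketch follows (ties receive their average rank); in practice a statistics library would normally be used, and the function names here are illustrative.

```python
def average_ranks(values: list[float]) -> list[float]:
    """1-based ranks, with tied values sharing the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def pearson(x: list[float], y: list[float]) -> float:
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def spearman(predicted: list[float], measured: list[float]) -> float:
    """Spearman rank correlation between predicted and measured fitness."""
    return pearson(average_ranks(predicted), average_ranks(measured))


# Toy example: predicted scores vs. measured fitness (made-up values).
print(spearman([-3.1, -0.2, -1.5, 0.4], [0.1, 0.8, 0.3, 0.9]))
```

Rank correlation is a natural fit here because model scores and assay readouts live on different scales; only the ordering of variants is compared.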

Visualization of ESM2 Model Scaling Pathways

The relationship between model size, computational cost, and predictive performance is governed by scaling laws. The following diagram illustrates this conceptual pathway.

Title: Scaling Pathway from Parameters to Performance

The Scientist's Toolkit: Essential Research Reagent Solutions

Working with protein language models requires a suite of computational and data resources. The table below details key "reagents" for experimental research.

Table 2: Key Research Reagent Solutions for ESM2-Based Research

| Item / Solution | Function / Purpose | Example / Implementation |

|---|---|---|

| ESM2 Model Weights (Hugging Face) | Pre-trained parameters for each model size (8M-15B). Enables transfer learning and feature extraction without costly pre-training. | facebook/esm2_t6_8M_UR50D to facebook/esm2_t48_15B_UR50D |

| ESMFold Structure Module | A fixed, plug-in structure decoder that converts ESM2 sequence embeddings into 3D atomic coordinates and pLDDT confidence scores. | Integrated in the esm Python package; callable via model.infer_pdb(). |

| ProteinGym Benchmark Suite | A standardized, curated collection of deep mutational scanning (DMS) assays for zero-shot evaluation of variant effect prediction. | Used in Protocol 2 to benchmark model fitness prediction across scales. |

| PyTorch / CUDA Environment | The fundamental computational framework for loading models, performing inference, and fine-tuning. Requires compatible GPU hardware. | NVIDIA A100/A6000 for 3B/15B models; RTX 4090 for models up to 650M. |

| Multiple Sequence Alignment (MSA) Database | External evolutionary data (e.g., from UniClust30, BFD) used to augment single-sequence models like ESM2 for specific tasks (e.g., structure prediction). | Often used as a supplementary input to improve performance of smaller models (150M, 650M). |

| Fine-tuning Datasets (Task-Specific) | Curated, labeled datasets for supervised fine-tuning of ESM2 on tasks like stability prediction, binding affinity, or subcellular localization. | Enables adaptation of the general-purpose base model (e.g., 650M) to specialized applications in drug development. |

Workflow for Selecting an Optimal Model Size

The choice of model is dictated by the target application and resource constraints. The decision logic is mapped below.

Title: Decision Logic for Model Size Selection
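One resource-driven branch of this decision can be sketched in code: choose the largest checkpoint whose FP16 weights fit a given GPU memory budget. The 2-bytes-per-parameter figure follows from FP16 storage; the 2× headroom factor for activations and framework overhead is a hypothetical rule of thumb, not a published guideline, and `largest_fitting` is an illustrative name.

```python
# Checkpoint names as published on the Hugging Face Hub, with nominal sizes.
CHECKPOINTS = [
    ("facebook/esm2_t6_8M_UR50D", 8e6),
    ("facebook/esm2_t12_35M_UR50D", 35e6),
    ("facebook/esm2_t30_150M_UR50D", 150e6),
    ("facebook/esm2_t33_650M_UR50D", 650e6),
    ("facebook/esm2_t36_3B_UR50D", 3e9),
    ("facebook/esm2_t48_15B_UR50D", 15e9),
]


def fp16_weight_gb(n_params: float) -> float:
    """Weight footprint in GB at 2 bytes per parameter."""
    return n_params * 2 / 1e9


def largest_fitting(gpu_memory_gb: float, headroom: float = 2.0):
    """Largest checkpoint whose weights, times a headroom factor, fit in memory.

    `headroom` is an assumed safety multiplier for activations and overhead.
    Returns None if even the smallest model does not fit.
    """
    best = None
    for name, n_params in CHECKPOINTS:
        if fp16_weight_gb(n_params) * headroom <= gpu_memory_gb:
            best = name
    return best


for budget in (8, 24, 80):
    print(f"{budget} GB -> {largest_fitting(budget)}")
```

Task requirements would then narrow the choice further, for example preferring a smaller model when throughput matters more than peak accuracy.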

The ESM2 parameter landscape offers a structured continuum from efficient, accessible models to frontier-scale predictors. For the drug development professional, this spectrum enables strategic deployment: the 150M-650M tier for high-throughput screening and feature engineering, and the 3B-15B tier for cutting-edge structure-based design and de novo protein engineering. Our thesis concludes that this hierarchical, scalable paradigm is foundational to the systematic integration of AI into biomedical research.

This guide details the data curation and methodological framework underpinning the Evolutionary Scale Modeling (ESM) project, specifically the ESM2 model series. Within the broader thesis on ESM2 model sizes and parameters, this document establishes the foundational data pipeline and training protocols that enable the extraction of biological insights from protein sequence space. The methodology described here is critical for understanding how scaling laws—where increasing model parameters from 8M to 15B leads to emergent capabilities in structure prediction and function annotation—are driven by the quality and scale of evolutionary data.

Training Data Curation Pipeline

The efficacy of ESM2 models is intrinsically linked to the quality and breadth of the underlying sequence data; ESM2 itself is trained on individual sequences rather than multiple sequence alignments (MSAs). The data pipeline is constructed to maximize evolutionary signal.

- UniRef: Clustered sets of sequences from UniProt. UniRef90 (sequences clustered at 90% identity) provides a non-redundant basis.

- BFD (Big Fantastic Database) & Metaclust: Large-scale, clustered sequence databases constructed from metagenomic and genomic data, offering deep evolutionary diversity.

- MGnify: A repository for microbiome sequencing data, contributing environmentally diverse sequences.

Sequence Filtering and Preprocessing Protocol

- Redundancy Reduction: Sequences are clustered at the 90% identity level using MMseqs2 to minimize bias from over-represented homologs.

- Quality Filtering:

  - Remove sequences with ambiguous amino acids (e.g., 'X', 'B', 'Z', 'J') exceeding a 5% threshold.

  - Discard sequences shorter than 30 residues or longer than 1024 residues (or 2048 for extended models).

  - Filter based on minimum information content to remove low-complexity and repetitive sequences.

- MSA Construction (MSA-based baselines only): ESM2 needs no alignments as input; where MSAs are required for MSA-based comparison methods, HHblits is run against the clustered sequence databases with 3 iterations and an E-value threshold of 1e-3 to gather homologous sequences.
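The length and ambiguity filters from the preprocessing protocol above can be sketched as a single predicate. The thresholds are the ones quoted in the text; the low-complexity filter is not implemented here, and `passes_quality_filter` is an illustrative name.

```python
AMBIGUOUS = set("XBZJ")


def passes_quality_filter(
    sequence: str,
    min_len: int = 30,
    max_len: int = 1024,
    max_ambiguous: float = 0.05,
) -> bool:
    """True if the sequence is within the length bounds and has at most
    `max_ambiguous` fraction of ambiguous residue codes."""
    if not (min_len <= len(sequence) <= max_len):
        return False
    n_ambiguous = sum(1 for aa in sequence if aa in AMBIGUOUS)
    return n_ambiguous / len(sequence) <= max_ambiguous


candidates = ["M" * 25, "MKT" * 20, "MKT" * 20 + "X" * 10]
kept = [s for s in candidates if passes_quality_filter(s)]
print(len(kept))  # only the 60-residue sequence without ambiguous codes survives
```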

Dataset Composition Statistics

Table 1: Composition of ESM2 Pre-training Datasets

| Dataset Component | Source | Approx. Number of Sequences | Key Purpose |

|---|---|---|---|

| UniRef90 Core | UniProt | ~45 million clusters | Provides high-quality, annotated protein families. |

| BFD/Metaclust | Genomic/Metagenomic | ~2.2 billion clusters | Adds immense evolutionary diversity and remote homology. |

| MGnify | Metagenomic | ~1 billion sequences | Contributes novel, environmentally-specific protein variants. |

| Final Training Set | Combined & filtered | ~65 million unique sequences | Balanced representation for masked language modeling. |

Core Methodology: Masked Language Modeling (MLM)

ESM2 is trained using a self-supervised objective known as Masked Language Modeling, adapted for protein sequences.

Experimental Protocol for Pre-training

- Input Representation: Each protein sequence is tokenized into its constituent amino acid residues (20 standard + special tokens for start, stop, mask, etc.).

- Masking Strategy: 15% of tokens in each sequence are selected for masking.

  - 80% of the time, the selected token is replaced with a special [MASK] token.

  - 10% of the time, it is replaced with a random amino acid token.

  - 10% of the time, the token is left unchanged.

- Model Objective: The model is trained to predict the original token at the masked positions using a cross-entropy loss. The context provided by the unmasked residues in the sequence forces the model to learn evolutionary constraints, co-evolutionary patterns, and structural rules.

- Training Hyperparameters (ESM2 15B Example):

  - Optimizer: AdamW (β1=0.9, β2=0.98)

  - Learning Rate: 1e-3 with a warmup period and linear decay.

  - Batch Size: ~1 million tokens (via gradient accumulation).

  - Hardware: Trained on NVIDIA A100 or H100 GPUs using fully sharded data parallelism.

Architectural Variations Across Model Sizes

Table 2: ESM2 Model Architecture Parameters

| Model | Parameters | Layers | Embedding Dim | Attention Heads | Context Window | Training Tokens |

|---|---|---|---|---|---|---|

| ESM2-8M | 8 million | 6 | 320 | 20 | 1024 | ~1.5e15 |

| ESM2-35M | 35 million | 12 | 480 | 20 | 1024 | ~1.5e15 |

| ESM2-150M | 150 million | 30 | 640 | 20 | 1024 | ~1.5e15 |

| ESM2-650M | 650 million | 33 | 1280 | 20 | 1024 | ~1.5e15 |

| ESM2-3B | 3 billion | 36 | 2560 | 40 | 1024 | ~1.5e15 |

| ESM2-15B | 15 billion | 48 | 5120 | 40 | 1024 | ~1.5e15 |

Visualization of Workflows

ESM2 Data & Training Pipeline

Downstream Task Methodology

The learned representations are probed via frozen embedding extraction or fine-tuning.

Protocol for Contact & Structure Prediction

- Attention Extraction: Pass a target sequence through ESM2 and extract the self-attention maps from the final layer or intermediate layers.

- Attention-to-Distance Conversion: Compute pairwise attention scores (averaged over heads) and apply a logistic transformation to predict a distance bin (e.g., < 8Å).

- Structure Assembly: Use predicted contact maps as constraints in fragment assembly or direct folding algorithms (like AlphaFold2's structure module, if integrated).

Protocol for Zero-Shot Variant Effect Prediction

- Sequence Scoring: For a wild-type sequence and a mutated variant, compute the log-likelihood of each sequence under the ESM2 MLM objective.

- Score Calculation: The pseudo-log-likelihood difference (Δlog P) between the wild-type and mutant is computed as a predictor of functional effect (stability, activity).

- Calibration: Scores are often normalized and calibrated against experimental datasets.

Downstream Application Pathways

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for ESM-Based Research

| Item / Resource | Function / Description | Source / Implementation |

|---|---|---|

| ESMFold | End-to-end single-sequence protein structure prediction pipeline powered by ESM2. | GitHub: facebookresearch/esm |

| ESM Metagenomic Atlas | A database of over 600 million metagenomic protein structures predicted by ESMFold. | AWS Open Data Registry |

| Hugging Face transformers | Library providing easy access to pre-trained ESM2 models for embedding extraction and fine-tuning. | from transformers import AutoModelForMaskedLM |

| PyTorch / Fairseq | Deep learning frameworks used for original ESM2 model training and inference. | pytorch.org / GitHub: facebookresearch/fairseq |

| biopython & pytorch_geometric | Libraries for processing biological sequences and graphs for downstream structure tasks. | biopython.org / pyg.org |

| OpenFold | Trainable, open-source implementation of AlphaFold2; can use ESM2 embeddings as input. | GitHub: aqlaboratory/openfold |

| UniProt & PDBe-KB | Primary sources for experimental protein sequences, structures, and functional annotations for validation. | uniprot.org / pdbe-kb.org |

| ProteinMPNN | Protein sequence design tool; often used in conjunction with ESM2/ESMFold for inverse folding. | GitHub: dauparas/ProteinMPNN |

This whitepaper, framed within a broader thesis on Evolutionary Scale Modeling (ESM) protein language model architectures, explores the mechanistic relationship between model scale (parameters and data) and the emergent capability to understand and predict complex biological phenomena. The core hypothesis posits that scaling neural network parameters, when applied to massive, diverse biological sequence datasets, induces qualitative leaps in predictive and explanatory power, moving from simple pattern recognition to a form of functional reasoning about proteins and cellular systems.

ESM2 Model Scaling Landscape

The ESM-2 (Evolutionary Scale Modeling-2) suite provides a canonical case study for parameter scaling in computational biology. The models are transformer-based protein language models trained on millions of diverse protein sequences from UniRef.

Table 1: ESM-2 Model Family Scaling Parameters & Performance

| Model Name | Parameters (Millions) | Layers | Embedding Dim | Attention Heads | Training Tokens (Billions) | PPL (Downstream Avg.) |

|---|---|---|---|---|---|---|

| ESM-2 8M | 8 | 6 | 320 | 20 | ~2.5 | 4.85 |

| ESM-2 35M | 35 | 12 | 480 | 20 | ~2.5 | 3.92 |

| ESM-2 150M | 150 | 30 | 640 | 20 | ~2.5 | 3.25 |

| ESM-2 650M | 650 | 33 | 1280 | 20 | ~2.5 | 2.70 |

| ESM-2 3B | 3,000 | 36 | 2560 | 40 | ~2.5 | 2.42 |

| ESM-2 15B | 15,000 | 48 | 5120 | 40 | ~2.5 | 2.12 |

PPL: Perplexity (lower is better, indicates better sequence modeling).
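Perplexity is the exponential of the mean negative log-likelihood the model assigns to the true tokens. The short sketch below shows the calculation on a list of per-token probabilities; the function name and inputs are illustrative.

```python
import math


def perplexity(true_token_probs: list[float]) -> float:
    """exp of the average negative log probability of the correct tokens."""
    mean_nll = -sum(math.log(p) for p in true_token_probs) / len(true_token_probs)
    return math.exp(mean_nll)


# A model that guesses uniformly over the 20 amino acids has perplexity ~20.
print(perplexity([1 / 20] * 10))
```

Read this way, a perplexity near 20 means the model is no better than uniform guessing among the canonical residues, and lower values mean it has narrowed down the plausible residues at each masked position.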

Emergent Capabilities with Scale

Scaling parameters correlates with the emergence of zero-shot biological understanding, where the model performs tasks it was not explicitly trained on.

Table 2: Emergent Zero-Shot Prediction Accuracy by Model Scale

| Task Description | ESM-2 8M | ESM-2 150M | ESM-2 3B | ESM-2 15B |

|---|---|---|---|---|

| Contact Prediction (Top-L Precision) | 12.5% | 38.7% | 58.1% | 68.4% |

| Secondary Structure (3-state Q3) | 65.2% | 72.8% | 76.5% | 78.9% |

| Fluorescence Landscape Prediction (R²) | 0.31 | 0.52 | 0.68 | 0.81 |

| Protein-Protein Interface Prediction | N/A | Emerging | Functional | Accurate |

| Mutation Effect Prediction (Spearman ρ) | 0.22 | 0.41 | 0.59 | 0.73 |

Experimental Protocol: Probing Emergent Understanding

To quantify emergent biological understanding, researchers employ "fitness prediction" and "saturation mutagenesis" probing experiments.

Protocol 4.1: Zero-Shot Mutational Effect Prediction

- Input Preparation: Select a target protein sequence (e.g., GFP, Beta-lactamase). Generate a list of all possible single-point mutations (19 possible amino acids at each position).

- Model Inference: For each mutant sequence, compute the log-likelihood score using the ESM-2 model. No model fine-tuning is performed.

- Score Calculation: The Mutational Effect Score is the difference in log-likelihood between the mutant and wild-type sequences: Δlog P = log P(mutant) - log P(wildtype).

- Ground Truth Correlation: Correlate (Spearman's ρ) the model's predicted Δlog P scores with experimentally measured fitness scores from deep mutational scanning (DMS) assays.

- Analysis: Plot correlation coefficient (ρ) versus model parameter count to demonstrate scaling law.
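The scoring and correlation steps of Protocol 4.1 can be sketched in plain Python. This is a minimal illustration, assuming the per-sequence log-likelihoods have already been computed by the model; the function names (`delta_log_p`, `ranks`, `spearman`) are hypothetical helpers, not part of any ESM library.

```python
from typing import List, Sequence


def delta_log_p(logp_mutants: Sequence[float], logp_wildtype: float) -> List[float]:
    """Delta log P = log P(mutant) - log P(wildtype) for each mutant."""
    return [m - logp_wildtype for m in logp_mutants]


def ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    out = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            out[order[k]] = avg
        i = j + 1
    return out


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / (vx * vy) ** 0.5


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho is the Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))
```

In practice `scipy.stats.spearmanr` does the same job; the explicit version above only makes the rank-then-correlate logic visible.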

Protocol 4.2: Contact Map Extraction from Attention Weights

- Forward Pass: Run the target protein sequence through the ESM-2 model.

- Attention Map Collection: Extract the raw attention maps from the final transformer layer, averaging over all attention heads.

- Symmetrization: Create a symmetric matrix by averaging the attention map with its transpose:

A_sym = (A + A^T) / 2

- Filtering: Apply a minimum sequence separation filter (e.g., ignore residues within 6 positions of each other).

- Comparison to Ground Truth: Compare the top-ranked residue pairs by attention strength to known 3D structural contacts (from PDB), calculating precision at L/k (where L is sequence length, k often = 10 or 5).
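The post-processing steps of Protocol 4.2 (symmetrization, separation filter, precision at L/k) can be sketched as follows. This is an illustrative sketch on a head-averaged attention matrix that is assumed to be already extracted; the helper names and the representation of true contacts as a set of `(i, j)` pairs with `i < j` are assumptions made for the example.

```python
from typing import List, Set, Tuple

Matrix = List[List[float]]


def symmetrize(a: Matrix) -> Matrix:
    """A_sym = (A + A^T) / 2."""
    n = len(a)
    return [[(a[i][j] + a[j][i]) / 2 for j in range(n)] for i in range(n)]


def top_pairs(a_sym: Matrix, min_sep: int, top_n: int) -> List[Tuple[int, int]]:
    """Pairs (i < j) with j - i >= min_sep, ranked by score, highest first."""
    n = len(a_sym)
    pairs = [(i, j) for i in range(n) for j in range(i + min_sep, n)]
    pairs.sort(key=lambda p: a_sym[p[0]][p[1]], reverse=True)
    return pairs[:top_n]


def precision_at_l_over_k(
    attention: Matrix,
    true_contacts: Set[Tuple[int, int]],
    k: int = 5,
    min_sep: int = 6,
) -> float:
    """Fraction of the top L/k predicted pairs that are true contacts."""
    length = len(attention)
    top_n = max(1, length // k)
    predicted = top_pairs(symmetrize(attention), min_sep, top_n)
    if not predicted:  # sequence shorter than the separation filter
        return 0.0
    hits = sum(1 for pair in predicted if pair in true_contacts)
    return hits / len(predicted)
```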

Diagram 1: ESM-2 Contact Map Extraction Workflow

Signaling Pathway Reconstruction from Embeddings

Large-scale models learn representations that encode functional states. The following diagram illustrates a hypothesized method for inferring pathway activity from model embeddings.

Diagram 2: cAMP-PKA-CREB Pathway & ESM Inference

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Reagents for Validating ESM Model Predictions

| Reagent / Material | Function in Validation | Example Use Case |

|---|---|---|

| Site-Directed Mutagenesis Kit (e.g., Q5) | Introduces specific point mutations predicted by the model to be stabilizing or destabilizing. | Testing Δlog P predictions for a therapeutic enzyme. |

| Mammalian Two-Hybrid System | Detects protein-protein interactions in vivo. | Validating predicted novel interaction partners from co-evolution analysis in ESM embeddings. |

| NanoLuc Binary Technology (NanoBiT) | Measures real-time, quantitative protein-protein interaction kinetics. | Validating the strength of a predicted protein complex interface. |

| Deep Mutational Scanning (DMS) Library | Provides a comprehensive experimental fitness landscape for a protein. | Serves as the ground truth dataset for benchmarking zero-shot mutational effect prediction (Protocol 4.1). |

| Cryo-EM Grids & Prep Systems | Enables high-resolution structural determination of novel protein conformations or complexes predicted by the model. | Solving the structure of a protein in a conformation predicted from its ESM embedding. |

| AlphaFold2/3 ColabFold Pipeline | Generates independent 3D structural predictions for comparison. | Used to cross-validate contact maps and structural features extracted from ESM-2 attention weights. |

| Phos-tag Acrylamide | Electrophoretic mobility shift assay reagent for detecting phosphorylated proteins. | Testing predictions about kinase substrate specificity learned implicitly by the large model. |

How to Use ESM2 Models: A Practical Guide for Research and Drug Discovery

Accessing and Loading Pre-trained ESM2 Weights (Hugging Face, ESM Repository)

Accessing and loading pre-trained weights is the foundational step for any downstream experiment with ESM2. The Evolutionary Scale Modeling 2 (ESM2) protein language models, developed by Meta AI, provide a powerful framework for protein structure and function prediction. This guide details how to obtain and initialize these models via the Hugging Face Transformers library and the official ESM repository, for researchers and drug development professionals who want to work with state-of-the-art protein embeddings.

ESM2 Model Architecture & Size Variants

ESM2 is a transformer-based model trained on millions of protein sequences. The key architectural variations lie in the number of layers (depth), the embedding dimension (width), and the number of attention heads, which scale from 8 million to 15 billion parameters.

Table 1: ESM2 Model Size Variants and Key Parameters

| Model Name (Hugging Face ID) | Parameters | Layers | Embedding Dim | Attention Heads | Context Size | Release Date (approx.) |

|---|---|---|---|---|---|---|

| esm2_t6_8M_UR50D | 8 Million | 6 | 320 | 20 | 1024 | 2022 |

| esm2_t12_35M_UR50D | 35 Million | 12 | 480 | 20 | 1024 | 2022 |

| esm2_t30_150M_UR50D | 150 Million | 30 | 640 | 20 | 1024 | 2022 |

| esm2_t33_650M_UR50D | 650 Million | 33 | 1280 | 20 | 1024 | 2022 |

| esm2_t36_3B_UR50D | 3 Billion | 36 | 2560 | 40 | 1024 | 2022 |

| esm2_t48_15B_UR50D | 15 Billion | 48 | 5120 | 40 | 1024 | 2022 |

Note: Data sourced from Hugging Face Model Hub and Meta AI's ESM GitHub repository.

Methodologies for Accessing & Loading Weights

Protocol A: Using the Hugging Face transformers Library

This is the recommended method for most research applications, offering integration with the broader Hugging Face ecosystem.

Step 1: Environment Setup

Step 2: Python Loading Script
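A minimal loading sketch for Protocol A, assuming `transformers` and `torch` are already installed (for example via pip) and that the checkpoints live under the `facebook/` namespace on the Hugging Face Model Hub. The `MODEL_IDS` table and the `load_esm2` and `embed` helpers are illustrative names, not part of the library.

```python
# Hub IDs assume the "facebook/" namespace on the Hugging Face Model Hub.
MODEL_IDS = {
    "8M": "facebook/esm2_t6_8M_UR50D",
    "35M": "facebook/esm2_t12_35M_UR50D",
    "150M": "facebook/esm2_t30_150M_UR50D",
    "650M": "facebook/esm2_t33_650M_UR50D",
    "3B": "facebook/esm2_t36_3B_UR50D",
    "15B": "facebook/esm2_t48_15B_UR50D",
}


def load_esm2(size="8M"):
    """Download (or reuse the cached copy of) one ESM2 checkpoint."""
    # Imported lazily so this module can be imported without transformers.
    from transformers import AutoModel, AutoTokenizer

    model_id = MODEL_IDS[size]
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModel.from_pretrained(model_id)
    model.eval()  # disable dropout for inference
    return tokenizer, model


def embed(sequence, tokenizer, model):
    """Return the last hidden state, shape [1, len(sequence) + 2, dim]."""
    import torch

    inputs = tokenizer(sequence, return_tensors="pt")
    with torch.no_grad():
        outputs = model(**inputs)
    return outputs.last_hidden_state
```

Starting with the 8M checkpoint keeps the first download small; a call such as `embed("MKLKVWLLLL", *load_esm2("8M"))` should return a tensor whose last dimension is 320.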

Protocol B: Using the Official ESM Repository (Direct)

This method provides access to the native codebase and some additional utilities.

Step 1: Clone and Install

Step 2: Python Loading Script
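A minimal sketch for Protocol B, assuming the `fair-esm` package (imported as `esm`) and `torch` are installed. The helper names are hypothetical; the layer index is parsed from the model name so that the final-layer representation is requested for whichever checkpoint is chosen.

```python
import re


def num_layers_from_name(model_name):
    """Parse the layer count from a name such as 'esm2_t33_650M_UR50D'."""
    match = re.search(r"_t(\d+)_", model_name)
    if match is None:
        raise ValueError("unrecognized ESM2 model name: %r" % model_name)
    return int(match.group(1))


def embed_with_fair_esm(sequence, model_name="esm2_t6_8M_UR50D"):
    """Return (final-layer representations, predicted contacts)."""
    # Imported lazily so the helper above works without fair-esm installed.
    import esm
    import torch

    model, alphabet = esm.pretrained.load_model_and_alphabet(model_name)
    model.eval()
    batch_converter = alphabet.get_batch_converter()
    _, _, tokens = batch_converter([("query", sequence)])

    layer = num_layers_from_name(model_name)
    with torch.no_grad():
        out = model(tokens, repr_layers=[layer], return_contacts=True)
    return out["representations"][layer], out["contacts"]
```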

Protocol C: Manual Download and Offline Loading

For secure or offline environments, weights can be downloaded manually.

Step 1: Download Weights

Weights can be downloaded directly from Hugging Face (e.g., https://huggingface.co/facebook/esm2_t33_650M_UR50D/tree/main) or the ESM repository. Key files are pytorch_model.bin (weights) and config.json, plus the tokenizer files stored in the same repository.

Step 2: Load from Local Directory
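A minimal offline-loading sketch for Protocol C, assuming the repository files were copied into a local directory. The pre-flight check and the accepted weight filenames (`pytorch_model.bin` or `model.safetensors`) are assumptions for illustration; `local_files_only=True` asks `transformers` not to contact the Hub.

```python
import os


def missing_files(
    model_dir,
    required=("config.json",),
    weights=("pytorch_model.bin", "model.safetensors"),
):
    """List expected files that are absent from model_dir."""
    missing = [
        name for name in required
        if not os.path.isfile(os.path.join(model_dir, name))
    ]
    # Any one of the weight formats is enough.
    if not any(os.path.isfile(os.path.join(model_dir, w)) for w in weights):
        missing.append(" or ".join(weights))
    return missing


def load_local(model_dir):
    """Load tokenizer and model strictly from disk."""
    from transformers import AutoModel, AutoTokenizer

    problems = missing_files(model_dir)
    if problems:
        raise FileNotFoundError("missing in %s: %s" % (model_dir, problems))
    tokenizer = AutoTokenizer.from_pretrained(model_dir, local_files_only=True)
    model = AutoModel.from_pretrained(model_dir, local_files_only=True)
    return tokenizer, model.eval()
```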

Experimental Validation Protocol

To confirm successful model loading and assess basic performance, the following inference benchmark can be run.

Protocol: Single-Sequence Embedding Latency Test

- Objective: Measure inference time for a standard sequence across different ESM2 sizes.

- Materials: Python 3.8+, PyTorch 1.12+, one NVIDIA A100 GPU (40GB VRAM).

- Procedure:

a. Load the model using Protocol A.

b. Tokenize the reference sequence "MKLKVWLLLL".

c. Start a timer.

d. Perform a forward pass in eval() mode with torch.no_grad().

e. Stop the timer upon completion of the last_hidden_state computation. On a GPU, call torch.cuda.synchronize() before reading the timer, because CUDA kernels run asynchronously.

f. Repeat 100 times, excluding the first run, and calculate average latency.

- Expected Outcome: Latency scales approximately linearly with parameter count for models up to 3B parameters; the 15B model may require model parallelism.
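The timing procedure above can be sketched as a small harness. This is an illustrative helper (the name `benchmark` and its parameters are assumptions): it discards warm-up runs, times the remaining calls with `time.perf_counter`, and accepts an optional `sync` callable for GPU work.

```python
import statistics
import time


def benchmark(fn, n_runs=100, n_warmup=1, sync=None):
    """Return (mean, stdev) latency in milliseconds over n_runs calls.

    fn   : zero-argument callable that performs one forward pass.
    sync : optional callable that blocks until queued device work is
           done, e.g. torch.cuda.synchronize when running on a GPU.
    """
    for _ in range(n_warmup):  # warm-up runs are not timed
        fn()
    if sync is not None:
        sync()

    timings_ms = []
    for _ in range(n_runs):
        start = time.perf_counter()
        fn()
        if sync is not None:
            sync()  # wait for the GPU before stopping the timer
        timings_ms.append((time.perf_counter() - start) * 1000.0)
    return statistics.mean(timings_ms), statistics.stdev(timings_ms)
```

With a loaded model and tokenized inputs, usage would look like `benchmark(lambda: model(**inputs), sync=torch.cuda.synchronize)`, wrapped in `torch.no_grad()`.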

Table 2: Example Validation Metrics (Inference Benchmark)

| Model Variant | Avg. Latency (GPU, ms) | Memory Allocated (GB) |

|---|---|---|

| esm2_t6_8M | 12 ± 2 | 0.8 |

| esm2_t12_35M | 35 ± 3 | 1.5 |

| esm2_t30_150M | 110 ± 10 | 3.2 |

| esm2_t33_650M | 420 ± 25 | 7.1 |

| esm2_t36_3B | 1850 ± 150 | 18.5 |

| esm2_t48_15B | N/A* | >40 (Model Parallel) |

Note: Benchmarks are illustrative. *15B model requires advanced partitioning.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for ESM2-Based Experiments

| Item / Resource | Function & Application |

|---|---|

| Hugging Face transformers Library | Primary API for loading, tokenizing, and managing ESM2 models. |

| PyTorch (GPU-enabled) | Deep learning framework required for model execution and gradient computation. |

| ESM GitHub Repository | Source for native training/inference scripts and specialized utilities (e.g., contact prediction). |

| High-VRAM GPU (e.g., A100, H100) | Accelerates inference and fine-tuning, especially for models >650M parameters. |

| FASTA File Datasets | Standardized input format for protein sequence batches. |

| biopython Library | For parsing FASTA files and managing biological sequence data. |

| Weights & Biases (W&B) / MLflow | Experiment tracking for loss, metrics, and hyperparameters during fine-tuning. |

Workflow and Relationship Diagrams

Diagram 1: ESM2 Model Access and Loading Decision Workflow

Diagram 2: Simplified ESM2 Model Architecture Overview

This technical guide details the standard inference workflow for generating protein sequence embeddings, a foundational task in computational biology. This process is a critical component within the broader research thesis on Evolutionary Scale Modeling 2 (ESM2) architectures, which span from 8 million to 15 billion parameters. The embeddings produced are dense vector representations that capture semantic, structural, and functional information about protein sequences, enabling downstream tasks such as structure prediction, function annotation, and variant effect prediction. This workflow is essential for researchers and drug development professionals leveraging state-of-the-art protein language models.

The ESM2 model family provides a suite of options balancing computational cost and representational power. Selecting the appropriate model is the first critical step in the inference workflow.

Table 1: ESM2 Model Variants and Key Specifications

| Model Name | Parameters (Million/Billion) | Layers | Embedding Dimension | Context Size (Tokens) | Typical Use Case |

|---|---|---|---|---|---|

| ESM2_t6_8M | 8M | 6 | 320 | 1024 | Prototyping, high-throughput screening |

| ESM2_t12_35M | 35M | 12 | 480 | 1024 | Medium-scale functional annotation |

| ESM2_t30_150M | 150M | 30 | 640 | 1024 | Detailed sequence-structure analysis |

| ESM2_t33_650M | 650M | 33 | 1280 | 1024 | High-accuracy structure prediction |

| ESM2_t36_3B | 3B | 36 | 2560 | 1024 | Research-level variant effect prediction |

| ESM2_t48_15B | 15B | 48 | 5120 | 1024 | State-of-the-art foundational research |

Core Inference Workflow: A Step-by-Step Protocol

The following protocol describes the end-to-end process for generating per-residue and pooled sequence embeddings from a FASTA file.

Experimental Protocol: From FASTA to Embeddings

Objective: To generate a fixed-dimensional embedding vector for each residue (and for the entire sequence) from a raw amino acid sequence.

Materials & Pre-requisites:

- Input: Protein sequence(s) in FASTA format.

- Software: Python 3.8+, PyTorch 1.12+, Hugging Face transformers library, biopython.

- Hardware: GPU (e.g., NVIDIA A100, V100) recommended for models >150M parameters.

Methodology:

- Sequence Acquisition & Preprocessing:

  - Load the FASTA file using Bio.SeqIO.

  - Extract the raw amino acid sequence string. Remove any non-standard residues or ambiguities, or map them to a standard token (e.g., "X").

  - Truncate or chunk sequences exceeding the model's context window (1024 tokens for ESM2). Standard practice is to use the first 1022 residues (plus special tokens).

- Tokenization:

  - Utilize the EsmTokenizer from the Hugging Face library corresponding to the chosen model (e.g., facebook/esm2_t30_150M_UR50D).

  - The tokenizer adds special tokens <cls> (beginning) and <eos> (end) and converts the sequence into a numerical token ID tensor.

  - Critical Step: Generate an attention mask tensor (1 for real tokens, 0 for padding) if batching sequences of unequal length.

- Model Loading & Inference:

  - Load the pre-trained ESM2 model using AutoModel.from_pretrained(). Use AutoModelForMaskedLM.from_pretrained() instead when per-token logits are needed; that class exposes hidden states only when output_hidden_states=True is set.

  - Set the model to evaluation mode (model.eval()).

  - Pass the token IDs and attention mask to the model within a torch.no_grad() context to disable gradient calculation.

  - The model output includes the last hidden state, which has shape [batch_size, sequence_length, embedding_dimension].

- Embedding Extraction:

  - Per-residue Embeddings: Extract all hidden states for the sequence tokens (excluding special tokens). These are the standard residue-level representations.

  - Pooled Sequence Embedding: Extract the hidden state corresponding to the <cls> token (index 0). This vector can serve as a summary of the entire sequence; averaging the per-residue embeddings (mean pooling) is a common alternative.

- Post-processing & Storage:

  - Convert embeddings to NumPy arrays or save as PyTorch tensors.

  - Store using efficient formats (e.g., .pt, .npy, or HDF5) for downstream analysis.
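The workflow above can be sketched end to end. This is a minimal illustration, assuming `biopython`, `torch`, and `transformers` are installed; `clean_sequence` and `embed_fasta` are hypothetical helper names. Mapping every non-standard character to "X" and truncating to 1022 residues are the choices described in the preprocessing step, not the only valid ones.

```python
STANDARD_AA = set("ACDEFGHIKLMNPQRSTVWY")
MAX_RESIDUES = 1022  # leaves room for <cls> and <eos> in a 1024-token window


def clean_sequence(seq, max_len=MAX_RESIDUES):
    """Uppercase, drop whitespace, map non-standard characters to X, truncate."""
    seq = "".join(seq.split()).upper()
    cleaned = "".join(c if c in STANDARD_AA else "X" for c in seq)
    return cleaned[:max_len]


def embed_fasta(fasta_path, model_id="facebook/esm2_t6_8M_UR50D"):
    """Return {record id: per-residue, <cls>, and mean-pooled embeddings}."""
    # Heavy dependencies are imported lazily.
    import torch
    from Bio import SeqIO
    from transformers import AutoModel, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModel.from_pretrained(model_id).eval()

    results = {}
    for record in SeqIO.parse(fasta_path, "fasta"):
        seq = clean_sequence(str(record.seq))
        inputs = tokenizer(seq, return_tensors="pt")
        with torch.no_grad():
            hidden = model(**inputs).last_hidden_state[0]  # [len(seq) + 2, dim]
        per_residue = hidden[1:-1]  # drop <cls> and <eos>
        results[record.id] = {
            "per_residue": per_residue,
            "cls": hidden[0],
            "mean": per_residue.mean(dim=0),
        }
    return results
```

Processing one sequence at a time avoids padding entirely, which keeps the slicing simple; batched inference needs the attention mask to exclude padded positions.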

Title: ESM2 Inference Workflow Diagram

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Research Reagent Solutions for ESM2 Inference

| Item/Category | Function & Purpose | Example/Note |

|---|---|---|

| Computational Environment | Provides the software and hardware foundation for running large-scale models. | Google Colab Pro, AWS EC2 (p4d.24xlarge), NVIDIA DGX Station. |

| Model Repository | Source for pre-trained model weights and tokenizers. | Hugging Face Model Hub (facebook/esm2_*). |

| Sequence Curation Tools | For cleaning, validating, and preparing input FASTA files. | Bio.SeqIO, awk, custom Python scripts for filtering. |

| Deep Learning Framework | Core library for loading models and performing tensor operations. | PyTorch (>=1.12) with CUDA support. |

| Embedding Storage Format | Efficient file format for storing high-dimensional embedding vectors. | PyTorch .pt, NumPy .npy, HDF5 (.h5). |

| Downstream Analysis Suite | Tools for analyzing and visualizing the generated embeddings. | scikit-learn (PCA, t-SNE), SciPy, Matplotlib, Seaborn. |

| Performance Profiler | For identifying bottlenecks in the inference pipeline (crucial for large models). | PyTorch Profiler, nvtop, gpustat. |

Advanced Experimental Protocols & Data Presentation

Protocol: Embedding Extraction for Contact Prediction

Objective: To extract intermediate layer embeddings and generate a contact map predicting spatial proximity between residues.

Methodology:

- Follow the standard inference workflow through model loading and inference (sequence preprocessing, tokenization, model loading).

- Configure the model to output hidden states from all layers (output_hidden_states=True).

- Extract embeddings from a middle layer (e.g., a layer near the midpoint of the 30-layer ESM2_t30_150M; layer 30 is its final layer). Empirical research indicates middle layers often best capture structural contacts.

- Compute the cosine similarity or a learned projection from the outer product of the embedding matrix to predict a [L, L] contact map.

- Apply a masking function to ignore predictions for residues too close in sequence (e.g., |i-j| < 6).

Protocol: Batch Processing for High-Throughput Screening

Objective: To efficiently process thousands of sequences by optimizing GPU memory and throughput.

Methodology:

- Pre-tokenize all sequences and sort by length to minimize padding in each batch.

- Implement dynamic batching, grouping sequences of similar length.

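- Use mixed-precision inference (torch.cuda.amp.autocast()) to reduce activation memory and increase speed; loading the weights themselves in half precision is what roughly halves weight memory.

- If memory errors persist for models >3B parameters, reduce the batch size or shard the model across GPUs. Gradient checkpointing (at a ~20% computational overhead) helps only during fine-tuning, because inference under torch.no_grad() stores no activations for backpropagation.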
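Length-sorted dynamic batching can be sketched as follows. This is an illustrative helper (the name `length_sorted_batches` and the `max_tokens` budget are assumptions): it sorts sequences by length and closes a batch whenever the padded size, longest sequence times batch size, would exceed the token budget.

```python
from typing import List, Sequence


def length_sorted_batches(
    sequences: Sequence[str], max_tokens: int = 4096
) -> List[List[int]]:
    """Group sequence indices so each padded batch fits within max_tokens."""
    order = sorted(range(len(sequences)), key=lambda i: len(sequences[i]))
    batches: List[List[int]] = []
    current: List[int] = []
    for idx in order:
        longest = len(sequences[idx]) + 2  # +2 for <cls> and <eos>
        # Sorted ascending, so the newest sequence sets the padded length.
        if current and longest * (len(current) + 1) > max_tokens:
            batches.append(current)
            current = []
        current.append(idx)
    if current:
        batches.append(current)
    return batches
```

The function returns indices rather than sequences so that embeddings can be written back in the original input order after inference.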

Table 3: Performance Metrics for ESM2 Inference (Representative Data)

| Model | GPU Memory (FP32) | Avg. Inference Time (per 500 seqs) | Embedding Dim. | Recommended Batch Size (Seq Len=256) |

|---|---|---|---|---|

| ESM2_t12_35M | ~1.5 GB | 45 sec | 480 | 64 |

| ESM2_t30_150M | ~4 GB | 3 min | 640 | 32 |

| ESM2_t33_650M | ~12 GB | 8 min | 1280 | 16 |

| ESM2_t36_3B | ~24 GB | 22 min | 2560 | 8 |

| ESM2_t48_15B | >48 GB (Model Parallel) | ~2 hours | 5120 | 1-2 |

Title: High-Throughput Batch Processing Pipeline

This guide outlines the standardized, production-ready workflow for generating protein sequence embeddings using the ESM2 model family. The choice of model size, detailed in the overarching thesis, directly impacts the computational requirements and the richness of the biological information captured in the embeddings. By following the provided experimental protocols and leveraging the outlined toolkit, researchers can reliably transform raw FASTA sequences into powerful numerical representations, enabling a new generation of data-driven discoveries in protein science and therapeutic development.

This guide details the extraction of three critical feature types from protein language models, specifically the ESM2 family, for downstream applications in structural biology and therapeutic design. This work is situated within a broader research thesis analyzing the capabilities and scaling laws of ESM2 model sizes (ranging from 8M to 15B parameters). The choice of feature and extraction methodology is paramount for tasks such as protein structure prediction, function annotation, and engineering.

The ESM2 models are transformer-based protein language models trained on millions of diverse protein sequences. Performance scales predictably with parameter count, impacting the quality of extracted features.

Table 1: ESM2 Model Variants and Key Specifications

| Model Name | Parameters (M) | Layers | Embedding Dim | Attention Heads | Context (Tokens) | Recommended Use Case |

|---|---|---|---|---|---|---|

| ESM2-8M | 8 | 6 | 320 | 20 | 1024 | Fast prototyping, low-resource inference |

| ESM2-35M | 35 | 12 | 480 | 20 | 1024 | Balance of speed and accuracy |

| ESM2-150M | 150 | 30 | 640 | 20 | 1024 | General-purpose feature extraction |

| ESM2-650M | 650 | 33 | 1280 | 20 | 1024 | High-accuracy contact & logits |

| ESM2-3B | 3000 | 36 | 2560 | 40 | 1024 | State-of-the-art representations |

| ESM2-15B | 15000 | 48 | 5120 | 40 | 1024 | Cutting-edge research, highest fidelity |

Feature Extraction Methodologies

Contact Map Prediction

Contact maps represent the spatial proximity between residues (Cβ atoms, typically within 8Å), crucial for folding and structure prediction.

Experimental Protocol:

- Input Preparation: Tokenize the protein sequence using the ESM2 vocabulary. Add a beginning-of-sequence (<cls>) and end-of-sequence (<eos>) token.

- Model Forward Pass: Pass token IDs through the selected ESM2 model to obtain per-residue embeddings from the final layer (L) for all residues i and j.

- Attention-Based Extraction: For each layer l, extract the attention matrices A^l from all attention heads. A common approach is to compute the average attention map across heads.

- Logistic Transformation: Compute the contact score as C_{ij} = σ(MLP(h_i^L || h_j^L)) or use a logistic regression on symmetrized attention features (A_{ij}^l + A_{ji}^l)/2 from middle layers (e.g., layers 12-32 in ESM2-650M).

- Post-processing: Apply a minimum sequence separation filter (e.g., |i-j| > 6) to remove trivial contacts.
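The attention-feature route can be sketched on a single head-averaged attention matrix. Average product correction (APC) is added here as an assumption: it is a standard background-removal step in coevolution-based contact prediction and is not named in the protocol above. The `weight` and `bias` of the logistic map are hypothetical placeholders for coefficients that would be fit by logistic regression on known structures.

```python
import math
from typing import List

Matrix = List[List[float]]


def symmetrize(a: Matrix) -> Matrix:
    """(A_ij + A_ji) / 2 for every pair."""
    n = len(a)
    return [[(a[i][j] + a[j][i]) / 2 for j in range(n)] for i in range(n)]


def apc(a: Matrix) -> Matrix:
    """Average product correction: A_ij - row_sum_i * col_sum_j / total."""
    n = len(a)
    row = [sum(r) for r in a]
    col = [sum(a[i][j] for i in range(n)) for j in range(n)]
    total = sum(row)
    if total == 0:
        return [r[:] for r in a]  # nothing to correct
    return [
        [a[i][j] - row[i] * col[j] / total for j in range(n)]
        for i in range(n)
    ]


def contact_probability(feature: float, weight: float = 1.0, bias: float = 0.0) -> float:
    """Logistic map from one corrected attention feature to a probability."""
    return 1.0 / (1.0 + math.exp(-(weight * feature + bias)))
```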

Diagram 1: Contact map extraction workflow from ESM2.

Per-Residue Logits

Logits are the unnormalized output scores for each token in the vocabulary at every sequence position, useful for variant effect prediction and sequence design.

Experimental Protocol:

- Masked Inference: For each residue position i in the sequence of length L, replace its token with a mask token (<mask>).

- Forward Pass: Run the masked sequence through ESM2. The model outputs logits z_i at the masked position i, one per entry of the 33-token vocabulary (amino acids and special tokens); a softmax over z_i gives the predicted probability distribution.

- Logit Extraction: Collect the logits z_i for the true or candidate amino acids. The logit for the true wild-type residue is often used as an evolutionary fitness score.

- Aggregation: Repeat for all L positions to generate an L x V matrix (V: vocabulary size).
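The masked-inference loop can be sketched as below, assuming `torch` and `transformers` are installed and that the tokenizer places `<cls>` first and `<eos>` last. `masked_copies` and `per_residue_logits` are hypothetical helper names; one forward pass per position is the simple version, not the fastest.

```python
def masked_copies(token_ids, mask_id):
    """Yield (position, ids with that position masked) for each residue.

    The first and last tokens are assumed to be <cls> and <eos> and are
    never masked.
    """
    for pos in range(1, len(token_ids) - 1):
        masked = list(token_ids)
        masked[pos] = mask_id
        yield pos, masked


def per_residue_logits(sequence, model_id="facebook/esm2_t6_8M_UR50D"):
    """Return an [L, V] tensor of logits, one masked pass per position."""
    import torch
    from transformers import AutoModelForMaskedLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForMaskedLM.from_pretrained(model_id).eval()

    ids = tokenizer(sequence)["input_ids"]
    rows = []
    for pos, masked in masked_copies(ids, tokenizer.mask_token_id):
        with torch.no_grad():
            logits = model(input_ids=torch.tensor([masked])).logits  # [1, T, V]
        rows.append(logits[0, pos])
    return torch.stack(rows)
```

Stacking several masked copies into one batch would reduce the number of forward passes at the cost of more memory.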

Diagram 2: Extracting per-residue logits via masked inference.

Pooled Representations

Pooled representations are single, fixed-dimensional vectors summarizing the entire protein, used for classification, homology detection, or embedding.

Experimental Protocol:

- Standard Pooling: Pass the tokenized sequence through ESM2. Use the embedding corresponding to the <cls> token from the final layer as the global representation.

- Alternative Pooling Methods:

  - Mean Pooling: Average the per-residue embeddings (excluding <cls> and <eos>) from a specified layer (often the final layer).

  - Attention Pooling: Use a learned weighted average of per-residue embeddings.

- Layer Selection: Empirical results indicate the final layer is best for global semantics, while middle layers (e.g., layer 21 in ESM2-650M) may capture structural information.
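The two simplest pooling strategies can be sketched on plain nested lists. This is a minimal illustration that assumes right-padded input, with `<cls>` at index 0 and `<eos>` as the last real token; the function names are hypothetical.

```python
from typing import List, Sequence


def cls_pool(hidden: Sequence[Sequence[float]]) -> List[float]:
    """Embedding of the <cls> token (index 0)."""
    return list(hidden[0])


def mean_pool(
    hidden: Sequence[Sequence[float]], attention_mask: Sequence[int]
) -> List[float]:
    """Average residue embeddings, skipping <cls>, <eos>, and padding.

    hidden         : [T][D] per-token embeddings for one sequence.
    attention_mask : [T] with 1 for real tokens and 0 for right-padding.
    """
    n_real = sum(attention_mask)
    residues = hidden[1:n_real - 1]  # drop <cls> and <eos>
    if not residues:
        raise ValueError("sequence has no residue tokens")
    dim = len(residues[0])
    return [sum(vec[d] for vec in residues) / len(residues) for d in range(dim)]
```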

Diagram 3: Pathways for generating pooled representations.

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Feature Extraction

| Item | Function & Description | Example/Note |

|---|---|---|

| ESM2 Model Weights | Pre-trained parameters for inference. Available in 6 sizes. | Download from Hugging Face or FAIR Model Zoo. |

| ESM2 Vocabulary File | Mapping of amino acids and special tokens to model indices. | Standard 33-token vocabulary (<cls>, <pad>, <eos>, <unk>, <mask>, 20 standard amino acids, plus rare/ambiguous residue, gap, and placeholder tokens). |

| Tokenization Script | Converts protein sequence string into model-ready token IDs. | esm.pretrained.load_model_and_alphabet() provides tokenizer. |

| Inference Framework | Software to run model forward passes efficiently. | PyTorch, Hugging Face Transformers, fairseq. |

| Contact Prediction Head | Optional module to convert embeddings/attention to contact scores. | Linear layer or logistic regression model. |

| Masked Inference Loop | Script to iteratively mask each position for logit extraction. | Critical for variant effect prediction (e.g., ESM-1v protocol). |

| Pooling Layer | Module to aggregate sequence embeddings into a single vector. | Can be simple (mean) or learned (attention-based). |

| Embedding Storage Format | Efficient format for storing thousands of extracted features. | HDF5 (.h5), NumPy arrays (.npy), or PyTorch tensors (.pt). |

| Computation Hardware | Accelerators for running large models (3B, 15B). | GPU (NVIDIA A100/H100) with >40GB VRAM for ESM2-15B. |

Quantitative Comparison of Extracted Features

Table 3: Performance Metrics by Model Size on Key Downstream Tasks

| Model | Contact Prediction (Top-L Precision) | Variant Effect (Spearman's ρ) | Remote Homology (Accuracy) | Inference Speed (Seq/s)* |

|---|---|---|---|---|

| ESM2-8M | 0.25 | 0.30 | 0.15 | 1200 |

| ESM2-35M | 0.41 | 0.42 | 0.28 | 450 |

| ESM2-150M | 0.58 | 0.55 | 0.42 | 180 |

| ESM2-650M | 0.75 | 0.68 | 0.61 | 45 |

| ESM2-3B | 0.82 | 0.72 | 0.78 | 8 |

| ESM2-15B | 0.87 | 0.75 | 0.85 | 1 |

Note: Inference speed measured on a single NVIDIA A100 GPU for a 300-residue protein, batch size 1.

The selection of ESM2 model size and corresponding feature extraction protocol is a trade-off between computational cost and predictive power. Contact maps from larger models (>650M) rival coevolution-based methods, per-residue logits enable zero-shot variant scoring, and pooled representations from the final layer provide powerful embeddings for proteomic tasks. This guide provides the reproducible protocols necessary to leverage these features within a scalable research framework.

The Evolutionary Scale Modeling (ESM) project represents a paradigm shift in protein science, with ESM2 being its flagship masked language model. A core thesis of this research is that scaling model parameters and training data fundamentally enhances the model's capacity to capture the intricate relationships between protein sequence, structure, and function. ESM2 models range from 8 million to 15 billion parameters, with performance on tasks like structure prediction scaling predictably with size.

Inverse folding, the task of designing a protein sequence that folds into a given backbone structure, is a critical test of a model's structural understanding. ESM-IF1 is a specialized model trained explicitly for this task, distinct from but intellectually descended from the ESM2 lineage. Its performance validates the broader thesis: that large-scale learned representations from sequence data contain rich, generalizable information about protein physics, which can be specialized to solve complex generative problems in structural biology. This guide details the technical implementation and application of ESM-IF1.

Core Architecture and Methodology of ESM-IF1

ESM-IF1 is built around a graph neural network (GNN) encoder: in the published architecture, geometric vector perceptron (GVP) graph layers process the backbone before a transformer encoder-decoder generates the sequence. It treats the protein backbone as a graph where nodes are amino acid residues and edges represent spatial proximities. The model does not use the primary sequence as input; instead, it operates on a 3D structure represented as a set of residue types (placeholder/masked), backbone dihedral angles, and inter-residue distances and orientations.

Key Experimental Protocol for Sequence Design with ESM-IF1:

Input Preparation (Structure Graph Construction):

- Input: A protein backbone structure in PDB format.

- Processing: Extract backbone coordinates (N, Cα, C) for each residue.

- Node Features: Compute dihedral angles (φ, ψ, ω) and local frame orientations for each residue. Initial residue identities are masked.

- Edge Features: For all residue pairs within a cutoff distance (e.g., 20Å), compute the distance and the orientation of one residue's local frame relative to the other's.

- Output: A graph G = (V, E), where V is the set of residues with node features, and E is the set of edges with geometric features.

Model Inference (Sequence Decoding):

- The prepared graph is fed into the ESM-IF1 GNN.

- The model iteratively refines representations of each node by aggregating information from its neighbors via message-passing layers.

- The final node representations are passed through a classifier head that predicts a probability distribution over the 20 standard amino acids for each residue position.

- Sequences can be generated via greedy decoding (selecting the highest-probability amino acid at each position) or by sampling from the predicted distributions to explore diversity.

Output and Validation:

- Output: One or more predicted protein sequences for the input backbone.

- Validation: The designed sequences should be evaluated computationally (e.g., for stability via folding with AlphaFold2 or Rosetta, for functional site preservation) and experimentally via expression and biophysical characterization.
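The two decoding strategies described above can be sketched on per-position probability distributions. This illustrates the decoding logic only and is not the ESM-IF1 API: it assumes the model output is already available as one 20-entry probability vector per residue, ordered as in the hypothetical `AA` string, and the `temperature` parameter is an added assumption for controlling diversity.

```python
import random
from typing import List, Optional, Sequence

AA = "ACDEFGHIKLMNPQRSTVWY"  # assumed ordering of the 20 output classes


def greedy_decode(probs: Sequence[Sequence[float]]) -> str:
    """Pick the highest-probability amino acid at every position."""
    return "".join(AA[max(range(len(p)), key=p.__getitem__)] for p in probs)


def sample_decode(
    probs: Sequence[Sequence[float]],
    temperature: float = 1.0,
    rng: Optional[random.Random] = None,
) -> str:
    """Sample one amino acid per position.

    Raising probabilities to 1/temperature is equivalent to dividing the
    logits by temperature: values below 1 sharpen the distribution,
    values above 1 flatten it.
    """
    rng = rng or random.Random()
    residues: List[str] = []
    for p in probs:
        weights = [x ** (1.0 / temperature) for x in p]
        residues.append(rng.choices(AA, weights=weights, k=1)[0])
    return "".join(residues)
```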

Quantitative Performance Data

The performance of inverse folding models is typically evaluated by recovery rate—the percentage of native wild-type amino acids correctly predicted when the model is tasked with recovering the sequence for a given native structure.
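Recovery rate as defined above reduces to a position-wise comparison. A minimal sketch, assuming the designed and native sequences are aligned one-to-one (same length, no gaps); the function name is hypothetical.

```python
def sequence_recovery(designed: str, native: str) -> float:
    """Percentage of positions where the designed residue equals the native one."""
    if len(designed) != len(native):
        raise ValueError("sequences must have the same length")
    if not native:
        raise ValueError("sequences must be non-empty")
    matches = sum(d == n for d, n in zip(designed, native))
    return 100.0 * matches / len(native)
```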

Table 1: Comparative Performance of Inverse Folding Models

| Model | Architecture | Avg. Sequence Recovery (%) (CATH 4.2) | Notes |

|---|---|---|---|

| ESM-IF1 | Graph Neural Network | ~58.4 | Generalizes well to novel folds, high stability in designs. |

| ProteinMPNN | GNN (Message Passing) | ~60.0 | Contemporary state-of-the-art, high throughput. |

| Rosetta (SeqDesign) | Physics-based/Statistical | ~35-45 | Relies on energy functions and rotamer libraries. |

| AlphaFold2 (via MSA) | Transformer (Indirect) | N/A | Not a direct inverse folder, but can fill missing residues. |

Table 2: ESM-IF1 Performance Across Structural Contexts

| Structural Context / Metric | Value | Implication |

|---|---|---|

| Buried Core Residues | Recovery: ~65% | High accuracy in packed, hydrophobic environments. |

| Solvent-Exposed Residues | Recovery: ~52% | More variability and functional roles lower recovery. |

| Active Site Residues | Requires Fine-Tuning | Native functional residues often not top prediction. |

| Novel Scaffolds (De Novo) | Success Rate: >80%* | *Rate of producing stable, folded proteins in validation. |

| Computational Speed | ~50 residues/sec (GPU) | Suitable for high-throughput design of single chains. |

Workflow and Logic Diagrams

Diagram 1: ESM-IF1 Inverse Folding Workflow

Diagram 2: ESM-IF1 Message Passing Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Inverse Folding and Validation

| Item / Reagent | Function / Role in Workflow | Key Considerations |

|---|---|---|

| ESM-IF1 Model Weights | Pre-trained parameters for the inverse folding GNN. Available via GitHub. | Requires PyTorch. Choose version compatible with your framework. |

| PyTorch & PyTorch Geometric | Deep learning framework and library for GNN implementation. | Essential for running and potentially fine-tuning the model. |

| Rosetta Suite | Macromolecular modeling software for energy scoring, sequence design (comparison), and structural relaxation. | Provides physics-based validation of designed sequences. |

| AlphaFold2 or ColabFold | Protein structure prediction tools. Critical for in silico validation (folding the designed sequence). | Checks if the designed sequence indeed folds into the target backbone. |

| PDB File of Target Backbone | The input 3D structure for design. Can be natural, de novo, or a modified scaffold. | Must be a clean, all-atom model. May require pre-processing (removing ligands, fixing residues). |

| GPUs (NVIDIA) | Hardware for accelerating model inference and structure prediction. | Inference for single chains is feasible on consumer GPUs (e.g., RTX 3090/4090). |

| Cloning & Expression Kits (e.g., NEB) | For experimental validation: cloning designed genes into plasmids and expressing in E. coli or other systems. | Codon optimization for the expression host is recommended. |

| Size Exclusion Chromatography & CD Spectroscopy | Biophysical tools to assess protein stability, folding, and monomeric state post-expression. | Validates that the designed protein is soluble and folded as intended. |

This guide details a critical application of the Evolutionary Scale Modeling 2 (ESM2) architecture within the broader research thesis on ESM2 model sizes and parameters. The thesis posits that scaling model parameters from 8M to 15B, coupled with training on large-scale UniRef sequence data, enables emergent capabilities in zero-shot biological function prediction. Specifically, this document examines how the ESM2 model family, from its smallest to largest incarnations, can predict protein function and score the effects of amino acid variants without task-specific training, a capability that scales with parameter count.

Core Technical Principles

ESM2 leverages a transformer-only architecture with attention mechanisms over sequence tokens. For function prediction, the model utilizes the learned contextual embeddings of the <cls> token or mean-pooled residue representations. Variant effect scoring (VES) is performed in a zero-shot manner by comparing the log-likelihood of a wild-type sequence to its mutated version under the model's native training objective, which is masked language modeling (MLM). The underlying hypothesis is that evolutionarily fit sequences have higher model likelihoods, and deleterious mutations decrease this likelihood.

The performance of different ESM2 scales on benchmark tasks is summarized below.

Table 1: ESM2 Model Performance on Zero-Shot Function Prediction (Fluorescence & Stability)

| Model (Parameters) | Fluorescence Spearman (ρ) | Stability Spearman (ρ) | Substitutions Scored (Millions) | Embedding Dimension |

|---|---|---|---|---|

| ESM2 8M | 0.21 | 0.35 | 0.5 | 320 |

| ESM2 35M | 0.38 | 0.48 | 2.1 | 480 |

| ESM2 150M | 0.57 | 0.62 | 8.7 | 640 |

| ESM2 650M | 0.68 | 0.71 | 25.3 | 1280 |

| ESM2 3B | 0.73 | 0.75 | 76.2 | 2560 |

| ESM2 15B | 0.78 | 0.79 | 388.5 | 5120 |

Table 2: Zero-Shot Variant Effect Scoring on Clinical Variant Benchmarks

| Model (Parameters) | ClinVar (AUC-ROC) | DeepMind Proteins (AUC-ROC) | HGMD (AUC-PR) | Inference Speed (Var/sec)* |

|---|---|---|---|---|

| ESM2 8M | 0.67 | 0.71 | 0.12 | 12,500 |

| ESM2 35M | 0.72 | 0.75 | 0.18 | 5,800 |

| ESM2 150M | 0.79 | 0.81 | 0.26 | 2,100 |

| ESM2 650M | 0.83 | 0.85 | 0.33 | 650 |

| ESM2 3B | 0.86 | 0.87 | 0.39 | 150 |

| ESM2 15B | 0.89 | 0.89 | 0.45 | 28 |

*On a single NVIDIA A100 GPU.

Detailed Experimental Protocols

Protocol 4.1: Zero-Shot Function Prediction for Directed Evolution

Objective: Predict the functional fitness (e.g., fluorescence, stability) of a protein variant from its sequence alone.

- Input Preparation: Generate the FASTA sequence for the variant of interest.

- Embedding Extraction: Pass the sequence through the pretrained ESM2 model (no fine-tuning). Extract the per-residue embeddings from the final transformer layer.

- Representation Aggregation: Compute a single vector representation by averaging all per-residue embeddings (mean pooling).

- Fitness Readout: Pass the aggregated embedding through a simple, shallow downstream predictor. Critically, this predictor is trained only on a small labeled set of experimentally measured variants (e.g., <1000 datapoints) from the target protein family, while the ESM2 model itself is never fine-tuned. Strictly speaking this is few-shot transfer rather than zero-shot prediction; it demonstrates how much task-relevant information the frozen ESM2 embeddings already carry.

- Validation: Compare predicted fitness scores against held-out experimental measurements using Spearman's rank correlation coefficient.

Protocol 4.2: Zero-Shot Variant Effect Scoring (VES)

Objective: Assign a pathogenicity likelihood score to a single amino acid variant.

- Sequence Tokenization: Tokenize the wild-type protein sequence using the ESM2 vocabulary.

- Wild-type Log-Likelihood Calculation:

  - For the position `i` of the variant, mask the token.

  - Run the forward pass of ESM2 to obtain the logits for the masked position.

  - Extract the log-likelihood `L_wt` for the true wild-type amino acid.

- Variant Log-Likelihood Calculation:

  - Repeat step 2, but extract the log-likelihood `L_mut` for the mutant amino acid token at the same masked position.

- Score Computation: Calculate the variant effect score as the log-odds ratio: `Score = L_wt - L_mut`. A higher positive score suggests the variant is more evolutionarily disfavored, correlating with pathogenicity.

- Benchmarking: Evaluate scores against curated databases like ClinVar (benign vs. pathogenic variants) using Area Under the Receiver Operating Characteristic Curve (AUC-ROC).
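The scoring and benchmarking steps of Protocol 4.2 reduce to a few lines once the logits at the masked position are available. The sketch below assumes those logits have already been obtained from a forward pass and are passed in as a plain list; the function names are illustrative. The AUC is computed by direct pair counting, which is equivalent to the usual definition but only practical for small benchmark sets.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax over one position's vocabulary logits."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return [v - log_z for v in logits]


def variant_effect_score(masked_logits, wt_idx, mut_idx):
    """Score = L_wt - L_mut at the masked position.

    Higher positive values mean the mutant residue is less likely than
    the wild-type residue under the model.
    """
    log_probs = log_softmax(masked_logits)
    return log_probs[wt_idx] - log_probs[mut_idx]


def auc_roc(pathogenic_scores, benign_scores):
    """AUC-ROC as the fraction of (pathogenic, benign) pairs ranked correctly.

    Ties count as half a correct pair.
    """
    wins = 0.0
    for p in pathogenic_scores:
        for b in benign_scores:
            if p > b:
                wins += 1.0
            elif p == b:
                wins += 0.5
    return wins / (len(pathogenic_scores) * len(benign_scores))
```

Since both log-likelihoods share the same softmax normalizer, the score equals the difference of the raw logits for the two residues. When using the `fair-esm` package, the token index of a residue at sequence position `i` is offset by one because a `<cls>` token is prepended.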


The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for ESM2-Based Function and Variant Analysis

| Item | Function/Benefit | Example/Format |
|---|---|---|
| Pretrained ESM2 Weights | Foundational model parameters enabling zero-shot inference without training from scratch. Available in sizes from 8M to 15B parameters. | Hugging Face transformers library, FAIR Model Zoo. |
| ESM Embedding Extractor | Optimized code library to efficiently generate sequence embeddings from ESM2 models for large datasets. | esm Python package (pip install fair-esm). |
| Variant Call Format (VCF) Parser | Reads standard genomic variant files as the first step toward deriving protein-level substitutions for scoring. | cyvcf2, pysam libraries. |
| Protein Fitness Benchmark Datasets | Curated experimental data for model validation and shallow predictor training. | ProteinGym (DMS assays), ClinVar (pathogenic/benign labels). |
| High-Performance Compute (HPC) Cluster | Necessary for running inference with large models (ESM2-3B, 15B) on genome-scale variant sets. | NVIDIA A100/GPU nodes with >40GB VRAM. |
| Embedding Visualization Suite | Tools to project high-dimensional embeddings for interpretability (e.g., t-SNE, UMAP). | umap-learn, scikit-learn libraries. |

This technical guide explores the application of protein language model embeddings, specifically from the ESM2 architecture, for the computational identification and characterization of novel drug targets. The content is framed within a broader research thesis investigating the relationship between ESM2 model scale (size, parameters) and performance on biological tasks critical to early-stage drug discovery.

Protein language models (pLMs), like ESM2, are transformer-based neural networks trained on millions of protein sequences. They learn fundamental principles of protein evolution, structure, and function. By processing a protein's amino acid sequence, ESM2 generates a high-dimensional numerical representation known as an embedding. These embeddings encapsulate semantic and structural information, enabling downstream predictive tasks without explicit structural data.

The ESM2 model family varies in size, from 8 million to 15 billion parameters. A core thesis question is how embedding quality and utility for drug target discovery scale with model size. Larger models may capture more nuanced biophysical and functional patterns, potentially leading to more accurate predictions of druggability, function, and interaction interfaces.

Core Methodologies for Target Identification & Characterization

Below are detailed protocols for key experiments leveraging ESM2 embeddings.

Protocol 2.1: Generating Per-Residue and Per-Protein Embeddings

Objective: To extract fixed-dimensional feature vectors for entire proteins or specific residues using ESM2.

Materials: Protein sequence(s) in FASTA format, access to ESM2 model (via HuggingFace transformers, fair-esm, or local installation).

Procedure:

- Model Selection & Loading: Choose an ESM2 model size (e.g., `esm2_t6_8M_UR50D`, `esm2_t33_650M_UR50D`, `esm2_t48_15B_UR50D`). Load the model and its associated tokenizer.

- Sequence Preparation: Tokenize the input amino acid sequence(s). Add the required special tokens (e.g., `<cls>`, `<eos>`) as per the model's specification.

- Embedding Inference:

  - Per-Residue: Pass tokenized sequences through the model. Extract the hidden states from the final layer (or a chosen layer). These states correspond to embeddings for each token/amino acid. Discard embeddings for special tokens.

  - Per-Protein (Pooling): Use the representation corresponding to the `<cls>` token as the global protein embedding. Alternatively, compute a mean or attention-weighted pool of the per-residue embeddings.

- Output: A matrix of shape (N_residues, embedding_dimension) for per-residue, or a vector of (embedding_dimension) for per-protein.
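The procedure above can be sketched with the `fair-esm` package. The loader below uses the package's published entry points for the 650M model; the heavy imports are kept inside the function so that the pooling helper can be used and tested without the package or weights installed. `split_embeddings` is a hypothetical helper name, and its layout assumption (`<cls>` at index 0, residues at 1..N, `<eos>` after) should be checked against the tokenizer in use.

```python
def embed_with_fair_esm(sequence):
    """Return final-layer hidden states (one vector per token) from ESM2 650M.

    Downloads the weights on first use. Imports are local so the helper
    below works without torch or fair-esm installed.
    """
    import torch
    import esm

    model, alphabet = esm.pretrained.esm2_t33_650M_UR50D()
    model.eval()  # disable dropout for deterministic embeddings
    batch_converter = alphabet.get_batch_converter()
    _, _, tokens = batch_converter([("query", sequence)])
    with torch.no_grad():
        out = model(tokens, repr_layers=[33], return_contacts=False)
    # Shape (1, N + 2, 1280): drop the batch axis and convert to lists.
    return out["representations"][33][0].tolist()


def split_embeddings(token_states, seq_len):
    """Split token-level states into <cls>, per-residue, and mean-pooled vectors.

    Assumes index 0 is <cls> and residues occupy indices 1..seq_len;
    anything after that (<eos>, padding) is discarded.
    """
    cls_vec = token_states[0]
    per_residue = token_states[1:seq_len + 1]
    dim = len(cls_vec)
    mean_vec = [sum(v[j] for v in per_residue) / seq_len for j in range(dim)]
    return cls_vec, per_residue, mean_vec
```

A typical call would be `split_embeddings(embed_with_fair_esm(seq), len(seq))`. For other model sizes, the loader name and the layer index passed to `repr_layers` change together (for example, 6 layers for the 8M model).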

Protocol 2.2: Functional Annotation via Embedding Similarity Search

Objective: To infer the function of a protein of unknown function (a potential novel target) by comparing its embedding to a database of embeddings from proteins with known functions.

Materials: Query protein embedding, pre-computed database of protein embeddings (e.g., from UniProt), similarity metric (cosine similarity, Euclidean distance).

Procedure:

- Database Construction: Generate per-protein embeddings for a large, annotated reference dataset (e.g., Swiss-Prot).

- Similarity Computation: For the query embedding, compute the chosen similarity metric against every embedding in the reference database.

- Ranking & Inference: Rank reference proteins by similarity score. The top-k hits, especially if they share high similarity and have consistent functional annotations, provide strong functional hypotheses for the query protein.

- Validation: Cross-reference inferred function with domain/motif databases (e.g., Pfam) or gene ontology (GO) term enrichment.
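The similarity computation and ranking steps can be sketched as a brute-force cosine search. This is a minimal illustration, assuming the reference database fits in memory as a dictionary; `top_k_hits` and the database layout are hypothetical, and a database the size of Swiss-Prot would normally call for vectorized or approximate nearest-neighbor search.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k_hits(query, database, k=3):
    """Rank reference proteins by similarity to the query embedding.

    database maps protein id -> (embedding, annotation).
    Returns up to k tuples of (similarity, protein id, annotation).
    """
    scored = [
        (cosine_similarity(query, emb), pid, annotation)
        for pid, (emb, annotation) in database.items()
    ]
    scored.sort(key=lambda t: t[0], reverse=True)
    return scored[:k]


if __name__ == "__main__":
    # Toy 2-dimensional embeddings; real ESM2 vectors have 320 to 5120 dimensions.
    db = {
        "P1": ([1.0, 0.0], "kinase"),
        "P2": ([0.0, 1.0], "protease"),
        "P3": ([0.9, 0.1], "kinase"),
    }
    for sim, pid, annotation in top_k_hits([1.0, 0.0], db, k=2):
        print(pid, annotation, round(sim, 3))
```

Agreement among the annotations of the top hits, as in the toy example where both hits are kinases, is what turns a similarity ranking into a functional hypothesis.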

Protocol 2.3: Prediction of Binding Sites and Druggable Pockets

Objective: To identify specific amino acid residues likely to form functional or ligand-binding sites directly from sequence.

Materials: Per-residue embeddings from ESM2, labeled dataset of binding site residues (e.g., from PDB or sc-PDB), a shallow classifier (e.g., logistic regression, random forest).

Procedure:

- Dataset Curation: Assemble a set of proteins with known binding site annotations. Generate per-residue embeddings for each.

- Label Assignment: For each residue, assign a binary label (1 for binding site residue, 0 otherwise).

- Model Training: Train a supervised classifier using per-residue embeddings as input features and the binary labels as targets. Use a hold-out or cross-validation scheme.

- Inference: Apply the trained classifier to per-residue embeddings of a novel protein. The classifier outputs a probability score per residue; residues with scores above a calibrated threshold are predicted as part of a binding site. Spatially clustered predicted residues define a putative pocket.
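The thresholding part of the inference step can be sketched as follows. Note the swapped-in simplification: without 3D coordinates, the example groups predicted residues that lie close together in sequence, which is only a proxy for the spatial clustering described above. The function name, the default threshold, and the `max_gap` parameter are all illustrative assumptions.

```python
def predicted_site_segments(probabilities, threshold=0.5, max_gap=2):
    """Group above-threshold residues into segments (1-based, inclusive).

    Residues separated by at most max_gap sub-threshold positions are
    merged into one segment. This is a sequence-level proxy; true
    spatial clustering needs 3D coordinates.
    """
    hits = [i + 1 for i, p in enumerate(probabilities) if p >= threshold]
    segments = []
    for pos in hits:
        if segments and pos - segments[-1][1] <= max_gap + 1:
            segments[-1][1] = pos  # extend the current segment
        else:
            segments.append([pos, pos])  # start a new segment
    return [tuple(seg) for seg in segments]
```

When a structure or predicted structure is available, the same above-threshold residue list can instead be clustered on inter-residue distances.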

Protocol 2.4: Protein-Protein Interaction (PPI) Interface Prediction

Objective: To predict whether two proteins interact and identify the interface residues using embeddings from their individual sequences.

Materials: Embeddings for two query proteins, dataset of known interacting/non-interacting protein pairs (e.g., from STRING or DIP), neural network architecture for paired inputs (e.g., Siamese network, concatenation-based classifier).

Procedure:

- Pair Representation: For a protein pair (A, B), extract their per-protein embeddings (e.g., `<cls>` tokens). Create a combined representation by vector concatenation, element-wise multiplication, or using a cross-attention mechanism.

- Interaction Prediction: Train a classifier on the combined representations, labeled for interaction (1) or non-interaction (0).

- Interface Residue Prediction (Optional): For interacting pairs, train a separate model on concatenated per-residue embeddings from both proteins to label interface residues, often framed as a binary classification task for each residue in the complex.
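The pair representation step involves one design choice worth making explicit: plain concatenation depends on the order of A and B, whereas element-wise combinations do not. The sketch below contrasts the two. The symmetric variant adds absolute difference and sum features alongside the element-wise product; those two extra features are an assumption made here for illustration, not something the protocol prescribes.

```python
def concat_features(emb_a, emb_b):
    """Plain concatenation; swapping A and B changes the vector."""
    return list(emb_a) + list(emb_b)


def symmetric_pair_features(emb_a, emb_b):
    """Order-invariant pair representation: [a*b, |a-b|, a+b] concatenated."""
    product = [x * y for x, y in zip(emb_a, emb_b)]
    abs_diff = [abs(x - y) for x, y in zip(emb_a, emb_b)]
    total = [x + y for x, y in zip(emb_a, emb_b)]
    return product + abs_diff + total


if __name__ == "__main__":
    a, b = [1.0, 2.0], [3.0, 4.0]
    print(concat_features(a, b))
    print(symmetric_pair_features(a, b))
```

With concatenation, a common workaround is to present each training pair in both orders so the classifier does not learn an artificial direction for the interaction.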

Quantitative Data & Model Performance

Table 1: ESM2 Model Family Overview & Key Benchmarks

| Model Identifier | Parameters (M) | Layers | Embedding Dim. | Training Tokens (B) | Speed (seq/s)* | Performance (PSNR ↑)** | Top-1 Accuracy (Remote Homology) |
|---|---|---|---|---|---|---|---|
| `esm2_t6_8M` | 8 | 6 | 320 | 49 | 10,250 | 58.4 | 0.21 |
| `esm2_t12_35M` | 35 | 12 | 480 | 49 | 3,890 | 61.6 | 0.29 |
| `esm2_t30_150M` | 150 | 30 | 640 | 49 | 1,120 | 65.2 | 0.38 |
| `esm2_t33_650M` | 650 | 33 | 1280 | 98 | 420 | 67.8 | 0.46 |
| `esm2_t36_3B` | 3000 | 36 | 2560 | 98 | 95 | 69.2 | 0.51 |
| `esm2_t48_15B` | 15000 | 48 | 5120 | 98 | 18 | 71.4 | 0.55 |

*Approximate inference speed on a single V100 GPU for sequences of length 256.

**Protein Sequence Recovery (PSNR) metric from structure prediction tasks.
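The Parameters column can be roughly reproduced from the Layers and Embedding Dim. columns, which is a useful sanity check when reasoning about scale. The sketch below uses the common transformer estimate of about 12·L·d² parameters, assuming a feed-forward block four times the embedding width and ignoring embeddings, biases, and layer norms; the dictionary and function names are illustrative.

```python
# (layers, embedding dimension) per model, copied from Table 1.
ESM2_CONFIGS = {
    "esm2_t6_8M": (6, 320),
    "esm2_t12_35M": (12, 480),
    "esm2_t30_150M": (30, 640),
    "esm2_t33_650M": (33, 1280),
    "esm2_t36_3B": (36, 2560),
    "esm2_t48_15B": (48, 5120),
}


def approx_params(layers, dim):
    """Rough transformer parameter count: 12 * L * d^2.

    Per layer: 4*d^2 for attention (Q, K, V, output projections)
    plus 8*d^2 for a feed-forward block with hidden size 4*d.
    """
    return 12 * layers * dim * dim


if __name__ == "__main__":
    for name, (layers, dim) in ESM2_CONFIGS.items():
        print(f"{name:>14}: ~{approx_params(layers, dim) / 1e6:,.0f}M")
```

Under this estimate the 650M model comes out at about 649M parameters and the 15B model at about 15.1B, while the 3B model comes out nearer 2.8B, so the nominal sizes are rounded labels rather than exact counts.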

Table 2: Performance of ESM2 Embeddings on Drug Discovery Tasks (Comparative)

| Task | Metric | ESM2-8M | ESM2-650M | ESM2-15B | Best-in-Class (Non-ESM) |
|---|---|---|---|---|---|
| Binding Site Prediction | Matthews Corr. Coeff. | 0.31 | 0.45 | 0.52 | 0.58 (DeepSurf) |
| Function Annotation (Fold) | Top-1 Accuracy | 0.28 | 0.41 | 0.48 | 0.50 (OmegaFold) |
| Protein-Protein Interaction | AUPRC | 0.65 | 0.78 | 0.83 | 0.85 (D-SCRIPT) |
| Stability Change Prediction | Spearman's ρ | 0.42 | 0.58 | 0.65 | 0.68 (DeepDDG) |

Visual Workflows & Logical Diagrams

[Diagram: ESM2 Embedding Pipeline for Drug Target Discovery]

[Diagram: Research Thesis Context for Application 3]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for ESM2-based Target Discovery

| Item/Category | Specific Example(s) | Function & Purpose in Workflow |
|---|---|---|
| Pre-trained Models | ESM2 via HuggingFace (transformers), fair-esm Python library, ModelHub. | Provides immediate access to various model sizes for embedding generation without training from scratch. |
| Sequence Databases | UniProt (Swiss-Prot/TrEMBL), NCBI RefSeq, PDB. | Source of protein sequences for query and for constructing reference embedding databases for similarity searches. |
| Annotation Databases | Gene Ontology (GO), Pfam, InterPro, STRING, DrugBank. | Provides functional, structural, and interaction labels for training supervised models and validating predictions. |
| Specialized Software | PyTorch, Biopython, NumPy, scikit-learn, h5py. | Core libraries for model inference, data processing, training downstream classifiers, and storing embeddings efficiently. |
| Validation Datasets | PDB (for binding sites), sc-PDB, BioLiP, DIPS (for PPIs), CAFA (for function). | Curated, gold-standard benchmarks for training and objectively evaluating model performance on specific tasks. |
| Compute Infrastructure | GPU clusters (NVIDIA V100/A100), Google Colab Pro, AWS/Azure GPU instances. | Essential for running larger ESM2 models (650M, 3B, 15B) and processing large-scale protein datasets in a reasonable time. |
| Visualization Tools | PyMOL, ChimeraX, Matplotlib, Seaborn. | Used to map predicted binding sites or interface residues onto 3D structures (if available) and to create publication-quality figures. |

ESM2 Deployment Challenges: Memory, Speed, and Accuracy Optimization Tips

Within the broader research on ESM2 model sizes and parameters, managing computational resources is paramount. This guide details strategies to mitigate out-of-memory (OOM) errors when working with large-scale protein language models such as the 3B and 15B parameter ESM2 variants, which are critical tools for researchers and drug development professionals.

Understanding Memory Footprint in ESM2 Models

The memory required to load and run an ESM2 model is a function of its parameter count, numerical precision, batch size, and sequence length. For inference, the primary components are the model weights and the activations; fine-tuning adds gradients and optimizer states on top of these.
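As a back-of-the-envelope check, the weight and optimizer components follow directly from the parameter count. The sketch below is a rough estimate under stated assumptions: nominal parameter counts, 1 GB taken as 1e9 bytes, activations excluded, and for training an Adam-style optimizer holding two FP32 moment buffers per parameter. The function names are illustrative.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1}


def weight_memory_gb(n_params, precision="fp32"):
    """Memory for the weights alone, in GB (1e9 bytes)."""
    return n_params * BYTES_PER_PARAM[precision] / 1e9


def adam_training_memory_gb(n_params):
    """FP32 weights + gradients + two Adam moment buffers.

    4 + 4 + 8 = 16 bytes per parameter; activations are excluded.
    """
    return n_params * 16 / 1e9


if __name__ == "__main__":
    for label, n in [("650M", 650e6), ("3B", 3e9), ("15B", 15e9)]:
        print(
            f"ESM2 {label}: weights fp32 {weight_memory_gb(n):.1f} GB, "
            f"fp16 {weight_memory_gb(n, 'fp16'):.1f} GB, "
            f"Adam training {adam_training_memory_gb(n):.1f} GB"
        )
```

By this estimate the 15B model needs about 60 GB for FP32 weights alone, before any activations, which is why half precision or multi-GPU sharding is usually required at that scale.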

Table 1: Estimated Memory Footprint for ESM2 Models (FP32 Precision)