ESM-2 Model Size Selection Guide: Maximizing Performance for Biological Sequence Tasks


Abstract

This guide provides a structured framework for selecting the optimal ESM-2 protein language model size for specific biological research and drug development tasks. We cover foundational knowledge of the ESM-2 model family, methodological strategies for task-specific application, practical troubleshooting and optimization techniques, and validation against alternative tools. Aimed at researchers and bioinformaticians, this article synthesizes current best practices to help navigate the trade-offs between computational cost and predictive accuracy, from rapid sequence annotation to high-stakes protein function or stability prediction.

Demystifying ESM-2: From 8M to 15B Parameters - A Primer for Biologists

ESM-2 (Evolutionary Scale Modeling 2) is a state-of-the-art protein language model developed by Meta AI. It is the successor to ESM-1b and comes as a family of models ranging from 8 million to 15 billion parameters. ESM-2 is trained with a masked language modeling objective on millions of natural protein sequences from the UniRef database, so it learns evolutionary patterns without explicit structural supervision. Its representations support function prediction and, through ESMFold (a structure-prediction model built on ESM-2), accurate single-sequence structure prediction. The model family is designed to capture the evolutionary, structural, and functional constraints embedded in protein sequences.

Research Reagent Solutions for ESM-2 Experimentation

| Item | Function |
|---|---|
| ESM-2 Model Weights (Various Sizes) | Pre-trained parameters for the neural network. Different sizes (e.g., 650M, 3B, 15B) offer a trade-off between accuracy and computational cost. |
| PyTorch or JAX Framework | Deep learning libraries required to load and run the ESM-2 models. |
| High-Performance GPU (e.g., NVIDIA A100/H100) | Accelerates inference and fine-tuning, essential for larger model sizes. |
| Protein Sequence Dataset (e.g., from UniProt) | Custom dataset for task-specific fine-tuning or benchmarking. |
| HH-suite & PDB Database | Tools and databases for generating multiple sequence alignments (MSAs) and for structural comparison/validation. |
| Fine-Tuning Scripts (e.g., from ESM GitHub repo) | Code to adapt the pre-trained model to specific downstream tasks like fluorescence prediction or stability. |

ESM-2 Model Size Comparison & Selection Guide

The choice of model size is critical for balancing predictive performance, computational resource requirements, and task specificity within a research thesis.

| Model Parameters (Million/Billion) | Best Use Case / Task Recommendation | Key Performance Metric (Example) | Approx. GPU Memory (Inference) |
|---|---|---|---|
| 8M | Baseline, educational purposes, rapid prototyping on small datasets. | Low accuracy, fast. | < 1 GB |
| 35M | Exploring model behavior, simple sequence classification tasks. | Moderate speed/accuracy trade-off. | ~1 GB |
| 150M | Standard fine-tuning for function prediction, site-directed mutagenesis studies. | Good balance for most lab-scale tasks. | ~2-4 GB |
| 650M | Recommended starting point for detailed structural inference & function prediction in thesis research. | High accuracy without extreme cost. TM-score ~0.7 on CAMEO. | ~6-8 GB |
| 3B | High-accuracy structure/function prediction, production-level analysis for drug discovery projects. | State-of-the-art accuracy. TM-score >0.75. | ~16-24 GB |
| 15B | Cutting-edge research, ultimate accuracy for challenging targets (e.g., orphan folds, de novo design). | Pushes the boundaries of SOTA. Requires specialized infrastructure. | 40+ GB (Multi-GPU) |
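The GPU memory column mixes weights, activations, and framework overhead. As a rough sanity check, the memory needed just to hold the weights is the parameter count times the bytes per parameter. The sketch below assumes the nominal parameter counts implied by the model names and the standard `fair-esm` checkpoint names; treat its output as a lower bound, since activations (which grow with sequence length and batch size) and the CUDA context come on top.

```python
# Weights-only memory floor for the ESM-2 checkpoints (a lower bound:
# activations, the CUDA context, gradients and optimizer state are excluded).
NOMINAL_PARAMS = {
    "esm2_t6_8M_UR50D": 8e6,
    "esm2_t12_35M_UR50D": 35e6,
    "esm2_t30_150M_UR50D": 150e6,
    "esm2_t33_650M_UR50D": 650e6,
    "esm2_t36_3B_UR50D": 3e9,
    "esm2_t48_15B_UR50D": 15e9,
}
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1}


def weights_gb(n_params: float, dtype: str = "fp16") -> float:
    """Memory in GB (1e9 bytes) required just to store the weights."""
    return n_params * BYTES_PER_PARAM[dtype] / 1e9


for name, n in NOMINAL_PARAMS.items():
    print(f"{name:22s} fp32: {weights_gb(n, 'fp32'):6.2f} GB   fp16: {weights_gb(n):6.2f} GB")
```

For example, ESM2-15B needs about 30 GB in fp16 for the weights alone, which leaves little headroom on a 40 GB card once activations are added.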

Troubleshooting Guides & FAQs

Q1: I get "CUDA Out of Memory" errors when loading ESM-2. What should I do?

A: This is typically a model size issue. First, try using a smaller model (e.g., 650M instead of 3B). If you must use a large model, run inference under `torch.no_grad()` in half precision, reduce batch size to 1, and use CPU-offloading features if available; gradient checkpointing only helps when you are fine-tuning. For ESMFold, `model.set_chunk_size(128)` chunks the axial attention computation, lowering peak memory at some cost in speed. Ensure your GPU driver and CUDA versions are compatible with your PyTorch installation.

Q2: How do I format protein sequences for input to ESM-2?

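A: Provide sequences as plain amino acid strings in one-letter code (the 20 canonical letters; ambiguity codes such as X are also in the vocabulary). Do not add special tokens yourself: the model's built-in batch converter (`alphabet.get_batch_converter()`) prepends the start token (`<cls>`), appends the end-of-sequence token (`<eos>`), and pads shorter sequences in a batch.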

Q3: The model outputs nonsensical or low-confidence structure predictions for my protein of interest.

A: This often occurs for proteins with few evolutionary homologs. First, verify your input sequence is correct and does not contain non-standard residues. Use the esm.pretrained.esmfold_v1() model which is specifically trained for structure prediction. If confidence (pLDDT) is low overall, check if your protein is inherently disordered via complementary tools like IUPred2. If only a region has low confidence, it might be a flexible loop.

Q4: How do I fine-tune ESM-2 on my custom dataset for a specific biological task (e.g., solubility prediction)? A: Follow this protocol:

- Data Preparation: Create a labeled dataset (sequence, label) in a TSV file.

- Model Setup: Load a pre-trained model (e.g., `esm2_t30_150M_UR50D`).

- Add a Regression/Classification Head: Append a `torch.nn.Linear` layer on top of the pooled output.

- Training Loop: Freeze early layers initially and train only the new head. Use a task-appropriate loss (MSE for regression, cross-entropy for classification). Unfreeze more layers for final tuning.

- Validation: Use a held-out test set to prevent overfitting. The official ESM GitHub repository provides example fine-tuning scripts.

Q5: How accurate is ESMFold compared to AlphaFold2 for my thesis benchmarking? A: ESMFold is faster as it does not rely on external MSAs but may have lower accuracy on average. Use this validation protocol:

- Dataset: Select a high-quality, non-redundant set of structures from the PDB released after the model's training date (e.g., 2022-05-01 for ESM-2).

- Metrics: Calculate per-residue RMSD, TM-score, and GDT_TS using tools like TM-align.

- Comparison: Run both ESMFold and AlphaFold2 (via ColabFold) on your target sequences. For typical globular proteins with homologs, AlphaFold2 may outperform; for orphan proteins, ESMFold's performance may be closer. Tabulate results for clear comparison.
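TM-align performs its own structural alignment. When the predicted and reference models are matched residue by residue (same length, same order), per-residue deviations can also be computed directly after optimal superposition. The sketch below uses the Kabsch algorithm in NumPy on matched Cα coordinate arrays; reading those coordinates from PDB files is left out, and `kabsch_superpose` is a hypothetical helper. It complements, rather than replaces, TM-score and GDT_TS.

```python
import numpy as np


def kabsch_superpose(P: np.ndarray, Q: np.ndarray):
    """Superpose P onto Q (both (N, 3), residue-matched) with the Kabsch algorithm.

    Returns (rmsd, per_residue_deviation) after optimal rotation and translation.
    """
    P = np.asarray(P, dtype=float)
    Q = np.asarray(Q, dtype=float)
    if P.shape != Q.shape or P.ndim != 2 or P.shape[1] != 3:
        raise ValueError("P and Q must both have shape (N, 3)")
    P_c = P - P.mean(axis=0)  # remove translation
    Q_c = Q - Q.mean(axis=0)
    H = P_c.T @ Q_c  # 3x3 covariance matrix
    U, _, Vt = np.linalg.svd(H)
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0  # forbid reflections
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T  # optimal rotation mapping P onto Q
    deviation = np.linalg.norm(P_c @ R.T - Q_c, axis=1)
    rmsd = float(np.sqrt(np.mean(deviation ** 2)))
    return rmsd, deviation
```

Plotting `deviation` along the sequence next to ESMFold's pLDDT makes it easy to see whether low-confidence regions are also the ones that deviate most.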

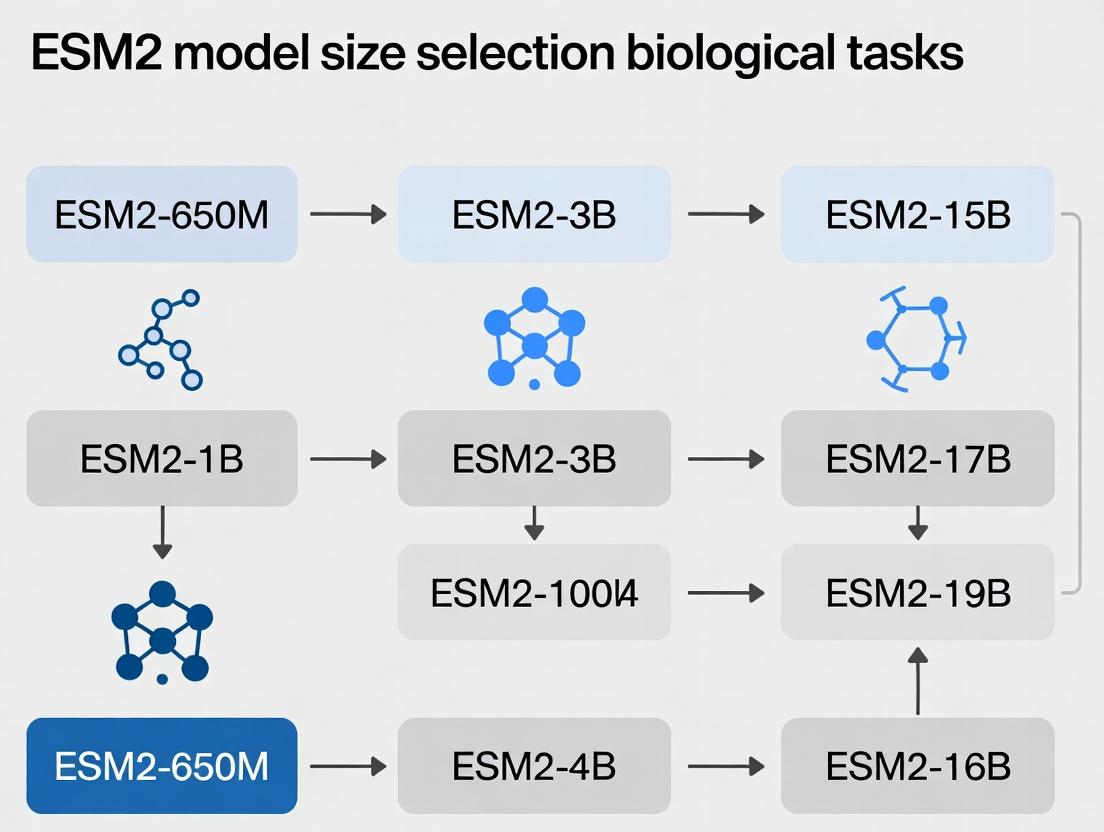

Diagram: ESM-2 Model Selection Workflow

Diagram: ESM-2 Architecture & Information Flow

Troubleshooting Guides & FAQs

Q1: During fine-tuning of an ESM2 model, I encounter "CUDA Out of Memory" errors. How can I proceed without access to larger GPUs? A: This is often due to the model size, batch size, or sequence length exceeding GPU VRAM. Mitigation strategies include:

- Gradient Accumulation: Keep the effective batch size while using smaller per-step (micro-)batches by accumulating gradients over multiple steps before updating weights.

- Gradient Checkpointing: Trade compute for memory by recomputing activations during the backward pass.

- Reduced Sequence Length: Truncate or strategically chunk input protein sequences, though this risks losing long-range context.

- Model Variant Selection: Switch to a smaller ESM2 variant (e.g., from 650M to 150M parameters). Table 1 (ESM2 Model Variants & Resource Requirements) below provides memory estimates.
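Gradient accumulation can be sketched in plain PyTorch as follows. `accumulation_step` is a hypothetical helper, not part of `fair-esm`: it divides each micro-batch loss by the number of micro-batches so that, for equally sized micro-batches and a mean-reduced loss, the summed gradients match those of one large batch, and it optionally clips before the single optimizer update.

```python
from typing import Optional

import torch
import torch.nn as nn


def accumulation_step(model: nn.Module, loss_fn, optimizer: torch.optim.Optimizer,
                      micro_batches, max_grad_norm: Optional[float] = None) -> float:
    """One optimizer update from several micro-batches; returns the averaged loss."""
    optimizer.zero_grad(set_to_none=True)
    n = len(micro_batches)
    total = 0.0
    for inputs, targets in micro_batches:
        loss = loss_fn(model(inputs), targets) / n  # scale so summed gradients are an average
        loss.backward()                             # .grad buffers accumulate across calls
        total += loss.item()
    if max_grad_norm is not None:
        torch.nn.utils.clip_grad_norm_(model.parameters(), max_grad_norm)
    optimizer.step()
    return total
```

Only one micro-batch's activations are alive at a time, which is where the memory saving comes from; the cost is more forward/backward passes per update.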

Q2: How do I choose between ESM2 model sizes (e.g., 8M, 35M, 150M, 650M, 3B, 15B) for my specific protein function prediction task? A: Selection is a trade-off between representational capacity, computational cost, and dataset size. Follow this protocol:

- Benchmark with a Mid-Sized Model: Start with ESM2-150M (or 35M for very small datasets < 10k samples) for rapid prototyping.

- Evaluate Scaling: If performance is suboptimal and resources allow, scale up to ESM2-650M or 3B. Use the performance vs. resources table as a guide.

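- Consider Context: All ESM2 sizes share the same 1,024-token training context (see Table 1), so a larger model does not provide a longer context window. For long protein sequences or complex multi-chain inputs, larger models (ESM2-3B/15B) may capture long-range dependencies better, but inputs beyond the context still need chunking, and these models may require the memory tricks from Q1.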

Q3: What is the practical difference between the embedding dimension and the number of layers in ESM2? A: Both contribute to model capacity differently.

- Embedding Dimension: The width of the model, representing the richness of the feature vector for each amino acid position. Larger dimensions capture more nuanced per-residue information.

- Number of Layers: The depth of the model, determining how many sequential computational steps (attention, feed-forward networks) transform the initial embeddings. More layers enable modeling of more complex, hierarchical, and long-range dependencies within the protein sequence.

- See the "ESM2 Model Anatomy: Embeddings vs Layers" diagram.

Q4: For a novel, small-scale experimental dataset (e.g., enzyme activity for 200 variants), is fine-tuning a large ESM2 model advisable? A: Generally, no. Fine-tuning a very large model (650M+ parameters) on a tiny dataset is highly prone to overfitting. Recommended protocol:

- Use Fixed Embeddings: Extract frozen embeddings from a large ESM2 model as powerful input features for a simple, shallow downstream model (e.g., a Random Forest or a small MLP). This is computationally cheap and less prone to overfitting.

- Lightweight Fine-Tuning: If fine-tuning, use a small model (ESM2-8M or 35M) or employ heavy regularization (e.g., dropout, weight decay) and early stopping.

- Leverage Pre-Trained Heads: For tasks like variant effect prediction, use the pre-trained masked language modeling head without any fine-tuning, scoring variants with the masked-marginal methodology introduced with ESM-1v.

Key Quantitative Data

Table 1: ESM2 Model Variants & Resource Requirements

| Model Name | Parameters | Layers | Embedding Dim | Attn Heads | Context | Approx. VRAM for Inference* | Typical Use Case |
|---|---|---|---|---|---|---|---|
| ESM2-8M | 8.4M | 6 | 320 | 20 | 1024 | < 1 GB | Education, tiny datasets |
| ESM2-35M | 35M | 12 | 480 | 20 | 1024 | ~1-2 GB | Small-scale protein property prediction |
| ESM2-150M | 150M | 30 | 640 | 20 | 1024 | ~3-4 GB | Standard benchmark for function prediction |
| ESM2-650M | 650M | 33 | 1280 | 20 | 1024 | ~10-12 GB | High-accuracy structure/function tasks |
| ESM2-3B | 3B | 36 | 2560 | 40 | 1024 | ~24-30 GB | State-of-the-art performance, long sequences |
| ESM2-15B | 15B | 48 | 5120 | 40 | 1024 | > 80 GB | Cutting-edge research, requires model parallelism |

*Estimates for batch size=1, sequence length ~512. Fine-tuning requires 3-4x more VRAM.
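The layer and width columns roughly determine the parameter counts, which also illustrates the width-versus-depth point from Q3: per layer, parameters grow with the square of the embedding dimension but only linearly with the number of layers. The estimate below assumes a standard transformer block with four d×d attention projections and a feed-forward block of width 4d, and ignores biases, layer norms, and the small token-embedding matrix.

```python
# (layers, embedding dim) for each ESM2 variant, taken from Table 1.
ESM2_SHAPES = {
    "ESM2-8M": (6, 320),
    "ESM2-35M": (12, 480),
    "ESM2-150M": (30, 640),
    "ESM2-650M": (33, 1280),
    "ESM2-3B": (36, 2560),
    "ESM2-15B": (48, 5120),
}


def approx_params(layers: int, dim: int) -> int:
    """Approximate count: 4*d^2 (attention) + 8*d^2 (FFN, d -> 4d -> d) per layer."""
    return 12 * layers * dim * dim


for name, (layers, dim) in ESM2_SHAPES.items():
    print(f"{name:10s} ~{approx_params(layers, dim) / 1e6:9.1f}M parameters")
```

This gives roughly 7.4M, 33M, 147M, 649M, 2.83B and 15.1B parameters, close to the nominal sizes, which suggests the transformer blocks account for nearly all of each model's parameters.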

Table 2: Performance vs. Resources for Sample Biological Tasks

| Task (Dataset) | Best Model (Reported) | Key Metric | Recommended Starting Model (Cost-Effective) | Expected Metric Drop |
|---|---|---|---|---|
| Protein Function Prediction (GO) | ESM2-3B | F1 Max ~0.67 | ESM2-150M | ~0.05-0.08 F1 |
| Stability Prediction (FireProtDB) | ESM2-650M | Spearman ρ ~0.70 | ESM2-35M (with embeddings) | ~0.10-0.15 ρ |
| Contact Prediction (CASP14) | ESM2-3B | Top-L Precision ~0.85 | ESM2-650M | ~0.05 Precision |
| Mutation Effect (Deep Mut. Scan) | ESM1v (ensemble) | Spearman ρ ~0.48 | ESM2-150M (MLM scoring) | ~0.05-0.10 ρ |

Experimental Protocols

Protocol 1: Extracting Per-Residue Embeddings for Downstream Training

- Environment Setup: Install `fair-esm` and `PyTorch`.

- Load Model & Tokenizer: `model, alphabet = torch.hub.load("facebookresearch/esm:main", "esm2_t33_650M_UR50D")` (select variant).

- Prepare Sequence: Tokenize the protein sequence with the batch converter from `alphabet.get_batch_converter()`.

- Forward Pass: Run the model under `torch.no_grad()` with `repr_layers=[33]` to get output from the final layer (layer 33 of ESM2-650M; use the final-layer index of your chosen variant).

- Extract Features: Isolate the hidden states corresponding to sequence tokens (excluding `<cls>`, `<eos>`, `<pad>`). This tensor is your `[seq_len, embedding_dim]` feature matrix.

- Pool (Optional): Create a single sequence representation by mean-pooling over the sequence length dimension.

- Use in Classifier: Feed pooled or per-residue embeddings into a new, task-specific neural network or traditional ML model.

Protocol 2: Fine-Tuning ESM2 for a Binary Protein Classification Task

- Data Preparation: Format sequences and labels. Create a custom `Dataset` class.

- Model Setup: Load a pretrained ESM2 model. Replace the default head with a classification head (e.g., linear layer on pooled output).

- Training Configuration:

  - Loss: Binary Cross-Entropy.

  - Optimizer: AdamW with learning rate `1e-5` to `5e-5`.

  - Regularization: Dropout (0.1-0.5) on classifier, weight decay (`1e-2`).

  - Scheduler: Linear warmup followed by cosine decay.

- Memory Management: Implement gradient checkpointing and accumulation if needed.

- Training Loop: Standard PyTorch loop with validation and early stopping.

- Evaluation: Report metrics like AUROC, AUPRC on a held-out test set.
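The optimizer and scheduler settings from the training configuration can be sketched with standard PyTorch classes (`torch.optim.AdamW` and `torch.optim.lr_scheduler.LambdaLR`). The helper names, the default learning rate of `2e-5` (within the stated range), and the warmup/total step counts are assumptions for illustration; the schedule is stepped once per optimizer update.

```python
import math

import torch


def warmup_cosine(warmup_steps: int, total_steps: int):
    """LR multiplier: linear warmup to 1.0, then cosine decay to 0 at `total_steps`."""
    def factor(step: int) -> float:
        if step < warmup_steps:
            return (step + 1) / warmup_steps
        progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
        return 0.5 * (1.0 + math.cos(math.pi * min(1.0, progress)))
    return factor


def make_optimizer(model: torch.nn.Module, lr: float = 2e-5, weight_decay: float = 1e-2,
                   warmup_steps: int = 100, total_steps: int = 1000):
    """AdamW plus a per-step LambdaLR schedule (call scheduler.step() after optimizer.step())."""
    optimizer = torch.optim.AdamW(model.parameters(), lr=lr, weight_decay=weight_decay)
    scheduler = torch.optim.lr_scheduler.LambdaLR(optimizer, warmup_cosine(warmup_steps, total_steps))
    return optimizer, scheduler
```

Set `total_steps` to the number of optimizer updates you expect (epochs × batches per epoch, divided by any gradient-accumulation factor), so the cosine reaches zero at the end of training.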

Diagrams

ESM2 Model Anatomy: Embeddings vs Layers

Model Size Selection Logic for Biological Tasks

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for ESM2-Based Research

| Item | Function & Relevance to Model Size Experiments |
|---|---|
| High VRAM GPU(s) (e.g., NVIDIA A100, H100) | Essential for training/fine-tuning larger models (ESM2-650M+). Enables larger batch sizes and longer context. |
| GPU Memory Optimization Libraries (e.g., deepspeed, fairscale) | Allows model parallelism and efficient offloading to train models that exceed single-GPU memory (e.g., ESM2-15B). |
| ESM Protein Language Models (fair-esm PyPI package) | The core pre-trained models in multiple sizes. Required for all experiments. |
| Protein Sequence Datasets (e.g., from CATH, PDB, UniProt) | Task-specific data for fine-tuning or evaluating model performance across different scales. |
| Sequence Batching & Chunking Scripts | Custom code to handle long sequences that exceed model context, critical for large-protein analysis with smaller models. |
| Embedding Visualization Tools (UMAP, t-SNE) | To qualitatively compare the representations learned by different model sizes and validate their biological relevance. |
| Hyperparameter Optimization Framework (e.g., Optuna, Ray Tune) | Systematically tune learning rates, dropout, etc., as optimal values can shift with model size. |
| Performance Benchmarking Suite (Precise metrics for task) | To quantitatively compare accuracy/speed trade-offs between model variants (8M to 15B). |

This technical support center addresses a recurring research question: selecting the appropriate ESM2 model size (from 8 million to 15 billion parameters) for specific protein-related tasks in computational biology and drug discovery. The guides below address common experimental hurdles.

Troubleshooting Guides & FAQs

Q1: My fine-tuning of ESM2-650M on a custom protein family dataset results in rapid overfitting and validation loss divergence. What are the primary mitigation strategies? A: This is a common issue when the dataset is small relative to model capacity. Implement the following protocol:

- Freeze Layers: Freeze all transformer layers except the final 2-4 and the classification head. Use lower learning rates (1e-5 to 1e-4).

- Aggressive Regularization: Apply dropout (rate 0.4-0.6) before the final layer. Use weight decay (0.01-0.1) and gradient clipping (max norm 1.0).

- Data Augmentation: Use label-preserving perturbations such as random masking of residues (10-15%). Subsampling of multiple sequence alignment (MSA) rows applies only if your pipeline also uses MSA-based inputs, since ESM2 itself reads single sequences.

- Early Stopping: Monitor validation loss with a patience of 3-5 epochs.

Q2: When using ESM2-3B or larger for inference on a local GPU, I encounter "CUDA Out of Memory" errors. How can I manage this? A: Memory scales with sequence length (O(n²) for attention). Apply these techniques:

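- Gradient Checkpointing: Enable `model.gradient_checkpointing_enable()` (available on Hugging Face Transformers ESM models) to trade compute for memory. This only helps when gradients are computed, i.e., during fine-tuning; for pure inference, run the forward pass under `torch.no_grad()` so activations are not kept.

- Half Precision: Use `model.half()` and perform inference in `torch.float16`.

- Sequence Chunking: For sequences longer than the model context (1,024 tokens, i.e., about 1,022 residues plus `<cls>` and `<eos>`), implement sliding-window inference and aggregate the embeddings.

- CPU Offloading: For inference only, consider using libraries like `accelerate` to offload some layers to CPU memory.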
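Sequence chunking can be sketched independently of the model: `sliding_window_embed` (a hypothetical helper) runs any per-residue embedding function over overlapping windows and averages the overlaps. The default window of 1,022 residues assumes the 1,024-token context minus `<cls>` and `<eos>`; the half-window stride is an arbitrary choice. Residues near window edges see less context, which overlap averaging only partly compensates for.

```python
import numpy as np


def sliding_window_embed(sequence: str, embed_fn, window: int = 1022, stride: int = 511) -> np.ndarray:
    """Per-residue embeddings for a long sequence by averaging overlapping windows.

    `embed_fn(subsequence)` must return an array of shape (len(subsequence), dim).
    """
    if stride > window:
        raise ValueError("stride must not exceed window, or residues are skipped")
    n = len(sequence)
    if n <= window:
        return np.asarray(embed_fn(sequence), dtype=float)
    starts = list(range(0, n - window + 1, stride))
    if starts[-1] + window < n:
        starts.append(n - window)  # make sure the C-terminal end is covered
    total = None
    counts = np.zeros(n)
    for s in starts:
        emb = np.asarray(embed_fn(sequence[s : s + window]), dtype=float)
        if total is None:
            total = np.zeros((n, emb.shape[1]))
        total[s : s + window] += emb  # sum contributions from every window
        counts[s : s + window] += 1   # and how many windows covered each residue
    return total / counts[:, None]
```

With `fair-esm`, `embed_fn` could wrap a per-sequence extraction such as the one in Protocol 1 above, returning a NumPy array of shape `(len(subsequence), embed_dim)`.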

Q3: The embeddings I extract from ESM2 for downstream tasks (e.g., protein-protein interaction prediction) yield poor performance. How should I diagnostically approach this problem? A: Poor transfer can stem from inappropriate embedding selection or task mismatch.

- Embedding Layer Selection: Do not default to the final layer. For structural/functional tasks, intermediate layers (e.g., layer 20-25 in ESM2-650M) often perform better. Systematically test averaging embeddings from layers 25-33.

- Aggregation Method: For per-protein embeddings, use the `<cls>` token embedding or the average of all residue embeddings, rather than just taking the final position.

- Task-Specific Tuning: The embeddings may require non-linear projection. Add a simple 2-layer MLP head and fine-tune it on a small subset of your target data before full evaluation.
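To compare layers systematically, one option is a linear probe per layer. The sketch assumes you have already extracted mean-pooled embeddings for each candidate layer (for example with `repr_layers=list(range(20, 34))` on ESM2-650M) into a dict of `(n_proteins, embed_dim)` arrays, and that labels are binary. `score_layers` is a hypothetical helper built on scikit-learn's `LogisticRegression` and `cross_val_score`.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score


def score_layers(features_by_layer: dict, labels, cv: int = 5) -> dict:
    """Cross-validated ROC AUC of a linear probe trained on each layer's embeddings."""
    labels = np.asarray(labels)
    scores = {}
    for layer, X in features_by_layer.items():
        probe = LogisticRegression(max_iter=1000)
        auc = cross_val_score(probe, np.asarray(X), labels, cv=cv, scoring="roc_auc")
        scores[layer] = float(np.mean(auc))
    return scores
```

To test a layer average as well, add an entry such as `feats["mean_25_33"] = np.mean([feats[l] for l in range(25, 34)], axis=0)` before calling `score_layers`.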

Q4: How do I select the optimal model size from the ESM2 zoo for my specific resource constraints and task accuracy needs? A: Follow this decision protocol based on empirical research:

- Define your primary task (e.g., variant effect prediction, fold classification, zero-shot fitness prediction).

- Assess your computational budget (GPU memory, time).

- Consult the performance benchmark table (see below) to identify the smallest model that delivers acceptable accuracy for your task class.

- Run a pilot study on a data subset with two model sizes (e.g., 650M and 3B) to confirm the performance-compute trade-off.

Quantitative Performance Benchmarks for Model Selection

Table 1: Comparative performance of ESM2 model sizes on key biological tasks. Scores are representative from literature (e.g., Flynn et al., 2022) and community benchmarks. PPI = Protein-Protein Interaction. MSA = Multiple Sequence Alignment.

| Model (Parameters) | Embedding Dim | GPU Mem (Inference) | Fluorescence Landscape (Spearman ρ) | Remote Homology (Top1 Acc) | PPI Prediction (AUROC) | Recommended Primary Use Case |
|---|---|---|---|---|---|---|
| ESM2-8M | 320 | ~0.5 GB | 0.21 | 0.15 | 0.62 | Education, debugging, simple sequence encoding on CPU. |
| ESM2-35M | 480 | ~1 GB | 0.38 | 0.22 | 0.71 | Rapid prototyping where speed is critical over peak accuracy. |
| ESM2-150M | 640 | ~2 GB | 0.58 | 0.35 | 0.79 | Large-scale annotation of canonical protein functions. |
| ESM2-650M | 1280 | ~4 GB | 0.73 | 0.50 | 0.85 | Sweet spot for most research tasks; balances accuracy and resource use. |
| ESM2-3B | 2560 | ~12 GB | 0.78 | 0.60 | 0.88 | High-stakes prediction where data is abundant; requires significant GPU. |
| ESM2-15B | 5120 | ~48 GB | 0.82 | 0.68 | 0.90 | State-of-the-art benchmarking, zero-shot tasks, and lead optimization in drug discovery. |

Experimental Protocol: Benchmarking Model Size for Variant Effect Prediction

Objective: Systematically evaluate the impact of ESM2 model size on predicting missense variant pathogenicity.

Methodology:

- Data Curation: Use the standardized ClinVar benchmark split (Miyazaki et al., 2022). Filter for high-confidence missense variants in proteins with unique structures.

- Embedding Extraction:

  - For each model (8M, 35M, 150M, 650M, 3B), extract per-residue embeddings for the wild-type and mutant sequences.

  - Load each model with `esm.pretrained.load_model_and_alphabet()` (for example, `esm.pretrained.load_model_and_alphabet("esm2_t33_650M_UR50D")`).

  - Compute the scalar difference as the mean squared error between the wild-type and mutant residue embedding vectors at the variant position, taken from each model's final layer (e.g., layer 33 for ESM2-650M, layer 36 for ESM2-3B).

- Classification:

  - Use the embedding difference as a single feature.

  - Train a logistic regression classifier on 80% of the data using 5-fold cross-validation.

  - Evaluate on a held-out 20% test set using AUROC and AUPRC.

- Analysis: Plot model size (parameters) versus AUROC. Perform statistical significance testing between model performances using DeLong's test.
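The feature and classification steps of this protocol can be sketched as below. The per-residue embeddings are assumed to be NumPy arrays of shape `(seq_len, embed_dim)` for the wild-type and mutant sequences, and the helper names are hypothetical. scikit-learn's `LogisticRegressionCV(cv=5)` stands in for the 5-fold cross-validation on the training split. DeLong's test is not included; it needs a separate implementation or package.

```python
import numpy as np
from sklearn.linear_model import LogisticRegressionCV
from sklearn.metrics import average_precision_score, roc_auc_score
from sklearn.model_selection import train_test_split


def variant_feature(wt_emb: np.ndarray, mut_emb: np.ndarray, pos: int) -> float:
    """Mean squared difference between wild-type and mutant embeddings at `pos`."""
    return float(np.mean((np.asarray(wt_emb)[pos] - np.asarray(mut_emb)[pos]) ** 2))


def evaluate_single_feature(features, labels, seed: int = 0):
    """80/20 stratified split; 5-fold CV on the training part; AUROC/AUPRC on test."""
    X = np.asarray(features, dtype=float).reshape(-1, 1)
    y = np.asarray(labels)
    X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.2, stratify=y, random_state=seed)
    clf = LogisticRegressionCV(cv=5).fit(X_tr, y_tr)
    p = clf.predict_proba(X_te)[:, 1]  # predicted probability of the positive class
    return roc_auc_score(y_te, p), average_precision_score(y_te, p)
```

Running `evaluate_single_feature` once per model size, with the same `seed`, keeps the test split identical across sizes so the AUROC values are directly comparable.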

Visualizations

ESM2 Model Selection Workflow for Biological Tasks

ESM2 Layer Selection for Downstream Tasks

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential materials and tools for working with the ESM2 Model Zoo.

| Item | Function & Relevance | Example/Note |
|---|---|---|
| PyTorch | Deep learning framework required to load and run ESM2 models. | Version 1.12+ with CUDA support for GPU acceleration. |
| ESM Library | Official Python package from Meta AI for model loading and utilities. | Install via pip install fair-esm. Contains pre-trained weights. |
| High-Memory GPU | Accelerates training and inference for models >650M parameters. | NVIDIA A100 (40GB+) for 3B/15B models; RTX 4090/A6000 for up to 3B. |
| Hugging Face accelerate | Manages device placement and memory optimization for large models. | Essential for offloading and mixed-precision inference. |
| Biopython | Handles protein sequence I/O, parsing FASTA files, and basic computations. | For preprocessing custom datasets before feeding to ESM2. |
| Scikit-learn | Provides simple classifiers (Logistic Regression, SVM) for downstream task evaluation on embeddings. | Used for rapid prototyping on extracted embedding features. |
| PDB Database | Source of protein structures for validating predictions (e.g., contact maps) or for multi-modal tasks. | Use structures corresponding to your sequence of interest. |
| Custom Fine-Tuning Script | Tailored training loop with layer freezing, learning rate scheduling, and task-specific heads. | Often required; template available in the official ESM repository. |

Technical Support Center

Troubleshooting Guide: ESM2 Model Performance

Q1: My fine-tuned ESM2 model for predicting binding affinity is showing poor accuracy (R² < 0.3) on the validation set, despite good training loss. What are the primary steps to diagnose this?

A1: This typically indicates overfitting or a data mismatch. Follow this diagnostic protocol:

- Check Data Leakage: Ensure no overlapping sequences between training and validation splits. Use MMseqs2 (80% sequence identity threshold) for strict clustering before splitting.

- Evaluate Model Size vs. Data Scale: Confirm your training dataset size is sufficient for the chosen ESM2 parameter count. As a rule of thumb:

  - ESM2-8M: Effective with 10k - 50k curated sequences.

  - ESM2-35M: Requires 50k - 250k sequences.

  - ESM2-150M: Needs >250k sequences for stable fine-tuning.

  - ESM2-650M+: Requires millions of data points; use only with large, high-quality datasets.

- Run a Frozen-Embedding Baseline: Use the frozen base ESM2 model to generate embeddings and train a simple Ridge regression on top. If this outperforms your fine-tuned model, the fine-tuning process is likely degrading pre-trained evolutionary knowledge.
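For the leakage check, one way to split by cluster is sketched below. It assumes you have clustered your sequences with MMseqs2 at 80% identity, e.g. `mmseqs easy-cluster seqs.fasta clusterRes tmp --min-seq-id 0.8`, which writes a two-column TSV (`clusterRes_cluster.tsv`) of representative and member IDs. Both helpers are hypothetical; scikit-learn's `GroupShuffleSplit` then keeps every cluster entirely on one side of the split.

```python
import csv

import numpy as np
from sklearn.model_selection import GroupShuffleSplit


def read_mmseqs_clusters(tsv_path: str) -> dict:
    """Map each member ID to its cluster representative (MMseqs2 cluster TSV)."""
    with open(tsv_path, newline="") as fh:
        return {member: rep for rep, member in csv.reader(fh, delimiter="\t")}


def cluster_split(ids, member_to_cluster, test_size=0.2, seed=0):
    """Split IDs so that no cluster appears in both the train and test sets."""
    groups = [member_to_cluster[i] for i in ids]
    splitter = GroupShuffleSplit(n_splits=1, test_size=test_size, random_state=seed)
    train_idx, test_idx = next(splitter.split(np.zeros(len(ids)), groups=groups))
    return [ids[i] for i in train_idx], [ids[i] for i in test_idx]
```

For a train/validation/test split, call `cluster_split` twice: once to hold out the test clusters, then again on the remaining IDs to carve out validation.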

Q2: When using ESM2 for zero-shot variant effect prediction (e.g., using the esm2_variant_prediction notebook), the scores for all mutants in a region are very similar and non-informative. What could be wrong?

A2: This often arises from the masking strategy.

- Issue: When the target position is replaced by the `<mask>` token, the prediction there is driven entirely by the surrounding context via attention, which can dilute the signal.

- Solution: Implement a more stringent inference protocol:

  - Reproduce the exact sequence with the wild-type residue at the target position.

  - Encode this sequence to get the hidden state for the target position (`h_wt`).

  - Encode the sequence again with the mutant residue (no masking) to get `h_mut`.

  - Calculate the difference in the model's logits at that position, or the cosine distance between `h_wt` and `h_mut`, as the score. This bypasses the masking procedure and can yield sharper, more discriminative scores.

Q3: ESMFold predictions for my protein of interest are low confidence (pLDDT < 70) and show unrealistic loops. How can I improve the prediction?

A3: Low pLDDT scores often indicate regions that are poorly constrained by the evolutionary information ESM2 learned from its training sequences (ESMFold does not use MSAs at inference).

- Step 1: Verify Evolutionary Coverage. Input your sequence into HHblits or JackHMMER against a large database (e.g., UniClust30). If the number of effective sequences (Neff) is below 100, the evolutionary context is weak.

- Step 2: Employ Hybrid Modeling.

  - Generate the ESMFold structure.

  - Use the low-confidence regions (pLDDT < 70) as flexible loops in a molecular dynamics (MD) simulation toolkit like GROMACS, applying gentle constraints to the high-confidence regions.

  - This refines the local geometry without losing the global fold.

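- Step 3: Consider Model Size. For ab initio folding of orphan proteins, larger language models such as ESM2-3B or ESM2-15B, which have stronger language modeling capability, may yield better initial coordinates than smaller versions. Note that the released ESMFold model (`esmfold_v1`) is built on ESM2-3B, so folding with a different ESM2 size requires a separately trained folding trunk.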
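To find the regions to treat as flexible in Step 2, the pLDDT values can be read directly from the predicted structure. The sketch assumes a single-chain PDB file with consecutive residue numbers in which per-residue pLDDT (0-100) is stored in the B-factor column, as in the PDB text produced by ESMFold's `infer_pdb`. `low_confidence_segments` is a hypothetical helper that reads the Cα records by fixed PDB columns and returns runs of residues below the threshold.

```python
def low_confidence_segments(pdb_text: str, threshold: float = 70.0, min_length: int = 1):
    """Return (first_resseq, last_resseq) runs of residues whose CA pLDDT < threshold.

    Assumes one chain with consecutive residue numbers and pLDDT (0-100)
    stored in the B-factor column (PDB columns 61-66).
    """
    scores = []
    for line in pdb_text.splitlines():
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            scores.append((int(line[22:26]), float(line[60:66])))

    segments = []
    start = prev = None
    for resseq, plddt in scores:
        if plddt < threshold:
            if start is None:
                start = resseq  # open a new low-confidence run
            prev = resseq
        else:
            if start is not None and prev - start + 1 >= min_length:
                segments.append((start, prev))
            start = None
    if start is not None and prev - start + 1 >= min_length:
        segments.append((start, prev))  # run that extends to the C-terminus
    return segments
```

The returned residue ranges can be used to define which parts stay unrestrained in the MD setup, while the remaining residues receive position restraints.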

Frequently Asked Questions (FAQs)

Q: For a specific task like antibody affinity maturation, how do I choose between fine-tuning ESM2-650M versus ESM2-3B?

A: The choice hinges on your dataset size and computational budget. Refer to the quantitative guideline table below, derived from recent benchmarking studies.

Table: ESM2 Model Selection Guide for Specific Tasks

| Biological Task | Recommended Model(s) | Minimum Effective Dataset Size | Expected Performance Metric (Typical Range) | VRAM Minimum |
|---|---|---|---|---|
| Variant Effect Prediction | ESM2-35M, ESM2-150M | 5,000 variant labels | Spearman's ρ: 0.4 - 0.65 | 16 GB |
| Protein-Protein Interaction | ESM2-150M, ESM2-650M | 20,000 complex pairs | AUPRC: 0.7 - 0.85 | 32 GB |
| Antibody Affinity Optimization | ESM2-650M | 50,000 (scFv sequence, affinity) pairs | Mean ΔΔG RMSE: 1.2 - 1.8 kcal/mol | 40 GB |
| Zero-Shot Structure Annotation | ESM2-3B, ESM2-15B | Not Applicable (zero-shot) | Active Site Recall @ Top-10: 60% - 80% | 80 GB |
| Small-Scale Function Prediction | ESM2-8M, ESM2-35M | 10,000 sequences with GO terms | Macro F1-score: 0.55 - 0.75 | 12 GB |

Q: What is the recommended protocol for fine-tuning ESM2 on a custom dataset for a binary classification task (e.g., enzyme/non-enzyme)?

A: Follow this detailed methodology:

Data Preparation:

- Format: Create a `.csv` file with columns `sequence` and `label` (0/1).

- Split: Cluster sequences by similarity (CD-HIT at a 40% identity threshold) and assign whole clusters to Train/Val/Test sets (70/15/15), keeping class proportions similar across splits. This prevents homology leakage.

Model Setup:

- Load `esm2_t12_35M_UR50D` (or a larger model per the selection table) from the `fair-esm` Python package.

- Add a classification head: a dropout layer (p=0.2) followed by a linear layer projecting from the model's embedding dimension (480 for the 12-layer ESM2-35M) to 2 classes.

Training Loop:

- Loss: `nn.CrossEntropyLoss()`

- Optimizer: `AdamW` with a learning rate of `1e-5` for the pretrained layers and `1e-4` for the classification head.

- Schedule: Linear warmup (10% of epochs) followed by cosine decay.

- Key Regularization: Use gradient clipping (max norm = 1.0) and attention dropout (0.1) where the model implementation exposes it (e.g., `attention_probs_dropout_prob` in the Hugging Face `EsmConfig`).

Evaluation:

- Monitor validation AUROC and loss. Early stopping patience of 5 epochs is recommended.

- Final evaluation on the held-out test set should report AUROC, AUPRC, and balanced accuracy.
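The head and optimizer settings above can be sketched as follows. The helper names are hypothetical; `backbone` stands for the pretrained ESM2 model (wrapped so that the full model maps inputs to pooled features and then to logits), and only the learning rates, dropout rate, weight decay, and clipping norm come from the protocol.

```python
import torch
import torch.nn as nn


def make_head(embed_dim: int = 480, num_classes: int = 2, p_drop: float = 0.2) -> nn.Module:
    """Dropout followed by a linear projection (480 = ESM2-35M embedding dim)."""
    return nn.Sequential(nn.Dropout(p_drop), nn.Linear(embed_dim, num_classes))


def build_optimizer(backbone: nn.Module, head: nn.Module, backbone_lr: float = 1e-5,
                    head_lr: float = 1e-4, weight_decay: float = 1e-2) -> torch.optim.Optimizer:
    """AdamW with a lower learning rate for pretrained weights than for the new head."""
    return torch.optim.AdamW(
        [
            {"params": [p for p in backbone.parameters() if p.requires_grad], "lr": backbone_lr},
            {"params": list(head.parameters()), "lr": head_lr},
        ],
        weight_decay=weight_decay,
    )


def train_step(model: nn.Module, inputs, labels, optimizer, max_grad_norm: float = 1.0) -> float:
    """One supervised step: cross-entropy loss, gradient clipping, optimizer update."""
    model.train()
    loss = nn.functional.cross_entropy(model(inputs), labels)
    optimizer.zero_grad(set_to_none=True)
    loss.backward()
    torch.nn.utils.clip_grad_norm_(model.parameters(), max_grad_norm)
    optimizer.step()
    return loss.item()
```

Keeping the two parameter groups separate means a scheduler such as `LambdaLR` scales both learning rates by the same factor while preserving their 10:1 ratio.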

Q: Can you list essential reagents and computational tools for replicating ESM2-based structure-function studies?

A: The following toolkit is essential for this research.

Table: Research Reagent Solutions for ESM2 Experiments

| Item / Resource | Provider / Source | Primary Function in Workflow |
|---|---|---|
| ESM2 Model Weights | Facebook AI Research (FAIR) | Pre-trained protein language model providing evolutionary insights and embeddings. |
| ESMFold | FAIR | End-to-end single-sequence protein structure prediction model built on ESM2. |
| PyTorch | Meta | Core deep learning framework for loading, fine-tuning, and running inference with ESM2. |
| Hugging Face Transformers | Hugging Face | Provides easy-to-use APIs for loading ESM2 models and tokenizers. |
| Biopython | Biopython Consortium | For parsing FASTA files, handling sequence alignments, and managing biological data structures. |
| UniProt API | EMBL-EBI | Retrieving protein sequences, functions, and annotations for dataset construction. |
| PDB (Protein Data Bank) | RCSB | Source of high-quality 3D structures for training, validation, and benchmarking. |
| AlphaFold DB | EMBL-EBI | Source of predicted structures for proteins lacking experimental data, useful for validation. |
| NVIDIA A100/A40 GPU | NVIDIA | Primary compute hardware for training medium-to-large ESM2 models (>150M params). |
| CUDA & cuDNN | NVIDIA | GPU-accelerated libraries essential for efficient PyTorch operations on NVIDIA hardware. |

Visualizations

Diagram 1: ESM2 Model Selection Decision Pathway

Diagram 2: ESM2 Fine-Tuning & Validation Workflow

Troubleshooting Guides & FAQs

Q1: My training run on ESM2-15B is failing with an "Out of Memory (OOM)" error, even on a GPU with 40GB VRAM. What are my main options? A: This is a common issue with larger ESM2 variants. The primary trade-off is between model size and hardware requirements. Your options are:

- Use Gradient Checkpointing: This trades compute for memory. It re-computes activations during the backward pass instead of storing them all.

- Reduce Batch Size: Lowering `per_device_train_batch_size` reduces activation memory roughly linearly but may affect convergence.

- Employ Model Parallelism: For ESM2-15B, techniques like pipeline or tensor parallelism across multiple GPUs (or the sharding and offloading offered by libraries such as DeepSpeed) are often necessary.

- Downselect Model Size: Consider if a smaller ESM2 variant (e.g., 3B or 650M) is sufficient for your specific biological task's accuracy requirements.

Q2: For real-time analysis of protein variant libraries, my large ESM2 model (e.g., ESM2-3B or ESM2-15B) is too slow. How can I improve inference speed? A: Inference speed is critical for high-throughput tasks. Consider these approaches:

- Model Quantization: Reduce the numerical precision of weights (e.g., from FP16 to INT8). This reduces memory footprint and can accelerate inference.

- Switch to a Smaller Model: Benchmark a smaller ESM2 (e.g., 35M or 150M) for your task. The performance drop may be acceptable for a large speed gain.

- Optimize with ONNX Runtime or TensorRT: Convert the model to an optimized engine format for your specific hardware.

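- Cache Reusable Results: ESM2 is a bidirectional masked language model, so the key-value caching used for autoregressive generation does not apply. Instead, cache embeddings or scores for sequences you evaluate repeatedly (e.g., the wild-type sequence when scoring many variants of it).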

Q3: I need to fine-tune ESM2 for a specific protein function prediction task. How do I select the right model size given my limited compute budget? A: This is the core model size selection problem. Follow this protocol:

- Establish a Baseline: Start with the smallest model (ESM2-8M) on a representative subset of your data.

- Profiling Step: Measure key metrics for each model size (see Table 1).

- Iterative Scaling: Incrementally move to larger models (35M, 150M, 650M, 3B) until the performance gain (e.g., AUPRC) plateaus relative to the increase in cost and latency.

- Consider Fine-tuning vs. Embedding Extraction: For some tasks, using frozen embeddings from a large model as features for a small classifier offers a favorable cost/accuracy trade-off.

Table 1: ESM2 Model Family Trade-offs (Representative Metrics)

| Model (Parameters) | Approx. VRAM for Inference (FP16) | Approx. VRAM for Fine-tuning | Relative Inference Speed (Tokens/sec)* | Typical Use Case in Research |
|---|---|---|---|---|
| ESM2-8M | < 0.1 GB | < 0.5 GB | 10,000 | Quick prototyping, educational |
| ESM2-35M | ~0.2 GB | ~1 GB | 5,000 | Lightweight downstream tasks |
| ESM2-150M | ~0.5 GB | ~2.5 GB | 2,000 | Balanced option for fine-tuning |
| ESM2-650M | ~1.5 GB | ~8 GB | 800 | High-accuracy single-GPU fine-tuning |
| ESM2-3B | ~6 GB | ~24 GB | 200 | State-of-the-art accuracy, multi-GPU |
| ESM2-15B | ~30 GB | > 80 GB (Model Parallel) | 40 | Full-scale exploration, major resources |

*Speed is indicative, measured on a single A100 GPU for a 1024-token sequence. Actual results vary with sequence length and hardware.

Experimental Protocols

Protocol 1: Benchmarking Inference Speed & Memory Across ESM2 Sizes

Objective: Systematically measure the computational trade-offs of different ESM2 models.

- Environment Setup: Use a machine with a dedicated GPU (e.g., A100 40GB). Standardize software (PyTorch, Transformers library).

- Model Loading: Load each ESM2 model (`esm2_t6_8M_UR50D` to `esm2_t48_15B_UR50D`) in `torch.float16` precision.

- Memory Profiling: For each fixed input sequence length (e.g., 512 and 1,024 tokens), record the peak GPU memory allocated after a forward pass.

- Speed Test: Time the forward pass for 100 batches (batch size=1), average, and calculate tokens/second.

- Data Logging: Record results in a table format (as in Table 1).
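Protocol 1 can be scripted roughly as follows. This sketch assumes the Hugging Face `transformers` checkpoints under the `facebook/` namespace (for example `facebook/esm2_t6_8M_UR50D`) and a CUDA GPU. The timing helper is framework-agnostic and accepts any zero-argument callable. Peak memory comes from `torch.cuda.max_memory_allocated`, which reports only PyTorch's allocator, not the whole process.

```python
import statistics
import time
from typing import Callable, Dict


def time_forward(forward: Callable[[], object], n_tokens: int, n_runs: int = 100,
                 warmup: int = 5, sync: Callable[[], None] = lambda: None) -> Dict[str, float]:
    """Average latency and tokens/sec for a zero-argument forward callable."""
    for _ in range(warmup):  # warm up kernels and caches before timing
        forward()
    sync()
    latencies = []
    for _ in range(n_runs):
        start = time.perf_counter()
        forward()
        sync()  # on GPU, wait for queued kernels before stopping the clock
        latencies.append(time.perf_counter() - start)
    mean_s = statistics.mean(latencies)
    return {"mean_latency_s": mean_s, "tokens_per_s": n_tokens / mean_s}


def benchmark_esm2(checkpoint: str, seq_len: int = 1024, n_runs: int = 100) -> Dict[str, float]:
    """Load one ESM2 checkpoint in FP16 on GPU and measure speed and peak memory."""
    # Imported lazily so time_forward can be used without a GPU stack installed.
    import torch
    from transformers import AutoModel

    model = AutoModel.from_pretrained(checkpoint, torch_dtype=torch.float16).cuda().eval()
    # Random residue tokens stand in for a real sequence; only the length matters here.
    # Assumption: ids 4-23 are the 20 standard amino acids in the ESM vocabulary
    # (check with tokenizer.get_vocab()).
    input_ids = torch.randint(4, 24, (1, seq_len), device="cuda")
    torch.cuda.reset_peak_memory_stats()

    def forward():
        with torch.inference_mode():
            model(input_ids=input_ids)

    stats = time_forward(forward, n_tokens=seq_len, n_runs=n_runs, sync=torch.cuda.synchronize)
    stats["peak_mem_gb"] = torch.cuda.max_memory_allocated() / 1e9
    return stats
```

Calling `benchmark_esm2("facebook/esm2_t33_650M_UR50D", seq_len=512)` in a loop over checkpoints and lengths produces the rows for the Data Logging step.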

Protocol 2: Task-Accuracy vs. Model Size Pareto Curve

Objective: Determine the optimal model size for a specific predictive task (e.g., subcellular localization).

- Task & Data: Select a labeled dataset (e.g., DeepLoc 2.0). Create fixed train/validation/test splits.

- Fine-tuning: For each ESM2 size, perform supervised fine-tuning using the same hyperparameter tuning budget (e.g., 10 trials via Ray Tune).

- Evaluation: Measure primary accuracy metric (e.g., Top-1 accuracy) on the held-out test set.

- Resource Tracking: Simultaneously track total compute time (GPU-hours) and peak memory usage for each run.

- Analysis: Plot accuracy versus inference speed and computational cost to identify the Pareto-optimal model.
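To pick the Pareto-optimal models from the logged runs, keep every run that no other run beats on both axes. A minimal sketch with two objectives (higher accuracy, fewer GPU-hours). The `Run` record and the example values in the tests are hypothetical.

```python
from typing import List, NamedTuple


class Run(NamedTuple):
    model: str
    accuracy: float   # higher is better
    gpu_hours: float  # lower is better


def pareto_front(runs: List[Run]) -> List[Run]:
    """Return runs not dominated by any other run, sorted by cost.

    A run is dominated if another run is at least as accurate and at least as
    cheap, and strictly better on at least one of the two axes.
    """
    front = []
    for r in runs:
        dominated = any(
            o.accuracy >= r.accuracy and o.gpu_hours <= r.gpu_hours
            and (o.accuracy > r.accuracy or o.gpu_hours < r.gpu_hours)
            for o in runs
        )
        if not dominated:
            front.append(r)
    return sorted(front, key=lambda r: r.gpu_hours)
```

Only the models on the front are worth considering. Choosing among them is then a budget decision, not an accuracy one.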

Visualizations

Title: Model Size Selection Trade-off Decision Path

Title: ESM2 Model Selection Experimental Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Toolkit for ESM2 Model Selection Experiments

| Item/Reagent | Function/Benefit | Example/Notes |
|---|---|---|
| Hugging Face Transformers Library | Provides easy access to all pre-trained ESM2 models and tokenizers. | transformers package; use AutoModelForMaskedLM or task-specific heads. |
| PyTorch with CUDA | Core deep learning framework enabling GPU acceleration and mixed-precision training. | Required for fine-tuning. Use torch.cuda.amp for automatic mixed precision (AMP). |
| NVIDIA A100/A6000 or H100 GPU | High-VRAM GPU hardware necessary for fine-tuning larger models (650M+ parameters). | Access via cloud providers (AWS, GCP, Lambda Labs) or local clusters. |
| Gradient Checkpointing | Substantially reduces training memory by recomputing activations during the backward pass (trading compute for memory). | Enable via model.gradient_checkpointing_enable() on Hugging Face transformers models. |
| Bitsandbytes Library (LLM.int8()) | Enables quantization of very large models (3B, 15B) for lower memory inference/fine-tuning. | Allows loading 8-bit quantized models on consumer-grade GPUs. |
| Weights & Biases (W&B) / MLflow | Experiment tracking to log accuracy, loss, and resource consumption across model sizes. | Crucial for creating the accuracy vs. cost Pareto curve. |
| ONNX Runtime | Optimized inference engine to accelerate prediction speed after model selection. | Convert final selected PyTorch model to ONNX format for deployment. |

When Does Size Matter? Defining 'Small' vs. 'Large' for Typical Research Labs.

Within the context of selecting the appropriate ESM2 (Evolutionary Scale Modeling 2) protein language model for biological tasks, defining "small" and "large" labs is critical for aligning computational resources with research goals. This technical support center provides guidance for common experimental and computational issues.

Troubleshooting Guides & FAQs

Q1: My fine-tuning of the ESM2-8M model on a small protein dataset is producing poor accuracy. What could be wrong? A: This is often due to insufficient model capacity for complex tasks. The 8M parameter "small" model is ideal for simple sequence annotation or educational purposes. For meaningful research (e.g., predicting mutational effects), a "medium" (35M-150M) or "large" (650M+) model is typically required. Ensure your dataset, though small, is of high quality and relevant. First, try the ESM2-35M model.

Q2: I receive "CUDA out of memory" errors when trying to run the ESM2-650M model on our lab server. How can we proceed? A: This defines a hardware limitation common in "small" to "medium" labs. You have several options:

- Reduce Batch Size: Set `per_device_train_batch_size=1` or `2` in your training script.

- Use Gradient Accumulation: Simulate a larger batch size by accumulating gradients over several steps.

- Employ Model Parallelism: Split the model across multiple GPUs (requires code modification).

- Use FP16 Precision: Use mixed-precision training to roughly halve activation memory. FP32 master weights and optimizer states are not reduced.

- Consider a Smaller Model: Benchmark with ESM2-150M first; it may suffice for your task.

Q3: How do we decide which ESM2 model size to invest computational resources in for a new protein engineering project? A: Follow this validated protocol:

- Task Complexity Assessment: Classify your task. Use the table below for guidance.

- Resource Audit: Define your lab's "size" by available GPUs (see table).

- Pilot Experiment: Run a quick benchmark on a representative data subset using ESM2-8M, 35M, and 150M models.

- Scale Decision: Select the smallest model that meets your target performance threshold to conserve resources for full-scale experiments.

Table 1: ESM2 Model Sizes & Typical Lab Classifications

| Model Name | Parameters | Typical Use Case | Minimum GPU VRAM (Inference) | Minimum GPU VRAM (Fine-tuning) | Recommended Lab "Size" |
|---|---|---|---|---|---|
| ESM2-8M | 8 million | Intro, simple patterns | 2 GB | 4 GB | Small (1-2 entry GPUs) |
| ESM2-35M | 35 million | Basic structure/function | 4 GB | 8 GB | Small-Medium |
| ESM2-150M | 150 million | Mutational effect prediction | 8 GB | 16 GB | Medium (1-2 high-end GPUs) |
| ESM2-650M | 650 million | High-accuracy engineering | 16 GB | 32 GB+ | Large (Multi-GPU node) |
| ESM2-3B | 3 billion | State-of-the-art research | 40 GB+ | Multi-Node | Very Large (HPC cluster) |

Table 2: Experimental Protocol: Benchmarking Model for Task Fit

| Step | Procedure | Duration | Output |
|---|---|---|---|
| 1. Data Curation | Prepare a balanced, labeled dataset of 100-200 sequences. | 1-2 days | .fasta & .csv files |
| 2. Environment Setup | Install fair-esm, transformers, pytorch. | 1 hour | Configured conda environment |
| 3. Inference Test | Run embeddings on all models. | 2-4 hours | Embedding vectors per model |
| 4. Simple Classifier | Train a shallow logistic regression model on embeddings. | 1 hour | Accuracy score per ESM2 model |
| 5. Analysis | Plot accuracy vs. model size/compute time. | 30 min | Decision chart |
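Steps 3 to 5 come down to fitting the same shallow classifier on each model's embeddings and comparing the scores. The sketch below assumes you have already mean-pooled one embedding per sequence for each model, giving an `n_sequences x hidden_dim` array per model. It uses scikit-learn's `LogisticRegression` with stratified cross-validation, which is more stable than a single split when there are only 100 to 200 sequences.

```python
from typing import Dict

import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def probe_accuracy(embeddings_by_model: Dict[str, np.ndarray], labels: np.ndarray,
                   n_splits: int = 5, seed: int = 0) -> Dict[str, float]:
    """Mean cross-validated accuracy of a logistic-regression probe for each model."""
    cv = StratifiedKFold(n_splits=n_splits, shuffle=True, random_state=seed)
    results = {}
    for name, X in embeddings_by_model.items():
        # Same classifier and same folds for every model, so only the embeddings differ.
        probe = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
        results[name] = float(cross_val_score(probe, X, labels, cv=cv).mean())
    return results
```

Pair each accuracy with the extraction time you logged in step 3 to build the step 5 decision chart.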

Visualizations

Title: ESM2 Model Selection Workflow for Labs

Title: Fine-tuning ESM2 for Downstream Tasks

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in ESM2-Based Research |
|---|---|
| High-Quality Curated Dataset (e.g., from UniProt, PDB) | Provides clean, labeled sequences for model fine-tuning and evaluation. The foundation of task-specific learning. |
| GPU Cluster (NVIDIA A100/H100) or Cloud Credits (AWS, GCP, Azure) | Provides the essential computational horsepower for training and inferring with large models (650M+ parameters). |
| PyTorch / Hugging Face transformers Library | The primary software framework for loading, manipulating, and fine-tuning the ESM2 models. |
| Conda/Pip Environment Manager | Ensures reproducible software dependencies (specific versions of PyTorch, CUDA drivers, etc.). |
| Weights & Biases (W&B) or MLflow | Tracks experiments, logs training metrics, and manages model versions across different sizing experiments. |
| Jupyter Notebook / VS Code with Python | Interactive development environment for prototyping data pipelines and analyzing model outputs. |
| Bioinformatics Tools (HMMER, DSSP, etc.) | Used to generate traditional biological features for baseline comparisons against ESM2 embeddings. |

Task-Driven Selection: Matching ESM-2 Model Size to Your Biological Question

Troubleshooting Guides & FAQs

Q1: I'm working on a protein function prediction task and my ESM2 model is underperforming. Could it be the wrong model size? A: Possibly. Protein function prediction is a high-complexity task requiring nuanced understanding of structure-function relationships, and a small model (e.g., ESM2-8M) may lack the capacity. For this task, consider ESM2-650M or larger, especially if your curated dataset exceeds 10,000 labeled sequences. Ensure your dataset is balanced across functional classes.

Q2: During fine-tuning of ESM2-35M on my proprietary antibody sequence dataset (~5,000 sequences), I encounter GPU memory errors. What are my options? A: This is a common resource constraint. You have three primary options:

- Reduce Batch Size: Start by lowering the batch size to 8 or 4.

- Employ Gradient Accumulation: Mimic a larger batch size by accumulating gradients over multiple forward/backward passes before updating weights.

- Use Gradient Checkpointing: Trade compute time for memory by selectively recomputing activations during the backward pass.

For a 5k-sequence dataset, downsizing to the ESM2-8M model is also a viable path to explore first.

Q3: How do I define "task complexity" for my specific biological problem to guide model selection? A: Task complexity can be operationalized along these axes, framed within the ESM2 selection thesis:

| Complexity Axis | Low-Complexity Example | High-Complexity Example | Starting Size (Low Complexity) | Starting Size (High Complexity) |
|---|---|---|---|---|
| Output Specificity | Binary classification (e.g., soluble/insoluble) | Multi-label, fine-grained function prediction (e.g., EC number) | Small (8M-35M) | Large (650M-3B+) |
| Context Length | Short, single-domain proteins (< 400 AA) | Multi-domain proteins or protein complexes (> 1000 AA) | Any | 650M+ for long-range dependency |
| Data Signal Strength | Strong, conserved patterns (e.g., signal peptides) | Weak, evolutionarily distant patterns | Small | Large, with careful regularization |

Q4: I have a small, high-quality dataset (~1,000 structures). Is fine-tuning a large ESM2 model pointless? A: Not necessarily, but it requires a specific strategy. Directly fine-tuning a 3B-parameter model on 1,000 samples carries a high risk of overfitting. The recommended protocol is:

- Use the large model as a feature extractor. Pass your sequences through the frozen model to obtain per-residue or per-sequence embeddings.

- Train a lightweight predictor. Use the extracted embeddings as input to a simple classifier (e.g., a shallow MLP or Random Forest).

- Consider Parameter-Efficient Fine-Tuning (PEFT). Techniques like LoRA (Low-Rank Adaptation) can adapt a large model with very few trainable parameters, making it suitable for small datasets.
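Counting LoRA's trainable parameters shows why it suits small datasets. For each adapted `d x d` weight matrix, rank-`r` LoRA adds two factors of shape `d x r` and `r x d`, which is `2 * d * r` parameters. The sketch assumes adapters on two projections per layer (for example query and value; a common choice, not a requirement) and uses the layer count and hidden size of each ESM2 checkpoint. It ignores the trainable task head.

```python
# (number of transformer layers, hidden size) for each ESM2 checkpoint.
ESM2_SHAPES = {
    "8M": (6, 320),
    "35M": (12, 480),
    "150M": (30, 640),
    "650M": (33, 1280),
    "3B": (36, 2560),
    "15B": (48, 5120),
}


def lora_trainable_params(n_layers: int, hidden: int, rank: int, matrices_per_layer: int = 2) -> int:
    """Parameters added by rank-`rank` LoRA on `matrices_per_layer` square d x d projections per layer."""
    per_matrix = 2 * hidden * rank  # A: d x r, B: r x d
    return n_layers * matrices_per_layer * per_matrix


if __name__ == "__main__":
    for name, (layers, hidden) in ESM2_SHAPES.items():
        n = lora_trainable_params(layers, hidden, rank=8)
        print(f"ESM2-{name}: {n:,} LoRA parameters at rank 8")
```

For the 3B model at rank 8 this gives 36 x 2 x 2 x 2560 x 8 = 2,949,120 trainable parameters, roughly 0.1% of the full model. In practice the `peft` library's `LoraConfig` and `get_peft_model` handle the wiring. Check the attention module names of your ESM2 implementation before setting `target_modules`.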

Experimental Protocol: Benchmarking ESM2 Model Sizes for a Novel Task

Objective: Systematically evaluate the performance-resource trade-off of different ESM2 models on a user-defined task.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Task & Dataset Preparation: Define a clear prediction task. Split your dataset D into training (D_train), validation (D_val), and test (D_test) sets. Report |D|, |D_train|, |D_val|, and |D_test|.

- Baseline Establishment: Train a simple baseline model (e.g., logistic regression on sequence length and amino acid composition) on D_train. Evaluate on D_test.

- ESM2 Feature Extraction: For each ESM2 model size (e.g., 8M, 35M, 150M, 650M, 3B), compute sequence embeddings for D_train, D_val, and D_test using the frozen model.

- Predictor Training: Train identical shallow feed-forward networks on the extracted embeddings from each model size. Use D_val for early stopping.

- Full Fine-Tuning (Resource Permitting): Selectively fine-tune the larger models if D_train is sufficiently large (>10k samples). Use a low learning rate (1e-5 to 1e-4) and monitor D_val loss closely.

- Evaluation & Analysis: Compare all models on the held-out D_test set. Create a summary table of performance (e.g., AUC, Accuracy) vs. computational cost (GPU hours, memory footprint).
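The baseline in step 2 needs only sequence-derived features. A minimal sketch builds a 21-dimensional vector per sequence: 20 amino-acid fractions plus sequence length. It assumes the 20 standard amino acids and skips any other characters when computing fractions. The resulting matrix can go to scikit-learn's `LogisticRegression` alongside the ESM2 runs. Scale the length column, for example with `StandardScaler`, because it is not a fraction.

```python
from typing import List

import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}


def composition_features(seq: str) -> np.ndarray:
    """20 amino-acid fractions followed by the raw sequence length."""
    counts = np.zeros(len(AMINO_ACIDS))
    for aa in seq.upper():
        if aa in _INDEX:  # non-standard residues (X, B, U, ...) are skipped
            counts[_INDEX[aa]] += 1
    total = counts.sum()
    fractions = counts / total if total > 0 else counts
    return np.append(fractions, len(seq))


def feature_matrix(seqs: List[str]) -> np.ndarray:
    """Stack per-sequence features into an (n_sequences, 21) array."""
    return np.stack([composition_features(s) for s in seqs])
```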

Visualizations

Title: ESM2 Model Selection Decision Framework

Title: ESM2 Fine-Tuning Experimental Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function / Relevance |
|---|---|
| ESM2 Model Suite (8M, 35M, 150M, 650M, 3B, 15B) | Pre-trained protein language models of varying capacities. Foundation for transfer learning. |
| PyTorch / Hugging Face transformers Library | Essential software frameworks for loading, fine-tuning, and running inference with ESM2 models. |
| NVIDIA GPU (e.g., A100, V100, RTX 4090) | Accelerates training and inference. Memory (VRAM) is the key constraint dictating feasible model size and batch size. |
| LoRA (Low-Rank Adaptation) Modules | Parameter-efficient fine-tuning (PEFT) method. Allows adaptation of large models with minimal trainable parameters, ideal for small datasets. |
| Weights & Biases (W&B) / MLflow | Experiment tracking tools to log performance metrics, hyperparameters, and resource usage across different model sizes. |
| Labeled Protein Dataset (e.g., from UniProt, PDB) | Task-specific gold-standard data. Size, quality, and label balance are critical for guiding model selection. |

Best Practices for Rapid Sequence Annotation and Embedding Extraction

Troubleshooting Guides & FAQs

Q1: My ESM2 embedding extraction script runs out of memory (OOM) when processing long protein sequences (e.g., > 2000 AA). What can I do? A: Two limits apply. First, ESM2 models of every size were trained on sequences cropped to 1,024 tokens (about 1,022 residues plus the start and end tokens), so longer inputs fall outside the training distribution. Second, attention memory grows quadratically with sequence length, which is what triggers the OOM. Sequences beyond the limit must be split. Best practice is to use a sliding window with overlap (typically 50-100 residues) to capture context at the seams. Embed each window, then average the per-residue embeddings in the overlapping regions to build the full-length representation. For extremely long sequences, also consider moving down from the 36-layer ESM2 (3B) to the 12-layer (35M) or 6-layer (8M) model.
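The sliding-window procedure can be sketched as below. `embed_fn` is a placeholder for whatever returns per-residue embeddings for one chunk, for example the final hidden state of an ESM2 model with the start and end tokens removed. The defaults of a 1,022-residue window and a 100-residue overlap are assumptions to tune.

```python
from typing import Callable, List

import numpy as np


def window_starts(length: int, window: int, overlap: int) -> List[int]:
    """Start offsets of overlapping windows that together cover positions 0..length-1."""
    if length <= window:
        return [0]
    step = window - overlap
    starts = list(range(0, length - window + 1, step))
    if starts[-1] + window < length:  # make sure the final residues are covered
        starts.append(length - window)
    return starts


def sliding_window_embed(seq: str, embed_fn: Callable[[str], np.ndarray],
                         window: int = 1022, overlap: int = 100) -> np.ndarray:
    """Per-residue embeddings for a long sequence, averaging positions covered by several windows."""
    if not seq:
        raise ValueError("empty sequence")
    if not 0 <= overlap < window:
        raise ValueError("need 0 <= overlap < window")
    length = len(seq)
    sums, counts = None, np.zeros(length)
    for start in window_starts(length, window, overlap):
        chunk = seq[start:start + window]
        emb = np.asarray(embed_fn(chunk), dtype=float)  # expected shape: (len(chunk), dim)
        if emb.shape[0] != len(chunk):
            raise ValueError("embed_fn must return one row per residue")
        if sums is None:
            sums = np.zeros((length, emb.shape[1]))
        sums[start:start + len(chunk)] += emb
        counts[start:start + len(chunk)] += 1
    return sums / counts[:, None]
```

Simple averaging weights both windows equally at a seam. A variant that down-weights residues near each window's edges is a reasonable refinement if seams show artifacts.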

Q2: The predicted per-residue annotations (like solvent accessibility or secondary structure) from my embeddings are inaccurate for transmembrane proteins. How should I adjust my protocol? A: The general-purpose ESM2 training data may have underrepresented transmembrane-specific patterns. First, ensure you are using embeddings from the middle or later layers (e.g., layer 20-36 in ESM2 36L), as they capture more task-specific features. For a thesis on model size selection, you should compare performance: fine-tune a small prediction head on a labeled transmembrane dataset (e.g., OPM, PDBTM) using embeddings extracted from different ESM2 sizes (8M, 35M, 150M, 650M, 3B, 15B). Often, the 650M or 3B parameter model offers the best trade-off between accuracy and resource use for this specialized task.

Q3: When performing rapid annotation across a large proteome, what is the optimal batch size and ESM2 model size for a single GPU (e.g., NVIDIA A100 40GB)? A: See the quantitative data table below. The optimal batch size is a balance between speed and memory. For proteome-scale work, efficiency is key. The ESM2 8M or 35M model is often sufficient for generating high-quality embeddings for downstream training. Use the following table as a guideline:

Table 1: ESM2 Model GPU Memory Footprint & Throughput (Approximate, A100 40GB)

| ESM2 Model Size | Parameters | Avg. Memory per Seq (1024 AA) | Max Batch Size (1024 AA) | Seqs/Sec (Inference) |
|---|---|---|---|---|
| ESM2 8M | 8 Million | ~0.1 GB | 256+ | 120-180 |
| ESM2 35M | 35 Million | ~0.15 GB | 128 | 80-120 |
| ESM2 150M | 150 Million | ~0.4 GB | 64 | 40-70 |
| ESM2 650M | 650 Million | ~1.2 GB | 24 | 15-25 |
| ESM2 3B | 3 Billion | ~4.5 GB | 6 | 4-8 |
| ESM2 15B | 15 Billion | ~22 GB | 1 | 0.5-1 |
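Instead of fixing one batch size for a whole proteome, a common alternative is to sort sequences by length and fill each batch up to a token budget. Short proteins are then processed many at a time and long ones few at a time, with little padding. A minimal sketch: `max_tokens` is an assumed knob you would calibrate with a memory test like the one behind the table above. Padded length times batch size is what drives memory. Add 2 per sequence if you want to count the start and end tokens exactly.

```python
from typing import Dict, List


def token_budget_batches(seqs: Dict[str, str], max_tokens: int) -> List[List[str]]:
    """Group sequence IDs so that (batch size x longest sequence in batch) <= max_tokens.

    Sequences are sorted by length first, which keeps padding small. A single
    sequence longer than max_tokens still gets its own batch (split it first if needed).
    """
    order = sorted(seqs, key=lambda k: len(seqs[k]))
    batches, current, current_max = [], [], 0
    for seq_id in order:
        length = len(seqs[seq_id])
        new_max = max(current_max, length)
        if current and new_max * (len(current) + 1) > max_tokens:
            batches.append(current)  # adding this sequence would exceed the budget
            current, new_max = [], length
        current.append(seq_id)
        current_max = new_max
    if current:
        batches.append(current)
    return batches
```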

Q4: How do I choose which ESM2 layer's embeddings to use for my specific biological task (e.g., binding site prediction vs. fitness prediction)? A: Layer choice matters as much as model size. A commonly reported pattern is that earlier layers (e.g., 0-5) capture primary-structure features, middle layers (e.g., 6-20) capture secondary and tertiary patterns, and later layers (20+) are the most task-specific. These ranges are indicative and shift with model depth, since ESM2 models range from 6 to 48 layers. Run a layer-wise ablation study as part of your thesis methodology.

Experimental Protocol: Layer & Model Size Ablation Study

- Task Selection: Choose a benchmark (e.g., fluorescence prediction from ProteinGym, stability change prediction).

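- Embedding Extraction: For a fixed dataset, extract per-residue embeddings from multiple layers of multiple ESM2 model sizes (e.g., 35M, 150M, 650M, 3B). Choose layers that exist in each model: for example 6, 12, 18, 24, 30, and 36 for the 36-layer 3B model, but only up to layer 12 for the 12-layer 35M model. Alternatively, compare layers at equal relative depth.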

- Pooling: For a per-protein task, pool residue embeddings (e.g., mean pool).

- Prediction Head: Train a simple, identical downstream model (e.g., a linear regressor or a small MLP) on each set of embeddings.

- Evaluation: Compare performance (e.g., Spearman's ρ, MSE) across model sizes and layers. The optimal layer is often in the final third of the model.
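The ablation in the steps above can be sketched as follows. In Hugging Face `transformers`, calling the model with `output_hidden_states=True` returns one hidden-state tensor per layer plus the embedding layer. Mean-pool each with the attention mask. Here those arrays are assumed to be already converted to NumPy. A ridge regressor stands in for the "simple, identical downstream model", and Spearman's ρ is computed with SciPy. The attention mask also covers the start and end tokens; zero those positions if you want residues only.

```python
from typing import Dict

import numpy as np
from scipy.stats import spearmanr
from sklearn.linear_model import Ridge


def mean_pool(hidden: np.ndarray, mask: np.ndarray) -> np.ndarray:
    """hidden: (batch, seq_len, dim); mask: (batch, seq_len), 1 for positions to average."""
    m = mask[..., None].astype(float)
    return (hidden * m).sum(axis=1) / np.clip(m.sum(axis=1), 1.0, None)


def layerwise_probe(features_by_layer: Dict[int, np.ndarray], y: np.ndarray,
                    train_idx: np.ndarray, test_idx: np.ndarray, alpha: float = 1.0) -> Dict[int, float]:
    """Spearman's rho on the test split for an identical ridge probe per layer."""
    scores = {}
    for layer, X in features_by_layer.items():
        reg = Ridge(alpha=alpha).fit(X[train_idx], y[train_idx])
        scores[layer] = float(spearmanr(reg.predict(X[test_idx]), y[test_idx])[0])
    return scores
```

Running `layerwise_probe` once per model size, with keys naming the layer, gives the model-by-layer grid for the evaluation step.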

Q5: My extracted embeddings lead to overfitting when training a small downstream model. How can I mitigate this? A: This indicates your downstream model is learning noise from the high-dimensional embeddings (320 to 5,120 dimensions, depending on ESM2 size). First, apply regularization (dropout, L2 penalty) to your downstream network. Second, use dimensionality reduction (PCA or UMAP) on a representative sample of embeddings before training, or employ feature selection. Third, for your thesis, test whether a smaller ESM2 model's embeddings (e.g., 150M) generalize better than those of the largest (15B) when data is limited, since they may carry a lower risk of overfitting.
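The first two mitigations can be combined in one scikit-learn pipeline: scale, reduce with PCA, then fit an L2-penalized logistic regression. The component count and `C` below are placeholder values to tune. Putting PCA inside the pipeline means cross-validation refits it on each training fold, so test data never leaks into the dimensionality reduction.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def regularized_probe(n_components: int = 32, C: float = 0.1):
    """Scale -> PCA -> L2-penalized logistic regression (smaller C = stronger penalty)."""
    return make_pipeline(
        StandardScaler(),
        PCA(n_components=n_components),
        LogisticRegression(C=C, max_iter=1000),
    )


def cv_accuracy(X: np.ndarray, y: np.ndarray, n_components: int = 32, C: float = 0.1, cv: int = 5) -> float:
    """Mean cross-validated accuracy; PCA is refit inside every training fold."""
    return float(cross_val_score(regularized_probe(n_components, C), X, y, cv=cv).mean())
```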

Experimental Workflow Diagram

Title: Workflow for ESM2 Model Size Selection & Embedding Application

Layer-Wise Information Flow Diagram

Title: Optimal ESM2 Layer & Model Size for Different Biological Tasks

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for ESM2-Based Annotation & Embedding Experiments

| Item | Function & Rationale |
|---|---|
| ESM2 Model Suite (Hugging Face transformers) | Pre-trained protein language models in sizes from 8M to 15B parameters. The core reagent for embedding extraction. |
| PyTorch / JAX (with GPU acceleration) | Deep learning frameworks necessary for efficient model loading and batched inference. |
| Biopython | For parsing FASTA files, handling sequence data, and performing basic bioinformatics operations pre- and post-embedding. |
| Custom Downstream Head (e.g., PyTorch nn.Module) | A small, task-specific neural network (like a 2-layer MLP) to map embeddings to predictions (e.g., stability score). |
| Specialized Benchmark Datasets (e.g., ProteinGym, DeepSF, PDBbind) | Curated, labeled datasets for training and evaluating the downstream model on specific biological tasks. |
| Dimensionality Reduction Library (e.g., umap-learn, scikit-learn PCA) | To reduce embedding dimensionality, combat overfitting, and enable visualization. |
| High-Memory GPU Instance (Cloud or Local, e.g., NVIDIA A100/V100) | Essential for extracting embeddings from larger ESM2 models (650M, 3B, 15B) in a reasonable time. |
| Embedding Storage Solution (e.g., HDF5 files, NumPy .npy arrays) | Efficient formats for storing large volumes of high-dimensional embedding data for repeated analysis. |

Troubleshooting Guides & FAQs

Q1: During inference with a large ESM2 model (e.g., ESM2-3B or 15B), my process is killed due to "Out Of Memory" (OOM) errors. How can I proceed? A: This is a common hardware limitation. Consider the following solutions:

- Downsize the Model: Switch to a smaller, proven-effective model like ESM2-650M for initial experiments.

- Reduce Batch Size: Set batch size to 1 during inference.

- Disable Gradient Tracking: Run inference inside `torch.no_grad()` or `torch.inference_mode()` so activations are not kept for a backward pass. Gradient checkpointing (`model.gradient_checkpointing_enable()`) trades compute for memory only during training, so it does not help here.

- Employ Model Parallelism: For extremely large models, split the model across multiple GPUs using frameworks like DeepSpeed.

Q2: The contact maps predicted by a large ESM2 model are noisy and show poor precision for my small, disordered protein. What's wrong? A: All ESM2 sizes are trained on the same UniRef data. Larger models, however, may fit the structural patterns common in well-folded domains more closely, and those patterns may not transfer to disordered regions, which lack a single stable contact map. For disordered regions or small peptides, smaller models or specialized algorithms (like those trained on NMR ensembles) can outperform. Validate predictions against experimental biophysical data (e.g., CD spectroscopy, SAXS).

Q3: How do I choose the optimal ESM2 model size for a specific task like antibody structure prediction? A: Systematic benchmarking is required. You must:

- Define a Validation Set: Curate a non-redundant set of antibodies with known structures not in the ESM2 training set.

- Run Parallel Inference: Predict contacts/structures using ESM2 variants (e.g., 8M, 35M, 150M, 650M, 3B).

- Quantify Performance: Calculate precision for top-L/k contact predictions. If you also fold the sequences (e.g., with ESMFold, which is built on ESM2), calculate TM-scores for the resulting 3D models.

- Analyze the Trade-off: Plot performance vs. computational cost (memory, inference time) to identify the point of diminishing returns.

Q4: When fine-tuning ESM2 for a specialized contact prediction task, my loss fails to decrease. How should I debug? A: Follow this protocol:

- Check Data Leakage: Ensure no test sequences are present in your fine-tuning set via strict homology partitioning (e.g., <30% sequence identity).

- Freeze Early Layers: Try freezing the first 50-75% of the transformer layers and only fine-tune the top layers and the contact prediction head.

- Adjust Learning Rate: Use a much smaller LR (e.g., 1e-5) than pre-training and employ a learning rate scheduler.

- Inspect Gradient Flow: Use tools like `torch.autograd.grad` to check for vanishing gradients in early layers.
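The layer-freezing and gradient-inspection steps can be sketched in PyTorch as below. The helpers work on any `nn.ModuleList` of transformer blocks. For a Hugging Face `EsmModel` we assume that list is `model.encoder.layer`; verify this on your model, since wrapper classes nest it differently. Instead of `torch.autograd.grad`, this version reads each parameter's `.grad` after `loss.backward()`, which is usually enough to spot vanishing gradients.

```python
from typing import Dict

import torch.nn as nn


def freeze_first_fraction(layers: nn.ModuleList, fraction: float) -> int:
    """Disable gradients for the first `fraction` of blocks; return how many were frozen."""
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be in [0, 1]")
    n_freeze = int(len(layers) * fraction)
    for block in layers[:n_freeze]:
        for p in block.parameters():
            p.requires_grad_(False)
    return n_freeze


def grad_norms(module: nn.Module) -> Dict[str, float]:
    """L2 norm of each parameter's gradient; call after loss.backward()."""
    return {
        name: p.grad.norm().item()
        for name, p in module.named_parameters()
        if p.grad is not None  # frozen parameters have no gradient
    }
```

If the norms of the lowest trainable blocks are orders of magnitude smaller than those of the contact head, vanishing gradients are a likely cause of the stalled loss.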

Experimental Protocol: Benchmarking ESM2 Model Sizes for Contact Prediction

Objective: To determine the optimal ESM2 model size for predicting residue-residue contacts within a specific protein family (e.g., GPCRs).

Materials: See "Research Reagent Solutions" table below.

Methodology:

- Dataset Curation:

- Source all unique GPCR structures from the PDB.

- Extract sequences and generate multiple sequence alignments (MSAs), e.g., with `jackhmmer` against a FASTA sequence database such as UniRef90, or with `hhblits` against UniClust30.

- Split proteins into training/validation/test sets using a <25% sequence identity cutoff across sets.

- Compute ground-truth contact maps from structures (Cβ atoms, 8Å threshold).

- Model Inference:

- Load pre-trained ESM2 models of varying sizes (35M to 3B parameters).

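- For each target sequence, extract per-residue embeddings from the final transformer layer.

- Generate a predicted contact map with the model's contact head. Following the ESM-1b methodology, this head fits a logistic regression over symmetrized, APC-corrected attention maps from all layers and heads. In `fair-esm`, pass `return_contacts=True` to the forward call.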

- Analysis:

- For each prediction, calculate the precision of the top-L/5, top-L/2, and top-L long-range contacts (sequence separation ≥24).

- Aggregate precision scores across the entire test set for each model size.

- Record peak GPU memory usage and inference time per protein.
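The analysis step can be sketched with NumPy. The sketch assumes `pred` is an `L x L` matrix of contact probabilities, for example the `contacts` output of fair-esm when called with `return_contacts=True`. `true_map` is the binary ground truth built from Cβ coordinates. Glycine (no Cβ, so Cα is often substituted) and missing residues are not handled here. `min_sep` counts pairs with separation of at least 24; change it if you use a strict cutoff.

```python
import numpy as np


def contact_map(cb_coords: np.ndarray, threshold: float = 8.0) -> np.ndarray:
    """Binary contact map: 1 where the Cβ-Cβ distance is below `threshold` Å."""
    diff = cb_coords[:, None, :] - cb_coords[None, :, :]
    return (np.linalg.norm(diff, axis=-1) < threshold).astype(int)


def top_l_precision(pred: np.ndarray, true_map: np.ndarray, k: int = 5, min_sep: int = 24) -> float:
    """Precision of the top L/k predicted pairs with sequence separation >= min_sep."""
    L = pred.shape[0]
    i, j = np.triu_indices(L, k=min_sep)  # upper-triangle pairs with j - i >= min_sep
    if i.size == 0:
        return float("nan")  # protein too short to have long-range pairs
    n_top = max(L // k, 1)
    top = np.argsort(-pred[i, j])[:n_top]  # highest-probability pairs first
    return float(true_map[i[top], j[top]].mean())
```

Calling `top_l_precision` with `k=5`, `k=2`, and `k=1` gives the three precision columns. Averaging over test proteins gives one value per model size.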

Data Presentation

Table 1: Benchmarking ESM2 Model Sizes on GPCR Contact Prediction (Test Set Average)

| ESM2 Model (Parameters) | Top-L/5 Precision | Top-L Precision | GPU Memory (GB) | Inference Time (sec) |
|---|---|---|---|---|
| ESM2-35M | 0.42 | 0.21 | 1.2 | 0.5 |
| ESM2-150M | 0.58 | 0.34 | 2.8 | 1.8 |
| ESM2-650M | 0.69 | 0.48 | 6.5 | 5.2 |
| ESM2-3B | 0.71 | 0.50 | 18.7 | 22.1 |
| ESM2-15B | 0.72 | 0.51 | (OOM on 24GB) | N/A |

Table 2: Research Reagent Solutions

| Item | Function/Description | Example/Source |
|---|---|---|
| Pre-trained ESM2 Models | Protein language models of varying sizes for feature extraction. | Hugging Face transformers library, FAIR Model Zoo |
| Multiple Sequence Alignment (MSA) Tool | Generates evolutionary context from sequence databases. | jackhmmer (HMMER suite), hhblits |
| Contact Map Evaluation Scripts | Calculates precision metrics from predicted vs. true contacts. | contact_prediction tools from ESM repository |
| Structure Visualization Software | Visually inspect predicted contacts/structures. | PyMOL, ChimeraX |
| Gradient Checkpointing | Reduces GPU memory footprint during training by recomputing activations in the backward pass. | torch.utils.checkpoint |
| DeepSpeed | Enables model parallelism for extremely large models. | Microsoft DeepSpeed library |

Visualizations

Diagram Title: ESM2 Model Selection Workflow

Diagram Title: Troubleshooting OOM Errors

Technical Support Center: Troubleshooting ESM2 Fine-tuning

Troubleshooting Guides

Issue 1: Poor Downstream Task Performance After Fine-tuning

- Symptoms: Model accuracy on the target task (e.g., fluorescence prediction) is not significantly better than the pre-trained model or a random baseline. Validation loss plateaus early.

- Potential Causes & Solutions:

- Cause: Learning rate is too high, causing instability and failure to converge.

- Solution: Implement a learning rate sweep. Try lower rates (e.g., 1e-6, 5e-6, 1e-5) with a linear warmup (e.g., 10% of total steps) and cosine decay.

- Cause: Severe overfitting to the small downstream dataset.

- Solution: Apply aggressive regularization: increase dropout (0.3-0.5), use weight decay (0.01-0.1), and employ early stopping with a patience of 5-10 epochs.

- Cause: Inadequate task-specific adaptation of the model's final layers.

- Solution: Experiment with unfreezing more layers of the ESM2 model. Start by only training the classification head, then progressively unfreeze the top transformer layers (e.g., last 6, 3, 1).


Issue 2: "CUDA Out of Memory" Errors During Training

- Symptoms: Training crashes with GPU memory allocation errors, especially with larger batch sizes or longer sequences.

- Potential Causes & Solutions:

- Cause: Batch size is too large for the available GPU memory.

- Solution: Reduce the batch size (e.g., from 32 to 8 or 4). Use gradient accumulation to maintain a larger effective batch size. For example, set `batch_size=4` and `gradient_accumulation_steps=8` to simulate a batch size of 32.

- Cause: Protein sequences in the dataset are very long.

- Solution: Implement dynamic batching (sorting sequences by length) to minimize padding. Consider trimming or splitting extremely long sequences if biologically justified. Moving to a deeper variant (e.g., the 33-layer 650M or 36-layer 3B model) does not extend the usable length, because all ESM2 sizes were trained with the same 1,024-token maximum.

- Cause: High-precision (FP32) training is consuming excessive memory.

- Solution: Enable Automatic Mixed Precision (AMP) training (FP16). This can roughly halve activation memory and speed up training on recent GPUs.

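Gradient accumulation can be sketched in plain PyTorch. With micro-batches of 4 and `accumulation_steps=8`, the effective batch is 32. Dividing each micro-batch loss by `accumulation_steps` makes the summed gradients equal the mean-loss gradient of one large batch when micro-batches are equal-sized. Mixed precision (`torch.autocast` plus a gradient scaler) can be layered on top but is left out to keep the sketch short. With the Hugging Face `Trainer`, the equivalent settings are `per_device_train_batch_size` and `gradient_accumulation_steps` in `TrainingArguments`.

```python
from typing import Callable, Iterable, Tuple

import torch
import torch.nn as nn


def train_one_epoch(model: nn.Module, batches: Iterable[Tuple[torch.Tensor, torch.Tensor]],
                    optimizer: torch.optim.Optimizer, loss_fn: Callable,
                    accumulation_steps: int = 8) -> int:
    """One epoch with gradient accumulation; returns the number of optimizer steps taken."""
    model.train()
    optimizer.zero_grad()
    micro, n_steps = 0, 0
    for x, y in batches:
        # Scale so the summed gradients match the mean loss over the effective batch.
        loss = loss_fn(model(x), y) / accumulation_steps
        loss.backward()  # gradients accumulate in .grad across micro-batches
        micro += 1
        if micro % accumulation_steps == 0:
            optimizer.step()
            optimizer.zero_grad()
            n_steps += 1
    if micro % accumulation_steps != 0:
        # Flush a final partial group (its gradient is scaled down proportionally).
        optimizer.step()
        optimizer.zero_grad()
        n_steps += 1
    return n_steps
```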

Issue 3: Inconsistent Reproduction of Published Results

- Symptoms: Performance metrics vary significantly between different training runs with the same hyperparameters.

- Potential Causes & Solutions:

- Cause: Lack of random seed fixing.

- Solution: Set a fixed seed for all random number generators (Python, NumPy, PyTorch) at the start of your script.

- Cause: Non-deterministic GPU operations.

- Solution: Set `torch.backends.cudnn.deterministic = True` and `torch.backends.cudnn.benchmark = False`. Note: This may slow down training.

- Cause: Data loading order variance.

- Solution: Use a fixed DataLoader worker seed and disable shuffling for validation/testing.

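The three fixes above can be collected in one helper. This sketch seeds Python, NumPy, and PyTorch, optionally forces deterministic cuDNN kernels, and returns a `torch.Generator` for `DataLoader(..., generator=g)`. The `seed_worker` function follows the worker-seeding pattern from PyTorch's reproducibility notes. Pass it as `worker_init_fn`.

```python
import random

import numpy as np
import torch


def set_seed(seed: int, deterministic: bool = True) -> torch.Generator:
    """Seed Python, NumPy and PyTorch; optionally force deterministic cuDNN kernels."""
    random.seed(seed)
    np.random.seed(seed)
    torch.manual_seed(seed)
    torch.cuda.manual_seed_all(seed)  # silently ignored when CUDA is unavailable
    if deterministic:
        torch.backends.cudnn.deterministic = True
        torch.backends.cudnn.benchmark = False  # benchmark mode may choose different kernels per run
    g = torch.Generator()
    g.manual_seed(seed)  # controls DataLoader shuffling order
    return g


def seed_worker(worker_id: int) -> None:
    """DataLoader worker_init_fn: derive per-worker NumPy/random seeds from torch's seed."""
    worker_seed = torch.initial_seed() % 2**32
    np.random.seed(worker_seed)
    random.seed(worker_seed)
```

Typical use: `g = set_seed(42)`, then `DataLoader(train_ds, shuffle=True, generator=g, worker_init_fn=seed_worker)`, with `shuffle=False` for validation and test loaders.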

Frequently Asked Questions (FAQs)

Q1: Which ESM2 model size (8M, 35M, 150M, 650M, 3B, 15B parameters) should I choose for my specific protein engineering task? A: The choice is a trade-off. For smaller datasets (<10k samples), smaller models (8M, 35M) are less prone to overfitting and train faster. For larger datasets, larger models (150M, 650M) can achieve higher accuracy but require more computational resources. The 3B and 15B models are typically used for zero-shot or few-shot inference rather than full fine-tuning due to their extreme size. See the quantitative comparison table below.

Q2: Should I fine-tune the entire model or just the final layers? A: This depends on your dataset size and similarity to the pre-training data. For small, novel tasks, freeze the core model and only train a task head. For larger datasets or tasks where you want the model to adapt its understanding of protein semantics (e.g., stability), perform gradual unfreezing or full fine-tuning with a low learning rate.

Q3: How do I format my protein sequence data for fine-tuning ESM2?

A: ESM2 expects each sequence as a plain string of single-letter amino acid codes (the sequence lines of a FASTA record, without the header). You must tokenize them using the model's specific tokenizer. For a regression task (e.g., predicting melting temperature), your dataset should be a list of (sequence, float_value) pairs. For classification, it's (sequence, integer_label).
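A minimal sketch that turns FASTA text plus a label table into `(sequence, value)` pairs. The parser and the label dictionary are hypothetical helpers written for illustration; Biopython's `SeqIO.parse` is a more robust choice for real files. The resulting sequences are what you would pass to the tokenizer, for example `AutoTokenizer.from_pretrained("facebook/esm2_t12_35M_UR50D")`.

```python
from typing import Dict, Iterable, List, Optional, Tuple, Union

Label = Union[int, float]
# 20 standard amino acids plus the ambiguous/rare codes that the ESM vocabulary also contains.
VALID = set("ACDEFGHIKLMNPQRSTVWYXBUZO")


def read_fasta(lines: Iterable[str]) -> Dict[str, str]:
    """Parse FASTA text into {record_id: sequence}; record_id is the first word after '>'."""
    records: Dict[str, List[str]] = {}
    current: Optional[str] = None
    for line in lines:
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            current = line[1:].split()[0]
            records[current] = []
        elif current is None:
            raise ValueError("sequence line before first header")
        else:
            records[current].append(line.upper())
    return {k: "".join(v) for k, v in records.items()}


def to_pairs(seqs: Dict[str, str], labels: Dict[str, Label]) -> List[Tuple[str, Label]]:
    """Join sequences with labels by ID, rejecting unexpected residue characters."""
    pairs = []
    for seq_id, value in labels.items():
        seq = seqs[seq_id]
        bad = set(seq) - VALID
        if bad:
            raise ValueError(f"{seq_id}: unexpected characters {sorted(bad)}")
        pairs.append((seq, value))
    return pairs
```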

Q4: What is the typical workflow for a fine-tuning experiment? A: A standard workflow involves data preparation, model setup, training loop with validation, and final evaluation. The diagram below outlines this process.

Q5: How can I improve my model's generalization to unseen protein families? A: Use data augmentation techniques like reverse sequence, substring sampling (if structure is local), or adding minor noise to embeddings. Implement k-fold cross-validation across different protein family clusters to ensure robustness. Consider using LoRA (Low-Rank Adaptation) for more parameter-efficient and potentially generalizable fine-tuning.
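Cluster-aware cross-validation can be sketched with scikit-learn's `GroupKFold`. It assumes each sequence already has a cluster ID, for example the representative ID from an MMseqs2 clustering run at your chosen identity threshold. Each cluster lands entirely on one side of every split, so every test fold contains only unseen families.

```python
from typing import Iterator, Sequence, Tuple

import numpy as np
from sklearn.model_selection import GroupKFold


def family_aware_folds(cluster_ids: Sequence[str], n_splits: int = 5) -> Iterator[Tuple[np.ndarray, np.ndarray]]:
    """Yield (train_idx, test_idx) pairs in which no cluster appears on both sides."""
    groups = np.asarray(cluster_ids)
    placeholder_X = np.zeros((len(groups), 1))  # GroupKFold only needs the number of samples
    yield from GroupKFold(n_splits=n_splits).split(placeholder_X, groups=groups)
```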

Table 1: Comparison of ESM2 Model Sizes for Downstream Task Fine-tuning

| Model (Params) | Recommended Min. Dataset Size | Typical VRAM for Fine-tuning (BS=8) | Fluorescence Prediction (Spearman ρ)* | Stability Prediction (ΔΔG RMSE kcal/mol)* | Best Use Case |
|---|---|---|---|---|---|
| ESM2-8M | 1,000 - 5,000 sequences | 4-6 GB | 0.45 - 0.55 | 1.8 - 2.2 | Quick prototyping, very small datasets |
| ESM2-35M | 5,000 - 20,000 | 8-10 GB | 0.55 - 0.65 | 1.5 - 1.8 | Standard small-scale protein engineering |
| ESM2-150M | 20,000 - 100,000 | 12-16 GB | 0.65 - 0.75 | 1.2 - 1.5 | Large-scale mutational scans, lead optimization |
| ESM2-650M | 100,000+ | 24+ GB (Multi-GPU) | 0.75 - 0.82 | 1.0 - 1.3 | State-of-the-art accuracy for large datasets |
| ESM2-3B/15B | Few-shot inference | Inference only | N/A (Zero-shot) | N/A (Zero-shot) | Zero-shot variant effect prediction |

*Hypothetical performance ranges based on typical literature benchmarks. Actual results depend heavily on dataset quality and fine-tuning strategy.

Experimental Protocols

Protocol 1: Baseline Fine-tuning for Fluorescence Prediction

- Data Preparation: Split labeled fluorescence data (sequence, log fluorescence intensity) into 70/15/15 train/validation/test sets. Ensure no homologous protein leakage between splits using MMseqs2 clustering.

- Model Setup: Load `esm2_t12_35M_UR50D` from Hugging Face `transformers`. Add a regression head (linear layer) on top of the mean-pooled representations.

- Training: Use AdamW optimizer (lr=1e-5, weight_decay=0.05), MSE loss. Train for 50 epochs with a batch size of 8 (gradient accumulation if needed). Validate every epoch.

- Evaluation: Report Spearman's rank correlation coefficient (ρ) and Mean Squared Error (MSE) on the held-out test set.
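The Protocol 1 model setup can be sketched as a small PyTorch module. It mean-pools the encoder's last hidden state over real tokens and applies a linear layer. It assumes an encoder that returns an object with a `last_hidden_state` attribute, as Hugging Face's `EsmModel` does. The `AdamW` settings mirror the protocol. The tokenizer's attention mask also covers the start and end tokens, so they are included in the mean unless you mask them out.

```python
import torch
import torch.nn as nn


class MeanPoolRegressor(nn.Module):
    """Encoder -> masked mean over tokens -> linear layer giving one value per sequence."""

    def __init__(self, encoder: nn.Module, hidden_size: int):
        super().__init__()
        self.encoder = encoder
        self.head = nn.Linear(hidden_size, 1)

    @staticmethod
    def masked_mean(hidden: torch.Tensor, mask: torch.Tensor) -> torch.Tensor:
        m = mask.unsqueeze(-1).to(hidden.dtype)  # (batch, seq_len, 1)
        summed = (hidden * m).sum(dim=1)         # padding positions contribute 0
        return summed / m.sum(dim=1).clamp(min=1.0)

    def forward(self, input_ids: torch.Tensor, attention_mask: torch.Tensor) -> torch.Tensor:
        hidden = self.encoder(input_ids=input_ids, attention_mask=attention_mask).last_hidden_state
        return self.head(self.masked_mean(hidden, attention_mask)).squeeze(-1)


def make_optimizer(model: nn.Module) -> torch.optim.Optimizer:
    # Settings from Protocol 1: AdamW, lr=1e-5, weight_decay=0.05.
    return torch.optim.AdamW(model.parameters(), lr=1e-5, weight_decay=0.05)
```

With real weights, `encoder = AutoModel.from_pretrained("facebook/esm2_t12_35M_UR50D")` and `MeanPoolRegressor(encoder, encoder.config.hidden_size)` (480 for this checkpoint) would be the starting point, trained with `nn.MSELoss()`.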

Protocol 2: Progressive Unfreezing for Stability Prediction

- Initial Phase: Freeze all layers of `esm2_t30_150M_UR50D`. Train only the classification/regression head for 10 epochs with a higher learning rate (1e-4).

- Unfreezing: Unfreeze the last 3 transformer layers. Reduce learning rate to 5e-6. Train for another 20 epochs.

- Full Fine-tuning (Optional): For large datasets, unfreeze the entire model with a very low learning rate (1e-6) and train for 10-15 final epochs.

- Regularization: Use dropout (0.3) on the pooled output and early stopping (patience=7) based on validation loss.
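Protocol 2's phases and early-stopping rule can be written as a small, framework-agnostic schedule. This is a sketch under our own assumptions: 30 encoder layers (the "t30" in esm2_t30_150M_UR50D), 15 epochs for the optional final phase, and hypothetical names throughout. In PyTorch, applying a phase would mean setting requires_grad on the parameters of the listed layers (for a Hugging Face ESM model we believe these live under model.esm.encoder.layer[i]) and rebuilding the optimizer with the phase's learning rate.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Phase:
    name: str
    epochs: int
    lr: float
    unfreeze: str  # "head", "last3", or "all"


# Protocol 2 phases; 15 epochs for the optional full phase is our pick from "10-15".
PHASES = [
    Phase("head_only", 10, 1e-4, "head"),
    Phase("last_3_layers", 20, 5e-6, "last3"),
    Phase("full_model", 15, 1e-6, "all"),
]


def unfrozen_layers(spec: str, num_layers: int = 30) -> list[int]:
    """Indices of encoder layers to unfreeze; the task head is always trainable."""
    if spec == "head":
        return []
    if spec == "last3":
        return list(range(num_layers - 3, num_layers))
    if spec == "all":
        return list(range(num_layers))
    raise ValueError(f"unknown unfreeze spec: {spec!r}")


def phase_for_epoch(epoch: int, include_full: bool = True) -> Phase:
    """Return the phase that a zero-based epoch index falls into."""
    phases = PHASES if include_full else PHASES[:2]
    start = 0
    for phase in phases:
        if epoch < start + phase.epochs:
            return phase
        start += phase.epochs
    raise ValueError(f"epoch {epoch} is past the end of the schedule")


class EarlyStopping:
    """Signal a stop after `patience` consecutive epochs without a lower validation loss."""

    def __init__(self, patience: int = 7):
        self.patience = patience
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss: float) -> bool:
        if val_loss < self.best:
            self.best, self.bad_epochs = val_loss, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```

Whether the early-stopping counter should reset when a new phase begins is a design choice the protocol leaves open.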

Visualizations

ESM2 Fine-tuning Experimental Workflow

Decision Tree for ESM2 Model Size Selection

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Fine-tuning Experiments

| Item | Function/Description |
|---|---|
| Hugging Face transformers Library | Provides easy access to pre-trained ESM2 models, tokenizers, and training interfaces. |
| PyTorch / PyTorch Lightning | Core deep learning frameworks for defining the training loop, models, and data loaders. |
| Weights & Biases (W&B) / TensorBoard | Experiment tracking tools to log training/validation metrics, hyperparameters, and model artifacts. |
| MMseqs2 | Tool for clustering protein sequences to create non-redundant datasets and ensure no data leakage between splits. |
| CUDA-enabled NVIDIA GPU (e.g., A100, V100, RTX 4090) | Essential hardware for accelerating model training. VRAM size is the primary constraint. |
| LoRA (Low-Rank Adaptation) Implementations (e.g., peft library) | Allows efficient fine-tuning by training only small rank-decomposition matrices, reducing overfitting risk. |
| Scikit-learn | Used for standard data splitting, metrics calculation (e.g., Spearman ρ, RMSE), and simple baselines. |
| APE (Antibody-PEscuide) or ProteinMPNN | Specialized tools for generating variant sequences, useful for data augmentation or zero-shot comparison. |

Guidelines for Mutational Effect Prediction and Variant Impact Analysis

Troubleshooting Guides and FAQs

Q1: When using ESM2 for variant effect prediction, my predictions show low correlation with experimental deep mutational scanning (DMS) data. What could be the issue? A1: A common cause is a mismatch between model size and task. Performance varies across the ESM2 family (ESM2-8M to ESM2-15B) and across tasks. For variant effect prediction on a specific protein family, larger models (ESM2-650M or 3B+) generally capture deeper evolutionary constraints, but they are more expensive to run and need more labeled data if you fine-tune them. Check that your benchmark dataset (e.g., from ProteinGym) is not part of the model's pretraining data, and validate on a hold-out set from your specific DMS experiment.

Q2: How do I interpret the ESM2 log-likelihood scores (pseudo-log-likelihood, PLL) for a mutation? Are there standardized thresholds for "deleterious" or "benign"? A2: Raw PLL scores have no standardized thresholds. You must calibrate scores for your protein of interest against known benign variants (e.g., from gnomAD). A common method is to compute ΔPLL = mutant PLL - wild-type PLL. A negative ΔPLL means the model finds the mutant less likely than the wild type, which suggests a deleterious (for example, destabilizing) effect. For binary classification, use a reference set to establish percentiles.
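To make the ΔPLL definition concrete, the sketch below computes PLL by masking one position at a time and summing the log-probability of the true residue, then places a mutant's ΔPLL as a percentile within a benign reference set. The masked_logprob callable is a hypothetical stand-in for an ESM2 masked-LM forward pass (one pass per position, which is why full PLL is slow for long proteins); the "A23G" mutation notation and all helper names are our assumptions.

```python
import bisect
from typing import Callable, Sequence

# Hypothetical interface: masked_logprob(seq, i) returns log p(seq[i] | seq with position i masked).
MaskedLogProb = Callable[[str, int], float]


def pseudo_log_likelihood(seq: str, masked_logprob: MaskedLogProb) -> float:
    """PLL = sum over positions i of log p(x_i | x_{-i})."""
    return sum(masked_logprob(seq, i) for i in range(len(seq)))


def apply_substitution(wt: str, mutation: str) -> str:
    """Apply a substitution written like 'A23G' (1-based position)."""
    ref, pos, alt = mutation[0], int(mutation[1:-1]), mutation[-1]
    if wt[pos - 1] != ref:
        raise ValueError(f"{mutation}: wild type has {wt[pos - 1]} at position {pos}")
    return wt[: pos - 1] + alt + wt[pos:]


def delta_pll(wt: str, mutation: str, masked_logprob: MaskedLogProb) -> float:
    """ΔPLL = PLL(mutant) - PLL(wild type); negative means the mutant is less likely."""
    mutant = apply_substitution(wt, mutation)
    return pseudo_log_likelihood(mutant, masked_logprob) - pseudo_log_likelihood(wt, masked_logprob)


def percentile_vs_reference(score: float, reference_scores: Sequence[float]) -> float:
    """Percent of reference (e.g., benign) ΔPLL values at or below `score`."""
    ref = sorted(reference_scores)
    return 100.0 * bisect.bisect_right(ref, score) / len(ref)
```

A variant whose ΔPLL falls below, say, the 5th percentile of benign variants would then be a candidate for the "deleterious" bin; the percentile cutoff itself is a calibration choice.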

Q3: I am fine-tuning ESM2 on a small dataset of clinical variants. The model is overfitting. What strategies are recommended? A3: Employ the following: 1) Start from a smaller ESM2 model (e.g., ESM2-35M or 150M). 2) Apply aggressive dropout and weight decay. 3) Use layer-wise learning rate decay. 4) Consider virtual adversarial training, using unlabeled sequences from the same family. 5) Implement early stopping based on a separate held-out validation set.
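Layer-wise learning rate decay (item 3) gives layers closer to the input progressively smaller learning rates, so the general features learned in pretraining change least. The sketch below assigns a rate per parameter name; the name patterns (encoder.layer.N, embeddings) reflect our understanding of Hugging Face ESM parameter names and should be checked against model.named_parameters(), and decay=0.9 is an illustrative value rather than a recommendation from this guide.

```python
import re

# Assumed naming pattern for transformer blocks, e.g. "esm.encoder.layer.11.attention...".
LAYER_RE = re.compile(r"encoder\.layer\.(\d+)\.")


def layerwise_lrs(param_names, num_layers, base_lr=1e-5, decay=0.9):
    """Map parameter names to learning rates that shrink toward the input.

    Task-head (and any other unmatched) parameters get base_lr, encoder layer l gets
    base_lr * decay ** (num_layers - l), and embeddings get the smallest rate.
    """
    lrs = {}
    for name in param_names:
        match = LAYER_RE.search(name)
        if match:
            depth = num_layers - int(match.group(1))
        elif "embeddings" in name:
            depth = num_layers + 1
        else:
            depth = 0  # classification/regression head
        lrs[name] = base_lr * decay ** depth
    return lrs
```

The resulting rates can be grouped into torch.optim.AdamW parameter groups, one dictionary with "params" and "lr" keys per distinct rate.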

Q4: For a drug development project, we need to analyze variants in a specific signaling pathway. How can ESM2 be used for multi-protein system impact analysis? A4: ESM2 predicts per-protein effects. For pathway analysis: 1) Run variant impact prediction for each protein in the pathway individually. 2) Integrate scores using a pathway-specific heuristic (e.g., weighted sum based on node centrality). 3) Complement with ESMFold to model structural changes at interaction interfaces. 4) Use a consensus approach with other tools (e.g., AlphaFold2, DynaMut2) for interface stability.
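Step 2 leaves the pathway heuristic open. One minimal choice, sketched below, weights each protein's variant impact by its normalized degree centrality in the pathway graph. Degree centrality is our illustrative pick (betweenness or other measures are equally plausible), and the convention that larger scores mean more damaging (e.g., using -ΔPLL) is an assumption.

```python
from collections import defaultdict


def degree_centrality(edges):
    """Normalized degree centrality: number of neighbors divided by (n - 1)."""
    neighbors = defaultdict(set)
    for a, b in edges:
        if a != b:  # ignore self-loops
            neighbors[a].add(b)
            neighbors[b].add(a)
    n = len(neighbors)
    if n < 2:
        return {node: 0.0 for node in neighbors}
    return {node: len(adj) / (n - 1) for node, adj in neighbors.items()}


def pathway_impact(protein_scores, edges):
    """Centrality-weighted sum of per-protein impact scores (larger = more damaging).

    Proteins absent from the pathway graph contribute nothing.
    """
    centrality = degree_centrality(edges)
    return sum(centrality.get(protein, 0.0) * score for protein, score in protein_scores.items())
```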

Table 1: Performance of ESM2 Model Sizes on Key Variant Prediction Tasks

| Model Size (Parameters) | Spearman's ρ on ProteinGym (average) | Runtime per 100 Variants (CPU) | Minimum GPU Memory for Inference | Recommended Use Case |
|---|---|---|---|---|
| ESM2-8M | 0.28 | 45 sec | 2 GB | Large-scale pre-screen, education |
| ESM2-35M | 0.35 | 90 sec | 4 GB | Exploratory analysis on limited hardware |
| ESM2-150M | 0.41 | 4 min | 6 GB | General-purpose variant effect prediction |
| ESM2-650M | 0.48 | 12 min | 16 GB | High-stakes research, lead prioritization |
| ESM2-3B | 0.51 | 28 min | 32 GB (FP16) | Final validation, publication analysis |
| ESM2-15B | 0.53 | 110 min | 80 GB (FP16) | Benchmarking, novel method development |

Data sourced from recent benchmarks (2024) on ProteinGym and reported literature. Runtime tested on Intel Xeon 8-core CPU. Performance (ρ) is averaged across diverse protein families.

Experimental Protocols

Protocol 1: Benchmarking ESM2 Model Size for a Specific Protein Family

- Data Curation: Collate a deep mutational scanning (DMS) dataset for your target protein family (e.g., from the ProteinGym suite). Split data into training (60%), validation (20%), and test (20%) sets, ensuring no sequence overlap.

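- Score Extraction: For each ESM2 model size (8M, 35M, 150M, 650M, 3B), compute the pseudo-log-likelihood (PLL) for every variant in the dataset using the esm-variants Python package.

- Score Normalization: Calculate ΔΔPLL = (ΔPLL_variant - mean(ΔPLL_synonymous)) / std(ΔPLL_synonymous), with synonymous variants serving as a neutral baseline. Because ESM2 sees only amino-acid sequences, a variant that is synonymous at the DNA level is identical to the wild-type protein and scores ΔPLL = 0; if the synonymous set therefore has no spread, a different presumed-neutral reference set is needed for the denominator.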

- Evaluation: Compute the Spearman's rank correlation coefficient between the normalized ΔΔPLL scores and the experimental fitness scores for the held-out test set.

- Analysis: Plot correlation (ρ) vs. model size and inference cost to determine the optimal model for your task.
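The normalization and evaluation steps amount to a z-score followed by a rank correlation. The sketch below implements both with NumPy (in practice scipy.stats.spearmanr gives the same ρ). The reference argument is whichever neutral set you choose for the baseline; it must have nonzero spread, so the function refuses a set of identical scores. All function names are hypothetical.

```python
import numpy as np


def zscore_against_reference(delta_pll, reference_delta_pll):
    """ΔΔPLL = (ΔPLL - mean(reference)) / std(reference)."""
    ref = np.asarray(reference_delta_pll, dtype=float)
    sd = ref.std(ddof=1)
    if not np.isfinite(sd) or sd == 0:
        raise ValueError("reference set needs at least two distinct scores")
    return (np.asarray(delta_pll, dtype=float) - ref.mean()) / sd


def average_ranks(x):
    """1-based ranks; tied values share their average rank."""
    x = np.asarray(x, dtype=float)
    order = np.argsort(x, kind="mergesort")
    sorted_x = x[order]
    ranks = np.empty(len(x), dtype=float)
    i = 0
    while i < len(x):
        j = i
        while j + 1 < len(x) and sorted_x[j + 1] == sorted_x[i]:
            j += 1
        ranks[order[i : j + 1]] = (i + j) / 2.0 + 1.0
        i = j + 1
    return ranks


def spearman_rho(a, b):
    """Spearman's ρ is the Pearson correlation of the ranks."""
    return float(np.corrcoef(average_ranks(a), average_ranks(b))[0, 1])
```

Running spearman_rho once per model size on the held-out test set gives the ρ values to plot against inference cost.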

Protocol 2: Fine-tuning ESM2 for Clinical Variant Pathogenicity Classification

- Dataset Preparation: Gather pathogenic variants (from ClinVar, with review status) and presumed benign variants (from gnomAD, with high allele frequency). Balance the dataset. Use sequence homology reduction (CD-HIT at 90%) to avoid overfitting.

- Model Setup: Load a pre-trained ESM2 model (start with 150M). Add a classification head: a dropout layer (p=0.3) followed by a linear layer mapping to 2 output nodes (benign/pathogenic).

- Training: Use AdamW optimizer (lr=1e-5, weight_decay=0.01). Apply a learning rate scheduler with warmup. Use weighted cross-entropy loss to handle class imbalance. Train for up to 20 epochs with early stopping on validation AUC.

- Validation: Perform 5-fold cross-validation. Report AUC-ROC, AUC-PR, and F1 score on the test folds. Compare performance against baseline methods (e.g., SIFT, PolyPhen-2) using DeLong's test for AUC comparison.
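Two training details in this protocol are simple formulas. The sketch below shows the common "balanced" class-weight rule (the formula scikit-learn uses for class_weight="balanced") and a linear warmup followed by linear decay, the shape implemented by transformers.get_linear_schedule_with_warmup. The protocol only specifies "a scheduler with warmup", so the linear decay after warmup is our assumption, and the function names are hypothetical.

```python
from collections import Counter


def balanced_class_weights(labels):
    """w_c = n_samples / (n_classes * n_c): rarer classes get larger loss weights."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * counts[c]) for c in sorted(counts)}


def warmup_linear_lr(step, total_steps, warmup_steps, peak_lr=1e-5):
    """Ramp linearly from 0 to peak_lr over warmup_steps, then decay linearly to 0."""
    if step < warmup_steps:
        return peak_lr * step / max(1, warmup_steps)
    return peak_lr * max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))
```

The weights would typically be passed to torch.nn.CrossEntropyLoss through its weight argument, ordered by class index (benign = 0, pathogenic = 1 in this example's convention).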

Visualizations

Title: ESM2-Based Variant Effect Prediction Workflow

Title: Signaling Pathway Analysis with Variant Impact Node

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Mutational Effect Analysis with ESM2

| Item | Function in Analysis | Example/Supplier |
|---|---|---|
| ESM2 Pretrained Models | Core inference engine for scoring variant effects. Available in sizes from 8M to 15B parameters. | Hugging Face Transformers Library (facebook/esm2_t*) |
| High-Quality Variant Datasets | For benchmarking and fine-tuning model predictions against empirical data. | ProteinGym (DMS), ClinVar (pathogenic/benign), gnomAD (population) |
| Structural Modeling Suite | To visualize and assess predicted structural consequences of variants. | ESMFold, AlphaFold2, PyMOL, DynaMut2 |
| GPU Computing Resources | Accelerates inference and fine-tuning, especially for models >650M parameters. | NVIDIA A100/A6000 (40-80GB VRAM), Cloud instances (AWS p4d, GCP a2) |
| Variant Annotation Database | Provides biological context (conservation, domain, known PTM sites) for interpretation. | UniProt, Pfam, InterPro |
| Stable Python Environment | Reproducible environment for running ESM2 and dependencies. | Conda, Docker container with PyTorch, Transformers, Biopython |

Special Considerations for Low-Resource and High-Throughput Screening Environments

Troubleshooting Guides & FAQs

Q1: My computational resources are limited. Which ESM2 model size is most efficient for initial, broad-scale protein function prediction in a high-throughput screen? A: For low-resource, high-throughput environments, ESM2-8M (8 million parameters) is recommended for initial screening. It provides a favorable balance between speed and basic functional insight. Reserve larger models (e.g., 650M) for subsequent, targeted analysis of promising hits.

Q2: During high-throughput virtual screening of protein-ligand interactions using ESM2 embeddings, I encounter "CUDA out of memory" errors. What are my options? A: This is common when batching large libraries. Implement these steps:

- Reduce Batch Size: Start with a batch size of 1 and increase gradually.

- Use CPU Inference: For ESM2-8M or 35M, CPU inference is viable and avoids GPU memory issues.

- Optimize Embedding Storage: Save embeddings as 16-bit floats (FP16) instead of 32-bit (FP32) to halve memory usage without significant precision loss.

- Use Model Offloading: Use libraries such as accelerate to offload parts of larger models to CPU RAM.
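Two of the steps above can be sketched without a GPU. Instead of a fixed batch size, batches can be built under a token budget after sorting by length, which keeps padded batches of long sequences from exceeding memory; storing embeddings as float16 halves their size. token_budget_batches and to_fp16 are hypothetical helpers, the 4096-token budget is illustrative, and the +2 per sequence assumes the tokenizer adds one start and one end token, which is our understanding of ESM's tokenizer.

```python
import numpy as np


def token_budget_batches(seqs, max_tokens=4096):
    """Group sequence indices so (longest padded length) * (batch size) <= max_tokens.

    Sequences are sorted by length first so similar lengths share a batch; a single
    sequence longer than the budget still gets a batch of its own.
    """
    order = sorted(range(len(seqs)), key=lambda i: len(seqs[i]))
    batches, current, current_max = [], [], 0
    for i in order:
        length = len(seqs[i]) + 2  # assumed start/end special tokens
        new_max = max(current_max, length)
        if current and new_max * (len(current) + 1) > max_tokens:
            batches.append(current)
            current, new_max = [], length
        current.append(i)
        current_max = new_max
    if current:
        batches.append(current)
    return batches


def to_fp16(embeddings):
    """Cast embeddings to float16 for storage: half the bytes of float32."""
    return np.asarray(embeddings, dtype=np.float16)
```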

Q3: The ESM2 embeddings for my protein family show poor clustering correlation with experimental activity. How can I improve this without access to massive labeled datasets? A: This suggests the generalist ESM2 embeddings need task-specific adaptation.

- Approach: Apply low-rank adaptation (LoRA) fine-tuning. LoRA freezes the pre-trained model weights and injects trainable rank decomposition matrices into the transformer layers, dramatically reducing the number of parameters to train.

- Protocol:

- Gather your small, task-specific dataset (e.g., 100-500 sequences with activity labels).

- Install libraries: pip install transformers peft.

- Configure LoRA (target the attention modules; rank r=4 or 8).

- Train for a small number of epochs (3-10) using a cross-entropy loss (classification) or a regression loss such as MSE.

- Extract embeddings from the adapted model, which should now better reflect your task.
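The core of LoRA is small enough to show directly. The NumPy sketch below wraps a frozen weight W with trainable factors A (r × d_in) and B (d_out × r), so the layer computes W x + (alpha/r) B A x; B starts at zero, so the adapted layer initially reproduces the pretrained one. This illustrates the technique, not the peft implementation. With peft itself, a configuration along the lines of LoraConfig(r=8, lora_alpha=16, target_modules=["query", "value"]) passed to get_peft_model is typical; the module names are what we believe Hugging Face's ESM attention layers use, so confirm them with model.named_modules().

```python
import numpy as np


class LoRALinear:
    """y = W x + (alpha / r) * B (A x); W is frozen, only A and B would be trained."""

    def __init__(self, weight, r=8, alpha=16, seed=0):
        rng = np.random.default_rng(seed)
        d_out, d_in = weight.shape
        self.weight = weight                            # frozen pretrained weight
        self.A = rng.normal(0.0, 0.01, size=(r, d_in))  # trainable, small random init
        self.B = np.zeros((d_out, r))                   # trainable, zero init
        self.scale = alpha / r

    def __call__(self, x):
        # x has shape (batch, d_in); output has shape (batch, d_out).
        return x @ self.weight.T + self.scale * (x @ self.A.T) @ self.B.T

    def trainable_params(self):
        return self.A.size + self.B.size
```

For a 480-dimensional projection (the hidden size of ESM2-35M), r=8 adds 8 × (480 + 480) = 7,680 trainable parameters, compared with 230,400 in the frozen weight.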

Q4: For routine quality control of protein expression in high-throughput cell-based assays, can ESM2 replace multiple sequence alignment (MSA) tools that are computationally expensive? A: Yes, for rapid QC. ESM2's strength is single-sequence inference, eliminating the need for computationally intensive MSAs.

| Method | Avg. Time per Sequence (s) | Hardware | Primary Use Case |
|---|---|---|---|
| HHblits (MSA Generation) | ~30-60 | High-CPU Cluster | Deep evolutionary analysis |
| ESM2-35M (Inference) | ~0.1-0.3 | Standard Laptop CPU | High-throughput QC, sanity checks |
| ESM2-650M (Inference) | ~1-2 | Modern GPU (e.g., V100) | Detailed single-sequence feature extraction |

Protocol: ESM2-based Expression QC:

- Input: Your target protein sequence.

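- Processing: Generate per-residue embeddings using esm2_t6_8M_UR50D or esm2_t33_650M_UR50D, then mean-pool them into one vector per sequence so that proteins of different lengths can be compared.

- Analysis: Calculate the mean pairwise cosine similarity between the pooled embedding of your protein and those of a set of 50-100 known, well-expressed homologous sequences.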

- Threshold: Sequences with a mean similarity score < 0.7 (empirical) may have folding/expression issues and warrant further inspection before moving to costly experimental screens.
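The QC protocol reduces to mean pooling plus cosine similarity. The sketch below assumes you have already extracted per-residue embeddings as (length × dim) arrays with the start/end special-token positions removed; the function names are hypothetical, and the 0.7 cutoff is the guide's empirical value, best recalibrated on your own set of well-expressed proteins.

```python
import numpy as np


def mean_pool(per_residue):
    """Average an (L, d) per-residue embedding matrix into one d-dimensional vector."""
    return np.asarray(per_residue, dtype=float).mean(axis=0)


def mean_cosine_to_references(query_vec, reference_vecs):
    """Mean cosine similarity between one vector and each row of reference_vecs."""
    q = query_vec / np.linalg.norm(query_vec)
    refs = np.asarray(reference_vecs, dtype=float)
    refs = refs / np.linalg.norm(refs, axis=1, keepdims=True)
    return float((refs @ q).mean())


def flag_for_inspection(query_per_residue, reference_per_residue_list, threshold=0.7):
    """Return (score, flagged); flagged is True when the score falls below threshold."""
    query = mean_pool(query_per_residue)
    refs = np.stack([mean_pool(r) for r in reference_per_residue_list])
    score = mean_cosine_to_references(query, refs)
    return score, score < threshold
```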

Q5: How do I choose an ESM2 model size for a specific task when balancing accuracy and resource constraints? A: Follow this decision workflow:

ESM2 Model Selection Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in ESM2-Based Screening Pipeline |
|---|---|
| Pre-Trained ESM2 Models (8M, 35M, 150M, 650M) | Foundational models for generating protein sequence embeddings without needing MSAs. Smaller models enable high-throughput. |
| LoRA (Low-Rank Adaptation) Modules | "Reagent" for efficient fine-tuning. Allows task-specific adaptation of large ESM2 models with minimal labeled data (hundreds of examples). |
| FP16 Precision Converter | Reduces embedding memory footprint by 50%, crucial for storing millions of embeddings in high-throughput screens. |
| Cosine Similarity Metric | The primary "assay" for comparing embedding vectors to quantify sequence similarity, functional relatedness, or clustering. |
| UMAP/t-SNE Dimensionality Reduction | "Visualization dye" for projecting high-dimensional embeddings into 2D/3D space to identify clusters and outliers. |
| Small Labeled Dataset (Task-Specific) | The essential "calibrant" for fine-tuning. Even 100-500 curated examples can significantly steer model outputs. |
| Accelerate Library | Enables model and data parallelism, allowing large models to run on limited hardware via CPU offloading. |

Protocol: Fine-Tuning ESM2 with LoRA for Binding Site Prediction