ESM Protein Sequence Embedding Models: A Comprehensive Guide for AI-Driven Biomedical Discovery

This article provides a thorough exploration of Evolutionary Scale Modeling (ESM) for protein sequence embedding, tailored for computational biologists and drug discovery researchers.

Abstract

This article provides a thorough exploration of Evolutionary Scale Modeling (ESM) for protein sequence embedding, tailored for computational biologists and drug discovery researchers. We begin by establishing the foundational principles of protein language models and how ESM learns biological semantics from sequences. The guide then details practical methodologies for applying pre-trained ESM models (like ESM-2 and ESMFold) to tasks such as structure prediction, function annotation, and variant effect prediction. We address common challenges in implementation, including computational resource management and fine-tuning strategies. Finally, we present a comparative analysis of ESM against other embedding approaches, evaluating performance benchmarks and domain-specific utility. This synthesis aims to equip scientists with the knowledge to effectively integrate state-of-the-art protein language models into their research pipelines.

Understanding ESM: How AI Decodes the Language of Proteins

Protein Language Models (PLMs) are deep learning models trained on the evolutionary information contained in vast protein sequence databases. Inspired by natural language processing (NLP), they treat protein sequences as "sentences" composed of amino acid "words." By training on billions of sequences, PLMs learn the underlying "grammar" and "semantics" of protein structure and function, enabling them to generate informative, context-aware, fixed-dimensional vector representations known as semantic embeddings.

Within the thesis context of ESM (Evolutionary Scale Modeling) models, PLMs represent a paradigm shift from traditional alignment-based methods (like PSI-BLAST) to unsupervised, deep learning-based feature extraction. ESM models, such as ESM-2 and ESMFold, are specific, state-of-the-art instantiations of PLMs developed by Meta AI.

Key PLM Architectures and Performance Data

The following table summarizes key ESM model architectures and their capabilities, highlighting the scale of training and output dimensions.

Table 1: Comparative Overview of Major ESM Model Variants

| Model Name | Parameters | Training Sequences (Approx.) | Embedding Dimension | Key Capability | Publication Year |

|---|---|---|---|---|---|

| ESM-1b | 650M | 250M | 1280 | State-of-the-art at release for structure prediction tasks. | 2019 |

| ESM-2 | 8M to 15B | 65M (UniRef50) | 320 to 5120 | Improved architecture; scales reliably with parameter count. | 2022 |

| ESM-3 (Preview) | 98B | Not Disclosed | Not Disclosed | Multi-modal generation (sequence, structure, function). | 2024 |

| ESMFold | ESM-2 3B backbone plus folding head | 65M | 2560 | High-speed, high-accuracy atomic structure prediction from single sequence. | 2022 |

Experimental Protocol: Generating and Using Protein Embeddings

This protocol details the steps to generate semantic embeddings for a set of protein sequences using the ESM-2 model and to utilize them for a downstream task (e.g., protein family classification).

Protocol 3.1: Embedding Extraction with ESM-2

Objective: To convert raw protein sequences into fixed-length, semantically rich numerical vectors (embeddings).

Materials & Software:

- Python (v3.8+)

- PyTorch (v1.9+)

- Hugging Face `transformers` and `datasets` libraries.

- FASTA file containing query protein sequences.

- GPU (recommended for large batches).

Procedure:

- Environment Setup:

Load Model and Tokenizer:

Sequence Preparation and Tokenization:

Forward Pass and Embedding Extraction:
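The four steps above can be sketched as follows. This is a minimal illustration rather than the original protocol code: it assumes the Hugging Face `transformers` ESM classes and the smallest public checkpoint (`facebook/esm2_t6_8M_UR50D`), and the helper names `read_fasta` and `embed_sequences` are hypothetical. Mean pooling over all non-padding tokens is one common choice, not the only one.

```python
from typing import Dict, List


def read_fasta(text: str) -> Dict[str, str]:
    """Parse FASTA text into {record_id: sequence}."""
    records: Dict[str, List[str]] = {}
    current = None
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            current = line[1:].split()[0]  # id is the first word of the header
            records[current] = []
        elif current is not None:
            records[current].append(line.upper())
    return {name: "".join(parts) for name, parts in records.items()}


def embed_sequences(seqs: List[str], model_name: str = "facebook/esm2_t6_8M_UR50D"):
    """Return one mean-pooled embedding per sequence (tensor of shape [n, dim])."""
    # Heavy dependencies are imported here so read_fasta works without them.
    import torch
    from transformers import AutoTokenizer, EsmModel

    tokenizer = AutoTokenizer.from_pretrained(model_name)
    model = EsmModel.from_pretrained(model_name).eval()
    batch = tokenizer(seqs, return_tensors="pt", padding=True)
    with torch.no_grad():
        hidden = model(**batch).last_hidden_state  # [n, tokens, dim]
    # Average over non-padding tokens (this includes <cls> and <eos>).
    mask = batch["attention_mask"].unsqueeze(-1).to(hidden.dtype)
    return (hidden * mask).sum(dim=1) / mask.sum(dim=1)
```

Calling `embed_sequences(list(read_fasta(open("queries.fasta").read()).values()))` would give one vector per record; the file name is a placeholder.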

Protocol 3.2: Downstream Classification Using Embeddings

Objective: To train a simple classifier on extracted embeddings to predict protein family membership.

Procedure:

- Prepare Dataset: Use a labeled dataset (e.g., from Pfam). Extract embeddings for all sequences using Protocol 3.1.

- Train/Test Split: Split the embeddings and corresponding labels into training (80%) and testing (20%) sets.

- Train Classifier:

- Evaluate:
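As a rough sketch of the split, train, and evaluate steps, the example below uses a nearest-centroid classifier written with the standard library only. That classifier is a stand-in chosen for brevity; in practice a logistic regression or small MLP from an ML library would be the more usual choice. All function names are illustrative.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple

Vector = Sequence[float]


def split_80_20(items: List, seed: int = 0) -> Tuple[List, List]:
    """Shuffle and split into 80% train / 20% test."""
    shuffled = items[:]
    random.Random(seed).shuffle(shuffled)
    cut = int(0.8 * len(shuffled))
    return shuffled[:cut], shuffled[cut:]


def fit_centroids(train: List[Tuple[Vector, str]]) -> Dict[str, List[float]]:
    """Average the embeddings of each family to get one centroid per label."""
    grouped = defaultdict(list)
    for vec, label in train:
        grouped[label].append(vec)
    return {
        label: [sum(col) / len(vecs) for col in zip(*vecs)]
        for label, vecs in grouped.items()
    }


def predict(centroids: Dict[str, List[float]], vec: Vector) -> str:
    """Assign the label whose centroid is closest (squared Euclidean distance)."""
    return min(
        centroids,
        key=lambda label: sum((a - b) ** 2 for a, b in zip(vec, centroids[label])),
    )


def accuracy(centroids: Dict[str, List[float]], test: List[Tuple[Vector, str]]) -> float:
    """Fraction of held-out embeddings assigned to the correct family."""
    return sum(predict(centroids, vec) == label for vec, label in test) / len(test)
```

A random split like this ignores sequence homology; for real benchmarks, clustering by sequence identity before splitting avoids leakage between train and test.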

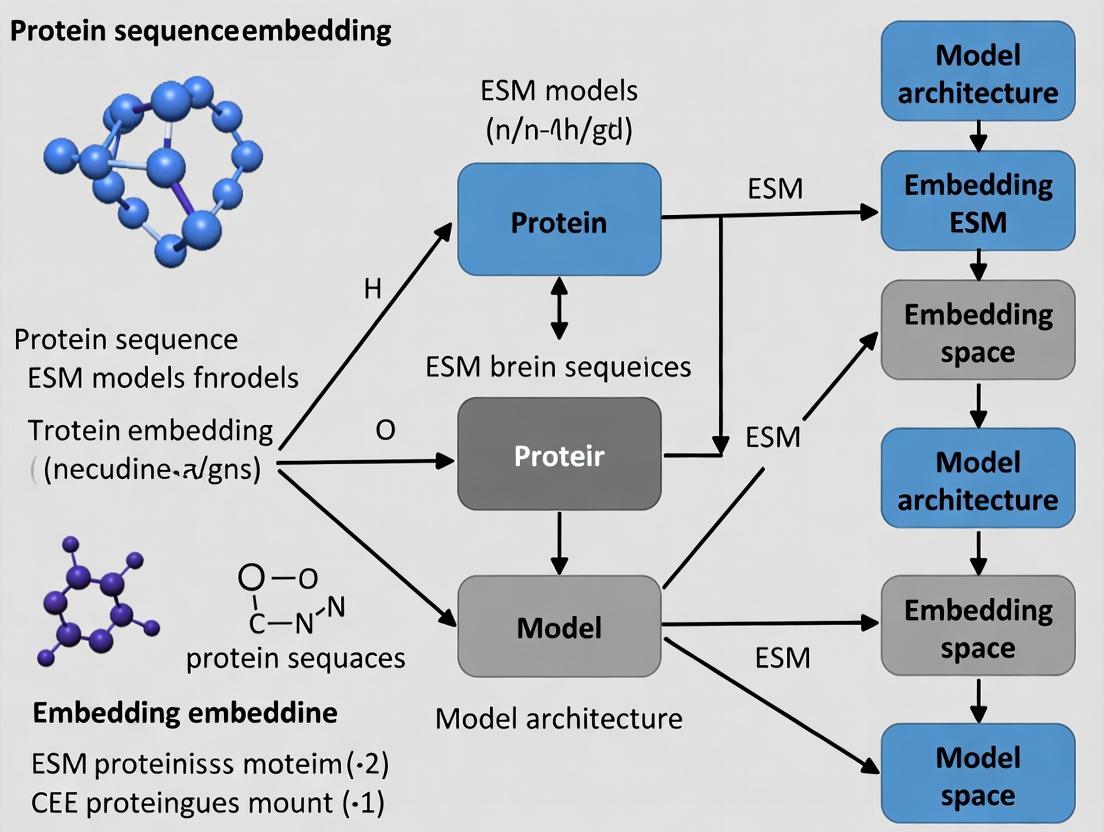

Visualization: PLM Workflow and Downstream Application

Title: PLM Embedding Generation and Downstream Use

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Resources for PLM-Based Research

| Item Name | Type | Function/Benefit | Source/Example |

|---|---|---|---|

| ESM-2 Model Weights | Pre-trained Model | Provides the core PLM for inference and fine-tuning. Multiple sizes available (8M to 15B parameters). | Hugging Face Hub (facebook/esm2_*) |

| Hugging Face transformers | Software Library | Provides easy-to-use APIs for loading, running, and fine-tuning transformer models like ESM. | https://huggingface.co/docs/transformers |

| UniRef Database | Protein Sequence Database | Curated, clustered sequence database used for training and benchmarking PLMs. | https://www.uniprot.org/uniref/ |

| PyTorch | Deep Learning Framework | The underlying tensor and neural network library required to run ESM models. | https://pytorch.org/ |

| ESMFold | Structure Prediction Tool | An end-to-end single-sequence structure predictor built on top of ESM-2 embeddings. | https://github.com/facebookresearch/esm |

| Pfam | Protein Family Database | A large collection of protein families, used as a benchmark for function prediction tasks. | http://pfam.xfam.org/ |

| ProteinMPNN | Protein Sequence Design | A graph-based model for sequence design, often used in tandem with structure predictors like ESMFold. | https://github.com/dauparas/ProteinMPNN |

Title: ESMFold Structure Prediction Pipeline

Within the broader thesis on leveraging deep learning for protein sequence embedding, the Evolutionary Scale Modeling (ESM) suite represents a paradigm shift. This progression from ESM-1b to ESM-2 and the subsequent ESMFold model encapsulates the transition from learning high-quality representations to enabling high-accuracy, computationally efficient structure prediction, thereby accelerating research in functional annotation and therapeutic design.

Application Notes

ESM-1b: Foundational Protein Language Modeling

Thesis Context: Establishes the premise that masked language modeling (MLM) on expansive evolutionary-scale datasets yields robust general-purpose protein sequence representations (embeddings).

- Core Innovation: A 650M parameter Transformer model trained via MLM on ~250 million protein sequences from UniRef.

- Primary Application: Learned embeddings serve as feature inputs for downstream tasks (e.g., contact prediction, secondary structure, variant effect prediction) without task-specific fine-tuning, demonstrating transfer learning efficacy.

- Limitation: While contacts derived from its attention maps informed structure, it was not a direct end-to-end structure predictor.

ESM-2: Scaling Laws and Improved Representations

Thesis Context: Tests the hypothesis that scaling model parameters (to 15B) and training data improves both sequence representations and direct structural information extraction.

- Core Innovation: A scaled Transformer architecture (8M to 15B parameters) trained with masked language modeling on UniRef protein sequences only; no structures are used during language-model pre-training.

- Primary Application: Produces state-of-the-art sequence embeddings. Critically, its intermediate representations (e.g., from layer 36 of the 36-layer, 3B parameter model) contain sufficient structural information to enable the development of ESMFold, bridging the sequence-structure gap more directly than ESM-1b.

ESMFold: High-Speed Structure Prediction

Thesis Context: Validates the thesis that embeddings from a protein language model (ESM-2) can be refined into accurate 3D coordinates with a much faster throughput than template-based or complex physics-based methods.

- Core Innovation: A folding head attached to the frozen ESM-2 language model. A folding trunk updates sequence and residue-pair representations, and a structure module converts them into 3D coordinates, outputting a structure in a single forward pass.

- Primary Application: Rapid atomic-level structure prediction (reported as roughly an order of magnitude faster than AlphaFold2, with larger gains on short sequences), enabling high-throughput structural proteomics, screening, and the analysis of metagenomic databases.

Quantitative Model Comparison

Table 1: Comparative Specifications of ESM Models

| Feature | ESM-1b | ESM-2 (Largest) | ESMFold (Structure Module) |

|---|---|---|---|

| Parameters | 650 million | 15 billion | ESM-2 Trunk + Head |

| Training Data | ~250M sequences (UniRef) | ~65M sequences (UniRef50/UniRef90) | ESM-2 + structural losses |

| Max Layers | 33 | 48 | 48 (trunk) + 8 (head) |

| Primary Output | Sequence Embeddings | Sequence Embeddings | 3D Atomic Coordinates |

| Inference Speed | Fast | Moderate (size-dependent) | Very Fast (~14 sec for a 384-residue protein on one V100 GPU, as reported) |

| TM-score (CAMEO) | N/A | N/A | ~0.8 (somewhat below MSA-based AF2) |

Table 2: Performance on Key Downstream Tasks

| Task / Benchmark | ESM-1b Performance | ESM-2 (3B) Performance | Notes |

|---|---|---|---|

| Contact Prediction (Top L/L) | ~0.38 (PSICOV) | >0.55 (PSICOV) | Directly from attention maps. |

| Secondary Structure (Q3 Accuracy) | ~0.78 (CB513) | ~0.84 (CB513) | Linear probe on embeddings. |

| Structure Prediction (TM-score) | Not Applicable | 0.72 (on long proteins) | Via ESMFold framework. |

Experimental Protocols

Protocol 1: Extracting Protein Sequence Embeddings with ESM-2

Purpose: To generate a fixed-dimensional vector representation for a protein sequence using a pre-trained ESM-2 model.

- Environment Setup: Install PyTorch and the `fair-esm` package. Use a GPU-enabled environment for larger models.

- Model Loading: Select a model size (e.g., `esm2_t36_3B_UR50D`) and load it using `esm.pretrained.load_model_and_alphabet` or the loader function named after the model.

- Sequence Preparation: Format the input as a list of (label, sequence) pairs. Use the model's batch converter to add the necessary tokens (e.g., `<cls>`, `<eos>`) and convert to token indices.

- Forward Pass: Pass the tokenized batch through the model with `repr_layers` set to the desired layer (e.g., 36). Set `return_contacts=True` if contact maps are needed.

- Embedding Extraction: From the output, extract the hidden-state representations (`["representations"][layer]`). The `<cls>` token representation, or the mean over residue positions, is often used as the global sequence embedding.

- Storage: Save the embeddings (NumPy arrays) for downstream analysis (e.g., classification, clustering).
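A compact sketch of this protocol with the `fair-esm` package is shown below. It assumes the default model `esm2_t33_650M_UR50D` for illustration and mean-pools residue embeddings; `final_layer` and `extract_embeddings` are hypothetical helper names. The layer count is read from the model name, which follows the `esm2_t<layers>_<size>_UR50D` pattern.

```python
import re


def final_layer(model_name: str) -> int:
    """Read the layer count from an ESM name such as 'esm2_t36_3B_UR50D'."""
    match = re.search(r"_t(\d+)_", model_name)
    if match is None:
        raise ValueError(f"cannot find layer count in {model_name!r}")
    return int(match.group(1))


def extract_embeddings(named_seqs, model_name="esm2_t33_650M_UR50D"):
    """Return ({label: mean residue embedding}, {label: contact map}).

    named_seqs is a list of (label, sequence) pairs.
    """
    # Imported lazily so final_layer can be used without the heavy dependencies.
    import torch
    import esm  # provided by the fair-esm package

    model, alphabet = esm.pretrained.load_model_and_alphabet(model_name)
    model.eval()
    layer = final_layer(model_name)
    _, _, tokens = alphabet.get_batch_converter()(named_seqs)
    with torch.no_grad():
        out = model(tokens, repr_layers=[layer], return_contacts=True)
    reps = out["representations"][layer]
    embeddings, contacts = {}, {}
    for i, (label, seq) in enumerate(named_seqs):
        n = len(seq)
        # Position 0 is <cls>; residues occupy 1..n, followed by <eos> and padding.
        embeddings[label] = reps[i, 1 : n + 1].mean(dim=0).numpy()
        contacts[label] = out["contacts"][i, :n, :n].numpy()
    return embeddings, contacts
```

The returned arrays can be written with `numpy.save` for the storage step.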

Protocol 2: Predicting Protein Structure with ESMFold

Purpose: To predict the full atomic 3D structure of a protein sequence using ESMFold.

- Model Loading: Load the pre-trained ESMFold model via `esm.pretrained.esmfold_v1()`. This loads both the frozen ESM-2 language model and the folding head.

- Input Processing: Provide the protein sequence as a string. The model handles tokenization internally. Batched inference is supported.

- Structure Inference: Run a single forward pass: `output = model.infer(sequence)`. No MSA generation or external database search is required.

- Output Parsing: The primary outputs are:

  - `positions`: 3D coordinates of the backbone and side-chain atoms (in Ångströms).

  - `plddt`: The predicted Local Distance Difference Test (pLDDT) score per residue.

- Visualization & Validation: Save the coordinates as a PDB file. Use pLDDT to color-code the model in visualization tools (e.g., PyMOL, ChimeraX). Calculate metrics like TM-score against a known experimental structure if available.
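The protocol above can be sketched as follows, assuming `fair-esm` is installed with its ESMFold dependencies. The sketch uses `infer_pdb`, which returns PDB-formatted text with pLDDT stored in the B-factor column; `plddt_per_residue` and `fold_to_pdb` are hypothetical helper names, and the model runs on CPU here unless moved to a GPU.

```python
from typing import List


def plddt_per_residue(pdb_text: str) -> List[float]:
    """Collect one pLDDT value per residue from the B-factor column of CA atoms."""
    scores = []
    for line in pdb_text.splitlines():
        # PDB fixed columns: atom name in 13-16, B-factor in 61-66 (1-based).
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            scores.append(float(line[60:66]))
    return scores


def fold_to_pdb(sequence: str, out_path: str) -> float:
    """Fold one sequence with ESMFold, write a PDB file, return the mean pLDDT."""
    # Imported lazily so the PDB helper can be used without the heavy dependencies.
    import torch
    import esm

    model = esm.pretrained.esmfold_v1().eval()
    with torch.no_grad():
        pdb_text = model.infer_pdb(sequence)
    with open(out_path, "w") as handle:
        handle.write(pdb_text)
    scores = plddt_per_residue(pdb_text)
    return sum(scores) / len(scores)
```

The saved file can be opened directly in PyMOL or ChimeraX and colored by B-factor to show per-residue confidence.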

Protocol 3: Fine-Tuning ESM Embeddings for a Specific Task

Purpose: To adapt the general-purpose ESM embeddings for a specialized prediction task (e.g., enzyme classification, solubility).

- Dataset Curation: Assemble a labeled dataset of protein sequences and corresponding labels. Split into training, validation, and test sets.

- Base Model Setup: Load a pre-trained ESM model (e.g., `esm2_t12_35M_UR50D` for efficiency). Add a task-specific classification/regression head on top.

- Training Strategy: Initially freeze the ESM trunk and train only the new head for a few epochs. Then, optionally unfreeze all or part of the trunk for full fine-tuning. Use a low learning rate (e.g., 1e-5) to avoid catastrophic forgetting.

- Evaluation: Monitor performance on the validation set. Use metrics appropriate for the task (e.g., AUC-ROC, accuracy, mean squared error).
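The two-phase training strategy can be expressed with two small helpers. They are a sketch under assumptions: the functions are duck-typed against anything exposing `parameters()` (as `torch.nn.Module` does), the head learning rate of 1e-3 is an illustrative value not taken from the protocol, and both function names are hypothetical.

```python
def set_trainable(module, trainable: bool) -> None:
    """Freeze (False) or unfreeze (True) every parameter of a module."""
    for param in module.parameters():
        param.requires_grad = trainable


def optimizer_groups(trunk, head, trunk_lr: float = 1e-5, head_lr: float = 1e-3):
    """Parameter groups so the pre-trained trunk moves more slowly than the new head.

    head_lr is an assumed value for illustration; trunk_lr follows the protocol.
    """
    groups = [
        {"params": list(head.parameters()), "lr": head_lr},
        {"params": [p for p in trunk.parameters() if p.requires_grad], "lr": trunk_lr},
    ]
    # Drop empty groups, e.g. the trunk group while the trunk is frozen.
    return [group for group in groups if group["params"]]
```

In phase one, call `set_trainable(trunk, False)` and build the optimizer from `optimizer_groups(trunk, head)`, for example with `torch.optim.AdamW`. In phase two, call `set_trainable(trunk, True)` and rebuild the optimizer so the trunk parameters are included at the lower rate.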

Visualization of ESM Evolution and Workflow

ESM Model Evolution and Output Flow

ESMFold End-to-End Prediction Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for ESM-Based Protein Research

| Item | Function & Relevance |

|---|---|

| ESM Python Library (fair-esm) | Core software package providing APIs to load pre-trained ESM models (1b, 2, Fold), perform inference, and extract embeddings. |

| PyTorch (GPU-enabled) | Deep learning framework required to run the computationally intensive ESM models. A CUDA-compatible GPU is essential for practical use of larger models. |

| Jupyter / Python Environment | For interactive data analysis, running protocols, and visualizing results (embeddings, structures). |

| Biopython / Pandas | For handling and preprocessing sequence data, managing datasets for fine-tuning, and parsing output. |

| Visualization Suite (PyMOL, ChimeraX) | Critical for visualizing and analyzing the 3D structural predictions from ESMFold, including coloring by pLDDT confidence metric. |

| HMMER / HH-suite | (Optional but Contextual) While ESMFold is single-sequence, these tools for generating MSAs provide a baseline comparison against traditional co-evolution methods. |

| PDB Database (RCSB) | Source of experimental protein structures for validating and benchmarking ESMFold predictions. |

| Compute Infrastructure (HPC/Cloud) | Access to high-performance computing or cloud GPUs (AWS, GCP, Azure) is necessary for fine-tuning models or large-scale inference with ESM-2 (15B) or ESMFold. |

Within the broader thesis on Evolutionary Scale Modeling (ESM) for protein sequence embedding research, this document details the application notes and protocols for the core architecture: transformer models trained on massive evolutionary sequence datasets. These models, such as ESM-2 and ESM-3, leverage the information latent in the evolutionary record to infer protein structure, function, and fitness, providing powerful generalized embeddings for downstream biomedical research and drug development.

Core Model Architectures & Quantitative Comparisons

The field is defined by several key models trained on the UniRef database and other evolutionary sequence clusters.

Table 1: Comparative Overview of Key Evolutionary Sequence Transformer Models

| Model Name (Release Year) | Key Developer(s) | Parameters | Training Dataset (Size) | Context Window | Key Output / Embedding Dimension | Primary Public Access |

|---|---|---|---|---|---|---|

| ESM-1b (2019) | Meta AI (FAIR) | 650M | UniRef50 (~30M seqs) | 1,024 | 1,280 | GitHub, Hugging Face |

| ESM-2 (2022) | Meta AI (FAIR) | 8M to 15B | UniRef50 (~30M seqs) & UniRef90 (65M+ seqs) | 1,024 to 4,096 | 320 to 5,120 | GitHub, Hugging Face |

| ESM-3 (2024) | EvolutionaryScale | 98B | Multi-source (Billion-scale) | N/A | N/A (Generative Model) | API, Limited Release |

| MSA Transformer (2021) | Meta AI (FAIR) | 100M | UniRef30 (26M MSAs) | 1,024 | 768 | GitHub, Hugging Face |

| ProtT5-XL (2021) | Rost Lab | 3B | BFD100 (2.1B seqs) | 512 | 1,024 | GitHub, Hugging Face |

Application Notes

Generating Sequence Embeddings (ESM-2)

Embeddings are the vector representations of input sequences extracted from a model's hidden layers, encapsulating evolutionary and structural information.

Protocol: Per-Residue Embedding Extraction Using ESM-2

- Environment Setup: Install PyTorch and the `fair-esm` library via pip or conda.

- Model Loading: Load a pre-trained ESM-2 model and its corresponding vocabulary.

- Data Preparation: Format protein sequence(s). Truncate sequences longer than the model's context window.

- Embedding Inference: Pass tokens through the model. Disable gradient calculation for speed and memory efficiency.

- Post-processing: Generate per-residue embeddings by excluding the specialized `<cls>`, `<eos>`, and `<pad>` tokens. The output is a 2D tensor of shape (sequence_length, embedding_dimension).
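The post-processing step is the part most often done wrong, so here is a small dependency-free sketch of it. It filters rows by token id rather than by fixed offsets, which also handles padded batches. The function names are illustrative; with `fair-esm`, the special ids would come from the alphabet's `cls_idx`, `eos_idx`, and `padding_idx` attributes.

```python
from typing import List, Sequence


def residue_positions(token_ids: Sequence[int], special_ids: Sequence[int]) -> List[int]:
    """Indices of tokens that are real residues (not <cls>, <eos>, or <pad>)."""
    special = set(special_ids)
    return [i for i, tok in enumerate(token_ids) if tok not in special]


def per_residue(
    embeddings: Sequence[Sequence[float]],
    token_ids: Sequence[int],
    special_ids: Sequence[int],
) -> List[List[float]]:
    """Keep only residue rows, giving shape (sequence_length, embedding_dimension)."""
    return [list(embeddings[i]) for i in residue_positions(token_ids, special_ids)]
```

With tensors, the same index list can be used directly, for example `representations[i][positions]`.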

Zero-Shot Mutation Effect Prediction (ESM-1v)

ESM-1v utilizes a masked language modeling objective to assess the likelihood of all possible amino acids at a given position.

Protocol: Scoring Missense Variants

- Model Loading: Load the ESM-1v model ensemble (five models).

- Sequence Masking: For a wild-type sequence (e.g., `"MKTIIALSYIF..."`), create a copy where the target residue position is replaced with the mask token (`<mask>`).

- Likelihood Calculation: Pass the masked sequence through the model. The model outputs logits over the vocabulary for the masked position; a softmax converts them into a probability distribution.

- Variant Scoring: Extract the probability for the wild-type amino acid and for the mutant amino acid. The log-odds score is: `log2(p_mutant / p_wildtype)`. A positive score means the model finds the mutant residue more likely than the wild type in that context, which suggests the mutation is evolutionarily tolerated.

- Ensemble Averaging: Repeat these steps with all five ESM-1v models and average the log-odds scores for robust prediction.
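The scoring arithmetic in the steps above can be written out directly. The sketch assumes the logits at the masked position have already been collected into a dictionary keyed by amino acid, one dictionary per ensemble member; how they are obtained from the model is left out, and the function names are illustrative.

```python
import math
from typing import Dict, List


def softmax(logits: Dict[str, float]) -> Dict[str, float]:
    """Convert per-amino-acid logits at the masked position into probabilities."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {aa: math.exp(value - peak) for aa, value in logits.items()}
    total = sum(exps.values())
    return {aa: e / total for aa, e in exps.items()}


def log_odds(logits: Dict[str, float], wild_type: str, mutant: str) -> float:
    """log2(p_mutant / p_wildtype) for a single model."""
    probs = softmax(logits)
    return math.log2(probs[mutant] / probs[wild_type])


def ensemble_score(per_model_logits: List[Dict[str, float]], wild_type: str, mutant: str) -> float:
    """Average the log-odds over all models in the ensemble."""
    scores = [log_odds(logits, wild_type, mutant) for logits in per_model_logits]
    return sum(scores) / len(scores)
```

Because the softmax normalizer cancels in the ratio, the score equals the logit difference divided by ln 2; the explicit softmax is kept here to mirror the protocol.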

Sequence Design via Inverse Folding (ESM-IF1)

ESM-IF1 is conditioned on a protein backbone structure to predict a sequence that fits that fold.

Protocol: Fixed-Backbone Sequence Design

- Input Preparation: Obtain a protein backbone structure (`.pdb` file). From this, extract the 3D coordinates of the backbone atoms (N, Cα, C) for each residue of the chosen chain.

- Model Inference: Use the ESM-IF1 API or library to encode the structural graph.

- Sequence Decoding: The model decodes autoregressively with a transformer decoder, generating an amino acid sequence for the given structure one position at a time.

- Output & Evaluation: The output is a designed sequence. Its compatibility with the input scaffold should be validated with folding prediction tools like AlphaFold2.
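A hedged sketch of the design protocol follows, based on the inverse-folding utilities shipped with `fair-esm` (which need extra dependencies beyond the base package). The helper names `sequence_recovery` and `design_sequence` are hypothetical. Native sequence recovery is added as a quick sanity check; it does not replace refolding the design.

```python
def sequence_recovery(designed: str, native: str) -> float:
    """Fraction of positions where the designed residue matches the native one."""
    if len(designed) != len(native):
        raise ValueError("sequences must have equal length")
    return sum(d == n for d, n in zip(designed, native)) / len(native)


def design_sequence(pdb_path: str, chain_id: str, temperature: float = 1.0):
    """Sample one sequence for a fixed backbone and report native recovery."""
    # Imported lazily so sequence_recovery works without the heavy dependencies.
    import esm
    import esm.inverse_folding

    model, _alphabet = esm.pretrained.esm_if1_gvp4_t16_142M_UR50()
    model = model.eval()
    structure = esm.inverse_folding.util.load_structure(pdb_path, chain_id)
    coords, native_seq = esm.inverse_folding.util.extract_coords_from_structure(structure)
    designed = model.sample(coords, temperature=temperature)
    return designed, sequence_recovery(designed, native_seq)
```

Lower temperatures tend to give sequences closer to the native one, while higher temperatures give more diverse designs.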

Visualized Workflows

Workflow for Training & Applying Evolutionary Sequence Transformers

ESM-1v Zero-Shot Variant Effect Prediction Protocol

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for ESM Applications

| Item / Resource | Function & Purpose | Example / Source |

|---|---|---|

| ESM Model Weights | Pre-trained parameters enabling inference without costly training. Foundational for all applications. | Hugging Face Hub (facebook/esm2_t*), ESM GitHub repository. |

| UniRef Databases | Clustered sets of protein sequences from UniProt, providing the evolutionary data for training and analysis. | UniRef50, UniRef90, UniRef100 from UniProt. |

| PDB (Protein Data Bank) | Repository of experimentally determined 3D protein structures. Used for validation, fine-tuning, and inverse folding tasks. | RCSB PDB (rcsb.org). |

| PyTorch / Deep Learning Framework | The essential software environment for loading models, performing tensor operations, and running inference. | PyTorch 1.12+, NVIDIA CUDA drivers for GPU acceleration. |

| High-Performance Computing (HPC) Cluster or Cloud GPU | Running large models (e.g., ESM-2 15B) or processing bulk sequences requires significant GPU memory and compute. | NVIDIA A100/A6000 GPUs, AWS EC2 (p4d instances), Google Cloud TPU. |

| Sequence Alignment Tool (Optional for MSA models) | Generates multiple sequence alignments for input into models like MSA Transformer. | HH-suite, JackHMMER. |

| Structure Visualization & Analysis Software | To visualize protein structures for design and validation of predictions from ESM-IF1 or ESMFold. | PyMOL, ChimeraX, Jupyter with py3Dmol. |

| Variant Annotation Databases | For benchmarking zero-shot variant effect predictions against experimental data. | Deep Mutational Scanning (DMS) datasets, ClinVar, gnomAD. |

What is a Protein Embedding? Defining the Vector Representation of Biological Function

Within the thesis on Evolutionary Scale Modeling (ESM) for protein sequence embedding research, a protein embedding is defined as a fixed-dimensional, real-valued vector representation that encodes semantic, structural, and functional information about a protein sequence. Generated by deep learning models—particularly protein language models (pLMs) like the ESM family—these embeddings transform discrete amino acid sequences into a continuous vector space where geometric relationships (distance, direction) correspond to biological relationships (evolutionary divergence, functional similarity, structural homology).
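To make the geometric claim concrete, the sketch below compares embeddings by cosine similarity and transfers the label of the nearest neighbor. The vectors in the usage line are toy values, not real ESM outputs, and the function names are illustrative.

```python
import math
from typing import Dict, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def nearest(query: Sequence[float], library: Dict[str, Sequence[float]]) -> str:
    """Return the library key whose embedding is most similar to the query."""
    return max(library, key=lambda name: cosine_similarity(query, library[name]))
```

For example, `nearest([0.9, 0.2, 0.0], {"kinase_a": [1.0, 0.1, 0.0], "protease_b": [0.0, 1.0, 0.2]})` picks `"kinase_a"`, the entry pointing in the most similar direction.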

Core Quantitative Performance Data

The efficacy of protein embeddings is benchmarked by their performance on predictive tasks. The following table summarizes key quantitative results from recent ESM model evaluations.

Table 1: Performance Benchmarks of ESM Model Embeddings on Standard Tasks

| Model (ESM Variant) | Parameters | Primary Training Data | Contact Prediction (Top-L/L) | Remote Homology Detection (Fold Classification Accuracy) | Function Prediction (Gene Ontology AUC) | Perplexity |

|---|---|---|---|---|---|---|

| ESM-1b | 650M | UniRef50 (29M seqs) | 0.32 | 0.81 | 0.78 | 3.60 |

| ESM-2 (15B) | 15B | UniRef50 (29M seqs) | 0.50 | 0.89 | 0.85 | 2.67 |

| ESM-2 (650M) | 650M | UniRef50 (29M seqs) | 0.41 | 0.85 | 0.82 | 3.07 |

| ESM-3 (98B) | 98B | Multidomain (1B+ seqs) | 0.62 | 0.92 | 0.91* | 1.89* |

| ESM-1v | 650M | UniRef90 (86M seqs) | 0.33 | 0.83 | 0.80 (Variant Effect) | N/A |

*Preliminary reported results; AUC for GO molecular function prediction.

Experimental Protocols for Utilizing Protein Embeddings

Protocol 3.1: Generating Embeddings from ESM-2 for a Novel Protein Sequence

Objective: To compute a per-residue and/or sequence-level embedding for a novel amino acid sequence using a pre-trained ESM-2 model. Materials: See "The Scientist's Toolkit" below. Procedure:

- Sequence Preparation: Obtain the protein amino acid sequence in single-letter code. Ensure it contains only the 20 standard amino acids. Truncate sequences longer than the model's maximum context length (e.g., 1024 for ESM-2 650M).

- Environment Setup: In a Python environment, install `fair-esm` and `PyTorch`. Load the pre-trained ESM-2 model and its corresponding alphabet/tokenizer.

- Tokenization & Batching: Convert the sequence to model tokens, adding a beginning-of-sequence (`<cls>`) and end-of-sequence (`<eos>`) token. Create a batch tensor.

- Forward Pass: Pass the batch through the model with `repr_layers` set to the final layer (e.g., 33 for the 650M model). Set `need_head_weights=False`.

- Embedding Extraction:

  - Sequence-level (`<cls>` token): Extract the vector representation corresponding to the `<cls>` token from the specified layer's output.

  - Per-residue: Extract the vector representations for all residue positions (excluding special tokens).

- Output: Save the resulting tensor(s) (size: [1, seq_len, 1280] for per-residue) as a NumPy array or PyTorch tensor for downstream analysis.

Protocol 3.2: Fine-tuning Embeddings for Protein Function Prediction

Objective: To adapt a pre-trained ESM model to predict Gene Ontology (GO) terms for uncharacterized proteins. Materials: See "The Scientist's Toolkit" below. Procedure:

- Dataset Curation: Assemble a dataset of protein sequences with annotated GO terms (e.g., from UniProt). Split into training, validation, and test sets, ensuring no homology leakage.

- Model Architecture: Use the pre-trained ESM model as a frozen or partially unfrozen encoder. Attach a multi-layer perceptron (MLP) classification head that emits one logit per GO term for multi-label prediction; a sigmoid converts the logits to probabilities at inference time.

- Training Loop:

- Compute sequence embeddings via the encoder for each batch.

- Pass the `<cls>` token embedding through the MLP head.

- Calculate loss using binary cross-entropy (`BCEWithLogitsLoss`, which applies the sigmoid internally, so the head should pass raw logits to the loss).

- Optimize using AdamW with a low learning rate (e.g., 1e-4). Employ gradient accumulation for large batches.

- Evaluation: Monitor performance via area under the precision-recall curve (AUPR) and F-max on the validation set. Evaluate final model on the held-out test set.
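F-max is less familiar than AUPR, so a minimal sketch may help. It follows the protein-centric definition commonly used in CAFA-style evaluation: precision is averaged over proteins with at least one prediction at the threshold, recall over all proteins. It assumes every protein has at least one true term and scans evenly spaced thresholds; the function name is illustrative.

```python
from typing import Dict, List, Set


def f_max(scores: List[Dict[str, float]], truth: List[Set[str]], steps: int = 100) -> float:
    """Protein-centric F-max over evenly spaced score thresholds."""
    best = 0.0
    for k in range(1, steps):
        threshold = k / steps
        precisions, recalls = [], []
        for predicted, true_terms in zip(scores, truth):
            called = {term for term, s in predicted.items() if s >= threshold}
            hits = len(called & true_terms)
            if called:  # precision only counts proteins with at least one call
                precisions.append(hits / len(called))
            recalls.append(hits / len(true_terms))  # recall counts every protein
        if not precisions:
            continue
        p = sum(precisions) / len(precisions)
        r = sum(recalls) / len(recalls)
        if p + r > 0:
            best = max(best, 2 * p * r / (p + r))
    return best
```

Here `scores` holds per-protein sigmoid outputs keyed by GO term and `truth` holds the annotated term sets in the same order.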

Visualizations: Workflows and Logical Relationships

Title: From Sequence to Vector: ESM Embedding Generation Workflow

Title: ESM Embedding Pipeline: Pre-training to Application

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Resources for Protein Embedding Research & Application

| Item | Function & Explanation | Example/Provider |

|---|---|---|

| Pre-trained ESM Models | Frozen transformer models providing the core embedding function. Different sizes offer trade-offs between accuracy and computational cost. | ESM-2 (650M, 3B, 15B) from Meta AI (GitHub); ESM-3 (98B) from EvolutionaryScale. |

| ESM Protein Language Model Library | Python package for loading models, tokenizing sequences, and extracting embeddings. | fair-esm (via PyTorch Hub or GitHub). |

| High-Quality Protein Sequence Database | Curated datasets for training, fine-tuning, and benchmarking. Provides biological ground truth. | UniProt (annotated sequences), UniRef (clustered), AlphaFold DB (structures). |

| Specialized Compute Hardware | Accelerates model inference and training. Essential for large models (ESM-2 15B, ESM-3). | NVIDIA GPUs (e.g., A100, H100) with >40GB VRAM. Cloud platforms (AWS, GCP, Azure). |

| Downstream Task Datasets | Benchmark datasets to evaluate embedding quality on specific biological problems. | Protein Data Bank (PDB) for structure, CAFA for function, DeepFri for ligand binding. |

| Vector Search Database | Enables efficient similarity search across millions of embedding vectors for annotation transfer. | FAISS (Facebook AI Similarity Search), Hnswlib, Pinecone. |

| Visualization & Analysis Suite | Tools for dimensionality reduction and clustering of embedding spaces to uncover patterns. | UMAP, t-SNE, scikit-learn, Matplotlib, Seaborn. |

Within the broader thesis on Evolutionary Scale Modeling (ESM) for protein sequence embedding research, three interconnected concepts form the methodological and philosophical foundation. Self-Supervised Learning (SSL) provides the framework for learning rich representations from unlabeled data, a necessity given the vastness of protein sequence space and the paucity of experimentally determined structures and functions. Masked Language Modeling (MLM) is the predominant SSL technique adapted from natural language processing (NLP) to the biological "language" of amino acids. The Evolutionary Scale provides the critical source of supervision—the inherent patterns and constraints learned from billions of years of evolution captured in multiple sequence alignments (MSAs) and vast sequence databases. Together, they enable the creation of deep contextual representations that encode structural, functional, and evolutionary information directly from primary sequences.

Core Conceptual Framework & Quantitative Landscape

Table 1: Foundational Concepts in Protein Language Modeling

| Concept | Core Principle | Application in Protein Research | Key Metric/Outcome |

|---|---|---|---|

| Self-Supervised Learning | Learning generalizable representations by solving "pretext" tasks on unlabeled data. | Leverages vast, growing protein sequence databases (e.g., UniRef) without need for manual annotation. | Representation quality assessed via zero-shot or few-shot performance on downstream tasks (e.g., structure prediction). |

| Masked Language Modeling | A SSL task where random tokens in an input are masked, and the model learns to predict them from context. | Models learn the statistical constraints and co-evolutionary patterns of amino acids in protein sequences. | Perplexity (lower is better) on held-out sequences; accuracy of masked residue recovery. |

| Evolutionary Scale | Utilizing the natural variation in homologous sequences across the tree of life as a source of information. | Provides the signal for learning which sequence positions are functionally or structurally critical (conservation) and which covary. | Effective number of sequences in alignment; evolutionary coverage. |
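The masking task and the perplexity metric from the table can be illustrated in a few lines. This sketch assumes the BERT-style corruption scheme (15% of positions selected; of those, 80% masked, 10% replaced with a random residue, 10% left unchanged); the exact rates for any given ESM model should be checked against its paper. The function names are illustrative.

```python
import math
import random
from typing import List, Tuple

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def mask_sequence(seq: str, rate: float = 0.15, seed: int = 0) -> Tuple[List[str], List[int]]:
    """Corrupt a sequence for MLM; return the tokens and the positions to predict."""
    rng = random.Random(seed)
    tokens, targets = list(seq), []
    for i in range(len(seq)):
        if rng.random() >= rate:
            continue
        targets.append(i)
        roll = rng.random()
        if roll < 0.8:
            tokens[i] = "<mask>"  # 80%: replace with the mask token
        elif roll < 0.9:
            tokens[i] = rng.choice(AMINO_ACIDS)  # 10%: random residue
        # remaining 10%: keep the original residue
    return tokens, targets


def perplexity(true_token_probs: List[float]) -> float:
    """exp of the mean negative log-likelihood over the predicted positions."""
    nll = -sum(math.log(p) for p in true_token_probs) / len(true_token_probs)
    return math.exp(nll)
```

A model that assigns probability 0.25 to every correct residue has perplexity 4, meaning it is as uncertain as a uniform choice among four options; a uniform guess over the 20 amino acids would give 20.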

Table 2: Evolutionary Scale of Major Protein Language Models (PLMs)

| Model (Year) | Training Data Source | Approx. Number of Parameters | Training Sequences | Key Evolutionary Insight Captured |

|---|---|---|---|---|

| ESM-2 (2022) | UniRef50 (clustered at 50% identity) | 8M to 15B | ~65 million | Single-sequence inference captures information traditionally requiring explicit MSAs. |

| ESMFold (2022) | UniRef50 | 3B (ESM-2 language model) plus folding head | ~65 million | Demonstrated that scale (model size + data) enables high-accuracy structure prediction from one sequence. |

| ProtT5 (2021) | BFD100, UniRef50 | 3B (Encoder) | ~2.1 billion (BFD) | Leverages encoder-decoder architecture for tasks like mutation effect prediction. |

| AlphaFold2 (2021) | MSAs from UniRef90, BFD, etc. | ~21M (Evoformer) | Tens of millions of MSAs | Explicitly uses MSAs and pair representations; not a pure single-sequence PLM but sets performance benchmark. |

Application Notes & Experimental Protocols

Protocol 1: Generating Protein Sequence Embeddings Using ESM-2

Purpose: To extract per-residue and sequence-level embeddings from a protein sequence using a pre-trained ESM-2 model for downstream tasks (e.g., fitness prediction, contact mapping).

Materials & Workflow:

- Input: Protein amino acid sequence (single-letter code, canonical 20 amino acids).

- Environment Setup:

  - Python 3.8+, PyTorch, fairseq, or the `transformers` library (if available for the model).

  - Install ESM: `pip install fair-esm`

- Procedure:

a. Load Model and Tokenizer:

b. Prepare Data:

c. Generate Embeddings:

d. Process Embeddings:

- Per-residue: Remove embeddings for `<cls>`, `<eos>`, and `<pad>` tokens. The `batch_tokens` provide the mapping.

- Per-protein: Pool residue embeddings (e.g., mean) or use the `<cls>` token representation if the model provides it.

- Output: A 2D tensor of shape (sequence_length, embedding_dimension) for residues, or a 1D tensor for the whole sequence.

Note: Embeddings are context-sensitive. Always use the full native sequence for embedding generation.

Protocol 2: Zero-Shot Prediction of Mutation Effects (Fitness Prediction)

Purpose: To assess the functional impact of amino acid substitutions without training on labeled mutant data, using the MLM head of a PLM.

Materials: Pre-trained PLM with MLM head (e.g., ESM-1v, a model trained for variant prediction), wild-type sequence, list of mutations.

Procedure:

- Tokenize Wild-type Sequence.

- For each mutation (e.g., M1K):

a. Create a masked sequence where the target position token is replaced with the mask token.

b. Pass the masked sequence through the model.

c. Extract the logits for the masked position from the MLM head.

d. Calculate the log-odds score: `log2( p(mutant) / p(wild-type) )` using the softmax probabilities from the logits. A positive score suggests the mutation is likely tolerated/beneficial; negative suggests deleterious.

- Aggregate scores across multiple mutations (e.g., for a multi-mutant variant).

Validation: Benchmark scores against deep mutational scanning (DMS) experimental data using Spearman's rank correlation.
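Two supporting pieces of this protocol are easy to get subtly wrong: converting mutation codes such as `M1K` into a zero-based index, and computing Spearman's rank correlation with ties. The standard-library sketch below covers both; in practice `scipy.stats.spearmanr` would usually be used for the correlation. The function names are illustrative.

```python
import re
from typing import List, Tuple


def parse_mutation(code: str) -> Tuple[str, int, str]:
    """Split 'M1K' into (wild_type, zero_based_index, mutant)."""
    match = re.fullmatch(r"([A-Z])(\d+)([A-Z])", code)
    if match is None:
        raise ValueError(f"bad mutation code: {code!r}")
    wild_type, position, mutant = match.groups()
    return wild_type, int(position) - 1, mutant


def ranks(values: List[float]) -> List[float]:
    """Average 1-based ranks, so tied values share the same rank."""
    order = sorted(range(len(values)), key=values.__getitem__)
    out = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            out[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return out


def spearman(x: List[float], y: List[float]) -> float:
    """Pearson correlation of the ranks of x and y."""
    rx, ry = ranks(x), ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy)
```

Before scoring, it is worth checking that the wild-type letter in each code matches the residue at the parsed index, since off-by-one numbering between the sequence and the DMS dataset is a common source of silent errors.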

Table 3: Research Reagent Solutions Toolkit

| Item | Function/Application | Example/Notes |

|---|---|---|

| Pre-trained PLMs (ESM-2, ProtT5) | Foundational models for feature extraction. Provide rich, contextual sequence representations. | Available from GitHub (ESM) or HuggingFace Hub. Choose model size based on compute. |

| Protein Sequence Databases | Source of unsupervised training data and evolutionary information. | UniRef (clustered), UniProtKB (annotated), BFD/Big Fantastic Database. |

| Structure Prediction Suites | For validating embeddings via predicted structural metrics. | ESMFold (fast, single-sequence), AlphaFold2/3 (MSA-based, high accuracy). |

| DMS Benchmark Datasets | Experimental data for evaluating fitness/function predictions. | ProteinGym, FireProtDB. Used for zero-shot and fine-tuning validation. |

| MSA Generation Tools | To provide evolutionary context for analysis or for training/tuning other models. | HHblits, Jackhmmer, MMseqs2. Compute-intensive but gold standard. |

| Fine-tuning Frameworks | To adapt foundational PLMs to specific downstream tasks (e.g., solubility, localization). | PyTorch Lightning, HuggingFace Transformers Trainer API. |

Visualizations

Diagram 1: Conceptual workflow from sequence to task

Diagram 2: Masked language modeling training step

Diagram 3: Evolutionary knowledge implicit in PLM predictions

Within the broader thesis on leveraging Evolutionary Scale Modeling (ESM) for protein sequence embedding research, efficient access to pre-trained models is foundational. The ESM model hub, hosted primarily on platforms like GitHub and Hugging Face, provides standardized, version-controlled repositories of models ranging from ESM-2 (8M to 15B parameters) to specialized variants like ESMFold. This document outlines protocols for accessing, loading, and applying these models for research and drug development applications.

The ESM suite offers models of varying scales, enabling trade-offs between computational cost and predictive performance. Key quantitative metrics are summarized below.

Table 1: Overview of Major Pre-trained ESM Models (ESM-2 Series)

| Model Name | Parameters | Layers | Embedding Dimension | Context (Tokens) | Recommended Use Case |

|---|---|---|---|---|---|

| ESM-2 8M | 8 Million | 6 | 320 | 1024 | Rapid prototyping, educational purposes |

| ESM-2 35M | 35 Million | 12 | 480 | 1024 | Medium-scale sequence embedding, mutational effect screening |

| ESM-2 150M | 150 Million | 30 | 640 | 1024 | High-accuracy residue-level predictions, contact map inference |

| ESM-2 650M | 650 Million | 33 | 1280 | 1024 | State-of-the-art contact & structure prediction, robust embeddings |

| ESM-2 3B | 3 Billion | 36 | 2560 | 1024 | Cutting-edge research, ensemble leader, detailed functional site analysis |

| ESM-2 15B | 15 Billion | 48 | 5120 | 1024 | Maximum accuracy for structure (ESMFold), complex phenotype prediction |

Table 2: Performance Benchmarks (Representative Tasks)

| Model (Size) | PDB Contact Map Top-L/L Accuracy | Fluorescence Landscape Spearman's ρ | Stability Prediction (Spearman's ρ) | Inference Speed (Sequences/sec)* |

|---|---|---|---|---|

| ESM-2 8M | 0.12 / 0.05 | 0.28 | 0.31 | ~220 (CPU) |

| ESM-2 150M | 0.49 / 0.27 | 0.68 | 0.59 | ~45 (CPU) |

| ESM-2 650M | 0.77 / 0.55 | 0.83 | 0.71 | ~12 (GPU: V100) |

| ESM-2 3B | 0.84 / 0.66 | 0.85 | 0.75 | ~5 (GPU: V100) |

| ESM-2 15B | 0.88 / 0.74 | 0.87 | 0.78 | ~1 (GPU: A100) |

*Speed is approximate and depends on hardware and sequence length (example: 100-300 aa).

Core Protocols for Accessing and Utilizing Models

Protocol 3.1: Initial Setup and Environment Configuration

Objective: To create a reproducible Python environment for accessing ESM models.

- Create and activate a new conda environment: conda create -n esm_research python=3.9 -y followed by conda activate esm_research.

- Install core dependencies via pip, for example: pip install torch fair-esm transformers.

- Verify installation by importing in Python: import esm, torch.

Protocol 3.2: Direct Model Loading from the Hugging Face Hub

Objective: To load a pre-trained ESM model and its associated tokenizer using the Hugging Face transformers library.

- Import necessary modules.

- Specify the model identifier from the Hugging Face hub (e.g., "facebook/esm2_t6_8M_UR50D").

- Load the tokenizer and model.

- The model is now ready for inference (see Protocol 3.4).
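The steps of Protocol 3.2 can be sketched as follows. This is a minimal example that assumes the transformers and torch packages are installed and that the checkpoints can be downloaded from the Hub; the helper names load_esm2 and embed_sequence are hypothetical, while AutoTokenizer, EsmModel, and the listed model identifiers are the ones published under the facebook organization.

```python
# Hugging Face Hub identifiers for the ESM-2 checkpoints listed in Table 1.
ESM2_HUB_IDS = {
    "8M": "facebook/esm2_t6_8M_UR50D",
    "35M": "facebook/esm2_t12_35M_UR50D",
    "150M": "facebook/esm2_t30_150M_UR50D",
    "650M": "facebook/esm2_t33_650M_UR50D",
    "3B": "facebook/esm2_t36_3B_UR50D",
    "15B": "facebook/esm2_t48_15B_UR50D",
}


def load_esm2(size="8M"):
    """Download (or read from cache) the tokenizer and encoder for one model size."""
    # Imported here so the lookup table above is usable without transformers installed.
    from transformers import AutoTokenizer, EsmModel

    model_id = ESM2_HUB_IDS[size]
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = EsmModel.from_pretrained(model_id)
    model.eval()  # disable dropout for inference
    return tokenizer, model


def embed_sequence(sequence, tokenizer, model):
    """Return the last hidden layer for one sequence, special tokens included."""
    import torch

    inputs = tokenizer(sequence, return_tensors="pt")
    with torch.no_grad():
        outputs = model(**inputs)
    # Shape: (len(sequence) + 2, hidden_size); the extra rows are <cls> and <eos>.
    return outputs.last_hidden_state[0]
```

A typical call would be tokenizer, model = load_esm2("8M") followed by embed_sequence("MKTVRQERLK", tokenizer, model). Starting with the 8M checkpoint keeps the first download small before moving to larger models.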

Protocol 3.3: Loading Models via the Official esm Python Package

Objective: To load models using the native esm library, which offers specialized functions for biological tasks.

- Import the esm package.

- Load a model and its alphabet (handles tokenization).

- Prepare sequence data as a list of tuples (identifier, sequence).

- Use the batch_converter to tokenize and prepare the batch.
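A minimal sketch of Protocol 3.3, assuming the fair-esm package is installed. The FASTA parser read_fasta_records and the wrapper load_and_tokenize are hypothetical helpers written for this example; esm.pretrained.esm2_t33_650M_UR50D() and alphabet.get_batch_converter() are the package's own loaders.

```python
def read_fasta_records(fasta_text):
    """Parse FASTA text into the (identifier, sequence) tuples that esm expects."""
    records, name, chunks = [], None, []
    for line in fasta_text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if name is not None:
                records.append((name, "".join(chunks)))
            header = line[1:].split()
            name, chunks = (header[0] if header else ""), []
        elif name is not None:
            chunks.append(line.upper())
    if name is not None:
        records.append((name, "".join(chunks)))
    return records


def load_and_tokenize(records):
    """Load ESM-2 650M and convert records into a padded batch of token IDs."""
    import esm  # installed with `pip install fair-esm`

    model, alphabet = esm.pretrained.esm2_t33_650M_UR50D()
    model.eval()  # inference mode: disables dropout
    batch_converter = alphabet.get_batch_converter()
    batch_labels, batch_strs, batch_tokens = batch_converter(records)
    return model, alphabet, batch_tokens


# Toy two-record FASTA input, for illustration only.
records = read_fasta_records(
    ">protein1 example\nMKTVRQERLK\nSIVRILERSK\n>protein2\nKALTARQQEV\n"
)
```

The batch converter pads shorter sequences on the right, so batch_tokens is a rectangular tensor with one row per record.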

Protocol 3.4: Protocol for Extracting Per-Residue Embeddings

Objective: To generate a vector embedding for each amino acid residue in a protein sequence, useful for downstream prediction tasks.

- Follow Protocol 3.3 steps 1-4 to load the model and tokenize sequences.

- Ensure no gradient computation for inference.

- Extract the embeddings from the specified layer.

- Generate per-residue embeddings by excluding padding and special tokens.
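The extraction steps of Protocol 3.4 might look like the sketch below. It assumes a model and alphabet already loaded with the esm package and a list of (identifier, sequence) records; the default layer=33 is the final layer of the 650M model and would need to change for other sizes. The helper names are illustrative.

```python
def residue_slice(sequence_length):
    """Token positions holding residues: <cls> sits at 0 and <eos> follows the last residue."""
    return slice(1, sequence_length + 1)


def per_residue_embeddings(model, alphabet, records, layer=33):
    """Return {identifier: tensor of shape (len(sequence), embedding_dim)}."""
    import torch

    batch_converter = alphabet.get_batch_converter()
    _, _, batch_tokens = batch_converter(records)
    with torch.no_grad():  # inference only, no gradient bookkeeping
        results = model(batch_tokens, repr_layers=[layer], return_contacts=False)
    token_reps = results["representations"][layer]

    embeddings = {}
    for row, (identifier, sequence) in enumerate(records):
        # Slicing by the true sequence length also drops right-hand <pad> tokens.
        embeddings[identifier] = token_reps[row, residue_slice(len(sequence))]
    return embeddings
```

Slicing by the known sequence length avoids having to search the token tensor for padding and end-of-sequence IDs.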

Protocol 3.5: Protocol for Contact Map Prediction

Objective: To predict the likelihood of amino acid pairs being in contact in the 3D structure.

- Load a model trained for or capable of contact prediction (e.g., ESM-2 650M or larger).

- Tokenize a single sequence (batch size of 1 for simplicity).

- Run the model with the return_contacts=True argument.

- Extract the contact map prediction.
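A short sketch of Protocol 3.5, assuming a model and alphabet loaded with the esm package, where the forward-pass flag is return_contacts=True. The ranking helper top_contacts and its default minimum sequence separation of 6 are assumptions made for this example.

```python
def predict_contacts(model, alphabet, identifier, sequence):
    """Return an (L, L) matrix of contact probabilities for one sequence."""
    import torch

    batch_converter = alphabet.get_batch_converter()
    _, _, tokens = batch_converter([(identifier, sequence)])
    with torch.no_grad():
        results = model(tokens, return_contacts=True)
    return results["contacts"][0]  # first (only) item in the batch


def top_contacts(contact_probs, k, min_separation=6):
    """Rank residue pairs (i < j) at least `min_separation` apart by probability."""
    pairs = []
    n = len(contact_probs)
    for i in range(n):
        for j in range(i + min_separation, n):
            pairs.append((float(contact_probs[i][j]), i, j))
    pairs.sort(reverse=True)
    return [(i, j, p) for p, i, j in pairs[:k]]
```

Filtering out pairs that are close in sequence keeps trivially adjacent residues from dominating the ranking; top_contacts accepts either a tensor or a nested list.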

Visualization of Workflows and Relationships

Title: ESM Model Access and Application Workflow

Title: ESM Model Input-Output and Application Pipeline

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Research Reagent Solutions for ESM-Based Research

| Item / Solution | Function / Purpose | Example / Specification |

|---|---|---|

| Pre-trained Model Weights | Core predictive function. Downloaded from official repositories. | esm2_t33_650M_UR50D.pt from GitHub releases or Hugging Face. |

| Model Alphabet (Tokenizer) | Converts amino acid sequences into numerical token IDs. Handles special tokens and padding. | esm.pretrained.load_model_and_alphabet() returns the alphabet object. |

| GPU Computing Instance | Accelerates model inference and training of downstream models. | AWS p3.2xlarge (V100), Google Cloud A2 (A100), or local NVIDIA GPU with >=16GB VRAM for larger models. |

| Sequence Dataset (FASTA) | Input data for embedding extraction or fine-tuning. | UniProt/Swiss-Prot canonical sequences, or custom mutant libraries in FASTA format. |

| Fine-tuning Dataset (Labeled) | For supervised task adaptation (e.g., stability, fluorescence). | CSV/TSV files with columns: sequence, label. |

| Embedding Storage Format | Efficient storage of high-dimensional embeddings for analysis. | Hierarchical Data Format (HDF5) or NumPy memory-mapped arrays (.npy). |

| Dimensionality Reduction Tool | Visualization and analysis of embedding spaces. | UMAP (umap-learn) or t-SNE (sklearn.manifold.TSNE). |

| Downstream ML Library | Building predictors on top of frozen embeddings. | Scikit-learn, PyTorch Lightning, or XGBoost. |

Practical Guide: Implementing ESM Embeddings for Protein Analysis

Within the broader thesis on advancing protein sequence embedding research, the ability to efficiently load and utilize pre-trained Evolutionary Scale Modeling (ESM) models is foundational. These models, trained on millions of diverse protein sequences, provide powerful, context-aware residue-level and sequence-level representations that serve as input features for downstream tasks in computational biology and drug development, such as function prediction, structure inference, and variant effect analysis. This protocol details the precise steps for loading the esm2_t33_650M_UR50D model, a 650-million parameter transformer with 33 layers, offering a balance between representational power and computational feasibility for many research settings.

Prerequisites & Environment Setup

Research Reagent Solutions

| Reagent/Solution | Function in Experiment | Specification/Notes |

|---|---|---|

| PyTorch | Deep learning framework for model loading and tensor operations. | Version 1.11+ recommended. CUDA support required for GPU acceleration. |

| fairseq | Facebook AI Research Sequence-to-Sequence Toolkit. Originally housed ESM models. | Now primarily used for legacy model loading. |

| esm Python Package | Official package for the ESM family of models. | Provides simplified, PyTorch-focused model loaders and utilities. |

| Biological Sequence Data | Input for the model. | Protein sequences in standard amino acid one-letter code (e.g., "MKTV..."). |

| High-Performance Compute (HPC) Environment | Provides resources for model inference. | GPU (e.g., NVIDIA A100, V100) with >16GB VRAM recommended for larger models. |

| Tokenizer (Integrated) | Converts amino acid sequences to model-compatible token indices. | Built into the esm package; maps residues to vocabulary indices. |

Installation Protocol

Core Protocol: Loading the ESM2 Model

Step-by-Step Code Implementation

Model Performance and Specification Data

Table 1: Key Specifications of Selected ESM2 Models

| Model Identifier | Parameters (M) | Layers | Embedding Dim | Training Tokens (B) | Recommended VRAM (GB) |

|---|---|---|---|---|---|

| esm2_t12_35M_UR50D | 35 | 12 | 480 | 1.1 | < 2 |

| esm2_t30_150M_UR50D | 150 | 30 | 640 | 10.0 | ~ 4 |

| esm2_t33_650M_UR50D | 650 | 33 | 1280 | 25.0 | ~ 16 |

| esm2_t36_3B_UR50D | 3000 | 36 | 2560 | 65.0 | > 32 |

| esm2_t48_15B_UR50D | 15000 | 48 | 5120 | 65.0 | > 80 |

Table 2: Inference Benchmarks for esm2_t33_650M_UR50D (A100-SXM4-40GB)

| Batch Size | Sequence Length | Inference Time (s) | Peak GPU Memory (GB) |

|---|---|---|---|

| 1 | 128 | 0.08 | 1.5 |

| 4 | 256 | 0.21 | 4.2 |

| 8 | 512 | 0.89 | 14.1 |

| 2 | 1024 | 0.65 | 11.8 |

Advanced Experimental Protocols

Protocol A: Extracting Embeddings from Specific Layers

Different layers capture different levels of information (e.g., lower layers for local structure, higher layers for remote homology). This protocol details multi-layer extraction.

Protocol B: Computing Contact Maps from Attention Maps

Attention maps from the transformer layers can be used to predict residue-residue contacts, informing structural hypotheses.

Protocol C: Fine-tuning ESM2 for a Downstream Prediction Task

This protocol outlines the initial setup for supervised fine-tuning on a custom dataset (e.g., fluorescence prediction).
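One possible setup for Protocol C is sketched below: freeze most of the backbone, leave the top few transformer layers trainable, and add a linear regression head on mean-pooled representations. The choice of two trainable layers, the helper names, and the single linear head are assumptions for illustration; the backbone attributes used (num_layers, embed_dim, layers) are those of the ESM-2 class in the esm package.

```python
def trainable_layer_indices(num_layers, n_trainable):
    """Indices of the top `n_trainable` transformer layers to leave unfrozen."""
    n_trainable = max(0, min(n_trainable, num_layers))
    return list(range(num_layers - n_trainable, num_layers))


def build_regressor(n_trainable=2):
    """Return (model, alphabet) for scalar regression, e.g. fluorescence."""
    from torch import nn
    import esm

    backbone, alphabet = esm.pretrained.esm2_t33_650M_UR50D()
    for param in backbone.parameters():
        param.requires_grad = False  # freeze everything first
    for idx in trainable_layer_indices(backbone.num_layers, n_trainable):
        for param in backbone.layers[idx].parameters():
            param.requires_grad = True  # then unfreeze the top layers

    class Regressor(nn.Module):
        def __init__(self):
            super().__init__()
            self.backbone = backbone
            self.head = nn.Linear(backbone.embed_dim, 1)

        def forward(self, tokens):
            last = self.backbone.num_layers
            reps = self.backbone(tokens, repr_layers=[last])["representations"][last]
            # Mean over non-padding tokens (<cls> and <eos> are included for brevity).
            mask = (tokens != alphabet.padding_idx).unsqueeze(-1)
            pooled = (reps * mask).sum(dim=1) / mask.sum(dim=1)
            return self.head(pooled).squeeze(-1)

    return Regressor(), alphabet
```

Training would then pair this model with a regression loss such as torch.nn.MSELoss and an optimizer restricted to parameters whose requires_grad is True.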

Visual Workflow

ESM2 Model Loading and Inference Workflow

Information Flow in the ESM2 Transformer Model

Within the broader thesis on Evolutionary Scale Modeling (ESM) for protein sequence embedding research, generating embeddings is a foundational task. ESM models, pre-trained on millions of diverse protein sequences, learn deep contextual representations. Per-residue embeddings capture the structural and functional context of each amino acid, while per-sequence embeddings provide a holistic, fixed-dimensional representation of the entire protein, essential for downstream tasks like protein classification, fitness prediction, and drug target identification.

Current Model Landscape & Performance Data

The table below summarizes the current ESM models and their key characteristics. Performance metrics such as accuracy on structure prediction or variant effect benchmarks illustrate their predictive power.

Table 1: Key ESM Model Variants and Capabilities (2024)

| Model Name (Release) | Parameters | Embedding Dimension (Per-Residue) | Max Context | Primary Use Case & Notable Performance |

|---|---|---|---|---|

| ESM-3 (2024) | 1.4B to 98B | 1536 (for 1.4B) | 4000 | State-of-the-art structure & function prediction. Outperforms ESM-2 on structure benchmarks. |

| ESM-2 (2022) | 8M to 15B | 5120 (for 15B) | 1024 | General-purpose residue-level representation. Achieved 0.787 TM-score on CAMEO. |

| ESM-1v (2021) | 650M | 1280 | 1024 | Variant effect prediction. Top performer on deep mutational scanning benchmarks. |

| ESM-1b (2021) | 650M | 1280 | 1024 | Established baseline for many downstream tasks. |

| ESMFold (2022) | 670M | 1280 | 1024 | End-to-end single-sequence structure prediction. Comparable to AlphaFold2 on some targets. |

Table 2: Comparative Embedding Generation Speed (Approximate)

| Model Size | Hardware (GPU) | Time per 100 residues (Per-Residue) | Time per Sequence (Per-Sequence, ~300aa) |

|---|---|---|---|

| ESM-2 8M | NVIDIA A100 | ~10 ms | ~30 ms |

| ESM-2 650M | NVIDIA A100 | ~50 ms | ~150 ms |

| ESM-2 3B | NVIDIA A100 | ~200 ms | ~600 ms |

| ESM-3 15B | NVIDIA H100 | ~500 ms | ~1.5 s |

Experimental Protocols

Protocol 1: Generating Per-Residue Embeddings with ESM-2/3

Objective: Extract a contextualized embedding vector for each amino acid position in a protein sequence.

Research Reagent Solutions:

- Model Weights (esm.pth): Pre-trained parameters of the ESM model. Function: Contains the learned biological knowledge.

- ESM Python Library (esm): Official PyTorch-based package. Function: Provides model loading, sequence tokenization, and inference utilities.

- FASTA File: Contains the target protein sequence(s). Function: Input data source.

- PyTorch & CUDA: Deep learning framework and parallel computing platform. Function: Enables efficient tensor computations on GPU.

Methodology:

- Environment Setup: Install fair-esm and torch (e.g., pip install fair-esm torch).

- Model Loading: Select the appropriate model (e.g., esm2_t33_650M_UR50D).

- Sequence Preparation: Tokenize the input sequence(s), adding start (<cls>) and end (<eos>) tokens.

- Inference: Pass tokenized sequences through the model in inference mode (model.eval()) with torch.no_grad().

- Embedding Extraction: The model's final layer output (or a specified internal layer) provides the (batch_size, sequence_length, embedding_dim) tensor of per-residue embeddings.

Protocol 2: Generating Per-Sequence Embeddings

Objective: Derive a single, global embedding vector that represents the entire protein sequence.

Research Reagent Solutions:

- Per-Residue Embeddings Tensor: Output from Protocol 1. Function: The basis for pooling.

- Pooling Function: Operation (e.g., mean, attention) to aggregate residue vectors. Function: Creates a fixed-size sequence-level representation.

Methodology:

- Generate Per-Residue Embeddings: Follow Protocol 1 to obtain the sequence_representations tensor.

- Apply Pooling Operation:

- Mean Pooling: Compute the mean over the sequence length dimension. Most common and robust.

- Attention Pooling: Use a learned attention mechanism to weight residues.

- <cls> Token: Use the embedding at the special start token position (index 0) as a sequence-level summary. ESM's masked-language-model pre-training does not optimize this token for sequence-level tasks, so its usefulness should be checked per task.
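The three pooling options can be compared in a dependency-free sketch that treats the per-residue embeddings as a list of equal-length vectors. In practice the same reductions would be tensor operations (for example a mean over the sequence dimension), and the attention scores would come from a learned layer; here they are passed in directly, which is an assumption made to keep the example self-contained.

```python
import math


def mean_pool(residue_vectors):
    """Average over the sequence-length axis; input is L vectors of size D."""
    length = len(residue_vectors)
    return [sum(column) / length for column in zip(*residue_vectors)]


def attention_pool(residue_vectors, scores):
    """Softmax-weighted average; `scores` stands in for a learned scoring layer."""
    peak = max(scores)
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    return [
        sum(w * x for w, x in zip(weights, column)) / total
        for column in zip(*residue_vectors)
    ]


def cls_pool(token_vectors):
    """Take the vector at index 0, where ESM places the <cls> token."""
    return list(token_vectors[0])


# Toy per-residue embeddings (L=2, D=2), for illustration only.
residues = [[1.0, 2.0], [3.0, 4.0]]
pooled = mean_pool(residues)
```

With uniform scores, attention pooling reduces to mean pooling, which is one way to sanity-check a learned pooling layer at initialization.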

Protocol 3: Benchmarking Embeddings on a Downstream Task (Protein Family Classification)

Objective: Evaluate the quality of generated embeddings by predicting protein family from embeddings using a simple classifier.

Research Reagent Solutions:

- Embedding Dataset: Pre-computed per-sequence embeddings for labeled proteins (e.g., from Swiss-Prot). Function: Training and testing data.

- Scikit-learn: Machine learning library. Function: Provides logistic regression/ SVM for rapid benchmarking.

- Evaluation Metrics (Accuracy, F1-score): Quantitative performance measures. Function: Assess embedding discriminative power.

Methodology:

- Data Preparation: Generate per-sequence embeddings for a labeled dataset (e.g., PFAM). Split into train/validation/test sets.

- Classifier Training: Train a logistic regression classifier on the training embeddings and labels.

- Evaluation: Predict on the held-out test set and calculate accuracy.

Visualizations

Workflow for Generating Embeddings with ESM

Downstream Applications of Protein Embeddings

- Model Selection: Use ESM-3 for cutting-edge performance, ESM-2 for balanced speed/accuracy, and ESM-1v for variant effect studies.

- Hardware Considerations: Larger models (3B+ parameters) require significant GPU memory (≥16GB). Optimize batch size accordingly.

- Reproducibility: Set random seeds for PyTorch (torch.manual_seed) and NumPy. Save embeddings with metadata (model version, pooling method).

- Pooling Choice: For per-sequence embeddings, mean pooling is recommended over the <cls> token for generalizability across tasks.

- Data Leakage: Ensure sequences in benchmark datasets are not in the model's pre-training data. Use provided splits or perform strict homology partitioning.

- Interpretation: Per-residue embeddings can be used for attention analysis or saliency maps to identify functionally important residues.

Within the broader thesis exploring Evolutionary Scale Modeling (ESM) for protein sequence embeddings, this application note addresses a central challenge in computational biology: the accurate, high-throughput prediction of protein function. ESM models, pre-trained on millions of diverse protein sequences, generate deep contextual embeddings that capture structural and functional constraints. This note details how these embeddings serve as superior input features for machine learning models tasked with annotating proteins with Gene Ontology (GO) terms, bypassing the need for explicit structural or evolutionary linkage data.

Application Notes

Protein function prediction models leverage ESM embeddings as fixed feature vectors. State-of-the-art approaches involve fine-tuning the embeddings or using them as input to specialized neural network architectures. Performance is benchmarked using standardized metrics on datasets like CAFA (Critical Assessment of Function Annotation). Key advantages include:

- Sequence-Only Input: Requires only the amino acid sequence, enabling function prediction for novel proteins with no homologs of known function.

- Rich Feature Representation: Embeddings implicitly encode physicochemical properties, secondary structure, and residue-residue interactions.

- Multi-Label Prediction: Models are trained to predict hundreds or thousands of GO terms across the Molecular Function (MF), Biological Process (BP), and Cellular Component (CC) ontologies simultaneously.

Quantitative Performance Summary (Representative Models)

Table 1: Performance comparison of ESM-embedding-based function prediction models on CAFA3 benchmark.

| Model / Method | Embedding Source | Max F1 (BP) | Max F1 (MF) | Max F1 (CC) | Key Architectural Innovation |

|---|---|---|---|---|---|

| DeepGOPlus (Baseline) | PSI-BLAST Profiles | 0.39 | 0.53 | 0.61 | CNN on sequence & homology |

| TALE | ESM-1b (Layer 33) | 0.45 | 0.58 | 0.68 | Transformer on embeddings & sequence |

| ESM-GO | ESM-2 (8M-35) | 0.51 | 0.64 | 0.72 | Fine-tuning ESM-2 with GO-specific heads |

| GOFormer | ESM-2 (650M) | 0.54 | 0.66 | 0.74 | Graph Transformer over GO hierarchy |

Note: F1 scores are the maximum achieved over the precision-recall curve. Data synthesized from CAFA3 assessments and recent publications (2022-2024).

Experimental Protocols

Protocol 1: Training a GO Term Prediction Model Using Pre-computed ESM Embeddings

Objective: To train a multi-label classifier for GO term annotation using fixed protein embeddings from ESM-2.

Materials: See "Scientist's Toolkit" below.

Procedure:

- Data Curation:

- Download a curated protein-GO term annotation dataset (e.g., from UniProt).

- Split data into training, validation, and test sets strictly by protein sequence similarity (<30% identity) to avoid homology bias.

- Filter GO terms to those with sufficient annotations (e.g., ≥50 training examples).

- Feature Generation:

- For each protein sequence in the datasets, generate an embedding using the esm.pretrained.esm2_t33_650M_UR50D() model.

- Extract the per-residue embeddings and compute the mean representation across the sequence to obtain a single 1280-dimensional feature vector per protein.

- Save vectors as NumPy arrays or PyTorch tensors.

- Model Architecture & Training:

- Implement a neural network with:

- Input Layer: (1280 dimensions)

- Hidden Layers: 2-3 fully connected layers (e.g., 1024, 512 units) with ReLU activation and Dropout (p=0.3-0.5).

- Output Layer: Sigmoid-activated neurons equal to the number of filtered GO terms.

- Use Binary Cross-Entropy (BCE) loss with label smoothing.

- Optimize using AdamW optimizer (lr=1e-4) with early stopping based on validation loss.


- Evaluation:

- Predict on the held-out test set.

- Calculate per-term and overall precision, recall, and F1 score across varying prediction thresholds.

- Generate Precision-Recall curves and compute the area under the curve (AUPR).
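The threshold sweep in the evaluation step can be sketched as a protein-centric maximum F1, in the spirit of the CAFA Fmax metric. This is a simplified illustration: the input layout (a set of true terms and a term-to-score dictionary per protein) and the threshold grid from 0.01 to 0.99 are assumptions, and GO-hierarchy propagation of annotations is not handled.

```python
def fmax(y_true, y_score, thresholds=None):
    """Maximum F1 over thresholds.

    y_true:  list of sets of true GO terms, one set per protein.
    y_score: list of {term: predicted probability} dicts, one per protein.
    """
    if thresholds is None:
        thresholds = [i / 100 for i in range(1, 100)]
    best = 0.0
    for t in thresholds:
        precisions, recalls = [], []
        for truth, scores in zip(y_true, y_score):
            predicted = {term for term, s in scores.items() if s >= t}
            hits = len(predicted & truth)
            if predicted:  # precision is averaged only over proteins with predictions
                precisions.append(hits / len(predicted))
            recalls.append(hits / len(truth) if truth else 0.0)
        if not precisions:
            continue
        precision = sum(precisions) / len(precisions)
        recall = sum(recalls) / len(recalls)
        if precision + recall > 0:
            best = max(best, 2 * precision * recall / (precision + recall))
    return best


# Toy annotations and predictions, for illustration only.
truth = [{"GO:1", "GO:2"}, {"GO:3"}]
preds = [{"GO:1": 0.9, "GO:2": 0.8, "GO:3": 0.1}, {"GO:3": 0.7, "GO:1": 0.2}]
best_f1 = fmax(truth, preds)
```

For benchmark reporting, the official CAFA evaluation scripts remain the reference implementation.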

Protocol 2: Zero-Shot Function Prediction via Embedding Similarity

Objective: To infer putative GO terms for a novel protein by finding proteins with similar ESM embeddings in an annotated database.

Procedure:

- Reference Database Construction:

- Pre-compute and index ESM-2 mean embeddings for all proteins in a comprehensive database like Swiss-Prot.

- Query Processing:

- For a novel query protein sequence, compute its ESM-2 mean embedding (as in Protocol 1, Step 2).

- Similarity Search & Inference:

- Perform a k-nearest neighbor (k-NN) search (e.g., k=50) against the indexed reference embeddings using cosine similarity.

- Aggregate the GO annotations of the k nearest neighbors.

- Assign GO terms to the query protein based on a weighted score (e.g., sum of cosine similarities for each term) and apply a significance threshold.
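The similarity-search and inference steps can be illustrated with a brute-force, pure-Python version. It assumes embeddings are plain lists of floats and that a term's score is the similarity-weighted fraction of neighbors carrying it; the function names and the min_score threshold are illustrative. For databases the size of Swiss-Prot, an index such as FAISS would replace the linear scan.

```python
import math
from collections import defaultdict


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def transfer_go_terms(query, reference, annotations, k=50, min_score=0.5):
    """Score GO terms for `query` from its k nearest annotated neighbors.

    reference:   {protein_id: embedding}
    annotations: {protein_id: set of GO terms}
    """
    ranked = sorted(
        ((cosine(query, emb), pid) for pid, emb in reference.items()),
        reverse=True,
    )[:k]
    total = sum(max(sim, 0.0) for sim, _ in ranked)
    if total == 0:
        return {}
    scores = defaultdict(float)
    for sim, pid in ranked:
        for term in annotations.get(pid, ()):
            scores[term] += max(sim, 0.0)  # negative similarities contribute nothing
    return {t: s / total for t, s in scores.items() if s / total >= min_score}


# Toy reference database with 2D embeddings, for illustration only.
reference = {"a": [1.0, 0.0], "b": [0.9, 0.1], "c": [0.0, 1.0]}
annotations = {"a": {"GO:1"}, "b": {"GO:1", "GO:2"}, "c": {"GO:3"}}
result = transfer_go_terms([1.0, 0.0], reference, annotations, k=2, min_score=0.4)
```

Normalizing by the total neighbor similarity keeps scores in [0, 1], so a single threshold can be applied across queries.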

Visualizations

Title: ESM Embedding Pipeline for GO Prediction

Title: GO Ontology Structure and Model Prediction Targets

The Scientist's Toolkit

Table 2: Essential Research Reagents and Tools for ESM-based Function Prediction

| Item / Resource | Function / Purpose | Example / Source |

|---|---|---|

| ESM-2 Model Weights | Provides the pre-trained transformer to generate protein sequence embeddings. | Available via Hugging Face transformers or Facebook Research's esm Python package. |

| GO Annotation Database | Serves as the ground truth for training and evaluation. | UniProt-GOA, Gene Ontology Consortium releases. |

| Curated Benchmark Datasets | Enables standardized training/testing with non-homologous splits. | CAFA challenge datasets, DeepGO datasets. |

| Deep Learning Framework | Provides environment for building, training, and evaluating neural network models. | PyTorch (recommended for ESM compatibility) or TensorFlow. |

| High-Performance Compute (HPC) | Accelerates embedding generation and model training. | GPU clusters (NVIDIA A100/V100) with ≥32GB VRAM for large models. |

| Embedding Search Index | Enables fast similarity searches for zero-shot prediction. | FAISS library (Facebook AI Similarity Search) for k-NN. |

| GO Term Slims | Reduced, high-level GO sets for more generalizable interpretation of results. | GO Consortium slims (e.g., generic, metazoan). |

| Evaluation Metrics Code | Calculates standard metrics for multi-label classification. | sklearn.metrics (precision_recall_curve, f1_score), CAFA evaluation scripts. |

Application Notes

Within the broader thesis investigating ESM (Evolutionary Scale Modeling) models for protein sequence embeddings, the prediction of protein-protein interactions (PPIs) from sequence alone represents a critical downstream application. This task leverages the rich, context-aware representations learned by models like ESM-2 and ESMFold, which encapsulate evolutionary, structural, and functional information. The core premise is that the embeddings of two protein sequences, when combined and processed by a dedicated classifier, can indicate the likelihood of a physical or functional interaction. This capability is transformative for drug development, enabling the large-scale mapping of interactomes to identify novel drug targets, understand side-effect mechanisms, and elucidate disease pathways. Unlike methods reliant on known 3D structures or laborious experimental assays, sequence-based PPI prediction using ESM embeddings offers scalability and speed, applicable to any organism with genomic data.

Key Methodological Approaches

Current state-of-the-art methods typically follow a two-stage framework:

- Embedding Generation: Protein sequences are passed through a pre-trained ESM model to obtain per-residue or pooled (per-protein) embeddings.

- Interaction Prediction: Embeddings for a pair of proteins are combined (e.g., concatenated, element-wise product/difference) and fed into a neural network classifier (e.g., Multi-Layer Perceptron) to predict an interaction score.

Recent advancements focus on refining the pairing architecture and incorporating auxiliary information. Methods now often employ cross-attention mechanisms or transformer encoders to model the joint representation of the protein pair explicitly, rather than using simple concatenation. Furthermore, integrating embeddings from multiple ESM layers or combining them with predicted structural features (e.g., from ESMFold) has been shown to boost performance.

Performance Landscape

The following table summarizes the performance of selected ESM-based PPI prediction methods on standard benchmarks:

Table 1: Performance Comparison of ESM-based PPI Prediction Methods

| Method Name | Core Architecture | Benchmark Dataset(s) | Key Metric & Performance | Key Innovation |

|---|---|---|---|---|

| Embedding Concatenation + MLP | ESM-2 embeddings concatenated, processed by MLP | DSCRIPT benchmark (S. cerevisiae, human) | Average AUPR: ~0.75 | Baseline approach, simple and effective. |

| ESM-2 + Cross-Attention | ESM-2 embeddings processed by protein-pair cross-attention transformer | STRING (H. sapiens, multiple species) | Average AUROC: ~0.92 | Models interdependencies between protein pairs dynamically. |

| Multiscale ESM-GNN | Combines residue- and protein-level ESM-2 embeddings with Graph Neural Network (GNN) | BioGRID, HuRI (human) | F1-Score: ~0.87 | Integrates multi-scale information and network context. |

| ESMFold + Interface Prediction | Uses ESMFold to predict structure, then scores putative interfaces | Novel complex prediction (sketching) | DockQ Score (Top-1): >0.23 in 12.8% of cases | Moves towards structural explanation of interaction. |

AUPR: Area Under Precision-Recall Curve; AUROC: Area Under Receiver Operating Characteristic Curve. Performance is approximate and dataset-dependent.

Experimental Protocols

Protocol 1: Training a Binary PPI Classifier Using ESM-2 Embeddings

Objective: To train a neural network model that predicts whether two proteins interact, using fixed embeddings from ESM-2.

Materials & Software:

- Pre-trained ESM-2 model (e.g., esm2_t33_650M_UR50D).

- PPI dataset with positive (interacting) and negative (non-interacting) pairs (e.g., from STRING or BioGRID).

- Python 3.8+, PyTorch, PyTorch Lightning, biopython, scikit-learn.

- GPU-enabled workstation (recommended).

Procedure:

Data Preparation:

- Download a curated PPI dataset. Ensure negative pairs are rigorously defined (e.g., proteins from different subcellular compartments).

- Split data into training, validation, and test sets (e.g., 70/15/15), ensuring no protein overlap between sets to avoid evaluation bias.
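Splitting with no protein overlap is easy to get wrong, so a small sketch may help. The approach below assigns each protein to exactly one partition and keeps only pairs whose two proteins fall in the same partition; pairs that straddle partitions are dropped. The function names and the drop-straddlers policy are assumptions for illustration, and this does not replace additional sequence-similarity filtering.

```python
import random


def proteins_in(rows):
    """Set of all protein IDs appearing in a list of (a, b, label) rows."""
    return {p for a, b, _ in rows for p in (a, b)}


def protein_disjoint_split(pairs, fractions=(0.7, 0.15, 0.15), seed=0):
    """Split (protein_a, protein_b, label) rows so no protein spans two sets."""
    proteins = sorted(proteins_in(pairs))
    random.Random(seed).shuffle(proteins)
    cut1 = round(fractions[0] * len(proteins))
    cut2 = cut1 + round(fractions[1] * len(proteins))

    bucket = {}
    for i, protein in enumerate(proteins):
        bucket[protein] = "train" if i < cut1 else "val" if i < cut2 else "test"

    splits = {"train": [], "val": [], "test": []}
    dropped = 0
    for a, b, label in pairs:
        if bucket[a] == bucket[b]:
            splits[bucket[a]].append((a, b, label))
        else:
            dropped += 1  # pair straddles two partitions
    return splits, dropped


# Toy all-against-all pair list over 20 hypothetical proteins.
pairs = [(f"P{i}", f"P{j}", (i + j) % 2) for i in range(20) for j in range(i + 1, 20)]
splits, dropped = protein_disjoint_split(pairs, seed=1)
```

Because many pairs straddle partitions, the realized pair-level proportions differ from the nominal 70/15/15; reporting the number of dropped pairs makes this explicit.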

Embedding Extraction:

- For each unique protein sequence in the dataset, tokenize and pass it through the ESM-2 model.

- Extract the embeddings from the last layer (or a specific layer). Use the mean pooling of residue representations to create a single 1280-dimensional vector per protein.

- Store the embeddings in a dictionary keyed by protein ID.

Dataset and Model Construction:

- Create a PyTorch Dataset that, for each protein pair (A, B, label), retrieves their pre-computed embeddings.

- The model (PPIMLP) should:

a. Accept two embedding vectors (E_A, E_B).

b. Combine them via a fixed feature-combination operation: combined = torch.cat([E_A, E_B, torch.abs(E_A - E_B), E_A * E_B], dim=-1).

c. Pass the combined vector through 3-5 linear layers with ReLU activation and dropout.

d. Output a single logit for binary classification.

Training and Evaluation:

- Train the model using binary cross-entropy loss and the AdamW optimizer.

- Monitor the validation AUROC/AUPR. Apply early stopping.

- Evaluate the final model on the held-out test set and report standard metrics (Precision, Recall, F1, AUROC, AUPR).
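The PPIMLP described in the model construction step could be written along these lines. The hidden sizes, dropout rate, and factory-function layout are assumptions; the feature combination follows the torch.cat expression given in the protocol. PyTorch is imported inside the factory so the dimension helper can be used on its own.

```python
def pair_feature_dim(embedding_dim):
    """Width of [E_A, E_B, |E_A - E_B|, E_A * E_B] for one protein pair."""
    return 4 * embedding_dim


def build_ppi_mlp(embedding_dim=1280, hidden=(1024, 256), dropout=0.3):
    """Return a PPIMLP instance that maps two embeddings to one interaction logit."""
    import torch
    from torch import nn

    class PPIMLP(nn.Module):
        def __init__(self):
            super().__init__()
            layers, width = [], pair_feature_dim(embedding_dim)
            for units in hidden:
                layers += [nn.Linear(width, units), nn.ReLU(), nn.Dropout(dropout)]
                width = units
            layers.append(nn.Linear(width, 1))  # three linear layers in total by default
            self.net = nn.Sequential(*layers)

        def forward(self, e_a, e_b):
            combined = torch.cat(
                [e_a, e_b, torch.abs(e_a - e_b), e_a * e_b], dim=-1
            )
            return self.net(combined).squeeze(-1)  # raw logit per pair

    return PPIMLP()
```

Since the model returns a raw logit, it pairs naturally with torch.nn.BCEWithLogitsLoss. Note that the concatenation is order-dependent: swapping E_A and E_B changes the first two blocks, so training on both orderings of each pair is one way to encourage symmetric predictions.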

Protocol 2: Structure-Informed PPI Prediction Using ESMFold

Objective: To predict PPIs and generate a putative structural model of the interaction complex.

Materials & Software:

- ESMFold model.

- ColabFold or AlphaFold2 (for potential complex refinement).

- Computational cluster with high-performance GPU and >50GB RAM.

Procedure:

- Input Pair Selection: Select a pair of candidate interacting proteins.

- Monomer Structure Prediction:

- Run ESMFold individually on each protein sequence to generate predicted structures (PDB files) and per-residue confidence (pLDDT) scores.

- Docking and Interface Analysis (Sketching):

- Use a fast docking algorithm (e.g., based on geometric hashing or diffusion) to generate multiple possible complexes.

- For each docked pose, score the interface using metrics derived from the ESMFold outputs:

a. pDockQ: Derived from the average pLDDT of residues within 10 Å of the partner chain. A pDockQ above 0.23 is proposed as indicating a plausible model.

b. Interface pTM: Adapt the predicted TM-score to the interface region.

- Rank poses by the composite interface score.
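The interface-averaging ingredient of the scoring step can be sketched as follows. This computes only the mean pLDDT of residues within 10 Å of the partner chain; it is not a calibrated pDockQ value, and the 0.23 threshold does not apply to its output. Representing each residue by a single coordinate (for example its Cα atom) and the function names are assumptions for illustration.

```python
import math


def interface_indices(coords_a, coords_b, cutoff=10.0):
    """Indices of residues in each chain lying within `cutoff` Å of the partner chain."""
    near_a = [
        i for i, a in enumerate(coords_a)
        if any(math.dist(a, b) <= cutoff for b in coords_b)
    ]
    near_b = [
        j for j, b in enumerate(coords_b)
        if any(math.dist(a, b) <= cutoff for a in coords_a)
    ]
    return near_a, near_b


def mean_interface_plddt(coords_a, plddt_a, coords_b, plddt_b, cutoff=10.0):
    """Average pLDDT over interface residues of both chains, or None if no interface."""
    near_a, near_b = interface_indices(coords_a, coords_b, cutoff)
    values = [plddt_a[i] for i in near_a] + [plddt_b[j] for j in near_b]
    return sum(values) / len(values) if values else None


# Toy two-residue chains with hypothetical coordinates (Å) and pLDDT values.
chain_a = [(0.0, 0.0, 0.0), (50.0, 0.0, 0.0)]
chain_b = [(5.0, 0.0, 0.0), (100.0, 0.0, 0.0)]
interface_score = mean_interface_plddt(chain_a, [90.0, 40.0], chain_b, [80.0, 30.0])
```

The all-against-all distance check is quadratic in chain length, which is acceptable for a handful of poses; a spatial index would be preferable when scoring many docked models.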

- Validation (Optional):

- If a known complex structure exists (e.g., in PDB), compare the top-ranked model to it using DockQ or TM-score.

- Perform mutagenesis in silico: simulate point mutations at the predicted interface and assess the change in predicted binding affinity (e.g., with FoldX or Rosetta).

Visualizations

Title: ESM-2-based PPI Prediction Workflow

Title: Structure-informed PPI Prediction Pipeline

The Scientist's Toolkit

Table 2: Essential Research Reagents & Tools for PPI Prediction from Sequence

| Item | Category | Function in PPI Prediction |

|---|---|---|

| Pre-trained ESM Models (ESM-2, ESMFold) | Software/Model | Provides foundational protein sequence embeddings rich in evolutionary and structural information. The core feature generator. |

| STRING Database | Data Resource | Comprehensive repository of known and predicted PPIs, used as a gold-standard source for training and benchmarking. |

| BioGRID Database | Data Resource | Curated biological interaction repository with a focus on physical and genetic interactions from high-throughput studies. |

| PyTorch / PyTorch Lightning | Software Framework | Enables flexible construction, training, and deployment of neural network models for the interaction classifier. |

| AlphaFold2 / ColabFold | Software | Used for comparative analysis or refinement of ESMFold-predicted complex structures. Provides state-of-the-art structural accuracy. |

| DockQ | Software/Metric | Standardized metric for evaluating the quality of predicted protein-protein complex structures against a native reference. |

| PLIP (Protein-Ligand Interaction Profiler) | Software Tool | Can be adapted to analyze predicted protein-protein interfaces, detailing contacting residues and interaction types (H-bonds, salt bridges). |

| High-Performance GPU Cluster | Hardware | Essential for running large ESM models, extracting embeddings for whole proteomes, and performing structure predictions at scale. |

Within the broader thesis exploring ESM models for protein sequence embedding research, this application addresses a central challenge in genomic medicine: predicting the functional impact of protein-coding variants. ESM-1v (Evolutionary Scale Modeling-1 Variant), a 650M parameter model trained on UniRef90, represents a paradigm shift from traditional evolutionary conservation scores. It leverages deep learned representations to score the likelihood of amino acid substitutions in a zero-shot manner, without multiple sequence alignments or explicit structural data. This section details its application as a high-throughput in silico assay for missense mutation pathogenicity.

Core Mechanism & Validation Performance

ESM-1v scores a substitution by comparing the probabilities it assigns to the mutant and wild-type residues. The model masks the residue at the variant position and predicts a distribution over all possible amino acids from the surrounding sequence context. The variant effect score is typically the difference in log-probability (pseudo-log-likelihood, PLL) between the mutant and wild-type residues at the masked position. Empirical validation demonstrates state-of-the-art performance on multiple benchmark datasets.

Table 1: Performance Summary of ESM-1v on Benchmark Datasets

| Dataset | Description | Key Metric | ESM-1v Performance | Comparative Baseline (e.g., EVE) |
|---|---|---|---|---|
| DeepMut | Saturated mutagenesis of 10 proteins (fly & human) | Spearman's ρ (average) | 0.70 | 0.68 |
| ProteinGym | 87 DMS assays across diverse proteins | Mean Spearman's ρ (supervised) | 0.48 | 0.46 (EVE) |
| Clinical (ClinVar) | Pathogenic vs. benign missense variants | AUROC | 0.89 | 0.86 (CADD) |
| BLAT (E. coli) | Bacterial DMS assays for essential genes | Spearman's ρ | 0.51 | 0.41 (EVE) |

Detailed Experimental Protocol

Protocol 3.1: Scoring Missense Variants with ESM-1v

Objective: To compute the effect score for a given missense mutation using a pre-trained ESM-1v model.

Materials:

- Hardware: Computer with CUDA-capable GPU (≥8GB VRAM recommended).

- Software: Python (≥3.8), PyTorch, transformers library (Hugging Face), esm library (Facebook Research).

- Input Data: Wild-type protein sequence (FASTA format), list of mutations in wild-type residue, position, mutant residue format (e.g., 'M128V').

Procedure:

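- Environment Setup: Install PyTorch and the esm library in a Python (≥3.8) environment.

- Load Model and Tokenizer: Load the pre-trained ESM-1v checkpoint (e.g., esm1v_t33_650M_UR90S_1) together with its alphabet.

- Prepare Sequence and Mutation Data: Read the wild-type sequence and parse each mutation into its wild-type residue, position, and mutant residue.

- Compute Wild-type Log-Likelihoods: Mask the variant position and obtain the model's log-probabilities for every amino acid at that position.

- Compute Mutant Scores: Subtract the wild-type residue's log-probability from the mutant residue's log-probability.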

A negative score suggests the mutation is less likely and potentially deleterious.
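The procedure above can be sketched as follows. This is a minimal sketch, not a reference implementation: it assumes the fair-esm package and its esm.pretrained.esm1v_t33_650M_UR90S_1 loader, and it assumes 1-based mutation positions. The helper names parse_mutation, effect_score, and score_variants are illustrative and not part of the esm library.

```python
import re

MUTATION_PATTERN = re.compile(r"^([A-Z])(\d+)([A-Z])$")


def parse_mutation(mutation):
    """Split a string such as 'M128V' into (wild_type, 1-based position, mutant)."""
    match = MUTATION_PATTERN.match(mutation)
    if match is None:
        raise ValueError(f"Unrecognized mutation format: {mutation!r}")
    wild_type, position, mutant = match.groups()
    return wild_type, int(position), mutant


def effect_score(log_probs, wild_type, mutant):
    """Mutant minus wild-type log-probability at the masked position.

    log_probs maps amino acid letters to log-probabilities; a negative
    result means the model considers the mutant less likely.
    """
    return log_probs[mutant] - log_probs[wild_type]


def score_variants(sequence, mutations, device="cpu"):
    """Score each mutation with ESM-1v (weights are downloaded on first use)."""
    # Imported lazily so the helpers above work without the deep learning stack.
    import torch
    import esm

    model, alphabet = esm.pretrained.esm1v_t33_650M_UR90S_1()
    model = model.eval().to(device)
    batch_converter = alphabet.get_batch_converter()
    _, _, tokens = batch_converter([("wild_type", sequence)])

    scores = {}
    for mutation in mutations:
        wild_type, position, mutant = parse_mutation(mutation)
        if sequence[position - 1] != wild_type:
            raise ValueError(f"{mutation}: sequence has {sequence[position - 1]} at {position}")
        masked = tokens.clone()
        # Token 0 is the prepended <cls>, so residue `position` sits at index `position`.
        masked[0, position] = alphabet.mask_idx
        with torch.no_grad():
            logits = model(masked.to(device))["logits"]
        position_log_probs = torch.log_softmax(logits[0, position], dim=-1)
        log_probs = {
            aa: position_log_probs[alphabet.get_idx(aa)].item()
            for aa in (wild_type, mutant)
        }
        scores[mutation] = effect_score(log_probs, wild_type, mutant)
    return scores
```

Calling score_variants(wild_type_sequence, ["M128V"]) returns a dictionary keyed by mutation string. The wild-type check guards against off-by-one errors between the mutation list and the FASTA sequence.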

Protocol 3.2: Saturation Mutagenesis Scan

Objective: To predict the effect of all possible single amino acid substitutions across a protein region of interest.

Procedure: Extend Protocol 3.1 by iterating over all 19 possible mutations at each residue position in the target region. Output is best visualized as a heatmap (position x amino acid) of effect scores.
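A minimal sketch of the scan, assuming a scoring callable is already available: score_fn below is a hypothetical placeholder for any function that maps a mutation string such as 'M128V' to an effect score. The names saturation_mutations and build_score_matrix are illustrative.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def saturation_mutations(sequence, start, end):
    """Yield every single substitution for 1-based positions start..end inclusive."""
    for position in range(start, end + 1):
        wild_type = sequence[position - 1]
        for mutant in AMINO_ACIDS:
            if mutant != wild_type:
                yield f"{wild_type}{position}{mutant}"


def build_score_matrix(sequence, start, end, score_fn):
    """Return one row per position with 20 scores ordered as AMINO_ACIDS.

    The wild-type cell is set to 0.0 because it is not a substitution.
    """
    matrix = []
    for position in range(start, end + 1):
        wild_type = sequence[position - 1]
        row = [
            0.0 if mutant == wild_type else score_fn(f"{wild_type}{position}{mutant}")
            for mutant in AMINO_ACIDS
        ]
        matrix.append(row)
    return matrix
```

The resulting list of rows can be passed directly to a heatmap routine in a plotting library. Because one masked forward pass yields log-probabilities for all 20 residues at a position, a practical score_fn would cache results per position rather than rerun the model for each of the 19 substitutions.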

Visual Workflow

Figure: ESM-1v Variant Scoring Workflow

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Toolkit for ESM-1v Variant Effect Prediction

| Item | Function/Description | Example/Provider |
|---|---|---|
| Pre-trained ESM-1v Model | Core deep learning model for sequence likelihood estimation. Loads via esm.pretrained. | esm1v_t33_650M_UR90S_1 (Facebook Research) |
| High-Performance GPU | Accelerates model inference, essential for scanning many variants or full proteins. | NVIDIA A100, V100, or RTX 4090 (≥8GB VRAM) |
| Variant Benchmark Datasets | For validation and calibration of predictions against experimental data. | ProteinGym, DeepMut, ClinVar, BLAT |
| Python BioML Stack | Core programming environment and libraries. | PyTorch, Transformers, ESM, NumPy, Pandas |
| Variant Annotation Tools | To contextualize predictions with population frequency, conservation, etc. | Ensembl VEP, SnpEff (for integrated pipelines) |
| Visualization Library | For generating score heatmaps and publication-quality figures. | Matplotlib, Seaborn, Plotly |
| Structured Data Storage | For managing large-scale variant predictions and metadata. | SQLite, HDF5, or PostgreSQL database |

Integrating ESM with ESMFold for High-Accuracy Protein Structure Prediction

Application Notes

Within the broader thesis exploring ESM models for protein sequence embedding, the integration of the Evolutionary Scale Model (ESM) as a foundational language model with the ESMFold structure prediction module represents a paradigm shift. This approach leverages deep, unsupervised learning on millions of protein sequences to infer structural and functional properties directly from primary amino acid sequences. The core innovation is the use of ESM-2, a transformer-based protein language model, to generate high-quality sequence embeddings (or representations) that are directly fed into the folding trunk of ESMFold, bypassing the need for multiple sequence alignment (MSA) generation. This enables rapid, high-accuracy structure prediction from a single sequence.

The following quantitative data, derived from the model's performance on standard benchmarks like the CASP14 and CAMEO datasets, summarizes its accuracy and efficiency compared to other state-of-the-art methods.

Table 1: Performance Comparison of Protein Structure Prediction Methods

| Model | Inference Speed (aa/sec) | CASP14 TM-Score (Avg) | CAMEO lDDT (Avg) | MSA-Dependent? |
|---|---|---|---|---|
| ESMFold (Integrated) | 10-20 | 0.72 | 0.78 | No |
| AlphaFold2 | 1-2 | 0.85 | 0.84 | Yes |
| RoseTTAFold | 5-10 | 0.74 | 0.77 | Yes |
| trRosetta (MSA-based) | 3-5 | 0.68 | 0.73 | Yes |

Table 2: ESM-2 Embedding Model Variants

| ESM-2 Model | Parameters | Embedding Dimension | Context (Tokens) | Primary Use Case |
|---|---|---|---|---|
| esm2_t6_8M_UR50D | 8 Million | 320 | 1,024 | Quick, low-resource embedding |
| esm2_t30_150M_UR50D | 150 Million | 640 | 1,024 | Standard balance of speed/accuracy |
| esm2_t33_650M_UR50D | 650 Million | 1,280 | 1,024 | High-accuracy embedding for large-scale studies |
| esm2_t36_3B_UR50D | 3 Billion | 2,560 | 1,024 | State-of-the-art embedding for critical predictions |

Experimental Protocols

Protocol 1: Generating Protein Sequence Embeddings with ESM-2

Objective: To produce a fixed-dimensional representation (embedding) of a protein sequence for input into ESMFold.

Materials: FASTA file containing target protein sequence(s), Python environment with PyTorch and the fair-esm library installed.

Procedure:

1. Load Model and Alphabet: Instantiate the chosen ESM-2 model (e.g., esm2_t33_650M_UR50D) and its corresponding tokenizer.

2. Sequence Preparation: Tokenize the input protein sequence. Prepend a beginning-of-sequence (<cls>) token and append an end-of-sequence (<eos>) token.

3. Embedding Extraction: Pass the tokenized sequence through the ESM-2 model. Extract the hidden state representations from the final transformer layer.

4. Pooling (Optional): For a single per-sequence representation, apply mean pooling across the residue dimension (excluding the <cls> and <eos> tokens), or alternatively use the <cls> token embedding.

5. Output: The output is a tensor of shape [sequence_length, embedding_dimension] or a pooled vector. This serves as the input features for ESMFold.
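The steps above can be sketched as follows. This is a minimal sketch, assuming the fair-esm package and its esm.pretrained.esm2_t33_650M_UR50D loader, whose batch converter adds the <cls> and <eos> tokens automatically. The function names residue_token_span and embed_sequence are illustrative.

```python
def residue_token_span(sequence_length):
    """Return (start, stop) token indices covering residues only.

    Index 0 holds <cls> and index sequence_length + 1 holds <eos>,
    so residues occupy indices 1..sequence_length.
    """
    return 1, sequence_length + 1


def embed_sequence(sequence, layer=33):
    """Return (per-residue embeddings, mean-pooled embedding) from ESM-2 650M."""
    # Imported lazily so residue_token_span works without the deep learning stack.
    import torch
    import esm

    model, alphabet = esm.pretrained.esm2_t33_650M_UR50D()
    model.eval()
    batch_converter = alphabet.get_batch_converter()
    _, _, tokens = batch_converter([("query", sequence)])

    with torch.no_grad():
        output = model(tokens, repr_layers=[layer], return_contacts=False)

    start, stop = residue_token_span(len(sequence))
    # Shape [sequence_length, 1280] for the 650M model's final layer.
    per_residue = output["representations"][layer][0, start:stop]
    pooled = per_residue.mean(dim=0)
    return per_residue, pooled
```

Slicing off the special tokens before pooling keeps the pooled vector a function of residues only, which matters most for short sequences where two extra tokens would noticeably shift the mean.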

Protocol 2: End-to-End Structure Prediction with Integrated ESMFold

Objective: To predict the 3D coordinates of all heavy atoms in a protein from its amino acid sequence.

Materials: FASTA file, computing environment with CUDA-enabled GPU (recommended), and the esm Python package.

Procedure:

1. Model Loading: Load the pretrained ESMFold model, which internally contains the ESM-2 embedding module and the folding trunk.

2. Sequence Input: Provide the raw amino acid sequence as a string.

3. Forward Pass: Execute the model. Internally:

a. The sequence is embedded by the ESM-2 module.

b. The embeddings are passed through 48 transformer blocks in the folding trunk.

c. A structure module (inspired by AlphaFold2's "Structure Module") predicts distances and orientations, then outputs final 3D atomic coordinates.

4. Output Processing: The model returns predicted atom coordinates (backbone and side chains), per-residue confidence scores (pLDDT), and predicted aligned error (PAE). In the esm package, model.infer_pdb(sequence) returns the structure as a PDB-format string, with pLDDT stored in the B-factor column.

5. Structure Refinement (Optional): Use Amber or Rosetta relaxation protocols to minimize steric clashes.
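A minimal sketch of steps 1 to 4, assuming the fair-esm package installed with its ESMFold dependencies and the esm.pretrained.esmfold_v1 loader. The helper mean_plddt_from_pdb and the default output path are illustrative. The helper reads the fixed-width B-factor field (columns 61 to 66 of each ATOM record).

```python
def mean_plddt_from_pdb(pdb_text):
    """Average the B-factor column over C-alpha atoms of a PDB-format string."""
    values = [
        float(line[60:66])
        for line in pdb_text.splitlines()
        if line.startswith("ATOM") and line[12:16].strip() == "CA"
    ]
    if not values:
        raise ValueError("No C-alpha ATOM records found")
    return sum(values) / len(values)


def predict_structure(sequence, output_path="prediction.pdb", device="cuda"):
    """Fold a sequence with ESMFold, write the PDB file, and report mean pLDDT."""
    # Imported lazily so the PDB helper works without the deep learning stack.
    import torch
    import esm

    model = esm.pretrained.esmfold_v1()
    model = model.eval().to(device)

    with torch.no_grad():
        pdb_text = model.infer_pdb(sequence)

    with open(output_path, "w") as handle:
        handle.write(pdb_text)
    return pdb_text, mean_plddt_from_pdb(pdb_text)
```

Averaging over C-alpha atoms gives one confidence value per residue, so long side chains do not weight the mean. The written PDB file can then be passed to the optional relaxation step or opened in a molecular viewer.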

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Resources

| Item | Function | Source/Example |
|---|---|---|
| ESM/ESMFold Python Package | Core library for loading models, running embeddings, and structure prediction. | GitHub: facebookresearch/esm |
| PyTorch | Deep learning framework required to run models. | pytorch.org |
| CUDA-capable GPU | Accelerates computation for models with billions of parameters. | NVIDIA (e.g., A100, V100, RTX 3090) |
| FASTA File | Standard format for input protein sequence(s). | User-provided or UniProt database |
| PDB File | Standard output format for storing predicted 3D atomic coordinates. | Generated by ESMFold |
| Jupyter Notebook / Python Script | Environment for prototyping and executing prediction pipelines. | Project Jupyter |
| Molecular Visualization Software | For visualizing, analyzing, and comparing predicted structures. | PyMOL, ChimeraX, VMD |

Visualizations

Figure: ESM to ESMFold Integration Workflow

Figure: ESMFold Architecture Breakdown

This application note details a case study within a broader thesis investigating the application of Evolutionary Scale Modeling (ESM) protein language models for generating informative sequence embeddings. The core thesis posits that ESM embeddings, which capture deep evolutionary and structural constraints from unlabeled sequence data, provide a superior feature space for computational tasks in therapeutic protein engineering compared to traditional sequence alignment-based methods. This case study validates that proposition by demonstrating a workflow for identifying and characterizing novel antigen targets for antibody development.

Background: ESM Embeddings as Biological Descriptors

ESM models, trained on millions of protein sequences, learn a high-dimensional representation (embedding) for each amino acid position and for the whole protein sequence. These embeddings encode information about evolutionary fitness, predicted structure, and function. For target identification, the embeddings of potential antigen proteins can be analyzed to locate conserved, surface-exposed regions likely to be functional and immunogenic—ideal targets for antibody binding.

Application Notes: Target Identification Workflow

Data Curation and Pre-processing

The target of interest was the oncogenic membrane protein TYRP1 (Tyrosinase-Related Protein 1), implicated in melanoma progression. The workflow required three datasets:

- Target Protein Sequence: Human TYRP1 (UniProt: P17643).

- Homolog Sequence Dataset: A set of 5,000 TYRP1 homologs from diverse vertebrates, retrieved via BLAST.

- Positive Control Set: Known antibody-epitope pairs for related melanogenic proteins (e.g., Tyr, TYRP2) from the IEDB database.

Generation of ESM Embeddings

The esm2_t33_650M_UR50D model was used. Per-residue embeddings (layer 33, embedding dimension: 1280) were generated for the human TYRP1 and all homologs. A mean-pooling operation across residues yielded a single global embedding vector for each homolog sequence.

Dimensionality Reduction and Cluster Analysis

The global embeddings for the homolog dataset were subjected to UMAP (Uniform Manifold Approximation and Projection) for visualization. This revealed evolutionary sub-clusters within the TYRP1 family.

Conservation & Surface Accessibility Prediction

- Conservation: The per-residue embeddings for the homolog set were used to compute a similarity score for each position, identifying evolutionarily constrained regions.

- Surface Accessibility: The ESM model's attention maps and an auxiliary logistic regression classifier (trained on ESM embeddings vs. DSSP surface accessibility labels) predicted solvent-exposed residues.
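The source does not specify how the per-position similarity score was computed. One plausible reading, shown here purely as an assumption, is the mean cosine similarity between the target's residue embedding and each homolog's embedding at the corresponding position. The sketch assumes homolog embeddings have already been mapped onto the target's positions (for example through an alignment), with None marking gaps. Plain Python lists are used for self-containment; in practice these would be tensors or arrays.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def conservation_scores(reference, homologs):
    """Mean cosine similarity to the reference embedding at each position.

    reference: list of per-residue vectors for the target protein.
    homologs: list of per-residue vector lists mapped onto the reference
    positions; None marks a gap and is skipped.
    """
    scores = []
    for position, ref_vector in enumerate(reference):
        similarities = [
            cosine_similarity(ref_vector, homolog[position])
            for homolog in homologs
            if homolog[position] is not None
        ]
        scores.append(sum(similarities) / len(similarities) if similarities else 0.0)
    return scores
```

Positions where homolog embeddings stay close to the target's embedding score near 1.0 and are treated as constrained. Positions covered only by gaps default to 0.0.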

Epitope Region Prioritization

Positions exhibiting high conservation scores and high predicted surface accessibility were prioritized. A final shortlist of three putative epitope regions (10-15 amino acids each) on the extracellular loops of TYRP1 was generated for experimental validation.
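The prioritization step can be illustrated with a sliding-window ranking. How the two scores were combined is not stated in the source, so the product of conservation and accessibility, the 12-residue default window, and the function name top_windows are all assumptions made for this sketch.

```python
def top_windows(conservation, accessibility, window=12, top_k=3):
    """Pick the top_k non-overlapping windows by mean(conservation * accessibility).

    Returns a list of (start, end, score) with 0-based start and exclusive end.
    """
    combined = [c * a for c, a in zip(conservation, accessibility)]
    candidates = []
    for start in range(len(combined) - window + 1):
        mean_score = sum(combined[start:start + window]) / window
        candidates.append((mean_score, start))
    # Highest score first; ties broken by the earlier start position.
    candidates.sort(key=lambda item: (-item[0], item[1]))

    selected = []
    for mean_score, start in candidates:
        end = start + window
        # Keep a window only if it does not overlap any already selected one.
        if all(end <= s or start >= e for s, e, _ in selected):
            selected.append((start, end, mean_score))
        if len(selected) == top_k:
            break
    return selected
```

Multiplying the two scores means a region must rank well on both criteria to be selected. A weighted sum would instead let strong conservation compensate for weak exposure, which may or may not be desirable for epitope selection.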

Quantitative Validation Results

The prioritized epitopes were synthesized as peptides and screened for binding against a naive human Fab phage display library. The results were compared against a baseline method that used Parker hydrophilicity and multiple sequence alignment (MSAL) conservation.

Table 1: Comparison of Epitope Prediction Method Performance

| Method | Predicted Regions | # of Positive Binding Fabs Identified | Average Binding Affinity (KD) of Top 3 Fabs | Hit Rate (Fabs binding / screened) |
|---|---|---|---|---|
| ESM-Based Workflow | 3 | 17 | 45 nM | 1.7% |
| MSAL + Parker Hydrophilicity | 3 | 5 | 220 nM | 0.5% |
| Random Peptide Control | 3 | 0 | N/A | 0% |

Data from phage display panning and subsequent biolayer interferometry (BLI) analysis.

Experimental Protocols

Protocol 4.1: Generating ESM Embeddings for a Protein Family