ESM and ProtBERT: A Comprehensive Guide for Protein Language Models in Biomedical Research

Abstract

This article provides researchers and drug development professionals with a detailed exploration of Evolutionary Scale Modeling (ESM) and ProtBERT, two pioneering protein language models (pLMs). We cover foundational concepts, methodological implementation, practical troubleshooting, and comparative validation. The guide examines their transformer-based architectures, training on massive protein sequence databases (like UniRef), and applications in predicting protein structure, function, and stability. It also addresses common challenges in deployment and optimization, compares performance against traditional and alternative deep learning methods, and discusses the future impact of pLMs on accelerating therapeutic discovery and precision medicine.

Decoding Protein Language Models: The Core Concepts Behind ESM and ProtBERT

Protein Language Models (pLMs) represent a paradigm shift in computational biology, applying deep learning architectures from natural language processing (NLP) to protein sequences. By treating amino acid sequences as sentences and residues as words, models like Evolutionary Scale Modeling (ESM) and ProtBERT learn semantic representations of protein structure and function. This technical guide provides an in-depth overview of the core principles, architectures, and methodologies of pLMs, contextualized within the broader thesis of comparing ESM and ProtBERT for research applications in protein engineering and therapeutic discovery.

Foundational Principles

The Linguistic Analogy

Proteins are linear polymers of 20 standard amino acids. This alphabet of "tokens" forms "sentences" (sequences) that fold into functional 3D structures, analogous to how word sequences convey semantic meaning.

Core Objective: Learning Generalizable Representations

The primary goal of pLMs is to learn high-dimensional, continuous vector embeddings (semantic embeddings) for protein sequences. These embeddings capture evolutionary, structural, and functional constraints, enabling predictions without explicit homology or structural data.

Transformer Architecture Fundamentals

Both ESM and ProtBERT are based on the Transformer architecture, which relies on a multi-head self-attention mechanism. The attention function maps a query and a set of key-value pairs to an output, computed as:

Attention(Q, K, V) = softmax(QK^T / √d_k)V

where Q, K, V are matrices of queries, keys, and values, and d_k is the dimensionality of the keys.
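To make the formula concrete, the following is a minimal sketch of scaled dot-product attention in plain Python. The toy matrices and the function names (softmax, attention) are illustrative assumptions, not part of either model's codebase; real implementations use batched tensor operations and multiple heads.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(Q, K, V):
    """Scaled dot-product attention on small list-of-lists matrices.

    Q is n_q x d_k, K is n_k x d_k, V is n_k x d_v.
    Returns (output, weights), where weights[i] sums to 1.
    """
    d_k = len(K[0])
    scale = math.sqrt(d_k)
    weights = []
    for q in Q:
        # Compatibility of this query with every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in K]
        weights.append(softmax(scores))
    d_v = len(V[0])
    output = [
        [sum(w[j] * V[j][c] for j in range(len(V))) for c in range(d_v)]
        for w in weights
    ]
    return output, weights


# Toy example: 3 residues with d_k = d_v = 2 (values are illustrative only).
Q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
V = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
out, w = attention(Q, K, V)
```

Each row of the returned weights is a probability distribution over sequence positions, which is what lets a residue's representation draw on every other residue in the sequence.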

Model-Specific Implementations

ESM (Evolutionary Scale Modeling): Developed by Meta AI, the ESM family is trained on UniRef datasets using a masked language modeling (MLM) objective. The model learns by predicting randomly masked amino acids in sequences based on their context. Key versions include ESM-1v (for variant effect prediction) and ESM-2, which scales up to 15B parameters.

ProtBERT: Developed by the Rostlab, ProtBERT is also trained with an MLM objective on BFD and UniRef100. It utilizes the BERT architecture, which processes sequences bidirectionally, allowing each token to attend to all other tokens in the sequence.

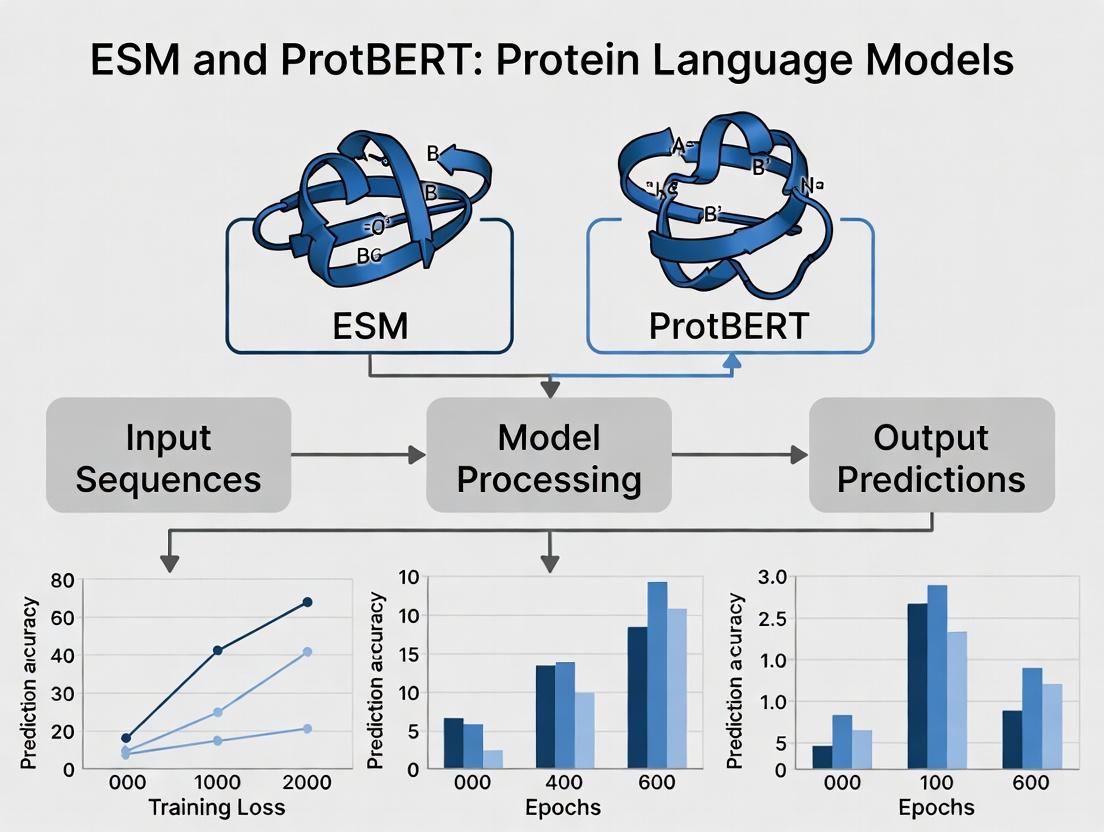

Diagram 1: Core pLM Transformer Architecture

Table 1: Architectural Comparison of ESM-2 and ProtBERT

| Feature | ESM-2 (15B) | ProtBERT |

|---|---|---|

| Base Architecture | Transformer (Encoder-only) | BERT (Transformer Encoder) |

| Parameters | Up to 15 billion | ~420 million (ProtBERT-BFD) |

| Training Data | UniRef50 (90M sequences) | BFD (2.1B seqs) & UniRef100 (220M seqs) |

| Context Window (Tokens) | 1024 | 512 |

| Embedding Dimension | 5120 (for largest model) | 1024 |

| Attention Heads | 40 | 16 |

| Layers | 48 | 30 |

| Primary Training Objective | Masked Language Modeling (MLM) | Masked Language Modeling (MLM) |

| Public Availability | Yes (Models & Code) | Yes (Models & Code) |

Experimental Protocols for pLM Evaluation

Protocol 1: Embedding Extraction for Downstream Tasks

Objective: Generate semantic embeddings for protein sequences to use as features in supervised learning tasks (e.g., structure prediction, function annotation).

- Sequence Preprocessing: Input protein sequences are tokenized into their constituent amino acid tokens. Special tokens ([CLS], [SEP], [MASK]) are added as required by the specific model architecture.

- Model Inference: Pass the tokenized sequence through the pre-trained pLM (e.g., esm.pretrained.esm2_t36_3B_UR50D() or "Rostlab/prot_bert" from Hugging Face).

- Embedding Harvesting: Extract the hidden state representations from the final layer (or a specified layer) of the Transformer encoder. The [CLS] token embedding often serves as a sequence-level representation, while individual residue embeddings capture local context.

- Downstream Model Training: Use extracted embeddings as fixed or fine-tunable features to train task-specific models (e.g., a shallow neural network or logistic regression for protein-protein interaction prediction).
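As a small illustration of the preprocessing step, the sketch below formats a raw sequence the way the Rostlab/prot_bert model card describes: residues separated by spaces, with the rare amino acids U, Z, O, and B mapped to X. The function name prepare_protbert_input is a hypothetical helper introduced here; the tokenizer call itself is shown only in a comment because it requires the transformers library and a model download.

```python
import re


def prepare_protbert_input(sequence: str) -> str:
    """Format a raw amino acid string for the ProtBERT tokenizer.

    Hypothetical helper: upper-cases the sequence, maps the rare residues
    U, Z, O, B to X, and inserts spaces so each residue is one token.
    """
    seq = sequence.strip().upper()
    seq = re.sub(r"[UZOB]", "X", seq)
    return " ".join(seq)


example = prepare_protbert_input("MKTAYIAKQRU")
# The formatted string would then be passed to the tokenizer, for example:
#   tokenizer = BertTokenizer.from_pretrained("Rostlab/prot_bert", do_lower_case=False)
#   encoded = tokenizer(example, return_tensors="pt")
# (not executed here; requires the transformers package)
```

ESM models use their own alphabet and batch converter, so this spacing step applies to ProtBERT-style tokenizers only.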

Protocol 2: Zero-Shot Variant Effect Prediction (e.g., with ESM-1v)

Objective: Predict the functional impact of a single amino acid variant without task-specific training.

- Wild-Type and Variant Sequence Preparation: Generate the wild-type sequence and the mutant sequence(s) with the single residue substitution.

- Log-Likelihood Calculation: For both sequences, compute the log-likelihood of the entire sequence under the pLM. For a sequence S of length L, this is the sum of log probabilities for each token given its context, where x_{\i} denotes the sequence with position i masked:

log P(S) = Σ_{i=1}^{L} log P(x_i | x_{\i})

- Score Assignment: The variant effect score is typically the difference in log-likelihoods:

Δlog P = log P(mutant) - log P(wild-type)

A negative Δlog P suggests a deleterious effect.

- Calibration & Evaluation: Compare scores against experimental deep mutational scanning data (e.g., from ProteinGym) to assess prediction accuracy using metrics like Spearman's rank correlation.
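The scoring steps above can be sketched as follows. This is a minimal illustration under stated assumptions: toy_log_prob_fn is a hypothetical, context-free stand-in for a real pLM (a real model would return context-dependent probabilities for the masked position), and the mutation string format "A2W" (wild-type residue, 1-based position, mutant residue) is a convention chosen for the example.

```python
import math

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def pseudo_log_likelihood(sequence, log_prob_fn, mask_token="<mask>"):
    """Sum over positions of log P(x_i | sequence with position i masked)."""
    tokens = list(sequence)
    total = 0.0
    for i, residue in enumerate(tokens):
        masked = tokens[:i] + [mask_token] + tokens[i + 1:]
        total += log_prob_fn(masked, i)[residue]
    return total


def apply_substitution(sequence, mutation):
    """Apply a single substitution written like 'A2W' (1-based position)."""
    wt, pos, mt = mutation[0], int(mutation[1:-1]), mutation[-1]
    if sequence[pos - 1] != wt:
        raise ValueError(f"expected {wt} at position {pos}, found {sequence[pos - 1]}")
    return sequence[:pos - 1] + mt + sequence[pos:]


def variant_effect_score(wild_type, mutation, log_prob_fn):
    """Delta log P = log P(mutant) - log P(wild-type)."""
    mutant = apply_substitution(wild_type, mutation)
    return (pseudo_log_likelihood(mutant, log_prob_fn)
            - pseudo_log_likelihood(wild_type, log_prob_fn))


def toy_log_prob_fn(masked_tokens, position):
    """Hypothetical stand-in for a pLM: fixed background frequencies."""
    weights = {aa: 2.0 for aa in AMINO_ACIDS}
    weights.update({"L": 4.0, "A": 3.0, "W": 1.0})
    z = sum(weights.values())
    return {aa: math.log(w / z) for aa, w in weights.items()}


score = variant_effect_score("MALK", "A2W", toy_log_prob_fn)
```

With the toy background model, replacing a common residue with a rarer one yields a negative score, mirroring the interpretation of a negative Δlog P as potentially deleterious.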

Protocol 3: Fine-Tuning for Specific Prediction Tasks

Objective: Adapt a general pLM to a specialized dataset (e.g., fluorescence, stability).

- Dataset Curation: Assemble a labeled dataset of protein sequences and corresponding experimental measurements.

- Model Head Addition: Append a task-specific regression or classification head (a feed-forward neural network) on top of the pLM's final embedding layer.

- Training Loop: Perform supervised training, often initially freezing the pLM backbone and only training the new head, followed by optional full-model fine-tuning with a low learning rate (e.g., 1e-5).

- Validation: Use hold-out test sets to evaluate performance, ensuring generalization beyond the training distribution.

Diagram 2: Typical pLM Experimental Workflow

Table 2: Performance Comparison on Benchmark Tasks (Representative Data)

| Benchmark Task | Metric | ESM-2 (3B) | ProtBERT-BFD | Baseline Method (named per row) |

|---|---|---|---|---|

| Remote Homology Detection (Fold Classification) | Top-1 Accuracy (%) | 88.2 | 85.7 | 77.5 (Profile HMM) |

| Variant Effect Prediction (ProteinGym Avg.) | Spearman's ρ | 0.48 | 0.42 | 0.45 (EVmutation) |

| Solubility Prediction | AUC-ROC | 0.91 | 0.89 | 0.82 (SOLpro) |

| Contact Prediction (Top L/5) | Precision | 0.65 | 0.58 | 0.55 (trRosetta) |

| Fluorescence Prediction (avGFP) | Spearman's ρ | 0.73 | 0.68 | 0.61 (DeepSequence) |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Resources for pLM Research

| Resource / Tool | Provider / Library | Primary Function |

|---|---|---|

| ESM Model Weights & Code | Meta AI (GitHub) | Pre-trained ESM models (ESM-2, ESM-1v, ESM-IF) for inference and fine-tuning. |

| ProtBERT Model Hub | Hugging Face | Pre-trained ProtBERT and ProtBERT-BFD models accessible via the transformers library. |

| PyTorch / TensorFlow | Meta / Google | Deep learning frameworks required for loading and executing pLMs. |

| Bioinformatics Datasets | UniProt, BFD, ProteinGym | Curated protein sequence and variant data for training, fine-tuning, and benchmarking. |

| Compute Infrastructure | NVIDIA GPUs (A100/H100), Google Cloud TPU v4 | Accelerated hardware essential for training large models (>1B params) and efficient inference on large-scale data. |

| Sequence Embedding Visualizers | UMAP, t-SNE (scikit-learn) | Dimensionality reduction tools for visualizing high-dimensional protein embeddings in 2D/3D. |

| Structure Prediction Suites | AlphaFold2, OpenFold | Tools for generating 3D structures from sequences, used to validate or complement pLM predictions (e.g., contact maps). |

Protein Language Models have firmly established themselves as foundational tools for encoding biological prior knowledge into machine-interpretable semantic embeddings. Within the thesis context, ESM models, with their massive scale, excel in zero-shot and few-shot learning scenarios, while ProtBERT offers a robust and computationally efficient alternative. The field is rapidly evolving towards multimodal architectures that integrate sequence, structure, and functional annotations, promising even deeper biological insights and accelerating the rational design of novel enzymes and therapeutics. Future research will focus on improving interpretability, efficiency for large-scale screening, and integration with generative models for de novo protein design.

Within the burgeoning field of computational biology, protein language models (pLMs) like Evolutionary Scale Modeling (ESM) and ProtBERT represent a paradigm shift. This technical guide posits that the Transformer architecture is the fundamental innovation enabling these models to decode the complex "language" of proteins, moving beyond sequence analysis to infer structure, function, and evolutionary relationships. For researchers in bioinformatics and drug development, understanding this architectural backbone is crucial for leveraging, fine-tuning, and innovating upon these powerful tools.

The Transformer Architecture: Core Components

The Transformer’s efficacy stems from its attention mechanisms, which allow the model to weigh the importance of all amino acids in a sequence when processing any single position.

Key Components:

- Self-Attention: Computes a weighted sum of values for each token, where weights are based on compatibility between the token and all others.

- Multi-Head Attention: Multiple self-attention heads run in parallel, allowing the model to jointly attend to information from different representation subspaces.

- Positional Encoding: Injects information about the relative or absolute position of tokens in the sequence, since self-attention on its own is insensitive to token order.

- Feed-Forward Networks: Applied to each position separately and identically, typically consisting of two linear transformations with a nonlinearity between them (ReLU in the original Transformer, GELU in BERT-style models).

- Layer Normalization & Residual Connections: Critical for stable training over many layers.
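As one concrete instance of the positional encoding component, the sketch below implements the fixed sinusoidal scheme from the original Transformer. This is an illustration only: BERT-style models such as ProtBERT typically use learned absolute position embeddings, and ESM-2 uses rotary position embeddings, so the function here is not what either model ships with.

```python
import math


def sinusoidal_positional_encoding(seq_len, d_model):
    """Return a seq_len x d_model table of sinusoidal position encodings.

    Even columns hold sin(pos / 10000^(i / d_model)) and odd columns the
    matching cosine, where i is the even column index.
    """
    if d_model % 2 != 0:
        raise ValueError("d_model must be even for paired sin/cos columns")
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(0, d_model, 2):
            angle = pos / (10000 ** (i / d_model))
            row.extend([math.sin(angle), math.cos(angle)])
        table.append(row)
    return table


# Encodings for a 3-residue sequence with a 4-dimensional model.
pe = sinusoidal_positional_encoding(3, 4)
```

The resulting vectors are added to the token embeddings, so two identical residues at different positions enter the encoder with different representations.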

Implementation in ESM and ProtBERT: A Comparative Analysis

ESM (from Meta AI) and ProtBERT (from the Rostlab) adapt the Transformer framework differently, leading to distinct strengths.

Table 1: Architectural and Training Comparison of ESM-2 and ProtBERT

| Feature | ESM-2 (Latest: 15B params) | ProtBERT (BERT-based) |

|---|---|---|

| Model Architecture | Transformer (Encoder-only) | Transformer (Encoder-only, BERT architecture) |

| Primary Training Objective | Masked Language Modeling (MLM) | Masked Language Modeling (MLM) |

| Training Data | UniRef50 clusters with UniRef90 members (~65M unique sequences) | BFD (~2.1B sequences) or UniRef100 |

| Context Size (Tokens) | 1,024 | 512 |

| Key Innovation | Scalable training to 15B parameters; enables state-of-the-art structure prediction. | Leverages proven BERT framework; strong on semantic (functional) understanding. |

| Primary Output | Per-residue embeddings; can be fine-tuned for structure (ESMFold). | Contextualized amino acid embeddings. |

Experimental Protocols for Leveraging pLMs

Protocol 1: Extracting Protein Embeddings for Downstream Tasks

- Input Preparation: Format protein amino acid sequence(s) as FASTA strings.

- Tokenization: Convert sequences into model-specific tokens (including special start/end tokens).

- Model Inference: Pass tokenized IDs through the pre-trained model (e.g., esm.pretrained.esm2_t33_650M_UR50D() or Rostlab/prot_bert).

- Embedding Capture: Extract the hidden state representations from the final (or a specified) Transformer layer.

- For per-sequence embeddings, often pool (mean/max) the per-residue embeddings.

- Downstream Application: Use embeddings as input features for supervised models predicting function, stability, or interactions.
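The pooling step in this protocol can be sketched in a few lines. The example below is an assumption-laden illustration: pool_embeddings is a hypothetical helper, the embeddings are toy 2-dimensional vectors rather than real model outputs, and keep_mask marks which positions are real residues (1) versus special or padding tokens (0).

```python
def pool_embeddings(residue_embeddings, keep_mask, mode="mean"):
    """Collapse per-residue vectors into one per-sequence vector.

    residue_embeddings: list of equal-length vectors, one per token.
    keep_mask: list of 0/1 flags; tokens flagged 0 (for example start, end,
    or padding tokens) are excluded from pooling.
    """
    kept = [vec for vec, keep in zip(residue_embeddings, keep_mask) if keep]
    if not kept:
        raise ValueError("no positions selected for pooling")
    columns = list(zip(*kept))
    if mode == "mean":
        return [sum(col) / len(col) for col in columns]
    if mode == "max":
        return [max(col) for col in columns]
    raise ValueError(f"unknown pooling mode: {mode}")


# Toy input: first and last rows stand in for special tokens.
toy_embeddings = [[9.0, 9.0], [1.0, 2.0], [3.0, 6.0], [9.0, 9.0]]
toy_mask = [0, 1, 1, 0]
mean_vec = pool_embeddings(toy_embeddings, toy_mask, mode="mean")
max_vec = pool_embeddings(toy_embeddings, toy_mask, mode="max")
```

Excluding special and padding tokens matters in batched inference, where shorter sequences are padded and would otherwise dilute the mean.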

Protocol 2: Fine-tuning for a Specific Prediction Task

- Dataset Curation: Assemble labeled dataset (e.g., [sequence, stability_label]).

- Model Head Addition: Append a task-specific classification/regression head on top of the frozen or unfrozen base Transformer.

- Training Loop: Iterate on batches:

- Forward pass through base model + new head.

- Compute loss (e.g., Cross-Entropy, MSE).

- Backpropagate to update head (and optionally base) parameters.

- Evaluation: Validate on held-out sets using task-relevant metrics (AUROC, Accuracy, RMSE).
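The frozen-backbone variant of this protocol can be illustrated without a deep learning framework. The sketch below trains only a logistic regression head on fixed embeddings, walking through the forward pass, loss, and gradient update in turn. The 2-dimensional embeddings and labels are invented toy data, and the function names are hypothetical; in practice the head would be trained with PyTorch or a similar library on real pLM embeddings.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_linear_head(embeddings, labels, lr=0.1, epochs=200):
    """Full-batch gradient descent for a logistic head on frozen embeddings."""
    dim, n = len(embeddings[0]), len(embeddings)
    w, b = [0.0] * dim, 0.0
    losses = []
    for _ in range(epochs):
        grad_w, grad_b, loss = [0.0] * dim, 0.0, 0.0
        for x, y in zip(embeddings, labels):
            # Forward pass through the head only (the backbone is frozen).
            p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
            p_safe = min(max(p, 1e-12), 1.0 - 1e-12)
            # Binary cross-entropy loss.
            loss -= y * math.log(p_safe) + (1 - y) * math.log(1.0 - p_safe)
            # Gradient of the loss with respect to the head parameters.
            err = p - y
            for j in range(dim):
                grad_w[j] += err * x[j]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
        losses.append(loss / n)
    return w, b, losses


def predict(w, b, x):
    return 1 if sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) >= 0.5 else 0


# Toy "embeddings" for stable (1) and unstable (0) variants.
X = [[2.0, 1.0], [3.0, 2.0], [2.5, 1.5], [-2.0, -1.0], [-3.0, -2.0], [-2.5, -1.5]]
y = [1, 1, 1, 0, 0, 0]
w, b, losses = train_linear_head(X, y)
```

Training only the head is cheap and a reasonable first baseline; unfreezing the backbone is usually reserved for cases where the frozen features underperform and enough labeled data is available.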

Visualizing Workflows and Relationships

Title: pLM Training and Application Pipeline

Title: Self-Attention & Multi-Head Mechanism

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for pLM Research

| Item | Function & Description | Example/Provider |

|---|---|---|

| Pre-trained Model Weights | Foundational parameters of the pLM, enabling transfer learning without training from scratch. | ESM-2 weights (Meta AI GitHub), ProtBERT (Hugging Face Hub) |

| Fine-tuning Datasets | Curated, labeled protein datasets for specialized tasks (e.g., fluorescence, stability). | ProteinGym (wild-type vs. mutant fitness), DeepAffinity (binding affinity). |

| Tokenization Library | Converts amino acid strings into model-specific token IDs. | ESM tokenizer, Hugging Face BertTokenizer for ProtBERT. |

| Deep Learning Framework | Software environment for model loading, inference, and fine-tuning. | PyTorch (primary for ESM), TensorFlow/PyTorch via Hugging Face Transformers. |

| Embedding Visualization Tool | Reduces high-dimensional embeddings to 2D/3D for exploratory analysis. | UMAP, t-SNE (via scikit-learn). |

| Structure Prediction Pipeline | Converts pLM embeddings/alignments into 3D atomic coordinates. | ESMFold (built-in), OpenFold. |

| High-Performance Compute (HPC) | GPU clusters for training large models or processing massive protein databases. | NVIDIA A100/H100 GPUs, Cloud platforms (AWS, GCP, Azure). |

This whitepaper details Meta AI's Evolutionary Scale Modeling (ESM) framework, a transformative approach in computational biology for learning protein representations directly from evolutionary sequence data. The core thesis posits that scaling transformer-based language models, in both parameter count and training data spanning hundreds of millions of protein sequences, uncovers fundamental principles of protein structure, function, and evolutionary fitness, extending beyond fixed-size models like ProtBERT (~420M parameters, trained on UniRef100 and BFD). For researchers, ESM represents a paradigm shift towards leveraging raw evolutionary scale to infer biological mechanisms.

Core Architecture and Scale

ESM models are transformer-based neural networks trained on the masked language modeling (MLM) objective, where random amino acids in sequences are masked and the model learns to predict them. The key innovation is the unprecedented scale of training data and model parameters.

- ESM-2: The current state-of-the-art, with versions ranging from 8M to 15B parameters.

- Training Data: The UniRef database (UniRef50/90), containing hundreds of millions of diverse protein sequences.

- Key Advancement: The 15B parameter ESM-2 model demonstrates that scaling enables accurate atomic-level structure prediction (outperforming many physics-based tools) directly from sequence, a capability not inherent to smaller models.

Table 1: Evolution of Key Protein Language Models

| Model (Year) | Developer | Params | Training Dataset Size | Key Capability |

|---|---|---|---|---|

| ProtBERT (2020) | Rostlab | 420M | ~200M sequences (UniRef100) | General-purpose protein sequence understanding, function prediction. |

| ESM-1b (2021) | Meta AI | 650M | ~27M sequences (UniRef50) | State-of-the-art fitness & structure prediction at its release. |

| ESM-2 (2022) | Meta AI | 8M to 15B | ~60M+ sequences (UniRef90) | High-accuracy atomic structure prediction (ESMFold). |

Detailed Experimental Protocol: Zero-Shot Fitness Prediction

A benchmark experiment demonstrating ESM's biological relevance is zero-shot fitness prediction from deep mutational scanning (DMS) assays.

Protocol:

- Data Preparation: A wild-type protein sequence and a library of variant sequences (single/multiple mutations) from a DMS experiment are obtained. Fitness scores (e.g., log enrichment) for each variant are experimentally measured.

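- Model Inference: Each variant is scored with the ESM model. Either the pseudo-log-likelihood (PLL) of the full variant sequence is computed, or, as in ESM-1v, the model's predicted probabilities at each mutated position are compared between the mutant and wild-type residues.

- Score Calculation: For each variant, the model score is calculated as the sum, over the mutated positions, of the log probability of the mutant residue minus the log probability of the wild-type residue (or as the difference in PLL between the variant and wild-type sequences). Higher scores indicate variants the model considers more plausible.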

- Correlation Analysis: Spearman's rank correlation coefficient is computed between the model-derived scores and the experimental fitness scores across all variants. No model parameters are fine-tuned on the target protein family (hence "zero-shot").
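The correlation step can be sketched with a small, dependency-free Spearman implementation. This is an illustrative version with average ranks for ties; in practice a library routine such as scipy.stats.spearmanr would normally be used. The model scores and fitness values below are invented toy numbers, not results from any DMS assay.

```python
import math


def average_ranks(values):
    """Rank values from 1..n, assigning tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(vx * vy)


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation of the rank-transformed data."""
    return pearson(average_ranks(x), average_ranks(y))


# Toy data: model scores vs. measured fitness for five variants.
model_scores = [-3.1, -0.4, -1.9, 0.2, -2.5]
fitness = [0.10, 0.85, 0.40, 0.95, 0.30]
rho = spearman_rho(model_scores, fitness)
```

Because Spearman's ρ depends only on rank order, the model scores do not need to be calibrated to the experimental scale, which is why it is the standard metric for zero-shot evaluation.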

Table 2: Representative ESM Performance on DMS Benchmarks (Spearman's ρ)

| Protein (DMS Dataset) | ESM-1v (Avg. of 5 models) | ESM-2 (3B) | ProtBERT-BFD |

|---|---|---|---|

| BRCA1 (RING) | 0.81 | 0.78 | 0.72 |

| TPK1 (Yeast) | 0.65 | 0.62 | 0.58 |

| BLAT (β-lactamase) | 0.79 | 0.80 | 0.75 |

Visualization of ESM Workflow and Structure Prediction

Diagram 1: ESM Training & Application Pipeline

Diagram 2: ESMFold Structure Prediction

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Resources for Working with ESM Models

| Item / Resource | Type | Function & Explanation |

|---|---|---|

| UniRef90/50 Database | Dataset | Curated, clustered protein sequence database from UniProt. Provides the evolutionary diversity necessary for training robust models. |

| ESM Model Weights (via Hugging Face) | Software/Model | Pre-trained model parameters (e.g., esm2_t36_3B_UR50D). Enables inference without prohibitive compute costs. |

| PyTorch / fairseq | Software Framework | The primary libraries on which ESM is built and for loading models for sequence embedding extraction. |

| Deep Mutational Scanning (DMS) Data | Experimental Data | Benchmark datasets (e.g., from ProteinGym) containing variant sequences and fitness scores for validating model predictions. |

| AlphaFold2 Database or PDB | Reference Data | Experimental or high-accuracy predicted structures for validating ESMFold outputs or analyzing structure-function relationships. |

| Jupyter / Colab Notebooks | Computing Environment | For prototyping, running inference, and analyzing embeddings, often with provided Meta AI example notebooks. |

| High-Performance GPU (e.g., A100) | Hardware | Accelerates inference, especially for larger models (3B, 15B) and structure prediction with ESMFold. |

The exploration of protein sequences through natural language processing (NLP) represents a paradigm shift in computational biology. This whitepaper situates ProtBERT alongside Evolutionary Scale Modeling (ESM), a framework dedicated to learning high-capacity models of protein sequences from massive, evolutionarily diverse datasets. ProtBERT, as a direct adaptation of the Bidirectional Encoder Representations from Transformers (BERT) architecture, exemplifies the application of transformer-based self-supervised learning to decode the semantic and syntactic "grammar" of proteins. For researchers, ESM and ProtBERT provide powerful, general-purpose protein language models (pLMs) that yield strong representations for downstream tasks such as structure prediction, function annotation, and variant effect prediction, thereby accelerating therapeutic discovery.

Technical Architecture and Adaptation

ProtBERT adapts the original BERT architecture to the protein "alphabet." The key adaptations are:

- Vocabulary: The input tokens are the 20 standard amino acids (plus special tokens like [CLS], [SEP], [MASK], and [PAD]). This replaces the ~30k subword vocabulary of text-based BERT.

- Embedding: Each amino acid token is mapped to a dense vector representation. Positional encodings are added to inform the model of the residue's order in the sequence.

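- Transformer Encoder Stack: The core remains a multi-layer bidirectional Transformer encoder following the BERT design. The released ProtBERT checkpoints use 30 layers, 16 attention heads, and a hidden size of 1024, rather than the BERT-base configuration (12 layers, 12 attention heads, hidden size 768).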

- Pre-training Objective: The model is trained using the Masked Language Modeling (MLM) objective. A random subset (typically 15%) of amino acids in an input sequence is replaced with a [MASK] token, and the model must predict the original identity based on the full contextual information from both left and right.

Experimental Protocols and Key Findings

Pre-training Protocol

Dataset: Large-scale, diverse protein sequence databases such as UniRef (UniProt Reference Clusters) or BFD (Big Fantastic Database). Sequences are clustered at a defined identity threshold (e.g., 30% for UniRef30, a low threshold that merges distant homologs) to reduce redundancy and enforce evolutionary diversity.

Procedure:

- Data Preparation: Sample sequences from the clustered database. Apply minimal preprocessing (e.g., truncation to a maximum length, typically 512 tokens).

- Masking: Randomly mask 15% of tokens in each sequence. Among masked tokens, 80% are replaced with [MASK], 10% with a random amino acid, and 10% left unchanged.

- Training: Optimize the model using the cross-entropy loss on the masked positions. Use the AdamW optimizer with weight decay, linear learning rate warmup, and inverse square root decay.
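The masking step of this procedure can be sketched as below. This is a minimal illustration, not the training code of either model: mask_for_mlm is a hypothetical helper, each position is selected independently with probability 0.15 (so the masked fraction is 15% in expectation rather than exactly), and the random replacement is drawn from the 20 standard amino acids.

```python
import random

AMINO_ACIDS = list("ACDEFGHIKLMNPQRSTVWY")


def mask_for_mlm(tokens, mask_prob=0.15, rng=None, mask_token="[MASK]"):
    """Apply BERT-style 80/10/10 corruption to a list of residue tokens.

    Returns (corrupted, labels). labels[i] holds the original token at
    positions selected for prediction and None elsewhere, so the loss is
    computed only on selected positions.
    """
    if rng is None:
        rng = random.Random()
    corrupted = list(tokens)
    labels = [None] * len(tokens)
    for i, tok in enumerate(tokens):
        if rng.random() >= mask_prob:
            continue  # position not selected; left untouched
        labels[i] = tok
        roll = rng.random()
        if roll < 0.8:
            corrupted[i] = mask_token  # 80%: replace with [MASK]
        elif roll < 0.9:
            corrupted[i] = rng.choice(AMINO_ACIDS)  # 10%: random residue
        # remaining 10%: keep the original token unchanged
    return corrupted, labels


# Fixed seed so the toy example is reproducible.
toy_tokens = list("MKTAYIAKQRQISFVKSHFSRQLEERLGLIEVQ")
toy_corrupted, toy_labels = mask_for_mlm(toy_tokens, rng=random.Random(0))
```

Keeping 10% of selected tokens unchanged and randomizing another 10% reduces the mismatch between pre-training, where [MASK] appears, and downstream use, where it does not.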

Downstream Task Fine-tuning Protocol

For tasks like Secondary Structure Prediction (Q3/Q8) or Remote Homology Detection (Fold Classification):

- Input: Protein sequences for the task.

- Representation Extraction: Pass sequences through the pre-trained ProtBERT. Use the contextual embeddings from the final layer (often averaging over sequence positions or using the [CLS] token representation) as features.

- Task-Specific Head: Add a simple classifier (e.g., a multi-layer perceptron) on top of the extracted features.

- Fine-tuning: Jointly train the task-specific head and, optionally, the entire ProtBERT model on the labeled downstream dataset with a small learning rate.

Table 1: ProtBERT Performance on Key Downstream Tasks vs. Baseline Methods.

| Task | Metric | ProtBERT (BERT-base) | LSTM Baseline | 1D-CNN Baseline | Notes |

|---|---|---|---|---|---|

| Secondary Structure (Q3) | Accuracy | ~76% | ~73% | ~72% | CASP12 dataset |

| Remote Homology (SCOP) | Top-1 Acc | ~30% | ~15% | ~20% | Fold-level classification |

| Fluorescence | Spearman ρ | ~0.68 | ~0.41 | ~0.50 | Directed evolution landscape prediction |

| Stability | Spearman ρ | ~0.73 | ~0.45 | ~0.55 | Mutational stability prediction |

Visualizations

Title: ProtBERT Pre-training and Fine-tuning Workflow

Title: ProtBERT Model Architecture for Masked Residue Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Working with ProtBERT and pLMs.

| Resource | Type | Primary Function / Description |

|---|---|---|

| ESM / HuggingFace Model Hub | Software | Repository to download pre-trained ProtBERT/ESM model weights (e.g., Rostlab/prot_bert). |

| PyTorch / TensorFlow | Software | Deep learning frameworks required for loading, fine-tuning, and running inference with the models. |

| Biopython | Software | Library for parsing protein sequence data (FASTA files), managing biological data structures. |

| UniProtKB | Database | Comprehensive resource for protein sequence and functional annotation; source for fine-tuning data. |

| PDB (Protein Data Bank) | Database | Repository of 3D protein structures; used for tasks like structure prediction from sequence. |

| AlphaFold2 Database | Database | Provides high-accuracy predicted structures for most proteins; useful as ground truth or comparison. |

| GPUs (e.g., NVIDIA A100) | Hardware | Accelerators essential for efficient model training and inference due to the model's large size. |

| Jupyter / Colab | Software | Interactive computing environments for prototyping and analysis. |

Within the landscape of protein language models like ESM (Evolutionary Scale Modeling) and ProtBERT, the quality, scale, and construction of training datasets are foundational to model performance. This guide details the core datasets—UniRef and BFD—that underpin these models, operating at the billion-sequence scale. For researchers in computational biology and drug development, understanding these data resources is critical for interpreting model capabilities, biases, and potential applications.

Core Datasets: Architecture and Curation

Modern protein language models are trained on clustered sets of protein sequences derived from public databases. The two primary resources are UniRef and the Big Fantastic Database (BFD).

UniRef (UniProt Reference Clusters): Produced by the UniProt consortium, UniRef clusters sequences from its underlying databases (Swiss-Prot, TrEMBL, PIR-PSD) at defined identity thresholds to reduce redundancy. UniRef100 provides all sequences, UniRef90 clusters at 90% identity, and UniRef50 at 50% identity. It is characterized by high-quality, manually curated annotations.

BFD (Big Fantastic Database): A large-scale, metagenomics-focused dataset created specifically for training protein prediction models. It combines sequences from various sources, including UniProt, MGnify, and others, and is aggressively clustered at low sequence identity. It emphasizes breadth and diversity over manual annotation.

Table 1: Quantitative Comparison of UniRef and BFD

| Feature | UniRef (v.2023_01) | BFD (v. used in ProtBERT-BFD) | Key Implication for Model Training |

|---|---|---|---|

| Primary Source | UniProtKB (Curated/Reviewed) | UniProt, MGnify, others | UniRef offers higher per-sequence quality; BFD offers ecological diversity. |

| Clustering Identity | 50% (UniRef50), 90%, 100% | ~30% (MMseqs2 Linclust) | BFD's lower threshold yields more diverse, less redundant sets. |

| Approx. Sequence Count | ~50 million (UniRef50) | ~2.2 billion (pre-cluster) | Scale directly enables learning of long-range interactions and rare folds. |

| Approx. Cluster Count | ~45 million (UniRef50) | ~65 million (post-cluster) | Determines the effective number of training examples. |

| Typical Use in Models | ESM-1b, ESM-2, ProtBERT (UniRef100) | ProtBERT-BFD, AlphaFold (MSA input) | UniRef underpins the ESM family; BFD's scale underpins ProtBERT-BFD and MSA construction. |

Dataset Construction Methodologies

UniRef Clustering Protocol:

- Input: All protein sequences in UniProtKB.

- Alignment: Use of CD-HIT or MMseqs2 for pairwise sequence comparison.

- Clustering: Greedy incremental clustering based on a user-defined identity threshold (e.g., 50%) over the full length of the sequence.

- Representative Selection: The longest sequence in each cluster is chosen as the representative.

- Annotation Propagation: Annotations from member sequences are summarized for the representative.
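The greedy incremental clustering idea can be sketched on toy data as follows. This is a deliberately simplified illustration: the identity function is a crude ungapped comparison introduced for the example, whereas CD-HIT and MMseqs2 use real alignments and fast prefiltering. Processing sequences longest-first means each cluster's representative is its longest member, matching the representative-selection step above.

```python
def identity(a, b):
    """Crude ungapped identity: matching positions / length of the longer sequence.

    An assumption for illustration only; production tools align sequences.
    """
    matches = sum(1 for x, y in zip(a, b) if x == y)
    return matches / max(len(a), len(b))


def greedy_cluster(sequences, threshold=0.5):
    """Greedy incremental clustering, processing sequences longest-first.

    Each sequence joins the first existing representative it matches at or
    above the threshold; otherwise it founds a new cluster.
    Returns a dict mapping representative -> list of member sequences.
    """
    clusters = {}
    for seq in sorted(sequences, key=len, reverse=True):
        for rep in clusters:
            if identity(rep, seq) >= threshold:
                clusters[rep].append(seq)
                break
        else:
            clusters[seq] = [seq]
    return clusters


# Toy input: three related sequences and one unrelated linker-like sequence.
toy_sequences = ["MKTAYIAKQR", "MKTAYIAKQ", "MKTAYLAKQR", "GGGGSGGGGS"]
toy_clusters = greedy_cluster(toy_sequences, threshold=0.8)
```

Lowering the threshold merges more distant homologs into fewer clusters, which is the trade-off between UniRef90, UniRef50, and more aggressively clustered sets.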

BFD Construction Protocol (as used for large-scale pLM training):

- Aggregation: Collect sequences from UniProt, MGnify metagenomic assemblies, and environmental samples.

- Pre-processing: Filter extremely short (<30 residues) and long (>10,000 residues) sequences. Use deduplication.

- Large-Scale Clustering: Apply MMseqs2 linclust with a stringent ~30% sequence identity threshold. This step reduces redundancy while maximizing structural diversity.

- Representative Selection: Select a central sequence within each cluster.

- Formatting: Convert the final set to a format suitable for deep learning (e.g., a FASTA file with masked random residues for MLM tasks).

Experimental Workflow for Model Pre-training

The following diagram illustrates the standard pipeline for constructing training data and pre-training models like ESM.

Title: Workflow for Protein Language Model Pre-training Data Creation

Table 2: Essential Resources for Working with Protein Training Data

| Resource / Tool | Type | Primary Function |

|---|---|---|

| UniRef (via UniProt) | Database | Gold-standard, annotated protein clusters for training or benchmarking. |

| BFD / MGnify | Database | Massive-scale, diverse sequence sets for large model training. |

| MMseqs2 | Software Tool | Ultra-fast clustering and profiling of protein sequences. Essential for dataset creation. |

| HMMER | Software Tool | Building and searching profile hidden Markov models for sensitive sequence homology detection. |

| ESM Metagenomic Atlas | Pre-computed Database | Provides ESMFold-predicted structures for hundreds of millions of metagenomic protein sequences from MGnify, enabling rapid analysis without running the model. |

| PyTorch / Hugging Face Transformers | Software Library | Framework for loading, fine-tuning, and deploying pre-trained models like ESM and ProtBERT. |

| PDB (Protein Data Bank) | Database | Source of high-resolution protein structures for model validation and fine-tuning (e.g., for structure prediction). |

Impact on Model Performance: Experimental Evidence

The choice and scale of training data directly determine model performance on downstream tasks. Key experiment types include:

Ablation Study on Data Scale:

- Protocol: Train otherwise identical ESM model architectures on progressively larger datasets (e.g., UniRef50 vs. full BFD). Evaluate on a suite of benchmarks including remote homology detection (Fold classification), contact prediction, and fluorescence/stability prediction.

- Methodology: Use standard train/validation/test splits for each benchmark. For contact prediction, measure precision at L/5 (top predictions for a protein of length L). For fitness prediction, report Spearman's correlation between predicted and experimental scores.

- Outcome: Models trained on BFD consistently outperform those trained on UniRef50 alone, particularly on tasks requiring recognition of distant evolutionary relationships.
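The contact prediction metric named in the methodology can be sketched as follows. This is a minimal illustration: the function name, the dictionary-based input format, and the default minimum sequence separation of 6 are assumptions for the example (benchmarks differ in the separation ranges they report), and the scores are invented toy values.

```python
def precision_at_l_over_k(scores, true_contacts, seq_len, k=5, min_separation=6):
    """Precision of the top L/k predicted contacts.

    scores: dict mapping residue pairs (i, j) with i < j to predicted
        contact scores.
    true_contacts: set of (i, j) pairs with i < j that are real contacts.
    Pairs closer than min_separation along the chain are ignored.
    """
    candidates = [
        (score, i, j)
        for (i, j), score in scores.items()
        if abs(i - j) >= min_separation
    ]
    candidates.sort(key=lambda t: t[0], reverse=True)
    top = candidates[:max(1, seq_len // k)]
    if not top:
        raise ValueError("no candidate pairs satisfy the separation filter")
    hits = sum(1 for _, i, j in top if (min(i, j), max(i, j)) in true_contacts)
    return hits / len(top)


# Toy example for a 10-residue protein: L/5 = 2 top predictions are scored.
toy_scores = {(0, 8): 0.9, (1, 9): 0.8, (0, 7): 0.7, (0, 1): 0.99}
toy_true = {(0, 8), (0, 7)}
p_at_l5 = precision_at_l_over_k(toy_scores, toy_true, seq_len=10)
```

The separation filter excludes trivially adjacent residues such as (0, 1), which would otherwise inflate precision without reflecting any learned structural signal.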

Comparison of ProtBERT vs. ESM-2 Embeddings:

- Protocol: Compare the embeddings from ProtBERT (trained on UniRef100 or BFD) and ESM-2 (trained on UniRef50/UniRef90 clusters) on a protein structure classification task using Structural Classification of Proteins (SCOP) fold categories.

- Methodology: 1. Extract sequence embeddings from the final layer of each model. 2. Train a simple logistic regression classifier on the embeddings of a training split of SCOP domains to predict the fold class. 3. Compare classification accuracy on a held-out test set.

- Outcome: ESM-2 embeddings typically achieve higher accuracy, especially on "hard" folds with few sequence homologs.

Title: From Training Data to Downstream Task Performance

The ascendancy of protein language models like ESM and ProtBERT is inextricably linked to their training data foundations. UniRef provides a benchmark of quality and annotation, while BFD and its billion-sequence scale unlock unprecedented diversity, driving models toward a more fundamental understanding of protein sequence-structure-function relationships. For researchers applying these models, this knowledge is vital for selecting appropriate pre-trained models, designing fine-tuning strategies, and critically interpreting results in computational drug discovery and protein engineering.

Protein language models (pLMs) like Evolutionary Scale Modeling (ESM) and ProtBERT represent a paradigm shift in computational biology. Framed within the broader thesis of comparing these architectures for researcher application, this guide elucidates how they transform protein sequences into dense numerical vectors—embeddings—that capture evolutionary, structural, and functional semantics. These embeddings serve as foundational inputs for downstream predictive tasks in bioinformatics and drug discovery.

Core Concepts: From Sequence to Vector Space

A protein embedding is a fixed-dimensional, real-valued vector representation generated by a pLM. The model is trained on millions of natural protein sequences (e.g., from UniRef) to learn the statistical patterns of amino acid co-evolution. Each position in a protein sequence is contextualized by its entire sequence environment, encoding biological properties without explicit supervision.

Quantitative Comparison of ESM and ProtBERT Model Families

Table 1: Key Architectural and Performance Metrics of Prominent pLMs

| Model (Representative Version) | Parameters | Training Data (Sequences) | Embedding Dimension (per residue) | Key Benchmark (e.g., Contact Prediction P@L/5) | Primary Architectural Note |

|---|---|---|---|---|---|

| ESM-2 (ESM2 650M) | 650 Million | 65 Million (UniRef50) | 1280 | 0.81 | Transformer-only, trained from scratch. |

| ESM-1b | 650 Million | 27 Million (UniRef50) | 1280 | 0.74 | Predecessor to ESM-2. |

| ProtBERT (ProtBERT-BFD) | 420 Million | 2.1 Billion (BFD) | 1024 | 0.72 | BERT-style, masked language modeling on BFD. |

| ESM-1v (650M) | 650 Million | 98 Million (UniRef90) | 1280 | N/A | Variant-focused, excels at variant effect prediction. |

Biological Significance of Embeddings: Encoded Information

Protein embeddings implicitly capture multi-scale biological information:

- Primary Structure: Amino acid physicochemical properties.

- Secondary & Tertiary Structure: Patterns indicative of alpha helices, beta sheets, and residue contacts.

- Evolutionary Constraints: Conservation and co-evolutionary signals across protein families.

- Function: Molecular function (e.g., catalytic sites), cellular localization, and protein-protein interaction interfaces.

Experimental Protocols for Validating Embedding Utility

Protocol 5.1: Embedding Extraction for Downstream Tasks

- Input Preparation: Format protein sequences as FASTA strings.

- Model Loading: Load pre-trained model weights (e.g., `esm.pretrained.esm2_t33_650M_UR50D()`).

- Tokenization & Inference: Tokenize the sequence, add special tokens (`<cls>`, `<eos>`), and pass it through the model.

- Embedding Capture: Extract the final hidden layer representations for each token. The `<cls>` token embedding often serves as the whole-protein representation.

- Downstream Application: Use embeddings as features for classifiers (e.g., SVM, CNN) or for similarity search.

Protocol 5.2: Zero-Shot Variant Effect Prediction (ESM-1v)

- Generate Wild-type and Variant Log-Likelihoods: For a given sequence and a set of single-point mutations, compute the log-likelihood difference (Δlog P(X | S)).

- Score Mutations: Rank mutations by their Δlog likelihoods. Lower scores indicate more deleterious variants.

- Validation: Correlate model scores with experimental deep mutational scanning (DMS) fitness data using Spearman's rank correlation.
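One common way to obtain such a score is to compare, at the mutated position, the model's log-probability for the mutant residue with that for the wild-type residue. The sketch below assumes the per-position log-probabilities have already been computed by a model. The `log_probs` table, the `score_mutation` helper, and the mutation strings are hypothetical toy values, not output from ESM-1v.

```python
import math


def score_mutation(log_probs, wild_type, mutation):
    """Score a single-point mutation such as 'K2R' (wild-type, 1-based position, mutant).

    log_probs -- one dict per position mapping residue -> log-probability
    Returns log p(mutant) - log p(wild-type) at the mutated position.
    """
    wt_aa, pos, mut_aa = mutation[0], int(mutation[1:-1]), mutation[-1]
    if wild_type[pos - 1] != wt_aa:
        raise ValueError(f"{mutation} does not match the wild-type sequence")
    site = log_probs[pos - 1]
    return site[mut_aa] - site[wt_aa]


# Toy per-position distributions for a two-residue "protein" (illustrative only).
wild_type = "MK"
log_probs = [
    {"M": math.log(0.9), "L": math.log(0.1)},
    {"K": math.log(0.5), "R": math.log(0.4), "P": math.log(0.1)},
]
mutations = ["M1L", "K2R", "K2P"]
scores = {m: score_mutation(log_probs, wild_type, m) for m in mutations}

# Lower (more negative) scores are read as more deleterious, so sort ascending.
ranked = sorted(mutations, key=scores.get)
print(ranked)
```

In this toy table the conservative K2R substitution receives the mildest score, while M1L at the highly constrained first position is ranked as most deleterious.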

Visualization of Core Concepts and Workflows

Diagram 1: Protein Embedding Generation Workflow

Diagram 2: Biological Information Encoded in Embeddings

Table 2: Key Resources for Protein Embedding Research

| Item / Resource | Function / Purpose | Example / Source |

|---|---|---|

| Pre-trained pLM Weights | Foundation for generating embeddings without training from scratch. | ESM models (Facebook AI), ProtBERT (Hugging Face Hub). |

| Protein Sequence Database | Source of query sequences and background data for training/fine-tuning. | UniProt, UniRef, BFD, MGnify. |

| Benchmark Datasets | For evaluating embedding quality on specific tasks. | Protein Data Bank (PDB) for structure, DeepLoc for localization, DMS datasets for variant effects. |

| Embedding Extraction Library | Software to load models and run inference. | esm (PyTorch), transformers (Hugging Face), bio-embeddings pipeline. |

| Downstream Analysis Toolkit | Libraries for clustering, classification, and visualization of embeddings. | Scikit-learn (PCA, t-SNE, classifiers), NumPy, SciPy. |

| High-Performance Compute (HPC) | GPU acceleration is essential for processing large batches or long sequences. | NVIDIA GPUs (e.g., A100, V100) with CUDA-enabled PyTorch. |

The advent of large-scale protein language models (pLMs), such as ESM (Evolutionary Scale Modeling) and ProtBERT, has revolutionized the field of computational biology and drug discovery. These models leverage the statistical patterns in millions of protein sequences to learn fundamental principles of protein structure and function. At their core, the primary architectural distinction lies in their pre-training objectives: Autoregressive (AR) modeling versus Masked Language Modeling (MLM). This technical guide, framed within a broader thesis on model overview for researchers, dissects this foundational difference, its technical implications, and its downstream effects on research applications.

Foundational Architectures and Training Objectives

ESM's Autoregressive (Causal) Objective

ESM-1b and ESM-2 utilize a standard Transformer decoder architecture. The model is trained to predict the next amino acid in a sequence given all preceding amino acids. This unidirectional context mimics the generative process of sequences.

Mathematical Formulation:

Given a protein sequence \( S = (a_1, a_2, \ldots, a_N) \), the AR objective maximizes the likelihood:

\[ P(S) = \prod_{i=1}^{N} P(a_i \mid a_{<i}) \]

Protocol: During training, a causal attention mask is applied within the Transformer, allowing each position to attend only to previous positions. The model outputs a probability distribution over the 20 standard amino acids (plus special tokens) for the next position. Loss is computed as the negative log-likelihood of the true next token.

ProtBERT's Masked Language Modeling (BERT-style) Objective

ProtBERT and its variant ProtBERT-BFD are based on the Transformer encoder architecture, as popularized by BERT. A random subset (~15%) of amino acids in an input sequence is masked, and the model is trained to predict the original identities based on the bidirectional context from all non-masked positions.

Mathematical Formulation: For a masked sequence \( S_{\text{masked}} \), the objective is to maximize: \[ \sum_{i \in M} \log P(a_i \mid S_{\backslash M}) \] where \( M \) is the set of masked positions and \( S_{\backslash M} \) represents the unmasked context.

Protocol: Input sequences are tokenized, and masking is applied stochastically (replacing tokens with [MASK], a random token, or leaving them unchanged). The model's final hidden states at masked positions are fed into a classifier over the vocabulary. The loss is computed only over the masked positions.
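The stochastic masking step can be sketched as follows. The 80/10/10 split between `[MASK]`, a random residue, and the unchanged token is the convention from the original BERT recipe and is assumed here; the text above does not state the exact proportions used for ProtBERT. The function name and the toy sequence are illustrative.

```python
import random

AMINO_ACIDS = list("ACDEFGHIKLMNPQRSTVWY")


def mask_sequence(tokens, mask_rate=0.15, rng=None):
    """Return (corrupted_tokens, labels) for BERT-style masked language modeling.

    labels[i] holds the original token at selected positions and None elsewhere,
    so the loss is computed only over the selected positions.
    """
    rng = rng or random.Random()
    corrupted = list(tokens)
    labels = [None] * len(tokens)
    for i, tok in enumerate(tokens):
        if rng.random() >= mask_rate:
            continue  # position not selected for prediction
        labels[i] = tok
        roll = rng.random()
        if roll < 0.8:
            corrupted[i] = "[MASK]"  # assumed 80%: replace with the mask token
        elif roll < 0.9:
            corrupted[i] = rng.choice(AMINO_ACIDS)  # assumed 10%: random residue
        # assumed remaining 10%: leave the token unchanged
    return corrupted, labels


tokens = list("MKTAYIAKQRQISFVKSHFSRQ")
corrupted, labels = mask_sequence(tokens, rng=random.Random(0))
print(corrupted)
print(labels)
```

Keeping a separate `labels` list makes the "loss only over masked positions" rule explicit: any position whose label is `None` contributes nothing to the objective.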

Core Architectural Comparison Table

Table 1: Architectural and Objective Comparison between ESM and ProtBERT

| Feature | ESM (e.g., ESM-2) | ProtBERT (e.g., ProtBERT-BFD) |

|---|---|---|

| Core Architecture | Transformer Decoder | Transformer Encoder |

| Pre-training Objective | Autoregressive (Next-token prediction) | Masked Language Modeling (MLM) |

| Context Processing | Unidirectional (Causal) | Bidirectional |

| Primary Model Family | ESM-1b, ESM-2 (up to 15B params) | ProtBERT, ProtBERT-BFD |

| Training Data | UniRef50/90 (millions of sequences) | BFD (billions of sequences) + UniRef100 |

| Representative Use | Generative design, evolutionary scoring | Fine-tuning for classification, per-token tasks |

Implications for Learned Representations and Downstream Tasks

The choice of objective fundamentally shapes the information encoded in the model's latent representations.

ESM's AR Objective:

- Strengths: Excels at tasks involving sequence generation, likelihood estimation, and zero-shot variant effect prediction. The unidirectional model learns a coherent probability distribution over sequences, useful for evolutionary analysis.

- Limitations: The lack of bidirectional context can limit the depth of per-residue contextual understanding for some structure/function prediction tasks, as each position cannot "see" downstream residues.

ProtBERT's MLM Objective:

- Strengths: Provides rich, context-dense representations for each residue, informed by the entire sequence. This is particularly powerful for per-residue tasks like secondary structure prediction, contact prediction, or binding site identification after fine-tuning.

- Limitations: Not inherently a generative model for full sequences. The masking objective can lead to a discrepancy between pre-training (seeing `[MASK]` tokens) and fine-tuning (seeing full sequences).

Quantitative Performance Comparison

Table 2: Benchmark Performance on Key Tasks (Representative Data)

| Benchmark Task | Metric | ESM-2 (15B) | ProtBERT-BFD | Notes |

|---|---|---|---|---|

| Remote Homology Detection (Fold classification) | Accuracy (%) | 88.2 | 86.4 | ESM-2 shows strong performance due to deep evolutionary learning. |

| Secondary Structure Prediction (Q3 Accuracy) | Q3 Accuracy (%) | 81.2 | 84.7 | ProtBERT's bidirectional context provides a slight edge. |

| Contact Prediction (Top L/5 long-range precision) | Precision (%) | 58.1 | 52.3 | ESM-2's 15B parameter model excels at capturing long-range interactions. |

| Variant Effect Prediction (Spearman's ρ) | Spearman Correlation | 0.48 | 0.41 | ESM's likelihood-based approach is naturally suited for this task. |

Experimental Protocols for Model Evaluation

Protocol 5.1: Zero-Shot Variant Effect Prediction (ESM's Strength)

- Input Preparation: Generate wild-type and mutant sequence pairs.

- Scoring with ESM: For each sequence, compute the log-likelihood \( \log P(S) \) using the ESM model's autoregressive head.

- Effect Calculation: Compute the log-likelihood ratio (LLR): \( \text{LLR} = \log P(\text{mutant}) - \log P(\text{wild-type}) \). A more negative LLR suggests a deleterious effect.

- Validation: Correlate LLR scores with experimentally measured fitness scores or clinical pathogenicity labels (e.g., from ClinVar).
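The validation step reduces to a rank correlation between model scores and measurements. The following is a dependency-free sketch of Spearman's ρ (Pearson correlation of average ranks); in practice `scipy.stats.spearmanr` does the same job. The LLR and fitness values are invented for illustration.

```python
def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman's rho: Pearson correlation computed on the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Invented scores for four variants: more negative LLR pairs with lower fitness.
llr = [-4.2, -0.3, -2.5, -1.1]
fitness = [0.10, 0.95, 0.30, 0.70]
rho = spearman(llr, fitness)
print(rho)
```

Because only the ordering matters, the metric is insensitive to the scale of the LLR values, which is why it is a natural fit for zero-shot scores that are not calibrated to experimental units.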

Protocol 5.2: Fine-tuning for Per-Residue Classification (ProtBERT's Strength)

- Task Definition: Define a classification task (e.g., secondary structure: Helix, Strand, Coil).

- Data Annotation: Use DSSP on PDB structures to generate per-residue labels for a dataset of sequences with known structure.

- Model Adaptation: Add a linear classification head on top of ProtBERT's final hidden states.

- Training: Fine-tune the entire model (or only the head) using cross-entropy loss, excluding padding positions from the loss.

- Evaluation: Report per-residue accuracy (Q3) on a held-out test set of proteins.
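The evaluation step can be sketched as a per-residue accuracy that skips padded positions. The label encoding (0 = Helix, 1 = Strand, 2 = Coil) and the use of -100 as the padding label are assumptions for this example; -100 is chosen because it matches the default `ignore_index` of PyTorch's `CrossEntropyLoss`.

```python
PAD_LABEL = -100  # assumed padding label, matching CrossEntropyLoss's default ignore_index


def q3_accuracy(pred_batch, label_batch, ignore=PAD_LABEL):
    """Fraction of real (non-padding) residues whose predicted class is correct."""
    correct = total = 0
    for preds, labels in zip(pred_batch, label_batch):
        for pred, label in zip(preds, labels):
            if label == ignore:
                continue  # padding position: excluded from the metric
            total += 1
            correct += int(pred == label)
    return correct / total if total else 0.0


# Two toy sequences, the second padded to length 4 (0 = Helix, 1 = Strand, 2 = Coil).
predictions = [[0, 1, 2, 2], [1, 1, 0, 0]]
labels = [[0, 1, 1, 2], [1, 0, PAD_LABEL, PAD_LABEL]]
accuracy = q3_accuracy(predictions, labels)
print(accuracy)
```

Four of the six real residues are predicted correctly in this toy batch; the two padded positions do not enter the count.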

Visualization of Core Distinctions

Diagram 1: Core Training Objectives: AR vs MLM

Diagram 2: Research Pathway Selection Based on Objective

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Resources for pLM Research

| Tool/Resource | Type | Primary Function | Example/Provider |

|---|---|---|---|

| ESM / ProtBERT Models | Software Model | Pre-trained pLMs for feature extraction, fine-tuning, or inference. | Hugging Face Transformers, FairSeq |

| Protein Sequence Database | Data | Source of sequences for training, evaluation, or as a reference for wild-type sequences. | UniProt, UniRef, BFD |

| Structure Database | Data | Provides ground truth structural data for tasks like contact prediction or secondary structure fine-tuning. | Protein Data Bank (PDB) |

| Variant Effect Benchmark | Dataset | Curated datasets for evaluating zero-shot prediction performance (e.g., pathogenic vs. benign mutations). | ClinVar-derived benchmarks |

| Fine-tuning Framework | Software | High-level libraries to facilitate adaptation of pLMs to custom downstream tasks. | PyTorch Lightning, Hugging Face Trainer |

| Computation Hardware (GPU/TPU) | Hardware | Accelerates model training, fine-tuning, and inference due to the large size of modern pLMs. | NVIDIA A100, Google Cloud TPU v4 |

| Structure Prediction Suite | Software | Tools for generating or analyzing protein structures, often used in conjunction with pLM embeddings. | AlphaFold2, PyMOL |

| Evolutionary Coupling Analysis Tools | Software | Provides independent evolutionary signals for validating pLM-predicted contacts or co-evolution. | EVcouplings, plmDCA |

Implementing ESM and ProtBERT: Step-by-Step Workflows and Research Applications

Within the broader thesis of understanding transformer-based protein language models (pLMs), this guide provides a technical roadmap for researchers to access and utilize three foundational pre-trained models: ESM-2, ESMfold, and ProtBERT. These models, developed by Meta AI (ESM family) and the ProtBERT team, have become critical tools for decoding protein sequence-structure-function relationships, offering powerful applications in computational biology and therapeutic design. This document serves as an in-depth technical primer for researchers and drug development professionals seeking to integrate these state-of-the-art tools into their experimental pipelines.

The models are hosted on two primary platforms: Hugging Face transformers library and GitHub repositories. The table below summarizes the core access details and model characteristics.

Table 1: Model Specifications and Access Points

| Model Name | Primary Developer | Key Function | Hugging Face Hub Model ID | GitHub Repository | Primary Framework |

|---|---|---|---|---|---|

| ESM-2 | Meta AI | Protein sequence representation learning | facebook/esm2_t[6,12,30,33,36,48] | facebookresearch/esm | PyTorch |

| ESMfold | Meta AI | High-accuracy protein structure prediction | facebook/esmfold_v1 | facebookresearch/esm | PyTorch |

| ProtBERT | Rostlab (ProtTrans) | Protein sequence understanding (BERT-based) | Rostlab/prot_bert | agemagician/ProtTrans | TensorFlow/PyTorch |

Table 2: Quantitative Performance Summary (Representative Benchmarks)

| Model | Task | Key Metric (Dataset) | Reported Performance | Parameters (Largest) |

|---|---|---|---|---|

| ESM-2 (15B) | Remote Homology Detection (SCOP) | Top-1 Accuracy | 0.89 | 15 Billion |

| ESMfold | Structure Prediction (CASP14) | TM-score (on TBM targets) | 0.72 (median) | 690 Million |

| ProtBERT | Secondary Structure Prediction (CB513) | Q3 Accuracy | 0.77 | 420 Million |

Detailed Access and Implementation Protocols

Protocol 1: Accessing Models via Hugging Face `transformers`

Objective: To load pre-trained model weights and tokenizers for inference using the Hugging Face ecosystem.

Materials & Software:

- Python 3.8+

- Packages: `pip install transformers torch biopython`

Methodology:
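A minimal sketch of this protocol, assuming the `transformers` and `torch` packages listed above and the model IDs `facebook/esm2_t33_650M_UR50D` and `Rostlab/prot_bert`. The helper names `format_for_protbert` and `embed` are illustrative, not part of either library. The ProtBERT preprocessing (space-separated residues, rare codes U/Z/O/B mapped to X) follows the usage shown on the ProtBERT model card; check the card for the checkpoint you actually use.

```python
import re


def format_for_protbert(sequence):
    """Upper-case, map rare residues U/Z/O/B to X, and separate residues with spaces."""
    return " ".join(re.sub(r"[UZOB]", "X", sequence.upper()))


def embed(model_input, model_id, **tokenizer_kwargs):
    """Return the last hidden state for one sequence, shape [1, tokens, hidden_size]."""
    # Heavy dependencies are imported lazily so the helper above works without them.
    import torch
    from transformers import AutoModel, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id, **tokenizer_kwargs)
    model = AutoModel.from_pretrained(model_id).eval()
    inputs = tokenizer(model_input, return_tensors="pt")
    with torch.no_grad():  # inference only, so no gradients are tracked
        outputs = model(**inputs)
    return outputs.last_hidden_state


# Example calls (these download the weights on first use, so they are left commented out):
# seq = "MKTAYIAKQRQISFVKSHFSRQ"
# esm_repr = embed(seq, "facebook/esm2_t33_650M_UR50D")
# bert_repr = embed(format_for_protbert(seq), "Rostlab/prot_bert", do_lower_case=False)
```

ESM-2 takes the raw sequence string, whereas ProtBERT expects the space-separated form, so the preprocessing helper is applied only on the ProtBERT path.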

Protocol 2: Cloning and Using GitHub Repositories

Objective: To access full codebases, including training scripts, advanced inference pipelines, and utility functions.

Methodology:

- Clone the repository:

- Use ESMfold via repository scripts:

- For ProtBERT (from ProtTrans):

- Clone the repository and follow installation instructions for either TensorFlow or PyTorch backend.

The Scientist's Toolkit: Essential Research Reagents & Software

| Item | Function/Specification | Source/Example |

|---|---|---|

| Pre-trained Model Weights | Frozen parameters of the neural network trained on evolutionary-scale data. | Hugging Face Hub, GitHub releases |

| Model Tokenizer | Converts amino acid sequences into model-readable token IDs with special tokens. | Bundled with transformers model |

| GPU Compute Instance | Accelerated hardware for model inference (minimum 16GB VRAM for large models). | NVIDIA A100, V100, or comparable |

| PyTorch/TensorFlow | Deep learning frameworks required to run model forward passes. | Version 1.12.0+ |

| Biopython | Library for handling biological sequence data (parsing FASTA, etc.). | biopython package |

| PDB File Parser | For handling and comparing predicted 3D structures (e.g., biopython PDB module). | Required for structure analysis |

Protocol 3: Experimental Workflow for Functional Site Prediction

Objective: To combine ESM-2 embeddings with a downstream classifier to predict catalytic residues.

Methodology:

- Embedding Extraction: Generate per-residue embeddings for a curated dataset of enzyme sequences using ESM-2 (`facebook/esm2_t33_650M_UR50D`).

- Label Alignment: Align embeddings to ground truth catalytic residue annotations from the Catalytic Site Atlas (CSA).

- Classifier Training: Train a simple logistic regression or MLP classifier on the embeddings, using 5-fold cross-validation.

- Evaluation: Report precision, recall, and F1-score on a held-out test set.

Visualized Workflows

Access and Inference Workflow for pLMs

Typical Experimental Pipeline Using pLM Embeddings

Accessing ESM-2, ESMfold, and ProtBERT via the outlined interfaces provides researchers with immediate capability to leverage state-of-the-art protein representations. The choice between Hugging Face for rapid inference and GitHub for full experimental flexibility depends on the specific research goals. Integrating these models into standardized bioinformatics pipelines, as demonstrated, enables systematic investigation of protein sequence landscapes, accelerating discovery in protein engineering and drug development.

This technical guide details the critical data preparation pipeline required for applying protein language models like ESM (Evolutionary Scale Modeling) and ProtBERT to research in computational biology and drug development. Proper preprocessing is foundational for leveraging these models' capacity to learn structural and functional patterns from protein sequences. This pipeline transforms raw biological sequence data into a format digestible by deep learning architectures.

Foundational Concepts: ESM & ProtBERT

ESM and ProtBERT are transformer-based models pre-trained on millions of protein sequences. ESM models, from Meta AI, are trained on UniRef datasets using a masked language modeling objective, learning evolutionary relationships. ProtBERT, from the ProtTrans family, adapts BERT's architecture specifically for proteins. Both require precise tokenization of amino acid sequences into discrete numerical IDs.

The Data Preparation Pipeline

Input: FASTA File Acquisition & Validation

The pipeline begins with FASTA format files. Each record contains a sequence identifier (header) and the amino acid sequence using the standard 20-letter code.

Experimental Protocol: FASTA Validation and Cleaning

- Source Data: Download sequences from curated databases (UniProt, PDB).

- Validation: Check for invalid characters (B, J, O, U, X, Z) beyond the standard 20. Decide on handling ambiguous residues (e.g., 'X').

- Redundancy Reduction: Use CD-HIT at a 90-95% sequence identity threshold to create a non-redundant set.

- Sequence Length Filtering: Filter sequences based on model constraints (e.g., ESM-2 maximum context length of 1024).
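The validation and length-filtering steps can be sketched without external dependencies (Biopython's `SeqIO` is the usual choice for parsing real files). The policy shown here is one possible choice and is an assumption: ambiguous codes are mapped to `X`, and records containing any other character are dropped. Redundancy reduction with CD-HIT is an external tool and is not covered.

```python
STANDARD_AA = set("ACDEFGHIKLMNPQRSTVWY")
AMBIGUOUS = set("BJOUXZ")  # non-standard or ambiguous one-letter codes


def parse_fasta(text):
    """Parse FASTA text into a list of (header, sequence) tuples."""
    records, header, chunks = [], None, []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if header is not None:
                records.append((header, "".join(chunks)))
            header, chunks = line[1:], []
        else:
            chunks.append(line.upper())
    if header is not None:
        records.append((header, "".join(chunks)))
    return records


def clean_records(records, max_len=1024):
    """Map ambiguous residues to X, drop invalid or over-length sequences."""
    kept = []
    for header, seq in records:
        # Reject records with characters that are not amino acid codes at all.
        if any(aa not in STANDARD_AA and aa not in AMBIGUOUS for aa in seq):
            continue
        seq = "".join("X" if aa in AMBIGUOUS else aa for aa in seq)
        if 0 < len(seq) <= max_len:
            kept.append((header, seq))
    return kept


fasta_text = ">a\nMKT\nAYI\n>b\nMKUZ\n>c\nMK*1\n"
records = parse_fasta(fasta_text)
cleaned = clean_records(records, max_len=5)
print(cleaned)
```

With `max_len=5`, record `a` is dropped for length, record `c` for invalid characters, and record `b` is kept with its rare residues replaced. When choosing `max_len` for a real model, remember that special tokens also count toward the context length.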

Table 1: Common Public Protein Sequence Datasets

| Dataset | Size (Approx.) | Description | Common Use Case |

|---|---|---|---|

| UniRef100 | Hundreds of millions of sequences | Comprehensive, non-redundant protein sequences | Large-scale pre-training |

| UniRef90 | Tens of millions | 90% identity clusters | Balanced diversity/size |

| PDB | ~200k sequences | Experimentally determined structures | Structure-function studies |

| Swiss-Prot | ~500k sequences | Manually annotated, high-quality | Fine-tuning, benchmarking |

Title: FASTA File Cleaning and Validation Workflow

Core Step: Tokenization

Tokenization maps each amino acid in a sequence to a unique integer ID defined by the model's vocabulary.

Experimental Protocol: Tokenization for ESM/ProtBERT

- Import Model Tokenizer: Load the tokenizer from the model's library (e.g., `esm.pretrained.load_model_and_alphabet()` or `transformers.AutoTokenizer.from_pretrained("Rostlab/prot_bert")`).

- Add Special Tokens: Prepend the sequence with a beginning-of-sequence token (`<cls>` or `<s>`) and append an end-of-sequence token (`<eos>`). This is often handled automatically.

- Map Residues: Convert each amino acid letter (e.g., 'M', 'A', 'K') to its corresponding token ID.

- Padding/Truncation: For batch processing, pad sequences to the longest in the batch (or a predefined max length) using a padding token ID, or truncate exceeding sequences.
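To make the mapping concrete, here is a toy tokenizer that adds special tokens, maps residues to IDs, and truncates. The vocabulary and its ID assignments are hypothetical and do not match the real ESM-2 or ProtBERT vocabularies; in practice the model's own tokenizer should always be used. Padding is left to batching time.

```python
# Hypothetical toy vocabulary (IDs do NOT correspond to any released model).
SPECIAL_TOKENS = ["<cls>", "<pad>", "<eos>", "<unk>"]
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
VOCAB = {tok: i for i, tok in enumerate(SPECIAL_TOKENS + list(AMINO_ACIDS))}


def encode(sequence, max_len=None):
    """Convert an amino acid string to token IDs with <cls> and <eos> added.

    If max_len is given, the result is truncated to max_len tokens while
    keeping the trailing <eos>.
    """
    ids = [VOCAB["<cls>"]]
    # Unknown letters fall back to the <unk> token.
    ids += [VOCAB.get(aa, VOCAB["<unk>"]) for aa in sequence]
    ids.append(VOCAB["<eos>"])
    if max_len is not None and len(ids) > max_len:
        ids = ids[: max_len - 1] + [VOCAB["<eos>"]]
    return ids


full = encode("MAK")
with_unknown = encode("MAKB")
truncated = encode("MAKLV", max_len=4)
print(full, with_unknown, truncated)
```

Truncating before the end-of-sequence token, rather than simply slicing the list, keeps the sequence boundary visible to the model even for clipped inputs.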

Table 2: Tokenization Comparison: ESM-2 vs ProtBERT

| Aspect | ESM-2 Tokenizer | ProtBERT Tokenizer |

|---|---|---|

| Vocabulary | 33 tokens (20 standard AAs + ambiguity codes + special) | 30 tokens (20 standard AAs + ambiguity codes + special) |

| Special Tokens | <cls>, <eos>, <pad>, <unk>, <mask> | [PAD], [UNK], [CLS], [SEP], [MASK] |

| Beginning Token | <cls> | [CLS] |

| End Token | <eos> | [SEP] |

| Padding Side | Right | Right |

| Max Length | 1024 (ESM-2 3B/650M) | 512 (BERT-base constraint) |

Advanced Preparation: Creating Input Features

Beyond tokens, models may use additional input features.

Experimental Protocol: Generating Attention Masks

- Create Mask: Generate a binary attention mask array parallel to the token IDs.

- Assign Values: Set `1` for all real tokens (including special tokens such as `<cls>`). Set `0` for all `<pad>` tokens.

- Purpose: The mask prevents the model from attending to padding positions during self-attention computation.

Output: Batching and DataLoader Configuration

Final step organizes tokenized data for model input.

Experimental Protocol: PyTorch DataLoader Setup

- Define Dataset Class: Create a `torch.utils.data.Dataset` class that returns a dictionary for each sequence: `{'input_ids': token_ids, 'attention_mask': attention_mask}`.

- Collate Function: Define a custom function to dynamically pad sequences within a batch to the batch's maximum length.

- Instantiate DataLoader: Use `torch.utils.data.DataLoader` with the Dataset, collate function, and desired batch size.
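The collate step is the part most often written by hand. The sketch below shows dynamic padding and attention-mask construction using plain Python lists; in a PyTorch workflow the returned lists would be wrapped in `torch.tensor(...)` and the function passed as `collate_fn` to the `DataLoader`. The default `pad_id=1` is an assumption for illustration and should be read from the tokenizer in real code.

```python
def collate(batch, pad_id=1):
    """Pad every item to the longest sequence in the batch and build attention masks.

    batch  -- list of dicts, each with an 'input_ids' list of token IDs
    pad_id -- padding token ID (assumed value; take it from your tokenizer)
    """
    longest = max(len(item["input_ids"]) for item in batch)
    input_ids, attention_mask = [], []
    for item in batch:
        ids = list(item["input_ids"])
        n_pad = longest - len(ids)
        input_ids.append(ids + [pad_id] * n_pad)
        # 1 marks real tokens (including special tokens), 0 marks padding.
        attention_mask.append([1] * len(ids) + [0] * n_pad)
    return {"input_ids": input_ids, "attention_mask": attention_mask}


batch = [{"input_ids": [0, 5, 6, 2]}, {"input_ids": [0, 5, 2]}]
padded = collate(batch)
print(padded)
```

Padding to the batch maximum rather than a global maximum keeps short batches small, which matters for transformer models whose attention cost grows quadratically with sequence length.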

Title: Tokenization and Tensor Creation Process

The Scientist's Toolkit

Table 3: Essential Research Reagents & Software for Data Preparation

| Item Name | Category | Function/Benefit |

|---|---|---|

| UniProt Knowledgebase | Data Source | Primary source of high-quality, annotated protein sequences. |

| PDB (Protein Data Bank) | Data Source | Source for sequences with experimentally determined 3D structures. |

| CD-HIT Suite | Bioinformatics Tool | Rapidly clusters sequences to remove redundancy at chosen identity threshold. |

| Biopython | Python Library | Provides parsers for FASTA and other biological formats, enabling easy sequence manipulation. |

| PyTorch | Deep Learning Framework | Provides Dataset and DataLoader classes for efficient batching and data management. |

| Hugging Face Transformers | Python Library | Provides ProtBERT tokenizer and utilities, standardizing NLP approaches for proteins. |

| ESM (Meta AI) | Python Library | Provides official ESM model loading, tokenization, and inference utilities. |

| Seaborn/Matplotlib | Python Library | Used for visualizing sequence length distributions, token frequencies, etc. |

Quality Control & Best Practices

- Sequence Length Distribution: Always plot the distribution of sequence lengths in your dataset before and after filtering.

- Token Frequency Check: Ensure there is no unexpectedly high frequency of `<unk>` or padding tokens, which would indicate tokenization issues.

- Memory Mapping: For very large datasets, back a custom `torch.utils.data.Dataset` with `numpy.memmap` to avoid loading all data into RAM.

A rigorous, reproducible data preparation pipeline is the critical first step in any research pipeline utilizing protein language models. Standardizing the process from FASTA to tokenized tensors ensures that downstream analyses and model predictions are based on clean, correctly formatted inputs, enabling researchers to reliably extract biological insights for drug discovery and protein engineering.

Extracting and Interpreting Per-Residue and Per-Sequence Embeddings

Within the broader thesis on protein language models (pLMs) like Evolutionary Scale Modeling (ESM) and ProtBERT, understanding their vector representations—embeddings—is foundational. These models transform discrete amino acid sequences into continuous, high-dimensional vector spaces that capture evolutionary, structural, and functional constraints learned from billions of sequences. This guide details the technical methodologies for extracting and interpreting the two primary types of embeddings: per-residue (a vector for each amino acid position) and per-sequence (a single vector representing the entire protein). Mastery of these techniques is critical for researchers and drug development professionals applying pLMs to tasks like variant effect prediction, structure inference, and functional annotation.

Model Architectures & Embedding Origins

ESM and ProtBERT, while both transformer-based, have distinct architectures that influence their embeddings.

- ESM (Evolutionary Scale Modeling): Primarily uses a standard transformer encoder architecture. The final layer's hidden states directly provide per-residue embeddings. A per-sequence embedding is often derived by taking the mean of all per-residue embeddings or by using the vector corresponding to a special `<cls>` token prepended during training.

- ProtBERT: Adapts the BERT (Bidirectional Encoder Representations from Transformers) architecture. It is trained with a masked language modeling objective on protein sequences. Per-residue embeddings are extracted from the final hidden layer. The per-sequence representation is typically obtained from the special `[CLS]` token, designed to aggregate sequence-level information.

Table 1: Core Architectural Comparison for Embedding Extraction

| Feature | ESM-2 (Representative) | ProtBERT |

|---|---|---|

| Model Type | Transformer Encoder | BERT-like Encoder |

| Training Objective | Masked Language Modeling (MLM) | Masked Language Modeling (MLM) |

| Special Tokens | <cls>, <eos>, <pad> | [CLS], [SEP], [PAD], [MASK] |

| Primary Per-Residue Source | Final Layer Hidden States | Final Layer Hidden States |

| Primary Per-Sequence Source | Mean Pooling or <cls> token | [CLS] token embedding |

| Typical Embedding Dimension | 320, 480, 640, 1280, 2560, 5120 (ESM-2, by model size) | 1024 |

Experimental Protocols for Embedding Extraction

Protocol 3.1: Extracting Per-Residue Embeddings

Objective: Obtain a vector representation for each amino acid position in a protein sequence.

- Sequence Preparation: Format the input amino acid sequence as a string (e.g., `"MKYLL..."`). Replace rare or ambiguous amino acids (e.g., 'U', 'O', 'Z') with a standard token (e.g., `"<unk>"`) or mask them as per model specification.

- Tokenization: Use the model's specific tokenizer to convert the sequence into token IDs. This includes adding necessary special tokens (e.g., `<cls>` for ESM, `[CLS]` and `[SEP]` for ProtBERT).

- Model Inference: Pass the token IDs through the model in inference mode (`no_grad()`). Extract the last hidden state from the model's output. This tensor has the shape `[batch_size, sequence_length, embedding_dimension]`.

- Alignment & Trimming: Map the output vectors back to the original amino acid positions, carefully removing the vectors corresponding to special tokens (like `[CLS]`) if a 1:1 residue-to-vector mapping is required.

Diagram: Workflow for Per-Residue Embedding Extraction

Protocol 3.2: Extracting Per-Sequence Embeddings

Objective: Obtain a single, fixed-dimensional vector representing the entire protein sequence.

- Follow Steps 1-3 of Protocol 3.1 to tokenize and run model inference.

- Vector Pooling Strategy: Apply the model-appropriate pooling method:

- For ProtBERT: Isolate the vector corresponding to the first token (`[CLS]`).

- For ESM: Either isolate the `<cls>` token vector or compute the mean pool across the sequence dimension of the last hidden state (often excluding padding tokens). Mean pooling is frequently used and has shown strong performance.

- Normalization (Optional): Apply L2 normalization to the resulting vector for downstream tasks like similarity search.
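The pooling and normalization steps can be sketched on plain nested lists standing in for the last hidden state of one sequence. The function names and the toy vectors are illustrative; with real model output the same logic is usually written with tensor operations. Excluding special-token positions from the mean is a design choice assumed here, not a requirement stated above.

```python
import math


def cls_vector(hidden):
    """Per-sequence embedding from the first token ([CLS] or <cls>)."""
    return list(hidden[0])


def mean_pool(hidden, attention_mask, special_positions=()):
    """Average token vectors over real residues, skipping padding and special tokens."""
    dim = len(hidden[0])
    total = [0.0] * dim
    count = 0
    for pos, (vec, keep) in enumerate(zip(hidden, attention_mask)):
        if not keep or pos in special_positions:
            continue
        count += 1
        for d in range(dim):
            total[d] += vec[d]
    return [t / count for t in total] if count else total


def l2_normalize(vec):
    """Scale a vector to unit length (returned unchanged if it is all zeros)."""
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else list(vec)


# Toy hidden states: <cls>, two residues, <eos>, one padding position (2-dimensional).
hidden = [[9.0, 9.0], [3.0, 0.0], [0.0, 4.0], [7.0, 7.0], [0.0, 0.0]]
attention_mask = [1, 1, 1, 1, 0]
pooled = mean_pool(hidden, attention_mask, special_positions=(0, 3))
unit = l2_normalize(pooled)
print(pooled, unit)
```

After L2 normalization, the dot product of two per-sequence embeddings equals their cosine similarity, which is convenient for similarity search.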

Diagram: Strategies for Per-Sequence Embedding Generation

Interpretation and Downstream Application

Embeddings are not intrinsically interpretable; their utility is revealed through downstream tasks.

Table 2: Quantitative Performance on Benchmark Tasks Using Embeddings

| Downstream Task | Model & Embedding Type | Typical Metric & Reported Performance (Example) | Key Interpretation |

|---|---|---|---|

| Remote Homology Detection | ESM-2 (Per-Sequence) | Fold-Level Accuracy: ~0.90 | Sequence embeddings encode functional/structural similarity beyond pairwise sequence alignment. |

| Secondary Structure Prediction | ESM-2 (Per-Residue) | Q3 Accuracy: ~0.84 | Per-residue vectors contain local structural information accessible via simple classifiers (MLP). |

| Variant Effect Prediction | ESM-1v (Per-Residue) | Spearman's ρ vs. experimental fitness: ~0.70 | Embedding delta (mutant - wild-type) reflects the perturbation of the local structural/functional landscape. |

| Protein-Protein Interaction Prediction | ProtBERT (Per-Sequence) | AUC-ROC: ~0.85 | Joint representation of two protein embeddings (concatenation, dot product) models interaction propensity. |

Protocol 4.1: Interpretability via Embedding Projection

Objective: Visualize the high-dimensional embedding space to assess clustering of protein families.

- Embedding Corpus: Extract per-sequence embeddings for a diverse set of proteins with known family annotations (e.g., from Pfam).

- Dimensionality Reduction: Apply techniques like t-SNE (t-distributed Stochastic Neighbor Embedding) or UMAP (Uniform Manifold Approximation and Projection) to project embeddings to 2D or 3D.

- Visualization & Analysis: Plot the reduced vectors, coloring points by protein family. Qualitative assessment of cluster separation indicates the model's ability to learn biologically meaningful representations.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Resources for Embedding Workflows

| Item Name | Type/Provider | Function in Experiment |

|---|---|---|

| Hugging Face transformers | Python Library | Provides pre-trained model loading, tokenization, and standard interfaces for ESM, ProtBERT, and related pLMs. |

| PyTorch / JAX | Deep Learning Framework | Core tensor operations and efficient GPU-accelerated model inference. |

| BioPython | Python Library | Handles FASTA I/O, sequence parsing, and managing biological data formats. |

| ESM Model Zoo | FAIR (Meta AI) | Repository of pre-trained ESM model weights (e.g., ESM-2, ESM-1v, ESMFold) for direct use. |

| ProtBERT Weights | RostLab (ProtTrans) | Pre-trained weights for ProtBERT models, typically accessed via Hugging Face. |

| Scikit-learn | Python Library | Provides tools for dimensionality reduction (PCA, t-SNE), clustering, and training simple downstream classifiers (logistic regression, SVM). |

| NumPy / SciPy | Python Libraries | Foundational numerical operations and statistical analysis of embedding vectors (e.g., cosine similarity, distance metrics). |

| Plotly / Matplotlib | Python Library | Creation of publication-quality visualizations for embedding projections and result analysis. |

| Protein Data Bank (PDB) | Database | Source of ground-truth structural and functional annotations for validating embedding interpretations. |

| Pfam Database | Database | Provides curated protein family annotations for benchmarking sequence embedding clustering. |

This whitepaper details the first major application within a broader thesis investigating the efficacy of protein language models (pLMs), specifically ESM (Evolutionary Scale Modeling) and ProtBERT, for computational protein design. The core thesis posits that pLMs, trained on evolutionary sequence statistics, encode fundamental principles of protein structure and function, enabling zero-shot prediction of mutational outcomes without task-specific training. This application focuses on predicting biophysical properties like stability and fitness directly from sequence, a foundational task for protein engineering and therapeutic development.

Theoretical Foundation: From Sequence Embeddings to Phenotype

Both ESM and ProtBERT generate contextual embeddings for each amino acid in a sequence. The hypothesis for zero-shot prediction is that the model's internal representations capture the "wild-type" sequence context. A mutation perturbs this context, and the resulting change in the model's likelihood or hidden state representations can be correlated with experimental measures.

- ESM's Approach: Primarily scores mutations by sequence likelihood. The pseudo-log-likelihood (PLL) is obtained by masking each position in turn and summing the log-probability the model assigns to the true residue. The change in PLL between mutant and wild-type (ΔPLL) serves as the score. A large drop in likelihood suggests the mutation is evolutionarily disfavored and potentially destabilizing.

- ProtBERT's Approach: Often utilizes the embeddings from the final layer. The difference in the vector representation (e.g., cosine similarity, Euclidean distance) for the mutated residue versus the wild-type, aggregated with its context, serves as a predictive feature.
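The two scoring ideas above can be sketched in a few lines of plain Python. This is a minimal illustration, not either model's actual API: `masked_log_prob` is a hypothetical callable standing in for a pLM's masked-language-modeling head, and `toy_masked_log_prob` is a made-up stand-in so the example runs without any model weights.

```python
import math

def pseudo_log_likelihood(sequence, masked_log_prob):
    """Sum of log p(x_i | sequence with position i masked) over all positions.

    `masked_log_prob(sequence, i)` is a hypothetical callable (an assumption of
    this sketch) returning {amino_acid: log_prob} for masked position i.
    """
    return sum(masked_log_prob(sequence, i)[aa] for i, aa in enumerate(sequence))

def delta_pll(wild_type, mutant, masked_log_prob):
    """PLL(mutant) - PLL(wild-type); negative means the mutant is less likely."""
    return (pseudo_log_likelihood(mutant, masked_log_prob)
            - pseudo_log_likelihood(wild_type, masked_log_prob))

def cosine_distance(u, v):
    """1 - cosine similarity between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)

def euclidean_distance(u, v):
    """Norm of the difference vector between two embeddings."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

# Toy stand-in for a model: the same distribution at every position,
# regardless of context. A real pLM would condition on the masked sequence.
def toy_masked_log_prob(sequence, i):
    probs = {"A": 0.7, "G": 0.2, "W": 0.1}
    return {aa: math.log(p) for aa, p in probs.items()}

# Wild-type AAGA versus mutant AAWA (G -> W at the third position).
score = delta_pll("AAGA", "AAWA", toy_masked_log_prob)
```

With a real model, `masked_log_prob` would run one forward pass per masked position, and the distance functions would be applied to the hidden-state vectors at the mutated position in the wild-type and mutant sequences.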

Experimental Protocols for Benchmarking

Protocol 1: Predicting Protein Stability (ΔΔG)

- Dataset Curation: Use standardized benchmarks like S669, Myoglobin stability, or ThermoMutDB. Split data into training (for baseline models) and hold-out test sets strictly for evaluation.

- Sequence Scoring with pLMs:

- For ESM-1v or ESM-2: Tokenize the wild-type and mutant sequences. Compute the log probabilities for each position using the model's masked language modeling head. Calculate ΔPLL = PLL(mutant) - PLL(wild-type).

- For ProtBERT: Pass the sequence through the model. Extract the hidden state vector for the mutated position. Compute a distortion metric (e.g., norm of the difference vector) between wild-type and mutant contexts.

- Regression Model: Fit a simple linear regression (or ridge regression) on the training set, using the ΔPLL or embedding distortion metric from the sequence-scoring step as the sole feature to predict experimental ΔΔG values. Because this fit uses training labels, it is no longer strictly zero-shot; for a true zero-shot evaluation, correlate the raw metric directly with ΔΔG without regression.

- Evaluation: Report Pearson's r and Spearman's ρ between predictions and experimental values on the hold-out test set.
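As a rough sketch of the regression and evaluation steps in this protocol, the snippet below fits a single-feature least-squares line and computes Pearson's r and Spearman's ρ using only the standard library. All numbers are made up for illustration and are not benchmark data; the helper names are assumptions of this sketch.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

def average_ranks(values):
    """Ranks starting at 1; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks

def spearman_rho(x, y):
    """Spearman's rho is Pearson's r computed on the ranks."""
    return pearson_r(average_ranks(x), average_ranks(y))

def fit_line(x, y):
    """Ordinary least squares for y ~ slope * x + intercept (single feature)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    return slope, my - slope * mx

# Made-up scores and labels, purely illustrative.
train_dpll, train_ddg = [-4.0, -2.0, -1.0, 0.0], [2.1, 0.9, 0.6, 0.0]
test_dpll, test_ddg = [-3.0, -0.5, -1.5], [1.4, 0.1, 1.0]

slope, intercept = fit_line(train_dpll, train_ddg)
predicted_ddg = [slope * s + intercept for s in test_dpll]
r = pearson_r(predicted_ddg, test_ddg)
rho = spearman_rho(predicted_ddg, test_ddg)
```

Because a single-feature linear fit only rescales and shifts the raw score, it leaves the magnitude of both correlation coefficients unchanged. The regression mainly matters when predictions must be expressed in ΔΔG units.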

Protocol 2: Predicting Fitness from Deep Mutational Scanning (DMS)

- Dataset Curation: Use DMS data from proteins like GB1, BRCA1, or beta-lactamase. Fitness scores are typically normalized log enrichment scores.

- Model Scoring: Apply the same scoring methods as in Protocol 1 (ΔPLL for ESM, embedding distortion for ProtBERT) for every single-point mutant in the DMS dataset.

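- Aggregation: For variants carrying multiple mutations, the single-mutation scores can be summed. This assumes the mutations contribute independently (additively) and therefore ignores epistasis.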

- Evaluation: Compute rank correlation coefficients (Spearman's ρ) between the model's scores and the experimental fitness scores across all variants.
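The additive aggregation step can be illustrated as follows. The colon-separated mutation string format (`A24G:K50R`) and the score dictionary are assumptions of this sketch; DMS datasets differ in how they encode variants.

```python
import re

# Matches a single substitution such as "A24G" (wild-type, 1-based position, mutant).
MUTATION_PATTERN = re.compile(r"^([A-Z])(\d+)([A-Z])$")

def parse_variant(variant):
    """Split 'A24G:K50R' into [('A', 24, 'G'), ('K', 50, 'R')]."""
    mutations = []
    for token in variant.split(":"):
        match = MUTATION_PATTERN.match(token)
        if match is None:
            raise ValueError(f"Unrecognized mutation: {token!r}")
        wt, pos, mut = match.groups()
        mutations.append((wt, int(pos), mut))
    return mutations

def additive_score(variant, single_mutant_scores):
    """Sum single-mutant scores; this ignores epistasis between positions."""
    return sum(single_mutant_scores[m] for m in parse_variant(variant))

# Placeholder scores, e.g. delta-PLL values computed one mutation at a time.
single_scores = {("A", 24, "G"): -1.5, ("K", 50, "R"): -0.25}
double_mutant_score = additive_score("A24G:K50R", single_scores)
```

An alternative that does not assume independence is to score the full multi-mutant sequence directly with the model, at the cost of one scoring pass per variant.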

Summarized Quantitative Data

Table 1: Performance Comparison on Stability Prediction (ΔΔG)

| Model / Method | Dataset | Pearson's r | Spearman's ρ | Notes |

|---|---|---|---|---|

| ESM-1v (ΔPLL) | S669 | 0.61 | 0.59 | Zero-shot, no structure |

| ESM-2 (15B) Embedding | Myoglobin | 0.73 | 0.70 | Linear probe on embeddings |

| ProtBERT (ΔEmbedding) | S669 | 0.52 | 0.50 | Zero-shot, cosine distance |

| Rosetta DDG | S669 | 0.65 | 0.63 | Requires high-quality structure |

| DeepSequence | S669 | 0.67 | 0.65 | Requires MSA |

Table 2: Performance Comparison on Fitness Prediction (DMS)

| Model / Method | Protein (DMS) | Spearman's ρ | Notes |

|---|---|---|---|

| ESM-1v (ΔPLL) | GB1 | 0.48 | Zero-shot, average of 5 models |

| ESM-1v (ΔPLL) | BRCA1 | 0.34 | Zero-shot, RING domain |

| ESM-2 (ΔPLL) | beta-lactamase | 0.58 | Zero-shot |

| ProtBERT-BFD | GB1 | 0.42 | Zero-shot, embedding-based |

| EVmutation (MSA) | GB1 | 0.53 | Requires deep MSA |

Visualizations

(Title: Zero-Shot Prediction Workflow with pLMs)

(Title: ESM ΔPLL Calculation for a Single Mutation)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Zero-Shot Mutation Prediction Studies

| Item / Resource | Function & Description |

|---|---|

| ESM Model Suite (ESM-1v, ESM-2, ESM-IF1) | Pre-trained pLMs for direct scoring and embedding extraction. Accessed via Hugging Face Transformers or the fair-esm package. |

| ProtBERT Model (ProtBERT-BFD) | Alternative pLM trained on BFD, available through Hugging Face, for comparative studies. |

| DMS & Stability Datasets (S669, ThermoMutDB, ProteinGym) | Curated benchmark datasets for training and rigorous evaluation of prediction methods. |

| Hugging Face Transformers Library | Primary API for loading, tokenizing, and running inference with pLMs in Python. |

| PyTorch / JAX | Deep learning frameworks required to run the models and perform gradient computations if needed. |

| EVcouplings / DeepSequence | Traditional MSA-based baseline methods essential for comparative performance analysis. |

| FoldX or Rosetta | Physics/structure-based baseline methods to compare against zero-shot sequence-only approaches. |

| ProteinGym Benchmark Suite | Integrated platform for large-scale, standardized benchmarking across many DMS assays. |

Within the broader thesis on leveraging deep learning for protein science, this chapter details the application of protein language models (pLMs), such as ESM (Evolutionary Scale Modeling) and ProtBERT, for the critical task of protein function prediction and annotation. Accurately assigning Gene Ontology (GO) terms and Enzyme Commission (EC) numbers is fundamental to understanding biological processes and accelerating drug discovery. This guide provides a technical overview of the methodologies, experimental protocols, and state-of-the-art performance metrics for researchers and industry professionals.

Core Methodology and Model Architectures

Function prediction with pLMs typically follows a transfer learning paradigm. A pre-trained model, which has learned generalizable representations of protein sequences from billions of examples, is fine-tuned on labeled datasets for specific functional annotation tasks.

- ESM-2 & ESMFold: The ESM family, particularly ESM-2 (up to 15B parameters), provides state-of-the-art sequence representations. ESMFold enables direct 3D structure prediction from sequence, which can inform function.

- ProtBERT & ProtBERT-BFD: These BERT-based models, trained on massive protein sequence corpora (UniRef100/BFD), excel at capturing intricate semantic and syntactic relationships within protein "language."

Table 1: Comparison of Core pLMs for Function Prediction

| Model | Architecture | Training Data | Max Params | Key Output for Function Prediction |

|---|---|---|---|---|

| ESM-2 | Transformer (Encoder-only, BERT-style) | UniRef50 (269M seqs) | 15 Billion | Sequence embeddings (per-residue & per-sequence) |

| ProtBERT | BERT (Encoder) | BFD (2.1B seqs) & UniRef100 | 420 Million | Contextual sequence embeddings (CLS token) |

| ProtT5 | T5 (Encoder-Decoder) | BFD & UniRef50 | 11 Billion (XXL); 3 Billion (XL) | Sequence embeddings from encoder |

Experimental Protocol: Fine-tuning for GO/EC Prediction

A standard protocol for training a function prediction classifier using pLM embeddings is outlined below.

Protocol 1: Fine-tuning a GO Term Predictor using ESM-2 Embeddings

Data Curation:

- Source protein sequences and their GO annotations (Molecular Function, Biological Process, Cellular Component) from the UniProt-GOA database.

- Filter for experimentally validated annotations (evidence codes: EXP, IDA, IPI, etc.).

- Split data into training, validation, and test sets such that no two proteins in different splits share more than 30% sequence identity (e.g., by clustering with CD-HIT or MMseqs2 and assigning whole clusters to a split).
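One way to enforce the similarity constraint in the split is to assign whole sequence clusters to a single split. The sketch below assumes a mapping from protein ID to cluster ID has already been produced by a clustering tool at the chosen identity threshold; the function name, split fractions, and IDs are illustrative.

```python
import random
from collections import defaultdict

def split_by_cluster(cluster_of, fractions=(0.8, 0.1, 0.1), seed=0):
    """Assign whole clusters to train/valid/test so no cluster spans two splits.

    `cluster_of` maps protein ID -> cluster ID (assumed to be precomputed).
    Split sizes are approximate because clusters are never broken up.
    """
    members = defaultdict(list)
    for protein_id, cluster_id in cluster_of.items():
        members[cluster_id].append(protein_id)

    cluster_ids = sorted(members)
    random.Random(seed).shuffle(cluster_ids)  # reproducible shuffle

    targets = [f * len(cluster_of) for f in fractions]
    splits = {"train": [], "valid": [], "test": []}
    names = list(splits)
    current = 0
    for cluster_id in cluster_ids:
        # Move to the next split once the current one has reached its target size.
        while current < len(names) - 1 and len(splits[names[current]]) >= targets[current]:
            current += 1
        splits[names[current]].extend(members[cluster_id])
    return splits

# Toy example: 20 proteins grouped into 10 clusters of two.
cluster_of = {f"P{i}": f"C{i // 2}" for i in range(20)}
splits = split_by_cluster(cluster_of)
```

Splitting at the cluster level rather than the sequence level is what prevents near-identical proteins from appearing on both sides of the train/test boundary.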

Feature Extraction:

- Use a pre-trained ESM-2 model (e.g., esm2_t33_650M_UR50D) to generate embeddings for each protein sequence.

- Extract the per-sequence representation (the embedding corresponding to the <cls> token or by mean-pooling residue embeddings).
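The two per-sequence representations mentioned in this step can be sketched as follows. The example works on plain lists rather than model tensors, and it assumes the token layout `<cls>, residue_1, ..., residue_L, <eos>`; the toy vectors are two-dimensional, whereas the 650M ESM-2 model produces 1280-dimensional vectors.

```python
def mean_pool(token_embeddings, num_special_prefix=1, num_special_suffix=1):
    """Average per-residue vectors, skipping special tokens at both ends.

    `token_embeddings` is a list of equal-length vectors, one per token,
    ordered <cls>, residue_1, ..., residue_L, <eos> (assumed layout).
    """
    end = len(token_embeddings) - num_special_suffix
    residues = token_embeddings[num_special_prefix:end]
    if not residues:
        raise ValueError("No residue embeddings left after removing special tokens")
    dim = len(residues[0])
    return [sum(vec[d] for vec in residues) / len(residues) for d in range(dim)]

def cls_embedding(token_embeddings):
    """Alternative per-sequence representation: the vector at the <cls> position."""
    return token_embeddings[0]

# Toy embeddings for a 3-residue protein: <cls>, three residues, <eos>.
tokens = [[9.0, 9.0], [1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 7.0]]
pooled = mean_pool(tokens)
```

When sequences are batched and padded, padding positions would also need to be excluded from the average, for example by using the attention mask.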

Classifier Design & Training:

- The prediction task is framed as a multi-label classification problem. A shallow neural network is appended to the frozen pLM backbone.

- Architecture: Input Embedding (1280-dim) -> Dropout (0.5) -> Linear Layer (1024) -> ReLU -> Dropout (0.5) -> Linear Output Layer (N_GO_terms).

- Use Binary Cross-Entropy (BCE) loss with logits to handle multiple labels per sample.

- Train for 20-50 epochs using the AdamW optimizer (lr=1e-4), monitoring F-max (the maximum F1 score over all decision thresholds) on the validation set.
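To make the multi-label loss concrete, the following sketch computes binary cross-entropy directly from logits in a numerically stable form. It is a plain-Python illustration of the quantity that framework losses such as PyTorch's `BCEWithLogitsLoss` compute, not a replacement for them; the logits and labels are made up.

```python
import math

def bce_with_logits(logits, targets):
    """Mean binary cross-entropy over labels, computed stably from raw logits.

    Uses the identity: loss = max(z, 0) - z * y + log(1 + exp(-|z|)),
    which avoids overflow for large positive or negative logits.
    """
    losses = [
        max(z, 0.0) - z * y + math.log1p(math.exp(-abs(z)))
        for z, y in zip(logits, targets)
    ]
    return sum(losses) / len(losses)

def sigmoid(z):
    """Convert a logit to an independent per-term probability."""
    return 1.0 / (1.0 + math.exp(-z))

# One protein, four candidate GO terms; it is annotated with terms 0 and 2.
logits = [2.0, -1.0, 0.5, -3.0]
targets = [1.0, 0.0, 1.0, 0.0]
loss = bce_with_logits(logits, targets)
probabilities = [sigmoid(z) for z in logits]
```

Each GO term gets its own sigmoid rather than sharing a softmax, which is what allows a protein to carry several labels at once.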

Evaluation:

- Evaluate on the held-out test set using standard metrics: precision-recall curves, F1 score (typically reported as F-max), and area under the precision-recall curve (AUPR).
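A minimal sketch of the protein-centric F-max metric is shown below, following the convention commonly used in CAFA-style evaluations: precision is averaged over proteins with at least one prediction above the threshold, recall over all annotated proteins, and the best F1 across thresholds is reported. The input layout, function name, and term labels are assumptions of this sketch, and every protein is assumed to have at least one true term.

```python
def f_max(predictions, annotations, thresholds=None):
    """Protein-centric F-max.

    predictions: {protein_id: {go_term: score in [0, 1]}}
    annotations: {protein_id: non-empty set of true go_terms}
    """
    if thresholds is None:
        thresholds = [t / 100 for t in range(1, 100)]
    best = 0.0
    for t in thresholds:
        precisions, recalls = [], []
        for protein_id, true_terms in annotations.items():
            scores = predictions.get(protein_id, {})
            predicted = {term for term, s in scores.items() if s >= t}
            hits = len(predicted & true_terms)
            if predicted:
                # Precision is averaged only over proteins with a prediction.
                precisions.append(hits / len(predicted))
            recalls.append(hits / len(true_terms))
        if not precisions:
            continue
        precision = sum(precisions) / len(precisions)
        recall = sum(recalls) / len(recalls)
        if precision + recall > 0:
            best = max(best, 2 * precision * recall / (precision + recall))
    return best

# Made-up term labels and scores for a single protein.
annotations = {"P1": {"term_a", "term_b"}}
predictions = {"P1": {"term_a": 0.9, "term_c": 0.8}}
score = f_max(predictions, annotations)
```

In practice, predicted and true terms are usually propagated up the GO hierarchy before scoring, a step this sketch omits.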

Protocol 2: EC Number Prediction via Hierarchical Classification

EC number prediction can be treated as a hierarchical multi-class classification over the four levels of an EC number (e.g., 1.2.3.4).

- Use ProtBERT embeddings as input features.

- Train four separate classifiers, one for each EC digit, where the output of the previous classifier can be used as additional context for the next.

- Loss is computed as the sum of cross-entropy losses for each hierarchical level.
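The summed hierarchical loss can be sketched as below. The example assumes four classifier heads that each emit a vector of logits over the classes at their level; the logits, class counts, and helper names are made up for illustration.

```python
import math

def cross_entropy(logits, target_index):
    """Softmax cross-entropy for one example, using the log-sum-exp trick."""
    peak = max(logits)
    log_norm = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_norm - logits[target_index]

def ec_levels(ec_number):
    """'1.2.3.4' -> ['1', '1.2', '1.2.3', '1.2.3.4'] (one label per level)."""
    parts = ec_number.split(".")
    return [".".join(parts[: i + 1]) for i in range(len(parts))]

def hierarchical_loss(level_logits, level_targets):
    """Sum of the per-level cross-entropy losses."""
    return sum(cross_entropy(z, t) for z, t in zip(level_logits, level_targets))

# Toy logits from four hypothetical heads with two or three classes each.
level_logits = [[2.0, 0.0], [0.0, 1.0, 0.0], [0.5, 0.5], [0.0, 0.0, 3.0]]
level_targets = [0, 1, 0, 2]  # index of the correct class at each level
loss = hierarchical_loss(level_logits, level_targets)
labels = ec_levels("1.2.3.4")
```

Summing the losses implies the four heads are trained jointly. If the classifiers are instead trained one at a time, each level would simply minimize its own cross-entropy term.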

Table 2: Benchmark Performance of pLMs on Protein Function Prediction Tasks

| Model | Task (Dataset) | Key Metric | Reported Performance | Reference (Year) |

|---|---|---|---|---|

| ESM-2 (650M) | GO Prediction (CAFA3) | F-max (Molecular Function) | 0.592 | Lin et al. (2023) |

| ProtBERT-BFD | GO Prediction (DeepGOPlus) | AUPR (Biological Process) | 0.397 | Brandes et al. (2022) |

| ESM-1b + ConvNet | EC Prediction (EnzymeNet) | Top-1 Accuracy (4th digit) | 0.781 | Sapiens (2021) |

| ProtT5-XL-U50 | Remote Homology Detection (SCOP) | Accuracy | 0.904 | Elnaggar et al. (2021) |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Protein Function Prediction Experiments

| Item | Function / Description | Example / Source |

|---|---|---|

| Pre-trained pLMs | Provide foundational protein sequence representations for feature extraction or fine-tuning. | ESM-2, ProtBERT (Hugging Face, GitHub) |

| Annotation Databases | Source of ground-truth labels for training and evaluation. | UniProt-GOA (GO terms), BRENDA (EC numbers) |

| Benchmark Suites | Standardized datasets for fair model comparison. | CAFA (Critical Assessment of Function Annotation) |

| Sequence Clustering Tools | Ensure non-redundant dataset splits to prevent data leakage. | CD-HIT, MMseqs2 |

| Deep Learning Framework | Environment for building, training, and evaluating models. | PyTorch, PyTorch Lightning |

| Embedding Extraction Libraries | Simplified interfaces to generate embeddings from pLMs. | transformers (Hugging Face), bio-embeddings pipeline |

| Functional Enrichment Tools | Analyze and visualize high-level functional trends in predicted terms. | DAVID, GOrilla |

Workflow and Pathway Visualizations

- General Workflow for pLM-based Function Prediction

- Fine-tuning Protocol with Frozen Backbone

- Data Pipeline for Model Training & Evaluation

The Evolutionary Scale Modeling (ESM) project represents a paradigm shift in protein science, leveraging deep learning on evolutionary sequence data to infer protein structure and function. This whitepaper details the third critical application: high-accuracy protein structure prediction via ESMFold. ESMFold, derived from the ESM-2 language model, operates distinctly from AlphaFold2. While both achieve remarkable accuracy, ESMFold utilizes a single sequence-to-structure transformer, bypassing explicit multiple sequence alignment (MSA) generation and pairing, thus offering a substantial reduction in inference time. This application is the structural culmination of the semantic representations learned by ESM and ProtBERT models, demonstrating how latent evolutionary and linguistic patterns in sequences directly encode three-dimensional folding principles.

Core Architecture and Methodology