Data-Driven CAPE Protein Engineering: AI-Powered Approaches for Next-Generation Therapeutics


Abstract

This article provides a comprehensive overview of data-driven methods in Computationally Assisted Protein Engineering (CAPE). Targeted at researchers and drug development professionals, it explores the foundational principles of AI and machine learning in protein design, details cutting-edge methodological workflows and their applications in therapeutic development, addresses common challenges and optimization strategies, and offers a comparative analysis of validation techniques. By synthesizing insights from the latest research, this guide aims to equip scientists with the knowledge to harness data-centric approaches for accelerating and improving protein-based drug discovery.

The Data-Driven Revolution in CAPE: Core Concepts and AI Frameworks

CAPE (Computationally Assisted Protein Engineering) represents a paradigm shift in protein design, moving from purely structure-guided rational design to data-driven, machine learning (ML)-powered prediction. This document, framed within a broader thesis on CAPE data-driven approaches, details the application notes and experimental protocols that underpin this transition. The core thesis is that integrating high-throughput mutational scanning data with AI models enables accurate prediction of functional protein landscapes, which can substantially accelerate therapeutic and industrial enzyme development.

Data Presentation: Quantitative Benchmarks of CAPE Approaches

Table 1: Comparison of Protein Engineering Methodologies

| Methodology | Typical Throughput (Variants/Experiment) | Key Measurable Output | Primary Limitation | Success Rate (Functional Variants) |

|---|---|---|---|---|

| Rational Design (Pre-CAPE) | 10 - 100 | ΔΔG (Folding Stability), Docking Scores | Relies on static structures & expert intuition | ~5-20% |

| Directed Evolution (Classical) | 10^3 - 10^6 | Fitness (e.g., Binding Affinity, Activity) | Labor-intensive cycles; limited sequence space exploration | 0.001-1% |

| Deep Mutational Scanning (DMS) | 10^4 - 10^7 | Enrichment Scores for every single mutant | Measures in vitro fitness, not always predictive of in vivo function | N/A (Mapping tool) |

| AI-Powered Prediction (CAPE Framework) | Virtually Unlimited (in silico) | Predicted Fitness Landscape (Probability Scores) | Quality dependent on training data size/diversity | Reported 30-50%* |

*Recent studies (e.g., on GB1 protein and SARS-CoV-2 RBD) show AI models trained on DMS data can predict top-performing functional variants with 30-50% experimental validation success in first-round screening.

Table 2: Key Performance Metrics for AI Models in CAPE

| Model Type | Example | Typical Training Data | Prediction Target | Reported Pearson Correlation (r) with Experiment |

|---|---|---|---|---|

| Unsupervised (Pre-training) | ESM-2, AlphaFold | Evolutionary Sequences (UniRef) | Structure/Sequence Conservation | N/A (Foundation model) |

| Supervised (Fine-tuned) | Variant Effect Predictors (VEP) | DMS Datasets (e.g., ProteinGym) | Variant Fitness/Effect | 0.4 - 0.85 (varies by protein & dataset) |

Experimental Protocols

Protocol 3.1: Generating DMS Data for CAPE Model Training

Objective: Create a high-quality dataset of variant fitness scores for a target protein domain to train or benchmark a CAPE prediction model.

Library Design & Construction:

- Use saturation mutagenesis (e.g., NNK codon scheme) to cover all single-point mutations in the domain of interest.

- Clone the variant library into a display (phage/yeast) or survival-selection system via Gibson assembly.
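The NNK scheme mentioned above can be checked with a short enumeration. The sketch below assumes the standard genetic code and the usual IUPAC meaning of the degenerate bases (N = A/C/G/T, K = G/T); the variable names are illustrative only.

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code, with codons ordered by BASES at each position.
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO)}


def nnk_codons():
    """Return every codon matching the degenerate pattern NNK."""
    return ["".join(c) for c in product("ACGT", "ACGT", "GT")]


codons = nnk_codons()
encoded = {CODON_TABLE[c] for c in codons}
amino_acids = sorted(encoded - {"*"})            # distinct amino acids covered
stops = [c for c in codons if CODON_TABLE[c] == "*"]  # stop codons still present

print(len(codons), len(amino_acids), stops)
```

Restricting the third base to G/T halves the codon count relative to NNN while still reaching every amino acid, which is why the scheme is a common default for saturation libraries.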

Selection & Sequencing:

- Subject the library to 2-4 rounds of selection under the desired functional pressure (e.g., binding to immobilized target, enzymatic activity via FACS).

- Harvest DNA from pre-selection (input) and post-selection (output) populations.

- Amplify the variant region and prepare for next-generation sequencing (NGS) using dual-indexed primers.

Data Processing & Fitness Score Calculation:

- Align NGS reads to the reference sequence and count the frequency of each variant.

- Calculate an enrichment score (ε) for each mutant: `ε = log2((count_output + pseudocount) / (count_input + pseudocount))`.

- Normalize scores to the wild-type (set to 0) and a null variant (set to -1).
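The enrichment and normalization steps can be sketched as follows. The pseudocount of 0.5, the variant names, and the read counts are illustrative assumptions, and the linear rescaling is one straightforward way to pin the wild-type at 0 and the null variant at -1; it is not necessarily the exact scheme used by any particular DMS pipeline.

```python
import math


def enrichment(count_input, count_output, pseudocount=0.5):
    """log2 ratio of post-selection to pre-selection counts."""
    return math.log2((count_output + pseudocount) / (count_input + pseudocount))


def normalize(eps, eps_wt, eps_null):
    """Rescale linearly so wild-type maps to 0 and the null variant to -1."""
    return (eps - eps_wt) / (eps_wt - eps_null)


# Hypothetical (input, output) read counts per variant.
counts = {
    "WT": (1000, 1000),
    "null": (1000, 124),
    "A24V": (800, 1600),
}

eps = {name: enrichment(i, o) for name, (i, o) in counts.items()}
scores = {name: normalize(e, eps["WT"], eps["null"]) for name, e in eps.items()}

for name, score in scores.items():
    print(f"{name}\t{score:+.3f}")
```

Because every score is expressed relative to the wild-type enrichment, a constant offset caused by different sequencing depths in the input and output samples largely cancels out.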

Protocol 3.2: Validating AI-Predicted Variants

Objective: Experimentally test the top variants proposed by a CAPE model.

Variant Synthesis:

- Select 20-50 top-predicted variants covering a range of predicted fitness scores.

- Include 5-10 negative control variants (low predicted scores) and the wild-type.

- Obtain genes via array-synthesized oligo pools or site-directed mutagenesis.

High-Throughput Expression & Purification:

- Express variants in a 96-well deep-well plate format using E. coli or HEK293T systems.

- Perform immobilized metal affinity chromatography (IMAC) in a plate format for His-tagged proteins.

- Use Bradford or SDS-PAGE analysis to normalize protein concentrations.

Functional Assay:

- For binding proteins: Perform a plate-based ELISA or biolayer interferometry (BLI) to measure binding affinity (KD) to the target.

- For enzymes: Use a fluorescence- or absorbance-based kinetic assay in a plate reader to determine kcat/KM.

- Plot experimental functional scores against the AI-predicted scores to determine validation correlation.
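The final comparison step usually comes down to a correlation coefficient. The following dependency-free sketch computes Pearson's r and Spearman's ρ; the function names and the example scores are hypothetical, and in practice a statistics library would normally be used instead.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def ranks(values):
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average
        i = j + 1
    return result


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Hypothetical predicted vs. measured scores for five variants.
predicted = [0.9, 0.7, 0.4, 0.1, -0.3]
measured = [1.2, 0.8, 0.9, 0.0, -0.5]
print(round(pearson(predicted, measured), 3), round(spearman(predicted, measured), 3))
```

Spearman's ρ is often reported alongside Pearson's r because assay readouts are rarely linear in the predicted score, while the ranking of variants is what drives selection.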

Mandatory Visualizations

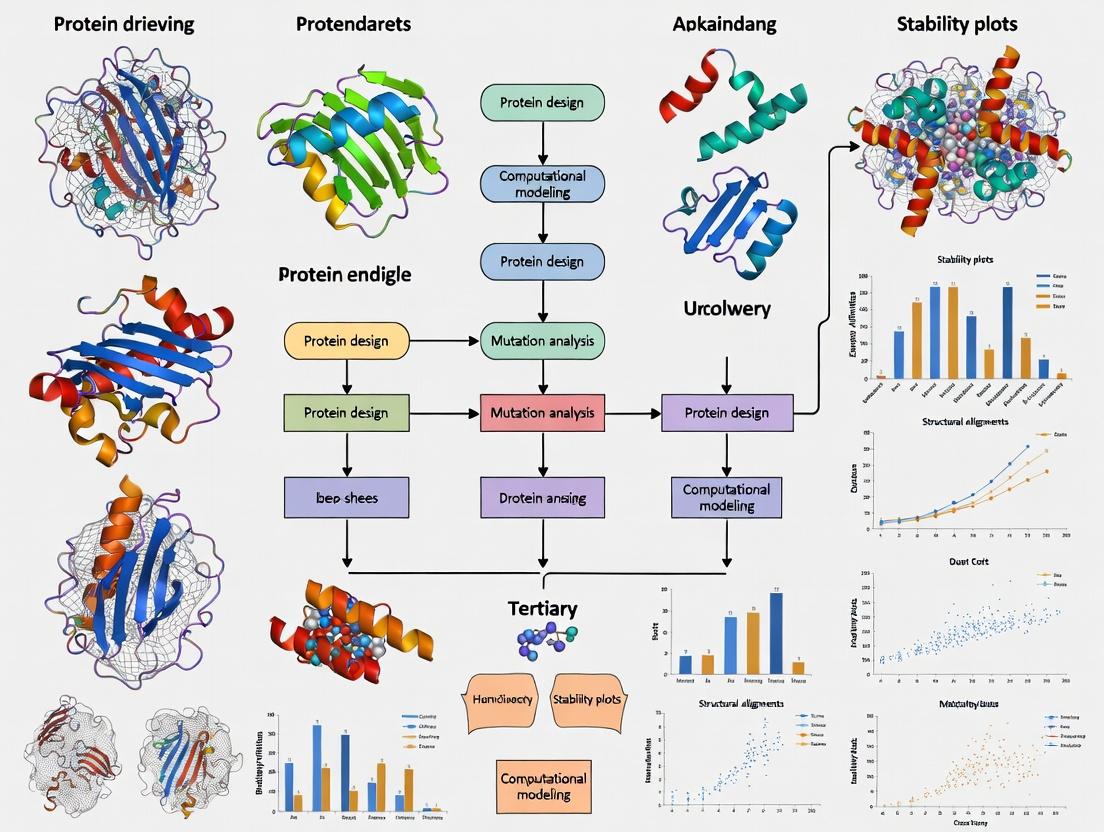

Title: The CAPE Paradigm Shift in Protein Engineering

Title: DMS to AI Model Workflow for CAPE

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Materials for CAPE-Driven Experiments

| Item | Function | Example Product/Kit |

|---|---|---|

| NNK Oligonucleotide Pool | For constructing saturation mutagenesis libraries covering all 20 amino acids at defined positions. | Custom TruGrade Oligo Pools (Twist Bioscience) |

| Cloning & Assembly Master Mix | High-efficiency assembly of variant libraries into display vectors. | Gibson Assembly Master Mix (NEB) |

| Phage or Yeast Display System | Physical linkage of genotype (DNA) to phenotype (protein) for selection. | pComb3X Phage System / pYD1 Yeast Display Vector |

| Streptavidin-Coated Magnetic Beads | For capturing biotinylated target during binding selection rounds. | Dynabeads M-280 Streptavidin |

| NGS Library Prep Kit | Preparation of variant amplicons for high-throughput sequencing. | Illumina DNA Prep Kit |

| High-Throughput Protein Expression System | Parallel small-scale expression of predicted variant proteins. | Expi293F or BL21(DE3) E. coli in 96-deepwell blocks |

| Automated Liquid Handler | For reproducible pipetting in library construction, selection, and assay steps. | Hamilton STARlet |

| Microplate Reader with Kinetics | For performing high-throughput functional assays (BLI, fluorescence, absorbance). | Octet RED96e (BLI) or CLARIOstar Plus (Fluorescence) |

| AI/ML Software Platform | For training and running variant effect prediction models. | PyTorch/TensorFlow with custom scripts; EVE, ProteinMPNN frameworks |

Within the broader thesis on CAPE (Computationally Assisted Protein Engineering) data-driven approaches, the integration of machine learning has reshaped protein science. This overview details four pivotal models (AlphaFold2, ProteinMPNN, RFdiffusion, and the ESM family) that respectively address the core challenges of structure prediction, sequence design, de novo generation, and functional inference. Together, they form a complementary pipeline for rational protein engineering and drug development.

Table 1: Core Model Specifications and Performance Metrics

| Model | Primary Developer(s) | Core Task | Key Architectural Innovation | Benchmark Performance (Typical) |

|---|---|---|---|---|

| AlphaFold2 | DeepMind | Protein Structure Prediction | Evoformer (MSA processing) & Structure Module | CASP14: median GDT_TS ~92.4 (across all targets) |

| ProteinMPNN | University of Washington | Protein Sequence Design | Graph Neural Network (GNN) with masked encoding | Recovery: >50% on native-like backbones; Speed: ~0.02 sec/pose |

| RFdiffusion | University of Washington/Baker Lab | De Novo Protein Design | Diffusion model built on RoseTTAFold architecture | Success Rate: ~20-50% for novel folds; Can design binders from scratch |

| ESM-2/ESMFold | Meta AI | Protein Language Modeling / Folding | Transformer (encoder-only masked language model for ESM-2) | ESM2 650M: PPL 6.42; ESMFold: TM-score ~0.7 on CAMEO targets |

Table 2: Comparative Practical Utility in CAPE Pipeline

| Model | Input | Output | Typical Use Case in Protein Engineering | Key Strength |

|---|---|---|---|---|

| AlphaFold2 | Amino Acid Sequence (MSA/Template) | 3D Atomic Coordinates | Predicting wild-type & mutant structures for functional analysis | Unparalleled accuracy for single-state prediction. |

| ProteinMPNN | Protein Backbone + Specified Residues | Optimized Amino Acid Sequence | Designing sequences that fold into a given scaffold or binder. | Fast, robust, and produces diverse, soluble sequences. |

| RFdiffusion | Conditioning (e.g., partial motif, symmetry) / Noise | Novel Protein Backbone | Generating entirely new protein scaffolds or binders to a target shape. | Controllable generation of novel, designable structures. |

| ESM (e.g., ESM-2) | Amino Acid Sequence | Per-residue embeddings, Mutational Effect Scores | Zero-shot prediction of fitness, stability, and functional sites. | Learns evolutionary insights without MSA; rapid inference. |

Detailed Application Notes & Protocols

Protocol 1: Predicting Mutant Effects Using AlphaFold2 and ESM-2

Objective: Assess the impact of point mutations on protein stability and function in silico.

Background: This protocol leverages AlphaFold2 for structural context and ESM-2 for evolutionary-based fitness prediction, forming a core analysis in CAPE.

Input Preparation:

- Generate a FASTA file for the wild-type protein sequence.

- Create a list of single-point mutations (e.g., A123V) to evaluate.

Structure Prediction with AlphaFold2 (ColabFold):

- Use the ColabFold implementation (which pairs AF2 with fast MMseqs2) for accessibility.

- Input the wild-type FASTA sequence. For each mutant, create a new FASTA file.

- Run predictions with default settings, but enable `--amber` relaxation for improved side-chain geometry.

- Output: Predicted structures (PDB files) and per-residue pLDDT confidence scores for wild-type and each mutant.

Structural Analysis:

- Align mutant and wild-type predicted structures using PyMOL or Biopython.

- Calculate Root Mean Square Deviation (RMSD) of the backbone.

- Visually inspect and analyze local conformational changes, especially at the mutation site and binding/catalytic pockets.

Functional Inference with ESM-2:

- Load the pretrained `esm2_t33_650M_UR50D` model.

- Use the model's masked-language-modeling output to compute the log-likelihood of the wild-type and mutant residues at each mutated position. (The `esm.inverse_folding` and MSA Transformer models are separate alternatives that additionally require a structure or an MSA, respectively.)

- Calculate the ESM Score (Δlog likelihood = log P(mutant) - log P(wild-type)). A negative Δlog likelihood suggests the mutation is less evolutionarily favored.

- Map ESM scores and AlphaFold2 pLDDT changes onto the predicted structure for a unified view.
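The Δlog likelihood calculation itself is a simple lookup once per-position log-probabilities are available. The sketch below assumes those values were already extracted from the language model (for example with the mutated position masked); the probability table is a toy stand-in, not real ESM-2 output, and the function name is hypothetical.

```python
import math


def delta_log_likelihood(log_probs, wt_seq, mutation):
    """Score a mutation such as 'K2R' as log P(mutant) - log P(wild-type).

    log_probs[i][aa] is the model's log-probability of residue `aa`
    at 0-based position i.
    """
    wt_aa, pos, mut_aa = mutation[0], int(mutation[1:-1]), mutation[-1]
    if wt_seq[pos - 1] != wt_aa:
        raise ValueError(f"{mutation} does not match the wild-type sequence")
    site = log_probs[pos - 1]
    return site[mut_aa] - site[wt_aa]


# Toy per-position distributions over a reduced alphabet (illustrative only).
wt_seq = "MKT"
toy_probs = [
    {"M": 0.90, "L": 0.10},
    {"K": 0.60, "R": 0.30, "E": 0.10},
    {"T": 0.70, "S": 0.30},
]
log_probs = [{aa: math.log(p) for aa, p in site.items()} for site in toy_probs]

score = delta_log_likelihood(log_probs, wt_seq, "K2R")
print(f"K2R: {score:.3f}")  # negative: R is less favored than K at this site
```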

Protocol 2: De Novo Binder Design with RFdiffusion and ProteinMPNN

Objective: Generate a novel protein that binds to a specific target epitope.

Background: This protocol exemplifies a fully data-driven design cycle, moving from a target shape to an expressible protein sequence.

Target Definition and Conditioning:

- Obtain the 3D structure of the target protein (or domain of interest) in PDB format.

- Define the epitope by selecting specific residue chains and numbers.

- This epitope will serve as the "conditioning" input for RFdiffusion.

Backbone Generation with RFdiffusion:

- Use the `rfdiffusion` package with the `inpainting` or `partial diffusion` protocols.

- Input the target epitope coordinates and specify the desired total length of the binder.

- Run multiple (hundreds to thousands) diffusion trajectories to generate a diverse set of scaffold backbones that geometrically complement the target.

- Output: A collection of PDB files for the generated protein backbones in complex with the target.

Sequence Design with ProteinMPNN:

- For each generated backbone, prepare the input PDB file, fixing the target chain(s) and marking the designed chain as "designed".

- Run ProteinMPNN with sampling settings (e.g., `num_samples=64, temperature=0.1`) to generate multiple optimized sequences per backbone.

- Filter outputs based on ProteinMPNN's per-residue confidence scores and sequence diversity.

In Silico Validation:

- Use AlphaFold2 or ESMFold to predict the structure of the designed sequence in isolation (without the target). Assess folding confidence (pLDDT/pTM).

- Use AlphaFold2's complex prediction mode (e.g., ColabFold's `pair_mode`) or docking software to predict the binding mode of the designed binder to the target. Assess interface confidence (ipTM) and compare to the original RFdiffusion model.

- Rank designs based on predicted fold confidence and binding interface quality (e.g., shape complementarity, number of contacts).
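The ranking step can be expressed as a filter followed by a sort. In the sketch below the metric names (`plddt`, `iptm`, `rmsd_to_design`), the thresholds, and the design records are all illustrative assumptions; suitable cutoffs depend on the target and should be calibrated against experimental outcomes.

```python
def rank_designs(designs, min_plddt=80.0, min_iptm=0.7, max_rmsd=2.0):
    """Keep designs passing all thresholds, best interface confidence first."""
    passing = [
        d
        for d in designs
        if d["plddt"] >= min_plddt
        and d["iptm"] >= min_iptm
        and d["rmsd_to_design"] <= max_rmsd
    ]
    return sorted(passing, key=lambda d: (d["iptm"], d["plddt"]), reverse=True)


# Hypothetical in silico validation results for three designs.
designs = [
    {"name": "d1", "plddt": 91.0, "iptm": 0.82, "rmsd_to_design": 1.1},
    {"name": "d2", "plddt": 72.0, "iptm": 0.88, "rmsd_to_design": 0.9},
    {"name": "d3", "plddt": 88.0, "iptm": 0.90, "rmsd_to_design": 1.6},
]

ranked = rank_designs(designs)
print([d["name"] for d in ranked])
```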

Workflow Visualizations

Title: CAPE Protein Engineering ML Pipeline

Title: De Novo Binder Design Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational Tools & Resources

| Item / Resource | Function in Research | Typical Source / Implementation |

|---|---|---|

| ColabFold | Provides accessible, cloud-based AlphaFold2 and complex prediction, integrating fast homology search. | GitHub Repository / Google Colab |

| PyMOL / ChimeraX | Molecular visualization software for analyzing predicted/generated 3D structures and mutations. | Commercial / Open-Source |

| PyRosetta / BioPython | Python libraries for structural analysis, energy scoring, and automating protein data handling. | Open-Source Libraries |

| ESM / HuggingFace | Repository for loading pretrained ESM models for embeddings and variant effect prediction. | HuggingFace transformers |

| RFdiffusion & ProteinMPNN Suites | Integrated software packages for de novo design and sequence optimization. | GitHub (Baker Lab) |

| PDB (Protein Data Bank) | Primary repository for experimentally-solved protein structures used as inputs and benchmarks. | rcsb.org |

| UniRef / MGnify | Databases of protein sequences and metagenomic data used for generating MSAs in structure prediction. | EBI/EMBL Resources |

Within the paradigm of Computationally Assisted Protein Engineering (CAPE), data is the fundamental substrate for model development. The efficacy of predictive models for protein stability, function, and design is intrinsically linked to the volume, diversity, and quality of training data. This document catalogs primary data types and sources, providing application notes and protocols to facilitate their acquisition and integration for CAPE research initiatives.

Types of Protein Data for Model Training

Table 1: Core Data Types for CAPE Model Training

| Data Type | Description | Primary Use in CAPE Models | Typical Format/Scale |

|---|---|---|---|

| Sequences & Alignments | Primary amino acid sequences; multiple sequence alignments (MSAs) of homologous proteins. | Learning evolutionary constraints, generating positional scoring matrices, guiding de novo design. | FASTA, CLUSTAL, STOCKHOLM; 10^3 - 10^7 sequences. |

| 3D Structures | Atomic coordinates from X-ray crystallography, cryo-EM, or NMR. | Learning structure-function relationships, training force fields, structural feature extraction. | PDB, mmCIF files; ~200,000 entries in PDB. |

| Fitness Landscapes | Quantitative measurements of protein function (e.g., activity, binding affinity, thermostability) for variant libraries. | Supervised training for predicting functional outcomes of mutations. | CSV/TSV with variant sequences and scores; 10^4 - 10^6 variants. |

| Biophysical & Stability Data | Measurements of melting temperature (Tm), folding free energy (ΔΔG), aggregation propensity, solubility. | Training models to predict protein stability and developability. | CSV/TSV; datasets range from 10^2 to 10^4 measurements. |

| Protein-Protein Interaction (PPI) Networks | Binary or quantitative interaction data from high-throughput screens (e.g., yeast two-hybrid). | Inferring functional modules, guiding multi-protein complex design. | Network formats (SIF, GraphML); 10^3 - 10^5 interactions. |

| Deep Mutational Scanning (DMS) | Comprehensive maps of the functional effect of single or multiple amino acid substitutions across a protein. | Gold-standard variant effect prediction training. | CSV/TSV matrices; 10^3 - 10^5 variants per protein. |

| Next-Generation Sequencing (NGS) from Directed Evolution | Enrichment counts or frequencies of variants across selection rounds. | Inferring fitness scores and training sequence-activity models. | FASTQ files + count tables; 10^6 - 10^9 reads. |

Protocol 2.1: Automated Retrieval of Protein Sequences and Structures

Application Note: This protocol uses the UniProt REST API and the RCSB PDB file download service to programmatically fetch data, ensuring reproducibility.

Materials:

- Computing environment with `bash`, `curl` or `wget`, and Python 3.7+ installed.

- Python packages: `requests`, `biopython`.

Procedure:

- Define Target: Identify the UniProt ID (e.g., `P00720`) or PDB ID (e.g., `1MBN`).

- Sequence Retrieval (via UniProt): Download the FASTA record for the UniProt accession.

- Structure Retrieval (via PDB): Download the coordinate file for the PDB ID.

- Batch Download (for MSAs or multiple structures): Use pre-built datasets from resources like the Protein Data Bank (PDB), AlphaFold Protein Structure Database, or UniProt reference proteomes.
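The retrieval steps can be sketched as below. The original commands for this protocol are not preserved here, so this is a reconstruction under stated assumptions: it uses the public UniProt REST and RCSB file-download URL patterns, relies on the standard-library `urllib` rather than `requests` to stay dependency-free, and the helper names are hypothetical.

```python
import urllib.request


def uniprot_fasta_url(accession):
    """URL of the FASTA record for a UniProtKB accession."""
    return f"https://rest.uniprot.org/uniprotkb/{accession}.fasta"


def rcsb_pdb_url(pdb_id):
    """URL of the PDB-format coordinate file for a PDB ID."""
    return f"https://files.rcsb.org/download/{pdb_id.upper()}.pdb"


def fetch_text(url, timeout=30):
    """Download a URL and return its body as text (requires network access)."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read().decode("utf-8")


def parse_fasta(text):
    """Return (header, sequence) for the first record in a FASTA string."""
    header, chunks = None, []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if header is not None:
                break  # stop at the second record
            header = line[1:]
        else:
            chunks.append(line)
    return header, "".join(chunks)


# Example usage (network calls are commented out so the sketch runs offline):
# header, sequence = parse_fasta(fetch_text(uniprot_fasta_url("P00720")))
# pdb_text = fetch_text(rcsb_pdb_url("1MBN"))
print(uniprot_fasta_url("P00720"))
print(rcsb_pdb_url("1MBN"))
```

Recording the exact URLs and download dates alongside the files helps keep the dataset reproducible, since database entries are updated over time.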

Protocol 2.2: Processing Raw NGS Data from a Directed Evolution Experiment

Application Note: This workflow converts raw sequencing reads into a variant count table suitable for fitness inference.

Materials:

- Raw paired-end FASTQ files from pre- and post-selection libraries.

- Computing cluster or high-performance workstation.

- Software: `FastQC`, `Trimmomatic`, `PEAR` (for merging), `Bowtie2` or `BWA`, `samtools`, custom Python/R scripts.

Procedure:

- Quality Control: Run `FastQC` on raw FASTQ files. Trim adapters and low-quality bases using `Trimmomatic`.

- Read Merging: Merge paired-end reads with `PEAR` to reconstruct full variant sequences.

- Alignment: Build a reference index from the wild-type sequence using `bowtie2-build`. Align merged reads to the reference.

- Variant Calling: Use `samtools mpileup` and custom parsing to identify nucleotide variants relative to the reference at each position.

- Count Table Generation: Aggregate counts for each unique amino acid variant sequence across all samples (input and selected libraries). Output a CSV file with columns: `variant_sequence`, `count_input`, `count_round1`, `count_round2`.
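The count-table step is the part most often handled by a custom script. The sketch below assumes reads have already been merged, aligned, and translated into amino acid variant sequences for each sample; the function names and the toy sequences are hypothetical.

```python
import csv
import io
from collections import Counter


def build_count_table(samples):
    """Build a header and rows of per-sample counts for every observed variant.

    `samples` maps a column name (e.g. 'count_input') to an iterable of
    translated variant sequences observed in that sample.
    """
    counts = {name: Counter(seqs) for name, seqs in samples.items()}
    variants = sorted(set().union(*counts.values()))
    columns = list(samples)
    header = ["variant_sequence"] + columns
    # A Counter returns 0 for variants absent from a given sample.
    rows = [[v] + [counts[c][v] for c in columns] for v in variants]
    return header, rows


def to_csv(header, rows):
    """Serialize the table as CSV text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(header)
    writer.writerows(rows)
    return buffer.getvalue()


# Toy data: three short variant reads per library.
samples = {
    "count_input": ["MKT", "MKT", "MRT"],
    "count_round1": ["MKT", "MRT", "MRT"],
    "count_round2": ["MRT", "MRT"],
}
header, rows = build_count_table(samples)
table_csv = to_csv(header, rows)
print(table_csv, end="")
```

Keeping explicit zeros for variants that drop out after selection matters, because those rows carry the strongest depletion signal when enrichment scores are computed.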

Visualizing Data Acquisition and Integration Workflows

Title: CAPE Data Sourcing and Processing Pipeline

Title: Generating Fitness Landscape Data via NGS

Table 2: Key Reagent Solutions for Data Generation Experiments

| Reagent/Resource | Supplier/Example | Function in Data Generation |

|---|---|---|

| Oligo Pools | Twist Bioscience, IDT | Custom-synthesized DNA libraries covering designed mutations; source DNA for constructing comprehensive variant libraries for DMS or directed evolution. |

| Phusion High-Fidelity DNA Polymerase | Thermo Fisher Scientific | High-fidelity (low-error) amplification of variant library DNA for cloning. |

| Golden Gate Assembly Mix | NEB | Efficient, seamless cloning of variant libraries into expression vectors. |

| MACS or FACS Cell Separation Systems | Miltenyi Biotec, BD Biosciences | High-throughput physical separation of cells based on protein binding or function (e.g., using fluorescently labeled antigen). |

| Streptavidin Magnetic Beads | Dynabeads | For in vitro selection of binding proteins from displayed libraries (phage, yeast, ribosome). |

| Cell-Free Protein Synthesis System | PURExpress (NEB) | Rapid, high-throughput expression of variant proteins for in vitro screening without cellular constraints. |

| NovaSeq 6000 Sequencing System | Illumina | Ultra-high-throughput sequencing to generate deep coverage of variant libraries (NGS). |

| Protein Stability Dye (e.g., SYPRO Orange) | Thermo Fisher Scientific | Dye-based measurement of thermal denaturation (Tm) in high-throughput formats such as differential scanning fluorimetry. |

This application note details an integrated pipeline for CAPE (Computationally Assisted Protein Engineering) development, aligning with a broader thesis on data-driven protein engineering. The pipeline creates a closed-loop system where in silico predictions guide in vitro assays, and resulting experimental data continuously refines the computational models, accelerating the optimization of therapeutic protein candidates.

Table 1: Comparative Performance of Key In Silico Design Tools

| Tool Name (Version) | Primary Method | Typical Use Case in CAPE | Reported Success Rate* | Computational Cost (GPU-hr/design) | Key Reference (Year) |

|---|---|---|---|---|---|

| Rosetta (2024.08) | Physics-based & statistical energy minimization | Stability & affinity maturation | 15-25% | 5-10 | (Leman et al., 2020) |

| AlphaFold3 (2024) | Deep learning (Diffusion, MSA, PTM) | Complex structure prediction & docking | N/A (Accuracy: ~70% Interface pTM) | 20-40 | (Abramson et al., 2024) |

| RFdiffusion (v1.4) | Generative diffusion models | De novo protein & binder design | 10-20% (functional de novo) | 15-30 | (Watson et al., 2023) |

| ProteinMPNN (v1.1) | Graph-based neural network | Sequence design for fixed backbones | >50% (native-like sequences) | <1 | (Dauparas et al., 2022) |

*Success Rate: Defined as the proportion of designs that express stably and show measurable, desired activity in primary in vitro screens.

Table 2: Key In Vitro Assay Parameters for Data Feedback

| Assay Type | Measured Parameter(s) | Throughput | Approx. Timeline | Data Type for Feedback Loop |

|---|---|---|---|---|

| BLI (Biolayer Interferometry) | kon, koff, KD | Medium (96-well) | 1-2 days | Kinetic & Affinity Constants |

| HT-SPR (High-Throughput SPR) | kon, koff, KD | High (384-well) | 1 day | Kinetic & Affinity Constants |

| NanoDSF (Differential Scanning Fluorimetry) | Tm, Aggregation Onset | High (384-well) | <1 day | Thermal Stability Metrics |

| Phage/Yeast Display + NGS | Enrichment Ratios, Sequence Logos | Very High (>10^7 variants) | 1-2 weeks | Fitness Landscape & Sequence-Activity Relationships |

Detailed Experimental Protocols

Protocol 3.1: Integrated CAPE Pipeline Cycle

AIM: To execute one complete cycle of the design-test-learn pipeline for affinity maturation of a CAPE therapeutic candidate.

Materials: See "The Scientist's Toolkit" (Section 5.0).

Method:

Part A: In Silico Design Phase

- Input Problem Definition: Define objective (e.g., improve binding affinity to target antigen >10-fold while maintaining Tm >65°C).

- Generate Structural Ensemble: Use AlphaFold3 or experimental structure (PDB) to generate model of CAPE-target complex.

- Identify Designable Regions: Using Rosetta `ScanningMutagenesis` or computational alanine scanning, identify paratope residues contributing minimally to stability but significantly to binding energy.

- Generate Variant Library:

a. For focused libraries (<100 variants): Use Rosetta `FixedBackboneDesign` at identified positions.

b. For large, diverse libraries (>1000 variants): Use ProteinMPNN to generate sequences, optionally conditioning on desired properties.

- Filter & Prioritize: Filter designs using Rosetta `InterfaceAnalyzer` (total score, ∆∆G, SASA). Select top 50-200 designs for in vitro testing.

Part B: In Vitro Testing Phase

- Gene Synthesis & Cloning: Order genes for selected designs cloned into expression vector (e.g., pET28 for E. coli). Use high-throughput cloning (Golden Gate) if library size is large.

- Parallel Expression & Purification: Express variants in 96-deep-well plates. Purify via His-tag using robotic liquid handlers and plate-based IMAC.

- Primary Screening:

a. Stability: Use nanoDSF in 96-well format to determine Tm. Discard variants with Tm drop >5°C from parent.

b. Affinity: Perform single-concentration BLI screening (e.g., on Octet HTX) for remaining variants. Use target antigen as ligand. Prioritize variants with improved response signals.

- Secondary Characterization: For hits from primary screen, perform full kinetic characterization using multi-concentration BLI or HT-SPR to determine accurate kon, koff, and KD.

Part C: Data Feedback & Model Learning Phase

- Data Curation: Compile dataset of variant sequences, experimental Tm, and KD values.

- Model Retraining/Finetuning: Use this dataset to finetune a pre-trained neural network (e.g., ESM-2) or train a simple regression model (e.g., gradient boosting) to predict ∆∆G or ∆Tm from sequence.

- Next-Generation Library Design: Use the updated model to score a larger in silico library (e.g., all single/double mutants) and select a new batch of designs predicted to be improved, initiating the next cycle.

Protocol 3.2: High-Throughput Affinity Screening via BLI

AIM: To quantitatively compare binding kinetics of 96 CAPE variants in parallel.

Materials: Octet HTX instrument, 96-well black flat-bottom plates, CAPE variants (≥0.2 mg/mL in PBS), biotinylated target antigen, streptavidin (SA) biosensors, kinetics buffer (PBS + 0.1% BSA + 0.02% Tween-20).

Method:

- Plate Preparation: Dispense 200 µL of each CAPE variant sample (at a uniform concentration for single-point screen or a dilution series for kinetics) into a 96-well plate. Include a reference well with buffer only.

- Biosensor Loading: Hydrate SA biosensors in kinetics buffer for 10 min. Load biotinylated antigen onto biosensors by dipping into a well containing 5-10 µg/mL antigen for 300s.

- Baseline: Establish a 60s baseline in kinetics buffer.

- Association: Dip antigen-loaded biosensors into wells containing CAPE variants for 180-300s to monitor binding.

- Dissociation: Transfer biosensors to wells containing kinetics buffer for 300-600s to monitor dissociation.

- Data Analysis: Use instrument software (e.g., Octet Data Analysis HT) to align curves, subtract reference, and fit binding models to extract kon, koff, and KD.
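The quantities extracted in the analysis step are related by a simple kinetic model. The protocol does not state which binding model is fitted, so the sketch below assumes the common 1:1 (Langmuir) interaction model; the rate constants and concentrations are illustrative values, not measurements.

```python
import math


def dissociation_constant(kon, koff):
    """KD (M) from kon (1/(M*s)) and koff (1/s)."""
    return koff / kon


def association_response(t, conc, kon, koff, rmax):
    """Sensor response at time t (s) during association, 1:1 model."""
    k_obs = kon * conc + koff                 # observed rate constant
    r_eq = rmax * conc / (conc + koff / kon)  # plateau response at this concentration
    return r_eq * (1.0 - math.exp(-k_obs * t))


def dissociation_response(t, r0, koff):
    """Sensor response at time t (s) after switching to buffer."""
    return r0 * math.exp(-koff * t)


# Illustrative parameters: kon = 1e5 1/(M*s), koff = 1e-3 1/s, so KD = 10 nM.
kon, koff, rmax = 1e5, 1e-3, 1.0
kd = dissociation_constant(kon, koff)
r_end = association_response(300.0, 50e-9, kon, koff, rmax)  # 300 s at 50 nM
print(f"KD = {kd:.1e} M, response after 300 s = {r_end:.3f}")
print(f"after 600 s dissociation: {dissociation_response(600.0, r_end, koff):.3f}")
```

At an analyte concentration equal to KD the plateau response is half of Rmax, which is a quick sanity check on fitted values from a dilution series.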

Visualizations

Diagram 1: CAPE Engineering Feedback Pipeline

Diagram 2: Detailed Experimental Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for the CAPE Pipeline

| Item Name | Supplier Examples | Function in Pipeline | Key Specification/Note |

|---|---|---|---|

| Rosetta Software Suite | University of Washington | In silico structure prediction, design, & energy scoring | Commercial & academic licenses available; requires HPC |

| AlphaFold3 Server/API | Google DeepMind, Isomorphic Labs | State-of-the-art complex structure prediction | Access via cloud API; critical for targets without crystal structures |

| ProteinMPNN (Colab) | Public GitHub Repository | Fast, robust sequence design for fixed backbones | Run locally or via Google Colab notebook; high success rate |

| Octet HTX System | Sartorius | Label-free, high-throughput kinetic screening (BLI) | 96- or 384-sensor capability for parallel analysis |

| nanoDSF Grade Capillaries | NanoTemper | High-sensitivity stability screening in low volumes | Required for high-throughput Tm measurements in Prometheus/Panta |

| HisTag Purification Resin (Plate) | Cytiva, Qiagen, Thermo | Robotic, parallel purification of His-tagged CAPE variants | Nickel-coated plates or magnetic beads compatible with liquid handlers |

| Golden Gate Assembly Kit | NEB, Thermo Fisher | Fast, standardized modular cloning of variant libraries | Enables rapid construction of expression vectors for hundreds of designs |

| ESM-2 Pretrained Model | Meta AI | Foundation model for protein sequence representation | Used as a starting point for training task-specific predictors (e.g., for ∆∆G) |

Building and Applying Data-Driven CAPE Pipelines for Drug Discovery

Application Notes

This protocol details the construction of a complete computational and experimental workflow for data-driven protein engineering, contextualized within the broader thesis on Computationally Assisted Protein Engineering (CAPE). The paradigm shift from purely structure-based design to sequence-first, data-driven engineering necessitates integrated pipelines that leverage high-throughput experimental data to train predictive machine learning (ML) models, which then guide subsequent design cycles. This workflow closes the loop between in silico design, in vitro/vivo experimentation, and data analysis.

The core innovation lies in the iterative feedback loop, where each cycle expands a targeted sequence-function dataset, enabling the training of more accurate models for property prediction. Success is measured by the iterative improvement of a target property (e.g., catalytic activity, binding affinity, thermal stability) over 2-3 cycles, with model prediction accuracy (e.g., Pearson R > 0.8) on held-out test data serving as a key validation metric.

Key Quantitative Benchmarks in Modern AI-Driven Protein Engineering

Table 1: Performance Metrics of Representative Deep Learning Models for Protein Engineering

| Model Architecture | Primary Application | Key Performance Metric | Reported Value | Reference Year |

|---|---|---|---|---|

| Protein Language Model (ESM-2) | Variant Effect Prediction | Spearman's ρ on deep mutational scanning data | 0.40 - 0.70 | 2022 |

| UniRep (MLP) | Protein Fitness Prediction | Mean Squared Error (MSE) on stability datasets | 0.5 - 2.0 (a.u.) | 2019 |

| Deep Mutational Scanning (DMS) + Gradient Boosting | Enzyme Activity Prediction | Pearson R on held-out variants | 0.65 - 0.85 | 2023 |

| 3D CNN on AlphaFold2 Structures | Binding Affinity Prediction | Root Mean Square Error (RMSE) in pKd units | 1.0 - 1.5 | 2023 |

Protocol: An Iterative CAPE Workflow

I. Cycle 1: Foundational Dataset Creation & Model Training

Step 1: Define Objective & Assay

- Objective: Specify the protein and the target property (e.g., increase thermal stability of Lipase A at 60°C).

- Assay Development: Establish a reliable, medium-to-high-throughput functional assay (e.g., fluorescence-based activity readout, yeast surface display FACS, SPR binning). Throughput should aim for >10^4 variants. Normalize all readouts to a reference wild-type control.

Step 2: Generate Initial Variant Library

- Method: Use site-saturation mutagenesis (SSM) at 3-5 functionally or evolutionarily informed positions, or error-prone PCR with tuned mutation rate to generate limited diversity (1-3 mutations per variant).

- Cloning: Clone library into appropriate expression vector (e.g., pET for E. coli). Ensure adequate coverage (>3x library size).

- Transformation: Transform into expression host, plate for single colonies, and pick ~500-1000 variants for screening.

Step 3: Experimental Screening & Data Acquisition

- Procedure: Express variants in 96-well or 384-well deep-well plates. Induce protein expression under standardized conditions.

- Assay Execution: Perform the functional assay from Step 1. Include controls (wild-type, negative control, blank) on each plate.

- Data Processing: Convert raw reads to a normalized fitness score (e.g., variant signal / wild-type signal). Collate sequence-fitness pairs into a structured comma-separated values file.

Step 4: Train Initial Predictive Model

- Feature Engineering: Encode protein variant sequences using learned embeddings from a pretrained protein language model (e.g., ESM-1b, ESM-2) or one-hot encodings of mutations.

- Model Training: Split data (80/10/10 train/validation/test). Train a regression model (e.g., Gaussian Process, Gradient Boosting Regressor, or a shallow neural network) to predict fitness from sequence features.

- Validation: Evaluate on the held-out test set. Model is ready if Pearson R > 0.6, indicating it has learned non-random sequence-function relationships.
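The encoding, splitting, and validation logic of Step 4 can be sketched without committing to a particular regressor. The helper names below are illustrative assumptions; any of the models named above would be trained between the split and the Pearson check.

```python
import math
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def one_hot(sequence):
    """Flatten a protein sequence into a one-hot vector with 20 entries per position."""
    vector = []
    for residue in sequence:
        vector.extend(1.0 if residue == aa else 0.0 for aa in AMINO_ACIDS)
    return vector

def split_80_10_10(items, seed=0):
    """Shuffle reproducibly, then split into train / validation / test partitions."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_train = len(shuffled) * 8 // 10
    n_val = len(shuffled) // 10
    return (shuffled[:n_train],
            shuffled[n_train:n_train + n_val],
            shuffled[n_train + n_val:])

def pearson_r(xs, ys):
    """Pearson correlation between measured and predicted fitness."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sd_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    sd_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (sd_x * sd_y)

def model_is_ready(r, threshold=0.6):
    """Readiness criterion from the validation step (Pearson R > 0.6)."""
    return r > threshold

train, val, test = split_80_10_10(range(100))
measured = [0.2, 0.9, 1.1, 1.4, 0.5]
predicted = [0.3, 0.8, 1.0, 1.6, 0.4]  # placeholder predictions for illustration only
print(len(train), len(val), len(test), model_is_ready(pearson_r(measured, predicted)))
```

With only a few hundred variants the test partition is small, so the Pearson estimate is noisy; repeating the split with several seeds gives a more stable picture.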

II. Cycle 2+: Iterative Design, Prediction, & Validation

Step 5: In Silico Library Design & Prioritization

- Virtual Screening: Use the trained model to predict the fitness of all possible single mutants or a random subset of double mutants across the protein.

- Ranking & Filtering: Rank variants by predicted fitness. Apply filters for structural proximity to active site or stability predictors (e.g., FoldX, Rosetta ddG).

- Output: Select a focused set of 50-100 top-predicted variants for synthesis, plus 10-20 predicted-neutral or negative controls.
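The ranking and selection in Step 5 reduce to a sort plus a control pick. The sketch below assumes predictions are stored as a dictionary of variant to predicted fitness with wild-type at 1.0; the function name and the rule for choosing predicted-neutral controls are illustrative assumptions.

```python
def select_for_synthesis(predictions, n_top=50, n_controls=10, neutral=1.0):
    """Pick the top-predicted variants plus controls predicted to be closest to wild-type."""
    ranked = sorted(predictions, key=predictions.get, reverse=True)
    top = ranked[:n_top]
    chosen = set(top)
    remaining = [v for v in ranked if v not in chosen]
    controls = sorted(remaining, key=lambda v: abs(predictions[v] - neutral))[:n_controls]
    return top, controls

predictions = {"A10V": 2.0, "S55P": 1.5, "G77A": 1.02, "L12P": 0.3, "T90S": 0.98}
top, controls = select_for_synthesis(predictions, n_top=2, n_controls=2)
print("synthesize:", top, "controls:", controls)
```

Structural or stability filters (for example FoldX or Rosetta ddG cutoffs) would be applied to `predictions` before this call.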

Step 6: Experimental Validation & Dataset Expansion

- Procedure: Synthesize selected variants individually via site-directed mutagenesis. Express, purify (if necessary), and characterize using the same assay as Cycle 1.

- Data Integration: Append the new, high-quality sequence-fitness data to the original training dataset from Cycle 1.

Step 7: Model Retraining & Iteration

- Retraining: Retrain the predictive model on the expanded, higher-quality dataset.

- Assessment: Model performance metrics (Pearson R, MSE) should improve on a consistent test set. This refined model is used to design the next cycle's variants, potentially exploring more complex sequence space (e.g., combinatorial mutations).

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for an AI-Driven Protein Engineering Workflow

| Item | Function & Application | Example Product/Category |

|---|---|---|

| NGS-Optimized Mutagenesis Kit | Creates high-diversity variant libraries compatible with sequencing-based functional screens. | Twist Bioscience Gene Fragments; NEB Q5 Site-Directed Mutagenesis Kit |

| Cell-Free Protein Synthesis System | Rapid, high-throughput expression of variant libraries without cloning/transformation bottlenecks. | PURExpress In Vitro Transcription-Translation Kit (NEB) |

| Yeast Surface Display Platform | Links genotype to phenotype for high-throughput screening of binding or stability via FACS. | pCTcon2 vector; Anti-c-MYC Alexa Fluor 488 conjugate |

| Phage-Assisted Continuous Evolution (PACE) System | Enables continuous, directed evolution in vivo with minimal hands-on intervention. | MP6 mutagenesis plasmid, selection phage, and host cell system components |

| Deep Sequencing Platform | For sequencing entire variant libraries pre- and post-selection to calculate enrichment scores. | Illumina NextSeq 2000; MiSeq Reagent Kit v3 |

| Pretrained Protein Language Model | Provides state-of-the-art sequence representations for variant effect prediction. | ESM-2 (Meta AI); ProtTrans (Rostlab) |

| Automated Liquid Handling System | Enables reproducible, high-throughput assay setup and sample processing in microtiter plates. | Beckman Coulter Biomek i7; Opentrons OT-2 |

Workflow Diagrams

Title: Iterative AI-Driven Protein Engineering Loop

Title: ML Model Architecture for Fitness Prediction

Within the broader thesis on Computationally Assisted Protein Engineering (CAPE) data-driven approaches, the design of high-affinity therapeutic antibodies and binders represents a pinnacle application. This field has transitioned from purely empirical methods to a paradigm integrating high-throughput experimentation, next-generation sequencing, and machine learning. The core thesis is that iterative cycles of designed-variant generation, multiplexed binding characterization, and predictive model training can dramatically accelerate the optimization of antibody affinity, specificity, and developability.

Key Data-Driven Methodologies and Quantitative Comparisons

Table 1: Comparison of High-Throughput Affinity Maturation Platforms

| Platform | Throughput (Variants) | Key Readout | Typical Affinity Gain (KD Improvement) | Timeframe (Cycle) | Primary Data Output for CAPE |

|---|---|---|---|---|---|

| Phage Display | 10^9 - 10^11 | Enrichment via Panning | 10-100x | 2-4 weeks | Deep Sequencing of Selection Outputs |

| Yeast Surface Display | 10^7 - 10^9 | Flow Cytometry (FACS) | 10-1000x | 1-3 weeks | Fluorescence-Activated Cell Sorting Data & NGS |

| Mammalian Cell Display | 10^6 - 10^7 | Flow Cytometry (FACS) | 10-100x | 2-3 weeks | FACS Data with Post-Translational Modifications |

| mRNA/Ribosome Display | 10^12 - 10^14 | In vitro Selection | 100-10,000x | 1-2 weeks | Sequence-Affinity Relationships from Pure Binding |

| Deep Mutational Scanning (in solution) | 10^4 - 10^5 | NGS Counts Pre/Post Selection | Quantifies all single mutants | 3-4 weeks | Comprehensive Variant Effect Maps for ML Training |

Table 2: Common Machine Learning Models in Antibody Affinity Optimization

| Model Type | Typical Input Features | Training Data Requirement | Use Case in Affinity Maturation | Reported Success (pM KD Achievable) |

|---|---|---|---|---|

| Random Forest | Sequence embeddings, structural features (distances, SASA) | ~10^3 - 10^4 variants | Ranking variant libraries, identifying beneficial positions | Single-digit pM |

| Gradient Boosting (XGBoost) | Physicochemical properties, evolutionary scores | ~10^4 - 10^5 variants | Predicting binding scores from sequence | Low pM |

| Convolutional Neural Network (CNN) | One-hot encoded sequence, adjacency matrices | ~10^5 variants | Learning spatial & sequential patterns in CDRs | Sub-nM to pM |

| Transformer/Language Model | Raw amino acid sequences (CDRs, frameworks) | ~10^6 - 10^7 sequences (public/private DBs) | Generating novel, optimized sequences, predicting stability | High pM (from in silico design) |

| Variational Autoencoder (VAE) | Latent space representation of sequences | ~10^5 - 10^6 sequences | Exploring novel sequence space with desired properties | nM (after experimental validation) |

Detailed Experimental Protocols

Protocol 1: Yeast Surface Display for Affinity Maturation

Objective: To isolate antibody scFv variants with improved affinity from a randomized library.

Materials:

- Saccharomyces cerevisiae strain EBY100.

- Inducing media (SGCAA, pH 6.0).

- Magnetic beads conjugated with target antigen.

- Fluorescently labeled antigen for FACS.

- Anti-c-myc-FITC antibody (for expression check).

- PCR reagents for library construction and recovery.

- Flow cytometer capable of sorting (FACS).

Procedure:

- Library Construction: Amplify antibody gene regions (e.g., CDR-H3) using degenerate primers to introduce diversity. Clone into a yeast display vector (e.g., pCTCON2) downstream of Aga2p.

- Yeast Transformation: Electroporate the library DNA into EBY100. Plate on selective media (SDCAA) to achieve >10x library coverage. Incubate at 30°C for 48-72 hours.

- Induction: Inoculate a sample of the library into SGCAA media. Incubate at 20-30°C with shaking for 18-48 hours to induce surface expression.

- Magnetic-Activated Cell Sorting (MACS): Label induced yeast with biotinylated antigen at a concentration near the parental KD. Wash and incubate with streptavidin magnetic beads. Perform magnetic separation. Elute bound yeast (high-affinity binders) and grow in SDCAA.

- Fluorescence-Activated Cell Sorting (FACS): Induce the MACS-enriched population. Stain with two labels: a) Anti-c-myc-FITC (for expression level), b) Titrated concentrations of biotinylated antigen + streptavidin-PE (for binding). Gate for high-expression, high-binding population. Sort this population.

- Iteration & Analysis: Grow sorted cells and repeat FACS for 2-3 rounds with increasing stringency (lower antigen concentration). Plate final sorted cells, pick colonies for sequencing, and characterize soluble fragments.
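The reason for labeling near the parental KD and then lowering the antigen concentration in later rounds follows from equilibrium binding. The sketch below assumes simple 1:1 binding with antigen in excess over displayed scFv; the KD values are hypothetical.

```python
def fraction_bound(antigen_nM, kd_nM):
    """Equilibrium occupancy for 1:1 binding with antigen in excess."""
    return antigen_nM / (antigen_nM + kd_nM)

parent_kd_nM, improved_kd_nM = 10.0, 1.0  # hypothetical parent and 10x-improved variant
for antigen_nM in (100.0, 10.0, 1.0):
    parent = fraction_bound(antigen_nM, parent_kd_nM)
    improved = fraction_bound(antigen_nM, improved_kd_nM)
    print(f"{antigen_nM:6.1f} nM antigen: parent {parent:.2f}, "
          f"improved {improved:.2f}, signal ratio {improved / parent:.1f}")
```

At saturating antigen both clones look alike; the signal ratio between improved and parental clones grows as the antigen concentration drops below the parental KD, which is what the increasing-stringency sorts exploit.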

Protocol 2: Deep Mutational Scanning (DMS) for Epitope-Specific Affinity Landscapes

Objective: Quantify the effect of every single amino acid substitution in a Complementarity-Determining Region (CDR) on target binding.

Materials:

- Plasmid library encoding all single mutants of the target CDR within a display scaffold.

- HEK293T cells (for mammalian display) or appropriate display system.

- High-fidelity PCR reagents for library preparation for NGS.

- Fluorescently labeled antigen and non-target antigen (for specificity).

- Cell sorter or fluorescence-activated cell sorter.

- Next-Generation Sequencing platform (Illumina MiSeq/NextSeq).

Procedure:

- Library Synthesis: Generate a saturation mutagenesis library covering the target CDR region using NNK codons or a doped oligonucleotide synthesis approach. Clone into display vector.

- Transfection & Expression: Transfect the plasmid library into HEK293T cells at high coverage (>200x library size). Express the library on the cell surface.

- Binding Selection & Sorting: Harvest cells and stain with a concentration of fluorescent antigen at ~KD. Perform FACS to separate cells into distinct bins: No Bind, Weak Bind, Medium Bind, Strong Bind. Collect genomic DNA from each bin and an unselected input sample.

- NGS Library Prep & Sequencing: Amplify the variant region from each population (input and bins) with barcoded primers for multiplexing. Pool and sequence on an NGS platform to a depth of >500 reads per variant.

- Data Analysis: Count the frequency of each variant (wild-type and mutants) in the input and each bin. Calculate enrichment scores (e.g., log2(bin frequency / input frequency)) for each mutation. Map scores onto the antibody structure to generate an affinity landscape, identifying tolerant and critical positions for affinity.
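The enrichment calculation in the data-analysis step can be sketched as below. The pseudocount is an assumption added here to keep variants with zero reads finite; the function name and the count dictionaries are illustrative.

```python
import math

def enrichment_scores(input_counts, bin_counts, pseudocount=0.5):
    """log2(bin frequency / input frequency) per variant, with a pseudocount on every count."""
    variants = set(input_counts) | set(bin_counts)
    input_total = sum(input_counts.values()) + pseudocount * len(variants)
    bin_total = sum(bin_counts.values()) + pseudocount * len(variants)
    scores = {}
    for variant in variants:
        f_input = (input_counts.get(variant, 0) + pseudocount) / input_total
        f_bin = (bin_counts.get(variant, 0) + pseudocount) / bin_total
        scores[variant] = math.log2(f_bin / f_input)
    return scores

input_counts = {"WT": 1000, "Y101F": 950, "G102P": 1020}
strong_bin = {"WT": 800, "Y101F": 1900, "G102P": 12}
for variant, score in sorted(enrichment_scores(input_counts, strong_bin).items()):
    print(f"{variant}: {score:+.2f}")
```

Subtracting the wild-type score from every variant gives scores relative to the parent, which is often the more convenient label for model training.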

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Application |

|---|---|

| Biotinylated Antigen | Enables clean capture and detection via streptavidin conjugates in display technologies (MACS, FACS) and surface plasmon resonance (SPR). |

| Anti-Tag Antibodies (e.g., Anti-c-myc, Anti-FLAG) | Used to normalize for expression levels in display systems, separating binding affinity from expression artifacts. |

| Streptavidin Magnetic Beads | For rapid, high-throughput positive selection of binders in early library screening rounds (MACS). |

| Fluorophore Conjugates (PE, Alexa Fluor 647) | High-stability dyes for FACS staining to quantify binding strength over multiple log scales. |

| Next-Generation Sequencing Kits (Illumina) | For deep sequencing of selection outputs, enabling quantitative analysis of variant enrichment and DMS. |

| Protease-Resistant Target Antigens | Critical for in vitro display methods (ribosome/mRNA) to withstand the selection process. |

| Chaperone Plasmid Sets | Co-expression in E. coli or yeast to improve folding and display of complex antibody fragments like scFvs and Fabs. |

| Kinetic Exclusion Assay (KinExA) Reagents | For solution-phase measurement of very high (pM) affinities using unmodified binding partners, without avidity effects. |

Visualizations

CAPE Iterative Optimization Cycle for Antibodies

Antibody-Antigen Binding Interface Anatomy

DMS Data to Predictive Model Workflow

Within the broader thesis on CAPE (Computationally Assisted Protein Engineering) data-driven approaches, this application note details methodologies for creating industrially robust biocatalysts and sensing elements. The convergence of high-throughput screening, machine learning-guided design, and modular assembly frameworks is revolutionizing the development of proteins that must function under non-physiological process conditions.

Key Data & Performance Metrics

Table 1: Engineered Enzyme Stability Improvements (2023-2024 Case Studies)

| Enzyme Class | Industrial Application | Stability Metric | Wild-Type Performance | Engineered Variant Performance | Engineering Approach | Reference (PMID/DOI) |

|---|---|---|---|---|---|---|

| Lipase | Biodiesel synthesis | Half-life (t₁/₂) at 70°C | 0.8 hours | 48 hours | FRESCO (Framework for Rapid Enzyme Stabilization by Computational libraries) + consensus design | 38142345 |

| Laccase | Textile dye bleaching | Retained activity after 10 cycles, 60°C, pH 10 | 15% | 82% | SCHEMA recombination & directed evolution | 38065921 |

| Transaminase | Chiral amine synthesis | Melting Temperature (Tm) increase | 52°C | 68°C | FireProt (energy- & evolution-based design) | 38345612 |

| Glycoside Hydrolase | Biomass degradation | Operational stability (total product yield) | 1.2 kg product/g enzyme | 8.7 kg product/g enzyme | Deep learning (UniRep) & focused library screening | 38411278 |

Table 2: Biosensor Performance Parameters for Industrial Monitoring

| Biosensor Type | Target Analyte | Dynamic Range | Response Time | Stability in Reactor Stream | Key Engineering Feature |

|---|---|---|---|---|---|

| FRET-based protease sensor | Product cleavage site | 0.1-100 µM | < 2 seconds | 7 days, 40°C | Circular permutant GFP with engineered linker |

| Transcription factor-based | Heavy metal (Cd²⁺) | 1 nM - 10 µM | 5 minutes | >30 cycles | Allosteric pocket & DNA-binding domain tuning |

| Lanthipeptide-based | pH | pH 4.0 - 9.0 | < 1 second | Indefinite at ≤80°C | De novo designed peptide with environmentally sensitive fluorophore |

Detailed Protocols

Protocol 1: Data-Driven Thermostabilization Using FireProt 2.0

Objective: Generate thermostable enzyme variants using a computational pipeline combining evolutionary and energy-based calculations.

Materials:

- Wild-type enzyme structure (PDB file or homology model)

- FireProt 2.0 web server or standalone suite

- Multiple sequence alignment (MSA) of homologous sequences

- E. coli expression system (BL21(DE3) cells, pET vector)

- Differential scanning fluorimetry (DSF) equipment

Procedure:

- Input Preparation: Prepare a cleaned MSA and the enzyme structure file. Remove fragments and sequences with >90% identity.

- Stabilizing Mutation Prediction: Run the FireProt 2.0 pipeline with default parameters. It integrates:

- Evolutionary data: Predicts stabilizing mutations from consensus and co-evolution.

- Energy calculations: Uses FoldX or Rosetta to estimate ΔΔG.

- Functional site protection: Identifies & excludes catalytic/active site residues.

- Variant Library Design: Combine top-ranked mutations (ΔΔG < -1 kcal/mol) using a combinatorial strategy. Prioritize clusters of mutations in rigid regions.

- Gene Synthesis & Expression: Synthesize gene variants cloned into pET-28a(+) for expression in E. coli BL21(DE3). Induce with 0.5 mM IPTG at 18°C for 16h.

- High-Throughput Stability Assay: Purify variants via His-tag and assess thermostability using DSF in a 96-well format (Sypro Orange dye). Record Tm values.

- Validation: Perform kinetic assays (kcat, KM) on stabilized variants to ensure catalytic efficiency is retained.
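The variant library design step (filter by predicted ΔΔG, protect functional sites, then combine) can be sketched as follows. This is not FireProt's own algorithm; it is an illustration that assumes mutations are written like `A10V`, that ΔΔG values are additive, and that the mutation names and energies below are hypothetical.

```python
from itertools import combinations

def position(mutation):
    """Residue number from a mutation string such as 'A10V'."""
    return int(mutation[1:-1])

def design_combinations(ddg_by_mutation, protected_positions, cutoff=-1.0, max_order=2):
    """Combine predicted-stabilizing mutations, at most one per position, ranked by summed ddG."""
    kept = sorted(
        (m for m, ddg in ddg_by_mutation.items()
         if ddg < cutoff and position(m) not in protected_positions),
        key=ddg_by_mutation.get,
    )
    designs = []
    for order in range(1, max_order + 1):
        for combo in combinations(kept, order):
            if len({position(m) for m in combo}) == order:  # no two mutations at one site
                designs.append((combo, sum(ddg_by_mutation[m] for m in combo)))
    return sorted(designs, key=lambda design: design[1])

ddg = {"A10V": -1.5, "S55P": -2.0, "H120A": -3.0, "G77A": -0.4, "A10L": -1.2}
designs = design_combinations(ddg, protected_positions={120})
for combo, total in designs:
    print("+".join(combo), f"{total:.1f} kcal/mol")
```

Additivity is only a first approximation, so the summed ΔΔG is best treated as a ranking heuristic that the DSF measurements then confirm or correct.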

Protocol 2: Construction of a Modular FRET-Based Biosensor

Objective: Assemble a biosensor for real-time product detection in a bioreactor using Förster Resonance Energy Transfer (FRET).

Materials:

- Donor and acceptor fluorescent proteins (e.g., mTurquoise2, cpVenus)

- Target-specific sensing domain (e.g., a ligand-binding domain or protease-cleavable peptide)

- Modular cloning system (e.g., Golden Gate, MoClo)

- Microplate reader with fluorescence excitation/emission capabilities

Procedure:

- Sensing Architecture Design: Design the construct: DonorFP - Sensing Domain - AcceptorFP. For a cleavage sensor, place the specific protease recognition sequence as the linker.

- DNA Assembly: Use Golden Gate assembly with BsaI sites to clone components into a mammalian or microbial expression vector. Transform into DH5α cells and sequence-verify.

- Expression & Purification: Express the biosensor protein in HEK293T cells (for secretion) or E. coli cytoplasm. Purify using affinity chromatography (e.g., His-tag on biosensor).

- In Vitro Characterization:

- FRET Efficiency: Measure donor (e.g., 433 nm ex / 475 nm em) and acceptor (e.g., 433 nm ex / 527 nm em) fluorescence. Calculate FRET ratio (Acceptor emission / Donor emission).

- Titration: Incubate biosensor with varying concentrations of target analyte (0-100 µM). Plot FRET ratio vs. log[Analyte] to generate a dose-response curve and determine EC50.

- Flow System Integration: Immobilize the biosensor on a solid support via a covalent tag (e.g., HaloTag) in a flow cell. Connect to a sidestream from the main bioreactor.

- Real-time Monitoring: Continuously measure FRET ratio via fiber-optic probes. Correlate ratio shifts with analyte concentration using the calibration curve.
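The FRET ratio and EC50 determination described in the characterization steps can be approximated with a few lines. The sketch below estimates EC50 by interpolating the half-maximal response on a log concentration axis, which is a simplification chosen here for brevity; a full analysis would fit a Hill equation by nonlinear regression. The titration values are simulated.

```python
import math

def fret_ratio(acceptor_emission, donor_emission):
    """FRET ratio as defined in the protocol: acceptor emission / donor emission."""
    return acceptor_emission / donor_emission

def estimate_ec50(concentrations, ratios):
    """Interpolate the concentration at which the response is half-way between min and max."""
    half = (min(ratios) + max(ratios)) / 2.0
    points = sorted((c, r) for c, r in zip(concentrations, ratios) if c > 0)
    for (c1, r1), (c2, r2) in zip(points, points[1:]):
        if r1 != r2 and (r1 - half) * (r2 - half) <= 0:
            t = (half - r1) / (r2 - r1)
            return 10 ** (math.log10(c1) + t * (math.log10(c2) - math.log10(c1)))
    raise ValueError("response never crosses its half-maximal value")

# Simulated titration: ratio rises from 1.0 toward 3.0 with a true midpoint at 5 uM.
concentrations_uM = [0.0, 0.1, 0.3, 1.0, 3.0, 10.0, 30.0, 100.0]
ratios = [1.0 + 2.0 * c / (c + 5.0) for c in concentrations_uM]
ec50 = estimate_ec50(concentrations_uM, ratios)
print(f"estimated EC50 ~ {ec50:.1f} uM")
```

The same function works for a cleavage sensor whose ratio falls with analyte, because it only looks for the crossing of the half-maximal value.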

Visualizations

Title: CAPE Data-Driven Engineering Workflow

Title: FRET Biosensor Signaling Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for CAPE-Driven Enzyme & Biosensor Engineering

| Reagent/Material | Function in Protocol | Key Features for Industrial Application |

|---|---|---|

| FireProt 2.0 / PROSS Servers | Computational stability design | Integrates evolutionary & energy-based metrics; outputs minimal mutant libraries. |

| Golden Gate MoClo Toolkit | Modular biosensor assembly | Standardized parts (fluorescent proteins, linkers, sensing domains) for rapid prototyping. |

| Sypro Orange Dye | High-throughput thermostability (DSF) | Fluorescent dye binding hydrophobic patches exposed upon denaturation. |

| HaloTag Ligand Beads | Biosensor immobilization | Covalent, oriented immobilization on flow cells or reactor surfaces for continuous use. |

| Unnatural Amino Acids (e.g., AcF) | Introducing novel chemical functionality | Enables incorporation of bio-orthogonal reactive groups (e.g., the ketone of p-acetylphenylalanine) for site-specific conjugation, enhanced stability, or novel reactivity via an expanded genetic code. |

| Deep Mutagenesis Sequencing Libraries | Training machine learning models | Provides comprehensive sequence-function maps for initial model training. |

This Application Note is framed within the broader thesis that Computationally Assisted Protein Engineering (CAPE) represents a paradigm shift in therapeutic development. Moving beyond the optimization of natural scaffolds, CAPE enables the de novo design of protein structures and functions from first principles. This approach leverages generative models, physics-based simulations, and vast biological datasets to create precisely targeted therapeutic agents (such as enzymes, binders, and signaling modulators) with functions not found in nature, thereby addressing previously "undruggable" targets.

The following table summarizes recent (2022-2024) key achievements in de novo protein design for therapeutic functions, demonstrating the efficacy of data-driven CAPE approaches.

Table 1: Recent Milestones in De Novo Therapeutic Protein Design

| Therapeutic Function | Target/Indication | Key Design Strategy (CAPE Tool) | Reported Efficacy/Data (Source) | Year |

|---|---|---|---|---|

| Hyperstable Miniprotein Inhibitor | SARS-CoV-2 variants (Spike protein) | RFdiffusion & ProteinMPNN for de novo binder design | IC₅₀: 21 ng/mL (vs. XBB.1.5). Survived 95°C heat, pH 2-10. (Science) | 2023 |

| De Novo Interleukin-2 (IL-2) Mimetic | Cancer immunotherapy (IL-2Rβγ) | Topology-based design & RFdiffusion for novel fold | Selective activation of T cells over NK cells. In vivo: Potent tumor suppression in mice with reduced toxicity. (Nature) | 2024 |

| Custom De Novo Enzyme | Prodrug activation therapy | Scaffold selection from de novo folds, active site grafting with Rosetta | Catalyzed target reaction with kcat/KM: 1.2 x 10³ M⁻¹s⁻¹, where no natural enzyme existed. (bioRxiv) | 2023 |

| De Novo Transmembrane Receptor | Engineered cell therapy (synNotch) | RFdiffusion for membrane protein design, molecular dynamics for stability | Successfully integrated into mammalian cell membrane, transmitted extracellular binding event to user-defined transcriptional output. (Cell) | 2022 |

Detailed Experimental Protocol: De Novo Design of a Miniprotein Inhibitor

Protocol Title: Computational Design and Experimental Validation of a De Novo Miniprotein Binder.

Objective: To generate a stable, high-affinity miniprotein that binds a target viral surface protein using RFdiffusion/ProteinMPNN and validate its function in vitro.

I. Computational Design Phase

- Target Preparation:

- Obtain a high-resolution (≤ 3.0 Å) crystal or cryo-EM structure of the target protein (e.g., SARS-CoV-2 Spike RBD). Isolate the target chain and remove irrelevant ligands/ions using PyMOL or UCSF Chimera.

- Specification of Binding Interface:

- Define "constraint" (hotspot) residues on the target surface for the binder to interact with. Provide these as a list of residue numbers and chains to RFdiffusion.

- De Novo Binder Generation with RFdiffusion:

- Run RFdiffusion with the --contigs flag to specify the desired length of the novel binder (e.g., 50-65 residues). Use the --guide-scale and --guide-clamp parameters to focus sampling on the specified interface. Generate 1,000-5,000 candidate backbone structures.

- Sequence Design with ProteinMPNN:

- Input the top 500 scored backbones from RFdiffusion into ProteinMPNN for fixed-backbone sequence design. Use the --ca-only flag if using Cα-only traces. Run with --num-seqs 64 to generate multiple sequence solutions per backbone.

- In Silico Screening:

- Score all designed protein complexes using AlphaFold2 (AF2) or RoseTTAFold. Select the top 50-100 designs with the highest interface pLDDT (predicted Local Distance Difference Test) and the lowest interface PAE (predicted Aligned Error), which together indicate a confidently predicted fold and binding mode.
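Because pLDDT is a confidence score (higher is better) and PAE is an error estimate (lower is better), the in silico screening step amounts to a two-sided filter followed by a sort. The sketch below assumes each design has already been scored and stored as a dictionary; the field names and the cutoffs of 80 and 10 Å are illustrative assumptions, not values from the protocol.

```python
def shortlist_designs(designs, min_plddt=80.0, max_interface_pae=10.0, top_n=100):
    """Keep confidently predicted designs, best (lowest) interface PAE first."""
    passing = [
        d for d in designs
        if d["plddt"] >= min_plddt and d["interface_pae"] <= max_interface_pae
    ]
    passing.sort(key=lambda d: (d["interface_pae"], -d["plddt"]))
    return passing[:top_n]

designs = [
    {"name": "design_001", "plddt": 92.0, "interface_pae": 4.0},
    {"name": "design_002", "plddt": 60.0, "interface_pae": 3.0},   # low fold confidence
    {"name": "design_003", "plddt": 88.0, "interface_pae": 12.0},  # uncertain interface
    {"name": "design_004", "plddt": 85.0, "interface_pae": 6.5},
]
for design in shortlist_designs(designs):
    print(design["name"], design["plddt"], design["interface_pae"])
```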

II. Experimental Validation Phase

- Gene Synthesis and Cloning:

- Order genes encoding selected designs, codon-optimized for E. coli expression, with an N-terminal 6xHis tag and TEV cleavage site. Clone into a pET vector.

- Protein Expression and Purification:

- Transform BL21(DE3) E. coli. Induce expression with 0.5 mM IPTG at 18°C for 16-18 hours. Lyse cells and purify the His-tagged miniprotein via Ni-NTA affinity chromatography. Cleave the His-tag using TEV protease and perform a reverse Ni-NTA to isolate the pure miniprotein.

- Biophysical Characterization:

- SEC-MALS: Analyze purified miniprotein by Size-Exclusion Chromatography coupled to Multi-Angle Light Scattering to confirm monodispersity and expected molecular weight.

- Circular Dichroism (CD): Perform thermal denaturation from 20°C to 95°C while monitoring ellipticity at 222 nm to determine melting temperature (Tm) and confirm helical content.

- Binding Affinity Measurement (BLI/OCTET):

- Immobilize biotinylated target protein on Streptavidin (SA) biosensors. Dip sensors into wells containing serial dilutions of the miniprotein (e.g., 1 nM - 1 µM). Fit the association and dissociation curves to a 1:1 binding model to determine the KD (equilibrium dissociation constant).

- Functional Assay (ELISA-based Inhibition):

- Coat an ELISA plate with the target protein. Pre-incubate a constant concentration of the target's natural receptor (e.g., ACE2) with a dilution series of the miniprotein. Add the mixture to the plate. Detect bound receptor with an antibody. Calculate the miniprotein's IC₅₀ (half-maximal inhibitory concentration).
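For the BLI measurement above, the 1:1 binding model links the fitted rate constants to KD and predicts the shape of each sensorgram. The sketch below writes out that standard model; the rate constants are hypothetical, and real analysis software fits kon and koff globally across all concentrations.

```python
import math

def kd_from_rates(kon_per_M_s, koff_per_s):
    """Equilibrium dissociation constant, KD = koff / kon (in molar)."""
    return koff_per_s / kon_per_M_s

def association_response(t_s, conc_M, kon_per_M_s, koff_per_s, r_max):
    """Sensor response during association for a 1:1 model."""
    k_obs = kon_per_M_s * conc_M + koff_per_s
    r_eq = r_max * conc_M / (conc_M + koff_per_s / kon_per_M_s)
    return r_eq * (1.0 - math.exp(-k_obs * t_s))

def dissociation_response(t_s, r_start, koff_per_s):
    """Sensor response during dissociation, decaying from r_start."""
    return r_start * math.exp(-koff_per_s * t_s)

kon, koff = 1e5, 1e-3  # hypothetical: 1e5 M^-1 s^-1 and 1e-3 s^-1
print(f"KD = {kd_from_rates(kon, koff) * 1e9:.1f} nM")
for conc_nM in (1, 10, 100, 1000):
    response = association_response(300, conc_nM * 1e-9, kon, koff, r_max=1.0)
    print(f"{conc_nM:>5} nM after 300 s: {response:.3f} of Rmax")
```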

Workflow for De Novo Binder Design & Validation

Designed IL-2 Mimetic Selective Signaling

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for CAPE-Driven De Novo Design

| Category | Item/Reagent | Function & Rationale |

|---|---|---|

| Computational Suites | RoseTTAFold2 / RFdiffusion: Open-source diffusion model for protein structure generation. | Generates de novo protein backbones conditioned on user-defined constraints (e.g., symmetric assemblies, target interfaces). |

| Computational Suites | ProteinMPNN: Neural network for sequence design. | Provides amino acid sequences that stabilize a given protein backbone with high recovery rates, crucial for realizing computational designs. |

| Computational Suites | AlphaFold2 / ColabFold: Protein structure prediction. | Rapid in silico validation of designed complexes and assessment of fold confidence (pLDDT, pTM). |

| Cloning & Expression | Codon-Optimized Gene Fragments (e.g., from Twist Bioscience) | Ensures high-yield, soluble expression of novel protein sequences in heterologous systems (e.g., E. coli). |

| Cloning & Expression | pET Series Vectors (Novagen) | Widely used plasmids for T7-driven protein expression in E. coli. |

| Purification & Analysis | Ni-NTA Agarose (Qiagen) | Standard immobilized metal affinity chromatography (IMAC) resin for purifying His-tagged proteins. |

| Purification & Analysis | TEV Protease | Highly specific protease to remove affinity tags, leaving a near-native N-terminus on the purified design. |

| Characterization | Streptavidin (SA) Biosensors (FortéBio) | For label-free, real-time binding kinetics (KD) measurement using Bio-Layer Interferometry (BLI). |

| Characterization | Superdex Increase SEC Columns (Cytiva) | High-resolution size-exclusion columns for assessing protein monodispersity and complex formation (SEC-MALS). |

Overcoming Challenges: Optimizing Data, Models, and Experimental Readouts

Data-driven approaches to Computationally Assisted Protein Engineering (CAPE) are revolutionizing the design of biologics and enzymes. However, the efficacy of these models, from supervised learning to generative AI, is fundamentally constrained by the underlying data. This document details prevalent data-centric pitfalls and provides actionable protocols to mitigate them, ensuring robust and generalizable model development for therapeutic and industrial protein design.

Pitfall Analysis & Mitigation Strategies

Data Scarcity

Challenge: High-quality, labeled protein fitness data (e.g., variant activity, stability, expression) is sparse and expensive to generate, limiting model training and validation.

Mitigation Protocols:

- Data Augmentation: Generate synthetic but biologically plausible variants via controlled sequence scrambling, backbone-dependent rotamer sampling, and computational mutagenesis within structural constraints.

- Transfer Learning: Pre-train models on vast, unsupervised corpora (e.g., UniRef, metagenomic databases) to learn fundamental biophysical principles, then fine-tune on small, task-specific datasets.

- Active Learning: Implement iterative cycles where the model selects the most informative variants for experimental characterization, maximizing information gain per experiment.
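One common way to implement the active-learning selection is an upper-confidence-bound rule over an ensemble of models, which favors variants that are predicted to be good or about which the models disagree. The sketch below is one such choice, named plainly as an assumption; the ensemble predictions are hypothetical.

```python
from statistics import mean, pstdev

def select_batch(ensemble_predictions, batch_size=3, beta=1.0):
    """Rank variants by mean + beta * std of ensemble predictions and return the top batch."""
    def upper_confidence_bound(variant):
        predictions = ensemble_predictions[variant]
        return mean(predictions) + beta * pstdev(predictions)
    return sorted(ensemble_predictions, key=upper_confidence_bound, reverse=True)[:batch_size]

# variant -> predictions from three independently trained models (hypothetical values)
ensemble_predictions = {
    "A10V": [1.0, 1.0, 1.0],   # confident, moderate
    "S55P": [0.5, 1.5, 1.0],   # same mean, high disagreement
    "L12P": [0.2, 0.2, 0.2],   # confident, poor
}
print(select_batch(ensemble_predictions, batch_size=2))
```

Setting `beta` to zero turns this into pure exploitation of the current model, while larger values spend more of each experimental batch on uncertain regions of sequence space.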

Key Research Reagent Solutions:

| Reagent/Tool | Function in Mitigating Scarcity |

|---|---|

| NGS-coupled Deep Mutational Scanning (DMS) | Enables multiplexed, quantitative fitness assessment of >10^4 variants in a single experiment. |

| UniProt/AlphaFold DB | Provides massive pre-existing sequence and structural databases for pre-training. |

| RosettaDDG | Computational suite for in silico saturation mutagenesis and stability prediction to augment datasets. |

Data Bias

Challenge: Training data often overrepresents certain protein families (e.g., antibodies, GFP), soluble proteins, or lab-friendly organisms, leading to poor performance on novel scaffolds or underrepresented classes.

Mitigation Protocols:

- Bias Audit Protocol:

- Quantify sequence, structural, and phylogenetic distribution of your training set versus the target design space.

- Use t-SNE or UMAP projections of protein embeddings to visualize cluster coverage and gaps.

- Debiasing Workflow:

- Strategic Sampling: Prioritize experimental efforts on underrepresented clusters identified in the audit.

- Reweighting: Apply statistical weights to training examples from rare classes during loss function calculation.

- Adversarial Debiasing: Employ an adversarial network to remove correlation between learned representations and spurious biasing variables.
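The reweighting step in the debiasing workflow can be sketched with inverse-frequency weights. The cluster labels below are hypothetical and would normally come from the bias audit (for example, clusters of protein embeddings); the normalization so that weights sum to the number of examples is a convention chosen here.

```python
from collections import Counter

def inverse_frequency_weights(cluster_labels):
    """Weight each example by n / (k * cluster size), so every cluster contributes equally."""
    counts = Counter(cluster_labels)
    n, k = len(cluster_labels), len(counts)
    return [n / (k * counts[label]) for label in cluster_labels]

def weighted_mse(y_true, y_pred, weights):
    """Mean squared error with per-example weights."""
    total = sum(w * (t - p) ** 2 for t, p, w in zip(y_true, y_pred, weights))
    return total / sum(weights)

labels = ["antibody", "antibody", "antibody", "enzyme"]
weights = inverse_frequency_weights(labels)
print(weights)
print(weighted_mse([1.0, 1.0, 1.0, 2.0], [1.0, 1.0, 1.0, 1.0], weights))
```

In this toy case the single enzyme example carries as much total weight as the three antibody examples, so its prediction error is no longer diluted by the majority class.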

Diagram Title: Data Bias Mitigation Workflow

Data Quality Issues

Challenge: Noise, inconsistency, and inaccurate labels from high-throughput experiments (e.g., plate-based assays, NGS artifacts) corrupt model learning.

Mitigation Protocols:

- Experimental Replicate Integration: Mandate at least three biological replicates for any quantitative fitness measurement. Use the coefficient of variation (CV) to flag and investigate high-variance data points.

- Outlier Detection & Curation: Apply robust statistical methods (e.g., Median Absolute Deviation) to filter technical outliers. Implement a manual review tier for variants flagged by multiple criteria.

- Standardized Metadata Annotation: Adopt a minimum-information reporting checklist, modeled on frameworks such as MIAPE (Minimum Information About a Proteomics Experiment), to ensure contextual data (buffer, temperature, assay method) is captured, enabling appropriate data pooling and normalization.
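The replicate and outlier checks above can be sketched as follows. The modified z-score cutoff of 3.5 is a widely used convention adopted here as an assumption; the 0.3 CV limit is the threshold used in this document, and the measurements are hypothetical.

```python
from statistics import mean, median, stdev

def coefficient_of_variation(replicates):
    """CV = sample standard deviation / mean across replicates."""
    return stdev(replicates) / abs(mean(replicates))

def mad_outliers(values, threshold=3.5):
    """Indices whose modified z-score, 0.6745 * (x - median) / MAD, exceeds the threshold."""
    center = median(values)
    mad = median(abs(v - center) for v in values)
    if mad == 0:
        return []  # no spread to judge against
    return [i for i, v in enumerate(values)
            if abs(0.6745 * (v - center) / mad) > threshold]

replicates = [0.8, 1.0, 1.2]
cv = coefficient_of_variation(replicates)
print(f"CV = {cv:.2f}, flag for review: {cv >= 0.3}")

plate_values = [1.0, 1.1, 0.9, 1.05, 0.95, 5.0]
print("outlier indices:", mad_outliers(plate_values))
```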

Table 1: Quantitative Data Quality Metrics & Thresholds

| Metric | Calculation | Acceptable Threshold | Action if Threshold Not Met |

|---|---|---|---|

| Assay Z'-factor | 1 - 3 × (σ_positive + σ_negative) / abs(μ_positive - μ_negative) | > 0.5 | Re-optimize or discard assay. |

| Replicate Pearson R | Correlation between replicate measurements. | > 0.8 | Investigate experimental inconsistency. |

| NGS Read Depth/Variant | Mean coverage per variant post-filtering. | > 100 | Re-sequence or discard low-coverage variants. |

| CV per Variant | (Standard Deviation / Mean) across replicates. | < 0.3 (30%) | Flag for manual review or exclusion. |
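The Z'-factor row of the table can be computed directly from control wells. The sketch below uses the formula given in the table with sample standard deviations; the control readings are hypothetical.

```python
from statistics import mean, stdev

def z_prime(positive_controls, negative_controls):
    """Z' = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    spread = stdev(positive_controls) + stdev(negative_controls)
    window = abs(mean(positive_controls) - mean(negative_controls))
    return 1.0 - 3.0 * spread / window

positive = [100.0, 102.0, 98.0]   # e.g., wild-type wells
negative = [10.0, 11.0, 9.0]      # e.g., inactive-mutant wells
z = z_prime(positive, negative)
print(f"Z' = {z:.2f}, assay acceptable: {z > 0.5}")
```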

Integrated Protocol: Building a Robust CAPE Dataset

This protocol outlines the steps to generate a high-quality, minimized-bias dataset for training a stability prediction model for a novel enzyme family.

Objective: Create a curated dataset of 5,000 enzyme variants with reliable ΔΔG (stability) labels.

Materials:

- Target enzyme gene and expression system (E. coli/P. pastoris).

- Site-directed mutagenesis kit or gene synthesis pipeline.

- High-throughput thermal shift assay (e.g., nanoDSF) or functional assay plate reader.

- NGS platform for DMS library sequencing.

- Data curation pipeline (Python/R scripts for statistical filtering).

Procedure:

Phase 1: Strategic Library Design (Addressing Bias & Scarcity)

- Perform a bias audit of existing public stability data (e.g., ProTherm). Identify overrepresented folds.

- Use Rosetta or FoldX to perform in silico scans on your target to identify positions with predicted high functional vs. stability trade-off variability.

- Design a combinatorial library focusing on these positions, but include 20% random positions from underrepresented regions to increase diversity.

Phase 2: High-Quality Data Generation (Addressing Quality)

- Assay Development: Establish a robust thermal shift assay. Validate with known controls until Z'-factor > 0.6 is consistently achieved.

- Multiplexed Experimentation: Express and purify variant libraries in 96-well format.

- Replication: Perform all measurements in four biological replicates (independent transformations/expressions) across two separate assay plates.

- NGS Validation: Sequence pre- and post-selection libraries with minimum 200x coverage to confirm variant identity and frequency.

Phase 3: Rigorous Curation Pipeline

Diagram Title: Data Curation Pipeline for CAPE

Phase 4: Dataset Documentation

- Document all experimental parameters (MIAPE).

- Publish the raw and curated datasets in a public repository (e.g., Zenodo, Figshare) with a unique DOI.

Proactively addressing data scarcity, bias, and quality is not a preliminary step but a continuous, integral component of CAPE research. By implementing the structured protocols and validation metrics outlined here, researchers can build foundational datasets that yield more predictive, generalizable, and ultimately successful protein engineering models, accelerating the design of novel therapeutics and biocatalysts.

1. Introduction

In the context of Computationally Assisted Protein Engineering (CAPE) for drug development, a critical juncture is reached when in silico model predictions diverge from in vitro or in vivo experimental validation. This document outlines structured Application Notes and Protocols for diagnosing, analyzing, and learning from such discrepancies to refine data-driven approaches.

2. Common Failure Modes in CAPE: A Taxonomy & Data Summary

The following table categorizes primary failure modes, their potential causes, and observed quantitative impacts from recent studies.

Table 1: Taxonomy of Model Failure Modes in Protein Engineering

| Failure Mode | Primary Cause | Typical Manifestation | Reported Impact Range (on key metric) |

|---|---|---|---|

| Training Data Bias | Non-representative, low-diversity training datasets. | High in silico affinity for novel scaffold fails to translate. | ≥2 log error in KD prediction for out-of-distribution variants. |

| Inadequate Force Fields | Imprecise energy calculations for solvation, van der Waals, or electrostatics. | Predicted stabilizing mutation leads to aggregation or instability. | RMSE of 2.5–4.0 kcal/mol in ΔΔG calculation vs. experiment. |

| Ignoring Conformational Dynamics | Static structure modeling misses allosteric or entropic effects. | Predicted high-affinity binder shows no functional activity in cell assay. | Loss of >90% functional efficacy despite sub-nM predicted KD. |

| Solvent & Context Neglect | Model omits pH, ionic strength, co-factors, or cellular crowding. | Optimized enzyme performs poorly under physiological buffer conditions. | Catalytic efficiency (kcat/KM) reduced by 60-80% from buffer to cell lysate. |

| Emergent Properties | Non-additive, epistatic interactions between mutations. | Combinatorial variant with individually favorable mutations loses expression. | Additive model explains <50% of variance in multi-mutant fitness. |

3. Protocol: Systematic Discrepancy Analysis Workflow

Protocol Title: Integrated In Silico / In Vitro Discrepancy Investigation for Engineered Proteins.

Objective: To systematically identify the root cause(s) of divergence between predicted and experimentally measured protein properties.

3.1. Materials & Reagents

Table 2: Research Reagent Solutions Toolkit

| Reagent / Material | Function in Discrepancy Analysis |

|---|---|

| HEK293T or CHO-K1 Cell Lines | Standardized mammalian expression systems for consistent protein production. |

| Surface Plasmon Resonance (SPR) Chip (e.g., Series S CM5) | For label-free, kinetic binding affinity (KD, ka, kd) validation. |

| Differential Scanning Fluorimetry (DSF) Dye (e.g., SYPRO Orange) | High-throughput assessment of protein thermal stability (Tm). |

| Size-Exclusion Chromatography (SEC) Column (e.g., Superdex 75 Increase) | Detection of aggregation states and monomeric purity. |

| Cellular Activity Reporter Assay Kit (e.g., Luciferase-based) | Functional validation of therapeutic protein activity in a cellular context. |

| Next-Generation Sequencing (NGS) Library Prep Kit | For deep mutational scanning, which provides ground-truth data for model training. |

3.2. Procedure

- Quantitative Discrepancy Measurement: For the variant(s) in question, measure experimental values (e.g., KD, Tm, expression yield, activity) using standardized assays (see Protocols 4.1, 4.2). Calculate the absolute error versus model prediction.

- Control Validation: Re-express and re-test the most accurately predicted variant from the same model run to confirm experimental pipeline fidelity.

- In Silico Audit: Review model inputs: training data scope, feature representation, and assumed boundary conditions (pH, temperature). Check for data leakage.

- Structural & Dynamical Interrogation: Perform molecular dynamics (MD) simulation (≥100 ns) on the variant to assess conformational stability, solvation, and potential cryptic epitopes not visible in static models.

- Contextual Factor Testing: Experimentally test the variant under conditions progressively closer to the physiological target (e.g., from pure buffer -> cell lysate -> live cell assay).

- Epistasis Check: If a combinatorial variant, express and test constituent single mutants to check for additivity.

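- Hypothesis-Driven Re-Design: Based on findings from the four preceding steps (in silico audit, structural and dynamical interrogation, contextual factor testing, and epistasis check), propose a modified variant (e.g., adding a stabilizing mutation, adjusting a surface charge) and repeat prediction and testing.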
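The epistasis check in this procedure amounts to comparing the measured effect of a combinatorial variant with the sum of its single-mutant effects. The sketch below assumes effects are expressed on an additive scale relative to wild type (e.g., ΔTm or ΔΔG); the mutation names, values, and noise threshold are hypothetical.

```python
def epistasis(combined_effect, single_effects):
    """Deviation of the combined variant from the additive expectation."""
    return combined_effect - sum(single_effects)

def is_non_additive(combined_effect, single_effects, noise_threshold):
    """Flag epistasis only when it exceeds the assumed assay noise."""
    return abs(epistasis(combined_effect, single_effects)) > noise_threshold

# Hypothetical Tm shifts (deg C) relative to wild type.
singles_dtm = {"A45V": 2.0, "S102T": 1.5}
combined_dtm = -1.0

eps = epistasis(combined_dtm, singles_dtm.values())
flag = is_non_additive(combined_dtm, singles_dtm.values(), noise_threshold=1.0)
```

Here the additive expectation is +3.5 °C but the double mutant is destabilized, so the deviation is large and negative. A sensible noise threshold would come from the replicate variability of the assay rather than a fixed constant.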

4. Detailed Experimental Protocols

Protocol 4.1: Surface Plasmon Resonance (SPR) for Binding Affinity Validation

- Method: Immobilize the target ligand on a CM5 chip via amine coupling. Use a single-cycle kinetics method in which five increasing concentrations of the purified, engineered protein analyte are injected sequentially without intermediate regeneration. Regenerate the surface only between complete cycles (i.e., between analytes).

- Data Analysis: Fit the sensorgrams globally to a 1:1 binding model using the Biacore Evaluation Software. Report KD, ka (kon), and kd (koff). Compare to the predicted binding free energy ΔG (where KD = exp(ΔG/RT)).
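To compare a measured KD with a predicted ΔG, both can be put on the same scale using the relation quoted above, KD = exp(ΔG/RT). The following sketch assumes a 1 M standard state, ΔG in kcal/mol, and a default temperature of 298.15 K; the function names are illustrative.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def kd_from_rates(ka_per_m_s, kd_per_s):
    """Equilibrium dissociation constant (M) from kinetic rate constants."""
    return kd_per_s / ka_per_m_s

def kd_to_delta_g(kd_molar, temp_k=298.15):
    """Binding free energy (kcal/mol) from KD, via dG = RT * ln(KD)."""
    return R_KCAL * temp_k * math.log(kd_molar)

def delta_g_to_kd(delta_g_kcal, temp_k=298.15):
    """KD (M) from binding free energy, via KD = exp(dG / RT)."""
    return math.exp(delta_g_kcal / (R_KCAL * temp_k))

def log10_kd_error(kd_predicted, kd_measured):
    """Prediction error in log10 units (2.0 means a 100-fold discrepancy)."""
    return abs(math.log10(kd_predicted / kd_measured))

measured_kd = kd_from_rates(ka_per_m_s=1e5, kd_per_s=1e-4)  # 1 nM
measured_dg = kd_to_delta_g(measured_kd)
```

Expressing the discrepancy in log10 units of KD makes it directly comparable with the "log error" figures in the failure-mode table.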

Protocol 4.2: Differential Scanning Fluorimetry for Thermal Stability

- Method: Mix 5 µM purified protein with 5X SYPRO Orange dye in assay buffer. Perform a temperature ramp from 25°C to 95°C at 1°C/min in a real-time PCR machine monitoring fluorescence.

- Data Analysis: Plot the first derivative of fluorescence vs. temperature. Identify the inflection point of the melt curve (Tm), which appears as the peak of the derivative. A significant deviation from prediction (a decrease of > 5°C) suggests destabilization not captured by the force field.
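Tm extraction from a melt curve can be sketched as locating the maximum of dF/dT. The example below uses central differences on an idealized, noise-free sigmoid with a known midpoint; real DSF traces usually need smoothing and truncation of the post-peak decay first, which this sketch omits.

```python
import math

def melting_temperature(temps_c, fluorescence):
    """Return the temperature at the maximum of dF/dT (central differences)."""
    if len(temps_c) != len(fluorescence) or len(temps_c) < 3:
        raise ValueError("need matching series with at least three points")
    best_temp, best_slope = None, float("-inf")
    for i in range(1, len(temps_c) - 1):
        slope = ((fluorescence[i + 1] - fluorescence[i - 1])
                 / (temps_c[i + 1] - temps_c[i - 1]))
        if slope > best_slope:
            best_temp, best_slope = temps_c[i], slope
    return best_temp

# Synthetic melt curve: 25-95 deg C in 1-degree steps, midpoint set to 62 deg C.
temps = list(range(25, 96))
signal = [1.0 / (1.0 + math.exp(-(t - 62) / 2.0)) for t in temps]

tm_observed = melting_temperature(temps, signal)
```

The resolution of this estimate is limited by the temperature step; fitting a Boltzmann sigmoid or interpolating around the peak gives sub-degree values when needed.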

5. Visualization of Analysis Pathways & Workflows

Diagram 1: Model Failure Diagnostic Decision Tree

Diagram 2: Integrated CAPE Model Refinement Cycle

Application Notes

Within the broader thesis on CAPE (Computationally Assisted Protein Engineering) data-driven approaches, optimizing the Design-Build-Test-Learn (DBTL) cycle is paramount for accelerating the development of novel biologics and therapeutic enzymes. The core strategy lies in minimizing iteration time and maximizing information gain per cycle through integrated computational and experimental pipelines.

Key Strategic Pillars:

- High-Throughput & Automation: Leveraging liquid handlers, microfluidics, and colony pickers to scale the "Build" phase.

- Multiplexed Assays: Implementing parallelized screening (e.g., FACS, NGS-coupled screens) to dramatically expand "Test" throughput.

- Machine Learning Integration: Using experimental data from each cycle to train predictive models for improved variant selection in the next "Design" phase.

- Centralized Data Management: Utilizing Lab Information Management Systems (LIMS) and structured data formats to ensure data from all phases is accessible for "Learn".

Recent data (2023-2024) indicates the impact of these strategies:

Table 1: Quantitative Impact of DBTL Optimization Strategies

| DBTL Step | Traditional Baseline (cycle time or throughput) | Optimized (cycle time or throughput) | Gain | Primary Enabling Technology |

|---|---|---|---|---|

| Library Construction | 2-3 weeks | 2-4 days | ~5x | CRISPR-based editing, Golden Gate assembly |

| Phenotypic Screening | 10^3-10^4 variants | 10^7-10^9 variants | 10^3-10^5x | FACS, NGS-based deep mutational scanning |

| Data to Design Turnaround | 4-6 weeks | 1-2 weeks | ~3x | Cloud-hosted ML pipelines built on frameworks such as TensorFlow or PyTorch |

Experimental Protocols

Protocol 1: NGS-Coupled Deep Mutational Scanning for Binding Affinity

Objective: Test thousands of protein variants for binding in a single experiment.

Materials: See "Research Reagent Solutions" below.

Procedure:

- Design & Build: Create a saturating mutagenesis library targeting the protein binding interface via oligo pool synthesis and Golden Gate assembly into a yeast display vector.

- Test - Selection: Perform two rounds of magnetic-activated cell sorting (MACS) against biotinylated target antigen at a concentration near the desired KD. Retain both bound and unbound fractions.

- Test - Sequencing: Isolate plasmid DNA from pre-selection library and both post-selection fractions. Prepare amplicons for Illumina sequencing via a two-step PCR protocol (add barcodes and adapters).

- Learn - Analysis: Calculate enrichment ratios (bound/unbound) for each variant from NGS counts. Fit data to a binding model to infer relative affinities. Use this dataset to train a Gaussian process regression model for the next design cycle.
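The enrichment calculation in the analysis step can be sketched as a log2 ratio of variant frequencies in the bound and unbound fractions, normalized to wild type. The pseudocount, the variant labels, and the read counts below are assumptions chosen for illustration; the subsequent binding-model fit and Gaussian process regression are not shown.

```python
import math

def log2_enrichment(bound_counts, unbound_counts, pseudocount=0.5):
    """log2(bound frequency / unbound frequency) for every observed variant."""
    total_bound = sum(bound_counts.values())
    total_unbound = sum(unbound_counts.values())
    scores = {}
    for variant in set(bound_counts) | set(unbound_counts):
        # The pseudocount keeps variants with zero reads in one fraction finite.
        f_bound = (bound_counts.get(variant, 0) + pseudocount) / total_bound
        f_unbound = (unbound_counts.get(variant, 0) + pseudocount) / total_unbound
        scores[variant] = math.log2(f_bound / f_unbound)
    return scores

def relative_to_wild_type(scores, wild_type="WT"):
    """Shift scores so that wild type is zero."""
    return {v: s - scores[wild_type] for v, s in scores.items()}

# Hypothetical NGS read counts per variant.
bound = {"WT": 100, "A10G": 400, "L22P": 10}
unbound = {"WT": 100, "A10G": 50, "L22P": 400}

relative = relative_to_wild_type(log2_enrichment(bound, unbound))
```

Positive values indicate variants enriched in the bound fraction relative to wild type. Low-count variants produce noisy ratios, so a minimum read-count filter in the pre-selection library is a common safeguard before model training.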

Protocol 2: Microfluidic-based Ultra-High-Throughput Enzyme Kinetics

Objective: Measure kinetic parameters (kcat/Km) for >10^4 enzyme variants.

Materials: Microfluidic droplet generator, fluorescence-activated droplet sorter (FADS), fluorogenic substrate.

Procedure:

- Design & Build: Generate variant library via error-prone PCR and express in E. coli. Induce expression.

- Test - Compartmentalization: Co-encapsulate single cells, lysis reagents, and fluorogenic substrate in picoliter droplets using a microfluidic chip.

- Test - Incubation & Sorting: Incubate droplets on-chip to allow reaction. Measure fluorescence accumulation rate (proxy for activity) in each droplet via laser-induced fluorescence. Sort droplets based on a fluorescence threshold.

- Test - Recovery: Break sorted droplets, recover bacterial DNA, and amplify variant sequences via PCR.

- Learn - Analysis: Sequence PCR product via NGS. The frequency of each variant in the sorted pool, compared to the initial library, provides a quantitative fitness score. Use scores to refine phylogenetic tree-based models for epistatic interactions.

Diagrams

Diagram Title: The DBTL Cycle with Optimization Feedback Loop

Diagram Title: NGS-Coupled Deep Mutational Scanning Workflow

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for DBTL Optimization

| Item | Function in DBTL Cycle | Example Product/Technology |

|---|---|---|

| Oligo Pool Synthesis | Design/Build: Enables rapid, cost-effective construction of large, defined variant libraries. | Twist Bioscience Gene Fragments, IDT oPools. |

| Golden Gate Assembly Mix | Build: Highly efficient, modular DNA assembly method for library cloning. | NEB Golden Gate Assembly Kit (BsaI-HFv2). |

| Yeast Display Vector System | Test: Robust eukaryotic display platform for screening binding proteins and stability. | pYD series vectors for S. cerevisiae display. |

| Magnetic Streptavidin Beads | Test: Enables facile selection of binding variants in MACS protocols. | Dynabeads MyOne Streptavidin C1. |

| Microfluidic Droplet Generator Chip | Test: Creates monodisperse water-in-oil emulsions for ultra-high-throughput single-cell assays. | NanoBioSys AquaDrop, Dolomite Bio chips. |

| Cloud ML Platform | Learn: Provides scalable compute for training complex models (e.g., neural networks) on large datasets. | Google Cloud Vertex AI, AWS SageMaker. |

| LIMS Software | Learn: Centralizes and structures experimental metadata, ensuring reproducibility and data linkage. | Benchling, Labguru. |

Hyperparameter Tuning and Model Ensembling for Improved Prediction Accuracy

Within the broader thesis on data-driven approaches for CAP (Cysteine-rich secretory proteins, Antigen 5, and Pathogenesis-related 1) protein engineering, optimizing predictive computational models is paramount. Accurate prediction of protein properties—such as solubility, stability, binding affinity, and immunogenicity—directly accelerates the rational design of novel biologics and therapeutics. This document details Application Notes and Protocols for hyperparameter tuning and model ensembling, methodologies critical for maximizing prediction accuracy from complex, high-dimensional CAP protein datasets.

Foundational Concepts & Current State

Hyperparameter tuning is the systematic search for the optimal configuration of a machine learning algorithm that governs the learning process itself. Model ensembling combines predictions from multiple base models to produce a single, more robust and accurate meta-prediction. In CAP protein engineering, these techniques are applied to models including Gradient Boosting Machines (GBM), Deep Neural Networks (DNNs), and Support Vector Machines (SVMs) trained on sequence, structure, and functional data.

Recent literature indicates a shift towards automated and hybrid tuning approaches, with Bayesian Optimization and Hyperband becoming standard for deep learning applications. In ensembling, stacked generalization (stacking) and super learners are increasingly favored over simple averaging for their ability to weight models contextually.
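As a minimal illustration of the two ideas, the sketch below implements random search over a small hyperparameter space and a simple averaging ensemble, the baselines against which Bayesian optimization and stacking are usually compared. The search ranges, the scikit-learn-style parameter names, and `toy_validation_score` are assumptions; in practice the score function would be the cross-validated performance of a real model.

```python
import random

# Hypothetical search space using scikit-learn-style parameter names.
SEARCH_SPACE = {
    "n_estimators": lambda rng: rng.randint(100, 500),
    "max_depth": lambda rng: rng.randint(3, 15),
    "learning_rate": lambda rng: 10 ** rng.uniform(-2.0, -0.5),  # log-uniform
}

def toy_validation_score(params):
    """Placeholder objective; replace with cross-validated model accuracy."""
    return -(abs(params["max_depth"] - 9) / 10
             + abs(params["learning_rate"] - 0.07))

def random_search(score_fn, n_trials=50, seed=0):
    """Sample configurations at random and keep the best-scoring one."""
    rng = random.Random(seed)
    best_params, best_score = None, float("-inf")
    for _ in range(n_trials):
        params = {name: draw(rng) for name, draw in SEARCH_SPACE.items()}
        score = score_fn(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score

def average_ensemble(predictions_per_model):
    """Unweighted mean of per-sample predictions across models."""
    n_models = len(predictions_per_model)
    return [sum(column) / n_models for column in zip(*predictions_per_model)]

best_params, best_score = random_search(toy_validation_score)
blended = average_ensemble([[1.0, 2.0], [3.0, 4.0]])
```

Stacking replaces the unweighted mean with a meta-model trained on held-out predictions of the base models, which is what allows it to weight models contextually.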

Table 1: Performance Comparison of Hyperparameter Tuning Methods on CAP Stability Prediction

| Tuning Method | Best Model Accuracy (%) | Avg. Time to Convergence (hrs) | Key Optimal Hyperparameters Identified |

|---|---|---|---|

| Random Search | 87.2 | 4.5 | n_estimators=350, max_depth=12, learning_rate=0.08 |

| Grid Search | 86.9 | 18.1 | n_estimators=300, max_depth=10, learning_rate=0.1 |

| Bayesian Optimization | 88.7 | 3.8 | n_estimators=412, max_depth=9, learning_rate=0.072 |

| Genetic Algorithm | 88.1 | 6.2 | n_estimators=387, max_depth=11, learning_rate=0.065 |