Computational Protein Design: Principles, Methods, and Clinical Applications

Abstract

Computational protein design (CPD) has evolved from a theoretical concept into a powerful tool for creating novel proteins with tailored functions. This article provides a comprehensive overview for researchers and drug development professionals, covering the foundational principles of CPD, including the 'inverse folding problem' and key energy functions. It explores cutting-edge methodologies, from Rosetta and OSPREY to machine learning tools such as ProteinMPNN and RFdiffusion, and their application in designing therapeutic antibodies, enzymes, and stable vaccine immunogens. The review also addresses critical troubleshooting aspects, such as overcoming low designability and marginal stability, and examines the rigorous validation frameworks that confirm the success of computational designs both in silico and experimentally, ultimately highlighting the transformative impact of CPD on biomedicine.

From Inverse Folding to De Novo Design: Core Principles of Computational Protein Design

Computational protein design represents a fundamental paradigm shift in biomedical research, enabling the creation of novel proteins with tailored functions for therapeutic, industrial, and research applications. At the core of this paradigm lies the inverse folding problem, a critical computational challenge that involves identifying amino acid sequences that will fold into a predetermined three-dimensional protein structure [1] [2]. This problem stands in direct contrast to the traditional protein folding problem, which predicts the native structure of a given amino acid sequence. The significance of inverse folding extends across multiple domains, from drug development to enzyme engineering, as it provides researchers with a systematic methodology for creating proteins that nature has not explored [3].

The computational complexity of inverse folding differs substantially from traditional folding problems. Unlike folding, which scales exponentially with chain length, advanced design strategies for inverse folding can scale linearly with chain length, making the design of even large proteins computationally tractable [1]. This scalability is crucial for exploring the vast landscape of possible protein sequences—a space so immense that the fraction sampled by nature is infinitesimally small, estimated to be less than 1×10⁻³⁰⁰ of all possible sequences [3]. Inverse folding models serve as sophisticated guides in this expansive sequence space, enabling researchers to navigate toward functional proteins with desired structural characteristics.
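The size of that space follows from simple arithmetic: a chain of L residues drawn from the 20 canonical amino acids has 20^L possible sequences. The minimal Python sketch below (an illustration, not taken from the cited work) computes this in log space; it counts sequences only and says nothing about how many of them fold.

```python
import math

CANONICAL_AMINO_ACIDS = 20


def log10_sequence_space(length: int, alphabet_size: int = CANONICAL_AMINO_ACIDS) -> float:
    """Return log10 of the number of sequences of a given length.

    alphabet_size ** length quickly exceeds the range of a float, so the
    count is expressed as an exponent: log10(a ** L) = L * log10(a).
    """
    if length < 0:
        raise ValueError("length must be non-negative")
    return length * math.log10(alphabet_size)


for n in (50, 100, 300):
    print(f"L = {n:>3}: about 10^{log10_sequence_space(n):.1f} sequences")
```

For a 100-residue protein the exponent is about 130, i.e. roughly 10^130 possible sequences.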

Recent advances in machine learning and artificial intelligence have dramatically accelerated progress in solving the inverse folding problem. Modern computational approaches now allow researchers to rapidly generate hundreds of candidate sequences that are predicted to fold into a target structure, significantly compressing the traditional "design, make, test, analyze" cycle that has historically constrained protein engineering efforts [2] [3]. These developments are transforming the field of computational protein design, opening new possibilities for addressing challenges in medicine, technology, and sustainability that were previously intractable through conventional approaches.

Core Principles and Computational Challenges

Fundamental Principles of Inverse Folding

The theoretical foundation of inverse folding rests on several key principles derived from protein biophysics and structural biology. Central to these principles is the understanding that structural stability requires not only the burial of hydrophobic residues in the protein core but also strategic placement of additional hydrophobic residues on the surface to optimize folding energetics [1]. This nuanced understanding represents a significant advancement over earlier simplistic models that strictly adhered to a "hydrophobic inside, polar outside" strategy. Research on self-avoiding hydrophobic/polar chains has demonstrated that to avoid unwanted conformational states, designed sequences must possess neither too many nor too few hydrophobic residues, highlighting the delicate balance required for successful protein design [1].
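The hydrophobic/polar (HP) lattice model referred to above can be made concrete with a short scoring function. This Python sketch assumes the common two-dimensional square-lattice variant, in which each pair of H residues that touch on the lattice without being chain neighbours contributes -1; the cited studies may use a different lattice or energy convention.

```python
from typing import Sequence, Tuple

Coord = Tuple[int, int]


def hp_energy(sequence: str, coords: Sequence[Coord]) -> int:
    """Energy of an HP chain on a 2D square lattice.

    Each H-H pair that occupies adjacent lattice sites without being
    consecutive in the chain contributes -1; every other contact contributes 0.
    """
    if len(sequence) != len(coords):
        raise ValueError("sequence and coordinates must have equal length")
    if len(set(coords)) != len(coords):
        raise ValueError("conformation is not self-avoiding")
    for (x1, y1), (x2, y2) in zip(coords, coords[1:]):
        if abs(x1 - x2) + abs(y1 - y2) != 1:
            raise ValueError("consecutive residues must occupy adjacent sites")
    index_at = {site: i for i, site in enumerate(coords)}
    energy = 0
    for i, (x, y) in enumerate(coords):
        if sequence[i] != "H":
            continue
        # Look only in the +x and +y directions so each contact is counted once.
        for site in ((x + 1, y), (x, y + 1)):
            j = index_at.get(site)
            if j is not None and abs(i - j) > 1 and sequence[j] == "H":
                energy -= 1
    return energy


# A 4-residue chain folded into a square: residues 0 and 3 touch.
square = [(0, 0), (1, 0), (1, 1), (0, 1)]
print(hp_energy("HPPH", square), hp_energy("HPPP", square))
```

Scoring many candidate conformations of one sequence and asking whether the target conformation is uniquely lowest in energy is a toy version of the design test described in the text.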

Another critical principle involves the relationship between sequence diversity and structural conservation. Inverse folding models operate on the fundamental assumption that proteins with divergent sequences can retain similar function as long as their structures remain reasonably conserved [2]. This principle enables the exploration of sequence space far beyond natural homologs while maintaining structural and functional integrity. Sequences produced by inverse folding typically share between 40% and 75% sequence identity with the native protein, allowing researchers to sample a much broader portion of the sequence landscape than traditional methods that rely on a limited number of point mutations [2]. This expansive sampling capability is particularly valuable for enzyme design, where exploring diverse sequences can lead to discovering variants with enhanced properties such as improved stability or novel catalytic activity.
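Sequence identity here is the fraction of aligned positions at which designed and native residues match. A minimal Python sketch, assuming the sequences are already aligned (true when a model redesigns a fixed backbone, so native and design have equal length) and ignoring gap positions; other identity definitions normalise differently, and the example sequences are hypothetical.

```python
def sequence_identity(seq_a: str, seq_b: str) -> float:
    """Fraction of aligned, non-gap positions with identical residues."""
    if len(seq_a) != len(seq_b):
        raise ValueError("sequences must be aligned to equal length")
    pairs = [(a, b) for a, b in zip(seq_a, seq_b) if a != "-" and b != "-"]
    if not pairs:
        raise ValueError("no aligned positions")
    matches = sum(a == b for a, b in pairs)
    return matches / len(pairs)


def in_typical_range(identity: float, low: float = 0.4, high: float = 0.75) -> bool:
    """True if identity falls in the range typically reported for inverse folding."""
    return low < identity < high


# Hypothetical native and designed sequences differing at 3 of 10 positions.
identity = sequence_identity("MKTAYIAKQR", "MKSAYLAEQR")
print(identity, in_typical_range(identity))
```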

Key Computational Challenges

Despite significant advances, inverse folding methodologies face several substantial computational challenges. A primary challenge involves the multiplicity of solutions—the recognition that many different amino acid sequences can fold into structurally similar proteins, a phenomenon known as structural degeneracy [1]. This degeneracy complicates the identification of optimal sequences, as the computational model must select from numerous possible solutions while ensuring the resulting protein not only folds into the desired conformation but also avoids alternative low-energy states.
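Avoiding alternative low-energy states can be framed as a Boltzmann population question: a sequence is a good design only if the target conformation dominates the ensemble of competing states. A minimal Python sketch, assuming hypothetical free energies for a handful of enumerated states (real pipelines cannot enumerate every competitor):

```python
import math
from typing import Dict

K_B_KCAL = 0.0019872  # Boltzmann constant in kcal/(mol·K)


def target_population(energies: Dict[str, float], target: str,
                      temperature: float = 298.15) -> float:
    """Boltzmann probability of the target state among the listed states.

    energies maps state name -> free energy (kcal/mol) of one sequence in
    that state. Energies are shifted by their minimum before exponentiating
    to avoid overflow; the shift cancels in the ratio.
    """
    kt = K_B_KCAL * temperature
    e_min = min(energies.values())
    weights = {s: math.exp(-(e - e_min) / kt) for s, e in energies.items()}
    return weights[target] / sum(weights.values())


# Hypothetical energies: sequence A has the lower target-state energy, but a
# decoy state is almost as low; sequence B has a large gap to its decoy.
seq_a = {"target": -10.0, "decoy": -9.9}
seq_b = {"target": -9.0, "decoy": -6.0}
p_a = target_population(seq_a, "target")
p_b = target_population(seq_b, "target")
print(f"A: {p_a:.2f}, B: {p_b:.3f}")
```

Sequence B is the better design despite its higher target-state energy, which is why the energy gap to competing states matters as much as the target energy itself.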

Functional preservation presents another significant challenge, particularly when redesigning natural enzymes and binding proteins. Traditional inverse folding models focused primarily on structural stability often produce functionally impaired proteins because they fail to preserve residues critical for catalytic activity or molecular recognition [4]. This limitation stems from the models' optimization for folding energetics without incorporating constraints for functional sites. Related to this is the challenge of conformational dynamics, as optimizing sequences for a single static structure may impair the protein's ability to undergo functionally essential conformational changes [4]. This is particularly problematic for enzymes and binding proteins that rely on structural flexibility for their biological activity.

More recently, the integration of evolutionary information has emerged as a crucial strategy for addressing these challenges. By incorporating multiple sequence alignments and other evolutionary constraints, next-generation inverse folding models can better distinguish between residues critical for function versus those primarily involved in structural stability [4]. This integration helps preserve functional sites while allowing extensive sequence variation in other regions, enabling the design of proteins that maintain biological activity despite significant sequence divergence from natural counterparts.

Computational Methodologies and Models

Traditional Physics-Based Approaches

Early computational approaches to inverse folding relied heavily on physics-based models and energy minimization strategies. These methods employed atomistic force fields and statistical potentials to evaluate the compatibility between amino acid sequences and target structures [1]. The primary objective was to identify sequences whose free energy is minimized in the target conformation, so that this conformation, rather than a competing alternative, represents the lowest accessible free-energy state. These physics-based approaches incorporated explicit modeling of molecular interactions, including van der Waals forces, electrostatics, solvation effects, and hydrogen bonding [1].
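Two of the terms named above, van der Waals and electrostatic interactions, are commonly modelled per atom pair with a 12-6 Lennard-Jones potential and Coulomb's law. A minimal Python sketch with illustrative parameters (not taken from any specific force field); production force fields add bonded terms, solvation, parameter tables, and cutoffs.

```python
COULOMB_KCAL = 332.06  # approx. kcal·Å/(mol·e²); exact value varies slightly by program


def lennard_jones(r: float, epsilon: float, sigma: float) -> float:
    """12-6 Lennard-Jones energy (kcal/mol) at separation r (Å).

    epsilon is the well depth; sigma is the distance at which the energy is zero.
    """
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)


def coulomb(r: float, q_i: float, q_j: float, dielectric: float = 1.0) -> float:
    """Coulomb energy (kcal/mol) between partial charges q_i, q_j (units of e)."""
    return COULOMB_KCAL * q_i * q_j / (dielectric * r)


def pair_energy(r: float, q_i: float, q_j: float, epsilon: float, sigma: float,
                dielectric: float = 1.0) -> float:
    """Non-bonded energy of one atom pair: van der Waals plus electrostatics."""
    return lennard_jones(r, epsilon, sigma) + coulomb(r, q_i, q_j, dielectric)


# Illustrative pair: the LJ minimum lies at r = 2**(1/6) * sigma, where E_LJ = -epsilon.
r_min = 2 ** (1 / 6) * 3.4
print(round(lennard_jones(r_min, epsilon=0.2, sigma=3.4), 6))
```

Summing pair_energy over all non-bonded atom pairs of a threaded sequence gives a crude version of the interaction energy that physics-based design methods minimise.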

A key insight from these early studies was the importance of hydrogen bonding networks, particularly in β-sheet structures, for achieving mechanical stability and resistance to environmental extremes [5]. Research demonstrated that systematically maximizing hydrogen-bond networks within force-bearing β strands could produce proteins with exceptional stability, exhibiting unfolding forces exceeding 1,000 pN—approximately 400% stronger than natural titin immunoglobulin domains [5]. These designed proteins retained structural integrity even after exposure to extreme conditions such as 150°C, highlighting the potential of physics-based design principles for creating robust protein systems.

While valuable, these traditional approaches faced significant limitations in computational efficiency and accuracy. The complexity of accurately modeling all relevant molecular interactions often restricted applications to small proteins or required substantial computational resources. Additionally, these methods struggled with the vastness of sequence space and the subtle nature of protein folding energetics, particularly long-range interactions and cooperative effects that are challenging to capture with simplified energy functions.

Machine Learning and Deep Learning Models

The field of inverse folding has been transformed by the introduction of machine learning models, particularly deep learning approaches trained on large datasets of known protein structures and sequences. These models have dramatically improved both the efficiency and accuracy of protein design, enabling the rapid generation of diverse sequences for complex target structures.

Table 1: Key Machine Learning Models for Inverse Folding

| Model Name | Core Architecture | Key Features | Typical Applications |
|---|---|---|---|
| ProteinMPNN [2] | Autoregressive neural network | Fast sequence generation, confidence scores, soluble protein training | De novo protein design, enzyme engineering, therapeutic proteins |
| ABACUS-T [4] | Sequence-space denoising diffusion | Atomic sidechains, ligand interactions, multiple conformational states | Functional enzyme redesign, allosteric proteins, ligand-binding proteins |
| ESM-IF1 [2] | Transformer-based | Evolutionary scale modeling, masked structure prediction | Protein variant generation, stability optimization |
| RFdiffusion [2] | Diffusion model | Backbone structure generation, complex design | Protein binders, symmetric assemblies, novel folds |

These machine learning approaches operate on different principles than traditional physics-based methods. For example, ProteinMPNN is a message-passing neural network that decodes the sequence autoregressively, predicting each amino acid while conditioning on both the target backbone and the residues generated so far [2]. This approach allows efficient sampling of sequence space and can generate hundreds of candidate sequences in minutes. During training, the model learns to recover native sequences from protein backbones, with residue identities hidden and decoded in a random order [2]. During inference, the model receives a protein backbone (side-chain coordinates are not required) and generates plausible sequences predicted to fold into that structure.
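The autoregressive decoding described above can be sketched in a few lines. This Python example is a toy under stated assumptions: toy_logits is a hypothetical stand-in for the trained network (it simply prefers hydrophobic residues at positions marked as buried), the decoding order is randomly shuffled, and logits are sampled through a temperature-scaled softmax. It is not ProteinMPNN's code or API.

```python
import math
import random
from typing import Callable, Dict, List, Optional

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
LogitFn = Callable[[int, List[Optional[str]]], Dict[str, float]]


def sample_autoregressive(length: int, logit_fn: LogitFn,
                          temperature: float = 0.1, seed: int = 0) -> str:
    """Decode one residue at a time in a random order.

    Each call to logit_fn sees the residues decoded so far (None = not yet
    decoded), so later choices are conditioned on earlier ones.
    """
    rng = random.Random(seed)
    order = list(range(length))
    rng.shuffle(order)
    decoded: List[Optional[str]] = [None] * length
    for pos in order:
        logits = logit_fn(pos, decoded)
        # Temperature-scaled softmax; lower temperature approaches argmax.
        scaled = [logits[aa] / temperature for aa in AMINO_ACIDS]
        top = max(scaled)
        weights = [math.exp(s - top) for s in scaled]
        decoded[pos] = rng.choices(AMINO_ACIDS, weights=weights, k=1)[0]
    return "".join(decoded)


BURIED = {1, 2, 5}  # hypothetical burial pattern of a 7-residue toy backbone


def toy_logits(pos: int, decoded: List[Optional[str]]) -> Dict[str, float]:
    """Hypothetical structure- and context-conditioned logits."""
    logits = {aa: 0.0 for aa in AMINO_ACIDS}
    for aa in ("AILMFVW" if pos in BURIED else "DEKNQRST"):
        logits[aa] += 2.0
    # Context: discourage repeating an already-decoded chain neighbour.
    for nb in (pos - 1, pos + 1):
        if 0 <= nb < len(decoded) and decoded[nb] is not None:
            logits[decoded[nb]] -= 1.0
    return logits


print(sample_autoregressive(7, toy_logits, temperature=0.1, seed=42))
```

Raising the temperature toward 1.0 yields more diverse sequences; ProteinMPNN exposes a comparable sampling temperature for trading diversity against confidence.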

More advanced models like ABACUS-T employ a denoising diffusion probabilistic model (DDPM) in sequence space, which uses successive reverse diffusion steps to generate amino acid sequences from a fully "noised" starting sequence [4]. A distinctive feature of ABACUS-T is that at each denoising step, both residue types and sidechain conformations are decoded, and each step is self-conditioned with the output amino acid sequence from the previous step [4]. This approach, combined with integration of evolutionary information from multiple sequence alignments and pre-trained protein language models, enables more accurate inverse folding that better preserves functional sites.

Advanced Frameworks: ABACUS-T and Multimodal Integration

The ABACUS-T framework represents a significant advancement in inverse folding methodology through its multimodal approach that unifies several critical features into a single computational framework [4]. Unlike previous models that focused primarily on structural compatibility, ABACUS-T incorporates multiple sources of information to enhance both structural accuracy and functional preservation in designed proteins.

Core Architectural Innovations

ABACUS-T employs a sequence-space denoising diffusion probabilistic model (DDPM) that generates amino acid sequences through successive refinement steps [4]. The model begins with a fully "noised" sequence where residue types at all positions are undetermined, then progressively specifies these residues through a series of reverse diffusion steps. Key innovations include the simultaneous decoding of both residue types and sidechain conformations at each step, and a self-conditioning mechanism that incorporates the output from previous denoising steps [4]. This self-conditioning, implemented using a pre-trained Evolutionary Scale Modelling (ESM) sequence language model, significantly improves inference accuracy compared to earlier approaches.
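The control flow of that procedure (start from a fully undetermined sequence, specify residues over several steps, and feed each step's full prediction back in as self-conditioning) can be sketched as follows. This is a simplified, mask-based discrete denoising loop written for illustration only: toy_denoiser is hypothetical, and the sketch omits ABACUS-T's sidechain decoding, ESM-based conditioning, noise schedule, and optional ligand, multi-state, and MSA inputs.

```python
import math
from typing import Callable, List, Optional, Tuple

MASK = "?"
Prediction = List[Tuple[str, float]]  # (residue, confidence) per position
Denoiser = Callable[[List[str], Optional[str]], Prediction]


def iterative_denoise(length: int, denoiser: Denoiser, steps: int = 4) -> str:
    """Start fully masked and specify residues over several steps.

    At every step the denoiser sees the partially specified sequence plus
    its own full prediction from the previous step (self-conditioning).
    The most confident masked positions are committed first; by the last
    step every position is specified.
    """
    current = [MASK] * length
    previous: Optional[str] = None
    for step in range(steps):
        predictions = denoiser(current, previous)
        previous = "".join(aa for aa, _ in predictions)
        masked = [i for i, aa in enumerate(current) if aa == MASK]
        n_commit = math.ceil(len(masked) / (steps - step))
        masked.sort(key=lambda i: predictions[i][1], reverse=True)
        for i in masked[:n_commit]:
            current[i] = predictions[i][0]
    return "".join(current)


def toy_denoiser(current: List[str], previous: Optional[str]) -> Prediction:
    """Hypothetical stand-in for a trained network."""
    buried = {1, 2, 5}
    out: Prediction = []
    for i, aa in enumerate(current):
        if aa != MASK:
            out.append((aa, 1.0))  # committed residues are kept
            continue
        guess, confidence = ("L", 0.9) if i in buried else ("E", 0.6)
        if previous is not None and previous[i] == guess:
            confidence += 0.05  # agreement with the previous step raises confidence
        out.append((guess, confidence))
    return out


print(iterative_denoise(7, toy_denoiser))
```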

The model can be further enhanced with three types of optional input beyond a single backbone structure: atomic structures of ligand molecules, multiple conformational states of the backbone, and evolutionary information from multiple sequence alignments (MSA) [4]. This multimodal integration addresses critical limitations of previous inverse folding methods, particularly their tendency to disrupt functional sites when optimizing for structural stability alone.

Experimental Validation and Performance

ABACUS-T has demonstrated remarkable experimental success across multiple protein engineering challenges. When applied to an allose binding protein, the model generated variants with 17-fold higher binding affinity that retained the protein's ligand-induced conformational change [4]. Redesigned versions of endo-1,4-β-xylanase and TEM β-lactamase maintained or surpassed wild-type activity while achieving substantial increases in thermostability (ΔTₘ ≥ 10°C) [4]. In the case of OXA β-lactamase, ABACUS-T enabled rational alteration of substrate specificity while simultaneously enhancing protein stability. Notably, these enhancements were achieved by testing only a few designed sequences, each containing dozens of simultaneously mutated residues relative to the wild-type enzymes [4].

Table 2: Performance Metrics of Advanced Inverse Folding Models

| Model | Structural Accuracy | Functional Preservation | Thermostability Enhancement | Design Speed |
|---|---|---|---|---|
| ProteinMPNN [2] | High (TM-score >0.7) | Moderate (requires fixed positions) | Significant (ΔTₘ ~5-15°C) | Very Fast (100s of sequences/min) |
| ABACUS-T [4] | Very High (TM-score >0.8) | High (maintains activity) | Very Significant (ΔTₘ ≥10°C) | Moderate (requires more computation) |
| Traditional Methods [1] | Moderate | Low to Moderate | Variable | Slow (extensive sampling required) |

The performance advantages of ABACUS-T over ablated versions highlight the importance of its integrated features. Comparative analyses show that the full model outperforms versions with smaller ESM models, removed self-conditioning, or excluded ligand modeling [4]. These results confirm that the multimodal approach provides tangible benefits for designing functional proteins, particularly for enzymes and other proteins where specific molecular interactions are critical for biological activity.

Experimental Protocols and Validation

Computational Workflow and Implementation

The standard computational workflow for inverse folding begins with structure preparation, which involves obtaining or generating a target protein backbone structure. This structure may come from experimental sources (X-ray crystallography, NMR, cryo-EM) or computational prediction tools like AlphaFold2 [2]. For functional proteins, critical regions such as active sites or binding interfaces may be partially fixed to preserve functionality, though advanced models like ABACUS-T can automatically identify and preserve these regions through integrated evolutionary information [4].

The next step involves sequence generation using inverse folding models. For ProteinMPNN, this typically includes fixing positions that should not be redesigned (such as catalytic or interface residues), which also steers the model away from implausible outputs, and excluding problematic amino acids (e.g., cysteines, to prevent unwanted disulfide bonds) [2]. The model can be run with different weight sets, such as the "soluble" model trained specifically on soluble proteins to increase the likelihood of generating well-behaved variants. ABACUS-T employs a more complex process that can incorporate multiple backbone conformations and ligand coordinates to preserve functional dynamics and binding sites [4].
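Both constraints amount to editing the per-position amino-acid distribution before a residue is sampled. A minimal Python sketch, assuming the model exposes such distributions as dictionaries; apply_design_constraints is a hypothetical helper, not part of ProteinMPNN, and giving fixed positions precedence over the omit list is a design choice of this sketch.

```python
from typing import Dict, List, Set


def apply_design_constraints(probs: List[Dict[str, float]], native: str,
                             fixed_positions: Set[int],
                             omit: Set[str]) -> List[Dict[str, float]]:
    """Constrain per-position amino-acid distributions before sampling.

    fixed_positions (0-based) are pinned to the native residue; amino acids in
    `omit` (e.g. {"C"}) get zero probability at all other positions, and the
    remaining probabilities are renormalised.
    """
    constrained: List[Dict[str, float]] = []
    for i, dist in enumerate(probs):
        if i in fixed_positions:
            constrained.append({native[i]: 1.0})
            continue
        kept = {aa: p for aa, p in dist.items() if aa not in omit}
        total = sum(kept.values())
        if total <= 0:
            raise ValueError(f"no allowed residues left at position {i}")
        constrained.append({aa: p / total for aa, p in kept.items()})
    return constrained


# Hypothetical two-position example: position 0 is a fixed catalytic cysteine.
probs = [{"C": 0.5, "A": 0.25, "S": 0.25}, {"C": 0.5, "A": 0.25, "S": 0.25}]
print(apply_design_constraints(probs, native="CA", fixed_positions={0}, omit={"C"}))
```

Position 0 keeps its native cysteine because it is fixed, while cysteine is excluded at every other position.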

Following sequence generation, candidates are filtered on confidence metrics (e.g., ProteinMPNN's score, where values closer to zero indicate higher model confidence) and on structural validation, using tools like AlphaFold2 to predict the structures of the designed sequences [2]. The similarity between predicted and target structures is quantified with metrics such as the TM-score (computed, for example, with TM-align), which ranges from 0 to 1, with higher scores indicating better structural matches. This computational validation helps prioritize candidates for experimental testing before moving to resource-intensive laboratory work.
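The two filters mentioned above can be written compactly. A minimal Python sketch, assuming per-residue C-alpha distances after superposition are already available (for example from a structural aligner) and that the confidence score is an average negative log-likelihood over designed residues, which is how ProteinMPNN's score is commonly described. The d0 length scale is the standard TM-score normalisation; the floor for very short chains is an assumption of this sketch.

```python
import math
from typing import Sequence


def tm_score(distances: Sequence[float], target_length: int) -> float:
    """TM-score from per-residue distances (Å) between aligned C-alpha atoms.

    Assumes the model and target are already superimposed and residue-aligned;
    TM-align additionally searches for the alignment and superposition that
    maximise this value. Unaligned target residues contribute zero.
    """
    if target_length > 21:
        d0 = 1.24 * (target_length - 15) ** (1.0 / 3.0) - 1.8
    else:
        d0 = 0.5  # floor for very short chains (assumption of this sketch)
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances) / target_length


def mean_negative_log_likelihood(residue_probs: Sequence[float]) -> float:
    """Average -log p over designed residues; 0 means every residue had p = 1."""
    return -sum(math.log(p) for p in residue_probs) / len(residue_probs)


print(tm_score([0.5] * 90, 100), mean_negative_log_likelihood([0.9, 0.7, 0.8]))
```

TM-scores above about 0.5 are generally taken to indicate the same fold, which makes 0.5 a common lower bound when triaging designs.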

Experimental Validation Techniques

Experimental validation of computationally designed proteins employs multiple complementary techniques to assess structural integrity, stability, and function. Structural validation typically involves biophysical methods such as circular dichroism (CD) spectroscopy to verify secondary structure content, nuclear magnetic resonance (NMR) spectroscopy to confirm tertiary structure, and X-ray crystallography for atomic-level structure determination [5] [4]. Experimentally determined structures of designs, such as the solution NMR structure of the designed protein A339 [5], can be deposited in the Protein Data Bank (PDB) for public access and verification.

Thermal stability assessments measure the melting temperature (Tₘ) of designed proteins using techniques like differential scanning calorimetry (DSC) or thermal shift assays [4]. Successful redesigns of endo-1,4-β-xylanase and TEM β-lactamase, for example, showed Tₘ increases of at least 10°C over the wild-type proteins (ΔTₘ ≥ 10°C), demonstrating the effectiveness of inverse folding in enhancing structural robustness [4]. For mechanostable proteins, single-molecule force spectroscopy techniques like atomic force microscopy (AFM) can quantify unfolding forces, with high-performance designs exhibiting resistance exceeding 1,000 pN [5].
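A common way to read Tₘ from a thermal shift melt curve is to take the temperature at which the signal rises most steeply (the peak of the first derivative). A minimal Python sketch, assuming a signal that increases on unfolding (as with dye-based thermal shift assays) and using synthetic logistic curves in place of real data; many analyses instead fit a two-state unfolding model. ΔTₘ is then the difference between design and wild type.

```python
import math
from typing import List, Sequence


def melting_temperature(temps: Sequence[float], signal: Sequence[float]) -> float:
    """Temperature of the steepest signal increase (central differences)."""
    if len(temps) != len(signal) or len(temps) < 3:
        raise ValueError("need at least three paired points")
    best_t, best_slope = temps[1], -math.inf
    for i in range(1, len(temps) - 1):
        slope = (signal[i + 1] - signal[i - 1]) / (temps[i + 1] - temps[i - 1])
        if slope > best_slope:
            best_t, best_slope = temps[i], slope
    return best_t


def synthetic_melt(temps: Sequence[float], tm: float, width: float = 2.0) -> List[float]:
    """Logistic unfolding curve centred on tm (synthetic data, not a measurement)."""
    return [1.0 / (1.0 + math.exp(-(t - tm) / width)) for t in temps]


temps = [25.0 + 0.5 * i for i in range(141)]  # 25 to 95 °C in 0.5 °C steps
wild_type = synthetic_melt(temps, tm=55.0)
design = synthetic_melt(temps, tm=67.0)
delta_tm = melting_temperature(temps, design) - melting_temperature(temps, wild_type)
print(f"ΔTm = {delta_tm:.1f} °C")
```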

Functional assays are crucial for validating that designed proteins maintain or enhance biological activity. For enzymes, these assays measure catalytic parameters (Kₘ, kcat) against relevant substrates [4]. For binding proteins, surface plasmon resonance (SPR) or isothermal titration calorimetry (ITC) determine binding affinity and specificity [4]. In successful applications, designed proteins have shown substantially improved function, such as 17-fold higher binding affinity in redesigned allose binding proteins, while maintaining essential functional characteristics like ligand-induced conformational changes [4].
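Kinetic parameters and affinity gains reduce to short calculations. A minimal Python sketch on synthetic data: Vmax and Kₘ are recovered with a Lineweaver-Burk (double-reciprocal) linear fit, kcat follows as Vmax divided by total enzyme concentration, and a fold improvement in Kd is converted to a binding free-energy change with ΔΔG = -RT ln(fold). Double-reciprocal fits distort experimental noise, so nonlinear fitting is preferred for real data.

```python
import math
from typing import Sequence, Tuple

R_KCAL = 0.0019872  # gas constant, kcal/(mol·K)


def fit_lineweaver_burk(substrate: Sequence[float],
                        rates: Sequence[float]) -> Tuple[float, float]:
    """Return (Vmax, Km) from a linear fit of 1/v against 1/[S].

    Michaelis-Menten: v = Vmax[S]/(Km + [S]), so
    1/v = (Km/Vmax)(1/[S]) + 1/Vmax.
    """
    xs = [1.0 / s for s in substrate]
    ys = [1.0 / v for v in rates]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    vmax = 1.0 / intercept
    return vmax, slope * vmax


def kcat(vmax: float, enzyme_conc: float) -> float:
    """Turnover number: Vmax divided by total enzyme concentration."""
    return vmax / enzyme_conc


def ddg_from_affinity_gain(fold: float, temperature: float = 298.15) -> float:
    """ΔΔG (kcal/mol) for a fold decrease in Kd; negative means tighter binding."""
    return -R_KCAL * temperature * math.log(fold)


# Synthetic data generated with Vmax = 10 µM/s and Km = 2 µM.
s = [0.5, 1.0, 2.0, 4.0, 8.0]
v = [10.0 * x / (2.0 + x) for x in s]
vmax, km = fit_lineweaver_burk(s, v)
print(f"Vmax={vmax:.2f}, Km={km:.2f}, kcat={kcat(vmax, 0.01):.0f} per s")
print(f"17-fold tighter binding: {ddg_from_affinity_gain(17):.2f} kcal/mol")
```

At 25 °C, the 17-fold affinity gain mentioned in the text corresponds to roughly -1.7 kcal/mol.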

Research Reagent Solutions

The experimental implementation of inverse folding requires specialized reagents and computational resources for expressing, purifying, and characterizing designed proteins. The following table outlines essential research reagents and their applications in the protein design pipeline.

Table 3: Essential Research Reagents for Inverse Folding Implementation

| Reagent/Resource | Function | Application Examples |
|---|---|---|
| Expression Vectors | Protein production in host systems | Plasmid systems for E. coli, yeast, or mammalian expression |
| Chromatography Media | Protein purification | Ni-NTA for His-tagged proteins, ion-exchange, size exclusion |
| Stability Assays | Thermal stability measurement | Differential scanning calorimetry, thermal shift dyes |
| Structural Biology Tools | Structure verification | Crystallization screens, NMR isotopes, cryo-EM grids |
| Functional Assays | Activity assessment | Enzyme substrates, binding partners, cellular activity reporters |
| Computational Resources | Running design models | GPU clusters for deep learning, cloud computing platforms |

Specialized computational tools form another critical component of the inverse folding reagent toolkit. ProteinMPNN and ABACUS-T provide the core inverse folding capabilities, while AlphaFold2 serves as a crucial validation tool for predicting structures of designed sequences [2] [4]. Molecular dynamics software like GROMACS enables all-atom simulations to assess structural dynamics and stability [5]. For specialized applications, automated execution scripts for annealing simulations and steered molecular dynamics (SMD), available via GitHub repositories, provide standardized protocols for evaluating mechanical properties of designed proteins [5].

Access to curated protein databases represents another essential resource for inverse folding research. Databases such as MaxQB offer comprehensive collections of high-resolution mass spectrometry data that can inform design decisions and provide experimental validation benchmarks [6]. The Protein Data Bank (PDB) remains the primary source of structural templates for design projects, while specialized resources like the Global Proteome Machine and PeptideAtlas provide peptide identification data that can guide sequence selection [6].

Applications in Biotechnology and Medicine

The inverse folding paradigm has enabled groundbreaking applications across multiple domains of biotechnology and medicine. In therapeutic protein engineering, inverse folding has been used to design protein binders that target specific regions of disease-relevant proteins and receptors [2]. For example, small protein binders designed to inhibit human PD-1 (encoded by PDCD1) have shown promise as cancer immunotherapies, with inverse folding enabling the creation of alternative versions with improved pharmacological properties [2]. The ability to rapidly generate diverse sequences for a target structure allows researchers to optimize therapeutic proteins for stability, specificity, and reduced immunogenicity while maintaining target recognition.

In enzyme engineering, inverse folding has proven particularly valuable for enhancing stability under industrial conditions while maintaining or improving catalytic activity. Successful applications include the redesign of endo-1,4-β-xylanase and TEM β-lactamase, which achieved substantial increases in thermostability (ΔTₘ ≥ 10°C) without compromising enzymatic function [4]. More advanced implementations have enabled the rational alteration of substrate specificity, as demonstrated with OXA β-lactamase, where inverse folding facilitated the creation of variants with altered antibiotic selectivity profiles [4]. These applications highlight how inverse folding can address dual objectives of stability enhancement and functional optimization simultaneously.

The technology has also enabled the creation of novel protein materials with exceptional properties. By maximizing hydrogen-bond networks within β-sheets, researchers have designed proteins with extreme mechanical stability, forming hydrogels that maintain structural integrity at high temperatures [5]. These materials showcase how inverse folding can produce proteins with properties exceeding those found in nature, opening possibilities for applications in biomaterials, drug delivery, and industrial biocatalysis where robustness under extreme conditions is essential.

Emerging Trends and Developments

The field of inverse folding is evolving rapidly, with several emerging trends shaping its future trajectory. Multimodal integration represents a significant direction, as exemplified by ABACUS-T's combination of structural, evolutionary, and conformational information [4]. This approach addresses the critical challenge of functional preservation while enabling extensive sequence exploration, potentially expanding the applicability of inverse folding to more complex protein systems such as allosteric enzymes and molecular machines.

Another important trend involves the integration of protein language models that have been pre-trained on massive sequence databases [4]. These models capture evolutionary constraints and patterns that are difficult to derive from structural information alone, providing implicit guidance for maintaining functional sites and foldability during sequence design. As these language models become more sophisticated and incorporate more diverse sequences, they are likely to further enhance the success rate of inverse folding methods, particularly for challenging design targets with limited structural homologs.

There is also growing interest in dynamics-aware inverse folding that considers multiple conformational states rather than single static structures [4]. This approach recognizes that many proteins require structural flexibility for their function, and designing sequences that stabilize a single conformation may inadvertently impair biological activity. By incorporating conformational ensembles from molecular dynamics simulations or experimental sources, next-generation inverse folding models could better preserve functional dynamics while still enhancing stability.
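One simple way to make sequence selection aware of several conformational states is to require that each residue be acceptable in all of them, for example by combining the per-state amino-acid probabilities at a position through a weighted geometric mean. The Python sketch below illustrates that idea with hypothetical probabilities for an "open" and a "closed" state; it is a generic multi-state heuristic, not the method of any model cited here.

```python
import math
from typing import Dict, List, Optional


def combine_states(state_probs: List[Dict[str, float]],
                   weights: Optional[List[float]] = None) -> Dict[str, float]:
    """Weighted geometric mean of one position's distributions across states.

    A residue that is unlikely in any single state is penalised, so the
    combined distribution favours residues compatible with every state.
    """
    if weights is None:
        weights = [1.0 / len(state_probs)] * len(state_probs)
    shared = set.intersection(*(set(p) for p in state_probs))
    log_scores = {
        aa: sum(w * math.log(p[aa]) for w, p in zip(weights, state_probs))
        for aa in shared
        if all(p[aa] > 0 for p in state_probs)
    }
    top = max(log_scores.values())
    unnormalised = {aa: math.exp(s - top) for aa, s in log_scores.items()}
    total = sum(unnormalised.values())
    return {aa: v / total for aa, v in unnormalised.items()}


open_state = {"L": 0.70, "G": 0.20, "A": 0.10}    # hypothetical
closed_state = {"L": 0.05, "G": 0.60, "A": 0.35}  # hypothetical
print(combine_states([open_state, closed_state]))
```

Leucine, the favourite in the open state, loses to glycine, which is acceptable in both states.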

The inverse folding problem represents a cornerstone of the computational protein design paradigm, providing a systematic methodology for navigating the vast sequence space to identify proteins that fold into predetermined structures. From its origins in physics-based energy minimization to current deep learning approaches, the field has made remarkable progress in solving this fundamental challenge. Modern inverse folding models like ProteinMPNN and ABACUS-T can rapidly generate diverse sequences that fold into target structures with high accuracy, enabling applications ranging from therapeutic protein engineering to industrial enzyme design.

Despite these advances, significant challenges remain, particularly in preserving complex functions while enhancing stability and in designing proteins with specified dynamical properties. The integration of multimodal information—combining structural, evolutionary, and conformational data—represents a promising direction for addressing these challenges. As inverse folding methodologies continue to mature, they are poised to dramatically accelerate the protein design cycle, potentially enabling the routine creation of proteins with custom-tailored properties for diverse applications in medicine, biotechnology, and materials science. This progress will further establish computational protein design as a transformative discipline capable of addressing challenges beyond the reach of traditional protein engineering approaches.

Computational protein design (CPD) addresses the inverse folding problem: identifying amino acid sequences that will fold into a specific three-dimensional structure and perform a desired function [7]. At the heart of every CPD pipeline lies the energy function—a mathematical model that quantifies the structural stability and functional compatibility of protein sequences. These functions serve as objective functions to guide the exploration of vast sequence-structure spaces, distinguishing viable designs from non-functional ones. The fundamental principle governing this process is the thermodynamic hypothesis formulated by Anfinsen, which states that a protein's native structure corresponds to its global minimum free energy state [8]. Energy functions in CPD broadly fall into two categories: physics-based potentials derived from fundamental physical principles, and knowledge-based potentials derived from statistical analyses of known protein structures in databases. The strategic balance between these approaches represents a core challenge in advancing the field, as both offer complementary advantages and limitations that must be carefully weighed for different design applications.

Theoretical Foundations of Energy Functions

Physics-Based Energy Functions

Physics-based energy functions, also known as ab initio or molecular mechanics force fields, compute the potential energy of a protein structure using terms derived from fundamental physical principles. These functions typically comprise several components that collectively describe covalent and non-covalent interactions.

The AMBER force field provides a representative framework for physics-based approaches, with energy terms including bond stretching, angle bending, torsional rotations, van der Waals interactions, and electrostatic calculations [9]. For solvation effects, which are critical for accurate energy assessment, physics-based functions often employ implicit solvent models. The Generalized Born (GB) model is particularly prevalent in CPD applications as it captures essential solvation physics while remaining computationally tractable for design algorithms [9].
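For reference, the AMBER functional form and the Generalized Born polarization term are commonly written as follows (notation ours; the Coulomb constant, 1-4 scaling factors, and non-bonded exclusions are omitted). Here b, θ, and φ are bond lengths, angles, and dihedrals; A_ij and B_ij are Lennard-Jones coefficients; q_i are partial charges; r_ij are interatomic distances; α_i are effective Born radii; and the GB double sum runs over all i and j, including the i = j self terms, for which f_GB reduces to α_i.

```latex
E_{\text{total}} = \sum_{\text{bonds}} K_b \left(b - b_{\text{eq}}\right)^2
  + \sum_{\text{angles}} K_\theta \left(\theta - \theta_{\text{eq}}\right)^2
  + \sum_{\text{dihedrals}} \frac{V_n}{2}\left[1 + \cos\left(n\phi - \gamma\right)\right]
  + \sum_{i<j} \left[\frac{A_{ij}}{r_{ij}^{12}} - \frac{B_{ij}}{r_{ij}^{6}} + \frac{q_i q_j}{\varepsilon\, r_{ij}}\right]

\Delta G_{\text{GB}} = -\frac{1}{2}\left(\frac{1}{\varepsilon_{\text{in}}} - \frac{1}{\varepsilon_{\text{out}}}\right)
  \sum_{i,j} \frac{q_i q_j}{f_{\text{GB}}(r_{ij})},
\qquad
f_{\text{GB}}(r_{ij}) = \sqrt{r_{ij}^2 + \alpha_i \alpha_j \exp\!\left(-\frac{r_{ij}^2}{4\alpha_i \alpha_j}\right)}
```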

A significant advantage of physics-based functions is their transferability—they can be applied to non-biological polymers, non-canonical amino acids, and novel fold spaces not represented in existing protein databases [9]. This makes them particularly valuable for de novo design projects aiming to explore entirely new regions of protein structural space. However, this generality comes at substantial computational cost, and accuracy can be limited by approximations in the physical models, particularly in representing long-range interactions and solvent effects.

Knowledge-Based Energy Functions

Knowledge-based energy functions, also known as statistical potentials or empirical potentials, derive from statistical analyses of residue-residue contact patterns, torsion angles, and other structural features observed in experimentally determined protein structures. These approaches are grounded in the inverse Boltzmann principle, which converts observed frequencies of structural features into effective energy terms under the assumption that naturally occurring proteins sample low-energy states.
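The inverse Boltzmann conversion can be written as E = -kT ln(P_obs / P_ref), where P_ref is the probability expected in a chosen reference state. A minimal Python sketch for residue-pair contacts, using hypothetical counts and a pseudocount so that unseen pairs do not produce infinite energies; the choice of reference state is the key modelling decision and is simply supplied by the caller here.

```python
import math
from typing import Dict, Tuple

K_B_KCAL = 0.0019872  # Boltzmann constant, kcal/(mol·K)
Pair = Tuple[str, str]


def inverse_boltzmann(observed: Dict[Pair, int], expected: Dict[Pair, float],
                      temperature: float = 298.15,
                      pseudocount: float = 1.0) -> Dict[Pair, float]:
    """Contact energies E = -kT ln(P_obs / P_ref) for the pairs in `expected`.

    observed: contact counts per residue pair from a structure database.
    expected: counts predicted by the chosen reference state.
    """
    kt = K_B_KCAL * temperature
    obs_total = sum(observed.get(pair, 0) for pair in expected) + pseudocount * len(expected)
    ref_total = sum(expected.values())
    energies = {}
    for pair, ref_count in expected.items():
        p_obs = (observed.get(pair, 0) + pseudocount) / obs_total
        p_ref = ref_count / ref_total
        energies[pair] = -kt * math.log(p_obs / p_ref)
    return energies


# Hypothetical counts: L-L contacts are enriched relative to the reference, K-K depleted.
observed = {("L", "L"): 300, ("L", "K"): 100, ("K", "K"): 50}
expected = {("L", "L"): 150.0, ("L", "K"): 150.0, ("K", "K"): 150.0}
for pair, energy in inverse_boltzmann(observed, expected).items():
    print(pair, round(energy, 2))
```

Negative energies mark contacts seen more often than the reference state predicts, i.e. favourable ones.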

These functions leverage the rich structural information contained in databases such as the Protein Data Bank (PDB), extracting empirical preferences for amino acid interactions, backbone dihedral angles, hydrogen-bonding geometries, and packing densities [9]. The BLOSUM substitution matrices represent one widely used form of knowledge-based information that captures evolutionary constraints on amino acid replacements [9].
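BLOSUM entries are log-odds scores comparing how often two residues are aligned in conserved blocks with how often they would pair by chance. A minimal Python sketch of that calculation from hypothetical pair counts, following the Henikoff and Henikoff formulation (chance pairing p_i p_j for identical and 2 p_i p_j for different residues, scores in half-bit units); it omits the sequence clustering and weighting used to build the published matrices.

```python
import math
from typing import Dict, Tuple


def log_odds_scores(pair_counts: Dict[Tuple[str, str], int],
                    scale: float = 2.0) -> Dict[Tuple[str, str], int]:
    """BLOSUM-style scores round(scale * log2(q_ij / e_ij)) from pair counts.

    pair_counts holds unordered pairs as sorted tuples, e.g. ("A", "S");
    scale = 2 gives half-bit units.
    """
    total = sum(pair_counts.values())
    q = {pair: c / total for pair, c in pair_counts.items()}
    # Background frequency: identical pairs plus half of every mixed pair.
    p: Dict[str, float] = {}
    for (a, b), f in q.items():
        if a == b:
            p[a] = p.get(a, 0.0) + f
        else:
            p[a] = p.get(a, 0.0) + f / 2
            p[b] = p.get(b, 0.0) + f / 2
    scores = {}
    for (a, b), f in q.items():
        chance = p[a] * p[b] if a == b else 2 * p[a] * p[b]
        scores[(a, b)] = round(scale * math.log2(f / chance))
    return scores


# Hypothetical counts of aligned residue pairs from conserved blocks.
counts = {("A", "A"): 40, ("A", "S"): 20, ("S", "S"): 30,
          ("A", "W"): 2, ("S", "W"): 2, ("W", "W"): 6}
print(log_odds_scores(counts))
```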

The primary strength of knowledge-based potentials lies in their efficiency and implicit capture of complex physical effects that are challenging to model explicitly. By learning from nature's solutions, these functions incorporate the net effects of sophisticated physical phenomena without requiring explicit computation. However, this approach suffers from database bias—it cannot recommend novel structural solutions or amino acid configurations not already present in the training data, potentially limiting innovation in protein design.

Table 1: Comparison of Physics-Based and Knowledge-Based Energy Functions

| Feature | Physics-Based Potentials | Knowledge-Based Potentials |
|---|---|---|
| Theoretical Basis | Fundamental physical principles (molecular mechanics) | Statistical analysis of protein databases |
| Key Components | Bond stretching, angle bending, van der Waals, electrostatics, GB solvation | Residue-residue contact potentials, torsion potentials, hydrogen-bond statistics |
| Representative Implementations | AMBER with GB solvent [9] | Statistical torsion potentials [9], BLOSUM matrices [9] |
| Computational Cost | High | Low to moderate |
| Transferability | High (novel folds, non-biological polymers) | Limited to observed structural space |
| Key Strengths | Physically rigorous, applicable to novel chemistries | Efficient, implicitly captures complex physics |
| Major Limitations | Approximations in physical models, computational expense | Database bias, limited innovation capacity |

Integrated Approaches: Balancing Both Potentials

Hybrid Energy Functions

Recognizing the complementary strengths of both approaches, modern CPD pipelines increasingly employ hybrid energy functions that strategically combine physics-based and knowledge-based terms. A representative example comes from the successful redesign of a PDZ domain, where researchers used a physics-based function for the folded state (AMBER force field with GB solvation) coupled with a knowledge-based potential for the unfolded state [9]. This hybrid approach leveraged the accuracy of physics-based models for describing specific atomic interactions in the native structure while using efficient statistical potentials to estimate the conformational ensemble of the denatured state.

The theoretical justification for this partitioning lies in the different structural precision required for modeling each state. The folded state possesses a well-defined, unique structure where specific atomic interactions critically determine stability, making it amenable to physics-based description. Conversely, the unfolded state represents a heterogeneous ensemble where statistical averages across many configurations may sufficiently capture its energetic properties.
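A toy illustration of this partitioning, assuming the folded-state energy has already been computed by a physics-based model and the unfolded state is approximated by a sum of per-residue reference energies. The reference values and candidate energies are hypothetical, chosen only to show how the unfolded-state term can reorder candidates whose folded-state energies are similar.

```python
# Hypothetical per-residue unfolded-state reference energies (kcal/mol), for illustration only.
UNFOLDED_REF = {"A": -0.5, "L": -1.0, "K": 0.2, "E": 0.1}

def unfolded_energy(sequence, reference=UNFOLDED_REF):
    """Knowledge-based estimate of the unfolded-state energy as a sum of per-residue terms."""
    return sum(reference[aa] for aa in sequence)

def stability_score(sequence, folded_energy, reference=UNFOLDED_REF):
    """Approximate folding free energy: physics-based folded energy minus the unfolded estimate."""
    return folded_energy - unfolded_energy(sequence, reference)

# Hypothetical candidates: (sequence, folded-state energy from a physics-based model).
candidates = [("ALKE", -20.0), ("LLLL", -21.0)]
ranked = sorted(candidates, key=lambda c: stability_score(*c))
```

Here the hydrophobic-rich candidate has the lower folded-state energy, but it is also favored in the unfolded reference state, so after subtraction the other candidate ranks first.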

Energy Function Integration in CPD Workflows

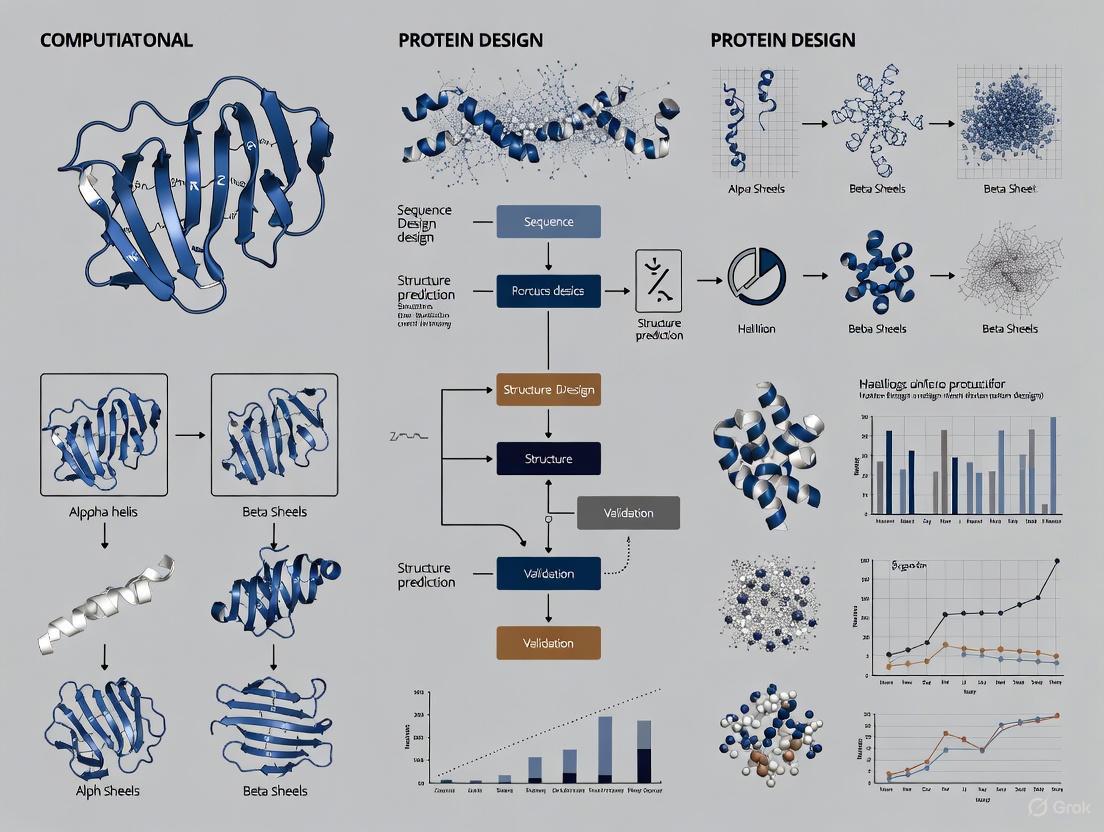

The integration of energy functions within complete CPD workflows involves multiple sophisticated components working in concert. The following diagram illustrates how these elements interact in a modern computational design pipeline:

Figure 1: Integration of energy functions within a computational protein design workflow. Energy functions guide sampling algorithms and sequence optimization, with successful candidates proceeding through validation stages.

Implementation in Modern CPD Frameworks

Sampling Algorithms and Sequence Optimization

The enormous size of protein sequence-structure space necessitates sampling algorithms that can efficiently identify low-energy combinations. Monte Carlo simulation is one widely used approach: random mutations and conformational changes are accepted or rejected based on the calculated energy change according to the Metropolis criterion [9]. In the PDZ redesign study, Monte Carlo simulations searching a sequence space of 3.7 × 10⁷⁶ possibilities (18 allowed amino acids at each of 61 designable positions) identified thousands of low-energy sequences, demonstrating the power of this approach when guided by appropriate energy functions [9].
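The Metropolis loop can be sketched as follows. The 18-letter alphabet mirrors the PDZ setup (no glycine or proline), which also reproduces the quoted size of the sequence space, 18^61 ≈ 3.7 × 10^76. The toy energy (mismatches against a hypothetical target), the temperature `kT`, and the step count are placeholders for illustration, not parameters from the study.

```python
import math
import random

AMINO_ACIDS = "ACDEFHIKLMNQRSTVWY"  # 20 standard residues minus G and P, as in the PDZ setup

def metropolis_design(energy_fn, start, n_steps=20000, kT=0.3, seed=0):
    """Metropolis Monte Carlo over sequences using single-position mutations."""
    rng = random.Random(seed)
    seq = list(start)
    energy = energy_fn(seq)
    best_seq, best_energy = seq[:], energy
    for _ in range(n_steps):
        pos = rng.randrange(len(seq))
        old = seq[pos]
        seq[pos] = rng.choice(AMINO_ACIDS)
        delta = energy_fn(seq) - energy
        # Metropolis criterion: accept downhill moves, uphill moves with probability exp(-dE/kT)
        if delta <= 0 or rng.random() < math.exp(-delta / kT):
            energy += delta
            if energy < best_energy:
                best_seq, best_energy = seq[:], energy
        else:
            seq[pos] = old  # reject: restore the previous residue
    return "".join(best_seq), best_energy

# Hypothetical toy energy: one unit per mismatch against an arbitrary target sequence.
TARGET = "LKEIVQALKE"

def toy_energy(seq):
    return sum(a != b for a, b in zip(seq, TARGET))

print(f"{18**61:.1e}")  # prints 3.7e+76: 18 choices at each of 61 positions
best, e_best = metropolis_design(toy_energy, "A" * len(TARGET))
```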

Dead-end elimination (DEE) algorithms provide complementary sampling by systematically eliminating rotamer combinations that cannot be part of the global energy minimum solution, thus pruning the search space [10]. These algorithms have been extended with backbone flexibility to enhance sampling of both sequence and structural space, acknowledging the intimate coupling between sequence variation and backbone conformational changes [11].
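A minimal sketch of the Goldstein form of the DEE criterion for a pairwise-decomposable energy: rotamer r at position i is discarded if some alternative t at the same position satisfies E(i_r) - E(i_t) + Σ_j min_s [E(i_r, j_s) - E(i_t, j_s)] > 0, because t would then give a lower total energy whatever the other positions choose. The data layout (`self_e`, `pair_e`) and the random test problem are assumptions for illustration; production codes add pair elimination and further refinements.

```python
import itertools
import random

def pair_energy(pair_e, i, r, j, s):
    """E(i_r, j_s); each pair table is stored once, keyed by (i, j) with i < j."""
    return pair_e[(i, j)][r][s] if i < j else pair_e[(j, i)][s][r]

def total_energy(self_e, pair_e, assign):
    """Energy of a full rotamer assignment (one rotamer index per position)."""
    n = len(assign)
    return (sum(self_e[i][assign[i]] for i in range(n))
            + sum(pair_energy(pair_e, i, assign[i], j, assign[j])
                  for i in range(n) for j in range(i + 1, n)))

def goldstein_dee(self_e, pair_e):
    """Return the set of surviving rotamer indices at each position."""
    n = len(self_e)
    alive = [set(range(len(rots))) for rots in self_e]
    changed = True
    while changed:  # sweep until no further rotamer can be eliminated
        changed = False
        for i in range(n):
            for r in sorted(alive[i]):
                for t in sorted(alive[i] - {r}):
                    gap = self_e[i][r] - self_e[i][t]
                    for j in range(n):
                        if j != i:
                            gap += min(pair_energy(pair_e, i, r, j, s) - pair_energy(pair_e, i, t, j, s)
                                       for s in alive[j])
                    if gap > 0:  # swapping r for t always lowers the energy, so r is dead
                        alive[i].discard(r)
                        changed = True
                        break
    return alive

def random_problem(n_pos=4, n_rot=3, seed=0):
    """Hypothetical random pairwise energy tables."""
    rng = random.Random(seed)
    self_e = [[rng.uniform(-2, 2) for _ in range(n_rot)] for _ in range(n_pos)]
    pair_e = {(i, j): [[rng.uniform(-1, 1) for _ in range(n_rot)] for _ in range(n_rot)]
              for i in range(n_pos) for j in range(i + 1, n_pos)}
    return self_e, pair_e

self_e, pair_e = random_problem()
alive = goldstein_dee(self_e, pair_e)
# For a problem this small, exhaustive search confirms the global minimum survived pruning.
gmec = min(itertools.product(*(range(len(rots)) for rots in self_e)),
           key=lambda a: total_energy(self_e, pair_e, a))
```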

The Rise of Machine Learning Approaches

Recent advances have introduced machine learning models that implicitly capture aspects of both physics-based and knowledge-based potentials through deep learning on vast protein databases. ProteinMPNN has emerged as a powerful neural network for solving the "inverse folding" problem (designing sequences for given backbone structures), effectively functioning as a highly sophisticated knowledge-based potential [12]. Meanwhile, RFdiffusion applies diffusion models to generate novel protein backbones de novo, enabling the creation of new folds not present in existing databases [12].

These AI-driven approaches represent a convergence of physical and knowledge-based principles: they learn from natural proteins (knowledge-based) but can generalize to novel folds (physics-like). The RoseTTAFold diffusion framework exemplifies this synthesis, combining a structure prediction network trained on known structures with a generative diffusion process that explores new structural spaces [12].

Experimental Validation and Case Studies

Successful Redesign of a PDZ Domain

A landmark demonstration of physics-based energy functions came from the complete redesign of a PDZ domain using the AMBER force field with GB solvation for the folded state [9]. The experimental protocol involved several key stages:

Backbone Preparation: The design process began with the high-resolution X-ray structure of the apo CASK PDZ domain, maintaining the backbone conformation throughout the design process.

Sequence Design Space: 61 of 83 residues (73.5% of the sequence) were allowed to mutate freely to any amino acid except glycine or proline, while 13 peptide-binding residues maintained wild-type identity and 9 glycine/proline positions remained fixed.

Monte Carlo Sampling: Extended simulations generated thousands of sequences, with the 2,000 lowest-energy candidates selected for further analysis.

Empirical Filtering: Sequences were filtered using knowledge-based criteria including isoelectric point, fold recognition confidence, cavity presence, and chemical similarity to natural PDZ domains.
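The selection and filtering stages might be sketched as follows, showing only the isoelectric-point filter. The pKa table is an assumed illustrative set (published scales differ slightly), and the pI window and helper names are hypothetical. The study's actual thresholds and its other filters (fold recognition, cavities, similarity to natural PDZ domains) are not reproduced.

```python
# Illustrative pKa set (an assumption; published pKa scales differ slightly).
PKA = {"N_term": 8.6, "C_term": 3.6, "K": 10.8, "R": 12.5, "H": 6.5,
       "D": 3.9, "E": 4.1, "C": 8.5, "Y": 10.1}
BASIC = {"K", "R", "H"}
ACIDIC = {"D", "E", "C", "Y"}

def net_charge(seq, ph):
    """Henderson-Hasselbalch net charge of a peptide at a given pH."""
    charge = 1 / (1 + 10 ** (ph - PKA["N_term"])) - 1 / (1 + 10 ** (PKA["C_term"] - ph))
    for aa in seq:
        if aa in BASIC:
            charge += 1 / (1 + 10 ** (ph - PKA[aa]))
        elif aa in ACIDIC:
            charge -= 1 / (1 + 10 ** (PKA[aa] - ph))
    return charge

def isoelectric_point(seq, lo=0.0, hi=14.0, tol=1e-4):
    """Bisection on pH: the net charge falls monotonically as pH rises."""
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if net_charge(seq, mid) > 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

def filter_designs(designs, n_keep=2000, pi_window=(5.0, 9.0)):
    """designs: (sequence, energy) pairs. Keep the n_keep lowest-energy ones,
    then apply a hypothetical pI window."""
    lowest = sorted(designs, key=lambda d: d[1])[:n_keep]
    return [(s, e) for s, e in lowest if pi_window[0] <= isoelectric_point(s) <= pi_window[1]]
```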

The three selected designs contained approximately 60% mutated residues (50-51 mutations each) yet all exhibited native-like circular dichroism spectra and 1D-NMR spectra, with two designs demonstrating upshifted thermal denaturation in the presence of peptide ligand—strong evidence of correct folding to functional PDZ structures [9].

Table 2: Key Experimental Results from PDZ Redesign Study

| Design Candidate | Number of Mutations | Structural Characterization | Functional Assessment |
|---|---|---|---|
| Candidate 1350 | 50 mutations (60.2%) | Native-like CD spectra, folded 1D-NMR | Peptide binding demonstrated |
| Candidate 1555 | 51 mutations (61.4%) | Native-like CD spectra, folded 1D-NMR | Peptide binding demonstrated |
| Candidate 1669 | 51 mutations (61.4%) | Native-like CD spectra, folded 1D-NMR | Inconclusive binding data |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Materials for CPD Validation

| Reagent/Material | Function in CPD Validation |
|---|---|
| CASK PDZ Domain Template | Structural scaffold for redesign experiments [9] |
| Monte Carlo Simulation Software | Sampling sequence space and identifying low-energy variants [9] |
| Generalized Born Solvent Model | Implicit solvation for physics-based energy calculations [9] |
| Circular Dichroism Spectrometer | Assessing secondary structure content of designed proteins [9] |
| NMR Spectroscopy | Evaluating tertiary structure and folding properties [9] |
| Thermal Denaturation Assays | Measuring protein stability and ligand binding effects [9] |
| ProteinMPNN | Machine learning-based sequence design for given structures [12] |
| RFdiffusion | Generative AI for de novo backbone design [12] |

Current Challenges and Future Perspectives

Despite significant advances, energy functions in CPD continue to face several fundamental challenges. The accuracy of physics-based functions remains limited by approximations in force field parameters and solvation models, while knowledge-based approaches struggle with generalization beyond the training data distribution. The enormous computational expense of rigorous physics-based scoring presents practical constraints on the complexity and scale of design projects.

Future developments will likely focus on tightly integrated human-AI frameworks that leverage the respective strengths of computational and experimental approaches. The emerging seven-toolkit workflow, encompassing database search, structure prediction, function prediction, sequence generation, structure generation, virtual screening, and DNA synthesis, represents a systematic approach to organizing these tools into a coherent engineering discipline [13].

The integration of quantum mechanical calculations for modeling critical electronic interactions, particularly in enzyme active sites, promises to enhance the accuracy of physics-based potentials for challenging functional design problems [10]. Simultaneously, multi-state design frameworks are evolving to explicitly consider the conformational heterogeneity and thermodynamic equilibria that underlie protein function, moving beyond single-structure optimization [8].

The following diagram illustrates the complex relationship between different energy function types and their performance characteristics:

Figure 2: Relationship between energy function types and their performance characteristics, showing how hybrid approaches integrate advantages while mitigating limitations.

The strategic balance between physics-based and knowledge-based energy functions represents a core principle in computational protein design research. While physics-based potentials provide fundamental principles and transferability to novel design spaces, knowledge-based potentials offer efficiency and implicit encoding of nature's evolutionary solutions. The most successful CPD pipelines increasingly adopt hybrid approaches that leverage the complementary strengths of both paradigms, often enhanced by machine learning methods that transcend traditional categories.

The successful redesign of a PDZ domain using primarily physics-based potentials demonstrates that fundamental physical principles can guide protein design, while extensive empirical filtering highlights the practical value of incorporating knowledge-based criteria [9]. As the field advances, the integration of more sophisticated physical models, larger and more diverse structural databases, and increasingly powerful machine learning algorithms will further blur the distinctions between these approaches, leading to more robust and accurate energy functions that accelerate the design of novel proteins for therapeutic, industrial, and scientific applications.

The accurate modeling of protein flexibility stands as one of the most significant challenges in computational structural biology. Proteins are dynamic entities whose functional capabilities emerge from their ability to sample conformational ensembles rather than exist as static structures. Within this paradigm, rotamer libraries and backbone sampling techniques provide the fundamental mathematical and statistical frameworks for representing structural flexibility in computationally tractable models. These approaches enable researchers to navigate the vast conformational space available to proteins, facilitating advances in structure prediction, protein design, and therapeutic development. The integration of these methods represents a core principle in modern computational protein design: that meaningful functional predictions require accounting for structural plasticity at both the side-chain and backbone levels. This technical guide examines the current state of rotamer library development and backbone sampling methodologies, providing researchers with both theoretical foundations and practical implementation strategies for modeling protein flexibility.

Rotamer Libraries: Statistical Frameworks for Side-Chain Conformational Sampling

Theoretical Foundations and Historical Development

Rotamer libraries address the combinatorial challenge of side-chain placement by leveraging the observation that side-chain dihedral angles tend to cluster around energetically favored conformations known as rotamers. The development of these libraries has evolved from simple backbone-independent statistics to sophisticated backbone-dependent probability models that capture the critical relationship between local main-chain conformation and side-chain conformational preferences.

The first backbone-dependent rotamer library was introduced by Dunbrack and Karplus in 1993, derived from statistical analysis of 132 high-resolution protein structures [14]. This library established the fundamental principle that rotamer probabilities vary systematically with the backbone dihedral angles φ and ψ. Subsequent refinements incorporated Bayesian statistical methods to provide improved probability estimates, particularly in sparsely populated regions of the Ramachandran map [15] [14]. The Bayesian approach implemented by Dunbrack and Cohen in 1997 introduced a prior probability based on the assumption that the steric and electrostatic effects of the φ and ψ dihedral angles act independently, significantly improving the library's predictive power [14].

A major advancement came with the development of smoothed backbone-dependent rotamer libraries using kernel density estimation and kernel regression with von Mises distribution kernels [15]. This approach addressed a critical limitation of earlier discrete libraries: their lack of smoothness and continuity across the Ramachandran map, which caused artifacts in structure prediction and design algorithms that utilize derivative-based optimization methods [15]. The kernel-based method enables evaluation of rotamer probabilities, mean angles, and variances as continuous functions of φ and ψ, providing the mathematical smoothness required for modern gradient-based optimization algorithms [15].

Table 1: Evolution of Backbone-Dependent Rotamer Libraries

| Library Version | Key Innovations | Statistical Methodology | Applications |
|---|---|---|---|
| Dunbrack & Karplus 1993 [14] | First backbone-dependent library | Raw counts in 20°×20° (φ,ψ) bins | Side-chain prediction |
| Dunbrack & Cohen 1997 [14] | Bayesian priors, periodic kernel | Bayesian statistics with 10°×10° bins | Homology modeling, early protein design |
| Dunbrack 2002 [15] | Improved treatment of non-rotameric degrees of freedom | Updated Bayesian model | Structure prediction, molecular replacement |
| Shapovalov & Dunbrack 2011 [15] | Smooth continuous probabilities | Adaptive kernel density estimation | Flexible-backbone design, gradient-based optimization |
| MEDFORD 2022 [16] | High (φ,ψ) coverage via metadynamics | Bias-exchange metadynamics simulations | Cyclic peptides, noncanonical amino acids |

Key Rotamer Library Types and Their Applications

Rotamer libraries can be broadly categorized into two primary types with distinct characteristics and applications. Backbone-independent rotamer libraries (BBIRLs) provide statistical information about side-chain conformations without reference to backbone geometry, while backbone-dependent rotamer libraries (BBDRLs) express rotamer frequencies and mean dihedral angles as functions of backbone conformation [14].

Comparative studies have revealed that BBIRLs can generate conformations that closely match native structures when they contain very large numbers of rotamers (7,000-50,000 conformations) [17]. However, for practical applications in protein design and side-chain prediction, BBDRLs consistently achieve higher performance despite having fewer total rotamers [17]. This advantage stems from the energy term derived from rotamer probabilities associated with specific backbone torsion angle subspaces, which provides critical information for distinguishing between amino acid identities and their conformational variants [17]. Additionally, the backbone-dependent restriction of conformational search spaces significantly accelerates computational searching, making BBDRLs more efficient despite their apparent complexity [17].

Table 2: Comparison of Rotamer Library Types in Practical Applications

| Performance Metric | Backbone-Independent (BBIRL) | Backbone-Dependent (BBDRL) | Significance |
|---|---|---|---|
| Side-chain reproduction accuracy | Higher with very large libraries (>7,000 rotamers) [17] | Competitive with optimized libraries [17] | BBIRLs can reproduce native geometries but require large conformational sets |
| Side-chain prediction accuracy | 87% for χ₁, 74% for χ₁+₂ (20° cutoff) [17] | 84-86% for χ₁, 71-75% for χ₁+₂ (40° cutoff) [17] | BBDRLs reach high accuracy with more restricted search spaces; the angular cutoffs differ (20° vs 40°), so the values are not directly comparable |
| Sequence recapitulation in design | Lower native sequence recovery [17] | Higher native sequence recovery [17] | Backbone-dependent probabilities better distinguish amino acid identities |
| Computational speed | Slower [17] | Faster due to restricted search spaces [17] | Backbone-dependent filtering dramatically reduces conformational searching |
| Coverage of unusual backbones | Limited to experimentally observed conformations | Limited to experimentally observed conformations | Both struggle with noncanonical backbone geometries |

Table 3: Research Reagent Solutions for Rotamer-Based Modeling

| Resource | Function | Application Context |
|---|---|---|
| Dunbrack Rotamer Library [15] [14] | Provides backbone-dependent rotamer probabilities | Side-chain packing in structure prediction and protein design |
| MEDFORD Library [16] | Offers expanded coverage of (φ,ψ) space via metadynamics | Modeling cyclic peptides and noncanonical amino acids |
| SCWRL Algorithm [18] | Implements rapid side-chain placement using rotamer libraries | Homology modeling and structure prediction |
| Rosetta Software Suite [15] [14] | Utilizes rotamer libraries for protein design and structure prediction | De novo protein design, protein folding, and docking |
| Dynameomics Library [14] | Provides dynamics-derived rotamer distributions | Sampling across thermally accessible conformational states |

Backbone Sampling Methodologies: Beyond Rigid Scaffolds

The Critical Role of Backbone Flexibility

While rotamer libraries address side-chain flexibility, the modeling of backbone dynamics presents distinct challenges that require specialized methodologies. The protein backbone serves as the structural scaffold upon which side-chains are arranged, and its conformation directly influences both the available rotameric states and their probabilities [15] [14]. Backbone flexibility becomes particularly important when modeling conformational changes upon ligand binding, designing proteins with novel folds, or working with constrained peptides that sample unusual regions of the Ramachandran map [16].

Traditional rotamer libraries face limitations when the backbone deviates significantly from commonly observed conformations in protein crystal structures. This is particularly relevant for cyclic peptides and engineered proteins where backbone strain can force dihedral angles into regions sparsely populated in natural proteins [16]. Additionally, methods that incorporate backbone flexibility have demonstrated improved performance in protein design applications, as they allow optimization of both sequence and structure simultaneously [15].

Computational Approaches for Backbone Sampling

Multiple computational strategies have been developed to address the challenge of backbone flexibility, each with distinct advantages and limitations. Molecular dynamics (MD) simulations provide atomistic detail and physical realism but face significant computational barriers for simulating biologically relevant timescales [19]. Enhanced sampling methods like metadynamics have been employed to improve coverage of conformational space, as demonstrated by the MEDFORD rotamer library which uses bias-exchange metadynamics to achieve comprehensive sampling of the Ramachandran map [16].
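To illustrate the core idea of metadynamics, the sketch below runs overdamped Langevin dynamics on a one-dimensional double-well potential and periodically deposits Gaussian hills at the current position, so the accumulated bias progressively discourages revisiting already-sampled regions. This is plain metadynamics on a toy coordinate standing in for a dihedral angle. It is not the bias-exchange scheme or the force fields used for MEDFORD, and all parameters are illustrative.

```python
import numpy as np

HILL_HEIGHT = 0.05  # illustrative hill height (energy units of the toy potential)
HILL_WIDTH = 0.1    # illustrative Gaussian width along the collective variable

def bias_potential(x, centers, h=HILL_HEIGHT, w=HILL_WIDTH):
    """Sum of deposited hills, V_bias(x) = sum_k h exp(-(x - c_k)^2 / (2 w^2))."""
    d = x - np.asarray(centers, dtype=float)
    return float(np.sum(h * np.exp(-d**2 / (2 * w**2))))

def bias_force(x, centers, h=HILL_HEIGHT, w=HILL_WIDTH):
    """Force from the bias, -dV_bias/dx."""
    d = x - np.asarray(centers, dtype=float)
    return float(np.sum(h * d / w**2 * np.exp(-d**2 / (2 * w**2))))

def metadynamics_1d(n_steps=20000, dt=1e-3, kT=0.1, deposit_every=200, x0=-1.0, seed=0):
    """Overdamped Langevin dynamics on V(x) = (x^2 - 1)^2 with periodic hill deposition."""
    rng = np.random.default_rng(seed)
    x, centers = x0, []
    traj = np.empty(n_steps)
    for step in range(n_steps):
        force = -4 * x * (x**2 - 1) + bias_force(x, centers)
        x = x + force * dt + np.sqrt(2 * kT * dt) * rng.standard_normal()
        traj[step] = x
        if (step + 1) % deposit_every == 0:
            centers.append(x)  # deposit a new hill at the current position
    return traj, np.array(centers)

traj, centers = metadynamics_1d()
```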

The Essential Dynamics Sampling (EDS) technique represents an alternative approach that reduces the effective dimensionality of the sampling problem by focusing on collective motions derived from principal component analysis of protein trajectories [19]. This method has successfully simulated protein folding processes using only a fraction of the system's total degrees of freedom [19]. For protein-ligand interactions, steered molecular dynamics (SMD) simulations incorporate flexibility by applying restrained potentials to selected Cα atoms, balancing the need to prevent overall protein rotation while maintaining natural flexibility during unbinding processes [20].
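The dimensionality reduction underlying Essential Dynamics Sampling is principal component analysis of atomic fluctuations. A minimal sketch, assuming the trajectory frames have already been superimposed onto a reference; the synthetic trajectory is hypothetical and contains one dominant collective motion.

```python
import numpy as np

def essential_modes(traj, n_modes=2):
    """PCA of coordinate fluctuations.

    traj: (n_frames, 3 * n_atoms) array of frames already superimposed on a reference.
    Returns the n_modes largest eigenvalues and their eigenvectors (as columns).
    """
    centered = traj - traj.mean(axis=0)
    cov = centered.T @ centered / (len(traj) - 1)
    eigvals, eigvecs = np.linalg.eigh(cov)  # eigh returns eigenvalues in ascending order
    order = np.argsort(eigvals)[::-1][:n_modes]
    return eigvals[order], eigvecs[:, order]

def project(traj, modes):
    """Coordinates of each frame along the essential modes."""
    return (traj - traj.mean(axis=0)) @ modes

# Hypothetical trajectory: a large collective motion along u plus small isotropic noise.
rng = np.random.default_rng(0)
u = np.array([1.0, 1.0, 0.0, 0.0, 0.0, 0.0]) / np.sqrt(2)
traj = 10 * rng.standard_normal(500)[:, None] * u + 0.5 * rng.standard_normal((500, 6))
eigvals, modes = essential_modes(traj)
```

Sampling can then be restricted to the few coordinates returned by `project`, which is the source of the dimensionality reduction.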

Diagram 1: Backbone Sampling Methodologies - A classification of computational approaches for modeling protein backbone flexibility, showing their relationships and primary applications.

Integrated Methodologies: Combining Rotamer Libraries with Backbone Sampling

Synergistic Approaches in Protein Design

The most advanced computational protein design methodologies integrate rotamer-based side-chain sampling with backbone flexibility, creating synergistic systems that more accurately capture protein structural biology's fundamental principles. This integration typically involves iterative optimization protocols that alternate between refining side-chain placements using rotamer libraries and adjusting backbone conformations through various sampling techniques [15].

The development of smoothed rotamer libraries has been particularly valuable for these integrated approaches, as they enable the use of derivative-based optimization methods that require continuous probability functions [15]. When backbone minimization is performed using algorithms that compute gradients with respect to backbone dihedral angles, the smoothness of the rotamer probability functions prevents optimization artifacts and improves convergence [15]. This capability has become increasingly important as backbone flexibility is incorporated into comparative modeling and protein design methods [15].

Experimental Protocols for Integrated Flexibility Modeling

Protocol 1: Development of a Smoothed Backbone-Dependent Rotamer Library

Data Curation: Collect high-resolution protein structures from the Protein Data Bank, applying quality filters based on resolution and electron density map quality [15].

Density-Based Filtering: Implement algorithms like REDUCE to assign optimal orientations for ambiguous groups such as Asn/Gln side-chain amides and His ring flips [15].

Adaptive Kernel Density Estimation: For each rotamer r of a given residue type, determine a probability density estimate ρ(φ,ψ|r) using von Mises distribution kernels with bandwidths adapted to local data density [15].

Bayesian Inversion: Apply Bayes' theorem to convert ρ(φ,ψ|r) to P(r|φ,ψ) using backbone-independent rotamer probabilities P(r) as priors [15].

Kernel Regression for Dihedral Angles: Use adaptive kernel regression estimators to determine mean dihedral angles and variances as functions of backbone conformation [15].

Non-Rotameric Degrees of Freedom: Model continuous probability density estimates for sp2-sp3 hybridized dihedral angles (e.g., Asn/Asp χ₂) as functions of backbone and rotameric degrees of freedom [15].
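Steps 3 and 4 can be sketched with NumPy. The density estimate uses a product of von Mises kernels, K(x) = exp(κ cos x) / (2π I₀(κ)), and Bayes' theorem then gives P(r | φ, ψ) = ρ(φ, ψ | r) P(r) / Σ_r′ ρ(φ, ψ | r′) P(r′). For simplicity this sketch uses a single fixed concentration `kappa` rather than adaptive bandwidths, and the synthetic angle samples and equal priors are hypothetical.

```python
import numpy as np

def von_mises_kde(phi, psi, data_phi, data_psi, kappa=20.0):
    """rho(phi, psi | r) from one rotamer's observed backbone angles (all in radians)."""
    norm = (2 * np.pi * np.i0(kappa)) ** 2  # normalizes the product of two von Mises kernels
    weights = np.exp(kappa * (np.cos(phi - data_phi) + np.cos(psi - data_psi)))
    return float(weights.sum() / (norm * len(data_phi)))

def rotamer_probabilities(phi, psi, samples, priors, kappa=20.0):
    """Bayesian inversion: P(r | phi, psi) is proportional to rho(phi, psi | r) * P(r)."""
    joint = {r: von_mises_kde(phi, psi, *samples[r], kappa=kappa) * priors[r] for r in samples}
    total = sum(joint.values())
    return {r: value / total for r, value in joint.items()}

# Hypothetical data: one rotamer observed mostly at helical (phi, psi),
# another mostly at beta-strand (phi, psi).
rng = np.random.default_rng(0)
samples = {
    "g+": (rng.normal(np.radians(-63), 0.2, 200), rng.normal(np.radians(-41), 0.2, 200)),
    "t": (rng.normal(np.radians(-120), 0.2, 200), rng.normal(np.radians(130), 0.2, 200)),
}
priors = {"g+": 0.5, "t": 0.5}  # backbone-independent P(r), assumed equal here
probs = rotamer_probabilities(np.radians(-63), np.radians(-41), samples, priors)
```

Because each kernel depends on cos(φ - φ_k), the estimate is continuous across the ±180° boundary, which is the smoothness property that gradient-based backbone minimization relies on.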

Protocol 2: MEDFORD Library Development via Metadynamics

Dipeptide System Preparation: Construct Ace-X-Nme dipeptides for each amino acid of interest, with initial structures in both α-helical and β-sheet regions [16].

Force Field Selection: Employ the RSFF2 force field for canonical amino acids and AMBER ff99SB with GAFF parameters for noncanonical amino acids [16].

Bias-Exchange Metadynamics: Perform 200 ns simulations with one biased and five neutral replicas, applying a two-dimensional (φ,ψ) bias in the biased replica [16].

Convergence Validation: Calculate normalized integrated product (NIP) between distributions from different initial structures to verify sampling convergence [16].

Rotamer Probability Calculation: Combine data from all replicas and calculate P(χₐₗₗ|φ,ψ) by binning data in backbone dihedral space [16].

Rotamer Definition: For rotameric dihedrals, define three rotamers (r60, r180, r300) as (0°,120°], (120°,240°], and (240°,360°] respectively [16].
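The rotamer definition and the convergence check can be sketched as below. The NIP formula used here, Σ p q / sqrt(Σ p² · Σ q²) over histogram bins, is the common normalized-overlap form and is an assumption rather than code taken from the MEDFORD implementation; the function names and 10° bin width are hypothetical.

```python
import numpy as np

def rotamer_label(chi_deg):
    """Assign r60 (0,120], r180 (120,240], or r300 (240,360] to a chi angle in degrees."""
    chi = chi_deg % 360.0
    if chi == 0.0:
        chi = 360.0  # 0 degrees is the same angle as 360, which lies in (240, 360]
    if chi <= 120.0:
        return "r60"
    if chi <= 240.0:
        return "r180"
    return "r300"

def phi_psi_histogram(phi_deg, psi_deg, bin_width=10):
    """Normalized (phi, psi) histogram on [-180, 180] degrees."""
    edges = np.arange(-180, 180 + bin_width, bin_width)
    hist, _, _ = np.histogram2d(phi_deg, psi_deg, bins=[edges, edges])
    return hist / hist.sum()

def normalized_integrated_product(p, q):
    """NIP = sum(p*q) / sqrt(sum(p^2) * sum(q^2)); 1.0 means identically shaped distributions."""
    p = np.asarray(p, dtype=float).ravel()
    q = np.asarray(q, dtype=float).ravel()
    return float(np.sum(p * q) / np.sqrt(np.sum(p**2) * np.sum(q**2)))
```

In use, the histograms from the runs started in the α-helical and β-sheet regions would be compared with `normalized_integrated_product`; values approaching 1 indicate that both starting points sampled the same distribution.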

Diagram 2: Rotamer Library Development Workflow - A comprehensive workflow for developing both statistics-based and simulation-based rotamer libraries, showing key steps and methodological choices.

Applications in Protein Design and Structural Biology

Structure Prediction and Validation

Rotamer libraries serve as essential components in protein structure prediction pipelines, providing discrete conformational search spaces and statistical energy terms that guide side-chain placement. In homology modeling, tools like SCWRL leverage backbone-dependent rotamer libraries to rapidly assemble side-chain conformations onto model backbones, achieving prediction accuracies of approximately 85% for χ₁ angles when building side-chains onto native backbones [18]. Perhaps more importantly, these methods maintain useful prediction accuracy (approximately 74% for χ₁) in homology modeling scenarios where side-chains are placed onto non-native backbones, demonstrating their value for practical modeling applications [18].

In structure validation, rotamer libraries provide statistical benchmarks for identifying unusual side-chain conformations that may indicate modeling errors or interesting biological phenomena. The backbone-dependent probabilities enable context-specific assessment of side-chain geometry, distinguishing between energetically unfavorable conformations and those stabilized by specific backbone environments [15].

Computational Protein Design

The most extensive application of rotamer libraries lies in computational protein design, where they define the conformational search space for sequence optimization. Rotamer-based design methods explore the vast combinatorial space of amino acid sequences and their conformations by evaluating rotamer combinations using physics-based and knowledge-based energy functions [21] [8]. The integration of backbone flexibility has been particularly transformative for design applications, enabling the creation of novel protein folds and functions not observed in nature [21] [22].

Recent advances incorporate machine learning and deep learning approaches with traditional rotamer-based methods, leading to powerful hybrid systems that leverage both physical principles and statistical patterns learned from protein databases [21] [22]. These integrated approaches have produced remarkable successes in de novo protein design, including the creation of custom enzymes, protein-based materials, and therapeutic candidates with precisely tuned properties [21] [8].

Future Directions and Emerging Challenges

The field of flexible protein modeling continues to evolve rapidly, driven by advances in computational power, algorithmic innovation, and expanding structural databases. Several promising directions are shaping the next generation of rotamer libraries and backbone sampling methods. Machine learning-enhanced sampling approaches are reducing computational costs while improving coverage of conformational space [21] [22]. Multi-state design methodologies are addressing the challenge of designing proteins that perform functions requiring conformational changes [8]. Expanded coverage of noncanonical amino acids is enabling the design of proteins with novel chemical functionalities [16].

Despite these advances, significant challenges remain. Accurate energy function parameterization continues to limit the reliability of design predictions, particularly for polar interactions and electrostatic effects [22]. Conformational dynamics modeling across multiple timescales presents persistent computational challenges [20]. The integration of experimental data with computational models requires improved methods for reconciling structural, thermodynamic, and kinetic information [8]. Addressing these challenges will require continued collaboration between computational and experimental researchers, advancing both the theoretical foundations and practical applications of protein flexibility modeling in computational structural biology.

The twin challenges of navigating protein sequence space and conformational space represent fundamental problems in computational protein design (CPD). The sequence space for a typical protein encompasses an astronomically large number of possibilities (20^N for a protein of N residues), while the conformational space involves exploring the vast number of possible three-dimensional structures each sequence might adopt. Efficient algorithms that can search and optimize within these spaces are crucial for advancing protein design, enabling the creation of novel enzymes, therapeutics, and functional materials. This technical guide examines state-of-the-art algorithms for addressing these challenges, contextualized within the broader principles of computational protein design research.
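The scale of these spaces is easiest to grasp on a logarithmic scale. A small sketch; the protein length of 100 residues and the 200 amino-acid/rotamer states per position are illustrative assumptions.

```python
import math

def log10_sequence_space(n_residues, alphabet_size=20):
    """log10 of alphabet_size ** n_residues, computed without forming huge integers."""
    return n_residues * math.log10(alphabet_size)

def log10_search_space(states_per_position):
    """log10 of the product of the number of states (e.g. amino acid x rotamer) per position."""
    return sum(math.log10(k) for k in states_per_position)

print(round(log10_sequence_space(100), 1))        # 130.1, i.e. about 10^130 sequences
print(round(log10_search_space([200] * 100), 1))  # 230.1 with 200 hypothetical states per position
```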

The computational complexity of these problems is substantial. Multiple sequence alignment (MSA), a foundational bioinformatics problem, is known to be NP-complete [23]. Similarly, protein design involves searching through combinatorial sequence and conformational spaces that grow exponentially with protein size [24]. This guide systematically reviews algorithmic strategies—from traditional global optimization techniques to modern deep learning approaches—that enable researchers and drug development professionals to efficiently navigate these complex spaces.

Algorithmic Foundations for Space Navigation

Traditional Optimization Approaches

Traditional approaches to navigating sequence and conformational spaces have relied on rigorous optimization frameworks. These methods formulate protein design as discrete optimization problems and employ advanced algorithmic techniques to find optimal or near-optimal solutions.

Conformational Space Annealing (CSA) combines elements of simulated annealing, genetic algorithms, and Monte Carlo with minimization to maintain conformational diversity while searching for low-energy states [23]. The algorithm maintains a bank of diverse local minima and systematically explores the neighborhood of these solutions. Its application to multiple sequence alignment (MSACSA) demonstrated superior performance compared to progressive alignment methods by more effectively satisfying pairwise constraints [23].
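The bank-and-cutoff mechanism that lets CSA retain diverse low-energy solutions can be sketched on a toy binary problem. This is a simplified illustration under stated assumptions: solutions are bit strings, distance is Hamming distance, trial solutions come from single-point crossover followed by greedy bit-flip descent, and the spin-glass energy is hypothetical. Published CSA implementations use problem-specific distance measures, perturbation operators, and local minimizers.

```python
import itertools
import random

def make_toy_energy(n_bits, seed=1):
    """Hypothetical spin-glass energy over bit strings, standing in for a design energy."""
    rng = random.Random(seed)
    h = [rng.uniform(-1, 1) for _ in range(n_bits)]
    J = {(i, j): rng.uniform(-1, 1) for i in range(n_bits) for j in range(i + 1, n_bits)}
    def energy(x):
        s = [2 * b - 1 for b in x]  # map bits {0, 1} to spins {-1, +1}
        return sum(h[i] * s[i] for i in range(n_bits)) + sum(J[i, j] * s[i] * s[j] for i, j in J)
    return energy

def csa_minimize(energy, n_bits, bank_size=20, n_rounds=30, n_trials=10, seed=0):
    """Simplified conformational space annealing over bit strings (n_bits >= 2)."""
    rng = random.Random(seed)

    def local_min(x):
        # Greedy single-bit-flip descent stands in for CSA's local minimization step.
        x, e = x[:], energy(x)
        improved = True
        while improved:
            improved = False
            for i in range(n_bits):
                x[i] ^= 1
                e_new = energy(x)
                if e_new < e:
                    e, improved = e_new, True
                else:
                    x[i] ^= 1  # undo the flip
        return x, e

    def dist(a, b):
        return sum(u != v for u, v in zip(a, b))  # Hamming distance between solutions

    bank = [local_min([rng.randint(0, 1) for _ in range(n_bits)]) for _ in range(bank_size)]
    pairs = list(itertools.combinations(range(bank_size), 2))
    d_avg = max(1.0, sum(dist(bank[a][0], bank[b][0]) for a, b in pairs) / len(pairs))
    d_cut = d_avg / 2
    shrink = 0.4 ** (1 / n_rounds)  # anneal D_cut from D_avg/2 down to D_avg/5

    for _ in range(n_rounds):
        for _ in range(n_trials):
            parent, partner = rng.choice(bank)[0], rng.choice(bank)[0]
            cut = rng.randrange(1, n_bits)
            trial, e_trial = local_min(parent[:cut] + partner[cut:])  # crossover, then minimize
            nearest = min(range(bank_size), key=lambda k: dist(trial, bank[k][0]))
            if dist(trial, bank[nearest][0]) < d_cut:
                if e_trial < bank[nearest][1]:
                    bank[nearest] = (trial, e_trial)  # similar to a bank member: keep the better one
            else:
                worst = max(range(bank_size), key=lambda k: bank[k][1])
                if e_trial < bank[worst][1]:
                    bank[worst] = (trial, e_trial)  # distinct and better than the worst: adds diversity
        d_cut *= shrink
    return min(bank, key=lambda b: b[1])

def brute_force_minimum(energy, n_bits):
    """Exhaustive reference for small toy problems."""
    return min(energy(list(bits)) for bits in itertools.product([0, 1], repeat=n_bits))

energy = make_toy_energy(12)
best_x, best_e = csa_minimize(energy, 12)
```

Shrinking `d_cut` over the rounds shifts the bank from preserving diversity early on toward refining the best solutions late in the search.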

Cost Function Network (CFN) processing has been integrated into protein design packages like Osprey to significantly accelerate provable rigid backbone design methods [24]. By combining CFN lower bounds with A* search and novel side-chain positioning-based branching schemes, this approach enables much faster enumeration of suboptimal sequences, expanding the accessible solution space for CPD problems [24].
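The A* component can be sketched for a small pairwise rotamer problem. Positions are assigned in order, g is the exact energy of the assigned positions, and h is a simple admissible lower bound on the remainder. With an admissible bound, complete assignments are popped in order of increasing energy, which is what makes gap-free enumeration of suboptimal solutions possible. This bound is a textbook choice, not the Cost Function Network bounds used in Osprey, and the data layout and random test problem are assumptions.

```python
import heapq
import itertools
import random

def pair_energy(pair_e, i, r, j, s):
    """E(i_r, j_s); each pair table is stored once, keyed by (i, j) with i < j."""
    return pair_e[(i, j)][r][s] if i < j else pair_e[(j, i)][s][r]

def total_energy(self_e, pair_e, assign):
    """Exact energy of the positions assigned so far (positions 0 .. len(assign) - 1)."""
    m = len(assign)
    return (sum(self_e[i][assign[i]] for i in range(m))
            + sum(pair_energy(pair_e, i, assign[i], j, assign[j])
                  for i in range(m) for j in range(i + 1, m)))

def lower_bound(self_e, pair_e, assign):
    """Admissible bound on the energy still to come from the unassigned positions."""
    n, m = len(self_e), len(assign)
    h = 0.0
    for i in range(m, n):
        # Position i picks its best rotamer given the fixed positions; each pair among
        # unassigned positions is charged to its lower index using an optimistic minimum.
        h += min(self_e[i][r]
                 + sum(pair_energy(pair_e, i, r, j, assign[j]) for j in range(m))
                 + sum(min(pair_energy(pair_e, i, r, k, s) for s in range(len(self_e[k])))
                       for k in range(i + 1, n))
                 for r in range(len(self_e[i])))
    return h

def astar_enumerate(self_e, pair_e, n_solutions=1):
    """Return up to n_solutions full assignments in order of increasing energy."""
    n = len(self_e)
    tie = itertools.count()  # tie-breaker so the heap never compares assignments
    heap = [(lower_bound(self_e, pair_e, ()), next(tie), ())]
    solutions = []
    while heap and len(solutions) < n_solutions:
        f, _, assign = heapq.heappop(heap)
        if len(assign) == n:
            solutions.append((assign, f))  # complete node: f is its exact energy
            continue
        i = len(assign)
        for r in range(len(self_e[i])):
            child = assign + (r,)
            f_child = total_energy(self_e, pair_e, child) + lower_bound(self_e, pair_e, child)
            heapq.heappush(heap, (f_child, next(tie), child))
    return solutions

def random_problem(n_pos=5, n_rot=3, seed=3):
    """Hypothetical random pairwise energy tables."""
    rng = random.Random(seed)
    self_e = [[rng.uniform(-2, 2) for _ in range(n_rot)] for _ in range(n_pos)]
    pair_e = {(i, j): [[rng.uniform(-1, 1) for _ in range(n_rot)] for _ in range(n_rot)]
              for i in range(n_pos) for j in range(i + 1, n_pos)}
    return self_e, pair_e

self_e, pair_e = random_problem()
solutions = astar_enumerate(self_e, pair_e, n_solutions=3)
```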

Table 1: Traditional Optimization Algorithms for Sequence and Conformational Space Navigation

| Algorithm | Optimization Approach | Key Features | Application Examples |
|---|---|---|---|
| Conformational Space Annealing (CSA) | Hybrid: SA, GA, MCM | Maintains diverse local minima; distance measure between conformations | MSACSA for multiple sequence alignment [23] |
| Cost Function Network + A* Search | Combinatorial optimization | Provable guarantees; efficient lower bounds; suboptimal solution enumeration | Osprey CPD package for protein design [24] |
| Simulated Annealing (SA) | Stochastic global optimization | Simple implementation; versatile application | Sum-of-pairs score optimization for MSA [23] |

AI-Driven Approaches

Recent advances in deep learning have revolutionized navigation of protein sequence and conformational spaces. These methods learn complex relationships from structural data, enabling more efficient exploration and optimization.

CARBonAra (Context-aware Amino acid Recovery from Backbone Atoms and heteroatoms) is a protein sequence generator based on the Protein Structure Transformer (PeSTo) architecture [25]. This geometric transformer operates solely on atomic coordinates and element names, allowing it to process proteins in complex with diverse molecular entities (small molecules, nucleic acids, lipids, etc.). The model achieves a median sequence recovery rate of 51.3% for monomer design and 56.0% for dimer design, performing competitively with state-of-the-art methods while offering unique context-aware capabilities [25].
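Sequence recovery, the metric behind these figures, is the fraction of positions at which the designed residue matches the native one. A minimal sketch of the per-structure metric and a median over a benchmark; the helper names are hypothetical.

```python
from statistics import median

def sequence_recovery(designed, native):
    """Fraction of aligned positions where the designed residue equals the native residue."""
    if len(designed) != len(native):
        raise ValueError("designed and native sequences must have the same length")
    return sum(a == b for a, b in zip(designed, native)) / len(native)

def median_recovery(pairs):
    """Median recovery over (designed, native) pairs, one pair per benchmark structure."""
    return median(sequence_recovery(d, n) for d, n in pairs)
```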

PVQD (Protein Vector Quantization and Diffusion) employs a vector-quantized autoencoder to learn discrete latent representations of protein backbones, combined with denoising diffusion probabilistic models for generation [26]. Unlike methods that diffuse directly in 3D space, PVQD operates in a learned latent space, enabling unified structure prediction and design while better capturing conformational dynamics. The approach generates backbones with natural-like compositions of secondary structures and reproduces experimental structural variations in benchmark proteins [26].

Table 2: AI-Driven Methods for Protein Sequence and Structure Exploration

| Method | Architecture | Key Capabilities | Performance Metrics |
|---|---|---|---|
| CARBonAra | Geometric Transformer | Context-aware sequence design; handles non-protein entities | 51.3% monomer, 56.0% dimer sequence recovery [25] |
| PVQD | Vector-Quantized Autoencoder + Diffusion | Unified prediction/design; models conformational dynamics | recRMSD < 2.0 Å reconstruction accuracy [26] |
| ECNet | Evolutionary Context-Integrated Neural Network | Combines local and global evolutionary contexts | High success rate in β-lactamase engineering [27] |

Quantitative Performance Comparison

Rigorous evaluation of algorithmic performance is essential for selecting appropriate methods for specific protein design challenges. The following table summarizes key quantitative metrics for recently developed approaches.

Table 3: Quantitative Performance Comparison of Navigation Algorithms

| Method | Sequence Recovery | Structure Accuracy | Computational Efficiency | Key Advantages |
|---|---|---|---|---|
| MSACSA | N/A (alignment method) | More accurate than SPEM | N/A | Provides diverse suboptimal alignments [23] |
| CARBonAra | 51.3% (monomer), 56.0% (dimer) | TM-score > 0.9 (AF2 prediction) | ~3x faster than ProteinMPNN | Molecular context awareness [25] |
| PVQD | N/A | recRMSD < 2.0 Å (reconstruction) | Competitive with direct 3D diffusion | Models conformational flexibility [26] |
| CFN-based A* | N/A | GMEC (global minimum energy conformation) identification | Orders of magnitude speedup | Provable optimality guarantees [24] |

Experimental Protocols and Methodologies

MSACSA Implementation Protocol

The MSACSA algorithm implements a direct optimization approach for multiple sequence alignment through the following detailed methodology:

Library Construction: Generate a library of pairwise constraints by performing pairwise alignment for all combinations of sequence pairs using a third-party alignment method [23].

Weight Assignment: Weight each aligned residue pair by the correlation coefficient between the PSI-BLAST profiles of its two residues, then linearly rescale the weights to 0.01 ≤ w ≤ 1.0 [23].

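Energy Function Definition: Define the energy of an alignment A with N sequences and M aligned columns as $E(A) = 1 - \frac{\sum w_{ij}}{\sum W_{ij}}$, where $w_{ij}$ are the weights of library residue pairs that are aligned in A and $W_{ij}$ are the weights of all residue pairs in the library. E(A) therefore approaches 0 as the alignment reproduces more of the library [23].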

Local Minimization: Perform stochastic quenching through horizontal and vertical moves consisting of random insertion, deletion, and relocation of gaps. Continue until no improvement is found for $10 N L_{\max}$ consecutive attempts, where N is the number of sequences and $L_{\max}$ is the length of the longest sequence [23].

Conformational Space Exploration: Maintain a bank of diverse local minima using a distance measure between alignments based on residue mismatches in pairwise sequence alignments [23].
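The weighting and energy steps can be sketched as follows. This is a minimal illustration under several assumptions: alignments are lists of equal-length gapped strings using '-' for gaps, library entries are keyed by hypothetical (sequence_a, residue_a, sequence_b, residue_b) tuples, the raw scores stand in for PSI-BLAST profile correlations, and the normalizing sum in E(A) runs over every pair in the library.

```python
from itertools import combinations


def rescale(raw, lo=0.01, hi=1.0):
    """Linearly map raw pair scores (e.g. profile correlations) onto [lo, hi]."""
    cmin, cmax = min(raw.values()), max(raw.values())
    span = (cmax - cmin) or 1.0  # avoid dividing by zero when all scores are equal
    return {key: lo + (v - cmin) / span * (hi - lo) for key, v in raw.items()}


def aligned_pairs(alignment):
    """Residue pairs (seq_a, res_a, seq_b, res_b) that share a column in the alignment."""
    counters = [0] * len(alignment)  # next ungapped residue index for each sequence
    pairs = set()
    for col in range(len(alignment[0])):
        present = {}
        for s, row in enumerate(alignment):
            if row[col] != "-":
                present[s] = counters[s]
                counters[s] += 1
        for a, b in combinations(sorted(present), 2):
            pairs.add((a, present[a], b, present[b]))
    return pairs


def msa_energy(alignment, library):
    """E(A) = 1 - (library weight reproduced by A) / (total library weight)."""
    realized = aligned_pairs(alignment)
    hit = sum(w for pair, w in library.items() if pair in realized)
    return 1.0 - hit / sum(library.values())
```

For example, raw scores 0.9, 0.5 and 0.1 rescale to 1.0, 0.505 and 0.01; an alignment that reproduces the first and last of these pairs has E(A) = 1 - 1.01/1.515 = 1/3.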

CARBonAra Training and Inference Protocol

The CARBonAra model implements context-aware protein sequence design through the following experimental methodology:

Data Preparation:

- Collect approximately 370,000 subunits from RCSB PDB biological assemblies, with an additional 100,000 subunits for validation [25].

- Create a testing dataset of ~70,000 subunits distinct from the training set with no shared CATH domains and filtered at <30% sequence identity [25].

- Include all molecular entities in complexes (ions, ligands, nucleic acids) during training.

Model Architecture:

- Input: Coordinates and elements of backbone atoms (Cα, C, N, O) with virtual Cβ atoms added using ideal bond angles and lengths [25].

- Core: Geometric transformer operations processing information from 8 to 64 nearest neighbors, with equivariant encoding of vectorial state and invariant scalar state [25].

- Output: Position-specific scoring matrix (PSSM) representing amino acid confidences at each position.
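The input featurization can be sketched as below: a virtual Cβ placed from N, Cα and C, and a k-nearest-neighbor lookup like the 8 to 64 neighbors mentioned above. The Cβ coefficients are the empirical ideal-geometry constants used in ProteinMPNN-style featurization code; whether CARBonAra uses exactly these values, and which atoms it takes neighbors over, are assumptions of this sketch.

```python
import numpy as np


def virtual_cb(n, ca, c):
    """Ideal-geometry virtual C-beta positions from backbone N, CA, C arrays of shape (L, 3)."""
    b = ca - n
    c_vec = c - ca
    a = np.cross(b, c_vec)
    # Empirical coefficients as used in ProteinMPNN-style featurization (assumed here)
    return -0.58273431 * a + 0.56802827 * b - 0.54067466 * c_vec + ca


def k_nearest(points, k):
    """Indices of the k nearest neighbours of each point, excluding the point itself."""
    d = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=-1)
    np.fill_diagonal(d, np.inf)  # a point is never its own neighbour
    k = min(k, len(points) - 1)
    return np.argsort(d, axis=1)[:, :k]
```

For an ideal backbone geometry the placed Cβ sits roughly 1.5 Å from Cα, as expected for a real Cα-Cβ bond.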

Sequence Sampling:

- Utilize multi-class amino acid predictions to generate a space of potential sequences.

- Implement strategies for tailored sequence generation to achieve specific objectives like minimal sequence identity or low sequence similarity [25].

- Enable autoregressive predictions by imprinting prior sequence information into backbone atoms using one-hot encoding.
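Turning the per-position output into sequences might look like the sketch below: temperature-scaled sampling from the probability matrix, plus a greedy consensus that can forbid the residues of a reference sequence as one simple way to push sequence identity down. The temperature parameter and the exclusion rule are illustrative assumptions, not CARBonAra's documented sampling strategies.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def sample_sequences(probs, n_samples, temperature=1.0, seed=0):
    """Draw sequences from a per-position probability matrix of shape (L, 20)."""
    rng = np.random.default_rng(seed)
    logits = np.log(np.clip(probs, 1e-9, None)) / temperature
    p = np.exp(logits - logits.max(axis=1, keepdims=True))  # numerically stable softmax
    p /= p.sum(axis=1, keepdims=True)
    seqs = []
    for _ in range(n_samples):
        idx = [rng.choice(len(AMINO_ACIDS), p=row) for row in p]
        seqs.append("".join(AMINO_ACIDS[i] for i in idx))
    return seqs


def consensus(probs, exclude=None):
    """Most confident residue per position; optionally forbid a reference sequence's residues."""
    p = np.array(probs, dtype=float)
    if exclude is not None:
        for i, aa in enumerate(exclude):
            p[i, AMINO_ACIDS.index(aa)] = -np.inf  # hypothetical zero-identity constraint
    return "".join(AMINO_ACIDS[i] for i in p.argmax(axis=1))
```

Lower temperatures concentrate samples on the most confident residues, while higher temperatures trade confidence for sequence diversity.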

PVQD Framework Protocol

The PVQD method for protein backbone generation and conformational sampling implements the following methodology:

Auto-encoder Training:

- Train a vector-quantized autoencoder (VQ-VAE) with an 8192-vector codebook to represent residue structural contexts as discrete codes [26].

- Use an SE(3)-invariant encoder to embed residue structures into latent space.

- Employ a structure decoder to reconstruct 3D structures from quantized vectors.

- Fine-tune the decoder on structures cropped to 640 residues to improve reconstruction accuracy on larger proteins [26].
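The quantization step that turns continuous residue embeddings into discrete codes is a nearest-neighbor lookup against the codebook. A minimal sketch, assuming Euclidean distance and leaving out training details such as the straight-through gradient and commitment loss:

```python
import numpy as np


def quantize(z, codebook):
    """Map each continuous residue embedding to its nearest codebook vector.

    z: (L, D) encoder outputs; codebook: (K, D), e.g. K = 8192 as in PVQD.
    Returns the discrete codes (L,) and the quantized vectors (L, D).
    """
    # Squared Euclidean distances via ||z||^2 - 2 z.e + ||e||^2, shape (L, K)
    d = (z ** 2).sum(axis=1, keepdims=True) - 2.0 * z @ codebook.T + (codebook ** 2).sum(axis=1)
    codes = d.argmin(axis=1)
    return codes, codebook[codes]
```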

Latent Space Diffusion:

- Implement Gaussian noise diffusion in the learned latent space to model joint distribution of quantization vectors.

- Use 400 denoising steps with a linear noise schedule [26].

- Enable sequence-conditioned generation by embedding conditions during fine-tuning of the unconditioned diffusion network.
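The forward (noising) half of the latent diffusion process can be sketched with a linear beta schedule over 400 steps. The endpoints beta_start = 1e-4 and beta_end = 0.02 are the common DDPM defaults and serve only as placeholders, since PVQD's values are not stated above. With these placeholders the cumulative product ᾱ at the final step is still roughly 0.02, so in practice the endpoints are tuned so that the last latent is close to pure noise.

```python
import numpy as np


def linear_schedule(T=400, beta_start=1e-4, beta_end=0.02):
    """Linear beta schedule; returns betas and cumulative products alpha_bar."""
    betas = np.linspace(beta_start, beta_end, T)
    alpha_bar = np.cumprod(1.0 - betas)
    return betas, alpha_bar


def q_sample(x0, t, alpha_bar, rng):
    """Sample x_t ~ q(x_t | x_0) = N(sqrt(abar_t) x_0, (1 - abar_t) I); also return the noise."""
    noise = rng.standard_normal(x0.shape)
    xt = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * noise
    return xt, noise
```

A denoising network is then trained to predict `noise` from `xt` and `t`; generation runs the learned reverse process from noise back to latent vectors that the decoder turns into structures.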

Structure Generation and Evaluation:

- Sample vectors from the diffusion model and decode into 3D structures with the pre-trained decoder.

- Evaluate generated backbones using mutual TM-scores for diversity and self-consistent RMSD (scRMSD) for designability [26].

- Compare against direct-3D-diffusion methods (SCUBA-D, Chroma, RFdiffusion) for structural biases and conformational dynamics.
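Designability is usually checked by predicting a structure for a sequence designed on the generated backbone and superimposing the two. A minimal sketch on matched Cα coordinates, assuming a one-to-one residue correspondence: Kabsch superposition, scRMSD, and a TM-score evaluated at that superposition. Dedicated TM-score programs search for the superposition that maximizes the score, so the value computed here is a lower bound on theirs.

```python
import numpy as np


def superpose(P, Q):
    """Optimally rotate centred P onto centred Q (Kabsch); both arrays are (N, 3)."""
    P = P - P.mean(axis=0)
    Q = Q - Q.mean(axis=0)
    U, _, Vt = np.linalg.svd(P.T @ Q)
    d = np.sign(np.linalg.det(Vt.T @ U.T))  # guard against reflections
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    return P @ R.T, Q


def sc_rmsd(design_ca, predicted_ca):
    """RMSD between designed and predicted coordinates after superposition."""
    P, Q = superpose(design_ca, predicted_ca)
    return float(np.sqrt(((P - Q) ** 2).sum(axis=1).mean()))


def tm_score_at_kabsch(design_ca, predicted_ca):
    """TM-score at the RMSD-optimal superposition (a lower bound on the optimized TM-score)."""
    P, Q = superpose(design_ca, predicted_ca)
    L = len(Q)
    d0 = 1.24 * (L - 15) ** (1.0 / 3.0) - 1.8 if L > 15 else 0.5
    d0 = max(d0, 0.5)
    d = np.linalg.norm(P - Q, axis=1)
    return float(np.mean(1.0 / (1.0 + (d / d0) ** 2)))
```

Mutual TM-scores for diversity follow the same pattern, computed between pairs of generated backbones rather than between a design and its prediction.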

Visualization of Algorithmic Workflows

Figure: MSACSA optimization workflow. The iterative process of Conformational Space Annealing for multiple sequence alignment, showing how diverse solutions are maintained and refined.

Figure: CARBonAra model architecture. The flow of information through the geometric transformer, from input coordinates to sequence probabilities and final sequence sampling.

Figure: PVQD generation pipeline. The two-stage process of vector quantization followed by latent-space diffusion for protein backbone generation and conformational sampling.

Table 4: Key Computational Tools and Resources for Protein Space Navigation

| Tool/Resource | Type | Function | Application Context |
|---|---|---|---|
| Osprey with CFN | Software Package | Provable protein design with Cost Function Networks | Rigid backbone protein design with guarantees [24] |
| ProteinMPNN | Deep Learning Model | Protein sequence design from backbone structures | High-accuracy sequence design for given scaffolds [25] |
| AlphaFold2 | Structure Prediction | Protein structure prediction from sequence | Validation of designed sequences and structures [25] |
| RFdiffusion | Generative Model | De novo protein backbone generation | Creating novel protein scaffolds [26] |
| PSI-BLAST | Bioinformatics Tool | Profile construction and homology detection | Generating evolutionary information for MSAs [23] |
| RCSB PDB | Database | Experimental protein structures | Training data source and validation benchmark [25] |

Efficient navigation of sequence and conformational spaces remains a central challenge in computational protein design, with significant implications for drug development and protein engineering. This technical guide has examined algorithmic strategies ranging from traditional optimization approaches like conformational space annealing to modern AI-driven methods such as geometric transformers and latent space diffusion models.

Integrating these approaches is a clear direction for the field. Methods like CARBonAra, which incorporates molecular context, and PVQD, which unifies structure prediction and design, illustrate the trend toward more versatile, context-aware algorithms. As these methods mature, they should expand our capability to design proteins with novel functions, advancing applications in therapeutics, biocatalysis, and biomaterials.

For researchers and drug development professionals, selecting the appropriate algorithmic strategy depends on specific design objectives: traditional optimization methods provide mathematical guarantees for well-defined problems, while AI-driven approaches offer greater flexibility and context awareness for complex design challenges. The continued development and integration of these approaches will further our fundamental understanding of sequence-structure-function relationships and expand the scope of designable proteins.

The field of computational protein design (CPD) has traditionally been framed as an inverse folding problem: given a target backbone structure, identify a sequence that will fold into it [28]. This framing was productive, but design campaigns often still depended on laborious, low-throughput experimental methods such as directed evolution and were constrained by our incomplete understanding of biophysics [13]. Deep learning has fundamentally changed this picture, shifting the field from structure-to-sequence optimization toward a generative process in which novel proteins with tailored functions are created from simple molecular specifications [12] [13]. Powered by architectures such as diffusion models and protein language models, this generative paradigm enables the de novo creation of protein structures and sequences that not only fold stably but also perform specific biological functions, from binding targets to catalyzing reactions [12] [5].

Two developments drove this breakthrough. First, structure prediction networks such as AlphaFold2 and RoseTTAFold learned a detailed, implicit model of protein structure that design methods can build on [12] [13]. Second, generative models adapted from image and language generation proved able to sample the vast protein sequence and structure space well beyond the natural proteins observed in the Protein Data Bank [12] [28]. This whitepaper examines the core principles, methodologies, and applications of this generative shift, providing researchers with a technical framework for using these tools in computational protein design research.

Core Principles of Generative Protein Design

Generative protein design is underpinned by several foundational principles that distinguish it from traditional computational approaches. A key insight is that native protein structures represent low free energy states, and stabilizing forces—particularly hydrophobic core formation and hydrogen bonding—can be systematically engineered through computational means [5] [8]. The generative approach leverages this by using deep learning networks as universal approximators to learn the complex relationships between sequence, structure, and function from vast biological datasets [28].

These models have several crucial properties. They are rotationally equivariant: when the input structure is rotated, their outputs rotate accordingly, so their treatment of three-dimensional geometry does not depend on an arbitrary global reference frame, which is essential for realistic protein geometry [12]. They also support conditional generation, in which each step of the design process is guided by conditioning information such as partial structures, functional motifs, or symmetry constraints [12]. This capability turns protein design from a problem of structure completion into one of programmable creation from specifications.
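Equivariance and invariance can be checked numerically. In the sketch below a random rotation is applied to a toy point cloud: pairwise distances (an invariant feature) do not change, while displacement vectors from the centroid (an equivariant feature) rotate with the input. This illustrates the property only and is not the architecture of any specific model.

```python
import numpy as np


def random_rotation(rng):
    """Uniformly random 3D rotation from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.standard_normal((3, 3)))
    q *= np.sign(np.diag(r))  # fix column signs so the decomposition is unique
    if np.linalg.det(q) < 0:  # flip one column to obtain a proper rotation
        q[:, 0] = -q[:, 0]
    return q


def pairwise_distances(x):
    """Invariant feature: all inter-point distances, shape (N, N)."""
    return np.linalg.norm(x[:, None, :] - x[None, :, :], axis=-1)


def centroid_displacements(x):
    """Equivariant feature: each point's vector from the centroid, shape (N, 3)."""
    return x - x.mean(axis=0)
```

With `R = random_rotation(rng)`, `pairwise_distances(x @ R.T)` matches `pairwise_distances(x)`, and `centroid_displacements(x @ R.T)` matches `centroid_displacements(x) @ R.T`.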

Table 1: Fundamental Forces in Protein Folding and Stability

| Force/Interaction | Role in Protein Stability | Generative Design Application |
|---|---|---|
| Hydrophobic Effect | Forms hydrophobic core; segregates non-polar residues from solvent [8] | Core packing optimization in de novo designs |
| Hydrogen Bonding | Stabilizes secondary structures (e.g., β-sheets); enables resistance to mechanical stress [5] | Deliberate network maximization for extreme stability |
| Electrostatic Interactions | Salt bridges and polar interactions on protein surface [8] | Functional site engineering for binding and catalysis |

The Generative AI Toolbox for Protein Design

The generative protein design workflow integrates specialized tools that operate in a coordinated framework. A 2025 review in Nature Reviews Bioengineering formalized this process into a systematic, seven-part toolkit that maps AI tools to specific stages of the protein design lifecycle [13].

- Protein Database Search (T1): Identifying structural and sequence homologs for inspiration.

- Protein Structure Prediction (T2): Predicting 3D structures from sequences using tools like AlphaFold2.

- Protein Function Prediction (T3): Annotating function and identifying binding sites.

- Protein Sequence Generation (T4): Generating novel sequences based on evolutionary patterns or structural constraints.

- Protein Structure Generation (T5): Creating novel protein backbones de novo or from templates.

- Virtual Screening (T6): Computationally assessing candidates for properties like stability and binding.

- DNA Synthesis & Cloning (T7): Translating final designs into DNA sequences for experimental expression [13].

This framework transforms a collection of powerful but disconnected tools into a coherent engineering discipline, enabling researchers to construct customized workflows for specific design challenges [13].
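The final stage (T7) begins with back-translation of a designed protein into DNA. A minimal sketch, assuming one fixed codon per amino acid; production workflows typically also apply host-specific codon optimization and remove unwanted restriction sites or repeats, which are omitted here.

```python
# One fixed codon per amino acid: an illustrative choice, not an optimized codon table
CODON = {
    "A": "GCG", "R": "CGT", "N": "AAC", "D": "GAT", "C": "TGC",
    "Q": "CAG", "E": "GAA", "G": "GGC", "H": "CAT", "I": "ATT",
    "L": "CTG", "K": "AAA", "M": "ATG", "F": "TTT", "P": "CCG",
    "S": "AGC", "T": "ACC", "W": "TGG", "Y": "TAT", "V": "GTG",
}
STOP = "TAA"


def back_translate(protein, add_stop=True):
    """Encode a protein sequence as DNA using the fixed codon table above."""
    try:
        dna = "".join(CODON[aa] for aa in protein.upper())
    except KeyError as err:
        raise ValueError(f"unsupported residue: {err.args[0]}") from None
    return dna + (STOP if add_stop else "")


def translate(dna):
    """Inverse lookup for this table only, used to check the round trip."""
    inverse = {codon: aa for aa, codon in CODON.items()}
    inverse[STOP] = "*"
    return "".join(inverse[dna[i:i + 3]] for i in range(0, len(dna), 3))
```

For example, `back_translate("MKV")` yields `ATGAAAGTGTAA`, and translating it back gives `MKV*`.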

Key Generative Models and Architectures