Comparative Assessment of Protein Large Language Models: Performance, Applications, and Future Directions in Bioinformatics and Drug Discovery

Abstract

Protein Large Language Models (PLMs), built on transformer architectures, are revolutionizing the analysis of protein sequences for function prediction, structure determination, and de novo design. This article provides a comprehensive comparative assessment of major PLMs, including the ESM series, ProtBERT, and ProGen, evaluating their performance against traditional methods like BLASTp on critical tasks such as Enzyme Commission number prediction. We explore their foundational principles, diverse methodological applications across bioinformatics, and key challenges such as data scarcity and model interpretability. Aimed at researchers, scientists, and drug development professionals, this review synthesizes empirical evidence to guide model selection, highlights emerging best practices for optimization, and outlines the transformative potential of integrating PLMs into biomedical research pipelines.

From Text to Proteins: Demystifying the Architecture and Core Concepts of Protein Language Models

The analogy of protein sequences as a language, where amino acids are words and structural motifs are sentences, has fundamentally reshaped computational biology. This perspective has enabled the application of powerful natural language processing (NLP) techniques to decode the complex relationship between protein sequence and function. Protein Language Models (pLMs), pre-trained on millions of protein sequences, learn deep statistical patterns and evolutionary constraints, allowing them to generate meaningful representations (embeddings) that predict various functional and structural properties [1] [2]. This guide provides a comparative assessment of leading pLMs, evaluating their performance across key biological tasks to inform researchers and drug development professionals in selecting optimal tools for their specific applications.

Performance Comparison of Major Protein Language Models

Benchmarking pLMs for Enzyme Function Prediction

A critical application of pLMs is predicting enzyme function, classified by Enzyme Commission (EC) numbers. A comprehensive 2025 study directly compared the performance of several pLMs against the traditional gold standard, BLASTp.

Table 1: Performance Comparison of pLMs and BLASTp on EC Number Prediction

| Model / Method | Overall Performance | Strength | Weakness |
|---|---|---|---|
| BLASTp | Marginally better overall [3] | Excels at predicting certain EC numbers, especially with clear homologs [3] | Cannot assign function to proteins without homologous sequences [3] |
| ESM2 | Best-performing pLM [3] | More accurate for difficult-to-annotate enzymes and sequences with <25% identity to known proteins [3] | Still requires improvement to surpass BLASTp in mainstream annotation [3] |
| ProtBERT | Competitive pLM performance [3] | Often fine-tuned for specific prediction tasks [3] | Performance context-dependent; a comprehensive comparison showed ESM2's superiority [3] |
| ESM1b | Good pLM performance [3] | Effective as a feature extractor [3] | Outperformed by the newer ESM2 model [3] |
| One-Hot Encoding DL Models | Lower performance [3] | - | Surpassed by pLMs combined with fully-connected neural networks [3] |

The study concluded that while BLASTp maintains a slight overall advantage, pLMs provide complementary predictions. An ensemble approach using both BLASTp and pLMs, particularly ESM2, was found to be more effective than either method alone [3].
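
The source does not describe how the ensemble was built, so the following is only a minimal sketch of one plausible scheme: trust the BLASTp best hit when its identity is high enough, and fall back to the pLM classifier otherwise. The function name, the input shapes, and both cutoffs are assumptions for illustration; the 25% identity cutoff simply echoes the range in Table 1 where ESM2 was reported to be more accurate.

```python
from typing import Dict, Optional, Set, Tuple


def ensemble_ec(
    blast_hit: Optional[Tuple[Set[str], float]],
    plm_scores: Dict[str, float],
    identity_cutoff: float = 25.0,  # assumed cutoff, in percent identity
    score_cutoff: float = 0.5,      # assumed probability threshold
) -> Set[str]:
    """Combine a BLASTp best hit with pLM classifier scores (illustrative).

    blast_hit  : (EC numbers of the best hit, percent identity), or None
                 when BLASTp finds no homolog.
    plm_scores : EC number -> predicted probability from a pLM classifier.
    """
    plm_labels = {ec for ec, p in plm_scores.items() if p >= score_cutoff}
    if blast_hit is None:
        # No homolog at all: only the pLM can make a prediction.
        return plm_labels
    hit_labels, identity = blast_hit
    if identity >= identity_cutoff:
        # Clear homolog: transfer its annotation.
        return set(hit_labels)
    # Weak homolog: prefer the pLM prediction.
    return plm_labels
```

Other combination rules (for example, taking the union of both predictions) are equally conceivable; the point is only that the two methods cover different regions of sequence space.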

The Impact of Model Size and Embedding Compression

The trend towards ever-larger models raises practical questions about computational cost versus performance gain. A 2025 systematic evaluation of ESM-style models shed light on this trade-off and on optimal feature extraction methods.

Table 2: Impact of Model Size and Embedding Compression on Transfer Learning

| Factor | Impact on Performance & Practicality | Recommendation |
|---|---|---|
| Model Size | Larger models (e.g., ESM-2 15B) do not necessarily outperform smaller ones when data is limited. Medium-sized models (e.g., ESM-2 650M, ESM C 600M) perform closely behind larger counterparts [1]. | ESM C 600M offers an optimal balance of performance and efficiency for most realistic biological applications [1]. |
| Embedding Compression | The method of compressing high-dimensional embeddings before downstream prediction is critical. Mean pooling (averaging embeddings across all sequence sites) consistently outperformed other compression methods like max pooling and iDCT [1]. | Use mean embeddings as the default compression strategy for transfer learning, as it is strictly superior for diverse sequences and performs well on mutational data [1]. |

Experimental Protocols for pLM Evaluation

Protocol for EC Number Prediction

The comparative assessment of pLMs for EC number prediction was conducted as a multi-label classification problem, accounting for promiscuous and multi-functional enzymes [3].

- Data Preparation: Protein sequences and their EC numbers were extracted from the SwissProt and TrEMBL sections of UniProtKB. To avoid bias from highly similar sequences, only representative sequences from UniRef90 clusters (sequences with <90% identity) were retained [3].

- Feature Extraction: Representations (embeddings) were extracted from the final layers of pre-trained pLMs, including ESM2, ESM1b, and ProtBERT. For comparison, models using one-hot encodings of amino acid sequences (e.g., DeepEC, D-SPACE) were also implemented [3].

- Model Training & Evaluation: The extracted embeddings were used as input features for fully-connected deep neural networks (DNNs). The performance of these DNNs was rigorously benchmarked against each other and against the standard BLASTp tool [3].

Protocol for Assessing Model Size and Transfer Learning

The evaluation of model size and compression methods involved a systematic pipeline for transfer learning via feature extraction [1].

- Datasets: The study used two types of data: 1) 41 Deep Mutational Scanning (DMS) datasets measuring the effects of point mutations, and 2) 12 diverse metrics (e.g., physicochemical properties) computed for proteins from the PISCES database [1].

- Embedding Extraction and Compression: For a given protein sequence, embeddings were extracted from the last hidden layer of various-sized pLMs. These embeddings were then compressed using different methods (mean pooling, max pooling, iDCT, PCA).

- Downstream Prediction: The compressed embeddings were used as features in a regularized regression model (LassoCV) to predict the target variable (e.g., mutation effect, instability index). Model performance was measured by the variance explained (R²) on a held-out test set [1].
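
The variance-explained metric used in the last step can be made concrete with a short sketch. The function name `r_squared` is illustrative and not taken from the study; in practice, scikit-learn's `LassoCV` and `r2_score` would typically cover the regression and the metric.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot.

    Assumes y_true is not constant (otherwise SS_tot is zero).
    """
    mean_y = sum(y_true) / len(y_true)
    ss_tot = sum((y - mean_y) ** 2 for y in y_true)               # total variance
    ss_res = sum((y - p) ** 2 for y, p in zip(y_true, y_pred))    # unexplained part
    return 1.0 - ss_res / ss_tot
```

A perfect predictor scores 1.0, while a model that always predicts the test-set mean scores 0.0, which is why R² on held-out data is a convenient way to compare embeddings of different sizes.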

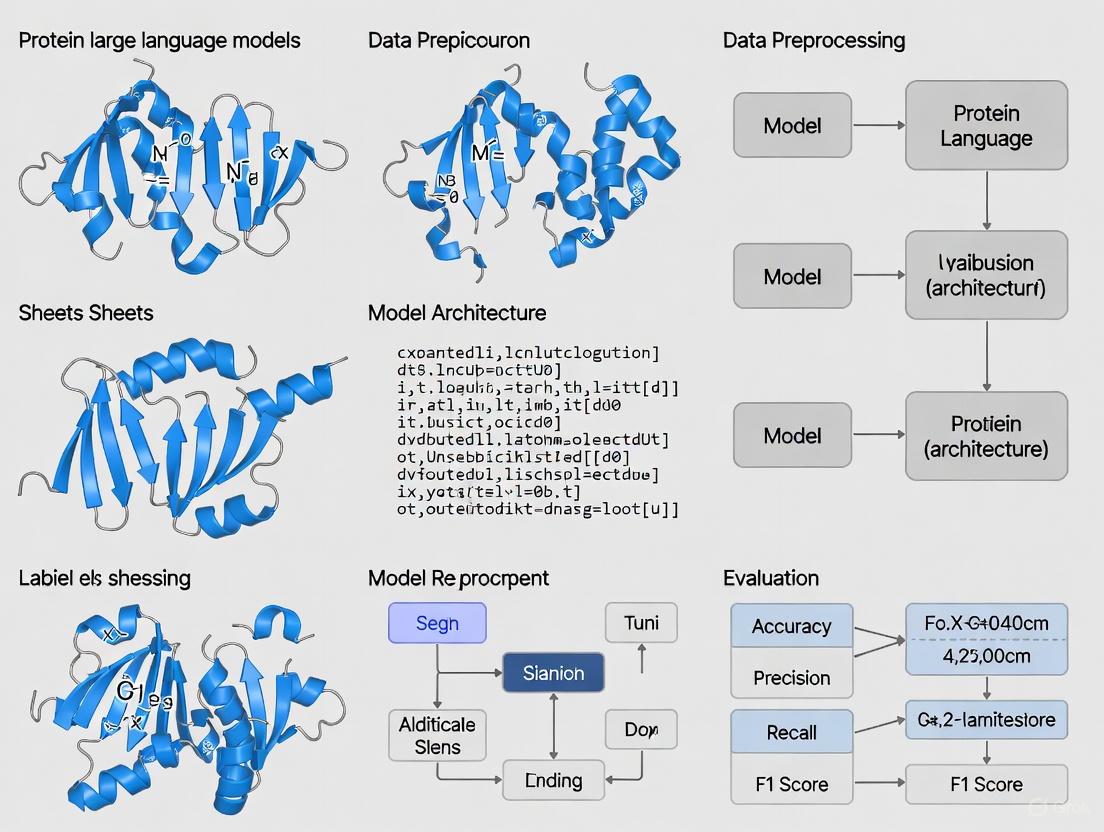

Visualizing Experimental Workflows

The following diagrams illustrate the core experimental protocols discussed, providing a clear visual reference for the methodologies.

Workflow for pLM-based EC Number Prediction

Workflow for Transfer Learning via Feature Extraction

Table 3: Essential Databases and Tools for pLM Research

| Resource Name | Type | Primary Function in pLM Research |
|---|---|---|
| UniProtKB [3] | Protein Sequence Database | Source of millions of protein sequences for pre-training pLMs and for creating benchmark datasets. Includes SwissProt (manual) and TrEMBL (automated) annotations. |
| ESM-2 [3] [1] | Protein Language Model | A state-of-the-art pLM based on the transformer architecture. Available in sizes from 8 million to 15 billion parameters for balancing performance and compute. |
| ESM C (Cambrian) [1] | Protein Language Model | A high-performance model demonstrating that smaller, efficiently trained models can compete with much larger counterparts. |
| AlphaFold Database (AFDB) [4] [5] | Protein Structure Database | Repository of over 214 million predicted protein structures. Used for tasks linking sequence to structure and for developing structure-based tools. |
| SARST2 [5] | Structural Alignment Tool | Enables rapid and accurate alignment of protein structures against massive databases like the AFDB, facilitating structural homology searches. |
| PISCES Database [1] | Protein Sequence Culling Set | Provides curated, non-redundant protein sequences for benchmarking and evaluating computational methods. |

The application of large language models (LLMs) to biological sequences represents a paradigm shift in computational biology. These models, adapted from natural language processing, treat biological sequences—such as proteins, DNA, and RNA—as texts written in a "language" of amino acids or nucleotides [6]. Their ability to capture complex patterns in these sequences has revolutionized tasks ranging from protein function prediction to de novo molecular design. The performance and applicability of these models are fundamentally governed by their underlying transformer architecture: encoder-only, decoder-only, or a hybrid encoder-decoder [6]. Understanding the distinct capabilities, limitations, and optimal use cases for each architecture is crucial for researchers and drug development professionals aiming to leverage artificial intelligence for biological discovery. This guide provides a comparative assessment of these core architectures, with a specific focus on their performance and protocols in protein LLM research.

Architectural Fundamentals and Biological Adaptations

The original transformer architecture, introduced for machine translation, contained both an encoder and a decoder [7]. The encoder's role is to process and understand the input sequence, creating a rich, contextualized representation. The decoder then uses this representation to generate an output sequence [8]. In biology, this concept translates to, for example, taking a protein sequence as input and generating a functional annotation or a related structural sequence as output.

Subsequent evolution has produced three dominant paradigms:

Encoder-Only Models (e.g., BERT, RoBERTa): These models use bidirectional self-attention, meaning each position in the input sequence can attend to all other positions. This allows them to develop a deep, context-aware understanding of the entire sequence [9] [7]. They are typically pre-trained using Masked Language Modeling (MLM), where random tokens in the input are masked and the model must predict them based on the surrounding context [7]. In biology, a "token" may be an amino acid or a small peptide fragment. This makes them exceptionally powerful for discriminative tasks like classification.

Decoder-Only Models (e.g., GPT series): These models employ causal (unidirectional) self-attention, implemented by masking out attention to later positions. Each token can attend only to previous tokens in the sequence, which prevents it from seeing "future" information [9]. This architecture is inherently autoregressive, making it ideal for generation [7]. It is pre-trained on autoregressive language modeling, where the goal is to predict the next token in a sequence given all previous tokens [9]. In a biological context, this allows for the generation of novel, plausible protein sequences.

Encoder-Decoder Models (e.g., T5): These models retain the full two-part structure of the original transformer. The encoder processes the input sequence into a contextual representation, and the decoder generates the output sequence autoregressively, while also attending to the encoder's output [9]. This design is suited for sequence-to-sequence tasks where the output is heavily dependent on the input, such as translating a protein sequence into a functional description or predicting a reaction product from a substrate [7].
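
The encoder-only and decoder-only setups differ mainly in what the model is allowed to see. The sketch below, using hypothetical helper names, masks residues for an MLM objective and builds the attention patterns for bidirectional versus causal attention. The 15% masking rate follows the common BERT convention and is an assumption here; the source does not state the rate used by any particular protein model, and refinements such as random-token replacement are omitted.

```python
import random


def mask_sequence(seq, mask_rate=0.15, mask_token="<mask>", seed=0):
    """Hide a random subset of residues, as in masked language modeling.

    Returns the token list with masks applied and a dict mapping each
    masked position to the residue the model would have to recover.
    """
    if not seq:
        return [], {}
    rng = random.Random(seed)
    n_mask = min(len(seq), max(1, round(len(seq) * mask_rate)))
    tokens = list(seq)
    targets = {}
    for pos in rng.sample(range(len(seq)), n_mask):
        targets[pos] = tokens[pos]
        tokens[pos] = mask_token
    return tokens, targets


def attention_mask(n, causal):
    """n x n matrix; entry [i][j] is True if position i may attend to j.

    Bidirectional (encoder) attention allows every pair; causal (decoder)
    attention allows only j <= i, so no position sees later residues.
    """
    return [[(j <= i) or not causal for j in range(n)] for i in range(n)]
```

For a three-residue input, `attention_mask(3, causal=True)` is lower-triangular, whereas the bidirectional mask is all `True`; that single difference is what makes one architecture suited to whole-sequence understanding and the other to left-to-right generation.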

Figure 1: Core architectural differences and primary strengths of encoder-only, decoder-only, and encoder-decoder transformer models in protein analysis.

A critical technical consideration is the rank of the attention weight matrices. Bidirectional attention in encoders can suffer from a "low-rank bottleneck," where the attention weights become similar across tokens, potentially homogenizing information. In contrast, the unidirectional attention in decoders tends to preserve a higher rank, maintaining distinct token identities and potentially offering greater expressive power, especially for generation [9].

Comparative Performance in Protein Analysis

A prime example for comparing these architectures is the task of Enzyme Commission (EC) number prediction, a fundamental problem in functional genomics. EC numbers provide a hierarchical classification for enzyme function. The task is typically framed as a multi-label classification problem, where a model must predict all relevant EC numbers for a given protein sequence [3].

Key Experimental Findings

A comprehensive 2025 study provides a direct performance comparison of different protein LLMs, primarily encoder-only architectures, for EC number prediction [10] [3]. The researchers assessed models by using them as feature extractors; the embeddings they produced were fed into a fully connected neural network for the final classification.

Table 1: Performance Comparison of Protein LLMs on EC Number Prediction [10] [3]

| Model | Architecture | Key Performance Insight | Relative Strength |
|---|---|---|---|
| ESM2 | Encoder-Only | Best performing LLM; more accurate on difficult annotations and enzymes without homologs. | Excels where sequence identity to known proteins falls below 25%. |
| ESM1b | Encoder-Only | Strong performance, but generally surpassed by ESM2. | Effective feature extractor for enzyme function. |
| ProtBERT | Encoder-Only | Competitive performance, can be used for fine-tuning. | An alternative encoder-based approach. |
| BLASTp | Non-LLM Alignment | Marginally better overall results than individual LLMs. | Gold standard for proteins with clear homologs; fails without homologs. |
| One-Hot Encoding DL | Non-LLM Baseline | Performance surpassed by all LLM-based models. | Serves as a simple baseline. |

The central conclusion is that encoder-only protein LLMs and BLASTp offer complementary strengths. While BLASTp retains a slight edge for routine annotation of proteins with strong homologs, encoder-only LLMs like ESM2 demonstrate superior capability for "difficult-to-annotate enzymes," particularly when sequence identity to known proteins is low (<25%) [10] [3]. This suggests that LLMs learn fundamental biochemical principles beyond simple sequence homology.

Broader Performance Across Tasks

Beyond EC number prediction, the architecture determines suitability for broader tasks in biology:

Table 2: Architectural Suitability for Key Tasks in Protein Research [9] [6] [7]

| Task Category | Example Tasks | Optimal Architecture | Rationale |
|---|---|---|---|
| Discriminative / Classification | Function prediction, stability classification, subcellular localization, sentiment analysis (for scientific text). | Encoder-Only | Bidirectional context provides a rich, holistic understanding of the entire sequence, ideal for making a single prediction per input. |
| Generative / Design | De novo protein design, generating sequences with specific properties, text generation (e.g., writing papers). | Decoder-Only | Autoregressive nature is inherently designed for generating coherent sequences (amino acid or text) one token at a time. |
| Sequence-to-Sequence | Protein structure-to-sequence translation, reaction prediction, text summarization (of scientific documents). | Encoder-Decoder | The architecture is designed to map one complex sequence to another, leveraging both full input understanding and autoregressive generation. |

For discriminative tasks, decoder-only models can be repurposed through prompt engineering and in-context learning, but this typically requires the model to be very large and a well-designed prompt to be effective [9].

Experimental Protocols for Protein LLM Evaluation

To ensure reproducible and meaningful results, benchmarking studies follow rigorous protocols. The following workflow outlines a standard methodology for evaluating protein LLMs on a task like EC number prediction, based on current research practices [10] [3].

Figure 2: Standardized experimental workflow for benchmarking protein LLMs on a functional prediction task.

Detailed Methodology

Data Curation and Preprocessing: The standard data source is UniProtKB/SwissProt, a manually annotated protein sequence database [3]. The dataset must be filtered to include only proteins with experimentally verified EC numbers to ensure label accuracy.

Train/Validation/Test Split with Homology Reduction: A critical step to prevent data leakage and overoptimistic performance is to ensure that no protein in the test set has high sequence similarity to any protein in the training set. This is achieved by clustering the entire dataset using UniRef90 (90% sequence identity clusters) and ensuring that all proteins from the same cluster are assigned to the same data split [3]. This tests the model's ability to generalize beyond near-identical sequences. Because proteins in different UniRef90 clusters can still share substantial identity, it is a weaker test than a split by protein family or fold.

Feature Extraction: In this protocol, the protein LLMs (e.g., ESM2, ESM1b, ProtBERT) are used as feature extractors. The input protein sequence is passed through the pre-trained model, and the hidden state representations (embeddings) for each amino acid position are extracted. These embeddings are often pooled (e.g., by taking the mean or using the special <CLS> token's embedding) to create a single, fixed-dimensional vector representing the entire protein [3].

Classifier Training: The extracted protein embeddings serve as input features for a downstream classifier. A common approach is to use a fully connected neural network (a deep neural network, DNN) with a final sigmoid activation function for multi-label prediction [3]. The weights of the protein LLM are typically frozen during this stage; only the classifier is trained. This tests the inherent quality of the representations learned by the pre-trained LLM.

Evaluation and Comparative Analysis: The model's predictions are evaluated on the held-out test set using metrics like accuracy, precision, recall, and F1-score. Performance is compared against baseline methods, most importantly BLASTp, to establish the relative utility of the LLM approach [3].
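
Three of the steps above (cluster-aware splitting, sigmoid-based multi-label decoding, and scoring) can be sketched in a few lines. All function names are hypothetical, the 80/10/10 split and the 0.5 threshold are assumptions, and micro-averaging is just one of several ways to aggregate precision and recall; the source does not specify which was used.

```python
import math
import random
from collections import defaultdict


def split_by_cluster(protein_to_cluster, fractions=(0.8, 0.1, 0.1), seed=0):
    """Assign whole clusters (e.g., UniRef90 IDs) to train/val/test."""
    clusters = defaultdict(list)
    for protein, cluster in protein_to_cluster.items():
        clusters[cluster].append(protein)
    ids = sorted(clusters)
    random.Random(seed).shuffle(ids)
    n_train = int(len(ids) * fractions[0])
    n_val = int(len(ids) * fractions[1])
    parts = {
        "train": ids[:n_train],
        "val": ids[n_train:n_train + n_val],
        "test": ids[n_train + n_val:],
    }
    # Expand cluster IDs back into their member proteins.
    return {name: [p for c in cl for p in clusters[c]] for name, cl in parts.items()}


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def decode_multilabel(logits, label_names, threshold=0.5):
    """Each label gets an independent sigmoid; keep those above threshold."""
    return {name for name, z in zip(label_names, logits) if sigmoid(z) >= threshold}


def micro_precision_recall_f1(true_sets, pred_sets):
    """Pool true/false positives over all proteins, then compute the metrics."""
    tp = sum(len(t & p) for t, p in zip(true_sets, pred_sets))
    fp = sum(len(p - t) for t, p in zip(true_sets, pred_sets))
    fn = sum(len(t - p) for t, p in zip(true_sets, pred_sets))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1
```

Splitting at the cluster level rather than the protein level is the design choice that matters most here: a random per-protein split would let near-duplicates of test proteins leak into training and inflate every downstream metric.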

Successfully applying or benchmarking protein LLMs requires a suite of computational tools and data resources.

Table 3: Essential Research Reagents for Protein LLM Experiments

| Resource / Tool | Type | Function and Relevance | Example |
|---|---|---|---|
| Pre-trained Protein LLMs | Model Weights | Provide the foundational model for transfer learning or feature extraction. Essential for any downstream task. | ESM2 [10], ProtBERT [3], ProtGPT2 [6] |
| Curated Protein Databases | Dataset | Source of high-quality, annotated protein sequences for training and evaluation. | UniProtKB/SwissProt [3], Protein Data Bank (PDB) [6] |
| Homology Clustering Tools | Software/Database | Critical for creating non-redundant benchmarks to prevent data leakage and test generalization. | UniRef90 [3] |
| Sequence Alignment Tools | Software | The traditional gold standard for function prediction; serves as a key baseline for comparison. | BLASTp, DIAMOND [10] [3] |
| Deep Learning Frameworks | Software Library | Provide the environment for building, training, and evaluating classifier models on top of LLM embeddings. | PyTorch, TensorFlow, JAX |

The comparative assessment of transformer architectures reveals a clear, task-dependent landscape for protein research. Encoder-only models currently dominate discriminative tasks like function prediction, offering robust performance and even complementing traditional tools like BLASTp, especially for proteins with distant homology. Decoder-only models unlock powerful capabilities for generative tasks, such as designing novel protein sequences. The encoder-decoder architecture remains relevant for complex sequence-to-sequence mapping problems.

Future research will likely focus on several key areas: developing more sophisticated hybrid architectures that seamlessly combine discriminative and generative understanding; improving model efficiency to handle longer biological sequences, such as entire genomes; and creating better benchmarks that robustly measure a model's grasp of biochemical principles rather than its ability to memorize homology. For scientists and drug developers, the choice of architecture is not a question of which is universally best, but which is the most appropriate tool for the specific biological question at hand.

The field of computational biology has witnessed a revolutionary transformation in how proteins are represented and analyzed, moving from simple numerical embeddings to sophisticated large language models that capture complex biological principles. Protein Language Models (PLMs) have emerged as powerful tools that learn the "language of life" by treating amino acid sequences as textual data and employing self-supervised learning on massive sequence databases [11]. This evolution began with early embedding methods like ProtVec, which provided fixed representations for protein sequences, and has advanced to modern transformer-based models including ESM (Evolutionary Scale Modeling) and ProtBERT, which leverage attention mechanisms to capture long-range dependencies and evolutionary patterns [11] [12]. These models have demonstrated remarkable capabilities in predicting protein structure, function, stability, and interactions, becoming indispensable tools for researchers and drug development professionals [11] [13]. The comparative assessment of these models reveals distinct performance advantages across various biological tasks, enabling more accurate and efficient protein analysis pipelines.

Historical Development: From Simple Embeddings to Contextual Representations

The journey of protein representation learning has followed a trajectory similar to natural language processing, beginning with static word embeddings and progressing to contextual, transformer-based models. Table 1 summarizes the key evolutionary milestones in protein language models.

Table 1: Historical Evolution of Protein Representation Methods

| Generation | Representative Models | Key Innovation | Limitations |
|---|---|---|---|
| Early Embeddings | ProtVec, Seq2Vec | Fixed vector representations for k-mers | Limited contextual understanding; inability to capture long-range dependencies |
| First-generation PLMs | LSTM-based models, CNN-based models | Sequence-aware processing using recurrent or convolutional architectures | Limited context window; gradual performance improvement |
| Modern Transformer PLMs | ESM-1b, ProtBERT, ESM-2 | Self-attention mechanisms; transfer learning; large-scale pre-training | High computational requirements; extensive training data needed |
| Next-generation PLMs | ESM-3, ESM Cambrian, ProtT5 | Generative capabilities; multi-task learning; improved efficiency | Increasing model complexity; specialized hardware requirements |

Early embedding methods like ProtVec treated protein sequences as collections of k-mers (short subsequences of amino acids), generating fixed vector representations for each k-mer regardless of its context within the full protein sequence [11]. These methods, while computationally efficient, failed to capture the complex contextual relationships that govern protein structure and function. The introduction of deep learning architectures, particularly Long Short-Term Memory (LSTM) networks and Convolutional Neural Networks (CNNs), marked a significant advancement by enabling sequence-aware processing that could capture local patterns and short-range dependencies [14].
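
To illustrate why such representations are context-free, here is a minimal sketch of a static k-mer embedding. The helper names and the toy lookup table are hypothetical, and summing the k-mer vectors into a protein-level vector is an assumption about how such methods are commonly applied rather than a description of any specific implementation.

```python
def kmers(seq, k=3):
    """All overlapping k-mers of a sequence, left to right."""
    return [seq[i:i + k] for i in range(len(seq) - k + 1)]


def embed_static(seq, table, k=3):
    """Sum the fixed vectors of the k-mers found in a lookup table.

    The vector for a given k-mer never changes, whatever residues
    surround it; that is the limitation contextual models address.
    """
    dim = len(next(iter(table.values())))
    total = [0.0] * dim
    for kmer in kmers(seq, k):
        vec = table.get(kmer)
        if vec is not None:  # unknown k-mers are skipped in this sketch
            total = [a + b for a, b in zip(total, vec)]
    return total
```

With a toy table such as `{"MKT": [1.0, 0.0], "KTA": [0.0, 2.0]}`, the k-mer `MKT` contributes the same vector to `MKTA` and to `AMKT`, even though its structural role in the two sequences could be entirely different.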

The true revolution began with the adaptation of the transformer architecture for protein sequences, enabling models to capture long-range interactions between amino acids that are critical for understanding protein folding and function [11] [12]. Models like ProtBERT (released in 2020) and the ESM family (ESM-1b, ESM-2, and beyond) leveraged self-supervised pre-training on massive protein sequence databases, learning rich contextual representations that encapsulate evolutionary information, biophysical properties, and functional characteristics [12] [15]. The ESM-2 model, introduced in 2022, particularly stood out for its ability to predict atomic-level protein structure directly from individual sequences, demonstrating the remarkable biological insights captured by these models [15].

Comparative Architecture Analysis: ESM vs. ProtBERT

Model Architectures and Training Approaches

The ESM and ProtBERT model families represent two prominent approaches to protein language modeling, with distinct architectural choices and training methodologies. ProtBERT is based on the BERT (Bidirectional Encoder Representations from Transformers) architecture and employs a masked language modeling objective during pre-training [12]. The model is trained to predict randomly masked amino acids in protein sequences, learning bidirectional contextual representations. ProtBERT was pre-trained on UniRef100, a dataset comprising 217 million protein sequences, using a vocabulary of 21 amino acids (with rare amino acids mapped to "X") [12]. The training procedure treated each protein sequence as a separate document, without using next-sentence prediction, and employed the LAMB optimizer with a learning rate of 0.002 [12].

The ESM (Evolutionary Scale Modeling) family, particularly ESM-2, utilizes a transformer architecture with rotary positional embeddings. ESM-2 was trained on UniRef-derived sequences (the UR50/D dataset), while the later ESM Cambrian models were trained on larger and more diverse data drawn from the UniRef, MGnify, and JGI databases [15] [16]. ESM-2 introduced significant scaling in model size, with parameter counts ranging from 8 million to 15 billion, enabling the model to capture increasingly complex protein patterns [15] [1]. A key innovation in the ESM lineage is ESM Cambrian, which employs a two-stage training process: initial training with a context length of 512 amino acids, followed by extended training with a context length of 2048 [16]. This approach, combined with modified architecture elements like Pre-Layer Normalization and SwiGLU activations, has yielded significant performance improvements over previous generations [16].

Performance Comparison Across Biological Tasks

Table 2 provides a comprehensive performance comparison of modern PLMs across key protein prediction tasks, synthesizing data from multiple benchmarking studies.

Table 2: Performance Comparison of Modern Protein Language Models

| Model | Parameters | EC Number Prediction (Accuracy) | Secondary Structure (Q3 Score) | Variant Effect Prediction | Training Data Size |
|---|---|---|---|---|---|
| ESM-2 8M | 8 million | Moderate | ~70% | Limited | UR50/D (Millions of sequences) |
| ESM-2 650M | 650 million | High | ~76% | Good | UR50/D (Millions of sequences) |
| ESM-2 15B | 15 billion | Very High | ~84% | Very Good | UR50/D (Millions of sequences) |
| ESM C 600M | 600 million | Very High | ~82% | Very Good | 2B clusters (70% identity) |
| ProtBERT | 420 million | High | ~75% (CASP12) | Good | UniRef100 (217M sequences) |
| ESM-1v | 650 million | N/A | N/A | State-of-the-art | UniRef90 |

In enzyme commission (EC) number prediction, a critical task for functional annotation, ESM-2 has demonstrated superior performance compared to other single-sequence models. A comprehensive assessment revealed that while BLASTp still provides marginally better results overall, ESM-2 stood out as the best model among tested LLMs, providing more accurate predictions on difficult annotation tasks and for enzymes without homologs [17]. Importantly, the study found that LLMs and alignment-based methods complement each other, with an ensemble approach delivering performance surpassing individual techniques [17].

For secondary structure prediction, ProtBERT achieves a Q3 score of approximately 75% on the CASP12 dataset and 83% on TS115, while ESM-2 models show scaling effects with larger models (ESM-2 15B) reaching Q3 scores of around 84% [12] [1]. In sub-cellular localization tasks, ProtBERT reaches 79% accuracy on DeepLoc, demonstrating its capability to capture global protein features [12].

Recent evaluations of model size efficiency have revealed that medium-sized models (100 million to 1 billion parameters) often provide the optimal balance between performance and computational requirements. Studies show that ESM-2 650M and ESM C 600M demonstrate consistently good performance, falling only slightly behind their larger counterparts (ESM-2 15B and ESM C 6B) despite being many times smaller [1]. This is particularly important for real-world applications where computational resources may be constrained.

Experimental Assessment: Methodologies and Protocols

Standardized Evaluation Frameworks

The comparative assessment of protein language models typically follows rigorous experimental protocols to ensure fair and reproducible benchmarking. The standard methodology involves transfer learning via feature extraction, where embeddings are obtained from pre-trained PLMs and used as input features for downstream prediction tasks [1]. The standard workflow begins with embedding extraction from the final hidden layer of the PLM, followed by embedding compression (typically using mean pooling across sequence length), and finally training supervised models (such as regularized regression or neural networks) on the target task [1].

For EC number prediction, the problem is framed as a multi-label classification task incorporating promiscuous and multi-functional enzymes. Each protein sequence is associated with a binary label vector indicating all relevant EC numbers at all hierarchical levels [17]. Models are evaluated using precision-recall metrics and compared against baseline methods including BLASTp and models using one-hot encodings [17].
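
The hierarchical label vector described above can be sketched as follows. The function names and the toy vocabulary are hypothetical; the only assumption about the data is the standard four-level EC notation, in which `-` marks an unassigned level.

```python
def ec_levels(ec):
    """Expand an EC number into its prefixes at every assigned level.

    "1.1.1.1" -> ["1", "1.1", "1.1.1", "1.1.1.1"]
    "2.7.-.-" -> ["2", "2.7"]   (stops at the first unassigned level)
    """
    levels = []
    for part in ec.split("."):
        if part == "-":
            break
        levels.append(part if not levels else levels[-1] + "." + part)
    return levels


def build_label_vector(ec_numbers, vocabulary):
    """Binary vector over a fixed label vocabulary, one entry per EC level."""
    active = {level for ec in ec_numbers for level in ec_levels(ec)}
    return [1 if label in active else 0 for label in vocabulary]
```

A promiscuous enzyme annotated with both `1.1.1.1` and `2.7.-.-` therefore activates six entries: four for the fully specified number and two for the partial one.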

In variant effect prediction, models are tested on Deep Mutational Scanning (DMS) datasets which measure the functional impact of single amino acid substitutions. The evaluation typically involves measuring the correlation between model predictions and experimental measurements across dozens of diverse proteins [1].
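
The text does not say which correlation coefficient is used; rank-based (Spearman) correlation is a common choice for DMS data and is assumed in the sketch below. The helper names are illustrative, and `scipy.stats.spearmanr` provides the same statistic in practice.

```python
import math


def ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation; assumes neither input is constant."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def spearman(predicted, measured):
    """Spearman correlation = Pearson correlation of the ranks."""
    return pearson(ranks(predicted), ranks(measured))
```

Because only the ordering matters, a model whose scores are monotonically related to the measured fitness obtains a correlation of 1 even when the relationship is nonlinear.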

Embedding Compression and Feature Extraction

A critical methodological consideration in PLM evaluation is the approach to embedding compression. Research has demonstrated that mean pooling (averaging embeddings across all amino acid positions) consistently outperforms alternative compression methods including max pooling, inverse Discrete Cosine Transform (iDCT), and PCA [1]. For diverse protein sequences, mean pooling was strictly superior in all cases, while for DMS data focusing on point mutations, some alternative methods occasionally performed slightly better on specific datasets [1]. This finding has important practical implications for researchers implementing PLM-based solutions.
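
As a minimal sketch of the two simplest compression methods, assume the PLM returns one embedding vector per residue, i.e. a matrix of shape (sequence length, embedding dimension) represented here as a list of lists. The function names are illustrative.

```python
def mean_pool(residue_embeddings):
    """Average each embedding dimension over all residue positions."""
    n = len(residue_embeddings)
    return [sum(column) / n for column in zip(*residue_embeddings)]


def max_pool(residue_embeddings):
    """Keep the largest value of each embedding dimension."""
    return [max(column) for column in zip(*residue_embeddings)]
```

Both functions map proteins of any length to a vector of the same dimension, which is what allows a single downstream model to be trained on sequences of varying length.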

Diagram 1: Standard PLM evaluation workflow showing the process from protein sequence to performance evaluation, with embedding compression as a critical step.

Table 3 provides a comprehensive overview of essential resources for researchers working with protein language models, including datasets, software tools, and pre-trained models.

Table 3: Essential Research Resources for Protein Language Model Applications

| Resource Category | Specific Tools/Databases | Key Features/Applications | Access Method |
|---|---|---|---|
| Protein Databases | UniRef, MGnify, JGI | Training data sources; sequence retrieval | Public download via FTP |
| Pre-trained Models | ESM-2, ESM Cambrian, ProtBERT, ProtT5 | Feature extraction; fine-tuning | Hugging Face; GitHub repositories |
| Software Libraries | Transformers, PyTorch, Biopython | Model implementation; sequence processing | pip/conda install |
| Evaluation Benchmarks | DeepLoc, CASP, DMS datasets | Performance validation; model comparison | Public repositories |
| Specialized Hardware | GPU clusters, TPU pods | Training large models; efficient inference | Cloud services; institutional HPC |

Implementation Guide and Code Examples

Implementing protein language models for research applications typically begins with embedding extraction. A standard approach uses the Hugging Face Transformers library, as outlined below for ProtBERT and ESM.

For ProtBERT, implementation involves loading the pre-trained model and tokenizer, followed by sequence processing and embedding extraction [12]. The tokenizer expects uppercase, space-separated amino acids, and rare residues (U, Z, O, B) should be mapped to "X" before tokenization [12]. Embeddings can be extracted at the residue level or pooled to create a single protein-level representation.
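A minimal sketch of this step follows. It assumes the `Rostlab/prot_bert` checkpoint on the Hugging Face Hub and applies the U/Z/O/B to X mapping as an explicit preprocessing step; the function names are illustrative, and the heavy dependencies are imported lazily so that the preprocessing helper can be used on its own.

```python
import re


def preprocess_for_protbert(sequence):
    """Uppercase, map rare residues U/Z/O/B to X, and space-separate residues."""
    sequence = re.sub(r"[UZOB]", "X", sequence.upper())
    return " ".join(sequence)


def embed_with_protbert(sequence, model_name="Rostlab/prot_bert"):
    """Return (per-residue embeddings, mean-pooled protein embedding)."""
    # Imported here so the helper above works without torch/transformers.
    import torch
    from transformers import BertModel, BertTokenizer

    tokenizer = BertTokenizer.from_pretrained(model_name, do_lower_case=False)
    model = BertModel.from_pretrained(model_name).eval()
    inputs = tokenizer(preprocess_for_protbert(sequence), return_tensors="pt")
    with torch.no_grad():
        hidden = model(**inputs).last_hidden_state[0]
    residues = hidden[1:-1]  # drop the [CLS] and [SEP] special tokens
    return residues, residues.mean(dim=0)
```

Calling `embed_with_protbert` downloads the model weights on first use, so it is best run once per session and the embeddings cached.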

For ESM models, the process is similar but utilizes ESM-specific tokenization and model classes [15]. The ESM framework provides models of various sizes, from ESM-2 8M with 8 million parameters to ESM-2 15B with 15 billion parameters, allowing researchers to select the appropriate scale for their computational resources and accuracy requirements [15] [1].
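The sketch below shows one way to select an ESM-2 checkpoint under a parameter budget and extract embeddings from it. The checkpoint identifiers are the names published on the Hugging Face Hub; `pick_checkpoint` and `embed_with_esm2` are hypothetical helpers introduced for illustration.

```python
# Hugging Face checkpoint names for ESM-2, keyed by approximate parameter count.
ESM2_CHECKPOINTS = {
    8_000_000: "facebook/esm2_t6_8M_UR50D",
    35_000_000: "facebook/esm2_t12_35M_UR50D",
    150_000_000: "facebook/esm2_t30_150M_UR50D",
    650_000_000: "facebook/esm2_t33_650M_UR50D",
    3_000_000_000: "facebook/esm2_t36_3B_UR50D",
    15_000_000_000: "facebook/esm2_t48_15B_UR50D",
}


def pick_checkpoint(max_parameters):
    """Return the largest ESM-2 checkpoint that fits the parameter budget."""
    eligible = [size for size in ESM2_CHECKPOINTS if size <= max_parameters]
    if not eligible:
        raise ValueError("No ESM-2 checkpoint fits the given parameter budget")
    return ESM2_CHECKPOINTS[max(eligible)]


def embed_with_esm2(sequence, max_parameters=650_000_000):
    """Return (per-residue embeddings, mean-pooled protein embedding)."""
    # Imported lazily so checkpoint selection works without torch/transformers.
    import torch
    from transformers import AutoTokenizer, EsmModel

    name = pick_checkpoint(max_parameters)
    tokenizer = AutoTokenizer.from_pretrained(name)
    model = EsmModel.from_pretrained(name).eval()
    inputs = tokenizer(sequence, return_tensors="pt")
    with torch.no_grad():
        hidden = model(**inputs).last_hidden_state[0]
    residues = hidden[1:-1]  # drop the <cls> and <eos> special tokens
    return residues, residues.mean(dim=0)
```

The default budget of 650 million parameters is an assumption made here; it selects the medium-sized ESM-2 650M checkpoint.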

A critical best practice is the use of mean pooling for creating protein-level embeddings, as this approach has been systematically demonstrated to outperform alternative compression methods across diverse tasks [1]. For specialized applications focusing on specific protein regions or point mutations, residue-level embeddings may be more appropriate.

Future Directions and Emerging Trends

The evolution of protein language models continues at a rapid pace, with several emerging trends shaping their future development. Model interpretability represents a major frontier, with recent research applying sparse autoencoders to disentangle the biological features learned by PLMs [18] [19]. This approach expands the dense representations within neural networks across more neurons, making it possible to identify which nodes correspond to specific protein features such as molecular function, protein family, or cellular location [18].

The scaling laws observed in natural language processing also appear to hold for protein models, with systematic performance improvements observed as model size, data quantity, and computational resources increase [16]. However, recent research suggests diminishing returns for some applications, with medium-sized models (100 million to 1 billion parameters) often providing the optimal balance between performance and efficiency [1]. The introduction of ESM Cambrian demonstrates this trend, with its 600M parameter model rivaling the performance of much larger ESM-2 models [16].

Multimodal architectures that integrate sequence, structure, and functional data represent another promising direction [13]. Models like ESM-3 have begun incorporating structural constraints and functional annotations during training, creating more comprehensive protein representations [16]. As these technologies mature, protein language models are poised to become even more powerful tools for drug discovery, protein engineering, and fundamental biological research.

The evolution from early embeddings like ProtVec to modern transformer-based models marks a substantial advance in our ability to computationally understand and predict protein behavior. The comparative assessment of ESM and ProtBERT models reveals distinct strengths and optimal application domains: ESM models generally excel in structural prediction and variant effect analysis, while ProtBERT provides robust performance across various function prediction tasks. For researchers and drug development professionals, medium-sized models (particularly ESM-2 650M and ESM C 600M) typically offer the best balance of performance and computational efficiency for most applications. As the field advances, the integration of interpretability methods, multimodal learning, and responsible development practices will further enhance the utility and reliability of these tools in biological research and therapeutic development.

Protein Large Language Models (pLMs) have emerged as transformative tools in computational biology, leveraging architectures from natural language processing to interpret the "language" of proteins defined by their amino acid sequences. These models learn complex biochemical and evolutionary patterns through self-supervised pre-training on vast sequence databases, enabling breakthroughs in protein function prediction, structure inference, and de novo protein design [20] [11]. Within this rapidly evolving landscape, several key model families have established distinct niches and capabilities. This guide provides a comparative assessment of four prominent pLM families—ESM, ProtBERT, ProGen, and ProtGPT2—focusing on their architectural principles, training data, and experimental performance across biological tasks. Understanding their relative strengths and limitations is crucial for researchers and drug development professionals to select optimal tools for specific applications, from functional annotation to therapeutic protein design.

The ESM (Evolutionary Scale Modeling) family, developed by Meta AI, includes models ranging from 8 million to 15 billion parameters, with ESM-2 the iteration most widely used in the benchmarks discussed here [1] [18]. These models employ a transformer architecture with a masked language modeling objective, trained on millions of diverse protein sequences from UniProt [20]. ProtBERT, part of the ProtTrans family, is a BERT-based model pre-trained on UniProtKB and the BFD (Big Fantastic Database) containing billions of sequences, yielding contextualized embeddings that capture deep evolutionary information [17] [20]. In contrast, ProGen and ProtGPT2 are autoregressive transformer models designed for generative protein design. ProGen employs a conditional language modeling approach that can incorporate property tags during training, while ProtGPT2 is a GPT-2-style model trained on UniRef50, focusing on sampling novel, functional protein sequences that explore uncharted regions of protein space [21] [20].

Table 1: Comparative Specifications of Major Protein Language Model Families

| Model Family | Primary Architecture | Key Training Data | Model Size Range | Primary Application Domain |
|---|---|---|---|---|
| ESM | Transformer (Masked LM) | UniProt | 8M - 15B parameters | Function prediction, structure prediction |
| ProtBERT | Transformer (BERT) | UniProtKB, BFD | ~420M parameters | Function prediction, feature extraction |
| ProGen | Transformer (Autoregressive) | UniRef50, with control tags | 1.2B parameters | Controlled protein generation |
| ProtGPT2 | Transformer (GPT-2) | UniRef50 | 738M parameters | De novo protein generation |

Experimental Protocols for Performance Benchmarking

Enzyme Commission Number Prediction

Accurately predicting Enzyme Commission (EC) numbers represents a critical benchmark for protein function prediction capabilities. Standard experimental protocols define EC number prediction as a multi-label classification problem that accounts for promiscuous and multi-functional enzymes [17]. Each protein sequence is associated with a binary label vector indicating its EC number assignments across all four hierarchical levels.

The standard workflow involves: (1) Data Curation - extracting protein sequences and their EC numbers from SwissProt and TrEMBL sections of UniProtKB, keeping only UniRef90 cluster representatives to reduce redundancy; (2) Feature Extraction - obtaining protein sequence representations (embeddings) from the final hidden layers of pre-trained pLMs; (3) Model Training - employing fully connected neural networks that use these embeddings as input features to predict EC numbers; (4) Performance Comparison - evaluating models against traditional methods like BLASTp using metrics such as precision, recall, and F1-score across different sequence identity thresholds [17] [10].
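For step (4), the sketch below computes precision, recall, and F1 over binary multi-label vectors. It assumes micro-averaging (pooling counts over all proteins and labels), which is one common choice and is not stated in the text; the toy vectors are made up.

```python
def micro_prf(y_true, y_pred):
    """Micro-averaged precision, recall and F1 over binary label vectors."""
    tp = fp = fn = 0
    for truth, pred in zip(y_true, y_pred):
        for t, p in zip(truth, pred):
            if t == 1 and p == 1:
                tp += 1
            elif t == 0 and p == 1:
                fp += 1
            elif t == 1 and p == 0:
                fn += 1
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


# Toy example: two proteins, four EC labels each.
y_true = [[1, 1, 0, 0], [1, 0, 1, 0]]
y_pred = [[1, 0, 0, 0], [1, 0, 1, 1]]
precision, recall, f1 = micro_prf(y_true, y_pred)  # 0.75, 0.75, 0.75
```

To reproduce a comparison across sequence identity thresholds, the same function would be applied separately to the subset of test proteins falling in each identity bin.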

Transfer Learning for Protein Property Prediction

Transfer learning protocols assess how effectively pLM embeddings capture biologically meaningful information for downstream prediction tasks. The standard methodology involves: (1) Embedding Extraction - generating sequence representations from various pLM architectures; (2) Embedding Compression - applying compression methods like mean pooling to handle high-dimensional embeddings; (3) Predictive Modeling - using compressed embeddings as features in regularized regression models (e.g., LassoCV) to predict various protein properties [1] [22].

These experiments typically evaluate performance across two dataset types: Deep Mutational Scanning (DMS) data measuring effects of single amino acid variants on fitness and function, and diverse protein sequences from databases like PISCES with computed properties including physicochemical characteristics, instability index, and secondary structure content [1].

Protein Generation and Validation

For generative models like ProtGPT2 and ProGen, experimental protocols focus on evaluating the quality, diversity, and functionality of novel sequences. The standard approach involves: (1) Sequence Generation - sampling novel protein sequences using specific decoding strategies (e.g., top-k sampling with k=950 for ProtGPT2); (2) Statistical Analysis - comparing amino acid propensities and biochemical properties of generated sequences against natural counterparts; (3) Structural Validation - using predictive tools like AlphaFold to assess whether generated sequences fold into stable, well-structured proteins; (4) Functional Assessment - conducting sensitive sequence searches (e.g., with HHblits) to determine evolutionary novelty and mapping generated sequences within protein similarity networks to visualize coverage of protein space [21].
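Top-k sampling, the decoding strategy named in step (1), can be sketched in a few lines. The example below is a toy: it samples single amino-acid letters from a fixed, made-up distribution (`toy_model`), whereas a real model such as ProtGPT2 produces context-dependent probabilities over its own token vocabulary.

```python
import random


def top_k_sample(probs, k, rng):
    """Sample one token from the k most probable entries of probs."""
    top = sorted(probs.items(), key=lambda item: item[1], reverse=True)[:k]
    tokens = [token for token, _ in top]
    weights = [p for _, p in top]
    # random.choices normalizes weights, so the truncated
    # probabilities do not need to sum to 1.
    return rng.choices(tokens, weights=weights, k=1)[0]


def generate(next_token_probs, length, k, seed=0):
    """Build a sequence token by token using top-k sampling."""
    rng = random.Random(seed)
    sequence = ""
    for _ in range(length):
        sequence += top_k_sample(next_token_probs(sequence), k, rng)
    return sequence


def toy_model(prefix):
    """Hypothetical stand-in for a language model (ignores the prefix)."""
    return {"A": 0.4, "L": 0.3, "G": 0.2, "W": 0.07, "C": 0.03}


sample = generate(toy_model, length=20, k=3)
```

With k=3 only A, L, and G can appear in `sample`; a large k such as 950 keeps most of the vocabulary available while still excluding the least likely tokens.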

Performance Comparison and Experimental Data

Enzyme Function Prediction

Comparative studies reveal nuanced performance differences between pLMs and traditional methods for EC number prediction. When combining pLM embeddings with fully connected neural networks, these models surpass deep learning approaches relying on one-hot encodings of amino acid sequences [17]. In direct comparisons, BLASTp provides marginally better overall results, but pLMs and alignment methods show complementary strengths, with each approach excelling on different subsets of EC numbers [10].

Among pLMs, ESM2 consistently achieves the best performance, particularly for challenging annotation tasks and enzymes without close homologs (sequence identity <25%) [17] [10]. This demonstrates the particular value of pLMs for annotating poorly characterized enzyme families. The complementary nature of these approaches is highlighted by the finding that ensembles combining BLASTp and pLM predictions achieve performance superior to either method alone [17].

Table 2: Performance Comparison on EC Number Prediction Tasks

| Model/Method | Overall Accuracy | Performance on Low-Homology Sequences (<25% identity) | Key Strengths |
|---|---|---|---|
| BLASTp | Highest overall | Limited | Excellent for sequences with clear homologs |
| ESM2 + FCNN | Slightly below BLASTp | Best among pLMs | Difficult annotations, enzyme families without homologs |
| ProtBERT + FCNN | Moderate | Moderate | Feature extraction for specific functional domains |
| One-hot encoding + DL | Lowest | Limited | Baseline performance |

Transfer Learning Efficiency

Systematic evaluations of model size versus performance reveal that larger models do not universally outperform smaller counterparts, particularly when training data is limited [1] [22]. Medium-sized models such as ESM-2 650M and ESM C 600M demonstrate consistently strong performance, falling only slightly behind the massive ESM-2 15B and ESM C 6B models despite being substantially more computationally efficient [1].

For embedding compression in transfer learning, mean pooling consistently outperforms alternative methods (max pooling, iDCT, PCA) across diverse protein prediction tasks [1] [22]. This simple approach of averaging embeddings across all sequence positions provides particularly strong performance for widely diverged sequences, though some specialized compression methods occasionally slightly outperform mean pooling on specific Deep Mutational Scanning datasets where single mutations have large functional effects [1].

Protein Generation Quality

ProtGPT2 effectively generates novel protein sequences with natural amino acid propensities, with 88% of generated sequences predicted to be globular—a proportion similar to natural proteins [21]. Sensitive sequence searches reveal that ProtGPT2 sequences are evolutionarily distant from natural proteins yet maintain structural integrity, with AlphaFold predictions yielding well-folded structures containing novel topologies not present in current databases [21].

ProGen demonstrates capabilities for controlled generation through its training on tagged sequences, enabling targeted creation of proteins with specific functional or structural properties [20] [23]. Both models can explore uncharted regions of protein space while maintaining biological plausibility, making them valuable for engineering novel enzymes and therapeutic proteins.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Resources for Protein Language Model Research

| Resource Name | Type | Primary Function | Relevance to pLM Research |
|---|---|---|---|
| UniProt Knowledgebase | Database | Comprehensive protein sequence and functional annotation | Primary training data source for most pLMs; benchmark for function prediction |
| PISCES Database | Database | Curated set of protein sequences with structural information | Evaluation of transfer learning capabilities on diverse sequences |
| Deep Mutational Scanning (DMS) Data | Experimental Dataset | Measurement of mutational effects on protein fitness | Benchmark for variant effect prediction and model interpretability |
| AlphaFold2 | Software Tool | Protein structure prediction from sequence | Validation of structural properties of generated protein sequences |
| HHblits | Software Tool | Sensitive sequence searching and homology detection | Assessment of evolutionary novelty for generated protein sequences |
| ESM-2 650M/ESM C 600M | Pre-trained Model | Medium-sized protein language models | Optimal balance of performance and efficiency for most research applications |

The comparative assessment of ESM, ProtBERT, ProGen, and ProtGPT2 reveals a specialized landscape where each model family offers distinct advantages for particular research applications. ESM models excel in function prediction tasks, especially for proteins with limited homology, while ProtBERT provides robust feature extraction capabilities. The generative models ProGen and ProtGPT2 enable exploration of novel protein sequences, with the former offering conditional generation and the latter specializing in sampling natural-like protein space. Notably, model size does not universally dictate performance, with medium-sized models often providing the optimal balance of predictive accuracy and computational efficiency for real-world research settings. The complementary strengths of traditional alignment methods and pLMs suggest that hybrid approaches often yield the most robust results, particularly for challenging functional annotation tasks. As protein language models continue to evolve, their integration into biomedical research pipelines promises to accelerate drug development and protein engineering efforts.

From Sequence to Function: Methodological Approaches and Real-World Applications of PLMs

Task-Specific Fine-Tuning Strategies for Downstream Predictions

Protein large language models (pLMs) have become powerful tools for interpreting protein sequences. Pre-trained on millions of sequences, these models learn fundamental principles of protein biochemistry, evolution, and structure. However, their full potential is often realized only through task-specific fine-tuning, the process of adapting these general-purpose models to specialized downstream prediction tasks. As the field moves beyond static embeddings, comparative assessment of fine-tuning methodologies becomes crucial for researchers, scientists, and drug development professionals seeking to maximize predictive performance while managing computational constraints. This guide compares contemporary fine-tuning strategies, supported by experimental data and practical implementation protocols.

Understanding Protein Language Models and Fine-Tuning

Protein language models such as ESM2, ProtT5, and Ankh learn rich representations of protein sequences through self-supervised pre-training on vast datasets like UniRef50, which contains approximately 45 million protein sequences [24]. These models capture evolutionary relationships and biochemical properties without explicit experimental annotation. While embeddings from pre-trained pLMs have demonstrated state-of-the-art performance across diverse tasks, research shows that task-specific supervised fine-tuning consistently boosts prediction accuracy further [25].

Fine-tuning involves adding a simple prediction head (such as a feed-forward neural network) on top of the pLM encoder and applying supervised training to both the pLM encoder and the prediction head. This approach differs from using static embeddings because it allows the model to adapt its representations to task-specific objectives, accessing information stored across all layers rather than being limited to the final hidden layer [25].

Comparative Analysis of Fine-Tuning Performance Across Models and Tasks

Performance Gains from Fine-Tuning

Recent large-scale evaluations of three state-of-the-art pLMs (ESM2, ProtT5, Ankh) across eight diverse prediction tasks revealed that supervised fine-tuning almost always improves downstream predictions compared to using frozen embeddings [25]. The improvements were particularly pronounced for problems with small datasets, such as fitness landscape predictions of single proteins.

Table 1: Performance Impact of Fine-Tuning Across Protein Prediction Tasks

| Task Category | Example Tasks | Typical Performance Gain | Notable Observations |
|---|---|---|---|
| Per-Residue Predictions | Secondary structure, Disorder, Solvent accessibility | +1-2 percentage points for secondary structure; +2.2 points for disorder prediction | Secondary structure shows limited gains, possibly due to upper performance limits [25] |
| Fitness Landscapes | GFP, AAV, GB1 mutational effects | Significant improvements, especially for Ankh models | Performance relies less on transfer from pre-training [25] |
| Global Protein Properties | Stability, Solubility, Subcellular localization | Consistent improvements across most models | Fine-tuning particularly beneficial for subcellular location prediction [25] |
| Function Prediction | Enzyme activity, Binding assays | Notable gains, especially with limited data | Medium-sized models often sufficient with proper fine-tuning [1] |

Model Size Considerations

Contrary to trends in natural language processing, larger pLMs do not always outperform smaller ones for biological applications, especially when data is limited. Medium-sized models (100 million to 1 billion parameters) often achieve competitive performance while being substantially more efficient [1].

Table 2: Model Size vs. Performance in Transfer Learning

| Model Size Category | Example Models | Parameter Range | Best Use Cases |
|---|---|---|---|
| Small | ESM-2 8M, ESM-2 35M | <100 million parameters | Rapid prototyping, very limited data |
| Medium | ESM-2 150M, ESM-2 650M, ESM C 600M | 100M - 1B parameters | Most practical applications; optimal balance of performance and efficiency [1] |
| Large | ESM-2 3B, ESM-2 15B, ESM C 6B | >1 billion parameters | Large datasets; tasks requiring capture of complex relationships [1] |

Systematic evaluation has shown that medium-sized models like ESM-2 650M and ESM C 600M demonstrate consistently good performance, falling only slightly behind their larger counterparts despite being many times smaller [1]. This makes them practical choices for transfer learning in realistic biological applications where computational resources may be constrained.

Parameter-Efficient Fine-Tuning (PEFT) Methods

LoRA and Alternative PEFT Strategies

For larger pLMs, full fine-tuning can be prohibitively expensive. Parameter-efficient fine-tuning methods address this challenge by updating only a small fraction of the model's parameters. Low-Rank Adaptation (LoRA) has emerged as a particularly effective approach, achieving similar improvements to full fine-tuning while consuming substantially fewer resources and providing up to 4.5-fold acceleration of training [25].

In comparative studies on ProtT5 for subcellular location prediction, LoRA (training only 0.25% of parameters) and DoRA (0.28%) outperformed other PEFT methods like IA3 (0.12%) and Prefix-tuning (0.5%), with all methods showing improvements over pre-trained embeddings [25]. Training and testing runtimes were within ±10% of one another across methods, except for DoRA, which was about 30% slower than the other three.

Implementation Advantages of LoRA

The significant advantage of LoRA lies in its ability to make fine-tuning feasible on commercial GPUs with limited memory. For example, applying LoRA to the ProtT5 model (which has over 1.2 billion parameters) reduces trainable parameters to just over 3 million, making it possible to fine-tune on a GPU with approximately 10GB of memory [24]. This represents a reduction of more than 99% in trainable parameters while retaining most of the performance benefits of full fine-tuning.
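The arithmetic behind this reduction can be checked directly. The sketch below uses rounded figures from the text (about 1.2 billion total and about 3 million trainable parameters) and, as a separate illustration, the standard LoRA parameter count for a single weight matrix; the 1024 x 1024 layer and rank 4 are hypothetical values chosen only for the example.

```python
def lora_trainable_params(d_in, d_out, rank):
    """Parameters added by LoRA to one d_out x d_in weight matrix.

    LoRA learns two low-rank factors, B (d_out x rank) and A (rank x d_in),
    while the original weight matrix stays frozen.
    """
    return rank * (d_in + d_out)


def trainable_fraction(trainable, total):
    """Share of all parameters that receive gradient updates."""
    return trainable / total


# Rounded figures from the text: ~1.2B total, ~3M trainable with LoRA.
fraction = trainable_fraction(3_000_000, 1_200_000_000)  # 0.0025, i.e. 0.25%

# Hypothetical single layer: 1024 x 1024 projection adapted with rank 4.
full_layer = 1024 * 1024                                   # 1,048,576 weights
lora_layer = lora_trainable_params(1024, 1024, rank=4)     # 8,192 weights
```

A trainable share of roughly 0.25% corresponds to freezing about 99.75% of the model, consistent with the reduction of more than 99% described above.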

Experimental Protocols for Effective Fine-Tuning

Workflow for Protein Model Fine-Tuning

Diagram: Standardized workflow for fine-tuning protein language models on downstream prediction tasks.

Data Selection and Preparation Strategies

Efficient data selection is crucial for successful fine-tuning. Recent advancements like Data Whisperer demonstrate that selecting optimal training subsets can balance performance and computational costs [26]. This training-free, attention-based method leverages few-shot in-context learning to identify informative examples, achieving superior performance with just 10% of data in some cases while providing 7.4× speedup over previous methods [26].

For embedding compression prior to transfer learning, research indicates that mean pooling consistently outperforms alternative compression methods across diverse protein prediction tasks [1]. In evaluations across 40 deep mutational scanning datasets and diverse protein sequences from the PISCES database, mean embeddings increased the variance explained by 5 to 20 percentage points for DMS data and by 20 to 80 percentage points for diverse protein sequences relative to other compression methods [1].
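To clarify what a difference in percentage points of variance explained means, the sketch below computes the coefficient of determination (R²) for two sets of predictions. It assumes that "variance explained" refers to R², and the numbers are invented for illustration rather than taken from the cited evaluation.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - residual SS / total SS."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot


# Invented targets and predictions from two hypothetical feature sets.
y_true = [1.0, 2.0, 3.0, 4.0]
pred_a = [1.1, 1.9, 3.2, 3.8]   # e.g. regression on one compression method
pred_b = [2.0, 2.0, 3.0, 3.0]   # e.g. regression on another method

r2_a = r_squared(y_true, pred_a)        # about 0.98
r2_b = r_squared(y_true, pred_b)        # about 0.60
gain_points = 100 * (r2_a - r2_b)       # about 38 percentage points
```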

Hyperparameter Configuration

Optimal fine-tuning requires careful hyperparameter selection. Based on experimental results:

- Learning rates between 1e-5 and 1e-4 typically work well for protein prediction tasks

- Batch sizes should be maximized within GPU memory constraints

- Early stopping should be triggered when validation performance plateaus

- LoRA parameters (rank, alpha) should be tuned for specific tasks and models
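The early-stopping recommendation above can be implemented with a small helper. The sketch below is one possible patience-based rule; the `EarlyStopping` class, the patience of three epochs, and the validation scores are all assumptions made for illustration.

```python
class EarlyStopping:
    """Stop when the validation metric has not improved for `patience` epochs."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = None
        self.bad_epochs = 0

    def step(self, metric):
        """Record a validation score (higher is better); return True to stop."""
        if self.best is None or metric > self.best + self.min_delta:
            self.best = metric
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Hypothetical validation scores, one per epoch.
val_scores = [0.61, 0.68, 0.72, 0.72, 0.71, 0.72, 0.70]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, score in enumerate(val_scores, start=1):
    if stopper.step(score):
        stopped_at = epoch  # training would end here
        break
```

In this example the score peaks at epoch 3 and training stops at epoch 6, after three epochs without improvement; the checkpoint from the best epoch would normally be the one kept.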

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Tools for Protein Model Fine-Tuning

| Tool Category | Specific Tools/Resources | Function | Access/Implementation |
|---|---|---|---|
| Pre-trained pLMs | ESM-2 series, ProtT5, Ankh | Provide foundational protein sequence representations | HuggingFace Transformers library [24] |
| Fine-tuning Frameworks | PyTorch, HuggingFace Transformers | Model architecture and training implementation | Open-source Python libraries [24] |
| Parameter-efficient Methods | LoRA, DoRA, IA3 | Reduce computational requirements for fine-tuning | PEFT library; custom implementation [25] [24] |
| Data Selection Tools | Data Whisperer, Nuggets | Identify informative training subsets | GitHub repositories [26] |
| Embedding Compression | Mean pooling, iDCT, PCA | Reduce embedding dimensionality while retaining information | Custom implementation [1] |
| Evaluation Metrics | MCC, AUC-ROC, Spearman correlation | Quantify model performance on specific tasks | Scikit-learn, custom implementations [24] |
| Specialized Datasets | Deep mutational scanning data, PISCES sequences | Provide task-specific labels for fine-tuning | Public biological databases [1] |

Case Study: Dephosphorylation Site Prediction

A practical implementation of these principles demonstrates fine-tuning ProtT5 for dephosphorylation site prediction, a binary classification task involving recognition of tyrosine dephosphorylation sites [24]. The process involved:

- Data Preparation: Collecting protein sequences with annotated tyrosine dephosphorylation sites

- Model Configuration: Adding a classification head to ProtT5 and implementing LoRA to reduce trainable parameters from 1.2 billion to 3.5 million

- Training: Fine-tuning on the specialized dataset with performance validation after each epoch

- Evaluation: Assessing performance using Matthews correlation coefficient (MCC), specificity, sensitivity, accuracy, and ROC-AUC

This case study exemplifies how task-specific fine-tuning enables accurate predictions even when labeled datasets are small, overcoming limitations of traditional feature engineering approaches [24].
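The threshold-based metrics named in the evaluation step can be derived from a confusion matrix, as in the minimal sketch below. The labels and predictions are invented; ROC-AUC is omitted because it requires continuous scores rather than hard predictions.

```python
from math import sqrt


def binary_metrics(y_true, y_pred):
    """Sensitivity, specificity, accuracy and MCC for binary labels (0/1)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    denom = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "sensitivity": tp / (tp + fn) if tp + fn else 0.0,
        "specificity": tn / (tn + fp) if tn + fp else 0.0,
        "accuracy": (tp + tn) / len(pairs),
        # MCC is defined as 0 when any marginal count is zero.
        "mcc": (tp * tn - fp * fn) / denom if denom else 0.0,
    }


# Invented example: 3 true sites and 5 non-sites.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 0, 0, 0, 1]
metrics = binary_metrics(y_true, y_pred)
```

MCC is often preferred for site prediction because positive sites are usually far rarer than negatives, and accuracy alone can look high even for a model that predicts no sites at all.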

Decision Framework and Future Directions

Selection Guidelines

Diagram: Decision framework for selecting appropriate fine-tuning strategies based on task requirements and available resources.

Emerging Trends

Future developments in protein model fine-tuning will likely focus on:

- Unified frameworks for multi-task chart understanding that systematically synthesize various information types [27]

- Enhanced data selection methods that more efficiently identify optimal training subsets [26]

- Specialized adaptation techniques for particular biological domains such as antibody engineering or enzyme design

- Integration of structural information through multi-modal approaches combining sequence and structural data

Task-specific fine-tuning represents a crucial methodology for maximizing the utility of protein language models in biological research and drug development. The experimental evidence consistently demonstrates that supervised fine-tuning, particularly using parameter-efficient methods like LoRA, substantially boosts prediction performance across diverse tasks while managing computational costs. Medium-sized models often provide the optimal balance between performance and efficiency for realistic biological applications. As the field advances, following standardized protocols while selecting appropriate strategies based on specific task requirements will enable researchers to extract maximum value from these powerful computational tools. The continued refinement of fine-tuning methodologies promises to further bridge the gap between computational predictions and experimental biology, accelerating discoveries in basic research and therapeutic development.

The accurate prediction of protein function is a cornerstone of modern biology, with profound implications for understanding disease mechanisms, guiding drug discovery, and enabling biotechnological applications. Protein functions are formally annotated using two primary systems: Enzyme Commission (EC) numbers, which describe catalytic activities in a hierarchical numerical format, and Gene Ontology (GO) terms, which describe molecular functions, biological processes, and cellular components in a structured vocabulary [28]. However, a massive annotation gap exists: while databases like UniProt contain hundreds of millions of protein sequences, less than 0.3% have experimentally validated functions [11]. This gap has driven the development of sophisticated computational methods, with protein Language Models (pLMs) emerging as particularly powerful tools. This guide provides a comparative assessment of these methods, focusing on their performance in predicting EC numbers and GO terms.

Performance Comparison of Protein Function Prediction Tools

The field of automated function prediction has evolved from simple sequence alignment to advanced deep learning. Similarity-based tools like BLASTp transfer annotations from the most similar sequence in a database. Signature-based methods like InterProScan identify known functional domains. More recently, protein Language Models (pLMs) such as ESM2, ProtT5, and ProtBERT learn complex representations from millions of sequences, enabling function prediction even for proteins with no known homologs [11] [25].

Table 1: Performance Comparison of Tools for EC Number Prediction

| Tool / Method | Core Methodology | Reported Performance (EC) | Key Strengths |
|---|---|---|---|
| BLASTp | Sequence alignment & similarity search | Marginally outperforms many DL models overall [3] | Gold standard for homology-based annotation; highly reliable for clear homologs. |
| ProteInfer | Deep dilated convolutional neural network | Complementary to BLASTp; an ensemble of both surpasses either alone [29] | Rapid prediction; provides coarse-grained functional localization via Class Activation Mapping (CAM). |
| ESM2 (LLM) | Transformer-based protein language model | Best among tested pLMs; excels on difficult annotations and enzymes without homologs [3] | Effective where sequence identity to reference database falls below 25%. |
| ProtBERT (LLM) | Transformer-based protein language model | Performance improves with fine-tuning, but overall suboptimal compared to ESM2 and BLASTp [3] | Demonstrates the potential of fine-tuning pLMs for specific tasks. |
| PhiGnet | Statistics-informed graph neural network | N/A (Focus on residue-level significance) | Quantifies functional significance of individual residues; works from sequence alone. |

Table 2: Performance Comparison of Tools for GO Term Prediction

| Tool / Method | Core Methodology | Reported Performance (GO) | Key Strengths |
|---|---|---|---|
| InterProScan | Signature-based (e.g., HMMER, PROSITE) | Precision: 0.937, Recall: 0.543, F1: 0.688 [29] | High precision; integrates multiple databases. |
| ProteInfer | Deep dilated CNN | F1: 0.885; Recall of 0.835 at a precision of 0.937 [29] | Much higher recall than InterProScan at the same precision; single model for all predictions. |
| GOHPro | GO similarity-based heterogeneous network propagation | Outperformed 6 state-of-the-art methods, with Fmax improvements of 6.8 to 47.5% over methods like exp2GO [30] | Effectively integrates PPI networks, domain data, and GO hierarchy semantics; robust to data sparsity. |
| Fine-tuned pLMs (e.g., ProtT5) | Fine-tuned protein language models | Task-specific fine-tuning almost always improves downstream predictions over static embeddings [25] | Adapts general-purpose pLMs to specific prediction tasks, maximizing performance. |

A critical finding from recent research is that pLMs and BLASTp have complementary strengths. While BLASTp may have a slight overall advantage, pLMs like ESM2 demonstrate superior performance for specific EC numbers and, crucially, for annotating enzymes that lack close homologs (e.g., when sequence identity to proteins in the reference database is below 25%) [3]. For GO term prediction, novel network-based methods like GOHPro show significant improvements by explicitly modeling the complex relationships between proteins and the GO hierarchy [30].

Experimental Protocols for Key Studies

Protocol: Benchmarking Protein LLMs for EC Number Prediction

This protocol is based on the comparative assessment of ESM2, ESM1b, and ProtBERT [3].

Data Curation and Preprocessing:

- Source: Swiss-Prot (manually reviewed) and TrEMBL (automatically annotated) sections of UniProtKB.

- Filtering: To reduce redundancy, only representative sequences from UniRef90 clusters are retained. This ensures no two sequences in the dataset share more than 90% identity.

- Problem Formulation: EC number prediction is treated as a hierarchical multi-label classification task. Each protein sequence is assigned a binary vector representing the presence or absence of every possible EC number, including all parent terms in the hierarchy.
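
The hierarchical multi-label encoding described above can be sketched as follows. This is an illustration under stated assumptions, not the code used in [3]: the helper names, the toy annotations, and the use of `-` for unspecified EC fields are choices made here for clarity.

```python
def ec_with_parents(ec: str) -> list[str]:
    """Expand an EC number into itself plus all parent levels.

    '1.1.1.1' -> ['1.-.-.-', '1.1.-.-', '1.1.1.-', '1.1.1.1'].
    Partial annotations such as '3.4.-.-' stop at the last specified field.
    """
    fields = ec.split(".")
    labels = []
    for depth in range(1, len(fields) + 1):
        if fields[depth - 1] == "-":
            break
        labels.append(".".join(fields[:depth] + ["-"] * (4 - depth)))
    return labels


def encode(ec_numbers: list[str], label_index: dict[str, int]) -> list[int]:
    """Binary vector with a 1 for every EC label (and parent) the protein has."""
    vector = [0] * len(label_index)
    for ec in ec_numbers:
        for label in ec_with_parents(ec):
            vector[label_index[label]] = 1
    return vector


# Toy annotations (hypothetical protein identifiers).
annotations = {
    "protA": ["1.1.1.1"],
    "protB": ["1.1.1.1", "3.4.21.4"],
    "protC": ["3.4.-.-"],
}
all_labels = sorted(
    {label for ecs in annotations.values() for ec in ecs for label in ec_with_parents(ec)}
)
label_index = {label: i for i, label in enumerate(all_labels)}
vectors = {name: encode(ecs, label_index) for name, ecs in annotations.items()}
print(all_labels)
print(vectors["protA"])
```

Including the parent terms means a classifier that misses the fourth EC digit can still receive credit for predicting the correct class, subclass, and sub-subclass.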

Model Training and Fine-tuning:

- Feature Extraction: For pLMs, sequences are fed into pre-trained models (ESM2, ESM1b, ProtBERT) to generate numerical representations (embeddings).

- Classifier: These embeddings are used as input to a fully connected neural network classifier.

- Comparison Models: Models like DeepEC and D-SPACE, which use one-hot encodings of amino acid sequences instead of pLM embeddings, are implemented for baseline comparison.
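
To make the embedding-plus-classifier step concrete, here is a dependency-free sketch of the forward pass. Several things are assumptions made for illustration: mean pooling of per-residue embeddings (the text does not say how per-protein vectors are obtained), a single hidden layer, the tiny dimensions, and the random untrained weights. A real pipeline would obtain embeddings from the pre-trained pLM and train the classifier in a deep learning framework.

```python
import math
import random


def mean_pool(residue_embeddings):
    """Average per-residue vectors (L x D) into one per-protein vector (D)."""
    length = len(residue_embeddings)
    dim = len(residue_embeddings[0])
    return [sum(row[j] for row in residue_embeddings) / length for j in range(dim)]


def dense(x, weights, bias):
    """Fully connected layer: weights has one row per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def predict_labels(residue_embeddings, params):
    """Pooled embedding -> hidden ReLU layer -> one sigmoid output per EC label."""
    pooled = mean_pool(residue_embeddings)
    hidden = [max(0.0, h) for h in dense(pooled, params["w1"], params["b1"])]
    logits = dense(hidden, params["w2"], params["b2"])
    return [1.0 / (1.0 + math.exp(-z)) for z in logits]


def random_params(dim, hidden, n_labels, seed=0):
    """Untrained placeholder weights, for shape checking only."""
    rng = random.Random(seed)

    def matrix(rows, cols):
        return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

    return {"w1": matrix(hidden, dim), "b1": [0.0] * hidden,
            "w2": matrix(n_labels, hidden), "b2": [0.0] * n_labels}


# Toy input: a 5-residue protein with 8-dimensional embeddings (real pLM
# embeddings have hundreds to thousands of dimensions).
rng = random.Random(1)
toy_embeddings = [[rng.gauss(0, 1) for _ in range(8)] for _ in range(5)]
probabilities = predict_labels(toy_embeddings, random_params(dim=8, hidden=4, n_labels=3))
print(probabilities)
```

Independent sigmoid outputs, rather than a softmax, match the multi-label formulation: each EC label is predicted as present or absent on its own.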

Performance Evaluation:

- Benchmarking: The predictive performance of the pLM-based classifiers is rigorously compared against each other and against the traditional BLASTp tool.

- Analysis: Performance is dissected based on the difficulty of the annotation task and the degree of homology between the query sequence and proteins in the reference database.
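
The homology-stratified analysis can be sketched as a simple binning of test proteins by their best sequence identity to the reference database. The records below are made-up toy values, not results from [3]; only the 25% boundary comes from the text, and the other bin edge and all names are illustrative assumptions.

```python
from collections import defaultdict


def identity_bin(identity_pct: float) -> str:
    """Assign a query to a homology bin by its best identity to the reference set."""
    if identity_pct < 25:
        return "<25%"
    if identity_pct < 50:
        return "25-50%"
    return ">=50%"


def accuracy_by_bin(records):
    """records: iterable of (max_identity_pct, plm_correct, blastp_correct)."""
    totals = defaultdict(lambda: [0, 0, 0])  # [count, pLM hits, BLASTp hits]
    for identity, plm_ok, blast_ok in records:
        entry = totals[identity_bin(identity)]
        entry[0] += 1
        entry[1] += int(plm_ok)
        entry[2] += int(blast_ok)
    return {
        name: {"n": n, "plm": plm_hits / n, "blastp": blast_hits / n}
        for name, (n, plm_hits, blast_hits) in totals.items()
    }


# Toy records for illustration only.
toy_records = [
    (18, True, False), (22, True, False), (24, False, False),
    (35, True, True), (45, False, True),
    (70, True, True), (95, True, True),
]
report = accuracy_by_bin(toy_records)
for name in ("<25%", "25-50%", ">=50%"):
    print(name, report[name])
```

Reporting per-bin results next to the overall score is what reveals complementary behavior that a single aggregate metric would hide.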

Protocol: Fine-Tuning pLMs for Diverse Prediction Tasks

This protocol outlines the methodology for enhancing pLM performance on specific tasks, as described in [25].

Model and Task Selection:

- Models: Three state-of-the-art pLMs are selected: ESM2, ProtT5, and Ankh.

- Tasks: The models are evaluated on eight diverse tasks, including per-residue prediction (e.g., secondary structure, disorder) and per-protein prediction (e.g., subcellular localization, fitness landscapes).

Fine-tuning Strategy:

- Full Fine-tuning vs. PEFT: The practice of adding a simple prediction head (ANN) to the pLM encoder and updating all model weights via supervised training is compared to Parameter-Efficient Fine-Tuning (PEFT) methods.

- PEFT Method - LoRA: Low-Rank Adaptation (LoRA) is employed as a primary PEFT method. It freezes the pre-trained model weights and injects trainable rank decomposition matrices into each transformer layer, dramatically reducing the number of trainable parameters (e.g., to 0.25% of the full model).
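
The parameter savings of LoRA follow from simple arithmetic: a frozen weight matrix of shape d_out x d_in is augmented with a trainable product B A, where B is d_out x r and A is r x d_in. The sketch below is illustrative only; the dimensions and rank are arbitrary examples, not the settings used in [25], and the overall trainable fraction of a model depends on which matrices are adapted.

```python
def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters added by LoRA to one d_out x d_in weight matrix."""
    return rank * (d_out + d_in)


def matvec(matrix, vector):
    """Plain matrix-vector product for lists of lists."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha):
    """Compute W x + (alpha / r) * B (A x); W stays frozen, only A and B train."""
    rank = len(A)
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    return [b + (alpha / rank) * u for b, u in zip(base, update)]


# Parameter count for one 1024 x 1024 projection with rank 4 (example values).
full = 1024 * 1024
added = lora_trainable_params(1024, 1024, rank=4)
print(added, full, added / full)  # 8192 1048576 0.0078125

# With B initialised to zeros, the adapted layer starts identical to the frozen one.
W = [[1.0, 2.0], [3.0, 4.0]]
A = [[0.5, -0.5]]          # r x d_in, with r = 1
B_zero = [[0.0], [0.0]]    # d_out x r
print(lora_forward(W, A, B_zero, [1.0, 0.0], alpha=2.0))  # [1.0, 3.0]
```

Starting from a zero update is the usual LoRA initialization, so fine-tuning begins exactly at the pre-trained model's behavior.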

Evaluation:

- The performance of fine-tuned models is compared to a baseline that uses static, pre-trained embeddings without updating the pLM weights.

- Training time and computational resource consumption are also compared between full fine-tuning and PEFT.

Protocol: GOHPro for GO Term Prediction

This protocol details the novel heterogeneous network approach from [30].

Network Construction:

- Protein Functional Similarity Network: This is built by integrating two sources of information:

  - Domain Structural Similarity: Calculated from protein-protein interaction (PPI) network topology and protein domain composition from the Pfam database.

  - Modular Similarity: Derived from protein complex information obtained from the Complex Portal.

- GO Semantic Similarity Network: This network is generated based on the hierarchical "is_a" and "part_of" relationships between GO terms.

- Heterogeneous Network Integration: The protein network and the GO network are linked via known protein-GO term annotations to form a single, integrated heterogeneous network.

Network Propagation:

- A network propagation algorithm is applied to the heterogeneous network. This algorithm simulates the flow of functional information from annotated proteins to unannotated ones, leveraging both the protein functional similarities and the GO semantic relationships.
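
The exact propagation scheme of GOHPro is not specified here, so the sketch below substitutes a generic random walk with restart, a common form of network propagation, on a toy heterogeneous graph. All node names, edge weights, and the restart probability are hypothetical values chosen for illustration.

```python
def build_graph(edges):
    """Undirected weighted graph as a dict of dicts."""
    graph = {}
    for u, v, w in edges:
        graph.setdefault(u, {})[v] = w
        graph.setdefault(v, {})[u] = w
    return graph


def random_walk_with_restart(graph, seed, restart=0.3, tol=1e-10, max_iter=1000):
    """Iterate p <- restart * p0 + (1 - restart) * W p until convergence.

    W spreads each node's probability to its neighbours in proportion
    to edge weight; p0 places all mass on the query (seed) node.
    """
    strength = {u: sum(nbrs.values()) for u, nbrs in graph.items()}
    p0 = {u: 1.0 if u == seed else 0.0 for u in graph}
    p = dict(p0)
    for _ in range(max_iter):
        nxt = {u: restart * p0[u] for u in graph}
        for u, nbrs in graph.items():
            for v, w in nbrs.items():
                nxt[v] += (1 - restart) * p[u] * w / strength[u]
        change = sum(abs(nxt[u] - p[u]) for u in graph)
        p = nxt
        if change < tol:
            break
    return p


edges = [
    ("P1", "P2", 0.8), ("P2", "P3", 0.6),          # protein functional similarity
    ("P1", "GO:x", 1.0), ("P2", "GO:y", 1.0),      # known protein-GO annotations
    ("GO:x", "GO:y", 0.5), ("GO:x", "GO:z", 0.5),  # GO semantic similarity
]
scores = random_walk_with_restart(build_graph(edges), seed="P3")
ranked_terms = sorted(
    (node for node in scores if node.startswith("GO:")), key=scores.get, reverse=True
)
print(ranked_terms)  # ['GO:y', 'GO:x', 'GO:z']
```

Here the unannotated protein P3 receives its strongest association with the term annotated to its direct neighbor, while terms reachable only through longer paths score lower.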

Prioritization and Evaluation:

- After propagation, GO terms for uncharacterized proteins are prioritized based on their association scores.

- The method is evaluated on yeast and human datasets against six state-of-the-art methods using the Fmax metric.
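
The Fmax metric is the maximum F1 obtained over all score thresholds. The sketch below follows the protein-centric definition commonly used in function prediction benchmarks (precision averaged over proteins with at least one prediction, recall averaged over all proteins); whether [30] uses exactly this variant is an assumption, and the toy scores are invented for illustration.

```python
def fmax(predictions, truth, thresholds=None):
    """Protein-centric Fmax.

    predictions: {protein: {term: score in [0, 1]}}
    truth:       {protein: set of true terms}, each set assumed non-empty
    """
    if thresholds is None:
        thresholds = [i / 100 for i in range(1, 101)]
    best = 0.0
    for t in thresholds:
        precisions, recalls = [], []
        for protein, true_terms in truth.items():
            predicted = {
                term for term, score in predictions.get(protein, {}).items() if score >= t
            }
            hits = len(predicted & true_terms)
            if predicted:  # precision is only defined when something is predicted
                precisions.append(hits / len(predicted))
            recalls.append(hits / len(true_terms))
        if not precisions:
            continue
        pr = sum(precisions) / len(precisions)
        rc = sum(recalls) / len(recalls)
        if pr + rc > 0:
            best = max(best, 2 * pr * rc / (pr + rc))
    return best


toy_truth = {"p1": {"A", "B"}, "p2": {"C"}}
toy_predictions = {
    "p1": {"A": 0.9, "B": 0.4, "D": 0.3},
    "p2": {"C": 0.8, "A": 0.6},
}
print(round(fmax(toy_predictions, toy_truth), 3))  # 0.857
```

Because the threshold is optimized per method, Fmax compares methods at their best operating point rather than at a fixed cutoff.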

Workflow Visualization

The following diagram illustrates the typical experimental workflow for developing and benchmarking a pLM-based function prediction method, integrating elements from the cited protocols.

Workflow for Protein Function Prediction Benchmarking

The GOHPro method employs a distinct, network-based architecture for GO term prediction, as shown below.

GOHPro Heterogeneous Network Architecture

Table 3: Essential Databases, Tools, and Models for Protein Function Prediction Research

| Resource Name | Type | Primary Function in Research |

|---|---|---|

| UniProtKB | Database | The central repository for protein sequence and functional annotation data, used for training and testing models [31] [3]. |

| Pfam | Database | A collection of protein families and domains, used to build domain-based functional similarity networks [30]. |

| Complex Portal | Database | A manually curated resource of macromolecular complexes, used to inform modular similarity in network methods [30]. |

| Gene Ontology (GO) | Database / Vocabulary | Provides the structured, hierarchical set of terms used for standardizing protein function annotations [28] [30]. |

| ESM2 | Protein Language Model | A state-of-the-art transformer-based pLM used to generate powerful sequence representations for function prediction [25] [3]. |

| ProtT5 | Protein Language Model | Another leading pLM, often used in comparative studies and known to benefit significantly from fine-tuning [25]. |

| BLASTp | Software Tool | The gold-standard homology-based search tool, used as a critical baseline for benchmarking new methods [3] [29]. |

| InterProScan | Software Tool | A signature-based method that scans sequences against multiple protein family databases, used for performance comparison [29]. |

| LoRA (Low-Rank Adaptation) | Algorithm | A Parameter-Efficient Fine-Tuning (PEFT) method that allows for effective adaptation of large pLMs with minimal computational overhead [25]. |

The field of computational structural biology has been revolutionized by the advent of deep learning, transitioning from predicting individual protein folds to modeling intricate multi-chain complexes. This evolution addresses one of biology's fundamental challenges: understanding how proteins assemble into functional complexes that drive cellular processes. While AlphaFold2 marked a watershed moment for single-chain prediction, accurately capturing inter-chain interactions remains a formidable challenge that next-generation methods are now tackling [32].

This guide provides a comparative assessment of contemporary protein structure prediction tools, with a specific focus on their performance in predicting protein complexes—a capability crucial for applications in drug discovery and protein engineering. We evaluate methods including DeepSCFold, AlphaFold3, AlphaFold-Multimer, and specialized docking approaches, analyzing their performance across standardized benchmarks to provide researchers with objective data for selecting appropriate tools.

Key Methodological Approaches

Protein structure prediction has evolved through distinct methodological phases:

- Template-Based Modeling: Early approaches like MODBASE leveraged homology modeling, generating structures based on detectable templates from databases like PDB. This method remains effective when high-quality templates exist but fails for novel folds [33].

- Physical Docking Methods: Tools like ZDOCK, HADDOCK, and AutoDock CrankPep (ADCP) assemble monomer structures into complexes based on physicochemical principles and shape complementarity. These methods face challenges in conformational sampling and energy function accuracy [32] [34].

- Deep Learning Revolution: AlphaFold2 introduced an end-to-end deep learning architecture that jointly embeds multiple sequence alignments (MSAs) and pairwise features through Evoformer blocks, enabling atomic-level accuracy for monomeric structures [35].

- Complex-Specific Extensions: AlphaFold-Multimer adapted the AlphaFold2 framework specifically for multimers, while newer approaches like DeepSCFold incorporate structural complementarity predictions directly from sequence information [32].

- Unified Complex Prediction: AlphaFold3 represents the most comprehensive approach, predicting structures of protein complexes with diverse biomolecular partners including DNA, RNA, and ligands [36].

The Role of Protein Language Models

Protein language models (pLMs) such as ESM2, ESM1b, and ProtBERT have emerged as powerful tools for extracting structural and functional information directly from sequences. Pre-trained on millions of protein sequences, these models learn evolutionary patterns and biophysical properties that inform structure prediction [11] [3]. Although initially developed for function prediction, their embeddings have proven valuable for inferring structural features, particularly for proteins with few homologs, and they perform competitively with traditional tools such as BLASTp on certain annotation tasks [3].

Performance Benchmarking

Protein Complex Structure Prediction

Table 1: Performance comparison of protein complex prediction tools on CASP15 multimer targets

| Method | TM-score Improvement | Key Strengths | Limitations |

|---|---|---|---|

| DeepSCFold | +11.6% vs AlphaFold-Multimer, +10.3% vs AlphaFold3 | Excels in antibody-antigen interfaces; captures structural complementarity | Requires construction of paired MSAs |

| AlphaFold3 | Reference baseline | Unified biomolecular complex prediction | Underestimates flexible binding pockets [37] |

| AlphaFold-Multimer | DeepSCFold improves on it by 11.6% | Direct extension of AF2 framework | Lower accuracy for intertwined complexes |

| Yang-Multimer | Moderate performance | Extensive sampling strategies | Computationally intensive |

| MULTICOM3 | Moderate performance | Diverse paired MSA construction | Limited for non-co-evolving complexes |

DeepSCFold demonstrates notable performance advantages, particularly for challenging targets like antibody-antigen complexes from the SAbDab database, where it enhances prediction success rates for binding interfaces by 24.7% and 12.4% over AlphaFold-Multimer and AlphaFold3, respectively [32]. This suggests that explicitly modeling structural complementarity from sequence information provides benefits beyond co-evolutionary signals alone.

Binding Affinity and Flexible Interface Prediction

Table 2: Performance in predicting mutation-induced binding free energy changes (SKEMPI 2.0 database)

| Method | Pearson Correlation (Rp) | RMSE (kcal/mol) | Application Scope |

|---|---|---|---|

| MT-TopLap (PDB structures) | 0.88 | 0.937 | Gold standard reference |

| MT-TopLapAF3 (AF3 structures) | 0.86 | 1.025 | General protein-protein complexes |

| TopLapNetGBT | 0.87 | N/A | Topological deep learning |

| mCSM-PPI2 | 0.82 | N/A | Traditional machine learning |

Independent validation on the SKEMPI 2.0 database (317 protein-protein complexes and 8,338 mutations) shows that predictions built on AlphaFold3 structures achieve a strong Pearson correlation of 0.86 for binding free energy changes, but with an 8.6% increase in root-mean-square error relative to predictions from the original PDB structures [36]. This indicates that although AF3 captures global binding modes effectively, it is less precise in modeling the interface flexibility and side-chain packing that are critical for affinity prediction.

Performance on Specialized Targets

For protein-peptide interactions, specialized docking tools like AutoDock CrankPep (ADCP) achieve a 62% docking success rate, while AlphaFold2 models trained specifically for multimeric assemblies show remarkable performance for peptides [34]. A consensus approach combining ADCP and AlphaFold2 reaches 60% success for top-ranking results and 66% for top-5 results, suggesting complementary strengths.

For challenging targets like snake venom toxins and nuclear receptors, AlphaFold2 shows limitations in capturing structural flexibility. In nuclear receptors, AF2 systematically underestimates ligand-binding pocket volumes by 8.4% on average and misses functional asymmetry in homodimeric receptors where experimental structures show conformational diversity [37].

Experimental Protocols and Methodologies

Benchmarking Standards

Robust evaluation of protein complex prediction tools requires standardized protocols:

CASP Assessment Protocol

- Uses recently solved structures not yet publicly disclosed for blind testing

- Evaluates global fold accuracy (TM-score) and atomic precision (RMSD)

- Assesses targets by domain in both template-based and free modeling categories [35]
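
The two metrics named above can be sketched from per-residue deviations. This is a simplified illustration: it assumes the model has already been superimposed on the experimental structure and that `distances` holds the Cα-Cα deviations (in Å) of the aligned residues, whereas the full TM-score additionally searches for the superposition that maximizes the score. The function names are illustrative.

```python
import math


def tm_d0(target_length: int) -> float:
    """Length-dependent distance scale d0 (in Å) used by the TM-score."""
    if target_length <= 21:
        raise ValueError("this sketch does not handle very short targets")
    return 1.24 * (target_length - 15) ** (1.0 / 3.0) - 1.8


def tm_score(distances, target_length: int) -> float:
    """TM-score for one fixed superposition; unaligned residues contribute zero."""
    d0 = tm_d0(target_length)
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances) / target_length


def rmsd(distances) -> float:
    """Root-mean-square deviation over the aligned residues."""
    return math.sqrt(sum(d * d for d in distances) / len(distances))


# Toy case: a 100-residue target where every aligned residue deviates by exactly d0.
length = 100
toy_distances = [tm_d0(length)] * length
print(round(tm_score(toy_distances, length), 3))  # 0.5
print(round(rmsd(toy_distances), 3))
```

Dividing by the target length rather than the number of aligned residues is what makes the TM-score penalize incomplete models, while the bounded per-residue term keeps a few badly placed residues from dominating, unlike RMSD.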

SKEMPI 2.0 Validation

- Employs 317 protein-protein complexes with 8,338 mutation-induced binding free energy changes

- Uses 10-fold cross-validation with Pearson correlation and RMSE metrics

- Applies topological deep learning (TDL) for consistent feature extraction [36]
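
The evaluation metrics in this protocol are standard and can be sketched without external libraries. The fold assignment below is one simple way to set up 10-fold cross-validation; the random shuffling with a fixed seed and all function names are assumptions made here, not details from [36].

```python
import math
import random


def pearson(x, y) -> float:
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def rmse(predicted, observed) -> float:
    """Root-mean-square error, in the units of the inputs (e.g. kcal/mol)."""
    return math.sqrt(
        sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed)
    )


def k_fold_indices(n_samples: int, k: int = 10, seed: int = 0):
    """Shuffle sample indices and deal them into k roughly equal test folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    return [indices[i::k] for i in range(k)]


# Toy values for illustration only.
observed = [0.5, 1.2, -0.3, 2.0, 0.0]
predicted = [0.7, 1.0, -0.1, 1.6, 0.3]
print(round(pearson(predicted, observed), 3), round(rmse(predicted, observed), 3))

folds = k_fold_indices(8338, k=10)
print([len(fold) for fold in folds])
```

Pearson correlation measures how well the predicted ranking and trend match experiment, while RMSE measures absolute error, which is why a structure source can score well on one and lose ground on the other.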

Antibody-Antigen Complex Evaluation

- Leverages SAbDab database containing structural antibody data

- Measures interface success rate focusing on CDR-epitope interactions

- Particularly challenging due to limited co-evolutionary signals [32]

DeepSCFold Methodology

Diagram 1: DeepSCFold workflow for protein complex structure prediction

DeepSCFold employs a sophisticated pipeline that integrates sequence-based deep learning with protein-language model insights: