CAPE vs. CASP: A Comparative Analysis of AI-Powered Protein Structure Prediction Tools for Biomedical Research

Abstract

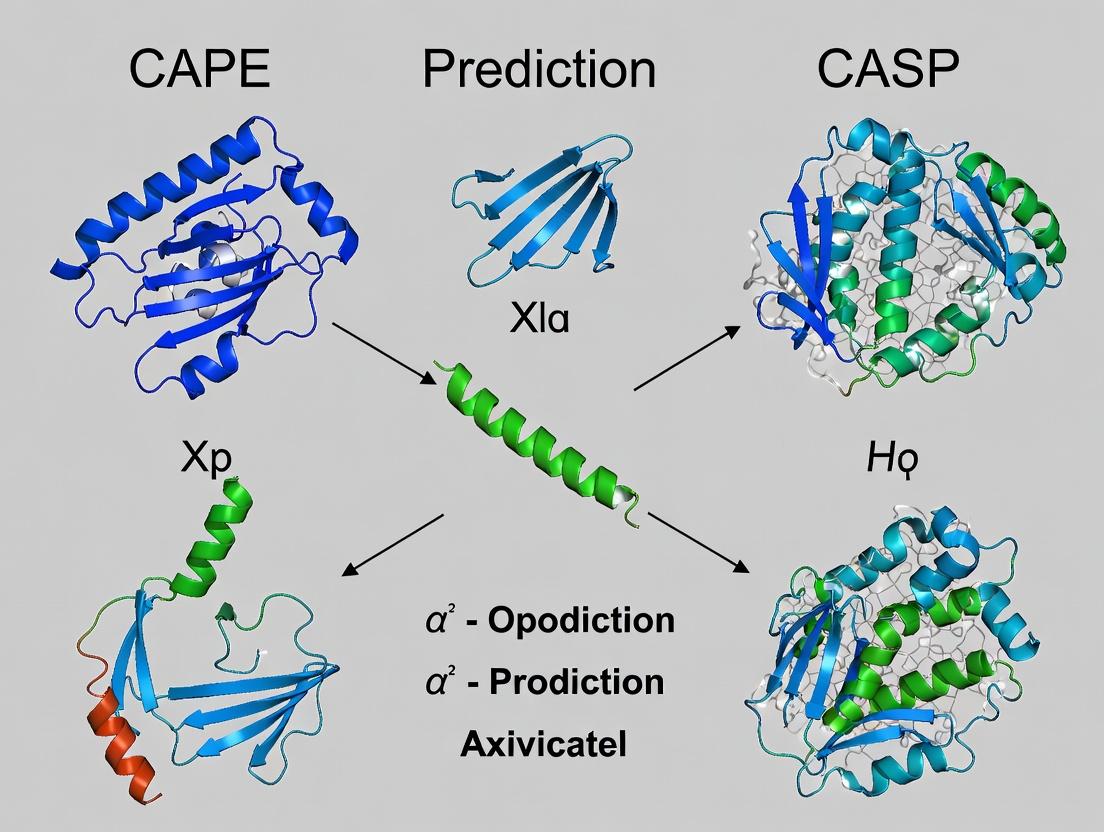

This article provides a comprehensive comparison of the CAPE (Continuous Automated Protein Evaluation) platform and the CASP (Critical Assessment of protein Structure Prediction) competition, two pivotal forces shaping modern structural biology. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of each, details their methodological approaches and applications in drug discovery, addresses common troubleshooting and optimization strategies, and presents a rigorous validation and comparative analysis of their predictive accuracy, utility, and limitations. The goal is to equip professionals with the knowledge to strategically select and leverage these tools to accelerate biomedical research.

Understanding CAPE and CASP: Core Concepts and Historical Evolution in Protein Folding

Within the ongoing research discourse on computational protein structure prediction, a critical methodological and philosophical divide exists between Continuous Automated Protein Evaluation (CAPE) platforms and the periodic Critical Assessment of protein Structure Prediction (CASP) experiment. While CAPE represents a continuous, community-wide benchmarking system, CASP is a biennial, double-blind competition that has historically defined the state of the art. This whitepaper provides an in-depth technical examination of the CASP competition, its protocols, and its outcomes, framing its role as the definitive arbiter of progress against which CAPE and other continuous assessment methods are often compared. The ultimate thesis posits that while CAPE offers rapid iteration, CASP provides the rigorous, prospective testing necessary for definitive breakthroughs, as evidenced by the AlphaFold2 watershed moment in CASP14.

CASP is a community experiment to objectively assess the performance of protein structure prediction methods. Established in 1994, it runs every two years, providing a blind test where predictors submit models for protein structures whose experimental determinations are not yet publicly available. This prospective design is crucial for preventing method overfitting and providing a true measure of predictive power.

Core Experimental Protocol and Workflow

The CASP competition follows a meticulously controlled, multi-stage workflow.

Diagram Title: CASP Competition Experimental Workflow

Assessment Categories and Metrics

CASP evaluates predictions across several categories, each with defined quantitative metrics. The core assessment is performed by independent assessors.

Table 1: Primary CASP Assessment Categories and Metrics

| Category | Description | Key Quantitative Metrics |

|---|---|---|

| Template-Based Modeling (TBM) | Targets with identifiable homologs of known structure. | GDT_TS (Global Distance Test Total Score), TM-score, RMSD (Cα atoms) |

| Free Modeling (FM) | Targets with no detectable structural templates. | GDT_TS, TM-score, RMSD, CAD (Contact Area Difference) |

| Template-Free | Subset of FM; truly novel folds. | GDT_TS, TM-score |

| Accuracy Estimation | Assessment of a model's own confidence. | Local Distance Difference Test (lDDT) per-residue error estimates |

| Quality Assessment (QA) | Ranking of provided models without knowing the native structure. | Z-scores relative to other groups' models |

| Residue-Residue Contacts | Prediction of spatial proximity between residues. | Precision/Recall for long-range contacts (>24 seq. separation) |
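The contact-prediction metric in the last row of Table 1 can be illustrated with a minimal sketch. It assumes predictions arrive as `(i, j, probability)` triples and that native contacts are supplied as a set of residue pairs; the function name, the `top_fraction` parameter, and the default separation of at least 24 residues are illustrative choices, not part of any official CASP tooling.

```python
def long_range_precision(predicted, native_contacts, seq_len,
                         min_sep=24, top_fraction=1.0):
    """Precision of the highest-ranked long-range contact predictions.

    predicted: iterable of (i, j, probability) residue-index triples.
    native_contacts: set of frozenset({i, j}) pairs observed in the
        experimental structure.
    seq_len: target length L; the top L * top_fraction pairs are scored.
    """
    # Keep only pairs separated by at least min_sep positions in sequence.
    long_range = [(i, j, p) for i, j, p in predicted if abs(i - j) >= min_sep]
    # Rank by predicted probability, most confident first.
    long_range.sort(key=lambda contact: contact[2], reverse=True)
    n_top = max(1, int(seq_len * top_fraction))
    top = long_range[:n_top]
    if not top:
        return 0.0
    hits = sum(1 for i, j, _ in top if frozenset((i, j)) in native_contacts)
    return hits / len(top)
```

Setting `top_fraction` to 0.5 or 0.2 gives the commonly reported "top L/2" and "top L/5" variants of the same measure.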

Table 2: Key Metric Definitions and Interpretation

| Metric | Calculation | Interpretation (Range) | Threshold for "Good" Prediction |

|---|---|---|---|

| GDT_TS | Mean % of Cα atoms within 1, 2, 4, and 8 Å of their reference positions after superposition. | 0-100 (Higher is better) | >50 for moderate, >80 for high accuracy |

| TM-score | Structural similarity measure, length-independent. | 0-1 (Higher is better) | >0.5 indicates correct fold topology |

| RMSD | Root-mean-square deviation of Cα atomic positions. | 0-∞ Å (Lower is better) | <2Å for high-accuracy core, context-dependent |

| lDDT | Local Distance Difference Test: superposition-free fraction of local interatomic distances preserved in the model. | 0-100 (Higher is better) | >70 indicates reliable local geometry |
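As a rough illustration of the definitions in Table 2, the sketch below computes GDT_TS, TM-score, and RMSD from a list of per-residue Cα distances. It assumes the model has already been superposed on the reference; the official GDT_TS and TM-score programs search over many superpositions to maximize the score, so values from this single-superposition sketch are lower bounds. The function names and the fallback `d0 = 0.5` for short targets are assumptions made for the example.

```python
import math


def gdt_ts(distances, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """Mean percentage of residues within each distance cutoff (in Å)."""
    n = len(distances)
    fractions = [sum(d <= c for d in distances) / n for c in cutoffs]
    return 100.0 * sum(fractions) / len(cutoffs)


def tm_score(distances, target_length):
    """TM-score for aligned residue distances, normalized by target length."""
    if target_length > 21:
        # Length-dependent distance scale d0.
        d0 = 1.24 * (target_length - 15) ** (1.0 / 3.0) - 1.8
    else:
        # Assumed fallback for very short chains, where the formula breaks down.
        d0 = 0.5
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances) / target_length


def rmsd(distances):
    """Root-mean-square deviation over the aligned residues."""
    return math.sqrt(sum(d * d for d in distances) / len(distances))
```

Because GDT_TS and TM-score down-weight badly placed residues while RMSD squares every deviation, a single misplaced loop can inflate RMSD without changing the other two scores much, which is why the table treats the RMSD threshold as context-dependent.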

The Scientist's Toolkit: Key Research Reagent Solutions in CASP

Table 3: Essential Computational Tools & Resources in CASP Research

| Tool/Resource | Provider/Type | Primary Function in CASP |

|---|---|---|

| AlphaFold2 (AF2) | DeepMind / End-to-end Deep Learning | De novo structure prediction via Evoformer and structure module. |

| RoseTTAFold | Baker Lab / Deep Learning | Three-track neural network integrating sequence, distance, and coordinates. |

| MODELLER | Šali Lab / Comparative Modeling | Builds models from alignments and known template structures (TBM). |

| I-TASSER | Zhang Lab / Hierarchical Modeling | Combines template identification, ab initio fragment assembly, and refinement. |

| HH-suite | Bioinformatics Tool Suite | Sensitive sequence searching and alignment for homology detection. |

| PSI-BLAST | NCBI / Sequence Analysis | Profile-based sequence searching to find distant homologs. |

| MMseqs2 | Bioinformatics Tool | Ultra-fast sequence searching and clustering for massive databases. |

| PDB (Protein Data Bank) | Worldwide PDB / Database | Source of known structures for template modeling and method training. |

| UniRef90/UniClust30 | UniProt / Sequence Databases | Curated non-redundant sequence databases for multiple sequence alignment (MSA) generation. |

Visualizing the Assessment Hierarchy

The assessment process involves a hierarchy of metrics and comparisons.

Diagram Title: CASP Assessment Hierarchy

Key Historical Results and Impact

CASP has chronicled the revolutionary progress in the field. CASP13 (2018) saw deep learning-based methods make significant inroads, particularly in contact and distance prediction. CASP14 (2020) marked a paradigm shift: AlphaFold2 achieved a median GDT_TS of about 92 across targets, an accuracy often comparable to that of experimental structures. This result validated attention-based architectures (the Evoformer and invariant point attention) and highlighted the critical importance of large, diverse MSAs and accurate template information.

CASP versus CAPE: A Core Tension

CASP's rigorous, periodic, and prospective blind testing stands in contrast to CAPE's continuous, retrospective benchmarking on known structures. While CAPE enables rapid feedback and iteration for developers, CASP's blinded design prevents unconscious bias and target-specific tuning, making it the "gold standard" for claiming a fundamental advance. The CASP protocol ensures that predictors cannot leverage knowledge of the final answer, a safeguard not inherently present in continuous assessment platforms. Thus, within the broader thesis, CASP remains the definitive arena for validating revolutionary new methods, as demonstrated by its role in certifying the AlphaFold2 breakthrough, while CAPE serves as an essential tool for incremental development and monitoring of method robustness over time.

Thesis Context: CAPE vs. CASP in Protein Structure Prediction

The field of protein structure prediction has been historically benchmarked by the Critical Assessment of Structure Prediction (CASP) experiments. While CASP provides invaluable periodic snapshots of model performance, its episodic nature creates gaps in rapid, iterative evaluation. This whitepaper introduces the Continuous, AI-Driven Evaluation Platform (CAPE), a paradigm shift towards real-time, automated, and granular assessment of AI-predicted protein structures. CAPE is designed to operate not as a replacement for CASP, but as a complementary, high-throughput system that enables continuous model refinement, immediate feedback on architectural changes, and accelerated application in drug discovery pipelines.

Core Architecture and Workflow

CAPE’s architecture is built on a microservices framework that automates the evaluation lifecycle. The core workflow integrates prediction submission, structure analysis, and metric dissemination.

Diagram Title: CAPE Continuous Evaluation Pipeline

Key Evaluation Metrics: A Quantitative Framework

CAPE calculates a suite of metrics, extending beyond the standard CASP metrics like GDT_TS and lDDT. It incorporates physics-based and functional site accuracy measures crucial for drug development.

Table 1: Core CAPE Evaluation Metrics vs. Traditional CASP Focus

| Metric Category | Specific Metric | CAPE Emphasis | Typical CASP Reporting | Utility in Drug Development |

|---|---|---|---|---|

| Global Fold | GDT_TS, TM-score | High-throughput, per-target trends | Primary focus per target | Assesses overall model viability. |

| Local Accuracy | lDDT, RMSD | Atom-level confidence scores | Reported, but less granular | Critical for binding site modeling. |

| Physical Plausibility | MolProbity Score, Rama Z | Real-time steric/energy flags | Limited post-analysis | Identifies non-viable structures early. |

| Functional Site | PockDrug Score, Site RMSD | Automated binding pocket assessment | Rarely assessed systematically | Directly informs virtual screening. |

| Ensemble Dynamics | Predicted Aligned Error (PAE) | Landscape analysis across submissions | Gaining prominence (AlphaFold2) | Guides model selection & uncertainty. |

Experimental Protocol: A Standard CAPE Evaluation Run

This protocol details the steps for a research team to submit and evaluate a new protein structure prediction model on CAPE.

- A. Preparation:

- Model Containerization: Package the prediction model into a Docker or Singularity container. The container must accept a FASTA sequence as input and output a PDB file or equivalent.

- Target Dataset Selection: From CAPE's continuously updated target set (including newly solved structures from the PDB with held-out sequences), select a benchmark suite (e.g., "Membrane Proteins Q4 2024").

- B. Submission & Automated Execution:

- Submit the container image URI and selected target suite via the CAPE REST API.

- CAPE's orchestrator launches parallelized prediction jobs on a compute cluster.

- Each generated structure is automatically passed to the analysis pipeline.

- C. Analysis Pipeline:

- Structure Alignment: Uses TM-align or DALI for structural superposition against the experimental reference.

- Metric Computation: Executes parallelized scripts to calculate all metrics in Table 1.

- Quality Control: Flags predictions with severe steric clashes (MolProbity > 2.5) for review.

- D. Data Aggregation & Visualization:

- Results are stored in a time-stamped database entry linked to the model version.

- A comparative report is generated, benchmarking against baseline models (e.g., AlphaFold2, ESMFold, RoseTTAFold).

- Results are pushed to the researcher's dashboard and available via API.
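Steps C and D of the protocol can be sketched in a few lines. The example below is a minimal illustration only: `EvaluationRecord`, `build_record`, and `compare_to_baselines` are hypothetical names, the metric keys are assumed, and the only value taken from the protocol is the MolProbity review threshold of 2.5.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Review threshold quoted in the Quality Control step above.
MOLPROBITY_FLAG_THRESHOLD = 2.5


@dataclass
class EvaluationRecord:
    """One time-stamped result, linked to a model version (hypothetical schema)."""
    model_version: str
    target_id: str
    metrics: dict
    flagged_for_review: bool
    created_at: str


def build_record(model_version, target_id, metrics):
    """Apply the QC flag and attach a UTC timestamp to a set of metrics."""
    flagged = metrics.get("molprobity", 0.0) > MOLPROBITY_FLAG_THRESHOLD
    return EvaluationRecord(
        model_version=model_version,
        target_id=target_id,
        metrics=dict(metrics),
        flagged_for_review=flagged,
        created_at=datetime.now(timezone.utc).isoformat(),
    )


def compare_to_baselines(record, baselines, metric="gdt_ts"):
    """Difference between the submitted model and each baseline on one metric."""
    return {
        name: record.metrics[metric] - baseline_metrics[metric]
        for name, baseline_metrics in baselines.items()
    }
```

Keeping the timestamp and model version on every record is what allows the dashboard to plot a metric against model history rather than against a single run.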

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Reagents and Tools for CAPE-Aligned Research

| Item | Function in CAPE-Centric Research | Example/Provider |

|---|---|---|

| Standardized Benchmark Datasets | Provides a consistent, evolving set of targets for model comparison. Prevents data leakage. | CAPE Core Targets, PDB Hold-Out Sets |

| Containerization Software | Ensures model reproducibility and seamless integration into the CAPE automated pipeline. | Docker, Singularity |

| Structure Analysis Suites | Backbone for local/global metric calculation within the CAPE workflow. | Biopython, PyMOL scripts, ProDy, VMD |

| Molecular Dynamics Engines | Used for post-prediction refinement and physical plausibility checks outside CAPE's core loop. | GROMACS, AMBER, OpenMM |

| Specialized Function Libraries | Enables calculation of advanced metrics like binding site similarity. | pocketutils, fpocket, scikit-learn |

| Visualization Dashboards | For interpreting CAPE's multi-dimensional output and tracking model evolution over time. | Grafana, Streamlit, Plotly Dash |

Signaling Pathway: From CAPE Feedback to Model Refinement

The CAPE platform closes the loop between evaluation and model development, creating a continuous improvement cycle.

Diagram Title: CAPE-Driven Model Development Cycle

Comparative Analysis: CAPE vs. CASP

Table 3: Operational Comparison: CAPE vs. CASP

| Feature | CAPE (Continuous Platform) | CASP (Periodic Experiment) |

|---|---|---|

| Evaluation Cadence | Continuous, on-demand. | Biennial, fixed schedule. |

| Feedback Speed | Hours to days. | Months (post-experiment). |

| Primary Goal | Rapid iteration, model debugging, application readiness. | Community-wide benchmarking, identifying major advances. |

| Target Selection | Dynamic, can include application-specific sets (e.g., drug targets). | Fixed, blind set for a given round. |

| Granularity | Enables per-residue, per-model version tracking. | Averages across targets per group. |

| Integration | Designed for CI/CD pipelines in AI labs and pharma. | Manual submission and analysis. |

CAPE represents an essential evolution in the ecosystem of protein structure prediction validation. By providing a continuous, AI-driven evaluation platform, it addresses the critical need for agile assessment in an era of rapidly evolving models. Framed within the broader thesis, CAPE is not the competitor to CASP but its necessary complement: where CASP declares major victories, CAPE enables the daily campaigns of optimization and practical translation, ultimately accelerating the path from predicted structure to functional insight and therapeutic discovery.

This whitepaper frames the evolution of protein structure prediction within the critical dialectic of CAPE (Continuous Automated Performance Evaluation) versus CASP (Critical Assessment of Structure Prediction) research paradigms. We trace the field from its biochemical foundations to the contemporary deep learning revolution, providing technical methodologies, quantitative comparisons, and essential research toolkits.

Foundations: Anfinsen's Dogma and the Thermodynamic Hypothesis

The principle that a protein's native structure is determined solely by its amino acid sequence, under physiological conditions, established the computational challenge.

Key Experimental Protocol: Ribonuclease A Renaturation (Anfinsen, 1973)

- Denaturation: Purified RNase A is treated with 8 M urea and β-mercaptoethanol, which unfolds the protein and reduces its disulfide bonds, destroying enzymatic activity.

- Renaturation: The denaturant and reductant are slowly removed via dialysis into an oxidizing buffer.

- Assay: Recovery of enzymatic activity is measured spectrophotometrically using cCMP substrate hydrolysis, confirming spontaneous refolding to the native, functional state.

The CASP Era: Benchmarking and Community Progress

CASP, a blind biennial competition, became the gold standard for assessing prediction methodologies.

Quantitative Data: CASP Performance Evolution

| CASP Edition (Year) | Key Methodology | Top GDT_TS (Global) | Key Advancement |

|---|---|---|---|

| CASP3 (1998) | Threading, Comparative Modeling | ~40 | Large-scale fold recognition |

| CASP7 (2006) | Fragment Assembly, Rosetta | ~60 | Ab initio for small proteins |

| CASP10 (2012) | Consensus, Hybrid Methods | ~70 | Integration of sparse experimental data |

| CASP13 (2018) | AlphaFold (v1) - Deep Learning | ~70 | Predicted inter-residue distance distributions (distograms) |

| CASP14 (2020) | AlphaFold2 - Attention-based | ~92 (Median) | Revolution in accuracy for hard targets |

CASP Assessment Protocol

- Target Release: Organizers release amino acid sequences of recently solved but unpublished structures.

- Prediction Window: Teams submit 3D coordinate models within a set timeframe.

- Blind Assessment: Predictions are compared to experimental structures using metrics like GDT_TS (Global Distance Test), RMSD, and local error estimates.

- Public Analysis: Results are presented at a meeting and published, driving methodological innovation.

The CAPE Paradigm: Continuous Automated Evaluation

CAPE represents a shift towards continuous, large-scale benchmarking on known structures, enabling rapid iteration for machine learning models.

Quantitative Data: CAPE vs. CASP Paradigm

| Feature | CASP Paradigm | CAPE Paradigm |

|---|---|---|

| Temporal Cadence | Biennial, discrete events | Continuous, on-demand |

| Target Nature | "Blind", novel folds | Curated from PDB (historical) |

| Primary Goal | Rigorous assessment, community benchmark | Rapid model training & validation |

| Feedback Cycle | Slow (2-year) | Fast (minutes/hours) |

| Key Metric | GDT_TS on de novo targets | Per-domain RMSD/lDDT on diverse folds |

| Exemplar Platform | CASP competition | AlphaFold DB training, ESMFold eval |

The AlphaFold Revolution: A Technical Breakdown

AlphaFold2 (AF2) represents a paradigm shift by integrating deep learning with biophysical principles.

Core AlphaFold2 Architecture & Workflow

Diagram Title: AlphaFold2 Core Architecture Dataflow

Detailed AF2 Experimental/Inference Protocol

- Input Processing:

- Generate Multiple Sequence Alignment (MSA) using JackHMMER/MMseqs2 against sequence databases (UniRef, BFD).

- Search for structural templates using HHsearch against PDB70.

- Evoformer Processing:

- Embed MSA and pairwise features into initial representations.

- Pass through 48 Evoformer blocks with triangular self-attention, updating MSA and pair representations iteratively.

- Structure Module:

- Use pair representation to predict initial backbone frames (rotations & translations) for each residue.

- Iteratively refine the 3D structure through 8 shared-weight layers of invariant point attention, producing final atomic coordinates; side chains are built from predicted torsion angles on idealized residue geometry.

- Output & Confidence:

- Output PDB file with predicted coordinates.

- Calculate per-residue pLDDT confidence score (0-100), indicating predicted local accuracy.
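AlphaFold2 writes the per-residue pLDDT into the B-factor column of its output PDB file, so the confidence profile can be recovered with a short parser. The sketch below reads fixed-width `ATOM` records and takes one value per residue from the Cα atom; the function names and the 70-point cutoff used to list low-confidence residues are assumptions for illustration.

```python
def plddt_per_residue(pdb_text):
    """Return {(chain_id, residue_number): pLDDT} read from Cα ATOM records."""
    scores = {}
    for line in pdb_text.splitlines():
        # Atom name occupies columns 13-16 of a PDB ATOM record.
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            chain_id = line[21]                 # column 22
            residue_number = int(line[22:26])   # columns 23-26
            # B-factor field (columns 61-66) holds pLDDT in AlphaFold2 output.
            scores[(chain_id, residue_number)] = float(line[60:66])
    return scores


def low_confidence_residues(scores, threshold=70.0):
    """Residues whose pLDDT falls below the chosen threshold, in sorted order."""
    return sorted(key for key, value in scores.items() if value < threshold)
```

For an experimentally determined structure the same column holds crystallographic B-factors, so this reading is only meaningful for files known to come from a predictor that follows the AlphaFold2 convention.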

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Solution | Function in Structure Prediction |

|---|---|

| UniRef90/UniClust30 | Curated protein sequence databases for generating deep Multiple Sequence Alignments (MSAs), essential for evolutionary coupling analysis. |

| HH-suite (HHblits/HHsearch) | Software suite for fast, sensitive protein homology detection and HMM-HMM comparison against databases like PDB70. |

| JackHMMER/MMseqs2 | Tools for iterative sequence database searching to build MSAs from sequence profiles. |

| PyMOL / UCSF ChimeraX | Molecular visualization software for analyzing, comparing, and rendering predicted 3D structures. |

| Rosetta Suite | Comprehensive software for de novo structure prediction, design, and docking; used as a benchmark and hybrid method component. |

| AlphaFold2 Colab Notebook / Local Docker | Accessible implementations for running AlphaFold2 predictions without extensive local compute resources. |

| PDB (Protein Data Bank) | Repository of experimentally determined 3D structures; the ultimate source of ground truth for training and validation. |

| CASP & CAMEO Targets | Blind test sets for rigorous, unbiased evaluation of prediction method performance. |

| Google Cloud TPU / NVIDIA GPU Clusters | Specialized hardware (Tensor Processing Units, Graphics Processing Units) required for training and efficient inference of large deep learning models like AF2. |

CAPE vs. CASP: A Synergistic Future

The relationship between the two paradigms is complementary and drives progress.

Diagram Title: CAPE-CASP Synergistic Feedback Cycle

The journey from Anfinsen's postulate to AlphaFold's atomic accuracy has been defined by the interplay between rigorous blind testing (the CASP paradigm) and data-driven engineering (CAPE's rapid iteration). The future of structural bioinformatics lies in leveraging the CAPE paradigm to develop next-generation models, rigorously validated by the CASP framework, ultimately accelerating functional annotation and therapeutic discovery.

The field of protein structure prediction has undergone a revolutionary transformation with the advent of deep learning methods like AlphaFold2 and RoseTTAFold. This advancement necessitates an equally sophisticated evolution in how we assess and validate predictive models. The core objectives of Critical Assessment of Structure Prediction (CASP) and Continuous Automated Performance Evaluation (CAPE) represent two complementary yet distinct paradigms for this task. This whitepaper, framed within the broader thesis of CAPE versus CASP as research infrastructures, provides a technical analysis of their methodologies, experimental protocols, and implications for computational biology and drug development.

Core Methodologies and Technical Frameworks

The CASP Benchmarking Paradigm

CASP is a community-wide, double-blind experiment conducted biennially. Its primary objective is to provide an independent assessment of the state of the art in protein structure prediction.

Experimental Protocol for CASP Target Selection and Assessment:

- Target Identification: Organizers select protein sequences whose structures are soon to be solved by experimental methods (X-ray crystallography, cryo-EM, NMR) but are not yet publicly available.

- Sequence Release: The target sequences are released to predictors in multiple stages over a several-month period.

- Prediction Submission: Research groups worldwide submit their predicted 3D coordinates for each target within a strict deadline.

- Experimental Structure Determination: Experimentalists solve the target structures.

- Blinded Assessment: Independent assessors, who are unaware of the identity of the predictors, compare predictions to the experimental "ground truth" using standardized metrics (e.g., GDT_TS, RMSD, lDDT).

- Results Publication: A public meeting and proceedings detail the performance of all methods, identifying leading approaches and technological trends.
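Group rankings in the blinded assessment step are typically built from per-target Z-scores rather than raw scores, so that easy and hard targets contribute comparably. The following is a minimal sketch of that idea, assuming one score per group per target and flooring negative Z-scores at zero; the floor value and function names are illustrative, and actual assessors choose their own scoring formula in each round.

```python
from statistics import mean, pstdev


def z_scores(scores_by_group):
    """Standardize one target's scores across all submitting groups."""
    values = list(scores_by_group.values())
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        # All groups scored identically; nobody stands out on this target.
        return {group: 0.0 for group in scores_by_group}
    return {group: (score - mu) / sigma
            for group, score in scores_by_group.items()}


def rank_groups(per_target_scores, floor=0.0):
    """Sum floored Z-scores over targets and sort groups, best first.

    per_target_scores: {target_id: {group: score}}.
    floor: lower bound applied to each Z-score so that one poor model
        does not outweigh several good ones (an assumed convention).
    """
    totals = {}
    for scores in per_target_scores.values():
        for group, z in z_scores(scores).items():
            totals[group] = totals.get(group, 0.0) + max(z, floor)
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)
```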

The CAPE Continuous Monitoring Paradigm

CAPE, conceptualized as a response to the rapid pace of post-AlphaFold2 development, aims for continuous, automated evaluation. Its core objective is to track the performance of prediction servers and software tools in near-real-time on newly solved structures.

Experimental Protocol for CAPE Pipeline:

- Automated Data Harvesting: A pipeline continuously monitors the Protein Data Bank (PDB) and other sources for newly released experimental protein structures.

- Sequence Deduplication: New structures are filtered to remove sequences highly similar to those already in the evaluation set, ensuring a test of generalizability.

- Automated Prediction Trigger: The sequence of a new, unique structure is automatically sent to registered prediction servers via their public APIs.

- Standardized Evaluation: Received predictions are compared to the experimental structure using a consistent set of metrics (e.g., lDDT, RMSD, TM-score) in a fully automated workflow.

- Dynamic Leaderboard Update: A public leaderboard is updated, ranking servers by performance across recent structures, often categorized by protein type (e.g., monomers, complexes, membrane proteins).
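The final step of the pipeline, the leaderboard update, amounts to grouping results by protein category and ranking servers by their mean score. A minimal sketch follows; the record keys (`server`, `category`, `tm_score`) and the function name are hypothetical and do not reflect a published CAPE schema.

```python
from collections import defaultdict
from statistics import mean


def update_leaderboard(results, metric="tm_score"):
    """Rank servers within each protein category by mean metric value.

    results: iterable of dicts with 'server', 'category', and the metric key.
    Returns {category: [(server, mean_score), ...]} sorted best first.
    """
    grouped = defaultdict(lambda: defaultdict(list))
    for result in results:
        grouped[result["category"]][result["server"]].append(result[metric])

    leaderboard = {}
    for category, by_server in grouped.items():
        averaged = [(server, mean(values)) for server, values in by_server.items()]
        # Higher is better for TM-score and lDDT; pass a negated value for RMSD.
        leaderboard[category] = sorted(averaged, key=lambda row: row[1], reverse=True)
    return leaderboard
```

Restricting `results` to a recent time window before calling the function would give the "performance across recent structures" view described above.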

Diagram 1: CASP vs. CAPE Workflow Comparison

Quantitative Comparison of Core Metrics and Outcomes

Table 1: Core Operational Characteristics

| Feature | CASP (Benchmarking) | CAPE (Continuous Monitoring) |

|---|---|---|

| Primary Objective | Definitive, snapshot assessment of peak capability. | Tracking real-world, operational performance over time. |

| Temporal Cadence | Discrete, biennial cycles. | Continuous, daily/weekly updates. |

| Target Selection | Curated, forward-looking "hard" targets; often novel folds. | Retrospective, all newly solved PDB structures post-deduplication. |

| Evaluation Focus | Methodological breakthroughs on challenging problems. | Robustness, reliability, and speed on routine & novel structures. |

| Key Output | Authoritative ranking per CASP cycle; detailed methodological insights. | Live leaderboard; performance trends over time. |

Table 2: Technical and Assessment Metrics

| Aspect | CASP | CAPE |

|---|---|---|

| Key Metrics | GDT_TS, GDT_HA, lDDT-Cα, RMSD, Z-scores. | lDDT, TM-score, RMSD, Interface Score (for complexes). |

| Assessment Type | Manual, in-depth analysis by human assessors. | Fully automated, standardized pipeline. |

| Target Difficulty | Intentionally high; emphasizes unsolved problems. | Reflects natural distribution of PDB deposits. |

| Throughput | ~100 targets per cycle. | Hundreds to thousands of structures per month. |

| Turnaround Time | Months for full assessment cycle. | Hours/days from PDB release to evaluation. |
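Since lDDT appears in both columns of the metrics table, a simplified Cα-only version is sketched below to show why it needs no superposition: it compares pairwise distances within each structure rather than atom positions between structures. The 15 Å inclusion radius and the 0.5, 1, 2, and 4 Å tolerances follow the standard lDDT definition; the function name is illustrative, and the full metric additionally uses all heavy atoms and stereochemical checks.

```python
import math


def lddt_ca(reference, model, inclusion_radius=15.0,
            thresholds=(0.5, 1.0, 2.0, 4.0)):
    """Simplified global lDDT over Cα atoms, on a 0-100 scale.

    reference, model: equal-length lists of (x, y, z) Cα coordinates,
        one per residue, in the same residue order.
    """
    if len(reference) != len(model):
        raise ValueError("reference and model must have the same length")
    preserved = 0
    total = 0
    for i in range(len(reference)):
        for j in range(i + 1, len(reference)):
            d_ref = math.dist(reference[i], reference[j])
            # Only distances that are local in the reference are scored.
            if d_ref >= inclusion_radius:
                continue
            deviation = abs(d_ref - math.dist(model[i], model[j]))
            total += len(thresholds)
            preserved += sum(deviation < t for t in thresholds)
    return 100.0 * preserved / total if total else 0.0
```

Because only intra-structure distances are compared, a rigid-body shift of one domain relative to another affects just the pairs that span the domain boundary.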

Signaling Pathways in Evaluation: From Sequence to Score

The evaluation logic for both paradigms follows a defined computational pathway from the initial input to the final performance metric.

Diagram 2: Evaluation Logic Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Resources for CASP/CAPE Research

| Item | Function & Relevance | Example/Source |

|---|---|---|

| AlphaFold2 (ColabFold) | State-of-the-art prediction server/model; baseline for CAPE monitoring and competitor in CASP. | GitHub: deepmind/alphafold; colabfold.mmseqs.com |

| RoseTTAFold | Leading alternative deep learning method for protein structure and complex prediction. | Server: robetta.bakerlab.org; GitHub: RosettaCommons/RoseTTAFold |

| OpenMM | High-performance toolkit for molecular simulation; used for refinement and molecular dynamics validation of predictions. | openmm.org |

| PyMOL / ChimeraX | Molecular visualization software critical for qualitative assessment and analysis of prediction errors. | pymol.org; www.rbvi.ucsf.edu/chimerax/ |

| PDB (Protein Data Bank) | Primary repository of experimental structures; source of ground truth for both CASP (post-event) and CAPE (continuously). | rcsb.org |

| lDDT Calculation Tool | Computes the local Distance Difference Test, a key accuracy metric used in both CASP and CAPE evaluations. | SWISS-MODEL repository tools |

| TM-score Software | Calculates Template Modeling score, a metric for measuring global fold similarity, commonly used in CAPE pipelines. | Zhang Lab Scripts |

| CAPE Leaderboard API | Programmatic access to continuous evaluation results, enabling integration into meta-analysis and tool development workflows. | (Hypothetical) cape-eval.org/api |

Implications for Research and Drug Development

The coexistence of CASP and CAPE frameworks serves distinct but critical needs. CASP remains the gold standard for methodological stress-testing, driving fundamental research by posing the field's hardest challenges. It answers: "What is the absolute limit of our best methods under ideal focus?"

In contrast, CAPE provides the ecosystem surveillance vital for applied science and drug discovery. It answers: "How reliably and accurately does this publicly available tool perform on the protein I just discovered?" For drug development professionals, CAPE-like monitoring offers practical guidance on which prediction servers to integrate into pipelines for target identification, characterization, and structure-based drug design, ensuring decisions are based on current, demonstrated performance rather than historical reputation.

Within the thesis of CAPE versus CASP, these frameworks are not adversaries but complementary engines driving protein structure prediction forward. CASP sets the ambitious, discrete goals and rigorously defines the state of the art. CAPE ensures that the translation of these advancements into robust, reliable, and accessible tools is transparently monitored. Together, they create a virtuous cycle: breakthrough methods proven in CASP are rapidly deployed and their real-world utility measured by CAPE, whose findings then inform the design of the next CASP experiment. For researchers and drug developers, understanding both paradigms is essential for critically evaluating tools and shaping the future of structural biology.

The field of protein structure prediction is defined by two competing yet complementary paradigms: Critical Assessment of protein Structure Prediction (CASP), a community-wide blind challenge, and Continuous Automated Model Evaluation and Improvement (CAPE), representing high-throughput, automated pipelines. CASP operates as a periodic, discrete "community challenge," marshaling global research efforts toward solving specific target proteins in a competitive, expert-driven environment. In contrast, CAPE embodies the "automated pipeline" philosophy, leveraging continuous integration of new data, automated retraining, and systematic benchmarking without discrete competition cycles. This whitepaper delineates the core architectural differences between these two approaches, analyzing their implications for research velocity, model generalizability, and real-world application in drug discovery.

Foundational Architecture & Operational Model

Community Challenge (CASP) Architecture: The CASP model is built on a centralized, event-driven architecture. A central organizing committee selects and releases sequences of experimentally determined but unpublished protein structures at regular intervals (biennially). Research groups worldwide submit predictions within a defined timeframe. A separate assessment team then evaluates submissions using rigorous metrics. The architecture is cyclic, punctuated by periods of intense activity (competition) and analysis.

Automated Pipeline (CAPE) Architecture: The CAPE paradigm employs a decentralized, continuous integration/continuous deployment (CI/CD) pipeline. New protein sequences and structures from public databases (e.g., PDB, AlphaFold DB) are ingested automatically. Models are retrained, evaluated, and deployed without human intervention. This architecture is linear and always-on, designed for constant incremental improvement.

Table 1: Core Operational Characteristics

| Characteristic | Community Challenge (CASP) | Automated Pipeline (CAPE) |

|---|---|---|

| Temporal Model | Discrete, periodic cycles (e.g., 2 years) | Continuous, real-time updating |

| Trigger Mechanism | Release of new target proteins | Ingestion of new data into repository |

| Evaluation Cadence | Post-submission, batch analysis | On-the-fly, with automated benchmarking |

| Primary Driver | Human expertise & collaboration | Automated algorithms & compute infrastructure |

| Outcome Focus | Peak performance on hardest targets | Consistent, reliable performance on bulk tasks |

Data Flow & Information Processing

Experimental Protocols & Benchmarking

CASP Assessment Protocol:

- Target Selection & Release: Organizers obtain protein sequences from structural biologists prior to publication. Targets are categorized (e.g., Free Modeling, Template-Based).

- Prediction Window: A strict submission window (typically weeks) is announced. Groups submit predicted 3D coordinates in standardized format.

- Blinded Assessment: The assessment team calculates metrics like GDT_TS (Global Distance Test Total Score), lDDT (local Distance Difference Test), and RMSD (Root Mean Square Deviation).

- Results Analysis: Statistical significance is tested. Performance is analyzed per target, category, and group. Methods are dissected in publications.

CAPE Continuous Evaluation Protocol:

- Data Stream Curation: Automated scripts scrape newly released PDB entries, filter for quality (resolution, R-factor), and cluster to reduce redundancy.

- Train/Validation/Test Splits: Temporal splits are used (e.g., test on proteins deposited after training cut-off) to avoid data leakage.

- Automated Benchmarking: Upon model update, predictions are generated for the held-out test set. Predefined metrics (lDDT, TM-score, RMSD) are computed automatically.

- Performance Dashboarding: Results are logged, compared against previous model versions, and visualized on a live dashboard. Performance regression triggers alerts.
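
The temporal split and the regression alert in the protocol above can be sketched in a few lines. This is a minimal illustration, not the pipeline's actual code: the `Entry` record, the function names, the placeholder entry IDs, and the 0.01 tolerance are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Entry:
    """Hypothetical record for one deposited structure."""
    pdb_id: str
    deposited: date


def temporal_split(entries, train_cutoff, val_cutoff):
    """Split by deposition date so the test set is strictly newer than training data."""
    train = [e for e in entries if e.deposited < train_cutoff]
    val = [e for e in entries if train_cutoff <= e.deposited < val_cutoff]
    held_out = [e for e in entries if e.deposited >= val_cutoff]
    return train, val, held_out


def regressed(previous_score, current_score, tolerance=0.01):
    """Flag a regression when the new score drops by more than `tolerance` (assumed value)."""
    return (previous_score - current_score) > tolerance


# Placeholder IDs and dates, for illustration only.
entries = [
    Entry("1AAA", date(2021, 3, 1)),
    Entry("2BBB", date(2022, 6, 15)),
    Entry("3CCC", date(2023, 1, 10)),
    Entry("4DDD", date(2023, 9, 30)),
]
train, val, held_out = temporal_split(entries, date(2022, 1, 1), date(2023, 6, 1))
print([e.pdb_id for e in held_out])
```

Splitting on date rather than at random is what prevents leakage here: no structure in the held-out set could have been seen, even indirectly, at training time.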

Table 2: Quantitative Performance Metrics Comparison

| Metric | CASP Context (Typical Top Tier) | CAPE Context (Typical High-Throughput) | Interpretation |

|---|---|---|---|

| Average GDT_TS | 75-90 (for Free Modeling targets) | 85-95 (on broad PDB test set) | Higher in CAPE due to easier, curated targets. |

| Average lDDT | 70-85 | 80-92 | lDDT is less sensitive to large backbone shifts. |

| Coverage | ~100-150 unique targets per cycle | 1000s of structures evaluated continuously | CAPE provides broader statistical power. |

| Turnaround Time | Months from target release to assessment | Minutes to hours from model update to evaluation | CAPE enables rapid iteration. |

| Compute Cost | ~10^6-10^7 CPU/GPU hours per group per cycle | ~10^5 CPU/GPU hours per automated training run | CASP effort is concentrated; CAPE is distributed. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Materials & Platforms

| Item / Solution | Function in Context | Primary Use Case |

|---|---|---|

| AlphaFold2/3 Codebase | Open-source deep learning model for protein structure prediction. | Core engine for both CASP submissions and CAPE pipelines. |

| RoseTTAFold | Three-track deep learning model that reasons jointly over sequence, distance map, and 3D coordinates. | Comparative model for benchmarking and ensemble methods. |

| ColabFold | Cloud-based, accelerated pipeline combining MMseqs2 and AlphaFold. | Rapid prototyping and prediction without extensive local compute. |

| Modeller | Tool for comparative or homology modeling by satisfaction of spatial restraints. | Template-based modeling, especially in CASP. |

| PyMOL / ChimeraX | Molecular visualization systems for analyzing and presenting 3D structural predictions. | Visual validation, analysis of active sites, and figure generation. |

| PDBx/mmCIF Format Files | Standardized file format for representing macromolecular structure data. | Submission format for CASP; data ingestion for CAPE. |

| CASP Prediction Center Server | Centralized portal for target distribution and submission collection. | Infrastructure backbone of the CASP challenge. |

| Google Cloud / AWS TPU/GPU | High-performance computing platforms for training massive neural networks. | Providing the computational substrate for both paradigms. |

| Nextflow / Snakemake | Workflow management systems for creating reproducible, scalable bioinformatics pipelines. | Orchestrating complex CAPE-style automated pipelines. |

| MolProbity | Structure validation toolset that checks steric clashes, rotamer outliers, and geometry. | Final quality check of predicted models before submission or release. |

Implications for Drug Development

The architectural divergence creates distinct value propositions for pharmaceutical R&D.

Community Challenge (CASP) Value:

- Pushes Boundaries: Focus on the hardest, often most biologically interesting targets (e.g., membrane proteins, large complexes).

- Methodological Innovation: Competitive pressure yields novel algorithmic insights that can later be productized.

- Expert Curation: Human insight addresses unusual edge cases not well-handled by automated systems.

Automated Pipeline (CAPE) Value:

- Scalability: Enables proteome-wide structural annotation for target identification and safety assessment (e.g., predicting off-target interactions).

- Speed & Integration: Predictions can be integrated directly into drug design pipelines (e.g., for virtual screening, functional site prediction).

- Consistency & Reliability: Provides a stable, always-available resource for non-specialist researchers.

The "community challenge" and "automated pipeline" architectures are not mutually exclusive. The future of structural bioinformatics lies in a hybrid model where CAPE-like pipelines provide the continuous, scalable backbone for everyday research and drug development. Simultaneously, CASP-like challenges will continue to serve as crucial crucibles for innovation, focusing community effort on unsolved problems—such as conformational dynamics, protein-protein interactions with low-affinity binders, and the integration of experimental data—that push the field forward. This synergy ensures that peak performance translates into robust, democratized tools, accelerating the pace of discovery from bench to bedside.

Methodologies, Workflows, and Practical Applications in Drug Discovery

The Critical Assessment of protein Structure Prediction (CASP) provides a rigorous, double-blind experimental framework for evaluating computational protein structure prediction methodologies. This stands in contrast to the Continuous Automated Performance Evaluation (CAPE) system, which offers ongoing, real-time assessment. This whitepaper details the core CASP workflow, a cornerstone for benchmarking progress in the field and driving algorithmic innovation, particularly in the post-AlphaFold2 era. The structured, time-bound CASP model remains essential for validating generalized methodological advances against the constant, application-focused testing of CAPE.

The Core CASP Experiment Cycle: A Technical Breakdown

Target Selection and Release

Experimenters (the CASP organizers) identify protein structures recently solved by experimental means (primarily X-ray crystallography, cryo-EM, and NMR) but not yet publicly deposited in the Protein Data Bank (PDB). These targets are categorized by difficulty (e.g., Template-Based Modeling, Free Modeling) and structural features.

Experimental Protocol for Target Preparation:

- Identification: Establish collaborations with structural genomics centers and individual labs to receive pre-publication coordinates.

- Anonymization: Remove all identifying metadata (e.g., protein name, organism, publication details).

- Sequence Release: Provide predictors only with the amino acid sequence(s) of the target. For complexes, sequences may be provided individually.

- Categorization: Classify targets based on the presence of detectable homologs in the PDB at the time of release.

- Sequestration: Hold the experimental structure in a secure database (the "CASP vault") for subsequent comparison.

Prediction Windows and Submission

Predictors (assessees) are given a strict timeframe to analyze the target sequence and submit their predicted 3D coordinates.

Methodology for Prediction Submission:

- Window Opening: The target sequence is released on the CASP prediction server.

- Analysis Period: Predictors utilize any computational method, often involving multiple sequence alignment generation, deep learning models (e.g., AlphaFold2, RoseTTAFold), and molecular dynamics refinement.

- Formatting: Predictions must conform to the CASP-prescribed format (typically a PDB file with specific header requirements).

- Submission Deadline: Predictions must be uploaded before the window closes, typically spanning 3-4 weeks for regular targets and 1-3 days for "server" targets.

Blind Assessment and Evaluation

After the prediction window closes, independent assessors compare the submissions against the experimentally determined structure using quantitative metrics.

Protocol for Blind Assessment:

- Structure Alignment: Assessors use tools like `TM-align` and `LGA` to superimpose predicted models onto the experimental structure.

- Metric Calculation: Key metrics are computed (see Table 1).

- Z-Score Calculation: For each target and metric, a Z-score is calculated for each prediction group to normalize performance across targets of varying difficulty: `Z = (raw_score - mean_all_groups) / standard_deviation_all_groups`.

- Ranking: Groups are ranked by summed Z-scores across all targets to determine overall performance.
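
The Z-score normalization and summed-Z ranking described above can be illustrated as follows. This is a sketch under stated assumptions: the function names and the example scores are invented, and the population standard deviation is used because the text does not say which estimator the assessors apply.

```python
from statistics import mean, pstdev


def z_scores(raw_scores):
    """Convert {group: raw_score} for one target into {group: Z}."""
    values = list(raw_scores.values())
    mu = mean(values)
    sigma = pstdev(values)  # population SD; the estimator is an assumption
    if sigma == 0:
        return {group: 0.0 for group in raw_scores}
    return {group: (score - mu) / sigma for group, score in raw_scores.items()}


def rank_groups(per_target_scores):
    """Sum each group's Z-scores over all targets and sort best-first."""
    totals = {}
    for scores in per_target_scores.values():
        for group, z in z_scores(scores).items():
            totals[group] = totals.get(group, 0.0) + z
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


# Invented GDT_TS values for two targets and three groups.
per_target = {
    "T1": {"A": 90.0, "B": 70.0, "C": 50.0},
    "T2": {"A": 70.0, "B": 80.0, "C": 30.0},
}
ranking = rank_groups(per_target)
print(ranking)
```

Because each target is normalized separately, a group is rewarded for beating the field on a hard target as much as on an easy one.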

Table 1: Key CASP Assessment Metrics

| Metric | Full Name | Technical Description | Evaluation Focus |

|---|---|---|---|

| GDT_TS | Global Distance Test Total Score | Average, over four distance cutoffs (1, 2, 4, 8 Å), of the percentage of Cα atoms within the cutoff of their experimental positions. | Overall fold accuracy. |

| GDT_HA | Global Distance Test High Accuracy | GDT_TS with stricter distance thresholds (0.5, 1, 2, 4 Å). | High-precision atomic detail. |

| RMSD | Root Mean Square Deviation | Root-mean-square of atomic distances after optimal superposition. | Local atomic precision. |

| TM-score | Template Modeling Score | Scale-invariant measure (0-1) assessing topological similarity. | Correct fold topology. |

| lDDT | local Distance Difference Test | Local superposition-free score evaluating per-residue local distance accuracy. | Local atomic plausibility. |
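
To make the "superposition-free" property of lDDT concrete, here is a simplified Cα-only version. It is a sketch rather than the reference implementation: the full score works on all atoms and includes stereochemical checks that are omitted here, and the function name and toy coordinates are assumptions. The 15 Å inclusion radius and the 0.5, 1, 2, 4 Å tolerance thresholds follow the commonly published definition.

```python
import math


def ca_lddt(model, reference, inclusion_radius=15.0, thresholds=(0.5, 1.0, 2.0, 4.0)):
    """Simplified Cα-only lDDT in [0, 1]; no superposition is needed."""
    if len(model) != len(reference):
        raise ValueError("model and reference must have the same number of residues")
    preserved = 0
    total = 0
    n = len(reference)
    for i in range(n):
        for j in range(i + 1, n):
            d_ref = math.dist(reference[i], reference[j])
            if d_ref >= inclusion_radius:
                continue  # only local neighbourhoods are scored
            diff = abs(d_ref - math.dist(model[i], model[j]))
            total += len(thresholds)
            preserved += sum(diff <= t for t in thresholds)
    return preserved / total if total else 0.0


# Toy chain of four Cα atoms spaced 3.8 Å apart.
reference = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (7.6, 0.0, 0.0), (11.4, 0.0, 0.0)]
shifted = [(x + 5.0, y + 5.0, z + 5.0) for x, y, z in reference]
distorted = reference[:3] + [(21.4, 0.0, 0.0)]
print(ca_lddt(shifted, reference), ca_lddt(distorted, reference))
```

A rigid translation leaves the score at 1.0 because only internal distances are compared, which is why lDDT tolerates domain movements that would inflate RMSD.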

Visualizing the CASP Workflow

Diagram 1: The CASP experiment cycle.

Diagram 2: CASP prediction timeline.

Table 2: Essential Resources for CASP-Style Prediction Research

| Resource / Reagent | Type | Primary Function in CASP Workflow |

|---|---|---|

| AlphaFold2 (Open Source) | Software Suite | End-to-end deep learning system for predicting protein 3D structure from sequence. |

| RoseTTAFold | Software Suite | A three-track neural network for simultaneous sequence, distance, and coordinate prediction. |

| Modeller | Software Suite | Comparative modeling by satisfaction of spatial restraints. |

| HMMER / HH-suite | Bioinformatics Tool | Generation of deep multiple sequence alignments and hidden Markov models for homology detection. |

| PyRosetta | Software Library | Python interface to Rosetta, enabling scripted protein modeling and design. |

| ColabFold | Web Service | Cloud-based, accelerated implementation of AlphaFold2 and RoseTTAFold. |

| PDB (Protein Data Bank) | Database | Source of template structures for comparative modeling; post-assessment verification. |

| UniRef90/UniClust30 | Database | Non-redundant sequence clusters for efficient MSA generation. |

| TM-align / LGA | Assessment Software | Structural alignment tools used by CASP assessors; also for internal validation. |

| CASP Prediction Server | Web Infrastructure | Official portal for target sequence release and model submission. |

The Critical Assessment of protein Structure Prediction (CASP) experiment has served as the gold-standard, biennial competition for evaluating the state of computational protein folding since 1994. While instrumental, its episodic nature and fixed deadlines create latency in assessing rapidly evolving methodologies. In response, the Continuous Automated Protein Structure Prediction Evaluation (CAPE) initiative has emerged as a complementary, real-time paradigm. The CAPE pipeline represents a shift toward persistent, automated benchmarking, enabling immediate feedback on methodological advances. This whitepaper details the core technical infrastructure of the CAPE pipeline, encompassing automated target selection, model submission, and real-time scoring, framing it as the operational engine that sustains continuous assessment in contrast to CASP's periodic snapshot.

Pipeline Architecture & Core Components

The CAPE pipeline is a cloud-native, microservices-based system designed for high throughput and low latency. Its three-phase workflow integrates seamlessly to provide a continuous evaluation loop.

Automated Target Selection Protocol

Target selection is triggered autonomously upon the public release of a novel protein structure by the Protein Data Bank (PDB) or analogous repositories.

Methodology:

- PDB/RCSB Feed Monitoring: A dedicated service subscribes to the RSS/API feeds of major structural databases (PDB, EMDB, AlphaFold DB). New entries trigger a download and parsing job.

- Pre-Filtering Criteria: Entries are filtered using the following rules:

- Experimental Method: Only structures solved by X-ray crystallography (resolution ≤ 2.5 Å) or cryo-EM (resolution ≤ 3.5 Å) are considered to ensure high-confidence ground truth.

- Sequence Uniqueness: The protein's sequence is compared against a rolling database of all previously used CAPE targets via BLAST. A maximum sequence identity threshold (e.g., <30% over >80% coverage) is enforced to prevent redundancy.

- Complexity Heuristics: Simple, short peptides (<50 residues) and structures with excessive missing backbone atoms (>10%) are excluded.

- Canonicalization: The experimental structure is processed to remove non-protein ligands, solvent, and alternate conformations, leaving a canonical protein chain for evaluation.

- Target Release: The curated target sequence, along with metadata (source PDB ID, release date), is published to the CAPE target queue, initiating the prediction window.
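
The pre-filtering rules above translate naturally into a single predicate. The sketch below is illustrative only: the `Candidate` fields, the method labels, and the example entries are assumptions, and the identity and coverage values are taken as already computed by an upstream BLAST search.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical summary of one newly released entry."""
    entry_id: str
    method: str                      # "X-RAY" or "EM"; labels are assumptions
    resolution: float                # Å
    length: int                      # residues
    missing_backbone_fraction: float
    max_identity_to_prior: float     # best BLAST hit against earlier targets
    coverage_at_max_identity: float


# Resolution limits taken from the filtering rules in the text.
RESOLUTION_LIMITS = {"X-RAY": 2.5, "EM": 3.5}


def passes_filters(c):
    """Apply the method, complexity, and uniqueness filters in turn."""
    limit = RESOLUTION_LIMITS.get(c.method)
    if limit is None or c.resolution > limit:
        return False
    if c.length < 50 or c.missing_backbone_fraction > 0.10:
        return False
    redundant = c.max_identity_to_prior >= 0.30 and c.coverage_at_max_identity > 0.80
    return not redundant


candidates = [
    Candidate("T1", "X-RAY", 2.0, 300, 0.02, 0.22, 0.90),
    Candidate("T2", "EM", 4.1, 450, 0.01, 0.10, 0.50),
    Candidate("T3", "X-RAY", 1.8, 35, 0.00, 0.15, 0.95),
    Candidate("T4", "X-RAY", 1.9, 280, 0.03, 0.65, 0.95),
    Candidate("T5", "NMR", 0.0, 120, 0.00, 0.12, 0.85),
]
approved = [c.entry_id for c in candidates if passes_filters(c)]
print(approved)
```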

Quantitative Target Selection Metrics (Representative 6-Month Period):

| Metric | Value |

|---|---|

| Total PDB Entries Screened | 8,542 |

| Passed Experimental Method Filter | 5,120 |

| Passed Sequence Uniqueness Filter | 892 |

| Final Approved CAPE Targets | 743 |

| Average Target Length (residues) | 312 |

| Median Resolution (Å) | 2.1 |
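
The stage-by-stage pass rates implied by the table can be worked out directly. The snippet below uses only the counts reported above; the stage labels and the helper name are illustrative.

```python
# Counts copied from the table above; labels are shorthand.
funnel = [
    ("screened", 8542),
    ("passed method filter", 5120),
    ("passed uniqueness filter", 892),
    ("approved targets", 743),
]


def stage_pass_rates(stages):
    """Return (stage name, fraction surviving from the previous stage)."""
    return [
        (name, count / prev_count)
        for (_, prev_count), (name, count) in zip(stages, stages[1:])
    ]


rates = stage_pass_rates(funnel)
overall = funnel[-1][1] / funnel[0][1]
for name, rate in rates:
    print(f"{name}: {rate:.1%}")
print(f"overall: {overall:.1%}")
```

Roughly 60% of entries survive the experimental method filter, about 17% of those survive the uniqueness filter, and about 83% of the remainder are approved, for an overall yield of about 8.7%. The uniqueness filter is by far the most selective step.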

Automated Model Submission Interface

Prediction groups interact with CAPE via a standardized RESTful API, enabling full automation of model submissions.

Submission Protocol:

- Authentication: Each registered research group uses API keys for programmatic access.

- Target Polling: Groups can query the `/targets/current` endpoint to retrieve the list of active target sequences and their unique CAPE identifiers.

- Model Format Specification: Submissions must adhere to a strict format:

- File Format: PDB format or mmCIF.

- Required Fields: Model must contain all atoms of the protein backbone. Chain IDs must match the canonicalized target.

- Metadata: A JSON manifest must accompany each submission, specifying the prediction method (e.g., "AlphaFold2-multimer-v2.3", "RoseTTAFold", "In-house template-based").

- Automated Submission: Groups upload their predicted structure (PDB file) and manifest to the `/submit/{cape_id}` endpoint. The system performs immediate, basic validation (file integrity, sequence alignment check) and acknowledges receipt.
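
A client-side sketch of the submission step might look like the following. Only the `/submit/{cape_id}` path and the idea of a JSON manifest come from the text; the host name, the `X-API-Key` header, the payload layout, and every function name are assumptions made for illustration. The request is built but not sent.

```python
import json
import urllib.request

BASE_URL = "https://cape.example.org/api"  # hypothetical host
BACKBONE = {"N", "CA", "C", "O"}


def atom_line(serial, name, resname, chain, resseq, x, y, z):
    """Format a minimal fixed-column PDB ATOM record (atom names up to 3 chars)."""
    return (f"ATOM  {serial:5d}  {name:<3s} {resname:>3s} {chain}{resseq:4d}    "
            f"{x:8.3f}{y:8.3f}{z:8.3f}")


def missing_backbone(pdb_text):
    """Return (chain, residue number) pairs that lack any backbone atom."""
    atoms = {}
    for line in pdb_text.splitlines():
        if line.startswith("ATOM"):
            key = (line[21], line[22:26].strip())
            atoms.setdefault(key, set()).add(line[12:16].strip())
    return sorted(key for key, names in atoms.items() if not BACKBONE <= names)


def build_submission(cape_id, pdb_text, method, api_key):
    """Build (but do not send) a POST request for one model."""
    if missing_backbone(pdb_text):
        raise ValueError("model is missing backbone atoms")
    payload = {"manifest": {"method": method}, "model_pdb": pdb_text}  # assumed layout
    return urllib.request.Request(
        url=f"{BASE_URL}/submit/{cape_id}",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", "X-API-Key": api_key},
        method="POST",
    )


model = "\n".join(
    atom_line(i + 1, name, "ALA", "A", 1, float(i), 0.0, 0.0)
    for i, name in enumerate(["N", "CA", "C", "O"])
)
request = build_submission("CAPE20240017", model, "In-house template-based", "demo-key")
print(request.get_method(), request.full_url)
```

Running the backbone check locally mirrors the server's basic validation and avoids a rejected upload; sending the request would be a separate `urllib.request.urlopen(request)` call against a real deployment.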

Real-Time Scoring Engine

Upon successful submission, the scoring engine is immediately invoked. The core metric is the Global Distance Test (GDT), specifically GDT_TS, which measures the spatial similarity between the predicted and experimental structures.

Scoring Methodology:

- Structural Alignment: The predicted model is algorithmically superimposed onto the experimental ground truth using the TM-align algorithm, which optimizes the TM-score objective function.

- GDT_TS Calculation: For a set of distance cutoffs (1, 2, 4, and 8 Å), the algorithm calculates the percentage of Cα atoms in the prediction that fall within the cutoff distance of their corresponding atoms in the experimental structure after optimal superposition. GDT_TS is the average of these four percentages.

- Formula: `GDT_TS = (P1 + P2 + P4 + P8) / 4`, where `Px` is the percentage of residues under distance cutoff x Å.

- Ancillary Metrics: In parallel, the system calculates:

- RMSD: Root-mean-square deviation of Cα atoms after superposition.

- Local Distance Difference Test (lDDT): A model-quality estimator that is more sensitive to local accuracy.

- Result Publication: Scores, ranking on the specific target, and historical performance trends are published via the public API (`/results/{cape_id}`) and updated on the CAPE leaderboard within minutes of submission.
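
The GDT_TS arithmetic from the scoring methodology can be shown on a toy example. The sketch assumes the model has already been superimposed on the experimental structure and scores that single superposition; full implementations such as LGA search over many superpositions to maximize each percentage. Function names and coordinates are illustrative.

```python
import math


def gdt(model_ca, ref_ca, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """Average percentage of Cα atoms within each cutoff, for one fixed superposition."""
    if len(model_ca) != len(ref_ca):
        raise ValueError("model and reference must have the same number of residues")
    distances = [math.dist(m, r) for m, r in zip(model_ca, ref_ca)]
    percentages = [
        100.0 * sum(d <= cutoff for d in distances) / len(distances)
        for cutoff in cutoffs
    ]
    return sum(percentages) / len(percentages)


# Four residues whose Cα atoms deviate by 0.5, 1.5, 3.0 and 9.0 Å.
ref_ca = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (7.6, 0.0, 0.0), (11.4, 0.0, 0.0)]
model_ca = [(0.0, 0.5, 0.0), (3.8, 1.5, 0.0), (7.6, 3.0, 0.0), (11.4, 9.0, 0.0)]
gdt_ts = gdt(model_ca, ref_ca)
print(gdt_ts)
```

Here P1 = 25, P2 = 50, P4 = 75 and P8 = 75, so GDT_TS = 56.25. Passing `cutoffs=(0.5, 1.0, 2.0, 4.0)` gives the stricter GDT_HA variant.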

Representative Scoring Data for a Single Target (CAPE20240017):

| Prediction Group | Method | GDT_TS | RMSD (Å) | lDDT | Submission Timestamp (UTC) |

|---|---|---|---|---|---|

| Group A | AlphaFold3 | 92.4 | 0.98 | 0.91 | 2024-07-14 14:32:11 |

| Group B | RoseTTAFold2 | 86.7 | 1.85 | 0.83 | 2024-07-14 15:11:42 |

| Group C | In-house Hybrid | 78.2 | 2.94 | 0.75 | 2024-07-14 17:45:03 |

Visualizing the CAPE Workflow

Diagram 1: The CAPE Continuous Evaluation Pipeline

The Scientist's Toolkit: Key Research Reagent Solutions

Essential computational tools and resources for participating in or analyzing the CAPE pipeline.

| Reagent Solution | Function in CAPE Context |

|---|---|

| CAPE RESTful API | Programmatic interface for target retrieval, automated model submission, and results fetching. Enables integration into group-specific prediction workflows. |

| Biopython / BioJava | Libraries for parsing PDB/mmCIF files, handling protein sequences, and performing basic structural operations essential for pre-submission formatting. |

| TM-align / UCSF Chimera | Core structural alignment algorithms used by the CAPE scoring engine. Researchers use them locally for pre-submission quality assurance. |

| Docker / Singularity | Containerization technologies to encapsulate complex prediction software (e.g., AlphaFold, RoseTTAFold) ensuring reproducible, portable environments for automated runs. |

| Apache Airflow / Nextflow | Workflow management systems to orchestrate multi-step prediction pipelines, from target fetch to submission, triggered by new CAPE target releases. |

| JupyterLab with NGLview | Interactive environment for the rapid visualization and qualitative comparison of predicted models against experimental ground truth post-scoring. |

Comparative Analysis: CAPE vs. CASP Experimental Protocols

The fundamental difference lies in the experimental design and trigger mechanism.

CASP Experiment Protocol:

- Target Identification: The CASP organizers privately solicit upcoming, unpublished protein structures from experimentalists worldwide.

- Prediction Window: Targets are released in batches over a multi-month period. Predictors have a strict, predefined deadline (typically days to weeks) to submit models for each target.

- Blind Assessment: All predictions are collected before the experimental structures are made public. A centralized team performs a comprehensive, manual evaluation using a suite of metrics.

- Periodic Analysis: Results are analyzed and presented at a post-experiment meeting, culminating in a publication.

CAPE Experiment Protocol:

- Target Trigger: The pipeline automatically triggers on the public release of an experimental structure, removing the need for private collaboration.

- Continuous Window: The target is available for prediction indefinitely, allowing groups to submit models at any time with their latest methods.

- Automated Assessment: Scoring is performed immediately and automatically upon submission using a standardized, transparent metric suite (GDT_TS, lDDT).

- Real-Time Publication: Scores and rankings are published in real-time to a live leaderboard, providing instant feedback.

This contrast positions CAPE not as a replacement for CASP's deep, holistic analysis, but as a continuous, agile complement that captures incremental progress and democratizes access to benchmarking.

Integration with AlphaFold2, RoseTTAFold, and Other AI Models

The field of protein structure prediction has undergone a seismic shift, moving from the biennial Critical Assessment of Structure Prediction (CASP) competition to a continuous, real-time evaluation paradigm exemplified by initiatives like CAPE (Continuous Automated Protein Structure Prediction Evaluation). This whitepaper explores the technical integration of leading AI models—AlphaFold2, RoseTTAFold, and their successors—within this new operational context, providing a guide for researchers and drug development professionals.

Model Architectures and Core Algorithms

AlphaFold2

AlphaFold2, developed by DeepMind, employs an end-to-end deep learning architecture built from an Evoformer module and a structure module. The Evoformer processes multiple sequence alignments (MSAs) and pairwise features through attention mechanisms, while the structure module iteratively refines per-residue backbone frames and side-chain torsion angles into 3D atomic coordinates.

RoseTTAFold

Developed by the Baker Lab, RoseTTAFold uses a three-track neural network that simultaneously reasons about protein sequence, distance constraints, and 3D structure. Its key innovation is the seamless flow of information between 1D sequence, 2D distance map, and 3D coordinate tracks.

Emerging and Specialized Models

- AlphaFold3 (DeepMind): Extends prediction to protein-ligand, protein-nucleic acid, and post-translational modification complexes using a diffusion-based architecture.

- ESMFold (Meta): A large language model approach that predicts structure from a single sequence, bypassing the need for MSA generation, offering speed advantages.

- OpenFold: An open-source, trainable implementation of AlphaFold2, enabling community-driven model refinement and specialization.

Quantitative Performance Comparison

The table below summarizes key performance metrics from recent CAPE/CASP evaluations and benchmark studies.

Table 1: Performance Metrics of Major AI Structure Prediction Models

| Model | Avg. TM-Score (Monomer) | Avg. GDT_TS (Monomer) | Avg. Interface RMSD (Complex) | Inference Time (Typical Target) | Key Dependency |

|---|---|---|---|---|---|

| AlphaFold2 | 0.88 | 87.2 | 4.5 Å (AF-Multimer) | 10-30 min | Extensive MSA, Templates |

| RoseTTAFold | 0.82 | 80.5 | 5.2 Å | 15-45 min | Extensive MSA |

| AlphaFold3 | 0.91 (Prot) | 89.1 (Prot) | 1.4 Å | ~1-2 hours | MSA (diffusion-based structure module) |

| ESMFold | 0.75 | 70.3 | N/A | <1 min | Single Sequence |

| OpenFold | 0.87 | 86.5 | Comparable to AF2 | 10-30 min | Extensive MSA |

Metrics derived from CASP15, CAPE benchmarks, and model publications. A TM-score >0.5 generally indicates the correct fold. GDT_TS (Global Distance Test Total Score) is a percentage measure of structural accuracy on a 0-100 scale.
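
For readers unfamiliar with how the TM-score in the table is normalized, the standard formula averages 1 / (1 + (d_i / d0)^2) over the target length, with d0 = 1.24 (L - 15)^(1/3) - 1.8. The sketch below evaluates that formula for one fixed set of residue distances. The real score is the maximum over superpositions, which is not searched here, and the 0.5 Å floor on d0 for very short chains is a guard chosen for this example.

```python
def tm_d0(target_length):
    """Length-dependent distance scale d0, in Å."""
    if target_length <= 15:
        return 0.5  # guard for very short chains (assumption for this sketch)
    return max(1.24 * (target_length - 15) ** (1.0 / 3.0) - 1.8, 0.5)


def tm_score(distances, target_length):
    """TM-score for one fixed superposition; `distances` are per-residue Cα deviations in Å."""
    d0 = tm_d0(target_length)
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances) / target_length


length = 100
perfect = tm_score([0.0] * length, length)
at_d0 = tm_score([tm_d0(length)] * length, length)
print(round(tm_d0(length), 2), perfect, round(at_d0, 3))
```

Because d0 grows with chain length, the same absolute deviation is penalized less in a large protein than in a small one, which is what makes scores comparable across targets of different sizes.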

Detailed Experimental Protocols for Integration

Protocol: Running an Integrated Prediction Pipeline for a Novel Target

Objective: Generate and evaluate high-confidence structural models for a novel protein sequence by leveraging multiple AI tools.

Materials: See "The Scientist's Toolkit" below.

Methodology:

Sequence Pre-processing & Feature Generation:

- Input the target amino acid sequence in FASTA format.

- MSA Generation: Use JackHMMER or MMseqs2 to search against large sequence databases (UniRef90, BFD, MGnify). For speed-optimized pipelines, use the MMseqs2 API provided by ColabFold.

- Template Search (Optional): Use HHsearch against the PDB70 database to find structural homologs. Templates are an optional input for AlphaFold2; single-sequence models such as ESMFold skip both this step and MSA generation.

Model Inference:

- AlphaFold2/OpenFold: Configure the model to use the generated MSA and template features. Run 5 models (different random seeds) with 3 recycle iterations each. Use Amber relaxation on the top-ranked model.

- RoseTTAFold: Feed the same MSA into the three-track network. Generate multiple models through stochastic sampling.

- Specialized Models: For complexes, run AlphaFold3 or AF-Multimer. For rapid screening, run ESMFold in parallel.

Model Selection & Validation:

- Rank models by the model's internal confidence metric: pLDDT (per-residue) and predicted Aligned Error (PAE) for intra-chain confidence, or ipTM+pTM for complexes.

- Use MolProbity or PDBsum for steric clash and geometric quality analysis.

- Perform consensus analysis across models from different methods. Regions predicted with high pLDDT (>80) and low inter-model variance are high-confidence.
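
The consensus step above (high pLDDT plus low inter-model variance) can be sketched as below. Several things are assumed for the example: the models are already superimposed in a common frame, only Cα positions are compared, the 1.0 Å spread limit is an arbitrary illustrative threshold, and the function names and toy values are invented.

```python
import math
from statistics import mean


def residue_spread(positions):
    """RMS distance (Å) of one residue's Cα positions from their centroid."""
    centroid = tuple(mean(axis) for axis in zip(*positions))
    return math.sqrt(mean([math.dist(p, centroid) ** 2 for p in positions]))


def high_confidence_residues(plddt_by_model, ca_by_model, plddt_min=80.0, max_spread=1.0):
    """Return 0-based indices with mean pLDDT > plddt_min and low positional spread."""
    selected = []
    for i in range(len(plddt_by_model[0])):
        avg_plddt = mean([model[i] for model in plddt_by_model])
        spread = residue_spread([model[i] for model in ca_by_model])
        if avg_plddt > plddt_min and spread <= max_spread:
            selected.append(i)
    return selected


# Two toy models of a three-residue fragment.
plddt_by_model = [[92.0, 85.0, 60.0], [90.0, 78.0, 55.0]]
ca_by_model = [
    [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (7.6, 0.0, 0.0)],
    [(0.2, 0.0, 0.0), (3.8, 3.0, 0.0), (7.6, 0.1, 0.0)],
]
confident = high_confidence_residues(plddt_by_model, ca_by_model)
print(confident)
```

In this toy case only the first residue qualifies: the second is confidently predicted by both methods but placed 3 Å apart, and the third agrees between models but has low pLDDT.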

Experimental Cross-Validation (If applicable):

- Design mutagenesis experiments based on predicted active sites/interfaces.

- Use predicted structures for molecular docking studies with known ligands.

- Validate low-resolution topology with SAXS data, or predicted interfaces with cross-linking mass spectrometry.

Protocol: Fine-tuning on a Specific Protein Family

Objective: Improve prediction accuracy for a specialized target class (e.g., GPCRs, antibodies) by fine-tuning a base model.

- Curate a high-quality dataset of structures and sequences for the target family from the PDB.

- Use OpenFold's training script to continue training from a pre-trained checkpoint, focusing on the new dataset. Adjust the learning rate and apply gradient clipping.

- Implement a masking strategy during training to simulate the prediction of variable regions (e.g., antibody CDR loops).

- Benchmark the fine-tuned model against the base model on a held-out set of family-specific targets.

Visualizing Integration Workflows

Diagram 1: Multi-Model Protein Structure Prediction Workflow

Diagram 2: The CAPE Continuous Evaluation Feedback Loop

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for AI-Driven Structure Prediction Research

| Item / Resource | Function / Purpose | Access / Example |

|---|---|---|

| ColabFold | A streamlined, cloud-based pipeline combining fast MMseqs2 MSA generation with AlphaFold2/RoseTTAFold. Dramatically lowers entry barrier. | Google Colab notebook; https://github.com/sokrypton/ColabFold |

| AlphaFold DB | Pre-computed predictions for nearly all cataloged proteins (UniProt). Provides instant models for known sequences, serving as a ground truth proxy. | https://alphafold.ebi.ac.uk |

| OpenFold | Trainable, open-source implementation of AlphaFold2. Essential for model fine-tuning, experimentation, and understanding model mechanics. | https://github.com/aqlaboratory/openfold |

| PyMOL / ChimeraX | Molecular visualization suites. Critical for analyzing predicted models, measuring distances, and preparing publication-quality figures. | Commercial & academic licenses; https://www.cgl.ucsf.edu/chimerax/ |

| PDBx/mmCIF Tools | Libraries for handling the mmCIF file format output by AlphaFold2, which contains confidence scores and multiple models. | Biopython, Bio3D, RCSB PDB software suite |

| Molecular Dynamics (MD) Software (e.g., GROMACS, AMBER) | Used to refine and validate AI-predicted structures by simulating physical movements, assessing stability, and exploring conformational dynamics. | Open-source & commercial packages |

| Specialized Datasets (e.g., PDB, SAbDab for antibodies) | Curated, high-quality experimental structures for specific protein families. Used for benchmarking, training, and fine-tuning. | https://www.rcsb.org; http://opig.stats.ox.ac.uk/webapps/sabdab |

Application in Identifying Drug Targets and Binding Sites

The Critical Assessment of protein Structure Prediction (CASP) experiments have long served as the benchmark for evaluating computational protein folding methodologies. However, the translation of structural prediction accuracy to real-world drug discovery outcomes remains a significant challenge. This has catalyzed the emergence of a new paradigm: the Critical Assessment of Protein Engineering (CAPE). While CASP focuses on predicting a protein's native state from its sequence, CAPE shifts the focus to functional prediction, including the identification of binding sites, allosteric pockets, and the mutational impact on ligand affinity. This whitepaper contextualizes modern drug target and binding site identification within this evolving CAPE-centric framework, where the ultimate metric is not folding accuracy alone, but predictive utility in therapeutic design.

Core Methodologies for Target and Binding Site Identification

Sequence-Based and Evolutionary Methods

- ConSurf: Maps evolutionary conservation scores onto a protein structure to identify functionally crucial regions, often corresponding to binding sites.

- AlphaFold2 Multimer & AF-DB: Predicts structures of protein complexes. The AlphaFold Protein Structure Database (AF-DB) provides pre-computed models for vast proteomes, enabling in silico screening for potential drug targets.

Geometry and Energy-Based Methods

- Fpocket, SiteMap: Algorithms that detect cavities based on van der Waals spheres and physico-chemical properties (hydrophobicity, polarity) to predict potential binding pockets.

- GRID, MCSS: Probe-based methods that map favorable interaction energies (e.g., for a methyl group, a carbonyl oxygen) within a binding site to characterize pharmacophoric features.

Template-Based and Machine Learning Methods

- COACH, CAVIAR: Meta-servers that integrate predictions from multiple methods (sequence, geometry, template comparison) to achieve higher accuracy.

- DeepSite, DeepSurf, AlphaFold3: Deep learning models trained on protein-ligand complexes to directly predict binding probabilities per residue or atom.

Comparative Performance Metrics

Table 1: Quantitative Comparison of Binding Site Prediction Tools (Top-1 Pocket Detection)

| Method | Type | Average DCC (Å) | Success Rate (>0.5 DCC) | Key Advantage |

|---|---|---|---|---|

| AlphaFold3 | Deep Learning | 1.2-2.5* | ~85%* | Integrates sequence & ligand info |

| DeepSite | Deep Learning | 3.8 | 75% | Robust to apo structures |

| Fpocket | Geometric | 4.2 | 71% | Fast, open-source |

| COACH (Meta) | Consensus | 3.5 | 80% | High reliability |

| SiteMap | Energy-Based | 3.9 | 73% | Detailed pharmacophore output |

\*Estimated from early benchmark studies. DCC = distance between the centers of the predicted and true pockets.
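
As a concrete illustration of the DCC metric, the sketch below computes pocket centers as plain centroids and counts a prediction as successful when the DCC falls at or below a threshold. The 4 Å threshold used in the example is an assumption chosen for illustration, as are the function names and coordinates; the table's own success criterion may differ.

```python
import math
from statistics import mean


def pocket_center(atom_coords):
    """Centroid of the atoms lining a pocket."""
    return tuple(mean(axis) for axis in zip(*atom_coords))


def dcc(predicted_center, true_center):
    """Distance between predicted and true pocket centers, in Å."""
    return math.dist(predicted_center, true_center)


def success_rate(center_pairs, threshold=4.0):
    """Fraction of (predicted, true) center pairs with DCC <= threshold (assumed cutoff)."""
    hits = sum(dcc(pred, true) <= threshold for pred, true in center_pairs)
    return hits / len(center_pairs)


predicted = pocket_center([(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (1.0, 3.0, 0.0)])
pairs = [
    (predicted, (1.0, 1.0, 3.0)),          # DCC = 3 Å
    ((0.0, 0.0, 0.0), (5.0, 0.0, 0.0)),    # DCC = 5 Å
]
print(dcc(*pairs[0]), success_rate(pairs))
```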

Experimental Protocols for Validation

Protocol: Site-Directed Mutagenesis with Functional Assay

Purpose: To validate the functional importance of a computationally predicted binding site.

- In Silico Prediction: Identify key residues in the putative binding pocket using a consensus of tools (e.g., AlphaFold3, Fpocket, conservation analysis).

- Mutagenesis Primer Design: Design primers to introduce point mutations (e.g., alanine substitution) at each target codon.

- Cloning & Expression: Generate mutant constructs via PCR-based site-directed mutagenesis, express and purify wild-type and mutant proteins.

- Binding Assay: Perform Isothermal Titration Calorimetry (ITC) or Surface Plasmon Resonance (SPR) to measure binding affinity (Kd) of a known ligand or fragment.

- Analysis: A significant reduction in binding affinity (>10-fold increase in Kd) for a mutant confirms the residue's role in the binding site.
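
The analysis step can be made quantitative by converting the Kd ratio into a binding free energy change, using the standard relation ΔΔG = RT ln(Kd_mut / Kd_wt). The sketch below applies the 10-fold criterion from the protocol; the function names and example Kd values are invented, and 298.15 K is an assumed assay temperature.

```python
import math

R_KCAL_PER_MOL_K = 1.987204e-3  # gas constant in kcal/(mol·K)


def fold_change(kd_wt, kd_mut):
    """Ratio of mutant to wild-type Kd (same units for both)."""
    return kd_mut / kd_wt


def ddg_binding(kd_wt, kd_mut, temperature_k=298.15):
    """Change in binding free energy (kcal/mol); positive means weaker binding."""
    return R_KCAL_PER_MOL_K * temperature_k * math.log(kd_mut / kd_wt)


def disrupts_binding(kd_wt, kd_mut, min_fold=10.0):
    """Apply the >10-fold increase in Kd criterion from the protocol."""
    return fold_change(kd_wt, kd_mut) > min_fold


kd_wt, kd_mut = 50e-9, 2e-6  # invented values: 50 nM wild type, 2 µM mutant
print(round(fold_change(kd_wt, kd_mut), 1),
      round(ddg_binding(kd_wt, kd_mut), 2),
      disrupts_binding(kd_wt, kd_mut))
```

At room temperature a 10-fold increase in Kd corresponds to roughly 1.4 kcal/mol of lost binding energy, which helps when comparing mutagenesis results with computed interaction energies.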

Protocol: X-Ray Crystallography with Fragment Soaking

Purpose: To obtain experimental structural confirmation of a predicted binding site.

- Protein Crystallization: Grow crystals of the apo (ligand-free) target protein.

- Fragment Library Preparation: Prepare a cocktail of small, soluble fragment molecules.

- Soaking: Briefly immerse the apo crystal in a stabilizing solution containing the fragment cocktail.

- Data Collection & Processing: Collect diffraction data at a synchrotron source. Solve the structure by molecular replacement using the apo model.

- Difference Map Analysis: Calculate a Fourier difference map (e.g., Fobs–Fcalc). Positive electron density in a predicted pocket indicates bound fragment(s), providing definitive experimental validation of the site's druggability.

Visualizing Workflows and Pathways

Diagram 1: Drug Target ID & Validation Workflow

Diagram 2: GPCR Signaling with Binding Sites

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Binding Site Validation Experiments

| Reagent / Material | Function / Application | Supplier Examples |

|---|---|---|

| HisTrap HP Column | Immobilized-metal affinity chromatography (IMAC) for purification of His-tagged recombinant proteins. | Cytiva, Thermo Fisher |

| Site-Directed Mutagenesis Kit | Efficiently introduces point mutations into plasmid DNA for functional testing of predicted residues. | Agilent (QuikChange), NEB |

| Protease Inhibitor Cocktail | Prevents proteolytic degradation of target proteins during extraction and purification. | Roche, Sigma-Aldrich |

| HaloTag Technology | Covalent protein tag enabling versatile immobilization for binding assays (SPR, pulldown). | Promega |

| Fragment Library (e.g., 1000 compounds) | A curated collection of small, diverse molecules for experimental screening by X-ray or SPR. | Enamine, Charles River |

| Series S Sensor Chip NTA | SPR chip for capturing His-tagged proteins to measure ligand binding kinetics in real-time. | Cytiva |

| CryoProtection Oil | Protects crystals during flash-cooling in liquid nitrogen for X-ray data collection. | MiTeGen |

| AlphaFold2/3 ColabFold Notebook | Cloud-based, accessible implementation of AlphaFold for custom structure prediction. | DeepMind, GitHub |

The Critical Assessment of protein Structure Prediction (CASP) has long been the benchmark for evaluating computational methods in predicting static protein structures. However, a paradigm shift is emerging towards the Critical Assessment of Protein Engineering (CAPE), which focuses on functional prediction, design, and the interpretation of variants, including disease mutations. While CASP answers "What is the structure?", CAPE addresses "How will the protein function or malfunction?". This whitepaper situates advanced use cases—from de novo design to disease mechanism elucidation—within this evolving CAPE-centric framework, leveraging the most accurate structural models from CASP-tested algorithms as foundational inputs.

Core Methodologies and Experimental Protocols

High-Throughput Variant Effect Prediction for Disease Mutations

Protocol: Deep Mutational Scanning (DMS) Coupled with AlphaFold2/RoseTTAFold Analysis

- Library Construction: Use site-directed mutagenesis (e.g., PCR-based) or oligo synthesis to create a comprehensive variant library for the target gene.

- Functional Selection/Assay: Clone library into an appropriate expression vector. Use FACS (for fluorescent reporters), growth selection (for antibiotic resistance or essential genes), or phage/bacterial display (for binding affinity) to link variant genotype to phenotypic readout.

- High-Throughput Sequencing: Pre- and post-selection, perform NGS (Next-Generation Sequencing) on the variant pool.

- Enrichment Score Calculation: Compute variant functional scores from the log2 ratio of post- to pre-selection sequence counts.

- Computational Integration:

  - Generate structural models for all variants using AlphaFold2 (via ColabFold) or ESMFold.

  - Use tools like `foldx` or `rosetta_ddg` to calculate predicted ΔΔG (change in folding stability).

  - Compute evolutionary conservation scores (e.g., from `omics/evcouplings`).

- Model Training: Train a machine learning model (e.g., gradient boosting) on DMS data using structural (ΔΔG, buried surface area), evolutionary, and sequence features to predict pathogenicity for novel variants.
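The enrichment score step above can be sketched in a few lines. This is a minimal illustration, not the scoring method of any particular DMS pipeline: the function name `enrichment_scores`, the pseudocount of 0.5, and the normalization to a wild-type entry labeled `"WT"` are all assumptions added here to keep the example well-defined when a variant has zero reads.

```python
import math
from typing import Dict


def enrichment_scores(
    pre: Dict[str, int],
    post: Dict[str, int],
    wt: str = "WT",            # hypothetical label for the wild-type entry
    pseudocount: float = 0.5,  # assumed value; avoids log2(0) for dropouts
) -> Dict[str, float]:
    """Return log2(post/pre) frequency ratios, shifted so the wild type scores 0."""
    pre_total = sum(pre.values())
    post_total = sum(post.values())

    def log2_ratio(variant: str) -> float:
        # Convert raw read counts to frequencies so sequencing depth cancels out.
        pre_freq = (pre.get(variant, 0) + pseudocount) / pre_total
        post_freq = (post.get(variant, 0) + pseudocount) / post_total
        return math.log2(post_freq / pre_freq)

    wt_ratio = log2_ratio(wt)
    return {variant: log2_ratio(variant) - wt_ratio for variant in pre}


if __name__ == "__main__":
    pre_counts = {"WT": 1000, "A10V": 1000, "G25D": 1000}
    post_counts = {"WT": 2000, "A10V": 2000, "G25D": 250}
    for variant, score in enrichment_scores(pre_counts, post_counts).items():
        print(f"{variant}\t{score:+.2f}")
```

In this toy input, `A10V` tracks the wild type and scores 0, while `G25D` is depleted roughly eightfold and scores close to -3. Real analyses typically also combine replicates and estimate per-variant error, which this sketch omits.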

DMS and Structure Integration Workflow

De Novo Protein Design for Therapeutic Scaffolds

Protocol: RFdiffusion/AlphaFlow-Based De Novo Backbone Generation

- Specify Design Goal: Define functional site (e.g., enzyme active site geometry, protein-protein interaction epitope) using structural motifs or constraints.

- Conditional Generation: Use RFdiffusion, providing conditioning (e.g., partial structure, inverse folding latent vector) to guide backbone generation towards desired topology.

- Sequence Design: Pass generated backbone through ProteinMPNN or ESM-IF1 to propose optimal, stable, and expressible amino acid sequences.

- In Silico Filtering: Filter designs using:

  - AlphaFold2 self-consistency (pLDDT > 85, pTM > 0.8).

  - Rosetta `ref2015/beta_nov16` energy scores.

  - Aggregation propensity (e.g., with `amyloid` or `aggrescan3d`).

  - Structural similarity to target motif (TM-score > 0.7).

- Experimental Validation: Express top designs in E. coli or a cell-free system, purify via His-tag, and validate via SEC-MALS (monodispersity) and circular dichroism (foldedness). High-resolution validation uses X-ray crystallography or cryo-EM.
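The numeric cutoffs in the in silico filtering step can be applied as a simple triage pass. The sketch below is illustrative only: the `Design` record and its field names are hypothetical, the scores are assumed to have been computed upstream, and the Rosetta energy and aggregation filters are left out because the protocol gives no thresholds for them.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Design:
    """Hypothetical record of precomputed metrics for one designed sequence."""
    name: str
    plddt: float     # mean AlphaFold2 pLDDT, 0-100
    ptm: float       # AlphaFold2 pTM, 0-1
    motif_tm: float  # TM-score against the target motif, 0-1


def passes_filters(
    d: Design,
    min_plddt: float = 85.0,
    min_ptm: float = 0.8,
    min_motif_tm: float = 0.7,
) -> bool:
    """Apply the strict '>' thresholds quoted in the protocol."""
    return d.plddt > min_plddt and d.ptm > min_ptm and d.motif_tm > min_motif_tm


def rank_designs(designs: List[Design]) -> List[Design]:
    """Keep designs that pass every filter, best mean pLDDT first."""
    kept = [d for d in designs if passes_filters(d)]
    return sorted(kept, key=lambda d: d.plddt, reverse=True)


if __name__ == "__main__":
    candidates = [
        Design("d1", plddt=91.0, ptm=0.85, motif_tm=0.75),
        Design("d2", plddt=88.0, ptm=0.79, motif_tm=0.90),  # fails pTM
        Design("d3", plddt=86.0, ptm=0.90, motif_tm=0.80),
    ]
    print([d.name for d in rank_designs(candidates)])
```

Ranking by mean pLDDT is an arbitrary choice made for the example; any of the filter metrics, or a weighted combination, could serve as the sort key.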

De Novo Protein Design Pipeline

Table 1: Performance Metrics for Disease Mutation Prediction Tools (Trained on ClinVar/DMS Data)

| Tool/Method | AUC-ROC (Pathogenic vs Benign) | Key Features Used | Benchmark Dataset |

|---|---|---|---|

| AlphaMissense | 0.90 - 0.95 | AF2 pLDDT, MSA statistics, protein language model log-likelihoods | ClinVar, HGMD |

| ESM-1v (Evolutionary Scale Modeling) | 0.86 - 0.92 | Masked marginal log-likelihoods from 650M-parameter language model | DeepMutDB |

| PrimateAI | 0.91 - 0.94 | Evolutionary conservation from primate sequences, population data | Clinical cohorts |

| FoldX | 0.75 - 0.82 | Empirical force field (ΔΔG of stability) | S2648 benchmark |

| Integrated ML (e.g., Envision) | 0.92 - 0.96 | Structural (ΔΔG), evolutionary, sequence, network features | Large-scale DMS studies |
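For readers less familiar with the AUC-ROC metric reported in the table, it can be read as the probability that a randomly chosen pathogenic variant receives a higher score than a randomly chosen benign one. The sketch below computes it directly from that definition. The function name `auc_roc` and the label convention (1 = pathogenic, 0 = benign) are assumptions for illustration, and the quadratic loop is only suitable for small benchmark sets.

```python
from typing import Sequence


def auc_roc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """AUC as P(score_pathogenic > score_benign); tied pairs count as 0.5."""
    pathogenic = [s for s, y in zip(scores, labels) if y == 1]
    benign = [s for s, y in zip(scores, labels) if y == 0]
    if not pathogenic or not benign:
        raise ValueError("need at least one pathogenic and one benign variant")
    wins = 0.0
    for p in pathogenic:
        for b in benign:
            if p > b:
                wins += 1.0
            elif p == b:
                wins += 0.5
    return wins / (len(pathogenic) * len(benign))


if __name__ == "__main__":
    predicted = [0.9, 0.6, 0.7, 0.3, 0.2]  # toy pathogenicity scores
    truth = [1, 1, 0, 0, 0]                # 1 = pathogenic, 0 = benign
    print(round(auc_roc(predicted, truth), 3))
```

In the toy data, five of the six pathogenic/benign pairs are ranked correctly, so the AUC is 5/6. A value of 0.5 corresponds to random ranking and 1.0 to perfect separation.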

Table 2: Success Rates in De Novo Protein Design (2022-2024)

| Design Method | Experimental Success Rate (Folded/Monomeric) | High-Res Structure Solved | Typical Design Cycle Time |

|---|---|---|---|

| RFdiffusion + ProteinMPNN | 50% - 80% | ~20% (of expressed designs) | 2-4 weeks (compute + experimental triage) |

| Rosetta ab initio + FixBB | 10% - 25% | ~5% | 4-8 weeks |

| AlphaFlow | 40% - 70% (preliminary) | Data pending | 1-3 weeks |

| Generative LSTM (pre-2022) | 5% - 15% | <2% | 8-12 weeks |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents and Resources for CAPE-Centric Experiments

| Item | Supplier/Resource Example | Function in Protocol |

|---|---|---|

| Phusion U Hot Start DNA Polymerase | Thermo Fisher, NEB | High-fidelity PCR for site-saturation mutagenesis library construction. |

| Twist Bioscience Oligo Pools | Twist Bioscience | Affordable, high-quality synthesized oligo libraries for gene-scale variant synthesis. |

| NEBuilder HiFi DNA Assembly Master Mix | New England Biolabs | Seamless cloning of variant libraries into expression vectors. |

| Ni-NTA Superflow Agarose | Qiagen | Standardized purification of His-tagged designed proteins or variant libraries. |

| Superdex 75 Increase 10/300 GL | Cytiva | Size-exclusion chromatography (SEC) for assessing monodispersity of designed proteins. |

| JASCO J-1500 CD Spectrophotometer | JASCO Inc. | Circular dichroism for rapid assessment of secondary structure and thermal stability. |

| Structure Prediction Servers: | | |

| - AlphaFold Server | Google DeepMind | Easy-access, no-code AlphaFold 3 predictions, including complexes. |

| - ColabFold | GitHub (Sergey Ovchinnikov) | Free, cloud-based AF2/ESMFold with customization via Google Colab. |

| Design Software: | | |

| - RFdiffusion | GitHub (Baker Lab) | State-of-the-art diffusion model for de novo and binder backbone generation. |

| - ProteinMPNN | GitHub (Baker Lab) | Robust inverse folding network for sequence design on fixed backbones. |

| Analysis Suites: | | |

| - PyRosetta | University of Washington | Python interface to Rosetta for energy calculations (ΔΔG) and structural analysis. |

| - FoldX5 | VUB Brussel | Fast empirical calculation of protein stability changes upon mutation. |

Overcoming Challenges: Accuracy Limits, Data Inputs, and Model Optimization

The Critical Assessment of protein Structure Prediction (CASP) has long been the benchmark for evaluating computational methods on well-folded, globular protein domains. However, the Continuous Automated Model Evaluation (CAPE) paradigm, as implemented in resources like the EBI AlphaFold Protein Structure Database, emphasizes continuous, large-scale prediction and real-world applicability. This shift exposes a critical blind spot shared by many leading algorithms: the poor handling of Low-Complexity Regions (LCRs) and Intrinsically Disordered Proteins/Regions (IDPs/IDRs). These segments lack a stable three-dimensional structure under physiological conditions, yet are pivotal in signaling, regulation, and disease. This whitepaper details the technical pitfalls in predicting their behavior and outlines experimental strategies for validation.

Defining the Challenge: LCRs vs. IDRs

While often conflated, LCRs and IDRs represent distinct concepts requiring different analytical approaches.

- Low-Complexity Regions (LCRs): Characterized by a biased amino acid composition, often with repeats of a few residues (e.g., poly-Q, poly-A). They are identified through sequence analysis.

- Intrinsically Disordered Regions (IDRs): Defined by their lack of fixed tertiary structure under native conditions. They are identified through biophysical experiments or high-confidence prediction.
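Because LCRs are defined purely by sequence composition, a first-pass scan needs nothing more than a sliding-window entropy calculation. The sketch below illustrates the idea. It is not the SEG algorithm, and the window length of 12 and the 2.2-bit threshold are illustrative assumptions rather than validated parameters.

```python
import math
from collections import Counter
from typing import List, Tuple


def shannon_entropy(segment: str) -> float:
    """Shannon entropy (bits) of the residue composition of a segment."""
    n = len(segment)
    return sum((c / n) * math.log2(n / c) for c in Counter(segment).values())


def low_complexity_windows(
    seq: str,
    window: int = 12,        # assumed window length
    threshold: float = 2.2,  # assumed entropy cutoff in bits
) -> List[Tuple[int, float]]:
    """Return (0-based start, entropy) for every window at or below the threshold."""
    hits = []
    for start in range(len(seq) - window + 1):
        h = shannon_entropy(seq[start:start + window])
        if h <= threshold:
            hits.append((start, h))
    return hits


if __name__ == "__main__":
    # Toy sequence: a poly-Q tract flanked by compositionally diverse segments.
    toy = "MKTAYIAKQRQISFVKSHFSRQ" + "Q" * 15 + "LEERLGLIEVQAPILSRVGDGT"
    for start, h in low_complexity_windows(toy):
        print(start, round(h, 2))
```

A pure poly-Q window has an entropy of 0 bits, while a window containing 12 different residues reaches log2(12), about 3.58 bits. Note that a low-entropy hit says nothing about disorder: whether the flagged region is also an IDR has to be established separately, as the definitions above make clear.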

Table 1: Distinguishing Features of LCRs and IDRs

| Feature | Low-Complexity Regions (LCRs) | Intrinsically Disordered Regions (IDRs) |

|---|---|---|

| Primary Definition | Sequence composition bias | Conformational ensemble in solution |

| Key Detection Method | Sequence entropy algorithms (SEG, SLAST) | NMR, CD, SAXS, or predictors (e.g., IUPred2A) |

| May Form Stable Structure? | Can sometimes fold (e.g., coiled coils) | May undergo disorder-to-order transition upon binding |

| Typical Pitfall in Prediction | Over-prediction of false structure due to pattern matching | Under-prediction, often modeled as extended loops with spurious confidence |

Pitfalls in Computational Prediction (CAPE Workflows)

In CAPE-style continuous evaluation, models like AlphaFold2 and RoseTTAFold routinely assign high per-residue confidence (pLDDT) scores to LCRs, generating plausible-looking but biologically incorrect rigid structures. This stems from training data dominated by structured proteins and the reliance on multiple sequence alignments (MSAs), which are shallow or non-existent for disordered regions.

Table 2: Performance of Major Tools on Disordered Regions (CASP15 Data)

| Prediction Tool / Resource | Disorder Prediction Capability | Reported AUC for IDR Detection | Key Limitation for LCRs/IDRs |

|---|---|---|---|

| AlphaFold2 | Indirect (low pLDDT) | ~0.85 (inferred) | Generates overconfident, compact structures for LCRs |

| RoseTTAFold | Indirect (low pLDDT) | ~0.82 (inferred) | Similar to AF2; sensitive to MSA depth |

| IUPred2A | Primary function | 0.92 | Excellent for IDRs, may miss context-dependent folding |

| ESPRITZ | Primary function | 0.94 | High accuracy for various disorder types |

| AF2 with pLDDT<70 | Common heuristic | ~0.88 | High false positive rate: folded domains with low pLDDT are flagged as disordered |
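The pLDDT < 70 heuristic listed in the table is straightforward to apply to an AlphaFold model, because AlphaFold writes per-residue pLDDT into the B-factor column of its PDB output. The following sketch reads that column from Cα atoms and reports contiguous low-confidence stretches. The function names and the minimum segment length of 5 residues are assumptions for illustration, and the parser handles only standard fixed-column `ATOM` records.

```python
from typing import Dict, List, Tuple

ResidueKey = Tuple[str, int]  # (chain ID, residue number)


def residue_plddt(pdb_text: str) -> Dict[ResidueKey, float]:
    """Read per-residue pLDDT from the B-factor field of CA atoms."""
    plddt: Dict[ResidueKey, float] = {}
    for line in pdb_text.splitlines():
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            chain = line[21]
            resnum = int(line[22:26])
            plddt[(chain, resnum)] = float(line[60:66])  # PDB columns 61-66
    return plddt


def low_confidence_segments(
    plddt: Dict[ResidueKey, float],
    cutoff: float = 70.0,
    min_len: int = 5,  # assumed minimum run length; short dips are ignored
) -> List[Tuple[str, int, int]]:
    """Return (chain, first, last) for contiguous runs with pLDDT below cutoff."""
    segments: List[Tuple[str, int, int]] = []
    run: List[ResidueKey] = []

    def flush() -> None:
        if len(run) >= min_len:
            segments.append((run[0][0], run[0][1], run[-1][1]))

    for key in sorted(plddt):
        chain, resnum = key
        below = plddt[key] < cutoff
        contiguous = bool(run) and run[-1] == (chain, resnum - 1)
        if below and (not run or contiguous):
            run.append(key)
            continue
        # Run is broken by a confident residue, a numbering gap, or a new chain.
        flush()
        run = [key] if below else []
    flush()
    return segments
```

Typical use would be `low_confidence_segments(residue_plddt(open("model.pdb").read()))`, where `model.pdb` is a placeholder file name. The segments returned are candidates for disorder only, and should be cross-checked against a dedicated predictor or experimental data.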

Key Experimental Protocols for Validation

Computational predictions for LCRs/IDRs must be validated empirically. Below are core methodologies.

Protocol 1: Circular Dichroism (CD) Spectroscopy for Disorder Confirmation

- Objective: Determine the secondary structure content of a purified protein/region.

- Procedure:

- Sample Prep: Purify recombinant protein in phosphate buffer (pH 7.4). Adjust concentration to 0.1-0.3 mg/mL in a low-UV-absorbing buffer.

- Data Acquisition: Load sample into a quartz cuvette (path length 0.1 cm). Acquire spectra from 260 nm to 180 nm at 20°C using a spectropolarimeter.

- Analysis: A spectrum with a strong negative peak near 200 nm and low ellipticity at 222 nm is indicative of disorder. Compare to folded controls (e.g., α-helical: minima at 222/208 nm; β-sheet: minimum at 218 nm).

- Interpretation: Quantify percent disorder using deconvolution algorithms (e.g., CONTINLL).

Protocol 2: Small-Angle X-ray Scattering (SAXS) for Conformational Ensemble Analysis

- Objective: Obtain low-resolution structural information and assess flexibility in solution.

- Procedure:

- Sample & Buffer Matching: Purify protein to >95% homogeneity. Dialyze into suitable buffer (e.g., 20 mM Tris, 150 mM NaCl). Precisely match the reference buffer.

- Synchrotron Data Collection: Measure scattering intensity I(q) across a q-range (e.g., 0.01 < q < 3.0 nm⁻¹). Use multiple concentrations to check for aggregation.

- Data Processing: Subtract buffer scattering. Generate the pair-distance distribution function P(r) via indirect Fourier transform. Compute the dimensionless Kratky plot ((qRg)²I(q)/I(0) vs. qRg).

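- Interpretation: An asymmetric P(r) with an extended tail toward large distances, together with a plateau in the Kratky plot instead of a bell-shaped peak, indicates a disordered ensemble. A symmetric, bell-shaped P(r) points to a compact, globular particle. Use ensemble modeling tools (e.g., EOM, ENSEMBLE) to generate representative conformers.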
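The dimensionless Kratky transform named in the data-processing step is a direct rescaling of the scattering curve. The sketch below assumes that Rg and I(0) have already been estimated (for example from a Guinier fit) and that `q` and `rg` use reciprocal units. The function name is illustrative.

```python
import math
from typing import List, Sequence, Tuple


def dimensionless_kratky(
    q: Sequence[float],
    intensity: Sequence[float],
    rg: float,
    i0: float,
) -> List[Tuple[float, float]]:
    """Return (qRg, (qRg)^2 * I(q) / I(0)) pairs for a buffer-subtracted curve."""
    return [(qi * rg, (qi * rg) ** 2 * ii / i0) for qi, ii in zip(q, intensity)]


if __name__ == "__main__":
    # Synthetic compact-particle reference using the Guinier form
    # I(q) = I(0) * exp(-(q*Rg)^2 / 3); real data deviate from this at higher q.
    rg, i0 = 2.0, 100.0  # nm and arbitrary intensity units (toy values)
    q = [0.01 * k for k in range(1, 301)]
    intensity = [i0 * math.exp(-((qi * rg) ** 2) / 3.0) for qi in q]
    curve = dimensionless_kratky(q, intensity, rg, i0)
    peak_x, peak_y = max(curve, key=lambda point: point[1])
    print(round(peak_x, 2), round(peak_y, 2))
```

For the Guinier-form reference the curve peaks near qRg = √3 ≈ 1.73 with a height of 3/e ≈ 1.10, the usual landmark for a compact particle. Curves that keep rising or level off beyond that point, rather than returning toward the baseline, are the signature of flexibility or disorder.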

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Studying LCRs/IDRs

| Reagent / Material | Function & Application |

|---|---|

| SUMO or MBP Fusion Tags | Enhance solubility and expression of aggregation-prone IDRs during recombinant production. |

| TEV or HRV 3C Protease | High-specificity cleavage to remove solubility tags without leaving artifactual residues. |

| Size Exclusion Chromatography (SEC) Matrix (e.g., Superdex 75 Increase) | Analyze hydrodynamic radius and monodispersity of purified IDR samples. |

| NMR Isotope Labels (¹⁵N-NH₄Cl, ¹³C-Glucose) | Enable residue-level conformational analysis via multidimensional NMR spectroscopy. |

| Phase Separation Buffers (e.g., PEG-8000, Ficoll) | Induce and study liquid-liquid phase separation of LCRs in vitro. |

| Disorder-Predicting Software (IUPred2A, PONDR) | Computational first-pass assessment of disorder propensity from sequence. |