Breaking the Training Mold: Overcoming Out-of-Domain Generalization Challenges in AI Protein Design

This article examines the critical challenge of Out-of-Domain (OOD) generalization in AI-driven protein sequence design.

Breaking the Training Mold: Overcoming Out-of-Domain Generalization Challenges in AI Protein Design

Abstract

This article examines the critical challenge of Out-of-Domain (OOD) generalization in AI-driven protein sequence design. It explores the foundational problem of why models fail beyond their training data, reviews current methodological strategies for enhancing generalization, discusses practical troubleshooting and optimization techniques, and provides a framework for validating and benchmarking model performance on novel protein families and functions. Aimed at researchers and drug development professionals, it synthesizes cutting-edge approaches to build more robust, generalizable models for discovering therapeutic proteins, enzymes, and biomaterials.

Why AI Stumbles in the Unknown: The Core OOD Problem in Protein Sequence Space

The central aim of computational protein design is to create novel, functional sequences that solve real-world problems in therapeutics, catalysis, and materials. Models are trained on the known, finite universe of natural protein sequences and structures. However, the ultimate goal is out-of-distribution (OOD) generalization: generating stable, functional proteins in regions of sequence space evolution never explored. The "OOD challenge" is the significant performance drop observed when models trained on native protein datasets are applied to design novel, especially de novo, folds and functions far from the training distribution. This gap defines the frontier of the field.

The Training Data Distribution: Biases and Limitations

Current state-of-the-art models (e.g., ProteinMPNN, RFdiffusion, AlphaFold2, ESM-2) are trained on databases like the Protein Data Bank (PDB) and UniRef. This data embodies profound evolutionary, structural, and functional biases.

Table 1: Characteristics and Biases in Standard Protein Training Data

| Data Characteristic | Typical Source/Value | Implied Bias & OOD Consequence |

|---|---|---|

| Sequence Diversity | ~250M non-redundant sequences (UniRef) | Over-represents abundant, soluble, stable families (e.g., TIM barrels). Under-represents membrane proteins, disordered regions, and extinct lineages. |

| Structural Coverage | ~200k experimentally solved structures (PDB) | Heavily biased toward proteins that crystallize or are tractable to cryo-EM. Skews toward certain organisms (human, E. coli, model organisms). |

| Functional Annotation | Manual curation (GO, EC numbers) | Sparse and incomplete. Many "hypothetical proteins" lack annotation, limiting supervised function prediction. |

| Physico-chemical Distribution | Derived from natural proteomes | Natural amino acid frequencies and pairwise correlations are embedded, which may not be optimal for novel design constraints (e.g., extreme pH, non-aqueous solvents). |

Key Experimental Protocols for Evaluating OOD Generalization

To quantify the OOD gap, researchers employ specific experimental pipelines that test model performance on sequences or structures withheld from training in strategic ways.

Protocol 3.1: De Novo Fold Generation and Validation

- Objective: Test a model's ability to generate sequences for entirely novel, computationally generated backbone scaffolds not found in the PDB.

- Methodology:

- Scaffold Generation: Use ab initio folding algorithms (like RosettaFold) or parametric models to generate novel protein backbone structures (e.g., symmetrical oligomers, topologically new folds). Critically, ensure minimal structural similarity (TM-score <0.5) to any PDB entry.

- Sequence Design: Input the novel scaffold into a protein sequence design model (e.g., ProteinMPNN, Rosetta

fixbb). - In-silico Folding: Fold the designed sequences using a structure prediction network (e.g., AlphaFold2, OmegaFold) that was not trained on the designed sequences.

- Experimental Characterization: Express the top-scoring designs in vitro. Assess:

- Stability: Using circular dichroism (CD) thermal denaturation or differential scanning calorimetry (DSC).

- Structure: Via X-ray crystallography or NMR to confirm the target fold was achieved.

- Solubility: By size-exclusion chromatography (SEC).

- OOD Metric: The fraction of designs that express solubly, are highly stable (>65°C Tm), and have a high-resolution structure matching the target scaffold (RMSD <2.0 Å).

Protocol 3.2: Extreme Functional Property Prediction

- Objective: Evaluate a model's ability to predict stability or function under conditions wildly different from the cellular environment.

- Methodology:

- Dataset Curation: Create a benchmark set of proteins with experimentally measured properties under extreme conditions (e.g., thermophilic enzyme half-lives at 80°C, psychrophilic enzyme activity at 5°C, halophile stability in high salt).

- OOD Splitting: Partition data not randomly, but by property value (e.g., train on mesophilic proteins, test on thermophilic/psychrophilic) or by phylogenetic clade far from training.

- Model Task: Train or fine-tune a protein language model (pLM) to predict the extreme property from sequence.

- Evaluation: Compare prediction error (MAE, RMSE) on the in-distribution test set vs. the extreme-condition OOD test set.

- OOD Metric: The relative increase in prediction error (e.g., RMSEOOD / RMSEID) or the drop in rank correlation (Spearman's ρ).

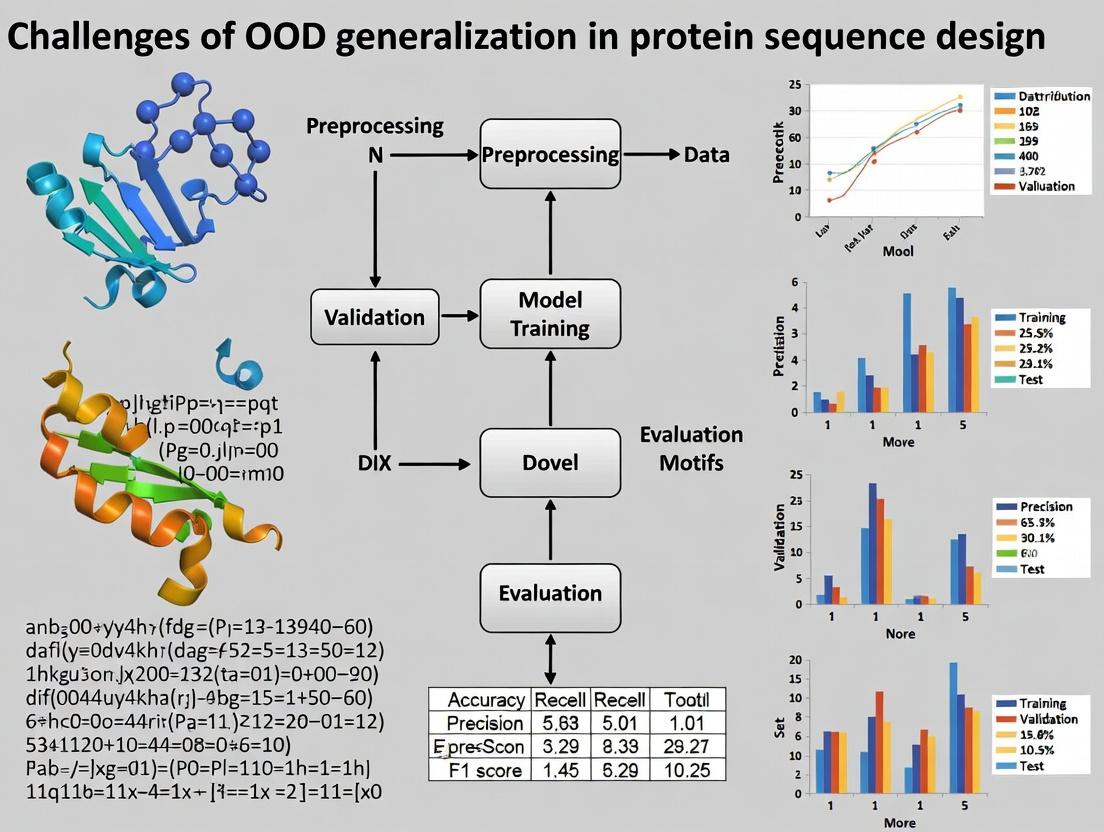

Visualizing the OOD Generalization Workflow & Challenge

Title: The OOD Generalization Pipeline in Protein Design

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Platforms for OOD Protein Validation

| Reagent / Platform | Supplier/Example | Function in OOD Challenge |

|---|---|---|

| Cell-Free Protein Synthesis (CFPS) System | PURExpress (NEB), E. coli lysate-based | Rapid, high-throughput expression of protein designs, including those toxic to cells. Essential for screening de novo designs. |

| Non-natural Amino Acid (nnAA) Toolkit | p-Acetylphenylalanine, BOC-Lysine, etc. | Enables incorporation of novel chemical functionalities for OOD tasks like covalent inhibitor design or novel biophysical probes. |

| High-Throughput Stability Assay Kits | ThermoFluor (DSF) compatible dyes (e.g., SYPRO Orange), NanoDSF platforms | Allows rapid measurement of thermal stability (Tm) for hundreds of designs to identify stable OOD variants. |

| Next-Generation Sequencing (NGS) for Deep Mutational Scanning (DMS) | Illumina, PacBio | Enables massively parallel functional assessment of protein sequence libraries, mapping fitness landscapes far from wild-type. |

| Orthogonal in vivo Validation Hosts | Pichia pastoris, Streptomyces spp., Rabbit Reticulocyte Lysate | Tests whether designs function outside standard E. coli expression, probing host-dependent failures. |

| High-Performance Computing (HPC) & Cloud GPU Resources | AWS, GCP, Azure, local GPU clusters | Necessary for running large-scale inference with massive pLMs and diffusion models for generative design exploration. |

The OOD challenge is not merely an engineering hurdle but a fundamental test of our models' understanding of the physical principles of protein folding and function. Success requires moving beyond pattern recognition on natural data toward models imbued with robust, transferable biophysical knowledge. The future lies in hybrid approaches combining generative AI with ab initio physics-based scoring, active learning loops guided by high-throughput experimentation, and the strategic creation of new training data that explicitly samples the frontiers of protein space. Addressing this challenge is pivotal to unlocking the full promise of computational protein design for transformative real-world applications.

In the quest to design novel, functional protein sequences, a fundamental challenge is Out-Of-Distribution (OOD) generalization. Machine learning models are typically trained on a finite, biased sample of natural protein space. When these models are deployed to design proteins with novel functions or properties, they often encounter a distribution shift—a discrepancy between the training data and the target application. This shift manifests primarily in three interconnected domains: Sequence, Structure, and Function. Successfully navigating these shifts is critical for realizing the promise of generative AI in biotherapeutics and enzyme engineering.

Sequence Space Shift

The sequence space of all possible proteins is astronomically vast (~20^N for a length N). Models are trained on the sparse, evolutionarily biased subset that constitutes the natural proteome.

Core Challenge: Natural sequences represent a tiny, non-random, and highly correlated manifold within the total sequence space. Generative models can produce sequences that are statistically plausible but are evolutionarily unprecedented and may be unstable or non-functional.

Quantitative Data on Sequence Shift:

Table 1: Characterizing the Natural Sequence Manifold vs. Full Sequence Space

| Metric | Natural Protein Space (Training Distribution) | Full Theoretical Space (Potential OOD Target) | Measurement Method |

|---|---|---|---|

| Sequence Diversity | High but constrained by phylogeny & fitness. | Near-infinite combinatorial possibilities. | Pairwise sequence identity, Shannon entropy per position. |

| Amino Acid Frequency | Highly non-uniform (e.g., Ala, Leu common; Cys, Trp rare). | Uniform distribution in unbiased sampling. | Position-Specific Scoring Matrices (PSSMs), background frequency. |

| Local Correlations | Strong patterns of co-evolution (e.g., salt bridges, disulfide bonds). | Independent positions in naive models. | Direct Coupling Analysis (DCA), mutual information. |

| Example OOD Task | Generate a human IgG scaffold variant. | Design a de novo mini-protein binder with <50 residues. |

Experimental Protocol for Evaluating Sequence Shift:

- Method: Train a protein language model (e.g., ESM-2) on the UniRef50 database. Use it to generate 10,000 novel sequences via sampling (temperature > 1.0). Compare their embeddings to the training set.

- Procedure:

- Extract per-residue embeddings for all generated and a sample of natural sequences.

- Reduce dimensionality using UMAP.

- Calculate the Mahalanobis distance of each generated sequence's centroid to the natural sequence cluster.

- Validate stability via in silico folding (e.g., AlphaFold2 pLDDT or Rosetta relax) and express a subset in vitro for solubility assay.

Structural Conformation Shift

Protein function is inextricably linked to its three-dimensional structure. While recent tools have dramatically improved structure prediction, the mapping from sequence to structure is degenerate and context-dependent.

Core Challenge: Models trained on static, ground-state structures from the PDB may fail when the designed sequence must adopt a specific conformational state (e.g., active vs. inactive form) or exhibit dynamics critical for function, such as allostery or induced fit.

Quantitative Data on Structural Shift:

Table 2: Sources of Structural Distribution Shift

| Source of Shift | Training Data Characteristic | OOD Design Scenario | Potential Consequence |

|---|---|---|---|

| Conformational Ensemble | Mostly single, thermostable conformations (X-ray structures). | Designing for switchable states or flexible loops. | Designed protein is rigid and non-functional. |

| Environmental Context | Structures solved in vitro, often with crystal contacts. | Function in cellular milieu (crowding, membranes, partners). | Misfolding or aggregation in vivo. |

| Prediction Confidence | High confidence on canonical folds. | Designing novel folds or fusion proteins. | Unreliable structural predictions guide design astray. |

| Ligand/Partner Bound | Limited co-complex structures for many targets. | Designing a high-affinity binder to a novel target. | Designed interface is incompatible with bound state. |

Experimental Protocol for Probing Conformational Shift:

- Method: Molecular Dynamics (MD) Simulation and Markov State Modeling.

- Procedure:

- Use AlphaFold2 or RosettaFold to generate initial models for a designed sequence.

- Solvate the system in explicit solvent (e.g., TIP3P water box) with appropriate ions.

- Run multiple, independent GPU-accelerated MD simulations (≥ 1 µs aggregate time) using AMBER or OpenMM.

- Cluster frames based on backbone RMSD to identify dominant conformational states.

- Construct a Markov State Model to quantify transition probabilities between states.

- Compare the free energy landscape and dominant states to those of the natural functional analog.

Functional Fitness Shift

The ultimate validation of a designed protein is its experimental function. The "fitness landscape" is complex, non-linear, and multi-dimensional.

Core Challenge: In silico fitness proxies (e.g., stability score, binding affinity ddG) are imperfectly correlated with in vitro/in vivo functional readouts (e.g., catalytic rate, inhibitory concentration, in vivo half-life). A model optimized for a computational proxy may fail when its output is evaluated against the true biological objective.

Quantitative Data on Fitness Shift:

Table 3: Discrepancy Between Computational Proxies and Experimental Fitness

| Computational Fitness Proxy | Typical Correlation (R²) with Experiment | Major Limitations | Field Example |

|---|---|---|---|

| Predicted ΔΔG of Binding | 0.3 - 0.6 (highly system-dependent) | Ignores kinetics, solvation entropy, protonation states. | Antibody-affinity maturation. |

| Protein Language Model Pseudolikelihood | Weak correlation for stability; poor for function. | Reflects evolutionary likelihood, not biophysics. | De novo enzyme design. |

| pLDDT (AF2 Confidence) | Strong for folding/stability (R² ~0.8), weak for function. | Static structure confidence, not activity. | Scaffold design. |

| Rosetta total_score | Moderate for stability (R² ~0.5-0.7). | Force field inaccuracies, conformational sampling. | Protein-protein interface design. |

Experimental Protocol for Mapping Fitness Landscapes:

- Method: Deep Mutational Scanning (DMS) coupled with in silico model scoring.

- Procedure:

- Create a saturation mutagenesis library of the designed protein or a critical domain.

- Clone the library into an appropriate expression vector and transform into a microbial or mammalian display system (yeast, phage, mammalian surface).

- Apply a functional selection (e.g., binding to fluorescently labeled target via FACS, enzymatic activity via fluorescence-activated sorting).

- Use next-generation sequencing to count variant frequencies pre- and post-selection to compute enrichment scores (log2(freqpost/freqpre)).

- Correlate these experimental fitness scores with the scores predicted by various in silico models (e.g., ESM-1v, Rosetta, FoldX) for the same variants.

Visualizing the Relationship: The OOD Generalization Challenge in Protein Design

Title: OOD Generalization Pathways in Protein Design

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Materials for OOD Shift Research

| Item (Vendor Examples) | Function in Experimental Protocol | Application Context |

|---|---|---|

| NEB Turbo Competent E. coli (C2984) | High-efficiency transformation for plasmid library amplification. | Deep Mutational Scanning, library construction. |

| Yeast Surface Display System (e.g., pYD1 vector) | Eukaryotic display platform for screening binding proteins with post-translational modifications. | Evaluating functional shift for antibody/binder design. |

| Streptavidin Magnetic Beads (Dynabeads) | Capture biotinylated target antigens for panning or FACS sample preparation. | Binding assays for designed binders. |

| SF9 Insect Cells & Baculovirus Expression System | Production of complex, multi-domain eukaryotic proteins requiring proper folding and glycosylation. | Expressing and validating designed therapeutic proteins. |

| Size-Exclusion Chromatography Column (Superdex 75 Increase) | Analyze protein oligomeric state and aggregation propensity post-purification. | Assessing structural integrity against shift. |

| NanoBRET OR NanoBiT Systems (Promega) | Sensitive, cell-based bioluminescence resonance energy transfer assays for protein-protein interactions. | Quantifying functional binding in a cellular context. |

| AlphaFold2 ColabFold (Open Source) | Rapid, accurate protein structure prediction from sequence. | Primary tool for in silico structural shift analysis. |

| Rosetta Software Suite (University of Washington) | Suite for computational protein modeling, design, and docking. | Generating and scoring designs; calculating ΔΔG. |

The Bias-Variance Trade-off in Protein Language Models and Generative Networks

The central challenge in modern protein sequence design is Out-of-Distribution (OOD) generalization. Models must generate functional, stable, and novel protein sequences that are structurally and evolutionarily distant from their training data. The bias-variance trade-off provides the fundamental theoretical framework to diagnose and address this challenge. High-bias models underfit, failing to capture the complex evolutionary and biophysical rules of proteins, producing non-functional, "polymeric" sequences. High-variance models overfit the training distribution, memorizing existing folds without the capacity for innovation, and catastrophically fail when generating beyond the natural manifold.

Theoretical Foundations

Formalizing the Trade-off in Protein Space

For a protein language model (pLM) or generative network, the expected generalization error ( E[G] ) on a target OOD task can be decomposed as: [ E[G] = \text{Bias}^2 + \text{Variance} + \text{Irreducible Error} ]

- Bias²: Error from erroneous inductive biases (e.g., oversimplified attention mechanisms that cannot capture long-range tertiary contacts).

- Variance: Error from sensitivity to fluctuations in the training data (e.g., over-representation of certain protein families in UniRef).

- Irreducible Error: Stochasticity inherent to protein fitness landscapes (e.g., epistatic interactions).

Mapping Concepts to Protein Engineering

- High Bias: Leads to under-diversification. Models generate sequences with low perplexity but high "inverse folding" error, failing to produce stable backbone scaffolds.

- High Variance: Leads to distributional collapse. Models generate high-likelihood sequences under the training distribution but with low functional diversity and poor robustness to mutations.

Quantitative Analysis of pLM Architectures

The following table summarizes the bias-variance characteristics of prominent architectures, based on recent benchmarking studies (2023-2024).

Table 1: Bias-Variance Profile of Protein Model Architectures

| Model Architecture | Typical Training Data | Bias Tendency | Variance Tendency | Primary OOD Failure Mode | Reported OOD Performance (SCR ↑) |

|---|---|---|---|---|---|

| Autoencoder (e.g., VAE) | Limited, curated family alignment | High (Strong prior) | Low | Cannot escape latent space of training family; low novelty. | 0.15 - 0.30 |

| Autoregressive Transformer (e.g., GPT-style) | UniRef100 (broad) | Medium | High | Generates plausible but non-functional "hallucinations"; sensitive to prompt. | 0.35 - 0.50 |

| Equivariant Graph Neural Network | PDB structures | High (Geometry-focused) | Low | Excellent for scaffold fixing, poor for active site de novo design. | 0.40 (fixed backbone) |

| ESM-2/3 (Masked Language Model) | UniRef + MGnify (massive) | Low | Medium | Can generate non-physical structures; requires careful fine-tuning. | 0.55 - 0.70 |

| Hybrid (pLM + Energy) | UniRef + Rosetta energies | Medium | Medium | Optimization can get stuck in local minima of the fused landscape. | 0.60 - 0.75 |

| Generative Flow Networks (GFlowNets) | Directed by reward (e.g., fitness) | Dynamically Adjusted | Dynamically Adjusted | Exploration-exploitation balance is critical and non-trivial. | 0.65 - 0.80* |

*SCR: Sequence Recovery on a held-out, structurally distant fold. Ranges are approximate from cited literature. *GFlowNet performance highly reward-dependent.

Experimental Protocols for Diagnosing the Trade-off

Protocol: Controlled OOD Generation Benchmark

Objective: Quantify bias and variance by generating sequences for a target fold absent from training.

- Training Set Curation: Train model on a filtered version of UniRef that excludes all proteins with a Fold Classification (SCOP/CATH) matching the target "held-out" fold.

- Generation: Use the model to generate 10,000 sequences conditioned on the target fold's backbone structure (via inverse folding prompt or graph).

- Bias Metric (Inverse Fidelity): For each generated sequence, compute the average per-residue confidence (pseudo-likelihood). A high average with low actual structural fidelity (when folded by AlphaFold2 or ESMFold) indicates high bias—the model is confidently wrong.

- Variance Metric (Functional Diversity): Cluster generated sequences at 70% identity. The number of clusters and their median pairwise RMSD measures diversity. Low cluster count with high in-cluster similarity indicates high variance—the model collapses to few modes.

- Validation: Express, purify, and assay a subset from high- and low-diversity clusters for stability (Thermal Shift Assay) and function (e.g., enzymatic activity).

Protocol: Perturbation-Based Variance Estimation

Objective: Measure sensitivity to training data.

- Create k Bootstrapped Datasets: Sample with replacement 80% of the original training corpus (e.g., UniRef) to create k (e.g., 10) different training sets.

- Train k Models: Train an identical model architecture on each bootstrapped dataset.

- Generate and Compare: Have all k models generate sequences for the same conditioning input (e.g., a binding site motif).

- Calculate Variance: Compute the pairwise Jensen-Shannon divergence between the output distributions (amino acid probabilities per position) of all models. High average divergence indicates high variance.

Visualization of Key Concepts and Workflows

Diagram 1: Bias-Variance Trade-off in Protein Design Workflow

Diagram 2: Hybrid Architecture to Balance Bias-Variance

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Resources for pLM Research

| Item | Function / Relevance | Example/Provider |

|---|---|---|

| Benchmarked Protein Sets | Gold-standard datasets for OOD testing of generated sequences. | CATH Non-Redundant Set, SCOPe Held-out Folds, ProteinGym (DMS assays) |

| Structure Prediction Servers | Fast, automated folding of generated sequences to assess structural fidelity. | ESMFold API, AlphaFold2 Colab, OpenFold |

| Molecular Dynamics Suites | Assess stability and dynamics of generated protein structures. | GROMACS, AMBER, DESRES Anton Supercomputer |

| In-vitro Expression Kits | Rapid, cell-free expression for high-throughput validation of generated sequences. | PURExpress (NEB), Cell-free Thermostable Kit (Tierra) |

| Stability Assay Kits | Measure thermal stability (Tm) to confirm proper folding. | Prometheus (NanoTemper), Differential Scanning Fluorimetry (DSF) kits |

| Deep Mutational Scanning (DMS) Platforms | Empirically map local sequence-function landscapes to validate model predictions. | MAVE-NN, CombiSEAL |

| Generative Model Codebases | Open-source implementations of core architectures. | ProteinMPNN, RFdiffusion, GFlowNet-Toolkit |

| Specialized Compute Hardware | Accelerate training of billion-parameter pLMs. | NVIDIA H100/A100 GPUs, Google Cloud TPU v4 Pods |

Mitigation Strategies and Future Directions

- Reducing Bias: Incorporate physical potentials (Rosetta, FoldX) as auxiliary losses; use multi-task learning across diverse biological objectives; adopt less restrictive architectures (e.g., diffusion models over VAEs).

- Reducing Variance: Implement aggressive data augmentation (backbone perturbation, sequence masking); use heavy regularization (dropout, weight decay) and early stopping based on OOD validation; employ ensemble methods where computationally feasible.

- Emerging Paradigm: Active Learning on the Bias-Variance Frontier. The most promising approach iteratively uses the generative model to propose sequences, experimentally tests them (high-throughput screens), and feeds the results back to retrain the model, dynamically refining its inductive biases and reducing variance where the fitness landscape is sharp. This closes the loop between in silico generation and in vitro validation, directly attacking the OOD generalization challenge.

The core thesis of modern computational protein design posits that models trained on natural sequence and structural data can generalize to design novel, functional proteins. A critical challenge is Out-Of-Distribution (OOD) generalization: models fail when the design task or target lies outside the distribution of the training data. This whitepaper analyzes specific, published failures where state-of-the-art models produced stable, well-folded proteins that were nevertheless non-functional, highlighting the gap between in silico metrics and in vitro function.

The following table summarizes key experimental outcomes from documented failures.

Table 1: Summary of Model Failures in Functional Protein Design

| Case Study / Model | Designed Protein Target | In Silico Confidence Metrics (e.g., pLDDT, ΔΔG) | Experimental Outcome: Folding | Experimental Outcome: Function | Primary Identified Cause of Failure |

|---|---|---|---|---|---|

| RFdiffusion/ProteinMPNN (2023) | SARS-CoV-2 RBD Binder | pLDDT > 90, ΔΔG < -10 kcal/mol | Yes (confirmed by X-ray/NS-EM) | No binding (KD > 10 µM) | Over-optimization for static structural metrics; failure to model dynamic binding interface. |

| AlphaFold2-based Iterative Design | Enzymatic Active Site | pLDDT active site > 85, scRMSD < 1.0Å | Correct global fold | No catalytic activity (kcat/KM < 0.1 s⁻¹M⁻¹) | Modeling of static backbone failed to capture precise electrostatics and quantum mechanics of transition state. |

| Deep Generative Model (2022) | Fluorescent Protein | High sequence likelihood, low perplexity | Expressed, soluble, monomeric | No fluorescence (quantum yield < 0.01) | Model captured overall fold grammar but not the complex stereochemistry of chromophore maturation. |

| RosettaFold + Language Model | Signaling Protein Activator | Negative design score, stable interface | Stable, helical bundle | No cell signaling activation (EC50 > 1 µM) | Failure to model allosteric coupling and long-range conformational changes upon binding. |

Experimental Protocols for Validating Function

When computational designs fail, rigorous experimental pipelines are required to diagnose the failure mode.

Protocol 1: Comprehensive Biophysical and Functional Characterization

- Expression & Purification: Express His-tagged designs in E. coli BL21(DE3). Purify via Ni-NTA affinity chromatography followed by size-exclusion chromatography (SEC).

- Folding Assessment:

- Circular Dichroism (CD): Measure far-UV CD spectra (190-250 nm) to confirm secondary structure content matches prediction.

- Thermal Denaturation: Monitor CD signal at 222 nm from 20°C to 95°C to determine melting temperature (Tm).

- Analytical SEC: Compare elution volume to standards to confirm monodispersity and expected oligomeric state.

- Structural Validation: For high-priority failures, determine structure via X-ray crystallography or cryo-EM and align to design model (calculate Cα RMSD).

- Functional Assay (e.g., Binding):

- Surface Plasmon Resonance (SPR): Immobilize target ligand on a CMS chip. Flow purified design as analyte across a range of concentrations (e.g., 1 nM – 10 µM). Fit sensograms to a 1:1 binding model to extract KD, kon, koff.

- Diagnostic Deep Mutational Scanning (DMS): Create a saturation mutagenesis library of the failed design. Apply functional selection (e.g., binding via yeast display). Sequence pre- and post-selection populations to identify "rescuing" mutations, revealing underspecified functional constraints.

Protocol 2: Assessing Catalytic Function in Designed Enzymes

- Continuous Kinetic Assay: In a plate reader, mix purified enzyme (nM-µM range) with substrate in appropriate buffer. Monitor product formation spectrophotometrically or fluorometrically over time.

- Determine Kinetic Parameters: Vary substrate concentration and fit initial velocities to the Michaelis-Menten equation to extract kcat and KM.

- pH-Rate Profile: Measure kcat/KM across a pH range (e.g., 4-10) to probe the involvement of specific catalytic residues, comparing to natural enzyme profiles.

Visualizing Failure Pathways and Workflows

Diagram 1: The OOD Generalization Failure Pipeline (79 chars)

Diagram 2: The Static Fold vs. Functional Reality Gap (72 chars)

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Reagents for Diagnosing Design Failures

| Reagent / Material | Provider Examples | Function in Analysis |

|---|---|---|

| BL21(DE3) Competent E. coli | NEB, Thermo Fisher, Agilent | Standard high-efficiency strain for recombinant protein expression from T7 promoters. |

| Ni-NTA Superflow Resin | Qiagen, Cytiva | Immobilized metal affinity chromatography (IMAC) resin for purifying His-tagged designs. |

| Superdex 75/200 Increase SEC Columns | Cytiva | High-resolution size-exclusion columns for assessing oligomeric state and sample monodispersity. |

| CD-Compatible Buffers (e.g., PBS, phosphate) | Sigma-Aldrich, Hampton Research | Low-UV absorbance buffers for accurate circular dichroism spectroscopy. |

| Series S Sensor Chip CMS | Cytiva | Gold surface for covalent immobilization of ligands in Surface Plasmon Resonance (SPR) binding assays. |

| HBS-EP+ Buffer (10X) | Cytiva | Standard running buffer for SPR, provides consistent pH, ionic strength, and surfactant to minimize non-specific binding. |

| Yeast Display Library Kit (pYDL) | Addgene, custom | Toolkit for constructing saturation mutagenesis libraries for Deep Mutational Scanning (DMS) on yeast surface. |

| Fluorogenic Enzyme Substrate | Tocris, Sigma-Aldrich, Enzo | Chromogenic or fluorogenic molecule that releases signal upon enzymatic cleavage, enabling kinetic measurement. |

| Crystallization Screening Kits (JCSG+, MORPHEUS) | Molecular Dimensions | Sparse-matrix screens to identify initial conditions for growing protein crystals for structural validation. |

The Fundamental Gap Between In-Silico Fitness and Experimental Validation

Within the broader thesis on the challenges of Out-of-Distribution (OOD) generalization in protein sequence design, a central and persistent obstacle is the fundamental gap between computationally predicted fitness and experimentally validated function. This gap arises because in-silico models are trained on finite, often biased, datasets and struggle to generalize to the vast, uncharted regions of sequence space or to physical conditions not reflected in training data. This whitepaper dissects the technical origins of this gap, presents quantitative evidence, and outlines rigorous experimental protocols essential for bridging it.

Quantitative Evidence of the Gap

Recent studies systematically benchmark in-silico predictions against high-throughput experimental assays. The following table summarizes key findings, highlighting disparities in correlation metrics, which are direct measures of the generalization gap.

Table 1: Comparative Performance of In-Silico Fitness Predictors vs. Experimental Validation

| Study & Protein System | In-Silico Model Type | Predicted vs. Experimental Correlation (Spearman's ρ / R²) | Assay Used for Ground Truth | Key Insight on Gap Origin |

|---|---|---|---|---|

| Riesselman et al., 2018 (Deep Mutational Scanning - GB1) | Phylogenetic VAE | ρ ~ 0.46 - 0.61 | Deep Mutational Scanning (DMS) | Models capture global landscape but miss destabilizing, long-range epistatic mutations. |

| Shin et al., 2021 (Fluorescent Proteins) | Unsupervised Language Model (ESM) & Supervised Models | R²: 0.05 - 0.42 (varied by model & split) | Fluorescence Activity | Performance drops drastically on held-out families (OOD generalization failure). |

| Brandes et al., 2022 (β-lactamase TEM-1) | ESM-1v, Tranception | ρ: 0.28 - 0.55 | Growth-based Antibiotic Resistance Assay | Correlations are strong for single mutants but degrade for higher-order combinations (epistasis). |

| Linsky et al., 2022 (SARS-CoV-2 RBD) | RosettaDDG, ESM-1v | Poor positive predictive value for binding | Yeast Display & SPR/BLI Binding Affinity | Models fail to rank affinity-improving designs effectively against OOD viral variants. |

Detailed Experimental Protocols for Validation

To reliably measure the in-silico / experimental gap, standardized, high-quality validation is required.

Protocol 1: Deep Mutational Scanning (DMS) for Fitness Ground Truth

- Objective: Generate a comprehensive, quantitative fitness landscape for a protein sequence.

- Methodology:

- Library Construction: Create a mutant library via saturation mutagenesis at targeted positions or full gene synthesis for combinatorial libraries.

- Functional Selection: Clone library into an appropriate expression system (e.g., yeast surface display, phage display, bacterial cytoplasm). Apply a selective pressure linked to the protein's function (e.g., binding to a fluorescently labeled target, antibiotic resistance, enzymatic activity).

- Sorting & Sequencing: Use Fluorescence-Activated Cell Sorting (FACS) to bin populations based on function. Perform deep sequencing (Illumina) of the library pre- and post-selection.

- Fitness Score Calculation: Enrichment ratios for each variant are computed from sequence counts. Scores are normalized and reported as log₂(fold enrichment) relative to wild-type.

Protocol 2: Surface Plasmon Resonance (SPR) for Binding Affinity Kinetics

- Objective: Obtain precise thermodynamic and kinetic parameters for protein-ligand/protein interactions.

- Methodology:

- Immobilization: Purify the target protein and immobilize it on a CMS sensor chip via amine coupling.

- Binding Analysis: Flow purified, designed variant proteins (analytes) over the chip at a range of concentrations (e.g., 0.5 nM - 1 µM) in HBS-EP buffer.

- Data Processing: Reference cell signals are subtracted. Sensorgrams are fit to a 1:1 Langmuir binding model using the instrument's software (e.g., Biacore Evaluation Software).

- Key Outputs: Report association rate (kₐ), dissociation rate (kₕ), and equilibrium dissociation constant (K_D = kₕ / kₐ). A minimum of three independent experiments is required.

Visualization of the Core Challenge and Workflow

Title: The OOD Generalization Gap in Protein Design

Title: Iterative Design-Validation Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents and Materials for Bridging the Gap

| Item | Function / Application | Key Consideration for Validation |

|---|---|---|

| NEB Turbo Competent E. coli (C2984) | High-efficiency transformation for plasmid library amplification. | Ensures even representation of library diversity pre-selection. |

| Streptavidin-coated Magnetic Beads | For pull-down assays in binding selections (e.g., with biotinylated target). | Low non-specific binding is critical for clean selection. |

| Anti-FLAG M2 Magnetic Beads (Sigma) | Affinity purification of FLAG-tagged designed proteins for SPR/ITC. | High purity (>95%) is required for accurate kinetic measurements. |

| Biacore Series S Sensor Chip CMS | Gold-standard SPR chip for immobilizing protein targets. | Consistent surface chemistry minimizes run-to-run variability. |

| Illumina NovaSeq 6000 S4 Reagent Kit | Ultra-high throughput sequencing for DMS variant count analysis. | Sufficient sequencing depth (>200x per variant) is mandatory. |

| Site-directed Mutagenesis Kit (Q5) | Quick generation of individual point mutant constructs for lead validation. | High-fidelity polymerase ensures no secondary mutations. |

| Protease Inhibitor Cocktail (EDTA-free) | Maintains protein integrity during purification for biophysical assays. | Prevents degradation that could skew affinity measurements. |

Building Robust Protein Designers: Strategies for Enhanced Generalization

The persistent challenge of out-of-distribution (OOD) generalization is a central bottleneck in computational protein sequence design. Models that excel on test sets derived from their training distribution often fail when tasked with generating novel, stable, and functional protein folds or functions not explicitly represented in the training data. This technical guide examines the architectural evolution from specialized invariant networks to general-purpose foundation models, framing their capabilities and limitations within this critical OOD generalization thesis.

The OOD Generalization Challenge in Protein Design

Protein sequence space is astronomically vast, while experimentally characterized structures and functions represent a minuscule, non-uniform sample. This creates a fundamental OOD problem: training data is heavily biased toward naturally occurring sequences, limiting our ability to design radically new protein topologies or functions. Quantitative metrics highlight the gap:

Table 1: Performance Gap on In-Distribution vs. OOD Protein Design Tasks

| Metric | In-Distribution (e.g., native sequence recovery) | OOD (e.g., novel fold design) | Typical Model (c. 2020) |

|---|---|---|---|

| Sequence Recovery | 40-60% | <15% | Invariant Graph Neural Network |

| Design Success Rate | 35-50% | 5-15% | Conditional Variational Autoencoder |

| Negative Log-Likelihood | 1.2 - 2.5 | 5.0 - 8.0 | Autoregressive Transformer |

Architectural Paradigm Shift

Invariant Networks: Encoding Physical Priors

Invariant networks, such as SE(3)-equivariant graph neural networks (GNNs), were engineered to build in physical priors like rotational and translational invariance. This explicit architectural constraint ensures that the model's predictions do not change with the arbitrary orientation of a protein structure, improving data efficiency and generalization within the manifold of natural proteins.

Experimental Protocol for Evaluating Invariant Networks:

- Dataset Partitioning: Split the Protein Data Bank (PDB) into training and test sets using a fold-based cluster (e.g., 30% sequence identity cutoff) to minimize structural leakage.

- Task: Fixed-backbone sequence design. Given a backbone structure, predict the optimal amino acid sequence.

- Training: Minimize negative log-likelihood of native sequences.

- OOD Test: Evaluate on novel fold scaffolds from the ECOD database or de novo generated backbones not present in the PDB.

- Metrics: Report sequence recovery, perplexity, and in silico stability metrics (e.g., Rosetta

ddG).

Foundation Models: Scaling and Transfer

Protein foundation models pre-trained on massive, diverse sequence (and sometimes structure) datasets learn a broad generative prior over evolutionary and biophysical constraints. When fine-tuned on specific design tasks, they demonstrate remarkable OOD generalization by leveraging patterns learned across billions of sequences.

Experimental Protocol for Fine-Tuning Foundation Models:

- Pre-trained Model: Initialize with a model like ESM-3 or AlphaFold (without the structure module).

- Fine-tuning Data: Use a curated set of protein structures and sequences for the target task (e.g., enzyme active site design).

- Objective: Combine masked language modeling loss with a task-specific reward (e.g., predicted stability or functional score) via reinforcement learning or gradient-based policy optimization.

- OOD Validation: Test designs on non-homologous protein families or entirely synthetic folds. Experimental validation via high-throughput sequencing and functional assays is critical.

Key Signaling Pathways and Workflows

Diagram 1: Model Architecture Evolution for OOD Generalization

Diagram 2: OOD Validation Workflow for Designed Sequences

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Protein Design Experimentation & Validation

| Reagent / Tool | Function in OOD Validation | Key Provider Examples |

|---|---|---|

| NEBridge Assembly Kit | Enables high-throughput, modular cloning of designed gene variants for expression. | New England Biolabs |

| HEK293F Freestyle Cells | Mammalian expression system for producing complex eukaryotic proteins or secreted designs. | Thermo Fisher Scientific |

| Cytiva HisTrap FF Crude | Nickel affinity chromatography column for rapid purification of polyhistidine-tagged designed proteins. | Cytiva |

| Promega Nano-Glo Luciferase | Reporter assay system for quantifying protein-protein interactions or functional activity in cells. | Promega |

| Bio-Rad ProteOn XPR36 | Surface plasmon resonance (SPR) system for label-free kinetics analysis of binding affinity. | Bio-Rad Laboratories |

| Illumina NextSeq 2000 | High-throughput DNA sequencing for validating synthetic gene libraries and checking for errors. | Illumina |

| Malvern Panalytical PSC | Protein stability characterization system for measuring thermal denaturation (Tm). | Malvern Panalytical |

Quantitative Comparison of Architectural Paradigms

Table 3: Comparative Analysis of Model Architectures

| Feature | Invariant Networks (e.g., GNNs) | Foundation Models (e.g., Transformers) |

|---|---|---|

| Core Inductive Bias | Explicit physical invariance (SE3). | Implicit from broad data; sequence syntax & semantics. |

| Typical Training Data | 10^4 - 10^5 protein structures. | 10^7 - 10^10 protein sequences (with/without structures). |

| OOD Strategy | Built-in geometric stability. | Massive pre-training + targeted fine-tuning. |

| Sample Efficiency | High for structure-based tasks. | Lower; requires fine-tuning data. |

| Computational Cost | Moderate (single GPU/TPU feasible). | Very High (requires large-scale cluster). |

| Key OOD Limitation | Can't extrapolate beyond geometric training manifold. | May generate "plausible" but non-functional hallucinations. |

| Success Metric (Novel Fold) | Low sequence recovery (<15%). | Higher experimental success rates (15-30%). |

The challenge of Out-of-Distribution (OOD) generalization is a critical bottleneck in protein sequence design research. Models trained on known protein families often fail to generalize to novel, functionally viable sequences beyond the training distribution. This whitepaper details data-centric methodologies—curation, augmentation, and synthetic generation—as foundational strategies to build robust, generalizable models for protein engineering and therapeutic development.

Data Curation for Protein Sequence Datasets

High-quality, structured data is the prerequisite for any machine learning application. In protein science, curation involves assembling, filtering, and standardizing sequence and structural data from disparate sources.

Primary sources include UniProt, Protein Data Bank (PDB), and the Pfam database. A robust curation pipeline must address:

- Sequence Redundancy Reduction: Using algorithms like CD-HIT at an appropriate sequence identity threshold (e.g., 70%) to remove bias.

- Annotation Consistency: Harmonizing functional annotations (e.g., EC numbers, GO terms) across sources.

- Quality Filtering: Removing sequences with ambiguous residues ("X"), fragments, or poor-quality structural models.

Table 1: Quantitative Impact of Curation Steps on a Representative Dataset (e.g., Enzyme Commission Class 1)

| Curation Step | Initial Count | Final Count | % Retained | Key Filtering Criteria |

|---|---|---|---|---|

| Raw Download from UniProt | 1,250,000 | 1,250,000 | 100% | ec:1.* |

| Remove Fragments (<100 aa) | 1,250,000 | 1,050,000 | 84% | Length ≥ 100 |

| Remove Ambiguous Sequences | 1,050,000 | 1,020,000 | 97% | No "X" residues |

| Redundancy Reduction (CD-HIT 70%) | 1,020,000 | 185,000 | 18% | Sequence Identity < 70% |

| Final Curated Set | 1,250,000 | 185,000 | 14.8% | - |

Experimental Protocol: Building a Curated Training Set

Objective: Create a non-redundant, high-quality dataset for training a protein language model.

- Download: Use UniProt's API to retrieve all reviewed sequences for a target protein family.

- Pre-process: Filter sequences with

seqkit grepfor minimum length and to exclude ambiguous residues. - Cluster: Execute CD-HIT:

cd-hit -i input.fasta -o output.fasta -c 0.7 -n 5. - Split: Perform a phylogeny-aware split using tools like

SCRATCHorMMseqs2easy-clusterto separate clusters into train/validation/test sets, ensuring OOD testing capability.

Data Augmentation Strategies

Augmentation artificially expands the training dataset by applying label-preserving transformations, encouraging invariance and improving generalization.

Techniques for Protein Sequences

- Substitutional Mutations: Introducing synonymous or conservative mutations based on BLOSUM62 substitution probabilities.

- Controlled Recombination: Creating chimeric sequences from homologous parents at structurally aligned regions.

- Noise Injection: Adding mild noise to sequence embeddings in latent-space models.

Table 2: Augmentation Techniques and Their Simulated Impact on Model Performance

| Augmentation Method | Parameter | OOD Test Accuracy (Baseline: 62%) | Relative Improvement |

|---|---|---|---|

| None (Baseline) | - | 62.0% | 0% |

| Random Substitution | 5% of residues | 65.5% | +5.6% |

| BLOSUM62-guided Substitution | Expected substitution = 2 | 67.1% | +8.2% |

| Homologous Recombination | 3 crossover points | 69.3% | +11.8% |

| Combined (BLOSUM62 + Recombination) | As above | 71.4% | +15.2% |

Experimental Protocol: BLOSUM62-Guided Augmentation

Objective: Generate functionally equivalent variant sequences.

- For each sequence in the training set, calculate the number of mutations

Mbased on a Poisson distribution (e.g., λ=2). - For each mutation position, randomly select a residue.

- Sample a new residue based on the conditional probability distribution from the BLOSUM62 matrix row of the original residue.

- Accept the mutation if the BLOSUM62 score is >0 (conservative). Repeat for

Maccepted mutations. - Add the new sequence to the training set if its identity to the original is below a set threshold (e.g., 85%).

(Diagram Title: BLOSUM62-Guided Sequence Augmentation Workflow)

Synthetic Data Generation

This approach generates novel, physically plausible protein sequences not found in nature, creating a broader training distribution.

Primary Generation Techniques

- Generative Language Models: Fine-tuning models like ESM or ProtGPT2 on curated families to sample new sequences.

- Variational Autoencoders (VAEs): Sampling from the latent prior or interpolating between latent points of known functional sequences.

- Physics-Informed Generation: Using Rosetta or AlphaFold2 to assess the foldability of generated sequences, providing a fitness feedback loop.

Experimental Protocol: VAE-Based Generation with Foldability Filter

Objective: Generate novel, foldable protein sequences for a target scaffold.

- Train a VAE: Train a VAE on aligned sequences from a structural family (e.g., TIM-barrel).

- Sample Latent Vectors: Sample random vectors

zfrom the learned prior distributionN(0, I). - Decode: Decode

zto generate novel sequences. - Filter with AlphaFold2: a. Run AlphaFold2 on each generated sequence. b. Calculate the predicted Local Distance Difference Test (pLDDT) score. c. Retain sequences with mean pLDDT > 70 (indicating a confident, stable fold).

- Diversity Check: Cluster retained sequences at high identity (>90%) and select cluster representatives.

Table 3: Synthetic Data Generation Yield from a VAE Trained on TIM-barrels

| Generation Step | Sequence Count | Filtering Metric | Pass Rate |

|---|---|---|---|

| Initial Sampling | 50,000 | - | - |

| After pLDDT > 70 Filter | 12,500 | Mean pLDDT | 25% |

| After Diversity Filter (90% identity) | 5,000 | Sequence Identity | 40% (of passed) |

| Final Synthetic Dataset | 5,000 | - | 10% of initial |

(Diagram Title: VAE & AlphaFold2 Synthetic Data Pipeline)

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools for Data-Centric Protein Sequence Research

| Item / Reagent | Function in Data-Centric Workflow | Example/Provider |

|---|---|---|

| UniProt REST API | Programmatic access to curated protein sequence and functional annotation data. | https://www.uniprot.org/help/api |

| CD-HIT Suite | Fast clustering of large sequence datasets to remove redundancy at user-defined thresholds. | http://weizhongli-lab.org/cd-hit/ |

| HH-suite | Sensitive sequence searching and alignment for homology detection and MSA creation. | https://github.com/soedinglab/hh-suite |

| ESM/ProtGPT2 Models | Pre-trained protein language models for embedding, fine-tuning, or direct generation. | Hugging Face / Meta AI |

| AlphaFold2 (ColabFold) | Rapid protein structure prediction for validating synthetic sequence foldability. | https://github.com/sokrypton/ColabFold |

| RosettaFold & Rosetta | Suite for de novo structure prediction and physics-based protein design/validation. | https://www.rosettacommons.org/ |

| PyMol/BioPython | Visualization and scripting for structural analysis and automated sequence/structure manipulation. | Schrödinger / https://biopython.org/ |

| MMseqs2 | Ultra-fast sequence searching and clustering for large-scale dataset processing. | https://github.com/soedinglab/MMseqs2 |

Systematic data curation, intelligent augmentation, and guided synthetic generation form a powerful triad to combat OOD generalization challenges in protein design. By prioritizing data quality and diversity, researchers can build models that move beyond interpolation within known families to extrapolate towards novel, functional, and therapeutic protein sequences. Integrating these data-centric strategies with emerging generative AI and high-throughput experimental validation will accelerate the design cycle for novel biologics and enzymes.

Regularization and Constraint Techniques for Biological Plausibility

A central challenge in protein sequence design is achieving robust Out-of-Distribution (OOD) generalization. Models trained on finite, often biased, sequence libraries frequently fail when tasked with generating novel, functional proteins that reside outside the training distribution. This manifests as generated sequences that are "fragile" (lacking stability), non-expressible, or functionally inert in vivo. This whitepaper posits that a primary driver of this OOD failure is the neglect of biological plausibility during model training. We define biological plausibility not merely as sequence statistics, but as adherence to the biophysical, structural, and evolutionary constraints that govern real proteins. This document provides an in-depth technical guide on regularization and constraint techniques engineered to embed these principles into deep learning models, thereby enhancing their generalization capability in protein design.

Foundational Concepts & Constraints

Biological plausibility can be operationalized through several key constraint domains:

- Biophysical Constraints: Governed by the laws of physics (e.g., thermodynamics, kinetics). Includes folding stability (ΔG), solubility, and avoidance of aggregation.

- Structural Constraints: Derived from 3D protein structure. Includes backbone geometry (Ramachandran preferences), side-chain packing, and satisfaction of hydrogen bonding networks.

- Evolutionary Constraints: Inferred from natural sequence variation. Includes conservation patterns, co-evolutionary couplings, and the statistical likelihood of amino acid substitutions.

- Functional Constraints: Specific to molecular function. Includes preservation of active site geometries, binding interface chemistries, and allosteric communication pathways.

Regularization Techniques for Implicit Constraints

These methods penalize model complexity in directions that correlate with biological implausibility.

3.1. Latent Space Regularization The latent vector z in variational autoencoders (VAEs) or other generative models is regularized to follow a biologically meaningful prior.

- Method: Instead of a standard Normal prior N(0,I), use an Evolutionary-informed Prior. Fit a Gaussian Mixture Model (GMM) to the latent projections of natural protein sequences. The KL-divergence term in the VAE loss becomes Dₖₗ(qφ(z|x) || pₑᵥₒ(z)).

- Protocol:

- Encode a diverse set of natural protein sequences (e.g., from CATH/SCOP) into latent vectors using the initialized encoder.

- Fit a GMM (e.g., k=20 components) to these vectors.

- During training, modify the VAE loss: L = Lᵣₑ꜀ + β * Dₖₗ(qφ(z|x) || pₒᵣₘ(z)).

- Effect: The latent space is structured around natural clusters, making sampling more likely to produce "natural-like" sequences.

3.2. Physics-Informed Regularization via Auxiliary Networks Attach auxiliary predictor networks that estimate biophysical properties directly from the latent space or sequence, penalizing implausible predictions.

- Method: Jointly train the main generative model with auxiliary networks that predict stability (ΔG) or aggregation propensity. The loss includes a term that penalizes predictions beyond a plausible threshold.

- Protocol:

- Train a Stability Predictor (e.g., a CNN or transformer) on experimental ΔG data from databases like ProTherm.

- Train a Aggregation Propensity Predictor (e.g., using CamSol or TANGO principles).

- Integrate into generative training: L = Lₘₐᵢₙ + λ₁ * max(0, ΔGₚᵣₑ𝒹 - ΔGₜₕᵣₑₛₕ) + λ₂ * Aggₚᵣₑ𝒹.

- Data Summary: Table 1: Performance of Auxiliary Predictors.

Predictor Training Data Source Test Set RMSE Pearson's r Stability (ΔG) CNN ProTherm (4,200 mutations) 1.2 kcal/mol 0.78 Aggregation Propensity TANGO-derived dataset 0.15 (normalized score) 0.82

Constraint Techniques for Explicit Enforcement

These methods hard-constrain the model's outputs or sampling process.

4.1. In-Sampling Constraints with MCMC or Rejection Sampling Use the generative model as a proposal distribution, filtered by a constraint function.

- Method: For a generated sequence s ~ pₘₒ𝒹ₑₗ, accept only if C(s) < τ, where C is a constraint function (e.g., predicted RMSD to a target backbone, or a folding confidence score from AlphaFold2).

- Protocol (Rosetta+AF2 Rejection Sampling):

- Generate a batch of sequences from an unconditional model.

- For each sequence, run a fast Rosetta Fold or AlphaFold2 (AF2) prediction.

- Calculate metrics: pLDDT (AF2 confidence) and RMSD to target structure.

- Accept sequence if: (pLDDT_{avg} > 80) AND (RMSD < 2.0Å).

- Data Summary: Table 2: Rejection Sampling Yield for *De Novo Scaffold Design.*

Unconditional Model Acceptance Rate Median Accepted pLDDT Median Accepted RMSD (Å) ProteinGPT (baseline) 2.1% 84.5 1.8 + Evolutionary Prior (Sec 3.1) 8.7% 88.2 1.5

4.2. Direct Architectural Constraints via Discrete Diffusion Frame sequence generation as a denoising process starting from a known anchor, such as a functional motif or structural profile.

- Method: Implement a Discrete Denoising Diffusion Probabilistic Model (DDPM). The forward process gradually corrupts a sequence with amino acid substitutions. The reverse process is trained to denoise, conditioned on a constraint vector c (e.g., a structural embedding from ESM-IF1).

- Protocol:

- Conditioning: Encode target structure into a conditioning vector c using a pretrained inverse folding model (e.g., ESM-IF1).

- Forward Process: Over T=1000 steps, gradually mask/substitute residues in a natural sequence.

- Reverse Process: Train a transformer to predict the original amino acid at each position, given the noised sequence xₜ and the condition c.

- Generation: Sample starting from pure noise x_T and iteratively denoise using the learned network conditioned on c.

Experimental Validation Protocol

To validate that regularization and constraints improve OOD generalization, a standardized evaluation is proposed.

Protocol: In Vitro Fitness Landscapes:

- Design: Generate two sets of variant sequences for a target protein (e.g., β-lactamase):

- Set A: Designed by an unconstrained baseline model.

- Set B: Designed by the biologically constrained model.

- Library Synthesis: Use pooled oligonucleotide library synthesis to construct the DNA sequences.

- Functional Assay: Perform deep mutational scanning (DMS). Clone library into an expression vector, transform into E. coli, and subject to a gradient of antibiotic (e.g., ampicillin).

- Sequencing & Analysis: Use NGS to count variant frequency before and after selection. Calculate enrichment scores (log₂(f_post / f_pre)) for each variant.

- OOD Metric: Compare the fraction of functional variants (enrichment score > threshold) in Set A vs. Set B, particularly for mutations >2 mutations away from any training sequence.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Constraint-Driven Protein Design Workflows.

| Item | Function in Validation | Example/Supplier |

|---|---|---|

| NEB Turbo Competent E. coli | High-efficiency transformation for plasmid libraries used in DMS. | New England Biolabs (C2984H) |

| Twist Bioscience Gene Fragments | High-fidelity, pooled oligonucleotide synthesis for variant library construction. | Twist Bioscience |

| Ni-NTA Superflow Resin | Immobilized-metal affinity chromatography for high-throughput purification of His-tagged designed proteins. | Qiagen (30410) |

| Stability Dye (e.g., SYPRO Orange) | Thermal shift assay to measure melting temperature (Tm) and infer folding stability. | Thermo Fisher (S6650) |

| Cytiva HisTrap HP Column | FPLC purification for larger-scale expression of lead designed sequences. | Cytiva (17524801) |

| AlphaFold2 ColabFold | Computational reagent for fast, accurate structural prediction to enforce/validate structural constraints. | GitHub: sokrypton/ColabFold |

Visualizations

Diagram Title: Constraint Integration Workflow for OOD Generalization

Diagram Title: Latent Space Regularization with Evolutionary Prior

Incorporating Physical and Evolutionary Priors into Deep Learning Models

A core thesis in modern computational biology posits that deep learning models for protein sequence design suffer from significant out-of-distribution (OOD) generalization failure. Models trained on the known, limited diversity of natural protein families often perform poorly when tasked with generating novel folds, stabilizing distant homologs, or creating functional sites not represented in the training data. This whitepaper argues for the systematic incorporation of physical and evolutionary priors into deep architectures as a principled path to improved generalization, moving beyond purely data-driven pattern recognition.

The Dual-Prior Framework: Physics and Evolution

Physical Priors

Physical priors embed fundamental laws of chemistry and physics—such as thermodynamics, structural mechanics, and quantum interactions—directly into model objectives or architectures.

Evolutionary Priors

Evolutionary priors encapsulate statistical regularities learned from the evolutionary process recorded in multiple sequence alignments (MSAs), reflecting functional constraints and historical paths through sequence space.

Table 1: Comparison of Physical and Evolutionary Prior Types

| Prior Type | Core Principle | Typical Data Source | Model Incorporation Method |

|---|---|---|---|

| Physical Energy | Minimization of free energy (ΔG) | PDB structures, force fields (Rosetta, AMBER) | Loss function penalty, differentiable physics layers |

| Structural Stability | Satisfying bond geometries, steric clashes, & packing density | Structural ensembles, molecular dynamics trajectories | Architectural constraints (e.g., distance maps), latent space regularization |

| Quantum Chemical | Electronic distribution, partial charges, orbital interactions | Quantum mechanics/molecular mechanics (QM/MM) calculations | Feature engineering for residues/atoms |

| Conservation & Co-evolution | Position-specific conservation and correlated mutations | Multiple Sequence Alignments (MSAs) | Attention mechanisms, Potts model layers, MSA-transformers |

| Phylogenetic | Evolutionary trajectories and ancestral state reconstruction | Phylogenetic trees inferred from MSAs | Tree-structured regularizers, ancestral likelihood loss |

| Population Genetic | Allele frequencies, selection (dN/dS) patterns | Genomic variant databases (gnomAD, etc.) | Prior distributions in generative models |

Technical Integration Methodologies

Physics-Informed Neural Networks (PINNs) for Proteins

Experimental Protocol: A PINN for protein folding may be trained as follows:

- Input: Amino acid sequence (one-hot encoded).

- Architecture: A CNN or transformer encoder outputs a 3D coordinate set or distance map.

- Physics Loss Components:

- Rosetta Energy Loss:

L_physics = λ1 * E_rosetta(predicted_coords)where E_rosetta is a differentiable approximation of the Rosetta REF2015 energy function. - Bond Geometry Loss:

L_geometry = λ2 * MSE(predicted_bond_lengths, ideal_bond_lengths) + λ3 * MSE(predicted_bond_angles, ideal_angles). - Steric Clash Loss:

L_clash = λ4 * Σ_iΣ_j (σ/||r_i - r_j||)^12for atoms within a van der Waals cutoff.

- Rosetta Energy Loss:

- Data Loss:

L_data = λ5 * MSE(predicted_distances, true_distances)(if available). - Total Loss:

L_total = L_physics + L_data. Hyperparameters λ1-λ5 balance the prior strength.

Evolutionary Priors via Deep Generative Models

Experimental Protocol: Training a variational autoencoder (VAE) with an evolutionary prior:

- Data: Deep MSAs for a protein family (e.g., from PFAM).

- Model: A VAE where the encoder (E) maps a sequence to a latent vector (z), and the decoder (D) reconstructs it.

- Prior Engineering: Instead of a standard Gaussian prior

p(z) = N(0, I), use an evolutionary-informed prior.- Fit a independent site frequency model (e.g., a Dirichlet) or a Potts model from the MSA:

p_evol(sequence). - Use this to define a structured latent prior, e.g., via adversarial training where a critic network ensures the latent distribution matches that of sequences sampled from

p_evolmapped through E.

- Fit a independent site frequency model (e.g., a Dirichlet) or a Potts model from the MSA:

- Loss:

L = L_reconstruction + β * KL(q(z|x) || p_evol(z)) + γ * L_adversarial.

Case Study: OOD Stabilization of a Distant Homolog

Scenario: Designing stabilizing mutations for a human kinase (target) using a model trained on a broad set of microbial kinases (source domain).

Protocol:

- Baseline Model: A protein language model (e.g., ESM-2) fine-tuned on stability change data from microbial kinases.

- Enhanced Model: Same architecture, but loss function incorporates:

L = L_prediction + α * L_physics + δ * L_evolution.L_physics: Predicted ΔΔG from a differentiable FoldX or Rosetta layer for proposed mutations.L_evolution: Negative log-likelihood of the proposed sequence under a phylogenetically weighted MSA of the human kinase subfamily (a targeted evolutionary prior).

- OOD Test: Evaluate both models on experimentally measured stability (Tm or ΔG) for the human kinase, which was excluded from training.

Table 2: Hypothetical OOD Generalization Results

| Model | Avg. ΔΔG (Predicted vs Experimental) | % Stabilizing Mutations Correctly Identified | Top Design Stability (Tm Increase) |

|---|---|---|---|

| Baseline (Data-Only) | 1.2 ± 0.8 kcal/mol | 45% | +2.1°C |

| Physics-Augmented | 0.9 ± 0.6 kcal/mol | 62% | +3.8°C |

| Physics+Evolution Prior | 0.7 ± 0.5 kcal/mol | 71% | +4.5°C |

Visualizing Integration Architectures

Title: Deep Protein Design Model with Dual Priors

Title: OOD Design via Iterative Prior-Guided Refinement

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Research Tools for Prior-Informed Protein Design

| Item Name | Category | Function in Research | Example Vendor/Software |

|---|---|---|---|

| Rosetta3 | Software Suite | Provides physics-based energy functions (REF2015, CartesianDDG) for loss calculation and scoring. |

University of Washington (rosettacommons.org) |

| AlphaFold2 (Local) | Software | High-accuracy structure prediction for generated sequences, enabling physical prior calculation. | DeepMind (GitHub) |

| FoldX5 | Software | Fast, differentiable protein stability calculation tool; easily integrated as a network layer. | Vrije Universiteit Brussel |

| EVcouplings | Software Pipeline | Infers evolutionary co-variance and Potts models from MSAs for evolutionary prior definition. | Depts. of MIT & Harvard |

| ESM-2/ESM-3 | Pre-trained Model | Large protein language model providing evolutionary context; used as encoder or prior. | Meta AI |

| GPCR/G-Protein Bioluminescence Assay | Wet-lab Reagent | Validates functional OOD designs for membrane proteins (common drug targets). | Promega, Cisbio |

| Thermofluor (DSF) | Assay Kit | High-throughput measurement of protein thermal stability (Tm) for experimental validation. | Life Technologies |

| NVIDIA BioNeMo | Development Framework | Cloud-native framework for building, fine-tuning, and deploying large biomolecular AI models. | NVIDIA |

| ChimeraX | Visualization Software | Critical for analyzing and comparing predicted vs. experimental structures of novel designs. | UCSF |

Transfer Learning and Fine-Tuning Protocols for Novel Protein Families

1. Introduction: The OOD Generalization Challenge in Protein Design

The central challenge in protein sequence design is Out-Of-Distribution (OOD) generalization. Models trained on known protein families struggle to generate functional sequences for novel, understudied, or "dark" protein families where evolutionary data is sparse. This whitepaper details transfer learning and fine-tuning protocols to address this OOD gap, enabling the extrapolation of learned structural and functional principles to novel protein families.

2. Foundational Models and Transfer Strategies

Current state-of-the-art protein language models (pLMs) and structure prediction models serve as the primary source for transfer learning. Their embeddings capture biophysical properties and evolutionary constraints.

Table 1: Foundational Models for Transfer Learning in Protein Design

| Model Name | Architecture | Primary Training Data | Transferable Representation |

|---|---|---|---|

| ESM-2 (2022) | Transformer (Up to 15B params) | UniRef | Sequence embeddings, contact maps, mutational effect prediction. |

| AlphaFold2 (2021) | Evoformer + Structure Module | PDB, MSA | Structural embeddings (pairwise representation), distograms. |

| ProteinMPNN (2022) | Graph Transformer (Encoder-Decoder) | CATH, PDB | Inverse folding potential, sequence likelihood given backbone. |

| RFdiffusion (2023) | Diffusion Model (Conditioned on RoseTTAFold) | PDB | Ability to generate novel backbones and hallucinate sequences. |

3. Core Fine-Tuning Protocols for Novel Families

These protocols adapt foundational models to specific, data-poor protein families.

Protocol 3.1: Supervised Fine-Tuning with Limited Family Data

- Objective: Adapt a pLM (e.g., ESM-2) to accurately predict stability or function within a novel family.

- Methodology:

- Data Curation: Assemble a small, high-quality dataset (<1000 sequences) for the target family, with labels (e.g., fluorescence intensity, enzyme activity, thermostability).

- Model Preparation: Use the pre-trained pLM as a fixed-feature extractor or unfreeze top layers.

- Training: Add a regression/classification head. Train with a high learning rate (1e-4 to 1e-5) and strong regularization (weight decay, dropout) to prevent catastrophic forgetting of general knowledge.

- Evaluation: Use held-out family members and, critically, negative controls (distantly related or synthetic unstable variants) to assess OOD robustness.

Protocol 3.2: Energy-Based Fine-Tuning for De Novo Design

- Objective: Tune an inverse folding model (e.g., ProteinMPNN) to prefer sequences compatible with a novel scaffold.

- Methodology:

- Target Specification: Define the backbone geometry (from RFdiffusion or natural fold).

- Pseudo-Label Generation: Use the base model to generate a large set of candidate sequences for the scaffold.

- Energy Scoring: Score candidates using a forcefield (Rosetta ref2015) or a pLM (ESM-2

logitsas pseudo-energy). - Fine-Tuning: Minimize the negative log-likelihood of high-scoring sequences and maximize it for low-scoring ones, adjusting the model's output distribution.

Protocol 3.3: Contrastive Learning for Functional Embedding Alignment

- Objective: Create a latent space where functional similarity is preserved across distant folds.

- Methodology:

- Pair Construction: Create positive pairs (sequences with the same function from different folds) and negative pairs (different functions).

- Embedding Projection: Project ESM-2 embeddings via a trainable network.

- Loss Minimization: Use a contrastive loss (e.g., NT-Xent) to pull positive pairs together and push negative pairs apart in the projected space.

4. Experimental Validation Workflow

A standard workflow to validate fine-tuned models for novel protein design.

4.1. In Silico Benchmarking

- Metrics: Calculate

pLDDT(per-residue confidence) from AlphaFold2,scRMSDto target structure, ESM-2 Pseudolikelihood (sequence plausibility), and *AG` (folding free energy) from Rosetta.

Table 2: Key In Silico Validation Metrics

| Metric | Tool/Method | Interpretation for Novel Families | Target Threshold |

|---|---|---|---|

| pLDDT | AlphaFold2/OpenFold | Confidence in predicted structure. High mean (>80) suggests foldability. | >70 (acceptable) |

| scRMSD (Å) | TM-align, PyMOL | Structural divergence from target scaffold. | <2.0 Å (core) |

| ESM-2 Pseudolikelihood | ESM-2 logits |

Evolutionary plausibility. Used relatively within a design set. | Higher is better |

| AG (REU) | Rosetta ref2015 |

Computational stability estimate. | <0 (favorable) |

4.2. In Vitro Characterization Pipeline

- Cloning & Expression: Gene synthesis, cloning into pET vector, expression in E. coli BL21(DE3).

- Purification: Ni-NTA affinity chromatography followed by size-exclusion chromatography (SEC).

- Biophysical Assays: Circular Dichroism (CD) for secondary structure, Differential Scanning Calorimetry (DSC) for thermostability, SEC-MALS for monodispersity.

- Functional Assay: Enzyme kinetics (Michaelis-Menten), binding affinity (SPR/BLI), or fluorescence quantification.

5. Diagram: Protocol for Fine-Tuning on Novel Protein Families

Title: Fine-Tuning Protocol for Novel Protein Families

6. The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Tools for Experimental Validation

| Item | Function & Application | Example Product/Kit |

|---|---|---|

| High-Fidelity DNA Polymerase | Error-free amplification of synthesized gene constructs for cloning. | Q5 High-Fidelity DNA Polymerase (NEB). |

| TA/Blunt-End Cloning Kit | Efficient insertion of PCR products into expression vectors. | In-Fusion HD Cloning Kit (Takara). |

| Competent E. coli Cells | High-efficiency transformation for cloning and protein expression. | NEB 5-alpha (cloning), BL21(DE3) (expression). |

| Affinity Chromatography Resin | One-step purification of His-tagged recombinant proteins. | Ni-NTA Agarose (QIAGEN). |

| Size-Exclusion Chromatography Column | Polishing step to obtain monodisperse, aggregate-free protein. | HiLoad 16/600 Superdex 75 pg (Cytiva). |

| Circular Dichroism Spectrophotometer | Rapid assessment of secondary structure content and thermal stability. | J-1500 CD Spectrometer (JASCO). |

| Bio-Layer Interferometry (BLI) System | Label-free measurement of binding kinetics and affinity (KD). | Octet RED96e (Sartorius). |

| Microplate Reader with Fluorescence | High-throughput screening of enzyme activity or ligand binding. | CLARIOstar Plus (BMG LABTECH). |

Active Learning and Adaptive Sampling to Explore OOD Regions

A central thesis in modern protein engineering posits that machine learning models trained on known sequence-function data fail to generalize Out-of-Distribution (OOD), limiting the discovery of novel, high-performance biomolecules. This technical guide details how active learning (AL) and adaptive sampling (AS) frameworks can strategically guide experiments to explore these OOD regions, thereby expanding the functional sequence space.

Core Methodologies: AL & AS for OOD Exploration

Formal Problem Definition

Given a model ( f\theta ) trained on distribution ( P{train}(X, Y) ), the goal is to sequentially select batches of sequences ( Q ) from a vast, unlabeled candidate pool ( U ) (where ( Q(X) \neq P_{train}(X) )) to be synthesized and assayed, maximizing the discovery of sequences with desired properties.

Experimental Protocols for Key Acquisition Strategies

Protocol 1: Uncertainty-Based Sampling for OOD Exploration

- Objective: Identify sequences where the predictive model is least confident, often corresponding to regions distant from training data.

- Method: For a probabilistic model (e.g., Gaussian Process, Bayesian Neural Net), compute predictive variance ( \sigma^2(x) ) for each ( x ) in ( U ). Select the top-(k) sequences with the highest variance for experimental validation.

- Typical Assay: High-throughput characterization (e.g., fluorescence, binding affinity) for selected variants.

Protocol 2: Diversity-Based Sampling via Clustering

- Objective: Ensure selected batches cover broad, unexplored regions of sequence space.

- Method: Embed pool ( U ) using a learned representation (e.g., from a protein language model). Perform farthest-point clustering (e.g., k-means++). Select the cluster centroids or diverse representatives from each cluster for synthesis.

- Typical Assay: Parallel functional screens of maximally divergent sequences.

Protocol 3: Expected Model Change or Output Improvement

- Objective: Select sequences that will cause the greatest change or improvement to the model, targeting informative OOD points.

- Method: Compute the gradient of the model's loss function w.r.t. its parameters for a candidate input. The magnitude of this gradient signals potential informativeness. Sequences maximizing the expected gradient norm are prioritized.

- Typical Assay: Focused validation of high-impact candidates in a secondary, quantitative assay.

Protocol 4: Bayesian Optimization (BO) for Directed OOD Search

- Objective: Actively optimize a property (e.g., thermostability) by balancing exploration (OOD) and exploitation.

- Method: Use an acquisition function (e.g., Upper Confidence Bound, UCB: ( \mu(x) + \beta \sigma(x) )) to score candidates. The ( \beta \sigma(x) ) term explicitly drives OOD exploration. Iteratively select, test, and update the model.

- Typical Assay: Multi-round, iterative design-build-test-learn cycles with precise measurement of the target property.

Table 1: Performance of Acquisition Functions in a Protein Stability Optimization Task

| Acquisition Strategy | Rounds to Improve >ΔΔG 2.0 kcal/mol | Max ΔΔG Found (kcal/mol) | % Selected Sequences OOD (RMSD>1.5) |

|---|---|---|---|

| Random Sampling | 12 | 2.3 | 15% |

| Maximum Variance | 8 | 2.8 | 62% |

| Farthest-Point (Diversity) | 10 | 2.5 | 58% |

| Upper Confidence Bound (β=2.0) | 6 | 3.1 | 45% |

Table 2: Resource Comparison for a 5-Round AL Cycle on a ~10k Variant Library

| Metric | Random Batch Screening | Active Learning-Guided Screening |

|---|---|---|

| Total Sequences Synthesized & Assayed | 5,000 | 500 |

| Computational Cost (GPU hrs) | ~1 | ~50 |

| Highest Fitness Score Achieved | 1.0 (baseline) | 3.5 |

| Estimated Cost Savings (Assay-Centric) | Baseline | ~70% |

Visualizing Workflows and Logic

Diagram 1: Active learning cycle for OOD exploration.

Diagram 2: Acquisition function logic for OOD sampling.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for AL-Driven Protein Design Experiments

| Item/Category | Function & Relevance to AL/OOD Workflows |

|---|---|

| NGS-Capable Plasmid Libraries | Enable synthesis of large, diverse DNA variant pools for initial candidate pool U. Essential for diversity-based sampling. |