Beyond Pearson: Why Spearman Correlation is Powering the Next Generation of Protein Function Prediction

Abstract

This article explores the critical role of Spearman's rank correlation coefficient in advancing the field of protein function prediction. As computational models grow increasingly complex—integrating sequences, structures, and multi-modal data—the non-parametric, rank-based nature of Spearman correlation has become a gold standard for robust performance evaluation. We cover its foundational principles, methodological applications in state-of-the-art models like S3F and PRESCOTT, strategies for optimization and troubleshooting, and its use in rigorous model validation and benchmarking. Aimed at researchers and drug development professionals, this review synthesizes how Spearman correlation provides crucial insights into a model's ability to capture the true functional landscape of proteins, ultimately guiding more reliable predictions for therapeutic design and functional annotation.

What is Spearman Correlation and Why Does it Matter for Protein Science?

In the quantitative analysis of scientific data, correlation statistics are indispensable for measuring the strength and direction of associations between two variables. While the Pearson correlation coefficient is the most widely known measure, it is based on parametric assumptions that are not always met by real-world research data, particularly in emerging fields like bioinformatics and protein science. Spearman's rank correlation coefficient (denoted ρ or $r_s$) serves as a nonparametric alternative that measures the strength and direction of monotonic relationships between two ranked variables, whether the relationship is linear or not [1] [2].

This nonparametric measure is named after Charles Spearman, who developed the coefficient in 1904 [2]. The fundamental principle behind Spearman's correlation is that it assesses how well an arbitrary monotonic function can describe the relationship between two variables, without making any assumptions about the frequency distribution of the variables [3]. This characteristic makes it particularly valuable for data that may not satisfy the normality assumption underlying significance tests for Pearson's correlation, as well as for ordinal data or numerical data with outliers.

The application of Spearman's correlation has become increasingly prominent in protein function prediction research, where researchers often need to evaluate the association between computational predictions and experimental measurements. For instance, in benchmarking protein mutation effect predictors or evaluating protein complex structure modeling accuracy, Spearman's correlation provides a robust statistical framework for validation [4] [5] [6]. Its rank-based nature makes it less sensitive to outliers and skewed distributions, which are common in high-throughput biological data.

Theoretical Foundation of Spearman's Rank Correlation

Key Concepts and Mathematical Formulation

Spearman's rank correlation operates on a simple yet powerful premise: it converts raw numerical values into ranks and then measures the correlation between these ranks. Ranking bounds the influence of extreme values (the largest observation receives the top rank however far it lies from the rest) and replaces each variable's distribution with evenly spaced rank values, which makes the method resistant to outliers that could disproportionately influence parametric measures [7]. The mathematical formulation of Spearman's correlation depends on whether there are tied ranks in the data.

For data without tied ranks, the Spearman coefficient is calculated using the following formula:

$$r_s = 1 - \frac{6 \sum_{i=1}^{n} d_i^2}{n(n^2 - 1)}$$

Where:

- $d_i$ = the difference between the two ranks for each observation

- $n$ = the number of observations [1] [2]
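To make the no-ties formula concrete, here is a minimal sketch that computes $r_s$ directly from $\sum d_i^2$ in plain Python. The five paired values are hypothetical predictor scores and measurements, not data from any cited study, and the function deliberately rejects input containing ties, since the shortcut formula is only exact without them.

```python
def spearman_no_ties(x, y):
    """Spearman's r_s from 1 - 6*sum(d_i^2) / (n(n^2 - 1)); exact only when no ties exist."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two paired sequences of equal length >= 2")
    if len(set(x)) != n or len(set(y)) != n:
        raise ValueError("ties present: use Pearson's formula on average ranks instead")
    # Rank 1 is assigned to the smallest value in each variable.
    rank_x = {value: rank for rank, value in enumerate(sorted(x), start=1)}
    rank_y = {value: rank for rank, value in enumerate(sorted(y), start=1)}
    # d_i is the difference between the two ranks of observation i.
    sum_d_sq = sum((rank_x[a] - rank_y[b]) ** 2 for a, b in zip(x, y))
    return 1 - 6 * sum_d_sq / (n * (n ** 2 - 1))


# Hypothetical predicted scores and measured activities for five variants.
predicted = [0.9, 0.1, 0.5, 0.7, 0.3]
measured = [2.1, 0.4, 1.0, 0.8, 0.6]
print(spearman_no_ties(predicted, measured))  # 0.9 (sum of d_i^2 = 2, n = 5)
```

Only the third and fourth variants swap order between the two rankings, so $d_i = \pm 1$ for those two observations and 0 elsewhere.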

When tied ranks exist (i.e., when two or more values in either variable are identical), tied values are assigned the average of the ranks they would otherwise occupy, and the shortcut formula above is no longer exact. Spearman's correlation is then computed as Pearson's correlation applied to the rank values:

$$r_s = \frac{\operatorname{cov}(R_X, R_Y)}{\sigma_{R_X} \sigma_{R_Y}}$$

Where:

- $\operatorname{cov}(R_X, R_Y)$ = the covariance of the rank variables $R_X$ and $R_Y$

- $\sigma_{R_X}$ and $\sigma_{R_Y}$ = the standard deviations of the rank variables [2]
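The two ingredients for tied data (average ranks, then Pearson's formula on those ranks) can be sketched as follows. The input values are hypothetical; the sketch also evaluates the no-ties shortcut on the same average ranks to show that it drifts from the exact value once ties appear.

```python
import math


def average_ranks(values):
    """1-based ranks in which tied values share the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next sorted value equals the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1  # mean of 1-based positions start+1 .. end+1
        for k in range(start, end + 1):
            ranks[order[k]] = shared
        start = end + 1
    return ranks


def pearson(a, b):
    """cov(a, b) / (sigma_a * sigma_b); the 1/n factors cancel, so plain sums are used."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((u - mean_a) * (v - mean_b) for u, v in zip(a, b))
    var_a = sum((u - mean_a) ** 2 for u in a)
    var_b = sum((v - mean_b) ** 2 for v in b)
    return cov / math.sqrt(var_a * var_b)


def spearman(x, y):
    """Spearman's r_s as Pearson's correlation of the tie-averaged rank variables."""
    return pearson(average_ranks(x), average_ranks(y))


x = [1, 2, 2, 3]  # hypothetical values with one tie
y = [1, 3, 2, 4]
rx, ry = average_ranks(x), average_ranks(y)
shortcut = 1 - 6 * sum((a - b) ** 2 for a, b in zip(rx, ry)) / (len(x) * (len(x) ** 2 - 1))
print(rx)                        # [1.0, 2.5, 2.5, 4.0]
print(round(spearman(x, y), 4))  # 0.9487
print(round(shortcut, 4))        # 0.95
```

The gap here is small, but it grows with the number and size of tied groups, which is why tie-aware implementations use the rank-based Pearson form.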

The resulting coefficient ranges from -1 to +1, where +1 indicates a perfect monotonic increasing relationship, -1 indicates a perfect monotonic decreasing relationship, and 0 suggests no monotonic relationship [8].

Assumptions and Appropriate Use Cases

Unlike parametric correlation measures, Spearman's rank correlation has minimal assumptions, which contributes to its versatility across diverse research contexts:

- Ordinal, Interval, or Ratio Data: The test requires that both variables are at least ordinal, meaning the values can be ranked in a meaningful order. It can also be applied to interval and ratio data [1] [7].

- Monotonic Relationship: The variables should have a monotonic relationship, meaning that as one variable increases, the other tends to either consistently increase or decrease, though not necessarily at a constant rate [1] [8].

- Paired Observations: Each observation must consist of two paired values, with both measurements coming from the same experimental unit or subject [9].

A key advantage of Spearman's correlation is its appropriateness for analyzing monotonic but nonlinear relationships. For example, in protein fitness prediction, the relationship between computational scores and experimental measurements may follow a logarithmic or asymptotic pattern rather than a straight line, making Spearman's correlation particularly suitable for evaluation [6].
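A small synthetic illustration of this point: for a strictly increasing but exponential relationship, Spearman's coefficient is exactly 1 while Pearson's is well below it. The data are synthetic and chosen only to exaggerate the curvature; they are not drawn from any fitness dataset.

```python
import math


def pearson(a, b):
    """Pearson's r; the 1/n factors cancel, so plain sums are used."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((u - ma) * (v - mb) for u, v in zip(a, b))
    return cov / math.sqrt(sum((u - ma) ** 2 for u in a) * sum((v - mb) ** 2 for v in b))


def ranks_distinct(values):
    """1-based ranks; assumes no ties (true for the synthetic data below)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0] * len(values)
    for rank, i in enumerate(order, start=1):
        ranks[i] = rank
    return ranks


score = list(range(1, 11))              # synthetic predictor scores 1..10
fitness = [math.exp(s) for s in score]  # strictly increasing, strongly curved response

print(pearson(score, fitness))                                  # roughly 0.72
print(pearson(ranks_distinct(score), ranks_distinct(fitness)))  # 1.0
```

Because every increase in `score` is matched by an increase in `fitness`, the two rankings are identical, and only the linear measure is penalized by the curvature.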

Table 1: Comparison of Correlation Coefficient Types

| Feature | Spearman | Pearson | Kendall |
|---|---|---|---|
| Data Type | Ordinal, interval, ratio | Interval, ratio | Ordinal, interval, ratio |
| Relationship Type | Monotonic | Linear | Monotonic |
| Assumptions | Monotonic relationship, paired observations | Linear relationship, normality, homoscedasticity | Monotonic relationship, paired observations |
| Sensitivity to Outliers | Low | High | Low |
| Interpretation | Strength/direction of monotonic relationship | Strength/direction of linear relationship | Difference between the probabilities of concordant and discordant pairs |

Comparative Analysis of Correlation Coefficients

Spearman vs. Pearson Correlation

The choice between Spearman and Pearson correlation depends largely on the nature of the data and the research question. While both coefficients range from -1 to +1 with similar interpretations for direction and strength, they differ fundamentally in their underlying assumptions and applications.

Pearson correlation measures the degree of linear relationship between two continuous variables and assumes that both variables are normally distributed, the relationship is linear, and the data is homoscedastic (constant variance) [3]. Violations of these assumptions can lead to misleading correlation values. In contrast, Spearman correlation assesses monotonic relationships without distributional assumptions, making it more robust for non-normal data or in the presence of outliers [7].

The practical distinction becomes evident when analyzing relationships that are consistent in direction but not necessarily linear. For example, in protein fitness prediction, the relationship between scores from ESM (Evolutionary Scale Modeling) protein language models and experimental fitness measurements may follow a monotonic but curvilinear pattern. In such cases, Spearman correlation captures the association more faithfully than Pearson correlation [6].

Spearman vs. Kendall's Tau

Kendall's tau is another nonparametric rank-based correlation measure that, like Spearman, assesses monotonic relationships. While both are valid for ordinal data and robust to outliers, they differ in computational approach and interpretation. Spearman's coefficient is based on differences between ranks, while Kendall's tau is based on the difference between the proportions of concordant pairs (both variables order the two observations the same way) and discordant pairs (the variables disagree) [3].
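The pair-counting idea behind Kendall's tau can be sketched in a few lines. This is the simple tau-a variant, which divides by all $n(n-1)/2$ pairs; it coincides with the tau-b variant that packages such as SciPy report by default only when there are no ties. The paired values are hypothetical.

```python
from itertools import combinations


def kendall_tau_a(x, y):
    """Kendall's tau-a = (concordant - discordant) / (n(n-1)/2); tied pairs count as neither."""
    n = len(x)
    concordant = discordant = 0
    for i, j in combinations(range(n), 2):
        sign = (x[i] - x[j]) * (y[i] - y[j])
        if sign > 0:    # both variables order the pair the same way
            concordant += 1
        elif sign < 0:  # the two variables disagree on the pair's order
            discordant += 1
    return (concordant - discordant) / (n * (n - 1) / 2)


predicted = [0.9, 0.1, 0.5, 0.7, 0.3]  # hypothetical scores
measured = [2.1, 0.4, 1.0, 0.8, 0.6]
print(kendall_tau_a(predicted, measured))  # 0.8: 9 concordant, 1 discordant of 10 pairs
```

On these data Spearman's coefficient is 0.9, illustrating that $|\tau|$ typically comes out smaller than $|r_s|$ for the same data, so the two coefficients should not be compared on a common scale.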

In research applications, Spearman correlation is generally more sensitive to detecting monotonic relationships, especially with larger sample sizes, while Kendall's tau is often preferred for smaller samples or when there are many tied ranks. In protein research, Spearman remains more widely reported, particularly in benchmark studies comparing computational predictions with experimental measurements [5] [6].

Table 2: Guidelines for Selecting Correlation Coefficients in Protein Research

| Research Scenario | Recommended Coefficient | Rationale |
|---|---|---|
| Comparing model predictions with experimental measurements | Spearman | Robust to outliers; captures non-linear monotonic relationships |
| Assessing linear relationships between structural features | Pearson | Appropriate when linearity and normality assumptions are met |
| Small sample size with many tied ranks | Kendall | Often preferred for limited data with many tied values |
| Evaluating docking scores against binding affinity | Spearman | Binding relationships often follow monotonic but non-linear patterns |

Application in Protein Function Prediction Research

Benchmarking Protein Mutation Effect Predictors

In protein engineering and variant interpretation, accurately predicting the functional consequences of mutations remains a fundamental challenge. The VenusMutHub benchmark study exemplifies how Spearman correlation is employed to evaluate 23 computational models across 905 small-scale experimental datasets spanning 527 proteins with diverse functional properties including stability, activity, binding affinity, and selectivity [5].

In this rigorous assessment, Spearman correlation serves as the primary metric for comparing model predictions with direct biochemical measurements rather than surrogate readouts. This approach provides a more accurate assessment of model performance for specific molecular functions. The rank-based nature of Spearman correlation is particularly valuable in this context because different predictors may output scores on different scales, and the relationship between prediction scores and experimental measurements may not be linear across the entire range of effects.

The benchmark results reveal substantial variation in model performance across different protein properties, with certain models excelling at predicting stability effects while others perform better for binding affinity predictions. This nuanced evaluation, facilitated by Spearman correlation, provides practical guidance for selecting appropriate prediction methods in protein engineering applications where accurate prediction of specific functional properties is crucial [5].

Evaluating Protein Complex Structure Modeling

Recent advances in protein complex structure prediction have generated powerful new tools such as AlphaFold-Multimer, AlphaFold3, and DeepSCFold. In the comprehensive evaluation of these methods, Spearman correlation has emerged as a key statistical measure for assessing model accuracy.

DeepSCFold, a recently developed pipeline for improving protein complex structure modeling, uses sequence-based deep learning to predict protein-protein structural similarity and interaction probability. When benchmarked on protein complex targets from CASP15, DeepSCFold demonstrated significant improvements in accuracy, raising TM-score by 11.6% and 10.3% relative to AlphaFold-Multimer and AlphaFold3, respectively [4].

The critical role of Spearman correlation in this evaluation extends beyond global structure assessment to local interface accuracy. For antibody-antigen complexes from the SAbDab database, DeepSCFold enhanced the prediction success rate for antibody-antigen binding interfaces by 24.7% and 12.4% over AlphaFold-Multimer and AlphaFold3, respectively [4]. These improvements were quantified using Spearman correlation between predicted and actual structural quality metrics, highlighting how this statistical measure enables precise comparison of methodological advances in this rapidly evolving field.

Assessing Structure-Based Fitness Prediction

The application of Spearman correlation in protein fitness prediction has revealed important insights about the relationship between structural features and protein function. In a systematic exploration of zero-shot structure-based protein fitness prediction, researchers used Spearman correlation to evaluate how different modeling choices affect downstream fitness prediction accuracy [6].

This research examined the performance of structure-based models on the ProteinGym benchmark, which contains deep mutational scanning (DMS) substitution assays measuring quantitative protein functions across different taxonomies and function types. The findings demonstrated that the choice of protein structure (predicted versus experimental) significantly impacts prediction accuracy, with AlphaFold2 predicted structures achieving higher Spearman correlation than experimental structures in 74.5% of monomeric proteins and 80% of multimeric proteins [6].

Furthermore, the analysis revealed challenges in predicting fitness consequences for intrinsically disordered regions (IDRs), which lack a fixed 3D structure. The study found that 28% of the unique UniProt IDs in ProteinGym correspond to proteins with annotated disordered regions within the sequence spans covered by DMS assays. These disordered regions lowered prediction accuracy, as measured by Spearman correlation, across all model types, highlighting an important limitation of current structure-based prediction approaches [6].

Experimental Protocols for Correlation Analysis

Protocol 1: Benchmarking Computational Models

Objective: To evaluate the performance of protein mutation effect predictors using Spearman correlation between computational predictions and experimental measurements.

Materials and Methods:

- Dataset Curation: Collect small-scale experimental data from published literature and public databases, ensuring direct biochemical measurements rather than surrogate readouts. The VenusMutHub study utilized 905 datasets spanning 527 proteins across diverse functional properties [5].

- Model Selection: Include computational models representing various methodological paradigms, such as sequence-based, structure-informed, and evolutionary approaches. The benchmark should encompass both established and newly developed predictors.

- Prediction Generation: Run each model on the curated datasets using standardized input formats and parameter settings to ensure comparable results.

- Correlation Calculation: Compute Spearman correlation coefficients between model predictions and experimental measurements for each protein and functional property.

- Statistical Analysis: Compare correlation coefficients across models and protein properties using appropriate statistical tests (for example, a paired Wilcoxon signed-rank test on the per-dataset correlations of two models) to determine significant differences in performance.

- Visualization: Create scatter plots with ranked data to visually inspect monotonic relationships and identify potential outliers or nonlinear patterns.

Interpretation: Higher Spearman correlation values indicate better model performance. However, researchers should also consider the confidence intervals and p-values associated with each correlation coefficient to assess statistical significance.
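For the confidence intervals mentioned above, one common option (not necessarily the one used by the cited benchmarks) is a nonparametric percentile bootstrap that resamples (prediction, measurement) pairs with replacement. The sketch below assumes paired lists for a single dataset; the resample count, significance level, seed, and synthetic data are arbitrary illustrative choices. Because resampling duplicates observations, the Spearman helper must handle ties.

```python
import math
import random


def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        for k in range(start, end + 1):
            ranks[order[k]] = (start + end) / 2 + 1
        start = end + 1
    return ranks


def spearman(x, y):
    """Pearson's correlation of average ranks; NaN if either variable is constant."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    denom = math.sqrt(sum((a - mx) ** 2 for a in rx) * sum((b - my) ** 2 for b in ry))
    return cov / denom if denom > 0 else float("nan")


def bootstrap_spearman_ci(x, y, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for Spearman's r_s, resampling (x_i, y_i) pairs."""
    rng = random.Random(seed)
    n = len(x)
    stats = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        r = spearman([x[i] for i in idx], [y[i] for i in idx])
        if not math.isnan(r):  # skip degenerate resamples with a constant variable
            stats.append(r)
    stats.sort()
    lower = stats[int(alpha / 2 * (len(stats) - 1))]
    upper = stats[int((1 - alpha / 2) * (len(stats) - 1))]
    return lower, upper


rng = random.Random(1)
pred = [rng.gauss(0, 1) for _ in range(40)]  # synthetic predictions
meas = [p + rng.gauss(0, 1) for p in pred]   # synthetic noisy measurements
print(spearman(pred, meas), bootstrap_spearman_ci(pred, meas))
```

Resampling whole pairs, rather than each variable separately, preserves the pairing that the correlation depends on.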

Protocol 2: Evaluating Structure-Function Relationships

Objective: To assess the relationship between protein structural features and functional measurements using Spearman correlation.

Materials and Methods:

- Structural Feature Extraction: Calculate relevant structural parameters (e.g., solvent accessibility, secondary structure, residue depth, B-factors) from experimental or predicted structures.

- Functional Data Collection: Obtain quantitative functional measurements for protein variants, preferably from standardized assays such as deep mutational scanning.

- Data Integration: Map structural features to corresponding functional measurements, ensuring proper alignment of sequence positions.

- Rank Transformation: Convert both structural features and functional measurements to rank values, handling ties appropriately by assigning average ranks.

- Correlation Analysis: Compute Spearman correlation between structural features and functional measurements across all variants.

- Stratified Analysis: Perform subgroup analyses based on protein regions (e.g., ordered vs. disordered regions, binding interfaces vs. solvent-exposed surfaces) [6].

Interpretation: Significant Spearman correlations suggest monotonic relationships between structural features and function. Positive correlations indicate that higher-ranked structural values associate with higher-ranked functional measurements, while negative correlations suggest inverse relationships.
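The stratified analysis step of Protocol 2 can be sketched by grouping variants by a region label before computing Spearman's coefficient within each group. The labels, toy values, and minimum group size are hypothetical; in practice the labels would come from disorder or interface annotations mapped to the same sequence positions.

```python
import math
from collections import defaultdict


def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        for k in range(start, end + 1):
            ranks[order[k]] = (start + end) / 2 + 1
        start = end + 1
    return ranks


def spearman(x, y):
    """Spearman's r_s as Pearson's correlation of tie-averaged ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    return cov / math.sqrt(sum((a - mx) ** 2 for a in rx) * sum((b - my) ** 2 for b in ry))


def stratified_spearman(feature, function, labels, min_size=3):
    """Spearman's r_s computed separately within each label group (e.g. region type)."""
    groups = defaultdict(lambda: ([], []))
    for f, y, label in zip(feature, function, labels):
        groups[label][0].append(f)
        groups[label][1].append(y)
    # Very small groups give unstable coefficients, so they are skipped.
    return {label: spearman(fs, ys) for label, (fs, ys) in groups.items() if len(fs) >= min_size}


feature = [1, 2, 3, 4, 1, 2, 3, 4]           # hypothetical structural feature per variant
function = [10, 20, 30, 40, 40, 10, 30, 20]  # hypothetical functional measurement
labels = ["ordered"] * 4 + ["disordered"] * 4
print(stratified_spearman(feature, function, labels))  # {'ordered': 1.0, 'disordered': -0.4}
```

A pooled coefficient over all eight variants would blur the contrast between the two regions, which is the reason for computing correlations per stratum.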

Research Reagent Solutions

Table 3: Essential Computational Tools for Protein Correlation Analysis

| Tool/Resource | Function | Application Example |
|---|---|---|
| ProteinGym Benchmark | Standardized platform for evaluating fitness predictions | Assessing model performance on DMS assays [6] |
| AlphaFold2/3 | Protein structure prediction | Generating structural features for correlation analysis [4] [6] |
| DeepSCFold | Protein complex structure modeling | Predicting protein-protein interaction interfaces [4] |
| VenusMutHub | Curated benchmark for mutation effect predictors | Comparing model performance across diverse protein properties [5] |
| STRING Database | Protein-protein interaction network data | Source for combined score prediction in PPI networks [10] |

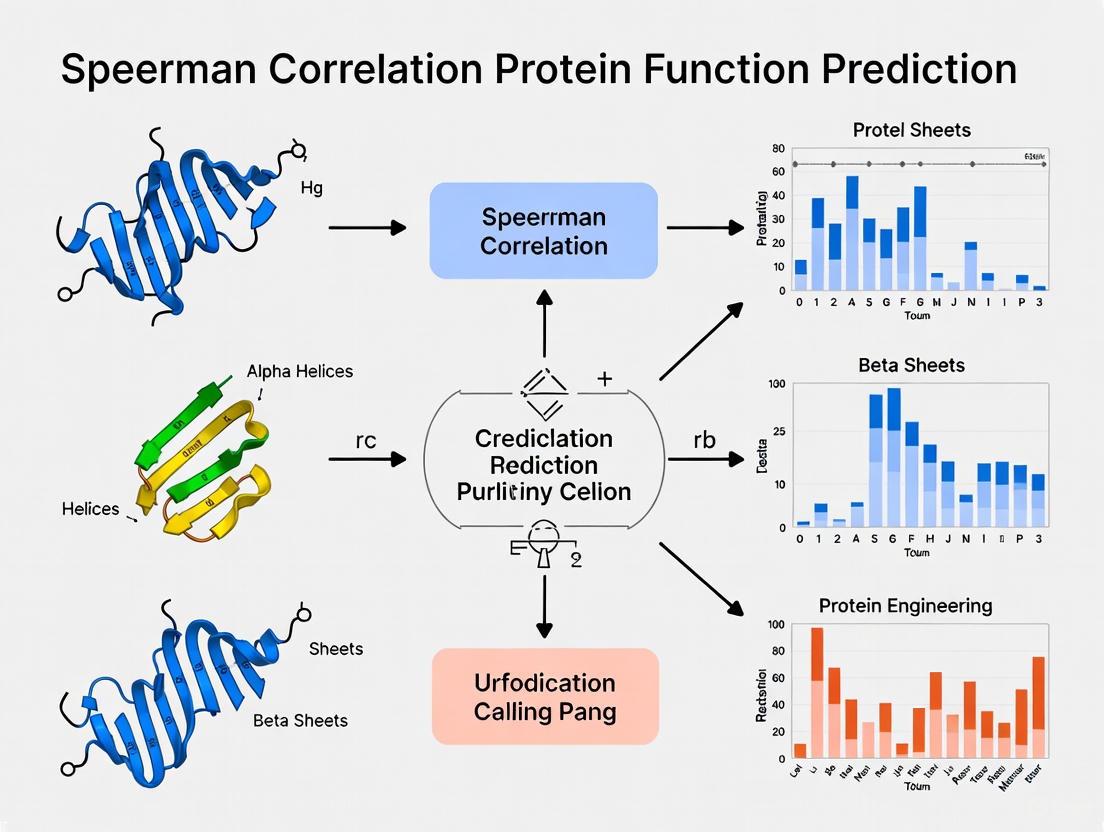

Workflow Visualization

Spearman Correlation Analysis Workflow

Spearman's rank correlation coefficient provides a robust, nonparametric method for assessing monotonic relationships between variables, making it particularly valuable in protein research where data often violate parametric assumptions. Its application in benchmarking protein mutation effect predictors, evaluating structure modeling accuracy, and assessing structure-function relationships has established it as an essential statistical tool in computational biology and bioinformatics.

The continued development of protein prediction models and the expansion of experimental datasets will further reinforce the importance of Spearman correlation in validating computational methods. As the field advances toward more integrated multi-modal approaches, this nonparametric measure will remain crucial for objectively comparing model performance and driving progress in protein function prediction research.

In the data-driven world of protein bioinformatics, accurately evaluating computational predictions against experimental results is paramount. Spearman's rank correlation coefficient (ρ) serves as a fundamental statistical metric for this purpose, measuring the strength and direction of monotonic relationships between two ranked variables. Unlike Pearson's correlation, which assesses linear relationships, Spearman's ρ evaluates whether one variable tends to move consistently in one direction (up or down) as the other increases, without requiring the relationship to be linear [2]. This makes it particularly valuable for analyzing complex biological relationships in protein fitness landscapes and Deep Mutational Scanning (DMS) assays, where relationships are often monotonic but not necessarily linear.

The calculation of Spearman's ρ involves converting raw data points into ranks and assessing how well the relationship between these ranks can be described by a monotonic function. For a dataset without tied ranks, the coefficient is calculated as ρ = 1 - (6∑dᵢ²)/(n(n²-1)), where dᵢ represents the difference between the two ranks for each observation, and n is the sample size [11]. The resulting value ranges from +1 for a perfect positive monotonic relationship to -1 for a perfect negative relationship, with 0 indicating no monotonic association [2]. These properties have established Spearman's correlation as a cornerstone metric in protein function prediction research, enabling robust benchmarking of computational methods against experimental data.

The Role of Spearman Correlation in Protein Fitness Landscape Analysis

Mapping Sequence to Function in Fitness Landscapes

Protein fitness landscapes represent the complex relationship between protein sequence and function, where each point in sequence space maps to a fitness value representing a measurable property like catalytic activity, thermostability, or binding affinity [12]. Navigating these landscapes efficiently requires accurate computational models that can predict the functional consequences of mutations. Spearman's correlation provides a crucial validation metric for assessing how well these predictions correlate with experimental measurements, guiding protein engineers toward beneficial mutations.

Machine learning approaches have revolutionized our ability to infer sequence-function relationships from experimental data. These models employ various architectures including multi-layer perceptrons (MLPs), convolutional neural networks (CNNs), recurrent neural networks (RNNs), and transformers [12]. As these models generate fitness predictions for thousands of variants, Spearman's correlation offers a non-parametric measure to evaluate how well the predicted fitness rankings align with experimentally determined rankings, enabling researchers to select the most reliable models for protein engineering applications.

Benchmarking Predictive Models with Spearman Correlation

In protein fitness prediction, Spearman's correlation is frequently employed to benchmark computational methods against experimental data. For instance, the VenusMutHub benchmark study evaluated 23 computational models across diverse protein properties including stability, activity, binding affinity, and selectivity [5]. This comprehensive assessment utilized small-scale experimental datasets featuring direct biochemical measurements rather than surrogate readouts, providing a rigorous evaluation framework where Spearman correlation helped identify the most appropriate prediction methods for specific protein engineering applications.

The GCPNet-EMA method, a geometric message passing neural network for protein structure accuracy estimation, illustrates the use of Spearman correlation in benchmarking. In computational benchmarks, it achieved significantly higher correlation with ground-truth measures of structural accuracy than baseline state-of-the-art methods, with Spearman correlation serving as one key metric for evaluating multimer structure accuracy estimation [13]. Similarly, SPIRED-Fitness, an end-to-end framework for predicting protein structure and fitness from single sequences, employs correlation metrics to validate its performance against experimental data [14].

Deep Mutational Scanning (DMS) and Correlation Analysis

Fundamentals of DMS Workflows

Deep Mutational Scanning (DMS) has emerged as a powerful high-throughput approach for mapping protein fitness landscapes by experimentally measuring the functional effects of thousands of mutations in parallel [15]. The standard DMS workflow involves four critical steps: (1) generating a comprehensive library of genetic variants through saturation mutagenesis or error-prone PCR; (2) subjecting the library to functional selection that links genotype to phenotype; (3) using next-generation sequencing to count variants before and after selection; and (4) analyzing the data to calculate enrichment or fitness scores for each mutation [15]. This process generates massive datasets quantifying the functional impact of mutations, providing ideal ground-truth data for validating computational predictions.
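Step (4) is often implemented as a log ratio of post- to pre-selection counts, normalized to wild type. The sketch below assumes that convention, a pseudocount of 0.5 to avoid division by zero, and hypothetical variant names and counts; dedicated DMS scoring tools use more elaborate statistical models, so treat this as an illustration of the idea only.

```python
import math


def enrichment_scores(pre_counts, post_counts, wt_key="WT", pseudocount=0.5):
    """log2 enrichment of each variant relative to wild type.

    score(v) = log2((post_v + c) / (pre_v + c)) - log2((post_wt + c) / (pre_wt + c)).
    Library-size normalization cancels in this difference, so raw counts can be used.
    """
    def log_ratio(key):
        return math.log2((post_counts.get(key, 0) + pseudocount) / (pre_counts[key] + pseudocount))

    wt = log_ratio(wt_key)
    # Only variants observed before selection can be scored.
    return {variant: log_ratio(variant) - wt for variant in pre_counts}


pre = {"WT": 1000, "A12V": 500, "G45D": 500}   # hypothetical pre-selection read counts
post = {"WT": 1000, "A12V": 1000, "G45D": 50}  # hypothetical post-selection read counts
print(enrichment_scores(pre, post))  # WT 0.0, A12V about +1.0, G45D about -3.3
```

Scores of this kind are what a Spearman analysis would then rank against computational predictions; positive values mark variants enriched relative to wild type.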

The quality of DMS data hinges on several often-overlooked technical considerations. Library quality and bias must be carefully controlled, as uneven variant distribution in the initial library can skew results. The stringency of selection pressure requires optimization: overly stringent selection may only identify top variants, while weak selection fails to distinguish functional from non-functional variants. Additionally, sufficient sequencing depth and error correction strategies like Unique Molecular Identifiers (UMIs) are essential for accurate variant quantification [15]. These factors directly impact the reliability of fitness scores used in Spearman correlation analysis.
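A minimal sketch of how UMIs help with quantification: reads that share both a variant and a UMI are treated as PCR copies of one original molecule, so counting distinct UMIs per variant replaces raw read counts. This assumes exact UMI matching and already-called variants (the read tuples are hypothetical); practical pipelines also merge UMIs that differ only by sequencing errors.

```python
from collections import defaultdict


def umi_collapsed_counts(reads):
    """Molecule counts per variant from (variant, umi) read tuples; duplicates collapse."""
    umis_per_variant = defaultdict(set)
    for variant, umi in reads:
        umis_per_variant[variant].add(umi)
    return {variant: len(umis) for variant, umis in umis_per_variant.items()}


reads = [
    ("A12V", "ACGTTG"),  # two reads of the same molecule (PCR duplicates)
    ("A12V", "ACGTTG"),
    ("A12V", "TTGCAA"),  # a second, independent A12V molecule
    ("G45D", "GGATCC"),
]
print(umi_collapsed_counts(reads))  # {'A12V': 2, 'G45D': 1}
```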

DMS Data as a Benchmark for Computational Methods

DMS data provides the experimental foundation for evaluating computational protein fitness predictors. The rise of multi-task learning approaches that leverage DMS data from multiple sources has further emphasized the importance of robust correlation metrics [16]. These methods aim to learn general representations of protein fitness that transfer across proteins and functions, with Spearman correlation serving as a key metric for evaluating their performance on held-out test data.

The application of DMS extends across various protein engineering scenarios, including mapping antibody-antigen interfaces with single-residue resolution, predicting viral evolution by identifying escape mutations, and guiding protein engineering efforts by providing a comprehensive roadmap of mutations that enhance stability, activity, or binding affinity [15]. In each application, Spearman correlation provides a standardized way to assess how well computational predictions match experimental results, enabling data-driven protein design.

Comparative Performance of Protein Fitness Prediction Methods

Table 1: Performance Comparison of Protein Structure and Fitness Prediction Methods

| Method | Type | Key Features | Reported Performance |
|---|---|---|---|
| GCPNet-EMA | Geometric neural network | Uses 3D graph representations with scalar and vector features | >10% higher per-residue correlation with ground-truth accuracy than AlphaFold 2 [13] |
| SPIRED-Fitness | End-to-end framework | Integrates structure prediction with fitness estimation | Comparable to state-of-the-art with 5x faster inference [14] |
| GeoFitness | Structure-based | Uses features from AlphaFold-predicted structures | Improves prediction of mutational effects on fitness [14] |
| EnQA-MSA | Accuracy estimation | Leverages multiple sequence alignments | Baseline for tertiary structure EMA [13] |
| AF2-plDDT | Accuracy estimation | AlphaFold 2's predicted lDDT | Lower correlation with ground truth than GCPNet-EMA [13] |

Table 2: Evaluation Metrics for Protein Structure Accuracy Estimation Methods

| Method | Per-Residue Correlation | Per-Model Correlation | Inference Speed | Training Efficiency |
|---|---|---|---|---|
| GCPNet-EMA | >10% higher than baseline | >6% higher than baseline | 47% faster | - |
| SPIRED | - | - | ~5x acceleration | ≥10x reduction in training cost |
| ESMFold | High accuracy | High accuracy | Moderate | High resource requirement |
| OmegaFold | Comparable to SPIRED | Comparable to SPIRED | Moderate with recycling | - |

Experimental Protocols for Method Evaluation

Standardized Benchmarking Frameworks

Rigorous evaluation of protein fitness prediction methods requires standardized benchmarking frameworks. The VenusMutHub benchmark addresses this need by providing 905 small-scale experimental datasets curated from published literature and public databases, spanning 527 proteins across diverse functional properties including stability, activity, binding affinity, and selectivity [5]. These datasets feature direct biochemical measurements rather than surrogate readouts, offering a more rigorous assessment of model performance for protein engineering applications where predicting specific molecular functions is crucial.

For tertiary structure accuracy estimation, researchers typically adopt standardized test datasets and computational metrics to ensure comparable evaluations. Standard practice involves reporting mean squared error (MSE), mean absolute error (MAE), and Pearson's correlation coefficient at per-residue and per-model levels [13]. For multimer structure accuracy estimation, common metrics include per-target Pearson's correlation and Spearman's correlation (SpearCor), the latter defined as 1 - (6∑dᵢ²)/(n(n²-1)), where dᵢ represents the difference between the ranks of ground-truth and predicted values [13].
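The per-target evaluation described above can be sketched as computing one Spearman coefficient per target over that target's candidate models and then averaging across targets. Target names and scores are hypothetical, and the helper uses the rank-based Pearson form, which coincides with the quoted SpearCor formula when there are no ties; averaging raw coefficients is a simplifying assumption.

```python
import math
from statistics import fmean


def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        for k in range(start, end + 1):
            ranks[order[k]] = (start + end) / 2 + 1
        start = end + 1
    return ranks


def spearman(x, y):
    """Spearman's rho as Pearson's correlation of the average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    return cov / math.sqrt(sum((a - mx) ** 2 for a in rx) * sum((b - my) ** 2 for b in ry))


def per_target_spearman(targets):
    """Spearman's rho per target over its candidate models, plus the unweighted mean."""
    per_target = {name: spearman(pred, true) for name, (pred, true) in targets.items()}
    return per_target, fmean(per_target.values())


targets = {
    "target_A": ([0.2, 0.5, 0.9], [0.3, 0.6, 0.8]),  # ranking fully recovered
    "target_B": ([0.5, 0.9, 0.2], [0.3, 0.6, 0.8]),  # ranking partly scrambled
}
per_target, mean_rho = per_target_spearman(targets)
print(per_target, mean_rho)  # {'target_A': 1.0, 'target_B': -0.5} 0.25
```

Scoring per target asks whether the estimator ranks the candidate models of each complex correctly, which is the decision a modeler faces when selecting among predictions.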

Data Processing and Analysis Workflow

DMS-Correlation Analysis Workflow

The experimental workflow for evaluating protein fitness predictors follows a systematic process as illustrated in Figure 1. The process begins with experimental data generation through DMS, involving mutant library generation, functional selection, and next-generation sequencing to calculate variant frequencies and fitness scores [15]. In parallel, computational predictions are generated for the same variants using the methods being evaluated. Both experimental fitness scores and computational predictions undergo rank transformation before conducting Spearman correlation analysis to evaluate method performance.

This workflow highlights the importance of consistent data processing to ensure fair comparisons between methods. The use of rank transformation makes Spearman correlation particularly valuable for protein fitness prediction, as it reduces the impact of outliers and non-normal data distributions that commonly occur in biological measurements. This property ensures robust performance evaluation even when the relationship between predicted and experimental values follows a monotonic but non-linear pattern.

Essential Research Reagents and Tools

Table 3: Key Research Reagent Solutions for Protein Fitness Landscapes and DMS Assays

| Reagent/Resource | Function | Application in Protein Fitness Analysis |
|---|---|---|
| Saturation Mutagenesis Libraries | Generates comprehensive variant collections | Creates input libraries for DMS experiments [15] |
| Next-Generation Sequencing Platforms | Quantifies variant frequency | Measures enrichment before/after selection in DMS [15] |
| Unique Molecular Identifiers (UMIs) | Error correction for sequencing | Distinguishes true variants from PCR/sequencing errors [15] |
| Protein Language Models (ESM) | Learns evolutionary information | Provides sequence representations for fitness prediction [14] |
| AlphaFold Protein Structure Database | Source of predicted structures | Provides structural features for methods like GeoFitness [13] [14] |
| Deep Mutational Scanning Datasets | Experimental fitness measurements | Serves as ground truth for model training and validation [16] |

Spearman's rank correlation coefficient provides an essential statistical framework for evaluating protein fitness prediction methods in the rapidly advancing field of protein bioinformatics. Its ability to assess monotonic relationships without assuming linearity makes it particularly suitable for analyzing complex sequence-function relationships in protein fitness landscapes and DMS data. As computational methods continue to evolve, with approaches like GCPNet-EMA and SPIRED-Fitness offering improved correlation with experimental measurements, Spearman's correlation remains a fundamental metric for benchmarking progress and guiding method selection.

The integration of advanced machine learning architectures with rich biological data represents the future of protein fitness prediction. As these methods become more sophisticated, their ability to capture complex relationships in protein fitness landscapes will continue to improve. Spearman correlation will play a crucial role in validating these advances, ensuring that computational predictions accurately reflect biological reality, and ultimately accelerating the engineering of novel proteins with tailored functions for biotechnology and medicine.

The Role of Correlation Metrics in Standardized Benchmarks (e.g., ProteinGym)

In the field of protein fitness prediction, the proliferation of machine learning models has created an urgent need for standardized and rigorous benchmarking. ProteinGym addresses this need as a large-scale and holistic set of benchmarks specifically designed for protein fitness prediction and design, curating over 250 standardized deep mutational scanning (DMS) assays spanning millions of mutated sequences [17] [18]. Within this framework, correlation metrics serve as the fundamental quantitative measures for evaluating model performance, with Spearman's rank correlation emerging as the primary metric for assessing predictive accuracy across diverse biological contexts [19]. This guide examines the central role of these metrics within ProteinGym, providing researchers with methodological insights and comparative performance data to navigate this critical benchmarking resource.

ProteinGym Benchmark Composition and Design

ProteinGym consolidates an extensive collection of experimental data and model predictions to facilitate comprehensive comparisons of mutation effect predictors. The benchmark's architecture encompasses several key components:

DMS Assays: The substitution benchmark includes ~2.7 million missense variants across 217 DMS assays, while the indel benchmark covers ~300k mutants across 74 DMS assays [18]. These assays span five principal functional readouts: organismal fitness, enzymatic activity, stability, binding affinity, and expression levels [19].

Clinical Variants: The benchmark incorporates annotated human clinical variants, including 2,525 clinical proteins for substitutions and 1,555 for indels, providing complementary data to the DMS measurements [18].

Stratification System: ProteinGym implements a sophisticated stratification protocol that groups performance by MSA depth (Low: Neff/L <1, Medium: 1-100, High: >100), mutation depth (single, double, triple), taxonomic origin, and functional categories [19]. This enables granular analysis of model strengths and weaknesses across different biological contexts.
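The MSA-depth bins above reduce to a threshold on Neff/L, the effective number of sequences in the alignment divided by the sequence length. The Python sketch below shows that binning. Treating the boundary values 1 and 100 as Medium is an assumption, since the text does not say which bin they fall into.

```python
def msa_depth_category(neff: float, seq_len: int) -> str:
    """Bin an assay by Neff/L (effective MSA sequences per residue).

    Low: Neff/L < 1, Medium: 1 to 100, High: > 100. Assigning the boundary
    values 1 and 100 to Medium is an assumption of this sketch.
    """
    if seq_len <= 0:
        raise ValueError("seq_len must be positive")
    ratio = neff / seq_len
    if ratio < 1:
        return "Low"
    if ratio <= 100:
        return "Medium"
    return "High"


print(msa_depth_category(50, 200))     # Neff/L = 0.25 -> Low
print(msa_depth_category(5000, 200))   # Neff/L = 25   -> Medium
print(msa_depth_category(30000, 200))  # Neff/L = 150  -> High
```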

In the zero-shot setting, models are not fine-tuned on the assay data used for evaluation, which ensures a fair comparison of their inherent predictive capabilities [19]. This design makes ProteinGym particularly valuable for assessing how well models generalize to novel protein families and functions.

Correlation Metrics in ProteinGym: Methodologies and Applications

Primary Metric: Spearman's Rank Correlation

Spearman's rank correlation (ρ) serves as the primary performance metric in ProteinGym for DMS benchmarks [19]. This non-parametric measure assesses how well the predicted fitness scores rank mutants compared to their experimental rankings, without assuming a linear relationship between variables. The metric is calculated as the Pearson correlation between the rank values of the predicted and experimental scores.
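The equivalence between Spearman's ρ and the Pearson correlation of ranks can be checked directly with SciPy. In the sketch below the toy arrays are illustrative (not taken from any assay), and the predictions are a monotonic but strongly non-linear transform of the measurements: Spearman's ρ is 1 because the ordering is fully preserved, while Pearson's r is noticeably lower.

```python
import numpy as np
from scipy.stats import pearsonr, rankdata, spearmanr

# Toy data: predictions are a monotonic, non-linear function of the measurements.
experimental = np.array([-2.1, -1.4, -0.9, -0.3, 0.0, 0.4, 1.2])
predicted = np.exp(3 * experimental)

# Spearman's rho equals the Pearson correlation of the rank vectors
# (rankdata assigns average ranks to ties).
rho_from_ranks = pearsonr(rankdata(predicted), rankdata(experimental))[0]
rho_scipy = spearmanr(predicted, experimental)[0]
pearson_raw = pearsonr(predicted, experimental)[0]

print(round(rho_from_ranks, 6), round(rho_scipy, 6))  # both 1.0
print(round(pearson_raw, 3))                          # well below 1
```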

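The mathematical formulation for fitness score prediction follows two main conventions in ProteinGym:

- Likelihood ratio (autoregressive models) [19]:

$$ F_x = \log\frac{P(x^{\text{mut}})}{P(x^{\text{wt}})} $$

- Log-odds ratio (masked language models), summed over the set of mutated positions $M$, where $S_{\setminus M}$ denotes the sequence with those positions masked [19]:

$$ \hat{F}(S^{\text{mt}}, S^{\text{wt}}) = \sum_{i \in M} \left[ \log P(s_i^{\text{mt}} \mid S_{\setminus M}) - \log P(s_i^{\text{wt}} \mid S_{\setminus M}) \right] $$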
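The two conventions can be sketched in a few lines of Python. This assumes the model outputs have already been reduced to log-probabilities: full-sequence log-likelihoods for the autoregressive case, and a hypothetical (L × 20) matrix of per-position log-probabilities, obtained with all mutated positions masked at once, for the masked case. The alphabet ordering `AMINO_ACIDS` and the helper names are illustrative choices, not part of any particular library.

```python
import numpy as np

# Hypothetical column ordering for a per-position log-probability matrix.
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
AA_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}


def log_likelihood_ratio(logp_mut: float, logp_wt: float) -> float:
    """Autoregressive convention: log P(x_mut) - log P(x_wt)."""
    return logp_mut - logp_wt


def masked_marginal_score(log_probs: np.ndarray, mutations: list[tuple[int, str, str]]) -> float:
    """Masked-LM convention: sum over mutated positions of log P(mut) - log P(wt).

    `log_probs` is assumed to be an (L, 20) array computed with every mutated
    position masked; `mutations` holds (0-based position, wt residue, mut residue).
    """
    score = 0.0
    for pos, wt_aa, mut_aa in mutations:
        score += log_probs[pos, AA_INDEX[mut_aa]] - log_probs[pos, AA_INDEX[wt_aa]]
    return float(score)


# Toy matrix: uniform everywhere except position 2, where K is favoured over A.
toy = np.full((5, 20), np.log(1 / 20))
toy[2, :] = np.log(0.025)            # 18 residues share probability 0.45
toy[2, AA_INDEX["K"]] = np.log(0.5)
toy[2, AA_INDEX["A"]] = np.log(0.05)

print(round(masked_marginal_score(toy, [(2, "A", "K")]), 3))  # log(10) -> 2.303
```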

Spearman correlation is particularly well-suited for protein fitness prediction because it focuses on the ordinal relationship between predictions and experimental results, which is often more biologically relevant than exact numerical accuracy. A higher Spearman correlation indicates that a model better captures the relative functional impacts of mutations, which is crucial for prioritizing variants in protein engineering pipelines.

Complementary Performance Metrics

While Spearman correlation is the primary ranking metric, ProteinGym employs several additional metrics to provide a comprehensive assessment of model performance:

AUC (Area Under the ROC Curve): Evaluates binary classification performance for beneficial/deleterious mutation prediction, with binarization thresholds set manually or at the median [18] [19].

MCC (Matthews Correlation Coefficient): Assesses the quality of binary classifications, particularly useful for imbalanced datasets [18] [19].

NDCG (Normalized Discounted Cumulative Gain): Evaluates the quality of top-ranked predictions, emphasizing the accuracy of the highest-scoring variants [18] [19].

Top-10% Recall: Measures the fraction of true top-10% experimental variants captured in the predicted top-10% [19].

MSE (Mean Squared Error): Used primarily in supervised learning settings to measure the average squared difference between predicted and experimental values [18].
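Two of the rank-focused metrics above are easy to get subtly wrong, so the following is a minimal NumPy sketch of top-10% recall and of NDCG over the predicted top 10%. Using the experimental scores, shifted to be non-negative, as NDCG gains and rounding k to the nearest integer are assumptions of this sketch; ProteinGym's own implementation may normalise gains or choose k differently.

```python
import numpy as np


def _k_from_fraction(n: int, frac: float) -> int:
    return max(1, int(round(frac * n)))


def top_k_recall(y_true, y_pred, frac=0.1):
    """Fraction of the experimental top-`frac` variants also found in the predicted top-`frac`."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    k = _k_from_fraction(len(y_true), frac)
    true_top = set(np.argsort(-y_true)[:k].tolist())
    pred_top = set(np.argsort(-y_pred)[:k].tolist())
    return len(true_top & pred_top) / k


def ndcg_at_k(y_true, y_pred, frac=0.1):
    """NDCG of the predicted top-`frac`, using min-shifted experimental scores as gains."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    k = _k_from_fraction(len(y_true), frac)
    gains = y_true - y_true.min()                   # assumption: make every gain non-negative
    discounts = 1.0 / np.log2(np.arange(2, k + 2))  # rank r gets weight 1 / log2(r + 1)
    dcg = float(np.sum(gains[np.argsort(-y_pred)[:k]] * discounts))
    idcg = float(np.sum(np.sort(gains)[::-1][:k] * discounts))
    return dcg / idcg if idcg > 0 else 0.0


y = np.arange(20.0)
print(top_k_recall(y, y), ndcg_at_k(y, y))  # perfect ranking: 1.0 1.0
```

A reversed ranking (`-y`) drives top-10% recall to zero and NDCG close to zero, even though both rankings are equally "wrong" by a linear error measure such as MSE.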

These complementary metrics address different aspects of model performance, from overall ranking accuracy to specific utility in real-world protein engineering scenarios where identifying top-performing variants is paramount.

Experimental Protocols and Aggregation Methods

ProteinGym implements standardized protocols to ensure consistent and reproducible model evaluation:

Variant Preprocessing: Silent variants are omitted, duplicates are averaged, and variants without measurements are dropped from analysis [19].

Aggregation Methodology: Performance metrics are aggregated by UniProt ID to avoid biasing results toward proteins with multiple DMS assays, then averaged across proteins and functional categories [18] [19].

Stratified Reporting: Results are systematically broken down by functional category, MSA depth, taxonomic kingdom, and mutation depth to reveal performance patterns [19].

Statistical Robustness: The benchmark provides bootstrapped standard errors for aggregated metrics to reflect variance with respect to individual assays [18].
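A minimal sketch of the preprocessing rules and of a bootstrapped standard error follows, in plain Python and NumPy. The colon-separated mutant notation, the choice to treat a variant as silent only when every substitution in it keeps the wild-type residue, and the simple resampling of per-assay scores are assumptions of this sketch; ProteinGym's own scripts may implement these steps differently.

```python
import math
from collections import defaultdict

import numpy as np


def preprocess_variants(records):
    """Drop unmeasured and silent variants, then average duplicate measurements.

    `records` is an iterable of (mutant, score) pairs, e.g. ("A23V:G45D", 0.7).
    """
    grouped = defaultdict(list)
    for mutant, score in records:
        if score is None or math.isnan(score):
            continue  # no measurement
        if all(sub[0] == sub[-1] for sub in mutant.split(":")):
            continue  # silent: every substitution keeps the wild-type residue
        grouped[mutant].append(score)
    return {mutant: float(np.mean(scores)) for mutant, scores in grouped.items()}


def bootstrap_se(values, n_boot=1000, seed=0):
    """Bootstrap standard error of the mean of per-assay (or per-protein) scores."""
    values = np.asarray(values, dtype=float)
    rng = np.random.default_rng(seed)
    idx = rng.integers(0, len(values), size=(n_boot, len(values)))
    boot_means = values[idx].mean(axis=1)  # one mean per bootstrap resample
    return float(boot_means.std(ddof=1))


clean = preprocess_variants(
    [("A1V", 1.0), ("A1V", 3.0), ("A1A", 0.5), ("G2D", None), ("G2E", -1.0)]
)
print(clean)  # {'A1V': 2.0, 'G2E': -1.0}
```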

This meticulous approach ensures that performance comparisons reflect true methodological differences rather than artifacts of evaluation methodology.

Performance Comparison Across Model Architectures

The table below summarizes the performance of major model classes on the ProteinGym substitution and indel benchmarks, illustrating how Spearman correlation reveals architectural advantages across different functional contexts:

Table 1: Spearman Correlation (ρ) Across Model Families on ProteinGym Benchmarks (rows marked "Indels" report indel-benchmark results and are not directly comparable with substitution-benchmark rows)

| Model/Modality | Mean ρ | Stability | Binding | Key Characteristics |
|---|---|---|---|---|
| ESM2 OFS-PP (Indels) | 0.574 | 0.582 | ~0.53 | Sequence-only, ESM2-based |
| S3F (Seq+Str+Surf) | 0.470 | - | - | Integrates sequence, structure, and surface features |
| EvoIF-MSA (Ensemble) | 0.518 | - | - | Fuses within-family and cross-family evolutionary information |
| SCISOR (Indels) | 0.573 | - | - | Diffusion-based indel prediction |
| ESM-1v NLR-tuned | 0.396 | - | - | Fine-tuned with negative log-likelihood ratio |
| ESM-2 650M (Zero-shot) | 0.414 | - | - | Large-scale protein language model |
| VenusREM | State-of-the-art | - | - | Retrieval-enhanced, multimodal integration |

Note: Performance values are compiled from the ProteinGym leaderboard and associated publications [19] [20].

The data reveals several important patterns. First, multi-modal approaches that intelligently combine sequence, structure, and evolutionary information (e.g., EvoIF-MSA, VenusREM) generally achieve superior performance compared to unimodal methods [21] [19] [20]. Second, specialized architectures for indel prediction (e.g., SCISOR) can achieve performance competitive with substitution prediction, despite the greater complexity of modeling length-altering mutations [19].

Performance Stratification by Biological Context

The table below illustrates how model performance varies across different biological contexts, highlighting the importance of stratified benchmarking:

Table 2: Performance Stratification by Protein Function and MSA Depth

| Model Category | Stability | Enzymatic Activity | Binding Affinity | Low MSA Depth | High MSA Depth |
|---|---|---|---|---|---|
| Sequence-Based | Moderate | Variable | Moderate | Weaker | Stronger |
| Structure-Based | Strong | Moderate | Strong | More Robust | Strong |
| Evolution-Based | Moderate | Strong | Strong | Weaker | Strongest |
| Multi-Modal | Strongest | Strongest | Strongest | Most Robust | Strongest |

Note: Patterns are synthesized from performance breakdowns reported in benchmark analyses [19] [20].

This stratification reveals critical limitations and strengths across methodological paradigms. Structure-based methods demonstrate particular advantage for stability prediction, where physical constraints play a dominant role, while evolution-based approaches excel for enzymatic activity and binding, where evolutionary conservation provides strong signals [19]. Importantly, multi-modal models show the most consistent performance across contexts, particularly for low MSA depth proteins where evolutionary information is scarce [21] [20].

Experimental Workflow and Research Reagents

The following diagram illustrates the standard experimental workflow for benchmarking protein fitness predictors using ProteinGym:

Diagram 1: ProteinGym Benchmarking Workflow

Essential Research Reagent Solutions

The table below details key resources available through ProteinGym for conducting comprehensive fitness prediction benchmarks:

Table 3: ProteinGym Research Reagent Solutions

| Resource | Description | Utility in Fitness Prediction | Access Method |
|---|---|---|---|
| DMS Substitution Benchmarks | 217 assays, ~2.7M variants [18] | Primary evaluation data for substitution effects | Zenodo/ProteinGym website |
| DMS Indel Benchmarks | 74 assays, ~300k variants [18] | Specialized evaluation of insertion/deletion effects | Zenodo/ProteinGym website |
| Zero-shot Model Scores | Predictions from 79 models on DMS substitutions [17] | Baseline comparisons and ensemble methods | ProteinGym R package [17] |
| Multiple Sequence Alignments | Jackhmmer/UniRef100 MSAs for DMS assays [18] [19] | Evolutionary context and co-evolutionary signals | Zenodo download [18] |
| AlphaFold2 Structures | Predicted structures for 197 proteins [17] | Structural features for geometry-aware models | ProteinGym R package [17] |
| Clinical Variant Benchmarks | Pathogenic/benign classifications from clinical sources [18] | Assessment of clinical variant effect prediction | Zenodo download [18] |

These resources collectively provide the foundation for rigorous, reproducible benchmarking across diverse methodological approaches, from sequence-only models to complex multi-modal architectures.

Methodological Implementation Guide

Implementing Spearman Correlation for Fitness Prediction

For researchers implementing Spearman correlation within protein fitness prediction pipelines, the following technical considerations are essential:

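Variant Scoring: For zero-shot evaluation, compute fitness scores using the convention appropriate to the model architecture: the log-likelihood ratio between mutant and wild-type sequences for autoregressive models, or the masked-marginal log-odds summed over mutated positions for masked language models [19].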

Correlation Calculation: Use standard statistical libraries (e.g., scipy.stats.spearmanr in Python) to compute the rank correlation between predicted scores and experimental measurements across all variants in an assay.

Aggregation Protocol: Follow ProteinGym's standardized aggregation method:

- Compute Spearman correlation for each DMS assay independently

- Average correlations at the UniProt ID level (not by individual variant)

- Calculate final performance as the mean across proteins [19]

Stratification: Report performance stratified by functional category, MSA depth, and taxonomic group to provide nuanced insights into model capabilities and limitations.
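The correlation and aggregation steps above can be sketched as follows: `scipy.stats.spearmanr` is applied per assay, assays are collapsed to one value per UniProt ID within each functional category, and category means are then averaged. The dictionary layout and assay identifiers are hypothetical, the example numbers are illustrative rather than benchmark results, and this is a simplified sketch of the protocol, not ProteinGym's own code. Swapping the category key for an MSA-depth bin or taxonomic group gives the other stratified views.

```python
from collections import defaultdict

import numpy as np
from scipy.stats import spearmanr


def assay_spearman(predicted, experimental):
    """Spearman's rho for one assay; higher values mean higher fitness in both inputs."""
    return float(spearmanr(predicted, experimental)[0])


def aggregate(per_assay):
    """Collapse per-assay rho to one value per UniProt ID, then average by category.

    `per_assay` maps a hypothetical assay id to (uniprot_id, category, rho).
    Returns (per-category means, overall mean across categories).
    """
    by_protein = defaultdict(list)
    for uniprot_id, category, rho in per_assay.values():
        by_protein[(uniprot_id, category)].append(rho)
    by_category = defaultdict(list)
    for (_, category), rhos in by_protein.items():
        by_category[category].append(np.mean(rhos))  # one value per protein
    category_means = {c: float(np.mean(v)) for c, v in by_category.items()}
    overall = float(np.mean(list(category_means.values())))
    return category_means, overall


# Illustrative values only; P1 has two assays that are averaged first.
per_assay = {
    "assay_1": ("P1", "Stability", assay_spearman([0.1, 0.4, 0.3, 0.9], [1.0, 2.0, 3.0, 4.0])),
    "assay_2": ("P1", "Stability", 0.4),
    "assay_3": ("P2", "Stability", 0.2),
    "assay_4": ("P3", "Binding", 0.3),
}
category_means, overall = aggregate(per_assay)
print(category_means, round(overall, 3))
```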

Advanced Multi-Modal Integration

Top-performing models like EvoIF and VenusREM demonstrate the power of integrating complementary information sources:

Diagram 2: Multi-Modal Predictor Architecture

The EvoIF framework exemplifies this approach by combining: (i) within-family profiles from retrieved homologs using sequence similarity searches (e.g., HHblits) or structure similarity searches (e.g., Foldseek), and (ii) cross-family structural-evolutionary constraints distilled from inverse folding logits [21]. This fusion of complementary evolutionary signals achieves state-of-the-art performance while using only 0.15% of the training data required by larger models [21].

Similarly, VenusREM implements a retrieval-enhanced architecture that integrates sequence, structure, and evolutionary information through disentangled multi-head cross-attention layers, demonstrating that explicit incorporation of homologous sequences significantly boosts prediction accuracy [20].

ProteinGym has established itself as the definitive benchmarking platform for protein fitness prediction, with Spearman's rank correlation serving as its central metric for model evaluation. The benchmark's rigorous design, comprehensive dataset coverage, and sophisticated stratification protocols provide researchers with unparalleled insights into model performance across diverse biological contexts.

The empirical evidence consistently demonstrates that multi-modal integration of sequence, structure, and evolutionary information yields superior performance across functional categories, with models like EvoIF and VenusREM establishing new state-of-the-art benchmarks [21] [19] [20]. However, performance stratification reveals that no single approach dominates across all biological contexts, highlighting the continued need for specialized methods tailored to specific protein families, functions, and data availability conditions.

As the field advances, ProteinGym's standardized evaluation framework and correlation-driven assessment methodology will continue to play a crucial role in guiding the development of more accurate, robust, and biologically-informed fitness prediction models, ultimately accelerating progress in protein engineering and therapeutic development.

Spearman Correlation in Action: Driving Modern Prediction Models and Workflows

In the field of computational biology, accurately modeling the protein fitness landscape is crucial for designing novel functional proteins. The fitness landscape represents a multivariate function that describes how mutations impact protein fitness, and accurately modeling these relationships enables more effective protein engineering with desired traits [22]. However, a significant challenge persists: the scarcity of experimentally collected functional labels relative to the vastness of protein sequence space [22]. This scarcity has prompted the development of self-supervised protein representation learning approaches for predicting mutation effects.

Spearman's rank correlation coefficient has emerged as a critical evaluation metric in this domain because it assesses a model's ability to correctly rank the functional impact of protein variants, which is often more important than predicting absolute values for practical applications like protein engineering. The metric measures how well a model can prioritize beneficial mutations over deleterious ones, making it particularly valuable for zero-shot fitness prediction where models must generalize to unseen mutations without task-specific training [23] [24]. Within this context, the Sequence-Structure-Surface Fitness (S3F) model represents a significant advancement in multi-scale representation learning, achieving state-of-the-art performance on standardized benchmarks as measured by Spearman's correlation [25] [22].

Experimental Protocols and Benchmarking Frameworks

ProteinGym Benchmark Suite

The ProteinGym benchmark serves as the primary evaluation framework for assessing protein fitness prediction models, comprising 217 substitution deep mutational scanning (DMS) assays and encompassing over 2.4 million mutated sequences across more than 200 diverse protein families [22]. This extensive benchmark provides a standardized platform for comparing model performance using Spearman's correlation as a key metric. In DMS experiments, fitness is quantitatively measured as the relative change in a variant's abundance from pre-selection to post-selection populations, normalized to the change in the wild-type's abundance [23]. The calculation is expressed as:

$$ F(S^{\text{mt}}, S^{\text{wt}}) = \log\left(\frac{N_{\text{post}}^{\text{mt}}/N_{\text{pre}}^{\text{mt}}}{N_{\text{post}}^{\text{wt}}/N_{\text{pre}}^{\text{wt}}}\right) $$

where a positive fitness value indicates a beneficial mutation, a negative value indicates a deleterious mutation, and a value near zero suggests a neutral effect on protein function [23].
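The enrichment formula can be computed directly from read counts. The sketch below is a minimal Python version; the pseudocount (which keeps zero counts from producing log(0) or a division by zero) and the use of the natural logarithm are assumptions. The choice of log base only rescales the scores and leaves Spearman's ρ unchanged, because ρ depends only on ranks.

```python
import math


def dms_fitness(n_pre_mut, n_post_mut, n_pre_wt, n_post_wt, pseudocount=0.5):
    """Log enrichment of a variant relative to wild type, following the formula above.

    The pseudocount added to every count is an assumption of this sketch.
    """
    ratio_mut = (n_post_mut + pseudocount) / (n_pre_mut + pseudocount)
    ratio_wt = (n_post_wt + pseudocount) / (n_pre_wt + pseudocount)
    return math.log(ratio_mut / ratio_wt)


# A variant depleted four-fold while wild type is unchanged -> negative (deleterious).
print(round(dms_fitness(1000, 250, 1000, 1000, pseudocount=0.0), 3))  # log(0.25) -> -1.386
```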

S3F Model Architecture and Training Protocol

The S3F framework implements a sophisticated multi-scale representation learning approach through these key methodological components:

- Sequence Representation: Utilizes embeddings from protein language models (pLMs) like ESM-2-650M, which are pre-trained on massive protein sequence databases using masked language modeling objectives [26].

- Structure Encoder: Incorporates Geometric Vector Perceptron (GVP) networks to process protein backbone structures, enabling message passing among spatially proximate residues [22].

- Surface Encoder: Models protein surfaces as point clouds and facilitates message passing between neighboring points to capture detailed surface topology features critical for biomolecular interactions [22].

- Pre-training Strategy: The complete model is pre-trained using a residue type prediction objective on the CATH dataset, enabling zero-shot prediction of mutation effects without requiring DMS-specific training [26].
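The phrase "message passing among spatially proximate residues" can be made concrete with a toy example. The NumPy sketch below builds a k-nearest-neighbour graph over Cα coordinates and performs one round of mean aggregation over each residue's neighbours. It is deliberately not a Geometric Vector Perceptron (GVP layers carry both scalar and vector features and are rotation-equivariant) and not the S3F implementation; the function names and the choice of k are hypothetical.

```python
import numpy as np


def knn_residue_graph(ca_coords: np.ndarray, k: int = 10) -> np.ndarray:
    """Return an (L, k) array holding each residue's k nearest neighbours by Cα distance."""
    diff = ca_coords[:, None, :] - ca_coords[None, :, :]
    dist = np.linalg.norm(diff, axis=-1)
    np.fill_diagonal(dist, np.inf)  # exclude self-edges
    return np.argsort(dist, axis=1)[:, :k]


def mean_message_passing(features: np.ndarray, neighbours: np.ndarray) -> np.ndarray:
    """One round: concatenate each residue's features with the mean of its neighbours' features."""
    messages = features[neighbours].mean(axis=1)  # (L, k, D) -> (L, D)
    return np.concatenate([features, messages], axis=-1)


# Toy backbone: six residues on a straight line, 3.8 Å apart.
coords = np.array([[3.8 * i, 0.0, 0.0] for i in range(6)])
nbrs = knn_residue_graph(coords, k=2)
updated = mean_message_passing(np.ones((6, 4)), nbrs)
print(nbrs[0], updated.shape)
```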

The S3F implementation builds upon a foundational Sequence-Structure Fitness Model (S2F) by augmenting it with specialized surface representations, creating a comprehensive multi-scale architecture [22].

Comparative Performance Analysis

Quantitative Benchmark Results

Table 1: Performance comparison of protein fitness prediction models on ProteinGym benchmark

| Model | Architecture Type | Spearman Correlation | Key Features |
|---|---|---|---|
| S3F | Multi-scale (Sequence-Structure-Surface) | State-of-the-art | Integrates sequence, structure, and surface representations |
| S2F | Hybrid (Sequence-Structure) | Competitive | Combines sequence representations with backbone structure |
| EvoIF | Lightweight evolutionary | State-of-the-art/Competitive | Integrates within-family profiles and cross-family structural constraints |
| AlphaMissense | Hybrid sequence-structure | High (but not SOTA) | Incorporates structural prediction losses with weak supervision |
| SaProt | Structure-based | Limited improvement over sequence | Utilizes structure tokens from Foldseek |
| Sequence-only pLMs | Sequence-based | Baseline performance | Family-agnostic models trained on evolutionary sequences |

The S3F model establishes new state-of-the-art performance on the ProteinGym benchmark, demonstrating an 8.5% improvement in Spearman's rank correlation compared to previous leading methods when augmented with alignment information [22]. This significant enhancement underscores the value of integrating complementary protein representations across multiple biological scales. The model achieves this superior performance while maintaining substantially fewer trainable parameters compared to other baselines, reducing pre-training time to several days on commodity hardware [22].

Performance Across Functional Categories

Table 2: Performance breakdown by protein function categories

| Function Category | S3F Performance | Improvement over Sequence-Only | Key Insights |
|---|---|---|---|
| Structure-dependent functions | Highest improvement | Significant | Surface topology critical for accuracy |
| Binding interfaces | Strong improvement | Substantial | Enhanced representation of interaction surfaces |
| Enzymatic activity | Consistent gains | Moderate | Captures active site geometry constraints |
| Stability assays | Reliable prediction | Moderate | Backbone structure features particularly valuable |
| All assays combined | State-of-the-art | 8.5% overall | Comprehensive multi-scale advantage |

Breakdown analysis across different types of assays reveals that S3F's multi-scale learning approach provides consistent improvements, with particularly enhanced performance for structure-related functions where surface topology plays a critical role [22]. The incorporation of structure and surface features demonstrates the capacity to correct biases inherent in sequence-based methods and improves the model's ability to capture epistatic effects—interactions between mutations that are difficult to model using sequence information alone [22].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key research reagents and computational tools for protein fitness prediction

| Resource | Type | Function | Access |
|---|---|---|---|
| ProteinGym Benchmark | Dataset | 217 DMS assays with over 2.4M variants | Publicly available |
| CATH Dataset | Training Data | Protein domain structures for pre-training | Publicly available |
| ESM-2-650M | Protein Language Model | Provides sequence representations | Public weights |
| Geometric Vector Perceptron (GVP) | Architecture | Encodes protein backbone structure | Open source |
| S3F Codebase | Model Implementation | Multi-scale fitness prediction | GitHub repository |
| Foldseek | Tool | Structure similarity search for MSA construction | Publicly available |

Architectural Framework and Signaling Pathways

S3F Multi-Scale Integration Workflow

Protein Fitness Prediction Experimental Pipeline

Discussion: Implications for Protein Engineering and Drug Development

The superior performance of S3F as measured by Spearman correlation demonstrates the critical importance of multi-scale protein representation for accurate fitness prediction. By integrating sequence, structure, and surface information within a unified framework, S3F captures complementary biological constraints that operate at different spatial scales [22]. This approach addresses a fundamental limitation of previous methods that either focused on individual modalities or achieved only incremental improvements when combining sequence and structure alone.

The practical implications of these advances are substantial for researchers and drug development professionals. Accurate zero-shot fitness prediction enables computational screening of protein variants at scale, reducing reliance on expensive and time-consuming experimental assays. The S3F model's ability to prioritize mutations with beneficial functional effects accelerates protein engineering pipelines for therapeutic development, enzyme design, and biomaterial innovation [25] [22]. Furthermore, the model's capacity to capture epistatic interactions provides insights into the complex sequence-function relationships that govern protein evolution and function.

Future directions in this field will likely focus on extending multi-scale frameworks to incorporate additional biological information, such as dynamic conformational changes, co-factor interactions, and environmental conditions. As protein language models and structure prediction methods continue to advance, integration with comprehensive multi-scale approaches like S3F promises to further enhance our ability to navigate protein fitness landscapes and design novel proteins with precision.

The ability to accurately predict the fitness effects of protein mutations is a cornerstone of modern biology, with profound implications for understanding genetic diseases and engineering novel enzymes. However, the proliferation of computational predictors has made the assessment of their respective benefits a formidable challenge, often mired by evaluations on distinct, limited datasets. The ProteinGym benchmark has emerged as a critical solution to this problem, providing a large-scale, standardized platform for holistic comparison [18] [27] [28]. By encompassing millions of mutated sequences from diverse protein families and incorporating clinical annotations, ProteinGym enables robust validation of predictors across different functional regimes. This guide objectively compares the performance of various methodological paradigms using experimental data from ProteinGym, providing researchers with a data-driven framework for selecting appropriate tools. The analysis is framed within the broader thesis that Spearman correlation with deep mutational scanning (DMS) measurements provides a reliable assessment of a model's ability to capture the underlying protein fitness landscape, an assertion increasingly supported by independent clinical validations [29].

The ProteinGym Benchmarking Framework

Architecture and Composition

ProteinGym is a comprehensive collection of benchmarks specifically designed for protein fitness prediction and design. Its architecture is built around two primary components: a substitution benchmark comprising approximately 2.7 million missense variants across 217 DMS assays and 2,525 clinical proteins, and an indel benchmark including around 300,000 mutants across 74 DMS assays and 1,555 clinical proteins [18]. Each processed dataset within these benchmarks provides the mutant description, the full mutated sequence, a continuous DMS score representing experimental measurement (where higher values indicate higher fitness), and a binarized fitness classification [18]. This extensive scale and standardization address critical limitations of previous benchmarks that relied on limited sets of proteins, enabling performance evaluation across a vastly diverse sequence-space [28].
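The relationship between the mutant description and the full mutated sequence can be illustrated with a small helper. The sketch below assumes the common `<wild-type residue><1-based position><mutant residue>` notation, with multiple substitutions joined by colons (for example `A2V:K4D`). The function name is hypothetical, and the helper checks that each stated wild-type residue matches the sequence before substituting.

```python
def apply_mutant(wt_seq: str, mutant: str) -> str:
    """Build a mutated sequence from a colon-separated mutant string such as "A2V:K4D".

    Assumes 1-based positions; raises ValueError if a stated wild-type residue
    does not match the sequence.
    """
    seq = list(wt_seq)
    for sub in mutant.split(":"):
        wt_aa, pos, mut_aa = sub[0], int(sub[1:-1]), sub[-1]
        if seq[pos - 1] != wt_aa:
            raise ValueError(f"{sub}: expected {wt_aa} at position {pos}, found {seq[pos - 1]}")
        seq[pos - 1] = mut_aa
    return "".join(seq)


print(apply_mutant("MAGKL", "A2V:K4D"))  # MVGDL
```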

A key innovation of ProteinGym is its robust evaluation framework, which employs multiple metrics tailored to different application scenarios. For DMS benchmarks in the zero-shot setting, performance is assessed using Spearman correlation, Normalized Discounted Cumulative Gain (NDCG), Area Under the Curve (AUC), Matthews Correlation Coefficient (MCC), and Top-K recall [18]. These metrics are aggregated by UniProt ID to prevent bias toward proteins with multiple DMS assays and by different functional categories [18]. This multi-faceted approach provides a more holistic view of model capabilities compared to single-metric evaluations.

Experimental Validation and Clinical Relevance

The experimental foundation of ProteinGym rests on Deep Mutational Scanning (DMS) assays, which provide high-throughput functional measurements for thousands of variants in parallel [28]. A significant advantage of using DMS data for benchmarking is the substantial reduction in data circularity problems that plague many clinical variant assessments [29]. Unlike clinical datasets that rely on previously assigned pathogenicity labels (often used to train predictors), DMS datasets provide independent functional measurements, minimizing variant-level circularity [29].

Recent research has demonstrated a strong correspondence between the performance of variant effect predictors (VEPs) on DMS-based benchmarks and their accuracy in clinical variant classification, particularly for predictors not directly trained on human clinical variants [29]. This correspondence supports the use of ProteinGym as a reliable proxy for assessing the potential real-world utility of fitness predictors in medical contexts. The benchmark includes diverse functional assay types categorized as either direct assays (measuring the target protein's ability to carry out native functions like interactions with natural partners) or indirect assays (commonly growth rate experiments where the measured attribute is not directly controlled by the target protein) [29].

Diagram: End-to-End ProteinGym Evaluation Workflow

Performance Comparison of Methodological Paradigms

Quantitative Benchmark Results

ProteinGym has enabled the systematic comparison of over 70 diverse models from various computational biology subfields [27] [28]. The table below summarizes the performance of major methodological paradigms based on aggregated results from the substitution benchmark:

Table 1: Performance Comparison of Protein Fitness Prediction Paradigms on ProteinGym Substitution Benchmark

| Methodological Paradigm | Representative Models | Average Spearman Correlation | Key Strengths | Notable Limitations |
|---|---|---|---|---|
| Structure-Based Models | ESM-IF1, ProteinMPNN, MIF | Variable (see Table 2) | Excellent for stability assays; captures spatial constraints | Performance depends on structure quality; struggles with disordered regions |
| Protein Language Models | ESM2, TranceptEVE | Varies by model size and training | Strong generalizability; requires no explicit MSA | Lower performance on targets with deep MSAs |
| Evolutionary Models | EVE, DeepSequence | High for conserved proteins | Powerful co-evolutionary signals | Performance drops with shallow MSAs |
| Inverse Folding Methods | ESM-IF1, ProteinMPNN | Moderate to high | Explicit structure conditioning | Limited by structure availability/quality |
| Supervised Methods | Various | Context-dependent | Can leverage known assay data | Risk of overfitting; generalizability concerns |

The performance hierarchy among these paradigms is context-dependent, varying according to protein taxonomy, multiple sequence alignment (MSA) depth, and functional assay type [6] [28]. For instance, structure-based models particularly excel in predicting stability effects, while evolutionary models show strength in capturing functional constraints in well-conserved protein families.

Case Study: Structure-Based Predictors

Structure-based predictors leverage protein tertiary structure information to assess mutation effects. The advent of accurate structure prediction tools like AlphaFold2 has significantly increased the availability and utility of these methods [6]. Recent benchmarking on ProteinGym reveals several critical insights about this paradigm.

Table 2: Structure-Based Model Performance on Selected ProteinGym Assays

| Model | Structural Input | Average Spearman | Ordered Regions Performance | Disordered Regions Performance |
|---|---|---|---|---|
| ESM-IF1 | AlphaFold2 predicted | 0.42 | High | Significantly reduced |
| ESM-IF1 | Experimental structures | 0.38 | High | Moderate |
| ProtSSN | Multimodal (sequence + structure) | 0.45 | High | Moderate |
| SaProt | Structure-aware language model | 0.43 | High | Moderate to low |

A critical finding from ProteinGym analyses is that the choice of protein structure significantly impacts prediction accuracy. Contrary to expectations, models using AlphaFold2-predicted structures often achieve higher Spearman correlations than those using experimental structures (in 74.5% of monomeric assays and 80% of multimeric assays) [6]. This counterintuitive result may stem from predicted structures representing a "consensus" conformation that is potentially more reflective of the protein's average state in solution.
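A comparison like this one is a paired analysis, because both structure sources are scored on the same assays. The sketch below computes the fraction of assays on which setup A beats setup B, plus a two-sided Wilcoxon signed-rank test on the per-assay differences via `scipy.stats.wilcoxon`. The per-assay values in the example are made up for illustration, and the choice of the Wilcoxon test is an assumption rather than the test used in the cited study.

```python
import numpy as np
from scipy.stats import wilcoxon


def paired_comparison(rho_a, rho_b):
    """Compare two setups evaluated on the same assays (paired by assay).

    Returns (fraction of assays where A has the higher Spearman,
    two-sided Wilcoxon signed-rank p-value on the per-assay differences).
    """
    rho_a = np.asarray(rho_a, dtype=float)
    rho_b = np.asarray(rho_b, dtype=float)
    win_rate = float(np.mean(rho_a > rho_b))
    p_value = float(wilcoxon(rho_a, rho_b)[1])
    return win_rate, p_value


# Illustrative per-assay Spearman values (not ProteinGym results).
predicted_structures = [0.50, 0.42, 0.61, 0.33, 0.47, 0.52, 0.39, 0.58]
experimental_structures = [0.45, 0.40, 0.55, 0.35, 0.41, 0.50, 0.36, 0.52]
win_rate, p_value = paired_comparison(predicted_structures, experimental_structures)
print(win_rate, round(p_value, 3))
```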

However, structure-based models face particular challenges with intrinsically disordered regions (IDRs), which lack fixed 3D structures. Approximately 29% of ProteinGym DMS assays involve proteins with disordered regions in the sequence covered by the assay [6]. These regions affect not only structure-based models but also multi-modal models and protein language models, likely because disordered regions tend to be fast-evolving and less conserved [6].

Diagram: Impact of Disordered Regions on Prediction Accuracy Across Model Types

Experimental Protocols and Methodologies

Standardized Evaluation Methodology

The ProteinGym benchmarking protocol follows a rigorous, standardized methodology to ensure fair comparison across diverse models. The evaluation process for zero-shot fitness prediction involves several critical stages:

Data Preparation and Partitioning: The benchmark is stratified by UniProt IDs to avoid biasing results toward proteins with multiple DMS assays. Additional stratification by functional categories (activity, binding, expression, organismal fitness, and stability) ensures balanced assessment across functional domains [18].

Model Scoring: All models are evaluated on the same set of mutants using a consistent scoring interface. For substitution assays, models predict fitness effects based on the mutant sequence and, where applicable, structural information [18].

Performance Calculation: The primary metric for DMS benchmarks is Spearman's rank correlation coefficient between predicted scores and experimental measurements. This non-parametric measure assesses how well the model ranks variants by fitness without assuming a linear relationship [18] [29].

Statistical Aggregation: Performance is aggregated hierarchically, first within each UniProt ID and then within each functional category, so that proteins with many assays or over-represented categories do not dominate the summary. Bootstrapped standard errors are computed to reflect uncertainty in the aggregated metrics [18].
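The scoring and aggregation stages above can be sketched in a few lines of Python. This is a minimal illustration, not ProteinGym's implementation. It assumes each assay has already been reduced to paired lists of model scores and measured fitness values. Spearman's ρ is computed as the Pearson correlation of tie-averaged ranks, and the per-assay values are then averaged per UniProt ID, per functional category, and finally across categories. The record layout, the function names, and the choice to bootstrap over assays are assumptions made for illustration.

```python
import random
from collections import defaultdict
from math import sqrt
from statistics import mean, stdev


def average_ranks(values):
    """1-based ranks; tied values share the mean of the positions they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1  # extend over the run of tied values
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(predicted, measured):
    """Spearman's rho as the Pearson correlation of the two rank vectors."""
    if len(predicted) != len(measured) or len(predicted) < 2:
        raise ValueError("need two equal-length sequences with n >= 2")
    rx, ry = average_ranks(predicted), average_ranks(measured)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sxx = sum((a - mx) ** 2 for a in rx)
    syy = sum((b - my) ** 2 for b in ry)
    if sxx == 0 or syy == 0:
        raise ValueError("rho is undefined when all values are tied")
    return cov / sqrt(sxx * syy)


def aggregate(records):
    """Average rho per (category, UniProt ID), then per category, then overall.

    records: list of (uniprot_id, category, rho) tuples, one per assay.
    """
    per_protein = defaultdict(list)
    for uniprot_id, category, rho in records:
        per_protein[(category, uniprot_id)].append(rho)
    per_category = defaultdict(list)
    for (category, _), rhos in per_protein.items():
        per_category[category].append(mean(rhos))
    return mean(mean(values) for values in per_category.values())


def bootstrap_se(records, n_boot=1000, seed=0):
    """Standard error of aggregate() from resampling assays with replacement."""
    rng = random.Random(seed)
    stats = [aggregate(rng.choices(records, k=len(records))) for _ in range(n_boot)]
    return stdev(stats)
```

For example, with hypothetical assays ("P1", "stability", 0.6), ("P1", "stability", 0.4), ("P2", "stability", 0.2) and ("P3", "binding", 0.8), `aggregate` returns about 0.575, whereas a flat mean over the four assays gives 0.5. The two P1 assays are collapsed into one value before averaging, and the single binding assay carries its category's full weight.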

This methodology specifically addresses limitations of previous benchmarks by including appropriate metrics for both zero-shot and supervised settings, implementing careful aggregation strategies to prevent dataset bias, and providing confidence intervals for performance estimates [18] [28].

Case Study Protocol: Assessing Disordered Region Impact

To illustrate the depth of ProteinGym-enabled analyses, we detail the specific protocol used to assess the impact of intrinsically disordered regions on prediction accuracy [6]:

Disordered Region Identification:

- Annotate disordered regions in ProteinGym assay sequences using the DisProt database

- Calculate disorder content for each protein (percentage of residues in disordered regions)

- Categorize assays based on whether mutations fall in ordered, disordered, or mixed regions
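The identification step can be sketched as follows. The sketch assumes that disordered regions have already been retrieved (for example, from a DisProt export) as 1-based inclusive (start, end) intervals. It also assumes that mutants use substitution strings such as "A53T", with multiple substitutions joined by ":". The helper names and the rule that an assay is "mixed" whenever its mutated sites fall in both ordered and disordered residues are illustrative assumptions.

```python
def mutated_positions(mutant):
    """Positions in a mutant string such as 'A53T' or 'A30P:A53T' (assumed format)."""
    return [int(sub[1:-1]) for sub in mutant.split(":")]


def disordered_set(regions):
    """Expand 1-based inclusive (start, end) intervals into a set of positions."""
    positions = set()
    for start, end in regions:
        positions.update(range(start, end + 1))
    return positions


def disorder_content(seq_length, regions):
    """Percentage of residues 1..seq_length annotated as disordered."""
    covered = disordered_set(regions) & set(range(1, seq_length + 1))
    return 100.0 * len(covered) / seq_length


def categorize_assay(mutants, regions):
    """Label an assay 'ordered', 'disordered', or 'mixed' by where its mutations fall."""
    disordered = disordered_set(regions)
    flags = {pos in disordered for m in mutants for pos in mutated_positions(m)}
    if not flags:
        raise ValueError("assay contains no mutations")
    if flags == {True}:
        return "disordered"
    if flags == {False}:
        return "ordered"
    return "mixed"
```

For a hypothetical 100-residue protein with disordered termini [(1, 20), (81, 100)], `disorder_content` returns 40.0.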

Stratified Performance Analysis:

- Compute Spearman correlations separately for mutations in ordered vs. disordered regions

- Compare performance differentials across model architectures (structure-based, sequence-based, multi-modal)

- Statistically assess whether performance differences are significant using paired tests
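A minimal sketch of the stratified analysis follows, assuming per-variant disorder flags from the identification step. Spearman's ρ is computed separately for ordered and disordered variants within an assay, and per-assay values are then compared with a paired test. The source does not say which paired test was used. A two-sided sign-flip permutation test on the mean paired difference is shown here as one valid choice; a Wilcoxon signed-rank test would be a common alternative. All function names are hypothetical.

```python
import random
from math import sqrt


def _ranks(values):
    """1-based ranks; tied values share the mean of the positions they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman's rho as the Pearson correlation of tie-averaged ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with n >= 2")
    rx, ry = _ranks(x), _ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sxx = sum((a - mx) ** 2 for a in rx)
    syy = sum((b - my) ** 2 for b in ry)
    if sxx == 0 or syy == 0:
        raise ValueError("rho is undefined when all values are tied")
    return cov / sqrt(sxx * syy)


def stratified_spearman(predicted, measured, is_disordered):
    """Return (rho_ordered, rho_disordered) for the variants of one assay."""
    groups = {False: ([], []), True: ([], [])}
    for p, m, flag in zip(predicted, measured, is_disordered):
        groups[bool(flag)][0].append(p)
        groups[bool(flag)][1].append(m)
    return spearman(*groups[False]), spearman(*groups[True])


def paired_sign_flip_test(a, b, n_perm=10000, seed=0):
    """Two-sided p-value for 'mean(a - b) == 0' by randomly flipping pair signs."""
    diffs = [x - y for x, y in zip(a, b)]
    if not diffs:
        raise ValueError("need at least one pair")
    observed = abs(sum(diffs) / len(diffs))
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_perm):
        flipped = sum(d if rng.random() < 0.5 else -d for d in diffs) / len(diffs)
        if abs(flipped) >= observed:
            hits += 1
    return (hits + 1) / (n_perm + 1)  # add-one so the p-value is never exactly 0
```

In practice, `a` and `b` would hold one value per assay: the ordered-region and disordered-region ρ, or one architecture's disorder penalty against another's. Each assay therefore contributes exactly one pair.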

Structure Comparison:

- For proteins with both experimental and predicted structures, calculate structural similarity metrics

- Correlate structure quality metrics with prediction accuracy differences

- Examine specific case studies (e.g., α-synuclein, NKX3-1) where structural differences explain performance variations
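For the structure-comparison step, a superposition-free similarity score is convenient because it avoids aligning the experimental and predicted models first. The sketch below is a simplified, Cα-only variant of the lDDT idea. It takes the reference inter-residue distances within a 15 Å inclusion radius, measures the fraction preserved in the model within 0.5, 1, 2 and 4 Å, and averages over the four tolerances. It omits the all-atom and stereochemistry details of the full metric and assumes both structures list the same residues in the same order. Per-protein scores like this could then be rank-correlated with the per-assay accuracy differences.

```python
from itertools import combinations
from math import dist


def ca_lddt(reference, model, cutoff=15.0, tolerances=(0.5, 1.0, 2.0, 4.0)):
    """Simplified global C-alpha lDDT (0 to 1) between two coordinate lists.

    reference, model: lists of (x, y, z) C-alpha coordinates in angstroms,
    one per residue, in the same residue order.
    """
    if len(reference) != len(model):
        raise ValueError("structures must list the same residues in the same order")
    # Only residue pairs that are close in the reference structure are scored.
    pairs = [
        (i, j)
        for i, j in combinations(range(len(reference)), 2)
        if dist(reference[i], reference[j]) < cutoff
    ]
    if not pairs:
        raise ValueError("no residue pairs within the inclusion radius")
    fractions = []
    for tol in tolerances:
        preserved = sum(
            abs(dist(reference[i], reference[j]) - dist(model[i], model[j])) < tol
            for i, j in pairs
        )
        fractions.append(preserved / len(pairs))
    return sum(fractions) / len(fractions)
```

Moving the last residue of a three-residue chain 3 Å along the chain axis breaks two of the three pair distances by 3 Å. Those pairs fail the 0.5, 1 and 2 Å tolerances and pass only at 4 Å, which gives a score of 0.5.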

This protocol revealed that disordered regions negatively impact predictions across all model types, with structure-based models showing the largest performance degradation [6]. The analysis also found that 28% of unique UniProt IDs in ProteinGym are proteins annotated as having disordered regions in regions covered by DMS assays [6].

Essential Research Reagents and Computational Tools

Experimental and computational research in protein fitness prediction relies on a curated set of databases, benchmarks, and software tools. The following table details key resources referenced in the ProteinGym benchmark and related studies:

Table 3: Essential Research Reagent Solutions for Protein Fitness Prediction

| Resource Category | Specific Tools/Databases | Primary Function | Application in Benchmarking |
|---|---|---|---|
| Benchmark Platforms | ProteinGym, VenusMutHub, PROBE | Standardized model evaluation | Primary performance assessment on curated datasets [18] [5] [30] |
| Protein Structure Prediction | AlphaFold2, ESMFold, RoseTTAFold | Generate 3D protein structures | Provide structural inputs for structure-based predictors [6] |
| Sequence Databases | UniRef30/90, UniProt, BFD, Metaclust | Source of evolutionary information | Construct multiple sequence alignments for co-evolutionary methods [18] |
| DMS Repositories | MaveDB, ProteinGym raw assays | Experimental fitness measurements | Ground truth for model validation [18] [29] |
| Clinical Variant Databases | ClinVar, gnomAD, dbNSFP | Clinical significance, population frequency, and aggregated variant annotations | Clinical benchmarking and validation [18] [29] |
| Multi-modal Frameworks | DeepSCFold, MMPFP | Integrate sequence and structure data | Advanced prediction combining multiple information sources [4] [31] |

These resources collectively enable comprehensive assessment and development of protein fitness predictors. Researchers should select tools based on their specific application requirements, considering factors such as protein family characteristics, available data types (sequence, structure, or both), and computational resources.

The ProteinGym benchmark has established itself as an indispensable resource for validating protein fitness predictions, enabling direct comparison of diverse methodological paradigms on a standardized platform. Through systematic evaluation of over 70 models, several key insights have emerged: (1) model performance is highly context-dependent, varying by protein taxonomy, MSA depth, and functional assay type; (2) structure-based predictors show particular promise but are sensitive to structural accuracy and struggle with disordered regions; (3) multi-modal approaches that intelligently combine sequence and structural information generally provide more robust performance across diverse protein environments; and (4) DMS-based benchmarking using Spearman correlation provides a reliable assessment strategy that correlates well with clinical classification performance for methods not exposed to clinical training data.

As the field advances, several challenges remain, including improved handling of disordered regions, better integration of multi-modal information, and development of more robust evaluation metrics. The continued expansion of ProteinGym with new assays and model submissions will further solidify its role as the gold standard for protein fitness prediction validation, ultimately accelerating both therapeutic development and protein engineering applications.

The accurate prediction of protein structures represents one of the most significant advances in computational biology in recent years. However, the practical utility of these predicted models depends heavily on our ability to assess their quality and reliability. Quality Assessment (QA) methods serve as the critical bridge between raw structural predictions and their meaningful biological application, particularly in protein function prediction research. The Critical Assessment of protein Structure Prediction (CASP) experiments have been instrumental in driving progress in this field by providing standardized, blind benchmarks for evaluating methodological advancements [32]. These community-wide assessments have created a competitive yet collaborative framework in which research groups worldwide test their methods on experimentally determined but unpublished structures, enabling objective comparison of performance and tracking of progress over time.

Within the context of protein function prediction, structural model quality estimation takes on even greater importance. Research has consistently demonstrated that function annotation accuracy is highly dependent on structural model quality, with poor-quality models leading to erroneous functional inferences [33]. This relationship has motivated the development of sophisticated QA methods that can accurately estimate both local (per-residue) and global (per-model) reliability, enabling researchers to identify trustworthy regions of structural models suitable for functional analysis. The integration of these QA methods with function prediction pipelines has become essential for accurate functional annotation, particularly for the millions of proteins that lack experimental characterization but have been modeled computationally [33].

CASP Challenges: The Gold Standard for Method Evaluation

Evolution of CASP Evaluation Frameworks

The CASP challenges have established increasingly sophisticated evaluation methodologies since their inception. CASP employs a time-delayed evaluation strategy in which predictors submit models for proteins whose structures have been experimentally determined but not yet published. Once the structures become publicly available, the organizers assess these predictions against them using standardized metrics [32]. Because the answers are unknown to participants at submission time, this blind design prevents methods from being tuned to the test set and supports objective comparison. The companion CAFA (Critical Assessment of Functional Annotation) challenge applies a similar blind, time-delayed design to protein function prediction. Its evaluation framework expanded significantly from CAFA1 to CAFA2, which featured larger and more varied datasets and additional assessment metrics [32]. This expansion has allowed more comprehensive benchmarking across different protein classes and ontologies.

The CAFA evaluation methodology distinguishes between two fundamental assessment approaches: protein-centric evaluation and term-centric evaluation [32]. The protein-centric approach assesses a method's ability to predict all correct ontology terms for a given protein. It treats the problem as a multi-label classification task whose output space is extremely large, because there are so many possible functional terms. In contrast, the term-centric approach evaluates how well a method assigns a specific ontology term to the appropriate protein sequences, framing the task as a binary classification problem for each term [32]. Both approaches provide valuable insights: the former is particularly relevant for comprehensive function prediction, and the latter for identifying specific functional attributes.
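The two views can be contrasted on a toy example. This minimal sketch follows the style of CAFA's definitions. It assumes predictions are stored as {protein: {term: score}} and ground truth as {protein: set of terms}, and the function names are hypothetical. The protein-centric function averages precision over proteins with at least one term scored at or above the threshold τ, and averages recall over all benchmark proteins. The term-centric function scores a single term as a binary classifier across proteins using ROC AUC; treating an unscored protein as score 0.0 is an assumption.

```python
def protein_centric(predictions, truth, tau):
    """Precision and recall at threshold tau, averaged over proteins.

    predictions: {protein: {term: score}}; truth: {protein: set of true terms}.
    Precision is averaged over proteins with at least one term scored >= tau;
    recall is averaged over every benchmark protein.
    """
    precisions, recalls = [], []
    for protein, true_terms in truth.items():
        if not true_terms:
            raise ValueError(f"{protein} has no annotated terms")
        predicted = {t for t, s in predictions.get(protein, {}).items() if s >= tau}
        hits = len(predicted & true_terms)
        if predicted:
            precisions.append(hits / len(predicted))
        recalls.append(hits / len(true_terms))
    precision = sum(precisions) / len(precisions) if precisions else 0.0
    return precision, sum(recalls) / len(recalls)


def term_centric_auc(predictions, truth, term):
    """ROC AUC for one term, with every benchmark protein as a binary example."""
    # Unscored proteins are treated as score 0.0 (an assumption).
    pos = [predictions.get(p, {}).get(term, 0.0) for p in truth if term in truth[p]]
    neg = [predictions.get(p, {}).get(term, 0.0) for p in truth if term not in truth[p]]
    if not pos or not neg:
        raise ValueError("term needs both positive and negative proteins")
    # Probability that a random positive outscores a random negative (ties = 1/2).
    wins = sum((sp > sn) + 0.5 * (sp == sn) for sp in pos for sn in neg)
    return wins / (len(pos) * len(neg))
```

The same predictions therefore yield one precision/recall pair per threshold in the protein-centric view, but one AUC per term in the term-centric view.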

Benchmark Construction and Evaluation Metrics

CAFA employs rigorously constructed benchmark sets to ensure fair and comprehensive method assessment. In CAFA2, the organizers introduced no-knowledge benchmarks (proteins without any experimental annotations prior to prediction) and limited-knowledge benchmarks (proteins with some prior experimental annotations in certain ontologies but not others) [32]. This distinction allows evaluators to assess how different types of prior knowledge affect prediction performance. Additionally, CAFA2 implemented both full evaluation mode (assessing all benchmark proteins) and partial evaluation mode (assessing only proteins for which a method made predictions), accommodating both general-purpose methods and specialized approaches designed for specific protein classes [32].
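The effect of the full and partial evaluation modes on a protein-centric summary can be sketched with an Fmax-style score, the maximum over thresholds of the harmonic mean of averaged precision and recall. This is a minimal sketch under stated assumptions: the data layout ({protein: {term: score}} and {protein: set of terms}), the function name, and the 0.01 to 1.00 threshold grid are illustrative choices, not the CAFA assessment code.

```python
def fmax(predictions, truth, mode="full", thresholds=None):
    """Protein-centric Fmax over a grid of decision thresholds.

    mode="full": every benchmark protein counts; unpredicted proteins get recall 0.
    mode="partial": only proteins with at least one prediction are evaluated.
    """
    if mode not in ("full", "partial"):
        raise ValueError("mode must be 'full' or 'partial'")
    if thresholds is None:
        thresholds = [i / 100 for i in range(1, 101)]  # assumed 0.01..1.00 grid
    proteins = [p for p in truth if mode == "full" or predictions.get(p)]
    best = 0.0
    for tau in thresholds:
        precisions, recalls = [], []
        for p in proteins:
            predicted = {t for t, s in predictions.get(p, {}).items() if s >= tau}
            hits = len(predicted & truth[p])
            if predicted:
                precisions.append(hits / len(predicted))
            recalls.append(hits / len(truth[p]))
        if not precisions:
            continue  # no protein has a prediction at this threshold
        pr = sum(precisions) / len(precisions)
        rc = sum(recalls) / len(recalls)
        if pr + rc > 0:
            best = max(best, 2 * pr * rc / (pr + rc))
    return best
```

Take a hypothetical truth {"P1": {"t1", "t2"}, "P2": {"t3"}} with predictions only for P1 (t1 at 0.9, t2 at 0.8). Partial mode returns 1.0, while full mode returns about 0.67, because the unpredicted P2 contributes zero recall. This is why a specialized method can look strong in partial mode yet be penalized in full mode.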

The metrics used in these evaluations have become increasingly sophisticated. For protein-centric function prediction in CAFA, precision-recall curves and remaining uncertainty-misinformation (RU-MI) curves serve as the primary assessment tools [32]; together they capture the trade-off between prediction completeness and accuracy. For quality assessment methods evaluated on CASP targets, standard metrics include mean squared error (MSE), mean absolute error (MAE), Pearson's correlation coefficient (Cor), and Spearman's correlation coefficient (SpearCor) [13]. Each captures a different aspect of performance, and Spearman correlation is particularly valuable for assessing the ranking capability of QA methods because it does not assume a linear relationship.

Table 1: Key Evaluation Metrics in CASP Challenges

| Metric | Formula | Interpretation | Application in CASP |
|---|---|---|---|
| Mean Squared Error (MSE) | $\frac{1}{n}\sum_{i=1}^{n}(y_i-\hat{y}_i)^2$ | Average squared difference between predicted and actual values | Quantifies overall deviation in accuracy estimates |
| Mean Absolute Error (MAE) | $\frac{1}{n}\sum_{i=1}^{n}\lvert y_i-\hat{y}_i\rvert$ | Average absolute difference between predicted and actual values | Quantifies deviation; more robust to outliers than MSE |
| Pearson's Correlation (Cor) | $\frac{\sum_{i=1}^{n}(\hat{y}_i-\bar{\hat{y}})(y_i-\bar{y})}{\sqrt{\sum_{i=1}^{n}(\hat{y}_i-\bar{\hat{y}})^2\sum_{i=1}^{n}(y_i-\bar{y})^2}}$ | Linear relationship between predicted and actual values | Assesses linear association in accuracy scores |
| Spearman's Correlation (SpearCor) | $1-\frac{6\sum_{i=1}^{n}d_i^2}{n(n^2-1)}$, where $d_i$ is the difference between the ranks of $\hat{y}_i$ and $y_i$ (exact when there are no ties) | Monotonic relationship based on rank order | Evaluates ranking capability of QA methods |
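The four metrics in Table 1 can be computed directly from paired predicted and observed quality scores, for example predicted versus true lDDT per model. The minimal sketch below uses hypothetical function names. It computes Spearman's ρ as the Pearson correlation of tie-averaged ranks, which agrees with the $1-6\sum d_i^2/(n(n^2-1))$ form when there are no ties. It also shows why the rank-based metric complements the error metrics: a QA method that overestimates every model by a constant 0.2 has nonzero MSE and MAE but still ranks the models perfectly.

```python
from math import sqrt


def _pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(sxx * syy)


def _ranks(values):
    """1-based ranks; tied values share the mean of the positions they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def qa_metrics(predicted, true):
    """MSE, MAE, Pearson (Cor) and Spearman (SpearCor) for a QA method."""
    if len(predicted) != len(true) or len(true) < 2:
        raise ValueError("need two equal-length sequences with n >= 2")
    n = len(true)
    return {
        "MSE": sum((t - p) ** 2 for p, t in zip(predicted, true)) / n,
        "MAE": sum(abs(t - p) for p, t in zip(predicted, true)) / n,
        "Cor": _pearson(predicted, true),
        "SpearCor": _pearson(_ranks(predicted), _ranks(true)),
    }
```

With hypothetical true scores [0.2, 0.4, 0.6, 0.7] and predictions [0.4, 0.6, 0.8, 0.9], MSE is about 0.04 and MAE about 0.2, while both correlations equal 1.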

Advanced QA Methods: Architecture and Performance

Geometric Deep Learning Approaches

Recent advances in quality assessment have been driven by geometric deep learning methods that explicitly incorporate three-dimensional structural information. The Geometry-Complete Perceptron Network for EMA (GCPNet-EMA) represents a significant innovation in this space by operating directly on 3D protein structures represented as point clouds [13]. This method featurizes protein structures using both scalar features (e.g., residue types) and vector-valued features (e.g., directional vectors between residues), then applies geometry-complete graph convolution layers to learn expressive representations of protein geometry [13]. This architecture allows GCPNet-EMA to capture complex geometric relationships that traditional methods might miss, leading to more accurate quality estimates.
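To make the distinction between scalar and vector features concrete, the sketch below builds a k-nearest-neighbour residue graph from Cα coordinates. Each node gets a one-hot residue type (a scalar feature), and each edge gets a unit direction vector (a vector feature) plus a distance. It is a hypothetical illustration of this kind of input representation only, not GCPNet-EMA's featurization code or its geometry-complete convolution layers. The use of Cα atoms, the value of k, and the one-hot encoding are assumptions.

```python
from math import dist

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def featurize(sequence, ca_coords, k=8):
    """Residue k-NN graph with scalar node features and vector edge features.

    Returns (node_scalars, edges). node_scalars[i] is a one-hot residue type
    (all zeros for non-standard residues); each edge is (i, j, unit_vector,
    distance), where unit_vector points from residue i to residue j.
    """
    if len(sequence) != len(ca_coords):
        raise ValueError("one C-alpha coordinate per residue is required")
    node_scalars = [[1.0 if aa == ref else 0.0 for ref in AMINO_ACIDS] for aa in sequence]
    edges = []
    for i, xi in enumerate(ca_coords):
        others = [j for j in range(len(ca_coords)) if j != i]
        for j in sorted(others, key=lambda n: dist(xi, ca_coords[n]))[:k]:
            d = dist(xi, ca_coords[j])
            if d == 0:
                raise ValueError(f"residues {i} and {j} share a coordinate")
            unit = tuple((b - a) / d for a, b in zip(xi, ca_coords[j]))
            edges.append((i, j, unit, d))
    return node_scalars, edges
```

Rotating all coordinates rotates every edge's unit vector in the same way but leaves distances and residue types unchanged. The direction vectors therefore carry orientation information that geometry-aware layers can exploit, whereas the distances are rotation-invariant.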

The performance advantages of this geometric approach are substantial. In its benchmarks, GCPNet-EMA delivered 47% faster inference while achieving over 10% higher correlation with ground-truth measures of per-residue structural accuracy than state-of-the-art baseline methods [13]. For tertiary structure assessment, GCPNet-EMA achieved a per-residue Pearson correlation of 0.7058 and a per-model correlation of 0.9046, outperforming methods such as AlphaFold 2's predicted lDDT (plDDT) and EnQA-MSA [13]. The gains extend to multimer structure assessment, where GCPNet-EMA showed 6% higher correlation for per-target accuracy estimation than existing methods [13]. This performance is attributed to the method's ability to leverage rich geometric information directly from 3D structures through its physics-inspired architecture.

Statistics-Informed Graph Networks for Function Prediction