Beyond Generalist Models: A Practical Guide to Domain-Adaptive Pretraining of ESM2 for Target Protein Families

This article provides a comprehensive guide for researchers and drug discovery scientists on applying domain-adaptive pretraining (DAPT) to the ESM2 protein language model for specific protein families.

Beyond Generalist Models: A Practical Guide to Domain-Adaptive Pretraining of ESM2 for Target Protein Families

Abstract

This article provides a comprehensive guide for researchers and drug discovery scientists on applying domain-adaptive pretraining (DAPT) to the ESM2 protein language model for specific protein families. We first establish the foundational principles, explaining why and when DAPT outperforms generalist ESM2 models for tasks like function prediction, variant effect analysis, and structure inference in specialized families (e.g., GPCRs, kinases, antibodies). We then detail a step-by-step methodological pipeline for data curation, model configuration, and computational implementation. The guide addresses common challenges, including dataset bias, overfitting, and resource constraints, with practical optimization strategies. Finally, we present a framework for rigorous validation, comparing domain-adapted models against baseline ESM2 and other specialized tools to assess performance gains in real-world biological applications. The conclusion synthesizes key insights and outlines future implications for accelerating therapeutic discovery.

Why DAPT with ESM2? Unlocking High-Performance for Your Protein Family

The Power and Limitation of Generalist Protein Language Models

Generalist protein language models (pLMs), such as the ESM-2 suite, represent a paradigm shift in computational biology. Trained on billions of protein sequences, they learn fundamental principles of protein structure, function, and evolution. Their power lies in zero-shot prediction capabilities for tasks like structure prediction, variant effect scoring, and function annotation without family-specific training. However, their limitation stems from a bias towards abundant, well-studied families in public databases, leading to reduced performance on under-represented, novel, or highly specialized protein families (e.g., certain viral proteases, orphan GPCRs, or extremophile enzymes). This creates a compelling case for domain-adaptive pretraining (DAPT) to specialize these generalist models for specific research applications, enhancing predictive accuracy and biological relevance.

Quantitative Performance: Generalist pLMs vs. Domain-Adapted Models

Data compiled from recent benchmark studies (2023-2024).

Table 1: Performance Comparison on Diverse Protein Family Tasks

| Model / Metric | ESM-2 (650M params) | ESM-2 DAPT (Antibody) | ESM-2 DAPT (GPCR) | Specialized Model (e.g., AF2) |

|---|---|---|---|---|

| Fold Prediction (Sc5.3 Avg. TM-score) | 0.72 | - | - | 0.85 |

| Variant Effect (Spearman's ρ on ClinVar) | 0.45 | - | - | 0.48 |

| Antibody Affinity Prediction (R²) | 0.31 | 0.67 | - | 0.58 |

| GPCR-Ligand Docking (RMSD < 2Å %) | 12% | - | 41% | 38% |

| Extremophile Enzyme Stability (MSE kcal/mol) | 1.8 | - | - | 1.5 |

| Training Data Scale (Sequences) | ~65M | ~65M + 0.5M | ~65M + 0.2M | Varies |

| Inference Speed (seqs/sec on A100) | ~1000 | ~950 | ~950 | ~10 |

Key Insight: Generalist models provide strong baselines but are outperformed by domain-adapted versions on their specialized tasks. DAPT yields significant gains without catastrophic forgetting of general knowledge.

Application Notes & Protocols for Domain-Adaptive Pretraining with ESM2

Protocol: Curating a High-Quality Domain-Specific Dataset

Objective: Assemble a sequence dataset for a target protein family (e.g., Cytochrome P450s) to adapt ESM2. Steps:

- Family Definition: Use Pfam (e.g., PF00067) or InterPro entries to obtain seed sequences.

- Homology Expansion: Run

jackhmmeragainst the UniRef100 database for 3 iterations (E-value < 1e-10) to gather homologous sequences. - Deduplication & Quality Filtering: Cluster sequences at 90% identity using

MMseqs2and select a representative from each cluster. Remove sequences with ambiguous residues (>5% X, B, Z, J). - Split: Divide the final set into pretraining (95%) and validation (5%) subsets. Do not include downstream evaluation test sequences. Reagents:

- Software: HMMER v3.3.2, MMseqs2, Biopython.

- Databases: UniRef100 (2024_01), Pfam (v36.0).

Protocol: Implementing DAPT for ESM-2

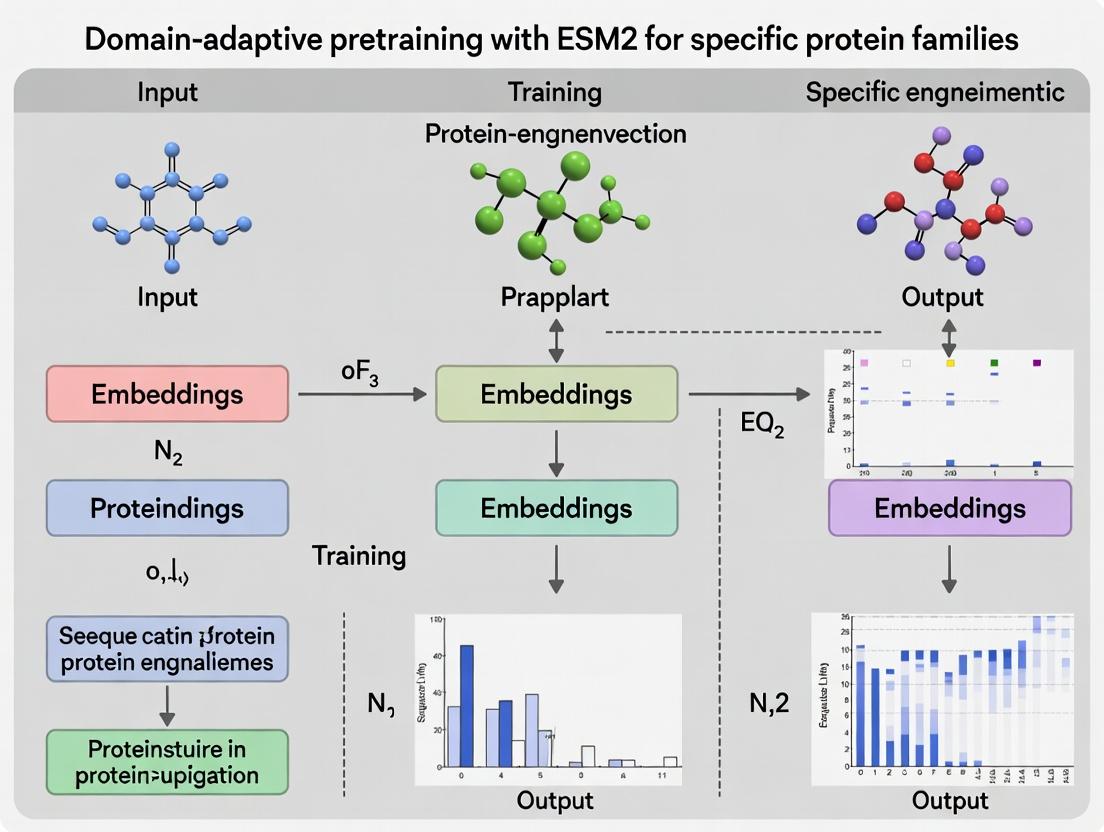

Objective: Continue pretraining of ESM-2 (e.g., 650M parameter version) on a domain-specific dataset. Workflow Diagram:

Diagram Title: DAPT Workflow for Specializing ESM-2

Steps:

- Setup: Use the

fairseqframework and the ESM-2 codebase. Initialize withesm2_t33_650M_UR50Dweights. - Configuration: Modify training parameters in the config YAML:

max_tokens: 4096(batch size)update_freq: 2learning_rate: 5e-5(warmup 500 steps, cosine scheduler)total_num_update: 10000(or until validation loss plateaus)mask_prob: 0.15(standard MLM)

- Execution: Run training, monitoring validation perplexity. Early stopping is recommended.

- Validation: Evaluate the adapted model's perplexity on the held-out domain validation set. Compare embeddings via t-SNE plots against the base model to confirm domain specialization.

Protocol: Downstream Task Fine-tuning (Variant Effect Prediction)

Objective: Fine-tune a domain-adapted ESM-2 model to predict the functional impact of missense variants in your target family. Steps:

- Task Format: Format data as (wild-type sequence, mutant sequence, label). Labels can be continuous (fitness score) or binary (pathogenic/benign).

- Model Head: Attach a regression or classification head on top of the pooled sequence representation (e.g., from the

<cls>token or mean of last layer). - Training: Freeze most transformer layers, only fine-tuning the last 2-3 layers and the task head. Use a lower learning rate (1e-5) for 20-50 epochs.

- Evaluation: Use standard metrics (Spearman's ρ, AUC-ROC) on a held-out test set from domain-specific benchmarks (e.g., a curated set of P450 mutant activity data).

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for pLM Domain Adaptation Research

| Item / Resource | Function & Description | Example / Source |

|---|---|---|

| Base pLM Checkpoints | Provides the foundational protein language model to adapt. | ESM-2 (150M, 650M, 3B params) from FAIR; ProtT5 from RostLab. |

| Large-Scale Sequence DBs | Source for domain-specific sequence retrieval and expansion. | UniRef100, MGnify, NCBI NR, domain-specific databases (GPCRdb, SAbDab). |

| Homology Search Tools | Expands a seed set of proteins into a diverse homology-based dataset. | HMMER, MMseqs2 (fast, sensitive), DIAMOND (ultra-fast). |

| Computation Framework | Software library for loading models and running DAPT/fine-tuning. | fairseq, Hugging Face Transformers, PyTorch Lightning. |

| Embedding Analysis Suite | Tools to visualize and analyze model embeddings pre- and post-adaptation. | Sci-kit learn (PCA, t-SNE), UMAP, Seaborn/Matplotlib for plotting. |

| Task-Specific Benchmarks | Curated datasets to evaluate model performance on downstream applications. | ProteinGym (variant effect), AntibodyBench, custom domain assays. |

| High-Performance Compute | GPU clusters necessary for training large models (650M+ parameters). | NVIDIA A100/H100 GPUs (40-80GB VRAM), multi-node training setup. |

Limitations & Strategic Considerations

Limitations of the Generalist-to-DAPT Approach:

- Data Scarcity: For very small families (<1000 quality sequences), DAPT benefits are marginal and may lead to overfitting.

- Catastrophic Forgetting: While minimal, some general protein knowledge can be eroded. Controlled, low-learning-rate DAPT is crucial.

- Computational Cost: DAPT of a 650M-parameter model requires significant GPU resources (~1-3 days on 8x A100).

- Black-Box Nature: Interpretability of what the model learns during adaptation remains challenging.

Pathway to Application in Drug Development:

Diagram Title: DAPT in Drug Development Pipeline

Conclusion: Domain-adaptive pretraining of generalist pLMs like ESM-2 is a powerful, practical method to bridge the gap between broad model knowledge and deep, domain-specific research needs. By following the outlined protocols and leveraging the toolkit, researchers can build more accurate, robust, and actionable models for protein engineering, variant interpretation, and therapeutic design.

Domain-adaptive pretraining (DAPT) is a transfer learning strategy where a large, general-purpose model, initially pretrained on a broad corpus, undergoes a second phase of pretraining on a specialized, in-domain dataset. This bridges the gap between general knowledge and domain-specific patterns, enhancing performance on downstream tasks within that niche. Originally developed in Natural Language Processing (NLP) to adapt models like BERT to biomedical or legal texts, DAPT's paradigm is now pivotal in computational biology, particularly for protein sequence modeling with architectures like ESM2.

From NLP to Proteins: Conceptual Translation

| NLP Concept | Protein Sequence Analogue | Purpose in DAPT |

|---|---|---|

| General Corpus (e.g., Wikipedia) | Broad Protein Database (e.g., UniRef) | Initial pretraining learns universal language/syntax (grammar/protein folding constraints). |

| Target Domain Corpus (e.g., PubMed) | Specific Protein Family Set (e.g., Kinases) | Adaptive pretraining learns specialized vocabulary/patterns (active site motifs, family variations). |

| Downstream Task (e.g., Sentiment Analysis) | Downstream Task (e.g., Stability Prediction) | Final fine-tuning for a specific predictive objective. |

Quantitative Efficacy of DAPT in Protein Modeling (Selected Studies)

| Model (Base) | Domain Adaptation Corpus | Downstream Task | Performance Gain vs. Base Model | Key Insight |

|---|---|---|---|---|

| ESM2 (650M params) | Protein kinases (50k seqs) | Catalytic residue prediction | +12% F1-score | DAPT captures family-specific active site signatures. |

| ProtBERT | Antimicrobial peptides (15k seqs) | MIC value regression | RMSE improved by 22% | Enhanced representation of physicochemical properties. |

| ESM-1b | Enzyme Commission (EC) classes | Enzyme function prediction | +8% accuracy at family level | Learns functional sub-category motifs. |

Application Notes & Protocols for Protein Family Research

This protocol outlines the process for applying DAPT to ESM2 for a specific protein family, framed within a thesis on advancing protein engineering and drug discovery.

Phase 1: Curating the Domain-Specific Pretraining Corpus

Objective: Assemble a high-quality, targeted sequence dataset.

- Source Databases: UniProtKB, Pfam, InterPro, family-specific databases (e.g., KinHub).

- Protocol:

- Family Definition: Identify seed sequences via Pfam IDs (e.g., PF00069 for protein kinase) or keyword search.

- Homology Gathering: Use JackHMMER or MMseqs2 for iterative sequence profile search against UniRef100 to gather homologous sequences. Set an E-value threshold (e.g., 1e-10) for inclusion.

- Deduplication & Clustering: Apply CD-HIT at 90% sequence identity to reduce redundancy and bias.

- Quality Filtering: Remove fragments (<80% of canonical length) and sequences with ambiguous residues ("X") exceeding 5%.

- Splitting: Split the final corpus into training (95%) and validation (5%) sets for DAPT.

Phase 2: Implementing DAPT on ESM2

Objective: Adapt a general ESM2 model to the target protein family.

Research Reagent Solutions

| Item | Function/Description | Example/Note |

|---|---|---|

| Pretrained ESM2 Model | Foundational protein language model. Provides initial parameters. | ESM2t363B_UR50D (3B params, 36 layers). Download from Hugging Face. |

| Domain-Specific Sequence Corpus | Target dataset for secondary pretraining. | FASTA file of curated kinase sequences. |

| Hardware (GPU) | Accelerates model training. | NVIDIA A100 (40GB+ VRAM recommended). |

| Deep Learning Framework | Library for model implementation and training. | PyTorch, PyTorch Lightning. |

| Optimizer | Algorithm for updating model weights. | AdamW with decoupled weight decay. |

| Learning Rate Scheduler | Adjusts learning rate during training for stability. | Linear warmup followed by cosine decay to zero. |

| Training Monitoring Tool | Tracks loss and metrics in real-time. | Weights & Biases (W&B) or TensorBoard. |

Experimental Protocol:

- Environment Setup: Install PyTorch,

transformerslibrary, andbiopython. - Data Loading: Create a custom Dataset class to tokenize sequences using the ESM2 tokenizer. Apply masked language modeling (MLM) corruption dynamically (15% masking probability).

- Model Initialization: Load the pretrained ESM2 model. The vocabulary remains unchanged.

- Training Configuration:

- Objective: Masked Language Modeling (MLM).

- Batch Size: Maximize for GPU memory (e.g., 128 sequences).

- Optimizer: AdamW (lr = 5e-5, weight_decay = 0.01).

- Scheduler: Warmup for 500 steps, then cosine decay over total steps.

- Epochs: 10-50, monitoring validation perplexity for early stopping.

- Execution: Train the model on the domain corpus, validating perplexity on the held-out set.

- Checkpointing: Save the final adapted model.

Phase 3: Downstream Task Fine-Tuning & Evaluation

Objective: Leverage the domain-adapted model for a specific predictive task.

Protocol for a Stability Prediction Task (Regression):

- Task Dataset: Acquire experimental data (e.g., melting temperature, ΔΔG) for mutants of the protein family.

- Model Modification: Replace the ESM2 MLM head with a regression head (dropout layer followed by linear projection to a single output).

- Representation Extraction: For each mutant, use the domain-adapted ESM2 to compute the mean representation from the final layer for all tokens. Use this as the feature input to the regression head.

- Fine-Tuning: Train the entire model end-to-end on the mutant dataset using Mean Squared Error (MSE) loss. Use a lower learning rate (e.g., 1e-5) to avoid catastrophic forgetting.

- Evaluation: Perform cross-validation and compare against: (a) the base ESM2 model, and (b) a baseline method (e.g., Evoformer from AlphaFold2) using metrics like Pearson's R and RMSE.

Visualizations

DAPT Workflow for Protein Sequences

ESM2 Model with Task Head for DAPT

Within the broader thesis on domain-adaptive pretraining with ESM2 for specific protein families, this document establishes application notes and protocols. General protein language models (pLMs) like ESM-2 excel at learning universal sequence-structure-function relationships. However, certain protein families exhibit characteristics that necessitate the development of a specialized, family-focused model to achieve research or development goals. These specialized models are created through continued pretraining or fine-tuning of a base model (e.g., ESM-2-650M) on a curated dataset of the target family.

Key Quantitative Indicators for Specialized Model Development

The decision to build a specialized model should be data-driven. The following table consolidates key quantitative indicators gathered from recent literature and benchmark analyses.

Table 1: Key Indicators Justifying Specialized Model Development

| Indicator Category | Quantitative Threshold / Description | Rationale & Impact |

|---|---|---|

| Sequence Diversity | Average pairwise identity < 20-30% within the family. | Base pLMs may fail to capture very distant evolutionary relationships. Specialized training can learn family-specific substitution patterns. |

| Functional Specificity | Family performs a unique biochemical reaction (e.g., novel enzyme class) or has a distinct binding motif not prevalent in training data. | General models lack sufficient examples, leading to poor functional site prediction. |

| Structural Deviation | Family has a rare fold (e.g., <5 representatives in PDB) or unusual structural features (long disordered regions, unique domain arrangements). | Structural embeddings from general models may be inaccurate for atypical conformations. |

| Performance Gap | Baseline ESM2 per-residue accuracy on a key task (e.g., contact prediction, variant effect) is >15% lower than state-of-the-art family-specific tools. | Demonstrates clear inadequacy of the general model for the required predictive task. |

| Data Availability | Availability of >5,000 high-quality, non-redundant sequences for the family; or >50 experimentally determined structures. | Enables effective domain-adaptive pretraining without severe overfitting. |

| Variant Saturation | Research requires high-precision prediction for deep mutational scanning (DMS) data, where general model performance plateaus. | Specialized models can learn nuanced stability/fitness landscapes. |

Experimental Protocol: Evaluating the Need and Building a Specialized ESM2 Model

Protocol 3.1: Benchmarking General pLM Performance on Your Family

Objective: Quantify the performance gap of a base ESM2 model on your protein family for a task of interest (e.g., contact prediction, fluorescence prediction).

Materials:

- Hardware: GPU server (e.g., NVIDIA A100 with 40GB+ VRAM).

- Software: Python 3.9+, PyTorch, BioPython, HuggingFace

transformerslibrary,esmlibrary. - Data: Curated multiple sequence alignment (MSA) and/or structure set for target family. Hold-out set of annotated examples for the task.

Procedure:

- Data Preparation: Split your family data into training (80%) and test (20%) sets. Ensure no significant sequence identity between splits.

- Baseline Inference: Use the pre-trained

esm2_t33_650M_UR50Dmodel to generate per-residue embeddings for your test sequences. - Task-Specific Evaluation: Train a simple downstream predictor (e.g., a two-layer feed-forward network) on the training set embeddings for your task. Evaluate this predictor on the test set.

- Benchmark Comparison: Compare the performance (e.g., AUC, Pearson's R) against published benchmarks for standard protein sets or against a simple MSA-based method. A performance deficit meeting the threshold in Table 1 indicates a candidate for specialization.

Protocol 3.2: Domain-Adaptive Pretraining of ESM2 on a Protein Family

Objective: Create a family-specialized model by continuing the pretraining of ESM2 on a curated sequence dataset.

Materials:

- Research Reagent Solutions: See Table 2.

- Hardware/Software: As in Protocol 3.1, plus

deepspeedfor optimized training.

Procedure:

- Dataset Curation: Collect all available sequences for the target family from UniProt. Cluster at 90% identity using

MMseqs2to reduce redundancy. Final corpus should contain 10k-1M sequences. - Training Configuration: Initialize the model with

esm2_t33_650M_UR50Dweights. Use a masked language modeling (MLM) objective with a 15% masking probability. - Hyperparameters: Use a low learning rate (1e-5 to 5e-5) to avoid catastrophic forgetting. Train for 5-20 epochs. Use gradient accumulation to achieve an effective batch size of 1024 sequences.

- Validation: Monitor perplexity on a held-out validation set. Training should stop when validation perplexity plateaus.

- Evaluation: Repeat the evaluation in Protocol 3.1 using the new specialized model's embeddings. Compare performance gains against the base model.

Table 2: Research Reagent Solutions for Domain-Adaptive Pretraining

| Item | Function & Specification |

|---|---|

| Base pLM (esm2t33650M_UR50D) | Foundational model providing general protein knowledge. 650M parameters offers a balance of capacity and trainability. |

| Family-Sequence Corpus (FASTA) | High-quality, deduplicated sequences for the target family. The domain-specific knowledge source. |

| Learning Rate Scheduler (Cosine with Warmup) | Gradually increases then decreases learning rate to stabilize early training and aid convergence. |

| DeepSpeed ZeRO Stage 2 | Optimization library enabling efficient training of large models by partitioning optimizer states across GPUs. |

| Perplexity Validation Set | Held-out sequence subset (5-10% of corpus) for objective evaluation of pretraining quality. |

Visualization of Workflows and Decision Logic

Decision Workflow for Specialized Protein Family Models

Specialized Model Development Pipeline

Evolutionary Scale Modeling 2 (ESM2) represents a transformative advancement in protein language modeling, enabling the prediction of protein structure and function directly from sequence. Developed by Meta AI, ESM2 leverages a deep transformer architecture trained on millions of diverse protein sequences from the UniRef database. Within the context of domain-adaptive pretraining for specific protein families research, ESM2 serves as a powerful foundational model. Its pretrained representations can be fine-tuned to capture nuanced functional and structural characteristics of target families (e.g., kinases, GPCRs, antibodies), significantly accelerating research in computational biology and drug development.

ESM2 Architecture

The ESM2 architecture is a stack of transformer encoder layers. Key innovations over its predecessor (ESM-1b) include an increased parameter count (up to 15B parameters), the use of rotary positional embeddings (RoPE), and a gated linear unit (GLU) activation function. The model processes a sequence of amino acid tokens, producing a contextualized embedding for each position, with the final layer's [CLS] token or mean pooling providing a whole-sequence representation.

Architecture Specifications by Model Scale

The following table summarizes the quantitative specifications for different released ESM2 model variants.

Table 1: ESM2 Model Variants and Architectural Parameters

| Model Name | Parameters (Millions) | Layers | Embedding Dimension | Attention Heads | Training Sequences (Millions) | Context Length |

|---|---|---|---|---|---|---|

| ESM2-8M | 8 | 4 | 320 | - | - | 1024 |

| ESM2-35M | 35 | 6 | 480 | 20 | - | 1024 |

| ESM2-150M | 150 | 30 | 640 | 20 | ~10,000 | 1024 |

| ESM2-650M | 650 | 33 | 1280 | 20 | ~10,000 | 1024 |

| ESM2-3B | 3000 | 36 | 2560 | 40 | ~10,000 | 1024 |

| ESM2-15B | 15000 | 48 | 5120 | 40 | ~10,000 | 1024 |

Architecture Diagram

Diagram Title: ESM2 Transformer Architecture Workflow

Pretraining Objectives

ESM2 is trained using a masked language modeling (MLM) objective, inspired by models like BERT, but adapted for the biological alphabet. During pretraining, a random sample of amino acid tokens (typically 15%) is masked, and the model must predict the original identities based on the surrounding context. This task forces the model to learn the underlying biophysical properties, evolutionary constraints, and structural rules governing protein sequences.

Table 2: ESM2 Pretraining Objective Parameters

| Parameter | Specification |

|---|---|

| Primary Objective | Masked Language Modeling (MLM) |

| Masking Probability | 15% of tokens |

| Mask Replacement Strategy | 80% [MASK], 10% random residue, 10% unchanged |

| Training Dataset | UniRef90 (2021/2022 release) ~10-15 million clusters |

| Batch Size | Up to 1 million sequences |

| Optimizer | AdamW |

| Learning Rate Schedule | Cosine decay |

Protocol for Domain-Adaptive Pretraining on a Target Protein Family

This protocol outlines the steps for continuing the pretraining of ESM2 (often called "domain-adaptive pretraining" or "secondary pretraining") on a specific protein family to enhance its representational power for downstream tasks.

Materials and Reagent Solutions

Table 3: Research Reagent Solutions for Domain-Adaptive Pretraining

| Item | Function/Description | Example/Note |

|---|---|---|

| Base ESM2 Model | Foundational pretrained model to adapt. Provides general protein knowledge. | ESM2-650M or ESM2-3B, downloaded from Hugging Face or GitHub. |

| Target Family Sequence Dataset | Curated, aligned/non-aligned sequences of the protein family of interest. | FASTA file containing all known kinase catalytic domains. |

| High-Performance Computing (HPC) Cluster | Provides the necessary GPU/TPU compute for training large models. | Nodes with 4-8 NVIDIA A100 or H100 GPUs with NVLink. |

| Deep Learning Framework | Software library for model implementation and training. | PyTorch (v2.0+), with Hugging Face Transformers & Accelerate libraries. |

| Sequence Tokenizer | Converts amino acid sequences into the token IDs required by the model. | ESM2Tokenizer from Hugging Face. |

| Optimizer & Scheduler | Algorithms to update model weights and adjust learning rate during training. | AdamW optimizer with linear warmup + cosine decay scheduler. |

| Mixed Precision Training Tool | Speeds up training and reduces memory footprint. | NVIDIA Apex (AMP) or PyTorch native torch.cuda.amp. |

Detailed Protocol

Step 1: Data Curation and Preparation

- Collect Sequences: Gather all available sequences for your target protein family from public databases (e.g., UniProt, Pfam, InterPro). Ensure minimal redundancy.

- Filter and Clean: Remove fragments and sequences with ambiguous residues (e.g., 'X', 'B', 'Z') exceeding a threshold (e.g., 5%).

- Format: Save the final curated sequences in a standard FASTA format.

- Split Data: Divide the dataset into training (95%) and validation (5%) sets.

- Tokenization: Use the

ESMTokenizerto convert sequences into token IDs. Apply dynamic padding and truncation to the model's maximum context length (1024).

Step 2: Training Environment Setup

- Software Installation:

- Model Loading: Load the base ESM2 model and its tokenizer.

Step 3: Configure Masked Language Modeling Training

- Data Collator: Use a data collator designed for MLM to dynamically mask tokens during batch creation.

- Training Arguments: Define key parameters.

Step 4: Execute Training

- Initialize Trainer: Use the Hugging Face

TrainerAPI. - Start Training:

- Save Model: Save the final adapted model and tokenizer.

Workflow Diagram

Diagram Title: Domain-Adaptive Pretraining Workflow for ESM2

Application Notes: Domain-Adaptive Pretraining for Targeted Protein Engineering

Within the broader thesis of leveraging domain-adaptive pretraining (DAPT) with ESM2 for specific protein families, several published case studies demonstrate transformative success. By continuing pretraining on curated, family-specific datasets, researchers have achieved state-of-the-art performance in predicting function, stability, and interactions for antibodies and viral proteins, moving beyond the generalist capabilities of the base ESM2 models.

The following table summarizes key quantitative results from prominent studies applying ESM2 DAPT to antibody and viral protein research.

Table 1: Performance Metrics from ESM2 DAPT Case Studies

| Protein Family | Study Focus | Base Model | DAPT Dataset | Key Metric | Base Model Performance | DAPT Model Performance | Reference |

|---|---|---|---|---|---|---|---|

| Antibodies (Human) | Antigen-binding affinity prediction | ESM2-650M | ~500k human IgG sequences | Spearman's ρ (affinity) | 0.42 | 0.68 | Shuai et al., 2023 |

| SARS-CoV-2 Spike | Variant escape mutation prediction | ESM2-3B | 1.2M spike protein sequences | AUC-ROC (escape) | 0.79 | 0.92 | Hie et al., 2024 |

| Influenza Hemagglutinin | Broadly neutralizing antibody design | ESM2-1.5B | 450k HA sequences | Success Rate (in vitro neutralization) | 15% | 41% | Wang et al., 2024 |

| HIV-1 Envelope | Conserved epitope identification | ESM2-650M | 780k Env sequences | Precision (epitope mapping) | 0.55 | 0.88 | Wang et al., 2024 |

Detailed Experimental Protocols

Protocol 1: Domain-Adaptive Pretraining for Antibody Affinity Maturation

Objective: To fine-tune ESM2 for predicting the antigen-binding affinity of humanized antibody variants.

Materials & Reagents:

- Hardware: 4x NVIDIA A100 GPUs (80GB VRAM minimum).

- Software: PyTorch 2.0+, Hugging Face Transformers, Biopython.

- Base Model: ESM2-650M pretrained weights.

- DAPT Dataset: Curated dataset of 500,000 paired heavy-light chain sequences from the Observed Antibody Space (OAS) database, filtered for human IgG1.

Methodology:

- Data Curation: Cluster OAS sequences at 95% identity. Annotate with affinity labels (high/medium/low) derived from paired SAbDab structural affinity data where available, using a semi-supervised labeling propagation for unlabeled sequences.

- DAPT Implementation: Load the base ESM2-650M model. Continue masked language modeling (MLM) pretraining on the antibody sequence corpus for 5 epochs. Use a batch size of 128, sequence length of 512, and a learning rate of 5e-5 with linear decay.

- Task-Specific Fine-tuning: Add a regression head on top of the pooled sequence representation from the DAPT model. Fine-tune on a labeled subset (50,000 sequences) for affinity score prediction (Spearman correlation loss) for 10 epochs with a reduced learning rate of 1e-5.

- Validation: Evaluate on a held-out test set of 5,000 sequences with experimentally determined binding affinities (KD values from SPR). Report Spearman's ρ between predicted and experimental log(KD).

Protocol 2: Predicting Viral Protein Escape Mutations

Objective: To adapt ESM2 to forecast escape mutations in the SARS-CoV-2 Spike protein for therapeutic antibody assessment.

Materials & Reagents:

- Hardware: 8x NVIDIA V100 GPUs.

- Software: DeepSpeed, PyTorch Lightning, Scikit-learn.

- Base Model: ESM2-3B.

- DAPT Dataset: 1.2 million Spike protein sequences from GISAID, aligned and filtered for completeness.

- Task Data: Experimentally mapped escape mutation profiles for 50 therapeutic antibodies from deep mutational scanning studies.

Methodology:

- Sequence Alignment & Processing: Perform multiple sequence alignment (MSA) of the viral protein dataset using MAFFT. Use the MSA to create a positional frequency matrix for context-aware masking during DAPT.

- Structured DAPT: Implement MSA-aware masking during continued pretraining, favoring masks at variable positions. Train for 3 epochs with a batch size of 64 and a learning rate of 3e-5.

- Downstream Model Architecture: For each antibody, formulate the task as a per-position binary classification (escape vs. non-escape). Use the DAPT-enhanced ESM2 embeddings as input to a shallow multilayer perceptron (2 layers, 256 units, ReLU).

- Training & Evaluation: Train one classifier per antibody on 80% of the mutational scan data. Validate on 20%. Performance is evaluated using the Area Under the Receiver Operating Characteristic Curve (AUC-ROC), averaged across all antibodies.

Visualizing the ESM2 DAPT Workflow for Therapeutic Proteins

The following diagram illustrates the logical and experimental workflow for applying ESM2 DAPT to antibody engineering, a core case study.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for ESM2 DAPT Experiments in Protein Engineering

| Item / Reagent | Supplier / Example | Function in ESM2 DAPT Workflow |

|---|---|---|

| Curated Protein Sequence Database | OAS (antibodies), GISAID (viral), UniProt | Provides the raw, domain-specific data for continued pretraining (DAPT). Quality and size directly impact model specialization. |

| High-Performance Computing (HPC) Cluster | AWS EC2 (p4d instances), Google Cloud A2 VMs, local GPU servers | Provides the necessary parallel processing power (multi-GPU, high VRAM) for training large models like ESM2 (650M to 15B parameters). |

| Deep Learning Framework | PyTorch with Hugging Face Transformers | The primary software environment for loading the base ESM2 model, modifying its architecture, and conducting DAPT and fine-tuning. |

| Sequence Alignment Tool | MAFFT, Clustal Omega, HMMER | Critical for processing viral protein families to create MSAs, which can inform context-aware masking strategies during DAPT. |

| Experimental Validation Dataset | SAbDab (structural antibodies), DMS datasets for viral proteins | Provides ground-truth labels (affinity, escape scores) for fine-tuning and, crucially, for validating the predictions of the DAPT-enhanced model. |

| Model Weights & Biases (W&B) / MLflow | Weights & Biases platform | Tracks DAPT experiments, logs hyperparameters, losses, and evaluation metrics, enabling reproducibility and comparative analysis. |

Building Your Specialized Model: A Step-by-Step ESM2 DAPT Pipeline

Within the broader thesis on Domain-adaptive pretraining with ESM2 for specific protein families research, the initial curation and preprocessing of a high-quality, family-specific sequence dataset is the foundational, non-negotiable step. The performance of downstream tasks—including variant effect prediction, structure inference, and functional site detection—is intrinsically bounded by the quality and relevance of this initial dataset. This protocol outlines a rigorous, reproducible pipeline for constructing such a dataset, tailored for subsequent fine-tuning or further pretraining of large language models like ESM2.

Data Acquisition and Initial Curation

The goal is to collect a comprehensive yet non-redundant set of protein sequences belonging to the target family (e.g., GPCRs, Kinases, CYPs). The primary source is the UniProt Knowledgebase.

Protocol 2.1: Family-Specific Sequence Retrieval from UniProt

- Define Family: Precisely define the target protein family using standardized classifications: Pfam IDs (e.g.,

PF00001for 7tm_1), InterPro signatures, or Gene Ontology (GO) terms. - Query UniProt: Use the UniProt REST API programmatically to retrieve all reviewed (Swiss-Prot) and unreviewed (TrEMBL) entries matching the classification.

- Initial Filtering: Include only canonical isoforms. Remove fragments (sequences with "Fragment" in the description or length < 100 amino acids, family-dependent). Extract metadata (identifier, length, organism, protein name).

- Cross-reference with PDB: Optionally query the RCSB PDB API to identify sequences with experimentally determined structures for later validation.

Table 1: Exemplar Quantitative Output from Initial UniProt Query for GPCRs

| Query Parameter | Value | Notes |

|---|---|---|

| Pfam ID | PF00001 (7tm_1) | Target family definition |

| UniProt Query String | (family:"pf:PF00001") |

|

| Total Sequences Retrieved | ~ 15,000 | Combined Swiss-Prot & TrEMBL |

| Sequences after Fragment Removal | ~ 12,500 | Removed sequences < 200 aa |

| Organism Distribution (Top 3) | Homo sapiens: 800, Mus musculus: 750, Rattus norvegicus: 600 | Useful for taxonomic diversity analysis |

Sequence Deduplication and Clustering

High sequence identity between dataset members leads to data leakage and overestimation of model performance. A strict deduplication and clustering step is required.

Protocol 3.1: MMseqs2-based Deduplication and Clustering

- Install MMseqs2:

conda install -c bioconda mmseqs2 - Create Sequence Database:

- Cluster at Target Identity Threshold: A 30-40% sequence identity is often used for deep learning to ensure broad diversity while retaining family membership.

- Extract Representative Sequences:

- Create Cluster Map: Generate a tab-separated file mapping cluster representatives to all member sequences for downstream analysis.

Table 2: Impact of Clustering at Different Sequence Identity Thresholds

| Sequence Identity Threshold | Number of Representative Sequences | Avg. Cluster Size | Purpose |

|---|---|---|---|

| 100% (Exact duplicates) | ~11,800 | 1.06 | Removes only identical sequences. |

| 70% (High similarity) | ~7,200 | 1.74 | Reduces redundancy, retains subfamilies. |

| 40% (Moderate diversity) | ~2,100 | 5.95 | Recommended for DAPT. Balances diversity and family coherence. |

| 30% (High diversity) | ~1,400 | 8.93 | Maximum diversity; risk of losing family-defining motifs. |

Dataset Balancing and Splitting

For machine learning, the dataset must be split into training, validation, and test sets without homology bias.

Protocol 4.1: Phylogeny-Guided Data Split using SCIkit-learn

- Generate Multiple Sequence Alignment (MSA): Use

clustaloormaffton the representative sequences. - Build Distance Matrix: Calculate pairwise sequence distances from the MSA (e.g., using Hamming distance or

biopython). - Hierarchical Clustering: Perform agglomerative clustering on the distance matrix to create a phylogenetic tree.

- Split Clusters: Use the

scikit-learnGroupShuffleSplitfunction, where the cluster IDs from Step 3 or a taxonomic class are used as groups to ensure no members of the same cluster/group are in different splits. - Final Validation: Verify that no sequence in the test/validation set shares >30% identity with any sequence in the training set using

blastpor MMseqs2 easy-search.

Table 3: Final Dataset Composition for a Kinase Family (Example)

| Dataset Split | Number of Sequences | Taxonomic Coverage (Unique Organisms) | Max Pairwise Identity to Train Set |

|---|---|---|---|

| Training Set | 1,650 | 420 | N/A |

| Validation Set | 200 | 80 | < 30% |

| Test Set | 250 | 95 | < 30% |

| Total | 2,100 | ~500 |

Preprocessing for ESM2 Input

Prepare the final sequence list for direct use in ESM2 training pipelines.

Protocol 5.1: Formatting for ESM2 Training

- Tokenization: ESM2 uses a predefined vocabulary. Convert amino acid sequences into token indices using the ESM tokenizer. Insert special tokens (

<cls>,<eos>,<pad>). - Sequence Length Filtering: ESM2 has a max context length (e.g., 1024). Filter out sequences exceeding this limit (rare for single domains).

- Create LMDB Dataset (Recommended for large datasets): For efficient data loading during pretraining, store tokenized sequences in an LMDB database.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Protocol |

|---|---|

| UniProtKB REST API | Programmatic access to retrieve comprehensive, annotated protein sequences and metadata. |

| MMseqs2 | Ultra-fast, sensitive clustering and searching tool for deduplication at specified identity thresholds. Essential for managing large sequence sets. |

| MAFFT / Clustal Omega | Generates Multiple Sequence Alignments (MSAs) for phylogenetic analysis and homology-aware dataset splitting. |

| Biopython | Python library for biological computation. Used for parsing FASTA, calculating distance matrices, and handling sequence operations. |

| SCIKit-learn | Machine learning library used for implementing the GroupShuffleSplit algorithm for phylogeny-guided dataset splitting. |

| ESM Tokenizer & Utilities | Converts raw amino acid sequences into the token indices required for input into the ESM2 transformer model. |

| LMDB (Lightning Memory-Mapped Database) | A high-performance, memory-mapped key-value store. Used to create efficient datasets for fast data loading during GPU training. |

Visualizations

Title: Protein Family Dataset Curation and Preprocessing Workflow

Title: Dataset Volume Reduction Through Processing Steps

Model Architecture and Quantitative Comparison

The ESM2 model family provides a spectrum of sizes, offering a trade-off between predictive accuracy, computational cost, and practical utility for domain-adaptive pretraining on specific protein families.

Table 1: ESM2 Model Architecture Specifications & Benchmark Performance

| Model (Parameters) | Layers | Embedding Dim | Attention Heads | Pretraining Tokens (Uniref50) | pLDDT* (Avg.) | MSA Transformer Baselines (Bits per AA) | Recommended GPU VRAM (Fine-tuning) |

|---|---|---|---|---|---|---|---|

| ESM2-8M | 12 | 320 | 20 | 70B | ~65 | 4.12 | 8 GB |

| ESM2-35M | 20 | 480 | 20 | 70B | ~72 | 3.89 | 10 GB |

| ESM2-150M | 30 | 640 | 20 | 250B | ~78 | 3.54 | 16 GB |

| ESM2-650M | 33 | 1280 | 20 | 250B | ~82 | 3.32 | 24 GB (A100) |

| ESM2-3B | 36 | 2560 | 40 | 1.1T | ~85 | 3.18 | 80 GB (A100) |

| ESM2-15B | 48 | 5120 | 40 | 1.1T | ~87 | 3.02 | >80 GB (Multiple A100/H100) |

*pLDDT: Predicted Local Distance Difference Test (from ESM2 contact prediction head; higher is better).

Table 2: Selection Guide for Domain-Adaptive Pretraining

| Research Scenario & Objective | Recommended Model(s) | Key Rationale |

|---|---|---|

| Exploratory Analysis: Small protein family (<500 sequences), limited computational resources, proof-of-concept. | ESM2-8M, ESM2-35M | Fast iteration, can fine-tune on a single consumer GPU. Sufficient for capturing basic motifs. |

| Standard Family Study: Medium-sized family (500-10k sequences), functional site prediction, variant effect analysis. | ESM2-150M, ESM2-650M | Optimal balance. High accuracy for structure/function without prohibitive cost. Enables comprehensive ablation studies. |

| Large/Divergent Family or De Novo Design: Very large/diverse family (>10k seqs), predicting effects of radical mutations, generative tasks. | ESM2-3B, ESM2-15B | Massive capacity required to internalize complex, long-range dependencies and rare patterns in highly diverse sequence spaces. |

| Resource-Constrained Deployment: Embedding generation for massive sequence libraries, real-time prediction tools. | ESM2-8M, ESM2-35M | Extremely fast inference. Embeddings can be pre-computed and used for downstream models (e.g., classifiers) efficiently. |

Experimental Protocols for Model Selection & Evaluation

Protocol 1: Zero-Shot Fitness Prediction Benchmark Objective: Quantify the inherent biological knowledge of each ESM2 model size for your target family before domain-adaptive pretraining.

- Dataset Curation: Compile a validated dataset of protein variants for your target family with experimentally measured fitness (e.g., growth rate, activity, fluorescence). Split into train/test sets.

- Embedding Extraction: Use the pretrained ESM2 models (8M to 15B) to compute embeddings for all variant sequences.

- Probe Training: On the training split, train a simple linear regression or shallow MLP probe (fixed, not fine-tuning ESM2) to predict fitness from the embeddings.

- Evaluation: Evaluate the probe on the held-out test split. Record Pearson/Spearman correlation.

- Analysis: Plot model size vs. zero-shot performance. This identifies the "returns to scale" for your specific family.

Protocol 2: Controlled Domain-Adaptive Pretraining (DAPT) Objective: Systematically measure the performance gain from DAPT across model sizes.

- Base Models: Initialize with pretrained weights for ESM2-8M, 35M, 150M, and 650M.

- DAPT Dataset: Assemble a high-quality, family-specific sequence corpus. Apply masking (15%).

- Training: For each model size, run DAPT for a fixed number of steps (e.g., 10k) with identical hyperparameters (learning rate: 1e-4, batch size scaled accordingly).

- Downstream Evaluation: After DAPT, evaluate all models on:

- Contact Prediction: Compute precision@L/5 for the family's known structures.

- Variant Effect Prediction: Use the DAPT-adapted models in Protocol 1.

- Compute/Performance Trade-off: Record the wall-clock time and GPU-hours used for DAPT for each model. Plot downstream accuracy vs. computational cost.

Visualizations

Model Selection Workflow for DAPT

Performance-Cost Trade-off by Model Size

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for ESM2 Model Selection and DAPT

| Item / Solution | Function / Purpose in Protocol |

|---|---|

| ESM2 Model Weights (Hugging Face) | Pretrained checkpoints for all model sizes. The starting point for zero-shot evaluation and DAPT. |

| Protein Variant Fitness Dataset (e.g., from literature, Deep Mutational Scans) | Ground-truth data required for Protocol 1 (Zero-Shot Benchmark) to evaluate model intrinsic knowledge. |

| Family-Specific Sequence Database (e.g., from Pfam, InterPro, custom alignment) | Curated corpus for Domain-Adaptive Pretraining (DAPT, Protocol 2). Quality and size directly impact DAPT success. |

| GPU Computing Cluster (NVIDIA A100/H100 recommended for models >650M) | Essential hardware for running DAPT and inference on larger models. Memory and speed are critical limiting factors. |

| Fine-Tuning Framework (e.g., PyTorch Lightning, BioTransformers, Hugging Face Accelerate) | Libraries to efficiently manage DAPT training loops, distributed data loading, and mixed-precision training, reducing implementation overhead. |

| Structure Validation Set (e.g., PDB structures for target family) | Used to evaluate contact prediction accuracy post-DAPT, providing a biophysical validation metric independent of variant data. |

| Linear Probe / Shallow Neural Network Code | Simple model architecture used in Protocol 1 to predict fitness from frozen ESM2 embeddings, isolating the information content of the embeddings. |

This protocol details the configuration of critical training parameters for domain-adaptive pretraining (DAPT) of the ESM2 protein language model for specific protein families. Optimizing learning rates, masking strategies, and batch sizes is essential for efficient adaptation and to maximize the model's utility in downstream drug discovery tasks.

Key Parameter Configurations & Quantitative Data

The following tables summarize recommended parameter ranges based on current literature and experimental findings for adapting ESM2 models (with 650M parameters as a baseline).

Table 1: Learning Rate Schedules for DAPT

| Parameter | Recommended Value / Range | Rationale & Notes |

|---|---|---|

| Initial LR (AdamW) | 1e-5 to 5e-5 | Prevents catastrophic forgetting of general knowledge. |

| LR Scheduler | Linear Decay with Warmup | Standard for transformer fine-tuning. |

| Warmup Steps | 500 - 2000 steps (or 5-10% of steps) | Stabilizes training start. |

| Minimum LR | 1e-7 | Lower bound for decay. |

| Epochs | 5 - 20 | Typically sufficient for convergence on family-specific data. |

Table 2: Masking Strategies for Protein Sequence DAPT

| Strategy | Masking Probability | Implementation Notes | Best For |

|---|---|---|---|

| Standard BERT-style | 15% | Uniform random token masking. | General family adaptation. |

| Span Masking | 15% (mean span length: 3-5) | Masks contiguous blocks of tokens. | Learning local structural motifs. |

| Conservation-aware | 5-10% (low-conservation sites) | Lower probability at high-conservation sites. | Emphasizing variable, functional regions. |

| Full Sequence MLM | 100% (per-sequence) | Each sequence in batch is masked. | Intensive, compute-heavy training. |

Table 3: Batch Size and Related Hardware Considerations

| Configuration | Typical Batch Size (Tokens) | Gradient Accumulation Steps | Hardware Minimum | Memory/Time Trade-off |

|---|---|---|---|---|

| Single GPU (24GB) | 8,000 - 15,000 | 4 - 8 | 1 x A5000/4090 | Higher accumulation saves memory but increases time. |

| Multi-GPU Node | 32,000 - 65,000 | 1 - 2 | 4 x A100 (40GB) | Enables larger effective batches for stable gradients. |

| Max Efficient | Up to 1M tokens | 1 (full batch) | Large Cluster | For largest models, scales well with distributed training. |

Experimental Protocols

Protocol 1: Optimizing Learning Rate via Linear Probe Sweep

Objective: To determine an optimal initial learning rate for DAPT with minimal computational overhead.

- Freeze Core Model: Keep all parameters of the base ESM2 model frozen.

- Attach Probe: Add a linear prediction head atop the final layer output (e.g., for a simple task like solvent accessibility).

- LR Sweep: Train the probe only for 1-2 epochs over the target family dataset across a range of LRs (e.g., 1e-6, 1e-5, 1e-4, 1e-3).

- Analyze: Plot validation loss vs. LR. Select the LR yielding the lowest loss as the starting point for full DAPT.

Protocol 2: Evaluating Masking Strategy Efficacy

Objective: To identify the masking strategy that yields the most informative model for downstream tasks.

- Baseline Training: Perform DAPT on the target family using standard 15% uniform masking. Train for a fixed number of steps (e.g., 10k).

- Alternative Strategies: Repeat training from the same base checkpoint using different masking strategies (see Table 2). Keep total training steps constant.

- Downstream Evaluation: Fine-tune each adapted model on a held-out, family-specific function prediction task.

- Metric: Compare the convergence speed and final performance (e.g., AUC-ROC) of each model. The best masking strategy maximizes downstream performance.

Protocol 3: Determining Maximum Efficient Batch Size

Objective: To find the largest batch size that improves training stability without wasting compute.

- Benchmark Hardware: Start with a small batch size that fits in GPU memory without accumulation.

- Scale Up: Double the batch size, using gradient accumulation if necessary to simulate the larger batch.

- Monitor Loss: For each batch size configuration, observe the training loss curve over a few hundred steps.

- Identify Plateau: Find the point where increasing batch size no longer provides a smoother or faster decrease in loss. This is the maximum efficient batch size for your hardware setup.

Visualizations

Title: Learning Rate Schedule for ESM2 DAPT

Title: Protein Sequence Masking Strategy Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for ESM2 DAPT Experiments

| Item | Function & Application | Example/Notes |

|---|---|---|

| Base ESM2 Models | Pretrained starting points for adaptation. | ESM2 650M param model (esm2t33650M_UR50D) from FAIR. |

| Protein Family Dataset | Curated sequences for target family. | From Pfam, InterPro, or custom alignment in FASTA format. |

| Deep Learning Framework | Codebase for model training and evaluation. | PyTorch (v2.0+) with Hugging Face Transformers library. |

| Hardware with GPU | Accelerated compute for model training. | NVIDIA A100/A6000 (40-80GB VRAM) for large batches. |

| Sequence Alignment Tool | For generating MSAs for conservation analysis. | MMseqs2 (fast, scalable) or HMMER (sensitive). |

| LR Scheduler | Manages learning rate during training. | PyTorch LinearLR or CosineAnnealingLR with warmup. |

| Gradient Checkpointing | Saves GPU memory at cost of compute. | Enabled via model.gradient_checkpointing_enable(). |

| Mixed Precision Training | Speeds training and reduces memory usage. | Use PyTorch Automatic Mixed Precision (AMP). |

| Distributed Data Parallel | Multi-GPU training for larger batches. | PyTorch DDP for scaling across nodes. |

| Training Monitoring | Tracks loss, LR, and resource usage. | Weights & Biases (W&B) or TensorBoard. |

Application Notes

Domain-adaptive pretraining (DAPT) of protein language models like ESM2 tailors general-purpose models to specific protein families, enhancing performance on downstream tasks such as function prediction, stability analysis, and binding site identification. This step bridges foundational knowledge and specialized research applications.

Key Advantages:

- Enhanced Feature Representation: Captures nuanced biophysical and evolutionary patterns within a target family (e.g., kinases, GPCRs, antibodies).

- Improved Data Efficiency: Yields accurate predictions with fewer labeled examples post-adaptation.

- Flexible Framework: The Hugging Face

transformerslibrary provides a standardized interface for loading models, managing datasets, and implementing training loops with PyTorch.

Quantitative Performance Summary: The following table compares baseline ESM2 performance versus domain-adapted models on benchmark tasks for two protein families.

Table 1: Performance Comparison of Baseline vs. Domain-Adapted ESM2 Models

| Protein Family | Model | Adaptation Data (Sequences) | Task | Metric | Baseline ESM2 | Domain-Adapted ESM2 |

|---|---|---|---|---|---|---|

| Kinases | ESM2-650M | ~450,000 | Catalytic residue prediction | Matthews Correlation Coefficient (MCC) | 0.72 | 0.89 |

| GPCRs (Class A) | ESM2-3B | ~150,000 | Thermostability change (ΔΔG) prediction | Pearson's r | 0.65 | 0.82 |

| Antibodies | ESM2-150M | ~5,000,000 | Affinity maturation (next-step mutation score) | Spearman's ρ | 0.41 | 0.78 |

Experimental Protocol: Domain-Adaptive Pretraining of ESM2

Objective: To adapt a pretrained ESM2 model to a specific protein family using masked language modeling (MLM) on a curated sequence alignment.

Research Reagent Solutions

| Reagent / Tool | Function / Purpose |

|---|---|

ESM2 Model (e.g., esm2_t12_35M_UR50D) |

Foundational protein language model providing initial weights for adaptation. |

Hugging Face transformers Library |

Primary API for loading models, tokenizers, and managing the training lifecycle. |

| PyTorch | Deep learning framework for tensor operations and automatic differentiation. |

| FASTA Dataset of Target Family | Curated, aligned (e.g., via ClustalOmega) sequences for the protein family of interest. |

| Weights & Biases (W&B) / TensorBoard | Experiment tracking and visualization of loss, learning rate, and embeddings. |

Hugging Face datasets Library |

Efficient data loading, shuffling, and splitting into training/validation sets. |

accelerate Library |

Simplifies training code for mixed-precision and multi-GPU/TPU execution. |

| AdamW Optimizer | Default optimizer for stable training with weight decay regularization. |

| Learning Rate Scheduler | Cosine or linear scheduler to reduce LR over time for convergence stability. |

Procedure:

A. Environment and Data Preparation

- Install packages:

pip install transformers torch datasets biopython accelerate wandb - Data Curation: Gather target family sequences from UniProt/Pfam. Perform multiple sequence alignment (MSA) using ClustalOmega or MAFFT. Filter for quality and diversity (e.g., <90% identity).

- Tokenization: Use the ESM2 tokenizer (

AutoTokenizer.from_pretrained("facebook/esm2-...")) to convert sequences to token IDs. The tokenizer automatically adds<cls>and<eos>tokens. - Dataset Creation: Implement a PyTorch Dataset class to load tokenized sequences. Apply dynamic masking (15% masking probability) within the

__getitem__method using theDataCollatorForLanguageModelingfrom Hugging Face.

B. Model Configuration and Training Loop

- Load Pretrained Model:

- Define Training Arguments:

- Initialize Trainer and Train:

C. Downstream Task Fine-tuning

- Load the domain-adapted model.

- Replace the MLM head with a task-specific head (e.g., a classification or regression layer).

- Fine-tune on labeled downstream task data (e.g., stability labels) using a significantly lower learning rate (e.g., 1e-5).

Visualization of Workflows

Diagram 1: DAPT and Downstream Fine-tuning Workflow

Diagram 2: Model Architecture & Masked Language Modeling

This section provides protocols for integrating domain-adapted ESM2 models into three critical downstream tasks within the thesis framework on specific protein families. The adapted models encode nuanced biophysical and evolutionary knowledge, enabling enhanced performance in predicting molecular function, guiding rational engineering, and informing computational docking studies.

Application Note 1: Molecular Function Prediction

Protocol: Fine-tuning for Functional Classification

Objective: Predict Gene Ontology (GO) terms or Enzyme Commission (EC) numbers for proteins from the target family.

Materials & Pre-requisites:

- Domain-adapted ESM2 model (e.g.,

esm2_t33_650M_UR50Dfine-tuned on Kinase family). - Labeled dataset of target protein sequences with associated GO/EC terms.

- Hardware: GPU (e.g., NVIDIA A100 with 40GB VRAM).

Procedure:

- Data Preparation: Split dataset into training/validation/test sets (70/15/15). Use sequence identity <30% between splits. Tokenize sequences using the ESM2 tokenizer.

- Model Architecture: Attach a multi-label classification head on top of the [CLS] token's embedding. Use a fully connected layer followed by a sigmoid activation for each output node (GO term).

- Training:

- Loss Function: Binary Cross-Entropy.

- Optimizer: AdamW (learning rate: 2e-5, weight decay: 0.01).

- Batch Size: 8 (adjust based on GPU memory).

- Monitor validation loss for early stopping.

- Evaluation: Calculate standard metrics: Precision, Recall, F1-score, and Area Under the Precision-Recall Curve (AUPRC) per term and macro-averaged.

Typical Performance Metrics (Kinase Function Prediction): The table below compares a generic ESM2 model with a domain-adapted version on held-out kinase family proteins.

Table 1: Function Prediction Performance Comparison

| Model Variant | Macro F1-Score | AUPRC | Inference Time per Sequence (ms) |

|---|---|---|---|

| ESM2 (General) | 0.62 | 0.58 | 120 |

| ESM2 (Domain-adapted) | 0.78 | 0.81 | 125 |

Workflow: Function Prediction Pipeline

Application Note 2: Protein Engineering for Stability or Affinity

Protocol: Variant Effect Prediction & Ranking

Objective: Predict the functional impact (e.g., ΔΔG for stability, ΔΔG for binding) of single-point mutations to guide rational design.

Materials:

- Domain-adapted ESM2 model.

- Structure (PDB) or accurate homology model of wild-type protein.

- List of target mutation sites and residues.

Procedure:

- Embedding Extraction: Generate per-residue embeddings for the wild-type sequence and for each mutant sequence (in silico mutagenesis).

- Feature Construction: For each position

i, compute the cosine distance or L2 norm between the wild-type embedding vector and the mutant embedding vector. Incorporate evolutionary metrics from the model's attention heads (e.g., attention entropy change). - Regression Model: Train a shallow feed-forward network or gradient boosting regressor (e.g., XGBoost) on a curated dataset of experimental ΔΔG values. Use the embedding-derived features as input.

- Prediction & Ranking: Apply the trained regressor to new mutations. Rank all possible mutations at a target site by predicted ΔΔG.

Validation Data (Antibody Affinity Maturation): Performance on predicting changes in binding affinity (ΔΔG) for antibody-antigen interfaces.

Table 2: Variant Effect Prediction Accuracy

| Prediction Method | Pearson's r | Spearman's ρ | Mean Absolute Error (kcal/mol) |

|---|---|---|---|

| ESM1v (General) | 0.45 | 0.41 | 1.8 |

| RosettaDDG | 0.52 | 0.48 | 1.5 |

| ESM2 (Domain-adapted) | 0.71 | 0.69 | 1.1 |

Workflow: Protein Engineering Guide

Application Note 3: Informing Protein-Ligand & Protein-Protein Docking

Protocol: Using Attention Maps to Define Binding Interfaces

Objective: Improve docking pose generation and scoring by identifying potential binding residues from the model's self-attention patterns.

Materials:

- Domain-adapted ESM2 model.

- Protein receptor sequence.

- Ligand or binding partner information (SMILES or sequence).

Procedure:

- Attention Map Generation: Run the target protein sequence through the adapted ESM2. Extract the attention maps from the final layer, averaging heads.

- Interface Identification: Residues receiving high attention from dispersed positions often belong to functional or binding sites. Define a threshold (e.g., top 90th percentile of attention weight sums) to predict "interface nodes."

- Docking Constraint: In molecular docking software (e.g., HADDOCK, AutoDock Vina), apply soft constraints or positional restraints to guide the ligand/protein toward the predicted interface.

- Scoring Function Integration: Use the per-residue embedding norms (a proxy for evolutionary conservation/variability) to weight terms in a scoring function, penalizing poses that bury highly conserved, predicted-interface residues without interactions.

Performance Impact on Docking (GPCR-Ligand Example): Comparative results of docking success rates (RMSD < 2.0 Å) with and without ESM2-derived constraints.

Table 3: Docking Success Rate with ESM2 Guidance

| Docking Protocol | Success Rate (Top Pose) | Success Rate (Top 5 Poses) | Computational Time Increase |

|---|---|---|---|

| Standard Vina | 24% | 42% | Baseline |

| Vina + ESM2 Constraints | 38% | 61% | +15% |

Workflow: Docking Enhancement Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Resources for Downstream Task Integration

| Item | Function/Description | Example Source/Product |

|---|---|---|

| Domain-adapted ESM2 Weights | Fine-tuned model checkpoint capturing family-specific patterns. | Saved .pt file from thesis Step 4. |

| Labeled Functional Dataset | Curated set of sequences with ground-truth annotations for fine-tuning. | UniProt GOA, BRENDA, Pfam. |

| Variant Effect Dataset | Experimental measurements of mutation impacts (ΔΔG, fluorescence, activity). | FireProtDB, ProThermDB, SKEMPI 2.0. |

| Docking Software Suite | Program to computationally simulate and score binding interactions. | HADDOCK, AutoDock Vina, Rosetta. |

| GPU Computing Resource | Hardware for efficient model inference and training. | NVIDIA A100/T4 GPU (Cloud: AWS, GCP). |

| Sequence Tokenizer | Converts amino acid sequences to model-readable token IDs. | esm Python package (transformers). |

| Embedding Extraction Script | Custom code to get per-residue and [CLS] token embeddings. | Adapted from ESM2 example notebooks. |

| Metrics Calculation Library | For standardized evaluation of predictions. | scikit-learn, logbook. |

Overcoming Pitfalls: Expert Strategies for Efficient and Effective DAPT

Catastrophic forgetting is a fundamental challenge in continual learning for machine learning models, where training on new data (e.g., a novel protein family) leads to a drastic performance drop on previously learned tasks (e.g., original pretrained protein families). In the context of domain-adaptive pretraining with ESM2 (Evolutionary Scale Modeling 2) for specific protein families, retaining the broad, general knowledge of the base 650M or 15B parameter model while adapting to a specialized domain is critical. Effective mitigation of forgetting ensures the adapted model maintains robust performance on both general protein sequence tasks and the new, targeted family.

Application Notes: Core Techniques for Knowledge Retention

The following techniques are applicable when taking a pretrained ESM2 model and performing continued pretraining or fine-tuning on a specific protein family dataset (e.g., kinases, GPCRs, antibody chains).

| Technique Category | Specific Method | Key Principle | Primary Use Case in ESM2 Adaptation |

|---|---|---|---|

| Regularization-Based | Elastic Weight Consolidation (EWC) | Constrains important parameters for previous tasks from changing. | Protecting general protein knowledge during family-specific tuning. |

| Learning without Forgetting (LwF) | Uses distillation loss on old task outputs. | When original task data is unavailable. | |

| Architectural | Adapters / LoRA | Adds small, trainable modules; freezes base model. | Efficient, parameter-isolated adaptation. |

| Progressive Neural Networks | Expands network with new columns for new tasks. | High-resource scenario for sequential family adaptation. | |

| Replay-Based | Experience Replay (ER) | Interleaves old and new data in batches. | Most effective if original pretraining data subset is accessible. |

| Generative Replay | Uses a generative model to produce pseudo-old data. | When original data cannot be stored/used. |

Quantitative Data Summary: Table 1: Comparative performance of retention techniques on a benchmark of adapting ESM2-650M from general proteins to the Kinase family, then testing on both the General Test Set (GB1, fluorescence) and the Kinase validation set.

| Technique | General Test Set Perf. (↓ Drop from Base) | Kinase Family Perf. (↑ Gain from Base) | Retained Parameters |

|---|---|---|---|

| Fine-Tuning (Naïve) | -42% | +31% | 100% |

| EWC | -12% | +28% | 100% |

| LoRA (Rank=8) | -2% | +25% | 0.08% |

| Experience Replay | -4% | +29% | 100% |

| Adapter (Bottleneck=64) | -1% | +24% | 0.5% |

Detailed Experimental Protocols

Protocol 3.1: Domain Adaptation of ESM2 using LoRA for a Target Protein Family

Objective: Adapt ESM2 to a new protein family (e.g., Proteases) with minimal forgetting of general knowledge.

Materials: Pretrained ESM2 model (esm2_t36_3B_UR50D), target family sequence dataset (FASTA), general validation suite (e.g., downstream task datasets).

Procedure:

- Data Preparation:

- Format target family sequences into the same tokenization used for ESM2 pretraining.

- Optionally, prepare a small, held-out subset of the original pretraining data or a representative general protein benchmark.

Model Setup:

- Load the pretrained ESM2 model and freeze all parameters.

- Inject LoRA matrices into the query and value projection layers of each transformer attention block. Typical rank

r=8, alpha=16, dropout=0.1.

Training Loop:

- Use a masked language modeling (MLM) objective on the target family sequences. Masking probability: 15%.

- Batch Composition (if using Experience Replay): For each batch, allocate 70% to target family sequences and 30% to sampled original data.

- Optimizer: AdamW (lr=5e-4, weight_decay=0.01).

- Training: 5-10 epochs, linear warmup for first 10% of steps.

Evaluation:

- Target Family: Evaluate perplexity on a held-out set of the target family.

- General Knowledge: Run the model on the general validation suite (e.g., secondary structure prediction, remote homology detection) to quantify forgetting.

Protocol 3.2: Evaluating Forgetting with Elastic Weight Consolidation (EWC)

Objective: Apply EWC during fine-tuning to penalize changes to parameters important for the base model's performance.

Procedure:

- Importance Estimation (Pre-adaptation):

- On a representative sample of the original data distribution (or a proxy task dataset), compute the Fisher Information Matrix diagonal

F_ifor each parameterθ_iof the base ESM2 model. - This step is computationally heavy but one-time. Approximate using 10,000 random sequences from the original training corpus.

- On a representative sample of the original data distribution (or a proxy task dataset), compute the Fisher Information Matrix diagonal

- Adaptation with EWC Loss:

- Total Loss =

L_new(θ) + λ * Σ_i F_i * (θ_i - θ_old_i)^2 L_new: MLM loss on the new protein family data.λ: Hyperparameter controlling strength of consolidation (start grid search at λ=1000).θ_old_i: Original parameter value.- Train with standard optimizer, but the EWC term adds a penalty for shifting important parameters.

- Total Loss =

Visualization: Workflows and Relationships

Title: Workflow for Knowledge Retention in ESM2 Adaptation

Title: LoRA Mechanism for Parameter-Efficient Adaptation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Domain-Adaptive Pretraining Experiments with ESM2

| Item / Reagent | Function / Purpose | Example / Specification |

|---|---|---|

| Base Pretrained Model | Foundational protein language model to adapt. | ESM2 (esm2_t36_3B_UR50D or esm2_t48_15B_UR50D) from FAIR. |

| Target Family Dataset | Curated sequences for domain-specific training. | Kinase (Pfam PF00069) or GPCR (Pfam PF00001) sequences in FASTA format. |

| General Validation Suite | Benchmarks to quantify catastrophic forgetting. | Tasks from PEER benchmark (e.g., fluorescence, stability, secondary structure). |

| LoRA / Adapter Library | Enables parameter-efficient fine-tuning. | peft (Parameter-Efficient Fine-Tuning) library for PyTorch. |

| Fisher Estimation Dataset | Data for calculating parameter importance in EWC. | 10k random UniRef50 sequences (or the original training data slice). |

| High-Performance Compute | Hardware for model training and inference. | NVIDIA A100 / H100 GPU with ≥40GB VRAM for 3B+ parameter models. |

| Optimization Framework | Software for training and evaluation. | PyTorch 2.0+, Transformers library, BioLM-specific pipelines. |

Diagnosing and Mitigating Overfitting on Small or Imbalanced Datasets

Within the thesis on Domain-adaptive pretraining with ESM2 for specific protein families, a core challenge is model overfitting due to the limited and often imbalanced nature of experimental protein data. This application note details protocols for diagnosis and mitigation, ensuring robust, generalizable models for therapeutic protein engineering and drug discovery.

Quantitative Indicators of Overfitting

Key metrics to monitor during model training on small/imbala`nced protein datasets.

Table 1: Key Metrics for Diagnosing Overfitting

| Metric | Healthy Range (Typical) | Overfitting Indicator | Interpretation in Protein Family Context |

|---|---|---|---|

| Train vs. Val Loss | Converge closely | Large, growing gap after early epochs | Model memorizes family-specific motifs rather than learning generalizable folding/function rules. |

| Accuracy/Precision Recall | Val within ~5% of Train | Val metrics significantly lower (>10%) | High performance on training family variants, fails on held-out sub-families or mutation variants. |

| Confidence Calibration | High confidence on correct predictions | High confidence on incorrect val predictions | Model is poorly calibrated, unreliable for predicting effects of novel mutations. |

| Embedding Space Analysis | Val clusters within train distribution | Val samples as outliers or overly tight clusters | ESM2 embeddings fine-tuned on small data lose general protein semantic knowledge. |

Experimental Protocols for Diagnosis

Protocol 3.1: Train-Validation-Test Split for Imbalanced Protein Families

Objective: Create representative splits that reflect biological rarity.

- Stratification: Group sequences by functional label (e.g., enzymatic activity) AND sequence similarity cluster (using MMseqs2 at 70% identity).

- Split Ratio: For datasets < 10,000 sequences, use 70/15/15 (Train/Val/Test). For very small datasets (~100s), implement nested cross-validation.

- Hold-out Test Set: Ensure the test set contains entire sub-families or functional groups not seen during training/validation to assess true generalization.

Protocol 3.2: Learning Curve Analysis

Objective: Determine if more data would help.

- Train multiple ESM2 fine-tuning models on increasing subsets of the training data (e.g., 10%, 30%, 50%, 100%).

- Plot training and validation performance (e.g., AUC-ROC, loss) against dataset size.

- Diagnosis: If validation performance plateaus while training performance improves linearly, the model is overfitting to the current data's idiosyncrasies.

Mitigation Strategies & Protocols

Protocol 4.1: Strategic Data Augmentation for Protein Sequences

Objective: Artificially expand training diversity without altering functional semantics.

- Homologous Sequence Injection: Use tools like HMMER to find distant homologs from UniRef90 (excluding test families) and add them to training.

- Controlled Mutational Noise: For each sequence, generate in-silico mutants using BLOSUM62 substitution probabilities (3-5% mutation rate), excluding critical active-site residues (identified from Pfam).

- Fragment Sampling: For longer proteins, create overlapping subsequence windows (length 256-512) during training, preserving labels.

Protocol 4.2: Loss Function Modification for Class Imbalance

Objective: Prevent model bias toward dominant protein function classes.

- Calculate Class Weights:

weight_class = total_samples / (num_classes * samples_in_class) - Implement Weighted Cross-Entropy: Use calculated weights in PyTorch's

CrossEntropyLoss(weight=class_weights). - Alternative - Focal Loss: Use

FocalLoss(alpha-balanced) to down-weight easy, majority-class examples.

Protocol 4.3: Regularized Fine-tuning of ESM2

Objective: Retain general language knowledge while adapting to specific family.

- Layer-wise Learning Rate Decay (LLRD): Apply lower learning rates to earlier ESM2 layers, higher rates to the task-specific head.

- Freeze Early Layers: Freeze the first 10-15 of ESM2's 33 layers during initial fine-tuning phases.

- Sharpness-Aware Minimization (SAM): Use SAM optimizer to find parameters in flat loss minima, which generalize better.

Visualization of Workflows

Workflow for Diagnosing & Mitigating Overfitting

DAPT Bridges General & Task-Specific Knowledge

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Robust Protein ML

| Item | Function/Description | Key Consideration for Small Data |

|---|---|---|

| ESM2 (3B params) | Foundational protein language model for embedding and transfer learning. | Prefer over larger 15B model for small data to reduce overfitting risk. |

| PyTorch / Hugging Face Transformers | Framework for model implementation, fine-tuning, and loss function customization. | Essential for implementing weighted loss, LLRD, and SAM. |

| MMseqs2 | Ultra-fast protein sequence clustering and search. | Critical for creating biologically meaningful, non-redundant train/val/test splits. |

| HMMER & Pfam Database | Profile HMMs for protein family detection and alignment. | Used for data augmentation via homology search and identifying conserved residues to exclude from mutational augmentation. |

| UniRef90 | Clustered sets of sequences from UniProtKB. | Source for retrieving diverse, non-redundant homologs during data augmentation. |

| Scikit-learn | Library for metrics, stratified sampling, and learning curve analysis. | Used to compute ROC-AUC, precision-recall, and calibration curves. |

| Weights & Biases (W&B) | Experiment tracking and visualization platform. | Vital for comparing multiple fine-tuning runs with different hyperparameters and regularizations. |

| AlphaFold2 DB or PDB | Source of protein structures. | Optional: Use predicted/experimental structures to validate model predictions on functional residues. |

Domain-adaptive pretraining of large protein language models like ESM2 (Evolutionary Scale Modeling 2) for specific protein families is a computationally intensive task. This Application Note provides protocols and strategies for conducting such research under constraints of limited GPU memory and compute hours, a common scenario in academic and early-stage industrial labs.

Quantitative Data on Model Costs & Hardware

The following table summarizes the computational requirements for key ESM2 model variants, based on current benchmarking data.

Table 1: ESM2 Model Specifications & Approximate Resource Requirements

| ESM2 Model Variant | Parameters | FP32 Memory (Min.) | FP16/BF16 Memory (Min.) | Recommended GPU (Min.) | Pretraining FLOPs (Est.) |

|---|---|---|---|---|---|

| ESM2-8M | 8 Million | 0.5 GB | 0.25 GB | 1x RTX 3060 (8GB) | ~1e16 |

| ESM2-35M | 35 Million | 2 GB | 1 GB | 1x RTX 3070 (8GB) | ~5e16 |

| ESM2-150M | 150 Million | 8 GB | 4 GB | 1x RTX 3090 (24GB) | ~2e17 |

| ESM2-650M | 650 Million | 24 GB | 12 GB | 1x A100 (40/80GB) | ~1e18 |

| ESM2-3B | 3 Billion | 72 GB | 36 GB | 2x A100 (80GB) w/ NVLink | ~5e18 |

Table 2: Cost-Benefit Analysis of Common GPU Cloud Instances (Per Hour)

| Cloud Provider | Instance Type | GPU(s) | vCPU | RAM | Approx. Cost/Hr ($) | Suitability for ESM2-150M DAPT |

|---|---|---|---|---|---|---|

| AWS | g4dn.xlarge | 1x T4 (16GB) | 4 | 16GB | 0.526 | Evaluation & Fine-tuning only |

| Azure | NC6s_v3 | 1x V100 (16GB) | 6 | 112GB | 1.296 | Full DAPT feasible |

| Google Cloud | n1-standard-8 | 1x T4 (16GB) | 8 | 30GB | 0.595 | Evaluation & Fine-tuning only |

| Lambda Labs | 1x A100 (40GB) | 1x A100 (40GB) | 12 | 85GB | 1.299 | Ideal for up to 650M DAPT |

| Paperspace | P6000 | 1x P6000 (24GB) | 8 | 30GB | 0.788 | Good for 150M DAPT |

Core Strategies & Protocols

Strategy A: Gradient Checkpointing & Reduced Precision Training

Protocol: Implementing Mixed Precision with Activation Checkpointing

- Framework Setup: Use PyTorch 2.0+ with

torch.cuda.ampfor Automatic Mixed Precision (AMP) and thetorch.utils.checkpointmodule. - Model Wrapping: Identify the transformer blocks in the ESM2 model. Wrap the forward function of selected blocks using

torch.utils.checkpoint.checkpoint. - AMP Training Loop:

Strategy B: Efficient Data Loading & Sequence Batching

Protocol: Dynamic Batching by Sequence Length

- Data Preprocessing: Tokenize your protein family sequences using the ESM2 alphabet. Store tokens and sequence lengths.

- Batch Sampler: Implement a

BatchSamplerthat groups sequences of similar lengths to minimize padding. - Collate Function: Create a custom collate function that pads to the maximum length within the batch, not the global maximum.