Beyond Docking: How Deep Learning Revolutionizes Protein-Ligand Interaction Prediction in Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the transformative role of deep learning (DL) in predicting protein-ligand interactions (PLI). We begin by exploring the core challenges of traditional computational methods and the fundamental concepts of PLI. We then detail key methodological architectures, including graph neural networks and transformers, and their practical applications in virtual screening and binding affinity prediction. The guide addresses common challenges, such as data scarcity and model interpretability, offering strategies for troubleshooting and optimization. Finally, we present a comparative analysis of state-of-the-art tools and validation frameworks, benchmarking their performance against established methods. This synthesis aims to equip scientists with the knowledge to effectively integrate DL into their computational pipelines, accelerating rational drug design.

The Protein-Ligand Puzzle: Why Deep Learning Is a Game Changer in Structural Bioinformatics

Molecular docking and scoring functions are cornerstone computational tools in structure-based drug design, tasked with predicting the binding pose of a small molecule (ligand) within a protein's active site and estimating the strength of that interaction (binding affinity). While instrumental in virtual screening and lead optimization, these methods possess well-documented limitations that constrain their predictive accuracy and reliability. This application note details these challenges within the broader research context of developing deep learning (DL) models to transcend these limitations and achieve more accurate protein-ligand interaction prediction.

The primary challenges can be categorized into force field inaccuracies, scoring function deficiencies, and conformational sampling issues. The following table summarizes key quantitative benchmarks that highlight these limitations.

Table 1: Benchmarking Performance of Classical Docking & Scoring Functions

| Limitation Category | Typical Benchmark Metric | Representative Performance (State-of-the-Art Classical Methods) | Implication for Drug Discovery |
|---|---|---|---|
| Pose Prediction (Sampling & Scoring) | Root-Mean-Square Deviation (RMSD) < 2.0 Å from crystallographic pose | ~70-80% success rate on curated datasets (e.g., PDBbind Core Set) | ~20-30% of predicted binding modes are incorrect, misleading downstream analysis. |
| Affinity Prediction (Scoring) | Pearson's R (linear correlation) between predicted and experimental ΔG/pKi | R ≈ 0.6 - 0.7 on cross-validation within PDBbind; drops significantly to R ~0.3-0.5 on blind tests. | Poor ranking of ligands; limited utility for quantitative affinity prediction. |
| Virtual Screening Enrichment | Enrichment Factor (EF) at 1% of database screened | EF₁% varies widely (5-30) and is highly target- and library-dependent; often inconsistent. | Inefficient identification of true hits, leading to high experimental validation costs. |
| Protein Flexibility | Success rate on targets with substantial binding site conformational change | Dramatic decrease (>50% drop) compared to rigid receptors. | Failure to dock ligands that induce fit or require alternative side-chain rotamers. |
| Solvation & Entropy | Correlation for ligands with high solvation/entropic penalty | Systematic errors; scoring functions struggle with hydrophobic vs. polar desolvation. | Incorrect preference for charged or overly polar ligands, skewing lead optimization. |
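The enrichment factor in Table 1 is the hit rate in the top-ranked fraction of a screened library divided by the hit rate of the whole library. A minimal sketch in plain Python follows; the function name and its arguments are illustrative assumptions, not part of any docking package.

```python
def enrichment_factor(scores, is_active, fraction=0.01, higher_is_better=True):
    """Enrichment factor at `fraction` of the ranked library.

    scores    : one score per compound
    is_active : 1/0 (or True/False) flag per compound, same order as scores
    """
    if len(scores) != len(is_active) or len(scores) == 0:
        raise ValueError("scores and is_active must be non-empty and equal in length")
    n_total = len(scores)
    n_actives = sum(1 for flag in is_active if flag)
    if n_actives == 0:
        raise ValueError("at least one active is required")

    # Size of the top-ranked subset (at least one compound).
    n_top = max(1, int(round(fraction * n_total)))

    # Rank compound indices from best to worst score.
    order = sorted(range(n_total), key=lambda i: scores[i], reverse=higher_is_better)
    hits_top = sum(1 for i in order[:n_top] if is_active[i])

    # Hit rate in the top subset relative to the hit rate of the whole library.
    return (hits_top / n_top) / (n_actives / n_total)
```

For docking scores where more negative is better (for example Vina energies), pass `higher_is_better=False`.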

Detailed Experimental Protocols for Benchmarking

Protocol 3.1: Standardized Evaluation of Docking Pose Accuracy

Objective: To assess a docking program's ability to reproduce a known crystallographic ligand pose. Materials:

- Software: Docking suite (e.g., AutoDock Vina, GOLD, Glide), RDKit or Open Babel for file preparation.

- Dataset: Curated set of protein-ligand complexes from PDBbind Core Set (or CASF benchmark).

- Hardware: Multi-core Linux workstation or cluster.

Procedure:

- Dataset Preparation: Download the PDBbind Core Set. For each complex, extract the protein and the cognate ligand from the PDB file.

- Protein Preparation: Using the docking suite's tools or a tool like Schrödinger's Protein Preparation Wizard (described conceptually):

- Add missing hydrogen atoms.

- Assign protonation states for His, Asp, Glu, Lys at pH 7.4.

- Optimize hydrogen-bonding networks.

- Remove crystallographic water molecules, except conserved, structural ones.

- Ligand Preparation: Generate 3D conformations from the ligand's SMILES string. Assign correct tautomeric and protonation states.

- Grid Generation: Define a docking search space. Typically, a box centered on the crystallographic ligand's centroid with dimensions 20 × 20 × 20 Å.

- Docking Execution: Run the docking algorithm to generate a specified number of poses (e.g., 10-20) per ligand.

- Pose Analysis: Align the protein structure from the docking output to the original crystallographic protein structure. Calculate the RMSD between the heavy atoms of the top-ranked docked pose and the crystallographic ligand pose. A pose with RMSD ≤ 2.0 Å is considered successfully predicted.

- Calculation: Report the success rate as (Number of complexes with RMSD ≤ 2.0 Å / Total number of complexes) * 100%.
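The pose analysis and success-rate steps above can be sketched with NumPy. This is a simplified illustration that assumes the docked and crystallographic ligands list the same heavy atoms in the same order and already share the receptor's coordinate frame; it does not correct for ligand symmetry, which dedicated RMSD tools handle. The function names are hypothetical.

```python
import numpy as np

def heavy_atom_rmsd(pred_xyz, ref_xyz):
    """RMSD (in Å) between two (N, 3) heavy-atom coordinate arrays, no refitting."""
    pred = np.asarray(pred_xyz, dtype=float)
    ref = np.asarray(ref_xyz, dtype=float)
    if pred.shape != ref.shape or pred.ndim != 2 or pred.shape[1] != 3:
        raise ValueError("both inputs must have shape (N, 3)")
    squared_dist = np.sum((pred - ref) ** 2, axis=1)  # per-atom squared deviation
    return float(np.sqrt(np.mean(squared_dist)))

def pose_success_rate(rmsds, threshold=2.0):
    """Percentage of complexes whose top-ranked pose has RMSD <= threshold."""
    rmsds = np.asarray(rmsds, dtype=float)
    return 100.0 * float(np.mean(rmsds <= threshold))
```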

Protocol 3.2: Evaluating Scoring Function Affinity Prediction

Objective: To evaluate the correlation between scoring function-predicted binding affinities and experimental values. Procedure:

- Dataset: Use the PDBbind refined set with associated experimental Kd/Ki/IC50 values (converted to ΔG in kcal/mol).

- Complex Preparation: Prepare the native crystallographic complex (no re-docking). This assesses pure scoring, not pose prediction.

- Scoring: For each prepared native complex, compute the score using the target scoring function (e.g., Vina, ChemPLP, X-Score).

- Statistical Analysis: Perform linear regression between the computed scores and experimental ΔG. Calculate Pearson's correlation coefficient (R), the standard deviation (SD), and the mean absolute error (MAE). Use 5-fold cross-validation to avoid overfitting.
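The statistical analysis step can be sketched as follows. The standard deviation here uses the regression residuals with an N - 1 denominator, which is one common convention in scoring-function benchmarks; treat that choice, and the function name, as assumptions. Cross-validation is omitted for brevity.

```python
import numpy as np

def scoring_power(scores, exp_dg):
    """Linear-regression statistics between computed scores and experimental ΔG."""
    x = np.asarray(scores, dtype=float)
    y = np.asarray(exp_dg, dtype=float)
    if x.shape != y.shape or x.size < 3:
        raise ValueError("need two equal-length arrays with at least 3 points")

    slope, intercept = np.polyfit(x, y, 1)   # least-squares line y ≈ slope*x + intercept
    residuals = y - (slope * x + intercept)

    return {
        "pearson_r": float(np.corrcoef(x, y)[0, 1]),
        "sd": float(np.sqrt(np.sum(residuals ** 2) / (x.size - 1))),
        "mae": float(np.mean(np.abs(residuals))),
    }
```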

Visualizing the Docking Workflow and Its Failure Points

Title: Molecular Docking Workflow and Inherent Limitation Points

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Docking & Scoring Research

| Item | Category | Function / Application |
|---|---|---|
| PDBbind Database | Benchmark Dataset | Curated collection of protein-ligand complexes with binding affinity data for training and testing scoring functions. |
| CASF Benchmark Sets | Benchmark Dataset | Specially designed benchmarks for scoring (CASF-2013, 2016) to evaluate pose prediction, ranking, scoring, and screening power. |
| DUD-E / DEKOIS 2.0 | Benchmark Dataset | Databases of decoys for evaluating virtual screening enrichment, containing known actives and property-matched inactives. |
| AutoDock Vina / GNINA | Docking Software | Widely used, open-source docking programs with configurable scoring functions; GNINA incorporates CNN scoring. |
| Schrödinger Suite (Glide) | Commercial Software | Industry-standard software for high-throughput docking and scoring, with advanced force fields and sampling protocols. |
| GOLD / MOE | Commercial Software | Docking suites offering genetic algorithm sampling and diverse scoring function options (GoldScore, ChemPLP, etc.). |
| Open Babel / RDKit | Cheminformatics Library | Open-source toolkits for essential ligand preparation tasks: format conversion, protonation, conformer generation. |
| Amber/CHARMM Force Fields | Molecular Mechanics | Advanced force fields for post-docking refinement via MM/PBSA or MM/GBSA to improve affinity estimates. |
| Rosetta Ligand | Macromolecular Modeling | Protocol for docking with explicit backbone and side-chain flexibility, useful for challenging induced-fit targets. |
| DeepDock / EquiBind | Deep Learning Tools | Emerging DL frameworks trained to predict or score binding poses directly from structural data, addressing classical limitations. |

The Deep Learning Paradigm Shift: A Logical Pathway

The limitations outlined above provide a direct rationale for the integration of deep learning. The following diagram conceptualizes this transition.

Title: From Classical Limitations to Deep Learning Solutions in Docking

Protein-ligand interactions (PLIs) are specific, non-covalent molecular associations between a protein (typically an enzyme or receptor) and a binding partner molecule, the ligand (e.g., a drug candidate, substrate, or inhibitor). These interactions are governed by complementary shape, electrostatics, and hydrophobic effects, forming the foundational mechanism by which most drugs exert their therapeutic effect. In drug discovery, understanding and modulating these interactions is paramount for designing potent, selective, and safe therapeutics. Within the context of deep learning for PLI prediction, computational models aim to accurately predict binding affinity, pose, and kinetics, accelerating the identification of viable drug candidates.

Application Notes

Note 1: Quantitative Characterization of Binding The strength and specificity of a PLI are quantified through key biophysical parameters. The following table summarizes these metrics and their significance in early-stage drug discovery.

Table 1: Key Quantitative Metrics for Protein-Ligand Interactions

| Metric | Description | Typical Experimental Method | Significance in Drug Discovery |
|---|---|---|---|
| Dissociation Constant (Kd) | Concentration of ligand at which half the protein binding sites are occupied. | Isothermal Titration Calorimetry (ITC), Surface Plasmon Resonance (SPR). | Primary measure of binding strength (potency). Lower nM/pM Kd indicates stronger binding. |
| Half-Maximal Inhibitory Concentration (IC50) | Concentration of an inhibitor required to reduce a specific biological activity by half. | Enzymatic activity assay, Cell-based assay. | Functional measure of inhibitory potency under assay conditions. |
| Gibbs Free Energy (ΔG) | Energetic favorability of the binding interaction. | Calculated from Kd (ΔG = RT ln(Kd), with Kd in M relative to a 1 M standard state). | Fundamental thermodynamic driver; target for computational prediction. |
| Enthalpy (ΔH) & Entropy (ΔS) | Heat change and disorder change upon binding. | Isothermal Titration Calorimetry (ITC). | Guides lead optimization by revealing driving forces (e.g., hydrogen bonds vs. hydrophobic effect). |
| Kinetic Constants (kon, koff) | Association and dissociation rates. | Surface Plasmon Resonance (SPR), Stopped-Flow. | koff correlates with drug residence time, often linked to efficacy and duration. |
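The relationships among these metrics are simple enough to check numerically. The sketch below converts a dissociation constant to a binding free energy via ΔG = RT ln(Kd) and derives Kd from kinetic rates; the function names and the default temperature of 298.15 K are illustrative assumptions.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def delta_g_from_kd(kd_molar, temperature_k=298.15):
    """Binding free energy (kcal/mol) from Kd in mol/L, 1 M standard state."""
    if kd_molar <= 0:
        raise ValueError("Kd must be positive")
    return R_KCAL * temperature_k * math.log(kd_molar)

def kd_from_rates(k_on, k_off):
    """Equilibrium Kd (M) from k_on (1/(M*s)) and k_off (1/s)."""
    if k_on <= 0:
        raise ValueError("k_on must be positive")
    return k_off / k_on
```

Under these assumptions a 1 nM binder corresponds to roughly -12.3 kcal/mol at room temperature, and each tenfold change in Kd shifts ΔG by about 1.4 kcal/mol.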

Note 2: The Role of Deep Learning in PLI Analysis Deep learning models address challenges in predicting the metrics in Table 1. They utilize diverse inputs: protein sequences/structures, ligand SMILES strings/3D graphs, and complex interaction fingerprints. Current research focuses on models that predict binding affinity (Kd/IC50), binding pose (docking), and the effects of mutations (missense variants) on drug binding.

Experimental Protocols

Protocol 1: Surface Plasmon Resonance (SPR) for Binding Kinetics

Objective: Determine the real-time association (kon) and dissociation (koff) rates, and the equilibrium dissociation constant (Kd), for a protein-ligand interaction.

Materials: Biacore or comparable SPR instrument, CM5 sensor chip, running buffer (e.g., HBS-EP), amine-coupling reagents (EDC, NHS), target protein, ligand in DMSO.

- Surface Preparation: Dilute protein to 10-50 µg/mL in 10 mM sodium acetate buffer (pH 4.0-5.5). Activate a flow cell on the CM5 chip with a 1:1 mix of 0.4 M EDC and 0.1 M NHS for 7 minutes.

- Protein Immobilization: Inject the diluted protein solution over the activated surface for 7 minutes to achieve a desired immobilization level (typically 50-200 Response Units, RU). Deactivate remaining esters with 1 M ethanolamine-HCl (pH 8.5) for 7 minutes. A reference flow cell is prepared without protein.

- Kinetic Analysis: Prepare a dilution series of the analyte (ligand) in running buffer (e.g., 0.78 nM to 100 nM). Inject each concentration over the protein and reference surfaces for 2-3 minutes (association phase), followed by running buffer alone for 5-10 minutes (dissociation phase). Regenerate the surface with a mild buffer (e.g., 10 mM glycine pH 2.0) between cycles.

- Data Processing: Subtract the reference cell signal from the active cell sensorgrams. Fit the corrected data to a 1:1 Langmuir binding model using the instrument's software to extract kon, koff, and calculate Kd (Kd = koff / kon).
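The 1:1 Langmuir model used in the data processing step has closed-form solutions for both phases, sketched below with NumPy. Instrument software normally performs a global nonlinear fit across all concentrations; the log-linear estimate of koff shown here is a simplified stand-in that assumes noise-free, strictly positive responses, and all function names are hypothetical.

```python
import numpy as np

def association_response(t, conc, k_on, k_off, r_max):
    """Response during analyte injection for 1:1 binding, starting from R(0) = 0."""
    k_obs = k_on * conc + k_off            # observed rate constant (1/s)
    r_eq = r_max * k_on * conc / k_obs     # plateau, equal to r_max * C / (C + Kd)
    return r_eq * (1.0 - np.exp(-k_obs * np.asarray(t, dtype=float)))

def dissociation_response(t, r0, k_off):
    """Response after switching to running buffer: single-exponential decay."""
    return r0 * np.exp(-k_off * np.asarray(t, dtype=float))

def koff_from_dissociation(t, response):
    """Estimate k_off from the slope of ln(R) against time."""
    slope, _ = np.polyfit(np.asarray(t, dtype=float),
                          np.log(np.asarray(response, dtype=float)), 1)
    return -float(slope)
```

At an analyte concentration equal to Kd, the association plateau is half of `r_max`, which is a quick sanity check on fitted parameters.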

Protocol 2: Molecular Docking with Deep Learning-Based Scoring

Objective: Predict the binding pose and affinity of a ligand within a protein's active site using a hybrid docking/deep learning workflow.

Materials: Protein structure (PDB file), ligand structure (SDF/MOL2), docking software (AutoDock Vina, GNINA), deep learning scoring function (e.g., the CNN scoring in GNINA, or DeepDock).

- Structure Preparation: Prepare the protein receptor: remove water molecules, add missing hydrogens, assign correct protonation states for residues (e.g., HIS, ASP, GLU) using tools like UCSF Chimera or Schrödinger's Protein Preparation Wizard. Prepare the ligand: generate 3D coordinates, optimize geometry, and assign partial charges using RDKit or Open Babel.

- Defining the Search Space: Define a docking grid box centered on the binding site of interest. The box dimensions should be large enough to accommodate ligand flexibility (e.g., 25 Å x 25 Å x 25 Å).

- Classical Docking Pose Generation: Perform exhaustive conformational sampling using a classical docking engine (e.g., AutoDock Vina). Output the top 20-100 ranked poses.

- Deep Learning Re-scoring & Pose Selection: Input the generated protein-ligand complex poses into a pre-trained deep learning model (e.g., a graph neural network). The model scores each pose based on learned representations of physical interactions. Select the pose with the best predicted affinity score as the final prediction.

- Validation: Compare the top-ranked pose with a known co-crystal structure (if available) by calculating the Root-Mean-Square Deviation (RMSD) of ligand heavy atoms.

Visualizations

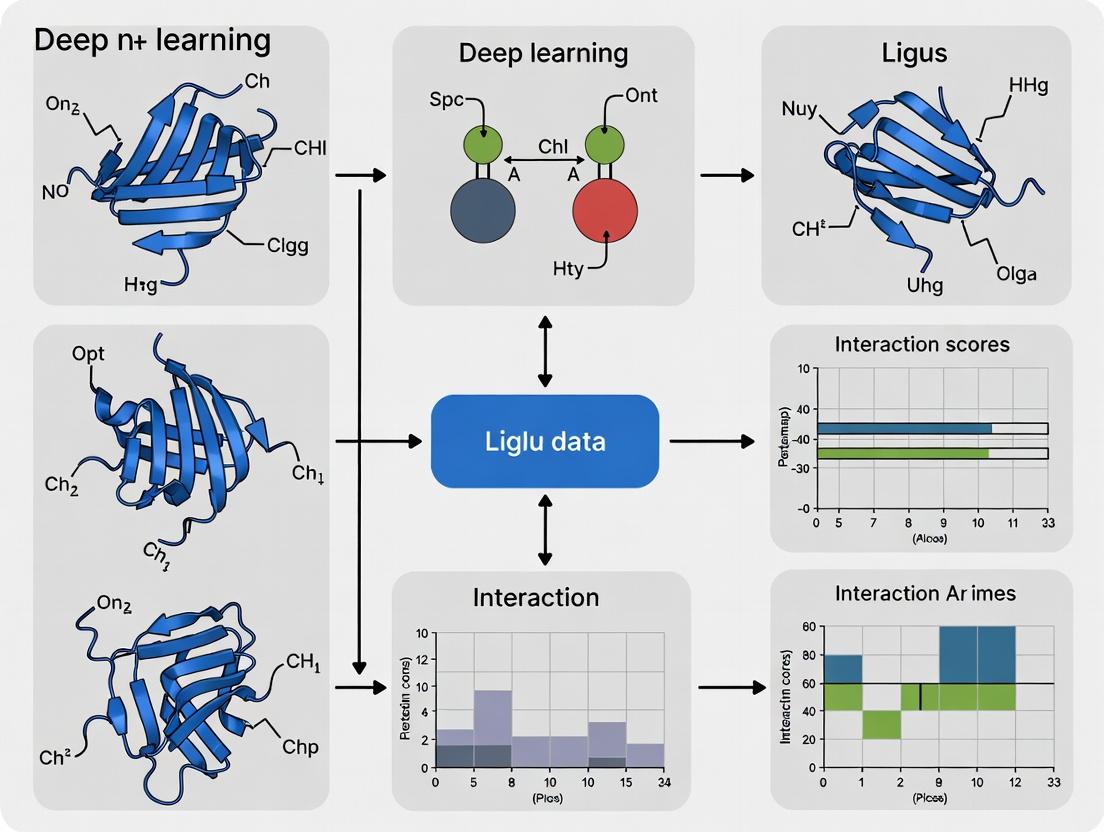

Title: Hybrid Deep Learning Docking Workflow

Title: Central Role of PLIs in Drug Discovery

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Protein-Ligand Interaction Studies

| Item | Function & Application |
|---|---|
| Recombinant Purified Protein | High-purity, functional protein target for in vitro binding assays (SPR, ITC, FP). |
| Compound/Ligand Library | Collection of small molecules for screening; includes drug-like molecules and fragments. |
| Biacore CM5 Sensor Chip | Gold sensor surface with a carboxymethylated dextran matrix for covalent protein immobilization in SPR. |
| Isothermal Titration Calorimeter (ITC) | Instrument that directly measures heat change upon binding to provide full thermodynamic profile (Kd, ΔH, ΔS, stoichiometry). |
| Fluorescence Polarization (FP) Tracer | Fluorescently labeled ligand to monitor displacement by unlabeled compounds in competitive binding assays. |
| Crystallization Screening Kits | Sparse matrix screens to identify conditions for growing protein-ligand co-crystals for structural validation. |
| Deep Learning Ready Datasets (e.g., PDBbind) | Curated databases of protein-ligand complexes with binding affinity data for training and validating predictive models. |
| High-Performance Computing (HPC) Cluster | Infrastructure for running molecular dynamics simulations and training large deep learning models. |

Within the broader thesis on deep learning for protein-ligand interaction prediction, this document addresses the foundational step: the transformation of raw, complex molecular and structural data into learned, hierarchical representations. This process is critical for enabling models to capture intricate biophysical and biochemical patterns that dictate binding affinity and specificity.

Core Encoding Strategies & Quantitative Comparison

Deep learning models employ distinct strategies to encode molecular entities. The following table summarizes the primary approaches, their common architectures, and key performance characteristics as reported in recent literature (2023-2024).

Table 1: Comparative Analysis of Molecular Data Encoding Strategies

| Encoding Strategy | Target Data Type | Common Model Architectures | Key Advantages | Reported Performance (Typical Range)* | Computational Cost (FLOPs per sample) |
|---|---|---|---|---|---|
| Graph Neural Networks (GNNs) | Molecular Graphs (Atoms as nodes, bonds as edges) | GCN, GAT, MPNN, 3D-GNN | Captures topological structure and functional groups natively. | AUC-PR: 0.85-0.92 (Binding Site Prediction) | 1E8 - 1E10 |
| Voxelized 3D CNNs | 3D Electron Density/Grids | 3D CNN, VoxNet | Excellent at learning from spatial/electrostatic fields. | RMSE: 1.2-1.8 kcal/mol (Affinity Prediction) | 1E9 - 1E11 |
| Sequence-based Encoders | Protein Sequences / Ligand SMILES Strings | Transformer, LSTM, CNN-1D | Leverages vast sequence databases; efficient. | AUC-ROC: 0.88-0.95 (Activity Classification) | 1E7 - 1E9 |
| SE(3)-Equivariant Networks | 3D Point Clouds (Atomic Coordinates) | SE(3)-Transformer, Tensor Field Networks | Equivariant to rotations/translations; essential for pose prediction. | RMSD: 1.0-2.5 Å (Ligand Docking) | 1E9 - 1E11 |
| Geometric Deep Learning | Combined Graph + 3D Coordinates | GNN with Spherical Harmonics | Unifies topological and geometric information. | RMSD: 0.5-1.5 Å (Binding Pose) | 1E10 - 1E12 |

*Performance metrics are task-dependent. Ranges are aggregated from recent studies on benchmarks like PDBbind, DUD-E, and CASF.

Detailed Experimental Protocols

Protocol 3.1: Training a Graph Neural Network for Binding Affinity Prediction

Objective: To predict protein-ligand binding affinity (pKd/pKi) using a message-passing GNN.

Materials: See "The Scientist's Toolkit" (Section 5). Workflow:

- Data Curation: Download the PDBbind 2023 refined set. Keep only complexes with a resolution better than 2.5 Å.

- Graph Construction:

- For each complex, generate a molecular graph for the ligand using RDKit (atoms as nodes, bonds as edges).

- Extract protein residues within 6 Å of the ligand. Represent each residue alpha-carbon as a node.

- Create edges between all ligand atoms and protein residue nodes. Edge features include distance and covalent/non-covalent indicator.

- Feature Engineering:

- Node Features: For atoms: atomic number, hybridization, degree, formal charge, valence. For residues: amino acid type, secondary structure, solvent accessible surface area.

- Edge Features: Bond type (single, double, aromatic), spatial distance (binned), interaction type (hydrogen bond, ionic, hydrophobic mask).

- Model Training (PyTorch Geometric):

- Training Loop: Use Mean Squared Error (MSE) loss with the Adam optimizer (lr=0.001). Employ 5-fold cross-validation. Apply learning rate decay and early stopping based on validation loss.
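The graph construction step above can be sketched with NumPy before any deep learning framework is involved. This sketch makes two simplifying assumptions that the protocol does not state: residue proximity is measured from the alpha-carbon rather than from any residue atom, and distances are binned at 2, 4, and 6 Å. The function name and return layout are hypothetical; the `edge_index` array follows the 2 × E convention that PyTorch Geometric expects.

```python
import numpy as np

def build_interaction_edges(ligand_xyz, residue_ca_xyz, cutoff=6.0,
                            bin_edges=(2.0, 4.0, 6.0)):
    """Connect every ligand atom to every pocket residue (Cα) node.

    Returns (edge_index, edge_bins, kept_residues):
      edge_index    : (2, E) array; row 0 = ligand atom index,
                      row 1 = residue node index offset by the ligand atom count
      edge_bins     : (E,) binned ligand-atom-to-Cα distance per edge
      kept_residues : indices of residues whose Cα lies within `cutoff`
                      of at least one ligand atom
    """
    lig = np.asarray(ligand_xyz, dtype=float)
    res = np.asarray(residue_ca_xyz, dtype=float)

    # Pairwise distances, shape (n_ligand_atoms, n_residues).
    dists = np.linalg.norm(lig[:, None, :] - res[None, :, :], axis=-1)

    # Pocket selection: residues close to any ligand atom.
    kept = np.where(dists.min(axis=0) <= cutoff)[0]

    n_lig = lig.shape[0]
    lig_idx, res_idx = np.meshgrid(np.arange(n_lig), np.arange(kept.size),
                                   indexing="ij")
    edge_index = np.stack([lig_idx.ravel(), n_lig + res_idx.ravel()])
    edge_bins = np.digitize(dists[:, kept].ravel(), bin_edges)
    return edge_index, edge_bins, kept
```

The ligand's covalent bonds (from RDKit) would be appended to `edge_index` separately, with a flag distinguishing them from these non-covalent contacts.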

Protocol 3.2: Fine-tuning a Protein Language Model for Interaction Hotspot Prediction

Objective: To adapt a pre-trained protein Transformer to predict binding residues from primary sequence. Workflow:

- Pre-trained Model: Initialize with ESM-2 (150M parameters) or ProtT5 embeddings.

- Dataset Preparation: Use a dataset of protein-ligand complexes with known binding residues (e.g., BioLiP) or a custom dataset derived from PDBbind. Annotate each residue as binding (1) or non-binding (0) based on a 4 Å cutoff from any ligand atom.

- Model Architecture: Add a task-specific head on top of the pre-trained encoder: a bidirectional LSTM or a 1D CNN followed by a linear classifier per residue.

- Fine-tuning: Employ masked language modeling loss combined with a binary cross-entropy loss for the downstream task. Use a low learning rate (5e-5) and gradual unfreezing of the encoder layers over 10 epochs.

- Evaluation: Report per-residue precision, recall, and Matthews Correlation Coefficient (MCC) on a held-out test set.
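The evaluation metrics named in the last step can be computed directly from the confusion matrix. The following plain-Python sketch assumes flat lists of 0/1 labels pooled over all residues in the test set; the function name is illustrative.

```python
import math

def binding_site_metrics(y_true, y_pred):
    """Per-residue precision, recall, and Matthews Correlation Coefficient."""
    if len(y_true) != len(y_pred):
        raise ValueError("label lists must have equal length")
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)

    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0

    # MCC is defined as 0 when any marginal count is zero.
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "mcc": mcc}
```

MCC is the more informative of the three here because binding residues are usually a small minority of each sequence.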

Visualization of Key Concepts

Title: Hierarchical Encoding of Molecular Data in Deep Learning

Title: Standardized Training & Evaluation Workflow for Interaction Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Deep Learning-Based Molecular Encoding Research

| Item Name & Common Vendor | Category | Primary Function in Research |
|---|---|---|
| RDKit (Open-Source) | Software Library | Core cheminformatics toolkit for converting SMILES to molecular graphs, calculating 2D/3D descriptors, and handling chemical data. |
| PyTorch Geometric (PyG) | Deep Learning Framework | Specialized library for building and training Graph Neural Networks (GNNs) on irregular data like molecular graphs and point clouds. |
| AlphaFold Protein Structure Database (EMBL-EBI) | Data Resource | Source of high-accuracy predicted protein structures for targets lacking experimental crystallography data. |
| ESM/ProtT5 Pre-trained Models (Hugging Face) | Pre-trained Model | Large protein language models providing powerful, transferable sequence representations for downstream fine-tuning tasks. |
| PDBbind & CASF Datasets | Benchmark Data | Curated, quality-filtered datasets of protein-ligand complexes with binding affinity data, essential for training and standardized benchmarking. |
| DOCKSTRING & MoleculeNet Benchmarks | Benchmark Suite | Unified datasets and tasks for evaluating machine learning models on molecular property prediction and virtual screening. |
| OpenMM or GROMACS | Simulation Software | Molecular dynamics packages used to generate conformational ensembles or refine docked poses, providing dynamic structural data for model training. |
| GNINA (Open-Source) | Docking Software | CNN-based molecular docking tool used for generating initial ligand poses or as a baseline comparator for deep learning models. |
| Weights & Biases (W&B) or MLflow | Experiment Tracking | Platforms to log hyperparameters, metrics, and model artifacts, ensuring reproducibility and efficient management of deep learning experiments. |
| AWS EC2 (p3/g4 instances) or Google Cloud TPUs | Computing Infrastructure | Cloud-based high-performance computing resources with GPUs/TPUs necessary for training large-scale geometric deep learning models. |

Within the broader thesis on deep learning for protein-ligand interaction prediction, the quality, scale, and relevance of training data are paramount. Three public databases—PDBbind, BindingDB, and ChEMBL—form a critical ecosystem, each offering unique and complementary data for model development and validation. This document provides detailed application notes and protocols for the effective utilization of these resources, framed for researchers and drug development professionals.

Database Comparative Analysis

The table below summarizes the key quantitative and qualitative characteristics of the three primary databases.

Table 1: Core Database Characteristics for Protein-Ligand Interaction Prediction

| Feature | PDBbind | BindingDB | ChEMBL |
|---|---|---|---|
| Primary Focus | High-quality 3D structures with binding affinity data. | Measured binding affinities (Ki, Kd, IC50), chiefly for protein targets. | Broad bioactive molecules with drug-like properties, bioactivity data. |
| Core Data Type | Structural complexes (PDB-derived) with measured binding affinities (Kd, Ki, IC50). | Quantitative binding data (Kd, Ki, IC50) for protein-ligand pairs, often without public 3D structures. | Bioactivity data (IC50, Ki, EC50, etc.), ADMET, molecular descriptors, some structures. |
| Key Metric | ~23,000 biomolecular complexes; ~19,000 with binding affinity data (2023 release). | ~2.5 million binding data entries for ~8,700 protein targets & ~1 million compounds (2024). | ~2.3 million compounds; ~17 million bioactivity data points (ChEMBL33). |
| Curation Level | Highly curated, manually refined binding site coordinates and affinity data. | Manually curated from literature, with standardized units and target mapping. | Extensively curated and standardized from literature, integrated with other resources. |
| Structural Coverage | Complete 3D atomic coordinates for all complexes. | Limited (~25% entries have linked PDB structures). | Limited; links to PDB and other structure sources where available. |
| Best Use Case | Structure-based model training (e.g., scoring functions, binding pose prediction). | Affinity prediction model training and validation for known targets. | Ligand-based model training, cheminformatics, polypharmacology, ADMET prediction. |

Application Notes & Experimental Protocols

Protocol: Constructing a High-Quality Training Set from PDBbind

Objective: To create a non-redundant, high-quality dataset of protein-ligand complexes with binding affinity labels for structure-based deep learning.

Materials & Workflow:

- Data Acquisition: Download the latest PDBbind "refined" and "general" sets from the official website (http://www.pdbbind.org.cn). The refined set is pre-filtered for higher quality.

- Structure Preprocessing:

- Isolate the protein and ligand molecules from the PDB file.

- Protein Preparation: Add hydrogens, assign protonation states at pH 7.4, and fix missing side chains using tools like PDBFixer or the ProteinPrepare module in BIOVIA Discovery Studio.

- Ligand Preparation: Extract the ligand SDF/MOL2, assign correct bond orders and formal charges, and generate 3D conformations if needed using RDKit or Open Babel.

- Binding Site Definition & Feature Extraction:

- Define the binding pocket as all protein residues with any atom within a cutoff distance (e.g., 6.5 Å) from any ligand atom.

- Generate voxelized grids or graph representations of the binding site.

- Compute molecular features for the ligand (e.g., pharmacophore features, atomic partial charges) and protein (e.g., residue type, secondary structure, electrostatic potential).

- Dataset Splitting: Perform sequence identity-based clustering (e.g., using CD-HIT at 30% threshold) to ensure no homologous proteins appear in both training and test sets, preventing data leakage.
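The leakage-free split in the final step amounts to assigning whole sequence clusters, never individual complexes, to one side of the split. A minimal sketch follows; it assumes the cluster assignments have already been parsed from the clustering tool's output into a dictionary, and the function name and `test_fraction` default are illustrative.

```python
import random
from collections import defaultdict

def cluster_split(complex_to_cluster, test_fraction=0.2, seed=0):
    """Split complex IDs into train/test so that no cluster spans both sets.

    complex_to_cluster : dict mapping complex ID -> cluster ID
                         (e.g., parsed from CD-HIT output at 30% identity)
    """
    clusters = defaultdict(list)
    for complex_id, cluster_id in complex_to_cluster.items():
        clusters[cluster_id].append(complex_id)

    # Shuffle clusters reproducibly, then fill the test set cluster by cluster.
    cluster_ids = sorted(clusters)
    random.Random(seed).shuffle(cluster_ids)

    target_test_size = test_fraction * len(complex_to_cluster)
    train, test = [], []
    for cluster_id in cluster_ids:
        destination = test if len(test) < target_test_size else train
        destination.extend(clusters[cluster_id])
    return train, test
```

Because clusters vary in size, the realized test fraction only approximates the requested one.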

Visualization: PDBbind Data Processing Workflow

Protocol: Integrating BindingDB Affinity Data for Target-Specific Model Training

Objective: To augment training data with extensive binding affinity measurements for a specific protein target of interest.

Materials & Workflow:

- Target-Centric Query: On the BindingDB website (https://www.bindingdb.org), search by UniProt ID or target name.

- Data Export and Standardization:

- Export all results (Ki, Kd, IC50 values). Ensure units are standardized (nM recommended).

- For Ki/Kd/IC50 values, convert to pKi/pKd/pIC50 (-log10(value in M)).

- Remove duplicate entries and compounds with ambiguous stereochemistry.

- Ligand Standardization: Use RDKit to canonicalize SMILES strings, neutralize charges, and remove salts and solvents.

- Structure Pairing (if applicable): Cross-reference compounds with PDB or use molecular docking to generate putative binding poses if experimental structures are unavailable for the target.

- Data Merging: Combine this target-specific affinity data with structural data from PDBbind for the same target to create a rich, multi-faceted dataset.
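The unit conversion and duplicate handling above can be sketched in plain Python. Collapsing repeated measurements of the same compound to their median is one reasonable choice rather than something the protocol prescribes, and in practice Ki, Kd, and IC50 values should be aggregated separately. The function names and the record layout are hypothetical.

```python
import math
from collections import defaultdict
from statistics import median

def nm_to_p(value_nm):
    """Convert an affinity in nM to its negative log10 molar value (e.g., pKi)."""
    if value_nm <= 0:
        raise ValueError("affinity must be positive")
    return 9.0 - math.log10(value_nm)  # -log10(value_nm * 1e-9)

def aggregate_measurements(records):
    """Collapse duplicates: records are (canonical_smiles, value_nm) pairs
    for a single measurement type; returns {smiles: median p-value}."""
    grouped = defaultdict(list)
    for smiles, value_nm in records:
        grouped[smiles].append(nm_to_p(value_nm))
    return {smiles: median(values) for smiles, values in grouped.items()}
```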

Protocol: Leveraging ChEMBL for Ligand-Based and Off-Target Prediction

Objective: To build a dataset for ligand-based interaction prediction or multi-target activity modeling.

Materials & Workflow:

- Activity Data Retrieval: Use the ChEMBL web interface or API (https://www.ebi.ac.uk/chembl) to download bioactivity data for a target family (e.g., Kinases, GPCRs). Filter for 'IC50', 'Ki', 'Kd' with defined standard relations (e.g., '=', '<').

- Data Cleaning and Thresholding:

- Standardize units to nM and calculate pChEMBL values (-log10(concentration in M)).

- Apply an activity threshold (e.g., pChEMBL > 6.0 for actives, < 5.0 for inactives) to create a binary classification dataset.

- Descriptor/Fingerprint Generation: For each compound, compute molecular descriptors (e.g., molecular weight, LogP) and fingerprints (e.g., ECFP4, MACCS keys) using RDKit or CDK.

- Assay Awareness: Retain ChEMBL assay ID metadata to account for experimental variability when creating multi-assay datasets.
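The thresholding step can be sketched as below, using the example cutoffs from the protocol (pChEMBL > 6.0 active, < 5.0 inactive) and discarding the ambiguous band in between. The assay ID is carried along so that later analysis can group by assay. The tuple layout and function names are illustrative assumptions and do not mirror the ChEMBL API's field names.

```python
def label_activity(pchembl, active_min=6.0, inactive_max=5.0):
    """Return 1 (active), 0 (inactive), or None for the ambiguous middle band."""
    if pchembl > active_min:
        return 1
    if pchembl < inactive_max:
        return 0
    return None

def build_classification_set(rows):
    """rows: iterable of (compound_id, assay_id, pchembl) tuples.

    Returns a list of dicts that keep the assay ID next to each label.
    """
    dataset = []
    for compound_id, assay_id, pchembl in rows:
        label = label_activity(pchembl)
        if label is not None:  # drop compounds between the two thresholds
            dataset.append({"compound_id": compound_id,
                            "assay_id": assay_id,
                            "label": label})
    return dataset
```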

Visualization: Multi-Source Data Integration Strategy

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Libraries for Data Curation and Model Training

| Tool / Resource | Primary Function | Relevance to Data Ecosystem |
|---|---|---|
| RDKit | Open-source cheminformatics toolkit. | Ligand standardization, SMILES parsing, 2D/3D descriptor calculation, fingerprint generation from ChEMBL/BindingDB data. |
| PDBFixer / BIOVIA DS | Protein structure preparation. | Adding missing atoms, assigning protonation states for PDBbind structures before feature extraction. |
| Open Babel | Chemical file format conversion. | Interconversion between PDB, MOL2, SDF formats for ligands extracted from databases. |
| CD-HIT | Sequence clustering tool. | Creating non-redundant training/validation splits from PDBbind based on protein sequence identity. |
| DOCK 6 / AutoDock Vina | Molecular docking software. | Generating putative binding poses for BindingDB/ChEMBL ligands when experimental structures are absent. |
| PyTorch / TensorFlow | Deep learning frameworks. | Building and training neural networks (Graph Neural Networks, CNNs) on the integrated datasets. |
| Molecular Operating Environment (MOE) | Commercial modeling suite. | Integrated environment for structure preparation, binding site analysis, and descriptor calculation across all data sources. |

Within the broader thesis on deep learning for protein-ligand interaction (PLI) prediction, this document delineates the critical evolution from classical machine learning (ML) to deep neural networks (DNNs). This shift is not merely algorithmic but represents a fundamental transition in feature representation, from expert-curated descriptors to learned hierarchical abstractions, enabling superior prediction of binding affinities, poses, and virtual screening outcomes in drug discovery.

Quantitative Comparison: Classical ML vs. Deep Learning for PLI

Table 1: Performance Benchmark of Representative Methods on Common PLI Datasets (e.g., PDBbind, CASF)

| Method Category | Example Model | Key Features/Descriptors | Typical RMSE (pKd/pKi) | Typical Classification AUC | Computational Cost (Relative) | Feature Engineering Requirement |
|---|---|---|---|---|---|---|
| Classical ML | Random Forest (RF) | SIFt, FP2, Ligand/Protein Descriptors | ~1.4 - 1.8 | 0.75 - 0.85 | Low | High (Critical) |
| Classical ML | Support Vector Machine (SVM) | 2D/3D Molecular Fingerprints, MIFs | ~1.5 - 2.0 | 0.70 - 0.82 | Medium | High |
| Deep Learning | 3D Convolutional Neural Network (e.g., 3D-CNN) | Voxelized 3D Protein-Ligand Complex | ~1.2 - 1.5 | 0.82 - 0.90 | High | Low (Grid Generation) |
| Deep Learning | Graph Neural Network (e.g., GNN, GAT) | Atomic-level Graph (Nodes: Atoms, Edges: Bonds/Distances) | ~1.0 - 1.4 | 0.86 - 0.92 | Medium-High | Low (Graph Construction) |
| Deep Learning | SE(3)-Equivariant Network (e.g., EquiBind) | 3D Point Clouds (Equivariant to Rotation/Translation) | N/A (Pose Prediction) | N/A | High | Very Low |

Table 2: Data Requirements and Interpretability Trade-off

| Aspect | Classical ML (e.g., RF, SVM) | Deep Neural Networks (e.g., GNN, 3D-CNN) |
|---|---|---|
| Training Dataset Size | Often effective with 10^2 - 10^3 complexes | Generally requires 10^3 - 10^4+ complexes for robustness |
| Descriptor Relevance | Directly interpretable (e.g., molecular weight, pharmacophore) | Learned features are abstract; requires post-hoc interpretation (e.g., saliency maps) |
| Dependency on Structural Resolution | High (requires accurate complex structures for descriptor calc.) | Can be robust to noise; some models (GNNs) can handle partial structural data. |
| Ability to Model Long-Range Interactions | Limited by descriptor design | Inherently captured through multiple network layers. |

Detailed Experimental Protocols

Protocol 3.1: Classical ML Pipeline for PLI Affinity Prediction (Using RF/SVM)

Objective: To predict binding affinity (pKd/pKi) using engineered features.

Materials: PDBbind dataset (v2020 refined set), RDKit, scikit-learn, computing cluster/node.

Procedure:

- Data Curation: Download and pre-process the PDBbind v2020 refined set. Extract protein-ligand complexes. Remove complexes with covalently bound ligands or peptide ligands.

- Feature Engineering: a. Ligand Descriptors: Using RDKit, calculate 200+ 1D/2D descriptors (e.g., LogP, TPSA, count of rotatable bonds). b. Protein Descriptors: Use Protr or custom scripts to generate amino acid composition and pseudo-amino acid composition (PseAAC) from the binding-site residue sequence. c. Complex Descriptors: Use NumPy to compute interaction fingerprints (e.g., PLIF) by analyzing contacts (H-bonds, hydrophobic, ionic) within 4.5 Å.

- Feature Integration & Selection: Concatenate all feature vectors. Apply variance thresholding and SelectKBest based on mutual information with the target affinity.

- Model Training: Split data 80/10/10 (train/validation/test). For RF, perform grid search over `n_estimators` (100, 500) and `max_depth` (10, 30, None). For SVM, optimize `C` (0.1, 1, 10) and `gamma`.

- Validation: Evaluate using Root Mean Square Error (RMSE) and Pearson's R on the test set.
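The two validation metrics named in this protocol can be sketched in plain Python as follows. This is a minimal illustration, not the pipeline's actual evaluation code; the function names `rmse` and `pearson_r` and the four pK values are hypothetical.

```python
import math

def rmse(y_true, y_pred):
    """Root mean square error between two equal-length sequences."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def pearson_r(y_true, y_pred):
    """Pearson correlation coefficient (assumes neither input is constant)."""
    n = len(y_true)
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)

# Hypothetical pK values for four test complexes (illustrative only).
experimental = [6.2, 7.8, 5.1, 9.0]
predicted = [6.0, 7.1, 5.9, 8.4]
print(f"RMSE = {rmse(experimental, predicted):.2f}, R = {pearson_r(experimental, predicted):.2f}")
```

RMSE is reported in pK units, so it can be compared directly with the ranges in Table 1, whereas Pearson's R measures only linear agreement and is insensitive to a constant offset in the predictions.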

Protocol 3.2: Deep Learning Pipeline for PLI using a Graph Neural Network (GNN)

Objective: To predict binding affinity using an atomic graph representation.

Materials: PDBbind dataset, PyTorch, PyTorch Geometric (PyG), RDKit, GPU (e.g., NVIDIA V100/A100).

Procedure:

- Graph Representation Generation: a. For each complex, define atoms of the ligand and protein residues within 5-10Å of the ligand as nodes. b. Node features: Atom type, hybridization, degree, formal charge, aromaticity (for ligand); residue type, backbone/sidechain indicator (for protein). Use one-hot encoding. c. Edges: Connect nodes within a cutoff distance (e.g., 4.5Å). Edge features: distance (binned), bond type (if covalent).

- Model Architecture (GNN): a. Implement a network with 4-5 Graph Convolutional Network (GCN) or Graph Attention (GAT) layers. Each layer updates node embeddings by aggregating messages from neighbors. b. Follow with a global pooling layer (e.g., global mean or attention pooling) to obtain a single graph-level embedding. c. Add 3 fully connected (dense) layers with ReLU activation and dropout (p=0.2) to regress the binding affinity value.

- Training: Use Mean Squared Error (MSE) loss and the AdamW optimizer. Employ a learning rate scheduler (ReduceLROnPlateau). Train for 300-500 epochs with early stopping. Use a 70/15/15 split, ensuring that similar proteins do not appear in more than one set (e.g., cluster proteins by sequence similarity and assign each cluster to a single split).

- Evaluation: Report RMSE, R², and MAE on the held-out test set. Generate visualizations of atomic contributions using a method like Grad-CAM for GNNs.
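The similarity-aware split in the training step can be sketched as below. The sketch assumes cluster labels have already been computed by an external sequence-clustering tool; the function name `cluster_split`, the greedy assignment rule, and the example identifiers are illustrative assumptions rather than a prescribed procedure.

```python
import random

def cluster_split(cluster_of, fractions=(0.70, 0.15, 0.15), seed=0):
    """Assign whole clusters to train/val/test so no cluster spans two sets.

    cluster_of: dict mapping complex ID -> cluster ID (from sequence clustering).
    """
    clusters = {}
    for complex_id, cluster_id in cluster_of.items():
        clusters.setdefault(cluster_id, []).append(complex_id)

    cluster_ids = sorted(clusters)
    random.Random(seed).shuffle(cluster_ids)

    total = len(cluster_of)
    targets = [f * total for f in fractions]
    splits = ([], [], [])
    for cid in cluster_ids:
        # Greedy rule: place the cluster in the split furthest below its target size.
        deficits = [targets[i] - len(splits[i]) for i in range(3)]
        idx = deficits.index(max(deficits))
        splits[idx].extend(clusters[cid])
    return splits

# Hypothetical complex and cluster IDs, for illustration only.
cluster_of = {f"cx{i}": f"cl{i % 7}" for i in range(40)}
train, val, test = cluster_split(cluster_of)
print(len(train), len(val), len(test))
```

Because clusters are assigned as a unit, the realized split sizes only approximate 70/15/15. That trade-off is usually acceptable in exchange for keeping homologous proteins out of the test set.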

Visualization: Key Concepts and Workflows

Title: Classical ML Pipeline for PLI Prediction

Title: Deep Learning Pipeline for PLI Prediction

Title: The Core Paradigm Shift in PLI Modeling

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Resources for Modern PLI Deep Learning Research

| Item Name/Category | Function/Description | Example/Provider |

|---|---|---|

| Curated Benchmark Datasets | Provide standardized, high-quality data for training and fair comparison of models. | PDBbind, BindingDB, DUD-E, DEKOIS 2.0 |

| Deep Learning Frameworks | Libraries providing efficient implementations of neural network layers and training loops. | PyTorch (with PyTorch Geometric for GNNs), TensorFlow (with DeepChem), JAX. |

| Molecular Processing Suites | Toolkits for reading, writing, and manipulating molecular structures and calculating baseline features. | RDKit, Open Babel, MDAnalysis (for MD trajectories). |

| Structure Preparation Software | Prepare protein-ligand complexes for simulation or analysis (add H, optimize H-bonds, minimize). | Schrödinger Maestro, MOE, OpenEye Toolkits, PDBFixer. |

| High-Performance Computing (HPC) | GPU clusters for training large DNNs on thousands of complexes in a reasonable time. | NVIDIA DGX Systems, cloud instances (AWS EC2 P3/P4, GCP A2/A3). |

| Model Interpretation Tools | Post-hoc analysis to understand which structural features drove a DNN's prediction. | Captum (for PyTorch), DeepLIFT, integrated gradients, custom saliency maps. |

| Visualization Software | Critical for inspecting 3D complexes and interpreting model attention/contributions. | PyMOL, ChimeraX, NGL Viewer (for web), Matplotlib/Seaborn (for metrics). |

Architectures in Action: A Guide to Deep Learning Models for Binding Prediction

The accurate prediction of protein-ligand interactions is a central challenge in structural biology and computational drug discovery. Within a broader thesis on deep learning for this task, Graph Neural Networks (GNNs) provide a powerful framework by directly operating on the inherent graph structure of molecules. Unlike grid-based representations (e.g., voxels), graphs naturally encode atoms as nodes and bonds as edges, preserving topological and relational information critical for understanding binding affinity and molecular properties.

Foundational Concepts: Molecular Graph Representation

A molecule is represented as an undirected graph ( G = (V, E) ), where:

- V (Nodes): Atoms, characterized by features such as atom type, hybridization, valence, and partial charge.

- E (Edges): Chemical bonds, with features like bond type (single, double, triple), conjugation, and stereo configuration.

Application Notes & Key Protocols

Protocol: Constructing a Molecular Graph from a SMILES String

Objective: Convert a Simplified Molecular Input Line Entry System (SMILES) string into a featurized graph suitable for GNN input.

Materials & Software: RDKit (Python cheminformatics toolkit), PyTorch, PyTorch Geometric (PyG) or Deep Graph Library (DGL).

Procedure:

- SMILES Parsing: Use `rdkit.Chem.MolFromSmiles()` to parse the SMILES string into an RDKit molecule object.

- Node Feature Extraction: For each atom in the molecule, compute a feature vector. A common minimal feature set includes:

- Atom type (one-hot encoded for common elements: C, N, O, F, S, P, Cl, Br, I, etc.)

- Degree (number of bonded neighbors)

- Edge Index & Feature Extraction: Identify covalent bonds. Create an edge index (a 2 x num_edges tensor for source and target nodes). For each bond, compute features:

- Bond type (single, double, triple, aromatic)

- Conjugation

- Presence in a ring

- Graph Assembly: Package node features, edge indices, and edge features into a graph data object (e.g., `torch_geometric.data.Data`).
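The featurization and assembly steps can be illustrated without any external dependency. In the sketch below the atom and bond lists are written by hand; in a real pipeline they would come from the parsed RDKit molecule. The helper names `one_hot` and `build_graph` and the plain-dict output are assumptions for illustration, standing in for a proper graph data object.

```python
ELEMENTS = ["C", "N", "O", "F", "S", "P", "Cl", "Br", "I"]

def one_hot(value, choices):
    """One-hot encode value; the last slot is an 'other' bucket."""
    vec = [0] * (len(choices) + 1)
    vec[choices.index(value) if value in choices else len(choices)] = 1
    return vec

def build_graph(atoms, bonds):
    """atoms: list of element symbols; bonds: list of (i, j, bond_type)."""
    degree = [0] * len(atoms)
    for i, j, _ in bonds:
        degree[i] += 1
        degree[j] += 1
    # Node features: one-hot element followed by heavy-atom degree.
    x = [one_hot(sym, ELEMENTS) + [degree[k]] for k, sym in enumerate(atoms)]
    # Each undirected bond becomes two directed edges (source row, target row).
    src, dst, edge_attr = [], [], []
    for i, j, btype in bonds:
        src += [i, j]
        dst += [j, i]
        edge_attr += [btype, btype]
    return {"x": x, "edge_index": [src, dst], "edge_attr": edge_attr}

# Ethanol (SMILES "CCO"), heavy atoms only.
graph = build_graph(["C", "C", "O"], [(0, 1, "single"), (1, 2, "single")])
print(graph["edge_index"])
```

Storing each bond in both directions is what makes the 2 x num_edges edge index represent an undirected graph during message passing.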

Protocol: A Standard Message-Passing GNN for Molecular Property Prediction

Objective: Implement a GNN to learn a representation vector (embedding) for an input molecular graph, used for regression (e.g., predicting binding affinity pIC50) or classification.

Architecture: Message Passing Neural Network (MPNN) framework.

Procedure:

- Initialization: Set atom features as initial node embeddings ( h_v^{(0)} ).

- Message Passing (K layers): For each graph convolution layer ( k = 1, \dots, K ): a. Message Function: For each edge (u,v), compute a message: ( m_{uv}^{(k)} = M^{(k)}(h_u^{(k-1)}, h_v^{(k-1)}, e_{uv}) ), where ( e_{uv} ) are edge features. b. Aggregation: For each node ( v ), aggregate messages from its neighborhood ( N(v) ): ( a_v^{(k)} = \text{AGG}^{(k)}(\{m_{uv}^{(k)} : u \in N(v)\}) ). Common AGG functions include sum, mean, or max. c. Update Function: Combine the node's previous embedding and aggregated message to produce a new embedding: ( h_v^{(k)} = U^{(k)}(h_v^{(k-1)}, a_v^{(k)}) ).

- Readout (Global Pooling): After K layers, generate a graph-level representation from all node embeddings: ( h_G = R(\{h_v^{(K)} \mid v \in G\}) ). Common readouts include global mean/max/sum pooling or more advanced Set2Set layers.

- Prediction Head: Pass the graph embedding ( h_G ) through multi-layer perceptrons (MLPs) to produce the final prediction (e.g., a scalar for energy, a probability for activity).
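A single message-passing layer followed by a sum readout can be traced by hand with a toy example. In the sketch below the message and update functions are fixed arithmetic stand-ins (in a trained MPNN they would be learned networks), and all names and numbers are illustrative assumptions.

```python
def message_passing_step(h, edges, edge_feat):
    """One MPNN layer with sum aggregation.

    h: list of node embeddings (lists of floats)
    edges: list of directed (u, v) pairs, meaning u sends a message to v
    edge_feat: dict mapping (u, v) -> float edge feature
    """
    dim = len(h[0])
    agg = [[0.0] * dim for _ in h]
    for u, v in edges:
        # Toy message function M: sender embedding scaled by the edge feature.
        msg = [edge_feat[(u, v)] * x for x in h[u]]
        agg[v] = [a + m for a, m in zip(agg[v], msg)]
    # Toy update function U: average previous embedding and aggregate, then ReLU.
    return [[max(0.0, 0.5 * (hv + av)) for hv, av in zip(h[v], agg[v])]
            for v in range(len(h))]

def readout(h):
    """Global sum pooling over nodes to obtain a graph-level vector."""
    return [sum(col) for col in zip(*h)]

# Three-node path graph 0 - 1 - 2 with both edge directions listed.
h0 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
edges = [(0, 1), (1, 0), (1, 2), (2, 1)]
edge_feat = {e: 1.0 for e in edges}
h1 = message_passing_step(h0, edges, edge_feat)
h_G = readout(h1)
print(h1, h_G)
```

After one layer each node has seen only its direct neighbors. Stacking K layers extends this receptive field to K bonds, which is why K is typically chosen with the molecule's diameter in mind.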

Protocol: Training a GNN for Binding Affinity Prediction

Objective: Train the message-passing model described in the preceding protocol on a dataset like PDBbind to predict experimental binding constants.

Dataset: PDBbind (refined set, ~5,000 protein-ligand complexes with Kd/Ki values).

Workflow:

- Data Preparation: For each complex in the dataset:

- Extract the ligand SMILES.

- Convert the ligand to a featurized graph (see the SMILES-to-graph protocol above).

- Use the negative logarithmic transformation of the binding constant as the target label: ( pK = -\log_{10} K ), where ( K ) is ( K_d ) or ( K_i ) expressed in mol/L.

- Training Loop: a. Split data into training, validation, and test sets (e.g., 80/10/10%). b. Use Mean Squared Error (MSE) loss between predicted and true pK values. c. Optimize using Adam optimizer. d. Implement early stopping based on validation loss.
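The label transformation in the data preparation step depends on expressing the binding constant in mol/L before taking the logarithm. A minimal sketch, assuming measurements arrive as a value plus a unit string (the `UNIT_TO_MOLAR` table and the `to_pk` name are hypothetical):

```python
import math

# Conversion factors to mol/L for common concentration units.
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12}

def to_pk(value, unit):
    """Convert a Kd or Ki measurement to pK = -log10(K in mol/L)."""
    if value <= 0:
        raise ValueError("binding constant must be positive")
    return -math.log10(value * UNIT_TO_MOLAR[unit])

# A 10 nM binder and a 1 uM binder differ by two pK units.
print(round(to_pk(10, "nM"), 3), round(to_pk(1, "uM"), 3))
```

Working in pK compresses a range spanning many orders of magnitude into roughly 2 to 12, which keeps the MSE loss from being dominated by the weakest binders.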

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Category | Function in GNN-based Molecular Modeling |

|---|---|

| RDKit | Open-source cheminformatics toolkit for parsing SMILES, generating 2D/3D molecular structures, and calculating molecular descriptors and fingerprints. Essential for graph construction and feature generation. |

| PyTorch Geometric (PyG) | A library built upon PyTorch specifically for deep learning on graphs. Provides efficient data loaders, common GNN layer implementations, and standard benchmark datasets (e.g., MoleculeNet). |

| Deep Graph Library (DGL) | An alternative framework for GNN implementation that supports multiple backends (PyTorch, TensorFlow). Known for its efficiency on large graphs. |

| MoleculeNet | A benchmark collection of molecular datasets for tasks like solubility (ESOL), toxicity (Tox21), and binding affinity (PDBbind). Used for standardized model evaluation. |

| Open Graph Benchmark (OGB) | Provides large-scale, realistic benchmark datasets and tasks for graph ML, including the ogbg-mol* series for molecular property prediction. |

| Schrödinger Suite / OpenEye Toolkit | Commercial software offering high-performance molecular modeling, docking, and force field calculations. Often used to generate high-quality 3D conformations or labels for supervised learning. |

Table 1: Performance of Representative GNN Models on MoleculeNet Benchmark Datasets (Classification AUC-ROC / Regression RMSE)

| Model Architecture | ClinTox (AUC) | Tox21 (AUC) | ESOL (RMSE) | FreeSolv (RMSE) | PDBbind (RMSE in pK) |

|---|---|---|---|---|---|

| Graph Convolutional Network (GCN) | 0.832 | 0.769 | 1.19 | 2.41 | 1.50 |

| Graph Attention Network (GAT) | 0.851 | 0.785 | 1.08 | 2.23 | 1.45 |

| AttentiveFP | 0.879 | 0.826 | 0.89 | 1.87 | 1.38 |

| DeeperGCN | 0.868 | 0.811 | 0.95 | 1.98 | 1.41 |

| State-of-the-Art (2023-24) | ~0.90+ | ~0.85+ | ~0.80 | ~1.60 | ~1.20 |

Note: Values are illustrative approximations from literature. SOTA performance is rapidly evolving.

Table 2: Common Atom and Bond Feature Dimensions for Molecular Graphs

| Feature Type | Description | Dimension (Example) |

|---|---|---|

| Atom Features | Atom identity (one-hot), degree, formal charge, hybridization, aromaticity, # of H, chirality, etc. | ~30-100 |

| Bond Features | Bond type, conjugation, in a ring, stereo configuration. | ~10-15 |

Visualization of Workflows and Architectures

Title: GNN Model Training Workflow for Molecular Property Prediction

Title: A Single Message-Passing Step in a GNN Layer

Within the broader thesis on deep learning for protein-ligand interaction prediction, 3D-CNNs represent a foundational architecture for directly processing three-dimensional structural and physicochemical data. Unlike models that rely on simplified fingerprints or 2D projections, 3D-CNNs operate on volumetric grids, preserving the spatial and electronic information critical for understanding molecular recognition. This protocol focuses on the application of 3D-CNNs to predict binding affinities and poses by learning from voxelized representations of electron density maps and multi-channel property grids derived from protein-ligand complexes.

Data Preparation and Grid Generation Protocol

Source Data and Initial Processing

Data for training 3D-CNNs is typically sourced from structural databases such as the Protein Data Bank (PDB). The relevant complexes must be pre-processed.

Protocol 2.1.1: Complex Preparation

- Input: PDB ID (e.g., 1A2C) or structure file.

- Processing Steps:

- Remove water molecules and crystallographic additives using `biopython` or `Open Babel`.

- Add missing hydrogen atoms and assign protonation states at pH 7.4 using `PDB2PQR` or `MOE`.

- Perform energy minimization (500 steps of steepest descent) with the AMBER force field to relieve steric clashes.

- Output: A cleaned PDB file for the protein-ligand complex.

Volumetric Grid Construction

The core input for a 3D-CNN is a 3D grid centered on the binding site. Each grid point (voxel) holds one or more channels of information.

Protocol 2.2.1: Multi-Channel Grid Generation

- Define Grid Bounds: Create a cubic box extending 10 Å in each direction from the centroid of the crystallographic ligand.

- Set Resolution: Define voxel size (spacing). Common values are 0.5 Å or 1.0 Å, resulting in grid dimensions (e.g., 20Å/0.5Å = 40 voxels per edge).

- Compute Grid Channels: For each atom within the box, map its properties to the grid using a Gaussian-smearing function. Standard channels include:

- Channel 1 (Electron Density): Approximated using the atom's partial charge and van der Waals radius.

- Channel 2 (Hydrophobicity): Based on the Kyte-Doolittle hydropathy index of the residue each protein atom belongs to.

- Channel 3 (Hydrogen Bond Donor): Binary indicator for potential donor atoms (e.g., O-H, N-H).

- Channel 4 (Hydrogen Bond Acceptor): Binary indicator for potential acceptor atoms (e.g., carbonyl O).

- Channel 5 (Atomic Occupancy): Simple count of atom proximity.

- Tool: Execute using GNINA's `cg2grid` function or a custom Python script utilizing `NumPy`.
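The Gaussian-smearing step can be sketched for a single channel in plain Python. This is an illustrative stand-in for a custom script, not a reproduction of any tool's implementation; the function name `voxelize`, the isotropic Gaussian with width `sigma`, and the per-atom `weight` are assumptions. A multi-channel grid would repeat the same loop with a different weight per channel (for example, a donor flag or a hydropathy value).

```python
import math

def voxelize(atoms, center, box_size=20.0, spacing=1.0, sigma=1.0):
    """Smear atoms onto a cubic grid with an isotropic Gaussian (one channel).

    atoms: list of ((x, y, z), weight) tuples
    Returns (n, grid), where grid[i][j][k] is the value at a voxel center.
    """
    n = int(round(box_size / spacing))
    origin = [c - box_size / 2.0 for c in center]
    grid = [[[0.0] * n for _ in range(n)] for _ in range(n)]
    for i in range(n):
        x = origin[0] + (i + 0.5) * spacing
        for j in range(n):
            y = origin[1] + (j + 0.5) * spacing
            for k in range(n):
                z = origin[2] + (k + 0.5) * spacing
                for (ax, ay, az), w in atoms:
                    d2 = (x - ax) ** 2 + (y - ay) ** 2 + (z - az) ** 2
                    grid[i][j][k] += w * math.exp(-d2 / (2.0 * sigma ** 2))
    return n, grid

# One atom placed exactly on a voxel center of a 20 Angstrom box at 1 Angstrom spacing.
n, grid = voxelize([((0.5, 0.5, 0.5), 1.0)], center=(0.0, 0.0, 0.0))
print(n, grid[10][10][10])
```

The nested loops make the cost proportional to voxels times atoms, so production code would vectorize this and truncate each Gaussian at a few multiples of `sigma`.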

Data Summary: Typical Grid Parameters

| Parameter | Value 1 (High-Res) | Value 2 (Standard) | Notes |

|---|---|---|---|

| Box Size (Å) | 20x20x20 | 24x24x24 | Centered on ligand |

| Voxel Spacing (Å) | 0.5 | 1.0 | Determines grid dimensions |

| Grid Dimensions (voxels) | 40³ = 64,000 | 24³ = 13,824 | Directly impacts GPU memory |

| Common # Channels | 5-19 | 5-8 | Depends on feature set |

3D-CNN Model Architecture & Training Protocol

A typical 3D-CNN for affinity prediction follows an encoder-type architecture.

Protocol 3.1: Model Implementation (PyTorch)

- Input Layer: Accepts a 5D tensor of shape `(batch_size, channels, depth, height, width)`.

- Convolutional Blocks:

- Use 3-4 sequential blocks of 3D Convolution, 3D Batch Normalization, and ReLU Activation.

- Example block: `Conv3d(in_channels=8, out_channels=16, kernel_size=3, stride=1, padding=1) -> BatchNorm3d(16) -> ReLU()`.

- Incorporate 3D MaxPooling layers (`kernel_size=2, stride=2`) after every 1-2 blocks.

- Global Pooling & Fully Connected Layers:

- Reduce feature maps to a fixed size using 3D Adaptive Average Pooling, then flatten them.

- Pass through 2-3 fully connected (dense) layers with Dropout (p=0.3-0.5) for regularization.

- Final layer outputs a single scalar for regression (binding affinity: pKd, pKi) or a probability for classification (binder/non-binder).

- Compilation:

- Loss Function: Mean Squared Error (MSE) for regression.

- Optimizer: Adam with initial learning rate of 1e-4, decayed by 0.5 every 50 epochs.

- Metric: Root Mean Square Error (RMSE) and Pearson's R.
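It can help to trace how the grid shrinks through the convolutional blocks before allocating GPU memory. The sketch below applies the standard output-size formula for convolution and pooling layers (without dilation) to the block layout described above. Doubling the channel count in every block and pooling after every block are assumptions made for illustration.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output length along one axis for a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1

def trace_shapes(size, channels, n_blocks=3):
    """Follow (channels, D, H, W) through Conv3d(k=3, p=1) + MaxPool3d(2, 2) blocks."""
    shapes = [(channels, size, size, size)]
    for _ in range(n_blocks):
        channels *= 2  # assumed channel doubling per block
        size = conv_out(size, kernel=3, stride=1, padding=1)  # size-preserving convolution
        size = conv_out(size, kernel=2, stride=2)             # 2x downsampling
        shapes.append((channels, size, size, size))
    return shapes

# An 8-channel, 40-voxel grid (20 Angstrom box at 0.5 Angstrom spacing).
shapes = trace_shapes(size=40, channels=8)
for s in shapes:
    print(s)
```

With padding of 1 and a kernel of 3 the convolution preserves spatial size, so only the pooling layers reduce resolution. Three pooling steps take a 40-voxel edge down to 5.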

Experimental Training Workflow

Diagram Title: 3D-CNN Training Workflow for Affinity Prediction

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Protocol | Example Tool/Software |

|---|---|---|

| Structural Database | Source of protein-ligand complex coordinates. | RCSB Protein Data Bank (PDB) |

| Structure Preparer | Adds hydrogens, corrects protonation, minimizes energy. | UCSF Chimera, MOE, Schrödinger Maestro |

| 3D Grid Generator | Voxelizes molecular structures into multi-channel grids. | GNINA, DeepChem, custom Python (NumPy) |

| 3D-CNN Framework | Provides libraries for building and training volumetric networks. | PyTorch (torch.nn), TensorFlow (Keras) |

| GPU Computing Resource | Accelerates training of computationally intensive 3D convolutions. | NVIDIA V100/A100 GPU, Cloud (AWS, GCP) |

| Affinity Benchmark Set | Curated data for training and evaluation. | PDBbind, CASF-2016, DUD-E |

| Hyperparameter Optimizer | Automates the search for optimal model parameters. | Optuna, Ray Tune, Weights & Biases Sweeps |

Key Experimental Results & Performance

Recent studies benchmark 3D-CNNs against traditional scoring functions and other deep learning models.

Table: Performance Comparison on PDBbind v2020 Core Set

| Model Architecture | Input Type | Test RMSE (pK) | Pearson's R | Reference (Year) |

|---|---|---|---|---|

| 3D-CNN (Basic) | 5-Channel Grid (1Å) | 1.42 | 0.78 | Ragoza et al. (2017) |

| 3D-CNN (DenseNet) | 14-Channel Grid (0.5Å) | 1.23 | 0.83 | Stepniewska-Dziubinska et al. (2020) |

| Pafnucy | 19-Channel Grid (1Å) | 1.19 | 0.85 | Stepniewska-Dziubinska et al. (2018) |

| Traditional SF | Heuristic/Force Field | 1.50 - 1.90 | 0.60 - 0.72 | CASF-2016 Benchmark |

Diagram Title: 3D-CNN Architecture for Affinity Regression

Within the field of deep learning for protein-ligand interaction prediction, a central challenge is modeling complex, long-range dependencies. Traditional convolutional and recurrent neural networks struggle with these non-local interactions, which are critical for understanding protein folding, allostery, and binding site formation. Transformer and attention-based models have emerged as a transformative solution, fundamentally shifting the paradigm by enabling direct, pairwise interactions between all elements in a sequence or structure, regardless of distance.

Core Technical Framework

The self-attention mechanism is the foundational operation. For an input sequence of embeddings, it computes a weighted sum of values for each position, where the weights are derived from the compatibility between queries and keys. This allows any residue or atom in a 3D structure to influence any other. In protein-ligand prediction, this framework is adapted to heterogeneous data types:

- Sequence-Based Models: Operate on amino acid sequences, capturing long-range patterns that define tertiary structure.

- Structure-Based Models: Use 3D coordinates (e.g., as graphs or point clouds), where attention weights can be modulated by spatial distance.

- Hybrid Models: Integrate sequential, structural, and evolutionary (MSA) information, as epitomized by AlphaFold2.
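The self-attention operation described above can be written out in a few lines. The sketch below is a minimal single-head version in plain Python that omits the learned query, key, and value projections (it uses the raw embeddings for all three, which is a simplifying assumption); the function names are illustrative.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def self_attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        w = softmax([dot(q, k) / math.sqrt(d_k) for k in keys])
        weights.append(w)
        outputs.append([sum(wi * v[c] for wi, v in zip(w, values))
                        for c in range(len(values[0]))])
    return outputs, weights

# Three toy token embeddings standing in for residues or atoms.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, attn = self_attention(x, x, x)
print(attn[0])
```

Every position attends to every other position in one step, regardless of how far apart they are in the sequence. That is the property that lets these models capture long-range dependencies, at a cost that grows quadratically with input length.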

Application Notes & Protocols

The following notes and protocols detail the implementation and evaluation of transformer architectures for predicting binding affinities (pIC50/Kd) and binding poses.

Application Note 1: Sequence-Based Binding Affinity Prediction

This protocol uses a protein sequence encoder and a ligand SMILES encoder to predict binding affinity, capturing contextual patterns without explicit 3D data.

Experimental Protocol:

- Data Curation: Curate protein-ligand pairs with experimentally measured pIC50 values from sources like PDBbind or BindingDB. Split data into training, validation, and test sets (70/15/15%) at the protein family level to prevent homology bias.

- Input Representation:

- Protein: Use amino acid sequence converted to integer indices. Pad/truncate to a fixed length (e.g., 1024). Embedding dimension (d_model) = 256.

- Ligand: Convert SMILES string into a token sequence (e.g., using Byte Pair Encoding). Max length = 128. Embedding dimension = 256.

- Model Architecture:

- Two independent transformer encoder stacks (N=4 layers, attention heads=8) process protein and ligand tokens.

- Apply global mean pooling to each encoder's output to obtain fixed-size protein and ligand representations.

- Concatenate these representations and pass through a 3-layer Multilayer Perceptron (MLP) regressor (hidden layers: 512, 128; output: 1 neuron for pIC50).

- Training: Use Mean Squared Error (MSE) loss, AdamW optimizer (learning rate=1e-4), batch size=32, for 100 epochs with early stopping.
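The protein input representation in this protocol (integer indices with padding or truncation to a fixed length) can be sketched as follows. The vocabulary layout, with index 0 reserved for padding and 1 for unknown residues, is an assumption for illustration, as are the names `AA_TO_INDEX` and `encode_sequence`.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
PAD, UNK = 0, 1  # assumed reserved indices for padding and unknown residues
AA_TO_INDEX = {aa: i + 2 for i, aa in enumerate(AMINO_ACIDS)}

def encode_sequence(seq, max_len=1024):
    """Map residues to integer indices, then truncate or right-pad to max_len."""
    ids = [AA_TO_INDEX.get(aa, UNK) for aa in seq.upper()[:max_len]]
    return ids + [PAD] * (max_len - len(ids))

# A short hypothetical peptide, padded to 16 positions for readability.
tokens = encode_sequence("MKTAYIAKQR", max_len=16)
print(tokens)
```

Padded positions should be masked out in the attention layers and excluded from the mean pooling step. Otherwise short proteins would have their representations diluted by padding tokens.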

Quantitative Performance Summary (Benchmark on PDBbind v2020 Core Set):

| Model Architecture | RMSE (pIC50) | MAE (pIC50) | Pearson's R | Spearman's ρ |

|---|---|---|---|---|

| Transformer (Seq-Based) | 1.15 | 0.91 | 0.78 | 0.76 |

| CNN-BiLSTM (Baseline) | 1.42 | 1.12 | 0.68 | 0.65 |

| Random Forest (on fingerprints) | 1.61 | 1.28 | 0.55 | 0.53 |

Protocol Workflow Diagram:

Title: Sequence-based affinity prediction workflow.

Application Note 2: Structure-Based Binding Pose Scoring

This protocol uses a graph transformer to score docked protein-ligand poses by modeling the 3D interaction graph.

Experimental Protocol:

- Data & Pose Generation: Use CASF-2016 benchmark. Generate decoy poses for each ligand using molecular docking software (e.g., AutoDock Vina).

- Graph Construction: Represent each complex as a heterogeneous graph.

- Nodes: Protein residues (Cα) and ligand atoms.

- Edges: Include intramolecular edges (within protein/ligand, cutoff=4.5Å) and intermolecular edges (protein-ligand, cutoff=6.0Å). Edge features: distance, vector.

- Model Architecture: Implement a Graph Transformer network.

- Node features: atom type, residue type, charge.

- Use 6 transformer layers with multi-head attention (heads=8). Attention is calculated between connected nodes, with edge features added to the attention bias.

- A final readout layer produces a scalar score for the entire graph.

- Training & Evaluation: Train with a hinge-rank loss to distinguish native poses from decoys. Evaluate by computing the success rate of ranking the native pose top-1 among decoys.
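The training objective and the evaluation metric in the last step can be sketched as below. The sketch assumes that a higher score means a better pose and uses a margin of 1.0; both conventions, along with the function names, are illustrative assumptions rather than details given by the protocol.

```python
def hinge_rank_loss(native_score, decoy_scores, margin=1.0):
    """Mean of max(0, margin - (s_native - s_decoy)) over all decoys."""
    return sum(max(0.0, margin - (native_score - d)) for d in decoy_scores) / len(decoy_scores)

def top1_success_rate(complexes):
    """complexes: list of (native_score, decoy_scores). Fraction where the native pose ranks first."""
    hits = sum(1 for native, decoys in complexes if native > max(decoys))
    return hits / len(complexes)

# Two hypothetical complexes: the native pose wins in the first, loses in the second.
scored = [(3.0, [1.0, 2.5]), (0.2, [0.9, 0.1])]
print(hinge_rank_loss(*scored[0]), top1_success_rate(scored))
```

The loss is zero only when the native pose beats every decoy by at least the margin, so it keeps pushing on near-miss decoys even after the ranking is already correct.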

Quantitative Performance Summary (CASF-2016 Docking and Scoring Power):

| Scoring Method | Top-1 Success Rate | Pearson's R (vs. Exp. Affinity) | RMSE (pKd) |

|---|---|---|---|

| Graph Transformer | 68.2% | 0.81 | 1.32 |

| NNScore 2.0 | 52.7% | 0.63 | 1.89 |

| AutoDock Vina | 48.1% | 0.60 | 2.01 |

The Scientist's Toolkit: Key Research Reagents & Materials

| Item | Function/Description |

|---|---|

| PDBbind Database | Curated collection of protein-ligand complexes with binding affinity data for training & benchmarking. |

| CASF Benchmark Sets | Standardized datasets (e.g., CASF-2016) for fair evaluation of scoring, docking, and ranking powers. |

| RDKit | Open-source cheminformatics toolkit for SMILES processing, ligand fingerprinting, and molecular visualization. |

| Biopython | Python library for protein sequence and structure parsing (e.g., PDB files). |

| PyTorch Geometric | Library for building Graph Neural Networks (GNNs) and Graph Transformers with GPU acceleration. |

| Hugging Face Transformers | Repository providing pre-trained transformer models and easy-to-use fine-tuning frameworks. |

| AlphaFold2 (ColabFold) | For generating high-accuracy protein structure predictions when experimental structures are unavailable. |

| AutoDock Vina | Widely-used molecular docking program for generating ligand pose decoys. |

Graph Transformer Architecture Diagram:

Title: Graph transformer for pose scoring.

Transformer models have proven highly effective at capturing the long-range interactions essential for accurate protein-ligand interaction prediction. Future directions include developing more efficient attention mechanisms (e.g., linear, equivariant) for larger systems, better integration of temporal dynamics for allostery studies, and the creation of foundation models pre-trained on vast molecular corpora for transfer learning in low-data drug discovery projects.

Application Notes

The prediction of protein-ligand interactions (PLI) is a cornerstone of modern computational drug discovery. Traditional unimodal models, which rely solely on protein sequences or ligand SMILES strings, face fundamental limitations in capturing the complex physical and chemical determinants of molecular recognition. Hybrid and multimodal architectures represent a paradigm shift, integrating disparate but complementary data modalities to significantly enhance predictive accuracy and generalization. The core thesis posits that the synergistic integration of sequence (evolutionary information via PSSMs, embeddings from models like ESM-2), structure (3D coordinates, geometric graphs, surface descriptors), and chemical features (ligand fingerprints, quantum chemical properties, physicochemical descriptors) within a unified deep learning framework is essential for moving beyond correlation towards a more mechanistic understanding of interactions. This approach directly addresses the limitations of static datasets by enabling models to learn the biophysical principles governing affinity and specificity.

Current research demonstrates that multimodal models consistently outperform their unimodal counterparts on benchmarks like PDBbind and BindingDB. Key advancements include the use of geometric deep learning (e.g., graph neural networks on molecular graphs) to process 3D structure, coupled with transformer-based encoders for sequence context. A critical application note is the handling of absent or low-quality structural data; effective architectures implement parallel input streams with cross-attention mechanisms, allowing the model to weigh modalities dynamically. For instance, in a lead optimization campaign, a model can prioritize chemical feature signals when analyzing congeneric series with a single protein structure. Furthermore, integrating explicit chemical features (e.g., partial charges, hydrophobicity indices) mitigates the risk of models learning spurious statistical artifacts from raw data alone. The table below summarizes the performance gains from representative multimodal architectures.

Table 1: Performance Comparison of Representative Multimodal PLI Prediction Models

| Model Name | Modalities Integrated | Key Architectural Features | Benchmark (PDBbind Core Set) RMSE ↓ / R² ↑ |

|---|---|---|---|

| DeepDTAF | Sequence (Prot), Chemical (Lig) | CNN on protein & ligand 1D representations | 1.42 RMSE / 0.67 R² |

| Pafnucy | Structure (Prot-Lig Complex) | 3D CNN on voxelized complex | 1.27 RMSE / 0.74 R² |

| SIGN | Structure (Graph), Sequence | GNN on protein & ligand graphs, ResNet | 1.19 RMSE / 0.77 R² |

| MultiBind (SOTA) | Sequence, Structure, Chemical | Transformer + GNN fusion, cross-modality attention | 1.05 RMSE / 0.82 R² |

Experimental Protocols

Protocol 1: Data Preparation for a Three-Modal Protein-Ligand Model

Objective: To curate and preprocess aligned protein sequence, 3D structure, and ligand chemical feature data for training a hybrid model.

Materials: Protein Data Bank (PDB) files, corresponding ligand SDF/Mol2 files, UniProt IDs, cheminformatics toolkit (RDKit, Open Babel), computational structural tools (PDBFixer, Modeller).

Protein Sequence & Evolutionary Feature Extraction:

- For a given protein target, retrieve its canonical amino acid sequence from UniProt using the API (`https://www.uniprot.org/uniprot/{ID}.fasta`).

- Generate a Position-Specific Scoring Matrix (PSSM) using three iterations of PSI-BLAST against the UniRef90 database. Convert the PSSM into a normalized, per-residue feature vector of size 20.

- Alternatively, extract pre-computed protein language model embeddings (e.g., from ESM-2) using the `esm` Python library, yielding a feature vector of size 1280 per residue.
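The protocol does not specify how the PSSM is normalized. One common choice, assumed here purely for illustration, is to pass each raw log-odds score through a logistic function so that every residue is described by 20 values in the range (0, 1). The function name `normalize_pssm` and the example scores are hypothetical.

```python
import math

def normalize_pssm(pssm):
    """Squash raw log-odds scores into (0, 1) with a logistic function, row by row."""
    return [[1.0 / (1.0 + math.exp(-score)) for score in row] for row in pssm]

# Two residues with truncated (3-column) hypothetical score rows for readability.
raw = [[-3, 0, 5], [2, -1, 0]]
features = normalize_pssm(raw)
print([[round(v, 3) for v in row] for row in features])
```

A score of zero (no preference relative to background) maps to 0.5, while strongly conserved substitutions approach 1 and disfavored ones approach 0.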

Protein-Ligand Structural Processing:

- Download the protein-ligand complex PDB file (e.g., `4xyz.pdb`). Isolate the ligand and the protein's binding site residues (defined as any atom within 6 Å of the ligand).

- Use PDBfixer to add missing hydrogen atoms and side chains, and parameterize the system with a force field (e.g., AMBER ff14SB) using a tool like `OpenMM`.

- Represent the binding site as a graph: Nodes are protein residues. Define edges based on spatial proximity (Cα atoms within 10 Å) or covalent bonds. Node features include residue type, solvent accessible surface area, and backbone dihedrals.
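The 6 Å binding-site definition used in this step amounts to a distance filter over protein atoms. The sketch below assumes coordinates have already been parsed into simple tuples; the function name `binding_site_residues`, the residue ID format, and the coordinates are hypothetical. It relies on `math.dist`, available in Python 3.8 and later.

```python
import math

def binding_site_residues(protein_atoms, ligand_coords, cutoff=6.0):
    """Return sorted residue IDs with at least one atom within cutoff of any ligand atom.

    protein_atoms: list of (residue_id, (x, y, z))
    ligand_coords: list of (x, y, z)
    """
    selected = set()
    for res_id, p in protein_atoms:
        if res_id in selected:
            continue  # residue already included via another atom
        if any(math.dist(p, l) <= cutoff for l in ligand_coords):
            selected.add(res_id)
    return sorted(selected)

# Hypothetical coordinates in Angstroms.
protein_atoms = [("A:10", (0, 0, 0)), ("A:10", (1, 0, 0)),
                 ("A:11", (5, 0, 0)), ("A:12", (20, 0, 0))]
ligand = [(0, 0, 3)]
print(binding_site_residues(protein_atoms, ligand))
```

Selecting whole residues rather than individual atoms keeps side chains intact, which matters for the residue-level graph built in the next step.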

Ligand Chemical Feature Extraction:

- From the ligand SDF file, use RDKit to compute:

- A 2048-bit Morgan fingerprint (radius=2).

- A set of 200-dimensional functional class fingerprints (FCFP).

- Physicochemical descriptors: molecular weight, LogP, topological polar surface area, number of hydrogen bond donors/acceptors, and rotatable bonds.

- For a ligand graph, represent atoms as nodes and bonds as edges. Node features: atom type, hybridization, degree, formal charge, partial charge (calculated via RDKit). Edge features: bond type, conjugated status, and spatial distance.

Data Alignment & Storage:

- Ensure all three modality representations (sequence/PSSM, structure/graph, chemical/fingerprints) are indexed by the same complex identifier.

- Store the aligned dataset in a hierarchical format (e.g., HDF5) for efficient loading during training. Each sample contains the protein sequence features, the protein graph, the ligand graph, and the ligand fingerprint/descriptor vector, along with the experimental binding affinity label (e.g., pKd).

Protocol 2: Training a Cross-Attention Multimodal Fusion Network

Objective: To train a neural network that integrates protein sequence embeddings, a protein structural graph, and ligand chemical features to predict binding affinity. Network Architecture: The model consists of three encoders and a fusion decoder.

Modality-Specific Encoders:

- Sequence Encoder: Pass the PSSM or ESM-2 embedding through a 1D convolutional layer or a bidirectional LSTM to produce a sequence context vector `S`.

- Structure Encoder: Process the protein graph using a 3-layer Graph Attention Network (GAT). Perform global mean pooling on the final node embeddings to produce a structure vector `G`.

- Chemical Encoder: Pass the ligand Morgan fingerprint through a fully connected (dense) neural network to produce a chemical vector `C`. The ligand graph can optionally be processed with a separate GAT.

Cross-Modality Attention Fusion:

- Treat the structure vector `G` as the primary context (query). Use `G` to attend to the sequence vector `S` and chemical vector `C` via separate cross-attention blocks.

- The cross-attention operation: `Attention(Q, K, V) = softmax((Q*K^T)/√d_k) * V`, where for sequence fusion, `Q=G_proj`, `K=S_proj`, `V=S_proj`.

- The outputs are context-aware vectors `G_s` (structure informed by sequence) and `G_c` (structure informed by chemistry).
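The cross-attention operation can be sketched for a single query vector as below. The sketch makes one assumption worth flagging: it treats the sequence side as a set of per-residue vectors rather than one pooled vector, because with only a single key the softmax weight is always 1 and the attention output reduces to the value vector itself. The function name and the numbers are illustrative.

```python
import math

def cross_attention(query, keys, values):
    """Single-query attention: softmax(q K^T / sqrt(d_k)) V."""
    d_k = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d_k) for key in keys]
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    fused = [sum(w * v[c] for w, v in zip(weights, values)) for c in range(len(values[0]))]
    return fused, weights

G_proj = [1.0, 0.0]                              # projected structure vector (query)
S_tokens = [[2.0, 0.0], [0.0, 2.0], [1.0, 1.0]]  # hypothetical per-residue sequence vectors
G_s, w = cross_attention(G_proj, S_tokens, S_tokens)

# With one pooled key/value vector, the weight is trivially 1 and the output equals that vector.
G_s_single, w_single = cross_attention(G_proj, [[0.3, 0.7]], [[0.3, 0.7]])
print(w, w_single)
```

For the fusion to weigh modalities dynamically, the keys and values therefore need to retain some token-level resolution (residues, atoms, or fingerprint segments) rather than being pooled before attention.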

Fusion and Regression:

- Concatenate the original vectors `G`, `S`, `C` with the fused vectors `G_s` and `G_c`.

- Pass this concatenated multimodal representation through a final multi-layer perceptron (MLP) with dropout for regularization.

- The output layer is a single neuron for regression (predicting pKd/pKi).

Training Procedure:

- Loss Function: Use Mean Squared Error (MSE) between predicted and experimental binding affinities.

- Optimizer: AdamW with a learning rate of 1e-4 and weight decay of 1e-5.

- Batch Size: 32.

- Validation: Perform a time-split or stratified split by protein family to avoid data leakage. Monitor validation loss for early stopping.

- Training Time: Approximately 24-48 hours on a single NVIDIA V100 GPU for a dataset of ~15,000 complexes.
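The validation step above asks for a split by protein family to avoid leakage. One way to sketch this, assuming each sample already carries a family label (the tuple layout and the `group_split` helper are hypothetical, not part of the protocol), is to assign whole families to either the training or the validation set:

```python
import random
from collections import defaultdict


def group_split(samples, group_of, val_fraction=0.1, seed=0):
    """Split samples so that each group lands entirely in one set.

    group_of: function mapping a sample to its group key
              (e.g., a protein family name).
    """
    groups = defaultdict(list)
    for s in samples:
        groups[group_of(s)].append(s)

    # Shuffle the group keys reproducibly, then fill validation first.
    keys = sorted(groups)
    random.Random(seed).shuffle(keys)

    target = val_fraction * len(samples)
    train, val = [], []
    for k in keys:
        if len(val) < target:
            val.extend(groups[k])
        else:
            train.extend(groups[k])
    return train, val


# Toy data (assumed): (protein_family, complex_index) pairs.
data = [("kinase", i) for i in range(6)] + [("protease", i) for i in range(3)]
train_set, val_set = group_split(data, group_of=lambda s: s[0], val_fraction=0.3)
print(len(train_set), len(val_set))
```

Because whole families are moved at once, the validation set can overshoot the requested fraction when families are large; that trade-off is usually acceptable in exchange for a leakage-free estimate.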

Diagrams

Title: Multimodal PLI Model Training Workflow

Title: Cross-Attention Fusion Mechanism

The Scientist's Toolkit

Table 2: Essential Research Reagents & Tools for Multimodal PLI Experiments

| Item | Function in Protocol | Example Source / Tool |
|---|---|---|
| PDBbind Database | Curated benchmark dataset of protein-ligand complexes with experimental binding affinities. | http://www.pdbbind.org.cn |
| UniProt Knowledgebase | Provides canonical protein sequences and functional annotation for sequence feature extraction. | https://www.uniprot.org |
| RDKit | Open-source cheminformatics toolkit for ligand processing, fingerprint generation, and descriptor calculation. | https://www.rdkit.org |
| PSI-BLAST | Generates Position-Specific Scoring Matrices (PSSMs) for evolutionary sequence profiles. | NCBI BLAST+ suite |
| ESM-2 Model | State-of-the-art protein language model for generating contextual residue embeddings without alignment. | Meta AI (Hugging Face) |
| PyTorch Geometric (PyG) | Library for building and training Graph Neural Networks (GNNs) on structural graphs. | https://pytorch-geometric.readthedocs.io |
| OpenMM / PDBfixer | Toolkit for adding missing atoms to PDB structures and preparing systems for simulation/analysis. | https://openmm.org |
| DGL-LifeSci | Library for graph-based deep learning on molecules and biomolecules, built on Deep Graph Library. | https://lifesci.dgl.ai |
| HDF5 Format | Hierarchical data format for efficient storage and retrieval of large, aligned multimodal datasets. | HDF5 Group libraries |

Within the broader thesis on Deep Learning for Protein-Ligand Interaction Prediction, three primary practical applications dominate computational drug discovery. These are not isolated tasks but interconnected pillars that accelerate the identification and optimization of novel therapeutics. Virtual screening efficiently prioritizes candidate molecules from vast libraries, affinity regression models quantify the strength of the predicted interaction, and pose prediction provides the structural rationale, informing medicinal chemistry. The advent of deep learning has significantly enhanced the accuracy, speed, and applicability of each of these domains by learning complex, non-linear relationships directly from structural and sequence data.

Application Notes

Virtual Screening (VS)

Objective: To computationally rank millions of compounds for their likelihood of binding to a specific protein target, drastically reducing the number of compounds requiring expensive experimental testing.

Deep Learning Advancements: Traditional methods like docking rely on physical force fields and are computationally intensive. Deep learning-based VS uses learned representations to predict binding, offering superior speed and, in many cases, improved enrichment of true actives.

- Structure-Based: Models like EquiBind (Stärk et al., 2022) and DeepDock use geometric deep learning to predict binding poses and scores directly.

- Ligand-Based: If known active compounds exist, models can perform similarity searching in a learned chemical space.

- Recent Trend: Hybrid models that integrate protein sequence/structure, ligand SMILES, and interaction fingerprints are becoming standard, offering robust performance even with moderate protein flexibility.
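Enrichment of true actives, mentioned above, is commonly quantified with an enrichment factor (EF): the hit rate among the top-ranked fraction of the library divided by the hit rate in the whole library. The sketch below is one straightforward way to compute it, and the function name and toy data are assumptions made for illustration.

```python
def enrichment_factor(scores, labels, top_fraction=0.01):
    """EF = (actives in top x% / size of top x%) / (all actives / library size).

    scores: predicted scores, higher means more likely to bind.
    labels: 1 for a known active, 0 for an inactive or decoy.
    """
    if len(scores) != len(labels):
        raise ValueError("scores and labels must have the same length")
    n = len(scores)
    total_hits = sum(labels)
    if total_hits == 0:
        raise ValueError("the library contains no actives")

    n_top = max(1, int(round(n * top_fraction)))
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits_top = sum(label for _, label in ranked[:n_top])
    return (hits_top / n_top) / (total_hits / n)


# Toy library (assumed): 100 compounds, 10 actives, 5 of them ranked in the top 10.
toy_scores = [float(100 - i) for i in range(100)]
toy_labels = [1 if (i < 5 or 50 <= i < 55) else 0 for i in range(100)]
print(enrichment_factor(toy_scores, toy_labels, top_fraction=0.10))
```

An EF of 1 corresponds to random ranking, and the maximum achievable value at a given fraction is limited by the number of actives in the library.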

Binding Affinity (pIC50/Kd) Regression

Objective: To predict a quantitative measure of binding strength, typically reported as pIC50 (-log10(IC50)) or dissociation constant (Kd). Accurate prediction is crucial for lead optimization.
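The p-scale conversion can be illustrated with a short helper. This is a minimal sketch, and the unit table and function name are assumptions for illustration. The concentration must be converted to molar units before taking the negative base-10 logarithm.

```python
import math

# Conversion factors from common concentration units to molar (M).
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12}


def to_p_value(concentration, unit="nM"):
    """Return -log10 of a concentration (IC50, Kd, or Ki) expressed in molar."""
    if concentration <= 0:
        raise ValueError("concentration must be positive")
    return -math.log10(concentration * UNIT_TO_MOLAR[unit])


# An IC50 of 10 nM corresponds to a pIC50 of about 8;
# a Kd of 1 uM corresponds to a pKd of about 6.
print(round(to_p_value(10, "nM"), 3), round(to_p_value(1, "uM"), 3))
```

Higher values indicate tighter binding, and one log unit corresponds to a tenfold change in concentration.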

Deep Learning Advancements: Moving beyond scoring functions, deep learning models regress affinity from data.

- Key Datasets: PDBbind, BindingDB, and KIBA are widely used benchmarks.

- Model Architectures: Graph Neural Networks (GNNs) for ligands, Convolutional Neural Networks (CNNs) for protein binding sites, and attention-based networks (Transformers) are prevalent.

- State-of-the-Art: Models like DeepAffinity+ and PotentialNet iteratively pass messages between protein and ligand atom graphs to capture mutual influence. Recent models also incorporate explicit non-covalent interaction features (e.g., hydrogen bonds, pi-stacking).

Pose Prediction (Docking)

Objective: To predict the three-dimensional orientation (pose) of a ligand bound within a protein's binding pocket. A correct pose is a prerequisite for reliable affinity estimation and structure-based design.

Deep Learning Advancements: Classical docking suffers from sampling and scoring challenges. Deep learning approaches reframe pose prediction as a generative or discriminative task.

- Sampling: Models like DiffDock (Corso et al., 2022) use diffusion models to generate likely poses, demonstrating state-of-the-art accuracy without relying on exhaustive sampling.

- Scoring & Ranking: CNNs and GNNs are trained to distinguish native-like poses from decoys, providing a more reliable ranking than traditional force fields.

- Template-Based: For targets with known similar complexes, deep learning can accurately refine poses based on structural templates.

Table 1: Performance Comparison of Recent Deep Learning Methods

| Application | Model Name (Year) | Key Architecture | Benchmark Dataset | Reported Performance |
|---|---|---|---|---|
| Virtual Screening | EquiBind (2022) | Geometric GNN, SE(3) Equivariance | PDBbind | >800x faster than Glide; comparable enrichment |
| Virtual Screening | DeepDock | 3D CNN on Voxelized Complex | DUD-E | AUC > 0.8 for multiple targets |
| Affinity Regression | PotentialNet (2018) | Hierarchical GNN | PDBbind v2016 | Pearson's R = 0.822 on core set |
| Affinity Regression | GraphDTA (2020) | GNN (Ligand) + CNN (Protein) | KIBA | MSE = 0.139 on KIBA test set |
| Pose Prediction | DiffDock (2022) | Diffusion Model, SE(3) Equivariant | PDBbind | Top-1 Accuracy > 50% (near-native pose) |
| Pose Prediction | AlphaFold3 (2024) | Diffusion, Pairformer | Novel Complexes | Significantly outperforms traditional docking |

Experimental Protocols

Protocol 3.1: Implementing a Deep Learning Virtual Screening Pipeline

Objective: To screen a library of 1M compounds against a target protein using a pre-trained deep learning model.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Target Preparation: Obtain the 3D structure of the target protein (e.g., from PDB). Prepare the structure using pdbfixer and propka:
  - Remove water molecules and heteroatoms (except co-factors).
  - Add missing hydrogens and side chains.
  - Assign protonation states at physiological pH.

- Ligand Library Preparation: Convert the compound library (in SDF or SMILES format) to a standardized format using RDKit.
  - Apply chemical sanitization and neutralization.
  - Generate plausible 3D conformers for each molecule.
  - Optimize conformer geometry with the MMFF94 force field.

- Binding Site Definition: Define the binding pocket coordinates (x, y, z center and box size). Use the native ligand's position or a tool like fpocket.

- Model Inference: Load a pre-trained model (e.g., EquiBind). For each ligand:
  - The model predicts a binding pose within the defined pocket.
  - The model outputs a scalar binding score or probability.

- Ranking & Analysis: Rank all compounds by their predicted score. Select the top 1,000-10,000 for further analysis or experimental validation.
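For the binding site definition step in the procedure above, the pocket center and box size can be derived from the native ligand's atomic coordinates. The sketch below assumes the coordinates have already been read from the structure file as (x, y, z) tuples in Å. The 5 Å padding and the function name are illustrative assumptions, not values prescribed by the protocol.

```python
def box_from_ligand(coords, padding=5.0):
    """Return (center, size) of an axis-aligned box around the ligand.

    coords:  iterable of (x, y, z) tuples in angstroms.
    padding: margin added on every side of the ligand's bounding box.
    """
    coords = list(coords)
    if not coords:
        raise ValueError("at least one atom coordinate is required")

    mins = [min(c[i] for c in coords) for i in range(3)]
    maxs = [max(c[i] for c in coords) for i in range(3)]

    center = tuple((lo + hi) / 2.0 for lo, hi in zip(mins, maxs))
    size = tuple((hi - lo) + 2.0 * padding for lo, hi in zip(mins, maxs))
    return center, size


# Toy coordinates (assumed) for a three-atom ligand fragment.
ligand_atoms = [(10.0, 4.0, -2.0), (12.0, 6.0, 0.0), (11.0, 8.0, 1.0)]
center, size = box_from_ligand(ligand_atoms)
print(center, size)
```

When no native ligand is available, the center reported by a pocket-detection tool can be used in place of the computed one.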

Protocol 3.2: Training a pIC50 Regression Model

Objective: To train a GraphDTA-style model to predict binding affinity from protein sequence and ligand SMILES.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Data Curation: Download the KIBA dataset. Split data into training (80%), validation (10%), and test (10%) sets using scaffold splitting on ligands to avoid data leakage.

- Data Processing:
  - Ligands: Convert SMILES strings to molecular graphs using RDKit. Nodes represent atoms (featurized by atom type, degree, etc.), edges represent bonds (featurized by bond type).
  - Proteins: Represent protein sequences as strings or convert to a graph of amino acid residues.

- Model Architecture: Implement a dual-input network.
  - Ligand Branch: A GNN (e.g., GCN, GAT) to generate a molecular fingerprint.
  - Protein Branch: A 1D CNN or Transformer to generate a protein sequence fingerprint.
  - Fusion: Concatenate the two fingerprint vectors and pass through fully connected layers for final pIC50 regression.

- Training: Train for 100-200 epochs using Mean Squared Error (MSE) loss and the Adam optimizer. Use the validation set for early stopping.

- Evaluation: Evaluate the final model on the held-out test set using standard metrics: Pearson's R, RMSE, and MAE.
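The three evaluation metrics named in the last step can be computed without any external library. The following is a minimal sketch, with the function name and toy values assumed for illustration. In practice the same quantities are available from standard scientific Python packages.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return Pearson's R, RMSE, and MAE for paired lists of affinities."""
    n = len(y_true)
    if n != len(y_pred) or n < 2:
        raise ValueError("need two equally long lists with at least 2 values")

    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    if var_t == 0 or var_p == 0:
        raise ValueError("Pearson's R is undefined for constant inputs")

    errors = [p - t for t, p in zip(y_true, y_pred)]
    return {
        "pearson_r": cov / math.sqrt(var_t * var_p),
        "rmse": math.sqrt(sum(e * e for e in errors) / n),
        "mae": sum(abs(e) for e in errors) / n,
    }


# Toy values (assumed): predictions offset from the labels by a constant 0.5.
print(regression_metrics([5.0, 6.0, 7.0, 8.0], [5.5, 6.5, 7.5, 8.5]))
```

The toy case shows why the metrics should be reported together: a constant offset leaves Pearson's R at 1 while RMSE and MAE still register the error.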

Protocol 3.3: Running and Evaluating DiffDock for Pose Prediction

Objective: To predict the binding pose for a given ligand-protein pair using DiffDock.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Environment Setup: Install DiffDock from its official repository, ensuring all dependencies (PyTorch, PyTorch Geometric, etc.) are met.

- Input Preparation: Prepare the protein file in .pdb format and the ligand file in .sdf or .mol2 format. DiffDock performs blind docking and randomizes the ligand's starting position, so the ligand does not need to be pre-placed in the binding site.

- Running Inference: Execute the DiffDock inference script, specifying the paths to the protein and ligand files. The model will generate a user-defined number of candidate poses (e.g., 40).

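- Pose Ranking: DiffDock outputs poses along with a confidence score from its confidence model, which is trained to estimate whether a pose lies within 2 Å RMSD of the true pose. Rank poses by this score (higher is better).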

- Evaluation (If ground truth is known): Align the predicted ligand pose to the experimentally determined (ground truth) pose using the protein's backbone atoms. Calculate the Root Mean Square Deviation (RMSD) of the ligand's heavy atoms. An RMSD < 2.0 Å is typically considered a successful prediction.
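The evaluation step above can be sketched as follows. The sketch makes three assumptions: both poses are already in the same reference frame (that is, the protein alignment has been done), the heavy atoms are listed in the same order in both poses, and no symmetry correction is applied. The function names are illustrative.

```python
import math


def ligand_rmsd(pred_coords, ref_coords):
    """Heavy-atom RMSD in angstroms between two poses with matched atom order."""
    if len(pred_coords) != len(ref_coords) or not pred_coords:
        raise ValueError("poses must be non-empty and have the same atom count")
    squared = sum(
        (p[i] - r[i]) ** 2
        for p, r in zip(pred_coords, ref_coords)
        for i in range(3)
    )
    return math.sqrt(squared / len(pred_coords))


def success_rate(rmsds, threshold=2.0):
    """Fraction of predictions with RMSD strictly below the threshold."""
    return sum(r < threshold for r in rmsds) / len(rmsds)


# Toy poses (assumed): the prediction is shifted by 1 angstrom along z.
reference = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
predicted = [(x, y, z + 1.0) for x, y, z in reference]
print(ligand_rmsd(predicted, reference))
print(success_rate([0.8, 1.9, 2.4, 5.1]))
```

For ligands with symmetric groups, a symmetry-aware RMSD gives a fairer comparison, because equivalent atoms may be numbered differently in the predicted and reference poses.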

Diagram 1: Deep Learning for PLI Prediction Workflow

Diagram 2: GraphDTA Model Architecture for Affinity Prediction

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions and Materials

| Item | Category | Function/Description |
|---|---|---|
| PDBbind Database | Data | Curated collection of protein-ligand complexes with binding affinity data for training and benchmarking. |
| BindingDB | Data | Public database of measured binding affinities, focusing on drug-target interactions. |
| RDKit | Software | Open-source cheminformatics toolkit for molecule manipulation, featurization, and conformer generation. |
| PyTorch / TensorFlow | Software | Core deep learning frameworks for building and training neural network models. |
| PyTorch Geometric (PyG) | Software | Extension library for implementing Graph Neural Networks on irregularly structured data. |
| OpenMM / MDTraj | Software | Tools for molecular dynamics simulation and trajectory analysis, used for dataset generation and validation. |
| AutoDock Vina | Software | Traditional docking software, often used for generating initial poses or baseline comparisons. |
| Schrödinger Suite | Commercial Software | Industry-standard platform for computational chemistry, includes Glide for docking and Maestro for visualization. |
| Google Colab Pro / AWS EC2 | Hardware/Cloud | Provides access to GPUs (e.g., NVIDIA V100, A100) necessary for training large deep learning models. |
| CUDA Toolkit | Software | NVIDIA's parallel computing platform, essential for accelerating deep learning computations on GPUs. |

Overcoming Hurdles: Strategies to Improve Deep Learning Model Performance and Reliability

Within protein-ligand interaction (PLI) prediction research, the scarcity of high-quality, experimentally validated binding affinity data (e.g., from ITC, SPR) severely limits the development of robust deep learning models. This application note details practical protocols for three critical paradigms—Data Augmentation, Transfer Learning, and Few-Shot Learning—to overcome this bottleneck, directly supporting a thesis on advancing deep learning for accurate and generalizable PLI prediction in drug discovery.

Data Augmentation Techniques for PLI Data

Data augmentation creates synthetic training samples from existing data to improve model generalization. For structured PLI data, this goes beyond simple image rotations.

Key Techniques & Protocols

Protocol 2.1.1: Coordinate-Based Molecular Perturbation