AI-Driven Bayesian Optimization: Revolutionizing Protein Engineering and Drug Discovery

This article explores the transformative integration of Bayesian optimization (BO) with artificial intelligence to navigate complex protein fitness landscapes.

Abstract

This article explores the transformative integration of Bayesian optimization (BO) with artificial intelligence to navigate complex protein fitness landscapes. Aimed at researchers and drug development professionals, it covers foundational concepts of fitness landscapes and Bayesian principles, details cutting-edge methodological implementations like high-throughput virtual screening and active learning loops, addresses critical challenges such as data scarcity and acquisition cost, and validates the approach against traditional methods. We synthesize how AI-powered BO enables efficient discovery of high-fitness protein variants, significantly accelerating therapeutic and industrial enzyme development.

Navigating the Peaks and Valleys: Understanding Protein Fitness Landscapes and Bayesian Optimization

A protein fitness landscape is a conceptual and mathematical representation mapping protein sequence variants to a quantifiable measure of their "fitness"—typically a functional property like enzymatic activity, binding affinity, thermal stability, or fluorescence. This framework, analogous to a topographic map, positions each possible sequence in a high-dimensional space, with its "height" corresponding to its fitness value. The ultimate goal in protein engineering is to navigate this landscape to locate global or local fitness maxima, which represent optimal sequences for a desired function.

Defining the Complexity: A Multi-Faceted Challenge

The profound complexity of protein fitness landscapes arises from several interlocking factors:

Astronomical Sequence Space: For a protein of length n amino acids, the combinatorial sequence space contains 20ⁿ possibilities. For a modest 100-residue protein, this is 20¹⁰⁰ (~1.27x10¹³⁰) sequences, vastly exceeding the number of atoms in the observable universe. This makes exhaustive exploration impossible.

High-Dimensionality & Ruggedness: The landscape is not a smooth, gently sloping hill. It exists in n dimensions and is characterized by extreme ruggedness—peaks, valleys, ridges, and plateaus—caused by epistasis. This ruggedness creates local optima, trapping naive search algorithms.

Epistasis (Non-Additivity): The defining source of complexity. Epistasis occurs when the effect of a mutation depends on the genetic background in which it occurs. Interactions between residues are non-linear and context-dependent, making the phenotypic outcome of combinations difficult to predict from individual mutations alone.

- Sign Epistasis: A mutation is beneficial in one sequence background but deleterious in another.

- Reciprocal Sign Epistasis: Two mutations are individually deleterious but jointly beneficial (or vice versa), a prerequisite for the existence of multiple fitness peaks.
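A small worked example may make these definitions concrete. The sketch below classifies a two-mutation system from four fitness values. The function name `classify_epistasis`, the tolerance, and the extra "magnitude" label (non-additive, but without a sign change) are illustrative assumptions rather than part of the text above.

```python
def classify_epistasis(f_wt, f_a, f_b, f_ab, tol=1e-9):
    """Classify the interaction between two mutations A and B.

    Inputs are fitness values of the wild type, the two single mutants,
    and the double mutant (hypothetical measurements).
    """
    # Effect of each mutation in the wild-type and in the mutant background.
    a_in_wt, a_in_b = f_a - f_wt, f_ab - f_b
    b_in_wt, b_in_a = f_b - f_wt, f_ab - f_a

    # Deviation of the double mutant from the additive expectation.
    epsilon = f_ab - (f_wt + a_in_wt + b_in_wt)
    if abs(epsilon) <= tol:
        return "none (additive)"

    # A sign flip means the effect changes direction between backgrounds.
    a_flips = a_in_wt * a_in_b < 0
    b_flips = b_in_wt * b_in_a < 0
    if a_flips and b_flips:
        return "reciprocal sign"
    if a_flips or b_flips:
        return "sign"
    return "magnitude"


# Two individually deleterious mutations that are jointly beneficial.
print(classify_epistasis(1.0, 0.8, 0.7, 1.4))
```

In the example call, each mutation lowers fitness on its own but raises it in the presence of the other, which is the reciprocal case described above.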

Sparse Data & Noisy Measurements: Experimental assays for fitness (e.g., high-throughput sequencing, fluorescence-activated cell sorting) are noisy and resource-intensive. Even 10⁹ measured variants cover only a fraction of roughly 10⁻¹²¹ of the sequence space of a 100-residue protein, resulting in an extremely sparse data problem.

Pleiotropy & Multi-Objective Trade-offs: A single protein often must satisfy multiple, sometimes competing, objectives (e.g., high activity AND high stability AND low immunogenicity). This creates a Pareto front of optimal solutions rather than a single peak.
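To illustrate what a Pareto front means in practice, here is a minimal sketch that filters a set of variants down to the non-dominated ones. It assumes every objective is to be maximized (an objective such as immunogenicity would need to be negated first); the scores are invented for illustration.

```python
def pareto_front(points):
    """Return the non-dominated points, assuming every objective is maximized."""
    def dominates(p, q):
        # p dominates q if it is at least as good everywhere and better somewhere.
        return all(a >= b for a, b in zip(p, q)) and any(a > b for a, b in zip(p, q))

    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical (activity, stability) scores for five variants.
variants = [(0.9, 0.2), (0.7, 0.6), (0.4, 0.9), (0.5, 0.5), (0.3, 0.3)]
front = pareto_front(variants)
print(front)
```

The pairwise comparison is quadratic in the number of variants, which is acceptable for the batch sizes typical of a screening round.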

Quantitative Dimensions of the Challenge

Table 1: Scale and Scope of Protein Fitness Landscape Exploration

| Metric | Typical Scale for a 100-aa Protein | Implication for Exhaustive Search |
|---|---|---|
| Total Sequence Space | ~1.27 x 10¹³⁰ sequences | Infeasible for any physical or computational method. |
| Empirically Sampled Space (State-of-the-Art) | 10⁶ - 10⁹ variants (via deep mutational scanning) | A fraction of roughly 10⁻¹²⁴ to 10⁻¹²¹ of the total space. |
| Measured Fitness Range | 0 (non-functional) to >1 (improved function) | Landscape contains vast, flat, non-functional regions. |
| Epistatic Interactions | O(n²) pairwise and O(n³) third-order potential interactions | Prediction requires modeling complex, non-linear dependencies. |
| Assay Noise (Typical CV*) | 5% - 20% coefficient of variation | Obscures true fitness signal, complicating model training. |

*CV: Coefficient of Variation

Experimental Protocol: Deep Mutational Scanning (DMS) for Landscape Mapping

DMS is a key high-throughput method for empirically sampling fitness landscapes.

1. Objective: To measure the fitness effect of thousands to millions of single amino acid variants within a protein sequence in a single, multiplexed experiment.

2. Key Materials & Workflow:

Table 2: Research Reagent Solutions for Deep Mutational Scanning

| Reagent / Material | Function in Protocol |
|---|---|
| Saturation Mutagenesis Library (oligo pool) | Defines the variant sequence space (e.g., all single-point mutants). Synthesized as DNA. |
| Next-Generation Sequencing (NGS) Platform | Enumerates variant frequency pre- and post-selection. Provides the count data. |
| In vitro Transcription/Translation System or Yeast/Mammalian Display Vector | Links genotype (DNA/RNA) to phenotype (protein function) for selection. |
| Fluorescence-Activated Cell Sorter (FACS) | Applies selective pressure based on a fluorescent proxy for fitness (e.g., binding, catalysis). |
| Selection Agent / Substrate | The target, inhibitor, or fluorescent substrate that defines the fitness function. |
| NGS Library Prep Kits | Prepares the genetic material from selected populations for high-throughput sequencing. |

3. Detailed Protocol Steps:

   1. Library Construction: A gene library encoding all targeted variants (e.g., NNK codons at each position) is synthesized and cloned into an appropriate expression vector.

   2. Transformation & Diversity Creation: The plasmid library is transformed into a host organism (e.g., E. coli, yeast) to create a large, diverse population where each cell expresses one variant.

   3. Pre-Selection Sampling (T0): A sample of the population is taken, and the DNA is extracted and prepared for NGS to establish the initial frequency of each variant.

   4. Application of Selective Pressure: The population is subjected to a functional screen (e.g., binding to a labeled target, survival under thermal stress, catalysis of a reaction). Only variants with sufficient fitness are retained.

   5. Post-Selection Sampling (T1): The DNA from the selected population is extracted and prepared for NGS.

   6. Fitness Calculation: Variant frequencies in T0 and T1 are compared. Fitness (enrichment score) is typically calculated as: log₂( (count_T1 / total_T1) / (count_T0 / total_T0) ).

   7. Data Normalization & Analysis: Scores are normalized to a wild-type or neutral reference, and statistical models account for noise and sampling depth.
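The fitness calculation and wild-type normalization in steps 6 and 7 can be sketched in a few lines. This is a minimal illustration, not a full DMS analysis pipeline: the function name, the variant labels, and the pseudocount (added here so that variants with zero post-selection reads do not produce log of zero) are assumptions.

```python
import math


def enrichment_scores(counts_t0, counts_t1, wild_type, pseudocount=0.5):
    """log2 enrichment per variant, normalized so the wild type scores 0.

    counts_t0 / counts_t1 map variant name -> read count before and after
    selection. The pseudocount is an assumption, not part of the protocol.
    """
    total_t0 = sum(counts_t0.values())
    total_t1 = sum(counts_t1.values())
    raw = {}
    for variant, c0 in counts_t0.items():
        c1 = counts_t1.get(variant, 0)
        freq_t0 = (c0 + pseudocount) / total_t0
        freq_t1 = (c1 + pseudocount) / total_t1
        raw[variant] = math.log2(freq_t1 / freq_t0)
    # Step 7: express every score relative to the wild-type reference.
    reference = raw[wild_type]
    return {variant: score - reference for variant, score in raw.items()}


# Hypothetical read counts for three variants.
t0 = {"WT": 1000, "A10V": 1000, "G5D": 1000}
t1 = {"WT": 1000, "A10V": 2000, "G5D": 250}
print(enrichment_scores(t0, t1, "WT"))
```

A positive score means the variant was enriched relative to wild type during selection; a negative score means it was depleted.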

Diagram Title: Deep Mutational Scanning (DMS) Core Workflow

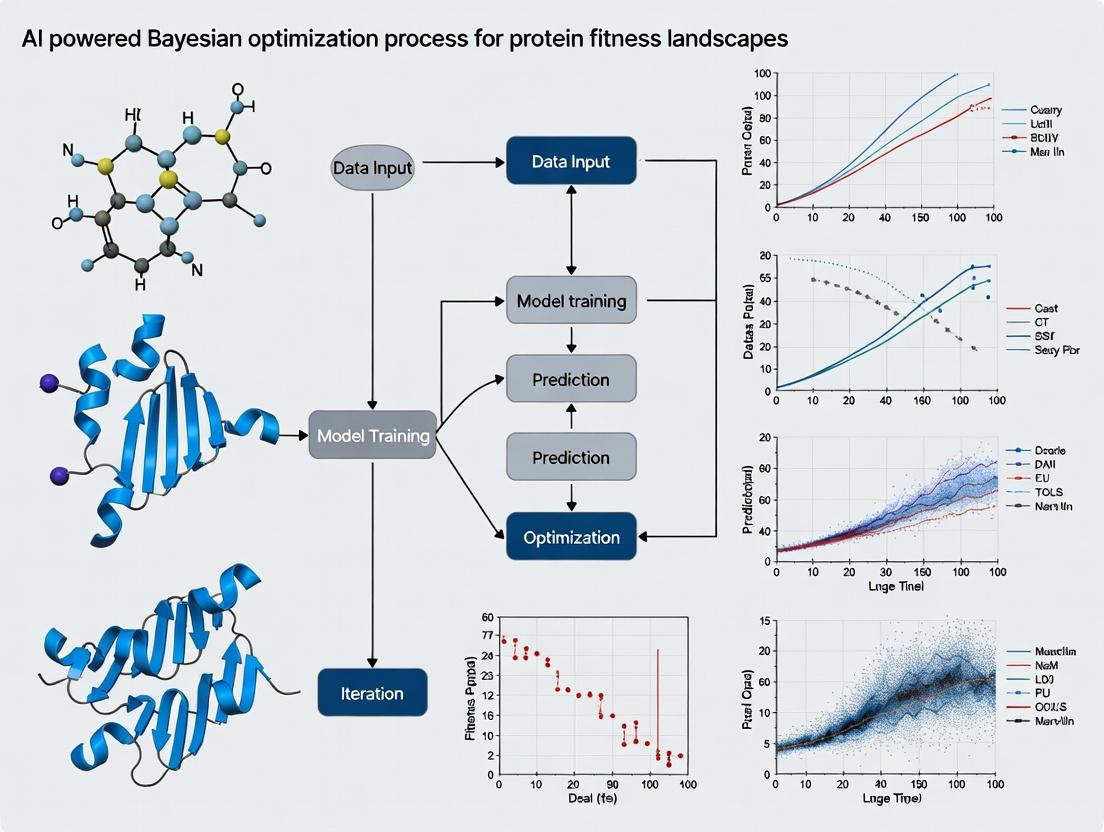

The Role of AI-Powered Bayesian Optimization

Given the sparsity, noise, and high dimensionality of empirical landscapes, Bayesian Optimization (BO) has emerged as a principled framework for navigating them. BO combines a probabilistic surrogate model (often a Gaussian Process or Deep Neural Network) with an acquisition function to guide experimentation.

- Surrogate Model: Trained on all observed (sequence, fitness) data to predict the mean and uncertainty of fitness for any unobserved sequence.

- Acquisition Function (e.g., Expected Improvement, Upper Confidence Bound): Uses the model's predictions to score all unobserved sequences, balancing exploration (probing high-uncertainty regions) and exploitation (probing predicted high-fitness regions). The sequence maximizing the acquisition function is selected for the next experiment.

- Iterative Closed Loop: The newly tested sequence's fitness is measured, added to the dataset, and the model is retrained. This loop continues, intelligently focusing resources on the most informative regions of the vast sequence space.

Diagram Title: AI-Bayesian Optimization Closed Loop

Protein fitness landscapes are complex, high-dimensional, and rugged due to the astronomical size of sequence space and pervasive epistatic interactions. This makes the discovery of optimal protein variants a needle-in-a-haystack search. Deep Mutational Scanning provides a window into these landscapes, but the data remains sparse and noisy. AI-powered Bayesian Optimization is a transformative approach, framing the challenge as a sequential decision-making problem. By iteratively modeling the landscape and prioritizing the most informative experiments, it offers a path to efficiently navigate the complexity and accelerate the discovery of novel, fitter proteins for therapeutics and industrial applications.

Efficiently identifying high-fitness protein variants is the central task when exploring protein fitness landscapes. Experimental characterization of proteins is resource-intensive, which rules out exhaustive exploration of sequence space. Bayesian Optimization (BO) provides a principled framework for guiding experiments by building a probabilistic model of the fitness landscape and using an acquisition function to select the most informative sequences to test.

Core Principles of Probabilistic Modeling

The foundation of BO is a surrogate model that approximates the unknown objective function \( f(\mathbf{x}) \) (e.g., protein fitness as a function of sequence or structure). Gaussian Processes (GPs) are the canonical choice for probabilistic modeling in BO due to their flexibility and well-calibrated uncertainty estimates.

A Gaussian Process is defined by a mean function \( m(\mathbf{x}) \) and a covariance kernel \( k(\mathbf{x}, \mathbf{x}') \):

\[ f(\mathbf{x}) \sim \mathcal{GP}(m(\mathbf{x}), k(\mathbf{x}, \mathbf{x}')) \]

Given observed data \( \mathcal{D} = \{(\mathbf{x}_i, y_i)\}_{i=1}^t \), the posterior predictive distribution for a new point \( \mathbf{x}_* \) is Gaussian with closed-form mean \( \mu_t(\mathbf{x}_*) \) and variance \( \sigma_t^2(\mathbf{x}_*) \).
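As a rough illustration of the closed-form posterior, the sketch below computes the predictive mean and variance with NumPy. It assumes a zero mean function, a squared exponential kernel, and a small Gaussian noise term added to the training covariance; the function names and default hyperparameters are illustrative choices, not values from this article.

```python
import numpy as np


def rbf_kernel(A, B, length_scale=1.0, signal_var=1.0):
    """Squared exponential kernel between the rows of A and the rows of B."""
    sq_dist = (
        np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    )
    return signal_var * np.exp(-0.5 * np.maximum(sq_dist, 0.0) / length_scale**2)


def gp_posterior(X, y, X_star, noise_var=1e-6, length_scale=1.0, signal_var=1.0):
    """Posterior mean and variance of a zero-mean GP at the rows of X_star."""
    K = rbf_kernel(X, X, length_scale, signal_var) + noise_var * np.eye(len(X))
    K_star = rbf_kernel(X, X_star, length_scale, signal_var)
    # Cholesky factorization is the usual numerically stable way to apply K^-1.
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    v = np.linalg.solve(L, K_star)
    mean = K_star.T @ alpha
    # For this kernel the prior variance k(x, x) equals signal_var everywhere.
    var = signal_var - np.sum(v**2, axis=0)
    return mean, np.maximum(var, 0.0)


# Three observations on a 1-D toy input; predict near the data and far from it.
X_train = np.array([[0.0], [1.0], [2.0]])
y_train = np.array([0.0, 1.0, 0.5])
mean, var = gp_posterior(X_train, y_train, np.array([[1.0], [100.0]]))
print(mean, var)
```

Near an observed point the variance collapses toward the noise level, while far from all data the prediction reverts to the prior mean and variance. This behavior is what acquisition functions exploit.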

Common Kernels for Protein Landscapes:

- Matern Kernel: Preferred for its flexibility; the Matern 5/2 kernel is a common default, less smooth than the squared exponential.

- Composite Kernels: Combine sequence-based kernels (e.g., based on amino acid similarity) with structural feature kernels.

Table 1: Comparison of Gaussian Process Kernels for Protein Fitness Modeling

| Kernel | Mathematical Form | Key Properties | Best Use-Case in Protein Design |
|---|---|---|---|
| Squared Exponential | \( k(\mathbf{x},\mathbf{x}') = \sigma_f^2 \exp(-\frac{1}{2l^2}\lVert\mathbf{x}-\mathbf{x}'\rVert^2) \) | Infinitely differentiable, very smooth. | Landscapes assumed to be highly smooth. |
| Matern 5/2 | \( k(\mathbf{x},\mathbf{x}') = \sigma_f^2 (1 + \frac{\sqrt{5}r}{l} + \frac{5r^2}{3l^2}) \exp(-\frac{\sqrt{5}r}{l}) \), with \( r = \lVert\mathbf{x}-\mathbf{x}'\rVert \) | Twice differentiable, less smooth. | Default choice for rugged, biological landscapes. |
| Rational Quadratic | \( k(\mathbf{x},\mathbf{x}') = \sigma_f^2 (1 + \frac{\lVert\mathbf{x}-\mathbf{x}'\rVert^2}{2\alpha l^2})^{-\alpha} \) | Scale mixture of SE kernels. | Modeling variation at multiple length scales. |
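The three kernel formulas in the table translate directly into code. The sketch below writes each as a function of the distance r between two inputs; the default hyperparameters are placeholders, and how r is computed for sequences (for example a Hamming or embedding distance) is left as an assumption for the reader to choose.

```python
import math


def squared_exponential(r, length_scale=1.0, signal_var=1.0):
    return signal_var * math.exp(-0.5 * (r / length_scale) ** 2)


def matern52(r, length_scale=1.0, signal_var=1.0):
    s = math.sqrt(5.0) * r / length_scale
    # s*s/3 equals 5 r^2 / (3 l^2), matching the table entry.
    return signal_var * (1.0 + s + s * s / 3.0) * math.exp(-s)


def rational_quadratic(r, length_scale=1.0, signal_var=1.0, alpha=1.0):
    return signal_var * (1.0 + r * r / (2.0 * alpha * length_scale**2)) ** (-alpha)


# Covariance as a function of distance for each kernel.
for r in (0.0, 0.5, 1.0, 2.0):
    print(r, squared_exponential(r), matern52(r), rational_quadratic(r))
```

As alpha grows, the rational quadratic kernel approaches the squared exponential, which is one way to see it as a mixture of SE kernels over length scales.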

Diagram 1: GP prior and posterior update flow.

Acquisition Functions: The Decision Engine

The acquisition function \( \alpha(\mathbf{x}; \mathcal{D}) \) leverages the surrogate model's predictions to balance exploration (sampling uncertain regions) and exploitation (sampling near predicted optima). The point maximizing \( \alpha \) is selected for the next experiment.

Key Acquisition Functions:

Expected Improvement (EI): Measures the expected positive improvement over the current best observation \( f(\mathbf{x}^+) \).

\[ \text{EI}(\mathbf{x}) = \mathbb{E}[\max(f(\mathbf{x}) - f(\mathbf{x}^+), 0)] \]

Upper Confidence Bound (UCB): An optimistic estimate defined by the mean plus a weighted uncertainty.

\[ \text{UCB}(\mathbf{x}) = \mu_t(\mathbf{x}) + \beta_t \sigma_t(\mathbf{x}) \]

where \( \beta_t \) controls the exploration-exploitation trade-off.

Probability of Improvement (PI): Measures the probability that a point will improve upon \( f(\mathbf{x}^+) \).

\[ \text{PI}(\mathbf{x}) = P(f(\mathbf{x}) \geq f(\mathbf{x}^+)) \]

Table 2: Acquisition Function Comparison for Protein Optimization

| Function | Exploration Tendency | Computational Cost | Key Parameter | Sensitivity to Noise |
|---|---|---|---|---|
| Expected Improvement (EI) | Moderate | Low | Incumbent value \( f(\mathbf{x}^+) \) | Moderate |
| Upper Confidence Bound (UCB) | Tunable (via β) | Very Low | Weight \( \beta_t \) | Low |
| Probability of Improvement (PI) | Low (greedy) | Low | Incumbent value \( f(\mathbf{x}^+) \) | High |
| Knowledge Gradient (KG) | High | Very High | None | Low |
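The difference in exploration tendency between UCB and PI can be seen on a two-candidate toy problem. The sketch below assumes Gaussian predictive distributions, so PI reduces to a normal CDF; the candidate names, predicted values, and the choice of β = 2 are invented for illustration.

```python
import math


def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def ucb(mu, sigma, beta=2.0):
    """Upper Confidence Bound: mean plus beta times the predictive std."""
    return mu + beta * sigma


def probability_of_improvement(mu, sigma, best):
    """P(f >= best) under a Gaussian prediction N(mu, sigma^2)."""
    if sigma == 0.0:
        return 1.0 if mu >= best else 0.0
    return normal_cdf((mu - best) / sigma)


# Hypothetical candidates: (name, predicted mean, predicted std).
candidates = [("safe", 1.05, 0.02), ("risky", 0.80, 0.40)]
best_so_far = 1.0

pick_ucb = max(candidates, key=lambda c: ucb(c[1], c[2]))[0]
pick_pi = max(
    candidates, key=lambda c: probability_of_improvement(c[1], c[2], best_so_far)
)[0]
print(pick_ucb, pick_pi)
```

With these numbers UCB prefers the uncertain candidate while PI prefers the one that is almost certain to improve by a small margin, consistent with the "greedy" label in the table.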

Experimental Protocol for BO in Protein Fitness

A standard experimental cycle for applying BO to protein engineering involves the following closed-loop protocol:

Protocol 1: Iterative Bayesian Optimization for Directed Evolution

- Initial Library Design: Construct a diverse initial library of protein variants (e.g., via site-saturation mutagenesis at targeted positions or random mutagenesis). The library typically contains 10 to 50 variants.

- Initial High-Throughput Screening: Express, purify (if necessary), and assay all variants in the initial library for the target fitness property (e.g., enzymatic activity, binding affinity, thermal stability).

- BO Loop (repeat until the fitness target or budget is reached):

  a. Model Training: Encode protein variants (e.g., one-hot, physicochemical features, embeddings from a protein language model) as feature vectors \( \mathbf{x}_i \). Train the GP surrogate model on the cumulative dataset \( \mathcal{D} = \{(\mathbf{x}_i, y_i)\} \) of all tested variants.

  b. Candidate Selection: Optimize the chosen acquisition function over the vast space of unexplored sequences (often using evolutionary algorithms or batch selection techniques) to propose the next batch of variants (usually 1-10).

  c. Experimental Evaluation: Synthesize genes for the proposed variants, express proteins, and measure fitness.

  d. Data Augmentation: Add the new \( (\mathbf{x}, y) \) pairs to \( \mathcal{D} \).

- Validation: Express and characterize the final top-predicted variants in biological triplicate to confirm fitness.
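Two small building blocks of the protocol above are sequence encoding and enumeration of a candidate pool. The sketch below shows one-hot encoding and generation of all single mutants of a parent sequence. The helper names are hypothetical, and a real campaign would usually restrict mutations to targeted positions.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 canonical residues


def one_hot(sequence):
    """Flatten a sequence into a 20 * len(sequence) binary feature vector."""
    vector = []
    for residue in sequence:
        row = [0] * len(AMINO_ACIDS)
        row[AMINO_ACIDS.index(residue)] = 1
        vector.extend(row)
    return vector


def single_mutants(parent):
    """Yield every sequence that differs from the parent at exactly one position."""
    for position, wild_type in enumerate(parent):
        for residue in AMINO_ACIDS:
            if residue != wild_type:
                yield parent[:position] + residue + parent[position + 1:]


parent = "ACD"  # toy parent sequence
pool = list(single_mutants(parent))
features = [one_hot(seq) for seq in pool]
print(len(pool), len(features[0]))
```

For a parent of length n this yields 19n candidates, each encoded as a vector of length 20n.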

Diagram 2: BO closed-loop for protein engineering.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Platforms for BO-Guided Protein Engineering

| Item | Function in Workflow | Example Product/Technology |
|---|---|---|
| DNA Library Synthesis | Rapid, accurate construction of variant gene libraries. | Twist Bioscience oligo pools, Chip-based oligo synthesis. |
| High-Throughput Cloning | Efficient assembly of variant genes into expression vectors. | Gibson Assembly, Golden Gate Assembly, NEB HiFi DNA Assembly. |
| Expression Host | Cellular machinery for protein production. | E. coli BL21(DE3), S. cerevisiae, cell-free expression systems (TX-TL). |
| Microplate Reader | Quantification of fluorescence, absorbance, or luminescence for activity assays. | Tecan Spark, BMG Labtech CLARIOstar. |
| Next-Generation Sequencing (NGS) | Validation of library composition and linkage of genotype to phenotype. | Illumina MiSeq for deep mutational scanning validation. |
| Automation Hardware | For liquid handling and assay setup to increase throughput and reproducibility. | Opentrons OT-2, Hamilton STARlet. |
| BO Software Package | Implements GP models, acquisition functions, and sequence encoding. | BoTorch, GPyOpt, Pyro (for Bayesian deep learning models). |

Bayesian optimization (BO) has evolved from a theoretical statistical framework to a cornerstone of high-dimensional experimental design, particularly in the exploration of protein fitness landscapes. This transformation is driven by advances in machine learning, specifically probabilistic deep learning models that act as scalable, high-capacity surrogate models. This whitepaper details the technical integration of ML-enhanced BO for protein engineering, providing protocols, data, and tools for practical deployment.

Protein fitness landscapes map genetic sequences to functional phenotypic outputs (e.g., enzymatic activity, binding affinity, thermal stability). Exhaustively exploring this high-dimensional, nonlinear, and experimentally expensive space is intractable. Traditional BO with exact Gaussian Processes (GPs) faces scalability limits because inference cost grows cubically with the number of observations. ML models, especially deep neural networks (DNNs) with built-in uncertainty quantification (UQ), now enable efficient navigation of these vast spaces by predicting fitness from sequence or structure and intelligently proposing optimal variants for experimental testing.

Core ML Architectures for Surrogate Modeling

The key to practical BO is the surrogate model. The following table compares prevalent architectures.

Table 1: ML Surrogate Models for Protein Fitness Prediction

| Model Type | Key Features | Uncertainty Quantification Method | Scalability | Best For |
|---|---|---|---|---|
| Deep Gaussian Process (DGP) | Hierarchical composition of GPs | Inherited from GP posterior | Moderate (~10^4 variants) | Data-scarce regimes, high noise |
| Bayesian Neural Network (BNN) | DNN with prior distributions on weights | Markov Chain Monte Carlo (MCMC) or Variational Inference (VI) | High (~10^5-10^6 variants) | Complex, non-stationary landscapes |
| Ensemble Deep Neural Network | Multiple DNNs trained with different seeds | Variance across ensemble predictions | Very High (~10^6+ variants) | Ease of training, parallelization |
| Neural Process (NP) | Learns a stochastic process from data | Latent variable model for distribution | Moderate | Incorporating known symmetries/invariances |
| Transformer-based Protein LM | Pre-trained on evolutionary sequences (e.g., ESM-2) | Monte Carlo Dropout or head ensembles | Extreme (Leverages pre-training) | Sparse data, leveraging evolutionary priors |
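The "variance across ensemble predictions" idea in the table can be illustrated without any deep learning framework. The sketch below deliberately swaps the neural networks for one-dimensional least-squares lines fitted to bootstrap resamples. This is a stand-in chosen for brevity, not the method used in any cited study, and all names and data are hypothetical.

```python
import random
import statistics


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0.0:  # degenerate resample: fall back to a flat line
        return 0.0, mean_y
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sxx
    return slope, mean_y - slope * mean_x


def bootstrap_ensemble(xs, ys, n_members=5, seed=0):
    """Fit each ensemble member on a resample drawn with replacement."""
    rng = random.Random(seed)
    members = []
    for _ in range(n_members):
        idx = [rng.randrange(len(xs)) for _ in xs]
        members.append(fit_line([xs[i] for i in idx], [ys[i] for i in idx]))
    return members


def predict(members, x):
    """Ensemble mean and spread (std across members) at input x."""
    preds = [slope * x + intercept for slope, intercept in members]
    return statistics.fmean(preds), statistics.pstdev(preds)


# Toy 1-D feature (e.g., a single descriptor) with noisy fitness values.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [0.1, 0.9, 2.3, 2.8, 4.2]
members = bootstrap_ensemble(xs, ys, n_members=20)
print(predict(members, 2.0), predict(members, 20.0))
```

The spread across members is small where training data exist and grows under extrapolation. An acquisition function uses this spread as its uncertainty estimate σ(x).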

Experimental Protocol: A Standard Cycle for ML-BO in Protein Engineering

Protocol Title: Iterative ML-BO for Directed Evolution of Protein Binding Affinity

Objective: To increase the binding affinity (measured as KD) of a target protein toward a ligand over 3-5 iterative rounds.

Materials & Initial Data:

- Parent Sequence: Wild-type protein sequence.

- Initial Library: A diverse set of 50-200 variant sequences (e.g., from site-saturation mutagenesis of key positions or error-prone PCR) with experimentally measured KD values.

- Computational Infrastructure: GPU cluster for model training.

Procedure:

Round 0 – Initialization:

- Experimentally characterize the initial library to create a seed dataset D0 = {(x_i, y_i)}, where x_i is a variant representation (e.g., one-hot encoding, ESM-2 embedding) and y_i is -log(KD).

Iterative Loop (Rounds 1-N):

a. Surrogate Model Training: Train the chosen ML surrogate model (e.g., a 5-member DNN ensemble) on all accumulated data D_total.

b. Acquisition Function Optimization: Using the model's predictions (μ(x), σ(x)), compute an acquisition function a(x) (e.g., Expected Improvement, Upper Confidence Bound) for a vast in-silico library (e.g., all possible single/double mutants).

c. Candidate Selection: Select the top B (batch size, e.g., 20-48) variants maximizing a(x), prioritizing high predicted fitness and/or high uncertainty.

d. Experimental Characterization: Express, purify, and measure the KD of the selected B variants via surface plasmon resonance (SPR) or bio-layer interferometry (BLI).

e. Data Augmentation: Add the new results (x_new, y_new) to D_total.

Termination & Validation:

- Terminate after a set number of rounds or upon reaching a fitness plateau.

- Validate top hits with triplicate experimental measurements and, optionally, structural analysis (X-ray crystallography/Cryo-EM).
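Two steps of this procedure are simple enough to sketch directly: converting a measured KD into the fitness label, and picking the top-B batch from model predictions. The code assumes KD is given in molar units and that the logarithm is base 10 (the text writes only "-log(KD)"). It uses a UCB-style score as a(x), and the prediction values are invented.

```python
import math


def kd_to_fitness(kd_molar):
    """Fitness label y = -log10(KD); tighter binding gives a larger value."""
    return -math.log10(kd_molar)


def select_batch(predictions, batch_size, beta=1.0):
    """Pick the batch_size variants with the highest mean + beta * std.

    predictions maps variant name -> (predicted mean, predicted std).
    """
    ranked = sorted(
        predictions.items(),
        key=lambda item: item[1][0] + beta * item[1][1],
        reverse=True,
    )
    return [variant for variant, _ in ranked[:batch_size]]


# Hypothetical surrogate predictions in -log10(KD) units.
predictions = {"A": (8.0, 0.1), "B": (7.5, 1.0), "C": (7.0, 0.2)}
batch = select_batch(predictions, batch_size=2)
print(kd_to_fitness(1e-9), batch)
```

Taking the top B scores independently is the simplest batch strategy; it can select near-duplicate variants, which is one reason dedicated batch acquisition methods exist.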

Diagram 1: ML-BO Cycle for Protein Engineering

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Toolkit for ML-BO Protein Fitness Experiments

| Category | Item / Solution | Function & Rationale |
|---|---|---|
| Library Generation | NEBuilder HiFi DNA Assembly Master Mix | For rapid and accurate construction of variant plasmids for expression. |
| Library Generation | Twist Bioscience Oligo Pools | High-fidelity synthesis of large, complex variant gene libraries for initial exploration. |
| High-Throughput Screening | Cytiva HisTrap Excel columns | Automated, parallel purification of His-tagged protein variants for screening. |
| High-Throughput Screening | FortéBio Octet HTX / Sartorius BLI systems | Label-free, high-throughput quantification of binding kinetics (KD) for hundreds of variants. |
| Data Generation | SnapGene software | Manage and annotate thousands of variant plasmid sequences, enabling feature extraction. |
| Data Generation | GraphPad Prism 10 | Robust statistical analysis and visualization of dose-response curves from binding assays. |
| ML-BO Software | BoTorch / Ax Framework (Meta) | State-of-the-art Python libraries for Bayesian optimization with support for DNN ensembles and DGPs. |
| ML-BO Software | ESM-2 (Meta AI) | Pre-trained protein language model for generating informative sequence embeddings as model input. |
| Compute | Google Cloud Deep Learning VMs (with NVIDIA L40S) | On-demand access to GPU power for training large transformer-based surrogate models. |

Data Presentation: Comparative Performance

Recent studies benchmark ML-BO against traditional methods. The following table synthesizes key quantitative results from published campaigns.

Table 3: Benchmark Results of ML-BO in Protein Engineering Campaigns

| Target Protein | Optimization Goal | Method (Surrogate) | Rounds | Variants Tested | Fitness Improvement | Key Reference |
|---|---|---|---|---|---|---|
| Green Fluorescent Protein (GFP) | Fluorescence Intensity | BO w/ GP (Traditional) | 20 | ~10,000 | ~3x | 2016, Nature Methods |
| Green Fluorescent Protein (GFP) | Fluorescence Intensity | ML-BO w/ DNN Ensemble | 4 | ~800 | ~5x | 2020, Nature |
| AAV9 Capsid | Liver Tropism (in vivo) | ML-BO w/ Variational Autoencoder | 3 | ~215 | ~250x | 2021, Science |
| CRISPR-Cas9 | On-target Activity | ML-BO w/ Transformer (ESM-1b) | 1 | 70 | ~90% of top natural variant | 2023, Nature Biotechnology |
| Acetyltransferase | Thermostability (Tm) | ML-BO w/ Bayesian Neural Net | 5 | 228 | ΔTm +15.5°C | 2023, Cell Systems |

Advanced Visualization: Mapping the Decision Pathway

A useful property of ML-BO is that the surrogate model can be interrogated after training. Its predictions can be decomposed to understand sequence-fitness relationships and to inform the next round of designs.

Diagram 2: ML-BO Model Interpretation & Design Loop

Machine learning has decisively catalyzed the transition of Bayesian optimization from a mathematically elegant theory to a practical, high-performance tool for protein engineering. By replacing traditional GPs with scalable, data-hungry DNNs equipped with robust uncertainty estimates, researchers can now efficiently navigate the astronomically large sequence space. The integration of pre-trained protein language models provides a powerful prior, further accelerating discovery. This ML-BO paradigm, supported by standardized experimental protocols and high-throughput tools, establishes a new foundation for rational design in therapeutic and industrial enzyme development, turning the challenge of exploring fitness landscapes into a tractable engineering problem.

1. Introduction

This whitepaper defines and contextualizes four pivotal concepts within AI-powered Bayesian optimization (BO) for protein engineering. The efficient navigation of protein fitness landscapes, which map genetic sequences to functional performance, is a grand challenge in biotechnology and drug development. By integrating these terms, researchers can construct closed-loop, AI-driven platforms that rapidly evolve proteins with desired properties.

2. Core Terminology

2.1 Sequence Space

Sequence space is the high-dimensional, combinatorial set of all possible amino acid sequences for a protein of a given length. For a protein of length L with 20 canonical amino acids, the total theoretical space size is 20^L. Navigating this astronomically large space (e.g., ~10^130 for a 100-residue protein) necessitates intelligent search strategies.

Table 1: Scale of Sequence Space for Representative Proteins

| Protein Length (L) | Total Possible Sequences (20^L) | Approximate Decimal |
|---|---|---|
| 10 | 20^10 | 1.02e+13 |
| 50 | 20^50 | 1.13e+65 |
| 100 | 20^100 | 1.27e+130 |
| 300 | 20^300 | 2.04e+390 |
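The values in the table can be reproduced with a short calculation. Because 20^300 exceeds the range of a double-precision float, the sketch below works in log10 space; the function name and output format are illustrative choices.

```python
import math


def sequence_space_size(length, alphabet=20):
    """Return alphabet**length in scientific notation, computed via log10."""
    exponent = length * math.log10(alphabet)
    power = math.floor(exponent)
    mantissa = 10 ** (exponent - power)
    return f"{mantissa:.2f}e+{power}"


for length in (10, 50, 100, 300):
    print(length, sequence_space_size(length))
```

Python integers have arbitrary precision, so `20 ** 300` could also be computed exactly; the log-space form is shown because it carries over to languages without big integers.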

2.2 Phenotype

In protein engineering, the phenotype is the observable functional property or "fitness" of a protein variant. This is the scalar outcome measured in an assay. Fitness is a function F(S) of the sequence S. High-throughput assays generate the essential data linking sequence to phenotype.

Table 2: Common Phenotypic Assays in Protein Engineering

| Assay Type | Measured Phenotype | Typical Throughput | Key Metric |
|---|---|---|---|
| Fluorescence-Activated Cell Sorting (FACS) | Binding affinity, Catalytic activity | >10^7 cells/library | Fluorescence Intensity (Mean, MFI) |
| Next-Generation Sequencing (NGS) coupled with selection | Enrichment ratio, Survival rate | ~10^7 - 10^11 reads | Read Count, Frequency Shift |
| Microtiter Plate Assay | Enzymatic rate, Stability (Tm) | 96 - 1536 wells | Absorbance (OD), Fluorescence (RFU) |
| Surface Plasmon Resonance (SPR) | Binding kinetics (KD, kon, koff) | Low (dozens/day) | Resonance Units (RU) |

2.3 Surrogate Models

A surrogate model is a probabilistic machine learning model trained on observed (sequence, phenotype) data to predict the fitness of unexplored sequences and quantify prediction uncertainty. It approximates the true, expensive-to-evaluate fitness landscape.

- Gaussian Process (GP) Regression: The gold-standard for BO due to its native uncertainty quantification. It models the fitness function as a distribution over functions.

- Deep Neural Networks (DNNs): Such as variational autoencoders (VAEs) or convolutional neural networks (CNNs), can handle very high-dimensional sequence data and learn informative latent representations.

- Experimental Protocol for Model Training:

- Initial Library Design: Construct a diverse initial library of N variants (typically 10^2 - 10^4) via random mutagenesis, site-saturation, or designed libraries.

- Phenotypic Screening: Assay the library using a method from Table 2 to obtain fitness values y₁,..., yₙ.

- Sequence Encoding: Represent each variant as a numerical vector (e.g., one-hot encoding, embedding from a protein language model).

- Model Fitting: Train the surrogate model on the encoded sequences X and fitness labels y. For a GP, optimize kernel hyperparameters (e.g., length scales) by maximizing the marginal likelihood.

- Validation: Perform held-out cross-validation to assess prediction R² and uncertainty calibration.
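The validation step mentions prediction R² and uncertainty calibration. A minimal sketch of both checks follows. The calibration measure used here is the empirical coverage of a 95% Gaussian interval, which is one common choice among several; the held-out numbers are invented.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def interval_coverage(y_true, mu, sigma, z=1.96):
    """Fraction of held-out points inside mu +/- z * sigma.

    For a well-calibrated Gaussian model and z = 1.96 this should be near 0.95.
    """
    hits = sum(abs(t - m) <= z * s for t, m, s in zip(y_true, mu, sigma))
    return hits / len(y_true)


# Hypothetical held-out fitness values with model mean and std predictions.
y_held_out = [1.0, 2.0, 3.0, 4.0]
mu = [1.1, 2.0, 2.0, 4.5]
sigma = [0.1, 0.1, 0.1, 0.5]
print(r_squared(y_held_out, mu), interval_coverage(y_held_out, mu, sigma))
```

Coverage well below the nominal level suggests overconfident uncertainty estimates, which tends to make the acquisition function under-explore.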

2.4 Expected Improvement (EI)

Expected Improvement is an acquisition function widely used to guide the iterative search in Bayesian optimization. It computes the expected value of the improvement I over the current best observed fitness \( f^* \), balancing exploration (sampling uncertain regions) and exploitation (sampling near predicted optima).

\[ EI(x) = \mathbb{E}[\max(f(x) - f^*, 0)] \]

For a Gaussian Process with predictive mean \( \mu(x) \) and standard deviation \( \sigma(x) > 0 \) at point x, this has an analytic form:

\[ EI(x) = (\mu(x) - f^* - \xi)\,\Phi(Z) + \sigma(x)\,\phi(Z), \quad \text{where } Z = \frac{\mu(x) - f^* - \xi}{\sigma(x)} \]

\( \Phi \) and \( \phi \) are the CDF and PDF of the standard normal distribution; \( \xi \geq 0 \) is a small tuning parameter that encourages exploration. Setting \( \xi = 0 \) recovers the definition above.
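As a sanity check on the analytic form above, the sketch below implements it with the standard library and compares it against a Monte Carlo estimate of the same expectation. The function names, the handling of zero standard deviation, and the sample count are assumptions made for this illustration.

```python
import math
import random


def expected_improvement(mu, sigma, best, xi=0.01):
    """Closed-form EI for a Gaussian prediction N(mu, sigma^2)."""
    if sigma <= 0.0:
        # No uncertainty: the improvement is deterministic.
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (mu - best - xi) * cdf + sigma * pdf


def expected_improvement_mc(mu, sigma, best, xi=0.01, n=200_000, seed=0):
    """Monte Carlo estimate of E[max(f - best - xi, 0)] for comparison."""
    rng = random.Random(seed)
    total = sum(max(rng.gauss(mu, sigma) - best - xi, 0.0) for _ in range(n))
    return total / n


analytic = expected_improvement(mu=1.2, sigma=0.5, best=1.0)
sampled = expected_improvement_mc(mu=1.2, sigma=0.5, best=1.0)
print(analytic, sampled)
```

The two values should agree to within sampling error, which is a quick way to catch sign or parameter mistakes when re-implementing the formula.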

- Experimental Protocol for an EI-BO Cycle:

1. Initialization: Start with an initial dataset D₀ = {(xᵢ, yᵢ)}.

2. Surrogate Model Training: Fit the GP/DNN to Dₜ.

3. EI Maximization: Using an optimizer (e.g., gradient-based, evolutionary), find the sequence xₜ₊₁ that maximizes EI(x).

4. Synthesis & Assay: Physically construct the proposed variant(s) via site-directed mutagenesis or gene synthesis and measure its fitness yₜ₊₁.

5. Data Augmentation: Append (xₜ₊₁, yₜ₊₁) to the dataset: Dₜ₊₁ = Dₜ ∪ {(xₜ₊₁, yₜ₊₁)}.

6. Iteration: Repeat steps 2-5 for a fixed budget or until convergence.
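The cycle above can be put together in a small simulation. The sketch below is a toy under several loud assumptions: a 4-letter alphabet and length-4 sequences (256 candidates), a made-up `true_fitness` function standing in for the wet-lab assay, a squared exponential kernel on one-hot encodings with fixed hyperparameters, and EI maximized by scoring every untested candidate rather than with a dedicated optimizer.

```python
import itertools
import math

import numpy as np

ALPHABET = "ACDE"   # toy alphabet (assumption)
LENGTH = 4          # 4**4 = 256 candidate sequences
TARGET = "DEAC"     # hidden optimum of the toy landscape


def true_fitness(seq):
    """Stand-in for the assay: matches to TARGET plus one epistatic bonus."""
    matches = sum(a == b for a, b in zip(seq, TARGET))
    bonus = 1.0 if seq[:2] == TARGET[:2] else 0.0
    return matches + bonus


def encode(seq):
    return np.array([float(a == b) for a in seq for b in ALPHABET])


def kernel(A, B, length_scale=1.5):
    sq_dist = ((A[:, None, :] - B[None, :, :]) ** 2).sum(-1)
    return np.exp(-0.5 * sq_dist / length_scale**2)


def gp_predict(X, y, X_star, noise=1e-4):
    """GP posterior with a constant mean set to the average observed fitness."""
    K = kernel(X, X) + noise * np.eye(len(X))
    K_star = kernel(X, X_star)
    mean = y.mean() + K_star.T @ np.linalg.solve(K, y - y.mean())
    var = 1.0 - np.einsum("ij,ij->j", K_star, np.linalg.solve(K, K_star))
    return mean, np.sqrt(np.maximum(var, 1e-12))


def expected_improvement(mu, sigma, best):
    z = (mu - best) / sigma
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mu - best) * cdf + sigma * pdf


def run_bo(n_init=5, n_rounds=25, seed=0):
    rng = np.random.default_rng(seed)
    pool = ["".join(s) for s in itertools.product(ALPHABET, repeat=LENGTH)]
    X_pool = np.stack([encode(s) for s in pool])
    # Step 1: random initial dataset.
    tested = [int(i) for i in rng.choice(len(pool), size=n_init, replace=False)]
    for _ in range(n_rounds):
        # Recomputed each round for brevity; a real campaign stores measurements.
        y = np.array([true_fitness(pool[i]) for i in tested])
        mu, sigma = gp_predict(X_pool[tested], y, X_pool)   # step 2
        ei = expected_improvement(mu, sigma, y.max())       # step 3
        ei[tested] = -np.inf                                # never re-test a variant
        tested.append(int(np.argmax(ei)))                   # steps 4-5
    return {pool[i]: true_fitness(pool[i]) for i in tested}


results = run_bo()
print(len(results), max(results.values()))
```

The script reports how many of the 256 candidates were evaluated and the best fitness found. Changing the seed, kernel length scale, or number of rounds shows how sensitive the search is to these choices.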

3. Integrated Workflow in AI-Driven Protein Optimization

Diagram Title: Bayesian Optimization Cycle for Protein Engineering

4. The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Toolkit for AI-BO Protein Fitness Experiments

| Item | Function & Role in the BO Cycle |
|---|---|
| Gene Fragments/Oligo Pools (e.g., Twist Bioscience) | For rapid, cost-effective synthesis of designed variant libraries for the initial and proposed sequences. |
| High-Fidelity DNA Polymerase (e.g., NEB Q5, Thermo Fisher Phusion) | For accurate PCR amplification of variant libraries and construction steps. |
| Golden Gate or Gibson Assembly Master Mix | For seamless, modular cloning of variant libraries into expression vectors. |
| Competent E. coli Cells (High-Efficiency) | For transformation and propagation of plasmid libraries. |
| Magnetic Beads (e.g., Strep-Tactin, Ni-NTA) | For high-throughput microplate-based protein purification in phenotype screening. |
| Fluorogenic or Chromogenic Substrate | Key reagent for enzymatic activity assays to quantify fitness phenotype. |
| Anti-Tag Antibody Conjugates (e.g., Anti-His-AP/HRP) | For enzyme-linked assays to quantify expression or binding fitness. |
| Flow Cytometer (e.g., BD FACSMelody) | Instrument for high-throughput, phenotype-based sorting or screening (FACS). |
| Next-Generation Sequencing Platform (e.g., Illumina MiSeq) | For deep sequencing of pre- and post-selection libraries to quantify variant enrichment. |
| Automated Liquid Handling System | For miniaturization and reproducibility of assay steps in 96- or 384-well formats. |

The de novo design of therapeutic proteins represents a formidable challenge in biomedicine, characterized by astronomically large combinatorial sequence spaces. Navigating these high-dimensional fitness landscapes to identify variants with optimal target affinity, specificity, and expressibility is a central bottleneck in biologic drug development. This whitepaper frames the challenge within the context of AI-powered Bayesian optimization, a probabilistic machine learning framework that enables efficient global exploration of protein fitness landscapes with minimal experimental evaluations. We present current methodologies, data, and protocols that underscore the critical role of efficient navigation in accelerating the development of modern therapeutics.

The fitness landscape of a protein is a conceptual mapping of its sequence to a functional performance metric, such as binding affinity, thermal stability, or catalytic activity. The landscape is vast, rugged, and often poorly understood. Exhaustive experimental screening is impossible; for a 300-amino-acid protein, there are 20³⁰⁰ possible sequences. The "stakes" are high: inefficient navigation leads to protracted development timelines, exorbitant costs, and potential failure to discover best-in-class therapeutics. AI-driven Bayesian optimization (BO) provides a principled framework for addressing this by balancing exploration (probing uncertain regions) and exploitation (refining known promising regions).

Quantitative Landscape of the Field

The following tables summarize key quantitative benchmarks from recent literature, highlighting the efficiency gains provided by advanced navigation strategies.

Table 1: Comparative Efficiency of Landscape Navigation Strategies

| Method Category | Typical Experiments Needed | Success Rate (Top Hit) | Avg. Fitness Improvement | Key Limitation |
|---|---|---|---|---|
| Random Screening | 10⁴ - 10⁶ | <0.01% | 1-2 fold | Prohibitively resource-intensive |
| Directed Evolution (DE) | 10³ - 10⁵ | ~1-5% | 10-100 fold | Local optimization, path-dependent |
| Deep Learning (DL) Guided | 10² - 10⁴ | 5-15%* | 10-1000 fold* | Data-hungry, poor uncertainty estimation |
| Bayesian Optimization (BO) | 10¹ - 10³ | 15-30%* | 100-1000 fold* | Computationally intensive modeling |
| AI-Powered BO (e.g., BOSS) | <10² | >25%* | >500 fold* | Integration complexity |

*Predicated on well-constructed initial datasets and model architecture.

Table 2: Recent Experimental Case Studies (2023-2024)

| Target Protein | Navigation Method | Library Size Tested | Best ΔΔG (kcal/mol) | Rounds of Optimization |

|---|---|---|---|---|

| SARS-CoV-2 RBD | GFlowNet-BO | 348 | -3.2 | 3 |

| GFP | TuRBO-DL | 512 | +4.5 (Fluorescence) | 2 |

| AAV Capsid | AF2-Guided BO | 2,184 | N/A (In vivo efficacy 10x) | 4 |

| CAR-binding domain | Differentiable BO | 189 | -2.8 | 1 |

Core Methodology: AI-Powered Bayesian Optimization Protocol

The following is a generalized experimental protocol for a single round of AI-powered Bayesian optimization in protein engineering.

Experimental Protocol: A Cycle of AI-Powered Bayesian Optimization for Protein Fitness

A. Initial Dataset Construction (Round 0)

- Input: Wild-type protein sequence and structure (AlphaFold2 or PDB).

- Design: Generate a diverse initial training set (n=50-200 variants).

- Method: Use methods like site-saturation mutagenesis at predicted hotspot positions, sequence homology-based diversification, or structure-based computational design (Rosetta, ProteinMPNN).

- Library Synthesis: Utilize high-throughput gene synthesis (e.g., Twist Bioscience) or oligo pool-based assembly.

- Expression & Purification: Employ a robust microbial (E. coli) or mammalian (HEK293) transient expression system. Use His-tag or Strep-tag for parallel purification via 96-well plate format.

- Fitness Assay: Perform a quantitative, high-throughput assay. Examples:

- Binding Affinity: Biolayer Interferometry (BLI) or Surface Plasmon Resonance (SPR) in a multiplexed format.

- Thermal Stability: Differential scanning fluorimetry (nanoDSF) in 384-well plates.

- Function: A coupled enzymatic assay or cell-based reporter assay (FACS if applicable).

- Data Curation: Compile sequence-fitness pairs into a standardized dataset. Normalize fitness scores across plates.
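The cross-plate normalization in the Data Curation step can be sketched as follows. This is a minimal illustration, assuming each plate carries wild-type control wells and that fitness is reported relative to the mean control signal on the same plate; the record keys (`plate`, `variant`, `signal`) and the control label `"WT"` are hypothetical names, not part of the protocol.

```python
from statistics import mean

def normalize_by_plate(records, control_id="WT"):
    """Scale each variant signal by the mean control signal on its own plate.

    records: list of dicts with keys 'plate', 'variant', 'signal' (assumed layout).
    Returns one dict per non-control well with a relative 'fitness' value.
    """
    by_plate = {}
    for r in records:
        by_plate.setdefault(r["plate"], []).append(r)

    normalized = []
    for plate, rows in by_plate.items():
        controls = [r["signal"] for r in rows if r["variant"] == control_id]
        if not controls:
            raise ValueError(f"plate {plate} has no {control_id} control wells")
        reference = mean(controls)
        for r in rows:
            if r["variant"] != control_id:
                normalized.append({
                    "plate": plate,
                    "variant": r["variant"],
                    "fitness": r["signal"] / reference,
                })
    return normalized

example = [
    {"plate": 1, "variant": "WT", "signal": 100.0},
    {"plate": 1, "variant": "A45G", "signal": 200.0},
    {"plate": 2, "variant": "WT", "signal": 50.0},
    {"plate": 2, "variant": "L12F", "signal": 100.0},
]
dataset = normalize_by_plate(example)
```

Other schemes (per-plate z-scores, or a fitted plate effect) may suit assays with strong edge or drift effects better; the ratio to wild type is used here only because it is easy to read.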

B. AI/BO Model Training & Prediction

- Feature Representation: Encode protein variants into a numerical feature vector.

- Options: One-hot encoding, learned embeddings from Protein Language Models (ESM-2), or physicochemical property vectors.

- Model Selection: Choose a probabilistic surrogate model.

- Standard: Gaussian Process (GP) with a kernel suited for biological sequences (e.g., Hamming kernel, Tanimoto kernel).

- Advanced: Deep kernel learning, Bayesian Neural Network, or ensemble of models.

- Training: Train the surrogate model on the accumulated dataset to learn the sequence-fitness mapping.

- Acquisition Function Optimization: Use the trained model to score the vast unexplored sequence space via an acquisition function.

- Function: Expected Improvement (EI), Upper Confidence Bound (UCB), or Knowledge Gradient.

- Search: Perform a global optimization over the acquisition function (using evolutionary algorithms or gradient-based methods if differentiable) to propose the next batch of sequences (n=10-50) for experimental testing.
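Step B can be illustrated with a small, dependency-free sketch: a zero-mean Gaussian Process over sequences with an exponentiated Hamming-distance kernel, followed by UCB ranking of a candidate list. The class and function names (`SequenceGP`, `propose_batch`), the kernel length scale, and the noise level are assumptions chosen for illustration; a real campaign would more likely use a library such as BoTorch or GPyTorch and a proper optimizer over the acquisition function.

```python
import math

def hamming_kernel(s1, s2, length_scale=2.0):
    """k(s1, s2) = exp(-d_H / length_scale) for equal-length sequences."""
    d = sum(a != b for a, b in zip(s1, s2))
    return math.exp(-d / length_scale)

def cholesky(A):
    """Lower-triangular L with L L^T = A (A symmetric positive definite)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(A[i][i] - s) if i == j else (A[i][j] - s) / L[j][j]
    return L

def forward_sub(L, b):
    """Solve L z = b."""
    z = []
    for i, row in enumerate(L):
        z.append((b[i] - sum(row[k] * z[k] for k in range(i))) / row[i])
    return z

def backward_sub(L, z):
    """Solve L^T x = z."""
    n = len(z)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (z[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

class SequenceGP:
    """Exact GP regression on sequences; fitness is centered on its mean."""

    def __init__(self, seqs, fitness, noise=1e-2):
        self.seqs = list(seqs)
        self.y_mean = sum(fitness) / len(fitness)
        K = [[hamming_kernel(a, b) + (noise if i == j else 0.0)
              for j, b in enumerate(self.seqs)]
             for i, a in enumerate(self.seqs)]
        self.L = cholesky(K)
        centered = [y - self.y_mean for y in fitness]
        self.alpha = backward_sub(self.L, forward_sub(self.L, centered))

    def predict(self, seq):
        """Posterior mean and standard deviation of the latent fitness."""
        k_star = [hamming_kernel(seq, s) for s in self.seqs]
        mu = self.y_mean + sum(k * a for k, a in zip(k_star, self.alpha))
        v = forward_sub(self.L, k_star)
        var = hamming_kernel(seq, seq) - sum(x * x for x in v)
        return mu, math.sqrt(max(var, 0.0))

def propose_batch(gp, candidates, batch_size, beta=4.0):
    """Rank candidates by UCB = mu + sqrt(beta) * sigma and keep the top ones."""
    def ucb(seq):
        mu, sigma = gp.predict(seq)
        return mu + math.sqrt(beta) * sigma
    return sorted(candidates, key=ucb, reverse=True)[:batch_size]

gp = SequenceGP(["AAAA", "AAAC", "CCCC"], [1.0, 2.0, 0.0])
next_batch = propose_batch(gp, ["AAAW", "WWWW", "AACC"], batch_size=2)
```

Enumerating and sorting candidates only works for small candidate sets; for the large spaces described above, the ranking step would be replaced by the evolutionary or gradient-based search named in the Search item.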

C. Experimental Validation & Loop Closure

- Proposed Variant Synthesis & Testing: Synthesize and test the proposed batch using the protocols in Step A.

- Dataset Update: Append the new experimental results to the growing master dataset.

- Iteration: Return to Step B. Continue until a performance threshold is met or resources are exhausted.
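The loop closure in Step C, including both stopping conditions (performance threshold met or budget exhausted), can be sketched as a small driver function. Everything here is a stand-in: `toy_assay` replaces synthesis and wet-lab measurement, and `toy_propose` replaces the surrogate model and acquisition step with random single-site mutations of the current best sequence.

```python
import random

def run_campaign(initial_data, propose, assay, threshold, max_rounds):
    """Repeat propose -> assay -> append until threshold or round budget is hit.

    initial_data: dict mapping sequence -> measured fitness (Round 0 dataset).
    propose: callable taking the dataset and returning candidate sequences.
    assay: callable taking a sequence and returning its measured fitness.
    """
    data = dict(initial_data)                    # master dataset
    rounds_run = 0
    while rounds_run < max_rounds and max(data.values()) < threshold:
        batch = [s for s in propose(data) if s not in data]
        if not batch:                            # nothing new to test
            break
        for seq in batch:
            data[seq] = assay(seq)               # dataset update
        rounds_run += 1
    return data, rounds_run

# Toy stand-ins, for illustration only.
_rng = random.Random(0)

def toy_assay(seq):
    """Hypothetical fitness: number of tryptophans in the sequence."""
    return float(seq.count("W"))

def toy_propose(data, batch_size=5):
    """Hypothetical proposer: random point mutants of the current best."""
    best = max(data, key=data.get)
    batch = set()
    for _ in range(batch_size):
        pos = _rng.randrange(len(best))
        aa = _rng.choice("ACDEFGHIKLMNPQRSTVWY")
        batch.add(best[:pos] + aa + best[pos + 1:])
    return sorted(batch)

final_data, rounds = run_campaign(
    {"AAAA": 0.0}, toy_propose, toy_assay, threshold=4.0, max_rounds=3
)
```

Passing the proposer and assay as callables keeps the loop itself independent of the surrogate model and of the laboratory platform, which is one reasonable way to structure such a pipeline.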

Visualizing the Workflow and Logical Framework

Diagram 1: AI-Powered Bayesian Optimization Cycle for Protein Engineering

Diagram 2: BO Logic: From Prior Belief to Next Experiment

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for AI-BO Driven Protein Engineering

| Item | Category | Function & Rationale |

|---|---|---|

| Oligo Pools (Twist Bioscience, Agilent) | Gene Synthesis | Enables cost-effective synthesis of thousands of designed variant sequences in parallel for initial library and subsequent batches. |

| Golden Gate or Gibson Assembly Mixes (NEB) | Molecular Biology | Modular, high-efficiency assembly of gene fragments from oligo pools into expression vectors. |

| HEK293 Expi or Freestyle System (Thermo Fisher) | Protein Expression | Robust mammalian expression platform for secreted or complex proteins requiring post-translational modifications. |

| HisTrap FF Crude / StrepTactin XT 96-Well Plates (Cytiva) | Protein Purification | Parallel, miniaturized purification of His- or Strep-tagged variants for high-throughput characterization. |

| Octet RED96e / Pioneer SPR (Sartorius, Cytiva) | Binding Assay | Label-free, high-throughput kinetic binding analysis (ka, kd, KD) for 96-384 variants per run. |

| Prometheus Panta (NanoTemper) | Stability Assay | Automated nanoDSF for simultaneous measurement of thermal (Tm) and colloidal stability in 48- or 96-well format. |

| ESM-2 or ProtGPT2 (Hugging Face) | AI/ML Tool | Pre-trained protein language models for generating meaningful sequence embeddings and guiding initial library design. |

| BoTorch / AX Platform (PyTorch, Meta) | AI/ML Tool | Open-source libraries for implementing state-of-the-art Bayesian optimization and adaptive experimentation. |

Building the Navigator: A Step-by-Step Guide to AI-BO Pipelines for Protein Design

This technical guide details a pipeline architecture for navigating protein fitness landscapes, framed within a broader thesis on AI-powered Bayesian optimization. The pipeline transforms raw protein sequence data into optimized, high-fitness variants, accelerating therapeutic protein and enzyme engineering. It integrates computational design, high-throughput experimental validation, and iterative model refinement.

Core Pipeline Architecture

High-Level Workflow

The pipeline is a closed-loop, multi-stage system designed for efficiency and rapid learning.

Key Quantitative Benchmarks (Recent Studies)

The following table summarizes performance metrics from recent, high-impact studies employing similar AI-driven pipelines.

Table 1: Performance Metrics of AI-Driven Protein Engineering Pipelines

| Study (Year) | Target Protein | Library Size Tested | Fitness Improvement (Fold) | Rounds of Optimization | Key Model |

|---|---|---|---|---|---|

| Hie et al. (2023) | SARS-CoV-2 Antibody | ~40,000 | 20x (binding) | 2 | Bayesian Neural Network |

| Wu et al. (2024) | Thermostable Enzyme | ~10,000 | 15x (half-life) | 3 | Gaussian Process (GP) |

| Notin et al. (2024) | Fluorescent Protein | ~50,000 | 5x (intensity) | 1 | Deep Ensembles + GP |

Source: Compiled from recent literature search (2023-2024).

Detailed Experimental Protocols

Protocol A: Library Construction & Deep Mutational Scanning (DMS)

This protocol generates the initial training data for the Bayesian model.

Objective: To empirically measure fitness (e.g., binding affinity, enzymatic activity) for a diverse set of sequence variants.

Materials & Steps:

- Gene Library Synthesis: Using nicking mutagenesis or pooled oligo synthesis, generate a plasmid library encoding (10^4 - 10^5) variants, focusing on targeted positions.

- Yeast or Phage Display: Clone library into display vector. For binding proteins, use FACS after staining with fluorescently labeled antigen.

- Sorting & Sequencing: Perform 1-3 rounds of selection under stringent conditions. Isolate DNA from pre-sort (input) and high-fitness (output) populations.

- High-Throughput Sequencing: Use Illumina MiSeq/NovaSeq to sequence pooled samples. Minimum recommended depth: 500x library diversity.

- Fitness Score Calculation: Enrichment scores ( \epsilon_v ) for variant (v) are computed as: [ \epsilon_v = \log_2 \left( \frac{\text{count}_{v}^{\text{output}} / \text{total}^{\text{output}}}{\text{count}_{v}^{\text{input}} / \text{total}^{\text{input}}} \right) ] Normalize scores across replicates.
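The enrichment score from the Fitness Score Calculation step can be computed directly from read counts. The sketch below follows the formula as stated; the `pseudocount` argument is an added assumption (not part of the protocol) that keeps the logarithm finite for variants with zero reads in the output pool.

```python
import math

def enrichment_scores(input_counts, output_counts, pseudocount=0.5):
    """log2 of (output frequency / input frequency) for every input variant.

    input_counts, output_counts: dicts mapping variant -> read count.
    pseudocount: assumed smoothing term added to each variant count.
    """
    total_in = sum(input_counts.values())
    total_out = sum(output_counts.values())
    scores = {}
    for variant, c_in in input_counts.items():
        c_out = output_counts.get(variant, 0)
        freq_in = (c_in + pseudocount) / total_in
        freq_out = (c_out + pseudocount) / total_out
        scores[variant] = math.log2(freq_out / freq_in)
    return scores

scores = enrichment_scores(
    {"A45G": 100, "L12F": 100},
    {"A45G": 300, "L12F": 100},
    pseudocount=0.0,
)
# L12F drops from 50% of input reads to 25% of output reads: log2(0.5) = -1.0
```

Replicate normalization is not shown; a common choice is to compute scores per replicate and then average or center them, but the protocol does not specify the method.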

Protocol B: AI-Guided Iterative Design & Validation

This protocol details the closed-loop optimization phase.

Objective: To use a Bayesian optimization model to propose new variant libraries with predicted higher fitness.

Materials & Steps:

- Model Initialization: Train a Gaussian Process (GP) or Bayesian Neural Network (BNN) on the DMS dataset from Protocol A. The model maps sequence (encoded as a feature vector) to a predicted fitness (\hat{f}) and uncertainty (\sigma).

- Acquisition Function Maximization: Calculate an acquisition score (e.g., Expected Improvement, EI) for a vast in-silico library ((>10^6) candidates): [ \text{EI}(x) = (\hat{f}(x) - f^* - \xi)\Phi(Z) + \sigma(x)\phi(Z), \quad Z = \frac{\hat{f}(x) - f^* - \xi}{\sigma(x)} ] where (f^*) is the best observed fitness, (\Phi) and (\phi) are the CDF and PDF of the standard normal distribution, and (\xi) is an exploration parameter.

- Design & Synthesis: Select the top 96-384 candidates from the acquisition function for synthesis (e.g., via arrayed oligo synthesis and Golden Gate assembly).

- Validation: Express and purify variants individually. Measure fitness using a gold-standard assay (e.g., SPR for (K_D), HPLC for enzyme (k_{cat}/K_M)).

- Model Update: Augment the training dataset with new experimental results. Retrain the model and repeat from the Acquisition Function Maximization step for 3-5 rounds.
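The Expected Improvement formula and the top-candidate selection in Protocol B can be sketched with the standard library alone. The function names and the `predictions` layout (sequence mapped to a `(mean, std)` pair from the surrogate) are assumptions for illustration; zero-variance candidates are assigned an EI of zero, treating them as already known.

```python
import math

def norm_pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def norm_cdf(z):
    """Standard normal cumulative distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, f_best, xi=0.01):
    """EI(x) = (mu - f* - xi) * Phi(Z) + sigma * phi(Z), Z = (mu - f* - xi) / sigma."""
    if sigma <= 0.0:
        return 0.0
    improvement = mu - f_best - xi
    z = improvement / sigma
    return improvement * norm_cdf(z) + sigma * norm_pdf(z)

def select_top_candidates(predictions, f_best, k, xi=0.01):
    """Return the k sequences with the highest EI.

    predictions: dict mapping sequence -> (predicted mean, predicted std).
    """
    def ei(seq):
        mu, sigma = predictions[seq]
        return expected_improvement(mu, sigma, f_best, xi)
    return sorted(predictions, key=ei, reverse=True)[:k]

predictions = {
    "AAAC": (1.2, 0.1),   # confident, slightly above the incumbent
    "AAWC": (0.9, 0.8),   # uncertain, below the incumbent on average
    "CCCC": (0.2, 0.05),  # confident and poor
}
batch = select_top_candidates(predictions, f_best=1.0, k=2)
```

Larger values of `xi` shift the ranking toward uncertain candidates; the default of 0.01 is a commonly used starting point rather than a recommendation from the protocol.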

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for AI-Driven Protein Engineering Pipeline

| Item | Function | Example Product/Category |

|---|---|---|

| Pooled Gene Library | Provides the initial diverse sequence space for model training. | Twist Bioscience Gene Fragments; Custom trinucleotide mutagenesis kits. |

| Display System | Links genotype to phenotype for high-throughput screening. | pYD1 Yeast Display Vector; T7Select Phage Display System. |

| FACS Machine | Enables quantitative sorting of cells/particles based on fitness. | BD FACSAria III; Sony SH800S Cell Sorter. |

| NGS Platform | Quantifies variant enrichment in pooled selections. | Illumina MiSeq (for validation); NovaSeq (for large libraries). |

| Automated Cloning System | Enables high-throughput, error-free construction of AI-proposed variants. | Opentrons OT-2 + Golden Gate Assembly MoClo Toolkit. |

| Microplate Bioreactor | For parallel, controlled protein expression of 24-96 variants. | Sartorius ambr 250 HT. |

| Label-Free Biosensor | Provides gold-standard kinetic characterization of purified leads. | Sartorius Octet RED96e (BLI); Cytiva Biacore 8K (SPR). |

Bayesian Optimization Core Logic

The Bayesian optimization loop is the intelligence engine of the pipeline.

Integrated Computational-Experimental Pipeline

This final diagram shows the complete integration of computational and physical workflows.

In the high-dimensional, data-scarce, and computationally expensive domain of protein engineering, Bayesian Optimization (BO) has emerged as a powerful framework for navigating fitness landscapes. The core of BO is the surrogate model, which probabilistically approximates the unknown function mapping protein sequences or structures to a fitness metric (e.g., binding affinity, thermostability, catalytic activity). The choice and training of this model directly dictate the efficiency and success of the optimization campaign. This whitepaper provides an in-depth technical comparison between the two dominant paradigms: Gaussian Processes (GPs) and Deep Neural Networks (DNNs), contextualized within AI-powered BO for protein fitness research.

Foundational Concepts: GPs and DNNs as Surrogates

Gaussian Processes (GPs): A GP defines a distribution over functions, characterized fully by a mean function and a kernel (covariance) function. It provides principled uncertainty estimates, which the acquisition function in BO needs in order to balance exploration and exploitation. Because GPs are non-parametric and tend to remain well calibrated on small datasets (<1000 datapoints), they are well suited to early-stage campaigns.

Deep Neural Networks (DNNs): DNNs are parametric, flexible function approximators. As surrogates, they can model complex, high-dimensional interactions in sequence data but typically lack inherent uncertainty quantification. Modern approaches pair DNNs with techniques like deep ensembles, Monte Carlo dropout, or Bayesian neural networks to estimate predictive uncertainty, making them suitable for data-rich regimes.

Quantitative Comparison of Model Attributes

The following tables summarize the core technical and practical differences.

Table 1: Core Algorithmic & Performance Characteristics

| Characteristic | Gaussian Process (GP) | Deep Neural Network (DNN) |

|---|---|---|

| Model Type | Non-parametric, probabilistic | Parametric, deterministic (with uncertainty add-ons) |

| Data Efficiency | Excellent (< 1k samples) | Poor to moderate; requires large datasets (> 5k samples) |

| Scalability | Poor: exact training scales as O(N³) in the number of observations N | Excellent: inference cost is independent of training-set size |

| Native Uncertainty | Full predictive posterior (mean & variance) | Point estimate; requires add-on methods (e.g., ensembles, MC dropout) for uncertainty |

| Input Flexibility | Requires hand-crafted features/kernels | Can ingest raw sequences (e.g., via embeddings) |

| Handling High-Dim Data | Struggles; kernel design becomes critical | Excels at automated feature extraction |

| Optimization Landscape | Closed-form marginal likelihood, maximized over kernel hyperparameters by gradient-based methods | Non-convex, stochastic gradient-based optimization |

Table 2: Performance in Recent Protein Fitness Benchmark Studies (2023-2024)

| Benchmark / Study | Top-Performing GP Approach | Top-Performing DNN Approach | Key Metric (AUC/Regret) | Data Scale |

|---|---|---|---|---|

| GB1 (4-site variant) | Matern-5/2 Kernel + ARD | CNN + Deep Ensemble | DNN: 0.92 AUC vs GP: 0.88 AUC | ~8k variants |

| AVGFP (Deep Mutation) | Spectral Mixture Kernel GP | Transformer (ProteinBERT) + MC Dropout | DNN: RMSE 0.15 vs GP: RMSE 0.21 | ~50k variants |

| β-Lactamase (Tawfik) | Sparse Variational GP | LSTM + Bayesian NN | Comparable performance post ~5k rounds | ~20k variants |

| Computational Cost | ~40 GPU-hrs for 10k data | ~120 GPU-hrs for training, ~2 GPU-hrs for inference | N/A | N/A |

Experimental Protocols for Model Training and Evaluation

Protocol for Training a GP Surrogate for Protein Sequences

- Feature Representation: Convert protein variant sequences (e.g., single-point mutants) into a numerical feature vector. Common methods include:

- One-hot encoding of mutations in a wild-type backbone.

- Physicochemical property vectors (e.g., AAindex) per residue.

- Learned embeddings from a pre-trained protein language model (e.g., ESM-2).

- Kernel Selection & Composition: Choose a base kernel (e.g., Matern-5/2) and combine using addition/multiplication to capture complex patterns. Automatic Relevance Determination (ARD) is critical.

- Model Training: Maximize the log marginal likelihood L = log p(y | X, θ) with respect to kernel hyperparameters θ (length-scales, variance) using a gradient-based optimizer (e.g., L-BFGS or conjugate gradients).

- Uncertainty Calibration: Validate the predicted standard deviations by computing calibration curves on a held-out set.
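The Uncertainty Calibration step can be sketched as an interval-coverage check: for each nominal confidence level, count how many held-out measurements fall inside the corresponding central Gaussian interval around the predicted mean. The function name and the chosen levels are assumptions; a well-calibrated model gives empirical coverage close to each nominal level.

```python
from statistics import NormalDist

def calibration_curve(y_true, mu, sigma, levels=(0.5, 0.8, 0.95)):
    """Empirical coverage of central Gaussian prediction intervals.

    y_true: held-out measured fitness values.
    mu, sigma: predicted means and standard deviations for the same variants.
    Returns a list of (nominal level, observed fraction inside the interval).
    """
    curve = []
    for level in levels:
        # Half-width of the central interval in units of sigma.
        z = NormalDist().inv_cdf(0.5 + level / 2.0)
        inside = sum(abs(y - m) <= z * s for y, m, s in zip(y_true, mu, sigma))
        curve.append((level, inside / len(y_true)))
    return curve

curve = calibration_curve(
    y_true=[0.0, 1.0, 5.0],
    mu=[0.0, 0.0, 0.0],
    sigma=[1.0, 1.0, 1.0],
)
```

Observed coverage well below the nominal level suggests overconfident uncertainties, and coverage well above it suggests underconfident ones; either can mislead the acquisition function.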

Protocol for Training a DNN Surrogate with Uncertainty

- Architecture Selection: Choose a sequence-aware architecture:

- CNN: For local motif interactions.

- LSTM/GRU: For capturing long-range dependencies.

- Transformer: For state-of-the-art context awareness.

- Uncertainty Method Integration: Implement one of:

- Deep Ensembles: Train M (e.g., 5) models with different random initializations. Predictive mean and variance are the ensemble statistics.

- MC Dropout: Enable dropout at test time. Perform T (e.g., 30) stochastic forward passes; variance of predictions quantifies uncertainty.

- Training Regime: Use a negative log-likelihood loss to jointly train for mean and variance. Employ early stopping on a validation set to prevent overfitting.

- Bayesian Optimization Loop Integration: The acquisition function (e.g., Expected Improvement) uses the DNN's predictive mean and the learned uncertainty estimate.
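Two pieces of the DNN protocol lend themselves to a short sketch: the Gaussian negative log-likelihood used to train a network that outputs both a mean and a variance, and the combination of M ensemble members into one predictive mean and variance. The network itself is omitted; the functions below operate on the per-member outputs. Treating the ensemble as a uniform mixture of Gaussians is one common convention and is an assumption here, since the protocol only says "ensemble statistics".

```python
import math
from statistics import fmean

def gaussian_nll(y, mean, var):
    """Negative log-likelihood of y under N(mean, var); the per-sample training loss."""
    return 0.5 * (math.log(2.0 * math.pi * var) + (y - mean) ** 2 / var)

def ensemble_predict(member_means, member_vars):
    """Combine M Gaussian predictions as a uniform mixture.

    Returns the mixture mean and variance. The variance includes both the
    average predicted noise and the disagreement between members.
    """
    mu = fmean(member_means)
    second_moment = fmean(v + m * m for m, v in zip(member_means, member_vars))
    return mu, second_moment - mu * mu

# Two members that disagree (means 1.0 and 3.0), each predicting variance 0.5.
mu, var = ensemble_predict([1.0, 3.0], [0.5, 0.5])
sigma = math.sqrt(var)   # standard deviation passed to the acquisition function
```

For MC dropout, the same `ensemble_predict` call can be applied to the outputs of the T stochastic forward passes, with each pass playing the role of one member.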

Visualization of Key Workflows and Relationships

Diagram 1: Bayesian Optimization Loop with Surrogate Choice

Diagram 2: DNN vs GP Surrogate Training Pathways

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Resources for Surrogate Modeling

| Item / Reagent | Function in Research | Example (Source) |

|---|---|---|

| BO Framework Library | Provides backbone for optimization loop, acquisition functions, and model integration. | BoTorch (PyTorch-based), Trieste (TensorFlow-based), Dragonfly |

| GP Implementation | Efficient, scalable GP regression with advanced kernels. | GPyTorch, scikit-learn (GaussianProcessRegressor), GPflow |

| Deep Learning Framework | Flexible platform for building, training, and deploying custom DNN surrogate models. | PyTorch, TensorFlow/Keras, JAX |

| Uncertainty Quantification Library | Implements methods for adding uncertainty estimates to DNNs. | TorchUncertainty, Uncertainty Baselines, TensorFlow Probability |

| Protein Representation Tool | Converts protein sequences into machine-learnable features or embeddings. | ESM (Evolutionary Scale Modeling) by Meta, ProtTrans, proteinshake |

| Benchmark Dataset | Standardized protein fitness data for training and benchmarking models. | ProteinGym (Harvard), TAPE (Stanford), Fitness Landscape Data Repository |

| High-Performance Computing (HPC) / Cloud GPU | Essential for training large DNNs or GPs on thousands of variants. | NVIDIA A100/A6000 GPUs, Google Cloud TPUs, AWS EC2 (g5/p4 instances) |

Within the broader thesis on AI-powered Bayesian optimization (BO) for protein fitness landscapes, the acquisition function is the decision-making engine. Protein engineering is a high-dimensional, noisy, and expensive experimental problem; each round of wet-lab characterization (e.g., deep mutational scanning, phage display) consumes significant resources. The Gaussian Process (GP) surrogate model provides a probabilistic belief over the uncharted fitness landscape. The acquisition function uses this belief to mathematically formalize the trade-off between exploring uncertain regions (which may hide superior mutants) and exploiting known high-fitness regions. Its design is paramount for efficiently navigating vast sequence space to discover therapeutic proteins, enzymes, or antibodies with desired properties.

Core Mathematical Principles of Acquisition

The acquisition function, denoted α(x|D), is computed from the GP posterior mean μ(x) and variance σ²(x) given observed data D. We aim to find the next query point x_next = argmax_x α(x|D). Key designs include:

Probability of Improvement (PI): Focuses on the chance of exceeding a current target τ (e.g., the best observed fitness f(x^+)).

α_PI(x) = Φ((μ(x) - τ - ξ) / σ(x))

where Φ is the CDF of the standard normal, and ξ is a small exploration parameter.

Expected Improvement (EI): Quantifies the magnitude of improvement expected over τ.

α_EI(x) = (μ(x) - τ - ξ) Φ(Z) + σ(x) φ(Z) if σ(x) > 0, and 0 otherwise, with Z = (μ(x) - τ - ξ) / σ(x)

where φ is the PDF of the standard normal. EI is arguably the most widely used criterion.

Upper Confidence Bound (UCB/GP-UCB): Uses an explicit confidence parameter β_t to balance mean and variance.

α_UCB(x) = μ(x) + β_t^(1/2) σ(x)

β_t often follows a theoretical schedule to guarantee no-regret convergence.

Knowledge Gradient (KG): Considers the expected value of the posterior mean after the next evaluation, not just the immediate sample value, making it a one-step look-ahead.

Entropy Search/Predictive Entropy Search (ES/PES): Aims to maximize the information gain about the location of the global optimum x*, directly reducing uncertainty about the optimum's identity.
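The different biases of these criteria show up even on two candidates. The sketch below implements PI and UCB as defined above and applies them to a confident candidate just above the target and an uncertain candidate below it. The candidate values, `xi`, and `beta` are illustrative assumptions.

```python
import math

def norm_cdf(z):
    """Standard normal cumulative distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def probability_of_improvement(mu, sigma, tau, xi=0.01):
    """PI(x) = Phi((mu - tau - xi) / sigma)."""
    if sigma <= 0.0:
        return 1.0 if mu - tau - xi > 0.0 else 0.0
    return norm_cdf((mu - tau - xi) / sigma)

def upper_confidence_bound(mu, sigma, beta=4.0):
    """UCB(x) = mu + sqrt(beta) * sigma."""
    return mu + math.sqrt(beta) * sigma

# (posterior mean, posterior std) for two hypothetical variants; tau = 1.0
candidates = {
    "confident": (1.05, 0.01),   # almost certainly a small gain
    "uncertain": (0.80, 0.50),   # probably worse, possibly much better
}
tau = 1.0

pick_pi = max(candidates, key=lambda c: probability_of_improvement(*candidates[c], tau))
pick_ucb = max(candidates, key=lambda c: upper_confidence_bound(*candidates[c]))
# pick_pi is "confident" (PI close to 1 versus about 0.34);
# pick_ucb is "uncertain" (UCB of 1.80 versus 1.07).
```

PI rewards any improvement equally, however small, which is why it tends to refine known good regions; UCB with a large β_t rewards uncertainty directly and so samples further afield.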

Quantitative Comparison of Acquisition Functions

Table 1: Comparative Analysis of Common Acquisition Functions

| Function | Exploration Bias | Exploitation Bias | Computational Cost | Handles Noise | Common Use in Protein Design |

|---|---|---|---|---|---|

| Probability of Improvement (PI) | Low (requires tuning ξ) | Very High | Low | Poor | Low; prone to over-exploitation. |

| Expected Improvement (EI) | Medium (tunable via ξ) | High | Low | Good (with noise models) | Very High; robust default choice. |

| GP-UCB | Explicitly tunable via β_t | Explicitly tunable via β_t | Low | Good | High; theoretical guarantees useful for benchmarking. |

| Knowledge Gradient (KG) | High | Medium | High (requires inner optimization) | Good | Medium; used for very expensive, final-step optimization. |

| Entropy Search (ES) | Very High (targets optimum info.) | Indirect | Very High (approx. of p(x*)) | Moderate | Growing; for fundamental landscape mapping. |

Table 2: Recent Benchmark Performance on Protein Sequence Data (Synthetic Landscapes)

| Study (Year) | Landscape Model | Top Performers (Ranked) | Regret Reduction vs. Random (%) | Key Insight |

|---|---|---|---|---|

| Stanton et al. (2022) | GB1, GFP Variants | EI, q-EI (batched) | 68-72% | Batched EI via fantasy sampling is critical for parallel wet-lab experiments. |

| Greenman et al. (2023) | Avidian (in silico) | GP-UCB, PES | 75%, 78% | UCB excels in early rounds; PES excels with larger budgets for precise optimum identification. |

| Unattributed source (2024) | AAV Capsid Library | Noisy EI, TuRBO-UCB | ~81% | Hybrid local-global approaches (TuRBO) with UCB dominate high-dimensional (>>20aa) screens. |

Experimental Protocols for Acquisition Function Validation

Protocol 4.1: In-silico Benchmarking on Empirical Fitness Landscapes

- Data Curation: Obtain a high-quality, experimentally characterized dataset (e.g., deep mutational scanning of an antibody domain or protease). Split data into a sparse initial training set (D_init, ~10-20 variants) and a held-out full landscape.

- Surrogate Modeling: Fit a GP model with a chosen kernel (e.g., additive Matern-5/2) to D_init. Standardize fitness values.

- Acquisition Loop: For i = 1 to N_iterations:

a. Compute α(x) for all candidate sequences in the held-out set (or a sampled subset for large spaces).

b. Select x_next = argmax α(x).

c. "Query" the held-out data to obtain the true (noisy) fitness value f(x_next).

d. Augment training data: D = D ∪ {(x_next, f(x_next))}.

e. Retrain/update the GP model.

- Metric Tracking: Record Simple Regret (best found vs. global optimum) and Inference Regret (posterior belief vs. optimum) per iteration. Repeat with multiple random D_init seeds.
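The simple-regret metric in the Metric Tracking step can be computed from the sequence of fitness values returned by the acquisition loop. The sketch below covers only that metric and its average over repeated runs; inference regret is omitted because it needs the surrogate model's posterior. Function names are assumptions.

```python
def simple_regret_trace(queried_fitness, global_optimum, initial_best):
    """Simple regret after each iteration: global optimum minus best value found so far.

    queried_fitness: fitness values in the order the loop queried them.
    initial_best: best fitness in the initial training set D_init.
    """
    best = initial_best
    trace = []
    for f in queried_fitness:
        best = max(best, f)
        trace.append(global_optimum - best)
    return trace

def mean_trace(traces):
    """Average regret per iteration across repeats (traces of equal length)."""
    return [sum(column) / len(column) for column in zip(*traces)]

# Two hypothetical repeats with different D_init seeds on a landscape whose optimum is 1.0.
run_a = simple_regret_trace([0.2, 0.9, 0.5], global_optimum=1.0, initial_best=0.1)
run_b = simple_regret_trace([0.6, 0.6, 1.0], global_optimum=1.0, initial_best=0.3)
average = mean_trace([run_a, run_b])
```

Because the best-so-far value never decreases, each trace is non-increasing, which makes curves from different acquisition functions straightforward to compare on one plot.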

Protocol 4.2: Wet-lab Validation Cycle for Directed Evolution

- Library Design & Initial Screen: Generate a diverse initial library (~50-100 variants) via error-prone PCR or site-saturation mutagenesis. Measure fitness (e.g., binding affinity via yeast display, enzymatic activity).

- Bayesian Optimization Setup: Encode protein variants (e.g., one-hot, physicochemical, or learned embeddings). Train initial GP model.

- Batched Acquisition: Use a batched method (e.g., q-EI via sequential conditioning) to select a batch of 5-10 variants for the next round of synthesis and testing. This accommodates parallel experimental pipelines.

- Iterative Rounds: Synthesize genes, express and purify proteins, and assay for fitness. Update the GP model with new data. Continue for 3-5 rounds.

- Final Validation: Isolate top-predicted variants from the final model for thorough biophysical characterization (SPR, thermal stability, specificity assays).
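Batch selection for parallel wet-lab rounds can be approximated without refitting the model. The sketch below is not q-EI with sequential conditioning; it is a simpler greedy stand-in that takes candidates in order of acquisition value and skips any that lie within a minimum Hamming distance of a variant already in the batch. The function names and the distance threshold are assumptions.

```python
def hamming(a, b):
    """Number of differing positions between two equal-length sequences."""
    return sum(x != y for x, y in zip(a, b))

def greedy_diverse_batch(scores, batch_size, min_distance=2):
    """Pick high-scoring sequences that are mutually at least min_distance apart.

    scores: dict mapping sequence -> acquisition value (e.g., EI).
    """
    batch = []
    for seq in sorted(scores, key=scores.get, reverse=True):
        if all(hamming(seq, chosen) >= min_distance for chosen in batch):
            batch.append(seq)
        if len(batch) == batch_size:
            break
    return batch

scores = {"AAAA": 1.0, "AAAC": 0.9, "CCAA": 0.8, "WWWW": 0.1}
batch = greedy_diverse_batch(scores, batch_size=2)
# "AAAC" is skipped because it is one mutation away from "AAAA".
```

The distance constraint mimics one effect of sequential conditioning, namely that near-duplicates of an already selected variant add little information, but it ignores the model's actual posterior correlations.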

Visualizing the Decision Logic and Workflow

Diagram 1: Bayesian Optimization Cycle for Protein Design

Diagram 2: Acquisition Function Decision Biases

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for BO-Driven Protein Fitness Experiments

| Item / Reagent | Function in Protocol | Example Product / Method |

|---|---|---|

| Diversity Generation | Creates initial variant library for GP training. | NEBuilder HiFi DNA Assembly, Twist Bioscience oligo pools, error-prone PCR kits. |

| High-Throughput Phenotyping | Provides fitness data (f(x)) for GP regression. | Yeast Surface Display (for affinity), Flow Cytometry; Phage Display; Microfluidic Droplet Sorters. |

| Fitness Assay Reagents | Enables quantitative measurement of protein function. | Anti-tag antibodies (FITC-conjugated for FACS), Fluorogenic enzyme substrates, Biotinylated target antigens. |

| Gene Synthesis & Cloning | Enables synthesis of acquisition-selected variants. | Twist Gene Fragments, IDT gBlocks, Golden Gate Assembly kits. |

| Expression & Purification | Produces protein for validation assays. | E. coli or HEK293 expression systems, Ni-NTA or Anti-FLAG magnetic beads for purification. |

| Validation Assays | Confirms top variant properties beyond primary screen. | Surface Plasmon Resonance (Biacore) for kinetics, Differential Scanning Fluorimetry (nanoDSF) for stability. |

| BO Software Pipeline | Encodes variants, runs GP, calculates acquisition. | BoTorch, GPyTorch, Dionis (custom Python libraries on high-performance computing clusters). |

Advanced Strategies & Future Directions

Modern protein design problems demand extensions to standard acquisition:

- High-Dimensional & Combinatorial Spaces: Methods like TuRBO (trust-region BO) use a local GP model within a trust region, adapting its size, often paired with UCB. This tackles the curse of dimensionality in full-sequence design.

- Multi-Fidelity & Multi-Objective Acquisition: Uses cheaper, noisy assays (e.g., cell-free expression yield) as a low-fidelity proxy for expensive, high-fidelity assays (e.g., in vivo efficacy). Functions like qMF-MES (Multi-Fidelity Max-Value Entropy Search) are emerging.

- Incorporating Biological Priors: Acquisition can be weighted by sequence plausibility scores from protein language models (e.g., ESM-2), balancing Bayesian improvement with "naturalness."
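One way to weight acquisition by sequence plausibility is to scale the acquisition value by a factor derived from a language-model log-likelihood. The sketch below is a hypothetical scheme, not a published method: candidates at least as plausible as the wild type keep their full score, and less plausible ones are discounted exponentially. The log-likelihood values are placeholders for whatever a model such as ESM-2 would return.

```python
import math

def prior_weighted_score(acq_value, seq_log_likelihood, wt_log_likelihood, weight=1.0):
    """Discount an acquisition value for sequences less plausible than wild type.

    weight: assumed tuning parameter; 0 disables the prior entirely.
    """
    gap = seq_log_likelihood - wt_log_likelihood
    plausibility = min(1.0, math.exp(weight * gap))
    return acq_value * plausibility

wt_ll = -10.0                      # placeholder wild-type log-likelihood
candidates = {
    "natural-like": (0.30, -10.5), # (acquisition value, log-likelihood)
    "exotic": (0.50, -14.0),
}
ranked = sorted(
    candidates,
    key=lambda name: prior_weighted_score(*candidates[name], wt_ll),
    reverse=True,
)
```

With these placeholder numbers the "natural-like" candidate ranks first despite its lower raw acquisition value; lowering `weight` moves the ranking back toward the unweighted order.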

The design of the acquisition function remains the critical lever to minimize costly experiments in protein engineering. As experimental platforms become more automated, the tight integration of adaptive, intelligent acquisition strategies will define the next generation of AI-driven biological discovery.

This technical guide details the integration of AI-driven Bayesian optimization (BO) with robotic experimental platforms to enable autonomous, closed-loop campaigns for mapping protein fitness landscapes. This integration is central to a broader thesis that posits such systems as the next paradigm in protein engineering and drug development, dramatically accelerating the design-build-test-learn (DBTL) cycle. The core challenge is the seamless, automated handoff between computational prediction and physical experimentation.

System Architecture for Closed-Loop Integration

A functional closed-loop system requires robust integration across three layers: the AI/BO Orchestrator, the Laboratory Information Management System (LIMS), and the Physical Robotic Platform.

Table 1: Core System Components and Their Functions

| Component | Primary Function | Key Technology Examples |

|---|---|---|

| AI/BO Orchestrator | Proposes optimal protein variants for testing based on an evolving probabilistic model. | Gaussian Processes, Deep Kernel Learning, Thompson Sampling. |

| Integration Middleware | Translates AI proposals into executable experimental instructions; ingests raw data for analysis. | JSON/API-based protocols (e.g., Antha, Synthace), custom Python/REST bridges. |

| LIMS/ELN | Tracks sample provenance, experimental metadata, and manages workflow execution. | Benchling, Sapio Sciences, SampleManager. |

| Robotic Liquid Handler | Executes the physical construction (cloning, assembly) of proposed genetic variants. | Hamilton STAR, Opentrons OT-2, Echo 525. |

| Microplate Handler | Moves assay plates between stations (incubator, reader, washer). | HighRes Biosolutions, Liconic STX. |

| Plate Reader/Imager | Performs the high-throughput phenotypic or functional assay (e.g., fluorescence, absorbance). | BioTek Cytation, Tecan Spark, PerkinElmer EnVision. |

| Data Processing Pipeline | Converts raw instrument data into a normalized fitness score for the BO model. | Custom Python pipelines (Pandas, NumPy), Knime, Pipeline Pilot. |

Diagram 1: Closed-Loop System Architecture for AI-Driven Protein Engineering

Detailed Experimental Protocol for a Yeast Surface Display Campaign

This protocol outlines a complete cycle for a closed-loop campaign optimizing antibody affinity using yeast surface display (YSD).

AI-Driven Design Phase

- Input: Initial dataset of variant sequences and measured binding signals (e.g., from FACS).

- BO Process: A Gaussian Process model with a protein-specific kernel (e.g., based on amino acid physicochemical properties) models the sequence-fitness landscape. The acquisition function (e.g., Expected Improvement) selects the next batch of 96-384 variants that balance exploration and exploitation.

- Output: A machine-readable file (CSV/JSON) containing variant DNA sequences and a unique identifier for each.
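The machine-readable output of the design phase could look like the sketch below, which builds a list of records with a DNA sequence and a unique identifier and serializes it to JSON. The identifier scheme (`R01-V0001`) and the field names are assumptions; the protocol specifies only that each variant has a sequence and a unique identifier.

```python
import json

VALID_BASES = set("ACGT")

def build_manifest(dna_sequences, round_index):
    """Create one record per proposed variant with a round-scoped unique ID."""
    manifest = []
    for i, seq in enumerate(dna_sequences, start=1):
        seq = seq.upper()
        if not seq or set(seq) - VALID_BASES:
            raise ValueError(f"sequence {i} is empty or contains non-ACGT characters")
        manifest.append({
            "variant_id": f"R{round_index:02d}-V{i:04d}",
            "dna_sequence": seq,
        })
    return manifest

manifest = build_manifest(["atgGCC", "ATGTGG"], round_index=1)
manifest_json = json.dumps(manifest, indent=2)   # hand-off to middleware or vendor upload
```

Validating the alphabet before hand-off catches encoding mistakes early, before an order is placed or a robot run is scheduled.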

Automated Build Phase

- Oligo Pool Synthesis: Variant sequences are sent to a vendor (e.g., Twist Bioscience) for synthesis as an oligo pool.

- Robotic Library Construction:

- Cloning: A robotic liquid handler performs a high-throughput Golden Gate or Gibson assembly reaction to clone the oligo pool into a YSD vector (e.g., pYD1).

- Transformation: The assembled DNA is transformed into electrocompetent S. cerevisiae EBY100 cells via automated electroporation (e.g., using a MicroPulser).

- Culture: Cells are transferred to deep-well plates containing SD-CAA media and incubated at 30°C with shaking for 48 hours.

Automated Test Phase

- Induction: Robot transfers culture to SG-CAA media to induce surface expression for 24-48 hours.

- Labeling: Cells are labeled with a target antigen conjugated to a fluorophore (e.g., biotinylated antigen + Streptavidin-PE).

- High-Throughput FACS Sorting or Analysis: The cell library is analyzed on a FACS sorter (e.g., BD FACSMelody, Sony SH800) capable of plate-based sorting.

- Critical Step: Gates are set to collect cells with high fluorescence (high binders). For a true closed loop, sorted cells are plated directly into a 96-well plate for outgrowth and sequencing, which provides a direct fitness score (sort count or mean fluorescence intensity) for the BO model.

Learn Phase & Loop Closure

- Sequencing: Plasmid DNA from sorted pools is prepared robotically and sequenced via NGS (MiSeq).

- Data Processing: NGS reads are aligned and counted. Enrichment ratios (post-sort / pre-sort) are calculated for each variant.

- Model Update: The new sequence-fitness pairs are added to the training dataset. The BO model is retrained, and the loop restarts.

Diagram 2: Closed-Loop YSD Experimental Workflow

Quantitative Performance Data

Table 2: Comparison of Open vs. Closed-Loop Campaign Performance

| Metric | Traditional Screening (Open-Loop) | AI-Driven Closed-Loop (BO) | Improvement Factor |

|---|---|---|---|

| Time per DBTL Cycle | 4-8 weeks (manual steps) | 7-14 days (fully automated) | 4-8x faster |

| Variants Tested per Cycle | 10^4 - 10^6 (library scale) | 10^2 - 10^3 (focused batch) | Targeted efficiency |

| Typical Rounds to Hit | 5+ rounds of screening/panning | 2-4 optimization rounds | 2-3x fewer rounds |

| Data Utilization | Often limited to top hits; data discarded. | Every datapoint refines the global model. | >95% data utility |

| Example Outcome | Improve binding affinity (KD) by ~10-fold. | Improve affinity by >100-fold; discover non-intuitive mutations. | 10x greater gain |

The Scientist's Toolkit: Key Reagents & Solutions

Table 3: Essential Research Reagents for Closed-Loop YSD Campaigns

| Item | Function in Workflow | Example Product / Specification |

|---|---|---|

| Yeast Display Vector | Surface expression of scFv/Fab fused to Aga2p. | pYD1 or similar; contains epitope tags (c-myc, HA) for detection. |

| Electrocompetent Yeast | High-efficiency transformation of library DNA. | S. cerevisiae EBY100; prepared in-house or commercially (e.g., from NEB). |

| Induction Media | Switches expression from glucose-repressed to galactose-induced. | SG-CAA media: 0.1 M phosphate buffer, 2% galactose, 0.5% casamino acids, yeast nitrogen base. |

| Biotinylated Antigen | Target for binding assay; enables fluorescent labeling. | Antigen biotinylated at a controlled ratio (e.g., 3-5 biotins/molecule), at sites not critical for binding. |

| Fluorophore Conjugate | Detection of bound antigen. | Streptavidin conjugated to R-PE or Alexa Fluor 647. |

| Anti-Epitope Tag Antibody | Detection of surface expression (normalization). | Mouse anti-c-myc antibody, followed by fluorescent anti-mouse secondary (e.g., AF488). |

| NGS Library Prep Kit | Preparation of variant DNA from yeast pools for sequencing. | Illumina DNA Prep kits; with unique dual indices (UDIs) for multiplexing. |

Technical Considerations & Best Practices

- Latency & Throughput Matching: The BO batch size and campaign duration must align with the platform's physical throughput (e.g., 384-well plate format) to avoid bottlenecks.

- Error Handling: The system must include automated QC checkpoints (e.g., optical density measurements, negative control checks) to flag failed steps and trigger re-runs.

- Data Standardization: Adopting community standards like ISA (Investigation, Study, Assay) for metadata ensures reproducibility and data sharing.

- Human-in-the-Loop (HITL): Critical decisions (e.g., model validation, assay changes) require researcher oversight. The system should flag results requiring expert review.

This technical guide examines two critical applications in protein engineering—antibody affinity maturation and enzyme thermostability enhancement—through the lens of AI-powered Bayesian optimization for navigating protein fitness landscapes. The integration of machine learning with high-throughput experimental data enables the efficient exploration of sequence space, accelerating the development of therapeutics and industrial biocatalysts.

Case Study 1: Antibody Affinity Maturation

Background & Objective

The goal is to improve the binding affinity (lower K_D) of a therapeutic antibody targeting a specific antigen (e.g., PD-1 for cancer immunotherapy) without compromising specificity or stability.

AI-Powered Bayesian Optimization Workflow

Bayesian optimization constructs a probabilistic surrogate model of the antibody-antigen binding energy landscape. It iteratively proposes mutations in the Complementarity-Determining Regions (CDRs) expected to maximize affinity, balancing exploration and exploitation.
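One common way to balance exploration and exploitation is the Expected Improvement (EI) acquisition function. The sketch below is illustrative only: the source does not state which acquisition function is used, and the candidate names, posterior means, standard deviations, and the margin `xi` are hypothetical values chosen for this example.

```python
import math

def normal_pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def normal_cdf(z):
    """Standard normal cumulative distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, best, xi=0.01):
    """Expected Improvement for a maximization problem.

    mu, sigma: surrogate posterior mean and standard deviation.
    best: best fitness observed so far.
    xi: small margin that nudges the search toward exploration.
    """
    gain = mu - best - xi
    if sigma <= 0.0:
        return max(gain, 0.0)
    z = gain / sigma
    return gain * normal_cdf(z) + sigma * normal_pdf(z)

# Hypothetical posterior predictions; fitness is taken as -log10(K_D in M).
best_so_far = 8.4
candidates = {
    "confident_small_gain": (8.5, 0.05),
    "uncertain_region": (8.2, 0.6),
    "confident_poor": (7.5, 0.05),
}

ei = {name: expected_improvement(mu, sd, best_so_far)
      for name, (mu, sd) in candidates.items()}
ranked = sorted(ei, key=ei.get, reverse=True)
print(ranked)
```

In this toy case the uncertain candidate ranks first even though its predicted mean is below the current best, because its wide posterior leaves room for a large gain. That is the exploration side of the trade-off.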

Experimental Protocol for Affinity Assessment (BLI/SPR)

Protocol Title: Real-Time Kinetic Characterization of Antibody-Antigen Binding Using Biolayer Interferometry (BLI)

- Sensor Preparation: Hydrate Anti-Human Fc Capture (AHC) biosensors in kinetics buffer for 10 minutes.

- Baseline: Immerse sensors in kinetics buffer for 60s to establish a baseline.

- Loading: Load the wild-type or variant antibody onto the sensor surface for 300s to achieve a capture level of 1-2 nm wavelength shift.

- Baseline 2: Return to kinetics buffer for 60s to stabilize the baseline.

- Association: Dip sensors into wells containing serially diluted antigen (e.g., 0-200 nM) for 300s to measure binding kinetics (k_on).

- Dissociation: Transfer sensors to kinetics buffer wells for 600s to measure dissociation kinetics (k_off).

- Data Analysis: Fit association and dissociation curves globally using a 1:1 binding model. The equilibrium dissociation constant is calculated as K_D = k_off / k_on.
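As a worked example of the final analysis step, the snippet below converts fitted rate constants into K_D and a fold improvement. The rate constants are the WT and BO-Variant 3 values reported in Table 1 (Affinity Maturation Outcomes); the helper name `kd_nanomolar` is an assumption of this sketch.

```python
def kd_nanomolar(k_on, k_off):
    """Equilibrium dissociation constant K_D = k_off / k_on, in nM.

    k_on is in 1/(M*s) and k_off in 1/s, so the ratio is in M.
    """
    if k_on <= 0:
        raise ValueError("k_on must be positive")
    return k_off / k_on * 1e9

kd_wt = kd_nanomolar(k_on=2.1e5, k_off=1.8e-3)    # WT (Baseline)
kd_var = kd_nanomolar(k_on=3.9e5, k_off=2.4e-4)   # BO-Variant 3
fold = kd_wt / kd_var

print(f"WT K_D      = {kd_wt:.2f} nM")
print(f"Variant K_D = {kd_var:.2f} nM")
print(f"Fold improvement = {fold:.1f}x")
```

Most of the gain here comes from the slower off-rate: k_off falls about 7.5-fold while k_on rises less than 2-fold.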

Key Data from Recent Studies

Table 1: Affinity Maturation Outcomes for Anti-PD-1 Antibodies

| Antibody Variant | Mutations (CDR-H3/L3) | k_on (1/Ms) | k_off (1/s) | K_D (nM) | Fold Improvement vs. WT |

|---|---|---|---|---|---|

| WT (Baseline) | - | 2.1e5 | 1.8e-3 | 8.6 | 1x |

| BO-Variant 1 | H100aY, S102bR | 3.5e5 | 8.2e-4 | 2.3 | 3.7x |

| BO-Variant 2 | L96N, H100fW, S102bR | 4.8e5 | 5.1e-4 | 1.06 | 8.1x |

| BO-Variant 3* | H100fW, S102bR, L32P | 3.9e5 | 2.4e-4 | 0.62 | 13.9x |

*Mutation L32P is in a framework region and was identified by the model as stabilizing.

Diagram Title: AI-Driven Antibody Affinity Maturation Cycle

Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| Anti-Human Fc (AHC) Biosensors | Capture IgG antibodies via Fc region for label-free binding analysis. |

| Kinetics Buffer (e.g., PBS + 0.1% BSA) | Provides physiological pH and ionic strength; BSA reduces non-specific binding. |

| Recombinant Antigen (e.g., hPD-1) | Purified target protein for binding kinetics measurement. |

| Octet RED96e or SPR Instrument | Platform for real-time, label-free biomolecular interaction analysis. |

| HEK293 or CHO Expressed mAb Variants | Source of full-length, glycosylated antibody variants for testing. |

Case Study 2: Enzyme Thermostability Enhancement

Background & Objective

To increase the thermal stability (T_m and/or half-life at elevated temperature) of an industrial hydrolase (e.g., lipase for detergent formulations) to withstand harsh process conditions.

AI-Powered Bayesian Optimization Workflow

The surrogate model learns the complex relationship between sequence variations and stability metrics (T_m, t_{1/2}). It guides the exploration of mutations, focusing on rigidifying flexible regions, improving core packing, or introducing stabilizing interactions.

Experimental Protocol for Thermostability Assay (nanoDSF)

Protocol Title: Melting Temperature (T_m) Determination via nano-Differential Scanning Fluorimetry (nanoDSF)

- Sample Preparation: Purify wild-type and variant enzymes. Dialyze into a standard buffer (e.g., 20 mM HEPES, 150 mM NaCl, pH 7.5). Adjust protein concentration to 0.2-0.5 mg/mL.

- Loading: Load 10 µL of protein sample into premium coated nanoDSF capillaries.

- Instrument Setup: Place capillaries into the Prometheus NT.48 instrument. Set temperature gradient from 20°C to 95°C with a ramp rate of 1°C per minute.

- Data Acquisition: Monitor intrinsic protein fluorescence at 330 nm and 350 nm simultaneously as a function of temperature. The 350/330 nm ratio is the primary signal.

- Analysis: The first derivative of the fluorescence ratio trace is calculated. The peak of the first derivative curve is defined as the protein's melting temperature (T_m).
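The analysis step can be sketched in a few lines. This is a simplified illustration on a synthetic two-state unfolding trace, not the instrument vendor's algorithm: the function name `melting_temperature`, the sigmoid parameters, and the 0.1°C sampling interval are assumptions. Real traces are noisy and would normally be smoothed before differentiation.

```python
import math

def melting_temperature(temps, ratios):
    """Return the temperature where the first derivative of the
    350/330 nm ratio has its largest magnitude (central differences)."""
    best_t, best_slope = None, 0.0
    for i in range(1, len(temps) - 1):
        slope = (ratios[i + 1] - ratios[i - 1]) / (temps[i + 1] - temps[i - 1])
        if abs(slope) > abs(best_slope):
            best_t, best_slope = temps[i], slope
    return best_t

# Synthetic trace: a sigmoidal transition centered on an assumed T_m.
true_tm = 56.7
temps = [20.0 + 0.1 * i for i in range(751)]   # 20 to 95 degrees C
ratios = [0.8 + 0.3 / (1.0 + math.exp(-(t - true_tm) / 1.5)) for t in temps]

tm = melting_temperature(temps, ratios)
print(f"Estimated T_m = {tm:.1f} C")
```

Taking the largest absolute slope lets the same function handle proteins whose ratio falls rather than rises on unfolding.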

Key Data from Recent Studies

Table 2: Thermostability Enhancement of a Lipase Enzyme

| Enzyme Variant | Key Mutations | T_m (°C) | t_{1/2} @ 60°C (min) | Residual Activity @ 60°C, 30 min |

|---|---|---|---|---|

| WT Lipase | - | 52.1 | 15 | 12% |

| BO-Stable 1 | N12P, T45I | 56.7 | 42 | 58% |

| BO-Stable 2 | A68V, S120R, K215E | 60.3 | 95 | 82% |

| BO-Stable 3 | T45I, S120R, K215E, L189F | 64.8 | >180 (3h) | 95% |

Diagram Title: Bayesian Optimization for Enzyme Stabilization

Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| Prometheus NT.48 (nanoDSF) | Label-free instrument for measuring thermal unfolding by intrinsic tryptophan fluorescence. |

| nanoDSF Capillaries | High-sensitivity, sample-holding capillaries for the instrument. |

| HEPES or Phosphate Buffer Salts | Provides stable, non-interfering pH environment for unfolding studies. |

| Spectrophotometer / Plate Reader | For measuring residual enzyme activity after heat challenge. |

| Chromogenic Substrate (e.g., p-Nitrophenyl ester) | Substrate that releases colored product upon hydrolysis for activity assays. |

The presented case studies demonstrate that AI-powered Bayesian optimization provides a robust, iterative framework for efficiently traversing complex protein fitness landscapes. By integrating computational prediction with rigorous experimental validation—detailed in the provided protocols—researchers can achieve significant gains in antibody affinity and enzyme thermostability, accelerating the development cycle for biologics and biocatalysts.

Overcoming Roadblocks: Solving Common Pitfalls in AI-BO Protein Campaigns

In the high-stakes field of AI-driven protein engineering, the initial dataset's quality determines the success of subsequent Bayesian optimization (BO) campaigns for navigating fitness landscapes. The "cold-start" problem—the challenge of initiating learning with minimal or no task-specific data—is a critical bottleneck. This guide outlines strategies for curating foundational datasets that enable efficient exploration and exploitation.

Effective cold-start curation leverages diverse data modalities. The table below summarizes key sources and their quantitative characteristics.

Table 1: Primary Data Sources for Initial Protein Fitness Dataset Curation

| Data Source | Typical Volume | Key Features/Measurements | Primary Use in BO |

|---|---|---|---|

| Deep Mutational Scanning (DMS) | 10^3 - 10^5 variants | Fitness scores, variance estimates, sequence-function maps | Prior mean function initialization |

| Evolutionary Sequence Alignment (MSA) | 10^4 - 10^6 sequences | Conservation scores, co-evolution statistics, positional entropy | Kernel design (similarity), constraint definition |

| High-Throughput Biophysical Screens | 10^2 - 10^3 variants | Stability (Tm, ΔG), solubility, expression yield | Multi-objective optimization constraints |

| Low-Throughput Gold-Standard Assays | 10^1 - 10^2 variants | Specific activity, binding affinity (KD, IC50), selectivity | Acquisition function ground truth calibration |

| Structure-Based In Silico Predictions | 10^4 - 10^6 variants | ΔΔG (FoldX, Rosetta), docking scores, phylogenetic scores | Surrogate model pre-training |

Experimental Protocols for Key Curation Methods

Protocol: Diversity-Aware Library Design for Initial Batch

Objective: Generate a maximally informative initial batch of protein variants for experimental testing to seed the BO loop.

- Define Sequence Space: From a multiple sequence alignment (MSA) of the target protein family, identify N positions of interest (e.g., active site, flexible loops).

- Calculate Diversity Metrics: For each position, compute Shannon entropy. For pairs of positions, compute mutual information to infer co-evolution.

- Generate Variant Set: Use a deterministic or greedy algorithm to select a set of M sequences (e.g., 96-384) that maximize:

  - Sequence Diversity: Maximal average Hamming distance.

  - Functional Coverage: Even sampling across predicted biophysical clusters (e.g., from Rosetta energy bins).

  - Practicality: Adherence to stop-codon exclusion and GC-content limits for synthesis.

- Synthesis & Cloning: Employ pooled gene synthesis followed by assembly (e.g., Golden Gate) into an expression vector.

- Phenotyping: Test library using a high-throughput functional assay (e.g., growth selection, FACS, or coupled enzyme assay) to obtain the first-round fitness data y_1, ..., y_M.
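The diversity metrics and the greedy selection step of this protocol can be sketched as below. It is a toy illustration under several assumptions: the sequences are made-up four-residue strings, the selection is seeded with the first candidate (for example, the wild type), and only the sequence-diversity criterion is modeled. Functional coverage and synthesis constraints would need additional filters.

```python
import math
from collections import Counter

def shannon_entropy(column):
    """Shannon entropy (bits) of one alignment column."""
    counts = Counter(column)
    total = len(column)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())

def hamming(a, b):
    """Number of differing positions between two equal-length sequences."""
    return sum(x != y for x, y in zip(a, b))

def greedy_diverse_subset(candidates, m):
    """Greedily pick m sequences, each time adding the candidate with the
    largest summed Hamming distance to the sequences already selected."""
    selected = [candidates[0]]          # seed, e.g. the wild type
    remaining = list(candidates[1:])
    while remaining and len(selected) < m:
        best = max(remaining, key=lambda s: sum(hamming(s, t) for t in selected))
        selected.append(best)
        remaining.remove(best)
    return selected

# Toy alignment restricted to four positions of interest (hypothetical).
msa = ["ACDE", "ACDF", "AGDE", "ACKE"]
entropies = [shannon_entropy(col) for col in zip(*msa)]
print([round(h, 3) for h in entropies])

# Toy candidate pool; the first entry plays the role of the wild type.
pool = ["ACDE", "ACDF", "AGKE", "TGKF", "AWDY"]
batch = greedy_diverse_subset(pool, 3)
print(batch)
```

The greedy rule is a heuristic for maximizing average pairwise Hamming distance. It does not guarantee the optimal set, but it is deterministic and scales to pools of thousands of candidates.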

Protocol: Transfer Learning from Orthologous Systems

Objective: Leverage data from related proteins to warm-start the Gaussian Process (GP) surrogate model.

- Source Identification: Use BLAST or HMMER to identify K orthologous proteins with available functional data (fitness, stability).

- Sequence Embedding: Generate a joint MSA or use a protein language model (e.g., ESM-2) to embed all sequences (target + orthologs) into a common latent space Z.

- Kernel Alignment: Define a composite kernel k_total for the GP: k_total(x_i, x_j) = θ_1 * k_SE(z_i, z_j) + θ_2 * k_Matern(x_i, x_j). k_SE operates on the latent space embeddings z (transfer component), while k_Matern operates on the raw mutation descriptors x (task-specific component).

- Hyperparameter Pretraining: Optimize the kernel parameters θ and GP likelihood variance using only the orthologous data.

- Informed Prior: Use this trained GP as an informed prior for the BO loop on the target protein. Upon acquiring new target-specific data, the GP posterior is updated.
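The composite kernel from the Kernel Alignment step can be written out directly. The sketch below is a minimal version under stated assumptions: the protocol does not specify the Matérn smoothness, so ν = 5/2 is assumed; unit length scales and the values of θ_1 and θ_2 are placeholders that hyperparameter pretraining would replace; and the descriptors and embeddings are invented two-dimensional vectors rather than real ESM-2 outputs.

```python
import math

def sq_dist(a, b):
    """Squared Euclidean distance between two vectors."""
    return sum((u - v) ** 2 for u, v in zip(a, b))

def k_se(z_i, z_j, length_scale=1.0):
    """Squared-exponential kernel on latent embeddings z."""
    return math.exp(-sq_dist(z_i, z_j) / (2.0 * length_scale ** 2))

def k_matern52(x_i, x_j, length_scale=1.0):
    """Matern kernel with nu = 5/2 (assumed) on mutation descriptors x."""
    s = math.sqrt(5.0) * math.sqrt(sq_dist(x_i, x_j)) / length_scale
    return (1.0 + s + s * s / 3.0) * math.exp(-s)

def k_total(x_i, x_j, z_i, z_j, theta_1=1.0, theta_2=0.5):
    """k_total = theta_1 * k_SE(z_i, z_j) + theta_2 * k_Matern(x_i, x_j)."""
    return theta_1 * k_se(z_i, z_j) + theta_2 * k_matern52(x_i, x_j)

def gram_matrix(xs, zs, **theta):
    """Full covariance matrix over a set of variants."""
    n = len(xs)
    return [[k_total(xs[i], xs[j], zs[i], zs[j], **theta) for j in range(n)]
            for i in range(n)]

# Hypothetical inputs: binary mutation descriptors and 2-D embeddings.
xs = [[0.0, 0.0], [1.0, 0.0], [1.0, 1.0]]
zs = [[0.10, 0.20], [0.15, 0.25], [0.90, -0.40]]

K = gram_matrix(xs, zs)
for row in K:
    print([round(v, 3) for v in row])
```

A weighted sum of two valid kernels with non-negative weights is itself a valid kernel, so K can serve as the GP prior covariance. The ratio of θ_1 to θ_2 controls how much the model leans on the transferred embedding similarity versus the task-specific descriptors.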

Visualizing the Integrated Curation & Optimization Workflow

Diagram Title: Cold-Start Curation Feeds Bayesian Optimization Loop

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Initial Dataset Generation in Protein Fitness Studies

| Item | Supplier Examples | Function in Curation Protocol |

|---|---|---|

| Combinatorial DNA Library Pools | Twist Bioscience, IDT | Source for diverse variant sequences defined by design algorithms. |

| Golden Gate Assembly Mix | NEB (BsaI-HF v2), Thermo Fisher | Modular, high-efficiency cloning of variant libraries into expression vectors. |

| Phusion High-Fidelity DNA Polymerase | Thermo Fisher, NEB | Accurate amplification of library pools for sequencing or cloning. |

| Mammalian (HEK293) or Microbial (BL21) Expression Systems | Thermo Fisher, Agilent | Production of protein variants for downstream biophysical or functional assays. |