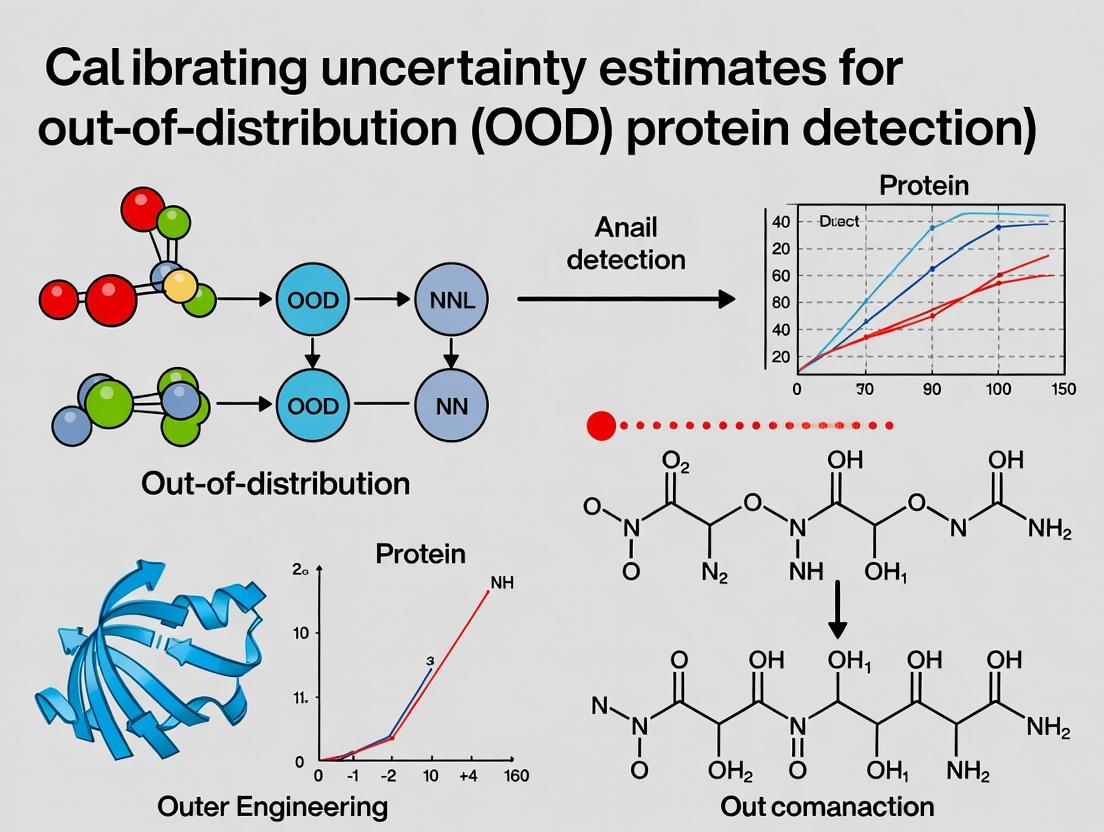

Accurate OOD Detection in Proteins: How to Calibrate Uncertainty Estimates for Reliable AI Models

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on calibrating uncertainty estimates for Out-of-Distribution (OOD) protein detection. We explore the foundational principles of uncertainty quantification in protein machine learning, detail current methodological approaches for calibration (including Bayesian and ensemble techniques), address common pitfalls and optimization strategies, and validate these methods through comparative analysis against established benchmarks. The goal is to equip practitioners with the knowledge to build more reliable, trustworthy models for critical applications in protein engineering, function prediction, and therapeutic design.

Why OOD Detection Fails in Protein AI: The Critical Role of Uncertainty Calibration

Technical Support Center: OOD Detection & Uncertainty Calibration

Frequently Asked Questions (FAQs)

Q1: Our model achieves >95% accuracy on held-out test data but fails catastrophically on new, real-world protein sequences. What is the root cause? A: This is a classic Out-of-Distribution (OOD) detection failure. Your test data was likely drawn from the same distribution as your training data (independent and identically distributed, IID), whereas real-world data contains novel folds, unseen domains, or biochemical characteristics not represented in your training set. Without a properly calibrated uncertainty estimate, the model makes high-confidence predictions on these novel inputs instead of flagging them as OOD.

Q2: Which uncertainty quantification method is best for detecting OOD proteins: Monte Carlo Dropout, Deep Ensembles, or evidential deep learning? A: The choice depends on your trade-off between computational cost and performance. See the quantitative comparison below.

Table 1: Comparison of Uncertainty Quantification Methods for OOD Detection

| Method | Principle | OOD Detection Performance (Average AUROC) | Computational Cost | Key Advantage for Protein Science |
|---|---|---|---|---|
| Monte Carlo Dropout | Approximates Bayesian inference via stochastic forward passes with dropout active at test time. | 0.82 - 0.88 | Low | Easy to implement on existing models. |
| Deep Ensembles | Trains multiple models with different random initializations. | 0.90 - 0.95 | High | Often regarded as the gold standard for accuracy and uncertainty. |
| Evidential Deep Learning | Places a higher-order distribution (e.g., Dirichlet) over the likelihood parameters and learns its parameters. | 0.85 - 0.92 | Medium | Directly models epistemic uncertainty in a single forward pass. |

Data synthesized from recent benchmarks on AlphaFold-2 embeddings and novel fold databases (2023-2024).

Q3: How can we create a robust benchmark to test our OOD detection pipeline? A: You need a deliberately constructed OOD test set. Follow this experimental protocol.

Experimental Protocol: Constructing a Protein OOD Benchmark

- Define In-Distribution (ID) Data: Use CATH or SCOP to select a non-redundant set of proteins from specific superfamilies (e.g., superfamilies within the alpha/beta class).

- Define OOD Data:

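- Remote Homology and Held-out Folds: For remote homology, select proteins from the same superfamily but with low sequence identity to every training protein. For a larger shift, hold out entire folds within the same class (e.g., hold out the TIM barrel fold while training on other alpha/beta folds such as the Rossmann fold).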

- Novel Folds: Use the latest CASP "Free Modeling" targets or entries from the PDB labeled as "new fold".

- Engineered/De Novo Proteins: Include sequences of designed proteins (e.g., de novo designed proteins deposited in the PDB).

- Feature Extraction: Generate per-residue and per-sequence embeddings using a pre-trained protein language model (e.g., ESM-2).

- Model Training & Evaluation: Train your predictive model (e.g., for stability, function) only on ID data. Evaluate using metrics like AUROC, False Positive Rate at 95% True Positive Rate (FPR95), and Accuracy vs. Uncertainty plots to assess OOD detection.

Q4: What are the primary metrics to evaluate OOD detection performance? A: Rely on metrics that separate ID and OOD distributions based on uncertainty scores.

Table 2: Key Metrics for Evaluating OOD Detection

| Metric | Formula/Description | Interpretation |
|---|---|---|
| AUROC | Area Under the Receiver Operating Characteristic curve. | 1.0 = perfect separation, 0.5 = random guessing. |
| FPR95 | False Positive Rate when the True Positive Rate is 95% (ID treated as the positive class). | Lower is better. Measures the fraction of OOD samples that slip through as ID. |
| Detection Error | Minimum over thresholds of 0.5 × (1 - TPR) + 0.5 × FPR, assuming equal ID and OOD priors. | Lower is better. Combined error from misclassifying both ID and OOD data. |
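The three metrics above can be computed directly from per-sample uncertainty scores. The sketch below is a minimal NumPy version under two assumptions: a higher score means "more in-distribution" (e.g., maximum softmax probability or negative predictive entropy), and ID is the positive class. `ood_metrics` is a hypothetical helper name, not a library function.

```python
import numpy as np


def ood_metrics(id_scores, ood_scores):
    """AUROC, FPR95 and detection error for an ID-vs-OOD score.

    Assumption: higher score = more in-distribution; ID is the positive class.
    """
    id_scores = np.asarray(id_scores, dtype=float)
    ood_scores = np.asarray(ood_scores, dtype=float)

    # AUROC = P(score_ID > score_OOD) + 0.5 * P(tie)  (Mann-Whitney form)
    diff = id_scores[:, None] - ood_scores[None, :]
    auroc = float(np.mean(diff > 0) + 0.5 * np.mean(diff == 0))

    # Sweep every observed score as a threshold: accept as ID if score >= t
    thresholds = np.unique(np.concatenate([id_scores, ood_scores]))
    tpr = np.array([np.mean(id_scores >= t) for t in thresholds])
    fpr = np.array([np.mean(ood_scores >= t) for t in thresholds])

    # FPR95: lowest FPR among thresholds that keep TPR >= 95%
    fpr95 = float(fpr[tpr >= 0.95].min())

    # Detection error: min over thresholds of 0.5*(1 - TPR) + 0.5*FPR
    detection_error = float(np.min(0.5 * (1.0 - tpr) + 0.5 * fpr))
    return {"auroc": auroc, "fpr95": fpr95, "detection_error": detection_error}
```

The pairwise AUROC comparison uses memory proportional to n_ID × n_OOD, so for large benchmarks `sklearn.metrics.roc_auc_score` and `sklearn.metrics.roc_curve` are the more practical choice.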

Troubleshooting Guide

Issue T1: Model uncertainty is not correlated with prediction error. High-uncertainty predictions can be correct, and low-uncertainty ones can be wrong.

- Cause: Poorly calibrated uncertainty. The model's confidence does not reflect its true probability of being correct.

- Solution: Apply temperature scaling or isotonic regression on a validation set to calibrate the uncertainty scores. For evidential models, check the regularization strength on the evidence.
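Isotonic regression learns a monotone mapping from raw confidence to the observed probability of being correct. Below is a minimal pool-adjacent-violators sketch in NumPy. It assumes `confidence` holds the uncalibrated score for each validation sample and `correct` is a 0/1 indicator of whether that prediction was right. The helper names are hypothetical, and the sketch does not specially handle tied confidence values.

```python
import numpy as np


def fit_isotonic(confidence, correct):
    """Fit a non-decreasing map confidence -> P(correct) by pool-adjacent-violators.

    Returns the sorted confidences and their calibrated values.
    """
    order = np.argsort(confidence)
    x = np.asarray(confidence, dtype=float)[order]
    y = np.asarray(correct, dtype=float)[order]

    # Each block stores (sum of targets, number of points); merge the last two
    # blocks while their means violate monotonicity.
    sums, counts = [], []
    for value in y:
        sums.append(value)
        counts.append(1)
        while len(sums) > 1 and sums[-2] / counts[-2] > sums[-1] / counts[-1]:
            s, c = sums.pop(), counts.pop()
            sums[-1] += s
            counts[-1] += c
    fitted = np.repeat(np.array(sums) / np.array(counts), counts)
    return x, fitted


def apply_isotonic(x_fit, y_fit, new_confidence):
    # Linear interpolation between fitted points, clamped at both ends
    return np.interp(new_confidence, x_fit, y_fit)
```

In practice, `sklearn.isotonic.IsotonicRegression(out_of_bounds="clip")` provides the same fit and handles ties properly.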

Issue T2: The OOD detector flags too many valid, in-distribution sequences as anomalous, hampering throughput.

- Cause: The uncertainty threshold is set too aggressively. The definition of your "ID" training data may be too narrow.

- Solution: Adjust the decision threshold based on acceptable risk. Consider outlier exposure, where you train the detector with examples of "background" OOD data to sharpen its boundaries.
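Adjusting the threshold "based on acceptable risk" usually means fixing the fraction of ID sequences you are willing to flag. The sketch below derives the threshold from ID validation scores alone and assumes that higher scores mean "more in-distribution". Raising `target_tpr` (e.g., to 0.99) flags fewer valid sequences, at the cost of letting more OOD sequences through. The function names are illustrative.

```python
import numpy as np


def id_acceptance_threshold(id_val_scores, target_tpr=0.95):
    """Threshold that keeps about `target_tpr` of ID validation samples.

    Assumes higher score = more in-distribution; samples scoring below the
    threshold are flagged as OOD.
    """
    scores = np.asarray(id_val_scores, dtype=float)
    # The (1 - target_tpr) quantile leaves ~target_tpr of ID scores at or above it
    return float(np.quantile(scores, 1.0 - target_tpr))


def flag_ood(scores, threshold):
    """Boolean mask: True where a sample is flagged as OOD."""
    return np.asarray(scores, dtype=float) < threshold
```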

Issue T3: OOD detection works at the sequence level, but fails at the critical residue level (e.g., for predicting catalytic sites).

- Cause: Using only global (per-sequence) embeddings loses local, structural context.

- Solution: Implement a per-residue uncertainty method. Use 3D convolutional networks on predicted structures or graph networks on residue contact maps to capture local epistemic uncertainty.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in OOD/Uncertainty Research |
|---|---|
| ESM-2/ProtBERT Embeddings | Pre-trained protein language model embeddings serve as foundational, informative features for downstream OOD detection models. |
| AlphaFold2 (LocalColabFold) | Generates predicted structures for novel sequences; low predicted confidence (pLDDT) on a novel sequence can serve as an OOD signal. |
| CATH/SCOP Database | Provides the hierarchical classification (Class, Architecture, Topology, Homology) essential for defining ID and OOD splits. |
| De novo designs in the PDB / CASP Targets | Source of bona fide OOD examples, including de novo designed proteins and novel-fold targets. |
| Uncertainty Baselines (e.g., SNGP) | Software libraries implementing Spectral-normalized Neural Gaussian Process (SNGP) models to improve distance-awareness in deep networks. |
| Calibration Libraries (e.g., netcal) | Python libraries for implementing Platt scaling, temperature scaling, and histogram binning to calibrate model uncertainties. |

Visualization: OOD Detection Workflow & Model Architecture

OOD Detection Workflow for Protein Sequences

Deep Ensemble Architecture for Uncertainty

Troubleshooting Guides & FAQs

FAQ 1: My model’s uncertainty estimates are overconfident on out-of-distribution (OOD) protein sequences. How can I diagnose if this is an epistemic or aleatoric uncertainty issue?

- Answer: First, conduct a targeted experiment. Split your validation data into an "In-Distribution" (ID) set and a curated "OOD" set (e.g., proteins from a different fold class). Use Deep Ensembles or test-time Monte Carlo Dropout to obtain several predictive distributions per input. Then decompose the predictive entropy (total uncertainty) into an aleatoric part (the average entropy of the individual predictions) and an epistemic part (the mutual information, i.e., total minus aleatoric). Compare the breakdown on both sets.

- Low Entropy on OOD with High Confidence: Suggests your model is failing to capture epistemic uncertainty; it doesn't "know what it doesn't know."

- High Entropy on both ID and OOD: May indicate high aleatoric uncertainty (inherent noise in the data) or a poorly calibrated model.

- Protocol: Use the following workflow:

Table 1: Diagnostic Results Interpretation

| Scenario | Total Uncertainty (Predictive Entropy) on OOD | Epistemic Uncertainty Component | Likely Diagnosis |
|---|---|---|---|
| 1 | Low | Low | Critical failure: the model is overconfident and epistemic uncertainty is not captured. |
| 2 | High | Low | High aleatoric uncertainty (inherent data noise for the model). |
| 3 | High | High | Model is appropriately uncertain (epistemic uncertainty captured). |
| 4 | Low | High | Not possible under the entropy decomposition (the epistemic part cannot exceed the total); review calculation methods. |

Diagnosis Workflow for Poor OOD Uncertainty
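The decomposition used in this diagnosis fits in a few lines. Given S sampled predictive distributions per input (from ensemble members or MC Dropout passes):

- total uncertainty is the entropy of the mean prediction;
- the aleatoric part is the mean entropy of the individual predictions;
- the epistemic part is their difference (the mutual information).

The NumPy sketch below assumes the probabilities are arranged as an `(S, N, K)` array. The function name is hypothetical.

```python
import numpy as np


def decompose_uncertainty(member_probs, eps=1e-12):
    """Split predictive entropy into aleatoric and epistemic parts.

    member_probs: (S, N, K) class probabilities from S ensemble members or
    S Monte Carlo Dropout passes. Returns (total, aleatoric, epistemic),
    each of shape (N,), in nats.
    """
    p = np.asarray(member_probs, dtype=float)
    mean_p = p.mean(axis=0)                                          # (N, K)
    total = -np.sum(mean_p * np.log(mean_p + eps), axis=-1)          # H[E p]
    aleatoric = -np.sum(p * np.log(p + eps), axis=-1).mean(axis=0)   # E H[p]
    epistemic = total - aleatoric                                    # mutual information
    return total, aleatoric, epistemic
```

Because the mean entropy is non-negative, the epistemic term can never exceed the total. This is why a low-total, high-epistemic result points to a calculation error.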

FAQ 2: When using Monte Carlo Dropout for uncertainty estimation on protein language model embeddings, my epistemic uncertainty values are consistently low. Is the method failing?

- Answer: This is a common issue. Monte Carlo Dropout approximates Bayesian inference, but its effectiveness depends on dropout placement and strength. In fixed protein embeddings (e.g., from ESM-2), dropout applied only to the final classifier head may not capture uncertainty in the representations themselves.

- Protocol: Implement a two-tier dropout:

- Embedding Dropout: Apply dropout stochastically to the input embeddings (or intermediate features) during inference.

- Classifier Dropout: Standard dropout in the fully connected layers.

- Quantitative Check: Increase the dropout rate (e.g., from 0.1 to 0.5) and the number of stochastic forward passes (e.g., from 30 to 100). Monitor if the epistemic uncertainty (measured as the variance across predictions) begins to scale with OOD distance. See Table 2.

Table 2: Effect of MC Dropout Parameters on Uncertainty Capture

| Parameter | Typical Setting | Enhanced Setting for OOD | Measured Outcome |
|---|---|---|---|
| Dropout Rate | 0.1 - 0.2 | 0.3 - 0.5 | Increases spread of stochastic predictions. |
| Number of Forward Passes (T) | 30 | 100 | Reduces variance of the uncertainty estimate. |
| Dropout Placement | Classifier only | Embedding + Classifier | Captures uncertainty in feature extraction phase. |
| Expected Change in Epistemic (OOD) | Low/Static | Should Increase | More meaningful uncertainty signal. |
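The two-tier scheme can be illustrated without a deep learning framework. The NumPy sketch below makes two assumptions: the inputs are frozen per-sequence embeddings (e.g., mean-pooled ESM-2 vectors), and the classifier head is a hypothetical one-hidden-layer network with weights `W1, b1, W2, b2`. On every pass, dropout is applied once to the embeddings and once to the hidden layer, and it stays active at inference. In PyTorch, the same effect comes from keeping the `nn.Dropout` modules in training mode while predicting.

```python
import numpy as np


def dropout(x, rate, rng):
    """Inverted dropout: zero each unit with probability `rate`, rescale the rest."""
    if rate == 0.0:
        return x
    keep = rng.random(x.shape) >= rate
    return x * keep / (1.0 - rate)


def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)  # subtract row max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def mc_dropout_predict(emb, W1, b1, W2, b2, emb_rate=0.3, clf_rate=0.3,
                       n_passes=100, seed=0):
    """Stochastic forward passes with dropout kept ON at inference.

    emb: (N, D) frozen embeddings; W1, b1, W2, b2: weights of a hypothetical
    one-hidden-layer classifier head. Returns probabilities of shape
    (n_passes, N, K).
    """
    rng = np.random.default_rng(seed)
    passes = []
    for _ in range(n_passes):
        x = dropout(emb, emb_rate, rng)        # tier 1: embedding dropout
        h = np.maximum(0.0, x @ W1 + b1)       # ReLU hidden layer
        h = dropout(h, clf_rate, rng)          # tier 2: classifier dropout
        passes.append(softmax(h @ W2 + b2))
    return np.stack(passes)
```

The variance of the returned probabilities across passes (`probs.var(axis=0)`) is the epistemic signal to track as you increase the dropout rate and the number of passes.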

FAQ 3: How do I calibrate aleatoric uncertainty estimates for a protein property regression task (e.g., stability ΔΔG)?

- Answer: Aleatoric uncertainty is data-dependent and should be learned heteroscedastically by the model. The primary issue is mis-specification of the likelihood function.

- Protocol: Modify your model's output layer to predict both a mean (μ) and a variance (σ²) for each input protein variant.

- Model Change: Train with the Gaussian negative log-likelihood (NLL) loss, dropping the constant term: `Loss = 0.5 * (log(σ²) + (y - μ)² / σ²)`. Predicting `log(σ²)` (or applying a softplus) keeps the variance positive.

- Calibration Step: After training, apply temperature scaling to the variance. Fit a scalar parameter `T` on a validation set to optimize the likelihood, scaling the predicted variance: `σ²_calibrated = T * σ²`.

- Validation: Check calibration by plotting predicted variances against squared errors on a held-out set. The two should be correlated; for a well-calibrated model, the mean squared error within each bin of predicted variance should be close to that bin's mean predicted variance.

Aleatoric Uncertainty Calibration Workflow
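For the calibration step, the optimal variance scale has a closed form. Minimizing the mean Gaussian NLL of `T * σ²` over T gives `T = mean((y - μ)² / σ²)` on the validation set. The NumPy sketch below assumes arrays of validation targets, predicted means, and predicted variances. The function names are hypothetical.

```python
import numpy as np


def gaussian_nll(y, mu, var):
    """Mean Gaussian negative log-likelihood, dropping the constant 0.5*log(2*pi)."""
    return float(np.mean(0.5 * (np.log(var) + (y - mu) ** 2 / var)))


def fit_variance_scale(y_val, mu_val, var_val):
    """Closed-form T minimizing the validation NLL of sigma^2_cal = T * sigma^2.

    Setting d/dT of mean[0.5 * (log(T * var) + r^2 / (T * var))] to zero
    gives T = mean(r^2 / var), where r = y - mu.
    """
    r2 = (np.asarray(y_val, dtype=float) - np.asarray(mu_val, dtype=float)) ** 2
    return float(np.mean(r2 / np.asarray(var_val, dtype=float)))
```

A fitted `T > 1` means the model's variances were too small (overconfident); `T < 1` means they were too large.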

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Uncertainty Calibration Experiments in Protein ML

| Item / Solution | Function in Research |
|---|---|
| CATH/SCOP Datasets | Provides hierarchical, fold-based classification for constructing rigorous ID/OOD protein sequence splits. |
| AlphaFold DB / PDB | AlphaFold DB supplies predicted structures with confidence metrics (pLDDT) to compare against learned uncertainties; the PDB supplies experimental structures as ground truth. |
| ESM-2/ProtBERT Models | Pre-trained protein language models used as foundational feature extractors and as a baseline for epistemic uncertainty. |
| Deep Ensembles Scripts | Code for training multiple model instances with different random seeds. Gold standard for epistemic uncertainty approximation. |
| LAVA (Likelihood-Aware VAEs) | Framework for generative modeling of proteins, useful for defining latent-space priors and improving OOD detection. |
| Calibration Metrics Library | Contains implementations of Expected Calibration Error (ECE), Brier Score, and NLL for proper scoring. |
| PDBbind / SKEMPI 2.0 | Curated datasets of protein-ligand binding affinities (PDBbind) and protein-protein interaction ΔΔG (SKEMPI 2.0), used for heteroscedastic regression tasks. |

Troubleshooting Guides & FAQs

Q1: My model shows high confidence (e.g., >90%) on a novel sequence from a different fold, but the prediction is wrong. What is happening and how can I diagnose it? A: This is the core symptom of the calibration gap on Out-of-Distribution (OOD) data: the model's confidence scores do not reflect its actual accuracy on novel sequences. To diagnose:

- Run an OOD Detection Test: Compute the model's prediction entropy, or its built-in confidence score (e.g., pLDDT from AlphaFold2 or ESMFold, or pTM), on a held-out set of known OOD sequences (e.g., from a different CATH/SCOP fold).

- Create a Reliability Diagram: Bin your model's confidence scores (e.g., 0-10%, 10-20%, etc.) and plot the average confidence in each bin against the actual accuracy of predictions in that bin. A perfectly calibrated model has points on the diagonal. On OOD data, points typically fall well below the diagonal, indicating overconfidence.

- Check Table 1: Compare your model’s Expected Calibration Error (ECE) on in-distribution vs. OOD test sets.

Table 1: Typical Calibration Error Metrics for Protein Models on Different Data Types

| Data Type | Model Confidence (Avg.) | Actual Accuracy (Avg.) | Expected Calibration Error (ECE) |
|---|---|---|---|
| In-Distribution Test Set | 0.89 | 0.87 | 0.02 - 0.04 |
| Novel Fold (OOD) Set | 0.82 | 0.31 | 0.35 - 0.55 |
| Designed/Synthetic Set | 0.78 | 0.24 | 0.40 - 0.60 |

Q2: What experimental protocol can I use to quantitatively measure model calibration on my custom OOD dataset? A: Follow this protocol to compute calibration metrics. Protocol: Quantifying Calibration Error

- Dataset Preparation: Curate three datasets: (A) In-Distribution validation set, (B) Hold-out test set from your target distribution, (C) Novel OOD set (e.g., synthetic proteins, different organism proteome).

- Model Inference: Run your model (e.g., AlphaFold2, ESMFold, RosettaFold) on all sequences in each dataset. Extract per-residue or per-structure confidence metrics (pLDDT, pTM).

- Accuracy Calculation: For each prediction, compute the accuracy metric (e.g., TM-score against a known experimental structure for fold-level assessment).

- Binning and ECE Calculation: Group predictions into M = 10 bins based on their predicted confidence. For each bin m, calculate:

- Average Confidence: `conf(m)`

- Average Accuracy: `acc(m)`

- Weight: `|B_m| / N` (fraction of samples in the bin)

- Compute ECE: `ECE = Σ_{m=1}^{M} weight(m) * |acc(m) - conf(m)|`

- Visualization: Plot the reliability diagram.
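The binning, ECE, and visualization steps can be sketched as one helper. It returns the per-bin values needed for the reliability diagram together with the ECE. This NumPy version assumes confidence and accuracy have both been scaled to [0, 1]. Two example pairings:

- pTM paired with TM-score;
- mean pLDDT / 100 paired with lDDT.

`reliability_table` is a hypothetical name.

```python
import numpy as np


def reliability_table(confidence, accuracy, n_bins=10):
    """Per-bin (bin index, mean confidence, mean accuracy, count) plus ECE.

    confidence: values in [0, 1], e.g. pTM or pLDDT / 100 (assumed scaling).
    accuracy:   values in [0, 1], e.g. TM-score, or a 0/1 correctness indicator.
    """
    conf = np.asarray(confidence, dtype=float)
    acc = np.asarray(accuracy, dtype=float)
    # Equal-width bins; clipping puts confidence == 1.0 into the last bin
    bin_ids = np.clip((conf * n_bins).astype(int), 0, n_bins - 1)
    rows, ece = [], 0.0
    for m in range(n_bins):
        in_bin = bin_ids == m
        if not in_bin.any():
            continue
        c, a, n = conf[in_bin].mean(), acc[in_bin].mean(), int(in_bin.sum())
        rows.append((m, c, a, n))
        ece += (n / conf.size) * abs(a - c)   # weight(m) * |acc(m) - conf(m)|
    return rows, float(ece)
```

Plot the mean accuracy against the mean confidence for each bin, with the diagonal as reference. Because the ECE is a weighted average of per-bin gaps, it can never be smaller than the gap between overall average confidence and overall average accuracy.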

Q3: Are there specific signaling pathways or protein families where this overconfidence is most problematic for drug discovery? A: Yes, models are often overconfident on rapidly evolving pathogen proteins (e.g., viral envelope proteins, antibiotic resistance enzymes) and human proteins with low homology to well-characterized families (e.g., orphan GPCRs, cancer-testis antigens). Overconfidence here can lead to wasted resources on incorrect virtual screens.

Diagram: Overconfidence in Pathogen Protein Modeling

Q4: What post-hoc methods can I apply to better calibrate uncertainty estimates for OOD detection? A: Several methods can be applied after model training:

- Temperature Scaling: Learn a single parameter `T` that rescales the logits before the softmax: `scaled_confidence = softmax(logits / T)`. A `T > 1` softens overconfident predictions. Optimize `T` on a separate validation set.

- Ensemble Methods: Run multiple models (e.g., with different random seeds, submodels) and use the variance of predictions as an uncertainty metric. High variance indicates higher uncertainty.

- Conformal Prediction: Use a hold-out calibration set to compute prediction sets with a user-defined marginal coverage probability (e.g., the true label falls inside the set for 90% of inputs). The guarantee assumes that calibration and test data are exchangeable, so it does not automatically hold under distribution shift; on OOD inputs, verify coverage empirically.
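Split conformal prediction for a classifier is short enough to show in full. The sketch below uses the common nonconformity score `1 - p(true class)` and the finite-sample quantile rank `ceil((n + 1)(1 - alpha))`. It assumes calibration probabilities as an `(n, K)` array with integer labels. The function names are illustrative.

```python
import numpy as np


def conformal_threshold(cal_probs, cal_labels, alpha=0.1):
    """Split-conformal threshold on the score s = 1 - p(true class).

    Coverage >= 1 - alpha holds marginally only if calibration and test
    points are exchangeable, which OOD inputs violate.
    """
    cal_probs = np.asarray(cal_probs, dtype=float)
    cal_labels = np.asarray(cal_labels)
    n = len(cal_labels)
    scores = 1.0 - cal_probs[np.arange(n), cal_labels]
    k = int(np.ceil((n + 1) * (1 - alpha)))   # rank of the conformal quantile
    if k > n:
        return np.inf  # too few calibration points: every class is included
    return float(np.sort(scores)[k - 1])


def prediction_sets(test_probs, qhat):
    # Include every class whose score 1 - p falls within the threshold
    return [np.flatnonzero(1.0 - p <= qhat) for p in np.asarray(test_probs, dtype=float)]
```

Sets can be empty or contain several classes. Set size on new inputs is informative, but the coverage level itself is only guaranteed for inputs exchangeable with the calibration data.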

Diagram: Post-Hoc Calibration Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Calibration Research |
|---|---|
| AlphaFold2/ColabFold | Standard model for generating protein structure predictions and associated pLDDT confidence scores. |
| ESMFold | Alternative high-speed model providing pTM global confidence scores for calibration comparison. |
| ProteinMPNN | Protein sequence design tool to generate synthetic, OOD sequences for stress-testing models. |
| CATH/SCOP Database | Curated protein structure classification for defining in-distribution and OOD (novel fold) test sets. |
| TM-score Software | Metric for quantifying structural prediction accuracy (used as ground truth for calibration plots). |
| UniProt Proteomes | Source for extracting novel sequences from under-represented organisms to create OOD benchmarks. |
| PyTorch/TensorFlow | Frameworks for implementing temperature scaling, ensemble methods, and custom loss functions. |

In the research on calibrating uncertainty estimates for out-of-distribution (OOD) protein detection, accurate evaluation of model confidence is paramount. This technical support center details three core metrics—Expected Calibration Error (ECE), Brier Score, and Negative Log-Likelihood (NLL)—used to assess and troubleshoot the calibration of predictive uncertainty in deep learning models. Proper use of these metrics ensures reliable OOD detection, a critical component for robust AI applications in drug discovery and protein engineering.

Troubleshooting Guides & FAQs

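Q1: My model has high accuracy but a very poor (high) ECE. What does this mean, and how can I fix it? A: This indicates a calibration error: your model's confidence does not match its accuracy. Most often the model is overconfident, predicting probabilities near 1.0 even for predictions that turn out to be wrong. Less often it is underconfident, assigning low probabilities to predictions that are usually correct. To troubleshoot: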

- Verify Your ECE Calculation: Ensure you are using a sufficient number of bins (typically 10-15) and that bins are equally spaced in probability space (not equal-sized bins of samples).

- Apply Post-hoc Calibration: Use temperature scaling (a single parameter adjustment on the logits) on your validation set. This is a lightweight and effective fix for modern neural networks.

- Check for Distribution Shift: Evaluate whether your validation/test set has a different distribution from your training set, which typically inflates ECE.

Q2: When should I prioritize Brier Score over NLL, or vice versa, for my OOD protein detection model? A: The choice depends on your primary concern:

- Use Brier Score if your focus is on the overall accuracy of the probability estimates. It penalizes both poor calibration and poor refinement, and because it is bounded, it is more robust to extreme probability values.

- Use NLL if your focus is on the model's likelihood of the data or on the full predictive distribution. Both NLL and the Brier Score are strictly proper scoring rules, but NLL heavily penalizes highly confident incorrect predictions.

- For OOD Detection: NLL is often preferred because OOD samples typically receive a lower likelihood for their true label (higher NLL). Monitoring the distribution of NLL scores for in-distribution vs. OOD samples is a common diagnostic. Note that NLL requires ground-truth labels, so it serves as an evaluation metric rather than a deployable OOD score.

Q3: I implemented temperature scaling, but my NLL got worse. What went wrong? A: This is a common issue. Temperature scaling minimizes NLL (or ECE) on the validation set. If the optimization succeeded, validation NLL cannot be worse than at T = 1. If test-set NLL worsens, check:

- Data Leakage: Ensure the temperature parameter is optimized only on the held-out validation set, not the test set.

- Overfitting to Validation Set: With a very small validation set, the optimized temperature can be unstable. Use cross-validation or a larger validation set.

- Implementation Error: The temperature `T` scales the logits `z` as `z / T`. A `T > 1` decreases confidence (flattens probabilities), while `T < 1` increases confidence. Verify the optimization finds a sensible `T` (often between 1.0 and 3.0).

Q4: How do I interpret a Brier Score? What is a "good" value? A: The Brier Score is a mean squared error, so lower is better, and a perfect model scores 0.

- Binary formulation (squared error on the positive-class probability): the worst possible score is 1.0. A model that predicts 0.5 for every sample scores 0.25, which is the baseline of an uninformative predictor.
- Multiclass formula in Table 1 (summed over all K classes): scores range from 0 to 2.

Interpretation is always relative to a baseline (e.g., the uncalibrated model or a random classifier). Whether a given reduction (e.g., 0.01) is meaningful depends on that baseline and on the size of your test set.

Metric Comparison & Data Presentation

Table 1: Core Metrics for Uncertainty Calibration Evaluation

| Metric | Formula (Multiclass) | Range | Interpretation (Lower is Better) | Sensitivity |
|---|---|---|---|---|
| Expected Calibration Error (ECE) | \( \sum_{m=1}^{M} \frac{\lvert B_m \rvert}{N} \lvert \text{acc}(B_m) - \text{conf}(B_m) \rvert \) | [0, 1] | Measures the average gap between accuracy and confidence across probability bins. | Calibration only. |
| Brier Score | \( \frac{1}{N} \sum_{i=1}^{N} \sum_{k=1}^{K} (y_{i,k} - \hat{p}_{i,k})^2 \) | [0, 2] for K classes | Measures the mean squared error of the probability estimates (calibration + refinement). | Calibration & refinement. Strictly proper. Robust. |
| Negative Log-Likelihood (NLL) | \( -\frac{1}{N} \sum_{i=1}^{N} \sum_{k=1}^{K} y_{i,k} \log(\hat{p}_{i,k}) \) | [0, ∞) | Measures the average negative log of the predicted probability assigned to the true label. | Strictly proper. Sensitive to tails. |
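The Brier Score and NLL formulas above translate directly to NumPy. The sketch assumes an `(N, K)` array of predicted probabilities and integer labels. The `eps` clipping is an implementation choice to avoid `log(0)`, and the function names are hypothetical.

```python
import numpy as np


def brier_score(probs, labels):
    """Multiclass Brier score: mean over samples of sum_k (y_ik - p_ik)^2, range [0, 2]."""
    probs = np.asarray(probs, dtype=float)
    onehot = np.eye(probs.shape[1])[labels]      # y_ik as one-hot rows
    return float(np.mean(np.sum((onehot - probs) ** 2, axis=1)))


def nll(probs, labels, eps=1e-12):
    """Mean negative log-probability assigned to the true class."""
    probs = np.asarray(probs, dtype=float)
    p_true = probs[np.arange(len(labels)), labels]
    return float(-np.mean(np.log(np.clip(p_true, eps, 1.0))))
```

For a binary problem, this summed-over-classes Brier Score is exactly twice the single-probability binary version. A constant 0.5 prediction therefore scores 0.5 here and 0.25 in the binary formulation.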

Table 2: Typical Impact of Common Issues on Evaluation Metrics

| Issue | Expected Impact on ECE | Expected Impact on Brier Score | Expected Impact on NLL |
|---|---|---|---|
| Overconfidence | Increased | Slightly Increased | Greatly Increased |
| Underconfidence | Increased | Increased | Increased |
| Label Noise | Increased | Greatly Increased | Greatly Increased |
| OOD Samples in Test Set | Unpredictable, often Increased | Increased | Greatly Increased |

Experimental Protocols

Protocol 1: Computing Expected Calibration Error (ECE)

- Input: Model predictions (probability vectors \(\hat{p}_i\)) and true labels \(y_i\) for \(N\) test samples.

- Partition: Sort predictions by their maximum confidence \(\max(\hat{p}_i)\) and partition them into \(M\) equal-interval bins (e.g., M = 10: [0.0, 0.1), ..., [0.9, 1.0]).

- Calculate per bin: For each bin \(B_m\), compute:

- Bin Accuracy: \(\text{acc}(B_m) = \frac{1}{\lvert B_m \rvert} \sum_{i \in B_m} \mathbf{1}(\hat{y}_i = y_i)\)

- Bin Confidence: \(\text{conf}(B_m) = \frac{1}{\lvert B_m \rvert} \sum_{i \in B_m} \max(\hat{p}_i)\)

- Compute ECE: \(\text{ECE} = \sum_{m=1}^{M} \frac{\lvert B_m \rvert}{N} \lvert \text{acc}(B_m) - \text{conf}(B_m) \rvert\)
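Protocol 1 maps onto a short NumPy function. This sketch uses equal-width bins, with the last bin closed at 1.0 as in the protocol. The function name is hypothetical; libraries such as netcal offer maintained implementations.

```python
import numpy as np


def expected_calibration_error(probs, labels, n_bins=10):
    """ECE with M equal-width bins over max-softmax confidence."""
    probs = np.asarray(probs, dtype=float)
    labels = np.asarray(labels)
    conf = probs.max(axis=1)                      # max(p_i)
    correct = probs.argmax(axis=1) == labels      # 1(y_hat_i == y_i)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    ece = 0.0
    for m in range(n_bins):
        lo, hi = edges[m], edges[m + 1]
        # Bins are [lo, hi), except the last one, which is closed: [0.9, 1.0]
        upper = conf < hi if m < n_bins - 1 else conf <= hi
        in_bin = (conf >= lo) & upper
        if in_bin.any():
            gap = abs(correct[in_bin].mean() - conf[in_bin].mean())
            ece += in_bin.mean() * gap            # |B_m| / N weight
    return float(ece)
```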

Protocol 2: Temperature Scaling for Calibration

- Train Model: Train your classification neural network as usual.

- Separate Validation Set: Reserve a calibration set (a held-out split of the in-distribution data, or a separate validation set) that was not used for training.

- Optimize Temperature:

- Introduce a single scalar parameter \(T > 0\).

- For all logits \(z_i\) in the calibration set, compute scaled probabilities: \(\hat{p}_i = \text{Softmax}(z_i / T)\).

- Optimize \(T\) by minimizing the NLL (or ECE) on the calibration set, using a held-out portion or via cross-validation.

- Apply: Use the optimized \(T\) to scale logits of all future predictions (test time, OOD evaluation).
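Because only one scalar is fitted, the optimization step can be done without a deep learning framework. The NumPy sketch below makes a few choices that are assumptions:

- it parameterizes `T = exp(w)` to keep `T` positive;
- it searches `w` with a golden-section search, which works because the NLL is convex in `1/T` and hence unimodal in `log T`;
- it uses the search range `[exp(-3), exp(3)]` and hypothetical helper names.

With PyTorch, the same fit is commonly done with `torch.optim.LBFGS` on a single parameter.

```python
import numpy as np


def log_softmax(z):
    z = z - z.max(axis=1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=1, keepdims=True))


def nll_at_temperature(logits, labels, T):
    """Mean NLL of the true labels after scaling logits by 1 / T."""
    lp = log_softmax(logits / T)
    return float(-lp[np.arange(len(labels)), labels].mean())


def fit_temperature(logits, labels, log_t_range=(-3.0, 3.0), iters=100):
    """Golden-section search for T = exp(w) minimizing calibration-set NLL."""
    logits = np.asarray(logits, dtype=float)
    labels = np.asarray(labels)

    def f(w):
        return nll_at_temperature(logits, labels, np.exp(w))

    a, b = log_t_range
    g = (np.sqrt(5.0) - 1.0) / 2.0              # inverse golden ratio, ~0.618
    c, d = b - g * (b - a), a + g * (b - a)
    for _ in range(iters):
        if f(c) < f(d):                         # minimum lies in [a, d]
            b, d = d, c
            c = b - g * (b - a)
        else:                                   # minimum lies in [c, b]
            a, c = c, d
            d = a + g * (b - a)
    return float(np.exp((a + b) / 2.0))
```

If the fitted T sits at either end of the search range, widen the range or inspect the calibration set. For example, if every calibration prediction is correct, the NLL keeps decreasing as T approaches 0.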

Visualizations

Title: ECE Calculation Workflow

Title: Model Calibration Process & Outcome

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Uncertainty Calibration Experiments

| Item | Function in Experiment |
|---|---|
| Deep Learning Framework (PyTorch/TensorFlow) | Provides the core environment for building, training, and evaluating neural network models for protein classification/OOD detection. |
| Uncertainty Baselines Library | Offers standardized implementations of calibration metrics (ECE, NLL), calibration methods (temperature scaling), and OOD detection benchmarks. |
| Protein Sequence Embedding Model (e.g., ESM-2, ProtBERT) | A pre-trained transformer that converts raw protein amino acid sequences into informative numerical feature vectors (embeddings). |
| Curated Protein Dataset (e.g., Pfam, SwissProt) | A high-quality, labeled dataset of protein families/classes used for training the in-distribution classification model. |
| OOD Protein Dataset (e.g., remote homologs, synthetic sequences) | A held-out dataset with sequences deliberately chosen to be distinct from the training distribution, used for evaluating OOD detection performance. |
| Calibration Validation Set | A stratified split from the in-distribution training data, used exclusively for tuning calibration parameters (like temperature T). |

Troubleshooting Guides & FAQs

FAQ 1: I am getting poor OOD detection performance on my custom dataset split. What could be the issue?

- Answer: This is often due to data leakage or insufficient domain gap between your In-Distribution (ID) and Out-of-Distribution (OOD) sets. Ensure your splitting protocol strictly separates proteins at the appropriate homology level. For structural datasets like CATH/SCOPe, splits must be based on fold or superfamily, not random. Verify that no OOD protein shares significant sequence similarity (>25-30% identity) with any ID protein. Use tools like MMseqs2 for clustering check.
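The sequence-similarity check can be automated by parsing a search of the OOD set against the ID set. The sketch below assumes BLAST+ tabular output (`-outfmt 6`) with its default 12 columns: query ID, subject ID, percent identity, and so on, ending with E-value and bit score. It flags any pair above 25% identity or below an E-value of 1e-5. The thresholds mirror this guide, and the function name is hypothetical.

```python
def find_leaks(blast_tab_lines, max_identity=25.0, max_evalue=1e-5):
    """Flag OOD-vs-ID hits that violate the split criteria.

    Assumes BLAST+ -outfmt 6 default columns: query, subject, pident,
    length, mismatch, gapopen, qstart, qend, sstart, send, evalue, bitscore.
    """
    leaks = []
    for line in blast_tab_lines:
        if not line.strip() or line.startswith("#"):
            continue  # skip blank and comment lines
        cols = line.rstrip("\n").split("\t")
        query, subject = cols[0], cols[1]
        pident, evalue = float(cols[2]), float(cols[10])
        if pident > max_identity or evalue < max_evalue:
            leaks.append((query, subject, pident, evalue))
    return leaks
```

MMseqs2 `easy-search` writes a similar 12-column layout by default, but its identity column may be a fraction rather than a percentage, so check before reusing this parser. Identity over very short alignments is noisy, so filtering on alignment length or coverage as well is advisable.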

FAQ 2: When using CATH or SCOPe, at which hierarchical level should I split data to create a meaningful OOD detection benchmark?

- Answer: The appropriate level depends on your research question. For strict, fold-level OOD detection, hold out entire folds (the Topology level in CATH, the Fold level in SCOPe) or, for an even larger shift, entire classes. For a more granular, superfamily-level challenge, hold out superfamilies within a shared fold. The table below summarizes standard practices.

FAQ 3: How do I handle chain selection and pre-processing for proteins with multiple domains in CATH?

- Answer: Proteins with multiple discontinuous domains pose a challenge. Use the official domain boundary definitions distributed with the CATH release rather than ad hoc chopping. Treat each domain as an independent sample if your model is domain-based. For whole-chain models, decide whether to use the largest domain or the whole chain; apply that choice consistently and document it clearly, as it affects OOD difficulty.

FAQ 4: My uncertainty scores are inverted: high for ID and low for OOD. How can I debug this?

- Answer: This inverse calibration suggests your model's uncertainty estimator is failing. First, verify your dataset splits. Then, check if your model is severely overfitting to ID data, losing general discriminative power. Implement temperature scaling or Monte Carlo Dropout during inference for neural networks to improve uncertainty estimation. Ensure you are using a proper scoring function like Negative Log Likelihood or Brier Score for calibration assessment.

FAQ 5: Are there standardized custom splits for CATH/SCOPe available to compare against other studies?

- Answer: Yes, several recent papers provide predefined splits. For CATH, splits from the ProteinWorkshop benchmark or FlatSITE study are commonly used. For SCOPe, check the splits used in the TM-Vec or Foldseek papers. Always cite the source of the splits to ensure reproducibility. See the protocol below for creating your own.

| Dataset | Primary Use | Recommended OOD Split Level | Approximate Size (Typical) | Data Leakage Pitfall |
|---|---|---|---|---|
| CATH v4.3 | Protein Structure Classification | Hold-out at Fold (F) level | ~4,800 folds, ~130,000 domains | Domains from same protein chain in different sets. |
| SCOPe 2.07 | Structural & Evolutionary Relationship | Hold-out at Fold or Superfamily (SF) level | ~1,200 folds, ~40,000 domains | Including similar folds (e.g., Rossmann-like) across sets. |
| Custom (PDB) | Tailored to specific hypothesis | Based on sequence identity (<25%) or function | Variable | Insufficient sequence identity threshold during clustering. |
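A fold-level hold-out for CATH can be built from domain classification codes alone. The sketch assumes a dictionary from domain ID to the four-level CATH code ("C.A.T.H"). It holds out whole topologies ("C.A.T"), so no fold appears on both sides. The input format, fraction, and function name are assumptions.

```python
import random


def fold_level_split(domain_to_cath, ood_fraction=0.2, seed=0):
    """Hold out whole CATH topologies (folds) so no fold spans ID and OOD.

    domain_to_cath: dict mapping a domain ID to its CATH code "C.A.T.H"
    (e.g. "3.40.50.300"); the fold is the first three levels, "C.A.T".
    """
    fold_of = {d: ".".join(code.split(".")[:3]) for d, code in domain_to_cath.items()}
    folds = sorted(set(fold_of.values()))
    rng = random.Random(seed)
    n_ood = max(1, round(ood_fraction * len(folds)))
    ood_folds = set(rng.sample(folds, n_ood))
    id_domains = sorted(d for d, f in fold_of.items() if f not in ood_folds)
    ood_domains = sorted(d for d, f in fold_of.items() if f in ood_folds)
    return id_domains, ood_domains, ood_folds
```

This split alone does not prevent the pitfalls listed in the table. Domains from the same chain can still land in different sets, and structurally similar folds may remain on both sides. Follow up with a chain-level check and a sequence-similarity check.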

Experimental Protocols

Protocol 1: Creating a Custom OOD Split from PDB

- Source Data: Download a non-redundant set of protein structures from the PDB.

- Cluster: Use MMseqs2 (`easy-cluster`) with a strict sequence identity threshold (e.g., 25%) to create clusters.

- Define ID/OOD: Select clusters covering a specific functional class (e.g., kinases) as ID. Clusters from a different, well-separated class (e.g., GPCRs) or with no annotated similarity become OOD.

- Validate Gap: Perform all-vs-all BLAST between ID and OOD sets; confirm no significant hits (E-value < 1e-5).

- Public Availability: Deposit the list of PDB IDs and chains for each split in a repository (e.g., GitHub).
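The clustering and ID/OOD assignment steps can be sketched in Python. The code assumes the two-column cluster table that MMseqs2 `easy-cluster` writes (`<prefix>_cluster.tsv`, one "representative<TAB>member" pair per line). It also assumes a hypothetical per-sequence functional annotation. Whole clusters are assigned to one side, and clusters that mix classes or lack annotation are dropped.

```python
from collections import defaultdict


def read_clusters(tsv_lines):
    """Parse MMseqs2 cluster TSV lines ("representative<TAB>member") into clusters."""
    clusters = defaultdict(list)
    for line in tsv_lines:
        if line.strip():
            rep, member = line.rstrip("\n").split("\t")[:2]
            clusters[rep].append(member)
    return dict(clusters)


def split_by_cluster(clusters, annotation, id_class, ood_classes):
    """Assign whole clusters to ID or OOD; mixed or unannotated clusters are dropped."""
    id_set, ood_set = [], []
    for members in clusters.values():
        labels = {annotation.get(m) for m in members}   # None = unannotated
        if labels == {id_class}:
            id_set.extend(members)
        elif labels <= set(ood_classes):
            ood_set.extend(members)
    return sorted(id_set), sorted(ood_set)
```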

Protocol 2: Standardized Evaluation of OOD Detection Performance

- Train Model: Train your protein model (e.g., a graph neural network or language model) on the ID training set.

- Generate Scores: On the ID test set and the OOD set, compute an uncertainty score (e.g., predictive entropy, variance, softmax max probability).

- Compute Metric: Treat OOD detection as a binary classification task. Calculate standard metrics:

- AUROC (Area Under Receiver Operating Characteristic Curve): Ideal is 1.0, random is 0.5.

- AUPR (Area Under Precision-Recall Curve): More informative for imbalanced sets.

- FPR at 95% TPR: The False Positive Rate when True Positive Rate is 95%. Lower is better.

- Assess Calibration: Use Expected Calibration Error (ECE) to measure if the model's confidence aligns with its accuracy, separately on ID and OOD data.

Visualization: OOD Benchmark Creation Workflow

Title: Workflow for Creating an OOD Protein Benchmark

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in OOD Protein Detection Research |
|---|---|
| MMseqs2 | Fast clustering of protein sequences to define non-redundant sets and ensure no homology between ID and OOD splits. |
| DSSP | Calculates secondary structure and solvent accessibility features from 3D coordinates, used as input for structure-based models. |
| PyMOL/BioPython | For visualizing and programmatically processing PDB files, checking domain boundaries, and rendering OOD examples. |
| ESM-2/ProtTrans | Pre-trained protein language models used as base feature extractors or for generating embeddings for sequence-based OOD detection. |
| AlphaFold DB | Source of high-quality predicted structures for novel protein sequences, expanding potential OOD test sets. |
| CALIBER (or similar) | Toolkit for calibrating neural network uncertainty estimates (e.g., via temperature scaling) critical for reliable OOD scores. |
| RDKit | (For small-molecule binding sites) Can be used to compute ligand-based features for functional OOD detection tasks. |

Practical Guide: Methods to Calibrate Uncertainty for OOD Protein Detection

Troubleshooting Guides & FAQs

Q1: After applying Temperature Scaling to my protein language model's logits, my confidence scores are all near 1.0. What went wrong?

A1: This typically indicates an implementation error where the temperature parameter (T) is being multiplied, not divided. The correct operation is scaled_logits = logits / T. Verify your code ensures T > 0. A common best practice is to initialize T=1.0 and optimize via gradient descent on a validation set, constraining T to be positive (e.g., by parameterizing it as exp(w)).

Q2: My Platt Scaling (Logistic Regression) model outputs poorly calibrated probabilities, even on the validation set. How should I debug this? A2: Follow this diagnostic protocol:

- Check Feature Dependence: Platt Scaling uses the model's original uncalibrated score (e.g., the logit or softmax probability of the predicted class) as its only feature. Ensure you are not using the full vector of logits.

- Inspect Label Binarization: For binary OOD detection, your labels should be 1 (In-Distribution, ID) and 0 (Out-of-Distribution, OOD). Confirm they are correctly assigned.

- Prevent Overfitting: Use L2 regularization. The solver (e.g., L-BFGS) must be configured with a positive C value (the inverse of regularization strength). Start with C=1.0 and adjust.

- Validate Data Leakage: The validation set used to fit Platt Scaling must be separate from the test set used for final evaluation.

Q3: Which scaling method is more suitable for large, multi-class protein function prediction models? A3: See the comparative analysis below. Temperature Scaling is generally preferred for multi-class settings due to its simplicity and stability.

Table 1: Comparison of Temperature vs. Platt Scaling for Protein Models

| Aspect | Temperature Scaling | Platt Scaling |
|---|---|---|
| Complexity | Single parameter (T). | Two parameters (weight, bias). |
| Risk of Overfitting | Very low. | Higher; requires regularization. |
| Applicability | Multi-class classification. | Primarily binary (ID vs. OOD). |
| Optimization | Negative Log Likelihood (NLL) on validation set. | Logistic regression (maximum likelihood) on validation set. |
| Typical Performance (ECE Reduction)* | 60-80% reduction in Expected Calibration Error. | 50-75% reduction, but can vary. |
| Key Assumption | Miscalibration can be corrected by one global rescaling of the logits, shared by all classes. | The log-odds of the target label are a linear function of the input score (a sigmoidal mapping). |

*Performance based on recent benchmarks (e.g., on DeepFam or UniProt-derived datasets). ECE reduction is relative to the uncalibrated model.

Q4: How do I prepare a proper validation set for calibrating protein model uncertainty? A4:

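- Source: Hold out a portion of your in-distribution training data (e.g., 20% of the sequences in each known protein family), so that every family seen in training is also represented in the validation set. Do not use OOD data for Temperature Scaling; Platt Scaling for ID-vs-OOD detection additionally needs OOD examples (see Protocol 2).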

- Size: Several hundred to thousands of samples are typically sufficient.

- Protocol: For each sample in the validation set, you need:

- The model's raw logits.

- The ground-truth class label (for Temperature Scaling) or a binary ID/OOD label (for Platt Scaling).

- Procedure: Train your base model on the training split. Compute logits for the validation split. Use only these logits and labels to optimize the temperature or Platt parameters.
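The split can be sketched with scikit-learn, assuming per-sequence family labels `y` and features `X` (both hypothetical placeholders here, filled with synthetic data). Stratifying on the family label holds out about 20% of each family rather than whole families, so the calibration set stays in-distribution for the classifier.

```python
import numpy as np
from sklearn.model_selection import train_test_split


def split_for_calibration(X, y, calib_fraction=0.2, seed=0):
    """Hold out a stratified calibration split from in-distribution data.

    Stratifying on the family label keeps every family represented in both
    splits. Returns X_train, X_calib, y_train, y_calib.
    """
    return train_test_split(X, y, test_size=calib_fraction, stratify=y, random_state=seed)


# Usage with synthetic stand-in data: 5 hypothetical families, 100 sequences each.
rng = np.random.default_rng(0)
X = rng.normal(size=(500, 16))      # placeholder for sequence embeddings
y = np.repeat(np.arange(5), 100)    # placeholder family labels
X_train, X_calib, y_train, y_calib = split_for_calibration(X, y)
```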

Experimental Protocols

Protocol 1: Implementing Temperature Scaling

Objective: Learn an optimal temperature parameter T to calibrate a multi-class protein classifier. Materials: See "Research Reagent Solutions" below. Method:

- Train Base Model: Train your protein sequence model (e.g., CNN, Transformer) on your ID dataset.

- Generate Validation Logits: Run the trained model on the held-out calibration validation set. Save the logits vector for each sample and its true class label.

- Optimize Temperature:

- Parameterize T = exp(w) to ensure positivity.

- Define the loss function as the Negative Log Likelihood (NLL) over the validation set: L = -∑_i log( softmax(logits_i / T)[true_class_i] ).

- Optimize w using a gradient-based optimizer (e.g., Adam, L-BFGS) for 50-100 iterations.

- Apply: For new predictions, compute calibrated_softmax = softmax(logits / T_optimized).
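Protocol 1 can be sketched in PyTorch as follows, assuming the validation logits and labels are already available as tensors; `fit_temperature` and `calibrated_softmax` are hypothetical helper names. The sketch uses L-BFGS with a strong-Wolfe line search (one of the optimizer options listed above) and parameterizes T = exp(w), so the temperature stays positive and the logits are always divided, never multiplied.

```python
import torch
import torch.nn.functional as F


def fit_temperature(logits, labels, max_iter=100):
    """Temperature Scaling: fit T = exp(w) by minimising validation NLL.

    logits: float tensor (n_samples, n_classes) from the frozen base model.
    labels: long tensor (n_samples,) of true class indices.
    """
    logits = logits.detach()
    w = torch.zeros(1, requires_grad=True)  # T = exp(0) = 1.0 at initialisation
    optimizer = torch.optim.LBFGS([w], lr=1.0, max_iter=max_iter,
                                  line_search_fn="strong_wolfe")

    def closure():
        optimizer.zero_grad()
        temperature = torch.exp(w)  # positive by construction
        # Divide (never multiply) the logits by T; cross_entropy is the mean NLL.
        loss = F.cross_entropy(logits / temperature, labels)
        loss.backward()
        return loss

    optimizer.step(closure)
    return torch.exp(w).item()


def calibrated_softmax(logits, temperature):
    """Apply the fitted temperature to new logits."""
    return torch.softmax(logits / temperature, dim=-1)
```

Typical use is `T = fit_temperature(val_logits, val_labels)` followed by `calibrated_softmax(test_logits, T)`. Because every logit is divided by the same positive T, the predicted class is unchanged; only the confidence values move.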

Protocol 2: Implementing Platt Scaling for OOD Detection

Objective: Fit a logistic regression model to map model confidence scores to calibrated ID probabilities. Materials: See "Research Reagent Solutions" below. Method:

- Create Calibration Set: Construct a dataset containing:

- ID Samples: From your calibration validation set.

- Near-OOD Samples: Optional but recommended. Use phylogenetically distant protein families not in the training set.

- Labels: Assign 1 for ID, 0 for OOD.

- Extract Features: For each sample, compute the base model's maximum softmax confidence: s_i = max(softmax(logits_i)).

- Fit Logistic Regressor: Train a sklearn.linear_model.LogisticRegression model with:

- s_i as the sole feature (reshaped to a 2D array, as scikit-learn expects).

- Strong L2 regularization (e.g., C=0.1 or lower).

- Solver: 'lbfgs'.

- Apply: For a new sample's confidence score s, the calibrated probability of being ID is Platt(s) = σ(a * s + b), where a and b are the learned parameters.
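A minimal scikit-learn sketch of Protocol 2, assuming `logits` holds the base model's outputs for the combined ID/near-OOD calibration set and `is_id` holds the 1/0 labels; the helper names are illustrative, not part of any library.

```python
import numpy as np
from scipy.special import softmax
from sklearn.linear_model import LogisticRegression


def max_softmax_confidence(logits):
    """s_i = max_c softmax(logits_i)[c] for each row of an (n, C) logit array."""
    return softmax(np.asarray(logits, dtype=float), axis=1).max(axis=1)


def fit_platt(scores, is_id, C=0.1):
    """Fit Platt(s) = sigmoid(a * s + b) mapping confidence scores to P(ID).

    scores: (n,) confidence scores; is_id: (n,) labels, 1 = ID, 0 = OOD.
    LogisticRegression applies an L2 penalty by default; C is its inverse strength.
    """
    model = LogisticRegression(C=C, solver="lbfgs")
    model.fit(np.asarray(scores, dtype=float).reshape(-1, 1), is_id)  # sole feature as 2D
    return model


def platt_probability(model, scores):
    """Calibrated P(ID); a = model.coef_[0, 0], b = model.intercept_[0]."""
    return model.predict_proba(np.asarray(scores, dtype=float).reshape(-1, 1))[:, 1]
```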

Visualizations

Title: Temperature Scaling Experimental Workflow

Title: Platt Scaling Transformation Logic

Research Reagent Solutions

Table 2: Essential Materials for Calibration Experiments

| Item | Function & Description |
|---|---|
| Curated Protein Dataset (e.g., Pfam, UniProt) | In-distribution (ID) data for training and calibrating the base model. Must have clear family/function labels. |
| Hold-out Validation Set | A subset of ID data, not used in training, dedicated for learning temperature T or Platt parameters. |
| Out-of-Distribution (OOD) Benchmark Set | Sequences from distant folds, synthetic proteins, or different kingdoms (e.g., viral vs. human) to evaluate OOD detection. |
| Deep Learning Framework (PyTorch/TensorFlow/JAX) | For training the base protein model and extracting logits. |
| Optimization Library (e.g., scipy.optimize, sklearn) | To minimize NLL for Temperature Scaling or fit LogisticRegression for Platt Scaling. |
| Calibration Metric Calculator (ECE, AUROC) | Code to compute Expected Calibration Error (for ID) and Area Under ROC Curve (for OOD detection). |
| Regularized Logistic Regression Model | Pre-configured with L2 penalty to prevent overfitting during Platt Scaling. |

Troubleshooting & FAQ Center

Frequently Asked Questions

Q1: During evaluation, my MC Dropout model's predictive entropy remains low even for clearly Out-of-Distribution (OOD) protein sequences. What could be the cause? A: This is often due to overconfident logits. Apply temperature scaling to the logits (dividing them by T before the softmax) before calculating the entropy. Use a validation set of known OOD samples to tune the temperature parameter (T > 1). Furthermore, ensure you are using enough stochastic forward passes (e.g., 50-100, not just 10) during inference to properly approximate the predictive distribution.

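Q2: My Variational Inference (VI) model fails to converge, with the KL divergence term exploding. How can I stabilize training? A: An exploding KL term usually means the KL penalty is overwhelming the data-fit term early in training. Implement KL annealing: gradually increase the weight of the KL divergence term in the ELBO loss over the first several epochs (e.g., from 0 to 1). Also check that the KL term is scaled consistently with the likelihood (divided by the number of training examples when the likelihood is a per-example average) and that each σ is parameterized to stay positive (e.g., σ = softplus(ρ)). The "free bits" method, which sets a minimum threshold for the KL per latent variable, targets the opposite failure, "KL collapse", where the KL term shrinks toward zero; it is mainly relevant to latent-variable models such as VAEs.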

Q3: How do I choose between MC Dropout and Bayesian Neural Networks (BNNs) via VI for protein OOD detection? A: The choice involves a trade-off between computational cost and uncertainty quality. MC Dropout is easier to implement as a modification to a deterministic network but may yield less reliable posterior approximations. VI is more principled but computationally heavier. For initial prototyping with large protein language models (e.g., ESM-2), MC Dropout is pragmatic. For final, calibrated models, a purpose-built VI BNN is preferable.

Q4: The uncertainty scores from my Bayesian model do not correlate well with observed error rates on a held-out test set. How can I improve calibration? A: This indicates poor uncertainty calibration. Implement a post-hoc calibration step. Split your in-distribution data into train/calibration sets. Use the calibration set to fit an isotonic regression or a Platt scaling model that maps your uncertainty metric (e.g., predictive variance) to an empirical error probability. This is critical for trustworthy OOD detection.
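One way to implement the isotonic option is scikit-learn's `IsotonicRegression`, sketched here under the assumption that you have a per-sequence uncertainty score and a 0/1 flag marking misclassified calibration examples (`fit_uncertainty_to_error` is a hypothetical helper name). Isotonic regression only assumes that error probability does not decrease as uncertainty grows, a weaker assumption than the sigmoid shape of Platt scaling.

```python
import numpy as np
from sklearn.isotonic import IsotonicRegression


def fit_uncertainty_to_error(uncertainty, is_error):
    """Monotone map from an uncertainty score to the empirical probability of error.

    uncertainty: (n,) scores on the calibration split (e.g., predictive variance).
    is_error: (n,) 1 if the model's prediction was wrong, else 0.
    """
    iso = IsotonicRegression(y_min=0.0, y_max=1.0, increasing=True, out_of_bounds="clip")
    iso.fit(np.asarray(uncertainty, dtype=float), np.asarray(is_error, dtype=float))
    return iso
```

Calling `iso.predict(new_uncertainty)` then returns an estimated error probability for each new sequence.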

Q5: What is a practical way to set a threshold on an uncertainty metric for flagging OOD protein sequences? A: Use the accuracy vs. coverage curve on your in-distribution validation set. Define a target acceptable error rate for in-distribution data (e.g., 5%). Find the uncertainty threshold where the model's error rate on the validation set reaches this target. Sequences with uncertainty above this threshold are flagged as potential OOD. This ensures the threshold is tied to a performance guarantee on known data.
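The threshold search can be sketched with NumPy as follows. `threshold_for_target_error` is a hypothetical helper: it walks the accuracy-vs-coverage curve from the most to the least confident validation samples and keeps the largest coverage whose error rate stays at or below the target.

```python
import numpy as np


def threshold_for_target_error(uncertainty, is_error, target_error=0.05):
    """Uncertainty threshold at the largest coverage with error rate <= target.

    Samples with uncertainty above the returned value would be flagged as
    potential OOD. Returns None if no coverage level meets the target.
    """
    u = np.asarray(uncertainty, dtype=float)
    err = np.asarray(is_error, dtype=float)
    order = np.argsort(u, kind="stable")                    # most confident first
    cum_err = np.cumsum(err[order]) / np.arange(1, len(u) + 1)  # error rate per coverage
    ok = np.nonzero(cum_err <= target_error)[0]
    if ok.size == 0:
        return None                                         # target not reachable
    return float(u[order][ok[-1]])                          # largest qualifying coverage
```

Ties at the threshold value are not handled specially in this sketch.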

Experimental Protocols

Protocol 1: Calibrating MC Dropout for Protein Sequence Classification

- Model: A standard deep neural network (e.g., CNN or Transformer) with Dropout layers inserted before every weight layer.

- Training: Train as a standard deterministic model. Do not reduce dropout rate at train time.

- Inference (MC Sampling): For each input protein sequence, perform T=50 forward passes with dropout active. Collect the T softmax probability vectors.

- Uncertainty Quantification: Calculate the mean softmax vector (predictive mean). Compute the predictive entropy: H = -∑_c p_c log(p_c), where p_c is the mean probability for class c.

- Calibration: Fit a temperature scalar to the logits using a validation set (a calibration parameter, distinct from the number of passes T above). Divide the logits by this temperature in every MC forward pass.
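A PyTorch sketch of the inference and uncertainty steps, assuming the model uses standard `nn.Dropout` modules and returns logits (function names are hypothetical). Only the dropout layers are switched back to training mode, so other layers such as batch normalization keep their inference behavior; the calibration temperature is passed separately from the number of passes.

```python
import torch
import torch.nn as nn


def enable_mc_dropout(model):
    """Eval mode everywhere except nn.Dropout layers, which stay stochastic."""
    model.eval()
    for module in model.modules():
        if isinstance(module, nn.Dropout):
            module.train()


@torch.no_grad()
def mc_dropout_predict(model, x, n_passes=50, temperature=1.0):
    """Mean softmax and predictive entropy over n_passes stochastic forward passes."""
    enable_mc_dropout(model)
    probs = torch.stack(
        [torch.softmax(model(x) / temperature, dim=-1) for _ in range(n_passes)]
    )                                                    # (n_passes, batch, classes)
    mean_probs = probs.mean(dim=0)                       # predictive mean
    entropy = -(mean_probs * torch.log(mean_probs.clamp_min(1e-12))).sum(dim=-1)
    return mean_probs, entropy
```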

Protocol 2: Implementing Mean-Field Variational Inference for a BNN

- Model Definition: For each network weight wi, define a variational posterior q(wi | θi) as a Gaussian N(μi, σi²). The prior p(wi) is a zero-mean Gaussian.

- Loss Function: Maximize the Evidence Lower Bound (ELBO): L(θ) = E_q[log p(D|w)] - β * KL(q(w|θ) || p(w)). The β term can be annealed.

- Reparameterization Trick: Sample via ε ~ N(0,1), wi = μi + σ_i * ε to allow gradient backpropagation through the sampling operation.

- Training: Use stochastic gradient descent on the variational parameters {μi, σi}.

- Inference: Sample multiple weight instantiations from the trained q(w|θ) to approximate the predictive distribution.
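A compact PyTorch sketch of Protocol 2, assuming a fully connected model and the parameterization σ = softplus(ρ) (a common choice, not one prescribed above). The KL term is the closed form for two Gaussians, scaled by 1/N for minibatch training, and `kl_weight` implements linear β annealing; all class and function names are hypothetical.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class BayesLinear(nn.Module):
    """Mean-field linear layer: q(w) = N(mu, sigma^2), prior p(w) = N(0, prior_std^2)."""

    def __init__(self, in_features, out_features, prior_std=1.0):
        super().__init__()
        self.w_mu = nn.Parameter(torch.randn(out_features, in_features) * 0.05)
        self.w_rho = nn.Parameter(torch.full((out_features, in_features), -5.0))
        self.b_mu = nn.Parameter(torch.zeros(out_features))
        self.b_rho = nn.Parameter(torch.full((out_features,), -5.0))
        self.prior_std = prior_std

    def forward(self, x):
        # Reparameterization trick: w = mu + sigma * eps, sigma = softplus(rho) > 0.
        w_sigma, b_sigma = F.softplus(self.w_rho), F.softplus(self.b_rho)
        w = self.w_mu + w_sigma * torch.randn_like(w_sigma)
        b = self.b_mu + b_sigma * torch.randn_like(b_sigma)
        return F.linear(x, w, b)

    def kl(self):
        """Closed-form KL(q || p), summed over all weights and biases."""
        total = 0.0
        for mu, rho in ((self.w_mu, self.w_rho), (self.b_mu, self.b_rho)):
            sigma = F.softplus(rho)
            total = total + (
                torch.log(self.prior_std / sigma)
                + (sigma**2 + mu**2) / (2 * self.prior_std**2)
                - 0.5
            ).sum()
        return total


def elbo_loss(model, x, y, n_train, beta):
    """Negative ELBO per example: mean NLL + beta * KL / n_train."""
    nll = F.cross_entropy(model(x), y)
    kl = sum(m.kl() for m in model.modules() if isinstance(m, BayesLinear))
    return nll + beta * kl / n_train


def kl_weight(epoch, warmup_epochs=10):
    """Linear KL annealing: beta ramps from 0 to 1 over warmup_epochs."""
    return min(1.0, epoch / warmup_epochs)
```

At inference, average the softmax outputs over several forward passes; each call to the model draws a fresh weight sample from q(w|θ).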

Table 1: Comparison of Uncertainty Estimation Methods

| Method | Principle | Computational Overhead | Uncertainty Quality | Ease of Implementation |
|---|---|---|---|---|
| MC Dropout | Approx. VI via Dropout | Low (T forward passes at inference) | Moderate | Very High (keep dropout active at inference) |
| Mean-Field VI | Optimize Parametric Posterior | Moderate-High | Good-High | Moderate (requires reparam trick) |
| Deep Ensembles | Point Estimate Ensemble | High (train N models) | High | High (trivial but costly) |
| Stochastic VI | Scalable VI for Big Data | Moderate | Good | Complex |

Table 2: Typical OOD Detection Performance Metrics (Example Benchmark)

| Model (on CATH vs. Novel Fold) | AUROC | AUPR | FPR@95%TPR | Threshold (Entropy) |
|---|---|---|---|---|
| Deterministic CNN | 0.78 | 0.65 | 0.41 | 0.87 |
| + MC Dropout (T=30) | 0.86 | 0.77 | 0.28 | 1.15 |
| + MC Dropout + Temp Scaling | 0.91 | 0.84 | 0.19 | 1.02 |
| BNN via MFVI | 0.93 | 0.88 | 0.15 | 0.95 |

Visualizations

Title: MC Dropout Inference & Calibration Workflow

Title: Variational Inference Training Loop for BNNs

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Bayesian DL for Protein OOD |
|---|---|
| JAX/NumPyro | Probabilistic programming framework ideal for flexible, high-performance implementation of VI and MCMC for BNNs. |
| PyTorch with Pyro | Deep learning library with a probabilistic programming extension, suitable for prototyping VI models. |
| ESM-2 (Evolutionary Scale Modeling) | Pre-trained protein language model backbone. Bayesian layers can be appended to its embeddings for uncertainty-aware fine-tuning. |
| CATH/SCOPe Datasets | Curated protein structure classification databases. Standard in-distribution datasets for training; novel folds serve as ground-truth OOD test sets. |
| Uncertainty Baselines | Benchmarking suite containing implementations of MC Dropout, Deep Ensembles, SNGP, etc., for fair comparison. |
| TensorFlow Probability | Library for probabilistic reasoning and Bayesian analysis, integrates with TF/Keras for BNN construction. |
| Calibration Metrics (ECE, MCE) | Expected Calibration Error and Maximum Calibration Error. Quantify the gap between predicted confidence and actual accuracy. |

Technical Support Center: Troubleshooting & FAQs

FAQs on Methodology & Theory

Q1: For Out-of-Distribution (OOD) protein detection, should I use Deep Ensembles or Snapshot Ensembles? What are the key practical differences? A: The choice depends on your computational resources and performance needs.

- Deep Ensembles: Train multiple independent models from different random initializations. Superior uncertainty calibration and OOD detection performance but requires N times the computational cost for N models.

- Snapshot Ensembles: Train a single model through a cyclic learning rate schedule, saving snapshots at minima. Provides robust uncertainty at a fraction of the cost, but diversity (and thus uncertainty quality) may be lower than Deep Ensembles.

Table 1: Ensemble Method Comparison for Protein Sequence Classification

| Feature | Deep Ensemble | Snapshot Ensemble |
|---|---|---|
| Training Cost | High (N independent trains) | Low (~1-2x single model cost) |
| Inference Cost | High (N forward passes) | High (N forward passes) |
| Uncertainty Quality | Very High (High model diversity) | High (Moderate diversity) |
| Best for | Final deployment, top performance | Prototyping, resource-constrained research |
| Key Hyperparameter | Number of ensemble members (M) | Cycle length, learning rate range |

Q2: My ensemble's uncertainty scores are not discriminating between in-distribution and OOD protein sequences. What could be wrong? A: This is a common calibration issue. Potential causes and fixes:

- Cause 1: Low Model Diversity. All ensemble members are making similar errors.

- Fix for Deep Ensembles: Ensure weight initialization and data shuffling are truly random. Consider using different architectures or subsets of training data per member.

- Fix for Snapshot Ensembles: Increase the learning rate cycle amplitude to encourage visits to more distant minima.

- Cause 2: Poorly Calibrated Outputs. Softmax probabilities are overconfident.

- Fix: Apply temperature scaling to calibrate the ensemble's predictive distribution. Use a validation set to tune the temperature parameter T, computing each member's probabilities as softmax(logits / T).

- Cause 3: Inadequate OOD Metric. Using only predictive entropy may be insufficient.

- Fix: Use mutual information (MI) across the ensemble. MI captures disagreement and is often more sensitive to OOD data.

MI = Predictive Entropy - Average Entropy of Members.

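The entropy decomposition above can be computed directly from stacked member outputs. A minimal NumPy sketch, assuming the member softmax probabilities are already collected into one array (`ensemble_uncertainty` is a hypothetical helper name):

```python
import numpy as np


def ensemble_uncertainty(member_probs, eps=1e-12):
    """Decompose ensemble uncertainty.

    member_probs: array (n_members, n_samples, n_classes) of softmax outputs.
    Returns (predictive_entropy, expected_entropy, mutual_information), each (n_samples,).
    """
    p = np.asarray(member_probs, dtype=float)
    p_avg = p.mean(axis=0)                                            # P_avg(y|x)
    predictive_entropy = -(p_avg * np.log(p_avg + eps)).sum(axis=-1)  # H[P_avg]
    member_entropy = -(p * np.log(p + eps)).sum(axis=-1)              # H[P_i] per member
    expected_entropy = member_entropy.mean(axis=0)                    # average member entropy
    mutual_information = predictive_entropy - expected_entropy        # disagreement term
    return predictive_entropy, expected_entropy, mutual_information
```

High predictive entropy with near-zero MI means all members agree the input is ambiguous; high MI means the members disagree, which is the signal described above as more sensitive to OOD data.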

Q3: How do I implement an entropy-based OOD detector for protein families using an ensemble? A: Follow this experimental protocol:

- Train Ensemble: Train your chosen ensemble (Deep or Snapshot) on your in-distribution protein dataset (e.g., a specific family or functional class).

- Forward Pass: For a new sequence x, obtain N sets of class probabilities (for C classes) from all ensemble members: {P₁(y|x), ..., Pₙ(y|x)}.

- Calculate Predictive Distribution: Compute the mean probability: P_avg(y|x) = (1/N) * Σᵢ Pᵢ(y|x).

- Compute Entropy: Calculate the predictive entropy: H(y|x) = - Σ_c P_avg(y=c|x) * log P_avg(y=c|x).

- Set Threshold: Using a held-out validation set (in-distribution) and a known OOD set, plot distributions of H(y|x). Determine an optimal threshold τ that maximizes a metric like the F1-score for OOD detection.

- Detect: For a test sequence, if H(y|x) > τ, flag it as OOD.
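The thresholding step can be sketched with scikit-learn's `precision_recall_curve`, treating OOD as the positive class and the entropy as the score (`f1_optimal_threshold` is a hypothetical helper name). Note that scikit-learn counts `score >= threshold` as positive, a boundary convention that differs slightly from the strict H(y|x) > τ rule above.

```python
import numpy as np
from sklearn.metrics import precision_recall_curve


def f1_optimal_threshold(scores_id, scores_ood):
    """Entropy threshold tau maximising F1 for OOD detection (OOD = positive class)."""
    scores = np.concatenate([scores_id, scores_ood])
    labels = np.concatenate([np.zeros(len(scores_id)), np.ones(len(scores_ood))])
    precision, recall, thresholds = precision_recall_curve(labels, scores)
    # precision/recall have one more entry than thresholds; drop the final point.
    p, r = precision[:-1], recall[:-1]
    f1 = 2 * p * r / np.clip(p + r, 1e-12, None)
    return thresholds[np.argmax(f1)]
```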

Title: Entropy-Based OOD Detection Workflow for Proteins

Q4: What are the essential reagents and tools for benchmarking ensemble methods in computational protein research? A: The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Toolkit for Protein Uncertainty Research

| Tool / "Reagent" | Function / Purpose |
|---|---|
| Protein Language Model (e.g., ESM-2, ProtBERT) | Foundation for sequence embeddings. Transfer learning is essential for robust feature extraction. |
| Structured Database (e.g., UniProt, PFAM) | Source of in-distribution training and validation protein families/classes. |
| OOD Benchmark Dataset (e.g., Swiss-Prot vs. TrEMBL splits, remote homology datasets from SCOP) | Controlled "challenge sets" for evaluating OOD detection performance. |
| Deep Learning Framework (PyTorch/TensorFlow) with Uncertainty Libs (Pyro, TensorFlow Probability, Lightning-Bolts) | Infrastructure for building, training, and sampling from probabilistic ensembles. |
| Calibration Metrics Library (e.g., uncertainty-toolbox, netcal) | To compute metrics like Expected Calibration Error (ECE), Brier Score, and OOD detection AUROC. |
| High-Performance Compute (HPC) Cluster or Cloud GPU Instances | Necessary for training large ensembles and conducting rigorous hyperparameter searches. |

Q5: How do I visualize the decision boundaries or uncertainty landscapes of my protein ensemble model? A: Use dimensionality reduction on model embeddings/latent spaces.

- Extract Embeddings: For a set of sequences (mix of in-distribution and OOD), get the final-layer representations from each ensemble member.

- Reduce Dimensionality: Apply UMAP or t-SNE to the average embedding across the ensemble.

- Color Code: Create two overlay plots:

- Plot 1 (Ground Truth): Points colored by their true label (in-distribution class vs. OOD).

- Plot 2 (Model Uncertainty): Points colored by the ensemble's predictive entropy (H(y|x)).
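A sketch of this recipe, assuming the member embeddings have been stacked into one array and using scikit-learn's t-SNE (UMAP, from the separate umap-learn package, could be substituted). Function names and the output path are hypothetical; matplotlib is imported lazily inside the plotting helper so the projection step works without it.

```python
import numpy as np
from sklearn.manifold import TSNE


def ensemble_tsne(member_embeddings, perplexity=30.0, seed=0):
    """2D t-SNE of the ensemble-averaged embedding.

    member_embeddings: (n_members, n_samples, dim) final-layer representations.
    perplexity must be smaller than n_samples.
    """
    mean_emb = np.asarray(member_embeddings, dtype=float).mean(axis=0)
    tsne = TSNE(n_components=2, perplexity=perplexity, random_state=seed)
    return tsne.fit_transform(mean_emb)


def plot_uncertainty_landscape(coords, is_ood, entropy, path="uncertainty_landscape.png"):
    """Two panels: ground truth (ID vs. OOD) and predictive entropy H(y|x)."""
    import matplotlib
    matplotlib.use("Agg")                       # render to a file, no display needed
    import matplotlib.pyplot as plt

    fig, (ax1, ax2) = plt.subplots(1, 2, figsize=(10, 4))
    ax1.scatter(coords[:, 0], coords[:, 1], c=is_ood, cmap="coolwarm", s=8)
    ax1.set_title("Ground truth (ID vs. OOD)")
    sc = ax2.scatter(coords[:, 0], coords[:, 1], c=entropy, cmap="viridis", s=8)
    ax2.set_title("Predictive entropy H(y|x)")
    fig.colorbar(sc, ax=ax2)
    fig.savefig(path, dpi=150)
    plt.close(fig)
```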

Title: Visualizing Ensemble Uncertainty Landscape for Proteins

Technical Support Center

Troubleshooting Guide

Q1: The Mahalanobis distance scores for my in-distribution protein embeddings are not forming a distinct, separable distribution from the OOD proteins. What could be the cause?

A: This is a common issue. The primary causes and solutions are:

- Cause 1: Poor latent space separation. The model's latent space may not be discriminative enough. In-distribution and OOD samples are not well-clustered.

- Solution: Re-evaluate the feature extractor's training. Consider using contrastive or metric learning losses (e.g., triplet loss) during training to improve intra-class compactness and inter-class separation.

- Cause 2: Incorrect covariance matrix estimation. The sample covariance matrix calculated from the training embeddings may be singular or ill-conditioned, especially in high-dimensional latent spaces.

- Solution: Apply regularization. Use the formula: Σ_reg = Σ + λI, where λ is a small positive scalar (e.g., 1e-6) and I is the identity matrix. This ensures invertibility.

- Cause 3: Non-Gaussian in-distribution. The Mahalanobis distance assumption of a Gaussian-distributed in-distribution (ID) manifold may be violated.

- Solution: Consider fitting a Gaussian Mixture Model (GMM) to multiple ID clusters and calculating the minimum Mahalanobis distance to any component. Alternatively, explore non-parametric distance metrics like k-NN distance.
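For the GMM alternative in Cause 3, a minimal scikit-learn sketch, assuming ID embeddings in a NumPy array (helper names and the number of components are illustrative). `reg_covar` adds a constant to each covariance diagonal, playing the role of λI, and the fitted `precisions_` are the per-component inverse covariances used in the distance.

```python
import numpy as np
from sklearn.mixture import GaussianMixture


def fit_id_gmm(id_embeddings, n_components=5, reg=1e-6, seed=0):
    """Fit a full-covariance GMM to ID embeddings; reg_covar acts like lambda * I."""
    gmm = GaussianMixture(n_components=n_components, covariance_type="full",
                          reg_covar=reg, random_state=seed)
    return gmm.fit(id_embeddings)


def min_mahalanobis(gmm, z):
    """Minimum Mahalanobis distance from each row of z to any GMM component."""
    z = np.atleast_2d(np.asarray(z, dtype=float))
    dists = []
    for mean, precision in zip(gmm.means_, gmm.precisions_):
        diff = z - mean
        d2 = np.einsum("nd,de,ne->n", diff, precision, diff)  # (z-mu)^T Sigma^-1 (z-mu)
        dists.append(np.sqrt(np.maximum(d2, 0.0)))
    return np.min(np.stack(dists, axis=1), axis=1)
```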

Q2: When deploying the scoring function, I experience high computational latency. How can I optimize it?

A: The bottleneck is typically the matrix inversion in the distance calculation: d² = (x - μ)^T Σ^(-1) (x - μ).

- Solution 1: Pre-compute and cache. Pre-compute the inverse of the regularized covariance matrix (Σ_reg^(-1)) and the mean vector (μ) from the training set once. Store these for inference.

- Solution 2: Dimensionality reduction. Apply Principal Component Analysis (PCA) to the latent embeddings before calculating distances. This reduces the dimensionality, making the inversion cheaper and potentially denoising the features. Retain components explaining >95% variance.
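Both latency fixes can be combined in one scorer, sketched below under the assumption that embeddings arrive as NumPy arrays; the class name is hypothetical. All matrix work happens once in `fit`: the cached Cholesky factor L of the inverse covariance (Σ_reg⁻¹ = LLᵀ) turns each distance into a vector norm, d = ‖(z − μ)L‖, so scoring a batch is one matrix product. `np.cov` uses the 1/(n−1) normalization.

```python
import numpy as np
from sklearn.decomposition import PCA


class CachedMahalanobis:
    """Mahalanobis scorer with optional PCA; all matrix work happens once in fit()."""

    def __init__(self, reg=1e-6, variance_kept=0.95):
        self.reg = reg
        self.variance_kept = variance_kept          # None disables PCA

    def fit(self, X_train):
        X = np.asarray(X_train, dtype=float)
        self.pca = None
        if self.variance_kept:
            # Keep the components that explain the requested fraction of variance.
            self.pca = PCA(n_components=self.variance_kept, svd_solver="full")
            X = self.pca.fit_transform(X)
        self.mu = X.mean(axis=0)
        cov = np.cov(X, rowvar=False) + self.reg * np.eye(X.shape[1])  # Sigma + lambda*I
        precision = np.linalg.inv(cov)
        # Cholesky factor L with precision = L @ L.T, so d^2 = ||(z - mu) @ L||^2.
        self.whiten = np.linalg.cholesky(precision)
        return self

    def score(self, Z):
        """Mahalanobis distance d (not d^2) for each row of Z."""
        Z = np.asarray(Z, dtype=float)
        if self.pca is not None:
            Z = self.pca.transform(Z)
        return np.linalg.norm((Z - self.mu) @ self.whiten, axis=1)
```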

Q3: How should I set the threshold for classifying a sample as OOD based on the Mahalanobis distance score?

A: There is no universal threshold. You must calibrate it on a separate validation set containing both ID and known OOD samples (e.g., a different protein family).

- Protocol:

- Calculate Mahalanobis distances for the ID validation set and the known OOD validation set.

- Plot the distributions (histograms or density plots).

- Choose a threshold that maximizes the True Positive Rate (ID recall, treating ID as the positive class) while keeping the False Positive Rate (the fraction of OOD samples accepted as ID) acceptably low. A common statistical starting point is the 95th or 99th percentile of the ID validation distance distribution, which retains roughly 95% or 99% of ID samples.
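The percentile rule translates into a few lines of NumPy. In this sketch the helper names are hypothetical, and `ood_acceptance_rate` reports the fraction of known OOD validation samples that the chosen threshold would still accept as ID.

```python
import numpy as np


def percentile_threshold(id_val_scores, id_recall=0.95):
    """tau = the id_recall quantile of the ID validation distances."""
    return float(np.quantile(np.asarray(id_val_scores, dtype=float), id_recall))


def flag_ood(scores, tau):
    """True where the distance exceeds tau (d > tau means OOD)."""
    return np.asarray(scores, dtype=float) > tau


def ood_acceptance_rate(ood_val_scores, tau):
    """Fraction of known-OOD validation samples accepted as ID (d <= tau)."""
    return float(np.mean(np.asarray(ood_val_scores, dtype=float) <= tau))
```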

Q4: My model performs well on held-out test data but fails to detect semantically similar OOD proteins (e.g., a homologous protein from a different organism). Why?

A: This indicates the latent space may be encoding superficial features (like sequence length patterns) rather than deep functional semantics. The Mahalanobis distance is only as good as the embedding space.

- Solution:

- Improve Embeddings: Use a protein language model (e.g., ESM-2, ProtBERT) fine-tuned on your specific task to generate embeddings that better capture functional and structural semantics.

- Hybrid Scoring: Combine the Mahalanobis distance with an energy-based score or the maximum softmax probability from the classifier head for more robust detection.

Frequently Asked Questions (FAQs)

Q: What is the precise mathematical definition of the Mahalanobis distance used for OOD scoring in a latent space Z?

A: For a test sample's latent embedding z, the Mahalanobis distance M(z) to the in-distribution (ID) data is calculated as: M(z) = √((z - μ)^T Σ^(-1) (z - μ)) where:

- μ is the mean vector of the ID training embeddings.

- Σ is the covariance matrix of the ID training embeddings (often regularized as Σ_reg = Σ + λI).

Higher values of M(z) indicate a higher likelihood of the sample being OOD.

Q: Can I use Mahalanobis distance with any deep learning model architecture?

A: Yes, provided the model has a well-defined latent layer or embedding space (e.g., the penultimate layer of a classifier, the output of an encoder). The method is architecture-agnostic but depends entirely on the quality and discriminative nature of the extracted embeddings.

Q: What are the main advantages and disadvantages of Mahalanobis distance compared to other OOD detection methods?

A:

| Method | Advantages | Disadvantages |
|---|---|---|
| Mahalanobis Distance | Captures feature correlations via covariance. Simple, deterministic calculation after training. No need to modify model architecture. | Assumes ID data forms a single multivariate Gaussian cluster. Sensitive to estimation errors in high dimensions. Can be computationally heavy for very high-D spaces. |
| Max Softmax Probability | Trivial to compute from a standard classifier. | Often overconfident on OOD inputs; usually a weak OOD-detection baseline. |
| Monte Carlo Dropout | Provides a Bayesian uncertainty estimate. | Increases inference time. Requires dropout layers. |
| Deep Ensembles | State-of-the-art performance. Robust. | Very high training and inference computational cost. |

Q: What are the essential preprocessing steps for the latent embeddings before computing the distance?

A:

- Centering: Subtract the pre-computed training mean μ from all embeddings (test and train).

- Whitening (Implicit): Multiplying the centered embeddings by Σ^(-1/2) transforms the data so its covariance becomes the identity matrix. The Mahalanobis distance equals the Euclidean distance in this whitened space, so the calculation performs whitening implicitly.

- Regularization: As noted, adding λI to the covariance matrix is critical for numerical stability.

- (Optional) PCA: Reduce dimensionality to the top k principal components to de-noise and speed up computation.

Experimental Protocol: OOD Detection for Proteins using Mahalanobis Distance

Objective: To calibrate uncertainty for OOD protein detection by implementing a Mahalanobis distance scoring mechanism on model latent embeddings.

Materials & Input Data:

- ID Training Set: Curated dataset of protein sequences/structures from target families (e.g., GPCRs).

- Validation Set: Contains ID proteins and known OOD proteins (e.g., Kinases).

- Test Set: Contains ID proteins and novel OOD proteins for final evaluation.

- Trained Feature Extractor: A neural network (CNN, Transformer, etc.) trained on the ID training set for a task like classification or reconstruction.

Procedure:

Embedding Extraction:

- Forward pass all ID training samples through the trained model.

- Extract the vector from the designated latent layer (e.g., the layer before the final classification head).

- Store these embeddings as matrix X_train ∈ R^(n x d), where n is the number of samples and d is the latent dimension.

Parameter Calculation:

- Compute the mean vector: μ = (1/n) Σ_{i=1}^n x_i.

- Compute the covariance matrix: Σ = (1/(n-1)) Σ_{i=1}^n (x_i - μ)(x_i - μ)^T.

- Apply regularization: Σ_reg = Σ + λI, with λ = 1e-6.

- Calculate the inverse covariance matrix: Σ_reg^(-1).

- Cache μ and Σ_reg^(-1).

Distance Scoring for a New Sample:

- Obtain the test sample's latent embedding z.

- Calculate the squared Mahalanobis distance: d² = (z - μ)^T Σ_reg^(-1) (z - μ).

- Use d (the square root) as the OOD score.

Threshold Calibration (Using Validation Set):

- Calculate scores for the ID and known OOD validation splits.

- Determine a threshold τ that satisfies the desired trade-off (e.g., 95% ID recall).

- Classify: If d > τ, then sample is OOD; else, ID.

Evaluation (Using Test Set):

- Calculate standard OOD detection metrics: AUROC, AUPR, FPR at 95% TPR.
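A sketch of the evaluation step with scikit-learn, assuming larger scores mean more OOD (true for the Mahalanobis distance); `ood_metrics` is a hypothetical helper. Conventions for these metrics vary between papers: this version treats OOD as the positive class for AUROC and AUPR, and computes FPR@95%TPR as the fraction of OOD samples accepted as ID when the threshold keeps 95% of ID samples.

```python
import numpy as np
from sklearn.metrics import average_precision_score, roc_auc_score


def ood_metrics(id_scores, ood_scores):
    """AUROC, AUPR and FPR@95%TPR for a score where larger means 'more OOD'."""
    id_scores = np.asarray(id_scores, dtype=float)
    ood_scores = np.asarray(ood_scores, dtype=float)
    labels = np.r_[np.zeros(len(id_scores)), np.ones(len(ood_scores))]
    scores = np.r_[id_scores, ood_scores]
    tau = np.quantile(id_scores, 0.95)                   # keeps ~95% of ID (score <= tau)
    return {
        "AUROC": roc_auc_score(labels, scores),
        "AUPR": average_precision_score(labels, scores),
        "FPR@95TPR": float(np.mean(ood_scores <= tau)),  # OOD wrongly accepted as ID
    }
```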

Visualizations

Title: Mahalanobis OOD Scoring Workflow for Proteins

Title: Mahalanobis Distance in Latent Space Concept

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |
|---|---|
| Pre-trained Protein Language Model (e.g., ESM-2) | Provides high-quality, semantically rich initial embeddings for protein sequences, improving latent space structure. |
| Structured Protein Datasets (e.g., CATH, SCOPe, Pfam) | Source of well-annotated in-distribution and out-of-distribution protein families for training and evaluation. |
| Numerical Computation Library (e.g., PyTorch, TensorFlow, NumPy) | Enables efficient calculation of embeddings, covariance matrices, matrix inverses, and distance scores. |
| Regularization Parameter (λ) | A small scalar (e.g., 1e-6) added to the diagonal of the covariance matrix to ensure numerical stability and invertibility. |
| Principal Component Analysis (PCA) Tool | Used optionally to reduce the dimensionality of latent embeddings, mitigating the "curse of dimensionality" and noise. |
| Validation Set with Known OOD Proteins | Critical for calibrating the detection threshold (τ); must contain proteins known to be functionally/structurally distinct from the ID set. |
| OOD Detection Metrics Calculator (AUROC, AUPR, FPR@95TPR) | Scripts/libraries to quantitatively evaluate the performance of the Mahalanobis scoring method against baselines. |

Troubleshooting Guides & FAQs

Q1: During DUQ training, my model's gradient penalty loss becomes unstable or explodes. What could be the cause and how do I fix it? A1: This is often due to an excessively high gradient penalty coefficient or too large a learning rate. DUQ's two-sided gradient penalty pushes the norm of the gradient of the kernel outputs with respect to the input toward 1, which keeps the feature extractor smooth (Lipschitz) while keeping it sensitive to changes in the input. Follow this protocol:

- Reduce the gradient penalty coefficient (lambda) from the default of 0.1 to 0.01 or 0.001.

- Implement gradient clipping (max norm = 1.0) as an additional safeguard.

- Ensure your batch size is sufficient (≥ 64) and contains diverse in-distribution samples.

- Monitor the ratio of the gradient penalty loss to the cross-entropy loss; they should be within one order of magnitude.
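A PyTorch sketch of a DUQ-style training step with the safeguards above (reduced penalty weight, gradient clipping at max norm 1.0). It uses one form of the two-sided penalty, (‖∇ₓ Σ_c K_c‖₂² − 1)², and the head is simplified: the exponential-moving-average centroid updates that DUQ performs are omitted. Class and function names are hypothetical.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class DUQHead(nn.Module):
    """Minimal DUQ-style RBF head (centroid EMA updates omitted for brevity)."""

    def __init__(self, feature_dim, num_classes, embedding_dim=64, sigma=0.3):
        super().__init__()
        self.W = nn.Parameter(torch.randn(embedding_dim, num_classes, feature_dim) * 0.05)
        self.register_buffer("centroids", torch.randn(embedding_dim, num_classes))
        self.sigma = sigma

    def forward(self, features):
        z = torch.einsum("bf,ecf->bec", features, self.W)       # per-class embeddings
        sq_dist = ((z - self.centroids.unsqueeze(0)) ** 2).mean(dim=1)
        return torch.exp(-sq_dist / (2 * self.sigma**2))        # kernel values K_c in (0, 1]


def gradient_penalty(inputs, kernel_values):
    """Two-sided penalty (||d sum_c K_c / dx||_2^2 - 1)^2, averaged over the batch."""
    grads = torch.autograd.grad(kernel_values.sum(), inputs, create_graph=True)[0]
    grad_norm_sq = grads.flatten(1).pow(2).sum(dim=1)
    return ((grad_norm_sq - 1.0) ** 2).mean()


def duq_training_step(encoder, head, optimizer, x, y, num_classes, gp_lambda=0.01):
    """One step with a reduced penalty weight and gradient clipping (max norm 1.0)."""
    x = x.clone().requires_grad_(True)                 # the penalty needs dK/dx
    kernel = head(encoder(x))
    bce = F.binary_cross_entropy(kernel, F.one_hot(y, num_classes).float())
    gp = gradient_penalty(x, kernel)
    loss = bce + gp_lambda * gp
    optimizer.zero_grad()
    loss.backward()
    params = list(encoder.parameters()) + list(head.parameters())
    torch.nn.utils.clip_grad_norm_(params, max_norm=1.0)
    optimizer.step()
    return bce.item(), gp.item()
```

The returned classification loss and penalty are the two quantities whose ratio Q1 suggests monitoring.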

Q2: When using DEUP for OOD protein detection, the epistemic uncertainty estimates are consistently low for all inputs, including clear OOD samples. How can I improve sensitivity? A2: Low discriminative power often stems from poorly calibrated prior variance or an inadequate training set for the prior model. Implement this validation protocol:

- Recalibrate Prior Variance: On a validation set, compute the Negative Log Likelihood (NLL). Systematically adjust the prior variance (σ²) and retest NLL. Use the value that minimizes validation NLL.

- Enhance Prior Training: Ensure your prior model training set includes a broad spectrum of protein sequences/families, not just the primary in-distribution task. Incorporate evolutionary or synthetic variants.

- Check Gradient Signal: Verify that the loss (NLL + λ * MSE) provides sufficient gradient for the posterior variance network. You may need to adjust the λ coefficient.

Q3: My model's uncertainty scores do not correlate with prediction error on the in-distribution test set. What diagnostic steps should I take? A3: Poor calibration indicates a breakdown in the uncertainty quantification mechanism. Execute this diagnostic workflow:

- Compute Calibration Metrics: Calculate Expected Calibration Error (ECE) and plot reliability diagrams for your in-distribution test set.

- Isolate the Issue: If ECE is high:

- For DUQ: Check the RBF kernel scaling. Re-initialize the centroid embeddings and verify the gradient penalty is being applied correctly (see Q1).

- For DEUP: Verify the prior predictions are meaningful. If the prior is poorly trained, the posterior will be poorly calibrated.

- Regularization Check: Temporarily increase the strength of your core regularizer (gradient penalty for DUQ, prior loss weight for DEUP) by 10x. If calibration improves, slowly reduce the strength while monitoring ECE.
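Expected Calibration Error can be computed with equal-width confidence bins, as in the sketch below (15 bins is an assumed default, and the function name is hypothetical; libraries such as netcal offer maintained implementations). The per-bin accuracy and confidence it returns are the points of a reliability diagram.

```python
import numpy as np


def expected_calibration_error(probs, labels, n_bins=15):
    """ECE = sum_b (|B_b| / n) * |acc(B_b) - conf(B_b)| over equal-width bins.

    probs: (n, C) predicted class probabilities; labels: (n,) true class indices.
    Returns (ece, bins), where bins lists (low, high, accuracy, confidence, count).
    """
    probs = np.asarray(probs, dtype=float)
    labels = np.asarray(labels)
    confidence = probs.max(axis=1)
    correct = (probs.argmax(axis=1) == labels).astype(float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    # Bin b covers (edges[b], edges[b + 1]]; confidence 0 falls into the first bin.
    bin_ids = np.clip(np.digitize(confidence, edges[1:-1], right=True), 0, n_bins - 1)
    ece, bins = 0.0, []
    for b in range(n_bins):
        mask = bin_ids == b
        if not mask.any():
            continue
        acc, conf = correct[mask].mean(), confidence[mask].mean()
        ece += mask.mean() * abs(acc - conf)
        bins.append((edges[b], edges[b + 1], acc, conf, int(mask.sum())))
    return ece, bins
```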

Diagram Title: OOD Calibration Issue Diagnosis Workflow

Q4: How do I construct a meaningful OOD validation set for protein sequences when the possible "unknown" space is vast? A4: A tiered approach is essential. Do not rely on a single OOD set. Construct the following table for comprehensive evaluation:

| OOD Set Tier | Composition Example | Purpose | Expected Uncertainty Trend |
|---|---|---|---|
| Near-OOD | Protein families from a different fold class within the same organism. | Test sensitivity to biologically relevant divergence. | Moderately higher than in-distribution. |
| Far-OOD | Proteins from a distant organism (e.g., bacterial vs. human). | Test generalization to sequence-space outliers. | Significantly higher than in-distribution. |
| Adversarial | Sequences generated via language model or with perturbed active sites. | Stress-test the model's uncertainty boundaries. | Should be highest. |

Protocol for Construction:

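- Use tools like MMseqs2 to cluster the combined pool of training and candidate sequences at a chosen sequence-identity threshold (e.g., 90%). Clusters that contain no training sequence are candidate OOD pools; a lower identity threshold gives a stricter separation from the training data.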

- For Near-OOD, select clusters with some structural similarity (e.g., same SCOP Class but different Fold).

- For Far-OOD, select clusters from a different phylogenetic domain.
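Once the clustering has been run, for example with `mmseqs easy-cluster all_sequences.fasta clusterRes tmp --min-seq-id 0.9` (file names are placeholders), MMseqs2 writes a two-column representative/member table to `clusterRes_cluster.tsv`. A minimal Python sketch for extracting the clusters that contain no training sequence, assuming you have the set of training IDs (`candidate_ood_clusters` is a hypothetical helper):

```python
import csv
from collections import defaultdict


def candidate_ood_clusters(cluster_tsv_path, train_ids):
    """Return {representative: [members]} for clusters with no training member.

    cluster_tsv_path: two-column TSV (representative <TAB> member), one row per member.
    train_ids: iterable of sequence IDs used for training.
    """
    train_ids = set(train_ids)
    clusters = defaultdict(list)
    with open(cluster_tsv_path, newline="") as handle:
        for row in csv.reader(handle, delimiter="\t"):
            if len(row) != 2:
                continue                        # skip blank or malformed lines
            rep, member = row
            clusters[rep].append(member)
    return {
        rep: members
        for rep, members in clusters.items()
        if not train_ids.intersection(members)
    }
```

The resulting pools can then be split into Near-OOD and Far-OOD tiers using the structural and phylogenetic criteria above.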

Q5: What are the key hyperparameters for DEUP and DUQ, and what are typical starting values for protein sequence data (e.g., using embeddings from ESM2)?

| Method | Hyperparameter | Description | Typical Starting Value (Protein Data) |
|---|---|---|---|
| DUQ | gradient_penalty (λ) | Weight of the Lipschitz penalty. | 0.1 (Adjust per Q1) |
| DUQ | sigma | RBF kernel length-scale. | 0.3 (Tune on validation NLL) |
| DUQ | num_centroids | Number of class prototype vectors. | Equal to number of training classes. |
| DEUP | prior_variance (σ²) | Fixed variance of the prior model. | 1.0 (Calibrate per Q2) |
| DEUP | prior_weight (β) | Weight of the prior loss term. | 0.1 |
| DEUP | hidden_dim | Size of the variance network. | 64 |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in OOD/Uncertainty Research |
|---|---|
| Pre-trained Protein LM (e.g., ESM2, ProtT5) | Provides foundational sequence embeddings. Acts as a strong feature extractor, capturing evolutionary and structural information crucial for defining in-distribution. |
| MMseqs2/LINCLUST | Used for rapid, sensitive sequence clustering. Critical for creating non-redundant training sets and defining held-out clusters for OOD validation sets. |
| PDB (Protein Data Bank) | Source of experimental structures. Used to validate or interpret model predictions, and to define OOD sets based on structural divergence (fold, class). |
| AlphaFold DB | Source of high-accuracy predicted structures. Expands the structural space available for analysis when experimental structures are lacking for OOD sequences. |
| UniProt Knowledgebase | Comprehensive protein sequence and functional information database. The primary source for constructing broad, diverse in-distribution training sets and sourcing OOD sequences. |
| GPUs (e.g., NVIDIA A100/H100) | Accelerates training of large feature extractors and enables rapid hyperparameter sweeps for tuning regularization strengths and network architectures. |
| Calibration Metrics Library (e.g., netcal) | Provides implementations of ECE, NLL, reliability diagrams, and other metrics essential for quantitatively evaluating uncertainty quality. |

Diagram Title: DEUP Inference & Training Data Flow

Troubleshooting Guides & FAQs

Q1: I have computed logits from my fine-tuned ESM2 model, but the softmax probabilities are consistently overconfident, even for Out-of-Distribution (OOD) sequences. What is the first step I should take? A1: This is the core symptom of an uncalibrated model. The first step is to apply a post-hoc calibration method like Temperature Scaling. This involves training a single scalar parameter (temperature, T) on a validation set to "soften" the softmax distribution. Use the Negative Log Likelihood (NLL) loss on your held-out validation data (in-distribution proteins only) to optimize T.
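Once the validation logits are saved, temperature scaling can be sketched without a deep-learning framework. The NumPy sketch below is minimal. It assumes `logits` is an (N, K) array and `labels` holds integer class indices. In place of the gradient-based optimizer usually used, it runs a grid search over log T, which is adequate for a single scalar. The synthetic data are deliberately overconfident, with a true temperature of 3.

```python
import numpy as np


def log_softmax(logits):
    """Numerically stable row-wise log-softmax."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))


def nll(logits, labels, T):
    """Mean negative log-likelihood of the true labels after dividing logits by T."""
    logp = log_softmax(logits / T)
    return -logp[np.arange(len(labels)), labels].mean()


def fit_temperature(logits, labels, log_t_grid=None):
    """Return T = exp(g) minimizing validation NLL over a grid of log-temperatures g."""
    if log_t_grid is None:
        log_t_grid = np.linspace(-3.0, 3.0, 601)
    losses = [nll(logits, labels, np.exp(g)) for g in log_t_grid]
    return float(np.exp(log_t_grid[int(np.argmin(losses))]))


# Synthetic example: labels drawn from softmax(base), logits made 3x too sharp.
rng = np.random.default_rng(0)
base = rng.normal(size=(5000, 5))
probs = np.exp(log_softmax(base))
labels = (probs.cumsum(axis=1) > rng.random((5000, 1))).argmax(axis=1)
logits = 3.0 * base
T = fit_temperature(logits, labels)
print(round(T, 2))
# Dividing by a positive T never changes the predicted class.
assert np.array_equal((logits / T).argmax(axis=1), logits.argmax(axis=1))
```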

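Q2: When I apply Temperature Scaling, my validation accuracy drops slightly. Is this expected? A2: Not from temperature scaling itself. Dividing all logits by the same positive scalar T leaves their ordering unchanged, so the argmax prediction and therefore top-1 accuracy should be identical before and after scaling. An accuracy change usually points to a pipeline issue, such as dropout left active (model not in evaluation mode) or different validation data between the two runs. What calibration does change is confidence: it aims to align predicted confidence with empirical accuracy, not to maximize accuracy. A perfectly calibrated model's confidence reflects its true probability of being correct, so the probability distribution becomes less "peaky" and more reflective of true uncertainty while the predicted classes stay the same.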

Q3: How do I evaluate whether my calibration is effective for OOD detection? A3: You must evaluate on two separate tasks:

- In-Distribution Calibration: Use metrics like Expected Calibration Error (ECE) and Reliability Diagrams on your in-distribution test set.

- OOD Detection Performance: Use metrics computed on a mixture of in-distribution and OOD samples. Key metrics include:
  - AUROC: Area Under the Receiver Operating Characteristic curve.
  - AUPR: Area Under the Precision-Recall curve.
  - FPR at 95% TPR: False Positive Rate when the True Positive Rate is 95%.

A decrease in OOD detection performance after calibration indicates you may be over-softening the scores.
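The three OOD detection metrics can be computed without extra dependencies. The NumPy sketch below is minimal. It treats OOD samples as the positive class and each score as an uncertainty, so a higher score means a sample is more likely OOD. Conventions vary between papers, and some treat in-distribution as the positive class for FPR at 95% TPR. scikit-learn's `roc_auc_score` and `average_precision_score` are the usual production choice.

```python
import numpy as np


def auroc(scores_id, scores_ood):
    """P(score_ood > score_id) + 0.5 * P(tie): the Mann-Whitney form of AUROC."""
    s_id = np.asarray(scores_id, dtype=float)[:, None]
    s_ood = np.asarray(scores_ood, dtype=float)[None, :]
    return float(np.mean(s_ood > s_id) + 0.5 * np.mean(s_ood == s_id))


def aupr(scores_id, scores_ood):
    """Average precision with OOD as positives (ties broken by sort order)."""
    scores = np.concatenate([scores_id, scores_ood]).astype(float)
    labels = np.concatenate([np.zeros(len(scores_id)), np.ones(len(scores_ood))])
    labels = labels[np.argsort(-scores, kind="stable")]
    precision = np.cumsum(labels) / np.arange(1, len(labels) + 1)
    return float(np.sum(precision * labels) / labels.sum())


def fpr_at_tpr(scores_id, scores_ood, tpr=0.95):
    """Fraction of ID samples flagged as OOD at the threshold that flags `tpr` of OOD samples."""
    threshold = np.quantile(scores_ood, 1.0 - tpr)
    return float(np.mean(np.asarray(scores_id) >= threshold))


# Perfectly separated toy scores.
id_scores, ood_scores = [0.1, 0.2, 0.3], [0.7, 0.8, 0.9]
print(auroc(id_scores, ood_scores), aupr(id_scores, ood_scores), fpr_at_tpr(id_scores, ood_scores))
```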

Q4: My chosen OOD dataset (e.g., viral proteins) returns very high calibrated softmax scores, failing to be flagged as OOD. What could be wrong? A4: This suggests the OOD data may be within the model's learned manifold. Consider:

- Data Contamination: Ensure your OOD sequences were not in the pre-training or fine-tuning data.

- Need for Alternative Scores: Softmax may be insufficient. Implement an ensemble of models or use Prediction Entropy or Mahalanobis Distance in the model's embedding space as your uncertainty score.

- Architectural Limits: The model may have learned features that generalize too well. You may need to integrate OOD-aware training objectives.
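The Mahalanobis option can be sketched as follows. The sketch assumes `embeddings` are fixed-length vectors from the fine-tuned model for in-distribution training proteins (e.g., mean-pooled ESM2 hidden states) and that `labels` are their integer classes. It follows the common recipe of class means plus one shared (tied) covariance. Function names are illustrative.

```python
import numpy as np


def fit_class_gaussians(embeddings, labels):
    """Class means and the pseudo-inverse of a shared covariance from ID training embeddings."""
    classes = np.unique(labels)  # sorted, so searchsorted maps labels to row indices
    means = np.stack([embeddings[labels == c].mean(axis=0) for c in classes])
    centered = embeddings - means[np.searchsorted(classes, labels)]
    cov = centered.T @ centered / len(embeddings)
    return means, np.linalg.pinv(cov)


def mahalanobis_score(x, means, precision):
    """Minimum squared Mahalanobis distance to any class mean (higher = more OOD)."""
    diff = x[:, None, :] - means[None, :, :]  # (batch, classes, dim)
    d2 = np.einsum("bcd,de,bce->bc", diff, precision, diff)
    return d2.min(axis=1)


# Two well-separated toy classes in 4-D.
rng = np.random.default_rng(0)
emb = np.vstack([rng.normal(0, 1, (200, 4)), rng.normal(5, 1, (200, 4))])
lab = np.array([0] * 200 + [1] * 200)
means, prec = fit_class_gaussians(emb, lab)
near = mahalanobis_score(np.zeros((1, 4)), means, prec)[0]
far = mahalanobis_score(np.full((1, 4), 20.0), means, prec)[0]
```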

Q5: I'm implementing an ensemble for uncertainty estimation. How many model instances are typically needed, and what are the computational trade-offs? A5: ESM2's size makes large ensembles expensive, but even 3-5 ensemble members can yield significant benefits. The primary trade-off is a linear increase in compute and memory.

| Ensemble Size | Expected AUROC Improvement (Typical Range) | Training Compute Factor | Inference Compute Factor |
|---|---|---|---|
| 1 (Baseline) | 0.00 (Reference) | 1x | 1x |
| 3 | +0.02 to +0.08 | ~3x | 3x |
| 5 | +0.04 to +0.12 | ~5x | 5x |
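Once each member's softmax outputs are stacked, combining ensemble members is a short computation. The NumPy sketch below is minimal and assumes `member_probs` has shape (members, batch, classes). It reports two common ensemble uncertainty scores. The first is the predictive entropy of the averaged distribution. The second is the mutual information, the part of that entropy caused by members disagreeing.

```python
import numpy as np


def ensemble_uncertainty(member_probs, eps=1e-12):
    """Return (mean probabilities, predictive entropy, mutual information) per sample."""
    mean_p = member_probs.mean(axis=0)
    total = -(mean_p * np.log(mean_p + eps)).sum(axis=-1)  # entropy of the averaged prediction
    expected = -(member_probs * np.log(member_probs + eps)).sum(axis=-1).mean(axis=0)
    mutual_info = total - expected  # high when members disagree (epistemic uncertainty)
    return mean_p, total, mutual_info


# Two members: they agree on sample 0 and confidently disagree on sample 1.
member_probs = np.array([
    [[0.9, 0.1], [0.99, 0.01]],
    [[0.9, 0.1], [0.01, 0.99]],
])
mean_p, entropy, mi = ensemble_uncertainty(member_probs)
```

Mutual information separates "every member is unsure" from "members disagree", and the second case is the more typical OOD signal.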

Experimental Protocol: Temperature Scaling on ESM2

Objective: Calibrate a fine-tuned ESM2 model's confidence estimates using a held-out validation set.

Prerequisites:

- Fine-tuned ESM2 model (e.g., on a specific protein family classification task).

- Pre-processed in-distribution validation dataset (not used for training).

- PyTorch environment with the `transformers` library.

Steps:

- Load Model & Validation Data: Load your trained model and the validation DataLoader.

- Extract Logits & Labels: Perform a forward pass on the validation set without gradient computation. Store the logits and true labels.

- Define Temperature Model: Create an `nn.Module` that wraps your ESM2 model and divides its logits by a temperature parameter `T` before the softmax.

- Optimize Temperature: On the validation logits, optimize the `T` parameter using the NLL loss. Use a small learning rate (e.g., 0.01) and the LBFGS optimizer for ~100-200 iterations.

- Validate: After fitting, freeze `T`. Compute the ECE on the validation set before and after scaling to quantify the improvement.

- Apply to New Data: For inference on test or OOD data, pass inputs through the `TemperatureScaledModel` to obtain calibrated probabilities.
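The protocol above can be sketched in PyTorch as follows. This is a minimal sketch, not a reference implementation. It fits T on cached validation logits (the "Extract Logits" and "Optimize Temperature" steps) and learns log T so that T stays positive. It also departs from the protocol on one point: it runs LBFGS with its built-in strong-Wolfe line search (learning rate 1.0) instead of a fixed 0.01 step, because a small fixed step may stop short of the optimum within a few hundred iterations. The wrapped model is assumed to return a logits tensor. A Hugging Face ESM2 classification model returns an output object instead, so you would pass its `.logits` through. `nn.Identity()` stands in for ESM2 in the usage lines.

```python
import torch
import torch.nn as nn


class TemperatureScaledModel(nn.Module):
    """Wraps a trained classifier and divides its logits by a learned temperature T."""

    def __init__(self, model: nn.Module):
        super().__init__()
        self.model = model
        # Learn log(T) so that T = exp(log_t) is always positive; starts at T = 1.
        self.log_t = nn.Parameter(torch.zeros(1))

    @property
    def temperature(self) -> torch.Tensor:
        return self.log_t.exp()

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # Assumes self.model(x) returns logits of shape (batch, classes).
        return self.model(x) / self.temperature


def fit_temperature(scaled: TemperatureScaledModel, logits: torch.Tensor,
                    labels: torch.Tensor, max_iter: int = 200) -> float:
    """Fit T on cached validation logits by minimizing NLL (cross-entropy) with LBFGS."""
    criterion = nn.CrossEntropyLoss()
    optimizer = torch.optim.LBFGS([scaled.log_t], lr=1.0, max_iter=max_iter,
                                  line_search_fn="strong_wolfe")

    def closure():
        optimizer.zero_grad()
        loss = criterion(logits / scaled.temperature, labels)
        loss.backward()
        return loss

    optimizer.step(closure)
    return scaled.temperature.item()


# Usage on synthetic cached logits that are 3x too sharp.
torch.manual_seed(0)
base = torch.randn(5000, 5)
labels = torch.distributions.Categorical(logits=base).sample()
scaled = TemperatureScaledModel(nn.Identity())
T = fit_temperature(scaled, 3.0 * base, labels)
scaled.log_t.requires_grad_(False)  # freeze T before inference
with torch.no_grad():
    calibrated_probs = scaled(3.0 * base).softmax(dim=-1)
```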

Experimental Workflow Diagram: Uncertainty Calibration & OOD Detection Workflow for ESM2

OOD Detection Scoring Methods Diagram: Four Uncertainty Scoring Methods from a Single ESM2 Forward Pass

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Calibration/OOD Research |
|---|---|
| ESM2 (650M/3B params) | Foundational protein language model. Provides sequence embeddings and logits for downstream tasks. |
| PyTorch / Transformers | Core frameworks for model loading, modification, and inference. |
| Temperature Scaling (nn.Parameter) | A single, trainable scalar parameter used to calibrate softmax confidence. |
| Expected Calibration Error (ECE) | Primary metric for quantifying calibration error by binning predictions by confidence. |
| AUROC/AUPR Metrics | Threshold-agnostic metrics for evaluating OOD detection performance. |
| OOD Protein Datasets | Curated sets (e.g., viral, plant, synthetic proteins) distinct from training data to test generalization. |
| LBFGS Optimizer | Quasi-Newton (limited-memory BFGS) optimizer, often used to fit the single temperature parameter efficiently. |
| Mahalanobis Distance Calculator | Computes the distance of embeddings to in-distribution class centroids for feature-space uncertainty. |

Debugging OOD Detection: Common Pitfalls and Advanced Optimization Tips

Troubleshooting Guides & FAQs

Q1: Why does my model show high accuracy but poor uncertainty calibration on Out-of-Distribution (OOD) protein sequences?

A: This is a common symptom of overconfidence. The model may have learned the training distribution too well, assigning high confidence even to novel OOD proteins. Key diagnostic tools are the Reliability Diagram and Confidence Histogram. If the Reliability Diagram curve deviates significantly from the diagonal, it indicates miscalibration. A Confidence Histogram skewed heavily towards high confidence (>0.9) with frequent errors suggests the model is not appropriately hedging its bets.

Q2: My Reliability Diagram shows a classic "sigmoid" shape. What does this mean for my OOD protein detector?

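A: An S-shaped curve that crosses the diagonal means the miscalibration depends on the confidence level. The side of the diagonal tells you the direction. Where the curve lies below the diagonal, accuracy is lower than confidence (overconfidence). Where it lies above, the model is underconfident. For OOD protein detection, the critical region is the high-confidence end. If those bins fall below the diagonal, your model's predicted probabilities are too extreme (too close to 0 or 1). An OOD protein might then receive a confidence score of 0.95 for being "in-distribution" when it should be much lower, and the detector will miss it.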

Q3: How do I choose between Temperature Scaling, Platt Scaling, and Histogram Binning for calibrating my protein classifier?

A: The choice depends on your model's architecture and the nature of miscalibration.

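- Temperature Scaling (TS): Best for modern neural networks. It uses a single scalar parameter to "soften" the logits. Because every logit is divided by the same positive value, it preserves each prediction's class ranking, which is critical for maintaining accuracy on in-distribution proteins.

- Platt Scaling: A more flexible logistic regression on the logits (a learned scale and shift). Useful for non-neural models or when a single temperature cannot correct the distortion. Like TS, it is a monotonic mapping, so it cannot fix non-monotonic miscalibration. Risk of overfitting on small validation sets.

- Histogram Binning: Non-parametric, so it makes no assumption about the shape of the distortion. Groups predictions into bins and assigns a calibrated score per bin. Useful when the model's scores have a complex, non-sigmoid or non-monotonic distortion, provided each bin receives enough validation samples.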
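Histogram binning is simple enough to sketch directly. The NumPy version below is minimal and works on top-label confidences. It assumes `confidences` are max-softmax scores on a validation set and `correct` marks whether each prediction was right. The fallback to the bin midpoint for empty bins is an illustrative choice, not a standard.

```python
import numpy as np


def fit_histogram_binning(confidences, correct, n_bins=10):
    """Learn the empirical accuracy of each equal-width confidence bin on validation data."""
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    bin_ids = np.clip(np.digitize(confidences, edges[1:-1]), 0, n_bins - 1)
    calibrated = np.empty(n_bins)
    for b in range(n_bins):
        in_bin = bin_ids == b
        # Empty bin: fall back to the bin midpoint (illustrative choice).
        calibrated[b] = correct[in_bin].mean() if in_bin.any() else (edges[b] + edges[b + 1]) / 2
    return edges, calibrated


def apply_histogram_binning(confidences, edges, calibrated):
    """Replace each confidence with the learned accuracy of its bin."""
    bin_ids = np.clip(np.digitize(confidences, edges[1:-1]), 0, len(calibrated) - 1)
    return calibrated[bin_ids]


conf = np.array([0.95, 0.95, 0.95, 0.95, 0.15, 0.15])
correct = np.array([1, 0, 1, 0, 0, 0], dtype=float)
edges, cal = fit_histogram_binning(conf, correct)
new_conf = apply_histogram_binning(np.array([0.97, 0.12]), edges, cal)
```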

Protocol: Implementing Temperature Scaling for a Protein Sequence Model

- Train your model on your in-distribution protein dataset.

- Split a held-out validation set from your in-distribution data. Do not use OOD data for calibration tuning.

- Forward pass the validation set through the model to obtain logits \(z_i\) and predicted confidences \(\sigma(z_i)\), where \(\sigma\) is the softmax.

- Optimize the temperature parameter \(T\) by minimizing the Negative Log Likelihood (NLL) on the validation set: \( L = -\sum_i y_i \log \sigma(z_i / T) \), with \(y_i\) the one-hot label.

- Apply the optimized \(T\) to scale all future logits (both in-distribution and OOD) at inference: \( \text{confidence}_{\text{calibrated}} = \sigma(z_i / T) \).

Q4: What quantitative metrics should I report alongside Reliability Diagrams for publication?

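A: Always report Expected Calibration Error (ECE) and Maximum Calibration Error (MCE). Also report a proper scoring rule such as the Brier Score or NLL, which reflects calibration and accuracy together. Report OOD detection itself with separate metrics such as AUROC and AUPR.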

| Metric | Formula (Conceptual) | Interpretation | Optimal Value |
|---|---|---|---|
| Expected Calibration Error (ECE) | \( \sum_m \frac{n_m}{N} \lvert \mathrm{acc}(B_m) - \mathrm{conf}(B_m) \rvert \) | Weighted average of calibration error across confidence bins. | 0 |
| Maximum Calibration Error (MCE) | \( \max_m \lvert \mathrm{acc}(B_m) - \mathrm{conf}(B_m) \rvert \) | Worst-case calibration error in any bin. Critical for high-stakes applications. | 0 |
| Brier Score | \( \frac{1}{N} \sum_i (y_i - p_i)^2 \) | Measures both calibration and refinement (accuracy). Lower is better. | 0 |
| Negative Log Likelihood (NLL) | \( -\sum_i y_i \log p_i \) | Proper scoring rule. Penalizes both over- and under-confidence. Lower is better. | 0 |
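The two proper scoring rules are short to compute. The NumPy sketch below is minimal and assumes `probs` is an (N, K) array of predicted probabilities and `labels` holds integer classes. For K classes, the Brier score sums the squared error over all classes before averaging. The table's binary form is the two-class special case, up to a factor of 2.

```python
import numpy as np


def brier_score(probs, labels):
    """Multi-class Brier score: mean over samples of squared error against one-hot labels."""
    onehot = np.eye(probs.shape[1])[labels]
    return float(np.mean(np.sum((probs - onehot) ** 2, axis=1)))


def nll(probs, labels, eps=1e-12):
    """Mean negative log-likelihood of the true class."""
    return float(-np.mean(np.log(probs[np.arange(len(labels)), labels] + eps)))


probs = np.array([[1.0, 0.0], [0.5, 0.5]])
labels = np.array([0, 0])
print(brier_score(probs, labels), nll(probs, labels))
```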

Protocol: Calculating Expected Calibration Error (ECE)

- Bin Predictions: Partition the model's confidence scores in [0, 1] into \(M\) equally spaced bins (e.g., \(M = 10\): [0, 0.1), [0.1, 0.2), ..., [0.9, 1.0]).

- Compute Bin Statistics: For each bin \(B_m\):
  - \(\mathrm{conf}(B_m)\): average confidence of the predictions in the bin.
  - \(\mathrm{acc}(B_m)\): empirical accuracy (fraction correct) of the predictions in the bin.
  - \(n_m\): number of samples in the bin.

- Calculate Weighted Error: \( \mathrm{ECE} = \sum_{m=1}^{M} \frac{n_m}{N} \lvert \mathrm{acc}(B_m) - \mathrm{conf}(B_m) \rvert \), where \(N\) is the total number of samples.
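The ECE protocol above translates directly into code, and MCE comes from the same bins. The NumPy sketch below is minimal. It assumes `confidences` are top-label (max-softmax) scores and `correct` marks whether each prediction was right. The last bin includes 1.0, and empty bins contribute nothing.

```python
import numpy as np


def calibration_errors(confidences, correct, n_bins=10):
    """Return (ECE, MCE) using M equal-width confidence bins."""
    confidences = np.asarray(confidences, dtype=float)
    correct = np.asarray(correct, dtype=float)
    # Bin index floor(conf * M); confidence 1.0 goes into the last bin.
    bin_ids = np.minimum((confidences * n_bins).astype(int), n_bins - 1)
    ece, mce, N = 0.0, 0.0, len(confidences)
    for m in range(n_bins):
        in_bin = bin_ids == m
        n_m = int(in_bin.sum())
        if n_m == 0:
            continue
        gap = abs(correct[in_bin].mean() - confidences[in_bin].mean())  # |acc(B_m) - conf(B_m)|
        ece += gap * n_m / N
        mce = max(mce, gap)
    return ece, mce


ece, mce = calibration_errors([0.95] * 4 + [0.55] * 2, [1, 1, 1, 0, 1, 0])
print(round(ece, 3), round(mce, 3))
```

The per-bin accuracy and confidence values computed in the loop are also the points you would plot in a reliability diagram.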

Visualization: OOD Calibration Diagnostics Workflow (OOD Protein Detector Calibration Workflow)

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Tool | Function in Calibration Research |
|---|---|
| Uncertainty Baselines (Google) | A comprehensive library for benchmarking calibration metrics (ECE, NLL) and methods (TS, Ensembles) on standard datasets. |
| PyTorch / TensorFlow Probability | Frameworks enabling easy implementation of probabilistic layers, loss functions (NLL), and calibration scaling techniques. |
| SWISS-PROT / AlphaFold DB | High-quality, curated in-distribution protein datasets for training and validation. Critical for establishing a reliable baseline. |
| Pfam / SCOPe | Sources for defining out-of-distribution (OOD) protein families or folds to test generalization and uncertainty estimation. |
| Calibration Zoo (CaliZoo) | A benchmark suite specifically for evaluating calibration under distribution shift, relevant for OOD protein scenarios. |
| MMseqs2 | Tool for clustering protein sequences to create non-redundant train/validation/OOD splits with controlled similarity thresholds. |

Technical Support Center: Troubleshooting & FAQs

Troubleshooting Guides

Issue 1: Model Shows High Accuracy on Validation Set but Poor Real-World Protein Detection

| Symptom | Likely Cause | Diagnostic Check |
|---|---|---|
| High in-distribution accuracy (>95%) | Covariate Shift: Lab protein prep differs from field samples. | 1. Run Maximum Mean Discrepancy (MMD) test between training and new sample embeddings. |
| Low out-of-distribution (OOD) AUROC (<0.7) | Label Shift: Pathogenic variant prevalence differs. | 2. Apply Black-Box Shift Estimation (BBSE) to estimate new label marginal. |
| Overconfident wrong predictions | Prior Probability Shift: Training class balance is artificial. | 3. Check predicted vs. observed class ratios on a recent, labeled batch. |
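Diagnostic 2 (BBSE) reduces to a small linear solve. The NumPy sketch of Black-Box Shift Estimation below is minimal and assumes integer class labels. It needs the fixed model's predictions on a labeled held-out set from the training distribution and its predictions on unlabeled target samples. The confusion matrix must be invertible. Clipping negative weights is an illustrative safeguard, not part of the core method.

```python
import numpy as np


def bbse_label_marginal(val_labels, val_preds, target_preds, n_classes):
    """Estimate the target label marginal q(y) and weights w = q(y) / p(y) under label shift."""
    # C[i, j] = P_source(prediction = i, true label = j), from held-out source data.
    C = np.zeros((n_classes, n_classes))
    np.add.at(C, (val_preds, val_labels), 1.0)
    C /= len(val_labels)
    # mu[i] = P_target(prediction = i), from unlabeled target predictions.
    mu = np.bincount(target_preds, minlength=n_classes) / len(target_preds)
    w = np.clip(np.linalg.solve(C, mu), 0.0, None)  # importance weights cannot be negative
    p_source = np.bincount(val_labels, minlength=n_classes) / len(val_labels)
    q = w * p_source
    return q / q.sum(), w


# Balanced source with a perfect classifier; target predictions are 80/20.
val_labels = np.array([0, 0, 1, 1])
val_preds = np.array([0, 0, 1, 1])
target_preds = np.array([0] * 8 + [1] * 2)
q, w = bbse_label_marginal(val_labels, val_preds, target_preds, n_classes=2)
```

Comparing `q` with the training class balance quantifies how far the deployment prevalence has moved.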

Experimental Protocol for Diagnosis:

- Collect a small, labeled sample from the target environment (N ≥ 100).

- Extract model embeddings (penultimate layer activations) for both training and target samples.

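- Calculate the MMD statistic between the training embeddings \(x_1, \dots, x_n\) and the target embeddings \(y_1, \dots, y_m\):
  - Use a radial basis function (RBF) kernel: \( k(x, x') = \exp(-\gamma \lVert x - x' \rVert^2) \).
  - Compute \( \mathrm{MMD}^2 = \frac{1}{n^2} \sum_{i,j} k(x_i, x_j) + \frac{1}{m^2} \sum_{i,j} k(y_i, y_j) - \frac{2}{nm} \sum_{i,j} k(x_i, y_j) \).

- Obtain a p-value with a permutation test: pool the two sets, reshuffle them into groups of sizes \(n\) and \(m\) many times, and record how often the reshuffled \(\mathrm{MMD}^2\) is at least as large as the observed value.

- Interpretation: An MMD p-value < 0.05 indicates significant covariate shift.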
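The MMD steps above can be sketched in NumPy. The statistic is the biased estimator written above, and the p-value comes from a label-permutation test. This is a minimal sketch. The kernel width `gamma` must be chosen by you (setting it from the median pairwise distance is a common heuristic), and the permutation count and seed are illustrative defaults.

```python
import numpy as np


def rbf_mmd2(X, Y, gamma):
    """Biased MMD^2 estimate with k(x, x') = exp(-gamma * ||x - x'||^2)."""
    Z = np.vstack([X, Y])
    sq = np.sum(Z ** 2, axis=1)
    K = np.exp(-gamma * np.maximum(sq[:, None] + sq[None, :] - 2.0 * Z @ Z.T, 0.0))
    n = len(X)
    # Block means give the 1/n^2, 1/m^2 and 2/(nm) weighted sums of the formula.
    return K[:n, :n].mean() + K[n:, n:].mean() - 2.0 * K[:n, n:].mean()


def mmd_permutation_test(X, Y, gamma, n_permutations=500, seed=0):
    """Return (observed MMD^2, permutation p-value)."""
    rng = np.random.default_rng(seed)
    observed = rbf_mmd2(X, Y, gamma)
    Z, n = np.vstack([X, Y]), len(X)
    count = 0
    for _ in range(n_permutations):
        idx = rng.permutation(len(Z))
        count += int(rbf_mmd2(Z[idx[:n]], Z[idx[n:]], gamma) >= observed)
    return observed, (count + 1) / (n_permutations + 1)


# Toy embeddings: a shifted target set vs. one from the same distribution.
rng = np.random.default_rng(1)
X = rng.normal(0, 1, (100, 8))
obs_shift, p_shift = mmd_permutation_test(X, rng.normal(1, 1, (100, 8)), gamma=1 / 16, n_permutations=200)
obs_same, p_same = mmd_permutation_test(X, rng.normal(0, 1, (100, 8)), gamma=1 / 16, n_permutations=200)
```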

Issue 2: Uncertainty Scores are Not Reliable for OOD Protein Sequences