Accelerating Protein Design: How Protein Transformers Power Next-Generation In Silico Directed Evolution

Abstract

Directed evolution, the laboratory method for engineering biomolecules, has moved decisively into the digital realm. This article explores the transformative integration of protein transformer models—a class of deep learning architectures—into the directed evolution pipeline. We first establish the foundational concepts of both directed evolution and the self-attention mechanisms underpinning transformers. The core of the guide details modern methodological workflows, from encoding protein sequences and generating variant libraries to fitness prediction and in silico screening. We address critical challenges in model training, data scarcity, and navigating vast sequence spaces, providing strategies for optimization and troubleshooting. Finally, we benchmark leading models like ESM, ProtGPT2, and ProteinBERT, validating their predictions against experimental data and comparing their strengths for specific applications. This comprehensive resource is tailored for researchers and drug development professionals seeking to leverage AI-driven in silico directed evolution for accelerated protein design, therapeutic discovery, and enzyme engineering.

From Lab Bench to Digital Code: The Foundational Shift to AI-Driven Protein Evolution

Within a broader thesis exploring in silico directed evolution using protein transformers, it is critical to understand the foundational wet-lab paradigm. Traditional directed evolution is an iterative, experimental process that mimics natural selection to optimize proteins for desired traits. This application note recaps the core cycles, serving as a benchmark against which computational methods are compared.

The traditional paradigm consists of four sequential steps repeated over multiple generations.

Protocol 1.0: The Standard Directed Evolution Workflow

Step 1: Library Creation Objective: Generate genetic diversity in the target gene. Detailed Methodology:

- Gene Preparation: Isolate and amplify the wild-type gene via PCR.

- Diversity Introduction: Apply one or more mutagenesis methods.

- Error-Prone PCR (epPCR): Standard Protocol:

- Set up a 50 µL PCR reaction: 10-100 ng template DNA, 1X proprietary error-prone buffer (e.g., with Mn²⁺), 0.2 mM each dNTP (biased ratios, e.g., lowering dATP/dGTP), 0.5 µM each primer, 5 U Taq polymerase.

- Cycle conditions: Initial denaturation: 95°C for 2 min; 25-30 cycles of [95°C for 30 sec, 55-60°C for 30 sec, 72°C for 1 min/kb]; final extension: 72°C for 5 min.

- The mutation rate is tuned by adjusting Mn²⁺ concentration, dNTP bias, and cycle number.

- DNA Shuffling: Protocol:

- Fragment the gene(s) using DNase I to generate random 50-100 bp fragments.

- Purify fragments and reassemble using a PCR-like assembly reaction without primers for 40 cycles (94°C 30s, 50-55°C 30s, 72°C 30s).

- Amplify the full-length reassembled products using external primers in a final PCR.

- Cloning: Ligate the diversified gene pool into an appropriate expression vector (e.g., plasmid). Transform into competent E. coli cells to create the library, aiming for a size >10⁴ independent clones to cover diversity.
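The link between theoretical diversity and the number of clones to screen can be estimated with a short calculation. The sketch below is illustrative only: it assumes every variant is equally represented in the library (real libraries are biased), and the function names are hypothetical. It uses the standard approximation that screening N clones from V variants covers an expected fraction F = 1 - exp(-N/V).

```python
import math

def saturation_library_size(n_positions: int, alphabet: int = 20) -> int:
    """Number of protein variants when n_positions are fully randomized."""
    return alphabet ** n_positions

def clones_for_coverage(n_variants: int, coverage: float) -> int:
    """Clones to screen so that an expected fraction `coverage` of the
    variants is sampled at least once (assumes equal representation)."""
    if not 0.0 < coverage < 1.0:
        raise ValueError("coverage must be strictly between 0 and 1")
    # F = 1 - exp(-N / V)  =>  N = -V * ln(1 - F)
    return math.ceil(-n_variants * math.log(1.0 - coverage))

size = saturation_library_size(3)         # three randomized positions
needed = clones_for_coverage(size, 0.95)  # about 3x oversampling
print(size, needed)
```

Under these assumptions, 95% coverage requires roughly three times as many clones as there are variants, which is why library size targets quickly outgrow plate-based screens.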

Step 2: Expression & Screening/Selection Objective: Identify variants with improved functional properties. Detailed Methodology:

- Expression: Plate transformed cells on agar or culture in multi-well plates to induce protein expression (e.g., with IPTG).

- Assay: Apply a high-throughput assay. For an enzyme, this could involve:

- Colony Screen: Transfer colonies to a membrane, lyse cells, and incubate with a fluorogenic or chromogenic substrate. Active variants produce a detectable signal (halo/color).

- Microtiter Plate Screen: Grow clones in 96- or 384-well plates, lyse, and assay activity spectrophotometrically/fluorometrically.

- Identification: Isolate clones exhibiting a signal above a predefined threshold (e.g., 150% of wild-type activity).

Step 3: Hit Characterization Objective: Validate and quantify the performance of lead variants. Detailed Methodology:

- Sequence Analysis: Sequence the gene(s) of top-performing hits to identify mutations.

- Protein Purification: Express and purify the variant protein using affinity chromatography (e.g., His-tag purification).

- Biophysical/Biochemical Characterization: Determine kinetic parameters (kcat, KM), stability (Tm via DSF, half-life), and expression yield. Compare directly to the parent variant.

Step 4: Iteration Objective: Use the best variant(s) as template(s) for the next cycle of evolution. Detailed Methodology: The gene from the best-characterized hit becomes the new template for Step 1. Methods may shift from random (epPCR) to more focused (site-saturation mutagenesis at identified hot-spot residues) in later cycles.

Table 1: Common Mutagenesis Methods and Their Output Characteristics

| Method | Avg. Mutation Rate (per gene) | Library Diversity | Primary Use Case |

|---|---|---|---|

| Error-Prone PCR | 1-10 mutations | High (10⁶-10⁹) | Broad exploration, early rounds |

| DNA Shuffling | 1-4 crossovers + mutations | High (10⁶-10¹⁰) | Recombination of beneficial mutations |

| Site-Saturation Mutagenesis | 1-5 targeted residues (all 20 AA) | Medium (10²-10⁵) | Focused optimization of key positions |

| Oligo-Mediated Mutagenesis | Precise, user-defined | Low (10¹-10³) | Introduction of specific changes |

Table 2: Typical Cycle Metrics and Timeline for a Laboratory Evolution Project

| Phase | Approx. Duration (Weeks) | Key Output | Success Metric |

|---|---|---|---|

| Library Construction & Transformation | 1-2 | Mutant Library | Library size > 10⁶ CFU |

| Primary Screening | 2-4 | Hit Variants | 10-100 hits with >2x improvement |

| Hit Characterization | 2-3 | Lead Variant(s) | Confirmed improved kcat/KM & stability |

| One Full Cycle | 5-9 | Improved Template | Ready for next iteration |

| Typical Project (3-5 cycles) | 15-45 | Final Evolved Protein | 100- to 10,000-fold overall improvement |

Visualization of Workflows

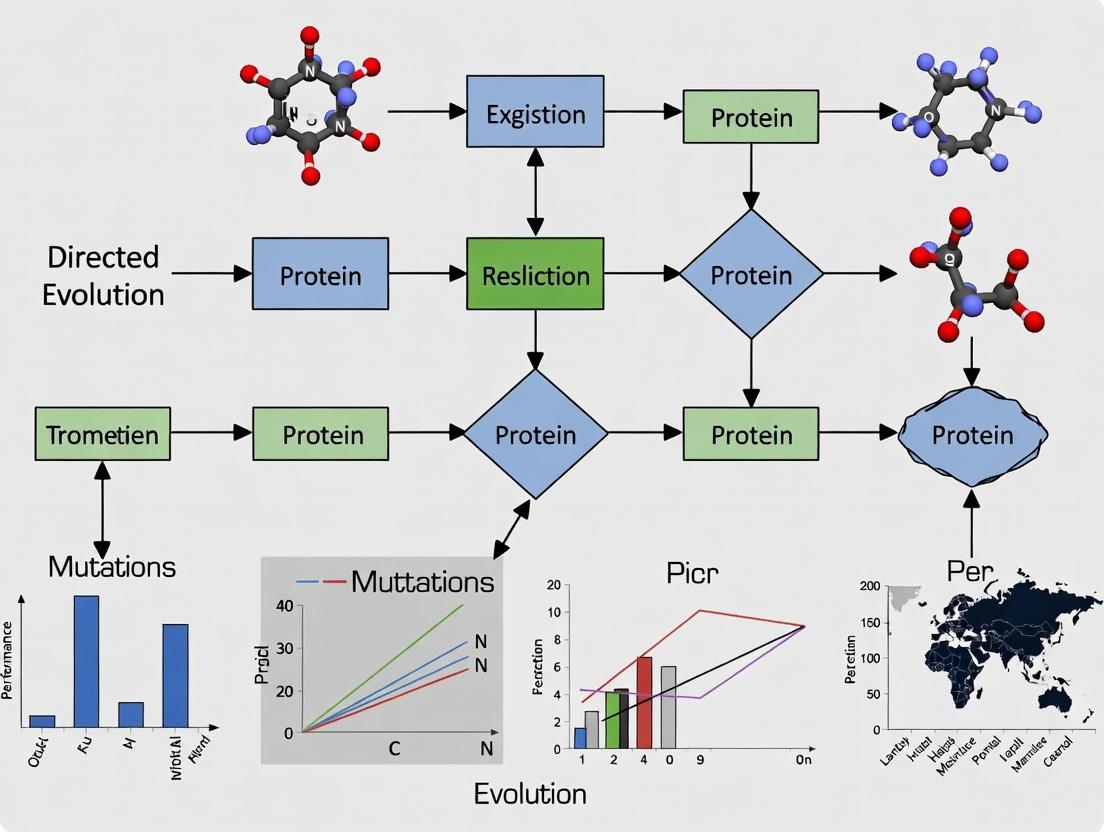

Diagram 1: The Directed Evolution Cycle

Diagram 2: Library Creation Methods

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Traditional Directed Evolution

| Item | Function & Application | Example/Note |

|---|---|---|

| Mutazyme II | Error-prone polymerase. Generates balanced, unbiased mutations across AT/GC sites. | Used in epPCR Step 1. |

| DNase I (RNase-free) | Randomly cleaves DNA to create fragments for DNA shuffling. | Critical for recombination-based library generation. |

| Gateway / Golden Gate Cloning Kit | Enables rapid, efficient, and seamless transfer of mutant gene libraries into expression vectors. | Speeds up Step 1 (Cloning). |

| Chromogenic/Fluorogenic Substrate | Detects enzymatic activity in colonies or cell lysates. Enables high-throughput screening. | Core of Step 2 (Screening). |

| HisTrap HP Column | Immobilized metal affinity chromatography (IMAC) for rapid purification of His-tagged variant proteins. | Essential for Step 3 (Characterization). |

| Thermal Shift Dye (e.g., Sypro Orange) | Measures protein thermal stability (Tm) via Differential Scanning Fluorimetry (DSF). | Key for stability assessment in Step 3. |

| 96-/384-Well Microplate Reader | Quantifies absorbance, fluorescence, or luminescence for high-throughput kinetic assays. | Workhorse for screening and characterization. |

What Are Protein Language Models (pLMs) and Transformers? Demystifying the Core AI Architecture

Within the paradigm of in silico directed evolution for protein engineering, traditional wet-lab methods like site-saturation mutagenesis or random mutagenesis are experimentally expensive and low-throughput. The core thesis of this research posits that protein language models (pLMs) and their underlying Transformer architecture offer a framework for predicting fitness landscapes, enabling rational, high-probability exploration of sequence space. This application note details the core concepts, protocols, and toolkits for leveraging pLMs in this context.

Core Architecture Demystified

The Transformer: Foundational Blocks

The Transformer is a neural network architecture based on a "self-attention" mechanism, dispensing with recurrence and convolution. For protein sequences, tokens represent amino acids or sequence fragments.

Key Components:

- Embedding Layer: Converts each amino acid token into a dense vector.

- Self-Attention Layer: Computes a weighted sum of all token representations at each position, capturing long-range dependencies in the sequence.

- Feed-Forward Network: Applied per position for non-linear transformation.

- Encoder Stack: Multiple layers of the above components build a deep contextual understanding of the input sequence.
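The self-attention step listed above can be sketched in a few lines. This is a minimal, single-head illustration that assumes queries, keys, and values are all the raw token embeddings (a real Transformer applies learned projection matrices and uses many heads); the toy two-dimensional embeddings are made up.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention; returns (outputs, attention weights)."""
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in keys]
        w = softmax(scores)
        weights.append(w)
        # Weighted sum of value vectors, one output channel at a time.
        outputs.append([sum(wj * v[c] for wj, v in zip(w, values))
                        for c in range(len(values[0]))])
    return outputs, weights

# Toy embeddings for a 3-residue sequence (illustrative values only).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, attn = self_attention(x, x, x)
```

Each row of `attn` sums to 1 and states how strongly one residue draws on every other residue, regardless of their distance in the sequence.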

From NLP to pLMs

Protein Language Models are Transformers trained on vast corpora of protein sequences (e.g., UniRef) using self-supervised objectives, most commonly Masked Language Modeling (MLM). During MLM training, random amino acids in a sequence are masked, and the model learns to predict them based on the full context, thereby internalizing the "grammar" and "semantics" of natural protein sequences.

Quantitative Performance Data

Table 1: Benchmarking Key pLMs on Protein Engineering Tasks

| Model (Year) | Model Size / Training Data | Key Task | Reported Metric (Performance) | Relevance to In Silico Directed Evolution |

|---|---|---|---|---|

| ESM-2 (2022) | Up to 15B parameters (UR50/D) | Missense variant effect prediction | Spearman's ρ ~0.70 on Deep Mutational Scanning (DMS) benchmarks | High; zero-shot fitness predictions directly from sequence. |

| ProtBERT | ~420M parameters (UniRef100, ~216M sequences) | Secondary structure prediction | Accuracy ~0.73 (3-class) | Medium; learns structural constraints useful for evolution. |

| AlphaFold2 | PDB, MSA | Structure Prediction | TM-score >0.9 on CASP14 targets | Indirect; structural context informs fitness hypotheses. |

| ProteinMPNN | PDB structures | De novo backbone design | Recovery rate >0.40 on native sequences | High; enables fast sequence design for fixed backbones. |

Table 2: Comparison of pLM Embedding Utilization Methods

| Method | Input | Output | Protocol Complexity | Computational Cost |

|---|---|---|---|---|

| Embedding Extraction | Single sequence | Per-residue feature vector | Low | Low |

| Fine-Tuning | Task-specific dataset (e.g., stability data) | Adapted model weights | High | Very High |

| Masked Inference (MLM) | Sequence with masked position(s) | Log-likelihoods for all 20 AAs | Medium | Low-Medium |

Experimental Protocols

Protocol 4.1: Zero-Shot Fitness Prediction Using pLM Embeddings

Objective: Predict the functional impact of single-point mutations without task-specific training.

Materials:

- Wild-type protein sequence (FASTA format).

- List of target mutations (e.g., A23V, G45R).

- Pre-trained pLM (e.g., ESM-2 model from Hugging Face).

Procedure:

- Embedding Generation:

- Tokenize the wild-type sequence using the model's tokenizer.

- Pass the tokenized sequence through the pLM encoder (e.g., `esm2_t33_650M_UR50D`).

- Extract the hidden-state embeddings from the final layer. Output shape: [SeqLen, EmbedDim].

- Mutation Scoring via Embedding Distance:

- For each target mutation (e.g., position 23, Alanine → Valine):

- Isolate the embedding vector for the wild-type residue at position 23 (h_wt).

- From the model's vocabulary, obtain the embedding vector for the mutant residue (h_mut). This is the learned embedding for the token "V".

- Compute the cosine similarity or Euclidean distance between h_wt and h_mut.

- Interpretation: Lower cosine similarity (greater distance) may indicate a larger functional perturbation, as the model's internal representation of the mutant residue deviates more from the contextually expected one.
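The two distance measures named in this protocol are simple to compute once the vectors are available. The sketch below assumes the embeddings have already been extracted as plain lists of floats; the four-dimensional example vectors are invented for illustration and are far shorter than real pLM embeddings.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)

def euclidean_distance(u, v):
    """Straight-line distance between two equal-length vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

# Hypothetical stand-ins for the wild-type and mutant embedding vectors.
h_wt = [0.2, -0.1, 0.7, 0.4]
h_mut = [0.1, 0.3, 0.6, -0.2]
print(cosine_similarity(h_wt, h_mut), euclidean_distance(h_wt, h_mut))
```

Cosine similarity ignores vector length while Euclidean distance does not, so the two measures can rank mutations differently; it is worth checking both against any available experimental data.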

Protocol 4.2: In Silico Saturation Mutagenesis Scan with MLM

Objective: Rank all possible amino acid substitutions at a given position by their model log-likelihood.

Materials: As in Protocol 4.1.

Procedure:

- Sequence Masking:

- For a target position i, create one copy of the wild-type sequence in which position i is replaced by the model's mask token (e.g., `<mask>`). A single masked copy is sufficient, because one forward pass returns scores for every amino acid at that position.

- Masked Language Model Inference:

- Pass the masked sequence through the pLM.

- At the output layer corresponding to mask position i, the model produces a probability distribution over its vocabulary; restrict it to the 20 standard amino acids.

- Record the log probability (log p) assigned to the original wild-type amino acid and to each of the 19 possible mutants.

- Fitness Score Calculation:

- A common fitness score (S) is the log-likelihood ratio: S_mut = log p(mutant) - log p(wild-type).

- Interpretation: A positive S_mut suggests the mutant is more "natural" or likely in that context than the wild-type according to the model's learned evolutionary distribution. This can be used as a proxy for stability or foldability.
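The score calculation can be sketched without a model by starting from the logits at the masked position. The example assumes the logits have already been restricted to the 20 standard amino acids in the order given by `AMINO_ACIDS` (real pLM vocabularies contain extra tokens and use their own ordering), and the logit values are invented.

```python
import math

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # assumed column order of the logits

def log_softmax(logits):
    """Convert raw logits to log-probabilities in a numerically stable way."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]

def llr_scan(logits, wild_type):
    """Return [(mutant, S_mut)] for one masked position, best first."""
    log_p = dict(zip(AMINO_ACIDS, log_softmax(logits)))
    scores = [(aa, log_p[aa] - log_p[wild_type])
              for aa in AMINO_ACIDS if aa != wild_type]
    return sorted(scores, key=lambda item: item[1], reverse=True)

# Hypothetical logits: the model prefers V over the wild-type A here.
logits = [2.0 if aa == "A" else 3.0 if aa == "V" else 0.0 for aa in AMINO_ACIDS]
ranked = llr_scan(logits, wild_type="A")
print(ranked[:3])
```

Because S_mut is a difference of log-probabilities, the normalization constant cancels, so the ranking is the same whether or not the distribution is renormalized over the 20 amino acids.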

Protocol 4.3: Fine-Tuning a pLM on Experimental Fitness Data

Objective: Adapt a general pLM to predict quantitative fitness from a specific directed evolution dataset.

Materials:

- Dataset of protein variant sequences and associated fitness scores (e.g., fluorescence, binding affinity).

- Pre-trained pLM (e.g., ESM-2).

- Deep learning framework (PyTorch/TensorFlow).

Procedure:

- Data Preparation:

- Split variant sequences and fitness scores into training (80%), validation (10%), and test (10%) sets.

- Tokenize all sequences.

- Model Architecture Modification:

- Remove the pLM's final MLM head.

- Append a regression head: typically a Global Average Pooling layer followed by one or more fully connected layers with a single output neuron.

- Training Loop:

- Freeze the pLM parameters for the first few epochs, training only the regression head.

- Unfreeze the entire model and train with a low learning rate (e.g., 1e-5).

- Use Mean Squared Error (MSE) loss between predicted and experimental fitness scores.

- Monitor validation loss for early stopping.

- Validation: Evaluate the fine-tuned model's performance on the held-out test set using Pearson/Spearman correlation.

Visualization Diagrams

Title: Transformer Encoder Architecture for pLMs

Title: Protocol for pLM Saturation Mutagenesis Scan

The Scientist's Toolkit

Table 3: Essential Research Reagents & Resources for pLM-Based Directed Evolution

| Item | Function/Description | Example/Provider |

|---|---|---|

| pLM Pre-trained Models | Foundation models providing base knowledge of protein sequence space. | ESM-2 (Meta AI), ProtBERT (Rostlab, ProtTrans), OmegaFold (Helixon) |

| Protein Sequence Database | Source of evolutionary information for model training or MSA generation. | UniProt, UniRef, Pfam |

| Fitness Dataset (Benchmark) | Experimental data for model validation and fine-tuning. | ProteinGym (DMS benchmarks), FireProtDB |

| Deep Learning Framework | Software library for model loading, inference, and fine-tuning. | PyTorch, TensorFlow, JAX |

| Model Hub/Repository | Platform to access, share, and version-control models. | Hugging Face Model Hub, GitHub |

| High-Performance Compute (HPC) | GPU/TPU clusters for training large models or scanning massive libraries. | Local GPU servers, Cloud (AWS, GCP, Azure), TPU VMs |

| Structure Prediction Tool | Provides 3D structural context to validate or inform pLM predictions. | AlphaFold2, ColabFold, RoseTTAFold |

| Sequence Design Tool | For de novo sequence generation based on pLM or structural outputs. | ProteinMPNN, RFdiffusion |

Application Notes: Transformers for Protein Fitness Prediction and Design

Core Application: Protein transformers, such as ESM-2, ESM-3, and ProtGPT2, have demonstrated state-of-the-art performance in predicting protein function and stability from sequence alone. Their self-attention mechanism allows them to model long-range dependencies in amino acid sequences, capturing the complex epistatic interactions that define protein fitness landscapes.

Key Performance Data:

Table 1: Comparison of Leading Protein Transformer Models for Fitness Prediction

| Model | Parameters | Training Data | Key Metric (e.g., Spearman ρ on Benchmark) | Primary Application |

|---|---|---|---|---|

| ESM-2 (15B) | 15 Billion | UniRef50/UniRef90 (~65M unique sequences) | 0.83 (Spearman ρ on deep mutational scanning) | Zero-shot fitness prediction, structure |

| ESM-3 (98B) | 98 Billion | Expanded multi-omic dataset | 0.89 (Spearman ρ, outperforms ESM-2) | Full-sequence generative design |

| ProtGPT2 | 738 Million | UniRef50 (50M seq) | N/A (Generative model) | De novo sequence generation |

| MSA Transformer | 100 Million | Multiple Sequence Alignments | 0.79 (Spearman ρ, strong with MSA) | Fitness prediction with evolutionary context |

Workflow for In Silico Directed Evolution:

Diagram Title: Transformer-Driven Directed Evolution Cycle

Experimental Protocols

Protocol 2.1: Zero-Shot Fitness Prediction Using ESM-2

Objective: To predict the functional effect of single-point mutations without task-specific training.

Materials:

- Pre-trained ESM-2 model (`esm2_t36_3B_UR50D` or larger).

- Target protein sequence (FASTA format).

- List of mutations (e.g., A23V, G45R).

- Python environment with PyTorch and the `fair-esm` library.

Procedure:

- Sequence Encoding: Load the pre-trained ESM-2 model and tokenizer. Encode the wild-type sequence to obtain per-residue logits.

- Mutation Scoring: For each mutation, compute the log-odds ratio: score = log(P_mutant / P_wild-type), where P is the model's probability for the amino acid at that position.

- Fitness Inference: Aggregate scores (e.g., sum across multiple mutations). A higher score suggests increased fitness/stability relative to wild-type.

- Calibration (Optional): Normalize scores using a control set of known neutral mutations.

Expected Output: A ranked list of variants with predicted fitness scores.
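The scoring and aggregation steps can be illustrated independently of the model. The sketch below assumes the per-position log-probabilities have already been computed and stored in a dictionary keyed by 1-based position; `parse_mutation` and `variant_score` are hypothetical helper names, and the numbers are invented. Summing scores treats mutations as independent, which ignores epistasis.

```python
import re

MUTATION_RE = re.compile(r"^([A-Z])(\d+)([A-Z])$")

def parse_mutation(code):
    """Split a code such as 'A23V' into (wild_type, position, mutant)."""
    match = MUTATION_RE.match(code)
    if match is None:
        raise ValueError(f"unrecognized mutation code: {code!r}")
    return match.group(1), int(match.group(2)), match.group(3)

def variant_score(sequence, mutations, log_probs):
    """Sum of log-odds scores; log_probs[pos][aa] uses 1-based positions."""
    total = 0.0
    for code in mutations:
        wt, pos, mut = parse_mutation(code)
        if sequence[pos - 1] != wt:
            raise ValueError(f"{code}: sequence has {sequence[pos - 1]} at {pos}")
        # log(P_mutant / P_wild-type) = log P_mutant - log P_wild-type
        total += log_probs[pos][mut] - log_probs[pos][wt]
    return total

# Hypothetical model output for a 3-residue toy sequence.
sequence = "MAG"
log_probs = {2: {"A": -1.0, "V": -0.5}, 3: {"G": -0.2, "R": -2.2}}
score = variant_score(sequence, ["A2V", "G3R"], log_probs)
print(score)
```

Checking the stated wild-type residue against the sequence catches off-by-one numbering errors, a common problem when mutation lists come from a differently numbered construct.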

Protocol 2.2: De Novo Sequence Generation with ProtGPT2

Objective: To generate novel, plausible protein sequences conditioned on a desired property or starting motif.

Materials:

- ProtGPT2 model (Hugging Face `transformers` library).

- Starting sequence or prompt (e.g., first 20 amino acids).

- Sampling parameters (temperature, top-k, top-p).

Procedure:

- Model Setup: Load ProtGPT2 using `AutoModelForCausalLM` and `AutoTokenizer` from Hugging Face.

- Prompt Design: Provide a starting sequence as prompt. The model will autoregressively complete the sequence.

- Generation: Generate sequences using nucleus sampling (top-p=0.95) with a temperature of 0.8 to balance diversity and quality. Generate a large pool (e.g., 10,000 sequences).

- Filtering: Filter generated sequences for length and remove duplicates. Use a separate classifier (e.g., ESM-2) to predict and select for desired properties (thermostability, solubility).
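To make the sampling parameters concrete, the following sketch shows what temperature scaling and nucleus (top-p) truncation do to a single next-token distribution. It is a standalone illustration, not ProtGPT2's implementation; in practice these settings are passed to the generation routine of the library in use, and the logits here are invented.

```python
import math
import random

def nucleus_sample(logits, temperature=0.8, top_p=0.95, rng=random):
    """Sample one token index using temperature scaling and top-p truncation."""
    # Temperature below 1 sharpens the distribution; above 1 flattens it.
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    z = sum(exps)
    probs = [e / z for e in exps]
    # Keep the smallest set of most likely tokens whose mass reaches top_p.
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    # random.choices renormalizes the kept weights internally.
    return rng.choices(kept, weights=[probs[i] for i in kept], k=1)[0]

peaked = [5.0, 0.0, 0.0, 0.0]   # one dominant token
samples = [nucleus_sample(peaked) for _ in range(50)]
print(set(samples))
```

With a strongly peaked distribution the nucleus collapses to a single token, whereas a flat distribution keeps many candidates; this is how top-p adapts the amount of diversity to the model's own confidence.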

Protocol 2.3: Full-sequence Optimization with ESM-3

Objective: To design an entire protein sequence for a specified function using a guided generative approach.

Materials:

- Access to ESM-3 generative model (API or local if available).

- Specification of functional constraints (e.g., binding motif, catalytic triad, stability profile).

Procedure:

- Constraint Definition: Define constraints as positional priors or as a scoring function.

- Iterative Decoding: Use the model's conditional generation capability to propose sequences meeting constraints. This often involves Markov Chain Monte Carlo (MCMC) sampling in sequence space, guided by the model's likelihood and the constraint function.

- Multiproperty Optimization: Jointly optimize by combining multiple predictive heads from the model (e.g., fitness, localization, expression).

- In Silico Validation: Run the final candidate sequences through separate in silico assays (foldability via AlphaFold2, aggregation prediction).
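The MCMC idea in the Iterative Decoding step can be sketched with a Metropolis sampler over single-residue substitutions. This is a generic illustration, not ESM-3's decoding procedure: `toy_score` is an invented stand-in for the combination of model likelihood and constraint function, and the `fixed` argument is a hypothetical way to protect constrained positions such as a catalytic residue.

```python
import math
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def metropolis_design(start, score_fn, n_steps=2000, temperature=0.5,
                      fixed=(), seed=0):
    """Metropolis search over point mutations; returns (best_seq, best_score)."""
    rng = random.Random(seed)
    mutable = [i for i in range(len(start)) if i not in fixed]
    current, current_score = start, score_fn(start)
    best, best_score = current, current_score
    for _ in range(n_steps):
        pos = rng.choice(mutable)
        proposal = current[:pos] + rng.choice(AMINO_ACIDS) + current[pos + 1:]
        proposal_score = score_fn(proposal)
        delta = proposal_score - current_score
        # Always accept improvements; accept losses with probability exp(delta / T).
        if delta >= 0 or rng.random() < math.exp(delta / temperature):
            current, current_score = proposal, proposal_score
            if current_score > best_score:
                best, best_score = current, current_score
    return best, best_score

def toy_score(seq):
    """Invented objective: count of aliphatic residues (A, I, L, V)."""
    return float(sum(1 for aa in seq if aa in "AILV"))

best, best_score = metropolis_design("GGGGGGGG", toy_score, fixed=(0,))
print(best, best_score)
```

Lowering `temperature` makes the search greedier, while raising it allows more downhill moves and helps the sampler escape local optima at the cost of slower convergence.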

Research Reagent Solutions Toolkit

Table 2: Essential In Silico Research Toolkit for Protein Transformer Work

| Item / Solution | Function / Purpose | Example / Provider |

|---|---|---|

| Pre-trained Models | Base models for transfer learning, fine-tuning, or zero-shot prediction. | ESM-2/3 (Meta), ProtGPT2 (Hugging Face), MSA Transformer (Meta) |

| Fine-tuning Datasets | Curated sets of mutant fitness data for task-specific model adaptation. | ProteinGym (DMS assays), FireProtDB (stability data) |

| Compute Infrastructure | GPU/TPU clusters for model training and large-scale inference. | NVIDIA A100/H100, Google Cloud TPU v4, AWS P5 instances |

| Sequence Embedding Tools | Generate fixed-length vector representations of proteins for downstream tasks. | esm-extract (from ESM), PerResidueEmbeddings |

| Structure Prediction Integration | Validate designed sequences for foldability and structural integrity. | AlphaFold2, RoseTTAFold, ESMFold |

| High-throughput In Silico Assay Pipelines | Automated systems to score variants for multiple properties in parallel. | Custom Snakemake/Nextflow pipelines integrating ESM, AF2, and Aggrescan3D |

| Experiment Management Platform | Track design-build-test-learn cycles, linking in silico predictions to lab data. | Benchling, Atlas by Inscripta, custom MLflow/Weights & Biases setups |

This document provides essential definitions and application notes for key machine learning concepts as applied to directed evolution in silico using protein transformers. The ability to navigate sequence-function landscapes computationally is revolutionizing protein engineering, enabling the rapid design of novel enzymes, therapeutics, and biomaterials.

Core Terminology & Quantitative Framework

Embeddings

Definition: Numerical, fixed-dimensional vector representations of protein sequences (or their constituent amino acids) that capture semantic, structural, or functional relationships. Similar proteins/amino acids have similar vector representations in this learned space.

Application in Protein Science: Transformers convert a protein sequence (e.g., "MAEGE...") into a series of embedding vectors. Each amino acid is initially represented by a learned embedding that encodes its chemical and contextual identity.

Quantitative Data Summary: Table 1: Common Embedding Dimensions in Protein Transformer Models

| Model Name | Embedding Dimension (per residue) | Total Model Parameters | Primary Training Data |

|---|---|---|---|

| ESM-2 (15B) | 5120 | 15 Billion | UniRef (millions of sequences) |

| ProtBERT | 1024 | 420 Million | BFD & UniRef |

| AlphaFold2 (Evoformer) | 256 (per MSA row/template) | ~93 Million | PDB, MSA databases |

| CARP (640M) | 1280 | 640 Million | CATH, UniRef |

Attention Mechanisms

Definition: A computational technique that allows a model to weigh the importance of different parts of the input sequence (e.g., amino acids) when generating an output representation for a specific position. It answers "where to look" within the sequence context.

Application in Protein Science: Enables modeling of long-range interactions between distal amino acids in a folded protein. A residue in a binding pocket can "attend to" residues forming the allosteric site, capturing evolutionary couplings and structural constraints without explicit 3D coordinates.

Experimental Protocol: Analyzing Attention Maps for Functional Site Discovery

- Objective: Identify putative functional residues (e.g., catalytic sites, binding interfaces) from a pre-trained protein transformer's attention maps.

- Materials: Pre-trained model (e.g., ESM-2), protein sequence(s) of interest, Python environment with PyTorch and transformers library.

- Procedure:

- Sequence Input: Tokenize the target protein sequence.

- Model Inference: Pass tokens through the model, extracting attention weights from all layers and heads.

- Aggregation: Average attention weights across all heads and layers, or analyze specific layers (early=local, late=global).

- Visualization: Generate a 2D heatmap (rows=query residues, columns=key residues).

- Analysis: Identify residues that receive consistently high attention from many other positions. Cross-reference these with known domain annotations or mutate them in silico to test functional impact predictions.
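The aggregation and analysis steps can be sketched on nested lists once attention weights are extracted. The layout `attn[layer][head][query][key]` is an assumption for this example (frameworks return tensors with their own axis order, often including special tokens that should be trimmed first), and the toy weights are invented.

```python
def mean_attention(attn):
    """Average attn[layer][head][query][key] over all layers and heads."""
    n_maps = sum(len(layer) for layer in attn)
    length = len(attn[0][0])
    avg = [[0.0] * length for _ in range(length)]
    for layer in attn:
        for head in layer:
            for q in range(length):
                for k in range(length):
                    avg[q][k] += head[q][k] / n_maps
    return avg

def attention_received(avg):
    """Column sums: total attention each residue receives as a key."""
    length = len(avg)
    return [sum(avg[q][k] for q in range(length)) for k in range(length)]

def top_residues(received, n=3):
    """1-based positions of the n residues receiving the most attention."""
    order = sorted(range(len(received)), key=lambda k: received[k], reverse=True)
    return [k + 1 for k in order[:n]]

# Toy example: one layer, two heads, three residues; rows sum to 1.
toy = [[
    [[0.1, 0.8, 0.1], [0.1, 0.8, 0.1], [0.2, 0.6, 0.2]],
    [[0.3, 0.4, 0.3], [0.2, 0.6, 0.2], [0.1, 0.8, 0.1]],
]]
received = attention_received(mean_attention(toy))
print(top_residues(received, n=1))
```

Residues flagged this way are candidates only; as the protocol notes, they should be cross-referenced with annotations or tested by in silico mutation before being treated as functional sites.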

Latent Space

Definition: A lower-dimensional, continuous vector space that is learned to represent the compressed essence of high-dimensional input data (e.g., all possible protein sequences). Points in this space correspond to proteins, and directions often correspond to meaningful biological properties.

Application in Protein Science: Serves as a navigable fitness landscape. Operators like interpolation (between two functional proteins) or guided traversal (along an axis of increased stability) enable rational in silico design.

Quantitative Data Summary: Table 2: Latent Space Operations & Outcomes in Directed Evolution

| Operation | Input | Typical Latent Dimension | Example Outcome (Validated Experimentally) |

|---|---|---|---|

| Interpolation | Two parent sequences | 512-1024 | A chimeric enzyme with hybrid activity (PMID: 35537221) |

| Gradient Ascent | Starting sequence + fitness predictor | 128-256 | Variants with ~10x improved thermostability (PMID: 36747658) |

| Sampling near a point | A single high-fitness sequence | Varies by model | Novel diverse sequences with retained function (>80% success rate) |

| Property-guided traversal | Sequence + property labels (e.g., soluble/insoluble) | 512 | Generation of soluble variants of membrane protein segments |

Experimental Protocol: Latent Space Interpolation for Protein Engineering

- Objective: Generate novel, functional protein sequences by interpolating between two parent sequences in a semantically meaningful latent space.

- Materials: A sequence-to-sequence autoencoder model (e.g., ProteinVAE) or a decoder-capable transformer (e.g., ProtGPT2), two parent protein sequences (Seq A, Seq B).

- Procedure:

- Encode: Encode Seq A and Seq B into their latent vectors, z_A and z_B.

- Interpolate: Compute a linear path in latent space: z_i = z_A + α_i * (z_B - z_A), where α_i ranges from 0 to 1 in N steps (e.g., N=10).

- Decode: For each interpolated vector z_i, decode it into a novel amino acid sequence S_i.

- Filter & Analyze: Use in silico tools (e.g., foldability predictors like ESMFold, stability calculators) to filter plausible sequences.

- Validate: Select top candidates for in vitro synthesis and functional assays.
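The interpolation step is a one-line formula and can be sketched directly. The example assumes the latent vectors are plain lists of floats and returns N + 1 points so that both parents are included as endpoints; encoding and decoding depend on the chosen model and are not shown.

```python
def interpolate(z_a, z_b, n_steps=10):
    """Points z_a + alpha * (z_b - z_a) for alpha = 0, 1/N, ..., 1."""
    if n_steps < 1:
        raise ValueError("n_steps must be at least 1")
    path = []
    for i in range(n_steps + 1):
        alpha = i / n_steps
        path.append([a + alpha * (b - a) for a, b in zip(z_a, z_b)])
    return path

# Two made-up 2-dimensional latent vectors standing in for the parents.
path = interpolate([0.0, 0.0], [1.0, 2.0], n_steps=4)
print(path)
```

Linear interpolation is the simplest choice; for latent spaces with a Gaussian prior, spherical interpolation is sometimes preferred because it keeps intermediate points at a typical distance from the origin.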

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for In Silico Directed Evolution

| Item / Resource | Function in Workflow | Example / Provider |

|---|---|---|

| Pre-trained Protein LMs (ESM-2, ProtBERT) | Provide foundational embeddings and attention patterns for sequences. | Hugging Face Hub, BioLM API |

| Structure Prediction Servers (ESMFold, OmegaFold) | Rapidly assess 3D structure viability of designed sequences. | ESMFold Colab, OmegaFold Web Server |

| Computational Stability Predictors (ddG, ΔΔG) | Estimate the change in folding free energy upon mutation. | FoldX, Rosetta ddG_monomer, ESM-IF1 |

| Multiple Sequence Alignment (MSA) Generators | Provide evolutionary context for a seed sequence, crucial for some models. | HHblits (Uniclust30), MMseqs2 (ColabFold) |

| In Silico Saturation Mutagenesis Suites | Systematically score all possible single-point mutants. | PyMOL mutagenesis wizard, Rosetta cartesian_ddg |

| Fitness Prediction Models (UniRep, TAPE) | Map sequences to predicted functional scores (e.g., fluorescence, activity). | Model-specific GitHub repositories |

Visualizations

Title: From Sequence to Design via Embeddings, Attention, and Latent Space

Title: Directed Evolution In Silico via Latent Space Gradient Ascent

This document provides application notes and protocols for major protein transformer models, framed within a research thesis on Directed evolution in silico using protein transformers. The core hypothesis posits that generative and structure-predicting transformers can dramatically accelerate the design-test-learn cycle of directed evolution by predicting fitness landscapes, generating novel functional sequences, and inferring structural constraints without exhaustive laboratory screening.

Model Families: Capabilities & Quantitative Comparison

Table 1: Comparative Overview of Major Protein Transformer Families

| Model Family | Key Model (Representative) | Primary Architecture | Training Data & Scale | Primary Output | Key Contribution to In Silico Directed Evolution |

|---|---|---|---|---|---|

| ESM (Evolutionary Scale Modeling) | ESM-2 (15B params) | Transformer Encoder | UniRef50/UniRef90 (~65M unique sequences) | Per-residue embeddings, contact maps, fitness predictions | Enables zero-shot prediction of functional effects of mutations (fitness landscapes). |

| ProtGPT2 | ProtGPT2 (738M params) | Transformer Decoder (GPT-2 style) | UniRef50 (~50M sequences) | De novo protein sequences (autoregressive generation) | Generates novel, plausible, and diverse protein sequences for exploration of sequence space. |

| AlphaFold | AlphaFold2 (AF2) | Evoformer + Structure Module | PDB + MSA (UniRef90, MGnify) | 3D atomic coordinates (structure) | Predicts the structural consequence of designed variants, enabling structure-based filtering. |

| Related: ProteinMPNN | ProteinMPNN (~1.7M params) | Message-passing neural network (graph encoder-decoder) | PDB structures & sequences | Optimized sequences for a given backbone | Provides a powerful inverse design tool for fixing a scaffold and generating compatible sequences. |

Table 2: Performance Benchmarks (Representative Quantitative Data)

| Model / Task | Metric | Reported Performance | Implication for Directed Evolution |

|---|---|---|---|

| ESM-2 (Contact Prediction) | Precision@L/5 (long-range) | ~85% (for large models) | Infers structural constraints from sequence alone, guiding stable designs. |

| ESM-1v (Variant Effect) | Zero-shot Spearman's ρ (on deep mutational scans) | 0.38 - 0.40 (average) | Predicts mutation effects without task-specific training, screening variants in silico. |

| ProtGPT2 (Generation) | Perplexity (on held-out sequences) | ~16.5 | Lower perplexity indicates generation of "protein-like" sequences, reducing search space. |

| AlphaFold2 (Structure) | CASP14 GDT_TS (median) | ~92.4 (for high accuracy targets) | High-confidence structural models allow for functional annotation (e.g., active site geometry). |

| ProteinMPNN (Design) | Recovery Rate (native sequence) | ~52% (vs. ~33% for Rosetta) | Generates diverse, high-accuracy sequences for a fixed backbone, enabling scaffold repurposing. |

Application Notes & Detailed Protocols

Protocol: Zero-Shot Mutation Effect Prediction with ESM-1v/2

Purpose: To rank all possible single-point mutants of a wild-type protein by predicted fitness, enabling prioritization for laboratory validation. Application in Thesis: Forms the core of the in silico screening phase, replacing early-stage low-throughput mutagenesis screens.

- Input Preparation: Obtain the wild-type amino acid sequence (e.g., `"MQIFVKTLTG..."`). Define the positional range for mutagenesis (e.g., active site residues 50-70).

- Model Loading: Use the `esm` Python package. Load the `esm1v_t33_650M_UR90S_1` model (one of the five ESM-1v models, suffixed `_1` to `_5`) and its associated alphabet/tokenizer.

- Inference for All Possible Mutations:

- Tokenize the wild-type sequence.

- For each position in the target range, mask it with the `<mask>` token.

- Pass the masked sequence through the model to obtain log-probabilities for all 20 standard amino acids at the masked position.

- The log probability is interpreted as a proxy for fitness (higher log p ≈ higher predicted fitness).

- Data Analysis: Rank mutations by the log-likelihood ratio (LLR) of the mutant vs. wild-type amino acid. Export a ranked table (Residue, Mutation, LLR) for experimental validation.
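The ranking step can be sketched in a few lines of Python. This is a minimal illustration, assuming the per-position log-probabilities have already been extracted from the masked forward passes; `toy_log_probs` is a hypothetical stand-in for real model output, and the function and variable names are illustrative rather than part of the `esm` package.

```python
import math

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues


def rank_single_mutants(wild_type, log_probs_by_pos):
    """Rank single-point mutants by log-likelihood ratio (LLR).

    wild_type: wild-type sequence string.
    log_probs_by_pos: {0-based position: {amino acid: log p}} obtained by
        masking that position and reading the model's output distribution.
    Returns (residue, mutation, llr) tuples sorted from highest to lowest LLR.
    """
    rows = []
    for pos, log_probs in log_probs_by_pos.items():
        wt_aa = wild_type[pos]
        wt_log_p = log_probs[wt_aa]
        for aa in AMINO_ACIDS:
            if aa == wt_aa:
                continue  # skip the synonymous "mutation"
            llr = log_probs[aa] - wt_log_p
            rows.append((f"{wt_aa}{pos + 1}", f"{wt_aa}{pos + 1}{aa}", llr))
    rows.sort(key=lambda row: row[2], reverse=True)
    return rows


def toy_log_probs(favoured, p_favoured=0.62):
    """Hypothetical model output: one favoured residue, the rest uniform."""
    rest = (1.0 - p_favoured) / (len(AMINO_ACIDS) - 1)
    return {aa: math.log(p_favoured if aa == favoured else rest)
            for aa in AMINO_ACIDS}


wild_type = "MQIFVKTLTG"
# Position 2 (I3) favours the wild-type residue; position 5 (K6) favours R.
log_probs_by_pos = {2: toy_log_probs("I"), 5: toy_log_probs("R")}
ranking = rank_single_mutants(wild_type, log_probs_by_pos)
```

In this toy case the top-ranked entry is the substitution at K6 toward the favoured residue, while every substitution at I3 receives a negative LLR because the model prefers the wild-type residue there.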

Protocol: De Novo Protein Sequence Generation with ProtGPT2

Purpose: To generate large libraries of novel, protein-like sequences for a given fold or family. Application in Thesis: Expands exploration beyond natural sequence space, providing candidates for ab initio design or as starting points for optimization.

- Environment Setup: Install `transformers` and `torch`. Load the pretrained ProtGPT2 model and tokenizer.

- Sequence Generation:

  - Define a starting prompt (e.g., the `<|endoftext|>` token for ab initio generation, or a seed sequence like `"M"`).

  - Use the `model.generate()` function with parameters tuned for diversity and quality.

- Post-processing: Decode token IDs to amino acid sequences. Filter sequences based on length, absence of rare amino acids, or predicted structural properties (e.g., using ESMFold for fast structure prediction).
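A sketch of generation and post-processing follows. The sampling values (`top_k=950`, `repetition_penalty=1.2`, `eos_token_id=0`) follow the example on the ProtGPT2 model card and should be treated as a starting point, not as tuned settings. The length window and the helper names are assumptions made for illustration. The generation function imports `transformers` lazily and downloads the model when called, so only the filtering helpers run on import.

```python
STANDARD_AAS = set("ACDEFGHIKLMNPQRSTVWY")


def clean_generated(text):
    """Remove the end-of-text marker and all whitespace from one record.

    ProtGPT2 output is FASTA-like, with newlines inside the sequence.
    """
    return "".join(text.replace("<|endoftext|>", "").split())


def filter_sequences(raw_texts, min_len=50, max_len=500):
    """Keep unique sequences in a length window made of standard residues."""
    kept, seen = [], set()
    for text in raw_texts:
        seq = clean_generated(text)
        if not (min_len <= len(seq) <= max_len):
            continue  # outside the (assumed) length window
        if set(seq) - STANDARD_AAS:
            continue  # contains rare or ambiguous residues such as X, B, Z
        if seq in seen:
            continue  # exact duplicate
        seen.add(seq)
        kept.append(seq)
    return kept


def generate_with_protgpt2(n=10, max_length=100):
    """Sample n records from ProtGPT2 (max_length counts tokens, not residues)."""
    from transformers import pipeline  # lazy import: heavy optional dependency

    generator = pipeline("text-generation", model="nferruz/ProtGPT2")
    outputs = generator(
        "<|endoftext|>",
        max_length=max_length,
        do_sample=True,
        top_k=950,
        repetition_penalty=1.2,
        num_return_sequences=n,
        eos_token_id=0,
    )
    return [record["generated_text"] for record in outputs]
```

A typical call would be `filter_sequences(generate_with_protgpt2(n=100))`, after which the surviving sequences can be passed to a structure-based filter.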

Protocol: Structure-Based Filtering with AlphaFold2 or ESMFold

Purpose: To validate the structural plausibility and specific fold of sequences generated by ProtGPT2 or selected by ESM variant scoring. Application in Thesis: Acts as a high-fidelity computational filter, ensuring designed variants are likely to fold correctly before resource-intensive wet-lab expression.

- Input: A FASTA file containing candidate sequences (from the ESM variant-scoring or ProtGPT2 generation protocols above).

- Structure Prediction Run:

  - For ESMFold (Fast): Use the `esm` framework. ESMFold is optimized for speed (inference in seconds) and is suitable for screening hundreds to thousands of designs.

  - For AlphaFold2 (High Accuracy): Use ColabFold (run locally or in the cloud). ColabFold combines AF2 with fast MMseqs2-based MSA generation. Best for final validation of top candidates (tens of sequences).

- Analysis Metrics: For each predicted structure, examine:

  - pLDDT (per-residue confidence): High average pLDDT (>80-90) suggests a well-folded, confident prediction.

  - Predicted Aligned Error (PAE): Assess domain packing and global fold confidence. Low inter-domain PAE indicates that the relative placement of domains is predicted confidently; it is a confidence measure, not a direct measure of stability.

  - Structural Clustering: Compare to a reference wild-type structure using TM-score (>0.5 suggests similar fold).
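The pLDDT check can be automated with a small parser. The sketch below assumes the predictor writes per-residue pLDDT into the B-factor column of the PDB file on a 0-100 scale, as AlphaFold2 and ColabFold do; some tools report 0-1 values, in which case the threshold must be rescaled. The function names and the threshold of 80 are illustrative.

```python
def mean_plddt(pdb_text):
    """Average the B-factor column (used here as pLDDT) over C-alpha atoms."""
    values = []
    for line in pdb_text.splitlines():
        # PDB fixed columns: atom name in 13-16, B-factor in 61-66 (1-based).
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            values.append(float(line[60:66]))
    if not values:
        raise ValueError("no C-alpha ATOM records found")
    return sum(values) / len(values)


def passes_plddt_filter(pdb_text, threshold=80.0):
    """Return True when the mean pLDDT meets the chosen confidence cutoff."""
    return mean_plddt(pdb_text) >= threshold
```

Restricting the average to C-alpha atoms gives each residue equal weight regardless of side-chain size.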

Protocol: Fixed-Backbone Sequence Design with ProteinMPNN

Purpose: To design optimal sequences that will stabilize a given protein backbone (e.g., a scaffold from AF2 or a natural template). Application in Thesis: Enables precise "refactoring" of a chosen structural scaffold with novel sequences, optimizing for stability or compatibility with a new function.

- Input Preparation: Obtain a backbone structure in PDB format (`.pdb` or `.cif`). This can be a crystal structure, an AF2 prediction, or a computational scaffold.

- Run ProteinMPNN:

  - Clone the official ProteinMPNN repository.

  - Prepare the input JSON file specifying chain IDs and optional fixed positions (e.g., to preserve catalytic residues).

  - Execute the main design script.

- Output & Validation: The output is a FASTA file of designed sequences. These should be processed through structure prediction (the structure-based filtering protocol above) to verify they adopt the intended backbone.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational Tools & Resources

| Item / Reagent | Provider / Source | Function in In Silico Directed Evolution Pipeline |
|---|---|---|
| ESM / ESMFold | Meta AI (GitHub, Hugging Face) | Provides foundational sequence embeddings, zero-shot fitness prediction, and rapid structure prediction for high-throughput filtering. |
| ProtGPT2 Model | Hugging Face Model Hub (`nferruz/ProtGPT2`) | The core generative model for exploring novel, protein-like sequence space autoregressively. |
| AlphaFold2 / ColabFold | DeepMind / ColabFold Team | Gold-standard structure prediction for final validation of designed variants and functional site analysis. |
| ProteinMPNN | University of Washington (GitHub) | The state-of-the-art tool for fixed-backbone inverse design, generating stable sequences for any given scaffold. |
| PyTorch / JAX | PyTorch Team / Google | Core deep learning frameworks required to run and often fine-tune the above models. |
| Hugging Face `transformers` | Hugging Face | Standardized Python library for loading and using transformer models like ProtGPT2. |
| PDB (Protein Data Bank) | RCSB.org | Source of high-quality experimental structures for training, validation, and use as design scaffolds. |
| UniRef Database | UniProt Consortium | Curated clusters of protein sequences forming the primary training data for ESM and ProtGPT2. |

Workflow Visualizations

(Diagram 1 Title: In Silico Directed Evolution Workflow)

(Diagram 2 Title: Transformer Roles in the Thesis Framework)

Building Your Digital Evolution Pipeline: A Step-by-Step Methodological Guide

Within a broader thesis on directed evolution in silico using protein transformers, the initial and most critical phase is the construction of a high-quality, representative training corpus. This corpus forms the foundational language from which transformer models learn protein grammar, function, and evolutionary constraints. Suboptimal data leads to models with poor predictive power, limiting their utility in guiding protein engineering for therapeutic development.

Application Notes & Protocols

Protocol: Multi-Source Data Acquisition & Aggregation

Objective: To compile a comprehensive, non-redundant initial dataset from public repositories. Methodology:

- Source Identification: Programmatically access (via APIs or FTP) key databases:

- UniProtKB/Swiss-Prot: For high-quality, manually annotated sequences.

- Protein Data Bank (PDB): For sequences with confirmed 3D structural data.

- Pfam and InterPro: For domain-family classification.

- NCBI GenPept: For broader sequence diversity.

- Automated Download: Use scripts (e.g., Python with `requests`, `biopython`) to retrieve FASTA files and relevant metadata (organism, function, evidence code).

- Temporal Filtering: Prioritize entries updated within the last 5 years to reflect current knowledge.

- Initial Merge: Concatenate data from all sources into a master FASTA file.

Key Quality Metric: Initial raw sequence count.

Protocol: Rigorous Deduplication & Clustering

Objective: To remove sequence redundancy and ensure data diversity, preventing model bias. Methodology:

- CD-HIT Suite Application: Use `cd-hit` or `MMseqs2` to cluster sequences at a specified identity threshold (e.g., 90% for family-level diversity, 30% for fold-level). Note that `cd-hit` does not accept identity thresholds below 40%, so the 30% level requires MMseqs2 (or PSI-CD-HIT).

- Command Example: `cd-hit -i raw_data.fasta -o clustered_data.fasta -c 0.9 -n 5 -M 16000`

- Representative Selection: From each cluster, select the longest sequence or the one with the highest annotation quality (Swiss-Prot over TrEMBL).

- Generate Cluster Reports: Document cluster sizes and representative sequences.

Table 1: Impact of Clustering Identity Threshold on Corpus Size

| Source Database | Raw Sequences | After 90% ID Clustering | After 60% ID Clustering | After 30% ID Clustering |

|---|---|---|---|---|

| UniProtKB | ~220 million | ~15 million | ~5 million | ~1 million |

| PDB | ~200,000 | ~150,000 | ~100,000 | ~50,000 |

| Combined (Example) | ~220.2M | ~15.15M | ~5.1M | ~1.05M |

Protocol: Curation via Annotation-Driven Filtering

Objective: To retain sequences with reliable functional and structural metadata. Methodology:

- Filter by Evidence: Retain sequences with experimental evidence codes (e.g., EXP, IDA, IPI in UniProt) or inferred from phylogeny (IBA).

- Remove Fragments: Exclude sequences annotated as "Fragment."

- Length Filtering: Discard sequences shorter than 50 or longer than 2,000 amino acids to bound the input length seen by the transformer.

- Taxonomic Stratification: Ensure balanced representation across target taxonomic groups (e.g., Bacteria, Archaea, Eukaryota) if applicable to the research goal.
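The evidence, fragment, and length filters above can be chained while recording attrition at each step. The sketch below is illustrative only: the `Entry` record and its field names are hypothetical and would need to be mapped onto whatever metadata the download step actually returns.

```python
from dataclasses import dataclass

# Evidence codes named in the protocol above.
ACCEPTED_EVIDENCE = {"EXP", "IDA", "IPI", "IBA"}


@dataclass
class Entry:
    """Hypothetical minimal record for one sequence plus its annotations."""
    accession: str
    sequence: str
    evidence: str
    is_fragment: bool = False


def curate(entries, min_len=50, max_len=2000):
    """Apply evidence, fragment, and length filters in order.

    Returns the surviving entries and the number of sequences remaining
    after each step, which is the information an attrition table reports.
    """
    counts = {"input": len(entries), "evidence": 0, "non_fragment": 0, "length": 0}
    kept = []
    for entry in entries:
        if entry.evidence not in ACCEPTED_EVIDENCE:
            continue
        counts["evidence"] += 1
        if entry.is_fragment:
            continue
        counts["non_fragment"] += 1
        if not (min_len <= len(entry.sequence) <= max_len):
            continue
        counts["length"] += 1
        kept.append(entry)
    return kept, counts
```

Keeping the per-step counts makes it easy to see which filter dominates attrition before committing to a threshold.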

Table 2: Sequence Attrition After Annotation Filtering (Example)

| Curation Step | Sequences Remaining | % of Previous Step |

|---|---|---|

| Post-Clustering (90% ID) | 15,150,000 | 100% |

| Experimental Evidence Filter | 2,720,000 | 18% |

| Remove Fragments | 2,650,000 | 97% |

| Length Filter (50-2000 aa) | 2,600,000 | 98% |

| Final Curated Corpus | ~2.6 million | 17% of clustered |

Protocol: Train-Validation-Test Split with Homology Reduction

Objective: To create data partitions that prevent data leakage and enable robust evaluation. Methodology:

- Compute All-vs-All Similarity: Use `MMseqs2` for fast similarity search on the curated corpus.

- Greedy Partitioning: Implement a homology-reduction algorithm:

  - Assign the first sequence to the training set.

  - For each subsequent sequence, look for already-assigned sequences sharing >25% identity. If all such homologs sit in a single partition, assign the sequence to that same partition so that homologs never straddle a split; if homologs are found in more than one partition, discard the sequence; otherwise, assign it to the partition that is furthest below its target ratio (e.g., 80/10/10).

- Final Split Verification: Confirm no pair of sequences across the test/validation and training sets exceeds the similarity threshold (e.g., 25% ID).
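A leakage-free version of the greedy partitioning and the verification step can be sketched as follows. It assumes the all-vs-all search has already been reduced to a `homologs` mapping (sequence ID to the set of IDs above the identity threshold), keeps homologs in the same partition, and discards sequences whose homologs already sit in different partitions. The function names and the discard rule are choices made for this sketch.

```python
def partition(ids, homologs, ratios=(("train", 0.8), ("val", 0.1), ("test", 0.1))):
    """Greedily assign sequence IDs to partitions without splitting homologs.

    ids: sequence IDs in processing order.
    homologs: {id: set of ids above the identity threshold} (symmetric).
    Returns (assignment dict, list of discarded ids).
    """
    assignment, discarded = {}, []
    sizes = {name: 0 for name, _ in ratios}
    for seq_id in ids:
        hit_parts = {assignment[h] for h in homologs.get(seq_id, ()) if h in assignment}
        if len(hit_parts) > 1:
            discarded.append(seq_id)  # would bridge two partitions
            continue
        if len(hit_parts) == 1:
            part = next(iter(hit_parts))  # follow its homologs
        elif not assignment:
            part = "train"  # first sequence goes to training
        else:
            total = sum(sizes.values())
            # Choose the partition furthest below its target fraction.
            part = max(ratios, key=lambda r: r[1] - sizes[r[0]] / total)[0]
        assignment[seq_id] = part
        sizes[part] += 1
    return assignment, discarded


def find_leaks(assignment, homologs):
    """List homologous pairs that ended up in different partitions."""
    return [(a, b) for a, hits in homologs.items() for b in hits
            if a in assignment and b in assignment and assignment[a] != assignment[b]]
```

An empty result from `find_leaks` corresponds to the final split verification described above. Because the assignment is greedy and order-dependent, the realized ratios only approximate the targets.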

Visualizations

Diagram 1: High-Level Corpus Construction Workflow

Diagram 2: Logic for Homology-Reduced Data Partitioning

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Protein Sequence Corpus Curation

| Tool / Resource | Type | Primary Function | Key Parameter / Note |

|---|---|---|---|

| CD-HIT | Software Suite | Fast clustering of protein/DNA sequences to remove redundancy. | -c (sequence identity threshold). Use cd-hit-est for nucleotides. |

| MMseqs2 | Software Suite | Ultra-fast, sensitive sequence searching and clustering. Scalable for massive datasets. | --min-seq-id for clustering, easy-cluster workflow. |

| Biopython | Python Library | Programmatic access to biological databases, parsing of FASTA/GenBank, sequence manipulation. | Bio.SeqIO module for file I/O; Bio.Entrez for NCBI access. |

| UniProt REST API | Web API | Programmatic retrieval of up-to-date protein sequences and rich annotations. | Queries via https://rest.uniprot.org. Essential for automated pipelines. |

| Pandas & NumPy | Python Libraries | Data manipulation, filtering, and statistical analysis of sequence metadata. | DataFrames for managing annotation tables and filtering operations. |

| HMMER (hmmer.org) | Software Suite | Profile hidden Markov model searches for domain identification (Pfam). | hmmscan to annotate sequences with domain architecture. |

| AWS/GCP Cloud Compute | Infrastructure | Essential for running memory- and CPU-intensive clustering on million-sequence datasets. | Use preemptible VMs for cost-effective large-scale cd-hit jobs. |

Within the broader thesis on Directed Evolution In Silico Using Protein Transformers, sequence encoding represents the fundamental data pre-processing step that translates raw amino acid (AA) strings into a numerical format interpretable by deep learning models. This transformation is critical for leveraging transformer architectures (e.g., ESM, ProtBERT) to predict fitness landscapes, enabling the in silico screening of vast mutant libraries before physical synthesis.

Encoding methods vary in complexity from simple one-hot vectors to dense contextual embeddings from pre-trained models. The choice significantly impacts model performance in downstream tasks like stability or function prediction.

Table 1: Quantitative Comparison of Primary Amino Acid Encoding Methods

| Encoding Method | Dimensionality per AA | Contextual Awareness | Common Use Case | Key Advantage | Key Limitation |
|---|---|---|---|---|---|
| One-Hot | 20 (or 21 w/ gap) | No | Baseline models | Simplicity, interpretability | No similarity info, sparse |
| Blosum62 | 20 | No | Evolutionary scoring | Encodes biochemical similarity | Fixed, non-contextual |
| Learned Embedding (e.g., from ESM-2) | 320-5120, depending on model size (1280 for the 650M model) | Yes | State-of-the-art fitness prediction | High-level semantic features, context-aware | Computationally intensive |
| k-mer / n-gram | Variable | Limited (local) | CNN-based models | Captures local motifs | Can lose sequential order |
| Physicochemical Vectors | 5-10+ | No | Feature engineering | Direct biophysical interpretation | Manual feature selection |

Detailed Experimental Protocols

Protocol 3.1: Generating One-Hot and Blosum62 Encodings

Objective: Convert a protein sequence of length L into fixed-dimensional matrices. Materials: Python 3.8+, NumPy, Biopython, sequence string (e.g., "MVLSPADKTN"). Procedure:

- One-Hot Encoding:

  a. Define a canonical 20-amino acid alphabet: `AAs = ['A','C','D',...,'Y','V']`.

  b. For each residue in the sequence, create a zero vector of length 20.

  c. Set the index corresponding to the AA's position in the alphabet to 1.

  d. Output is an L x 20 matrix.

- Blosum62 Encoding:

  a. Load the BLOSUM62 matrix via Biopython. Current releases provide it through `Bio.Align.substitution_matrices.load("BLOSUM62")`; the older `Bio.SubsMat.MatrixInfo.blosum62` is deprecated.

  b. For each residue, map the AA to its corresponding row in the BLOSUM62 matrix, restricted to the 20 standard amino acids, as a 20-dimensional vector.

  c. Output is an L x 20 matrix of substitution scores.
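Both encodings can be sketched in plain Python. The alphabet ordering below (alphabetical by one-letter code) is an assumption; any fixed ordering works as long as it is used consistently. The BLOSUM62 loader relies on `Bio.Align.substitution_matrices`, the interface in current Biopython releases, and is imported lazily so the one-hot part runs without Biopython installed.

```python
AAS = "ACDEFGHIKLMNPQRSTVWY"  # assumed column order for both encodings
AA_INDEX = {aa: i for i, aa in enumerate(AAS)}


def one_hot(seq):
    """Return an L x 20 list-of-lists with a single 1 per row."""
    matrix = [[0] * len(AAS) for _ in seq]
    for row, aa in zip(matrix, seq):
        row[AA_INDEX[aa]] = 1
    return matrix


def matrix_encode(seq, rows):
    """Encode each residue as its row of substitution scores (L x 20)."""
    return [list(rows[aa]) for aa in seq]


def load_blosum62_rows():
    """Build {residue: 20 BLOSUM62 scores in AAS order} using Biopython."""
    from Bio.Align import substitution_matrices  # lazy optional import

    blosum = substitution_matrices.load("BLOSUM62")
    return {a: [float(blosum[a, b]) for b in AAS] for a in AAS}


encoded = one_hot("MVLSPADKTN")
```

With Biopython available, `matrix_encode("MVLSPADKTN", load_blosum62_rows())` yields the corresponding 10 x 20 matrix of substitution scores.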

Protocol 3.2: Extracting Contextual Embeddings with ESM-2

Objective: Generate per-residue embeddings that encapsulate global sequence context using a pre-trained protein language model.

Materials: Python 3.8+, PyTorch, fair-esm library, GPU recommended.

Procedure:

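- Installation: `pip install fair-esm`

- Load Model and Tokenizer: Load a pre-trained ESM-2 checkpoint together with its alphabet, which provides the batch converter used for tokenization.

- Prepare Data and Tokenize: Convert (label, sequence) pairs into a padded batch of token IDs.

- Forward Pass: Run the model in evaluation mode with gradients disabled, requesting the representations of the final layer.

- Extract Per-Residue Embeddings: Remove representations for special tokens (CLS, EOS, PAD). Output is an L x D matrix (D=1280 for the 650M-parameter ESM-2 model).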
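The procedure can be sketched with the `fair-esm` package, following the usage pattern in its README. The checkpoint `esm2_t33_650M_UR50D` (33 layers, 1280-dimensional embeddings) is used as an example; the helper names are illustrative. Heavy imports happen inside the function, so the special-token helper can be used and tested without downloading weights.

```python
def strip_special_tokens(token_reps, seq_len):
    """Keep only residue rows: drop the leading CLS/BOS row and any
    trailing EOS or padding rows. Works on tensors and plain lists."""
    return token_reps[1 : seq_len + 1]


def embed_with_esm2(sequences):
    """Return {label: L x 1280 tensor} for (label, sequence) pairs.

    Downloads the ESM-2 650M weights on first use.
    """
    import torch  # lazy imports: heavy optional dependencies
    import esm

    model, alphabet = esm.pretrained.esm2_t33_650M_UR50D()
    batch_converter = alphabet.get_batch_converter()
    model.eval()  # disable dropout for deterministic embeddings

    labels, strs, tokens = batch_converter(sequences)
    with torch.no_grad():
        out = model(tokens, repr_layers=[33], return_contacts=False)
    reps = out["representations"][33]  # shape: batch x tokens x 1280

    return {
        label: strip_special_tokens(reps[i], len(seq))
        for i, (label, seq) in enumerate(zip(labels, strs))
    }
```

For example, `embed_with_esm2([("wt", "MVLSPADKTN")])["wt"]` would be a 10 x 1280 tensor; averaging it over the first axis gives a fixed-length sequence embedding.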

Visualization: Encoding Workflow for Directed Evolution

Title: Protein Sequence Encoding Pathways for ML

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Resources for Sequence Encoding

| Item | Function & Description | Source/Example |

|---|---|---|

| ESM-2 Model Weights | Pre-trained transformer parameters for generating state-of-the-art contextual embeddings. | Hugging Face / Facebook AI Research |

| AlphaFold DB | Source of high-quality protein sequences and structures for training/validation. | EMBL-EBI |

| BioPython | Library for biological computation including BLOSUM matrix access and sequence parsing. | Biopython.org |

| PyTorch / TensorFlow | Deep learning frameworks essential for running and fine-tuning encoder models. | PyTorch.org / TensorFlow.org |

| Hugging Face Transformers | Repository and library providing easy access to thousands of pre-trained models, including protein LMs. | HuggingFace.co |

| GPUs (e.g., NVIDIA A100) | Hardware acceleration for efficient forward passes of large transformer models. | Cloud providers (AWS, GCP) or local clusters |

| PDB (Protein Data Bank) | Curated repository of 3D structures to correlate embeddings with structural features. | RCSB.org |

| UniProt | Comprehensive resource for protein sequence and functional annotation data. | UniProt.org |

Within the paradigm of directed evolution in silico using protein transformers, Step 3 involves the sophisticated generation of mutant sequence libraries. Moving beyond random point mutations, this phase leverages transformer model predictions to create targeted, functionally enriched variant libraries, optimizing for properties like stability, binding affinity, or catalytic activity. This protocol details strategies for intelligent library generation, a critical step in computationally driven protein engineering.

Core Strategies for Intelligent Library Design

Gradient-Based Attribution & Hotspot Identification

Transformer models (e.g., ESM-2, ProtGPT2) enable the calculation of attribution scores (e.g., gradients, attention weights) to identify residues critical to function. This guides focused mutagenesis.

Protocol: Calculating Integrated Gradients for Residue Prioritization

- Input: Wild-type protein sequence, trained protein language model (PLM) or fine-tuned model for a specific property (e.g., thermostability prediction head).

- Procedure:

- Define a Baseline: Use a padded or masked sequence as a neutral baseline.

- Forward Pass: Compute the model's prediction score (e.g., log-likelihood of stability) for the native sequence.

- Gradient Calculation: Using automatic differentiation (PyTorch/TensorFlow), compute the gradient of the prediction score with respect to each residue's input embedding (the output of the model's embedding layer), so that attributions map back to individual sequence positions.

- Path Integral: Approximate the integral of gradients along the straight-line path from the baseline input to the actual input sequence. This yields an attribution score per position.

- Rank Residues: Rank all residues by the absolute value of their integrated gradient score. The top N (e.g., 10-20) constitute predicted "hotspots" for mutagenesis.
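The path-integral approximation can be illustrated on a toy model in plain Python. Here the "model" is a hypothetical differentiable score with an analytic gradient, standing in for a PLM with a property head; in practice `grad_fn` would be supplied by automatic differentiation. The midpoint Riemann sum and the number of steps are choices made for this sketch.

```python
def integrated_gradients(x, baseline, grad_fn, steps=50):
    """Approximate integrated gradients with a midpoint Riemann sum.

    x, baseline: per-position embedding vectors (list of lists of floats).
    grad_fn: returns d(score)/d(embedding) with the same shape as its input.
    Returns one attribution per position (summed over embedding dimensions).
    """
    n_pos, dim = len(x), len(x[0])
    grad_sum = [[0.0] * dim for _ in range(n_pos)]
    for k in range(steps):
        alpha = (k + 0.5) / steps  # point on the straight-line path
        point = [[b + alpha * (v - b) for v, b in zip(x_row, b_row)]
                 for x_row, b_row in zip(x, baseline)]
        grads = grad_fn(point)
        for i in range(n_pos):
            for j in range(dim):
                grad_sum[i][j] += grads[i][j]
    return [
        sum((x[i][j] - baseline[i][j]) * grad_sum[i][j] / steps for j in range(dim))
        for i in range(n_pos)
    ]


# Hypothetical toy model: score = sum_i w_i * ||e_i||^2.
WEIGHTS = [0.1, 2.0, -1.5]


def toy_score(emb):
    return sum(w * sum(v * v for v in row) for w, row in zip(WEIGHTS, emb))


def toy_grad(emb):
    return [[2.0 * w * v for v in row] for w, row in zip(WEIGHTS, emb)]


x = [[1.0, 0.5], [1.0, 1.0], [0.5, 1.0]]
baseline = [[0.0, 0.0] for _ in x]
attributions = integrated_gradients(x, baseline, toy_grad)
hotspots = sorted(range(len(x)), key=lambda i: abs(attributions[i]), reverse=True)
```

A useful sanity check is the completeness property: the attributions should sum to the difference between the score at the input and the score at the baseline.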

Sequence Landscaping with Generative Models

Conditional generative models (e.g., fine-tuned ProtGPT2, or ESM-IF1 for inverse folding) can sample novel sequences that fulfill a specified property threshold.

Protocol: Conditional Sequence Generation with a Fine-Tuned Transformer

- Input: Fine-tuned autoregressive transformer model (e.g., ProtGPT2 fine-tuned on stable protein families).

- Procedure:

- Conditioning: Prepend a learned stability token or feed property embeddings into the model's context.

- Controlled Sampling: Use nucleus sampling (top-p) with a temperature parameter (T=0.7-1.0) to balance diversity and quality. Set the start token to the native sequence's N-terminal or a class token.

- Generation: Autoregressively generate 1,000-10,000 novel sequences.

- Filtering: Pass all generated sequences through a discriminative classifier (e.g., a separately trained ESM-2 classification head) to filter for those predicted to meet the target property, creating a focused library.
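The controlled sampling step combines temperature scaling with a top-p cutoff. The sketch below shows the mechanics for a single next-token distribution using only the standard library; in practice the Hugging Face `generate()` method applies the same idea internally through its `temperature` and `top_p` arguments. The function names and example logits are illustrative.

```python
import math
import random


def nucleus_indices(logits, temperature=0.8, top_p=0.9):
    """Return (indices, probabilities) of the smallest set of tokens whose
    temperature-scaled probabilities sum to at least top_p."""
    scaled = [value / temperature for value in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    probs = [value / total for value in exps]

    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    nucleus, cumulative = [], 0.0
    for idx in order:
        nucleus.append(idx)
        cumulative += probs[idx]
        if cumulative >= top_p:
            break
    return nucleus, [probs[i] for i in nucleus]


def nucleus_sample(logits, temperature=0.8, top_p=0.9, rng=random):
    """Sample one token index from the nucleus, weighted by probability."""
    nucleus, weights = nucleus_indices(logits, temperature, top_p)
    return rng.choices(nucleus, weights=weights, k=1)[0]
```

Lower temperatures sharpen the distribution and shrink the nucleus, trading diversity for sequences closer to the model's mode.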

In-Painting & Controlled Masked Infilling

Using masked language models (e.g., ESM-2) to redesign specific regions while holding the rest of the structure/sequence context constant.

Protocol: Region-Specific Redesign via Iterative Masking

- Input: Protein sequence with a defined region (e.g., a flexible loop, binding patch) selected for redesign.

- Procedure:

- Mask Selection: Replace all amino acids in the target region with the model's mask token.

- Iterative Infilling: For each masked position (in random order), the model predicts a probability distribution over all 20 amino acids.

- Sampling: Sample from the top-k (e.g., k=5) predictions per position, weighted by their softmax probability. This creates multiple combinatorial variants of the target region.

- Context Preservation: The unmasked portion of the sequence provides structural context, ensuring generated variants are more likely to fold correctly.
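The masking and iterative infilling loop can be sketched independently of any particular model. In the code below, `predict_fn` is a hypothetical callable that returns a probability for each amino acid at a masked position given the current tokens; with ESM-2 it would wrap a masked forward pass. `toy_predict` is a stand-in used only to make the example runnable.

```python
import random

AAS = "ACDEFGHIKLMNPQRSTVWY"


def infill_region(seq, region, predict_fn, k=5, rng=random):
    """Redesign `region` (0-based positions) by iterative masked infilling."""
    tokens = list(seq)
    for pos in region:
        tokens[pos] = "<mask>"  # mask the whole target region first
    order = list(region)
    rng.shuffle(order)  # fill positions in random order
    for pos in order:
        probs = predict_fn(tokens, pos)  # {amino acid: probability}
        top = sorted(probs.items(), key=lambda item: item[1], reverse=True)[:k]
        residues, weights = zip(*top)
        # Sample among the top-k residues, weighted by their probability.
        tokens[pos] = rng.choices(residues, weights=weights, k=1)[0]
    return "".join(tokens)


def toy_predict(tokens, pos):
    """Hypothetical predictor that favours small or flexible residues."""
    favoured = "GSAPT"
    return {aa: (0.18 if aa in favoured else 0.1 / 15) for aa in AAS}


parent = "MQIFVKTLTG"
variant = infill_region(parent, range(3, 7), toy_predict, k=5, rng=random.Random(0))
```

Calling `infill_region` repeatedly with different random seeds yields the combinatorial set of region variants, while positions outside the region stay identical to the parent.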

Table 1: Performance Metrics of In Silico Mutagenesis Strategies

| Strategy | Typical Library Size (Generated) | Computational Cost (GPU hrs) | Avg. Predicted Fitness Gain* | Primary Use Case |

|---|---|---|---|---|

| Random Point Mutagenesis | 10^6 - 10^8 | <0.1 | 0.1 - 0.5 ΔΔG (ns) | Baseline, exploration of local space |

| Gradient-Based Hotspots | 10^3 - 10^4 | 1-5 | 1.0 - 3.0 ΔΔG (ns) | Focused optimization of known scaffolds |

| Conditional Generation | 10^4 - 10^5 | 2-10 | Variable, high diversity | De novo design & global exploration |

| Controlled In-Painting | 10^2 - 10^3 | 0.5-2 | 0.5 - 2.0 ΔΔG (ns) | Functional site or local region engineering |

* Hypothetical ΔΔG (kcal/mol) or normalized stability score (ns) improvement over wild-type, based on model predictions. Actual experimental variance occurs.

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for In Silico Library Generation

| Item | Function in Protocol |

|---|---|

| Pre-trained Protein LM (e.g., ESM-2 650M/3B params) | Foundation model providing sequence embeddings and base generative/attribution capabilities. |

| Fine-Tuned Model Checkpoint | Transformer model adapted via transfer learning to predict specific protein properties (stability, expression, activity). |

| Gradient Calculation Framework (PyTorch/TensorFlow) | Enables automatic differentiation for attribution map generation (Integrated Gradients, Saliency). |

| Controlled Sampling Library (e.g., Transformers by Hugging Face) | Provides implemented methods for top-k, top-p, and temperature-controlled sequence generation. |

| High-Performance Computing (HPC) Cluster with GPU Nodes | Essential for running large model inferences and gradient calculations on thousands of sequences. |

| Sequence Log-Likelihood Scoring Script | Custom script to calculate and rank generated sequences by their model-assigned probability (perplexity). |

Workflow and Protocol Visualization

Title: Directed Evolution In Silico Library Generation Workflow

Title: Protocol: Gradient-Based Hotspot Identification

The integration of protein transformers has transformed in silico mutagenesis from a stochastic simulation to a targeted, predictive design process. By applying gradient attribution, conditional generation, and controlled in-painting, researchers can generate high-quality, functionally enriched variant libraries that dramatically increase the efficiency of the directed evolution cycle, accelerating the development of novel enzymes, therapeutics, and biomaterials.

Within directed evolution in silico using protein transformers, the final computational step translates model outputs into a singular, predictive fitness score. This score integrates orthogonal stability, functional, and expressibility metrics—each derived from transformer predictions—to prioritize variants for physical synthesis and testing. This protocol details the prediction and scoring framework essential for closed-loop in silico evolution.

Application Notes: Core Predictive Metrics

A comprehensive fitness score is assembled from three primary predicted properties. The following table summarizes the key metrics, their predictive basis, and biological significance.

Table 1: Core Fitness Prediction Metrics & Their Transformer-Based Estimation

| Metric | Description | Predictive Basis (Transformer Model) | Typical Prediction Output | Relevance to Fitness |

|---|---|---|---|---|

| ΔΔG Stability | Predicted change in folding free energy relative to wild-type. | ESM-2 or ESM-3 (for variant effect), ProteinMPNN (for sequence probability), or dedicated stability predictors (e.g., ThermoNet). | Scalar value (kcal/mol). Negative values indicate increased stability. | Foundation for proper folding and cellular solubility. High stability often correlates with expressibility. |

| Functional Activity | Predicted probability of retaining or enhancing target molecular function (e.g., binding, catalysis). | Fine-tuned protein language model (pLM) on task-specific data, or structure-based models like AlphaFold2 for binding site conformation. | Probability score (0-1) or relative activity (% of wild-type). | Directly linked to primary design objective. Must be balanced with stability. |

| Expressibility Score | Predicted likelihood of high soluble yield in a production system (e.g., E. coli). | Ensemble of pLMs (e.g., ESM-2) trained on proteomic abundance data or predictors of aggregation propensity (e.g., CamSol solubility). | Composite score (e.g., 0-10) or probability. | Critical for downstream experimental validation and scale-up. Incorporates solubility, translation efficiency, and degradation signals. |

Experimental Protocols for Model Inference & Scoring

Protocol 3.1: Inference of Individual Fitness Components

- Objective: Generate quantitative predictions for ΔΔG, Function, and Expressibility for a given variant sequence.

- Materials:

- Input: FASTA file of variant protein sequences.

- Software/APIs: Python environment with libraries for PyTorch, HuggingFace Transformers, and model-specific APIs (e.g., ESM, ProteinMPNN, AlphaFold2 via ColabFold).

- Hardware: GPU (NVIDIA A100 or equivalent recommended) for rapid inference.

- Procedure:

- Sequence Embedding: Pass each variant sequence through a base pLM (e.g., ESM-2-650M) to generate a per-residue embedding matrix.

- Stability (ΔΔG) Prediction:

- Feed the wild-type and variant sequence embeddings into a regression head fine-tuned on experimental ΔΔG data (e.g., from ProTherm database).

- Alternatively, use a dedicated stability model (e.g., ThermoNet) by submitting sequences via its web API or local container.

- Functional Activity Prediction:

- For binding: Use a fine-tuned pLM classifier trained on positive/negative binding sequences, or generate predicted structures with AlphaFold2 and compute a docking score (e.g., with HADDOCK) against a fixed target.

- For catalysis: Use a model fine-tuned on enzyme commission (EC) number or kinetic parameters (kcat/KM).

- Expressibility Prediction:

- Compute the sequence's aggregation propensity using CamSol or TANGO algorithms.

- Simultaneously, pass sequence embeddings through a classifier trained on E. coli soluble expression data (e.g., from SECReTE database).

- Combine normalized aggregation and solubility scores into a single expressibility metric.

- Output: Compile all predictions into a structured JSON or CSV file.

Protocol 3.2: Composite Fitness Scoring Function

- Objective: Integrate individual metrics into a unified, rankable fitness score.

- Procedure:

- Normalization: For each variant list, min-max normalize each metric (ΔΔG, Function, Expressibility) to a [0, 1] scale, where 1 is most desirable (stable, active, expressible).

- Weighted Combination: Apply researcher-defined weights (ws, wf, we) that sum to 1.0, reflecting project priorities (e.g., 0.4 for function, 0.3 for stability, 0.3 for expressibility).

- Scoring Equation: Compute the composite fitness score (F):

- F = (ws * N( -ΔΔG )) + (wf * N(Function)) + (we * N(Expressibility))

- Note: ΔΔG is negated so lower (more negative) ΔΔG yields a higher normalized value N( -ΔΔG ).

- Ranking & Selection: Rank all variants by composite score F. Select top N variants (e.g., top 50) for the next experimental cycle or for in vitro validation.
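The normalization, weighting, and ranking steps can be sketched as follows. The dictionary keys, the example values, and the handling of a metric with no spread (mapped to 0.5) are assumptions made for illustration; the weights follow the example priorities given above.

```python
def min_max(values):
    """Scale values to [0, 1]; a constant metric maps to 0.5 (assumption)."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.5] * len(values)
    return [(v - lo) / (hi - lo) for v in values]


def composite_scores(variants, w_s=0.3, w_f=0.4, w_e=0.3):
    """Compute F = w_s*N(-ddG) + w_f*N(function) + w_e*N(expressibility).

    variants: list of dicts with keys name, ddg, function, expressibility.
    Returns (name, F) pairs sorted from best to worst.
    """
    if abs(w_s + w_f + w_e - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    # Negate ddG so that more stabilizing variants get higher normalized values.
    n_stab = min_max([-v["ddg"] for v in variants])
    n_func = min_max([v["function"] for v in variants])
    n_expr = min_max([v["expressibility"] for v in variants])
    scored = [
        (v["name"], w_s * s + w_f * f + w_e * e)
        for v, s, f, e in zip(variants, n_stab, n_func, n_expr)
    ]
    return sorted(scored, key=lambda item: item[1], reverse=True)


variants = [
    {"name": "A", "ddg": -1.0, "function": 0.9, "expressibility": 8.0},
    {"name": "B", "ddg": 0.5, "function": 0.6, "expressibility": 6.0},
    {"name": "C", "ddg": 2.0, "function": 0.3, "expressibility": 2.0},
]
ranked = composite_scores(variants)
```

Because min-max normalization is computed per variant list, scores are only comparable within one batch; rankings from different batches should not be merged without renormalizing.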

Visualization of the Fitness Scoring Workflow

Title: Fitness Scoring Integration Workflow

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 2: Essential Resources for In Silico Fitness Prediction

| Item | Function & Relevance | Example/Provider |

|---|---|---|

| Pre-trained Protein Language Model (pLM) | Foundation for generating sequence embeddings used as input for all specialized prediction heads. | ESM-2/ESM-3 (Meta AI), ProtT5 (Rostlab) |

| Variant Effect Prediction Model | Directly predicts stability changes (ΔΔG) or pathogenicity from sequence. | ESM-1v, ESM-2 (variant scoring), INPS3D |

| Structure Prediction Engine | Critical for function prediction when 3D conformation is needed (e.g., binding site analysis). | AlphaFold2 (via ColabFold), RoseTTAFold |

| Solubility/Aggregation Predictor | Computes expressibility and solubility profiles from sequence alone. | CamSol (in-house or web server), TANGO |

| Expression Database | Provides training data for expressibility classifiers. Correlates sequence features with experimental yield. | SECReTE (E. coli expression), Swiss-Prot (annotations) |

| Stability Dataset | Benchmark for training or validating stability predictors. | ProTherm, ThermoMutDB |

| Directed Evolution Dataset | Task-specific activity data for fine-tuning functional activity predictors. | ProteinGym (DMS assays), published DMS studies |

| High-Performance Computing (HPC) / Cloud GPU | Enables rapid inference across thousands of variants for multiple models. | NVIDIA A100 GPU, Google Cloud Platform, AWS EC2 |

| Workflow Orchestration Tool | Automates the multi-step prediction and scoring pipeline. | Nextflow, Snakemake, custom Python scripts |

Application Notes

Directed evolution in silico, powered by protein language models and transformers, has accelerated the engineering of biomolecules with tailor-made functions. This phase translates computational predictions into tangible in vitro and in vivo validation, focusing on three core application verticals.

- Therapeutic Design: The focus is on engineering high-affinity, specific antibodies, deimmunized enzymes, and stable peptide therapeutics. Models predict mutations that optimize binding affinity (ΔΔG), reduce immunogenicity (by removing T-cell epitopes), and enhance thermodynamic stability, moving candidates directly from virtual libraries to in vitro characterization.

- Enzyme Engineering: The goal is to create enzymes with novel or enhanced catalytic activity for industrial biocatalysis. Models predict sequences that stabilize non-natural transition states, alter substrate specificity, or confer robustness under non-physiological conditions (e.g., high temperature, organic solvents). Key metrics include kcat/Km and total turnover number (TTN).

- Biosensor Development: This involves engineering allosteric proteins and fluorescent biosensors for high-sensitivity detection. Models design ligand-binding domains and predict insertion points for reporters (e.g., GFP) to maximize signal-to-noise ratio upon analyte binding. Critical parameters are dynamic range and limit of detection (LOD).

Table 1: Quantitative Benchmarks from Recent Applications

| Application | Target | Model Used | Key Metric | Result (Computational) | Result (Experimental) |

|---|---|---|---|---|---|

| Therapeutic | Anti-PD-1 Antibody | ProteinMPNN, RFdiffusion | Affinity (KD) | Top 50 designs predicted ΔΔG < -2.5 kcal/mol | Best variant showed 5-fold improved KD (180 pM) vs. wild-type |

| Enzyme | PETase for plastic degradation | ESM-2, MSA Transformer | Activity on PET film | 200,000 sequences ranked by stability & active site geometry | Top design showed 2.3x higher depolymerization rate at 40°C |

| Biosensor | Glutamate Biosensor | RoseTTAFold | Fluorescence Response (ΔF/F0) | Designs predicted >200% signal change | Validated sensor showed 180% ΔF/F0 with nM LOD |

Experimental Protocols

Protocol 1: High-Throughput Affinity Maturation of an Antibody Fragment Objective: Experimentally validate computationally designed antibody variants for improved antigen binding.

- Virtual Library Generation: Using a parent Fab crystal structure, generate 10,000 variant sequences with ProteinMPNN, focusing on CDR loops. Filter using ESM-IF1 sequence log-likelihoods conditioned on the parent backbone as a proxy for structural compatibility.

- Affinity Prediction: Score all variants using a trained transformer (e.g., IgLM fine-tuned on Ab-Ag complexes) to predict ΔΔG of binding.

- Gene Synthesis & Cloning: Select the top 200 sequences for synthesis as oligonucleotide pools. Clone into a yeast surface display vector (e.g., pYD1) via Gibson assembly.

- Yeast Surface Display Screening: Induce expression in Saccharomyces cerevisiae EBY100. Label with biotinylated antigen and detect with streptavidin-PE. Use FACS to isolate the top 0.5% highest-binding population.

- Characterization: Sequence recovered clones, express soluble Fab, and determine affinity via bio-layer interferometry (BLI) using an Octet system.

Protocol 2: Validating Engineered Enzyme Activity Objective: Measure the catalytic efficiency of a computationally designed hydrolase variant.

- Protein Production: Express and purify wild-type and designed enzymes (with a His6-tag) from E. coli BL21(DE3) via Ni-NTA chromatography.

- Activity Assay: Perform reaction with primary substrate (e.g., p-nitrophenyl ester) in suitable buffer at 30°C. Monitor product formation spectrophotometrically (e.g., at 405 nm for p-nitrophenol) for 2 minutes.

- Kinetic Analysis: Determine initial velocities (V0) across a minimum of 8 substrate concentrations (from 0.1 × Km to 10 × Km). Fit data to the Michaelis-Menten equation using GraphPad Prism to derive kcat and Km, where kcat = Vmax / [E] for total enzyme concentration [E].

- Thermal Stability Assessment: Use differential scanning fluorimetry (DSF). Incubate protein with SYPRO Orange dye, ramp temperature from 25°C to 95°C at 1°C/min, and monitor fluorescence. Report melting temperature (Tm).
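For a quick scripted check of the kinetic analysis, Vmax and Km can be estimated with a Hanes-Woolf linearization, [S]/v = [S]/Vmax + Km/Vmax. This is a simplification used here because it needs only the standard library; it is not the nonlinear regression that GraphPad Prism performs, and it weights experimental noise differently. The concentrations and units below are hypothetical.

```python
def fit_michaelis_menten(substrate, velocity):
    """Estimate (Vmax, Km) by least squares on the Hanes-Woolf plot.

    Regresses y = [S]/v on x = [S]: slope = 1/Vmax, intercept = Km/Vmax.
    """
    xs = list(substrate)
    ys = [s / v for s, v in zip(substrate, velocity)]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    vmax = 1.0 / slope
    km = intercept * vmax
    return vmax, km


def kcat(vmax, enzyme_conc):
    """Turnover number: Vmax divided by total enzyme concentration."""
    return vmax / enzyme_conc


# Hypothetical noise-free data spanning 0.1 x Km to 10 x Km (Km = 0.5 mM).
substrate = [0.05, 0.1, 0.25, 0.5, 1.0, 2.0, 3.5, 5.0]
velocity = [2.0 * s / (0.5 + s) for s in substrate]
vmax_fit, km_fit = fit_michaelis_menten(substrate, velocity)
```

On real data, estimates from this linearization serve as reasonable starting values for a proper nonlinear fit.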

Visualizations

Title: Therapeutic Antibody Design & Validation Workflow

Title: Biosensor Mechanism: Analyte-Induced Signal Output

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Application |
|---|---|
| Yeast Surface Display System (pYD1 vector, EBY100 strain) | Platform for displaying antibody fragments on yeast for high-throughput FACS-based affinity screening. |
| Bio-Layer Interferometry (BLI) Instrument (e.g., Sartorius Octet) | Label-free technology for real-time measurement of protein-protein binding kinetics (KD, kon, koff). |
| Oligonucleotide Pool Library Synthesis | Enables cost-effective synthesis of thousands of designed DNA sequences for cloning variant libraries. |
| Fluorescent Dyes for Stability (e.g., SYPRO Orange) | Used in differential scanning fluorimetry (DSF) to measure protein thermal stability (Tm). |
| His-Tag Purification Kits (Ni-NTA resin) | Standardized method for rapid purification of engineered proteins expressed in E. coli. |
| Microfluidic Droplet Sorters | Allows ultra-high-throughput screening of enzyme or biosensor variants based on fluorescence activity. |

Navigating the Latent Space: Troubleshooting Model Pitfalls and Optimizing Predictions

Application Notes

The directed evolution of proteins in silico using transformer-based models is constrained by a fundamental bottleneck: the scarcity of high-quality, experimentally characterized sequences for novel or understudied protein families. This scarcity limits the model's ability to learn meaningful structure-function relationships, leading to poor predictive performance and unreliable variant generation.

Current strategies focus on leveraging transfer learning and data augmentation from evolutionarily related families, alongside the generation of high-quality synthetic data through ancestral sequence reconstruction or in silico mutagenesis. The integration of physics-based scoring functions and active learning loops that prioritize experimental validation is critical for breaking the scarcity deadlock. Success in this area accelerates the discovery of enzymes, therapeutics, and biosensors from non-canonical protein folds.

Table 1: Comparative Analysis of Data Augmentation Strategies for Novel Protein Families

| Strategy | Mechanism | Typical Data Increase | Key Limitation | Best For |
|---|---|---|---|---|
| Homologous Sequence Mining (e.g., HHblits) | Finds evolutionarily related sequences from databases. | 10x - 1000x | Limited by natural diversity; bias towards well-studied clades. | Families with some known homologs. |
| In Silico Saturation Mutagenesis | Computationally generates all single-point mutants from a seed sequence. | 19 × L sequences (L = sequence length) | Linear in L for single mutants but combinatorial for multi-site variants; the vast majority are non-functional. | Small, stable scaffolds (<200 aa). |
| Ancestral Sequence Reconstruction (ASR) | Infers probable ancestral sequences to expand diversity. | 10x - 50x | Computational complexity; uncertainty in reconstruction. | Families with deep, well-resolved phylogenies. |
| Generative Model Sampling (e.g., ProteinVAE) | Samples latent space of a generative model trained on broad datasets. | 100x - 10,000x | Risk of generating physically implausible sequences. | Scaffolds with known fold topology. |
| Structure-Based Threading & Design (e.g., Rosetta) | Generates sequences compatible with a target fold. | 100x - 1000x | Requires accurate 3D structure; high compute cost. | Novel folds with solved structures. |
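
To make the in silico saturation mutagenesis row concrete, the sketch below enumerates every single-point substitution of a seed sequence. A seed of length L yields 19 × L mutants, since each position can take any of the 19 other canonical amino acids. The function name `single_point_mutants` and the example seed are illustrative assumptions.

```python
from typing import Iterator, Tuple

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 canonical residues


def single_point_mutants(seed: str) -> Iterator[Tuple[str, str]]:
    """Yield (mutation_label, mutant_sequence) for every single substitution."""
    for pos, wild_type in enumerate(seed):
        for aa in AMINO_ACIDS:
            if aa == wild_type:
                continue  # skip the unchanged residue
            label = f"{wild_type}{pos + 1}{aa}"  # 1-based label, e.g. M1A
            yield label, seed[:pos] + aa + seed[pos + 1:]


if __name__ == "__main__":
    seed = "MKTAYIAK"  # hypothetical 8-residue seed
    library = list(single_point_mutants(seed))
    print(len(library), library[0])
```

Because the library size is only linear in L, the full set can be scored exhaustively with a protein language model; it is the extension to double and higher-order mutants that makes enumeration impractical.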

Experimental Protocols

Protocol 1: Iterative Active Learning for Low-Data Protein Families

Objective: To efficiently expand a functional sequence dataset for a novel protein family using a cycle of model prediction, prioritized experimental testing, and model retraining.

Materials:

- Seed Sequences: A small set (<50) of known functional sequences for the target family.

- Base Model: A pre-trained protein language model (e.g., ESM-2, ProtBERT).

- In Vitro Assay: A medium-throughput functional assay (e.g., enzymatic activity, binding via ELISA/SPR).

- Compute Infrastructure: GPU-enabled server for model fine-tuning and inference.

Procedure:

- Initial Model Fine-tuning: Fine-tune the base protein transformer model on the seed sequences using a masked language modeling objective.

- Sequence Generation & Scoring: a. Use the fine-tuned model to generate a large library (e.g., 10,000) of variant sequences via sampling or by scoring mutations in a wild-type background. b. Rank variants using a composite score combining model confidence (pseudo-likelihood) and predicted stability (from tools like FoldX or DeepDDG).

- Priority Selection: Select the top 96-384 candidates for experimental testing, with a bias towards sequences that are diverse in sequence space but high in predicted score.

- Experimental Validation: Express, purify, and assay the selected variants using the established in vitro assay.

- Data Curation & Retraining: Add the experimentally validated functional sequences (positives) to the training set. Optionally, add non-functional sequences (negatives) to a separate negative dataset. Retrain the model on the expanded dataset.

- Iteration: Repeat steps 2-5 for 3-5 cycles or until model performance plateaus.
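
The ranking and priority-selection steps can be sketched as below. The protocol does not specify how model confidence and predicted stability are combined, so the weighted difference used here, the `stability_weight` parameter, and the Hamming-distance diversity rule are all assumptions chosen for illustration; scores are assumed to be precomputed and sequences to share one length.

```python
from typing import Dict, List


def hamming(a: str, b: str) -> int:
    """Number of differing positions between two equal-length sequences."""
    if len(a) != len(b):
        raise ValueError("sequences must have equal length")
    return sum(x != y for x, y in zip(a, b))


def composite_score(
    pseudo_log_likelihood: float, predicted_ddg: float, stability_weight: float = 1.0
) -> float:
    """Higher is better: reward model likelihood, penalize predicted destabilization."""
    return pseudo_log_likelihood - stability_weight * predicted_ddg


def select_diverse_top(
    scores: Dict[str, float], n_select: int, min_distance: int = 2
) -> List[str]:
    """Greedily pick high-scoring sequences at least min_distance apart."""
    selected: List[str] = []
    for seq in sorted(scores, key=scores.get, reverse=True):
        if all(hamming(seq, chosen) >= min_distance for chosen in selected):
            selected.append(seq)
        if len(selected) == n_select:
            break
    return selected


if __name__ == "__main__":
    scores = {"AAAA": 3.0, "AAAC": 2.9, "CCAA": 2.0, "CCCC": 1.0}  # toy values
    print(select_diverse_top(scores, n_select=2, min_distance=2))
```

For a real campaign, `n_select` would be set to the plate capacity (96-384 in this protocol), and `min_distance` tuned so that each round samples several regions of sequence space rather than near-duplicates of the top hit.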

Protocol 2: Structure-Guided Synthetic Data Augmentation

Objective: To create a large, diverse, and physically plausible training dataset for a novel protein family using a known or predicted tertiary structure.

Materials:

- Template Structure: A high-resolution X-ray or cryo-EM structure of a representative family member, or a high-confidence AlphaFold2 prediction.

- Protein Design Suite: Rosetta3 or similar software package.

- Sequence Alignment: Multiple Sequence Alignment (MSA) of any available homologs.

Procedure:

- Structure Preparation: Clean the template structure (remove ligands, add hydrogens, optimize side-chains) using PDBFixer or the Rosetta relax protocol.

- Define Designable Regions: Based on the MSA and functional sites, specify which residues are allowed to mutate (e.g., solvent-exposed positions, binding pocket residues).

- In Silico Sequence Design: a. Run the Rosetta FastDesign or fixbb protocol to generate sequences that are energetically favorable for the target fold. b. Use different positional constraints (e.g., varying amino acid preferences at key sites) to generate diverse sequence families. Generate 5,000-50,000 unique sequences.

- Filtration & Quality Control: a. Filter sequences by Rosetta total energy score (< -1.0 REU per residue). b. Remove sequences with poor predicted stability metrics (e.g., high ΔΔG via DeepDDG). c. Cluster sequences at 70% identity to reduce redundancy.

- Validation & Integration: Select a small subset (50-100) of the generated sequences for experimental validation (as in Protocol 1). Integrate the successfully validated sequences as high-quality synthetic data into subsequent transformer model training pipelines.
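
The filtration and quality-control step can be sketched as a small post-processing script over precomputed scores. This is an illustration under stated assumptions: the `Design` record and `filter_and_cluster` function are hypothetical, designs are assumed to share one length (as fixed-backbone designs do), identity is computed position by position without alignment, and the ΔΔG cutoff is left as a required argument because the protocol does not give a number.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Design:
    sequence: str
    total_energy: float  # Rosetta total score in REU (assumed precomputed)
    ddg: float           # predicted ddG in kcal/mol (assumed precomputed)


def identity(a: str, b: str) -> float:
    """Fraction of identical positions between two equal-length sequences."""
    if len(a) != len(b):
        raise ValueError("sequences must have equal length")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def filter_and_cluster(
    designs: List[Design],
    max_ddg: float,
    max_energy_per_residue: float = -1.0,
    identity_cutoff: float = 0.70,
) -> List[Design]:
    """Apply energy and ddG filters, then keep one representative per cluster."""
    passed = [
        d for d in designs
        if d.total_energy / len(d.sequence) < max_energy_per_residue
        and d.ddg <= max_ddg
    ]
    # Visit the best-scoring designs first so they become cluster representatives.
    passed.sort(key=lambda d: d.total_energy / len(d.sequence))
    representatives: List[Design] = []
    for d in passed:
        if all(identity(d.sequence, r.sequence) < identity_cutoff for r in representatives):
            representatives.append(d)
    return representatives


if __name__ == "__main__":
    designs = [
        Design("AAAAAAAAAA", -25.0, 0.5),
        Design("AAAAAAAAAC", -20.0, 0.2),  # 90% identical to the first design
        Design("CCCCCCAAAA", -15.0, 1.0),
        Design("DDDDDDDDDD", -5.0, 0.0),   # fails the energy filter
    ]
    kept = filter_and_cluster(designs, max_ddg=2.0)  # 2.0 is an illustrative cutoff
    print([d.sequence for d in kept])
```

Greedy clustering of this kind is order-dependent and quadratic in the worst case; for libraries of 5,000-50,000 sequences a dedicated clustering tool would normally be used instead.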

Visualization

Diagram 1: Active Learning Cycle for Data Scarcity

Diagram 2: Synthetic Data Augmentation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Overcoming Data Scarcity

| Item | Function in Context | Example Product/Resource |
|---|---|---|
| Pre-trained Protein LM | Foundation model for transfer learning; provides general sequence representations learned from large protein databases. | ESM-2 (Meta AI), ProtBERT (ProtTrans); AlphaFold2 for structure prediction. |
| High-Throughput Cloning Kit | Enables rapid construction of expression vectors for hundreds of prioritized variants. | NEB Gibson Assembly Master Mix, Golden Gate Assembly kits. |
| Cell-Free Protein Synthesis System | Rapid expression of protein variants without cloning into cells; ideal for screening. | PURExpress (NEB), Expressway (Thermo Fisher). |
| Automated Liquid Handler | For setting up parallelized in vitro assays (PCR, purification, activity assays). | Beckman Coulter Biomek, Opentrons OT-2. |
| Biolayer Interferometry (BLI) System | Label-free, medium-throughput measurement of binding kinetics/affinity for engineered binders. | Sartorius Octet (formerly ForteBio). |
| Microplate Spectrophotometer/Fluorimeter | Essential for enzymatic activity or stability assays on many variants in parallel. | Tecan Spark, BMG Labtech CLARIOstar. |
| Cloud Compute Credits | Access to GPU/TPU resources for large-scale model training and sequence generation. | Google Cloud TPUs, AWS EC2 P3/P4 instances, Azure NDv4. |
| Protein Stability Prediction Tool | Computational filtration of generated sequences based on predicted ΔΔG. | DeepDDG web server, FoldX plugin for YASARA. |
| Ancestral Reconstruction Pipeline | Software to generate diverse, likely-functional ancestral sequences. | IQ-TREE, PAML, HyPhy, GRASP. |

Within the thesis on "Directed evolution in silico using protein transformers," a critical challenge is the generation of plausible but non-functional protein sequences, termed here 'nonsense' sequences. These are outputs of generative language models that have high syntactic likelihood (i.e., they resemble natural protein sequences in residue composition and local patterns) but no stable fold, no measurable activity, and no expressible structure. This Application Note details protocols to identify, mitigate, and filter such hallucinations, so that in silico directed evolution pipelines yield functionally promising candidates for wet-lab validation.

Quantitative Analysis of Hallucination Indicators

Recent studies have characterized metrics that correlate with non-functional model hallucinations. The following table summarizes key quantitative indicators and their thresholds for flagging potential nonsense sequences.

Table 1: Quantitative Metrics for Identifying Hallucinated/Non-Functional Sequences

| Metric | Description | Typical Range (Functional) | Flagging Threshold (Potential Nonsense) | Reference (Year) |
|---|---|---|---|---|
| Perplexity (Sequence) | Model's uncertainty in generating the sequence. Lower is more likely. | Varies by model & family. | Significant outlier (>2 std dev above family mean) | Brandes et al. (2023) |
| pLDDT (AlphaFold2) | Predicted local distance difference test. Confidence in structure. | >70 (Good) | Average < 50 | Tunyasuvunakool et al. (2021) |
| ΔΔG (FoldX/Rosetta) | Predicted change in folding free energy vs. wild-type. | Near 0 or negative (stabilizing) | > +10 kcal/mol (highly destabilizing) | Linsky et al. (2022) |
| Embedding Deviation | Cosine distance from cluster centroid in ESM-2 embedding space. | Low within-family deviation. | >90th percentile of training set distribution | Shanehsazzadeh et al. (2024) |
| Hydrophobic Patch Score | Abnormal aggregation of hydrophobic residues on surface. | < 0.5 | > 0.8 | Buel & Walters (2022) |
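
The embedding deviation metric can be sketched as follows: compute the centroid of the training-set embeddings, measure each candidate's cosine distance from it, and flag candidates beyond the 90th percentile of the training distribution. Embeddings are assumed to be precomputed (for example, mean-pooled ESM-2 vectors); the function names and the linear-interpolation percentile are illustrative choices, not part of any cited method.

```python
import math
from typing import List, Sequence


def centroid(vectors: Sequence[Sequence[float]]) -> List[float]:
    """Element-wise mean of a set of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def cosine_distance(u: Sequence[float], v: Sequence[float]) -> float:
    """1 - cosine similarity; raises on zero-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_u * norm_v)


def percentile(values: Sequence[float], q: float) -> float:
    """Percentile with linear interpolation between closest ranks."""
    ordered = sorted(values)
    rank = (len(ordered) - 1) * q / 100.0
    lo, hi = math.floor(rank), math.ceil(rank)
    return ordered[lo] + (ordered[hi] - ordered[lo]) * (rank - lo)


def flag_embedding_outliers(
    train_embeddings: Sequence[Sequence[float]],
    candidate_embeddings: Sequence[Sequence[float]],
    q: float = 90.0,
) -> List[bool]:
    """True for each candidate farther from the centroid than the q-th percentile."""
    center = centroid(train_embeddings)
    train_distances = [cosine_distance(e, center) for e in train_embeddings]
    cutoff = percentile(train_distances, q)
    return [cosine_distance(e, center) > cutoff for e in candidate_embeddings]


if __name__ == "__main__":
    train = [[1.0, 0.0], [1.0, 0.1], [1.0, -0.1], [0.9, 0.05]]  # toy 2-D embeddings
    candidates = [[1.0, 0.0], [0.0, 1.0]]
    print(flag_embedding_outliers(train, candidates))
```

A single centroid is a coarse summary; families with several functional subclusters may need per-cluster centroids, otherwise valid sequences from minor clusters would be flagged.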

Experimental Protocols for Hallucination Detection

Protocol 3.1: In Silico Filtration Pipeline for Generative Model Outputs

Objective: To filter a large set of model-generated protein sequences and flag those likely to be non-functional hallucinations prior to synthesis.

Materials: List of generated FASTA sequences, access to ESM-2/ESMFold or AlphaFold2, compute cluster.

Procedure:

- Input: A set of 10,000 model-generated variant sequences in FASTA format.

- Perplexity Filtering:

  - Pass each sequence back through the generative model (e.g., ProtGPT2, ProGen2) to calculate its mean token-wise perplexity.

  - Exclude sequences with perplexity >2 standard deviations above the mean calculated for a reference set of natural homologs.

- Structural Confidence Assessment:

  - Submit remaining sequences to ESMFold (batch mode) for rapid structure prediction.

  - Calculate the mean pLDDT score per sequence.

  - Discard all sequences with a mean pLDDT < 50.

- Stability Prediction:

  - For sequences passing Step 3, use FoldX (or Rosetta ddg_monomer) to calculate the ΔΔG of folding relative to a stable template structure.

  - Flag sequences with ΔΔG > +10 kcal/mol for manual inspection.

- Output: A refined list of sequences (typically 10-20% of initial set) prioritized for in vitro testing.
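
The decision logic of this pipeline can be sketched independently of the tools that produce the numbers. In the example below, perplexity, mean pLDDT, and ΔΔG are assumed to have been computed already by the generative model, ESMFold, and FoldX respectively; none of those tools is called here. The `Candidate` record and function names are hypothetical, and the sample standard deviation of the reference set is an assumed choice.

```python
import math
import statistics
from dataclasses import dataclass
from typing import List, Sequence, Tuple


def perplexity(token_log_probs: Sequence[float]) -> float:
    """Perplexity = exp(-mean per-token natural-log probability)."""
    return math.exp(-sum(token_log_probs) / len(token_log_probs))


@dataclass
class Candidate:
    name: str
    perplexity: float
    mean_plddt: float
    ddg: float  # kcal/mol relative to the template


def filter_candidates(
    candidates: Sequence[Candidate],
    reference_perplexities: Sequence[float],
    plddt_min: float = 50.0,
    ddg_flag: float = 10.0,
) -> Tuple[List[str], List[str]]:
    """Return (kept, flagged_for_inspection) candidate names."""
    mu = statistics.mean(reference_perplexities)
    sigma = statistics.stdev(reference_perplexities)  # sample std dev (assumption)
    cutoff = mu + 2.0 * sigma
    kept: List[str] = []
    flagged: List[str] = []
    for c in candidates:
        if c.perplexity > cutoff:
            continue  # perplexity outlier relative to natural homologs
        if c.mean_plddt < plddt_min:
            continue  # low structural confidence
        if c.ddg > ddg_flag:
            flagged.append(c.name)  # highly destabilizing: manual inspection
        else:
            kept.append(c.name)
    return kept, flagged


if __name__ == "__main__":
    reference = [8.0, 10.0, 12.0]  # toy perplexities of natural homologs
    pool = [
        Candidate("a", 9.0, 85.0, 1.0),
        Candidate("b", 20.0, 90.0, 0.0),
        Candidate("c", 11.0, 40.0, 0.0),
        Candidate("d", 12.0, 75.0, 15.0),
    ]
    print(filter_candidates(pool, reference))
```

Ordering the filters from cheapest to most expensive, as the protocol does, means structure prediction and stability calculations only run on sequences that survive the perplexity check.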

Protocol 3.2: Wet-Lab Validation of Expressibility and Solubility