Accelerating Drug Discovery: How CAPE Cloud Platform is Transforming Protein Engineering

Abstract

This article explores the CAPE (Compute-Aided Protein Engineering) cloud computing platform, a powerful solution for researchers, scientists, and drug development professionals. It covers foundational concepts, including cloud architecture and core algorithms like AlphaFold integration and molecular dynamics. We detail practical methodologies for running virtual mutagenesis and affinity maturation campaigns. The guide provides troubleshooting strategies for common computational bottlenecks and result interpretation. Finally, it validates CAPE's performance against traditional HPC clusters and other platforms like RosettaCloud, analyzing its cost-efficiency, speed, and impact on accelerating therapeutic protein development from lead identification to optimization.

What is CAPE? Demystifying the Cloud Platform for Next-Gen Protein Design

CAPE is a cloud-native platform designed to democratize and accelerate the rational design of proteins. Its core thesis is that integrating high-performance computing (HPC), specialized machine learning (ML) models, and an intuitive collaborative interface removes traditional bottlenecks in protein engineering workflows. By abstracting away infrastructure complexity, CAPE lets researchers focus on biological design rather than computational logistics, shortening the path from hypothesis to validated protein construct for therapeutics, enzymes, and materials.

Core Technical Pillars and Quantitative Benchmarks

CAPE's architecture is built on interconnected modules, each contributing to measurable performance gains.

Table 1: Quantitative Performance Benchmarks of CAPE Core Modules

| CAPE Module | Key Metric | Benchmark Performance | Traditional Workflow Equivalent |
|---|---|---|---|
| RosettaCloud Integration | ΔΔG calculation time (per 1,000 variants) | ~45 minutes | 24-72 hours (local cluster) |
| AlphaFold2 Ensemble | Prediction speed (avg. 400-residue protein) | ~3.2 minutes | ~30 minutes (local GPU) |
| EquiBind Docking Suite | Ligand pose prediction time | < 10 seconds | 2-5 minutes (standard tool) |
| Cumulative Workflow | End-to-end design cycle (in silico) | 4-6 hours | 5-10 business days |

Foundational Experimental Protocols Enabled by CAPE

Protocol: High-Throughput Virtual Saturation Mutagenesis

Objective: Systematically evaluate the stability and binding affinity of all possible single-point mutants in a protein region of interest. CAPE Workflow:

- Input: Upload wild-type PDB structure or generate with integrated AlphaFold2.

- Region Definition: Select residue range (e.g., binding pocket 32-58).

- Pipeline Configuration:

  - Stability Prediction: Launch the RosettaDDGPrediction protocol across all 19 possible mutations per position.

  - Folding Validation: Run AlphaFold2 in parallel for each mutant to assess fold preservation.

  - Affinity Analysis: If a ligand is defined, initiate high-throughput docking via EquiBind.

- Output Consolidation: Results are aggregated into a heatmap table (ΔΔG, pLDDT, docking score) for variant prioritization.
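The enumeration step that feeds this pipeline can be sketched in a few lines. This is a minimal illustration under stated assumptions, not CAPE's implementation: the function name `enumerate_point_mutants`, the 1-based residue numbering, and the toy 60-residue sequence are all hypothetical.

```python
from typing import List

# The 20 canonical amino acids, one-letter codes.
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def enumerate_point_mutants(sequence: str, start: int, end: int) -> List[str]:
    """List every single-point mutant for residues start..end (1-based, inclusive).

    Labels use the common 'V32A' style: wild-type residue, position, new residue.
    (enumerate_point_mutants is a hypothetical helper, not a CAPE API.)
    """
    if not 1 <= start <= end <= len(sequence):
        raise ValueError("region must lie within the sequence")
    mutants = []
    for pos in range(start, end + 1):
        wild_type = sequence[pos - 1]
        for aa in AMINO_ACIDS:
            if aa != wild_type:  # 19 substitutions per canonical position
                mutants.append(f"{wild_type}{pos}{aa}")
    return mutants


# Hypothetical sequence; the 32-58 region mirrors the example above.
seq = "MKTAYIAKQRQISFVKSHFSRQLEERLGLIEVQAPILSRVGDGTQDNLSGAEKAVQVKVK"
mutants = enumerate_point_mutants(seq, 32, 58)
print(len(mutants))  # 27 positions x 19 substitutions = 513
print(mutants[0])    # V32A
```

Each label is an independent job for the stability, folding, and docking stages, which is what makes saturation mutagenesis easy to parallelize across cloud nodes.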

Protocol: De Novo Enzyme Active Site Design

Objective: Design a novel protein scaffold accommodating a specified transition state analog. CAPE Workflow:

- Motif Scaffolding: Use RFdiffusion (integrated) to generate backbone scaffolds around a user-defined catalytic triad (e.g., Ser-His-Asp) geometry.

- Sequence Design: Employ ProteinMPNN on the top 100 scaffolds to generate stable, foldable sequences.

- Filtration & Ranking:

  - Filter sequences by AlphaFold2 predicted confidence (pLDDT > 80).

  - Rank the remaining sequences by Rosetta energy score.

  - Perform molecular dynamics (MD) simulation via the integrated OpenMM engine for stability assessment (short, 10 ns simulations).

- Final Selection: Top 5 constructs proceed to in vitro testing.
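The filter-then-rank part of this funnel can be sketched as follows. This is an assumption-laden illustration: the `Design` record, the `select_designs` helper, and all metric values are hypothetical, and the short MD stability check is not modeled.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Design:
    """One designed sequence with its (hypothetical) computed metrics."""
    name: str
    plddt: float          # mean AlphaFold2 pLDDT, 0-100
    rosetta_score: float  # Rosetta total score; lower (more negative) is better


def select_designs(designs: List[Design], plddt_cutoff: float = 80.0,
                   n_final: int = 5) -> List[Design]:
    """Keep designs with pLDDT above the cutoff, then rank by Rosetta score."""
    confident = [d for d in designs if d.plddt > plddt_cutoff]
    ranked = sorted(confident, key=lambda d: d.rosetta_score)
    # In the protocol, short MD runs would re-check these before final selection.
    return ranked[:n_final]


pool = [
    Design("d1", 91.2, -310.5),
    Design("d2", 76.0, -350.0),  # best energy, but dropped: pLDDT below 80
    Design("d3", 85.4, -295.1),
    Design("d4", 88.9, -322.7),
]
print([d.name for d in select_designs(pool, n_final=2)])  # ['d4', 'd1']
```

Filtering on confidence before ranking on energy keeps a design with an attractive score but an unreliable predicted structure (d2 here) from reaching the wet lab.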

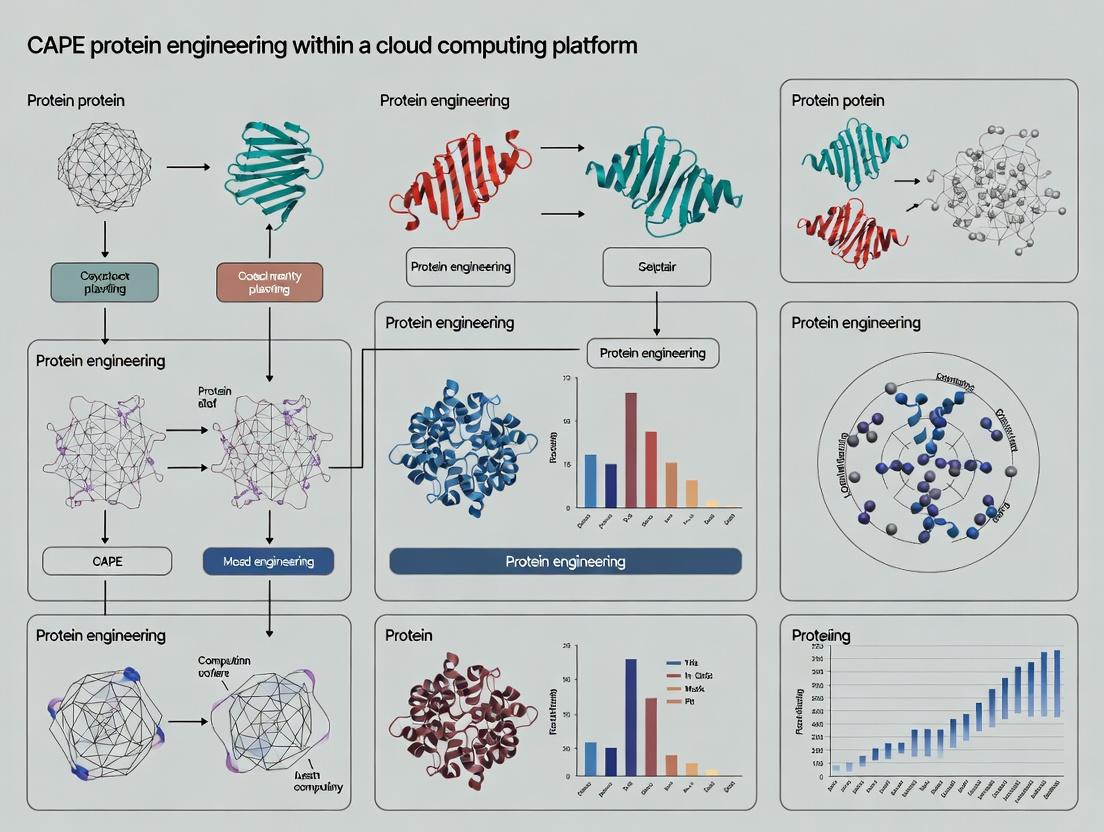

Visualizing the CAPE Integrated Workflow

Diagram Title: CAPE Platform Integrated Protein Engineering Workflow

Diagram Title: In Silico Affinity Maturation Pipeline on CAPE

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents & Computational Tools for CAPE-Designed Protein Validation

| Reagent/Tool | Function in Validation | Typical Post-CAPE Use Case |
|---|---|---|
| Expi293F (HEK293) or ExpiCHO Expression System | High-yield transient protein production for eukaryotic proteins (e.g., antibodies, enzymes). | Express top 5-10 CAPE-designed antibody variants for binding assays. |
| Ni-NTA or HisTrap HP Columns | Affinity purification of His-tagged designed proteins. | Initial purification of novel enzyme constructs prior to kinetic analysis. |
| Surface Plasmon Resonance (Biacore 8K) | Label-free kinetic analysis (KD, kon, koff) of protein-ligand or protein-protein interactions. | Quantify binding affinity improvements of designed receptor mutants. |
| Size-Exclusion Chromatography (SEC) | Assess aggregation state and monodispersity of purified protein. | Confirm designed protein folds as a monomer/complex as predicted. |
| Circular Dichroism (CD) Spectrometer | Determine secondary structure composition and thermal stability (Tm). | Validate that de novo designed alpha-helical bundle matches computational predictions. |
| Kinetic Assay Kits (e.g., EnzChek) | Measure enzymatic activity (turnover number, Michaelis constant). | Characterize the catalytic efficiency of a designed enzyme variant. |

Vision: The Accessible Future of Protein Engineering

CAPE's vision extends beyond a toolkit to become a collaborative, living platform. Future development is focused on:

- Automated Continuous Learning: Integrating user-generated experimental results (e.g., binding affinities, expression yields) back into ML models to improve predictive accuracy.

- Federated Learning Schemes: Allowing organizations to improve shared models without exposing proprietary data.

- Low-Code Experiment Design: Enabling biologists to construct complex computational experiments via graphical workflows, further lowering the barrier to entry.

By consolidating disparate tools into a unified, scalable, and user-centric cloud environment, CAPE aims to fundamentally shift the paradigm of protein engineering from a specialized, resource-intensive task to an accessible, iterative, and data-driven science.

Within the cutting-edge field of computational protein engineering, the CAPE research platform represents a paradigm shift. This platform leverages a sophisticated, multi-layered cloud architecture to accelerate the discovery and optimization of therapeutic proteins. This technical guide deconstructs the platform's three core cloud service models, Infrastructure as a Service (IaaS), Platform as a Service (PaaS), and Software as a Service (SaaS), and details how each tier supports specific, computationally intensive workflows in biophysical simulation, molecular dynamics, and machine learning-driven protein design.

Cloud Service Model Architecture

The CAPE platform employs a hybrid service model, strategically distributing computational workloads across IaaS, PaaS, and SaaS layers to balance control, scalability, and development agility for research teams.

Quantitative Comparison of Service Layers

Table 1: Comparison of Cloud Service Models in the CAPE Research Platform

| Component | IaaS Layer | PaaS Layer | SaaS Layer |
|---|---|---|---|
| Primary Control | Researcher/Admin | Platform DevOps | CAPE Platform |
| User Responsibilities | OS, Security Patches | User Roles, Access Policies | Project-Level Permissions |
| Scalability | Manual/Auto-scaling VM Groups | Auto-scaling Containers & Services | Fully Managed, Transparent |
| Typical Provisioning Time | Minutes to Hours | Seconds to Minutes (Containers) | Immediate (Web Access) |
| Key CAPE Use Case | Raw MD Simulation Clusters, Bulk Data Lakes | ML Training Pipelines, Batch Docking Jobs | Interactive Design, Analysis, Reporting |

IaaS: The Computational Foundation

The IaaS layer provides the raw, high-performance compute and storage necessary for large-scale simulations. A core experiment enabled by this layer is High-Throughput Molecular Dynamics (HT-MD) for Protein Stability Screening.

Experimental Protocol: HT-MD for Mutant Stability

Objective: To computationally assess the thermodynamic stability of thousands of protein variants by simulating their dynamics in explicit solvent.

- Structure Preparation: Input wild-type and mutant PDB files are parameterized using a force field (e.g., AMBER ff19SB).

- System Setup: Each structure is solvated in a TIP3P water box with neutralizing ions using tleap (AmberTools).

- Energy Minimization: Two-stage minimization (steepest descent, then conjugate gradient) to remove steric clashes.

- Equilibration: Gradual heating to 310 K under the NVT ensemble (50 ps), followed by pressure equilibration under the NPT ensemble (100 ps).

- Production MD: Unrestrained simulation for 100-200ns per variant, executed in parallel on GPU-accelerated IaaS instances (e.g., AWS p4d/Google Cloud a2).

- Analysis: Trajectories are analyzed for root mean square deviation (RMSD) and radius of gyration (Rg), and free energies (ΔG) are estimated with MM/PBSA or MM/GBSA on the PaaS layer.
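The two structural metrics in the analysis step can be sketched with NumPy alone. This is a minimal illustration, assuming matched (N, 3) coordinate arrays per frame; the function names are hypothetical. In practice a library such as MDTraj (listed in the toolkit below) provides `mdtraj.rmsd` and `mdtraj.compute_rg` for whole trajectories, and the MM/PBSA step is not covered here.

```python
import numpy as np


def kabsch_rmsd(P: np.ndarray, Q: np.ndarray) -> float:
    """RMSD between matched (N, 3) coordinate sets after optimal superposition."""
    P = P - P.mean(axis=0)  # remove translation
    Q = Q - Q.mean(axis=0)
    H = P.T @ Q  # 3x3 cross-covariance matrix
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))  # guard against reflections
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T  # rotation taking P onto Q
    diff = P @ R.T - Q
    return float(np.sqrt((diff ** 2).sum() / len(P)))


def radius_of_gyration(X: np.ndarray, masses=None) -> float:
    """Mass-weighted radius of gyration (unweighted if masses is None)."""
    w = np.ones(len(X)) if masses is None else np.asarray(masses, dtype=float)
    center = (w[:, None] * X).sum(axis=0) / w.sum()
    sq_dist = ((X - center) ** 2).sum(axis=1)
    return float(np.sqrt((w * sq_dist).sum() / w.sum()))


# A rigidly rotated and translated copy should give an RMSD of essentially zero.
rng = np.random.default_rng(0)
ref = rng.normal(size=(50, 3))
theta = 0.7
rot = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                [np.sin(theta), np.cos(theta), 0.0],
                [0.0, 0.0, 1.0]])
moved = ref @ rot.T + np.array([1.0, -2.0, 3.0])
print(kabsch_rmsd(ref, moved))  # effectively zero (rigid-body copy)
print(radius_of_gyration(ref))
```

Superposing before computing RMSD matters: without it, overall tumbling of the protein in the box would be reported as structural change.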

PaaS: The Orchestrated Workflow Engine

The PaaS layer abstracts IaaS complexity, providing containerized, reproducible environments for data pipelines. A key workflow is the Machine Learning-Based Protein Fitness Prediction.
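The section does not describe which fitness model CAPE uses, so the sketch below substitutes a deliberately simple baseline: one-hot sequence encoding plus closed-form ridge regression. All names, sequences, and fitness values are hypothetical; it only shows the shape of a sequence-to-fitness workflow that a containerized pipeline would run.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
AA_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}


def one_hot(seqs):
    """Encode equal-length (aligned) sequences as flat L*20 one-hot vectors."""
    length = len(seqs[0])
    X = np.zeros((len(seqs), length * 20))
    for n, s in enumerate(seqs):
        if len(s) != length:
            raise ValueError("sequences must have equal length")
        for i, aa in enumerate(s):
            X[n, i * 20 + AA_INDEX[aa]] = 1.0
    return X


def fit_ridge(X, y, alpha=1.0):
    """Closed-form ridge regression; the intercept is left unpenalized."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean
    w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]), Xc.T @ yc)
    return w, y_mean - x_mean @ w


def predict(seqs, w, b):
    return one_hot(seqs) @ w + b


# Hypothetical measurements with purely additive mutation effects.
train = ["AAA", "AAG", "GAA", "GAG", "AKA", "AKG"]
fitness = np.array([0.0, 0.5, 1.0, 1.5, -0.3, 0.2])
w, b = fit_ridge(one_hot(train), fitness, alpha=1e-3)
print(predict(["GKG"], w, b))  # close to 1.2 = 1.0 + (-0.3) + 0.5
```

Because the toy effects are additive, the model generalizes to the unseen combination GKG; real fitness landscapes contain epistasis, which is why richer models (e.g., on protein language model embeddings) are usually preferred.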

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Computational Reagents for CAPE Workflows

| Item | Function in CAPE Platform |
|---|---|
| Container Images (Docker) | Reproducible, self-contained environments for Rosetta, GROMACS, PyTorch. |
| Kubernetes Helm Charts | Define, install, and upgrade complex application stacks on the PaaS layer. |
| Workflow Manager (Apache Airflow DAGs) | Orchestrates multi-step analysis pipelines (e.g., MD pre-proc → simulation → analysis). |
| Specialized Python Libraries (BioPython, MDTraj) | Provide essential functions for sequence manipulation, structural analysis, and trajectory parsing. |
| Persistent Volume Claims (PVCs) | Dynamically provisioned high-I/O storage for intermediate simulation data. |

SaaS: The Integrated Research Environment

The SaaS layer delivers the CAPE web application, integrating all underlying services into a cohesive interface for design, visualization, and collaboration. It directly hosts applications like Interactive Free Energy Perturbation (FEP) Analysis.

Experimental Protocol: SaaS-Guided FEP Analysis

Objective: To calculate relative binding free energies (ΔΔG) for a ligand series against a target protein.

- Project Setup: User uploads a protein-ligand complex PDB via the SaaS interface to initiate a project.

- Automated Setup: The SaaS backend calls a PaaS service that uses a tool such as pmx to generate hybrid topology and coordinate files for the alchemical transformation.

- Job Dispatch: The SaaS platform submits the FEP simulation job to a configured queue, which is executed on IaaS GPU resources.

- Real-Time Monitoring: Users monitor job progress, live plots of λ-window energy distributions, and convergence metrics via the SaaS dashboard.

- Integrated Analysis: Upon completion, results are processed and visualized within the interface, showing ΔΔG values, decomposition plots, and structural insights.
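How per-window results turn into a relative binding ΔΔG can be sketched as below. The text does not say which estimator CAPE applies; this sketch assumes thermodynamic integration (TI) with the trapezoidal rule, whereas BAR or MBAR are common alternatives. Function names and the per-window ⟨dU/dλ⟩ values are hypothetical.

```python
import numpy as np


def ti_free_energy(lambdas, dudl_means):
    """Thermodynamic integration: trapezoidal integral of <dU/dlambda> over lambda."""
    lam = np.asarray(lambdas, dtype=float)
    g = np.asarray(dudl_means, dtype=float)
    if lam.shape != g.shape or np.any(np.diff(lam) <= 0):
        raise ValueError("need strictly increasing lambdas, one <dU/dlambda> per window")
    return float(np.sum(0.5 * (g[1:] + g[:-1]) * np.diff(lam)))


def relative_binding_ddg(dg_complex: float, dg_solvent: float) -> float:
    """Thermodynamic cycle: ddG_bind(A->B) = dG(A->B in complex) - dG(A->B in solvent)."""
    return dg_complex - dg_solvent


# Hypothetical per-window averages (kcal/mol) for 11 evenly spaced lambda windows.
lambdas = np.linspace(0.0, 1.0, 11)
dudl_complex = 4.0 - 10.0 * lambdas
dudl_solvent = 3.0 - 7.0 * lambdas
ddg = relative_binding_ddg(ti_free_energy(lambdas, dudl_complex),
                           ti_free_energy(lambdas, dudl_solvent))
print(round(ddg, 3))  # -0.5: ligand B predicted to bind more tightly than A
```

The alchemical A→B transformation is run twice, once bound and once free in solvent; only the difference between the two legs is physically meaningful for relative binding.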

The CAPE research platform's efficacy in protein engineering is intrinsically linked to its deliberate cloud architecture. The IaaS layer delivers brute-force computational power, the PaaS layer ensures scalable and reproducible scientific workflows, and the SaaS layer provides an accessible, collaborative research environment. This integrated model enables researchers to move seamlessly from hypothesis to large-scale simulation to analyzed result, dramatically accelerating the cycle of therapeutic protein design and optimization.

Within the CAPE protein engineering cloud computing platform, integrating diverse computational engines is paramount for accelerating the design and analysis of novel proteins. This whitepaper provides an in-depth technical guide to four pivotal algorithms—AlphaFold, ESMFold, GROMACS, and Rosetta—framed within CAPE's mission to provide a unified, scalable research environment for drug discovery and protein science.

AlphaFold (DeepMind)

A deep learning system that predicts protein 3D structure from its amino acid sequence, often with near-atomic accuracy. Its Evoformer architecture leverages multiple sequence alignments (MSAs) and a self-attention mechanism to model physical and evolutionary constraints.

ESMFold (Meta AI)

A transformer-based protein language model that predicts structure end-to-end from a single sequence, bypassing the need for MSAs. Built upon the ESM-2 language model, it enables rapid inference suitable for high-throughput screening within CAPE.

GROMACS

A high-performance molecular dynamics (MD) package optimized for integrating Newton's equations of motion for systems with hundreds to millions of particles. It is essential for studying protein dynamics, folding, and ligand interactions.

Rosetta (RosettaCommons)

A comprehensive software suite for de novo protein design, structure prediction, and docking. Its energy functions and sampling algorithms enable the computational design of novel protein structures and functions.

Quantitative Performance Comparison

Table 1: Key Algorithm Performance Metrics (Representative Data)

| Algorithm | Primary Task | Typical Speed (CAPE Implementation) | Key Accuracy Metric | Optimal Use Case in CAPE |
|---|---|---|---|---|
| AlphaFold2 | Structure Prediction | Minutes to hours per target | GDT_TS ~85-90 (CASP14) | High-accuracy static structures with templates |
| ESMFold | Structure Prediction | Seconds to minutes per target | GDT_TS ~60-80 (on test sets) | Ultra-high-throughput fold screening |
| GROMACS | Molecular Dynamics | ns/day dependent on system size & HW | RMSD, RMSE from experimental data | Dynamics, stability, binding free energy |
| Rosetta | Design & Docking | Hours to days per design cycle | Designability score, ddG (ΔΔG) | De novo design, functional optimization |

Detailed Methodologies & CAPE Integration

Experimental Protocol: High-Throughput Variant Stability Screening on CAPE

This protocol leverages CAPE's orchestration to chain algorithms.

- Input Sequence Generation: Generate thousands of variant sequences from a wild-type scaffold using CAPE's design interface.

- Rapid Fold Assessment: Process all variants through ESMFold for initial structure prediction (seconds each). Filter out variants predicted with low confidence (pLDDT < 70).

- Refined Structure Prediction: Pass filtered subset (~100s) to AlphaFold for high-accuracy structure prediction.

- Energy Minimization & Relaxation: Use Rosetta FastRelax to refine AlphaFold outputs, removing steric clashes and optimizing side-chain packing.

- Molecular Dynamics Equilibration: For top candidates (~10s), run short (10-100 ns) GROMACS simulations in explicit solvent to assess stability (backbone RMSD, fluctuations).

- Analysis & Ranking: CAPE aggregates metrics (pLDDT, predicted RMSD, Rosetta energy, MD RMSD) into a unified dashboard for researcher decision-making.
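The text does not specify how CAPE combines these metrics on its dashboard. One simple, scale-free option is rank averaging, sketched below; the metric names, the direction flags, and all values are hypothetical, and the "predicted RMSD" metric is omitted for brevity.

```python
from typing import Dict, List


def rank_aggregate(variants: Dict[str, Dict[str, float]],
                   higher_is_better: Dict[str, bool]) -> List[str]:
    """Order variants by mean rank across metrics (rank 1 = best in a metric).

    Ties within a metric keep input order, because Python's sort is stable.
    """
    names = list(variants)
    mean_rank = {name: 0.0 for name in names}
    for metric, higher in higher_is_better.items():
        ordered = sorted(names, key=lambda n: variants[n][metric], reverse=higher)
        for rank, name in enumerate(ordered, start=1):
            mean_rank[name] += rank / len(higher_is_better)
    return sorted(names, key=lambda n: mean_rank[n])


# Hypothetical metrics for three variants that survived the earlier filters.
metrics = {
    "v1": {"plddt": 88.0, "rosetta": -300.0, "md_rmsd": 1.8},
    "v2": {"plddt": 92.0, "rosetta": -280.0, "md_rmsd": 1.2},
    "v3": {"plddt": 85.0, "rosetta": -320.0, "md_rmsd": 2.5},
}
direction = {"plddt": True, "rosetta": False, "md_rmsd": False}
print(rank_aggregate(metrics, direction))  # ['v2', 'v1', 'v3']
```

Ranks sidestep the fact that pLDDT, Rosetta energy units, and ångströms live on unrelated scales; the trade-off is that ranks discard how large the gaps between variants are.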

Diagram: CAPE Workflow for Protein Variant Screening

Experimental Protocol: De Novo Protein Design with Rosetta & MD Validation

- Motif Specification: Define desired structural motifs (helices, sheets) and functional sites in CAPE.

- Rosetta Parametric Design: Run RosettaScripts (helix_from_sequence, ParametricDesign) to generate backbone blueprints.

- Sequence Design: Use FastDesign to pack optimal amino acids onto the backbone, optimizing the Rosetta energy function.

- In Silico Folding Validation: Subject designed sequences to ESMFold/AlphaFold to check whether they fold into the intended structure (a refolding, or self-consistency, check).

- Stability Simulation: Run extended (µs-scale) GROMACS simulations on selected designs to assess folding stability and dynamics under near-physiological conditions.

- Experimental Shipment: CAPE platform formats final designs and associated data for DNA synthesis and wet-lab validation.
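The refolding check compares each design with its re-predicted structure. One common similarity measure is TM-score; the sketch below evaluates it for residue-matched Cα coordinates under a fixed, already-applied superposition, which is an assumption: dedicated tools such as TM-align additionally optimize the superposition. The function name and coordinates are hypothetical.

```python
import numpy as np


def tm_score_fixed(model: np.ndarray, target: np.ndarray) -> float:
    """TM-score for residue-matched C-alpha coordinates under a FIXED superposition.

    Uses the standard d0 = 1.24 * (L - 15)^(1/3) - 1.8, floored at 0.5 here so
    short chains stay well defined. No superposition search is performed.
    """
    if model.shape != target.shape:
        raise ValueError("model and target must be matched residue-for-residue")
    length = len(target)
    d0 = max(1.24 * np.cbrt(max(length - 15, 0)) - 1.8, 0.5)
    dist = np.linalg.norm(model - target, axis=1)
    return float(np.sum(1.0 / (1.0 + (dist / d0) ** 2)) / length)


# Hypothetical 100-residue design and its re-predicted structure (already superposed).
rng = np.random.default_rng(7)
designed = rng.normal(scale=10.0, size=(100, 3))
predicted = designed + rng.normal(scale=1.0, size=(100, 3))
print(tm_score_fixed(designed, predicted))  # values above ~0.5 usually mean the same fold
```

Unlike RMSD, TM-score weights small deviations heavily and saturates for large ones, so a single misplaced loop does not dominate the comparison.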

Diagram: De Novo Design & Validation Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational "Reagents" on CAPE

| Item (Software/Module) | Function in CAPE Workflow | Typical Use Case |
|---|---|---|
| HH-suite | Generates Multiple Sequence Alignments (MSAs) for AlphaFold. | Input preprocessing for template-based structure prediction. |
| OpenMM | GPU-accelerated MD engine alternative to GROMACS. | Rapid prototyping of MD simulations on CAPE GPU nodes. |
| PyRosetta | Python interface to Rosetta. | Scripting custom protein design protocols within CAPE Jupyter notebooks. |
| ColabFold | Integrated AlphaFold2/ESMFold with accelerated MSA. | User-friendly batch structure prediction via CAPE's task wrapper. |
| Pliant | (CAPE Native Tool) Manages workflow orchestration between different engines. | Chaining ESMFold → Rosetta → GROMACS in a single automated pipeline. |
| VMD/ChimeraX | Molecular visualization. | Analyzing and visualizing predicted structures and MD trajectories in CAPE's web viewer. |
| AMBER/CHARMM Force Fields | Parameter sets for MD. | Defining atomistic interactions for accurate GROMACS/OpenMM simulations. |

The integration of AlphaFold, ESMFold, GROMACS, and Rosetta within the CAPE platform creates a synergistic computational engine far greater than the sum of its parts. This unified environment enables researchers to move seamlessly from de novo design and high-throughput screening to detailed dynamic validation, dramatically compressing the protein engineering cycle and accelerating therapeutic development.

Within the broader thesis on the CAPE cloud platform, this document explores its specific alignment with the needs of biopharma and academic research. The convergence of high-performance computing (HPC), machine learning (ML), and scalable data management in CAPE directly addresses critical bottlenecks in modern protein engineering and drug discovery workflows.

Core Technical Challenges Addressed by CAPE

Current research faces significant hurdles in computational resource access, collaboration, and reproducibility. CAPE's architecture is engineered to resolve these.

Quantitative Analysis of Research Bottlenecks

The table below summarizes common challenges and CAPE's targeted solutions.

| Research Bottleneck | Impact on Productivity | CAPE's Solution |
|---|---|---|
| Local HPC Queue Times | Delays of 24-72 hours for MD simulation jobs. | On-demand, scalable cloud clusters with near-instant job initiation. |
| Software & Dependency Management | ~30% of researcher time spent installing/configuring tools (e.g., Rosetta, GROMACS, PyMOL). | Pre-configured, containerized software environments accessible via web interface. |
| Data Silos & Collaboration | Version conflicts and data sharing delays between computational and experimental teams. | Centralized, version-controlled data repository with fine-grained access controls. |
| High-Performance ML Model Training | Prohibitive cost and expertise required for training large protein language models. | Access to pre-trained models (e.g., AlphaFold2, ESMFold) and GPU clusters for fine-tuning. |
| Reproducibility | <20% of computational studies are fully reproducible due to environment drift. | Snapshotting of complete computational environments (code, data, software). |

Detailed Experimental Protocols Enabled by CAPE

Protocol: High-Throughput Virtual Affinity Maturation

Objective: Identify antibody variant sequences with improved binding affinity for a target antigen.

- Starting Structure: Load a PDB file of the antibody-antigen complex into the CAPE workspace.

- RosettaScan Setup: Using the CAPE Rosetta module, define the residues for mutation (typically CDR regions). Specify the amino acid alphabet for scanning (e.g., 20 standard AAs).

- Distributed Computing: CAPE automatically parallelizes the generation and energy minimization of thousands of mutant structures across its cloud compute nodes.

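- Binding Energy Calculation: For each mutant, the platform computes the change in binding free energy (ΔΔG) with a Rosetta interface protocol such as Flex ddG (listed in the toolkit below); the ddg_monomer application estimates changes in folding stability rather than binding.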

- ML-Driven Filtering: Results are fed into an integrated graph neural network (GNN) model trained on experimental binding data to prioritize variants with high predicted ΔΔG improvement and favorable developability profiles.

- Output: A ranked table of candidate variants with structural visualization for downstream experimental validation.
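The quantity being ranked can be made concrete with a short sketch. It assumes the usual interface-energy definition (bound complex minus the separated partners) and a single scored model per variant; production protocols such as Flex ddG average over an ensemble of sampled models. The class, helpers, mutation labels, and scores are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class InterfaceEnergies:
    """Scores for one (hypothetical) modeled structure, e.g. in Rosetta Energy Units."""
    complex_total: float   # bound antibody-antigen complex
    antibody_alone: float  # antibody after separating the partners
    antigen_alone: float   # antigen after separating the partners

    @property
    def dg_bind(self) -> float:
        return self.complex_total - self.antibody_alone - self.antigen_alone


def binding_ddg(wild_type: InterfaceEnergies, mutant: InterfaceEnergies) -> float:
    """ddG_bind = dG_bind(mutant) - dG_bind(wild type); negative means tighter binding."""
    return mutant.dg_bind - wild_type.dg_bind


def rank_mutants(wild_type: InterfaceEnergies,
                 mutants: Dict[str, InterfaceEnergies]) -> List[str]:
    """Most favorable (most negative ddG_bind) first."""
    return sorted(mutants, key=lambda m: binding_ddg(wild_type, mutants[m]))


wt = InterfaceEnergies(-1000.0, -600.0, -380.0)  # dG_bind = -20.0
candidates = {
    "S52Y": InterfaceEnergies(-1004.0, -601.0, -380.0),  # dG_bind = -23.0
    "T57A": InterfaceEnergies(-998.5, -599.0, -380.0),   # dG_bind = -19.5
}
for name in rank_mutants(wt, candidates):
    print(name, binding_ddg(wt, candidates[name]))  # S52Y -3.0, then T57A 0.5
```

Subtracting the separated-partner energies cancels contributions that do not involve the interface, which is what distinguishes a binding ΔΔG from a stability ΔΔG.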

Protocol: Multi-Timescale Molecular Dynamics for Conformational Dynamics

Objective: Characterize the conformational landscape and allosteric mechanisms of a protein target.

- System Preparation: Use the CAPE Prepare tool to solvate the protein in a TIP3P water box, add ions for physiological concentration, and neutralize the system.

- Equilibration: Run a standardized two-step equilibration using the integrated GROMACS engine:

  - NVT ensemble for 100 ps to stabilize temperature.

  - NPT ensemble for 100 ps to stabilize pressure.

- Production Simulation: Launch multiple, independent replica simulations (e.g., 10 x 1 µs) using CAPE's automated job distribution across GPU instances.

- On-the-Fly Analysis: CAPE tools perform continuous trajectory analysis for root-mean-square deviation (RMSD), fluctuation (RMSF), and principal component analysis (PCA).

- Free Energy Calculation: Use the integrated PLUMED plugin for enhanced sampling or calculate binding free energies using MMPBSA/MMGBSA methods on selected trajectory frames.

- Visualization: Render molecular movies and interactive 3D plots of the PCA subspace directly in the CAPE visualization portal.
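The RMSF and PCA analyses named above can be sketched with NumPy, assuming frames have already been superposed onto a reference (for example with a Kabsch fit) and stacked into a (frames, atoms, 3) array; replicas would be concatenated along the frame axis. Function names and the synthetic trajectory are hypothetical.

```python
import numpy as np


def rmsf(coords: np.ndarray) -> np.ndarray:
    """Per-atom root-mean-square fluctuation for an aligned (frames, atoms, 3) array."""
    mean_structure = coords.mean(axis=0)
    return np.sqrt(((coords - mean_structure) ** 2).sum(axis=2).mean(axis=0))


def trajectory_pca(coords: np.ndarray, n_components: int = 2):
    """PCA of Cartesian coordinates from an aligned trajectory.

    Returns per-frame projections onto the leading components and the fraction
    of total positional variance each component explains.
    """
    n_frames = coords.shape[0]
    X = coords.reshape(n_frames, -1)  # (frames, 3 * atoms)
    X = X - X.mean(axis=0)            # center on the mean structure
    _, s, vt = np.linalg.svd(X, full_matrices=False)  # rows of vt = principal axes
    variance = s ** 2 / (n_frames - 1)
    projections = X @ vt[:n_components].T
    return projections, variance[:n_components] / variance.sum()


# Synthetic, already-aligned "trajectory": one dominant collective motion plus noise.
rng = np.random.default_rng(1)
base = rng.normal(size=(10, 3))
mode = rng.normal(size=(10, 3))
mode /= np.linalg.norm(mode)
amplitude = rng.normal(scale=5.0, size=200)
traj = base + amplitude[:, None, None] * mode + rng.normal(scale=0.1, size=(200, 10, 3))
proj, ratio = trajectory_pca(traj)
print(proj.shape, ratio)  # (200, 2); first component explains nearly all variance
```

The per-frame projections are what a PCA-subspace plot displays; clusters in that plane often correspond to distinct conformational states.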

Visualization of Key Workflows

Diagram 1: High-throughput in silico mutagenesis workflow.

Diagram 2: CAPE's integrated data-ML feedback loop.

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential "digital reagents" and tools accessible within the CAPE platform that are critical for the protocols described.

| Tool/Reagent | Category | Function in Research |
|---|---|---|
| Pre-trained Protein Language Models (ESM-2, ProtGPT2) | AI/ML Model | Generates novel, plausible protein sequences and infers evolutionary constraints for design. |
| AlphaFold2 Multimer | Structure Prediction | Predicts 3D structures of protein complexes (e.g., antibody-antigen). |
| Rosetta Suite (ddg_monomer, Flex ddG, PyRosetta) | Computational Biophysics | Suite for protein design, energy scoring, and predicting stability/binding energy changes. |
| GROMACS/AMBER GPU-Optimized | Molecular Dynamics | Performs high-performance, multi-timescale simulations for conformational sampling. |
| PLUMED | Enhanced Sampling | Plugin for free energy calculations and guiding simulations along reaction coordinates. |
| PyMOL/ChimeraX Integrations | Visualization | Provides real-time, interactive 3D visualization and analysis of structures and trajectories. |
| JupyterLab with BioPython | Analysis Environment | Customizable notebook environment for scripting, data analysis, and generating publication-quality figures. |
| Versioned Data Lake | Data Management | Secure, centralized repository for raw data, processed results, and analysis pipelines, ensuring reproducibility. |

The CAPE platform is architected to directly meet the evolving technical demands of both biopharma researchers, who require robust, scalable, and compliant pipelines for accelerated drug discovery, and academic labs, which benefit from accessible, state-of-the-art computational tools without significant capital investment. By integrating advanced simulation, AI/ML, and collaborative data management into a unified cloud environment, CAPE eliminates traditional barriers, enabling researchers to focus on scientific innovation rather than infrastructure. This aligns perfectly with the core thesis of CAPE as a transformative, community-driven platform for the next generation of protein science.

The CAPE platform represents a paradigm shift in computational biology, enabling researchers to perform sophisticated protein design, molecular dynamics (MD) simulations, and high-throughput virtual screening through a unified cloud interface. This guide provides a foundational walkthrough for initiating research within the CAPE ecosystem, framed within the broader thesis that integrated, scalable cloud computing platforms are critical for accelerating the pace of discovery in rational drug design and protein-based therapeutic development.

Access and Initial Navigation

Access to CAPE is typically granted via institutional license. Upon logging in, users are presented with a consolidated dashboard. Core navigation modules are summarized in Table 1.

Table 1: Core CAPE Interface Modules

| Module Name | Primary Function | Key Metrics Displayed |
|---|---|---|
| Project Hub | Central repository for all user projects. | Active projects, storage used, shared collaborators. |
| Simulation Queue | Manages submitted computational jobs. | Job status (Queued/Running/Complete/Failed), node hours consumed. |
| Visualization Studio | Integrated molecular viewer for 3D structural analysis. | RMSD, binding affinity (ΔG in kcal/mol), interactive plots. |
| Data Library | Public and private databases of protein structures/sequences. | >100 million entries (e.g., PDB, AlphaFold DB, UniProt). |
| Analysis Toolkit | Suite of post-processing tools (e.g., for trajectory analysis). | Statistical outputs (mean, standard deviation), plotted time-series. |

Establishing Your First Project: A Step-by-Step Protocol

This protocol outlines the creation of a standard project aimed at performing a virtual alanine scan on a protein-ligand complex—a common first experiment to assess residue contribution to binding energy.

Experimental Protocol 3.1: Initial Project Setup and Alanine Scan

- Project Creation: From the Project Hub, click "New Project." Provide a title (e.g., "FabAInhibitorAlaScan"), description, and select a relevant template ("Ligand Binding Affinity Scan").

- Data Import:

  - In the Data Library, search for your protein of interest (e.g., PDB ID: 1ABC). Select and import it into the project's "Structures" folder.

  - Alternatively, upload a private structure file (.pdb, .cif). CAPE will automatically validate topology.

- System Preparation:

  - Open the structure in Visualization Studio. Use the integrated "Prepare" workflow.

  - Steps: Add missing hydrogens → Assign protonation states at pH 7.4 → Fill in missing side chains using the SCWRL4 algorithm → Solvate in a cubic TIP3P water box with a 10 Å buffer → Add counterions to neutralize the system, plus 0.15 M NaCl to approximate physiological ionic strength.

  - This generates the fully parameterized system for simulation.

- Job Configuration – Alanine Scan:

  - Navigate to the "Experiments" tab and select "Create New."

  - Choose "Residue Scanning" from the experiment menu.

  - Parameters: Select the imported protein-ligand complex. Define the scan region (e.g., binding-site residues within 5 Å of the ligand). Choose "Alanine" as the mutation target.

  - Compute Settings: Select the pre-configured "Rapid MM/GBSA" method, which uses molecular mechanics with Generalized Born and surface-area solvation to calculate ΔG. Approximate runtime: 2-3 minutes per mutation on a standard CAPE GPU node.

- Execution and Monitoring: Submit the job. Monitor its progress in the Simulation Queue, where real-time metrics like completed mutations/total mutations are displayed.

- Analysis: Upon completion, results auto-populate in the project's "Analysis" section. A table of ΔΔG values (change in binding energy upon mutation) for each scanned residue is generated.
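The "residues within 5 Å of the ligand" selection used to define the scan region is a simple geometric query, sketched below. Assumptions: per-atom coordinates in ångströms and a parallel list mapping each protein atom to its residue number; whether CAPE counts hydrogens or heavy atoms only is not stated. The function name and toy coordinates are hypothetical.

```python
import numpy as np


def residues_near_ligand(protein_xyz, atom_residue_ids, ligand_xyz, cutoff=5.0):
    """Residue IDs with at least one atom within `cutoff` Å of any ligand atom."""
    protein_xyz = np.asarray(protein_xyz, dtype=float)  # (n_protein_atoms, 3)
    ligand_xyz = np.asarray(ligand_xyz, dtype=float)    # (n_ligand_atoms, 3)
    # All pairwise distances by broadcasting: shape (n_protein_atoms, n_ligand_atoms).
    dist = np.linalg.norm(protein_xyz[:, None, :] - ligand_xyz[None, :, :], axis=2)
    close = (dist <= cutoff).any(axis=1)
    return sorted({atom_residue_ids[i] for i in np.flatnonzero(close)})


# Toy coordinates (Å): four protein atoms from three residues, one ligand atom.
protein = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [3.0, 0.0, 0.0], [4.5, 0.0, 0.0]]
residue_of_atom = [10, 10, 11, 12]
ligand = [[0.0, 0.0, 3.0]]
print(residues_near_ligand(protein, residue_of_atom, ligand))  # [10, 11]
```

The all-pairs distance matrix is fine for one ligand and one protein; for large systems a spatial index such as SciPy's `cKDTree` scales better.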

Core Workflow Visualization

Diagram 1: CAPE Core User Workflow for a Simulation Project

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Computational Reagents for a CAPE Alanine Scanning Experiment

| Item/Resource | Function in the Experiment | Source/Format in CAPE |
|---|---|---|
| Reference Protein Structure | Provides the atomic coordinates for the wild-type system. | PDB file (uploaded or from integrated DB). |
| Force Field Parameters | Defines the potential energy function for molecular mechanics calculations. | Pre-loaded options (e.g., AMBER ff19SB, CHARMM36m). |
| Solvation Model | Implicitly or explicitly represents solvent effects (water, ions). | Pre-configured settings (e.g., GB-Neck2, OBC, TIP3P box). |
| Ligand Parameterization Tool | Generates missing force field parameters for non-standard small molecules. | Integrated GAFF2 parameter generator via antechamber. |
| Mutation Engine | Systematically alters selected residues to alanine in the structural model. | "Residue Scan" module with SCWRL4 or RosettaFixBB. |
| Binding Free Energy Method | Calculates the ΔΔG of binding for each mutant. | MM/GBSA, MM/PBSA, or more advanced FEP/MBAR protocols. |
| Trajectory Analysis Suite | Processes simulation output to extract energetics and structural metrics. | Integrated CPPTRAJ/MDTraj tools for RMSD, energy decomposition. |

Interpreting Initial Results and Next Steps

After running Protocol 3.1, results are presented in both tabular and graphical forms (e.g., a bar chart of ΔΔG per residue). Key residues are identified as "hot spots" where mutation to alanine causes a significant destabilization (ΔΔG > 1.0 kcal/mol). The next logical experiment, guided by the platform, might be a focused library design around these hot spots for potential affinity maturation, leveraging CAPE's deep learning-based variant prediction tools. This iterative cycle of computational hypothesis, experiment, and analysis exemplifies the core thesis of CAPE: reducing the traditional design-build-test cycle time from months to days through integrated cloud computing.
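Hot-spot calling is a threshold on the scan output, but the sign convention is easy to get backwards. The sketch below assumes ΔΔG = ΔG_bind(alanine mutant) − ΔG_bind(wild type), so positive values mean weaker binding, and uses the 1.0 kcal/mol threshold from the text. The function name and scan values are hypothetical.

```python
def hot_spots(ddg_by_residue, threshold=1.0):
    """Residues whose alanine mutation weakens binding by more than `threshold` kcal/mol.

    Convention: ddG = dG_bind(alanine mutant) - dG_bind(wild type), so positive
    values mean the mutant binds less tightly. Sorted strongest effect first.
    """
    hits = [(res, ddg) for res, ddg in ddg_by_residue.items() if ddg > threshold]
    return sorted(hits, key=lambda item: item[1], reverse=True)


# Hypothetical alanine-scan output (kcal/mol).
scan = {"Y33": 2.4, "D52": 1.3, "S54": 0.2, "R98": 3.1, "L100": -0.4}
print(hot_spots(scan))  # [('R98', 3.1), ('Y33', 2.4), ('D52', 1.3)]
```

A negative value (L100 here) suggests the wild-type side chain is slightly unfavorable at the interface, which can itself be a lead for affinity maturation.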

From Sequence to Structure: A Step-by-Step Guide to Running Protein Engineering Workflows on CAPE

This guide details a structured workflow for computational protein engineering, designed explicitly for implementation within the CAPE cloud computing platform. The CAPE thesis posits that integrating scalable cloud infrastructure with modular, automated computational and experimental protocols accelerates the protein design-test-learn cycle. This campaign blueprint operationalizes that thesis.

Campaign Planning & Objective Scoping

A successful campaign begins with precise objective definition. Objectives must be Specific, Measurable, Achievable, Relevant, and Time-bound (SMART).

Table 1: Campaign Objective Scoping Framework

| Scoping Dimension | Key Questions | Example for Thermostable Enzyme |
|---|---|---|
| Target Property | What is the primary property to engineer? | Increase melting temperature (Tm). |
| Property Metric | How will it be measured experimentally? | Differential scanning fluorimetry (DSF). |
| Acceptable Trade-offs | What other properties must be maintained? | Specific activity ≥ 80% of wild-type. |
| Library Scale | What is the feasible experimental throughput? | 96 variants per round. |
| Success Criteria | What result defines a successful campaign? | 3 variants with ΔTm ≥ +10°C. |
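Writing the example criteria from Table 1 as an explicit check makes the "Measurable" part of SMART concrete. In this sketch the record type, function name, and measurements are hypothetical; the thresholds (ΔTm ≥ +10 °C, activity ≥ 80% of wild type, at least 3 hits) come from the table.

```python
from typing import List, NamedTuple, Tuple


class VariantResult(NamedTuple):
    name: str
    delta_tm: float           # Tm(variant) - Tm(wild type) in °C, e.g. from DSF
    relative_activity: float  # specific activity as a fraction of wild type


def evaluate_campaign(results: List[VariantResult], min_delta_tm: float = 10.0,
                      min_activity: float = 0.80,
                      required_hits: int = 3) -> Tuple[bool, List[VariantResult]]:
    """Apply the example success criterion and trade-off constraint from Table 1."""
    hits = [r for r in results
            if r.delta_tm >= min_delta_tm and r.relative_activity >= min_activity]
    return len(hits) >= required_hits, hits


# Hypothetical round-one measurements.
round_one = [
    VariantResult("v1", 12.5, 0.91),
    VariantResult("v2", 10.0, 0.80),
    VariantResult("v3", 14.1, 0.65),  # stable enough, but activity too low
    VariantResult("v4", 11.2, 1.05),
    VariantResult("v5", 6.0, 0.99),
]
success, hits = evaluate_campaign(round_one)
print(success, [h.name for h in hits])  # True ['v1', 'v2', 'v4']
```

Encoding the trade-off constraint alongside the target property prevents a highly stable but inactive variant (v3) from being counted as a success.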

Core Computational Workflow

The computational phase generates a prioritized variant library for experimental testing.

Protocol 3.1: Multiple Sequence Alignment (MSA) & Conservation Analysis

- Objective: Identify evolutionarily conserved and variable positions.

- Method:

- Sequence Retrieval: Use HMMER tools such as jackhmmer against UniRef or similar databases to gather homologous sequences.

- Alignment: Perform MSA using MAFFT or Clustal Omega.

- Analysis: Compute per-position entropy or conservation scores (e.g., via the BLOSUM62 matrix or Shannon entropy). Positions with low conservation are potential diversification targets.
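The conservation step can be sketched in a few lines. This is a minimal illustration, assuming the alignment is already loaded as equal-length strings; the function names are hypothetical, and gap characters are simply ignored when counting residues (some pipelines instead treat the gap as a 21st symbol).

```python
import math
from collections import Counter

def column_entropy(column, gap_chars="-."):
    """Shannon entropy (bits) of one alignment column, ignoring gap characters."""
    residues = [c for c in column.upper() if c not in gap_chars]
    if not residues:
        return 0.0
    n = len(residues)
    return -sum((k / n) * math.log2(k / n) for k in Counter(residues).values())

def per_position_entropy(aligned_seqs):
    """Entropy for every column; all aligned sequences must share one length."""
    length = len(aligned_seqs[0])
    if any(len(s) != length for s in aligned_seqs):
        raise ValueError("aligned sequences must have equal length")
    return [column_entropy("".join(s[i] for s in aligned_seqs))
            for i in range(length)]

# Toy alignment: column 0 is invariant, column 2 has four different residues.
msa = ["MKV", "MKL", "MRI", "MKF"]
entropies = per_position_entropy(msa)
# Most variable columns first: candidate diversification targets.
candidates = sorted(range(len(entropies)), key=lambda i: entropies[i], reverse=True)
```

Low-entropy columns (here column 0) are the conserved positions to avoid; high-entropy columns head the candidate list.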

Protocol 3.2: Structure-Based Analysis

- Objective: Identify structural determinants of stability/function.

- Method:

- Model Preparation: Obtain a crystal structure or generate a high-quality AlphaFold2 prediction. Clean the structure (add hydrogens, assign charges).

- Stability Analysis: Perform computational mutagenesis scanning with FoldX or Rosetta ddG_monomer to predict ΔΔG of stability for single-point mutations.

- Site Saturation: For chosen positions, use Rosetta's fixbb or a similar tool to rank all 20 amino acid substitutions.

Protocol 3.3: In Silico Library Design & Filtering

- Objective: Combine constraints to design a focused library.

- Method:

- Combine Scores: Integrate evolutionary (MSA) and biophysical (ΔΔG) scores into a composite ranking.

- Apply Filters: Remove variants with:

- Predicted ΔΔG > +2.0 kcal/mol (highly destabilizing).

- Introduction of unpaired cysteines.

- Disruption of known catalytic residues (e.g., as annotated in the Mechanism and Catalytic Site Atlas, M-CSA).

- Diversity Sampling: Select top-ranked unique variants, ensuring coverage across multiple target positions to avoid over-concentration on a single site.
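The filtering and ranking logic of Protocol 3.3 can be sketched as follows. The composite score (a weighted evolutionary score minus a weighted ΔΔG), the weights, the per-position cap, and the `Variant` fields are hypothetical choices for illustration; in practice the two scores should be normalized to comparable scales before weighting. As a conservative stand-in for the unpaired-cysteine filter, this sketch rejects every newly introduced cysteine.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Variant:
    position: int      # residue number in the wild-type sequence
    wt: str            # wild-type amino acid (one-letter code)
    mut: str           # mutant amino acid (one-letter code)
    ddg: float         # predicted stability ΔΔG, kcal/mol (positive = destabilizing)
    evo_score: float   # MSA-derived score, higher = more evolutionarily favorable

def passes_filters(v, catalytic_positions):
    if v.ddg > 2.0:                          # highly destabilizing
        return False
    if v.mut == "C" and v.wt != "C":         # conservative proxy for unpaired cysteines
        return False
    if v.position in catalytic_positions:    # known catalytic residue
        return False
    return True

def design_library(variants, catalytic_positions, size=96,
                   max_per_position=4, w_evo=0.5, w_ddg=0.5):
    """Hard filters, composite ranking, then a per-position cap for diversity."""
    # Keying on (position, mutant) de-duplicates repeated entries.
    kept = {(v.position, v.mut): v for v in variants
            if passes_filters(v, catalytic_positions)}
    ranked = sorted(kept.values(),
                    key=lambda v: w_evo * v.evo_score - w_ddg * v.ddg,
                    reverse=True)
    library, per_position = [], {}
    for v in ranked:
        if per_position.get(v.position, 0) >= max_per_position:
            continue                         # avoid over-concentrating on one site
        library.append(v)
        per_position[v.position] = per_position.get(v.position, 0) + 1
        if len(library) == size:
            break
    return library
```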

Diagram 1: The CAPE Campaign Workflow

Experimental Validation Workflow

Computational predictions require empirical validation.

Protocol 4.1: Automated DNA Library Construction (Golden Gate/MoClo)

- Objective: Generate the plasmid library for expression.

- Reagents: Variant gene fragments (synthesized as oligo pools), acceptor vector, Type IIS restriction enzyme (e.g., BsaI), T4 DNA Ligase, ATP.

- Method:

- Set up a Golden Gate assembly: Mix 50 ng acceptor vector, 20 ng pooled insert fragments, 1 µL BsaI-HFv2, 1 µL T4 DNA Ligase, 1x Ligase buffer, in 20 µL total.

- Thermocycle: (37°C, 5 min; 16°C, 5 min) x 30 cycles; 60°C, 10 min; 80°C, 10 min.

- Transform into competent E. coli (NEB 10-beta), plate on selective agar, and pick 96 colonies for culture and plasmid DNA extraction.
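Because the oligo pool is assembled as a mixture, picked colonies sample variants at random. Under the simplifying assumption that every designed variant is equally represented in the pool, the expected number of distinct variants among k colonies drawn from N designs is N(1 - (1 - 1/N)^k). The helper names below are hypothetical.

```python
import math

def expected_unique(n_variants, n_colonies):
    """Expected number of distinct variants among randomly picked colonies,
    assuming every designed variant is equally represented in the pool."""
    return n_variants * (1.0 - (1.0 - 1.0 / n_variants) ** n_colonies)

def colonies_for_coverage(n_variants, fraction):
    """Colonies needed for the expected covered fraction to reach `fraction`."""
    return math.ceil(math.log(1.0 - fraction) / math.log(1.0 - 1.0 / n_variants))

distinct_from_96 = expected_unique(96, 96)
picks_for_95pct = colonies_for_coverage(96, 0.95)
```

With 96 designs and 96 picks, only about 61 distinct variants are expected, and roughly 287 picks are needed for 95% expected coverage. A skewed pool lowers expected coverage further, so sequence-verifying the picked clones is the direct check.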

Protocol 4.2: Microscale Expression & Purification

- Objective: Produce purified protein for assays.

- Method:

- Inoculate 1 mL deep-well blocks with auto-induction media. Express for 24 hours at 20°C.

- Lyse cells via sonication or chemical lysis (BugBuster Master Mix).

- Purify via His-tag using a robotic platform with nickel-coated magnetic beads. Elute in 150 µL of assay-compatible buffer.

Protocol 4.3: High-Throughput Stability & Activity Assays

- Objective: Measure key fitness parameters.

- Method (Differential Scanning Fluorimetry - DSF):

- Mix 10 µL of purified protein with 5 µL of 10X SYPRO Orange dye in a transparent 384-well plate.

- Run on a real-time PCR machine: Ramp temperature from 25°C to 95°C at 1°C/min, monitoring fluorescence.

- Calculate Tm from the first derivative of the melt curve.

- Method (Activity Assay - Kinetic Readout):

- In a 96-well plate, combine 20 µL of eluted protein with 80 µL of substrate solution, giving a final substrate concentration equal to Km.

- Monitor product formation spectrophotometrically or fluorometrically every 30 seconds for 10 minutes.

- Calculate initial velocity (V0). Report as relative activity compared to wild-type.
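The Tm and V0 calculations in Protocol 4.3 can be sketched as follows on synthetic data. This is a minimal illustration: Tm is taken as the temperature of maximal dF/dT from raw central finite differences (real melt curves are often smoothed first or fitted to a Boltzmann model), and V0 is a least-squares slope over the first five readings, which assumes that window lies in the linear phase of the reaction.

```python
import math

def melting_temperature(temps, fluorescence):
    """Tm = temperature of maximal dF/dT, using central finite differences."""
    best_t, best_slope = None, float("-inf")
    for i in range(1, len(temps) - 1):
        slope = ((fluorescence[i + 1] - fluorescence[i - 1])
                 / (temps[i + 1] - temps[i - 1]))
        if slope > best_slope:
            best_t, best_slope = temps[i], slope
    return best_t

def initial_velocity(times, signal, n_points=5):
    """V0 = least-squares slope over the first n_points readings (assumed linear)."""
    t, y = times[:n_points], signal[:n_points]
    t_mean, y_mean = sum(t) / len(t), sum(y) / len(y)
    num = sum((a - t_mean) * (b - y_mean) for a, b in zip(t, y))
    den = sum((a - t_mean) ** 2 for a in t)
    return num / den

# Synthetic melt: a sigmoid centred at 62 °C, sampled every 1 °C from 25 to 95 °C.
temps = list(range(25, 96))
melt = [1.0 / (1.0 + math.exp(-(t - 62) / 2.0)) for t in temps]
tm = melting_temperature(temps, melt)

# Readings every 30 s for 10 min; relative activity = V0(variant) / V0(wild type).
times = [30 * k for k in range(21)]
v0_wt = initial_velocity(times, [0.010 * s for s in times])
v0_variant = initial_velocity(times, [0.015 * s for s in times])
relative_activity = v0_variant / v0_wt
```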

Table 2: Key Research Reagent Solutions

| Reagent/Material | Supplier Examples | Function in Campaign |
|---|---|---|
| Oligo Pool (Library Synthesis) | Twist Bioscience, IDT | Source of all designed variant DNA sequences. |
| Type IIS Restriction Enzyme (BsaI) | New England Biolabs (NEB) | Enables scarless, modular DNA assembly (Golden Gate). |
| High-Throughput Cloning Strain | NEB 10-beta Electrocompetent E. coli | Reliable transformation for complex plasmid libraries. |
| Nickel Magnetic Beads (His-tag Purification) | Promega MagneHis, Thermo Scientific HisPur | Rapid, plate-based protein purification. |
| SYPRO Orange Protein Gel Stain | Thermo Fisher Scientific | Fluorescent dye for thermal denaturation (DSF) assays. |
| BugBuster Protein Extraction Reagent | MilliporeSigma | Non-mechanical cell lysis for high-throughput processing. |

Data Integration & Machine Learning Loop

The CAPE platform's core value is closing the design loop.

Protocol 5.1: Data Curation & Feature Encoding

- Objective: Create a training dataset for model improvement.

- Method: For each tested variant, compile:

- Features: One-hot encoded mutation string, physicochemical properties (e.g., volume, hydrophobicity change), structural features (solvent accessibility, distance to active site).

- Labels: Experimental Tm and activity from Protocol 4.3.

- Platform Action: The CAPE platform automatically appends this round's data to a central campaign database.
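A minimal feature-encoding sketch for single-point mutants follows. It uses one-hot vectors for the wild-type and mutant residues and the Kyte-Doolittle hydropathy change as the physicochemical descriptor; the structural features (relative solvent accessibility, distance to the active site) are assumed to be precomputed. Volume change and multi-mutant handling are omitted, and all function names are hypothetical.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
# Kyte-Doolittle hydropathy index.
KD = {"A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
      "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
      "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2}

def parse_mutation(code):
    """Split a mutation string such as 'A123V' into ('A', 123, 'V')."""
    return code[0], int(code[1:-1]), code[-1]

def one_hot(aa):
    return [1.0 if aa == a else 0.0 for a in AMINO_ACIDS]

def encode(code, rel_sasa, dist_to_active_site):
    """43 features: wt one-hot, mutant one-hot, Δhydropathy, SASA, distance."""
    wt, _, mut = parse_mutation(code)
    return (one_hot(wt) + one_hot(mut)
            + [KD[mut] - KD[wt], rel_sasa, dist_to_active_site])

def training_row(code, rel_sasa, dist, tm, activity):
    """One record for the campaign dataset: features plus experimental labels."""
    return {"mutation": code, "features": encode(code, rel_sasa, dist),
            "tm": tm, "activity": activity}
```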

Protocol 5.2: Model Training & Next-Round Design

- Objective: Generate improved predictions for the next cycle.

- Method:

- Train a Gaussian Process Regression or Random Forest model on the accumulated dataset to predict experimental outcomes from sequence/structure features.

- Use the model to score an in silico saturation mutagenesis library covering all candidate positions.

- Select the next 96 variants via an acquisition function (e.g., Expected Improvement) that balances exploring uncertain regions of sequence space and exploiting predicted high-fitness areas.
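Expected Improvement can be sketched in plain Python. This assumes the trained model supplies a predictive mean and standard deviation for each candidate (for example, scikit-learn's `GaussianProcessRegressor.predict(X, return_std=True)`), and `xi` is a small exploration margin. Taking the top 96 by EI independently is a simplification that ignores correlations within the batch; dedicated batch acquisition strategies handle that more carefully. The helper names are hypothetical.

```python
import math

def expected_improvement(mu, sigma, best, xi=0.01):
    """EI for maximization given a predictive mean and standard deviation."""
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))        # standard normal CDF
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)  # standard normal PDF
    return (mu - best - xi) * cdf + sigma * pdf

def select_batch(candidates, best_observed, batch_size=96):
    """candidates: (variant_id, mean, std) tuples; returns ids with the highest EI."""
    scored = sorted(candidates,
                    key=lambda c: expected_improvement(c[1], c[2], best_observed),
                    reverse=True)
    return [c[0] for c in scored[:batch_size]]
```

A candidate with a lower predicted mean but large uncertainty can outrank a confidently predicted one (exploration), while a high mean with small uncertainty still scores well (exploitation).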

Diagram 2: The Machine Learning Optimization Loop

Quantitative Benchmarks & Resource Planning

Effective planning requires realistic benchmarks for time and cost.

Table 3: Typical Campaign Timeline & Cloud Compute Resources (Per Cycle)

| Phase | Duration | Key CAPE Cloud Compute Resources | Estimated Compute Hours |
|---|---|---|---|
| Computational Design | 2-3 days | High-CPU instances for MSA, FoldX/Rosetta scans, ML inference. | 200-500 CPU core-hours |
| Wet-Lab Experimental | 10-14 days | Orchestration and data logging only. | N/A |
| Data Analysis & Model Update | 1-2 days | GPU instances (optional) for ML model training. | 20-100 GPU-hours |
| Total per Cycle | ~13-19 days | Estimated total compute cost: $50-$200 | 200-500 CPU core-hours + 20-100 GPU-hours |

This workflow, executed within an integrated CAPE platform, transforms protein engineering from a series of disjointed experiments into a directed, data-driven campaign, significantly accelerating the path to engineered solutions.

This whitepaper details the critical data preprocessing pipeline within the CAPE cloud platform research framework. Accurate and standardized input data is the cornerstone of reliable computational protein engineering, directly impacting the success of downstream tasks like structure prediction, virtual screening, and de novo design. We present standardized methodologies for formatting biological sequences, molecular structures, and engineering target specifications to ensure reproducibility, interoperability, and optimal performance of CAPE's cloud-based algorithms.

The CAPE platform orchestrates complex computational workflows across distributed cloud resources. Inconsistent data formats create bottlenecks, errors, and unreproducible results. This guide establishes the mandatory data formatting protocols for CAPE research, emphasizing FAIR (Findable, Accessible, Interoperable, Reusable) principles. Standardization enables high-throughput analysis, federated learning across datasets, and robust model training for generative protein design.

Formatting Protein Sequences

Sequence Acquisition and Validation

Primary amino acid sequences are the most fundamental input. Sources include UniProt, GenBank, and proprietary databases. CAPE mandates validation to ensure sequence integrity.

Protocol 2.1.1: Sequence Validation and Canonicalization

- Input: Raw sequence string (FASTA, plain text).

- Remove Headers/Metadata: Isolate the pure amino acid character string.

- Remove Gaps and Whitespace: Delete all dash ("-"), period ("."), space, and newline characters.

- Case Standardization: Convert all characters to uppercase.

- Character Validation: Confirm all characters belong to the standard 20-amino acid alphabet (A, C, D, E, F, G, H, I, K, L, M, N, P, Q, R, S, T, V, W, Y). Flag and document any non-canonical residues (e.g., "U" for selenocysteine, "O" for pyrrolysine). Validating after gap removal and case conversion keeps lowercase letters and gap symbols from being flagged spuriously.

- Length Check: Record sequence length; sequences under 5 residues are flagged as invalid peptides.

- Output: Canonicalized sequence string, validation report log.
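Protocol 2.1.1 can be sketched as a single function. This minimal version assumes a single-record FASTA or plain-text input; the function name and report fields are hypothetical, and ambiguity codes such as B, Z, or X are reported as invalid rather than interpreted.

```python
CANONICAL = set("ACDEFGHIKLMNPQRSTVWY")
KNOWN_NONCANONICAL = {"U": "selenocysteine", "O": "pyrrolysine"}

def canonicalize(raw, min_length=5):
    """Return (canonical sequence, validation report) for one sequence record."""
    lines = [ln.strip() for ln in raw.splitlines()]
    seq = "".join(ln for ln in lines if not ln.startswith(">"))  # drop FASTA header
    for ch in "-. \t\r\n":                                       # gaps and whitespace
        seq = seq.replace(ch, "")
    seq = seq.upper()
    report = {"length": len(seq), "noncanonical": {}, "invalid": []}
    for i, aa in enumerate(seq, start=1):                        # 1-based positions
        if aa in CANONICAL:
            continue
        if aa in KNOWN_NONCANONICAL:
            report["noncanonical"][i] = KNOWN_NONCANONICAL[aa]
        else:
            report["invalid"].append((i, aa))
    report["too_short"] = len(seq) < min_length
    return seq, report
```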

Multiple Sequence Alignment (MSA) Formatting

MSAs are critical for evolutionary coupling analysis and profile-based modeling tools like AlphaFold2.

Protocol 2.2.1: MSA Preprocessing for Cloud Deployment

- Input: Raw MSA in Stockholm, A3M, or FASTA format.

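- Format Conversion: Convert the alignment to A3M format (e.g., with HH-suite's reformat.pl) and reduce redundancy with hhfilter (from HH-suite), capping pairwise sequence identity at 90%.

- Gap Handling: Ensure consistent A3M conventions relative to the query sequence: uppercase letters and '-' mark match and deletion states, and lowercase letters mark insertions (the '.' characters that pad insert columns in A2M are omitted in A3M).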

- Metadata Stripping: Remove all annotation lines, retaining only sequence identifiers and the aligned sequences.

- Cloud-Optimized Storage: Save the final A3M file alongside a JSON manifest containing query sequence ID, database source, version, and generation parameters.
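A lightweight consistency check for the A3M conventions above is sketched below. It assumes the first record is the query and that lines beginning with '#' are comments; removing lowercase insertion characters leaves the match-state string, whose length must equal the query length. The helper names are hypothetical, and HH-suite's own tools remain the reference parsers.

```python
def read_a3m(text):
    """Parse A3M text into (header, sequence) pairs, skipping '#' comment lines."""
    records, header, chunks = [], None, []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        if line.startswith(">"):
            if header is not None:
                records.append((header, "".join(chunks)))
            header, chunks = line[1:], []
        else:
            chunks.append(line)
    if header is not None:
        records.append((header, "".join(chunks)))
    return records

def match_states(seq):
    """Drop lowercase insertions; the rest aligns column-for-column to the query."""
    return "".join(c for c in seq if not c.islower())

def validate_a3m(records):
    """Headers whose match-state length differs from the query's or that contain '.'."""
    query_len = len(match_states(records[0][1]))
    return [h for h, s in records
            if len(match_states(s)) != query_len or "." in s]
```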

Table 1: Quantitative Benchmarks for MSA Generation (Live Search Data)

| Database/Tool | Avg. Time per Query (CPU hrs) | Avg. Sequences Retrieved | Recommended Min. Depth for AF2 | Cloud Service Cost per 1000 Queries (Est.) |
|---|---|---|---|---|
| UniRef30 (2023_01) | 2.5 | 12,450 | 128 | $45.00 |
| BFD/MGnify | 1.8 | 8,750 | N/A | $32.00 |
| ColabFold (MMseqs2) | 0.02 | 5,200 | 64 | $1.50* |
| HHblits (UniClust30) | 3.1 | 9,800 | 128 | $52.00 |

*Primarily GPU cost.

Preparing Molecular Structure Data

Protein Data Bank (PDB) File Processing

Raw PDB files require cleaning and standardization for molecular dynamics (MD) and structure-based design.

Protocol 3.1.1: PDB Standardization Pipeline

- Download & Parse: Fetch PDB or mmCIF file from RCSB PDB.

- Biological Assembly Selection: Extract the correct biological assembly as specified by the database.

- Remove Heteroatoms: Strip all non-protein residues (waters, ions, ligands) unless specified as part of the target (e.g., a cofactor).

- Alternate Location Handling: Retain only the highest-occupancy conformer for residues with alternate locations (altLoc identifiers such as "A" and "B").

- Missing Atom/Residue Flagging: Log all missing heavy atoms and residues (e.g., disordered loops).

- Protonation State Assignment: Use PDB2PQR or PROPKA (at pH 7.4) to assign protonation states for histidine residues and other titratable groups.

- Format Output: Save the cleaned structure in both PDB and PDBx/mmCIF format. For MD, convert to GROMACS/AMBER format using pdb2gmx or tleap.
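The heteroatom and alternate-location steps can be sketched directly on fixed-column PDB records (ATOM/HETATM). This is a minimal illustration that assumes legacy PDB format rather than mmCIF; it ranks conformers by mean occupancy per residue, keeps the first-listed altLoc on ties, and leaves occupancy values unchanged. The function name is hypothetical, and a library such as Biopython is a more robust choice for production parsing.

```python
from collections import defaultdict

def clean_pdb_lines(lines, keep_het=frozenset()):
    """Drop non-whitelisted HETATM records and collapse alternate locations."""
    records = [line for line in lines
               if line.startswith("ATOM")
               or (line.startswith("HETATM") and line[17:20].strip() in keep_het)]
    # PDB columns are 1-based: altLoc (col 17) is line[16], chain (col 22) is
    # line[21], resSeq (cols 23-26) is line[22:26], iCode (col 27) is line[26],
    # and occupancy (cols 55-60) is line[54:60].
    occ_sum, occ_n = defaultdict(float), defaultdict(int)
    for line in records:
        if line[16] != " ":
            key = (line[21], line[22:26], line[26], line[16])
            occ_sum[key] += float(line[54:60])
            occ_n[key] += 1
    best = {}   # (chain, resSeq, iCode) -> (altLoc, mean occupancy)
    for (chain, resseq, icode, alt), total in occ_sum.items():
        mean = total / occ_n[(chain, resseq, icode, alt)]
        residue = (chain, resseq, icode)
        if residue not in best or mean > best[residue][1]:   # ties keep first altLoc
            best[residue] = (alt, mean)
    cleaned = []
    for line in records:
        if line[16] == " ":
            cleaned.append(line)
        elif best[(line[21], line[22:26], line[26])][0] == line[16]:
            cleaned.append(line[:16] + " " + line[17:])     # blank the altLoc column
    return cleaned
```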

Structure Quality Metrics and Filtering

Table 2: Acceptable Quality Thresholds for Experimental Structures

| Metric | Threshold for Homology Modeling | Threshold for De Novo Design Training | Tool for Assessment |
|---|---|---|---|
| Resolution (X-ray) | ≤ 3.0 Å | ≤ 2.5 Å | PDB Header |
| R-free | ≤ 0.30 | ≤ 0.25 | PDB Header |
| Clashscore | ≤ 10 | ≤ 5 | MolProbity |
| Ramachandran Outliers | ≤ 3% | ≤ 1% | MolProbity/PHENIX |
| Sidechain Rotamer Outliers | ≤ 2% | ≤ 1% | MolProbity |
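The Table 2 thresholds can be applied programmatically once header and MolProbity metrics have been extracted. The metric keys and function name below are hypothetical; all five metrics are "lower is better", and metrics absent from the input (for example, resolution for an NMR model) are skipped rather than failed, which is a design choice.

```python
# (homology-modeling threshold, de novo design training threshold) from Table 2.
THRESHOLDS = {
    "resolution": (3.0, 2.5),           # Å, X-ray structures only
    "r_free": (0.30, 0.25),
    "clashscore": (10.0, 5.0),
    "rama_outliers_pct": (3.0, 1.0),
    "rotamer_outliers_pct": (2.0, 1.0),
}

def failed_metrics(metrics, use="homology"):
    """Names of supplied metrics that exceed the chosen threshold set."""
    column = {"homology": 0, "design": 1}[use]
    return [name for name, limits in THRESHOLDS.items()
            if name in metrics and metrics[name] > limits[column]]
```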

Defining Target Engineering Specifications

Target specifications must be unambiguous, machine-readable, and quantifiable.

Stability & Expression Optimization

Specifications are encoded in JSON Schema.

Binding Affinity & Specificity

Protocol 4.2.1: Specifying Protein-Protein Interface Engineering Goals

- Define Binding Partners: Provide cleaned PDB files for the receptor and ligand. If a structure of the complex exists, use it; otherwise, provide a docked model.

- Delineate Interface Residues: Residues with any atom within 5 Å of the binding partner are considered interface residues.

- Set Affinity Targets: Specify desired change in binding energy (ΔΔG in kcal/mol) or dissociation constant (Kd).

- Define Specificity: List off-target proteins (by UniProt ID) against which binding should be minimized or abolished.

- Output: A YAML specification file for the CAPE binder design module.

Visualization of CAPE Data Preparation Workflow

Diagram 1: CAPE Data Preparation and Validation Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Tools for Data Preparation

| Item | Function in Data Prep | Example/Supplier |
|---|---|---|
| HH-suite3 | Generates sensitive MSAs from sequence databases. Essential for co-evolution analysis. | GitHub: soedinglab/hh-suite |
| ColabFold | Cloud-optimized pipeline for fast MSA generation and protein structure prediction via MMseqs2. | GitHub: sokrypton/ColabFold |
| Biopython | Python library for parsing, manipulating, and validating sequence and structure data. | Biopython.org |
| PDB2PQR | Prepares structures for computational analysis by adding hydrogens, assigning charge states. | Server.pdb2pqr.org |
| Rosetta Commons Software | Suite for energy calculation (ddG), protein design, and structure refinement. Requires license. | RosettaCommons.org |
| MolProbity / PHENIX | Validates structural geometry, identifies clashes, and assesses overall model quality. | MolProbity |
| Docker / Singularity | Containerization tools to encapsulate entire software environments, ensuring reproducibility on CAPE cloud. | Docker.io, Apptainer.org |
| CAPE Format Validator | Platform-specific tool to check JSON/YAML specs, sequence format, and structure file compliance. | CAPE Platform Module v1.2+ |

Meticulous data input preparation as outlined here is not a preliminary step but the foundation of successful computational protein engineering on the CAPE platform. Adherence to these protocols ensures that the massive computational power of cloud resources is applied to meaningful, high-quality data, directly accelerating the cycle of design, build, test, and learn in therapeutic and industrial protein development. Future CAPE research will focus on automating these pipelines further and integrating real-time data from high-throughput experiments.

Within CAPE platform research, virtual mutagenesis represents a cornerstone computational methodology. It enables the in silico simulation of amino acid substitutions to predict their impact on protein function, stability, and binding, thereby guiding rational experimental design. This guide details strategies for implementing two critical approaches: exhaustive saturation scanning and the design of focused libraries, which are pivotal for efficient protein optimization in therapeutic development.

Core Computational Strategies

Saturation Scanning (Complete Site Exploration)

This strategy involves systematically substituting each position in a target protein region with all 20 canonical amino acids. Within CAPE, this is not merely a brute-force calculation but is optimized via cloud-distributed computing.

Protocol: Cloud-Implemented Single-Site Saturation Scan

- Input Preparation: Upload the wild-type protein structure (PDB format) to the CAPE platform. Define the target region (e.g., binding pocket residues 25-40).

- Structural Preprocessing: The platform launches parallel jobs to relax the input structure using a molecular mechanics (MM) minimization protocol (e.g., 500 steps of steepest descent followed by 1000 steps of conjugate gradient).

- Mutation Enumeration: For each target position i, the system generates 19 mutant structural models using a backbone-dependent rotamer library.

- Energy Evaluation: Each mutant model undergoes a rapid energy evaluation using a force field (e.g., Rosetta ref2015 or a CHARMM/AMBER-derived scoring function). For n target positions, the CAPE scheduler distributes these ~19n independent jobs across scalable cloud compute instances.

- Analysis: Per-residue energy scores are collated. The output includes a ΔΔG (change in folding free energy) and often a predicted change in binding affinity (ΔΔG_bind) for each variant.
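Mutation enumeration for a target region can be sketched as follows, assuming residue numbers map contiguously onto the supplied sequence (insertion codes or numbering gaps would need an explicit mapping). The function name and the toy sequence are hypothetical. For residues 25-40 (16 positions), this yields 16 × 19 = 304 independent jobs.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def enumerate_mutations(sequence, start, end, first_residue_number=1):
    """All single-point substitutions for residues start..end (inclusive)."""
    jobs = []
    for resnum in range(start, end + 1):
        wt = sequence[resnum - first_residue_number]
        jobs.extend(f"{wt}{resnum}{aa}" for aa in AMINO_ACIDS if aa != wt)
    return jobs

# Hypothetical 60-residue sequence; target region = residues 25-40.
toy_sequence = AMINO_ACIDS * 3
jobs = enumerate_mutations(toy_sequence, 25, 40)
```

Each string in `jobs` is independent of the others, which is what lets a scheduler fan them out across instances.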

Table 1: Representative Output Data from a Virtual Saturation Scan Around a Catalytic Residue

| Position | Wild-Type AA | Mutant AA | Predicted ΔΔG (kcal/mol) | Predicted ΔΔG_bind (kcal/mol) | Conservation Score |
|---|---|---|---|---|---|
| D30 | Asp | Ala | +2.75 | +3.21 | 0.95 |
| D30 | Asp | Glu | +0.12 | -0.05 | 0.92 |
| D30 | Asp | Lys | +4.51 | +5.88 | 0.95 |
| S31 | Ser | Thr | -0.25 | +0.10 | 0.78 |
| ... | ... | ... | ... | ... | ... |

Focused Library Design (Knowledge-Driven Filtering)

Focused libraries are constructed by filtering saturation scan results using multiple criteria to select a manageable set of high-probability variants for physical testing.

Protocol: Designing a Stability- and Function-Optimized Library

- Perform Virtual Saturation Scan (as above).

- Apply Multi-Parameter Filters:

- Energy Threshold: Discard all variants with ΔΔG > +2.0 kcal/mol (likely destabilizing).

- Functional Score: For binding sites, retain variants with ΔΔG_bind < +1.0 kcal/mol.

- Conservation Analysis: Integrate evolutionary data from tools like HMMER; penalize substitutions not observed in the protein family.

- Structural Clash Check: Remove variants with severe side-chain steric clashes (van der Waals overlap > 0.4 Å).

- Diversity Selection: Cluster remaining variants by side-chain properties (e.g., charge, volume). Select a representative subset (e.g., 50-100 variants) that maximizes chemical diversity at the targeted positions.

- Library Assembly: Output the final list of gene sequences for synthesis. CAPE can directly export instructions compatible with automated oligo synthesis platforms.
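The multi-parameter filtering and diversity selection can be sketched as follows. The three thresholds are the ones listed above; everything else is a hypothetical choice for illustration: the 0.5 kcal/mol-equivalent penalty for substitutions unseen in the family, the crude charge and size classes used in place of a proper clustering, and the round-robin draw across classes that places the best-scoring member of each class near the top of the library.

```python
from dataclasses import dataclass
from itertools import zip_longest

@dataclass
class ScanResult:
    mutation: str           # e.g. "D30E"
    ddg: float              # ΔΔG of folding, kcal/mol
    ddg_bind: float         # ΔΔG of binding, kcal/mol
    seen_in_family: bool    # substitution observed in the family alignment
    max_vdw_overlap: float  # worst side-chain van der Waals overlap, Å

CHARGE = {"D": -1, "E": -1, "K": 1, "R": 1}        # His treated as neutral here
SMALL, LARGE = set("AGSCTP"), set("FWYRKHMILQE")    # crude, assumed size classes

def property_class(aa):
    size = "small" if aa in SMALL else "large" if aa in LARGE else "medium"
    return (CHARGE.get(aa, 0), size)

def focused_library(results, size=50, conservation_penalty=0.5):
    kept = [r for r in results
            if r.ddg <= 2.0 and r.ddg_bind < 1.0 and r.max_vdw_overlap <= 0.4]

    def score(r):   # lower is better: predicted binding plus a family penalty
        return r.ddg_bind + (0.0 if r.seen_in_family else conservation_penalty)

    groups = {}
    for r in sorted(kept, key=score):
        groups.setdefault(property_class(r.mutation[-1]), []).append(r)
    library = []
    for tier in zip_longest(*groups.values()):    # round-robin across classes
        library.extend(r for r in tier if r is not None)
    return library[:size]
```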

Table 2: Comparison of Virtual Mutagenesis Strategies on CAPE

| Parameter | Saturation Scanning | Focused Library Design |
|---|---|---|
| Primary Goal | Exhaustively map mutational landscape | Design a minimal, high-quality set for testing |
| Typical Scale | 100s to 1000s of in silico variants | 10s to 100s of physical variants |
| Key Computational Cost | High (linear scaling with positions) | Moderate (cost dominated by initial scan) |
| Output | Complete energy matrix for all positions | Curated list of gene sequences |
| Best For | Identifying key functional residues, discovery | Lead optimization, stability engineering |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Virtual Mutagenesis on CAPE

| Item | Function in the Workflow |
|---|---|
| High-Resolution Protein Structure (PDB) | Essential starting point for structural modeling and energy calculations. |
| Force Field/Scoring Function (e.g., Rosetta, FoldX) | Provides the physics-based or knowledge-based potential to evaluate mutant stability. |
| Evolutionary Coupling Analysis Software (e.g., plmc) | Identifies co-evolving residues to guide multi-site library design. |
| Cloud Compute Instance (GPU-optimized) | Accelerates molecular dynamics simulations or deep learning-based predictions. |
| Oligo Pool Synthesis Service | Physically manufactures the DNA encoding the designed focused library. |
| Automated Colony Picker | Enables high-throughput screening of the expressed physical library. |

Visualizing Workflows and Relationships

(Fig 1: Virtual Mutagenesis to Focused Library Pipeline)

(Fig 2: CAPE Platform Advantages for Mutagenesis)

Advanced Integration: Machine Learning-Guided Loops

The latest iterations of platforms like CAPE integrate virtual saturation data as training features for machine learning (ML) models. A common loop involves:

- Initial virtual scan of a region.

- Experimental testing of a focused subset.

- Using the experimental data (e.g., stability, activity) to train a supervised ML model (e.g., gradient boosting or a convolutional neural network on protein graphs).

- The trained model predicts fitness for all possible variants in a much larger sequence space.

- A new, model-informed focused library is designed and tested, closing the loop.

This approach dramatically increases the efficiency of searching the vast sequence space, moving from pure physical simulation to simulation-augmented predictive models.

Virtual mutagenesis, executed via cloud platforms like CAPE, transforms protein engineering from a purely empirical art into a data-driven design discipline. Saturation scanning provides the foundational map of sequence-structure-function relationships, while focused library design translates computational insights into practical experimental queries. The integration of these strategies within an automated, scalable cloud environment enables researchers to navigate protein fitness landscapes with unprecedented speed and precision, directly accelerating the development of novel enzymes, therapeutics, and biomaterials.

The CAPE platform is a cloud-native research environment designed to accelerate protein design and optimization. Within this platform, the accurate prediction of binding affinity and protein stability is a cornerstone for rational drug design and enzyme engineering. This technical guide details the integration of physics-based free energy calculations with machine learning (ML) models to deliver robust, scalable predictions on the CAPE platform, enabling high-throughput virtual screening and protein variant prioritization.

Core Methodological Frameworks

Physics-Based Free Energy Calculations

These methods provide a rigorous, theoretically grounded route to estimating changes in free energy (ΔΔG) due to mutations or ligand binding.

Key Computational Protocols:

A. Alchemical Free Energy Perturbation (FEP)

- Objective: Calculate the relative binding free energy (ΔΔG_bind) between two similar ligands to a common protein target.

- Protocol:

- System Preparation: Solvate the protein-ligand complex in an explicit solvent (e.g., TIP3P water) and add ions for neutralization. Use CAPE’s automated AMBER/CHARMM parameter assignment.

- Topology Generation: Create a dual-topology or hybrid-topology file where the perturbed atoms (those differing between ligands) coexist.

- λ-Schedule Definition: Define a series of 12-24 intermediate non-physical states (λ windows) that morph ligand A into ligand B. A sample schedule is provided in Table 1.

- Equilibration & Production: Run molecular dynamics (MD) simulations for each λ window (e.g., 4 ns equilibration, 10 ns production per window) using GPU-accelerated engines (e.g., OpenMM, GROMACS) on CAPE.

- Analysis: Use the Multistate Bennett Acceptance Ratio (MBAR) or Thermodynamic Integration (TI) to compute the ΔΔG from the ensemble of energies collected across all λ windows.

- Error Analysis: Perform replica simulations with different initial velocities to estimate standard error.

B. Equilibrium Thermodynamic Integration (TI) for Protein Stability

- Objective: Predict the change in folding free energy (ΔΔG_fold) for a point mutation in a protein.

- Protocol:

- Wild-type & Mutant Simulation: Prepare separate simulation systems for the folded and unfolded states of both the wild-type and mutant protein.

- Coupling Parameter (λ): Alchemically mutate the residue in both states. A common 11-point Gaussian quadrature λ schedule is used.

- Simulation Run: Perform extensive sampling for each λ in both states (e.g., 100 ns per window) to ensure convergence of the derivative 〈∂H/∂λ〉.

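- Free Energy Integration: Numerically integrate 〈∂H/∂λ〉 over λ from 0 (wild-type) to 1 (mutant) in both the folded and unfolded states. Closing the thermodynamic cycle converts the two alchemical free energies into the change in folding free energy:

$$\Delta G_{\mathrm{alch}}^{\mathrm{state}} = \int_0^1 \left\langle \frac{\partial H}{\partial \lambda} \right\rangle_{\lambda} d\lambda, \qquad \Delta\Delta G_{\mathrm{fold}} = \Delta G_{\mathrm{fold}}^{\mathrm{mut}} - \Delta G_{\mathrm{fold}}^{\mathrm{wt}} = \Delta G_{\mathrm{alch}}^{\mathrm{folded}} - \Delta G_{\mathrm{alch}}^{\mathrm{unfolded}}$$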
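The integration step can be sketched with Gauss-Legendre quadrature, one common form of the Gaussian quadrature schedule mentioned above. This minimal version assumes the per-window averages of ∂H/∂λ have already been computed from the simulations; with the quadrature weights mapped to [0, 1], the integral becomes a weighted sum, and the folded-minus-unfolded difference gives ΔΔG_fold by the thermodynamic cycle. The helper names are hypothetical.

```python
import numpy as np

def gauss_legendre_lambdas(n_points):
    """λ values and weights of n-point Gauss-Legendre quadrature, mapped to [0, 1]."""
    x, w = np.polynomial.legendre.leggauss(n_points)   # nodes/weights on [-1, 1]
    return (x + 1.0) / 2.0, w / 2.0

def ti_free_energy(dhdl_means, weights):
    """ΔG_alch = ∫_0^1 <∂H/∂λ> dλ, approximated as Σ_i w_i <∂H/∂λ>_i."""
    return float(np.dot(weights, dhdl_means))

def ddg_fold(dhdl_folded, dhdl_unfolded, weights):
    """Thermodynamic cycle: ΔΔG_fold = ΔG_alch(folded) - ΔG_alch(unfolded)."""
    return ti_free_energy(dhdl_folded, weights) - ti_free_energy(dhdl_unfolded, weights)

lambdas, weights = gauss_legendre_lambdas(11)
# Sanity check with an analytic integrand: ∫_0^1 3λ² dλ = 1.
check = ti_free_energy(3.0 * lambdas**2, weights)
```

In a real run, each entry of `dhdl_folded` and `dhdl_unfolded` would be the simulation average at the corresponding λ value.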

Diagram Title: Alchemical Free Energy Perturbation (FEP) Protocol

Machine Learning Models

ML models offer rapid predictions by learning from large datasets of experimental or computational ΔΔG values.

Key Model Architectures & Protocols:

A. Training a Graph Neural Network (GNN) for Affinity Prediction

- Objective: Train a model to predict binding affinity from the 3D structure of a protein-ligand complex.

- Protocol:

- Data Curation: Assemble a dataset of protein-ligand complexes with associated binding constants (K_d, IC50, ΔG). CAPE’s internal database can be merged with public sources like PDBbind.

- Graph Representation: Represent each complex as a graph. Nodes are atoms, with features like element type, hybridization. Edges represent bonds or spatial proximity within a cutoff distance.

- Model Architecture: Implement a Message-Passing Neural Network (MPNN). Node features are updated iteratively by aggregating messages from neighboring nodes.

- Training: Use a 70/15/15 train/validation/test split. Employ mean squared error (MSE) loss and the Adam optimizer. Train on CAPE’s GPU clusters.

- Validation: Report performance metrics on the held-out test set (See Table 2).
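The graph construction and a message-passing update can be sketched with NumPy. This is an untrained, single-layer illustration: edges come from spatial proximity only (covalent bonds are not added), sum aggregation with a ReLU update and a sum-pooled linear readout are assumed, and in practice the weights would be learned with the MSE loss and Adam optimizer in an autodiff framework. All function names are hypothetical.

```python
import numpy as np

def radius_graph(coords, cutoff):
    """Directed edges (src -> dst) between atoms closer than `cutoff` Å, no self-loops."""
    dist = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=-1)
    mask = (dist < cutoff) & ~np.eye(len(coords), dtype=bool)
    src, dst = np.nonzero(mask)
    return src, dst, dist[src, dst]

def message_passing_step(h, src, dst, w_self, w_msg):
    """h_i' = ReLU(h_i W_self + sum over edges j->i of h_j W_msg)."""
    messages = np.zeros((h.shape[0], w_msg.shape[1]))
    np.add.at(messages, dst, h[src] @ w_msg)   # sum incoming messages per receiver
    return np.maximum(h @ w_self + messages, 0.0)

def predict_affinity(h, w_out):
    """Sum-pool node states over the complex, then apply a linear readout."""
    return float(h.sum(axis=0) @ w_out)

# Toy complex: 5 atoms with 4 random input features (element one-hots in practice).
rng = np.random.default_rng(0)
coords = rng.uniform(0.0, 6.0, size=(5, 3))
h0 = rng.normal(size=(5, 4))
src, dst, _ = radius_graph(coords, cutoff=4.0)
h1 = message_passing_step(h0, src, dst, rng.normal(size=(4, 8)), rng.normal(size=(4, 8)))
score = predict_affinity(h1, rng.normal(size=8))
```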

B. Training a Transformer-based Model for Stability Prediction

- Objective: Predict ΔΔG_fold from protein sequence and structural context.

- Protocol:

- Input Encoding: Use a pre-trained protein language model (e.g., ESM-2) to generate embeddings for each residue in the sequence. Concatenate with structural features (e.g., solvent accessibility, secondary structure) from the wild-type structure.

- Model Architecture: A transformer encoder block attends to the sequence of residue embeddings, capturing long-range interactions that determine stability.

- Training Data: Use databases such as ProTherm for training, and hold out independent benchmark sets such as S669 for testing. The model is trained to minimize the difference between predicted and experimental ΔΔG_fold.

- Inference: For a novel mutation, the model processes the sequence and extracted features, outputting a predicted ΔΔG_fold in seconds.

Diagram Title: ML Model Development and Deployment Workflow

Table 1: Example λ-Schedule for Alchemical FEP (12 Windows)

| λ Window | λ Value | Purpose |
|---|---|---|
| 1 | 0.0000 | Pure state A |
| 2 | 0.0447 | Early perturbation |
| 3 | 0.1445 | |
| 4 | 0.2869 | |
| 5 | 0.4447 | Near mid-point of transformation |
| 6 | 0.6000 | |
| 7 | 0.7445 | |
| 8 | 0.8667 | |
| 9 | 0.9555 | Late perturbation |
| 10 | 0.9953 | |
| 11 | 0.9995 | |
| 12 | 1.0000 | Pure state B |

Table 2: Performance Comparison of Affinity Prediction Methods on CASF-2016 Benchmark

| Method | Type | RMSD (kcal/mol) | Pearson's r | Spearman's ρ | Avg. Time per Prediction |
|---|---|---|---|---|---|
| MM/PBSA | End-point | 2.45 | 0.45 | 0.42 | ~30 min |
| FEP/MBAR | Alchemical | 1.45 | 0.78 | 0.75 | ~24-72 GPU-hrs |
| GNN (GraphScore) | ML | 1.68 | 0.70 | 0.68 | < 1 sec |
| Hybrid (FEP-guided ML) | Hybrid | 1.38 | 0.81 | 0.79 | ~5 sec + FEP data |

Data synthesized from recent literature (2023-2024). RMSD: Root Mean Square Deviation. Hybrid models use FEP results on a subset to train/refine a faster ML predictor.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Prediction Workflow |
|---|---|
| Explicit Water Models (OPC, TIP4P-FB) | Provide accurate modeling of water, which is critical for solvation free energies in FEP. |
| GPU-Accelerated MD Engines (OpenMM, GROMACS) | Enables the nanoseconds-to-microseconds timescale sampling required for converged free energy calculations on CAPE. |
| Pre-trained Protein Language Models (ESM-2, ProtT5) | Generates informative, context-aware residue embeddings from sequence alone, serving as input for stability ML models. |
| Structured Benchmark Datasets (PDBbind, ProTherm, S669) | Provides gold-standard experimental data for training ML models and validating both physics-based and hybrid methods. |
| Automated Workflow Managers (Nextflow, Snakemake) | Orchestrates complex, multi-step prediction pipelines (MD → Analysis → ML) on CAPE's cloud infrastructure. |
| Free Energy Analysis Suites (alchemical-analysis.py, pymbar) | Implements robust statistical methods (MBAR, TI) to extract ΔΔG values from raw simulation data. |

Integrated Hybrid Approach on the CAPE Platform

The synergy of both paradigms is achieved through a cyclical workflow:

- Initial Screening: Ultra-fast ML models scan vast mutational or chemical space.

- Focused Validation: Top candidates from the ML screen are analyzed with rigorous, high-cost FEP/TI calculations.

- Active Learning: The results from the physical calculations are fed back to retrain and improve the ML models, closing the loop and enhancing predictive accuracy over time.

This hybrid approach, seamlessly integrated into the CAPE cloud platform, provides researchers with a powerful, scalable, and continuously improving suite of tools for running affinity and stability predictions.

This technical guide details the analysis phase within the CAPE cloud platform research framework. The platform integrates high-throughput simulation, molecular dynamics, and machine learning to accelerate therapeutic protein design. Accurate interpretation of output data, visualization via heatmaps, and systematic variant ranking are critical for deriving actionable engineering insights.

Core Output Data Types from CAPE Platform

The CAPE platform generates multi-modal data streams from in silico experiments. Key data types are summarized in Table 1.

Table 1: Core Output Data Types from CAPE Platform Simulations

| Data Type | Description | Typical Format | Primary Use in Analysis |
|---|---|---|---|
| Variant Fitness Scores | Predicted functional activity (e.g., binding affinity, enzymatic kcat/KM). | Numerical CSV | Primary ranking metric. |
| Stability Metrics (ΔΔG) | Predicted change in folding free energy relative to wild-type. | Numerical CSV | Filtering for stable variants. |
| Sequence Entropy | Position-wise conservation/variability from multiple sequence alignment. | Numerical CSV | Identifying mutable vs. conserved sites. |
| Molecular Dynamics (MD) Trajectories | Time-series data of atomic coordinates, energies, and distances. | DCD/XTC trajectories; OUT/LOG files | Assessing conformational dynamics and stability. |
| Pose Analysis Data | Metrics (RMSD, interaction fingerprints) for ligand/protein binding poses. | CSV, JSON | Evaluating binding mode consistency. |

Experimental Protocol: Generating Data for Analysis

This protocol outlines a standard in silico saturation mutagenesis study executed on the CAPE platform.

Protocol Title: In Silico Saturation Mutagenesis and Stability Screening

- Input Wild-type Structure: Upload a PDB file of the target protein to the CAPE workspace.

- Define Target Region: Select protein residues (e.g., active site, binding interface) for comprehensive mutagenesis (all 20 amino acids).

- Configure RosettaDDGPrediction: Use the CAPE-integrated module with the beta_nov16 score function. Set -ddg:mut_iterations to 50 for robustness.

- Configure FoldX Stability Analysis: Use the CAPE wrapper for FoldX5 (RepairPDB, BuildModel, Stability commands) with default parameters.

- Configure Binding Affinity Prediction: For protein-ligand systems, deploy the CAPE AutoDock Vina pipeline with an exhaustiveness value of 32.

- Submit to Cloud Compute: Launch the parallelized job across 1000+ CPU/GPU instances via CAPE's job scheduler.

- Data Aggregation: The platform automatically collates all output files (scores, logs, trajectories) into a structured project database.

Interpreting Heatmaps for Spatial Analysis

Heatmaps are indispensable for visualizing positional and combinatorial data.

4.1 Sequence-Function Heatmap: Maps amino acid substitutions at each position to a computed fitness score (e.g., ΔΔG of binding). It quickly identifies permissive (many positive mutations) and critical (mostly deleterious mutations) positions.

4.2 Correlation Heatmap: Shows pairwise correlations between different metrics (e.g., fitness score vs. stability ΔΔG vs. solubility score) across all variants. Reveals trade-offs or synergies between protein properties.
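Both heatmap types reduce to building a matrix before plotting. A minimal sketch follows, assuming variant scores keyed by mutation strings such as "D30E" and metric lists aligned by variant; the function names are hypothetical. Untested cells are left as None, and either matrix can be rendered with a plotting library such as matplotlib's `imshow` after converting None to NaN.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def sequence_function_matrix(scores):
    """scores: {'D30E': value, ...} -> (positions, rows); rows[p][a] holds the
    score for AMINO_ACIDS[a] at positions[p], or None if untested."""
    positions = sorted({int(m[1:-1]) for m in scores})
    index = {p: i for i, p in enumerate(positions)}
    rows = [[None] * len(AMINO_ACIDS) for _ in positions]
    for mutation, value in scores.items():
        rows[index[int(mutation[1:-1])]][AMINO_ACIDS.index(mutation[-1])] = value
    return positions, rows

def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5

def correlation_matrix(metrics):
    """metrics: {'fitness': [...], 'ddg': [...], ...}, each list aligned by variant."""
    names = list(metrics)
    return names, [[pearson(metrics[a], metrics[b]) for b in names] for a in names]
```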

Diagram Title: Workflow for generating correlation heatmaps in CAPE.

Variant Ranking: A Multi-Criteria Decision Framework

Ranking must move beyond single metrics. CAPE implements a weighted filtering and scoring system.

5.1 Primary Filtering: Discard variants predicted to be severely destabilizing (ΔΔG > 5 kcal/mol) or non-expressing (low solubility score).

5.2 Composite Score Calculation: A weighted composite score (CS) is calculated for each passing variant:

CS = w1*Fitness_Score + w2*(-ΔΔG_Stability) + w3*Conservation_Score

Weights (w1, w2, w3) are user-defined based on project goals (default: 0.6, 0.3, 0.1).
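The filter-then-score procedure can be sketched in a few lines. This is a minimal illustration under stated assumptions: the 5 kcal/mol ΔΔG cutoff, the composite formula, and the default weights come from the text, while the 0-to-1 solubility scale, its 0.3 cutoff (standing in for "low solubility score"), and the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    name: str
    fitness: float        # higher is better
    ddg_stability: float  # kcal/mol; positive = destabilizing
    conservation: float
    solubility: float     # hypothetical 0-1 score


def passes_filter(v, max_ddg=5.0, min_solubility=0.3):
    # Primary filter (5.1): drop severely destabilizing or likely non-expressing variants.
    return v.ddg_stability <= max_ddg and v.solubility >= min_solubility


def composite_score(v, weights=(0.6, 0.3, 0.1)):
    # CS = w1*Fitness + w2*(-ddG_stability) + w3*Conservation
    w1, w2, w3 = weights
    return w1 * v.fitness + w2 * (-v.ddg_stability) + w3 * v.conservation


def rank_variants(variants, weights=(0.6, 0.3, 0.1)):
    """Return (rank, name, score) tuples for variants that pass the filter."""
    kept = [v for v in variants if passes_filter(v)]
    kept.sort(key=lambda v: composite_score(v, weights), reverse=True)
    return [(rank, v.name, round(composite_score(v, weights), 3))
            for rank, v in enumerate(kept, start=1)]
```

Because stability enters as −ΔΔG, a destabilizing mutation (positive ΔΔG) lowers the composite score even when its fitness is high, which is the behavior noted for A34W below.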

Table 2: Top-Ranked Variants from a Model CAPE Study on an Enzyme (composite scores also include the conservation term, which is not shown)

| Variant ID | Fitness Score (↑Better) | Stability ΔΔG (↓Better) | Composite Score | Rank |
|---|---|---|---|---|
| WT | 1.00 | 0.00 | 0.650 | 10 |
| L12F | 1.85 | -0.25 | 1.205 | 2 |
| A34W | 2.30 | +1.10 | 1.150 | 4 |
| K77R | 1.50 | -0.80 | 1.210 | 1 |
| D102N | 1.65 | +0.50 | 0.990 | 7 |

Note: Variant A34W has high fitness but poor stability, lowering its composite rank.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Reagents & Tools for Experimental Validation of CAPE Predictions

| Item | Function in Validation | Example/Supplier |
|---|---|---|
| NEB Gibson Assembly Master Mix | Cloning of designed variant genes from synthesized oligos. | New England Biolabs |
| Polymerase (High-Fidelity) | Accurate PCR amplification of variant-encoding DNA for sequencing and expression constructs. | Q5 (NEB), Phusion (Thermo) |
| HEK293F or ExpiCHO Cells | Mammalian expression system for therapeutic proteins. | Thermo Fisher Scientific |
| Ni-NTA or Anti-FLAG Agarose | Affinity purification of His-tagged or FLAG-tagged protein variants. | Qiagen, Sigma-Aldrich |
| Surface Plasmon Resonance (SPR) Chip | Sensor surface for immobilizing a binding partner in kinetic binding assays (KD, kon, koff). | Series S Sensor Chip (Cytiva) |
| Promega NanoLuc Luciferase | Reporter assay for functional activity in cellular contexts. | Promega |
| Stable Isotope-Labeled Media (SILAC) | Mass spectrometry-based stability or turnover measurements. | Cambridge Isotope Labs |

Integrating MD Trajectory Analysis

Long-timescale MD simulations on CAPE's cloud GPU clusters capture conformational dynamics that static, single-structure scoring cannot.

Protocol for MD Analysis:

- Simulation: Run three independent 500 ns replicates for each top-ranked variant using CAPE's GROMACS/AMBER pipeline.

- Post-Processing: Use the integrated MDAnalysis library to calculate:
  - Backbone root-mean-square deviation (RMSD).
  - Radius of gyration (Rg).
  - Inter-residue hydrogen-bond occupancy.

- Visualization: Render dynamic heatmaps of distance matrices or project trajectories onto Principal Component Analysis (PCA) plots to compare conformational landscapes.
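The three post-processing metrics have short definitions. The pure-Python sketch below computes them from raw coordinates so that each definition is explicit; MDAnalysis provides optimized equivalents for production use. Assumptions: frames are already superposed onto the reference (this RMSD performs no fitting), coordinates are in Å, and hydrogen bonds are counted with a distance-only criterion and an assumed 3.5 Å cutoff, whereas full analyses usually add an angle criterion.

```python
import math


def rmsd(coords, ref):
    """RMSD (Å) between matched atoms; assumes `coords` is already superposed on `ref`."""
    if len(coords) != len(ref) or not coords:
        raise ValueError("need the same, non-zero number of atoms")
    total = sum((x - rx) ** 2 + (y - ry) ** 2 + (z - rz) ** 2
                for (x, y, z), (rx, ry, rz) in zip(coords, ref))
    return math.sqrt(total / len(coords))


def radius_of_gyration(coords, masses):
    """Mass-weighted radius of gyration (Å) about the center of mass."""
    total_mass = sum(masses)
    com = [sum(m * c[i] for m, c in zip(masses, coords)) / total_mass for i in range(3)]
    spread = sum(m * sum((c[i] - com[i]) ** 2 for i in range(3))
                 for m, c in zip(masses, coords))
    return math.sqrt(spread / total_mass)


def hbond_occupancy(distances, cutoff=3.5):
    """Fraction of frames whose donor-acceptor distance (Å) is within `cutoff`."""
    if not distances:
        raise ValueError("no frames")
    return sum(d <= cutoff for d in distances) / len(distances)
```

Comparing the per-frame RMSD and Rg series across the three replicates is a quick check that each variant's behavior is reproducible before it is carried into PCA.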

Diagram Title: MD analysis workflow for variant validation.

Effective analysis on the CAPE platform transforms raw data into a prioritized variant list. This list, enriched by heatmaps and MD insights, directs the next design-build-test-learn cycle. The integration of multi-parameter ranking with scalable cloud computing is central to the thesis that intelligent data interpretation is the rate-limiting step in computational protein engineering.

Solving Computational Challenges: Best Practices for Optimizing CAPE Performance and Results

Within computational protein engineering, cloud-based CAPE platforms integrate molecular dynamics (MD), free energy perturbation (FEP), and deep learning models to predict protein stability, binding affinity, and function. However, the scale of these large, iterative simulations carries significant financial and operational risk: unmanaged cloud resource consumption can produce cost overruns large enough to derail a research project. This guide details common pitfalls and provides structured methodologies for maintaining fiscal control without compromising scientific rigor.

Core Pitfalls and Quantitative Analysis

The following table summarizes the primary cost drivers and their typical impact observed in CAPE-related research projects.

Table 1: Primary Cost Overrun Drivers in CAPE Simulations

| Pitfall Category | Description | Typical Cost Impact (vs. Planned) | Root Cause |
|---|---|---|---|
| Unbounded Conformational Sampling | Running MD simulations without clear convergence criteria (e.g., RMSD or energy plateau). | 200-400% increase | No predefined stopping rules, so sampling continues longer than necessary. |
| Inefficient Instance Selection | Using high-memory/GPU instances for tasks that are not compute-bound (e.g., pre-processing, analysis). | 50-150% increase | Poor mapping of software requirements to cloud instance types. |
| Data Management Neglect | Storing all raw trajectory data (TB-scale) in high-performance storage without tiering or compression. | 100-300% increase | Failure to implement lifecycle policies for simulation data. |
| Orphaned Resources | Leaving compute instances, storage volumes, or container clusters running after job completion. | Variable; unbounded until the resource is deleted | Lack of automated shutdown and resource-tagging protocols. |
| FEP Protocol Redundancy | Running duplicate alchemical transformation windows or failing to validate force field parameters before production. | 75-125% increase | Inadequate pilot studies and workflow validation. |
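A predefined stopping rule is the main remedy for unbounded conformational sampling. The sketch below shows one simple plateau test on a per-frame observable such as backbone RMSD or potential energy: sampling is treated as converged when the means of the two most recent, non-overlapping windows agree within a tolerance. The window size and tolerance are hypothetical and system-dependent, and a plateau in a single observable does not by itself guarantee adequate sampling.

```python
from statistics import mean


def has_plateaued(series, window=50, tolerance=0.1):
    """True when the means of the last two non-overlapping windows differ by
    less than `tolerance` (same units as the series, e.g. Å for RMSD)."""
    if len(series) < 2 * window:
        return False  # not enough data yet to compare two windows
    recent = mean(series[-window:])
    previous = mean(series[-2 * window:-window])
    return abs(recent - previous) < tolerance
```

Evaluating this check after each simulation segment, and stopping the job when it returns `True`, turns the stopping rule into an enforceable budget limit rather than a guideline.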

Experimental Protocols for Cost-Aware Simulation

Adopting rigorous, tiered experimental protocols is essential for efficient CAPE research.

Protocol 1: Tiered Free Energy Perturbation (FEP) Validation

- Objective: Accurately calculate ΔΔG of binding for a series of ligand variants while minimizing unnecessary computational expense.

- Methodology:

- Pilot System Calibration: Run a single, known ligand-protein transformation using 2-3 different force fields (e.g., AMBER/CHARMM/OPLS) on a small, controlled cluster (e.g., 4 nodes). Compare results to experimental ΔΔG. Select the best-performing force field.

- Window Optimization: For the selected force field, run a limited set of lambda windows (e.g., 12) and analyze the energy overlap between adjacent windows. Use this to determine the minimum number of windows that still gives sufficient overlap for your specific system (e.g., a standard deviation of the energy difference between neighboring windows below about 1 kT).

- Production Run: Execute the full ligand series using the optimized force field and lambda schedule. Implement automated convergence monitoring (e.g., with pymbar) and halt simulations once the statistical uncertainty reaches a target (e.g., standard error < 0.5 kcal/mol) rather than at a fixed time limit.

- Analysis & Archival: Process data immediately. Compress raw trajectory data (e.g., by converting to the XTC format, which stores coordinates at reduced precision and is therefore lossy) and move it to cold storage (e.g., Amazon S3 Glacier, Google Cloud Storage Coldline) after 30 days.
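The "halt on target uncertainty" rule from the production step can be expressed as a small check. This sketch assumes you already have ΔG estimates (kcal/mol) from independent replicas, or from blocks long enough to be effectively uncorrelated, produced by whatever estimator the pipeline uses (for example MBAR via pymbar); it does not call pymbar itself. The 0.5 kcal/mol target comes from the protocol, while `min_blocks` is a hypothetical safeguard against stopping on too few estimates.

```python
import math
from statistics import mean, stdev


def block_estimate(block_dgs):
    """Mean ΔG and its standard error (kcal/mol) from independent block/replica estimates."""
    if len(block_dgs) < 2:
        raise ValueError("need at least two estimates to compute an error")
    return mean(block_dgs), stdev(block_dgs) / math.sqrt(len(block_dgs))


def should_stop(block_dgs, target_error=0.5, min_blocks=4):
    """Stop once the standard error of the mean falls below the target."""
    if len(block_dgs) < min_blocks:
        return False
    _, sem = block_estimate(block_dgs)
    return sem < target_error


# Usage sketch with hypothetical per-block ΔG values arriving one at a time.
estimates = []
for new_block in [-1.9, -2.3, -2.0, -2.2]:
    estimates.append(new_block)
    if should_stop(estimates):
        break
```

If the blocks are shorter than the correlation time, the standard error is underestimated and the rule stops too early, so block length should be chosen from the system's autocorrelation.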

Protocol 2: Scalable Molecular Dynamics for Stability Prediction

- Objective: Predict the change in protein thermal stability (ΔTm) upon mutation via large-scale, parallel MD simulations.

- Methodology:

- Resource Template Definition: Use infrastructure-as-code (e.g., Terraform, AWS CloudFormation) to define a repeatable cluster configuration. Specify auto-scaling policies to scale from a minimum of 10 to a maximum of 100 compute nodes based on queue depth.

- Job Segmentation & Checkpointing: Split each mutant's simulation into restartable 10 ns segments and checkpoint frequently (e.g., every 5000 steps). Because a preempted job loses at most the work done since its last checkpoint, the segments can run on lower-cost spot/preemptible instances.

- Centralized Monitoring: Implement a cloud monitoring dashboard (e.g., using Grafana) to track in real-time: aggregate core-hours consumed, cost per mutant, and simulation progress (RMSD, Rg). Set budget alerts at 50%, 80%, and 100% of allocated funds.

- Automated Post-Processing: Trigger an analysis serverless function (e.g., AWS Lambda, Google Cloud Function) upon job completion to calculate metrics, generate plots, and terminate the compute cluster.
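The scaling bounds, segment length, checkpoint interval, and budget alerts above reduce to simple arithmetic. The sketch below is illustrative only: it assumes a 2 fs time step, one queued segment per node by default, and hypothetical function names; in practice the autoscaler and budget alerts would be configured in the cloud provider's own tooling.

```python
import math


def desired_nodes(queue_depth, jobs_per_node=1, min_nodes=10, max_nodes=100):
    """Queue-depth scaling rule clamped to the 10-100 node range of the template."""
    wanted = math.ceil(queue_depth / jobs_per_node)
    return max(min_nodes, min(max_nodes, wanted))


def segment_plan(total_ns, segment_ns=10.0, timestep_fs=2.0, checkpoint_steps=5000):
    """Steps, checkpoints, and worst-case lost work for segmented, checkpointed MD."""
    steps_per_ns = 1_000_000 / timestep_fs  # 1 ns = 1e6 fs
    steps_per_segment = round(segment_ns * steps_per_ns)
    return {
        "segments": math.ceil(total_ns / segment_ns),
        "steps_per_segment": steps_per_segment,
        "checkpoints_per_segment": steps_per_segment // checkpoint_steps,
        "max_lost_ps": checkpoint_steps * timestep_fs / 1000,  # work at risk per preemption
    }


def crossed_alerts(spent, budget, thresholds=(0.5, 0.8, 1.0)):
    """Budget-alert thresholds (as fractions of budget) already reached."""
    return [t for t in thresholds if spent >= t * budget]
```

At 2 fs, a 100 ns run becomes ten 5,000,000-step segments, and checkpointing every 5000 steps caps the work lost to a single preemption at 10 ps of simulated time.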

Visualizations of Key Workflows

Tiered FEP Workflow for Cost Control

Real-Time Cost and Performance Monitoring Architecture

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Tools for Cost-Effective CAPE Simulations

| Item | Function in CAPE Research | Cost-Control Rationale |
|---|---|---|
| Cloud Cost Management Tools (e.g., AWS Cost Explorer, GCP Cost Table) | Provides detailed, tag-based breakdowns of spending per project, simulation type, or research team. | Enables precise attribution and identification of expensive workflows for optimization. |
| Infrastructure-as-Code (IaC) Templates (e.g., Terraform modules, AWS CDK) | Codifies the deployment of compute clusters, storage, and networking, ensuring reproducibility. | Eliminates configuration drift and "golden instance" sprawl; allows quick teardown of resources. |
| Preemptible / Spot Instances | Significantly discounted compute capacity that can be interrupted on short notice. | Can reduce compute costs by 60-90% for fault-tolerant, checkpointed simulations like MD. |
| Workflow Orchestrators (e.g., Nextflow, Snakemake on Kubernetes) | Manages complex, multi-step simulation pipelines, handling dependencies and failures. | Maximizes resource utilization and ensures failed steps are re-run automatically, avoiding waste. |
| Lifecycle Storage Policies | Automated rules to transition data from hot to cold storage and eventually to deletion. | Drastically reduces storage costs for massive trajectory files that are rarely accessed after initial analysis. |
| Containerized Software Stacks (e.g., Docker/Singularity for GROMACS, AMBER) | Packages simulation software, dependencies, and environment into a portable, version-controlled unit. | Ensures consistency, reduces setup time, and allows optimal use of cloud-optimized instances. |
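As an example of a lifecycle storage policy, the sketch below builds an Amazon S3 lifecycle configuration that moves trajectory objects to the Glacier storage class after 30 days, matching the archival step of Protocol 1. The object prefix, rule ID, and optional expiration are hypothetical project choices. The resulting dictionary has the shape accepted as the `LifecycleConfiguration` argument of boto3's `put_bucket_lifecycle_configuration`, but check storage-class names and minimum-storage-duration charges for your account before applying it.

```python
import json


def trajectory_lifecycle_rule(prefix="trajectories/", cold_after_days=30, expire_after_days=None):
    """Lifecycle configuration that archives (and optionally deletes) trajectory objects."""
    rule = {
        "ID": "archive-md-trajectories",  # hypothetical rule name
        "Filter": {"Prefix": prefix},     # applies only to objects under this prefix
        "Status": "Enabled",
        "Transitions": [{"Days": cold_after_days, "StorageClass": "GLACIER"}],
    }
    if expire_after_days is not None:
        rule["Expiration"] = {"Days": expire_after_days}  # final deletion step
    return {"Rules": [rule]}


config = trajectory_lifecycle_rule(expire_after_days=365)
print(json.dumps(config, indent=2))
```

Keeping the policy in code (or in an IaC template) means every new project bucket gets the same archival behavior instead of relying on manual clean-up.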

For researchers operating within a CAPE platform framework, avoiding cost overruns is not merely an administrative task but a core component of sustainable scientific practice. By implementing structured experimental protocols, leveraging tiered computational strategies, deploying robust monitoring, and utilizing the modern toolkit of cloud resource management, teams can harness the full power of large-scale simulation while maintaining firm control over their budget. This disciplined approach ensures that financial resources are directly and efficiently converted into robust, high-impact scientific insights.

In the context of the CAPE cloud computing platform, parameter tuning is a fundamental task for balancing predictive accuracy against computational resource constraints. The CAPE platform is designed to accelerate in silico drug discovery by providing scalable, high-throughput molecular dynamics (MD) and free energy perturbation (FEP) simulations. The core challenge lies in selecting simulation parameters, such as time step, cutoff distances, and sampling duration, that yield biologically relevant data without prohibitive computational cost. This guide provides a technical framework for systematic parameter optimization tailored to cloud-based protein engineering workflows.
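A rough cost model helps reason about these trade-offs before anything is run. The sketch below assumes the short-range non-bonded work dominates and scales as (number of steps) × (neighbors per atom), that is, in proportion to (sampling length / Δt) × r_c³. It ignores PME, constraints, I/O, and parallel efficiency, so treat its output as an order-of-magnitude guide relative to a hypothetical 100 ns, 2 fs, 1.0 nm reference run.

```python
def relative_cost(sampling_ns, timestep_fs, cutoff_nm,
                  ref_ns=100.0, ref_timestep_fs=2.0, ref_cutoff_nm=1.0):
    """Crude short-range cost relative to a reference run.
    Model: cost ∝ steps × neighbors ∝ (sampling_ns / Δt) × r_c**3."""
    steps_ratio = (sampling_ns / timestep_fs) / (ref_ns / ref_timestep_fs)
    neighbor_ratio = (cutoff_nm / ref_cutoff_nm) ** 3  # neighbors within r_c grow as r_c³
    return steps_ratio * neighbor_ratio


for label, args in [("reference", (100, 2.0, 1.0)),
                    ("4 fs step", (100, 4.0, 1.0)),
                    ("1.2 nm cutoff", (100, 2.0, 1.2)),
                    ("double sampling", (200, 2.0, 1.0))]:
    print(f"{label:>16}: {relative_cost(*args):.2f}x")
```

Under this model, raising the cutoff from 1.0 to 1.2 nm increases short-range cost by about 73% (1.2³ ≈ 1.73), while doubling the time step halves the number of steps per simulated nanosecond.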

Key Simulation Parameters and Their Impact

The following parameters are primary levers for controlling the accuracy-speed trade-off in biomolecular simulations on the CAPE platform.

Table 1: Core Simulation Parameters and Their Typical Impact

| Parameter | Typical Range | Impact on Accuracy | Impact on Speed (Computational Cost) | Primary Consideration in CAPE |
|---|---|---|---|---|
| Integration Time Step (Δt) | 1-4 fs | Larger Δt can reduce the accuracy of bond dynamics and requires constraints such as LINCS/SHAKE. | Simulated time per unit of compute grows roughly linearly with Δt, because fewer steps are needed per ns. | Critical for long-timescale folding simulations. 2 fs is often the default balance. |
| Non-bonded Cutoff Radius | 0.9-1.4 nm | Shorter cutoffs can introduce artifacts in electrostatic and van der Waals forces. | Short-range cost grows roughly with the cube of the cutoff radius, because the number of neighbor pairs within the cutoff scales as r_c³; larger cutoffs also increase neighbor-search load. | Must be paired appropriately with the long-range electrostatics method (PME). |
| PME Grid Spacing (Fourier Spacing) | 0.12-0.16 nm | A coarser grid reduces the accuracy of long-range electrostatic forces. | A finer grid increases FFT computation and communication overhead. | Optimized for GPU-accelerated nodes on CAPE cloud. |